Abstract

Introduction

Colonoscopy is a complex medical procedure that requires the simultaneous coordination of technical skills for endoscope manipulation, cognitive skills for lesion diagnosis, and integrative skills for communication and teamwork. Due to these factors, effective training of apprentices can be difficult to achieve. Traditionally, colonoscopy apprentices learned by training in clinical settings while being supervised by an experienced colonoscopist. Over the last few decades, simulation-based training methods have become broadly accepted for training or assessing the colonoscopy skills of novices. As structured platforms designed for specific fields, simulators allow the replication of real-world settings where repetitive training is not possible because it is too expensive or even dangerous.1–3

A main concern regarding the use of simulators for training or evaluation is their validation.4–6 With the focus on simulators, validation should verify the simulator’s realism, that is, how similar it is to the real-world elements it simulates, as well as its usefulness, for example as a training and assessment tool, without overlooking the simulator’s usability, that is, its user-friendliness.

According to the literature, two different types of validation are distinguished7:

• Subjective approaches involve the evaluation of the perspectives of users who engage in a simulated procedure, both novices and experts.

• Objective approaches focus on direct measurements determining the ability of the simulator to differentiate between varying levels of expertise or assess the impact of skill transfer from training in simulators in a measured manner (i.e., by using performance parameters provided by the simulator).

A correct validation of simulators (and of colonoscopy simulators in particular) should usually cover both types, starting with a low-level (subjective) validation and then proceeding to a high-level (objective) one. In addition to these two categories, a third component that deserves validation is usability (i.e., user-friendliness, intuitive user interface). It is considered a transversal category that cuts across the aforementioned classification, since it impacts all facets of the simulator. In any case, it tends to be assessed through subjective validation approaches.8

There are several issues to consider in the subjective validation of simulators, particularly in the case of colonoscopy simulators. First, the level of realism of the colonoscopy scenario, as it is imperative to consider how accurately the organ models of the large intestine are depicted. Factors such as textures, colors, shapes, level of intricacy, and even potential pathological conditions that may be portrayed within them should be considered. Second, it is worth considering the responses of the simulator to various manipulations, such as haptic interactions, force feedback, tolerance for applied forces, and deformations, depending on the specific type of simulator under consideration. Finally, it is imperative to consider the users’ perspective on whether the educational inputs they are receiving align with the intended offerings of the simulator. For instance, if a simulator claims to provide education on specific medical conditions, it is crucial that users actually receive that education in a targeted manner when using the simulator.

Two categories of subjective validity are distinguished in the literature9,10:

• Face validity: The degree to which the items of an instrument look as though they are an adequate reflection of the construct to be measured. In the simulation domain, it refers to how well the simulator reflects aspects of the real subject.

• Content validity: The degree to which the content of an instrument is an adequate reflection of the construct to be measured. In the simulation domain, it refers to the extent to which a feature of the simulator is really being adequately addressed.

For example, a simulator intended for colonoscopy technical skill development that ends up just improving the theoretical knowledge about colonoscopy has low content validity.

The purpose of this paper is to review the different approaches that have been used in recent years to conduct a subjective assessment of colonoscopy simulators, specifically the computerized models. We are specifically interested in this category of simulators as we are presently engaged in the development of our own computerized simulator.11 To achieve this objective, a systematic review of the methods and procedures for the subjective validation of computerized colonoscopy simulators has been carried out, focusing on the identification of the key features that should be considered.

Methodology

The systematic review of the existing literature was carried out by searching multiple databases with varying scopes (following certain PRISMA12 principles), and it was registered on the Open Science Framework (OSF) (Registration Number: 10.17605/OSF.IO/PYR4N). This review was not registered on PROSPERO. The databases searched included PubMed, which indirectly covers MEDLINE (its largest indexed subset), both of which are known for their prominence in the medical field. Additionally, multidisciplinary databases such as Google Scholar and ScienceDirect were used, along with IEEE Xplore, a database specializing in IT and computing.

The search terms were: assessment, evaluation, validity, survey, questionnaire, effectiveness, effectivity, face validity, content validity, subjective validity, usability, fidelity, realism, and validity testing. These terms, which all relate to subjective validation, were searched in combination with the expression “colonoscopy simulator” (e.g., “colonoscopy simulator survey”) in order to identify all relevant papers relating to colonoscopy simulation. The review process comprised three sequential steps. First, an initial search was conducted using the cited expressions, selecting for each expression in each database the first 50 results, if available. Next, duplicates were removed and papers were screened by title and abstract to filter out irrelevant ones. Finally, the remaining papers underwent a full-text analysis, and only the pertinent ones were included in the review.
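The search expressions described above can be enumerated programmatically. The following is a minimal sketch, not the procedure actually used by the authors; it assumes that four databases were queried directly (MEDLINE being reached through PubMed) and that each expression is simply the base phrase followed by one term. The names `build_queries`, `DATABASES`, and `MAX_RESULTS_PER_QUERY` are illustrative.

```python
from itertools import product

# Search terms listed in the text (all relate to subjective validation).
TERMS = [
    "assessment", "evaluation", "validity", "survey", "questionnaire",
    "effectiveness", "effectivity", "face validity", "content validity",
    "subjective validity", "usability", "fidelity", "realism",
    "validity testing",
]
BASE = "colonoscopy simulator"

# Assumption: four databases queried directly (MEDLINE is covered via PubMed).
DATABASES = ["PubMed", "Google Scholar", "ScienceDirect", "IEEE Xplore"]
MAX_RESULTS_PER_QUERY = 50  # first 50 results per expression, if available


def build_queries(base: str, terms: list[str]) -> list[str]:
    """Combine the base expression with each search term."""
    return [f"{base} {term}" for term in terms]


queries = build_queries(BASE, TERMS)
searches = list(product(DATABASES, queries))

# Upper bound on retrieved records if every search returned 50 results.
upper_bound = len(searches) * MAX_RESULTS_PER_QUERY

print(len(queries), len(searches), upper_bound)
```

Under these assumptions the upper bound is an estimate of the maximum number of records the initial search could return; the actual number is lower whenever a search yields fewer than 50 results.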

Studies published since 2010 that conducted subjective validation of computerized colonoscopy simulators were included in the review. At the time of conducting the systematic review, we initially considered that a range of 10 years was appropriate for state-of-the-art studies. However, in order to include several significant studies in the review, we extended the range to 13 years (2010–2023). The exclusion criteria were as follows:

• Reviews, congress abstracts, and inaccessible papers were excluded.

• Papers pertaining to endoscopic methods other than colonoscopy (e.g., esophagogastroduodenoscopy (EGD), endoscopic retrograde cholangiopancreatography (ERCP)) and those related to surgical procedures (e.g., laparoscopy) were also excluded.

• Studies regarding robotized endoscopes or automated navigation systems for colonoscopy simulation were excluded, as only traditional colonoscopy procedures with manual endoscope control were considered in this review.

• Publications without access to the subjective validation method (e.g., questionnaires) or, in its absence, without a clear definition of the validated aspects were also excluded.

Results

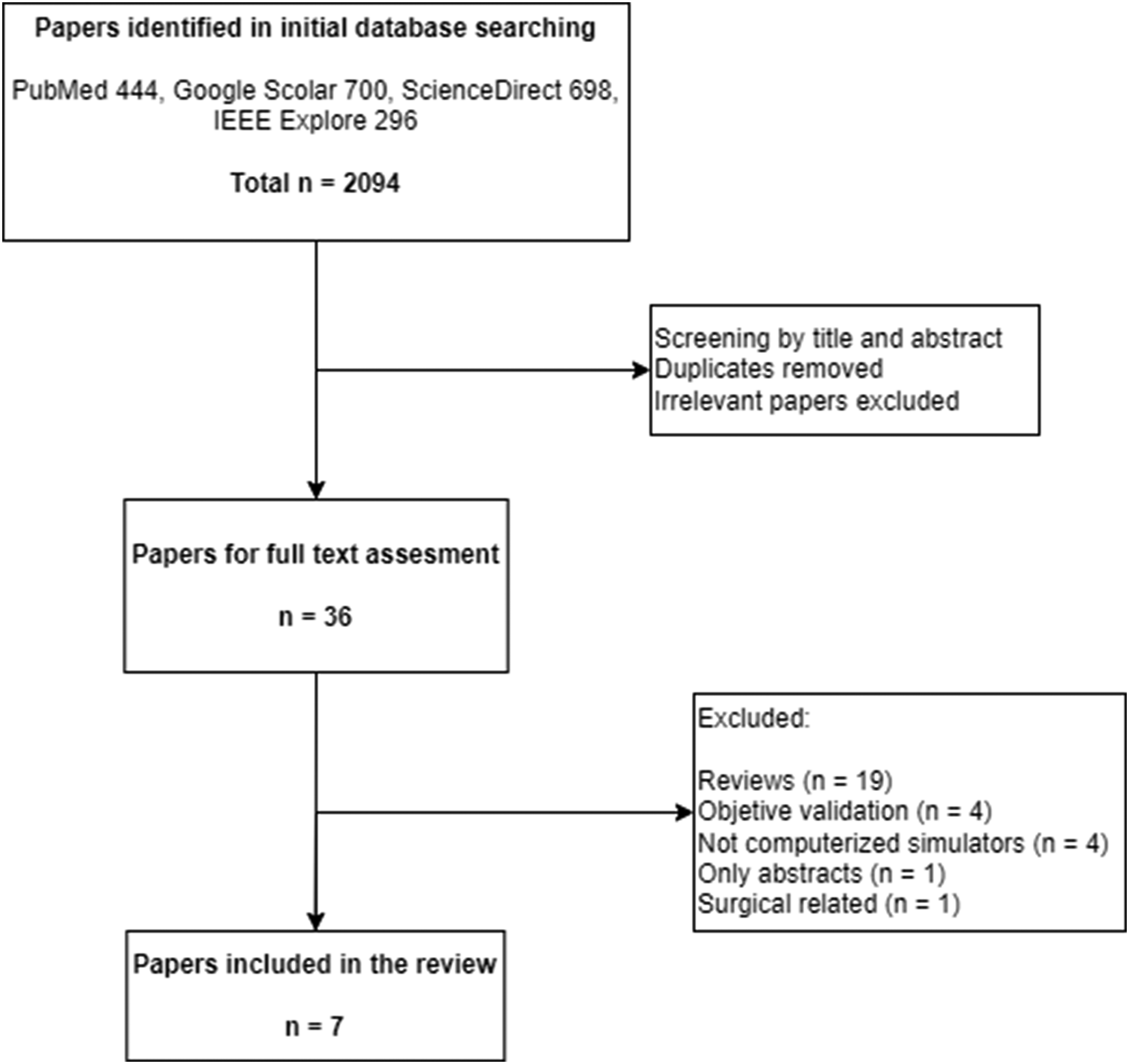

The results of the research are depicted in Figure 1. An initial search in all the databases identified 2094 papers. From these, after screening by title and abstract, removing duplicates, and excluding irrelevant studies, 36 papers remained for review. After a screening of the full text, 29 papers were excluded for meeting several exclusion criteria (Figure 1), leaving a total of seven papers for inclusion in the review (Table 1).

Paper search, screening, and selection process.

Summary of papers included in the review.
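The selection counts reported above can be checked with a few lines of arithmetic. This is only a consistency sketch using the numbers stated in the text; the variable names are illustrative.

```python
# Counts reported in the text for each stage of the selection process.
identified = 2094        # records found by the initial search
after_screening = 36     # left after title/abstract screening and deduplication
excluded_full_text = 29  # excluded after full-text review

removed_at_screening = identified - after_screening
included = after_screening - excluded_full_text

print(f"removed at screening: {removed_at_screening}")
print(f"included in review:   {included}")
```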

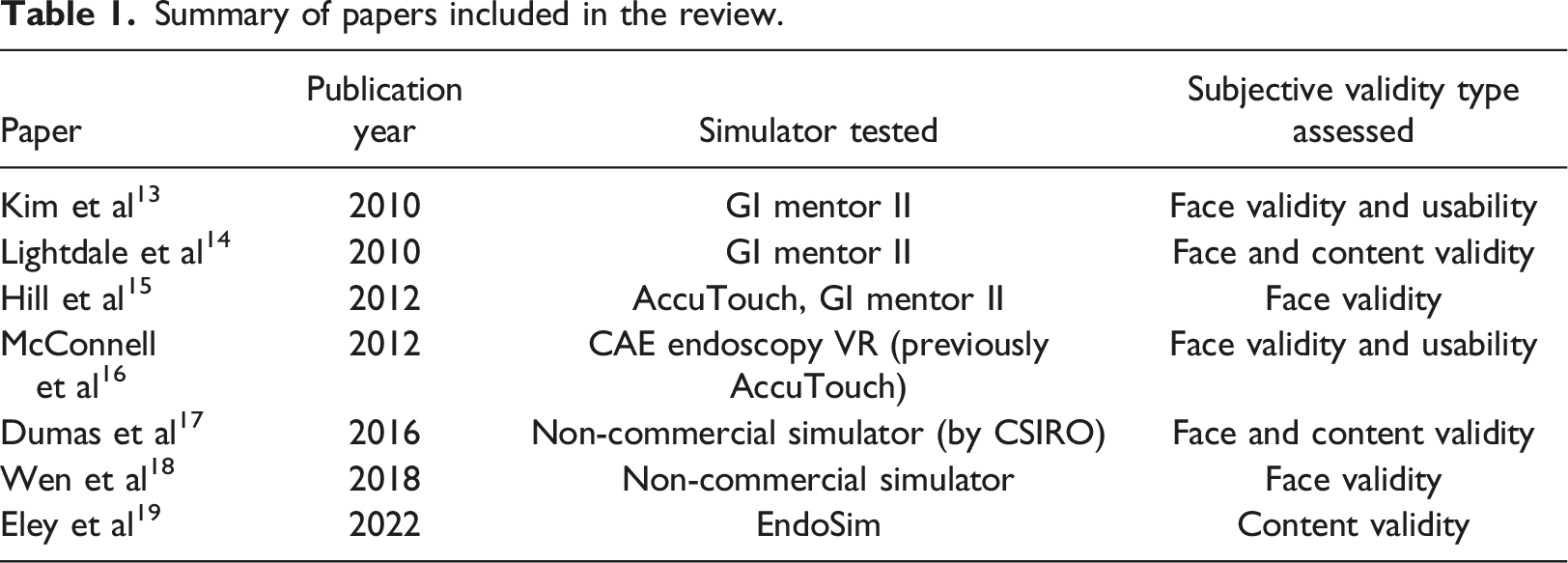

Regarding the simulators tested in the studies: three papers validated the GI Mentor II simulator (Simbionix)13–15; two validated the CAE Endoscopy VR simulator (previously AccuTouch)15,16; two validated unnamed non-commercial simulators17,18; and one validated the Endosim simulator.19

As shown in Table 1, face validity was assessed in all studies except that of Eley et al.,19 which reported face validity but, on further review of its validation procedure, assessed content validity instead. Content validity was reported for the CAE Endoscopy VR16; however, after further reviewing the questionnaires used, we concluded that face validity and usability were tested instead. Content validity was also tested, but not reported, for the GI Mentor II14 and a non-commercial simulator.17 Usability was tested in two studies, one for the GI Mentor II13 and one for the CAE Endoscopy VR,16 but was not reported in either. A detailed explanation of the validities that were reported but not tested, or vice versa, owing to the mislabeling of validity types in the studies is provided below.
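The relation between reported and tested validities can be made explicit with a small data structure. The sketch below encodes only what the text of this review states: the tested sets follow our conclusions, and the reported labels are included only for the four studies whose labels are described in the text. The dictionary layout and names are illustrative, not taken from Table 1.

```python
from collections import Counter

# Validity types actually tested, as concluded in this review
# (keys are the reference numbers of the included studies).
TESTED = {
    13: {"face", "usability"},  # Kim et al.
    14: {"face", "content"},    # Lightdale et al.
    15: {"face"},               # Hill et al.
    16: {"face", "usability"},  # McConnell et al.
    17: {"face", "content"},    # Dumas et al.
    18: {"face"},               # Wen et al.
    19: {"content"},            # Eley et al.
}

# Labels reported by the studies themselves, only where the text states them.
REPORTED = {
    13: {"face"},
    14: {"face"},
    16: {"content"},
    19: {"face"},
}

# Number of studies that tested each validity type.
tally = Counter(kind for kinds in TESTED.values() for kind in kinds)

# For each study with a stated label: what was tested but not reported,
# and what was reported but not tested.
mismatches = {
    ref: {
        "unreported": TESTED[ref] - reported,
        "untested": reported - TESTED[ref],
    }
    for ref, reported in REPORTED.items()
    if reported != TESTED[ref]
}

print(dict(tally))
for ref, diff in sorted(mismatches.items()):
    print(ref, diff)
```

Keeping the two mappings separate makes it easy to see at a glance which labels in a study do not match the questions that were actually asked.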

The validation procedures conducted in each study were as follows: • Kim et al.

13

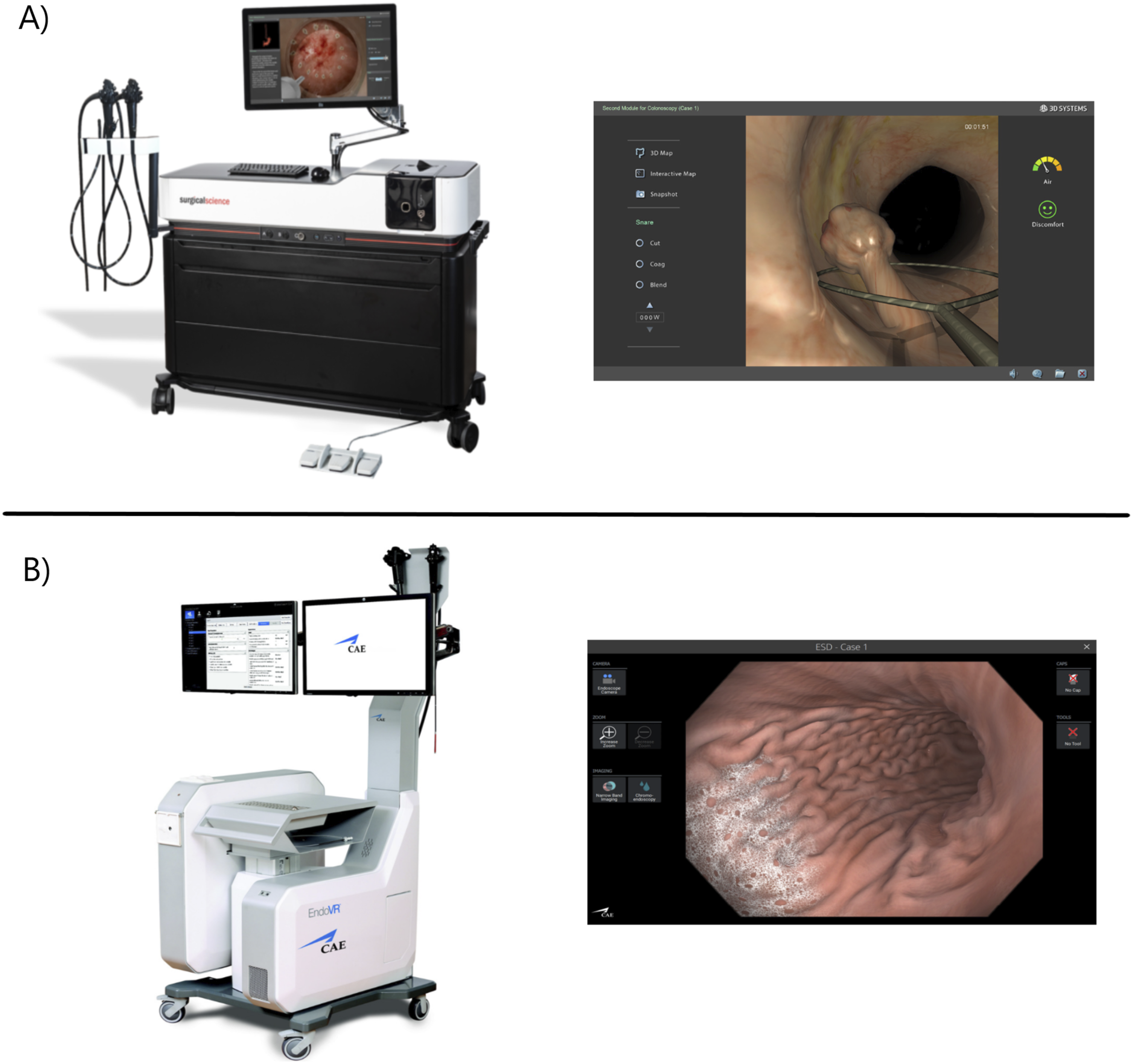

conducted an observational trial where 13 novice and expert gastroenterology attending physicians performed 50 exercises (30 EGDs and 20 colonoscopies) in the GI Mentor II simulator (Figure 2) to assess its realism and usability. After completing the exercises, two questionnaires were provided, one for novices and one for experts. Experts were asked about the visual, anatomical, mechanical, and overall realism of the simulator. On the other hand, novices were asked about control, sensory, and distraction factors, while realism remained secondary. Improvements in proficiency or knowledge obtained from the experience were thoroughly evaluated by novices. However, these are subjective ratings of the simulator by the novices and should not be considered as pertaining to any subjective validity type. Neither realism (face validity) nor the degree to which colonoscopy is correctly addressed by the simulator (content validity) were assessed by those questions. The same can be said of the included questions regarding the didactic value (usefulness) of the simulator for training and certification. Questions under the distraction category, among others, evaluated the usability of the simulator (e.g., interference due to the display quality, losing track of surroundings), despite being reported as face validity questions. Another study by McConnell et al.

16

conducted a trial where 11 novice and expert gastroenterology attending physicians performed 18 colonoscopies and 6 EGDs in the CAE Endoscopy VR (previously known as AccuTouch, Figure 2). Despite using the same questionnaires as the previous study, content validity was reported instead of face and usability. Once again, this seems to be a mislabeling of the validity type underlying the questions in the surveys. As seen in Table 1, for the purposes of this review, the study was labeled as reporting face validity and usability. • Lightdale et al.

14

conducted an educational study about using computer-based endoscopic simulators (CBES) in gastroenterology. First-year pediatric endoscopy trainees selected over a 5-year period (n = 25) were also asked to answer a survey after completing EGD and colonoscopy exercises in the GI Mentor II, comparing the simulation with live patient procedures. The survey reported face validity, including questions regarding the visual and mechanical realism of the simulator. Questions regarding the realism of stages of the colonoscopy procedure, such as intubating and navigation through the sigmoid or to the cecum. However, these are questions about colonoscopy skills, that is, about the extent to which the content of the simulator is representative of the knowledge or skills that have to be learned in the real colonoscopy environment. Therefore, they should be considered questions regarding the content validity of the simulator. • The realism of the GI Mentor II simulator was also assessed, along with the realism of CAE Endo VR (previously AccuTouch) and other two non-computerized simulators, in an observational study by Hill et al.

15

Expert colonoscopists executed several colonoscopy exercises and completed the Colonoscopy Simulator Realism Questionnaire (CSRQ) implemented in the study. The questionnaire assessed several realism aspects, categorized as: physical arrangement of equipment, endoscope, anatomical structure of the colon, visual representation of the colon, haptic response, visual response, difficulty of navigation, looping, and patient discomfort. As the questionnaire was developed to be used with any kind of simulator, only the questions regarding computerized simulators were considered for this review. • Dumas et al.

17

conducted a trial to test a new haptic device included in the non-commercial colonoscopy simulator developed by the Commonwealth Scientific and Industrial Research Organization (CSIRO). A group of gastroenterology experts completed several colonoscopy exercises in the simulator, and a brief post-questionnaire was used to measure their subjective experience. The survey included questions regarding the realism of the force feedback, the graphic realism, and the device handling (face validity). A question to rate how trustworthy the simulator was in quantifying accurate measures of performance was also included (content validity). • Another non-commercial simulator was validated by Wen et al.

18

a study in which an endoscopy simulator following the lines of GI Mentor II or CAE Endo VR was implemented. 15 experienced gastroenterologists tested the simulator and completed a survey regarding the realism of the simulated environment, including visual effects, endoscope mechanics, and haptic experience. The questionnaire was not included in the paper. • Eley et al.

19

conducted an observational study to evaluate the face validity of the Endosim simulator. A total of 12 exercises were developed within the simulator for the purpose of this study, and a survey was provided to a group of colonoscopy experts upon the completion of these exercises. The exercises were evaluated by the experts, with each exercise associated with different skills of colonoscopy (e.g., colon visualization, navigation skill, loop management, knob handling). Experts were asked to rate the degree to which the exercises replicated the colonoscopy skills being tested, and the study presented the results as pertaining to face validity. However, the questions seem to instead inquire about the extent to which the content of the simulator (its exercises) is representative of the knowledge or skills that have to be learned in the real colonoscopy environment. Therefore, we concluded that content validity was instead tested in this study. A) GI Mentor simulator, image courtesy of Symbionix; B) CAE EndoVR (previously Accutouch) simulator, image courtesy of CAE Healthcare. On the left, the colonoscopy simulator; on the right, a sample screen of its simulation.

Discussion

From the reviewed papers, we found that all studies used questionnaires provided to groups of endoscopy physicians with varying levels of experience for the validation process. This was expected, as questionnaires are the simplest and most direct data collection tool to inquire about subjective experiences. Six studies used 5-level Likert scale questions in their questionnaires, while only one used 6-level Likert scale questions.15
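Because the reviewed studies mix 5-level and 6-level Likert scales, comparing ratings across them requires a common range. The sketch below shows one simple option, linear rescaling to [0, 1], alongside the median as an ordinal summary. It is an illustrative assumption rather than a method used in any of the reviewed studies, the ratings are hypothetical, and linear rescaling treats ordinal scores as if they were equally spaced.

```python
from statistics import median


def normalize(score: int, levels: int) -> float:
    """Map a Likert score in 1..levels onto the interval [0, 1]."""
    if not 1 <= score <= levels:
        raise ValueError(f"score {score} outside 1..{levels}")
    return (score - 1) / (levels - 1)


# Hypothetical ratings for one realism item (not data from any reviewed study).
five_level = [4, 5, 3, 4, 4]  # answers on a 5-level scale
six_level = [5, 6, 4, 5, 5]   # answers on a 6-level scale

# Medians summarize each scale without assuming equal spacing.
print(median(five_level), median(six_level))

# Rescaled scores can be compared across the two scales.
print([normalize(s, 5) for s in five_level])
print([round(normalize(s, 6), 2) for s in six_level])
```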

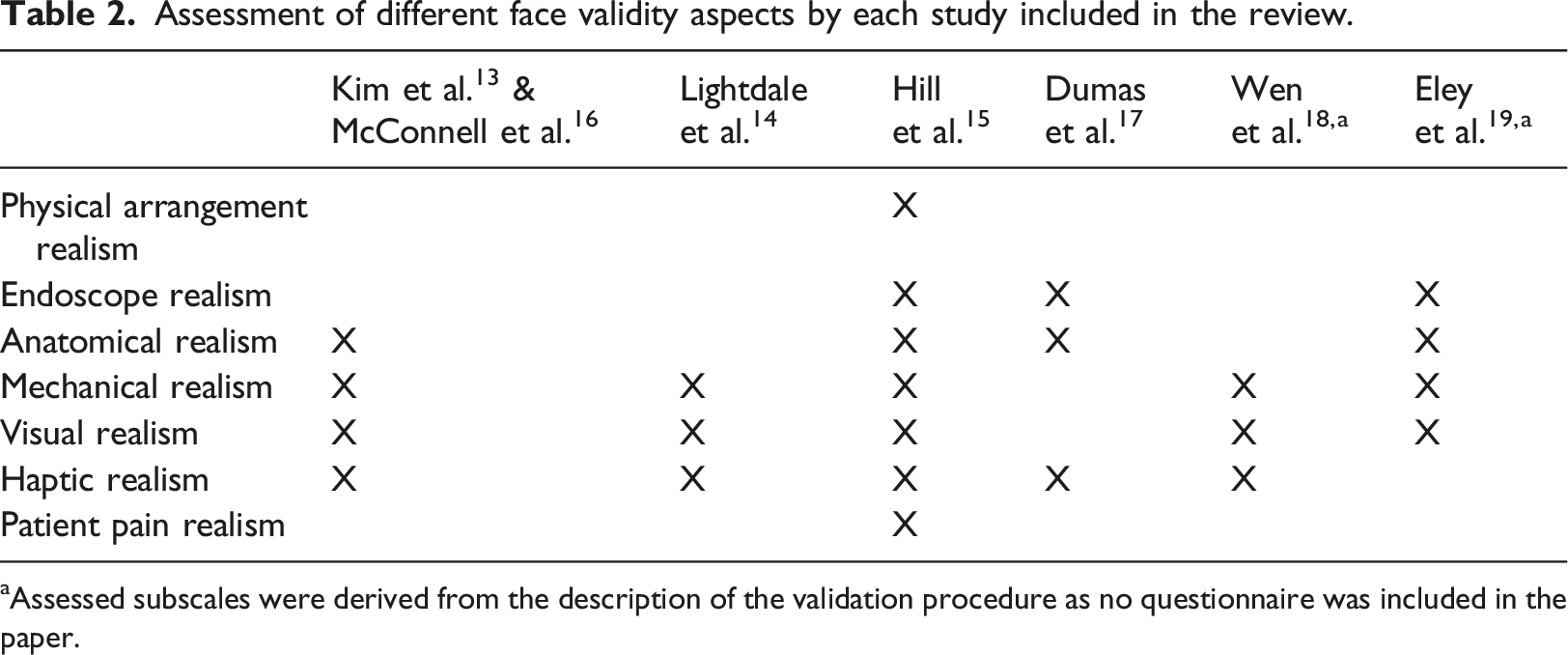

It could be observed that despite using different surveys (only two studies used the same one13,16) with different questions, several major aspects or subscales regarding the subjective validity of the simulators were assessed in almost all the studies. Other aspects were assessed in fewer studies, but the subjects rated them as important for the validation of a simulator.15 All these subscales relate to the face validity of the simulator and can be described as follows:

• Physical arrangement realism: How realistic is the spatial disposition of the elements used in the simulator, such as endoscopes, monitors, or the entry socket for the endoscope (anus).

• Endoscope realism: How realistic is the endoscope device, regarding its weight, appearance, and size/length of the control head and insertion tube. Only applicable to those simulators that include their own endoscope.

• Anatomical realism: How realistic is the anatomical model of the colon, including the realism of intestine segments, folds, haustra, angulations, Houston valves, hepatic/splenic flexures, or pathologies (e.g., polyps, diverticula), among others.

• Mechanical realism: How realistic are the mechanical models of both the endoscope and large intestine, that is, their physics-driven behavior and responses to interactions. The realism of loops during exploration, insufflation and deflation mechanics, or the bending of the endoscope and its dynamics (i.e., translation, rotation, and distal end angulation) are included in this subscale.

• Haptic realism: How realistic is the haptic feedback provided by the elements of the simulator, including the realism of the auditory feedback from patient pain/discomfort, feedback of the endoscope (e.g., feel of the buttons and knobs), or the realism of force and resistance while manipulating the endoscope.

• Visual realism: How realistic are the on-screen visuals of the simulator, such as the simulated mucosa, visual effects and particles, the lighting during the procedures, or the visual feedback for patient pain/discomfort.

• Patient pain realism: How realistic is the amount of pain/discomfort experienced by the simulated patient and how it is simulated.
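The subscales listed above can serve as a checklist when drafting a face validity questionnaire. The following sketch tags each question with the subscale it targets and reports which subscales remain uncovered. The questions are hypothetical examples, and `missing_subscales` is an illustrative helper rather than part of any reviewed instrument.

```python
# The seven face validity subscales identified in this review.
FACE_SUBSCALES = {
    "physical arrangement", "endoscope", "anatomical", "mechanical",
    "haptic", "visual", "patient pain",
}

# Hypothetical draft questionnaire: each item is tagged with its subscale.
draft_items = {
    "How realistic is the appearance of the mucosa?": "visual",
    "How realistic is the resistance felt when advancing the endoscope?": "haptic",
    "How realistic is loop formation?": "mechanical",
    "How realistic are the colon folds and flexures?": "anatomical",
}


def missing_subscales(items: dict[str, str]) -> set[str]:
    """Return the face validity subscales that no item addresses."""
    used = set(items.values())
    unknown = used - FACE_SUBSCALES
    if unknown:
        raise ValueError(f"unknown subscale tags: {sorted(unknown)}")
    return FACE_SUBSCALES - used


print(sorted(missing_subscales(draft_items)))
```

A check of this kind only verifies coverage of the subscales; whether each question is worded so that it actually measures realism still has to be judged by the questionnaire designers.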

Assessment of different face validity aspects by each study included in the review.

a) Assessed subscales were derived from the description of the validation procedure as no questionnaire was included in the paper.

Regarding the subjective validity assessed, another important observation is that face validity was tested in almost all studies (n = 6), except in one that only tested content validity (despite reporting face validity).19 On the other hand, content validity was tested in only three studies, and less thoroughly than face validity. This scarcity of content validation is of significant importance, as it is common to conduct an objective validation of a simulator after a subjective one focused on content validity, or even in the same study.13,16,19 For example, when testing whether novices improve while training colonoscopy on a simulator (construct validity), if the domain of colonoscopy training is not fully addressed by the simulator (null or low content validity), the construct validity measured will be flawed. It cannot be stated that the simulator has construct validity for colonoscopy training if it only includes sigmoidoscopy training, something that could be detected by testing content validity. Therefore, before objectively validating a simulator, it is of major importance to have previously conducted a thorough subjective validation, with special regard to content validity.

Similarly, only two of the studies included in this review assessed the usability of the tested simulators. Most studies only tested the simulators from a medical perspective, disregarding the engineering perspective. On-screen visuals (e.g., mucosa, particle effects) and the anatomical realism of the models are validated, but not the user interface and its usage (e.g., disposition of the menus, interface navigation). One of the major benefits of computerized simulators is the ability to easily change or repeat training scenarios and automatically obtain simulated patient data or performance parameters. A simulator may end up having low usability due to badly presented data, unclear navigation, unclear menus or tools, and even small text fonts. This would make the user experience counter-intuitive, affecting users’ acceptance of the simulator20 and consequently the expected increase in skill proficiency. Medical-device usability has already been recognized as an important factor influencing the quality of medical care, convenience, and user satisfaction, with several standards developed for medical-device design.21 Based on these standards, usability questions should be developed and included in any survey for the subjective validation of computerized medical simulators.

The study also reveals mislabeling of the type of validation reported in the literature. In some studies,13,16 usability questions were reported as face validity questions, or face validity questions as content validity ones,16 or vice versa.14 In another case, content validity was reported as face validity.19 In addition, several questions included in the questionnaires of the studies simply did not fit under any subjective validity category, such as those inquiring about new techniques learned or improvements in proficiency gained by using the simulator. This mislabeling is a serious problem that arises from the ambiguous definitions of validity types found in the literature, especially for content validity.22–25 The use of the “realism” tagline is a clear example. Questions such as “How realistic is the procedure of X?” or “How realistic are the measures provided for the skill X?” in the simulator domain are sometimes labeled as related to face validity because of the tagline. However, such questions address skills necessary for the simulator domain, that is, content validity. Therefore, before conducting any subjective validation study, it is important to decide what is to be tested and then thoroughly evaluate the established questionnaires, ensuring that the questions are not flawed, that is, that they do not address undesired factors due to misinterpretations of the validity types.

Given the narrow focus of the review on computerized colonoscopy simulators and the rigorous exclusion criteria, this paper reviewed a limited number of studies. This may also be attributed to the fact that subjective validation is frequently regarded as a secondary objective of a study, as evidenced during the screening phase. Nevertheless, despite the limited number of studies reviewed, this paper brings attention to and provides examples of the problems associated with mislabeled validity types in the relevant literature. In addition, the review highlights important aspects to consider when subjectively validating colonoscopy simulators, with the CSRQ by Hill et al.15 being an appropriate reference for face validation. Therefore, the paper achieves its objective of reviewing different approaches for the validation of computerized simulators, as a first step toward the design of a standard validation procedure for this type of simulator.

Conclusion

Subjective validation of a computerized colonoscopy simulator is an initial assessment that should be performed before using it for training or assessment purposes. In this paper we have shown that since 2010 very few papers have conducted this type of validation. Screening of the studies included in the review showed that the subjective validation of these simulators has been less prominent, being conducted in most cases as a minor part of an objective validation study. This can be attributed to the existence of subjective validations of the main commercial simulators before 2010 and the lack of new relevant colonoscopy simulators since then. Nevertheless, since we are considering the development of a new simulator, the availability of a standardized validation process and tool would be valuable.

The study shows that misunderstanding of the validity types results in mislabeling of the reported validities. The presence of only a few major commercial simulators in the colonoscopy field, which do not allow third-party contributions, and the absence of open solutions further relegate subjective validation to the background, as there are no new simulator modules or systems to be freely assessed.

Among the validation procedures included in the studies, the Colonoscopy Simulator Realism Questionnaire (CSRQ) was selected as the most suitable questionnaire for the assessment of the face validity of computerized simulators. It should be used as a guide for the development of new subjective validation surveys. In future work, variations of the CSRQ should be considered to reduce the number of questions and cover all the validation aspects in a more compact form. Furthermore, complements to the questionnaire will be developed to assess content validity and usability.

Footnotes

Author contributions

Conceptualization and Investigation, A.L.L., F.A.M.F. and M.C.R.; Writing - Original Draft, A.L.L.; Writing - Review & Editing, F.A.M.F. and M.C.R.; Supervision and Project administration, M.L.N.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This work received financial support from the Consellería de Educación, Ciencia, Universidades e Formación Profesional, co-funded by the EU, and from the Galician Regional Government under project GPC-ED431B 2023/37. Additionally, this work was partially supported by the Xunta de Galicia through the Axudas á Etapa Predoutoral Program, grant ED481A-2023-084, Spain.