Abstract

Objective

This study aimed to explore radiologists’ views on using an artificial intelligence (AI) tool (ScreenTrustCAD with Philips equipment) as a diagnostic decision support tool in mammography screening during a clinical trial at Capio Sankt Göran Hospital, Sweden.

Methods

We conducted semi-structured interviews with seven breast imaging radiologists, evaluated using inductive thematic content analysis.

Results

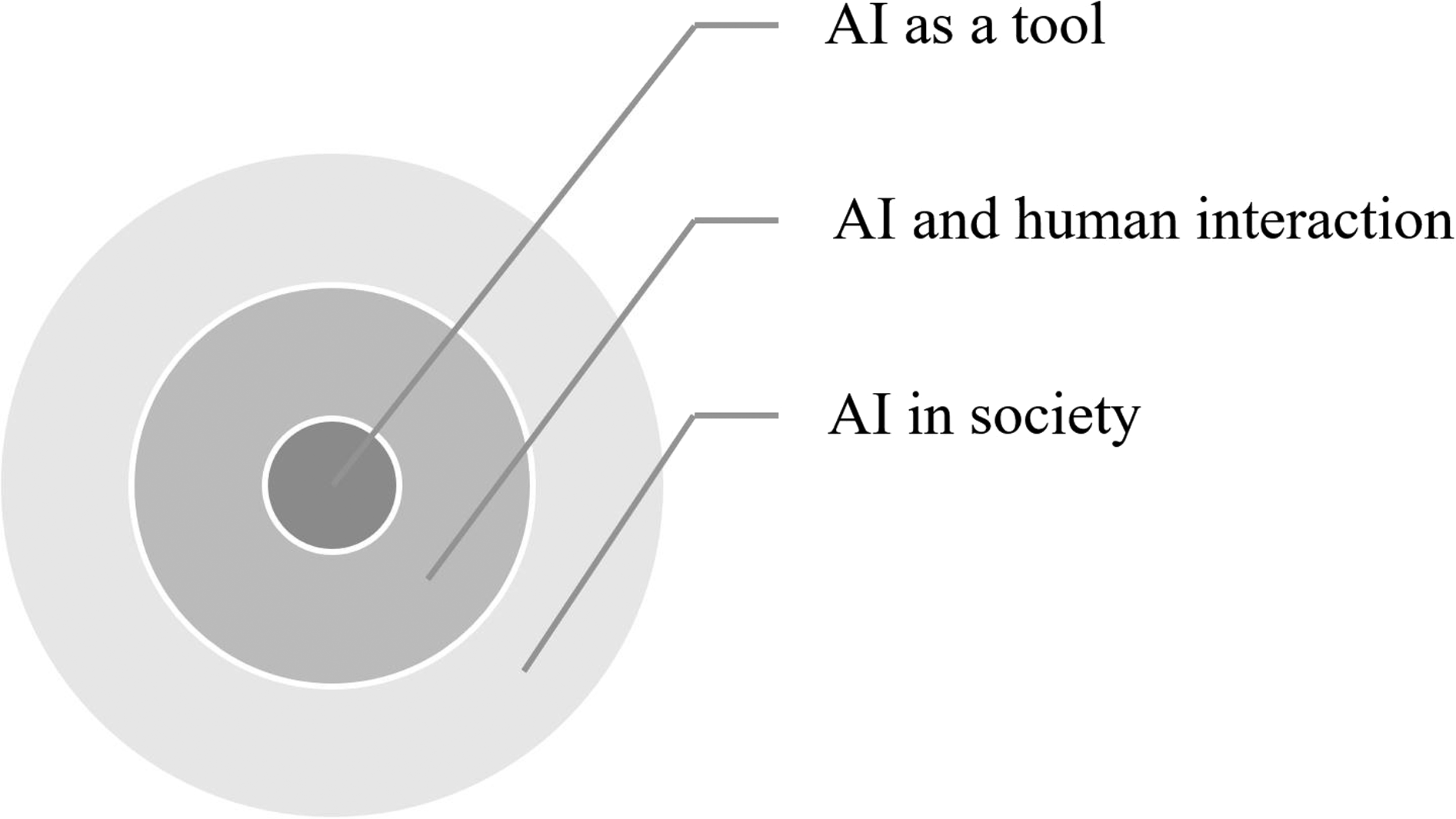

We identified three main thematic categories: AI in society, reflecting views on AI’s contribution to the healthcare system; AI-human interactions, addressing the radiologists’ self-perceptions when using the AI and its potential challenges to their profession; and AI as a tool among others. The radiologists were generally positive towards AI, and they felt comfortable handling its sometimes-ambiguous outputs and erroneous evaluations. While they did not feel that it would undermine their profession, they preferred using it as a complementary reader rather than an independent one.

Conclusion

The results suggested that breast radiology could become a launch pad for AI in healthcare. We recommend that this exploratory work on subjective perceptions be complemented by quantitative assessments to generalize the findings.

Introduction

Many people believe that widespread adoption of Artificial Intelligence (AI) is the next step in the growing digitalization of society. It has even been talked about as a driver of a new industrial revolution. 1 In healthcare, machine learning-based AI comes with promises to improve well-being, increase efficiency, and reduce costs. Its adoption in this sector is growing fast worldwide, as it supports patient prioritization, diagnosis, and risk assessments. AI can also predict diseases, guide treatment and discover new phenotypes.2,3

AI has a large potential to improve population-wide breast cancer screening programs. These programs encounter the dual challenges of allocating extensive radiologist hours to evaluate predominantly healthy women, while at the same time reducing the proportion of cancers going undetected despite consistent screening. In recent years, there has been great progress in terms of improving AI accuracy in radiology, and several AI cancer-detection software systems have successfully been developed within mammography.4–6 Research has demonstrated that the deployment of AI cancer detectors to triage mammograms could potentially reduce the radiologists’ workload by more than half and detect cancers earlier. 7

To optimize the overall performance of AI, several aspects need to be considered, such as patient outcome, cost-effectiveness, and patient experience 8 – preferably on a case-by-case basis. 9 In particular, the effect that AI will have on the relationship between professionals and patients remains highly uncertain, and it will probably vary largely across settings. 10 A good understanding of users’ views is essential in this process. Experienced clinicians are in a good position to assess the impact that AI will have on their work specifically and radiology in general. Therefore, their views are the focus of the current interview study.

There is extensive research documenting AI’s capacity to match or surpass human diagnostic abilities in medical imaging, and relatively many studies have examined the role of AI in healthcare on a more conceptual and hypothetical level.3–6 However, few investigations have delved into the experiences and concerns among the technology’s users, the radiologists.11,12 Especially scarce are studies exploring perspectives among radiologists who have employed AI for diagnostics in a real clinical setting over an extended duration of time. This study aimed to fill this gap by exploring radiologists’ experiences and perceptions of using AI (ScreenTrustCAD with Philips equipment) as a diagnostic tool for breast cancer screening at the Capio Sankt Göran Hospital, Sweden, in a clinical trial (NCT 04778670). 13

Method

Design

We conducted qualitative semi-structured interviews with seven well-trained clinical radiologists with extensive experience of reading mammograms. This way, we could explore their experiences and views of using AI for breast cancer detection in a screening program.

Participants and clinical setting

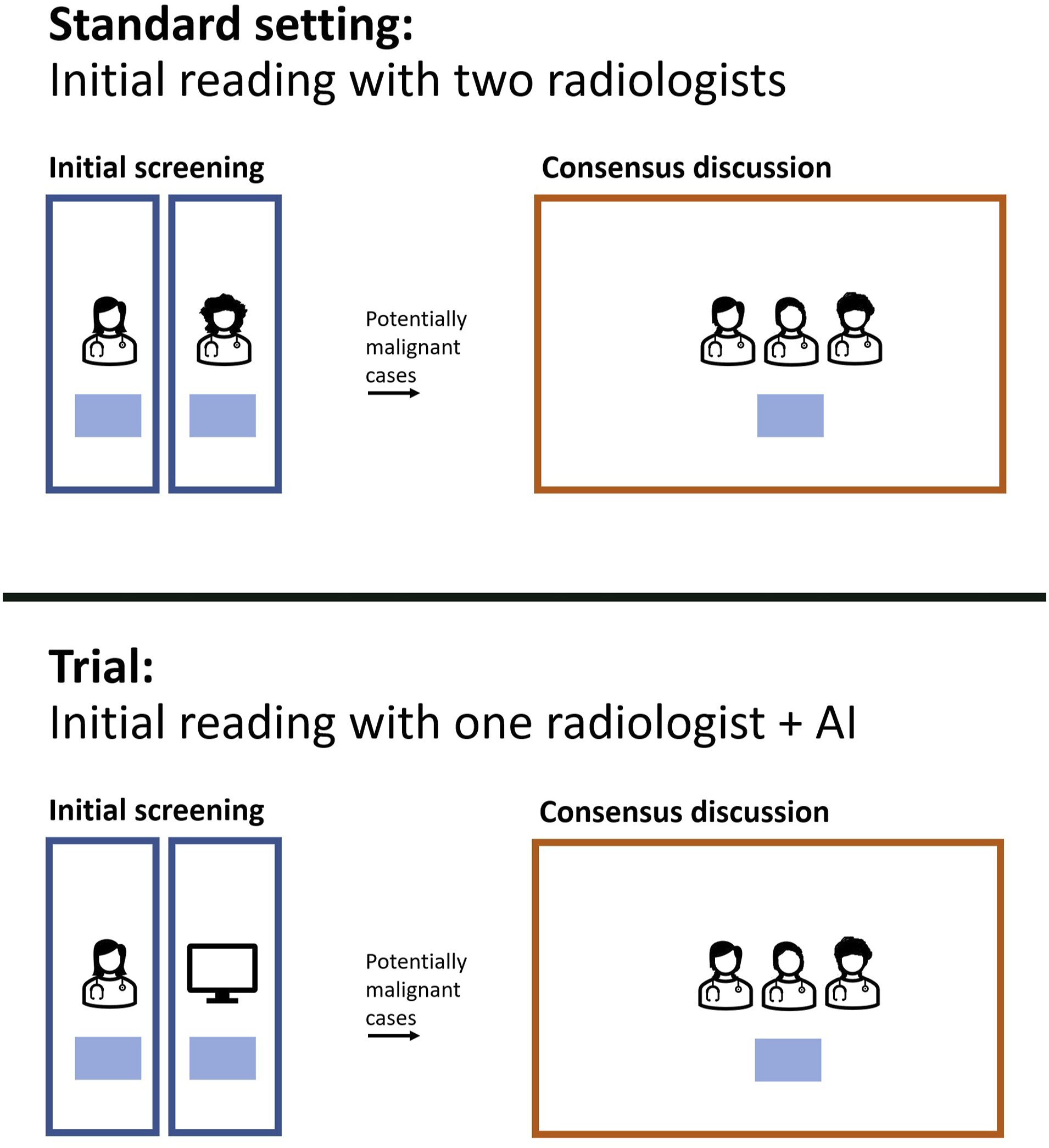

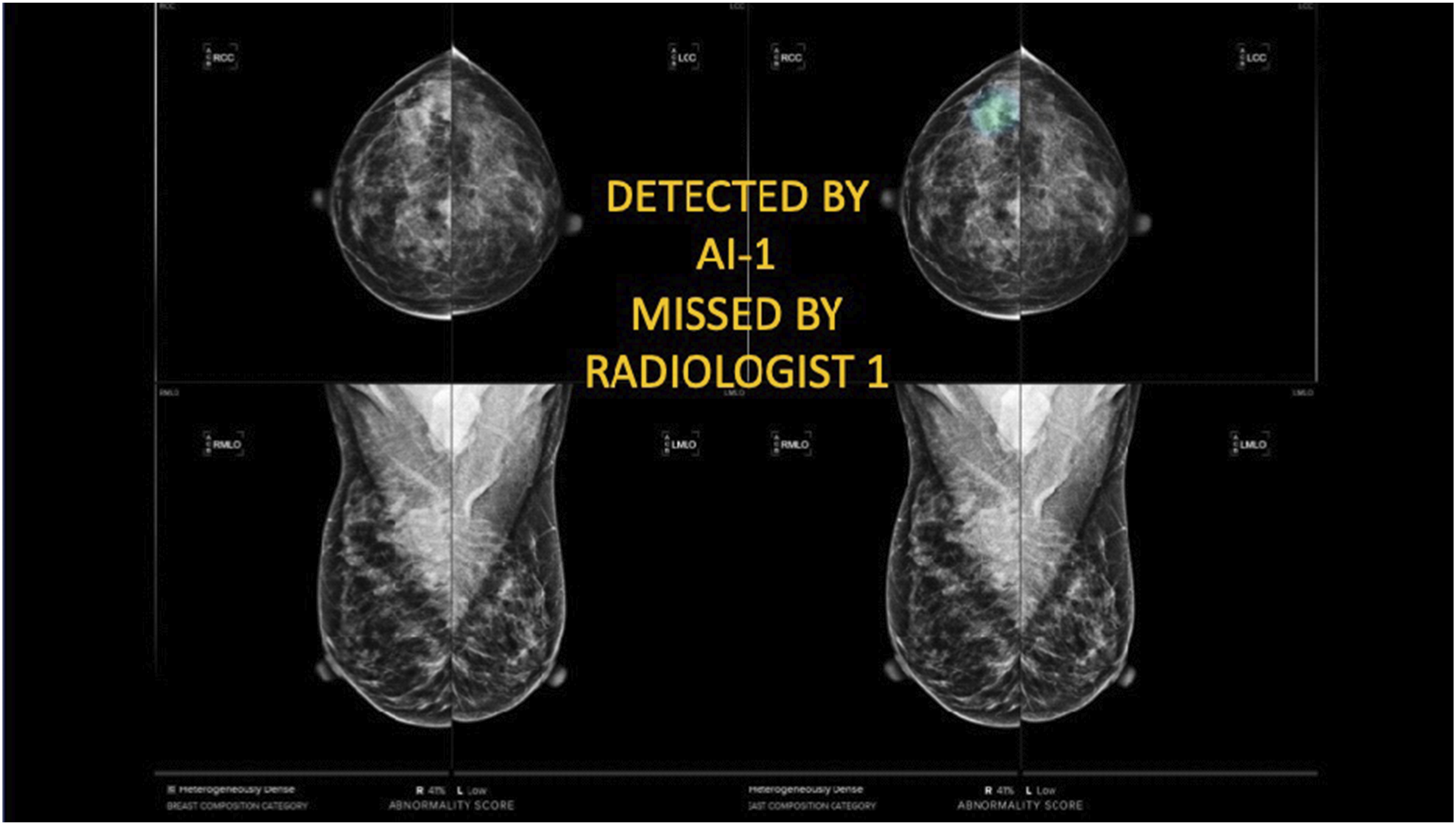

All the participants used an AI system for diagnostics in an ongoing clinical trial, starting in April 2021 at Capio Sankt Göran Hospital in Stockholm, Sweden, and registered on ClinicalTrials.gov. It specifically involved ScreenTrustCAD, NCT 04778670, with Philips equipment.

Conceptual diagram of the screening process in the standard routine (top) and the primary analysis in the trial (bottom), in which the initial screening represents the first round, while the consensus discussion with three or more radiologists is the second round. Note that the trial also considered the performance of the screening process with ‘only AI’ and ‘two radiologists plus AI’ in the first round.

Example of a mammogram in the clinical trial. This case was flagged by the AI but not the radiologist in the first round, although upon closer examination, it did not lead to a recall decision in the consensus discussion in the second round.

Box 1

The foundation for the current article was a prospective clinical trial following a paired screen-positive design, which has been published previously. 13 This trial offered a unique opportunity to assess radiologists’ perceptions of using AI in a real clinical setting, specifically with Philips Microdose SI Universal equipment (Eindhoven, Netherlands). When this interview study was carried out, the trial had been ongoing for over a year, having started on April 1, 2021. The trial involved mammogram data collection from a total of 55,581 women (aged 40-74 years).

Before the trial, the standard procedure at the clinic was that two radiologists independently reviewed mammograms in the first round (Figure 1). The images that they found to be relevant for further discussion were then sent to a second round, in which several experienced radiologists looked at the mammograms and decided whether the woman in question should be encouraged to revisit the hospital for further examinations.

In the trial, the AI was introduced in the first round, while the second round remained the same as before. The AI system acted as an independent, silent reader that performed the same task as a radiologist, that is, to flag or not to flag a mammogram for consensus discussion. The radiologists were unable to adjust the threshold that determined the abnormality score at which an examination was flagged, as it had been predetermined by calibrating on prior images and cancer cases from the same hospital.

For the exams that went to the consensus discussion, the radiologists were able to view the output of the AI in terms of its score and the localization of the lesion and then decide if they would recall the woman for further diagnostics. The same applied to an examination flagged for consensus discussion by a radiologist. The main evaluation study of the trial focused on whether the procedure with one radiologist together with the AI performed as well as (or better than) the standard routine. The trial also assessed single reading by AI alone and triple reading by two radiologists plus AI, compared with standard-of-care double reading by two radiologists.
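The first-round logic described in this box can be illustrated with a minimal sketch. It is not the trial's software: the threshold value, the 0 to 1 score scale, the arm names, and the rule that an examination goes to consensus discussion as soon as any reader in the arm flags it are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Hypothetical value: the calibrated threshold used in the trial is not given here.
AI_THRESHOLD = 0.5


@dataclass
class Exam:
    radiologist_1_flag: bool  # first radiologist's first-round decision
    radiologist_2_flag: bool  # second radiologist's first-round decision
    ai_score: float           # AI abnormality score (0-1 scale is an assumption)


def ai_flags(exam: Exam, threshold: float = AI_THRESHOLD) -> bool:
    """The AI flags an exam when its score reaches the fixed threshold."""
    return exam.ai_score >= threshold


def sent_to_consensus(exam: Exam, arm: str, threshold: float = AI_THRESHOLD) -> bool:
    """Return True if the exam goes to the second round under the given arm.

    Assumption: a flag from any reader in the arm is enough.
    """
    ai = ai_flags(exam, threshold)
    readers = {
        "two_radiologists": [exam.radiologist_1_flag, exam.radiologist_2_flag],
        "one_radiologist_plus_ai": [exam.radiologist_1_flag, ai],
        "ai_only": [ai],
        "two_radiologists_plus_ai": [
            exam.radiologist_1_flag,
            exam.radiologist_2_flag,
            ai,
        ],
    }
    return any(readers[arm])


# An exam that only the AI flags, as in the example mammogram.
exam = Exam(radiologist_1_flag=False, radiologist_2_flag=False, ai_score=0.8)
for arm in ("two_radiologists", "one_radiologist_plus_ai", "ai_only"):
    print(arm, sent_to_consensus(exam, arm))
```

In this sketch, an examination flagged only by the AI reaches the consensus discussion in every arm that includes the AI, but not under standard double reading. The recall decision itself remains with the radiologists in the second round.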

Data collection

The interviews were conducted in May and June 2022 by the first author (JVJ). They were done in Swedish in a quiet room at the hospital, lasting from 15 to 48 min each. They were recorded with consent from the participants. We began by asking the participants about their views on the process of using AI as a diagnostic tool. Thereafter, we asked about their experiences and perceptions of the technology. See the supplementary file for the semi-structured interview guide with open-ended questions.14,15 The questions were reviewed by the research team and pilot-tested with two radiologists before the study. Minor wording adjustments were made based on the feedback from the pilot. The AI system’s name was specified, and questions about procurement and ethical concerns, including potential impacts on patient dignity, were incorporated.

Data analysis

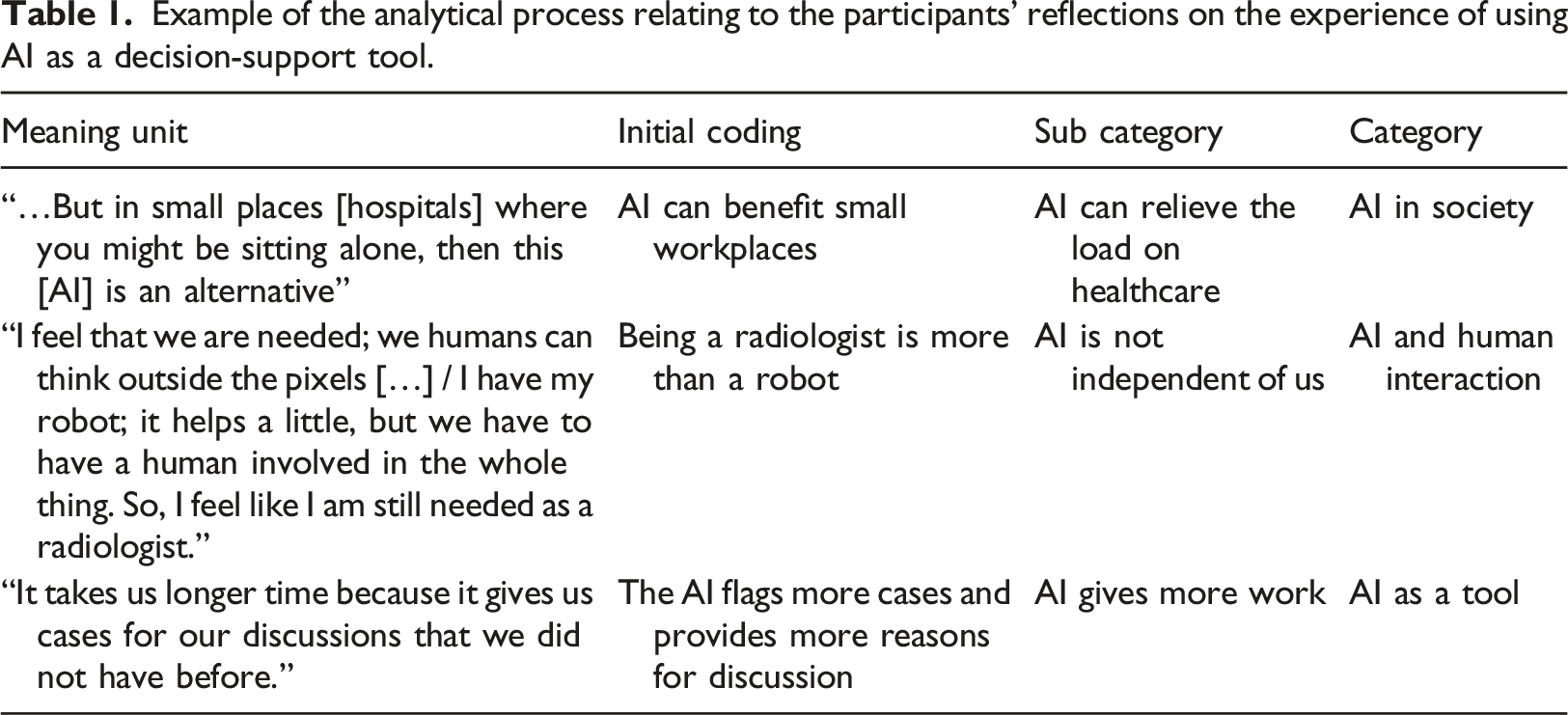

Example of the analytical process relating to the participants’ reflections on the experience of using AI as a decision-support tool.

Results

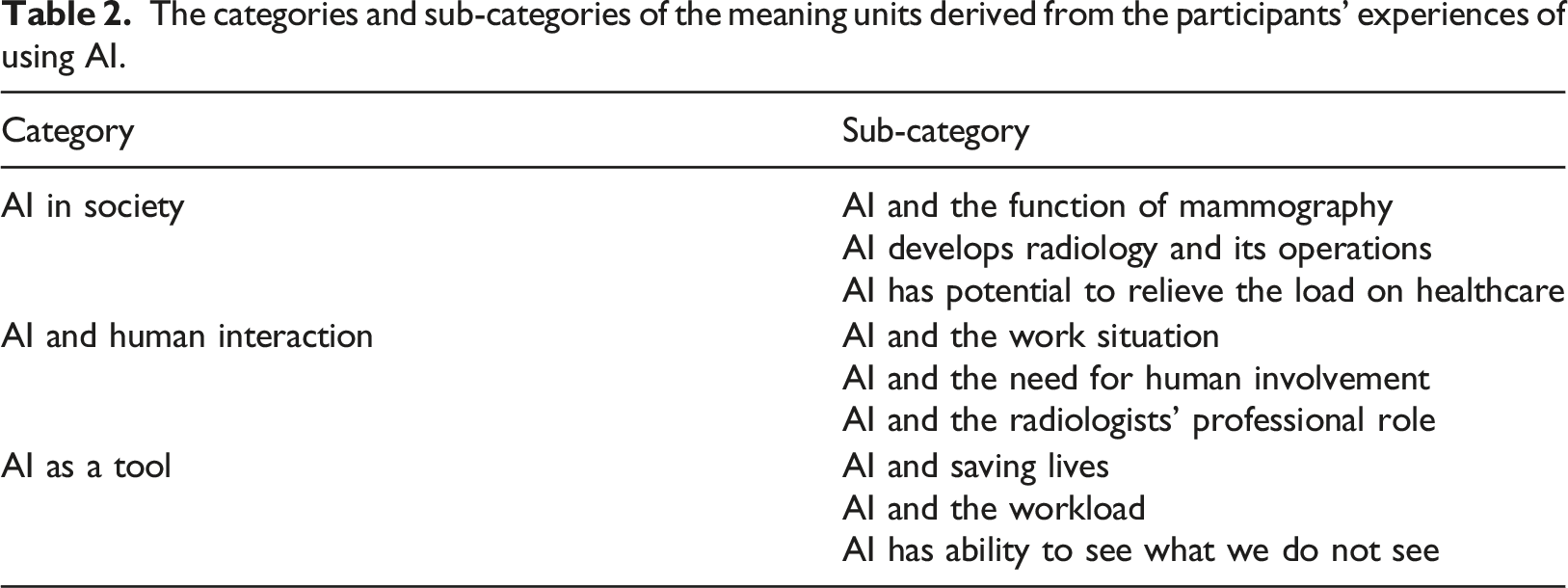

The categories and sub-categories of the meaning units derived from the participants’ experiences of using AI.

AI in society

This category was expressed in three ways in the interviews.

AI and the function of mammography

This subcategory pertained to mammography’s societal role and AI’s integration within it. The participants emphasized that the main challenge in mammography is to strike a balance between reducing unnecessary follow-up calls and pursuing all potential concerns. Thus, weighing sensitivity versus specificity is central, regardless of AI’s involvement. They collectively believed that attempting to handle all cases flagged by the AI would overwhelm their unit. Not expecting perfection, they viewed the technology as an evolving contributor to mammography’s broader mission. Trusting future validation studies, they deliberated on the AI’s reaction threshold for potential lesions:

That is the difficult thing about it all, about not taking back too many and not missing anything, and we should have the same expectations on it [AI] as well. (Participant 6)
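The trade-off between sensitivity and specificity that the participants describe can be made concrete with a small worked example. The scores and outcomes below are invented toy data, not results from the trial, and the function name is hypothetical.

```python
def sensitivity_specificity(scores, has_cancer, threshold):
    """Compute (sensitivity, specificity) when exams with score >= threshold are flagged.

    Assumes the data contain at least one cancer case and one healthy case.
    """
    flagged = [s >= threshold for s in scores]
    tp = sum(f and c for f, c in zip(flagged, has_cancer))
    fn = sum((not f) and c for f, c in zip(flagged, has_cancer))
    fp = sum(f and (not c) for f, c in zip(flagged, has_cancer))
    tn = sum((not f) and (not c) for f, c in zip(flagged, has_cancer))
    return tp / (tp + fn), tn / (tn + fp)


# Invented toy data: eight exams, three of which are cancers.
scores = [0.1, 0.2, 0.45, 0.4, 0.6, 0.7, 0.8, 0.9]
has_cancer = [False, False, False, True, False, False, True, True]

for threshold in (0.5, 0.35):
    sens, spec = sensitivity_specificity(scores, has_cancer, threshold)
    print(f"threshold={threshold}: sensitivity={sens:.2f}, specificity={spec:.2f}")
```

With these toy numbers, lowering the threshold from 0.5 to 0.35 catches the one cancer that was missed, but it also flags one more healthy woman. This is the balance between "not missing anything" and "not taking back too many" that the quote refers to.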

On the topic of respectful implementation of AI, the participants did not think that the technology had harmed the screened women in any way. During the trial, the AI was added as a third independent reviewer alongside two radiologists, resulting in three reviewers. If the trial is successful, only one radiologist and the AI will evaluate the first round. This was expected to increase sensitivity and improve care. They reasoned that the women were respected if the performance of the screening procedure as a whole was similar to, or higher than, that of the standard procedure. The participants had divergent views on whether informing women about AI’s use would enhance the mammography program: some of them believed that detailed information would not improve the process, while others stressed the importance of full transparency.

AI develops radiology and its operations

Another aspect had to do with the implementation of AI in radiology as part of a greater advancement towards more individualized healthcare. The participants expressed that breast cancer screening today has a ‘one-size-fits-all’ approach: it is age-based, starting from 40 and ending at 74, and mammography is used as a general screening tool. They reasoned that radiology in general, and mammography in particular, is ready to develop its practices, adopt novel technologies, and become more individualized.

You can combine such a risk model in many different ways. You can have breast density, you can have risk algorithms, you can have tumor algorithms, you can also have genetic information, and age, of course. (Participant 5)

Box 2

The interviews were recorded and then transcribed verbatim by a professional transcription company. The first author (JVJ) read the transcripts thoroughly and listened to the interviews in their entirety to verify the texts. Subsequently, JVJ analyzed the transcripts to identify meaning units: phrases, sentences, or paragraphs that expressed the radiologists’ experiences. 16 We then coded each meaning unit using the open coding technique. 20 percent of the meaning units were coded by the two authors (JVJ and EE) and then jointly discussed and interpreted. The rest of the interview transcripts were coded by JVJ. We used the software Atlas.ti Web 17 in the analysis process.

In the next stage, we compared the meaning units, finding similarities and differences. Codes that reflected related concepts were grouped into categories and sub-categories by JVJ, which were then discussed and reviewed thoroughly with EE (Table 1). The interviews and the analysis were carried out in Swedish. The quotes presented in this article have been translated into English by the authors and then slightly edited for readability.

Several of the participants also expressed that there is something evidently wasteful about having two well-trained radiologists spend time examining healthy people, and that this is an issue that AI has great potential to alleviate. They also found it exciting to be part of an area where new technology is used and developed. They saw it as valuable to follow the technological development and be part of the AI implementation process to contribute to a more attractive workplace and profession.

AI has potential to relieve the load on healthcare

The participants articulated that AI could be relevant to reduce queues and mitigate the workload in smaller hospitals or healthcare units that do not have access to two radiologists. Also, the technology can be valuable in hospitals where many radiologists are expected to retire soon.

I can think of small places where they are understaffed or only have one doctor, then it is certainly helpful, a tool that can help… (Participant 4)

At the same time, the participants also argued that savings and financial gains should not guide the implementation of AI in healthcare. They expressed a wish to be involved, or at least get their voices heard, in terms of which AI products are procured for their unit. They identified a risk that decision-makers who focus on the financial dimension might want to replace radiologists simply to reduce costs, without due consideration to the quality of the technology.

I have experienced that before, that you sometimes have to fight for good quality; we had to fight for a very long time to get a system as good as this, and the first decision was a cheaper system, which we had heard was not so good. (Participant 3)

AI and human interaction

This category focused on the radiologists’ self-perception while working with the AI, its impact on their work environment, and whether it posed fundamental challenges to their profession.

AI and the work situation

The participants expressed that the AI fitted well into their work routine and that it functioned smoothly. They liked the technology, and it even got a name (‘CADen’). They found that it had potential to streamline initial readings, especially in straightforward cases, and they hoped that it could differentiate simple from complex ones.

The participants found comfort in employing AI as a third reader in the initial screening. They suggested using it as an adjunct to two radiologists in the first reading for enhanced implementation, though economic constraints were recognized. One radiologist considered that after the trial period, if AI is implemented, she would be the only human involved in the initial screening, with the AI as the only second reader, and foresaw heightened responsibility for inaccuracies.

…then it is my sole responsibility, and I will have a harder time accepting it [making mistakes]. It is always difficult to accept if you have missed something because this is such a serious thing ... But the burden becomes heavier if I can only blame myself. (Participant 4)

AI and the need for human involvement

The participants were largely positive about implementing AI in their work. However, they also found that the decision support provided by the AI had to be complemented by humans. Several challenges were identified regarding its ability to work independently of human knowledge and experience. It was perceived that if one radiologist were replaced by an AI, then the remaining one would have to be a very experienced examiner, because otherwise cancers would be missed. One participant questioned AI’s ability to surpass two skilled radiologists, while another believed that it could not outperform a radiologist working under optimal conditions. AI was considered to be a tool among others, not all-knowing. In contrast, another participant valued AI’s detection of imperceptible signals as a complement to human readers.

For some participants, working with AI was stressful and uncertain. Understanding its reactions and trusting its outcomes posed challenges. The radiologists’ emotions varied: one felt lonely when the AI’s decisions diverged from their own, while another emphasized critical thinking, viewing AI as a mere tool, not the truth.

[…] although you don’t remove your own opinion. One wouldn’t rely on it alone but in combination with [one’s own opinion]. (Participant 6)

AI and the radiologists’ professional role

None of the participants expressed that the AI threatened their profession at a fundamental level. They argued that their workload is extensive, and that the AI could be a tool to relieve this, reasoning that being a radiologist in a screening program is ‘so much more than reviewing mammograms’; it is about taking samples, performing other exams, making judgments about the development of the disease, engaging in detective work regarding the next step, and taking care of concerned women. For example, difficult cases need individualized procedures (e.g., dense breasts need ultrasound). Thus, they found that the AI only replaces a part of their work.

I feel that we are needed; we humans who can think outside the pixels, or, yes, whatever it is called, all the coordinates… we can compare old and new [pictures]. (Participant 7)

They saw potential in AI freeing up their time for other tasks due to the high workload. Yet, one radiologist noted the need for human variation, suggesting AI’s exclusion of obvious cases might be a drawback.

...the brain needs a few simple cases to rest on, to be able to keep the concentration up on the more difficult cases. (Participant 4)

Further, they also reasoned that to be a trained radiologist, you need to review many pictures. There is a risk that radiologists will be deprived of routines and experiences if AI were widely deployed. They expressed concerns that they might lose their skills over time, or that newly educated radiologists would never achieve full competence in screening mammograms.

AI as a tool

The last category that we identified reflected the idea that the radiologists experienced AI as a tool among others.

AI and saving lives

The radiologists expressed that AI has potential to see things and patterns that the human eye cannot perceive, and that it could be used to find cancers earlier in the development of the disease, which would save lives. They reasoned that AI could also save resources because of fewer late-stage operations. In addition, they highlighted the possibility to reduce morbidity and not just mortality. They expressed that, from an ethical point of view, it is important to reduce suffering among those affected by cancer:

I think it is just as important to reduce morbidity and reduce the number of pre-operations and fine scars with it [the AI]. (Participant 5)

AI and the workload

The participants expressed a united concern that the AI flagged more cases than the radiologists in the first screening, which increased the number of cases in the second one: the group discussion. This theme was by far the most extensive subcategory in the data. Thus, it was perceived that the technology generated many false-positive responses, and that it increased the overall workload. For example, the AI reacted to dense breasts that the radiologists knew were not cancerous. Hence, the AI led to more work in the second review, but not the first. The participants found that the AI ‘messed it up’ for them because of all the time it took to handle the extra cases. More resources would be used in the recall process, which might not be economically possible.

AI has the ability to see what we do not see

The participants expressed a wish that the AI, to a greater extent, would pick up where humans fall short, that is, that it would capture things that they could not see themselves, because ‘it does not think like a human’. However, they did not find that the AI did this generally, as their experience was that it only outperformed them in a few isolated cases. Instead, they perceived that the AI was more of a safety net, a means to provide confirmation, rather than a method to correct their mistakes.

The radiologists considered the AI to be under development. They wished that the technology would get better over time, that it could account for new data, compare across historical mammograms, as well as compare two images of the same breast. Also, they hoped that it would not only account for two-dimensional images separately, but also put two images together and understand how they complement each other in three dimensions. In the future, they wanted the AI to be able to add up the different perspectives, as the radiologists do today.

I think I’m better than the CAD [AI]. I have the possibility to see all the old pictures, and read notes, and the CAD does not have such information. (Participant 1)

Discussion

In the process of formulating the categories, we found that the radiologists’ perceptions of using the AI were expressed at three levels of abstraction. With inspiration from phenomenography research,18,19 we identified relationships between the three main categories. We conceptualize these as overlapping circles, showing perceptions towards AI usage at the micro, meso, and macro levels 20 (Figure 3).

The study group’s perception of the use of AI as a decision tool.

The micro level, AI as a tool, relates to whether the participants perceived that the AI was a useful technology, which concerns attitudes toward IT innovations in the workplace. As such, this level relates to theories within Information Systems, such as the Technology Acceptance Model (TAM). 21 This model posits that perceived usefulness and perceived ease of use are key factors that influence the acceptance of IT. The current study can be seen as exploratory research that could support future quantitative studies based on TAM with a focus on AI within breast imaging. Such research could consider various potential drivers of radiologists’ acceptance of AI, such as diagnostic utility, interpretability, mental support, and trust.

The meso level, AI and human interaction, concerns perceptions around the relationship between the technology and the human decision-makers in the workplace. This regards novel work routines as well as the radiologists’ future professional roles with AI. Observations at this level are very important, considering the participants’ in-depth understanding of their own work tasks and professional functions. Given that radiology may become an early adopter of AI, their views are relevant contributions to broader discussions around the future of work with increasing automation and computerization, as found in Frey and Osborne 22 and Frey, 23 for instance.

Finally, the macro level, AI in society, accounts more generally for the participants’ perceptions of AI in relation to the healthcare sector and society at large. Findings at this level concern the ongoing debate relating to the effects of AI on what it means to be human in a wider sense, which, for example, concerns human agency, democracy, dependence lock-in, and the emergence of a ‘black box’ society. 24 As highlighted in this literature, limited interpretability is a key ethical challenge for AI across domains, 10 although it was not highlighted by the interviewed radiologists in this study. Nevertheless, we recommend that future research consider this aspect as a potential factor underlying acceptance of AI in healthcare.

The first level is a premise for the other ones, as AI needs to function well as a tool before the radiologists will trust it and adapt their behavior to it at the meso level. When radiologists as well as other healthcare practitioners change their work routines, AI can contribute to reducing the load on healthcare on the aggregate, macro level. One specific AI application that is used in one specific healthcare unit becomes part of a larger transformational change. A societal shift may take place as AI systems are deployed across professional disciplines.

The anticipated role of AI ahead

The participants expressed a positive attitude toward AI’s role in developing the breast cancer screening program, even though some of them had objections about how well the AI functioned as an independent reader. They expressed hope that the AI would improve over time, account for historical pictures, put together two pictures, and interpret them in three dimensions. This type of feedback underlines the imperative for manufacturers to engage with radiologists to improve the technology given its functions in a clinical setting.

Our results further suggest that radiology is a good starting point for AI in healthcare, not only because the technology seemed to function well for image analysis, 13 but also because the radiologists as a group seemed comfortable with the type of output that the AI generated. Results in the form of scores and sometimes ambiguous outcomes seemed to fit well into their professional frame of mind; they were accustomed to managing the trade-off between recalling too few (risk for untreated disease) and too many women (risk for unwarranted distress). They also perceived that they could handle potential erroneous assessments by the technology. This suggests that the adoption of AI within breast imaging radiology will be relatively fast as compared to other medical fields.

AI and the profession

Our findings are consistent with an earlier review which found that many radiologists doubt that AI can make independent diagnoses and do not expect it to fully replace them as readers. Instead of replacing them, radiologists generally expect that AI will function as a ‘co-pilot’ that will reduce errors and conduct repetitive tasks.25,26 Mittelstadt 10 reasoned that new norms might appear with greater reliance on AI systems, which will combine more face-to-face time between clinicians and patients with greater diagnostic abilities of AI. The views of the participants in this paper concur with these assessments.

Our results, supported by previous studies,12,27–29 further indicate that radiologists tend to have a positive attitude toward the use of AI in radiology and that they do not fear that the technology will undermine their profession. The participants generally perceived that AI tools will change the practice and make it more exciting, and most of them would still choose to specialize in radiology if given a choice. They expected to play a role in the development and validation of AI as a tool in medical imaging, and they did not believe that they would be replaced by an AI system. This is consistent with an earlier study where radiologists believed that the technology was part of a positive trend toward more individualized and patient-oriented radiology. 28

The participants reasoned that increased usage of AI was a desirable path for their profession, which they found to be much broader than reading images. They hoped to be able to better meet the screened women’s individual needs if the AI could relieve them of the time required for image analyses. This would allow them to spend more time with the women and more often investigate them with follow-up processes. These findings are in agreement with the prediction by Davenport and Kalakota, 30 who argued that AI in healthcare will probably imply that clinicians will devote more time to tasks that demand uniquely human skills, such as empathy and big-picture reasoning. They are also consistent with previous studies focusing on the women’s perspective, which have found that women are generally positive toward AI in radiology, even though they also express concerns about AI replacing radiologists totally, worrying about the absence of a ‘human touch’ in the diagnostic process.31–33

It is important that this anticipated shift in tasks is communicated to those who educate radiologists to ensure that they are prepared to meet the demands of the profession in the future, with a greater focus on patient interaction. In agreement, Yu et al. 34 emphasized that the medical education system has a key role to play in ensuring that clinicians adapt to their new roles with AI. Their work will have a stronger emphasis on the integration of information, interpretation, and emotional support for the patients. As radiologists increasingly view AI as a collaborative tool, we suggest that educational institutions adapt training in interpreting AI-generated outputs, understanding its limitations, and prioritizing patient interaction. Moreover, professional development will be essential to navigate the evolving landscape of AI-enhanced healthcare.

The participants raised the concern that radiologists might lose their skills in reading images when they do it less often; they wondered whether it will be possible both to relieve their workload and to maintain their reading skills over time. There appears to be a large challenge in insuring against de-skilling, so that radiologists can meet new developments, for example related to new types of lesions.

AI-radiologist interactions

Previous literature has articulated the risk that healthcare personnel place too much faith in the output of AI processes.35,36 Automation bias, or over-confidence in automation, is a potential negative implication of AI in healthcare. 1 However, this issue was not mentioned by the participants in this study. Many of them expressed that they were critical of the AI’s assessments and that they would not accept its judgment without further investigation. A likely explanation was that they were not fully satisfied with the way that the AI worked, which seems to have kept them attentive to potential mistakes. For example, they found it to be a limitation that it could neither consider historical pictures nor differentiate potential cancer from scarred tissue, which made them less inclined to completely trust the AI. Rather, they saw the result from the AI as a radiologist’s evaluation, with a similar, or lower, contribution. Nevertheless, given that this study was focused on subjective perceptions, we recommend that future research examine automation bias quantitatively in this type of context. Such research would address the tension between trust and the risk for automation bias among radiologists and the risk for ‘automation complacency’.

Strengths and limitations

This study explored radiologists’ subjective views towards AI in mammography, providing insights into the challenges involved in the development, implementation, acceptance and procurement of AI ahead. By doing this, our research intended to inform strategies for integrating AI into clinical practice. One major strength of the study was that the interviewed radiologists were actively using AI in their work, which reduced the risk for hypothetical bias, where individuals report something different from what they would actually do, which has been encountered in similar studies. 37

However, our findings only considered a specific radiology unit in a specific hospital in Sweden, and they may only apply to this setting and for this particular type of AI, limiting the transferability of the results. Depending on their function, other AI tools will involve other perceptions. We also recognize that our findings account for the viewpoints of academic radiologists. Despite the beliefs among the participants that the AI would not threaten their profession, it is possible that this medical field will change fundamentally in the future. This may imply higher expectations on radiologists in terms of absorbing new knowledge about treatments and adopting ever more advanced AI imaging tools, for example. We acknowledge that beliefs among radiologists are likely to vary depending on the complexity of the tasks that they normally undertake. Views are likely to differ among radiologists whose work is limited to image analysis tasks in small private clinics versus radiologists in larger public institutions, for instance. Studies of other units and tools would address this gap. Furthermore, we believe that a quantitative research design would be relevant to assess the generalizability of our findings. One suggestion is to conduct a Discrete Choice Experiment (DCE), which could explore factors such as acceptable recall rates, accuracy, and frequency of follow-up appointments. 38

Conclusion

This study explored radiologists’ views on the use of AI as a diagnostic tool in a clinical setting involving screening mammography. The participants’ observations regarded the quality of the technology as a tool, the nature of the AI-radiologist interactions, and the role of AI with regards to the healthcare sector and society at large. The richness of their feedback suggests that clinicians should be included in the development and procurement processes of AI ahead. This would likely promote more efficient and accepted use of AI as a clinical tool. Lastly, the results indicated that radiologists are comfortable with the type of output that is generated by AI, which can be ambiguous and sometimes erroneous. This suggests that mammography could be a springboard for AI in healthcare.

Supplemental Material

Supplemental Material - ‘Humans think outside the pixels’ – Radiologists’ perceptions of using AI for breast cancer detection in mammography screening in a clinical setting

Supplemental Material for ‘Humans think outside the pixels’ – Radiologists’ perceptions of using AI for breast cancer detection in mammography screening in a clinical setting by Jennifer Viberg Johansson and Emma Engström in Health Informatics Journal

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Wallenberg AI, Autonomous Systems and Software Program – Humanities and Society (WASP-HS) funded by the Marianne and Marcus Wallenberg Foundation (Grant agreement no. MMW 2020.0093, Project AICare).

Ethical statement

Supplemental Material

Supplemental material for this article is available online.
