Abstract

Results of radiology imaging studies are not typically comprehensible to patients. With the advances in artificial intelligence (AI) technology in recent years, it is expected that AI technology can aid patients’ understanding of radiology imaging data. The aim of this study is to understand patients’ perceptions and acceptance of using AI technology to interpret their radiology reports. We conducted semi-structured interviews with 13 participants to elicit reflections pertaining to the use of AI technology in radiology report interpretation. A thematic analysis approach was employed to analyze the interview data. Participants have a generally positive attitude toward using AI-based systems to comprehend their radiology reports. AI is perceived to be particularly useful in seeking actionable information, confirming the doctor’s opinions, and preparing for the consultation. However, we also found various concerns related to the use of AI in this context, such as cyber-security, accuracy, and lack of empathy. Our results highlight the necessity of providing AI explanations to promote people’s trust and acceptance of AI. Designers of patient-centered AI systems should employ user-centered design approaches to address patients’ concerns. Such systems should also be designed to promote trust and deliver concerning health results in an empathetic manner to optimize the user experience.

Introduction

Diagnostic imaging tests, such as magnetic resonance imaging (MRI) and computerized tomography (CT), play an essential role in clinical diagnoses. Following the tests, radiology reports describing the image files are written by radiologists to communicate their interpretation of imaging findings to referring physicians. 1 Radiology report findings have traditionally been discussed between the interpreting radiologist and the referring physician and the imaging data are used almost exclusively by physicians. However, growing evidence suggests that patients are increasingly interested in timely and direct access to their radiology data.2–4

In line with a general interest in patient-centered radiology reporting, policies and initiatives, such as the American Recovery and Reinvestment Act (ARRA) 5 and OpenNotes, 6 have been implemented to encourage healthcare organizations to give patients easy and full access to their medical imaging data through patient-facing technologies, such as online patient portals.7–10 It is well acknowledged in the clinical informatics field that increasing patient access to their health data is beneficial for promoting patient education, supporting patient-centered care, and enhancing patient-clinician interactions.2,4,11–13 However, despite those potential benefits, patients’ current use of diagnostic radiology data via patient portals is still limited, in part due to the technical nature of the report content.4,14,15

To address this barrier, research in clinical informatics has applied techniques such as natural language processing (NLP) to provide explanations of medical concepts extracted from radiology reports.16,17 While these efforts are useful to aid patients’ understanding of medical terminology, patients still have difficulty identifying meaningful information in their radiology report, such as whether the report describes a normal or abnormal finding and what actions they need to take (e.g. call their doctor for an urgent appointment).18–20 A recent study found that many patients accessed patient portals not just to review the data but also to obtain useful and actionable information so that they can make informed decisions. 18 These needs, however, have not been fully addressed by the current design of patient portals.19,21

With the advances in artificial intelligence (AI) technology in recent years, it is anticipated that AI technology can empower patient-facing systems to fulfill patients’ information needs in interpreting their clinical data.22–24 In fact, AI-powered systems are increasingly being used in a variety of healthcare domains, such as health education,25,26 self-diagnoses,27–29 and mental health,30,31 to provide immediate and personalized health information to patients. 32 With instructions from these systems, patients can get an overview of their health condition and make informed decisions. Furthermore, the literature suggests that given the rise in the aging population of the United States and its associated healthcare costs, patient-facing AI systems will likely become the first point of contact for primary care.26,27,33 Despite this high potential, little is known about patients’ perceptions and acceptance of this novel technology.34,35 Our study set out to fill this knowledge gap by examining patients’ perceptions of using AI-based health systems in the context of comprehending radiology reports received via the patient portal. This is part of a larger research effort to design and develop AI-powered informatics tools to aid patients in interpreting their diagnostic results (e.g. imaging data, laboratory test results).

In this study, we conducted an interview study using a technology probe approach with 13 participants to examine the following three research questions (RQs):

RQ1: What are patients’ perceived benefits and concerns of using AI-based technology to interpret radiology imaging findings?

RQ2: How to promote patients’ acceptance and trust of AI technology in communicating radiology imaging findings?

RQ3: How to design trustworthy, patient-centered AI systems to support patients in comprehending their clinical data?

Methods

Data collection

To gauge patients’ general perceptions concerning the use of patient-facing, AI-based systems to interpret imaging data and radiology reports, we conducted semi-structured interviews with 13 participants between August and September 2019. The participants had taken part in our previous study, which focused on understanding patients’ challenges and needs in interpreting diagnostic results. All participants had recent experience using patient portals to review their diagnostic results before the study took place. This study was approved and overseen by the first author’s University Institutional Review Board (IRB).

The participants reported their demographics in a short questionnaire, which was deployed online and completed by participants before the interview (see Supplemental material). In addition to general demographic questions (e.g. age, gender, education background, and ethnicity), participants were also asked to rate their health literacy and technology literacy on a scale of 1-5 (“1” denotes low literacy whereas “5” denotes high literacy). Definitions of health literacy 36 and technology literacy 37 were provided to help participants understand the meaning of these two concepts. Almost all participants reported medium to high levels of technology literacy and health literacy, with only one participant (P11) rating her technology literacy as “low to medium”. Characteristics of the participants are summarized in Table 1.

Table 1. Characteristics of study participants.

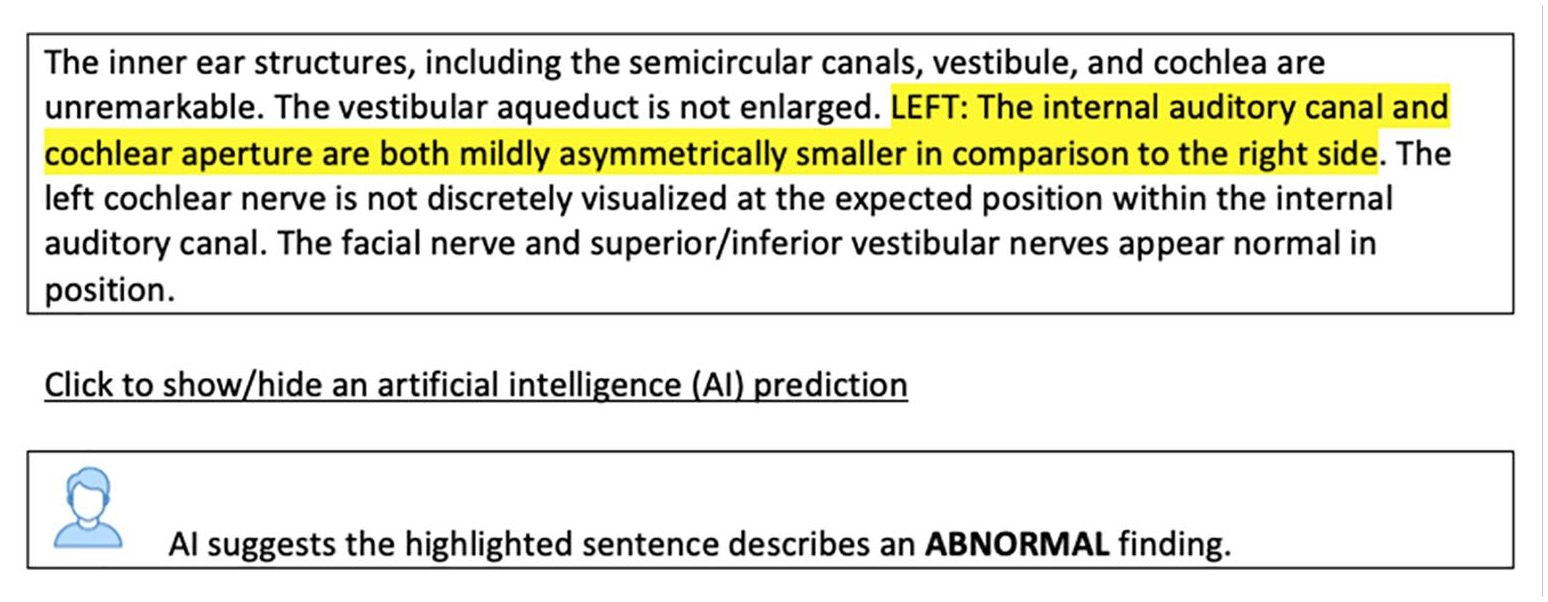

The interview protocol was developed iteratively by the researchers and pilot tested with a small group of people to ensure the clarity and relevance of the questions and to estimate the time needed to complete the interview. The interviews were conducted by two researchers who had completed online research ethics training. At the start of each interview, we asked the participant to read and sign the informed consent form, which explained participant rights, including the ability to withdraw from the study at any time without penalty. The interview began with general questions about participants’ awareness and experience of using AI-based systems to consult health-related information. We assumed that many participants had no prior experience with AI-driven healthcare systems; thus, we used a technology probe approach 38 to demonstrate a system prototype that could predict whether a radiology report describes a normal or abnormal finding (Figure 1). The purpose of using this approach was not to explore usability issues or collect information around system use. Instead, we introduced the system prototype to elicit reflections from participants pertaining to the application of AI technology in radiology report interpretation and to reveal technology needs, concerns, and desires.39–41

The prototype has a web-based interface showing the report content and the algorithmic predictions (Figure 1). To develop the system prototype, we trained a linear support vector machine (SVM) model on a dataset created in a previous study. 42 The dataset consisted of 276 sentences retrieved from de-identified radiology reports that were annotated by subject matter experts (i.e. radiologists), and 727 sentences that were annotated by crowd workers. 42 The annotators marked the text as describing either “normal” or “abnormal” findings (we refer to these annotations as “labels”). The AI model was trained using all the crowd-annotated data (727 sentences and labels). We then used the 276 expert-annotated labels as the “gold standard” to evaluate the performance of the model. The AI model performed well (around 90%) in predicting the normality of the findings described in a radiology report.

Once the system demonstration was completed, the researchers continued the interview by asking participants about their perceived benefits and concerns of using AI-based health systems, the types of medical information they would like to receive from such systems, and the ways to increase their acceptance and trust of AI outputs. All interviews were audio recorded with participants’ permission. All participants received $20 in compensation after the study.
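The training and evaluation setup described above for the prototype (fit a linear SVM on crowd-annotated sentences, then score it against expert-annotated labels) can be illustrated with a minimal sketch. The article does not describe the feature representation or training procedure, so everything here is an assumption made for illustration: the bag-of-words features, the plain subgradient-descent trainer without a bias term, the hyperparameters, the +1/-1 label encoding, and the toy sentences, which are hypothetical and not drawn from the study's dataset.

```python
def tokenize(sentence):
    """Lowercase whitespace tokenizer (a stand-in for real text preprocessing)."""
    return sentence.lower().split()


def score(weights, sentence):
    """Linear decision value w . x for a bag-of-words sentence (no bias term)."""
    return sum(weights.get(token, 0.0) for token in tokenize(sentence))


def train_linear_svm(examples, epochs=20, learning_rate=0.1, reg=0.01):
    """Fit a linear SVM by subgradient descent on the L2-regularized hinge loss.

    examples: list of (sentence, label) pairs; label +1 = abnormal, -1 = normal.
    """
    weights = {}
    for _ in range(epochs):
        for sentence, label in examples:
            margin = label * score(weights, sentence)
            # Gradient of the L2 penalty: shrink every weight slightly.
            for token in weights:
                weights[token] *= 1.0 - learning_rate * reg
            # Hinge-loss subgradient: only examples inside the margin update.
            if margin < 1.0:
                for token in tokenize(sentence):
                    weights[token] = weights.get(token, 0.0) + learning_rate * label
    return weights


def predict(weights, sentence):
    """Return +1 (abnormal) if the decision value is positive, else -1 (normal)."""
    return 1 if score(weights, sentence) > 0 else -1


def accuracy(weights, gold):
    """Fraction of gold-standard sentences whose predicted label matches."""
    correct = sum(predict(weights, s) == y for s, y in gold)
    return correct / len(gold)


# Hypothetical stand-ins for the crowd-annotated training sentences.
crowd_labeled = [
    ("lungs are clear", -1),
    ("heart size is normal", -1),
    ("unremarkable study", -1),
    ("large mass in left lobe", 1),
    ("fracture of the rib", 1),
    ("mass with surrounding edema", 1),
]

# Hypothetical stand-ins for the expert-annotated gold-standard sentences.
expert_gold = [
    ("lungs clear", -1),
    ("heart is normal", -1),
    ("large mass", 1),
    ("nodule present", 1),  # unseen vocabulary: the toy model defaults to normal
]

model = train_linear_svm(crowd_labeled)
gold_accuracy = accuracy(model, expert_gold)
print(f"accuracy on expert labels: {gold_accuracy:.2f}")
```

On this toy data the model misclassifies the one sentence made up entirely of unseen words, so it reports an accuracy of 0.75. Keeping the expert labels out of training, as the study did, is what lets the evaluation measure agreement with radiologists rather than with the crowd annotations the model learned from.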

The prototype interface.

Data analysis

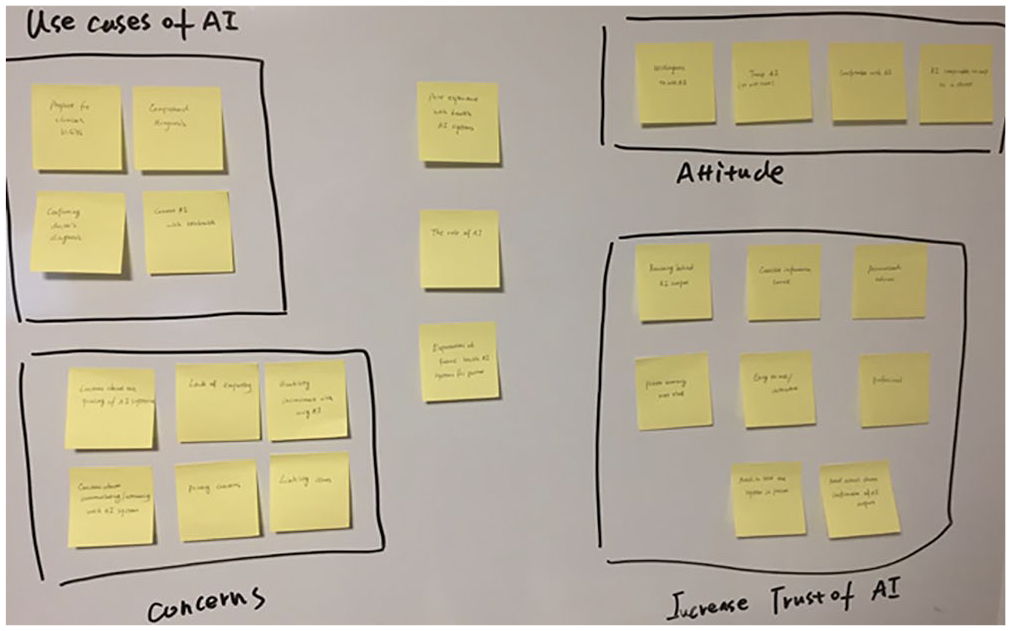

All interviews were transcribed verbatim. The transcripts were managed using NVivo (QSR International, version 12), a software package for organizing and analyzing qualitative data. Two researchers first independently analyzed a small set of interview transcripts (n = 3) using the open coding technique. 43 We chose this coding technique because it could generate rich thematic analyses through line-by-line, iterative-inductive analysis of interview transcripts, revealing detailed observations of participants’ perspectives and opinions while ensuring study validity. 44 An initial list of codes and code definitions was generated and then discussed in a group session until a consensus was reached. This step was followed by creating a codebook that defines each code to standardize the coding process for the rest of the interview transcripts. The researchers then used the codebook to complete the analysis. The agreement of coding results between the two researchers was substantial (i.e. greater than 85%). Disagreements were discussed and resolved during weekly group meetings. New codes that emerged through this process were also discussed by the research team to determine whether they should be kept, merged, or removed. Using affinity diagrams, 45 the final list of codes was grouped under themes describing participants’ perceptions and concerns of health AI systems, as well as the approaches for enhancing the system’s acceptability and trust. The process of grouping codes is illustrated in Figure 2. For example, “concerns about the quality of AI predictions”, “lack empathy”, “privacy concerns”, and a few other codes (bottom left, Figure 2) were deemed relevant to the participants’ concerns about using the AI system. Thus, we grouped them into a larger theme, namely “concerns”. This step was followed by identifying representative quotes to support the claims.
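As an illustration of the coder-agreement check mentioned above, the following minimal sketch computes simple percent agreement between two coders. It also computes Cohen's kappa, a chance-corrected statistic that the article does not report and that is shown here only as a common companion measure. The code labels and the eight coded excerpts are hypothetical.

```python
from collections import Counter


def percent_agreement(codes_a, codes_b):
    """Share of excerpts to which both coders assigned the same code."""
    if len(codes_a) != len(codes_b):
        raise ValueError("both coders must code the same excerpts")
    matches = sum(a == b for a, b in zip(codes_a, codes_b))
    return matches / len(codes_a)


def cohens_kappa(codes_a, codes_b):
    """Agreement corrected for the agreement expected by chance."""
    n = len(codes_a)
    observed = percent_agreement(codes_a, codes_b)
    freq_a, freq_b = Counter(codes_a), Counter(codes_b)
    # Chance agreement: product of each coder's marginal rate, summed over codes.
    expected = sum(freq_a[code] * freq_b[code] for code in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used a single identical code throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical codes assigned by two coders to the same eight excerpts.
coder_a = ["concern", "concern", "benefit", "benefit",
           "trust", "trust", "concern", "benefit"]
coder_b = ["concern", "concern", "benefit", "benefit",
           "trust", "trust", "concern", "trust"]

agreement = percent_agreement(coder_a, coder_b)
kappa = cohens_kappa(coder_a, coder_b)
print(f"percent agreement: {agreement:.3f}, kappa: {kappa:.3f}")
```

In this toy example the coders disagree on one of eight excerpts, giving 87.5% agreement and a kappa of about 0.81. Kappa is lower than raw agreement because some matches would be expected by chance alone.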

Figure 2. Using an affinity diagram to group codes into larger themes.

Results

General perceptions of using AI tools to interpret diagnostic results

There was a general lack of familiarity with, and use of, patient-facing, AI-based systems amongst participants. All participants reported never having used such systems to consult clinical information and were generally unclear about what these systems can do. However, after interacting with our system prototype, a majority of participants considered that AI-driven tools would be useful and suitable for interpreting a radiology report: “I like the idea, I like the concept. I’ve got no problem with using it. It’s gonna happen no matter what. We’re all just in for a future of a lot more AI interaction. [. . .] I am actually looking forward to it.” [P2]

Participants also discussed certain scenarios in which AI-driven systems can facilitate the comprehension of radiology reports. First, a majority of participants perceived AI to be particularly useful when they might struggle to comprehend their physician’s diagnosis. They stated that they could use these systems to seek more personalized and actionable information to better make sense of their radiology report and confirm their physician’s diagnosis. In particular, as people are often confused about the meaning of the imaging findings, they noted that providing a normal vs. abnormal interpretation, as demonstrated by our system prototype, is very useful. They also expressed an interest in obtaining information related to the prognosis and treatment options from AI systems, if at all possible: “If an AI system could access your medical record and tell you this is the most likely thing, that would be great.” [P8] Getting such information could help them determine what actions are needed. However, allowing patients to use AI systems to consult the meaning of diagnostic radiology findings outside of clinical settings may raise practical and ethical issues. For example, there exist legitimate concerns about the accountability of AI systems in decision making. 46 When it comes to high-stakes situations, such as communicating medical findings to patients, policies and guidelines should be in place to regulate the use of such systems and clearly define the accountability of AI outputs. 47

Furthermore, patients may consult more than one doctor and undergo multiple radiology imaging studies regarding a medical problem. A few participants discussed the possibility of using AI systems to evaluate and compare different opinions received from their physicians with respect to their diagnostic imaging studies: “I could get a second opinion because I found my doctors interpret my results differently. Maybe it could look into that.” [P8]

Lastly, AI systems were perceived as a useful tool in allowing an individual to prepare for their clinical visits. The systems could provide credible information that they can use to conduct their own research along with contemplating and constructing questions prior to the consultation. By doing so, they can become more prepared for the conversation with their physician, as one participant explained: “Maybe give a user questions that they can ask the doctor, because that’s the other thing I noticed, is that a lot of people don’t get the results they want, or the medical outcomes, because they don’t know what questions to ask the doctor. The doctor has like two minutes to talk to you. [. . .] The patient doesn’t know what to ask, so doctors would be like, ‘Hmmm, they are not complaining about this.’ But if AI could be like, ‘Hey, here is your results, do you feel this? Or do you have problems breathing? Or so on and so forth, and if you do, please bring this up with your doctor.’ My stepmother works in the ER and she’s an RN [Registered Nurse]. And she’s like, ‘Half the time when people come in, if they were just able to ask the right questions, they would be in and out, they’d start treatment immediately.’” [P5]

Concerns

Despite the generally positive attitude, participants noted that AI-based systems should be a supplementary service (“a helpful adjunct to doctors” [P3]) rather than a replacement for the professional health workforce: “I think it can be like a useful tool for the doctor to make the diagnosis, but you can’t remove the human element from it. You still need a doctor to make the final decision.” [P9] They also expressed several major concerns that made them hesitant about using patient-facing AI systems as part of their healthcare, including accuracy, cyber-security, and lack of empathy.

First, a major concern is related to the quality, trustworthiness, and accuracy of the medical information provided by AI systems: “I would need proof that it works and what you’re actually getting is meaningful information. Like it’s not just some crap. If it’s going to make recommendations to me, I want them to be proven that they’re actually legit.” [P13] Indeed, as healthcare is a high-stakes context, users need assurance that the AI-generated predictions and medical recommendations are accurate and reliable; otherwise, they may perceive the system as a risk. Our participants further explained that they wouldn’t solely rely on AI to make sense of their radiology report; instead, they may use other approaches for reassurance, such as looking up information online (“I may have to Google afterwards just to confirm”), and seeking confirmation from a doctor (“It has to be approved by my doctor, you know, something reassuring that this is not just some crap AI”).

Another major concern expressed by many participants is related to cybersecurity, privacy, and in particular, whether AI systems could maintain confidentiality so that sensitive health information was protected from potential hacking or data leakage, as one participant described: “It’s just those concerns that how safe it is out there. Well, you hear things get hacked. What happens if my health stuff gets hacked and it can be used against me?” [P11].

While a few participants thought that well-designed patient-facing AI systems can be more accurate and logical compared with doctors, the lack of human presence was seen as the main limitation. In particular, because radiology imaging studies are sometimes used to diagnose complicated diseases (e.g. brain tumors, lung cancers), communicating an interpretation of a radiology report (e.g. normal vs. concerning findings) without the presence of a medical professional may cause needless anxiety and negative emotions. Participants therefore expressed concerns about the inability of AI to understand emotional issues. Aligned with previous work, 34 the responses given by AI were seen as depersonalized and inhuman: “For serious topics like cancer or a disease that may be killing you, you don’t want AI telling you ‘you’re going to die’. [. . .] we are not at the point where AI basically understands emotions. So in kind of sensitive things, you kind of just want it to be like, ‘hey, here’s your results, here are the steps you can do while you’re waiting for your doctor.’ [. . .] Give a warning, but do it in a way that doesn’t freak them [people] out. Again, humans want empathy” [P5]. This echoed the argument that health information systems need to consider patients’ emotional needs when they communicate concerning health news. 48

Increasing acceptability and trustworthiness of AI-based systems in communicating radiology report findings

As described above, participants had concerns about using AI systems to make sense of their radiology report. In particular, they tended to have doubts about the quality and trustworthiness of AI outputs, such as the predictions and recommended medical information. When asked about how to address this concern, the majority of participants expressed the need to increase system transparency by explaining how AI arrived at its conclusion. This echoes previous research findings stating that AI explanations are often demanded by lay users to determine whether to trust or distrust the AI’s outputs.49,50 During the interview, we probed for more information with regard to what types of AI explanations participants need for trust-building. We grouped the needed AI explanations into four categories, including logic/reasoning, information sources, system reliability, and personalization. Next, we describe each category in greater detail.

System logic and reasoning: Prior work has demonstrated that providing information related to how a system works, including its policies, logic, and limitations, could be a viable way to enhance users’ understanding and trust in system-generated recommendations.49,51 In a similar vein, in our study, participants expressed the importance of knowing how AI systems generate medical information to help them quickly and accurately discern whether or not to trust those medical-related recommendations: “I’d be more interested in knowing what kind of machine learning was used for the AI and how it arrives at its results [. . .] I’d be interested in seeing the details, the sample size or what algorithms are being used, because it could just be using a sample of 10 people.” [P10]

System reliability: System reliability has a significant impact on user trust and acceptance of technology. 52 Our participants wanted to know, for example, under what circumstances the system has been wrong in the past, so that they could determine whether to trust and act on AI-generated outputs. One participant explained: “It can be useful if offering information about how many errors have occurred in the past. Such as how much of the times it was correct, actual results like it was correct 80% of the time or something like that.” [P10] In addition to the performance history, participants also mentioned it would be useful to know the system’s confidence level/score, which reflects the chances that the AI is correct for a particular prediction.

Information sources: Presenting the information sources underpinning a system has been shown to improve a user’s understanding of system functionality and trust of system outputs. 53 Aligned with the previous work, our participants agreed that being able to see information sources used in AI prediction can help them eliminate any doubt about the qualities of the system’s data and establish appropriate and calibrated trust toward the recommendations: “My level of trust would depend on the source naturally. If it’s from Joe down the street, obviously I wouldn’t be too crazy about it. But if it’s from a trusted source, like a well-respected medical organization or something like that, like John Hopkins or Mayo Clinic, that would probably help build a little bit of trust.” [P4]

Personalization: Providing personalized information is a key feature of intelligent and recommender systems.53,54 Our participants expressed the necessity of understanding whether and how their inputs (e.g. diagnostic results, demographic information, and past medical history) are taken and modeled by AI, and to what extent system outputs are personalized for them. Understanding such information is important to achieve acceptable levels of user trust and engagement: “I think it would be helpful if I could know how much the information given from the AI was applicable to a person that has given the information to AI.” [P9]

Discussion

Prior work has suggested that current patient-facing systems, such as patient portals, do not adequately meet patients’ information needs when they attempt to interpret their radiology imaging data. 21 In recent years, due to the advancement of AI technology, it is expected that it can empower patient-facing systems to provide immediate and personalized health information to patients based on their diagnostic results and medical context. 32 In this study, we are interested in examining patients’ perceptions about using AI-driven systems to make sense of their diagnostic radiology report, the barriers or concerns they may have, and viable ways to address those concerns in order to achieve the best uptake. To this end, we conducted an interview study using the technology probe approach with 13 participants who have used patient portals recently to access and review their diagnostic results. To the best of our knowledge, this is the first study looking into the use of AI-driven systems in comprehending diagnostic results from the perspective of patients.

We found that people had a generally positive attitude toward using AI to comprehend their radiology reports. They perceived AI systems to be particularly useful in seeking personalized and actionable information, evaluating and confirming doctors’ interpretations, and preparing for the clinical visit. For example, participants considered showing the normality indication of a diagnostic report to be very useful in aiding their understanding of the radiology report. Knowing such information helps them determine appropriate actions they can take, such as scheduling an urgent appointment with a clinician. A majority of participants also expressed interest in using AI systems to help them better prepare for the clinical visit so that they can make the best use of the limited interaction time with clinicians. For example, systems could suggest consumer-friendly and credible information they can use to conduct their own research and prepare for the consultation.

However, despite the perceived benefits, they also expressed several concerns mainly pertaining to the trustworthiness, cybersecurity, and lack of empathy of AI-based systems. This finding highlights that AI system designers need to take into consideration patients’ perceptions and opinions to better address their concerns and remove any potential barriers, and in turn, maximize engagement and utilization. 34 While the passage of time is necessary for the health AI systems to be adopted and trusted, 55 our participants expressed the desire to see more explanations from AI to promote acceptance and foster trust. For example, participants generally expressed the desire to know the details about the system’s reliability, including the AI-prediction accuracy. This finding is supported by several other studies that have highlighted the importance of showing system reliability data to users.56–58

They also preferred to know information about how the system operates and how its outputs are generated (e.g. logic/reasoning). As highlighted by prior work, enabling users to understand system functionality is beneficial as this information improves the transparency of systems and helps users build an appropriate mental model of the system.53,59 However, one significant challenge is that due to the scale and complexity of AI algorithms (e.g. neural networks, deep learning), the current methods that enable AI systems to explain their outputs are still immature.60,61 One of the most popular approaches to explaining a prediction made by AI algorithms is listing the features with the highest weights contributing to a model’s prediction. 62 For example, our current model can be improved to explain its reasoning by saying “the phrase ‘asymmetrically smaller’, which AI associates with abnormal diagnosis, is contributing to this prediction”. However, future work needs to examine whether such an explanation satisfies a patient’s need to understand the AI.
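The feature-weight style of explanation described above can be sketched for a linear model as follows. This is a minimal illustration, not the prototype's implementation: the weights, the bias, the tokenization into single words, and the function names are all hypothetical, chosen so that the words from the “asymmetrically smaller” example carry positive (abnormal) weight.

```python
def explain_prediction(weights, bias, tokens, top_k=2):
    """Predict a label and list the tokens that contribute most to it.

    weights: token -> learned weight (positive pushes toward "abnormal").
    Returns (label, decision_value, [(token, contribution), ...]).
    """
    contributions = {}
    for token in tokens:
        if token in weights:
            contributions[token] = contributions.get(token, 0.0) + weights[token]
    decision_value = bias + sum(contributions.values())
    label = "abnormal" if decision_value > 0 else "normal"
    direction = 1 if label == "abnormal" else -1
    # Keep only tokens pushing toward the predicted label, largest first.
    supporting = sorted(
        ((tok, c) for tok, c in contributions.items() if direction * c > 0),
        key=lambda item: abs(item[1]),
        reverse=True,
    )
    return label, decision_value, supporting[:top_k]


def render_explanation(label, supporting):
    """Turn the top contributing tokens into patient-facing sentences."""
    return [
        f"The word '{tok}', which the model associates with {label} findings, "
        "is contributing to this prediction."
        for tok, _ in supporting
    ]


# Hypothetical weights for illustration only; not taken from the study's model.
toy_weights = {
    "asymmetrically": 1.2,
    "smaller": 0.8,
    "kidney": 0.1,
    "normal": -1.5,
    "unremarkable": -1.1,
}
toy_bias = -0.3

sentence_tokens = "the left kidney is asymmetrically smaller".split()
label, value, top_tokens = explain_prediction(toy_weights, toy_bias, sentence_tokens)
for line in render_explanation(label, top_tokens):
    print(line)
```

With these toy weights the sentence is predicted as abnormal, and the two words surfaced are “asymmetrically” and “smaller”. Whether such word-level explanations are meaningful to patients is exactly the open question raised above.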

Participants also considered the information sources that AI health systems leverage to provide medical advice to be an important factor for trust-building. Affording decision makers (e.g. patients) the ability to see the quality and the source of information can improve the transparency of system operations and help people better understand the system’s functionality, thus increasing the level of trustworthiness of AI system-generated outputs. 61 Indeed, if the data on which AI is operating is exceedingly noisy or biased, its outputs may be incorrect or inappropriate. 63 The key to making data-related information available to users is presenting the data in a manner that is readily understood, especially for users with limited technology background. 64 Future research can examine and evaluate different techniques (e.g. textual vs graphical) to explain the underlying data to patients.

Participants also valued information about how their personal health data and input are taken into account by AI systems. The concept of personalization is central to the discussion of system transparency.65,66 That is because people often want to understand how they are modeled by the system, if at all, and to what extent system outputs (e.g. interpretation of radiology report) represent their unique health status and concerns. Therefore, providing information about how AI systems use people’s personal health information to derive predictions and recommend medical information is critical for such systems to achieve acceptable levels of user trust and engagement.

Even though our participants wanted to be informed about the meaning of radiology imaging data, communicating concerning diagnostic results or a serious diagnosis may give rise to needless anxiety and negative emotions. As our participants stated, AI lacks the emotional support that can take place during a face-to-face consultation with a skilled medical professional. Therefore, it is necessary to take actions to mitigate emotional stress when using AI systems to communicate radiology report findings. For example, patients need options to determine whether they want to receive the interpretations, especially abnormal or even more concerning results. AI systems can use this information to tailor health news to patients’ preferences and deliver potentially stressful health information in a more empathic manner. 48

The present study had a number of limitations. First, the demonstration of the system prototype and the opportunity for participants to directly interact with it likely strengthened the validity of the findings. However, the prototype showed some medical cases that were not directly experienced by participants, which may have slightly affected their perceptions. Second, using the technology probe approach and showing a sample system prototype can be helpful in deepening discussion on an unfamiliar technology; however, it may also cause the participants to limit their discussions to the use of that specific system instead of more general AI systems. To better cope with this methodological limitation, we clearly explained to the participants that the system was only used to demonstrate a small piece of what AI technology can do in communicating diagnostic results. The participants were encouraged to think outside the box with regard to under what circumstances and how patient-facing, AI-empowered systems can help them comprehend radiology imaging findings. Third, because AI technology is relatively novel to lay individuals, it is likely that the study results are bounded by the novelty effect of the technology. In our future work, we will look into how the novelty effect of AI-based health systems plays out in patients’ perceptions and acceptance through longitudinal studies. Fourth, due to the sample size, we did not evaluate the correlation between participants’ characteristics (e.g. age, gender, and technology literacy) and their perceptions of AI technology. Our subsequent work includes a large-scale survey study to examine the effects of socio-demographic factors on patients’ acceptance and perceptions of AI systems. Lastly, our results may not generalize to all types of patients because a large majority of our participants self-reported medium to high levels of technology literacy. In our subsequent study, we will include more marginalized populations (e.g. less literate people, older adults) to capture diverse views representative of potential users and examine how to aid their understanding of diagnostic results through AI technology.

Conclusions

In this work, we conducted an interview study to examine patients’ perceptions of using AI technology to make sense of their radiology imaging findings. Our results showed that people have a generally positive attitude toward using patient-facing, AI-powered systems to obtain actionable information, evaluate and compare doctors’ diagnoses, and prepare for the consultation. Despite this, we found various concerns pertaining to the use of AI in this context, such as cybersecurity, accuracy, and lack of empathy. Our results also highlight the necessity of increasing AI system transparency and providing more explanations to promote people’s trust and acceptance of AI outputs. We conclude this paper by discussing implications for designing patient-centered and trustworthy AI-powered, patient-facing applications.

Supplemental Material

Supplemental material, sj-docx-1-jhi-10.1177_14604582211011215 for Patients’ perceptions of using artificial intelligence (AI)-based technology to comprehend radiology imaging data by Zhan Zhang, Daniel Citardi, Dakuo Wang, Yegin Genc, Juan Shan and Xiangmin Fan in Health Informatics Journal

Footnotes

Acknowledgements

We thank the participants of this study. We also thank the reviewers for their feedback.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Ethical approval

This study has been approved by the first author’s University Institutional Review Board (IRB).

Supplemental material

Supplemental material for this article is available online.

References
