Abstract

Autism spectrum disorder is an umbrella term for a group of neurodevelopmental disorders that are associated with impairments to social interaction, communication, and behaviour. Typically, autism spectrum disorder is first detected with a screening tool (e.g. the modified checklist for autism in toddlers). However, the interpretation of autism spectrum disorder behavioural symptoms varies across cultures: the sensitivity of the modified checklist for autism in toddlers is as low as 25 per cent in Sri Lanka. A culturally sensitive screening tool called the pictorial autism assessment schedule has overcome this problem. Low- and middle-income countries have a shortage of mental health specialists, which is a key barrier for obtaining an early autism spectrum disorder diagnosis. Early identification of autism spectrum disorder enables intervention before atypical patterns of behaviour and brain function become established. This article proposes a culturally sensitive autism spectrum disorder screening mobile application. The proposed application embeds an intelligent machine learning model and uses a clinically validated symptom checklist to monitor and detect autism spectrum disorder in low- and middle-income countries for the first time. Machine learning models were trained on clinical pictorial autism assessment schedule data and their predictive performance was evaluated, which demonstrated that the random forest was the optimal classifier (area under the receiver operating characteristic of 0.98) for embedding into the mobile screening tool. In addition, feature selection demonstrated that many pictorial autism assessment schedule questions are redundant and can be removed to optimise the screening process.

Introduction

Autism spectrum disorder (ASD) is a developmental disorder that affects social interaction, communication, and behaviour. ASD can be diagnosed at any age, but symptoms manifest within 24 months of birth, and ASD affects up to 3.8 children per 1000 in the United Kingdom. 1 However, less evidence is available for ASD prevalence estimates in low- and middle-income countries (LMIC). 2 ASD detection is poor in LMIC compared with developed countries due to research and funding limitations in LMIC. To date, LMIC have only completed limited research to determine how many of their citizens are autistic, and health officials who say ASD is non-existent in their regions likely do not know how to identify it. 3 This is a deeply concerning issue and there is an urgent need for more support and services for these individuals living in LMIC.

Early identification of ASD in children enables intensive intervention before neuronal pruning is completed. 4 It has been reported that not addressing ASD at a young age has a major influence on development into adulthood and results in a high economic cost, exceeding the lifetime costs of asthma, intellectual disability, and diabetes. 5 It should be noted that many LMIC provide medical and care home facilities for free or with reduced costs for their citizens. However, it can still cost a substantial amount of money and governments must allocate this in their annual budget. In addition, people with mental health disorders cannot be included in the national workforce, which will negatively impact the economy of LMIC.

Typically, ASD is first identified in children using a screening tool that implements a symptom checklist such as the modified checklist for autism in toddlers, revised with follow-up (M-CHAT-R/F). 6 Screening tools are preferred over clinical observations at this early stage as atypical behaviour can be absent or amplified in busy outpatient clinics (e.g. due to anxiety in an unfamiliar environment), impairing ASD detection. M-CHAT-R/F is a pair of 20-item symptom checklists and is valid for children between 16 and 30 months old. M-CHAT and its derivatives are a popular choice for screening ASD in young children as they are quick to administer (taking 2–3 min to complete). As ASD is an inherently biological phenomenon, the symptom checklists are consistent across different ethnic environments. However, the description and interpretation of ASD behavioural symptoms vary across different cultures. Therefore, culture can affect the ability of screening tools to detect ASD. The sensitivity of M-CHAT is as low as 25 per cent 7 in Sri Lanka. To overcome this, a culturally sensitive screening tool that incorporates written and pictorial content, called the pictorial autism assessment schedule (PAAS), has been developed. 8 PAAS has been found to be an effective screening tool for ASD in Sri Lanka, with a sensitivity of up to 88 per cent when discriminating between ASD and neurotypical paediatric subjects.

Both screening tools and ASD interventions are usually administered by mental health specialists in collaboration with the child’s parents. LMIC have a shortage of mental health specialists, which is a key barrier for obtaining an ASD diagnosis and accessing services that improve the prognosis of autistic children. Long waiting times (often multiple years) are, therefore, common in LMIC and many children do not receive any diagnosis or treatment at all, with a treatment gap of up to 100 per cent in some areas. 5 Increasing the number of mental health specialists is unrealistic for many LMIC due to insufficient resources. An alternative approach is to use non-specialist healthcare workers to administer the screening tool at home or in healthcare clinics. The benefit of this approach is twofold: first, minimal additional resources are required. Second, there is a low awareness about ASD in LMIC. Parents will not be aware of developmental delays and their potential link to ASD and will instead consider the symptoms of developmental delay to be normal behavioural deviations (i.e. they may consider their child to be badly behaved rather than developmentally delayed). ASD screening can be integrated into standard paediatric checkups performed by non-specialist healthcare workers (e.g. vaccination procedures) to improve diagnosis rates and to increase parental awareness of developmental delays. To assist non-specialist healthcare workers, it is essential to develop computer-aided tools to conduct ASD screening and provide early intervention activities for autistic children.

To overcome the challenges described above, a mobile application that predicts ASD from a clinically validated culturally sensitive symptom checklist is proposed. Multiple machine learning algorithms are thoroughly evaluated on clinical PAAS data, and the best performing algorithm is embedded into the application. The proposed application can be administered by non-specialist healthcare workers in LMIC at home, to advise if a clinical referral is recommended. As more data are collected, the application can be refined and improved with software updates. An analysis of the PAAS checklist with feature selection algorithms can reveal which questions are superfluous and can be used to refine the checklist in the future. In this article, an innovative mobile application for ASD screening in LMIC is developed. There are limited studies that have embedded an intelligent machine learning algorithm into a mobile screening application. An ASD screening application that incorporates intelligent decision making in combination with a culturally sensitive and clinically validated screening tool is novel, and this combined approach will reduce the burden of the shortage of mental health services in LMIC. In addition, this will also enable the early detection of ASD in LMIC, improving clinical outcomes. Furthermore, valuable data can be collected about the prevalence of ASD in LMIC, which is severely lacking.

This article is structured as follows: the sections on ‘Machine learning and intelligent methods for autism detection’ and ‘Autism screening mobile applications’ critically review the current machine learning algorithms applied to ASD prediction and mobile ASD screening applications. The ‘Methods’ section describes the data collection and analytics pipeline. The section ‘Model evaluation’ reviews the predictive performance of each model, discusses choosing a final model for embedding into the mobile screening application, and investigates the importance of PAAS questions for ASD detection. In the final section, conclusions, future work, and limitations are presented and considered.

Literature review

Machine learning and intelligent methods for autism detection

Machine learning algorithms have been broadly applied to detect ASD and to investigate its uncertain aetiology. 9 For ASD detection, a variety of data types have been used as input to supervised learning algorithms, including screening tools such as the autism diagnosis interview revised (ADI-R) 10 and the autism diagnostic observation schedule–Generic (ADOS-G). 11 Popular supervised learning models included support vector machines (SVMs) 12 and decision tree (DT) variants. 13,14 Random forests, 15 neural networks, 16 least absolute shrinkage and selection operator (LASSO) regression, 17 and ridge regression 17 were also popular. Other input data types, such as functional magnetic resonance imaging (MRI), 18 eye tracking, 19 and genetic data, 20 have also been used for the detection of ASD.

A method of combining questionnaire and home video screening data has been shown to be a reliable method of detecting early autism at home. 21 The output of two independent machine learning classifiers was combined into a single screening outcome, with clinical results showing a sensitivity of up to 70 per cent and a specificity of up to 67 per cent. This approach included novel feature selection, feature engineering, and feature encoding methods to achieve this result.

A new machine learning method called rules-machine learning has been developed to detect ASD from questionnaire data. 22,23 Many of the machine learning approaches discussed above, such as artificial neural networks, are known as black box models. The decision that a black box model makes cannot be interpreted 24 from the structure of the model (e.g. neuron weights). The rules-machine learning approach induces rules to provide a knowledge base that domain experts can interpret to provide an understanding of why a decision has been reached.

Feature selection has often been applied to autism screening questionnaires to identify minimal feature subsets to speed up the diagnosis process. 9 Notably, a novel computational intelligence algorithm called variable analysis has been used to detect a small set of core relevant features, while maintaining predictive performance. 23,25

An interesting approach that detects ASD from the presence of co-occurring conditions has been proposed. 26 For example, co-occurring conditions could include obesity, developmental delay, and speech problems. The approach demonstrated an accuracy of up to 86 per cent for two classes (ASD present or absent). The approach could not reliably predict the severity of ASD from the co-occurring conditions.

An adaptive Bayesian classifier (ABC) has been used to predict ASD. 27 An advantage of the ABC system is that the classifier will reclassify data if a confidence threshold is not met. This would be particularly useful for clinical applications. Combining the output of multiple weak predictors to form a single strong predictor (ensembling) is a common technique in machine learning. 28 A proposed system called ensemble classification for autism screening (ECAS) 29 implements an ensemble approach to predict ASD in children from data collected via the ASDTests Android application 23,25 (described further in the next subsection). The ensemble system performed better in benchmarks compared with other common machine learning algorithms. However, ensemble systems are generally considered to be black boxes, and it is difficult to understand why certain features contribute to the overall decision. A logistic regression analysis of the same data has been undertaken 30 to screen for ASD and identify influential features for ASD detection. The system was capable of accurately predicting ASD in adults and children, while identifying key features of interest.

More advanced computational intelligence approaches, such as fuzzy set theory, have been applied for ASD detection in adults. A hybrid system based on fuzzy rules has been proposed to predict ASD with a high degree of accuracy. 31 Fuzzy set theory can model the uncertainty present in real-world data in a transparent way, which is particularly useful for biomedical and clinical applications. 32

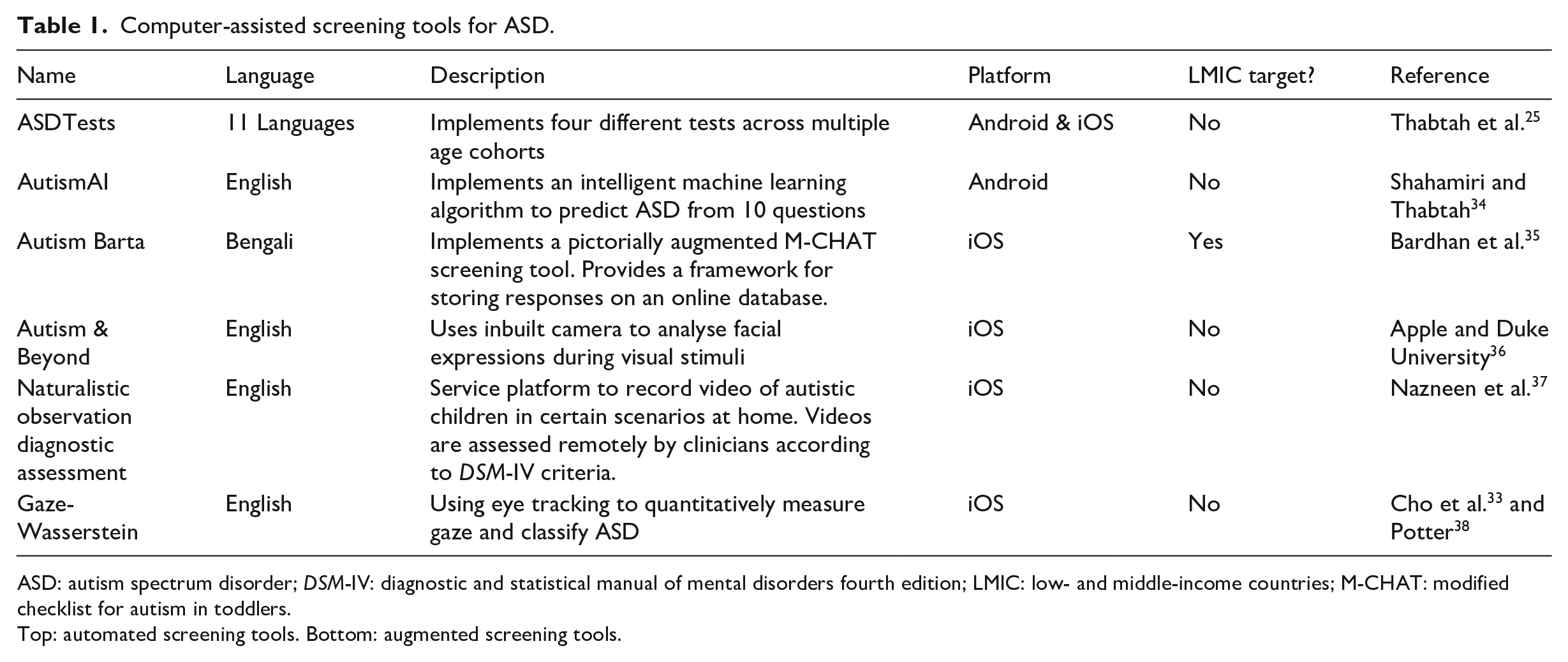

Autism screening mobile applications

A variety of computer-aided screening tools for ASD detection have been developed. The computer-aided tools fall into two broad categories: automating current paper-based screening tools (e.g. M-CHAT) or augmenting screening tools (e.g. using gaze tracking to collect a quantitative measure for classification 33 ). A significant number of these computer-aided tools have been implemented as mobile applications (see Table 1), as smart devices provide an ideal platform for mental health disorder screening. 39 Smart devices have a large number of sensors and are widely available (including in LMIC): 36 per cent of the world’s population owned a smartphone in 2018. 40 The automation tools implement a variety of standardised symptom checklists (e.g. M-CHAT) and sometimes incorporate other data modalities to improve comprehension (e.g. pictorial data in addition to textual data). The augmentation tools typically record quantitative data (e.g. from eye gaze) and perform classification using supervised learning algorithms to identify ASD in subjects.

Table 1. Computer-assisted screening tools for ASD. Top: automated screening tools. Bottom: augmented screening tools. ASD: autism spectrum disorder.

ASDTests 23,25 is an Android application that contains four different screening tests for toddlers, children, adolescents, and adults. It is proposed that the application can be used by health professionals to signpost individuals towards a formal autism diagnosis. The application also provides the opportunity to collect valuable data from the four different age groups to improve the efficiency and accuracy of the screening process. However, the application does not incorporate an intelligent machine learning decision model to make the recommendation, and the question sets have not been developed in collaboration with clinicians from LMIC as PAAS has. AutismAI 34 is an Android application that incorporates intelligent machine learning decisions from 10 questions according to an age cohort. However, these 10 questions have not been validated in a clinical setting in LMIC as PAAS has.

Methods

Data collection

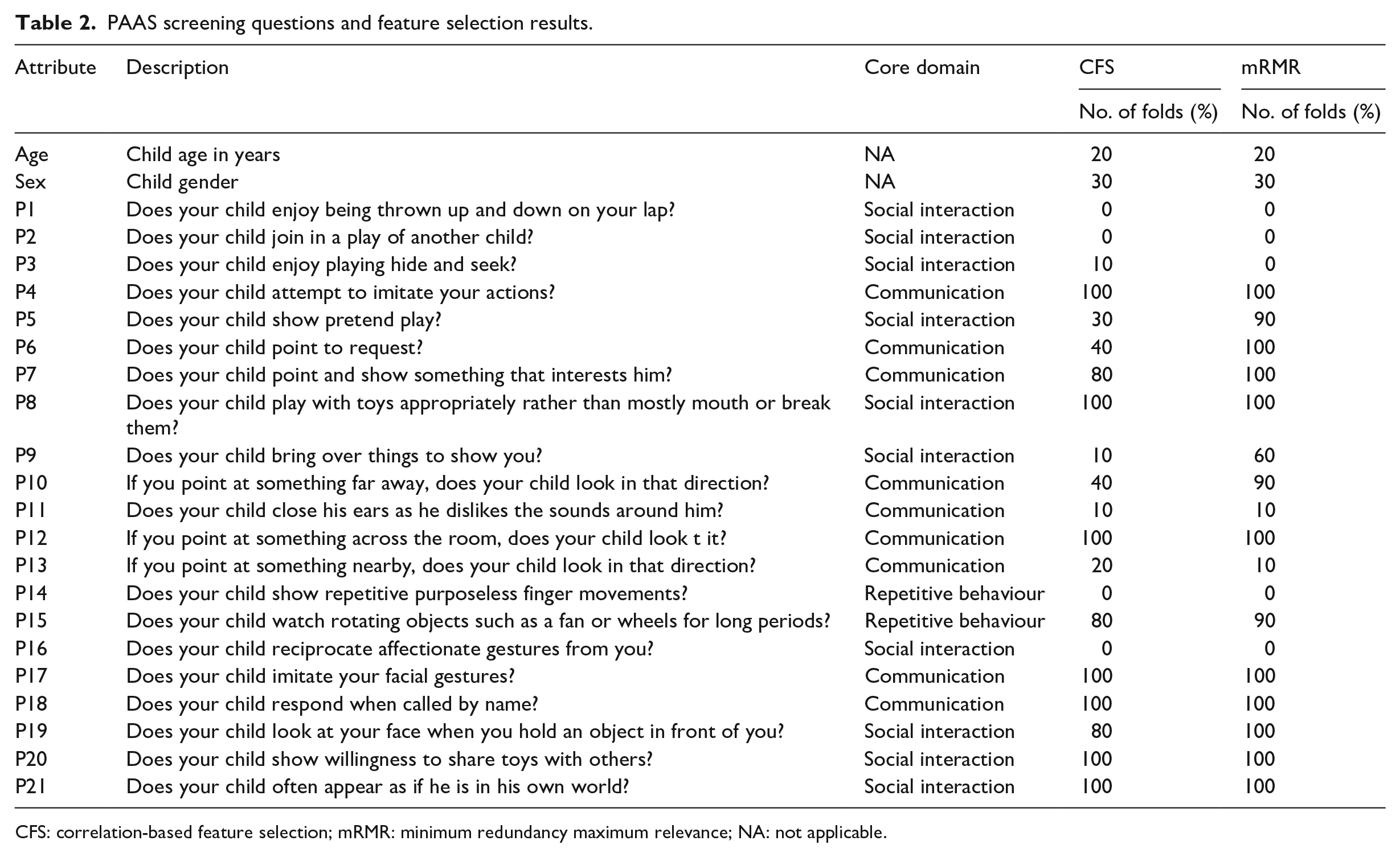

The PAAS checklist was developed from a mixture of different sources, including

Table 2. PAAS screening questions and feature selection results. CFS: correlation-based feature selection; mRMR: minimum redundancy maximum relevance; NA: not applicable.

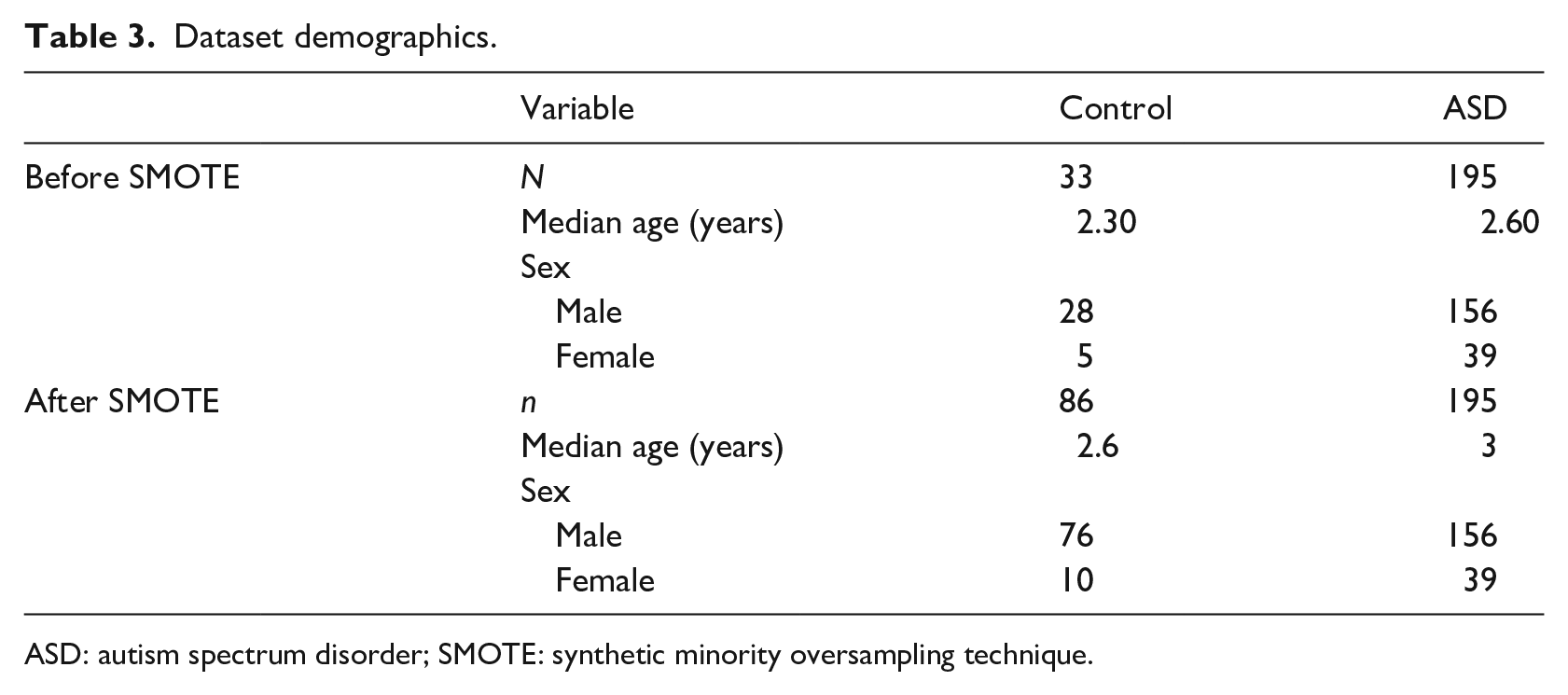

PAAS responses from 228 children (33 control subjects and 195 ASD subjects; see top section of Table 3) were collected and used to train and evaluate a variety of different supervised learning models. Up to 5 per cent of PAAS responses were missing for each subject, but no imputation was performed to replace the missing values. The rationale for this was that the lack of a response was caused by uncertainty (i.e. the parent is unsure if their child performs a certain behaviour), and therefore, missing answers contained useful information. Each child had their diagnosis (i.e. ASD or neurotypical) confirmed by clinical observation independent of the decision provided by PAAS.

Table 3. Dataset demographics. ASD: autism spectrum disorder; SMOTE: synthetic minority oversampling technique.
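One way to keep unanswered questions as information, rather than imputing them, is to treat "missing" as a third response category. The sketch below illustrates that idea only; the article does not state how responses were coded, so the category labels, the function names `encode_response` and `encode_record`, the feature keys, and the age unit are all hypothetical.

```python
from typing import Optional

# Hypothetical category labels; the coding actually used is not stated.
YES, NO, MISSING = "yes", "no", "missing"


def encode_response(answer: Optional[str]) -> str:
    """Map a raw questionnaire answer to yes, no, or missing."""
    if answer is None or answer.strip() == "":
        # An unanswered question is kept as its own category, not imputed.
        return MISSING
    normalised = answer.strip().lower()
    if normalised in ("yes", "y"):
        return YES
    if normalised in ("no", "n"):
        return NO
    raise ValueError(f"Unexpected answer: {answer!r}")


def encode_record(answers, age_months, gender):
    """Build one feature dictionary from the checklist answers and demographics."""
    features = {f"q{i + 1}": encode_response(a) for i, a in enumerate(answers)}
    features["age_months"] = age_months  # hypothetical unit
    features["gender"] = gender
    return features


# Toy record with three questions, one of them unanswered.
record = encode_record(["yes", None, "no"], age_months=30, gender="female")
```

With this encoding, a classifier can learn whether parental uncertainty about a particular behaviour is itself predictive.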

Prediction models

A learning pipeline that included data resampling, a variety of classification models, and feature ranking was applied to train a model and evaluate its performance and overall suitability.

Imbalanced data

The distribution of the two classes is approximately 20 per cent (control) to 80 per cent (ASD). This class imbalance can have an impact on many classification algorithms, typically by introducing a performance bias in favour of the majority class. 42 For example, if a classification algorithm classified all samples as ASD by default, then it will have an accuracy of approximately 80 per cent. Class imbalance can, therefore, affect the ability of classification algorithms to generalise well to unseen data and care must be taken when evaluating the performance of the classifier (e.g. by using evaluation metrics that are insensitive to class imbalance). Resampling the data is a popular method of mitigating class imbalance. 42 We applied the synthetic minority oversampling technique 43 (SMOTE) in Weka to the data (see bottom section of Table 3, random seed

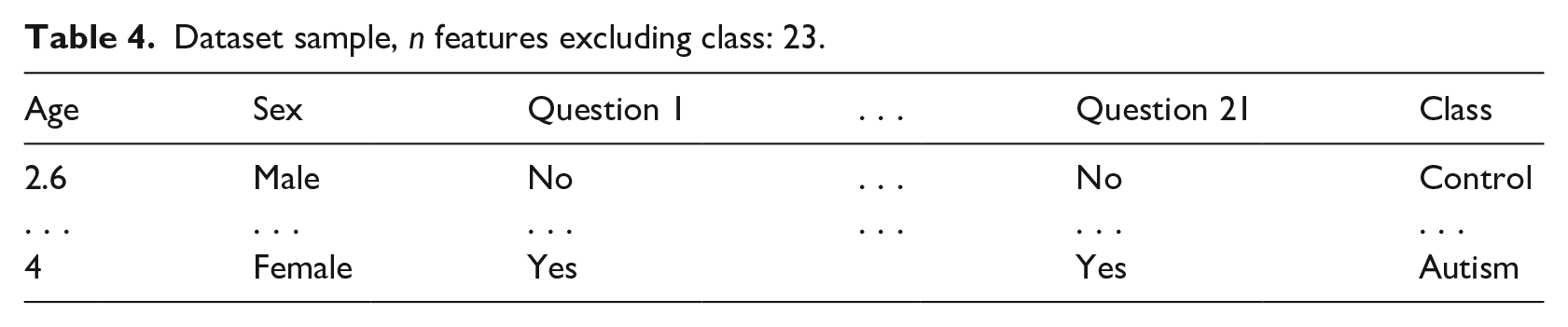

Dataset sample,
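SMOTE creates new minority-class samples by interpolating between an existing minority sample and one of its nearest minority-class neighbours. The following is a minimal sketch of that idea for numeric features. It is not Weka's implementation: the function name `smote`, the neighbour count `k`, the seed, and the toy data are illustrative assumptions, and the handling of nominal attributes such as yes/no answers is not shown.

```python
import math
import random


def smote(minority, n_synthetic, k=5, seed=0):
    """Create synthetic minority samples by interpolating towards near neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_synthetic):
        base = rng.choice(minority)
        # The k nearest minority-class neighbours of the base sample.
        neighbours = sorted(
            (p for p in minority if p is not base),
            key=lambda p: math.dist(base, p),
        )[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # position along the segment base -> neighbour
        synthetic.append(
            tuple(b + gap * (n - b) for b, n in zip(base, neighbour))
        )
    return synthetic


# Toy minority class with two numeric features per sample.
controls = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
new_controls = smote(controls, n_synthetic=10, k=2, seed=1)
```

Because every synthetic point lies on a line segment between two real minority samples, the new samples stay inside the region already occupied by the minority class.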

Classification algorithms

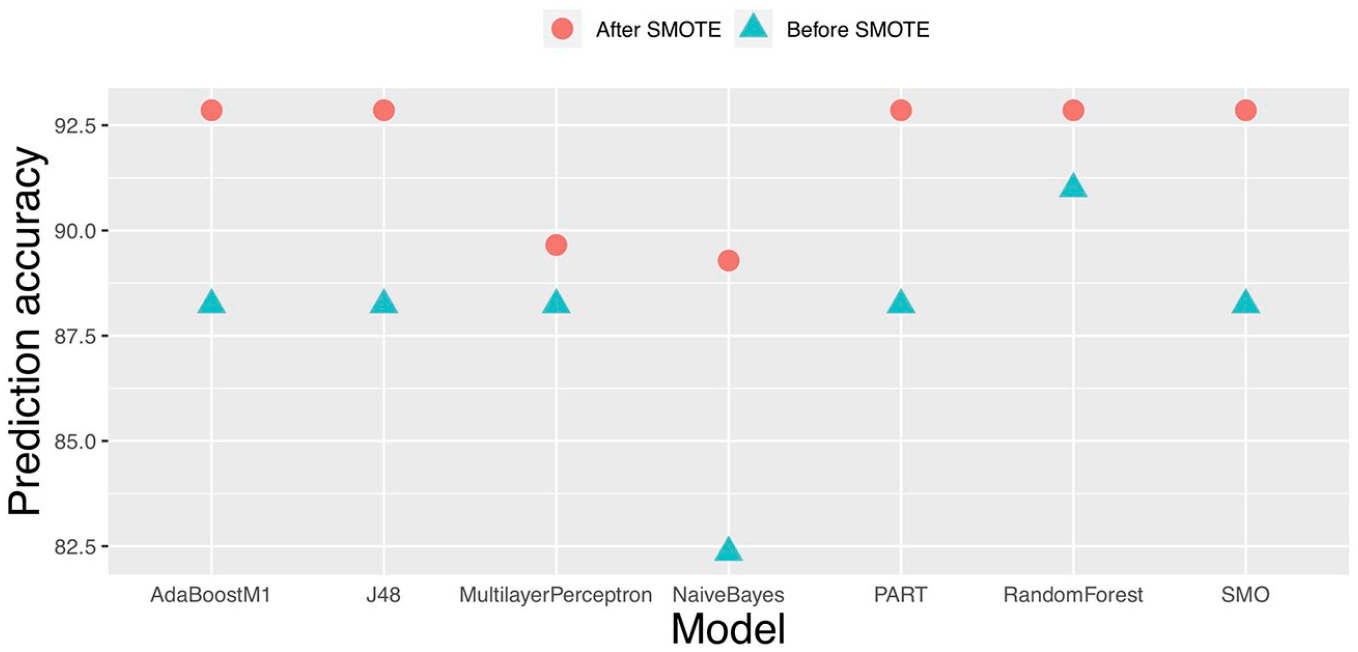

A variety of popular data mining techniques

44

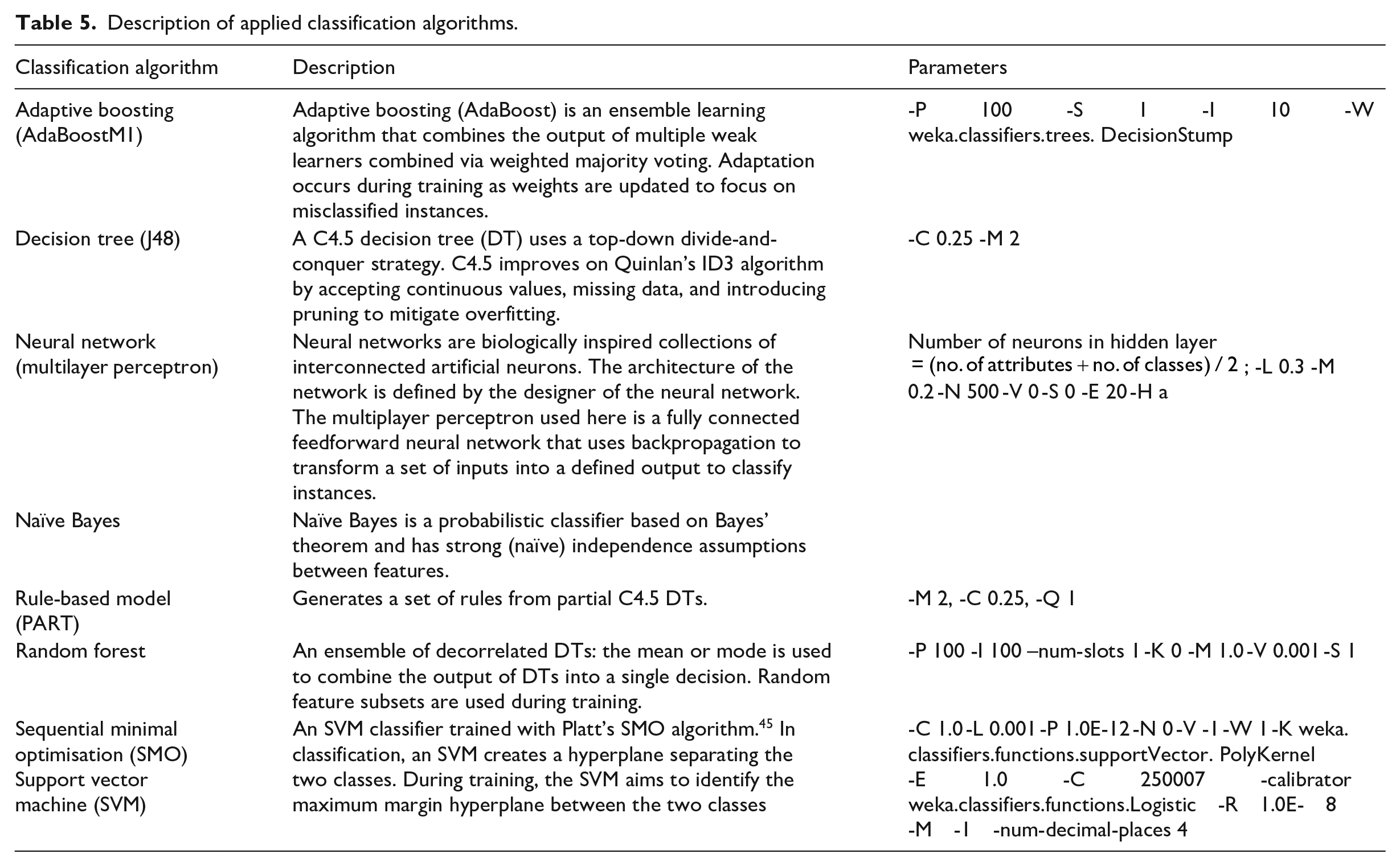

were evaluated to test their suitability for the ASD prediction task. The evaluated algorithms include adaptive boosting, DTs, neural networks, naïve Bayes, rule-based models, and random forests. A description of the applied algorithms is provided in Table 5. The models were implemented using the Weka version 3.8.2 data mining software.

46

Performance metrics were generated using 10 iterations of 10-fold cross validation to understand the generalisation ability of each model. The generated performance metrics of each model were compared using a paired

Table 5. Description of applied classification algorithms.
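The evaluation protocol of 10 iterations of 10-fold cross validation can be sketched as follows. This is an illustration rather than the Weka procedure itself: the function name `repeated_stratified_kfold`, the seeds, and the use of stratification are assumptions, and the class counts are the pre-SMOTE counts from the 'Data collection' section, used only to make the example concrete.

```python
import random
from collections import defaultdict


def repeated_stratified_kfold(labels, n_splits=10, n_repeats=10, seed=0):
    """Yield (train_indices, test_indices) for every fold of every repetition."""
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    for repeat in range(n_repeats):
        rng = random.Random(seed + repeat)  # a fresh shuffle per repetition
        folds = [[] for _ in range(n_splits)]
        offset = 0
        for indices in by_class.values():
            shuffled = indices[:]
            rng.shuffle(shuffled)
            # Deal indices round-robin so each fold keeps the class ratio.
            for position, index in enumerate(shuffled):
                folds[(offset + position) % n_splits].append(index)
            offset += len(shuffled)
        for test_fold in folds:
            test = set(test_fold)
            train = [i for i in range(len(labels)) if i not in test]
            yield train, sorted(test)


labels = ["control"] * 33 + ["ASD"] * 195
splits = list(repeated_stratified_kfold(labels))
```

Each model is then fitted on `train` and scored on `test` for every one of the 100 splits, which gives 100 values per metric and per model for the statistical comparison.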

Feature selection

Correlation-based feature selection (CFS) 47 and minimum redundancy maximum relevance (mRMR) 48 were applied to the dataset with 10-fold cross validation, to verify the usefulness of the features. The feature subsets identified were not used for data collection or during the training process, but are useful for optimising clinical applications of PAAS (mobile application or paper based) in the future. Removing questions with no predictive value can speed up the screening process, which would be useful during a home visit by a non-specialist healthcare worker.
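For reference, the two filter criteria are commonly written as below. These are the textbook forms; the exact configuration used in this work is not stated, so the choice of correlation measure and of the difference form of mRMR (rather than the quotient form) are assumptions.

```latex
% CFS: merit of a feature subset S containing k features
\mathrm{Merit}_S = \frac{k\,\overline{r_{cf}}}{\sqrt{k + k(k-1)\,\overline{r_{ff}}}}

% mRMR (difference form): next feature to add to the selected set S
f^{*} = \arg\max_{f \notin S}\left[ I(f; c) - \frac{1}{|S|}\sum_{s \in S} I(f; s) \right]
```

Here $\overline{r_{cf}}$ is the mean feature-class correlation, $\overline{r_{ff}}$ is the mean feature-feature correlation within $S$, $c$ is the class label (ASD or control), and $I(\cdot\,;\cdot)$ denotes mutual information. Both criteria reward questions that are informative about the diagnosis and penalise questions that duplicate one another.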

Model evaluation

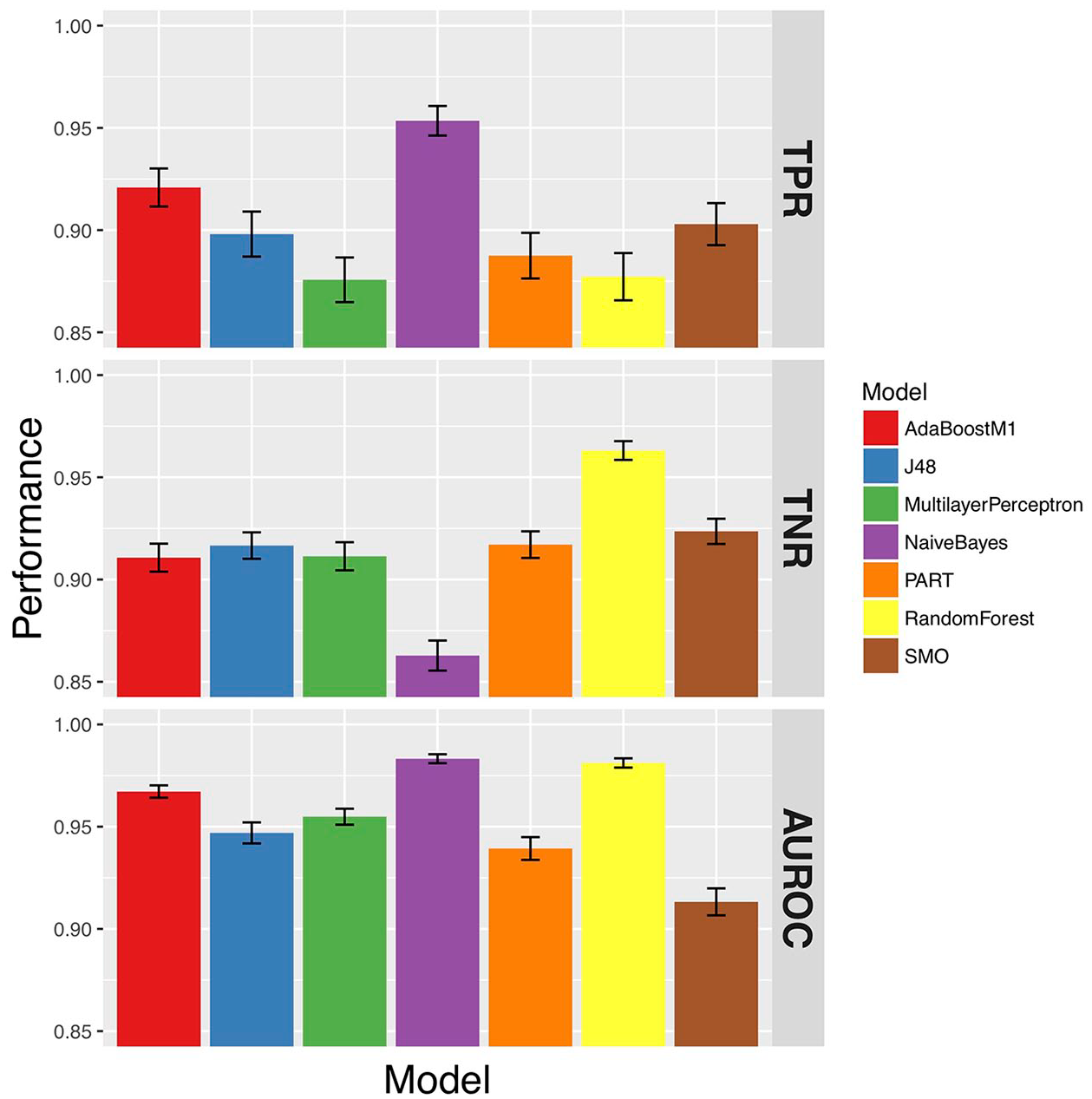

The predictive performance of models was evaluated using the true-positive rate (TPR; also known as sensitivity), false-positive rate (FPR), true-negative rate (TNR; also known as specificity), false-negative rate (FNR), and area under the receiver operating characteristic (AUROC). By evaluating a range of metrics, any bias introduced by class imbalance can be mitigated, and a more thorough understanding of the model’s performance can be gained.
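The sketch below shows how these metrics are computed and why accuracy alone is misleading on imbalanced data. It uses the class counts from the 'Data collection' section with a deliberately trivial classifier that labels every child as ASD; the function names `confusion_rates` and `auroc` are illustrative, not part of the Weka pipeline.

```python
def confusion_rates(y_true, y_pred, positive="ASD"):
    """Return TPR, FNR, TNR, and FPR for a binary classification task."""
    tp = fn = tn = fp = 0
    for truth, guess in zip(y_true, y_pred):
        if truth == positive:
            if guess == positive:
                tp += 1
            else:
                fn += 1
        else:
            if guess == positive:
                fp += 1
            else:
                tn += 1
    return {
        "TPR": tp / (tp + fn),  # sensitivity
        "FNR": fn / (tp + fn),
        "TNR": tn / (tn + fp),  # specificity
        "FPR": fp / (tn + fp),
    }


def auroc(y_true, scores, positive="ASD"):
    """Probability that a random positive outscores a random negative (ties count half)."""
    pos = [s for t, s in zip(y_true, scores) if t == positive]
    neg = [s for t, s in zip(y_true, scores) if t != positive]
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg
    )
    return wins / (len(pos) * len(neg))


y_true = ["control"] * 33 + ["ASD"] * 195
y_pred = ["ASD"] * len(y_true)   # trivial majority-class classifier
scores = [1.0] * len(y_true)     # constant score: no ranking information

rates = confusion_rates(y_true, y_pred)
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
area = auroc(y_true, scores)
```

The trivial classifier reaches an accuracy of about 0.86 with a TPR of 1.0, yet its TNR is 0.0 and its AUROC is 0.5, which exposes that it has learned nothing about the control class.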

Predictive performance

Models were trained using the yes/no responses to 21 PAAS items and additionally the child’s age and gender. Although PAAS does not use demographic information to predict ASD, the rationale for including both features as predictors lies in the biological pathophysiology of ASD. First, as ASD is a developmental disorder, age could be useful in order to determine whether behaviours are absent because of atypical development or because of immaturity. For example, an 18-month-old child that is missing a key behaviour that is predictive for autism may begin to spontaneously demonstrate that behaviour at 2 years of age. This is less likely to occur in a 5-year-old child, and thus, the behaviour could be a better predictor for an older child. This is particularly true for questions that test the ability of the child to perform social interactions and communicate (and less important for questions regarding repetitive behaviour). Understanding if this is the case could help improve PAAS and other symptom checklist–based screening tools in the future. Second, there is a variety of sex differences in ASD. Approximately four times more males than females are diagnosed with ASD on average. 49 In addition, behaviours could be inconsistent across gender as children’s brains develop differently depending on gender (e.g. females typically have better social mimicry skills which is thought to contribute to female underdiagnosis of ASD). 50

The performance of the models was assessed using the Weka experimenter environment. Only post-SMOTE data were input to the Weka experimenter environment; 10 iterations of 10-fold cross validation were used to generate sufficient performance metrics for valid statistical comparison (i.e. each model was trained and tested 100 times). All significance testing was conducted using a corrected paired

True-positive rate (TPR), true-negative rate (TNR), and area under the receiver operating characteristic (AUROC) of the models.

False-negative rate (FNR) and false-positive rate (FPR) of the models.

Due to the class imbalance in the dataset, it is sensible to place greater importance on the AUROC, which is not sensitive to class imbalance, when evaluating the models. From this evaluation, it is clear that the random forest had the best AUROC, FPR, and TNR. Its TPR and FNR were similar to those of the other algorithms. On this basis, the random forest was chosen to be embedded into the final mobile screening application.

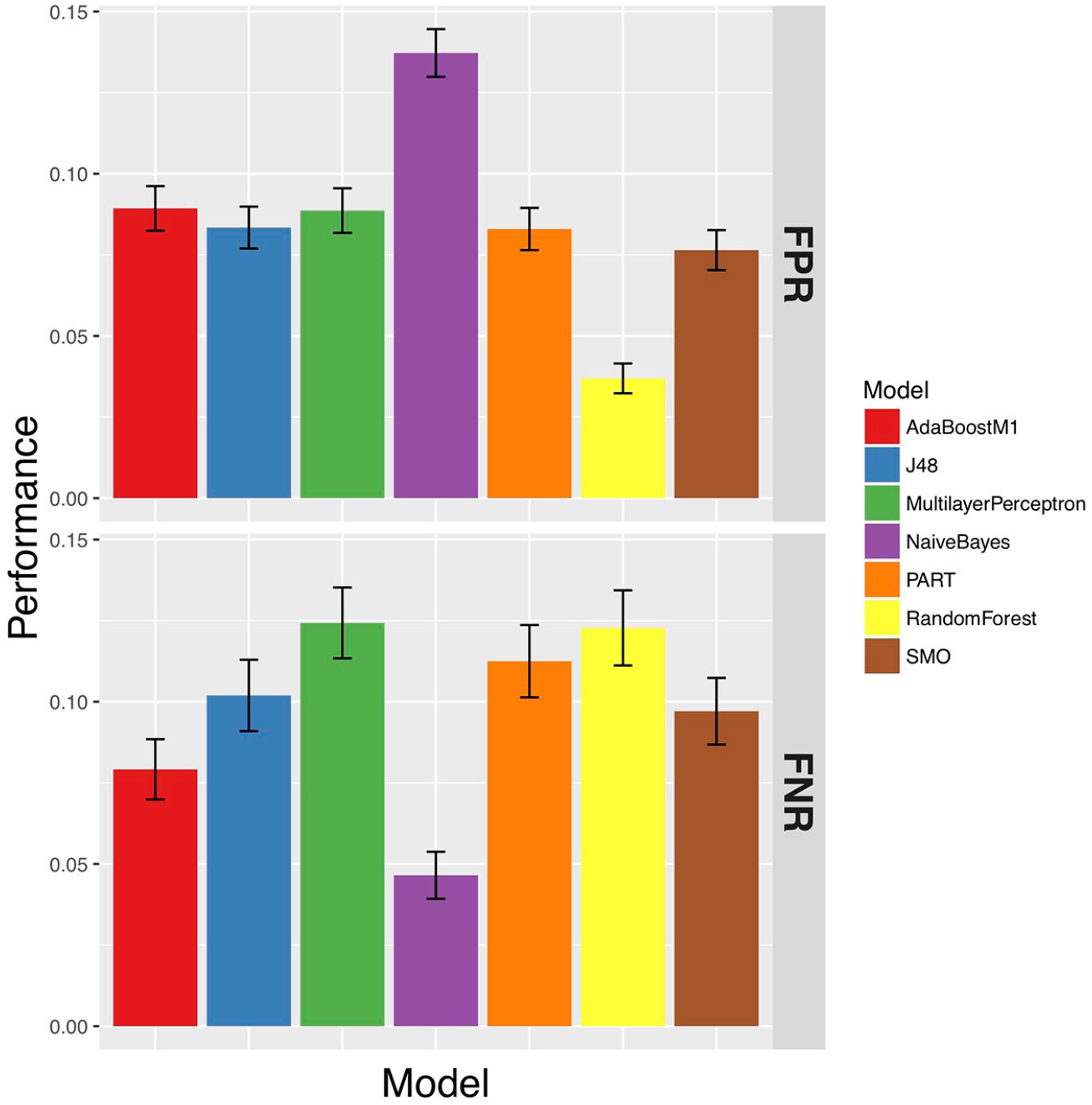

The application of SMOTE improved the predictive performance for all of the models except AdaBoostM1 and ZeroR, and the random forest and Multilayer Perceptron improved the most (see Figure 3).

Figure 3. Effect of SMOTE on model classification accuracy.

The predictive ability of the random forest exceeded the performance of the paper-based PAAS system. The standard PAAS checklist has reported a TPR of 88 per cent and a TNR of 93 per cent. 8 The random forest model reported a TPR of 96 per cent and a TNR of 97 per cent. One of the limitations of PAAS was that it used an arbitrary cut-off score rather than a standardised one. The improved performance shows that the random forest has learned to classify from the data better than the arbitrary cut-off designed by the developers of PAAS.

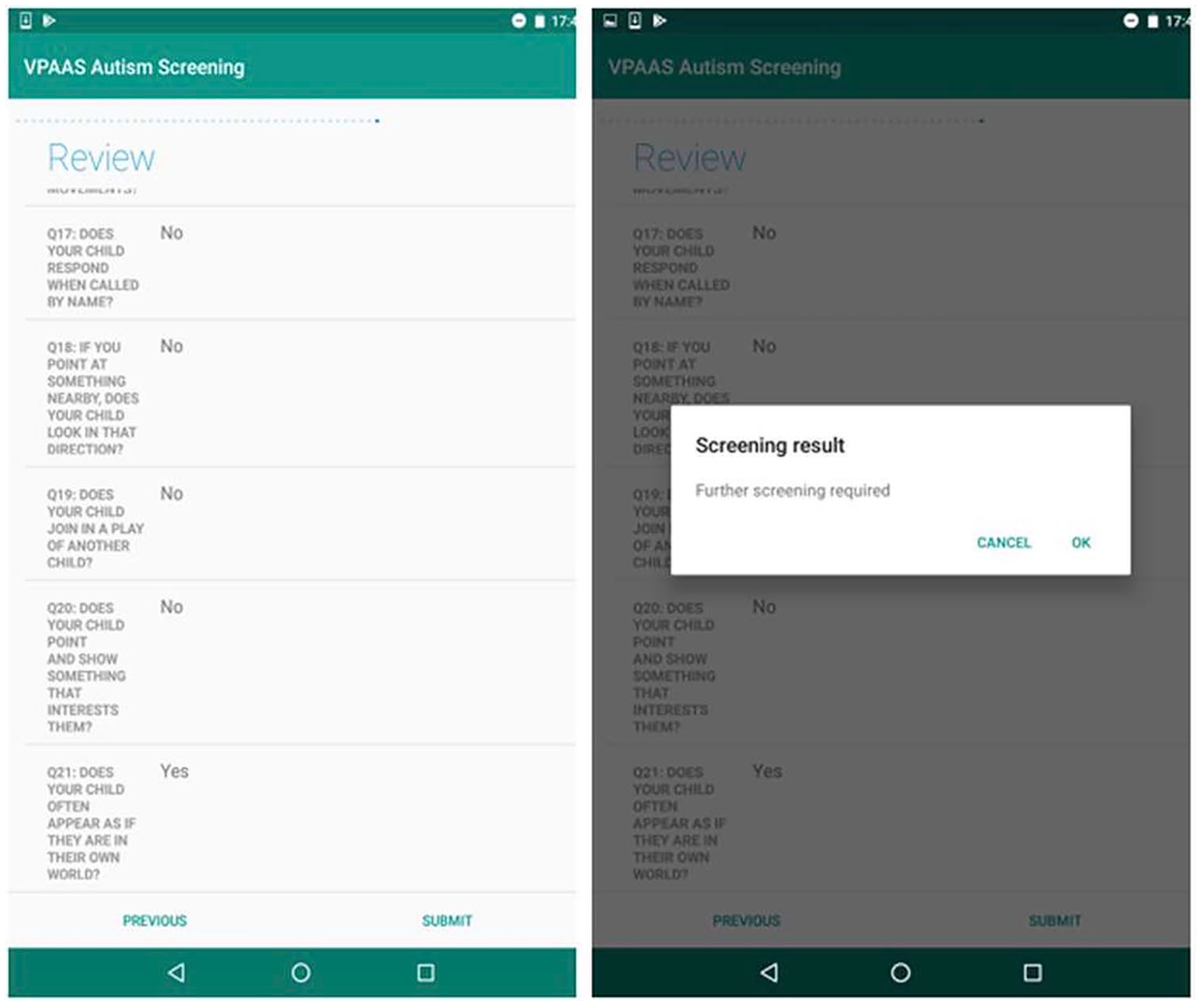

As smart devices provide an ideal platform for mental health disorder assessment, the random forest model is integrated into an Android tablet-based application to assist non-specialist healthcare workers to screen for ASD. A sample result from the developed tablet-based autism screening tool is shown in Figure 4. To conduct the tablet-based autism screening, a user needs to respond to 21 PAAS items and additionally provide the child’s age and gender. After completing this information, the user is redirected to the review screen to confirm that all the questions have been answered correctly. Once the user clicks on the submit button on the review screen (see Figure 4 (left)), they are automatically redirected to the screening result (see Figure 4 (right)). The screening result screen indicates whether further screening with a healthcare specialist is recommended.

Figure 4. A sample of screenshots from the tablet-based autism screening tool.

Selected features

The selected features provide insight into which questions are most important for predicting ASD in a paediatric cohort (see Table 2). Questions were separated into four broad areas according to their core domain: communication, social interaction, repetitive behaviour, and demographic (demographic questions are not part of the standard PAAS questionnaire but are recorded separately). Only around half of the questions across all core domains were retained consistently over multiple folds. This suggests that PAAS could be significantly reduced in size while maintaining predictive power, saving time and resources. Interestingly, although age and gender were hypothesised to be important for predicting ASD, they were not present in the majority of folds. As ASD is a developmental disorder, it was thought that age could be useful to determine if behaviours were absent because of physical immaturity or developmental delay caused by ASD. In addition, many aspects of ASD and normal behaviours are different across genders. The absence of age and gender from the selected features could be because the behaviours described in PAAS are identical across genders or because of other undetected cultural factors.
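The idea of a question being retained consistently over folds can be made concrete by counting how often each feature is selected. The sketch below is illustrative only: the feature names, the per-fold selections, the function names, and the 50 per cent threshold are hypothetical and are not taken from Table 2.

```python
from collections import Counter


def selection_frequency(selected_per_fold):
    """Fraction of folds in which each feature was selected."""
    counts = Counter(f for fold in selected_per_fold for f in set(fold))
    n_folds = len(selected_per_fold)
    return {feature: count / n_folds for feature, count in counts.items()}


def stable_features(selected_per_fold, threshold=0.5):
    """Features selected in more than `threshold` of the folds."""
    frequency = selection_frequency(selected_per_fold)
    return sorted(f for f, share in frequency.items() if share > threshold)


# Hypothetical selections from four folds of a feature selection run.
folds = [
    ["q1", "q4", "q7"],
    ["q1", "q4", "age"],
    ["q1", "q7"],
    ["q1", "q4", "q7"],
]
retained = stable_features(folds)
```

In this toy example `q1`, `q4`, and `q7` are retained, while `age` appears in only one fold and is dropped, mirroring how a demographic feature can fail to reach the majority of folds.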

Conclusion and future work

Due to cultural reasons, ASD awareness is low in LMIC. Resource constraints mean that when ASD is identified, patients are often left untreated for long periods of time. Early identification and diagnosis are important to improve clinical outcomes of young children with ASD. Smart devices represent an ideal platform for a computer-aided tool, as they are highly accessible and prevalent across the world. Most existing screening tools automate standard screening checklists such as M-CHAT-R/F. Only the AutismAI application embeds an intelligent machine learning model to arrive at a decision. However, AutismAI does not incorporate a clinically validated and culturally specific symptom checklist. This article proposed a novel mobile application for ASD screening using a culturally sensitive symptom checklist and embedded machine learning model. A variety of supervised learning models were trained on PAAS data collected clinically and the best performing model – the random forest – was chosen to be embedded in the tablet-based mobile application. The proposed application has shown greater predictive performance than current paper-based methods (PAAS). The new application is important to improve ASD awareness and detection, by enabling non-specialist healthcare workers to screen for ASD during home visits. Furthermore, valuable data can be collected about the prevalence of ASD in LMIC (which is currently scarce) and resources allocated correctly to decrease treatment delays.

The predictive performance was analysed using multiple metrics to ensure a thorough evaluation of predictive power was conducted, including TPR, TNR, FPR, FNR, AUROC, and accuracy. We found the random forest model performed better (TPR: 88% and TNR: 96%) than the standard paper-based approach (TPR: 88% and TNR: 93%): the model has learned from the data how to classify ASD, whereas PAAS uses an arbitrary scale to make this decision. In addition, feature selection revealed that the most important questions were related to the communication and social interaction core domains, and approximately a quarter of the questions included by PAAS were irrelevant for ASD prediction. Therefore, the efficiency of PAAS could be improved in the future by removing these irrelevant questions, which is valuable in environments such as busy outpatient clinics.

A limitation of this work is the quantity of data available, particularly for the control subjects. The decision of PAAS for each child must be independently confirmed by an experienced mental health specialist in a clinical setting. Confirming that a child does not have ASD is, therefore, difficult due to limited resources, particularly when many other children with other developmental disorders are in need of referral. In future work, a new (class-balanced) cohort will be recruited to validate the predictive model on independent data. In addition, the decision made by the random forest algorithm cannot be examined and interpreted by domain experts. In future work, it may prove advantageous to use a fully transparent supervised learning algorithm (e.g. a rule-based model).

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research outlined here was supported by Department of Economy under the Global Challenge Research Fund grants. The funding sources had no role in the design, analysis, or interpretation of data or in the preparation of the report or decision to publish.