Abstract

Objective

Children and adolescents with intellectual and developmental disabilities (IDD), particularly those with autism spectrum disorder, are at increased risk of challenging behaviors such as self-injury, aggression, elopement, and property destruction. To mitigate these challenges, it is crucial to focus on early signs of distress that may lead to these behaviors. These early signs might not be visible to the human eye but could be detected by predictive machine learning (ML) models that utilize real-time sensing. Current behavioral assessment practices lack such proactive predictive models. This study developed and pilot-tested real-time early agitation capture technology (REACT), a real-time multimodal ML model to detect early signs of distress, termed “agitations.” Integrating multimodal sensing, ML, and human expertise could make behavioral assessments for people with IDD safer and more efficient.

Methods

We leveraged wearable technology to collect behavioral and physiological data from three children with IDD aged 6 to 9 years. The effectiveness of the REACT system was measured using the F1 score, assessing its performance from the time of agitation to 20 s prior.

Results

The REACT system was able to detect agitations with an average F1 score of 78.69% at the time of agitation and 68.20% 20 s prior.

Conclusion

The findings support the use of the REACT model for real-time, proactive detection of agitations in children with IDD. This approach not only improves the accuracy of detecting distress signals that are imperceptible to the human eye but also increases the window for timely intervention before behavioral escalation, thereby enhancing safety, well-being, and inclusion for this vulnerable population. We believe that such a technological support system will enhance user autonomy, self-advocacy, and self-determination.

Introduction

Children and adolescents with intellectual and developmental disabilities (IDD) show increased risk of displaying challenging behaviors. 1 “Challenging” behavior (as used in the behavior analytic literature2,3), sometimes referred to as “problem behavior” or “severe behavior,” is defined in this work as behavior that places individuals and caregivers at risk for injury, results in property damage, or displaces individuals from their homes and communities. Examples of challenging behaviors include self-injury, aggression toward others, elopement, and property destruction, as documented in literature.4,5 Up to 50% of people with IDD present with some form of challenging behaviors, with 5–10% developing more severe presentations. 6 This is especially true for children and adolescents with autism spectrum disorder (ASD),7,8 two-thirds of whom may display challenging behaviors before adulthood. 9

Challenging behaviors exact a serious toll on individuals and families as well as educational and healthcare systems. Youths who present with severe challenging behaviors frequently experience restrictive management techniques, such as seclusion and restraint, and have increased emergency room visits or psychiatric hospitalizations related to these behavior concerns.4,5,9–11 Persistent challenging behaviors can put children and their caregivers at risk of potential physical harm 12 and prevent children from learning new skills, 13 excluding them from school services and community opportunities that could increase their quality of life. 14 This situation presents a critical challenge for individuals exhibiting these behaviors, their families, and caregivers, making it a key area for the development of innovative technologies aimed at enhancing outcomes within the community.

Caregivers of children with IDD often observe a pattern of agitation or early signs that may precede challenging behaviors. Agitation can manifest in various forms, some of which can be visible to a human observer and others which are more difficult to detect. An agitated state may show up as increased restlessness, changes in facial expressions, repetitive movements, or shifts in vocal tone. Caregivers, through their sustained and attentive interactions with their children, become skilled at recognizing subtle behavioral cues that may not be immediately evident to others. However, they usually cannot detect physiological markers of behavioral escalation, such as changes in heart rate (HR), sweating, and other autonomic reactions, which are less salient and require specialized equipment to monitor. These physiological responses are telling signs of agitation that can provide early warning before behaviors become more pronounced.

Recognizing the earliest signs of agitation, including subtle physiologic changes, is crucial. This provides an opportunity for timely intervention, potentially averting escalation of agitation into more severe incidents. By understanding and responding to early agitation, caregivers can employ specific strategies or interventions tailored to the individual's needs, thereby creating a safer and more supportive environment. This insight from parents and caregivers is invaluable, as it contributes to a better understanding of the child's behavior patterns and aids professionals in developing more effective behavioral interventions.15–17

Identifying agitation before it escalates represents a significant advancement in behavior management strategies by proactively attempting to prevent behavior escalation instead of responding after a behavior has already occurred. The ability to foresee these early indicators, leveraging cutting-edge machine learning (ML) algorithms and wearable sensor technology, enables an early detection framework. ML algorithms are particularly adept at discerning physiological indicators of agitation, such as HR and sweat response, which are not apparent to human observation.18–20 Utilizing sensors, we can measure these physiological responses, providing data that ML algorithms can analyze to offer a reliable and objective assessment. These algorithms excel not only in analyzing physiological signals but also in observable behaviors; they can detect patterns and nuances in behavior, ensuring a comprehensive understanding of both overt and subtle signs of agitation. Such early detection facilitates interventions at the nascent stages of agitation that could potentially avert the escalation of behaviors. Ultimately, this predictive model could lead to a safer, more nurturing environment, enhancing the quality of care and reducing the overall stress for both individuals and their support systems. 21

The integration of innovative technological applications into the field of applied behavior analysis (ABA), particularly in the detection of agitation, presents a significant opportunity to enhance current practices. Board Certified Behavior Analysts (BCBAs®), who conduct behavioral assessments, may face challenges detecting subtle agitation behaviors and have little opportunity to predict these early warning signs that challenging behavior may follow. These behaviors can be elusive, sometimes going unnoticed by caregivers or misinterpreted due to their subtlety. Even with expert human observers, several indicators of agitation, like nuanced physiological responses or minor changes in posture, can be difficult to detect. This challenge is not a reflection of the limitations of BCBAs but rather an inherent constraint of human observation. 22 While BCBAs are skilled in manipulating environmental factors to initiate and reinforce target behaviors, many existing methods for doing so do not allow for the development of robust predictive models that account for the intricate aspects of client behavior and physiology. Developing such models could significantly enhance the effectiveness of interventions for clients with challenging behaviors.

Affective computing is a field that enables intelligent systems to recognize, infer, and interpret human emotions and mental and behavior states using various signals such as behavioral (e.g. motion and eye gaze) and physiological (e.g. HR and body temperature) signals. 23 Goodwin et al. demonstrated that changes in physiological signals can be used to predict imminent aggression for youths with ASD who are minimally verbal using HR and galvanic skin response (GSR), also known as electrodermal activity. 24 Imbiriba et al. improved the accuracy of this study using principal component analysis and a nonlinear kernel-based support vector machine classifier with the same physiological responses. 25 Sarabadani et al. used an ensemble of classifiers to detect affective states induced by high and low arousal stimuli of positive and negative valence or high and low arousal using GSR, electrocardiograms, respiration (RSP), and temperature. 26 Inferred states such as users’ engagement using GSR, RSP, and photoplethysmography (PPG) signals were used in a virtual reality driving simulator to improve engagement with the systems. 27 Motion data captured from children with ASD using accelerometers have been used to recognize body movements (e.g. hand flapping, body rocking, etc.) reflecting agitation or frustration in classroom settings.28,29 Body-worn accelerometers have also been used to recognize self-injurious behavior in children with ASD. 30

In addition to the work presented on deciphering affective states based on unimodal signals such as physiology or body gestures exclusively, several studies have shown promise in using data from multimodal sources. Multimodal sources allow for inferences that cannot be obtained from a single sensor or whose quality exceeds that of an inference drawn from a single source. Amat et al. and Tauseef et al. developed a collaborative virtual environment to encourage and measure teamwork between autistic and neurotypical adults in a workplace setting using speech, eye gaze, and game controller data.31,32 Plunk et al. expanded on virtual teamwork-based collaborative activities with semisupervised behavior labeling. 33 A human-machine interaction system for dementia intervention has been developed with multimodal physiological data collected from its users.34,35 Adiani et al. developed a multimodal job interview simulator for training autistic individuals. 36 Siddharth et al. developed a wearable multimodal biosensing system that can collect, synchronize, record, and transmit data from multiple biosensors: PPG, EEG, eye-gaze headset, body motion capture, GSR, etc. 37 Communication and coordination skills in children with ASD were assessed using multimodal signals including speech, gestures, and synchronized motion. 38 Eye gaze, video recordings, and EEG have been used for early detection of ASD in children. 39

While the prediction of agitation that precedes challenging behavior is underresearched, strides have been made in the adjacent field of forecasting challenging behaviors. However, existing technological approaches to predicting challenging behaviors have several limitations. First, existing ML models for predicting challenging behaviors were not developed by embedding BCBAs during training data collection. A prediction model grounded in clinical insight using BCBA input as ground truth will likely have closer overlap with expert human observation, be more accurate and meaningful, and enhance acceptance within care systems. Additionally, using a structured measurement context facilitates faster sampling of valid data than unstructured sampling. 40 However, previous pioneering studies have required nine or more hours of unstructured observation to build a predictive model. 25 In one recent study, it was necessary for participants to aggress up to 130 times during observations for the model to be built. 25 Repeatedly eliciting aggressive behaviors not only demands considerable time but also poses risks to the well-being and safety of both participants and observers. This human cost for building the model may be too much for some. Although expected to be robust and reliable, multimodal models have been underexplored in this field, thereby offering opportunities for innovation and improvement.

To overcome these limitations, our prior research 41 introduced a predictive multimodal framework to alert caregivers of problem behaviors for children with ASD (PreMAC). PreMAC established a clinically grounded, multimodal model that anticipated agitations in children with ASD, leveraging technological advancements for more effective intervention. It used commercial and customized sensors, including a Microsoft Kinect, an E4 wristband (Empatica Inc.), a custom wearable intelligent noninvasive gesture sensor (WINGS), and a specifically developed tablet application for recording direct observations of participant behavior. While PreMAC has proven effective in offline settings, it has not yet been applied in real-time scenarios, which is crucial for interventions to be most effective. To meaningfully support intervention and reduce risk, proactive real-time detection of agitations is necessary.

In this study, we extend PreMAC 41 by working closely with BCBAs, psychologists, caregivers, and engineers to refine, deploy, and evaluate an ML model to proactively predict agitation behaviors. Specifically, in a controlled laboratory environment, we carried out behavior assessments in collaboration with BCBAs and caregivers, carefully evoking behaviors identified as signals of agitation by caregivers, ensuring the process was safe for all participants. Simultaneously, our multimodal system collected physiological (e.g. HR and GSR) and behavioral (e.g. body movement and vocalization) changes. After the assessment, we used this information to train an ML model to recognize the underlying physiological and behavioral changes that could indicate the imminent occurrence of agitations. Significantly, our approach not only identifies participant agitation as it occurs but also anticipates episodes before their observable onset, marking a crucial step forward in preempting and effectively preventing escalation to dangerous behaviors.

Recent advancements in assistive technologies have increasingly focused on empowering individuals with disabilities by enhancing rather than diminishing their agency.42,43 These technologies are developed to support more autonomous and self-directed living, enabling individuals to perform daily activities more independently and engage fully in community life. Technologies that integrate multiple modalities, such as sensory inputs from wearable devices, environmental controls, and communication aids, have been particularly effective in enhancing the functionality and independence of people with disabilities.44,45 Our approach empowers users and caregivers to take proactive steps, thereby fostering a sense of control and improving overall well-being. This approach not only aligns with contemporary efforts to enhance the agency of people with disabilities through technology but also contributes to the broader goals of inclusion and empowerment within the disability community.

The primary contribution of this work is a pilot study of real-time early agitation capture technology (REACT), which detects the imminent occurrence of agitation in real time. This extends our earlier work, PreMAC, which only provided offline analysis capabilities. In addition, we extended our previous work by introducing a new auditory modality to capture agitation associated with vocal tone and verbalization. We preliminarily validate our work through a two-visit training-testing study with three children with IDD with varying agitations.

Methods

Participants

All procedures were approved by the Institutional Review Board. All participants and their caregivers provided informed assent and consent. Less verbal participants were provided with visual schedules to facilitate assent discussions or allow for study discontinuation. Caregivers were present for the duration of study sessions and asked to advocate for their children by letting study staff know if behaviors shown were unusually intense, distressed, or unsafe.

Participants were recruited from an existing clinical database that includes individuals with and without documented developmental disabilities (such as ASD) whose guardians have consented to be contacted about research opportunities. Eligibility criteria included being 3 to 17 years of age; being from a family that spoke primarily English within the home; having a high likelihood of tolerating the wearable devices (no significant sensitivities to fabric textures, sleeve length, and so on); and presenting with predictable, frequent, and observable challenging behaviors that could be evoked by a therapist as part of a session. Interested caregivers completed an intake interview with a study BCBA to determine eligibility and appropriateness based on the child's phenotypic and behavior profile. The study's purpose and activities, including the use of wearable devices, were explained, with caregivers engaged in discussion about participant sensory profiles and the likelihood of tolerating such procedures before the initial study visit. Additionally, we sought to learn whether the participants were likely to escalate relatively quickly with an unfamiliar adult in a clinical setting and, if so, whether they would have the same capacity to return to a calm state. If the caregiver and the BCBA agreed that participants could likely tolerate activities and engage safely in the research protocol, they were enrolled in the study.

At the study visit, participants and their caregivers completed written informed consent and assent through which study procedures were again described. Participants were given the opportunity to interact with and try on the wearable sensors, facilitating an understanding of the technology prior to participation. Ample time was provided for both participants and their representatives to ask questions and express any concerns.

In addition to the use of visual support for less verbal participants, observable dissenting behaviors were monitored and interpreted with the help of therapists and caregivers, ensuring that participants’ nonverbal cues were respected throughout the study. Importantly, participants were informed and reminded that they could ask to stop participation at any time during the study, further reinforcing our commitment to ethical research practices and respect for individual autonomy. This approach underscores the concept of informed consent as an ongoing process throughout the study duration.

In this work, we present data collection, analysis, and modeling as three individual case studies. Individualized modeling is necessary as each participant had varying ranges of physiological and behavioral signals, as well as different agitations.

Participant 1 was a 6-year-old male with minimal spontaneous spoken language. Challenging behaviors of concern to his family included screaming, pushing items away, hitting himself in the leg or the face, and falling to the floor. Signs of agitation identified by his family included unhappy sounds, an upset face, arm flapping and tensing, and walking away.

Participant 2 was a 9-year-old male with an extensive and expressive vocabulary. Challenging behaviors of concern to his family included elopement and aggression toward adults and children (hitting, kicking, biting). Signs of agitation identified by his family included a raised voice, running away, verbal threats, movements (balled up fists, sudden jumping, throwing his hands at his sides), and pulling items from another person's grasp.

Participant 3 was an 8-year-old female with strong receptive language but articulation errors that impacted her expressive communication skills. Challenging behaviors of concern to her family included hitting, kicking, screaming, and falling to the floor. Signals of agitation identified by her family included forcefully tensing her body, swinging her arms, clenching her fists, or wiggling her body in her seat; using a louder or angrier voice, or speaking more rapidly; changing her facial expression (knitting her eyebrows); pushing items away, or stating, “you are annoying.”

Experimental design

In our study, we developed a framework that allowed for the therapist to safely evoke agitation behaviors within a laboratory environment, as identified by caregivers. This framework was inspired by the principles of functional analysis (FA) and incorporates aspects of the interview-informed synthesized contingency analysis (IISCA). FA, a foundational approach in ABA, methodically identifies the functions of challenging behaviors, while IISCA specifically emphasizes the critical role of caregiver insights. 46 Working in collaboration with BCBAs and caregivers, we conducted behavior assessments with a strong emphasis on maintaining participant safety throughout the process. Within this framework, BCBAs engage with caregivers through an open-ended interview to understand the specific environmental and behavioral contexts of individuals. 47 This understanding informs the selection and presentation of stimuli that can either evoke behavior, known as establishing operations (EOs), 48 or serve as reinforcing stimuli (SRs) to manage or modify the behavior. The BCBA methodically presents and escalates EOs, closely monitoring for signs of agitation, before delivering SRs. This approach is tailored to each case, guided by the caregiver's real-time feedback communicated through an earbud. An independent data collector observes the assessments and uses a data collection application to record the conditions of the assessment and the behaviors of the participant. The process of EO and SR presentation repeats once the BCBA has verified that the participant's behavior has returned to normal levels and can receive the reinforcers indicated by their caregivers. The data gathered were then instrumental in training our ML model to proactively predict agitation behaviors in real time, leveraging the clinical expertise of BCBAs to inform model development.

For the study, each participant takes part in two assessments following this framework, with each assessment lasting approximately an hour. In Assessment 1, data are collected for training the predictive model, and in Assessment 2, signs of agitation are predicted in real time using the trained model. During each assessment, a data collection tool called the data collection controller, which synchronizes all modalities, is deployed for multimodal data collection. To validate those results, a second BCBA observes the visit and records direct behavior observations using traditional methods, which serve as the ground truth regarding the occurrence of agitation or challenging behavior. Once the multimodal data have been collected and synchronized, the data are denoised and used to extract features. The features extracted from Assessment 1's data are then used to train an ML model using the ground truth labels. This model is then used to predict agitation in Assessment 2. Figure 1(a) highlights the process of training the ML model, and Figure 1(b) highlights the process of proactively predicting agitation before these episodes are observable using the underlying physiological and behavioral signals. All procedures were reviewed by a team of expert BCBAs as well as parents of children with ASD and approved by the Institutional Review Board.

Figure 1. Two-step training and testing mechanism: (a) schematic of training of the machine learning model using physiological and behavioral signals with BCBA-provided identification of agitations; (b) schematic of the testing of the trained machine learning model using physiological and behavioral signals to predict the occurrence of agitations.

Because physiological and behavioral signals as well as signs of agitation vary between individuals, we present our results as three individual case studies. While each participant was scheduled for a one-and-a-half-hour session, the assessment length varied across participants due to circumstances such as different sensitivities to EOs and SRs, varying lengths of time to return to a calm state, and time limitations of the caregivers. Specifically, the number of episodes featuring observable agitation evoked within the assessments varied, as certain participants tended to be more sensitive to EOs than others.

Materials

As seen in Figure 1, different system components work together to capture annotated participants’ behavioral and physiological signals during the assessments. A wearable physiological sensor is used to capture several peripheral physiological signals; a custom-made movement detection sensor, WINGS, is used to capture the upper body pose; and a wireless microphone is used to capture the audio signal. Additionally, a tablet-based application, behavior data collection integrator (BDCI), is used by BCBAs to record each occurrence of the participants’ behavior in real time. These components, in conjunction, lead to a fully annotated dataset used for training and testing proactive real-time agitation detection ML models. Various technical components of our system are discussed below.

Wearable sensor for peripheral physiology

A medical-grade, noninvasive wearable physiological sensor called the E4 [https://www.empatica.com/research/e4/] is used to capture real-time blood volume pulse (BVP, 64 Hz) and GSR (64 Hz). The BVP signals are filtered through a 1 Hz to 8 Hz band-pass filter and used to calculate the HR. Additionally, tonic skin conductance level and phasic skin conductance response are extracted from the GSR signal 49 as they correlate with physiological arousal level. 50 Physiological signals are aggregated using a 60-s rolling window to account for their slow-moving nature.
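As a rough illustration of the BVP processing described above, the following Python sketch limits a BVP trace to the 1 Hz to 8 Hz band and derives an HR estimate from it. The filter design and the HR algorithm are not specified in the text, so the FFT-mask ("brick-wall") filter, the dominant-frequency HR estimate, and the function names `bandpass_fft` and `estimate_hr_bpm` are all assumptions made for brevity rather than the method used in the study.

```python
import numpy as np

FS_BVP = 64.0  # E4 BVP sampling rate in Hz, as stated in the text


def bandpass_fft(signal, fs, low_hz=1.0, high_hz=8.0):
    """Keep only spectral content inside [low_hz, high_hz].

    Assumption: a brick-wall FFT mask stands in for whatever band-pass
    filter the original system used.
    """
    x = np.asarray(signal, dtype=float)
    spectrum = np.fft.rfft(x)
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    spectrum[(freqs < low_hz) | (freqs > high_hz)] = 0.0
    return np.fft.irfft(spectrum, n=x.size)


def estimate_hr_bpm(bvp, fs=FS_BVP):
    """Estimate HR in beats per minute from one window of BVP samples.

    Assumption: HR is taken as the strongest in-band frequency times 60;
    peak-to-peak interval methods are an equally plausible choice.
    """
    filtered = bandpass_fft(bvp, fs)
    power = np.abs(np.fft.rfft(filtered)) ** 2
    freqs = np.fft.rfftfreq(filtered.size, d=1.0 / fs)
    return 60.0 * freqs[np.argmax(power)]
```

In this sketch, `estimate_hr_bpm` would be called on each 60-s window of BVP samples, which mirrors the rolling aggregation mentioned in the text.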

WINGS for motion detection

We capture the upper body pose, such as hand flapping and body rocking, using the roll, pitch, and yaw of the upper limbs and torso of the participant through a custom-designed sensor called WINGS that uses five inertial measurement units (IMUs). The WINGS sensor, developed in our previous work, 41 uses IMUs to capture bodily orientation in space using three internal sensors: an accelerometer, gyroscope, and magnetometer. By placing five IMUs, one on each shoulder, one on each elbow, and one on the back of the participants, the upper body pose can be reconstructed using the measurements from each sensor. Each IMU wirelessly communicates its reading to an Arduino Uno microcontroller, which then transmits it to a computer workstation. 51

The internal sensors of the IMU are used to compute the roll (θ), pitch (ψ), and yaw (ϕ) angles of the torso and limbs. With this information, the orientation of the participant's upper body can be calculated through the orientation of the shoulders, elbows, and back. The θ, ψ, and ϕ were computed using a one-second rolling window. Several methods exist to extract the θ, ψ, and ϕ, but we choose to use the following functions: 52
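As a hedged illustration only (the specific functions used in the study are not shown here), one widely used way to obtain roll, pitch, and yaw from a single IMU reading is to take roll and pitch from the accelerometer's gravity vector and a tilt-compensated yaw from the magnetometer. The axis and sign conventions, the omission of gyroscope fusion, and the function names below are assumptions.

```python
import math


def roll_pitch_from_accel(ax, ay, az):
    """Roll and pitch in radians from a (near-)static accelerometer reading.

    Assumption: the sensor z axis points along gravity when the IMU is level.
    """
    roll = math.atan2(ay, az)
    pitch = math.atan2(-ax, math.hypot(ay, az))
    return roll, pitch


def yaw_from_mag(mx, my, mz, roll, pitch):
    """Tilt-compensated yaw in radians from a magnetometer reading."""
    # Project the measured magnetic field onto the horizontal plane
    # using the roll and pitch estimated from the accelerometer.
    x_h = (mx * math.cos(pitch)
           + my * math.sin(roll) * math.sin(pitch)
           + mz * math.cos(roll) * math.sin(pitch))
    y_h = my * math.cos(roll) - mz * math.sin(roll)
    return math.atan2(-y_h, x_h)
```

Under these assumptions, each of the five IMUs would yield one (roll, pitch, yaw) triple per reading, and the triples could then be averaged over the one-second rolling window mentioned above.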

Microphone for audio signal

In this work, we also capture vocalizations, which have been shown to reflect at least 24 kinds of emotional states regardless of verbal content, 53 to detect agitations. These audio signals are captured from microphones and processed into various features such as pitch, volume, and zero-crossing rate, which are then used for analysis. We use mel frequency cepstral coefficients (MFCCs), which are a compact representation of possibly infinite sinusoids in an audio signal.54–56

We capture audio using a wireless lavalier lapel system. The system uses 2.4 GHz wireless technology with dual transmitters and one receiver to wirelessly record audio for up to 5 h within a range of 164 feet, sampled at 44.1 kHz. The raw audio signals are processed to generate the MFCCs. The MFCCs are extracted using six steps: (a) split the audio into small frames, (b) smooth the transition between frames through windowing, (c) calculate the discrete Fourier transform, (d) apply 40 triangular filters known as filter banks, (e) pass through a log function, and (f) apply the discrete cosine transform. 57
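The six MFCC steps listed above can be sketched in Python with NumPy as follows. This is a minimal illustration under stated assumptions, not the study's implementation: the 44.1 kHz sampling rate, the frame length, hop size, Hamming window, and the choice to keep 13 coefficients are assumed values, and `mel_filter_bank` and `mfcc` are hypothetical names. Only the use of 40 triangular filters comes from the text.

```python
import numpy as np


def hz_to_mel(hz):
    return 2595.0 * np.log10(1.0 + hz / 700.0)


def mel_to_hz(mel):
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)


def mel_filter_bank(n_filters, n_fft, fs):
    """Triangular filters evenly spaced on the mel scale.

    Returns an array of shape (n_filters, n_fft // 2 + 1).
    """
    mel_points = np.linspace(hz_to_mel(0.0), hz_to_mel(fs / 2.0), n_filters + 2)
    bins = np.floor((n_fft + 1) * mel_to_hz(mel_points) / fs).astype(int)
    bank = np.zeros((n_filters, n_fft // 2 + 1))
    for i in range(1, n_filters + 1):
        left, center, right = bins[i - 1], bins[i], bins[i + 1]
        for k in range(left, center):      # rising edge of the triangle
            bank[i - 1, k] = (k - left) / (center - left)
        for k in range(center, right):     # falling edge of the triangle
            bank[i - 1, k] = (right - k) / (right - center)
    return bank


def mfcc(audio, fs, frame_len=1024, hop=512, n_filters=40, n_coeffs=13):
    """Return an array of shape (n_frames, n_coeffs); needs len(audio) >= frame_len."""
    x = np.asarray(audio, dtype=float)
    # (a) split the audio into short overlapping frames
    n_frames = 1 + (x.size - frame_len) // hop
    idx = np.arange(frame_len)[None, :] + hop * np.arange(n_frames)[:, None]
    frames = x[idx]
    # (b) window each frame to smooth the frame boundaries (Hamming assumed)
    frames = frames * np.hamming(frame_len)
    # (c) discrete Fourier transform, kept as a power spectrum
    power = np.abs(np.fft.rfft(frames, n=frame_len, axis=1)) ** 2 / frame_len
    # (d) apply the triangular mel filter banks
    energies = power @ mel_filter_bank(n_filters, frame_len, fs).T
    # (e) log of the filter-bank energies (small constant avoids log(0))
    log_energies = np.log(energies + 1e-10)
    # (f) DCT-II across the filter axis, keeping the first n_coeffs terms
    n = np.arange(n_filters)
    basis = np.cos(np.pi * np.arange(n_coeffs)[:, None] * (2 * n + 1) / (2 * n_filters))
    return log_energies @ basis.T
```

Each row of the returned array is one frame's coefficient vector, which could then be summarized over a rolling window like the other modalities.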

Behavior assessment application

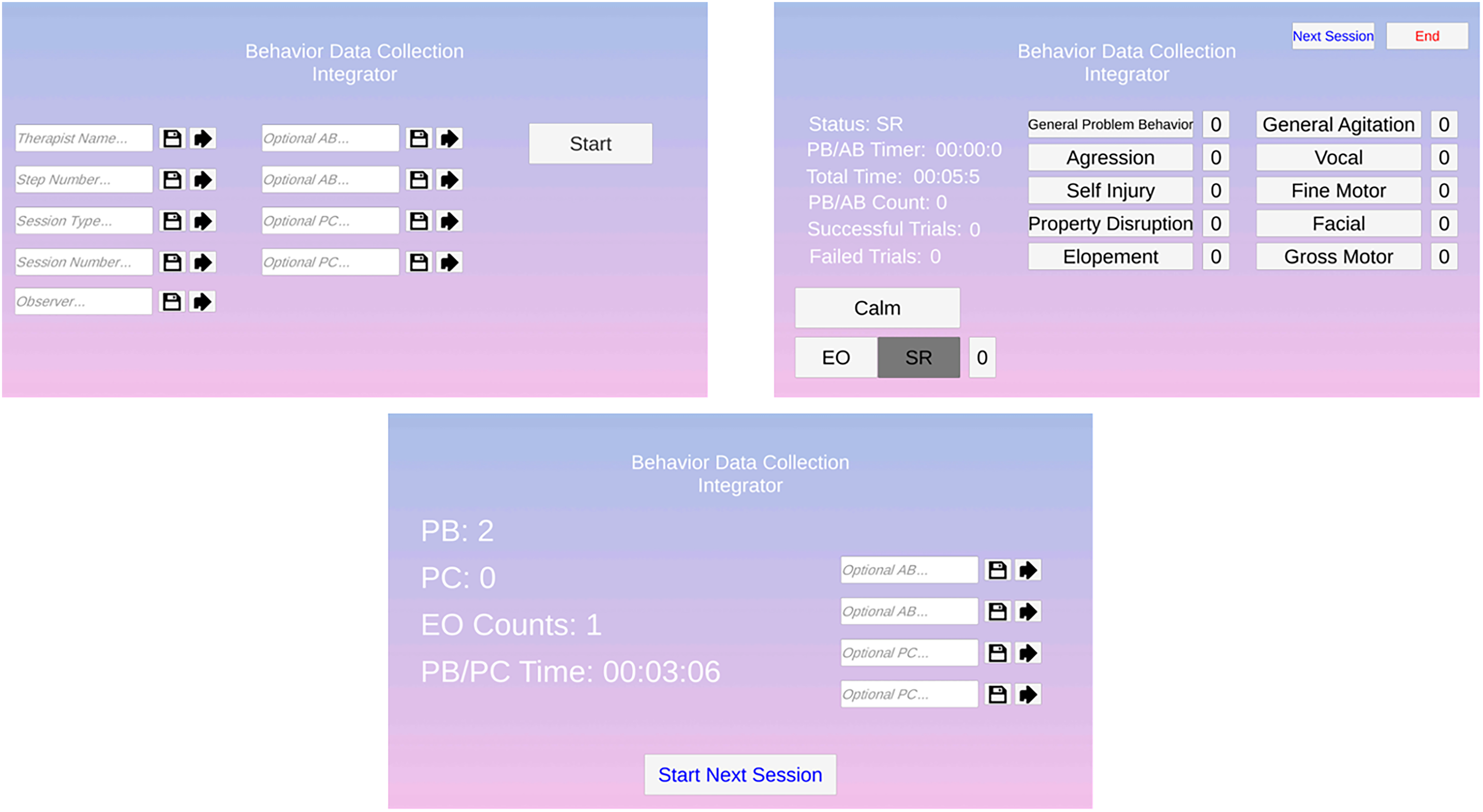

We modified an application developed in a prior study that allows for behavior annotation using a reactive and responsive cross-platform application. 58 The application has three pages: initialization, assessment, and summary (Figure 2). The initialization page allows for the BCBA to input participant information, therapist information, assessment number, and assessment type (i.e. training or testing), as well as unique agitations and challenging behaviors for a specific participant. On the assessment page, the BCBA can record their observations in real time while a JavaScript Object Notation file is produced with timestamped occurrences. Once a session has been completed, the summary page displays information pertinent to BCBAs regarding the session.

Figure 2. Overview of the behavior assessment application, illustrating its three primary pages: initialization (for entering participant and session details), assessment (for real-time observation and data recording), and summary (displaying session insights postassessment).

Procedures

Figure 3 shows the experimental setup with two rooms, located in a clinical space, with a one-way mirror separating them. The participant and a BCBA are in the experimental room while the observers and additional BCBAs are in the observational space. The participant wears an E4 sensor that collects physiological data. Participants are given the choice to wear it on either an ankle or a wrist. The WINGS system, which is a cotton hoodie outfitted with sensors, is worn on the upper body along with a lapel microphone placed in the hoodie. The BCBA in the experimental space also wears a lapel microphone. Both microphones send data to a receiver at a workstation in the observational space, which is also occupied by an engineering researcher, the participants’ primary caregivers, the BCBA ground truth data collector, and a BCBA assessment manager. The BCBA assessment manager observes the assessment and takes input from the caregiver to guide the assessment toward a safe and data-rich outcome. The therapist in the experimental space communicates with the observers via a Bluetooth earbud to ensure the time components of the experimental protocol are correctly executed.

Figure 3. Data collection setup, showing two connected spaces: the experimental room for participants and BCBAs, equipped with sensors, and the observational room for the research team and caregivers, with equipment for monitoring and communication.

Following a multielement design for single-subject research, the data collection experiment had both test (T) sessions, which alternated between EO and SR conditions, and control (C) sessions, which maintained SR conditions throughout. The typical session followed a Control-Test-Control-Test-Test (CTCTT) structure; however, this was occasionally modified during the assessment at the BCBA's discretion. During EO presentations, the BCBA attempted to evoke agitation using conditions reported by the caregivers to lead to behavioral escalation. Commonly reported stimuli to evoke agitation included requesting the completion of homework assignments, removing preferred toys or electronics, and withdrawing preferred social attention. Following EO presentations in which agitation behaviors were witnessed, the BCBA provided preferred attention and items/activities for at least 90 s to allow for de-escalation during SR conditions. To ensure the participant had returned to a calm state (i.e. lack of agitation or challenging behavior), the caregiver provided feedback to both BCBAs. Figure 4 provides the structure of the experimental procedure.

Overview of the experimental procedure followed by BCBAs: a CTCTT sequence of control and test sessions, adaptable at the BCBA's discretion. The procedure includes evoking agitations under specific conditions and implementing de-escalation strategies, with caregiver feedback confirming participant calmness.

Caregivers observed participants throughout each session and provided input on participant comfort and safety, as well as real-time feedback on the application of procedures intended to evoke escalation or calm states in the participant. Importantly, the goal was to develop a prediction model not for challenging behavior itself, but for agitations based on caregiver identification of signs of behavior escalation. That is, the goal was not to evoke unsafe behavior or prolong participant distress. Notably, no parent or participant requested to stop a session, underscoring the effectiveness of our approach in maintaining participant comfort and safety throughout the study.

Analysis

The predictive framework used Assessment 1 data to train an ML model and Assessment 2 data for real-time testing and evaluation. During the assessments, the observing BCBA used the BDCI to note occurrences of agitations. This created a log of agitation episodes with their corresponding timestamps, which we used as ground-truth labels when training and evaluating the ML model. The following sections explain the steps the framework follows, beginning with synchronizing and preprocessing the multimodal data.

Preprocessing

For our ML model, we used the following multimodal data inputs: physiological (E4) and behavioral (WINGS and microphone). Because the sampling rate varies across modalities, as discussed in the Materials section, the collected data needed to be synchronized. In addition, features needed to be extracted to train the ML model. Features were extracted by applying a rolling window to the physiological, audio, and WINGS data after synchronization. The rolling window extracted the mean, minimum, and maximum values within the specified window. We chose these features based on our previous work 41 as well as other published literature in the field21,37,59 that showed potential for these features to capture challenging behaviors. MFCCs were extracted from the audio modality. Table 1 shows the features extracted and their units. Table 2 details the length of each assessment and the number of agitations displayed in each assessment across the three participants.
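As an illustration of the synchronization and rolling-window steps described above, the following is a minimal sketch rather than the study's actual pipeline. It assumes pandas, two synthetic streams sampled at 1 Hz and 2 Hz, a shared 1-s grid, and a trailing 3-sample window; the names `rolling_features`, `hr`, and `gsr`, and all values, are hypothetical.

```python
import numpy as np
import pandas as pd


def rolling_features(signal: pd.Series, window: int) -> pd.DataFrame:
    """Mean, minimum, and maximum of `signal` over a trailing window of `window` samples."""
    roll = signal.rolling(window=window, min_periods=1)
    return pd.DataFrame({
        f"{signal.name}_mean": roll.mean(),
        f"{signal.name}_min": roll.min(),
        f"{signal.name}_max": roll.max(),
    })


# Two hypothetical streams sampled at different rates (all values are synthetic).
hr = pd.Series(
    [70.0, 72.0, 75.0, 80.0, 78.0, 76.0],
    index=pd.to_timedelta(np.arange(6), unit="s"),         # 1 Hz
    name="hr",
)
gsr = pd.Series(
    np.linspace(0.10, 0.32, 12),
    index=pd.to_timedelta(np.arange(12) * 0.5, unit="s"),  # 2 Hz
    name="gsr",
)

# Synchronize: resample both streams onto a shared 1-second grid.
one_second = pd.Timedelta(seconds=1)
synced = pd.concat(
    [hr.resample(one_second).mean(), gsr.resample(one_second).mean()], axis=1
).dropna()

# Extract rolling features with a 3-sample (here, 3-second) trailing window.
features = pd.concat(
    [rolling_features(synced[col], window=3) for col in synced.columns], axis=1
)
print(features)
```

The window length and the common grid are free parameters in this sketch; the text above does not state the values used in the study.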

Features extracted for the machine learning model derived from physiological, audio, and WINGS data.

MFCC: mel frequency cepstral coefficient; WING: wearable intelligent noninvasive gesture sensor.

Duration of each assessment and the frequency of agitations observed for each of the three participant case studies.

ML model and real-time prediction

In our ML analysis, we evaluated 32 ML models for proactive real-time prediction, including Logistic Regression, AdaBoost Classifier, and Ridge Classifier. Each model was subjected to Bayesian optimization to determine the most effective hyperparameters by maximizing the F1 score, thereby ensuring optimal performance for each participant. Bayesian optimization is a powerful strategy for hyperparameter tuning, particularly effective in high-dimensional spaces. It works by constructing a probabilistic model that maps hyperparameters to a probability of a score on the objective function. This method iteratively updates the model with results from previous evaluations, using these insights to choose the next set of hyperparameters to evaluate. The models were implemented in Python using Scikit-Learn. 60 The optimization approach also included determining the ideal window length for our labels, acknowledging that agitations occur over a period rather than instantaneously; they start seconds before their annotation and last for a few seconds afterward. An example of a 30-s window is shown in Figure 5.

Example of applying a 30-s window to agitation labels, reflecting the duration over which agitations typically unfold.
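The label-windowing idea can be sketched as follows. This is an illustrative example only: it assumes one label per second and a window centered on each annotated timestamp, whereas the exact placement of the window relative to the annotation is not specified in the text. The function name `window_labels` and the event times are hypothetical.

```python
import numpy as np


def window_labels(n_seconds, event_times, window_s):
    """Return one 0/1 label per second, set to 1 within window_s/2 of each annotated event."""
    labels = np.zeros(n_seconds, dtype=int)
    half = window_s // 2
    for t in event_times:
        start = max(0, t - half)                 # clip at the start of the session
        stop = min(n_seconds, t + half + 1)      # clip at the end of the session
        labels[start:stop] = 1
    return labels


# A synthetic 120-s session with agitations annotated at 40 s and 100 s.
labels = window_labels(n_seconds=120, event_times=[40, 100], window_s=30)
print(np.flatnonzero(labels))
```

Treating the window length as a tunable parameter, as described above, amounts to regenerating these labels for each candidate `window_s` during optimization.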

After a thorough evaluation, we chose the AdaBoost Classifier as our predictive model. Adaptive Boosting is an ensemble learning technique that works by combining multiple weak learners, typically decision trees, to create a robust classifier. Each successive model in the AdaBoost sequence focuses on the instances that were incorrectly predicted by the previous model, thereby continuously improving its performance. We trained the AdaBoost Classifier to predict agitation behaviors using the preprocessed data collected in Assessment 1. Once the model was trained with Assessment 1's data, Assessment 2's data were preprocessed to make real-time predictions by feeding the data into the model as if the data were coming from an experimental session as a real-time data stream. An additional step was taken to process the predictions made on Assessment 2's data before evaluating the performance of the model. Since an agitation is not an instantaneous event, we decided that if our model predicts an agitation within the identified window around an agitation, then the remainder of the band following the correct prediction should also be marked as correct as shown in Figure 6.

Example of how predictions were refined to account for the duration of agitation events, with a focus on marking the extended period following a correctly predicted agitation as accurate.
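One possible reading of this refinement step is sketched below. It assumes binary per-sample labels and predictions and treats each contiguous run of positive ground-truth labels as one band; the function name `fill_after_first_hit` and the toy sequences are hypothetical, and the study's implementation may differ.

```python
def fill_after_first_hit(y_true, y_pred):
    """Within each contiguous agitation band in y_true, mark every sample after
    the first positive prediction as positive. Samples outside bands are unchanged."""
    out = list(y_pred)
    hit = False
    for i, (t, p) in enumerate(zip(y_true, y_pred)):
        if t == 0:
            hit = False      # outside a band: reset for the next band
        elif p == 1:
            hit = True       # a correct prediction inside the band
        elif hit:
            out[i] = 1       # fill the remainder of the band
    return out


y_true = [0, 0, 1, 1, 1, 1, 1, 0, 0, 1, 1, 1, 0]
y_pred = [0, 1, 0, 1, 0, 0, 1, 0, 0, 0, 0, 0, 0]
refined = fill_after_first_hit(y_true, y_pred)
print(refined)  # [0, 1, 0, 1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
```

In this sketch, samples before the first correct prediction in a band remain misses, false positives outside a band are left untouched, and a band with no positive prediction stays entirely missed.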

Model analysis

We evaluated the performance of our model using Assessment 2 data. Data from Assessment 2 were collected and fed into the model trained on Assessment 1 data as a real-time data stream to simulate prediction capability. To do so, we implemented six time shifts of 5 s, 10 s, 15 s, 20 s, 25 s, and 30 s to see how far in advance agitations can be predicted. Considering the specific requirements of our study, we emphasized the importance of the F1 score as a key metric in our model evaluation as shown in equation (4). It is vital in situations like ours where both false negatives and false positives carry significant consequences as our predictions need to be trusted. Missing an agitation (false negative) could potentially lead to challenging behaviors, and inaccurately predicting an agitation (false positive) could lead to mistrust in the model. This approach ensures that the model is not only sensitive to detecting agitations but also maintains a low rate of false predictions, striking a balance for effective and reliable predictions.
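A time shift of this kind could be simulated as in the sketch below. It assumes the shift is applied by pairing the features at time t with the label observed at t plus the shift, and that there is one label per second; the text does not state how the shift was implemented, so this is one plausible reading. The function name `shift_labels_earlier` and the data are hypothetical.

```python
import numpy as np


def shift_labels_earlier(labels, shift_s, rate_hz=1):
    """Pair each sample at time t with the label observed at t + shift_s."""
    labels = np.asarray(labels)
    k = shift_s * rate_hz
    if k == 0:
        return labels.copy()
    shifted = np.zeros_like(labels)
    shifted[:-k] = labels[k:]    # the last k samples have no future label and stay 0
    return shifted


labels = np.zeros(60, dtype=int)
labels[40:50] = 1                # synthetic agitation band from 40 s to 49 s

# One shifted label vector per prediction distance, including 0 s (time of agitation).
shifted = {s: shift_labels_earlier(labels, s) for s in (0, 5, 10, 15, 20, 25, 30)}
print(np.flatnonzero(shifted[20]))
```

Under this assumption, scoring the model against `shifted[20]` asks whether the signals 20 s before an agitation already look like agitation.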

While recall, precision, and accuracy are important during analysis, they are not the primary metrics in this study. High recall ensures minimal missed detections of agitation precursors. However, recall alone could increase the propensity for false positives, which could undermine confidence in the model. Precision is valuable in confirming the relevance of detected behaviors, but relying on it exclusively does not capture the model's ability to consistently identify all relevant agitation precursors. Accuracy, reflecting the overall rate of correct predictions, does not always present a clear picture, especially in datasets where agitation events are sparse compared to nonagitation events.
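The trade-offs described above can be made concrete with the standard definitions of precision, recall, F1, and accuracy. The sketch below applies them to a hypothetical sparse dataset of 1000 one-second samples containing 50 agitation samples; the counts are invented for illustration and are not results from this study.

```python
def binary_metrics(tp, fp, fn, tn):
    """Standard precision, recall, F1, and accuracy from confusion-matrix counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    return {"precision": precision, "recall": recall, "f1": f1, "accuracy": accuracy}


# Hypothetical classifiers on 1000 samples, 50 of which are agitation.
always_calm = binary_metrics(tp=0, fp=0, fn=50, tn=950)     # never predicts agitation
always_alert = binary_metrics(tp=50, fp=950, fn=0, tn=0)    # always predicts agitation
balanced = binary_metrics(tp=40, fp=10, fn=10, tn=940)      # misses some, few false alarms

for name, m in [("always_calm", always_calm), ("always_alert", always_alert), ("balanced", balanced)]:
    print(name, {k: round(v, 3) for k, v in m.items()})
```

In this example the classifier that never predicts agitation reaches 95% accuracy with an F1 of 0, and the one that always predicts agitation reaches perfect recall with 5% precision; only the balanced classifier scores well on F1.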

Results

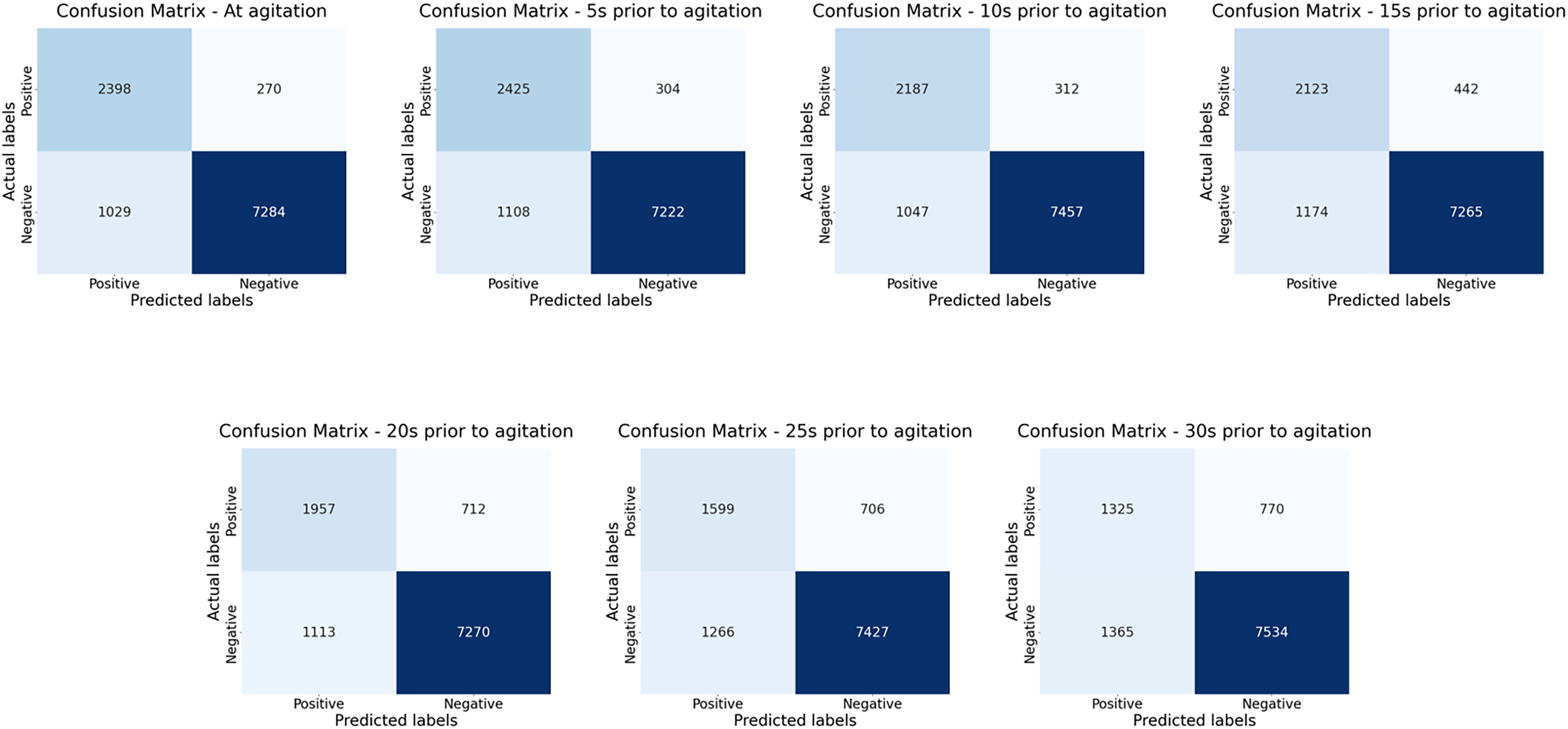

In our analysis, we evaluated numerous ML models. Table 3 presents the top four algorithms we identified based on performance, detailing the F1 score, recall, and accuracy metrics, as well as the optimized hyperparameters for each. Among these, AdaBoost Classifier consistently demonstrated exceptional performance across the metrics, leading us to adopt it as our primary classifier for further analyses. Figure 7 displays the confusion matrices for the AdaBoost Classifier.

Confusion matrices displaying the true positives, true negatives, false positives, and false negatives for the AdaBoost model used in agitation prediction. Each matrix corresponds to a different prediction distance from the agitation.

Performance evaluation of the top four machine learning algorithms, presenting F1 score, recall, and accuracy metrics, complemented by their respective optimized hyperparameters.

Figure 8 shows the metrics for each participant with different prediction distances. In general, we would expect the scores to drop as predictions are made further in advance of the agitation until a certain threshold. Across all three experiments, the scores began to drop below an acceptable performance when making predictions more than 20 s in advance. Our results showed an average F1 score across the three participants of 78.69% at the time of agitation, 77.45% at 5 s prior, 76.30% at 10 s prior, 72.43% at 15 s prior, 68.20% at 20 s prior, 61.86% at 25 s prior, and 55.38% at 30 s prior.

Comparative metrics across participants focusing on the change in performance metrics as predictions are made increasingly earlier before the agitation occurs.

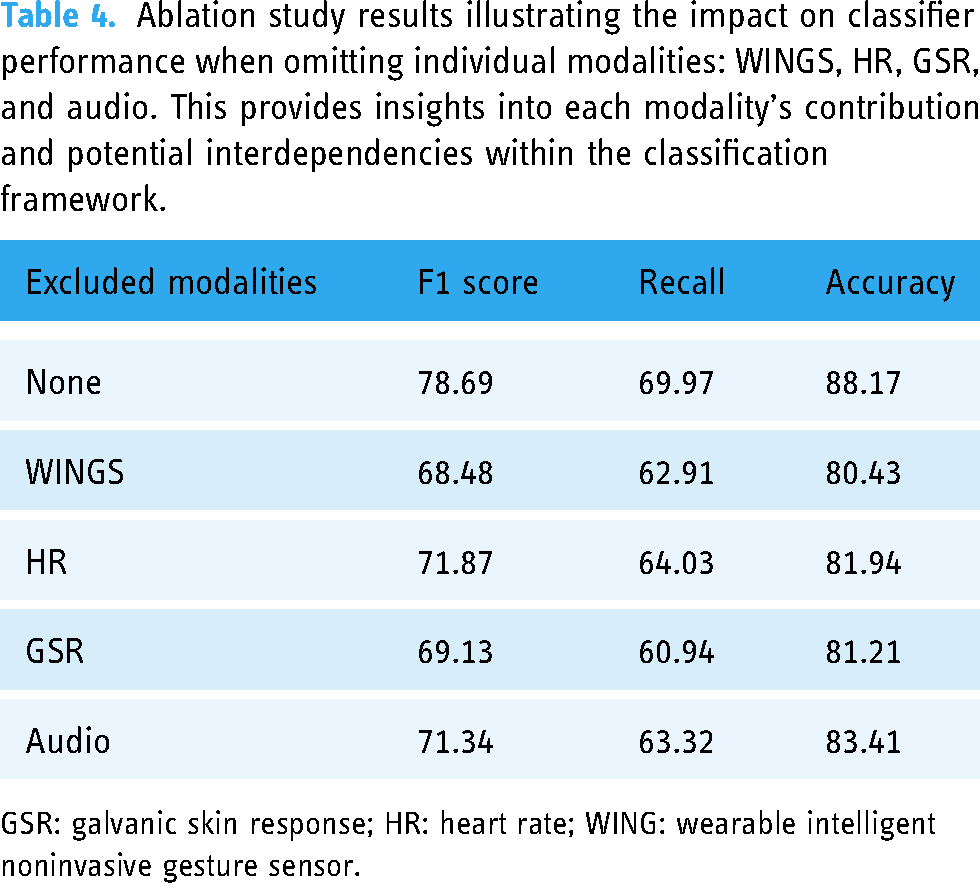

Additionally, we conducted an ablation study focusing on predictions at the time of agitations to gauge the significance of individual modalities on the overall performance of our classification model. In this study, we sequentially omitted one of the following modalities: WINGS, HR, GSR, and audio. The objective was to discern the distinct contributions of each modality to the classifier’s accuracy and probe for potential dependencies or redundancies. The outcomes of this ablation assessment provided valuable insights into the hierarchical significance of these modalities within our classification framework, as delineated in Table 4.

Ablation study results illustrating the impact on classifier performance when omitting individual modalities: WINGS, HR, GSR, and audio. This provides insights into each modality's contribution and potential interdependencies within the classification framework.

GSR: galvanic skin response; HR: heart rate; WING: wearable intelligent noninvasive gesture sensor.
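The leave-one-modality-out procedure can be sketched with scikit-learn as follows. This is an illustrative example on synthetic data, not the study's code: the mapping of feature columns to modalities, the data, the train/test split, and the hyperparameters are all assumptions, and the helper `fit_and_score` is hypothetical.

```python
import numpy as np
from sklearn.ensemble import AdaBoostClassifier
from sklearn.metrics import f1_score

rng = np.random.default_rng(0)

# Hypothetical column layout: which feature columns belong to which modality.
modalities = {"WINGS": [0, 1], "HR": [2], "GSR": [3], "audio": [4, 5]}

# Synthetic stand-in data; the first 300 rows play the role of Assessment 1
# (training) and the remaining 100 rows the role of Assessment 2 (testing).
n = 400
y = rng.integers(0, 2, size=n)
X = rng.normal(size=(n, 6)) + y[:, None] * np.array([1.5, 1.0, 0.3, 1.0, 0.8, 0.5])
X_train, y_train, X_test, y_test = X[:300], y[:300], X[300:], y[300:]


def fit_and_score(columns):
    """Train AdaBoost on the given feature columns and return the test F1 score."""
    model = AdaBoostClassifier(n_estimators=50, random_state=0)
    model.fit(X_train[:, columns], y_train)
    return f1_score(y_test, model.predict(X_test[:, columns]))


all_columns = sorted(c for cols in modalities.values() for c in cols)
scores = {"all": fit_and_score(all_columns)}
for name, cols in modalities.items():
    kept = [c for c in all_columns if c not in cols]   # omit one modality at a time
    scores[f"without {name}"] = fit_and_score(kept)

for name, score in scores.items():
    print(f"{name}: F1 = {score:.3f}")
```

Comparing each "without" score against the "all" score gives a rough indication of how much the classifier depends on that modality, which is the comparison Table 4 reports for the real data.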

In our commitment to advancing scientific knowledge and supporting reproducibility, both the code and the dataset used in this study are available upon request through Vanderbilt University, via the following link: https://lab.vanderbilt.edu/rasl/dataset-and-or-code-request/.

Discussion

In our work, we employed ML and affective computing techniques to proactively identify agitations in children with IDD, as reported by caregivers. This approach is based on the understanding that these agitations, if recognized promptly, could help in preventing their escalation into challenging behaviors.

We build upon existing work to demonstrate several promising technological advancements. First, we advanced the capabilities of our previous wearable system 41 by integrating the capacity for proactive real-time prediction, enabling BCBAs to adapt their interventions before participants’ behavior escalates. Additionally, we expanded our multimodal data capture to include audio, to integrate vocalizations. This led to more robust agitation detection than achieved in our previous study. We also expanded upon our data capture application to include customizable, BCBA-defined agitations for child-specific annotation. Each of these achievements represents promising steps toward wearable systems capable of multimodal data capture and agitation detection within community settings.

Our preliminary trial with three participants highlighted the predictive value of our ML model. We were able to predict agitation based on physiological, body movement behavior, and vocalization behavior data captured through various sensors. Our sensors accurately captured subtle signals such as HR and electrodermal activity, which are imperceptible to the observing BCBA. Sensors also captured joint angles of the upper body and vocalizations, both of which were described as important reflections of agitations by participants’ caregivers. Body motions such as hand flapping and body rocking were recognized in other studies to detect agitation and self-injurious behaviors in children with ASD.28–30 Nonlexical vocal sounds and vocalizations have been captured to recognize at least 24 kinds of emotion. 53 Our work incorporates multimodal data through joint angles for body motion detection and vocalization for emotion to generate robust agitation detection models.

We found that as participants approached an occurrence of agitation, the signals we captured varied from their calm state. In this work, our algorithm capitalized on that deviation to determine that agitation was likely. Each participant had an individualized model trained during the first visit. These models were tested with data from the second visit. It was shown that agitation can be reliably predicted with an average F1 score of 68.20% at 20 s prior to the agitation behavior. While the predictive performance decreases as the prediction window extends further into the future, this is an expected tradeoff. The ability to provide earlier warnings, even with somewhat reduced performance, is a significant advantage, offering caregivers the opportunity to take preventative measures. Some forms of agitation are more readily detectable than others. For instance, behaviors like fist banging present clearer signals for the model, whereas more subtle actions such as making a fist pose a greater challenge. Although these variations influence the overall predictive scores, the results are still promising. Despite the variation in detectability, the models maintain a significant capacity to predict crucial signs of agitation, providing valuable insights for caregivers.

In our ablation study, we examined the distinct contributions of each modality by selectively omitting them and observing the resultant classification performance. The removal of WINGS led to discernible reductions in all metrics, underscoring its central significance. When we removed HR and GSR modalities, distinct outcomes emerged. Excluding HR resulted in a significant but less pronounced drop than the other modalities, highlighting its auxiliary role in the classification. In contrast, the omission of GSR led to performance metrics closely mirroring those seen with the removal of WINGS, elucidating its integral contribution. Notably, when audio data was excluded, the drop in metrics was more substantial than that observed with HR, emphasizing audio's pivotal role in the classification process. These results accentuate the individual and collective relevance of the modalities in enhancing classification outcomes.

To our knowledge, our study is the first to employ multimodal data capture and ML for the specific purpose of early-stage agitation prediction, shifting the focus from behaviors that have already escalated. Other teams have predicted imminent aggression (escalated behavior) one minute before it occurs. 24 We take this concept further by focusing on early signs of agitation, as identified by caregivers, thereby reducing risk and providing valuable time for preventative measures before behaviors escalate to challenging levels. Specifically, our goal is to maximize utility and participant safety during behavior assessment approaches that can place individuals at significant risk of harm. An important focus of ongoing work is evaluation not only of efficacy, but also of safety, tolerability, and acceptability to future potential users, including youths with challenging behaviors.

Building on the capability to proactively detect agitations, the next progression in behavioral analysis is the identification of precursors to problem behaviors. While agitation indicates a general state of distress, often identified by caregivers, it is the precursors that bear a closer relation to the challenging behaviors themselves. These precursors are the final observable behaviors before a challenging behavior occurs, serving as a critical alert to imminent escalation. By focusing on these precursors, caregivers and clinicians are afforded crucial additional time to implement targeted interventions aimed at preventing the challenging behaviors from occurring. 21 Within this context, our model has the potential to be an asset in FA and practical functional assessment (PFA). FA is a methodical approach for identifying the causes and functions of challenging behaviors through observation and manipulation of environmental variables for the purpose of treating challenging behavior. PFA, a novel set of FA procedures, streamlines this process by focusing on the immediate precursors to challenging behavior, thus minimizing the occurrence of challenging behaviors during the assessment. As we continue to refine our model, we anticipate it could play a critical role in the future of behavior assessments, offering a more proactive and less intrusive means to support individuals with IDD and their caregivers.

Ethical considerations

Introducing ML into clinical settings can open the door to privacy and security concerns. The REACT system involves the collection of sensitive behavioral data. Ensuring the privacy and security of this data is of utmost importance. Therefore, data collection and storage policies were clearly established, and any applications were encrypted to ensure the protection of privacy for participants.

An additional issue is ensuring informed consent and assent. Many individuals with IDD receiving services are nonspeaking, making it difficult to explain the wearable sensors and ensure comfort during data collection. Therefore, we set clear guidelines to ensure no one was forced to wear sensors against their will, to confirm understanding of the system by guardians, to respect the autonomy of the individuals, and to guarantee complete transparency when including ML in behavioral therapy.

ML is highly susceptible to bias. When developing algorithms, this is an important consideration especially when utilizing group models. One way to avoid this is to always utilize individualized ML models for agitation prediction as we did in this study. However, this is not always possible as training a model requires large amounts of data. When group models are utilized, it is essential to ensure equal representation across race, gender, and ethnicity to reduce bias in ML. In the application of predicting agitations, models must be trained on data representative of varying types of agitations as they are individual-specific.

The most crucial ethical consideration when introducing ML into a clinical setting is to acknowledge that while ML can offer valuable insights, it is not infallible and may occasionally generate errors or inaccurate recommendations. ML should be utilized to inform and aid clinicians in decision-making, not replace them. Therefore, it is essential for clinicians to exercise caution and never solely rely on ML without critical evaluation and human judgment.

It is crucial to emphasize that the REACT system is designed to augment, not replace, the human elements of care and intervention strategies. The application of ML in this context is intended to support BCBAs and caregivers by providing additional tools for understanding and responding to the needs of individuals with IDD, rather than diminishing the caregiver's role or the individual's autonomy. To ensure that technology is used respectfully and ethically, the REACT system must be integrated into care strategies in a way that promotes collaboration between the technology and human caregivers. This approach fosters a balance between technological and human insights, ensuring that interventions remain person-centered. Additionally, continuous training for caregivers on the ethical use of this technology and ongoing dialogue with stakeholders about its impact are vital in maintaining a humane approach to behavioral management. These steps help safeguard against any potential depersonalization and ensure that technology serves as an aid, enhancing the ability to deliver compassionate and individualized care.

Furthermore, incorporating these technologies into behavioral interventions not only augments the capabilities of BCBAs but also promotes user autonomy and supports self-advocacy and self-determination among individuals with disabilities. By providing real-time data and predictive insights, these tools could empower individuals to better understand and communicate their own emotional and physiological states, fostering a greater sense of control over their environment and interactions. This shift toward technology-enhanced, personalized interventions aligns with contemporary goals in disability rights, emphasizing respect for individual agency, and the enabling of people with disabilities to lead more self-directed lives.

Limitations and future directions

Several limitations exist that warrant attention in future work. Individualized models were developed in our current study to account for the unique characteristics and variations in physiological and behavioral signals, as well as agitations, among participants. This personalized approach allowed us to tailor the predictive model to each individual's specific needs. However, as we continue our research, we envision moving toward the development of group models. By working with larger participant cohorts, we aim to explore the potential for building models that can generalize across individuals while still accommodating individual differences. The development of group models will enhance the scalability and broader application of our approach, facilitating more widespread implementation and benefiting a larger population of individuals with IDD.

We focused on creating individualized models in this initial investigative work to assess the feasibility of the presented approach. Although the results are promising, more participants will be required to generalize the findings of our approach across a broader spectrum of the IDD population. However, the insights gained from this limited cohort are critical for initial model testing, providing a foundational understanding for subsequent larger-scale studies. Future research will aim to include a larger number of participants, which will not only validate and potentially enhance the accuracy of our predictive models but also ensure that our findings are more broadly applicable and representative of the diverse needs and conditions within the IDD community.

Our main goal during the assessments is to safely collect information about the agitations most prevalent for a child without pushing them toward challenging behavior. While this ensures safety, it limits how many occurrences of agitations can be evoked during assessments, leading to a paucity of data for each agitation. Therefore, in future iterations of this work, longer assessments or multiple assessments, particularly those already being conducted as behavior standards of care in the community, are required to collect more thorough training data.

Additionally, to reduce excessive stimulus from wires and electronics, the next version of WINGS will be wireless, and the clothing that holds WINGS needs to be purpose-built to be comfortable for children with various sensory profiles, as determined through stakeholder input. We also found that the wristband used to capture physiological signals was prone to occasional loss of connectivity, dropping of data packets, and stagnant signals despite the high cost, warranting the development of more reliable wearable physiological sensors. To make REACT portable, data collection and prediction need to be moved from computer-based to smartphone-based.

In this study, formal feedback from participants or their caregivers was not systematically collected as part of the methodology. However, it is important to note that during the study period, no adverse comments or concerns were raised by either caregivers or participants regarding the use of the technology. Recognizing the value of such feedback in evaluating the impact and acceptance of assistive technologies, future work will include a more structured feedback collection process. This will enable us to better understand the reception of the technology by the disability community and to ensure that it not only meets functional expectations but also aligns with the personal and social contexts of the users.

Despite these limitations, our current system can produce a robust ML algorithm for the proactive real-time detection of agitations with multimodal data collected using wearable sensors and a customized tablet for data annotation. In this work, we produced a real-time agitation prediction system to provide BCBAs with valuable intervention time without escalating to challenging behaviors. Technology of this kind could improve the safety and efficiency of treatment protocols by notifying interventionists, caregivers, or, in the future, people with challenging behaviors themselves that behavior escalation is likely, prompting them to use evidence-based interventions for timely deescalation or redirection.

Conclusion

This study demonstrates the efficacy of REACT in proactively predicting early signs of agitation in children with IDD using a multimodal data approach. By integrating ML techniques with sensory data capture from wearable technologies, our model successfully provides real-time, anticipatory alerts that allow caregivers and behavior analysts to intervene before behaviors escalate. The inclusion of multiple data modalities, such as physiological signals, body movements, and vocalizations, enriches the robustness of our predictions, significantly enhancing the predictive value of our model. Furthermore, our ablation study highlights the essential contributions of each modality to the overall performance, emphasizing the need for a comprehensive approach in future designs. As we continue to refine our system, we aim to extend its application to broader community settings and integrate it into existing behavioral analysis frameworks to maximize both participant safety and the effectiveness of interventions. The findings support the use of the REACT model for real-time, proactive detection of agitations in children with IDD. This approach not only improves the accuracy of detecting distress signals that are imperceptible to the human eye but also increases the window for timely intervention before behavioral escalation, thereby enhancing safety, well-being, and inclusion for this vulnerable population.

As development continues, maintaining an ongoing dialogue with the IDD community is crucial to ensure that the technology aligns with the real-world needs and preferences of those it is designed to support.

This collaboration is essential not only for optimizing functionality and effectiveness but also for reinforcing ethical standards that protect and empower the community of individuals with IDD. Through these efforts, technological advancements in behavioral analysis can continue to promote inclusion, respect, and empowerment for all individuals.

Footnotes

Acknowledgments

We thank Dr. Adithyan Rajaraman (Vanderbilt University Medical Center, Treatment and Research Institute for Autism Spectrum Disorders) for providing comments on the manuscript. We thank Lauren Shibley, BCBA, David Reichley, BCBA, and Amy Swanson, M.A. (Vanderbilt University Medical Center, Treatment and Research Institute for Autism Spectrum Disorders) for their contributions to these pilot experimental sessions. Additionally, we would like to thank all the children and their families for participating in the study.

Contributions

NK: methodology, analysis, investigation, data curation. AP: methodology, analysis, investigation, data curation. ZZ: conceptualization, investigation, funding acquisition. DA: analysis. JS: conceptualization, investigation. AW: conceptualization, supervision, project administration, funding acquisition. NS: conceptualization, resources, supervision, project administration, funding acquisition.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical approval

This study was approved by the Vanderbilt Institutional Review Board (211846), and written informed consent was obtained from the legally authorized representatives of all participants.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Science Foundation (grant number 2124002).

Guarantor

NK