Abstract

This study aimed to assess drug–drug interaction alert interfaces and to examine the relationship between compliance with human factors principles and user preferences of alerts. Three reviewers independently evaluated drug–drug interaction alert interfaces in seven electronic systems using the Instrument-for-Evaluating-Human-Factors-Principles-in-Medication-Related-Decision-Support-Alerts (I-MeDeSA). Fifty-three doctors and pharmacists completed a survey to rate the alert interfaces from best to worst and reported on liked and disliked features. Human factors compliance and user preferences of alerts were compared. Statistical analysis revealed no significant association between I-MeDeSA scores and user preferences. However, the strengths and weaknesses of drug–drug interaction alerts from users’ perspectives were in line with the human factors constructs evaluated by the I-MeDeSA. The I-MeDeSA, in its current form, is unable to identify alerts that are preferred by users. The design principles assessed by the I-MeDeSA appear to be sound, but its arbitrary allocation of points to each human factors construct may not reflect the relative importance that end-users place on different aspects of alert design.

Introduction

Drug–drug interactions (DDIs) are a major cause of adverse drug events, which result in significant patient morbidity and mortality, leading to increased healthcare costs.1–4 Electronic DDI alerts have been implemented as a form of clinical decision support in most modern electronic prescribing and dispensing systems. Such alerts serve to warn healthcare professionals of potentially harmful DDIs during the medication prescribing or dispensing process.5 However, previous studies have found that users override most DDI alerts (i.e. bypass alerts without following alert recommendations),6–8 with override rates of up to 95% reported by some studies.7–10

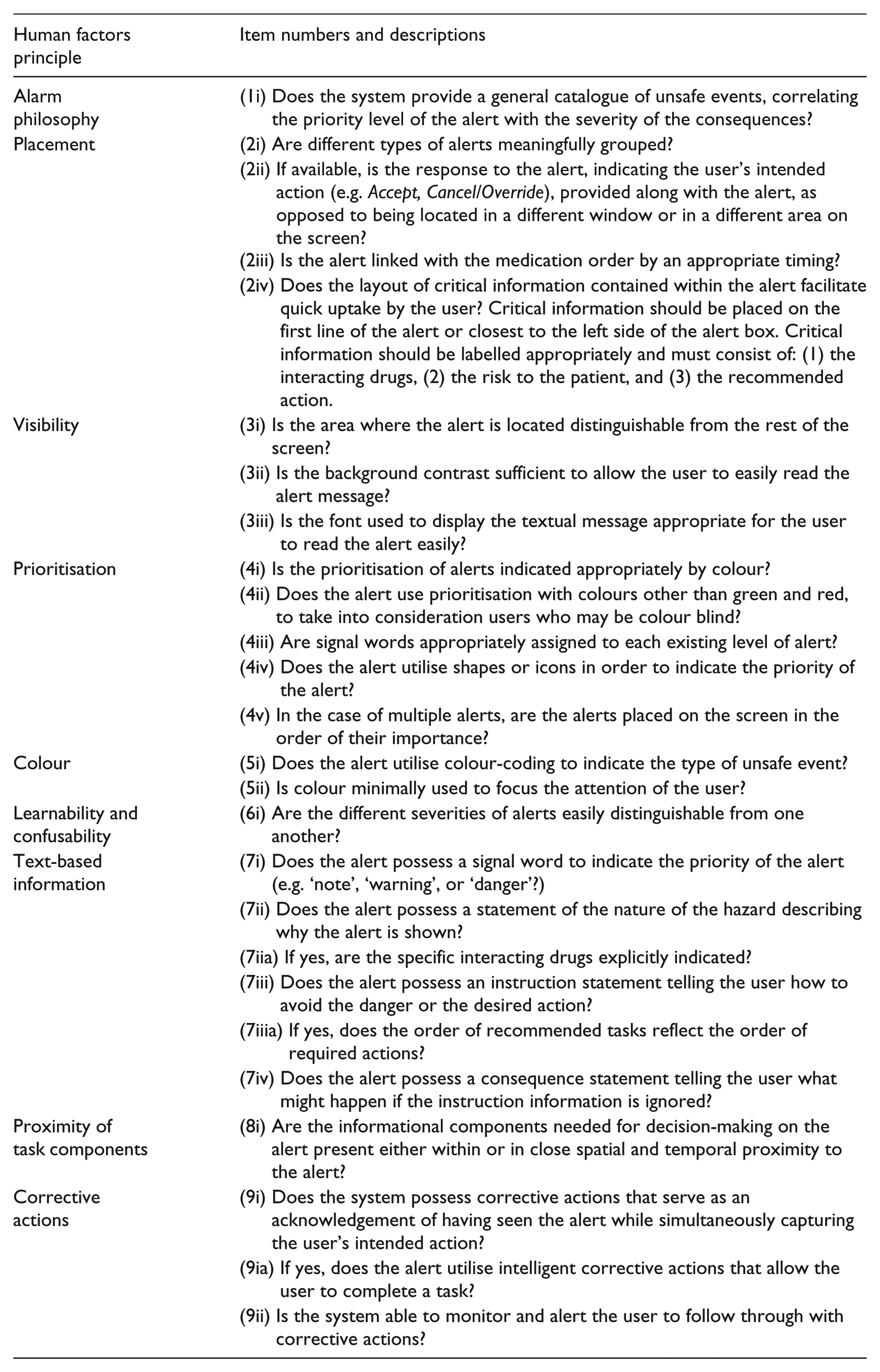

Numerous factors can affect DDI alert acceptance, including the specificity and sensitivity of alerts, the number of alerts, and alert design. This article focuses on alert design, as poor alert design is a frequent complaint among users.11–14 Alert design encompasses multiple aspects of alert implementation, including the process of generating and classifying alerts, the appearance of alerts (user interface design, including content, layout, etc.), and how users interact with alerts to accomplish tasks. Recent research has focused on identifying human factors (HF) principles of good DDI alert design to improve user acceptance and increase alert effectiveness.5 HF is the scientific discipline that applies knowledge of human capabilities and limitations to design tasks and systems to promote efficiency, ease-of-use, and error-free performance.5,15 In 2011, the Instrument for Evaluating Human Factors Principles in Medication-Related Decision Support Alerts (I-MeDeSA) was developed to assess compliance of DDI alerts with HF design principles.16 The I-MeDeSA assesses compliance with nine design principles (e.g. visibility, colour, content) using 26 items, with binary scoring (i.e. 1 or 0 indicating a yes or no answer, respectively; see Appendix 1). This tool is primarily aimed at guiding improvement of alert designs and has been used to evaluate DDI alerts of electronic systems in the United States and South Korea.17–19

The I-MeDeSA appears to be the only publicly available tool for evaluating alert design. The validation of the I-MeDeSA involved content validation by three HF experts, pilot testing, and inter-rater reliability testing.16 However, previous studies have identified a number of shortcomings with the tool.18,19 These include the use of conditional scoring (i.e. scoring a one (‘yes’) for some I-MeDeSA items is dependent on scoring a one for a preceding item), ambiguous wording, and items that are irrelevant to some system configurations.
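The conditional scoring described above can be illustrated with a minimal sketch. The parent–child pairs are taken from the ‘If yes’ items in Appendix 1; the function name `score_items` and the example answers are hypothetical and are not part of the instrument or of this study’s data.

```python
# Hypothetical illustration of binary I-MeDeSA scoring with conditional items.
# Item identifiers follow Appendix 1; the answers below are invented.

# Child item -> parent item that must score 1 before the child can score 1.
CONDITIONAL_ON = {"7iia": "7ii", "7iiia": "7iii", "9ia": "9i"}


def score_items(answers):
    """Sum binary item scores, forcing a conditional item to 0 when its parent is 0."""
    total = 0
    for item, value in answers.items():
        parent = CONDITIONAL_ON.get(item)
        if parent is not None and answers.get(parent, 0) == 0:
            value = 0  # a 'yes' cannot be awarded without a 'yes' on the parent item
        total += value
    return total


# Invented answers: 7iia is marked 'yes' but its parent 7ii is 'no'.
example_answers = {"7ii": 0, "7iia": 1, "7iii": 1, "7iiia": 1, "9i": 1, "9ia": 0}
print(score_items(example_answers))
```

In this invented example, item 7iia contributes nothing because 7ii scored zero, which is the dependency that earlier studies identified as a shortcoming.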

One aspect of the I-MeDeSA’s validity that has not been evaluated is its ability to identify alerts that are well accepted by users. If the I-MeDeSA is effective in distinguishing a good HF design from a bad one, we would expect alerts with high I-MeDeSA scores to be preferred by users to those with low I-MeDeSA scores. The current study set out to assess this premise and had two aims: (1) to evaluate HF compliance in DDI alerts across seven electronic systems using the I-MeDeSA and (2) to determine whether a relationship exists between the I-MeDeSA scores obtained and user preferences of these DDI alert interfaces.

Materials and methods

Evaluation of HF compliance using the I-MeDeSA

DDI alerts in seven electronic systems were evaluated. These systems are currently used in Australian hospital and primary care settings, although most were not developed in Australia. Hospital computerised provider order entry (CPOE) systems were Cerner’s PowerChart, DXC Technology’s MedChart, and InterSystems’ TrakCare. Primary care Electronic Medical Record (EMR) systems were Best Practice and Medical Director, and pharmacy dispensing systems were FRED and iPharmacy. These seven systems were chosen for evaluation because they represent some of the most frequently used hospital, primary care, and pharmacy dispensing systems in Australia.

Three reviewers (two HF researchers, M.B. and W.Y.Z., and a medical science honours student, D.L.) undertook multiple site visits to hospitals, clinics, and offices to evaluate the DDI alerts in each system. Hospitals and clinics were chosen based on the system in use, ensuring all seven systems would be evaluated. The researchers approached a contact at each site and asked them to nominate an appropriate person for delivering a demonstration. During visits, experienced users or system administrators provided a ‘walkthrough’ of each system and answered questions relating to system features that could not be determined from the demonstration alone (e.g. whether a catalogue of unsafe events was available to users). During these ‘walkthroughs’, a list of DDIs of differing severity was ‘prescribed’ to generate multiple DDI alerts. The reviewers therefore experienced the entire process of prescribing/dispensing medications, generation of different DDI alerts, and methods of interacting with the alerts. After each visit, the reviewers independently evaluated DDI alerts in each system using the I-MeDeSA on the basis of the information gathered. Once a system had been independently reviewed and scored, any differences in the reviewers’ scores for the system were carefully discussed as a group to reach a consensus score for each item and the final system score. In addition to the live demonstrations of each system, reviewers were provided with screenshots of DDI alerts generated in each system for the user preference survey.

User preference survey

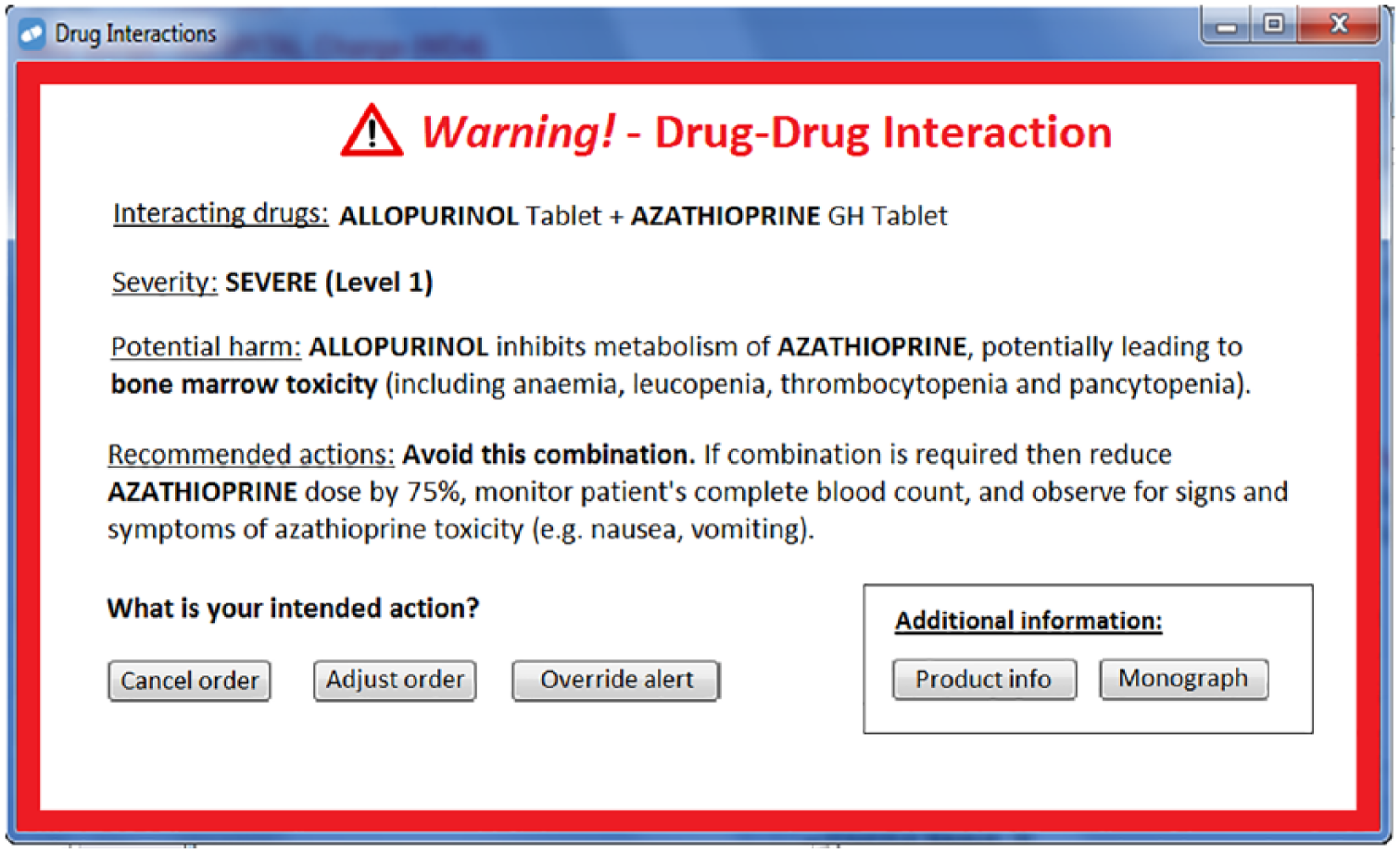

A four-question survey was developed to enable healthcare professionals to rate and comment on the seven DDI alert interfaces evaluated in Part 1 of the study. Basic demographic information was collected, including the electronic systems that the participants had previously used, the number of years they had been using electronic systems, and the average number of alerts they encountered per day while using the electronic systems. The survey then presented screenshots of a major alert involving the interaction between allopurinol and azathioprine taken from each system.

In addition, an eighth ‘mock-up’ screenshot, representing an interface that complies with relevant HF principles in the I-MeDeSA, was designed and included in the survey after evaluation by the three reviewers (Figure 1). No information was displayed in the screenshots that enabled identification of the electronic system or the site of implementation. In the survey, the participants were asked to rank the eight alerts from best to worst using a numerical scale (one indicating best design, eight indicating worst design). The participants were also provided with a comment box to report any liked or disliked features of the alerts. Both online (using Qualtrics™ software) and paper versions of the survey were made available to the participants.

Figure 1. The I-MeDeSA ‘mock-up’ DDI alert interface included in the survey. This interface was designed to comply with the I-MeDeSA items relating to visual and informational aspects of alert design.

Hospital doctors, pharmacists, and general practitioners were invited to take part in the survey. Recruitment of the participants involved dissemination of an online survey via three hospital email mailing lists (two from separate large tertiary teaching hospitals and one from a private hospital, in total > 500 participants). These three hospitals were the same institutions that had been visited by the reviewers for evaluation of alerts (Part 1 of the study). Paper versions of the survey were distributed during a weekly hospital pharmacy meeting (approximately 20 attendees) and a monthly general practitioner meeting (approximately 15 attendees). In total, 53 survey responses were received. This research complied with the (Australian) National Statement on Ethical Conduct in Human Research and was approved by the Human Research Ethics Committees at St Vincent’s Hospital Sydney and Macquarie University. Informed consent was obtained from each participant.

Data analysis

Descriptive statistics were utilised in the analysis and presentation of original I-MeDeSA scores for all systems (out of 26 – with all original I-MeDeSA items included). Krippendorff’s alpha was calculated to determine the inter-rater agreement.
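For readers unfamiliar with Krippendorff’s alpha, the following is a minimal sketch of the coefficient for nominal data, built from a coincidence matrix. It assumes that the binary item scores are treated as nominal categories, which the text does not state; the function name `krippendorff_alpha_nominal` and the example ratings are hypothetical, and the sketch does not reproduce the confidence interval reported in the Results.

```python
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(units):
    """Krippendorff's alpha for nominal ratings.

    `units` is a list of lists; each inner list holds the ratings that the
    reviewers gave to one scored item. Units with fewer than two ratings
    cannot be paired and are skipped.
    """
    coincidences = Counter()
    for ratings in units:
        m = len(ratings)
        if m < 2:
            continue
        # Every ordered pair of ratings within a unit contributes 1 / (m - 1).
        for a, b in permutations(ratings, 2):
            coincidences[(a, b)] += 1 / (m - 1)

    totals = Counter()
    for (a, _b), weight in coincidences.items():
        totals[a] += weight
    n = sum(totals.values())

    observed = sum(w for (a, b), w in coincidences.items() if a != b)
    expected = sum(
        totals[a] * totals[b] for a in totals for b in totals if a != b
    ) / (n - 1)
    if expected == 0:
        raise ValueError("alpha is undefined when only one category is used")
    return 1 - observed / expected


# Invented example: two reviewers, four binary items, agreement on two of them.
example_units = [[0, 0], [1, 1], [0, 1], [1, 0]]
print(krippendorff_alpha_nominal(example_units))
```

An alpha of 1 indicates perfect agreement and 0 indicates agreement no better than chance, so the invented ratings above yield a value close to zero.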

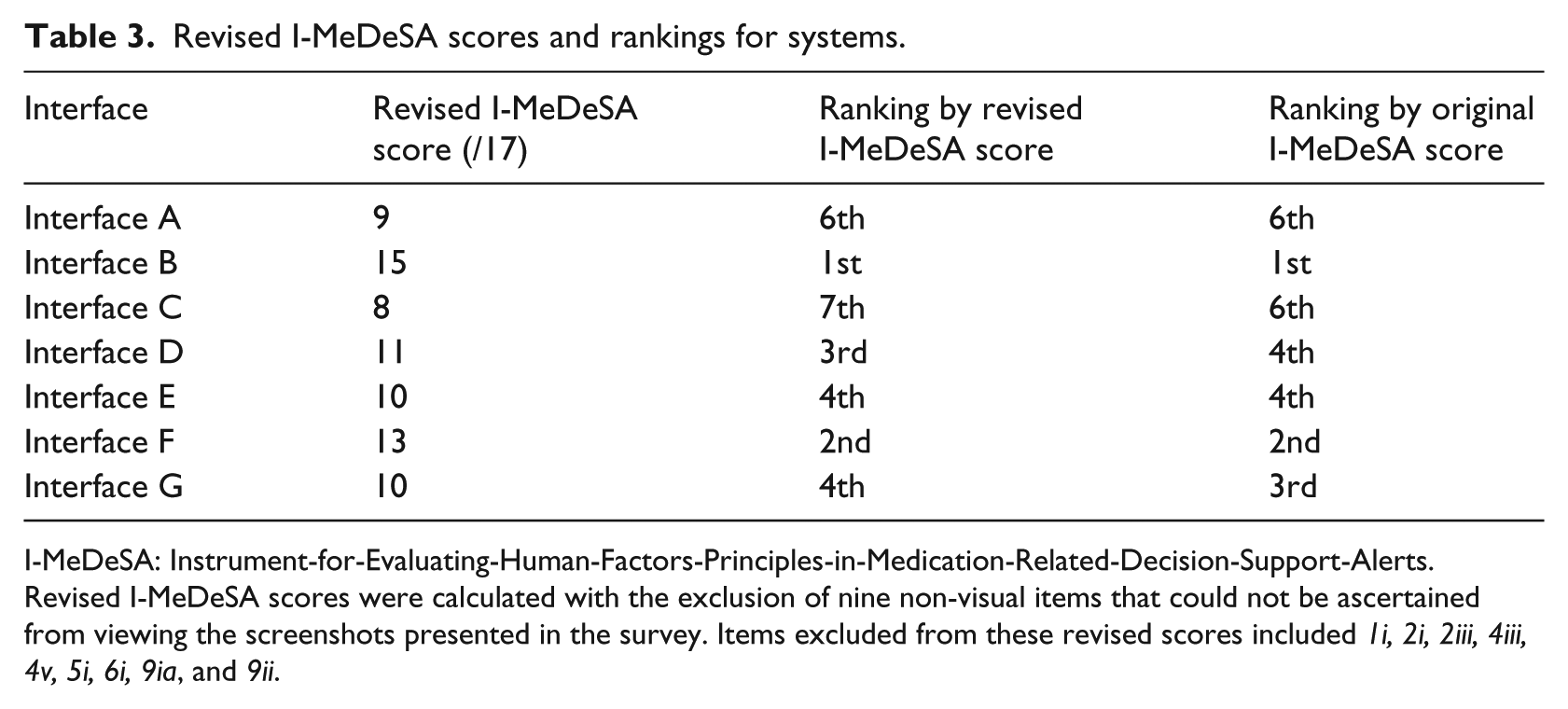

Screenshots taken from the systems were ranked on the basis of average user preferences and compared to rankings of the systems based on revised I-MeDeSA scores (out of 17 – with nine I-MeDeSA items excluded from this revised score). Revised I-MeDeSA scores were calculated by excluding nine I-MeDeSA items that did not relate to the visual aspects of individual alerts and could not be ascertained from viewing the screenshots presented in the survey. The items excluded from these revised scores included 1i, 2i, 2iii, 4iii, 4v, 5i, 6i, 9ia, and 9ii (see Appendix 1).
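The derivation of the revised score can be sketched as follows. The item identifiers and the excluded set come from Appendix 1 and the paragraph above; the function name `revised_score` and the all-‘yes’ example are hypothetical.

```python
# All 26 I-MeDeSA item identifiers, as listed in Appendix 1.
ALL_ITEMS = [
    "1i", "2i", "2ii", "2iii", "2iv", "3i", "3ii", "3iii",
    "4i", "4ii", "4iii", "4iv", "4v", "5i", "5ii", "6i",
    "7i", "7ii", "7iia", "7iii", "7iiia", "7iv", "8i",
    "9i", "9ia", "9ii",
]

# The nine items that could not be ascertained from screenshots.
EXCLUDED = {"1i", "2i", "2iii", "4iii", "4v", "5i", "6i", "9ia", "9ii"}

REVISED_ITEMS = [item for item in ALL_ITEMS if item not in EXCLUDED]


def revised_score(answers):
    """Sum the binary scores of the 17 items retained in the revised score."""
    return sum(answers.get(item, 0) for item in REVISED_ITEMS)


# Hypothetical interface that scores 'yes' on every item.
all_yes = {item: 1 for item in ALL_ITEMS}
print(len(ALL_ITEMS), len(REVISED_ITEMS), revised_score(all_yes))
```

The counts confirm that removing the nine listed items from the 26 original items leaves a maximum revised score of 17.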

IBM® SPSS® 25 Statistics software was used to perform frequency distributions of user preference rankings, as well as a Spearman’s rank-order correlation to determine the strength of the association between the revised I-MeDeSA scores and user preferences. Free-text comments were reviewed to identify common ‘liked’ and ‘disliked’ features.
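As an illustration of the statistic only, Spearman’s rank-order correlation can be sketched as a Pearson correlation computed on ranks, with tied values given their average rank. The analysis in this study was run in SPSS; the function names and the scores below are hypothetical, and the sketch does not compute a p-value.

```python
from math import sqrt


def average_ranks(values):
    """Rank values from 1 (smallest); tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def spearman(x, y):
    """Spearman's rank-order correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Invented data: revised scores (higher is better) and mean user ranks (lower is better).
hypothetical_scores = [15, 12, 8, 11, 12, 10, 9, 17]
hypothetical_mean_user_ranks = [4.1, 3.0, 6.8, 5.2, 3.9, 4.4, 5.0, 2.6]
# Negate the scores so that both sequences run from best (small) to worst (large).
print(spearman([-s for s in hypothetical_scores], hypothetical_mean_user_ranks))
```

A coefficient near 1 would indicate that interfaces ranked highly by the instrument are also ranked highly by users, whereas a coefficient near 0 would indicate little monotonic relationship.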

Results

I-MeDeSA scores

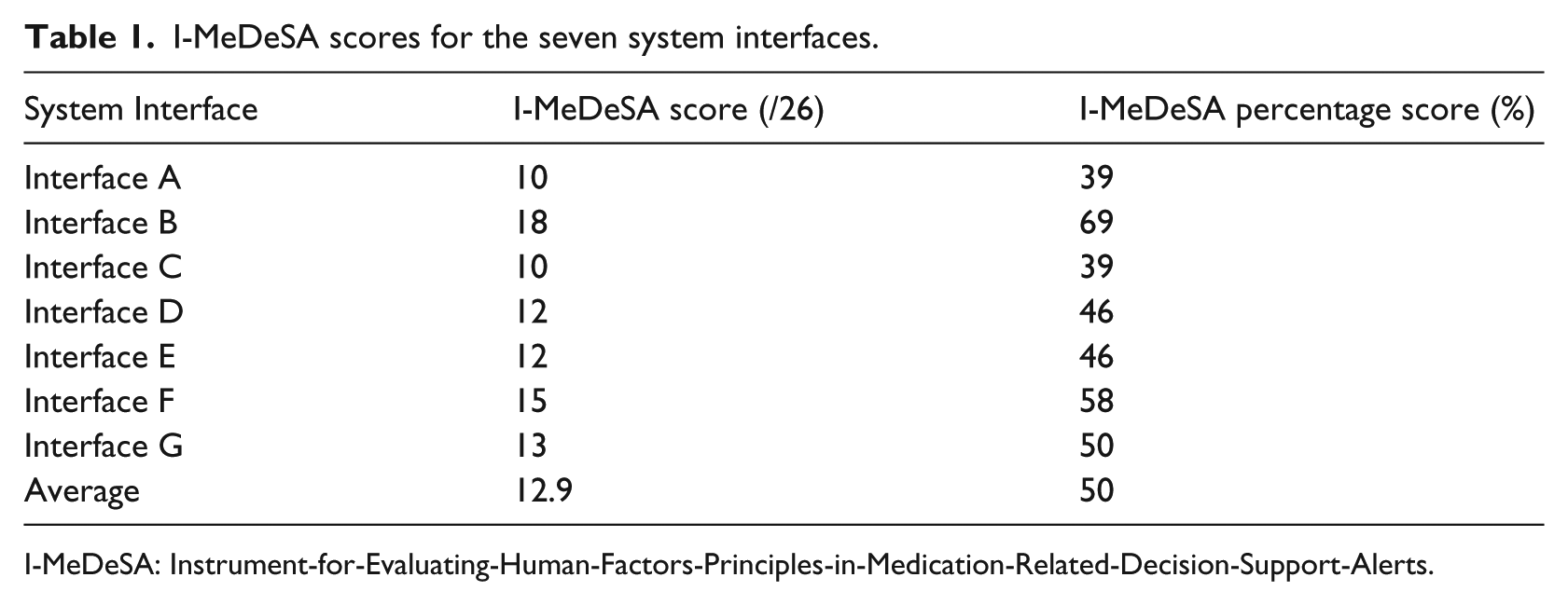

I-MeDeSA scores ranged from 10/26 to 18/26, with the average score across all systems found to be 12.9 (50%) and the median 12/26 (Table 1). The reviewers were reasonably consistent in their scoring of systems with Krippendorff’s alpha found to be 0.7584, 95% confidence interval (CI) (0.7035, 0.8133).

Table 1. I-MeDeSA scores for the seven system interfaces.

I-MeDeSA: Instrument-for-Evaluating-Human-Factors-Principles-in-Medication-Related-Decision-Support-Alerts.

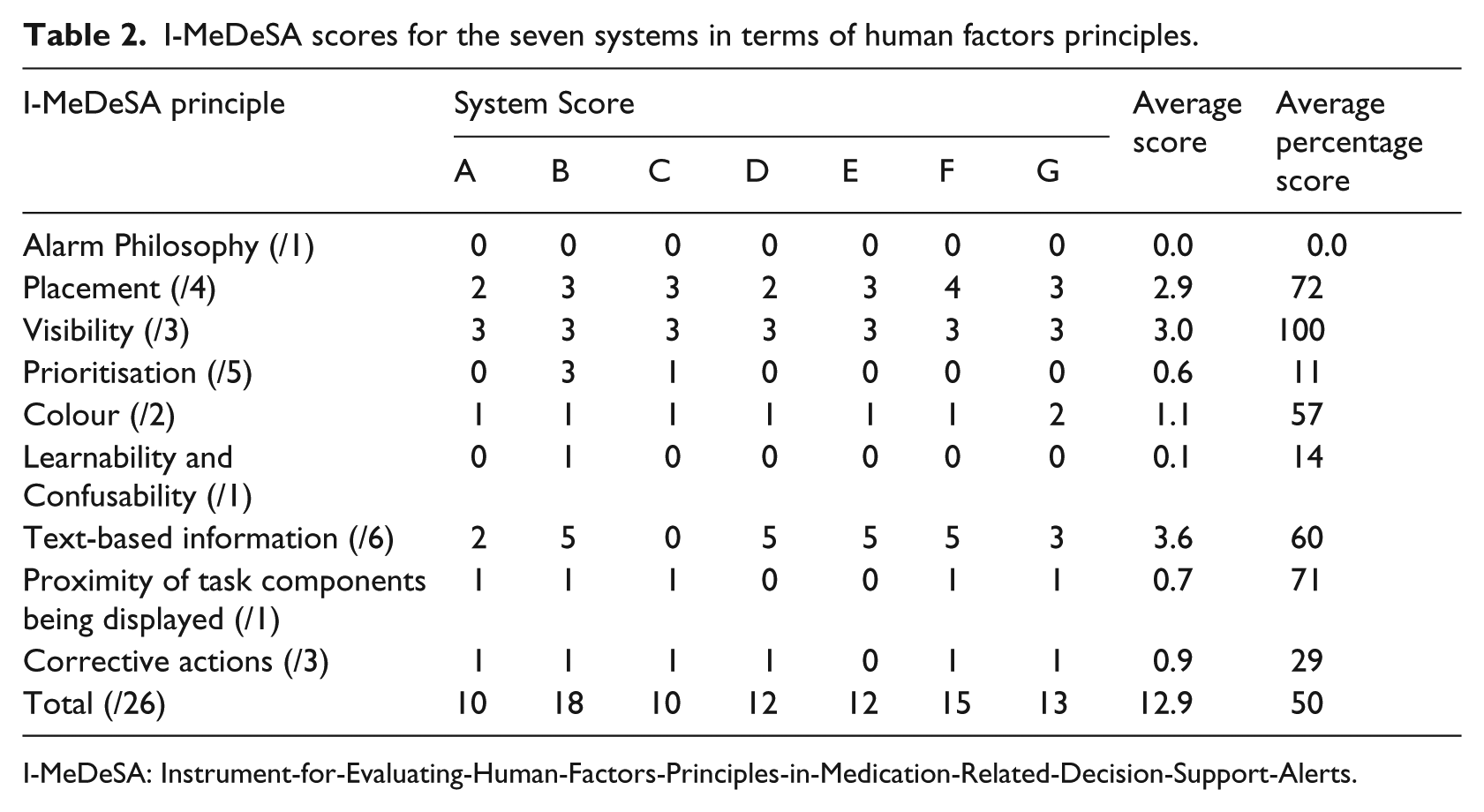

As shown in Table 2, systems scored best in items relating to ‘visibility’ (mean score 100%). This was because all the systems used appropriate contrast and positioning to allow the alert to be easily distinguished from other user interface elements. In addition, all the alerts included appropriate font (i.e. a mixture of upper and lower-case rather than all upper-case lettering) that allowed the alert text to be read easily. On average, systems scored worst in items relating to ‘alarm philosophy’ (mean score 0%). This was because they lacked an easily accessible catalogue or documentation for users that described the underlying guidelines or algorithms used to classify DDI alert severities/priorities (i.e. why certain DDIs were presented as more severe or important than others).

Table 2. I-MeDeSA scores for the seven systems in terms of human factors principles.

I-MeDeSA: Instrument-for-Evaluating-Human-Factors-Principles-in-Medication-Related-Decision-Support-Alerts.

User preferences of alert interfaces

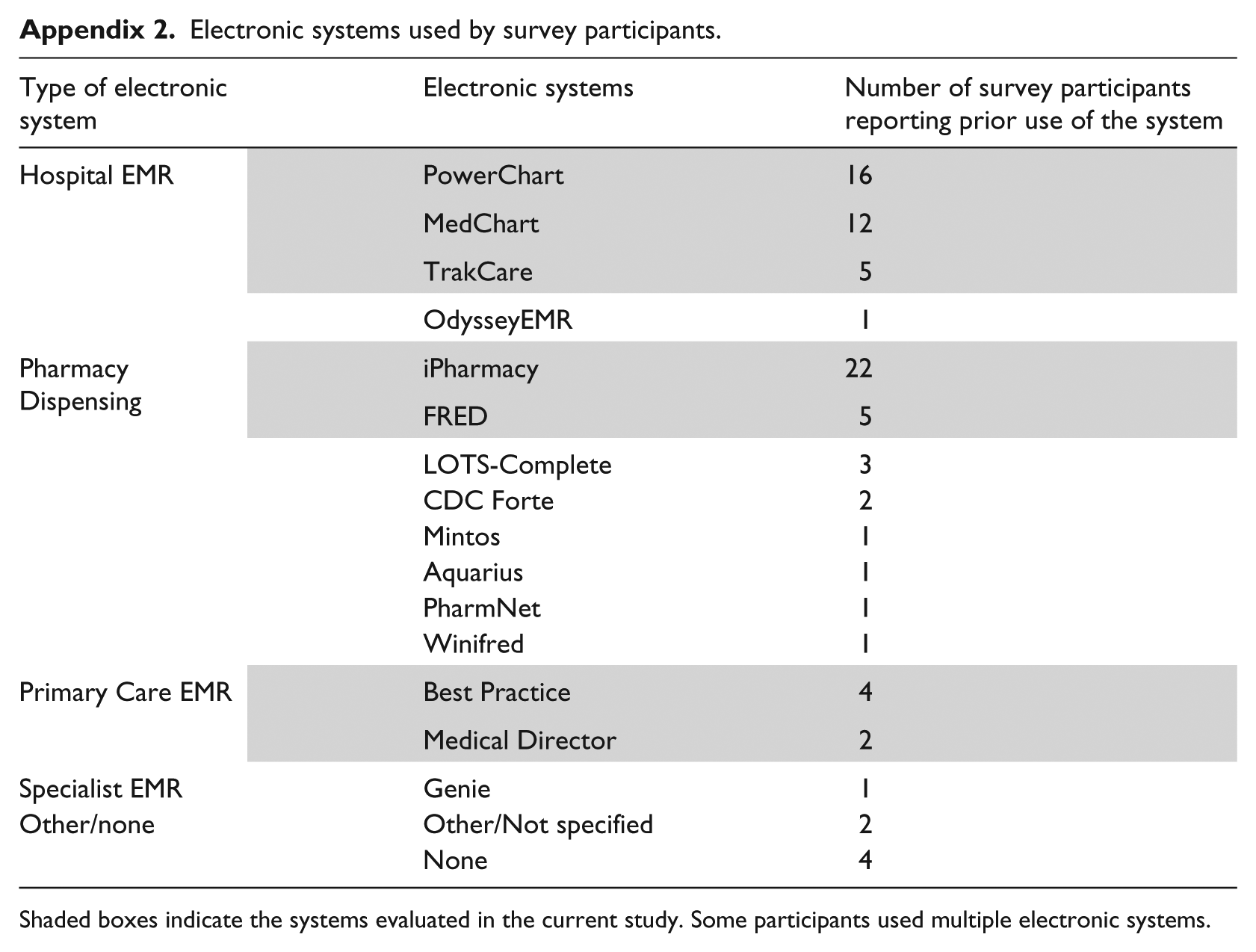

Of the 53 survey responses received, one response was not valid (the participant rated the systems out of eight rather than assigning ranks from one to eight), while four participants reported not having any experience with an electronic prescribing or dispensing system. The remaining participants had spent, on average, 6.9 years using electronic prescribing/dispensing systems and reported receiving, on average, 18 DDI alerts per day. The three most common systems used by the participants were iPharmacy, PowerChart, and MedChart (Appendix 2).
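The validity criterion applied to ranking responses, and the averaging of ranks across respondents, can be sketched as follows. The function names and the example responses are hypothetical and do not reproduce the survey data.

```python
def is_valid_ranking(response, n_alerts=8):
    """True when the response uses each rank from 1 to n_alerts exactly once."""
    return sorted(response) == list(range(1, n_alerts + 1))


def mean_ranks(responses, n_alerts=8):
    """Average the rank given to each alert, using valid responses only."""
    valid = [r for r in responses if is_valid_ranking(r, n_alerts)]
    if not valid:
        raise ValueError("no valid ranking responses")
    return [sum(r[i] for r in valid) / len(valid) for i in range(n_alerts)]


# Invented responses: the third gives ratings out of eight rather than ranks.
hypothetical_responses = [
    [1, 2, 3, 4, 5, 6, 7, 8],
    [2, 1, 3, 4, 5, 6, 8, 7],
    [8, 8, 7, 5, 6, 8, 3, 4],
]
print(mean_ranks(hypothetical_responses))
```

A lower mean rank corresponds to a more preferred interface, since one indicates the best design and eight the worst.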

Revision of I-MeDeSA scores through the exclusion of nine non-visual items resulted in only minor changes in the ranking of the systems (Table 3). Based on these revised I-MeDeSA scores, Interface B once again scored the highest (15/17) and Interface C scored the lowest (8/17).

Table 3. Revised I-MeDeSA scores and rankings for systems.

I-MeDeSA: Instrument-for-Evaluating-Human-Factors-Principles-in-Medication-Related-Decision-Support-Alerts.

Revised I-MeDeSA scores were calculated with the exclusion of nine non-visual items that could not be ascertained from viewing the screenshots presented in the survey. Items excluded from these revised scores included 1i, 2i, 2iii, 4iii, 4v, 5i, 6i, 9ia, and 9ii.

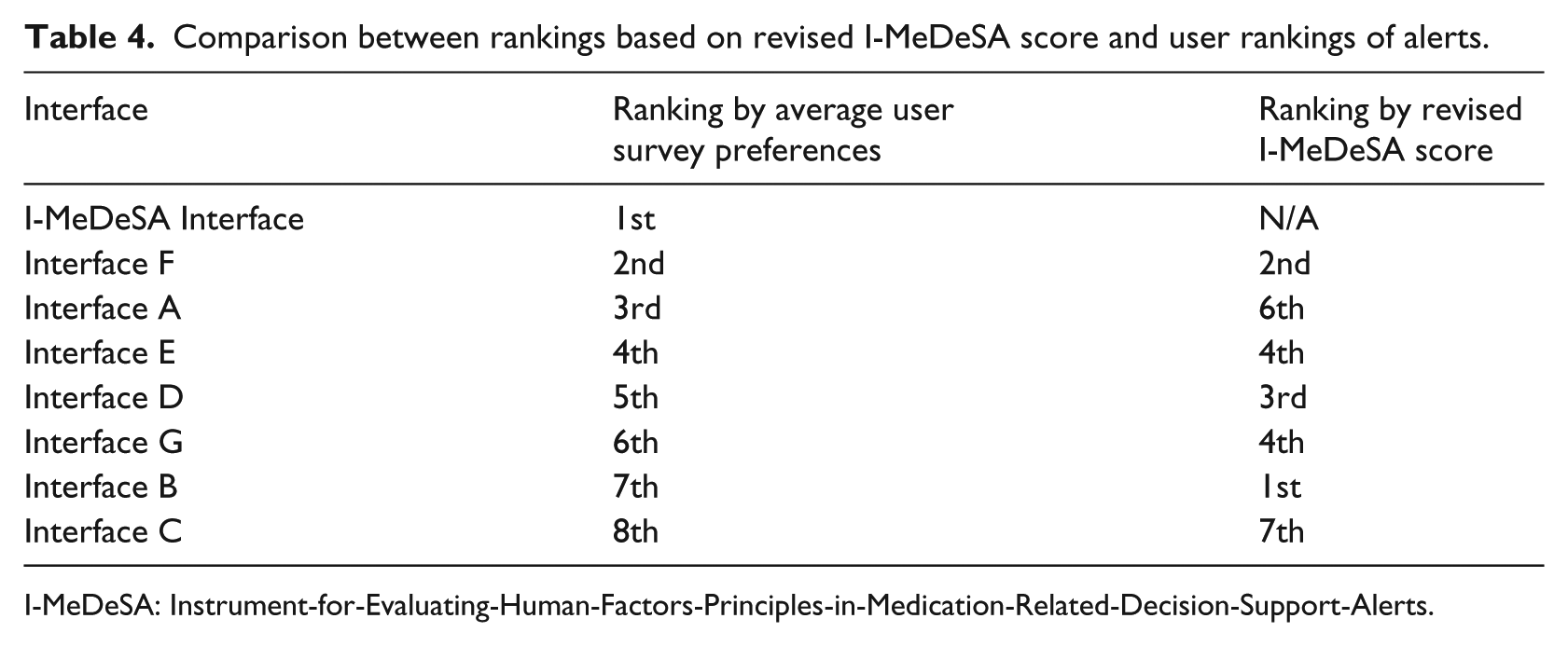

On average, our mock DDI alert, which was compliant with all of I-MeDeSA’s visual items, was rated as the best interface by the respondents (Table 4). Interface C received the lowest revised I-MeDeSA score and was also rated as the worst system by the users. However, a Spearman’s rank-order correlation revealed only a weak, non-significant positive correlation between the rankings based on revised I-MeDeSA scores and average user rankings (Spearman’s correlation coefficient, rs = 0.180; p = 0.699).

Table 4. Comparison between rankings based on revised I-MeDeSA score and user rankings of alerts.

I-MeDeSA: Instrument-for-Evaluating-Human-Factors-Principles-in-Medication-Related-Decision-Support-Alerts.

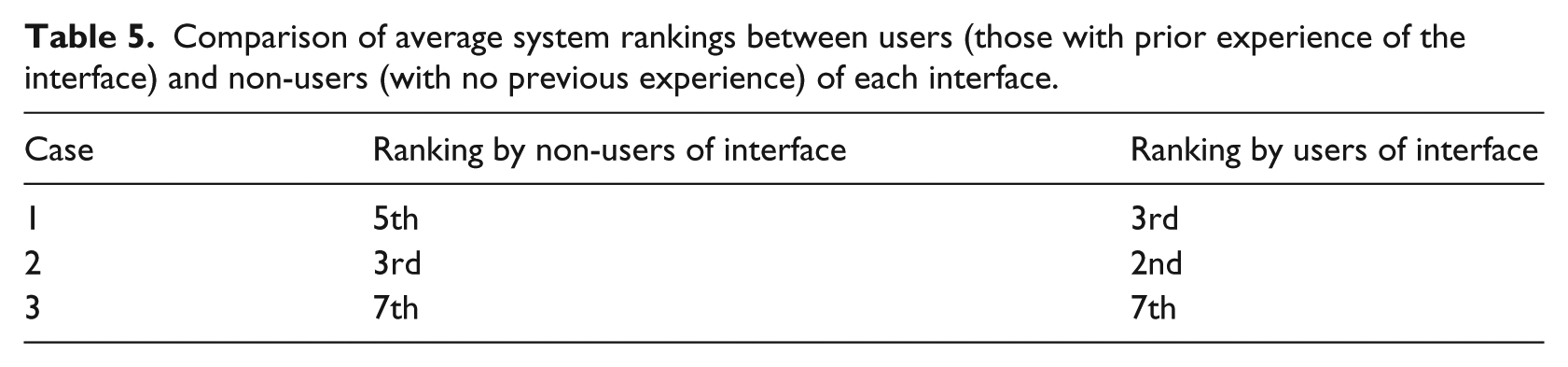

Previous experience of systems and user rankings

For the three most commonly used systems, iPharmacy, PowerChart, and MedChart, we examined whether previous experience with the system had affected the users’ perceptions of the DDI alert in that system. As shown in Table 5, no significant differences in ranking were found when comparing the average rankings given by non-users and users of each system.

Table 5. Comparison of average system rankings between users (those with prior experience of the interface) and non-users (with no previous experience) of each interface.

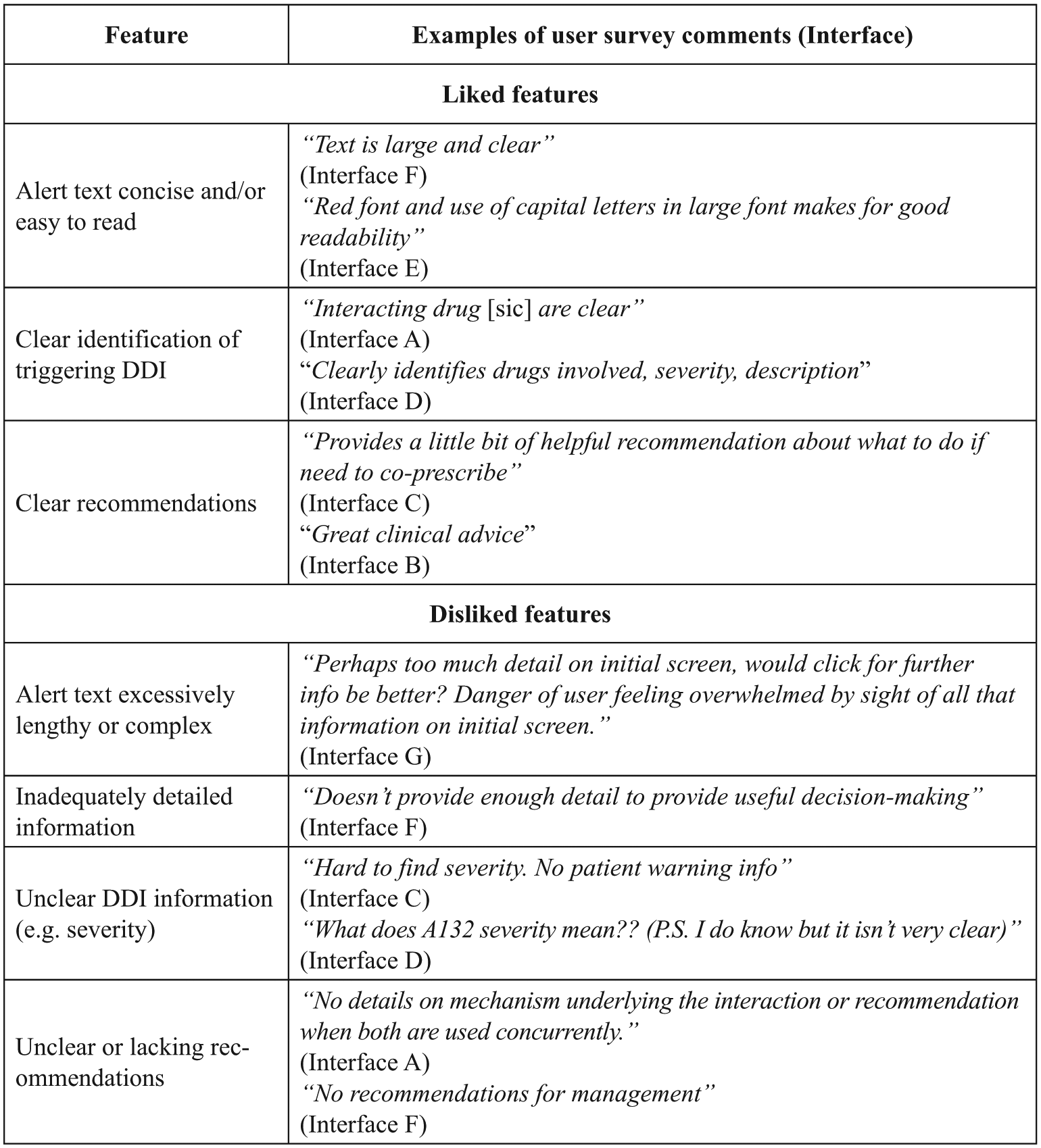

Analysis of free-text responses

Figure 2 shows the most frequently reported liked (positive) and disliked (negative) features of alert interfaces, with examples of the free-text comments made by the survey respondents (and the system being referred to). The most frequently reported liked feature across systems was that alert text was concise and/or easy to read. The most frequently reported disliked feature was that some systems included excessively lengthy or complex alerts.

Figure 2. Most frequently reported liked and disliked features of alerts reported by users in the free-text comment field in the survey.

Discussion

DDI alert interfaces currently in use in Australian hospitals and primary care settings generally showed poor compliance with HF design principles when assessed with the I-MeDeSA. Poor compliance was particularly apparent in areas such as ‘Alarm Philosophy’, ‘Prioritisation’, and ‘Corrective Actions’. We found no significant correlation between I-MeDeSA scores and user preferences, suggesting that the instrument, in its current form, may not be accurately identifying the systems that are preferred by the users. However, liked and disliked features of alerts, as reported by the users, were in line with a number of HF principles evaluated by the I-MeDeSA.

A weakness common to all DDI alerting systems was their ‘Alarm Philosophy’, which relates to whether the systems provide documentation that enables users to understand how and why the system prioritises certain events as unsafe. Although some systems possessed such documentation, it was only available to the system administrators for purposes such as updating and debugging. This finding is similar to previous evaluations, where only two systems (out of 15 evaluated in those studies) offered accessible documentation on the algorithms used to assign DDI priority levels.17,18 All systems can improve in this area by making such information accessible to end-users. However, a potential barrier to this is the proprietary nature of the algorithms/catalogues that many systems are based upon, which could prevent disclosure due to licensing restrictions.20

‘Prioritisation’ was another key area where the systems performed poorly. Poor compliance with this HF principle was primarily due to a lack of, or inappropriate use of, colours, shapes/icons, and signal words (e.g. Warning!) to indicate different alert priorities. The highest scoring system in this construct used colours (red, orange, and yellow) and icons (e.g. stop octagon, triangle) to convey information such as alert type, severity, and priority. This was a primary point of differentiation between this system and all the other systems. However, reviewers noted that the size of the coloured icons used in this system was quite small. Interestingly, the I-MeDeSA does not include an item relating to icon size, meaning this design issue was not evaluated by the tool.

Another common weakness of alert interfaces related to ‘Corrective Actions’. All the systems lacked ‘intelligent corrective actions’, which reduce the steps required to change a medication order in response to an alert. For example, an alert recommending a reduction in the dose of one medication to prevent an interaction will typically require the user to accept the alert and then navigate back to the appropriate window to make this dosage change. In contrast, an alert with ‘intelligent corrective actions’ would automatically direct the user to the appropriate ordering window to quickly adjust the dose. Monitoring functions, which ensure that corrective actions are carried out by the user and alert the user again if not completed, were also absent from most of the systems. While the addition of monitoring functions and associated alerts may increase the likelihood of alert recommendations being followed through, this approach could potentially exacerbate other problems, such as excessive alerts leading to alert fatigue.21

The most common liked and disliked features of alerts reported in free-text comments aligned well with the HF principles evaluated by the I-MeDeSA. For example, participants liked the use of concise and simple text within alerts, clear identification of the DDI that generated the alert, and the provision of clear recommendations when responding to an alert. They disliked the use of wordy or complex text and inadequate information provided within the alerts. These comments map to the HF principles of ‘Placement’ and ‘Text-based information’ (see Appendix 1) and indicate that some of the HF principles evaluated by I-MeDeSA reflect aspects of alert design that are important to the end-users. This was further supported by our finding that the I-MeDeSA mock interface (designed to satisfy I-MeDeSA HF principles) was the interface that was most preferred by the users. Despite these findings, we found no statistically significant relationship between the ranking of alert interfaces by revised I-MeDeSA scores and ranking by user preferences. While the HF principles tested by the I-MeDeSA appear to be sound, the arbitrary number of items (and so points) assigned to each HF construct may have contributed to the differences between user preferences and I-MeDeSA scores. As an illustration of this, it is unclear why some HF principles have five points assigned to them (e.g. ‘prioritisation’) while others are weighted less significantly with only one point assigned (e.g. ‘learnability and confusability’). The end-users may place greater importance on particular aspects of alert design, which may not be weighted as such by the I-MeDeSA scoring system.

Moving forward, we suggest identifying the characteristics of an alert’s design that have the most significant impact on usability and are most important to end-users. For example, Marcilly et al.22 detailed an evidence-based framework (linking usability principles, usability flaws, and workplace usage problems) that could be used to review the usability of current forms of clinical decision support, including DDI alerts. Subsequent review papers have identified multiple usability flaws and made recommendations based on these to guide improved alert design.23,24 Evaluation tools based on these alternative approaches would provide an interesting comparison to the HF-based framework of I-MeDeSA.

Important limitations of this study include the relatively small number of survey responses and the use of static screenshots for the user preference survey. The survey respondents were unable to interact with each system and experience its true functionality and therefore could not evaluate certain aspects of alert design. However, we attempted to control for this by comparing user preference rankings with rankings based on revised I-MeDeSA scores (which included only visual items that could be discerned from screenshots) when performing data analysis. Future research could involve direct interaction between study participants and alert interfaces to more accurately capture the user preferences of alerting systems. It is also important to note that many of the alert characteristics evaluated here are not customisable at the local level (i.e. by the development team) and thus would require vendor time and resources to modify.

Conclusion

HF compliance of alerts across seven systems used in Australia was very poor, indicating that there is much room for improvement in alert interface design. We found no direct association between I-MeDeSA scores and user preferences of alerts. However, the principles tested by I-MeDeSA appear to be sound. Instead, the arbitrary allocation of points to each HF construct may not reflect the relative importance that end-users place on different aspects of alert design. A useful and robust method for evaluation of DDI alert designs remains elusive but is necessary to guide improvement of alerting systems and thus increase their effectiveness.

Footnotes

Appendix 1. Instrument for evaluating human factor principles in medication related decision support alerts (I-MeDeSA). 11

| Human factors principle | Item numbers and descriptions |
|---|---|
| Alarm philosophy | (1i) Does the system provide a general catalogue of unsafe events, correlating the priority level of the alert with the severity of the consequences? |
| Placement | (2i) Are different types of alerts meaningfully grouped? |
| | (2ii) If available, is the response to the alert, indicating the user’s intended action (e.g. Accept, Cancel/Override), provided along with the alert, as opposed to being located in a different window or in a different area on the screen? |
| | (2iii) Is the alert linked with the medication order by an appropriate timing? |
| | (2iv) Does the layout of critical information contained within the alert facilitate quick uptake by the user? Critical information should be placed on the first line of the alert or closest to the left side of the alert box. Critical information should be labelled appropriately and must consist of: (1) the interacting drugs, (2) the risk to the patient, and (3) the recommended action. |
| Visibility | (3i) Is the area where the alert is located distinguishable from the rest of the screen? |
| | (3ii) Is the background contrast sufficient to allow the user to easily read the alert message? |
| | (3iii) Is the font used to display the textual message appropriate for the user to read the alert easily? |
| Prioritisation | (4i) Is the prioritisation of alerts indicated appropriately by colour? |
| | (4ii) Does the alert use prioritisation with colours other than green and red, to take into consideration users who may be colour blind? |
| | (4iii) Are signal words appropriately assigned to each existing level of alert? |
| | (4iv) Does the alert utilise shapes or icons in order to indicate the priority of the alert? |
| | (4v) In the case of multiple alerts, are the alerts placed on the screen in the order of their importance? |
| Colour | (5i) Does the alert utilise colour-coding to indicate the type of unsafe event? |
| | (5ii) Is colour minimally used to focus the attention of the user? |
| Learnability and confusability | (6i) Are the different severities of alerts easily distinguishable from one another? |
| Text-based information | (7i) Does the alert possess a signal word to indicate the priority of the alert (e.g. ‘note’, ‘warning’, or ‘danger’?) |
| | (7ii) Does the alert possess a statement of the nature of the hazard describing why the alert is shown? |
| | (7iia) If yes, are the specific interacting drugs explicitly indicated? |
| | (7iii) Does the alert possess an instruction statement telling the user how to avoid the danger or the desired action? |
| | (7iiia) If yes, does the order of recommended tasks reflect the order of required actions? |
| | (7iv) Does the alert possess a consequence statement telling the user what might happen if the instruction information is ignored? |
| Proximity of task components | (8i) Are the informational components needed for decision-making on the alert present either within or in close spatial and temporal proximity to the alert? |
| Corrective actions | (9i) Does the system possess corrective actions that serve as an acknowledgement of having seen the alert while simultaneously capturing the user’s intended action? |
| | (9ia) If yes, does the alert utilise intelligent corrective actions that allow the user to complete a task? |
| | (9ii) Is the system able to monitor and alert the user to follow through with corrective actions? |

Appendix 2. Electronic systems used by survey participants

| Type of electronic system | Electronic systems | Number of survey participants reporting prior use of the system |
|---|---|---|
| Hospital EMR | PowerChart | 16 |
| | MedChart | 12 |
| | TrakCare | 5 |
| | OdysseyEMR | 1 |
| Pharmacy Dispensing | iPharmacy | 22 |
| | FRED | 5 |
| | LOTS-Complete | 3 |
| | CDC Forte | 2 |
| | Mintos | 1 |
| | Aquarius | 1 |
| | PharmNet | 1 |
| | Winifred | 1 |
| Primary Care EMR | Best Practice | 4 |
| | Medical Director | 2 |
| Specialist EMR | Genie | 1 |
| Other/none | Other/Not specified | 2 |
| | None | 4 |

Shaded boxes indicate the systems evaluated in the current study. Some participants used multiple electronic systems.

Appendix 3

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the National Health and Medical Research Council Programme grant number 1054146.