Abstract

The objective of this study was to establish a process for developing a prioritization tool for a clinical decision support build within a computerized provider order entry system and, concurrently, to prioritize alerts for Saint Luke’s Health System. The process of prioritizing clinical decision support alerts included (a) consensus sessions to establish a prioritization process and identify clinical decision support alerts through a modified Delphi process and (b) a clinical decision support survey to validate the results. All members of our health system’s physician quality organization, Saint Luke’s Care, as well as clinicians, administrators, and pharmacy staff throughout Saint Luke’s Health System, were invited to participate in this confidential survey. The consensus sessions yielded a prioritization process through alert contextualization and associated Likert-type scales. Using this process, the clinical decision support survey polled the opinions of 850 clinicians with a 64.7 percent response rate. Three of the top-rated alerts were approved for the pre-implementation build at Saint Luke’s Health System: Acute Myocardial Infarction Core Measure Sets, Deep Vein Thrombosis Prophylaxis within 4 h, and Criteria for Sepsis. This study establishes a process for developing a prioritization tool for a clinical decision support build within a computerized provider order entry system that may be applicable to similar institutions.

Background and significance

Computerized provider order entry (CPOE) has the potential to improve the delivery of health care and is a primary incentive for implementing sophisticated clinical information systems. 1 The majority of these systems have demonstrated an improvement in the process of care, but are less likely to show improved patient outcomes. 2 To increase their impact, CPOE systems incorporate clinical decision support (CDS) tools, linking evidence-based guidelines to clinicians’ decisions to enhance quality.3,4 The integration and robustness of CDS within CPOE systems vary. Basic CDS may offer limited interventional capacity (identifies a drug–drug interaction), whereas advanced support integrates clinical guidance (identifies a patient with potential diagnosis and recommends an action).

Despite the potential for improved processes and patient outcomes, institutional resources often limit the capacity of CDS. 5 Alert construction is expensive and time-consuming and requires careful planning, pilot testing, and training. Post-implementation alert maintenance requires continued investment in policy updates, process changes, and infrastructure enhancements. Therefore, allocating resources for both the initial CPOE build and ongoing support is challenging for most institutions. These issues are often addressed through an informal, subjective, and institution-specific process.

The implementation of advanced CDS is often limited by the burden of alert-handling or alert fatigue. 6 Research has shown that drug-safety alerts and reminders from CDS systems are often disregarded by prescribers. Reidmann et al. 7 reported alert override-rates of 49 to 96 percent. In addition, increasing numbers of drug-safety alerts potentially lead to user desensitization. 7 This desensitization may lead to practitioners ignoring critical warnings and is likely a significant factor in the prevention of behavior change. A recent review of CDS systems suggests that the alerting threshold is too low and the alerts are sensitive but not specific. 8 Research has shown that surveys and interviews with users and focus groups can raise important concerns that can be assessed and modified to increase the effectiveness of the CDS and improve patient outcomes. 8

Objective

The primary objective of this study was to define a process for developing a prioritization tool that would provide guidance for a CDS build within a CPOE system. In accordance with our institution’s goals, the CDS should aid the use of evidence-based treatment and reduce dangerous adverse events with minimal workflow interruptions. The secondary objective was to prioritize specific CDS alerts for the Saint Luke’s Health System (SLHS) CPOE system.

Materials and methods

Survey participants

Saint Luke’s Care (SLC) is a physician quality organization dedicated to quality medical care through the use of evidence-based medicine within the SLHS of Kansas City, a 10-hospital not-for-profit health-care system with a service area of more than 2 million people. SLC physicians determine best practices for SLHS. All 630 members of SLC were contacted to participate in the confidential survey.

Nonphysician subject matter experts (SMEs) were identified in the various practice areas, specialties, and disciplines that contribute to clinical documentation. This included various levels of nursing care (critical care, med/surg, emergency, rehabilitation, surgical) and specialties, such as wound care, blood conservation, code blue team, quality, and ancillary disciplines (respiratory therapy, nutrition, spiritual care, physical therapy, occupational therapy, and case management). Within each of these groups, a representative from each hospital entity was chosen to have system representation. The chief nursing officers and system leaders provided names of staff for each of these groups.

Finally, all the pharmacists in the SLHS were asked to complete the survey.

Survey

The survey consisted of the following three sections:

Demographics regarding the survey population, including participants’ professions, physicians’ specialties, and participants’ prior experience with CPOE.

A prioritization tool developed by the authors (M.W., S.M., D.D., J.H., B.B.). The authors achieved consensus through a modified Delphi rating process in three iterative rounds of rating and discussion. The authors identified six facets by which the impact of a CDS alert could be evaluated: Quality of Care, Legal and Regulatory Compliance, Organizational Liability, Daily Workload, Patient Safety, and Likelihood of Occurrence.

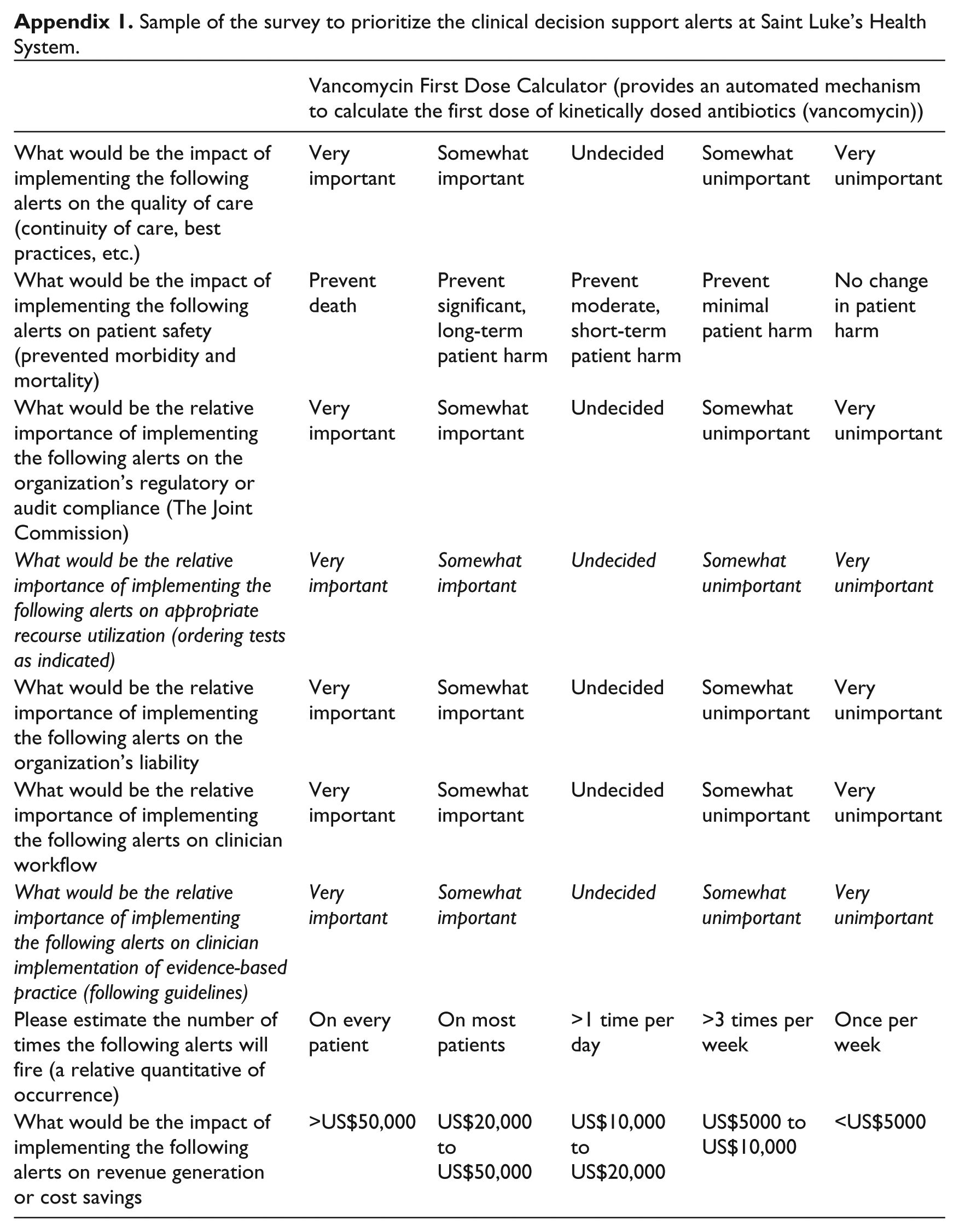

The prioritization tool was cross-matched with 13 potential decision support alerts that were defined using expert consensus sessions that included three components to conform with the modified Delphi process. 9 A sample of this part of the survey is included in Appendix 1. The 13 potential alerts were as follows:

Vancomycin First Dose Calculator (provides an automated mechanism to calculate the first dose of kinetically dosed antibiotics (vancomycin)).

Inpatient Culture With Inappropriate Antibiotic (informs users when the patient’s drug does not treat or is ineffective per culture sensitivities).

Stress Ulcer Prophylaxis Discontinuation (alerts providers when stress ulcer prophylaxis is inappropriate and may be discontinued).

Intravenous (IV) to PO Conversion (provides users with an automated alert to consider converting an IV medication to a PO medication at an equivalent dose).

Serum Creatinine (SrCr) Monitoring (informs users when a patient meets criteria for Acute Kidney Injury (see risk, injury, failure, loss, and end stage (RIFLE) and Acute Kidney Injury Network (AKIN) staging criteria)). 10

Deep vein thrombosis (DVT) Prophylaxis within 4 h (automated guideline to select recommended DVT prophylaxis if not entered 4 h post admission).

Risk Evaluation and Mitigation Strategy (REMS) Program Required (alerts providers to Food and Drug Administration (FDA) mandated REMS requirements).

Criteria for Sepsis (alerts providers when patients meet criteria for sepsis during admission).

Core Measure Sets: acute myocardial infarction (AMI) (prompts providers to remain in compliance with Center for Medicare & Medicaid Services (CMS) Core Measure Sets, for example, when a patient has a diagnosis of AMI and does not have an aspirin order within 24 h of admission).

Core Measure Sets: heart failure (HF) (prompts providers to remain in compliance with CMS Core Measure Sets, for example, identifies patients with a diagnosis of congestive heart failure (CHF) and left ventricular ejection fraction below 40 percent without a beta blocker).

Core Measure Sets: Pneumonia (prompts providers to remain in compliance with CMS Core Measure Sets, for example, when blood cultures have not been performed within 24 h of hospital arrival in patients with a differential diagnosis of pneumonia).

Core Measure Sets: Surgical Care Improvement Project (prompts providers to remain in compliance with CMS Core Measure Sets, for example, alerts providers 24 h after surgery end time if prophylactic antibiotics have not been discontinued).

Core Measure Sets: Stroke (prompts providers to remain in compliance with CMS Core Measure Sets, for example, alerts providers at discharge when the patient has a diagnosis of ischemic stroke and is not on antithrombotic therapy).

The survey was critically reviewed and tested by the research team, and select physicians were asked to pilot the survey to provide face validity. After revisions, the full survey was deployed to the study participants using SurveyMonkey™ (surveymonkey.com, LLC., Palo Alto, CA). An initial invitation to complete the survey was sent via email on 18 March 2013. Weekly reminders were sent thereafter until data collection ended on 12 April 2013. We were able to track participation and export the data for analysis using this platform.

The Saint Luke’s Hospital Institutional Review Board deemed this study exempt, and the research received no specific grant from any funding agency in the public, commercial or not-for-profit sectors.

Data analysis

Descriptive statistics were computed on participant demographic data, including measures of central tendency (median and mode) and variability (range, standard deviation). Factor analysis is a statistical method for grouping highly correlated variables that measure different dimensions of a complex characteristic, such as prioritization. Factor analysis was used to verify that the prioritization tool measured the distinguishable characteristics that the authors hypothesized to affect priority. Cronbach’s (or coefficient) alpha was used to test the reliability of the prioritization tool, that is, the degree to which individual variables hang together to measure a characteristic. It is, roughly, the average of the correlations of each item in a scale with the total scale. For the total priority score, missing values were replaced with the mean of any of the six characteristics that had been answered, and if none were answered, the total priority score was denoted as missing. The Kruskal–Wallis analysis of variance (ANOVA) by ranks was used to analyze the Likert-type scale ratings. ANOVA with post hoc Tukey analysis was used to determine the differences between respondent groups in total priority scores for the 13 alerts. We used weighted measures (physician = 1, pharmacist = 3, SME = .67) for the samples since their total numbers varied by factors of 3–4. To control for the multiple comparisons problem (39 comparisons), we used a significance threshold of p < .001. IBM™ Statistical Package for the Social Sciences (SPSS™) Statistics (Copyright © IBM Corporation 1989, 2011) was used for statistical analysis.
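The missing-value rule and the significance threshold described above can be illustrated with a minimal Python sketch. It rests on two assumptions that the text does not state explicitly: that the total priority score is the mean of the six characteristic ratings (consistent with the reported range of 3.2 to 4.0), and that the .001 threshold can be compared against a Bonferroni-style bound for 39 comparisons. The function names `total_priority_score` and `bonferroni_threshold` are hypothetical and are not part of the SPSS analysis.

```python
from statistics import mean
from typing import Optional, Sequence


def total_priority_score(ratings: Sequence[Optional[float]]) -> Optional[float]:
    """Total priority score for one respondent and one alert.

    `ratings` holds the six characteristic ratings; None marks an
    unanswered item. Assumption: the total score is the mean rating.
    """
    answered = [r for r in ratings if r is not None]
    if not answered:
        # No characteristic was answered: the score is denoted as missing.
        return None
    fill = mean(answered)
    # Replace each missing value with the mean of the answered items.
    completed = [fill if r is None else r for r in ratings]
    return mean(completed)


def bonferroni_threshold(alpha: float, n_comparisons: int) -> float:
    """Per-comparison threshold under a Bonferroni correction (assumed rationale)."""
    return alpha / n_comparisons


# Hypothetical respondent who skipped two of the six characteristics.
score = total_priority_score([4.0, None, 3.0, 5.0, None, 4.0])

# 0.05 / 39 is roughly .0013, so a cut-off of .001 is slightly stricter.
bound = bonferroni_threshold(0.05, 39)
```

Under this assumed definition, mean imputation leaves a respondent's score equal to the mean of the items they actually answered, so the rule mainly serves to keep partially completed responses in the analysis.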

Results

Survey participants

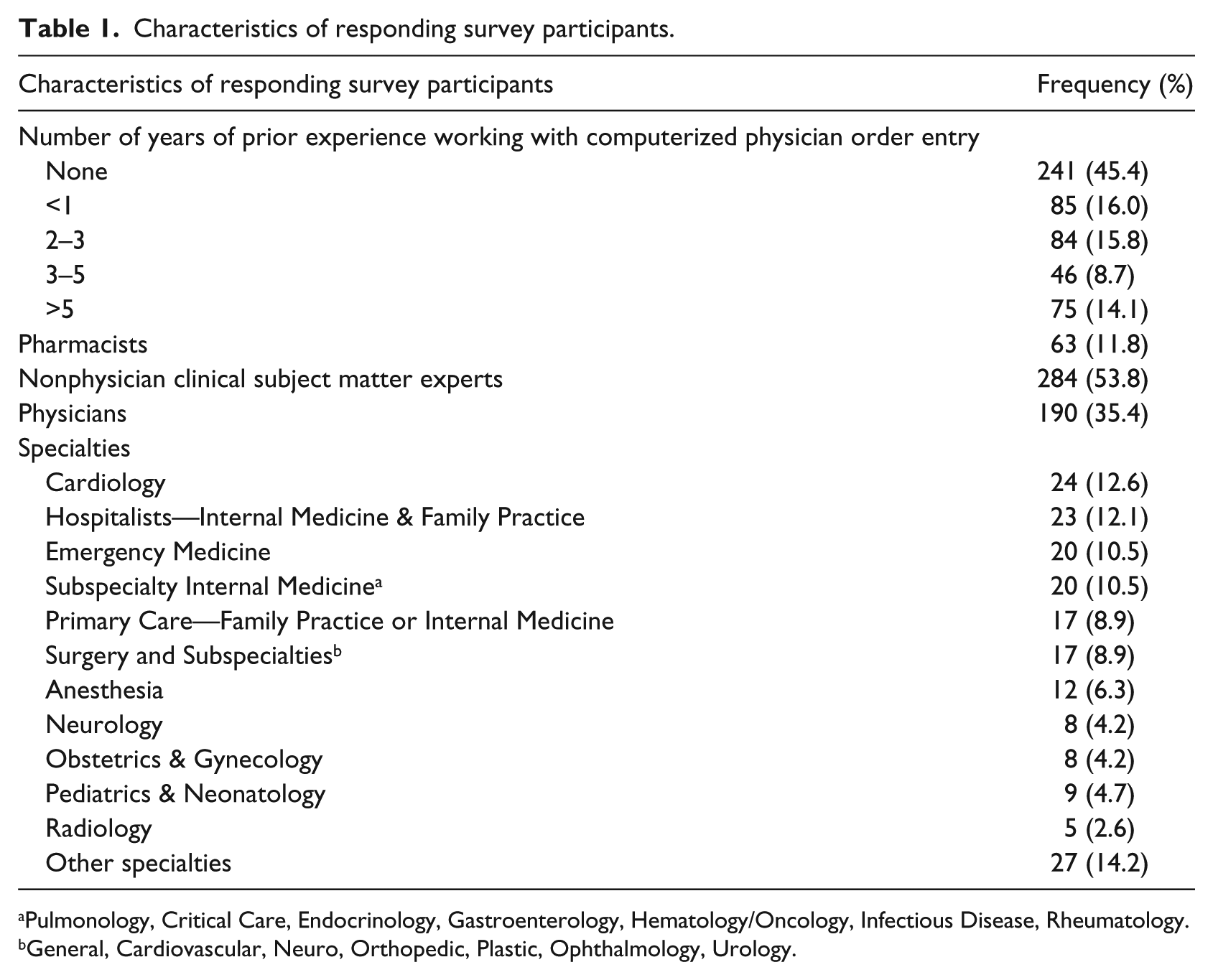

The survey was distributed to 850 participants meeting the inclusion criteria. A total of 550 responses were received for an overall response rate of 64.7 percent. Demographics regarding the survey population are displayed in Table 1.

Table 1. Characteristics of responding survey participants.

Pulmonology, Critical Care, Endocrinology, Gastroenterology, Hematology/Oncology, Infectious Disease, Rheumatology.

General, Cardiovascular, Neuro, Orthopedic, Plastic, Ophthalmology, Urology.

Reliability and validity of the prioritization tool

Factor analysis was performed using the SMEs, a subgroup with a variety of jobs and experiences, to determine whether the prioritization tool was measuring distinct characteristics. The six Likert-type questions were applied to each of the 13 potential alerts. Varimax and Promax rotation delivered components (or factors) that explained 87.8 percent of the variance in the way the questions were answered, and the patterns led us to believe the questionnaire had modest construct validity. Appendix 2 provides details supporting the reliability and validity of the prioritization tool. All six prioritization characteristics separated out into individual components in the factor analysis with the exception that “Organizational Liability” and “Legal and Regulatory Compliance” loaded together on one component. This indicates difficulty separating those two issues out at least statistically. Cronbach’s alpha (.78) revealed a high degree of internal reliability of the tool for each alert that was evaluated.
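For readers unfamiliar with the reliability statistic, the following is a minimal sketch of Cronbach's alpha computed from its standard variance-based formula, alpha = k/(k − 1) × (1 − sum of item variances / variance of total scores). The ratings are invented for illustration and are not study data; the study's own value (.78) was obtained in SPSS, and the function name `cronbach_alpha` is hypothetical.

```python
from statistics import variance
from typing import Sequence


def cronbach_alpha(rows: Sequence[Sequence[float]]) -> float:
    """Cronbach's alpha for complete data.

    rows[i][j] is respondent i's rating on item j; every respondent
    must have answered every item.
    """
    k = len(rows[0])
    if k < 2 or len(rows) < 2:
        raise ValueError("need at least two items and two respondents")
    # Transpose so that each tuple holds all ratings for one item.
    items = list(zip(*rows))
    item_variance_sum = sum(variance(item) for item in items)
    total_variance = variance([sum(row) for row in rows])
    if total_variance == 0:
        raise ValueError("total scores have zero variance")
    return (k / (k - 1)) * (1 - item_variance_sum / total_variance)


# Hypothetical ratings: four respondents, three Likert-type items.
ratings = [
    [4.0, 5.0, 4.0],
    [3.0, 3.0, 2.0],
    [5.0, 4.0, 5.0],
    [2.0, 2.0, 3.0],
]
alpha = cronbach_alpha(ratings)  # 33/37, approximately 0.89
```

Alpha approaches 1 when items rise and fall together across respondents and falls toward 0 when the items are unrelated.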

CDS alerts

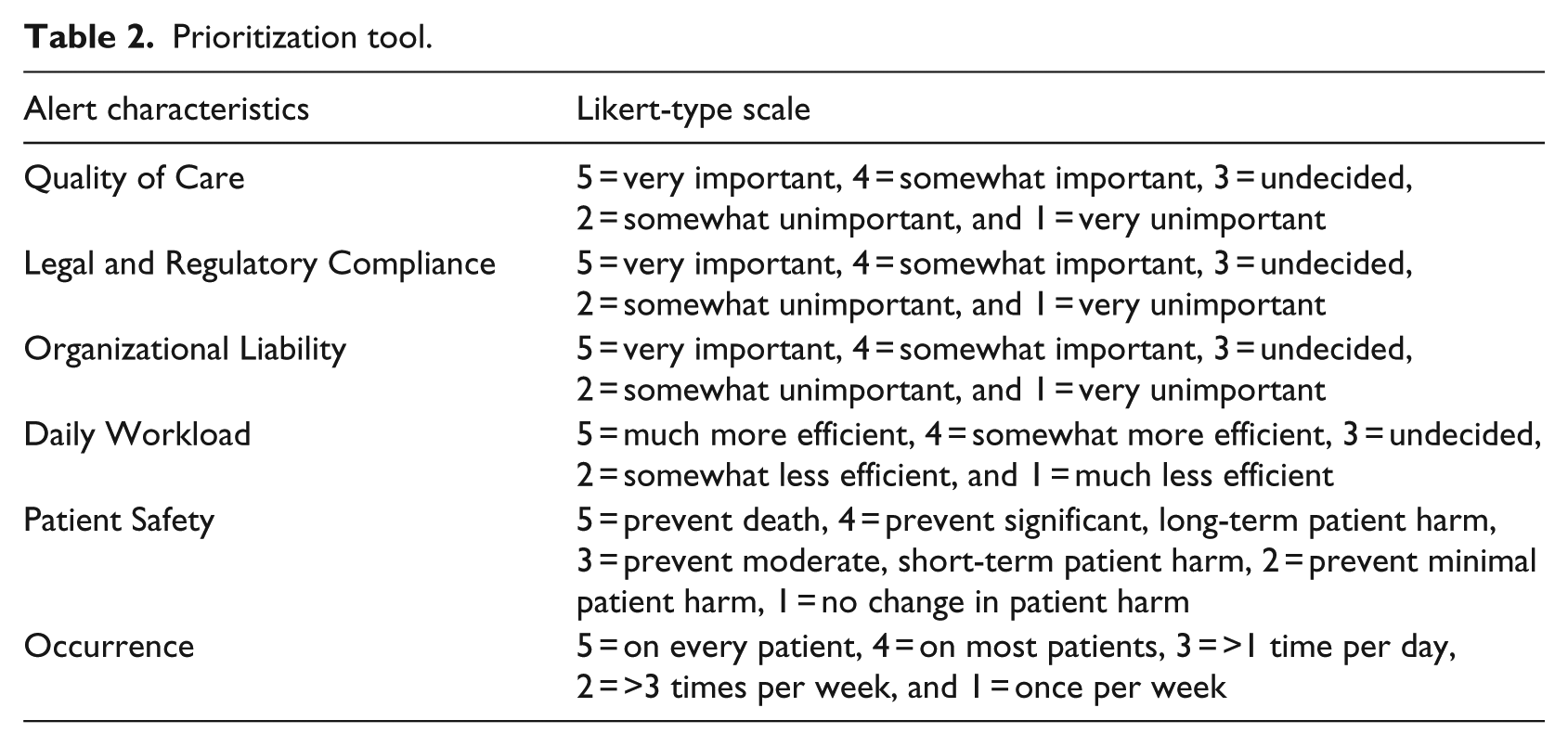

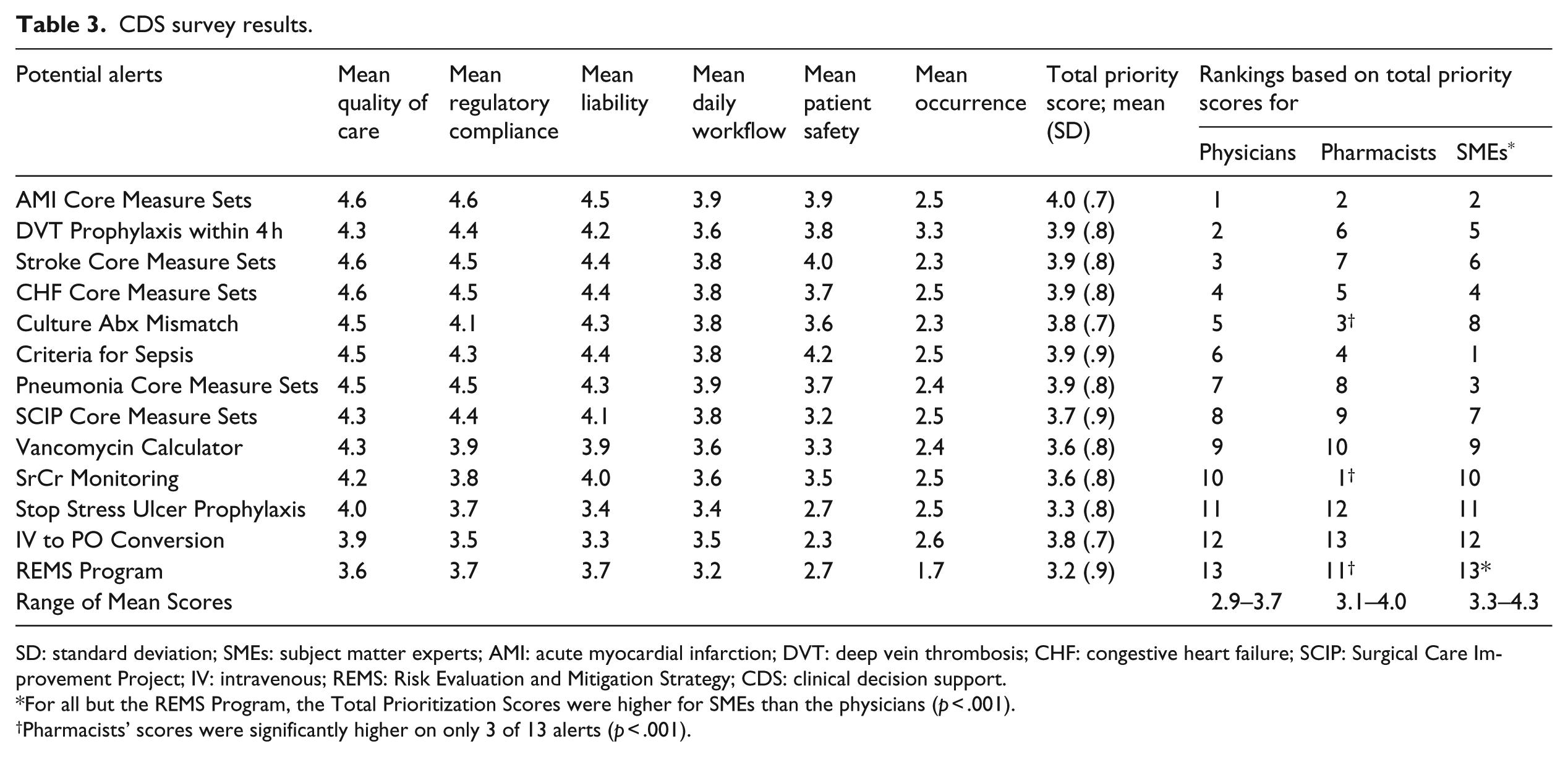

Participants were asked to rate the 13 alerts according to predetermined characteristics using the Likert-type scale (Table 2). The mean score for each alert characteristic ranged from 1.7 to 4.7 (median 3.8). The mean total priority score for all participants (Table 3) for the 13 alerts based on the average participants’ ratings ranged from 3.2 to 4.0.

Table 2. Prioritization tool.

Table 3. CDS survey results.

SD: standard deviation; SMEs: subject matter experts; AMI: acute myocardial infarction; DVT: deep vein thrombosis; CHF: congestive heart failure; SCIP: Surgical Care Improvement Project; IV: intravenous; REMS: Risk Evaluation and Mitigation Strategy; CDS: clinical decision support.

For all but the REMS Program, the Total Prioritization Scores were higher for SMEs than the physicians (p < .001).

Pharmacists’ scores were significantly higher on only 3 of 13 alerts (p < .001).

The mean total priority score for all participants was compared for each category of participant. For all but the “REMS Program Alert,” the Total Prioritization Scores were significantly higher for SMEs than for physicians (p < .001), whereas pharmacists’ scores were significantly higher than physicians’ scores on only 3 of 13 alerts (p < .001). To highlight the differences in priorities between the three groups, the alerts were ranked based on each group’s scores (Table 3). Pharmacists gave their highest score to an alert for Serum Creatinine Monitoring that would “inform users when a patient meets criteria for acute kidney injury,” and scored it significantly higher than physicians did (4.0 vs 3.3, p < .001). SMEs gave their highest score to an alert for Sepsis Criteria that would “alert providers when patients meet criteria for sepsis during admission” (4.3 vs 3.5 in physicians, p < .001). Pharmacists also scored an alert that would “inform users when the patient’s drug does not treat or is ineffective per culture sensitivities” higher than physicians did (3.9 vs 3.6, p < .001).

Discussion

A process was established to evaluate the prioritizing of alerts within a CPOE system that may be applicable to similar institutions. Using consensus sessions that engaged a group of experts in a modified Delphi rating process, six characteristics and associated Likert-type scales to prioritize alerts were identified. 9 The list of characteristics embodies a proportion of alerts that affect important aspects of patient care. This targeted assessment can be effectively employed in a survey and/or committee on a continuous basis.

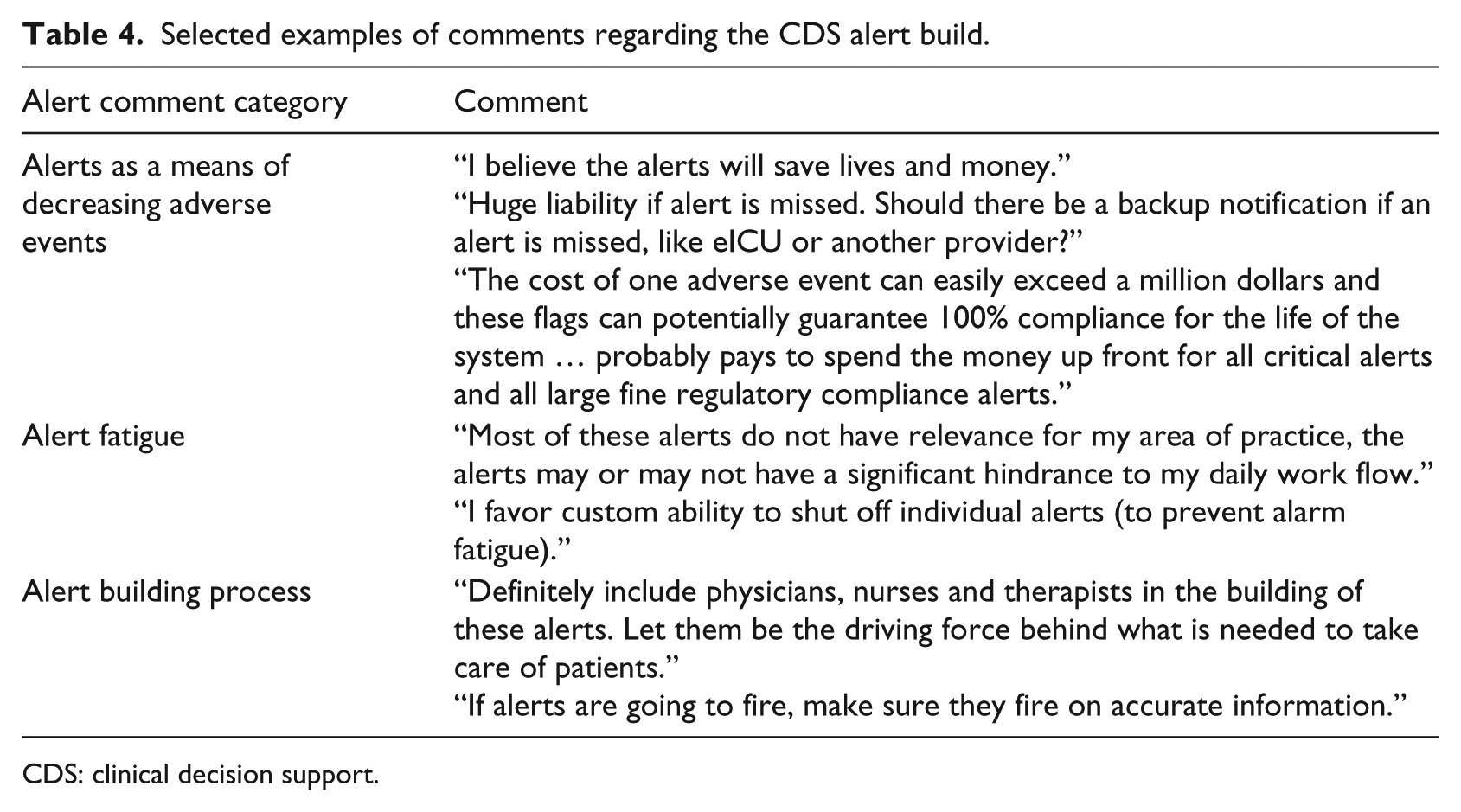

A total of 39 respondents provided written comments about the CDS alert system build process. Selected examples of these comments are found in Table 4.

Table 4. Selected examples of comments regarding the CDS alert build.

CDS: clinical decision support.

A total of 13 alerts were identified and prioritized based on their impact on patient care. All alerts were considered complex in nature and were not pre-built into model CPOE systems. Although a comprehensive list of alerts was not established, alerts pertinent to institutions such as SLHS were identified while keeping user desensitization in mind. Utilization of a large survey sample, with diverse expertise in medical practice, provides validity to this tool so it can be applied as a starting point to evaluate CDS alerts at other institutions. This survey indicated that the top rated alerts were AMI Core Measure Sets, DVT Prophylaxis within 4 h, and Criteria for Sepsis. Thus, these alerts among others were prioritized and built for the implementation at SLHS in March 2014. There was no statistically significant difference between the top three alerts.

The tool demonstrated reliability and validity. In particular, internal consistency reliability was high based on Cronbach’s alpha analysis. Our pilot testing showed it had face validity across different categories of personnel and concurrent validity across prioritization characteristics. Further work may be needed to help survey respondents understand the differences between “Organizational Liability” and “Legal and Regulatory Compliance.”

To the investigators’ knowledge, the developed prioritization tool is the first attempt to systematically evaluate CDS alerts within a CPOE system. Components of this study, such as the alert characteristics, have been employed in related research that sought to determine where in the workflow the alert should appear, who receives the alerts, and how many alerts were optimal; however, none of these studies addressed which alerts should fire.11,12

Within CPOE structures, there is significant CDS variability. Institutions with more robust CDS alerts may realize cost reduction through diminished Adverse Drug Events (ADEs) via reducing the duration of patient hospitalization, cost of treating ADEs, and litigation exposure. 12 Likewise, additional revenue from meeting core measures may be attained. 12

Future research should identify practice standards to address CPOE maintenance. This includes a system to address CDS requests in an organized and timely manner through a standardized form; an interdisciplinary committee including informatics, pharmacy, and physician staff to evaluate and prioritize requests; and a method to ensure consistent assessment. These recommendations should also address metrics to measure alert efficacy and impact on an ongoing basis. In order to further analyze the proposed alerts, the alert characteristics could be split into more specific indicators. It is also recommended in future iterative sessions to incorporate a qualitative method of collecting and analyzing participants’ impressions regarding their participation in these sessions.

There are a number of limitations to our study. First, there is potential sampling bias because participants were not randomly sampled; instead, all individuals meeting the inclusion criteria received an invitation to complete the survey. No information is available about the 35 percent of participants who did not respond to the survey. Moreover, an unknown sampling bias due to participants’ area of practice may exist. This demographic was only collected for physicians and is unavailable for participants of other professions due to the manner in which data were collated and de-identified upon their return. For example, one cannot determine (1) the total percentage of participants that practice in cardiology, (2) whether participants who practice in cardiology rate the AMI Core Measure Sets higher than participants in other areas of practice, or (3) whether participants in one area of practice rate alerts differently according to their profession (nurse, physician, pharmacist, etc.). Additionally, the respondent groups were of different sizes. To control for this difference, we used weighted measures, but found the results were the same as the unweighted measures. Second, it was a questionnaire survey study and is subject to recall bias. Third, the ability to generalize the study results is limited by the nature of the alerts evaluated. Our study evaluated alerts specific to the needs of SLHS, and each institution must consider the CDS alerts that address its own needs. An important future effort is monitoring and ensuring that less than optimal use of the system by practitioners is overcome by evaluating workflow and systemic processes. In addition, the ability to build CDS alerts is dependent on the capabilities of each individual facility’s CPOE system.

Conclusion

In conclusion, our study establishes a process for developing a prioritization tool for a CDS build within a CPOE system that may be applicable to similar institutions. Six alert characteristics determined by a group of experts using a validated modified Delphi rating process were defined, and associated Likert-type scales were used to prioritize alerts. A total of 13 alerts, chosen for their impact on patient care, were identified for prioritization. Utilizing this tool, the CDS survey prioritized alerts for construction within the SLHS CPOE. Our results may be beneficial to health systems planning CPOE implementation.

Footnotes

Appendix 2

Appendix 1.

Sample of the survey to prioritize the clinical decision support alerts at Saint Luke’s Health System.

| Vancomycin First Dose Calculator (provides an automated mechanism to calculate the first dose of kinetically dosed antibiotics (vancomycin)) | | | | | |
|---|---|---|---|---|---|
| What would be the impact of implementing the following alerts on the quality of care (continuity of care, best practices, etc.) | Very important | Somewhat important | Undecided | Somewhat unimportant | Very unimportant |
| What would be the impact of implementing the following alerts on patient safety (prevented morbidity and mortality) | Prevent death | Prevent significant, long-term patient harm | Prevent moderate, short-term patient harm | Prevent minimal patient harm | No change in patient harm |
| What would be the relative importance of implementing the following alerts on the organization’s regulatory or audit compliance (The Joint Commission) | Very important | Somewhat important | Undecided | Somewhat unimportant | Very unimportant |
| What would be the relative importance of implementing the following alerts on appropriate resource utilization (ordering tests as indicated) | Very important | Somewhat important | Undecided | Somewhat unimportant | Very unimportant |
| What would be the relative importance of implementing the following alerts on the organization’s liability | Very important | Somewhat important | Undecided | Somewhat unimportant | Very unimportant |
| What would be the relative importance of implementing the following alerts on clinician workflow | Very important | Somewhat important | Undecided | Somewhat unimportant | Very unimportant |
| What would be the relative importance of implementing the following alerts on clinician implementation of evidence-based practice (following guidelines) | Very important | Somewhat important | Undecided | Somewhat unimportant | Very unimportant |
| Please estimate the number of times the following alerts will fire (a relative quantitative of occurrence) | On every patient | On most patients | >1 time per day | >3 times per week | Once per week |
| What would be the impact of implementing the following alerts on revenue generation or cost savings | >US$50,000 | US$20,000 to US$50,000 | US$10,000 to US$20,000 | US$5000 to US$10,000 | <US$5000 |

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.