Abstract

Effort has been made to study the effect of medication-related clinical decision support systems in the inpatient setting; however, little is known about the usability of these systems. The goal of this study is to systematically review studies that focused on usability aspects such as effectiveness, efficiency, and satisfaction of these systems. We systematically searched relevant articles in Scopus, Embase, and PubMed from 1 January 2000 to 1 January 2016, and found 22 articles. Based on Van Welie’s usability model, we categorized usability aspects in terms of usage indicators and means. Our results showed that evidence was mainly found for effectiveness and efficiency. The included studies showed positive results for the usage indicators errors and safety and performance speed. The means warnings and adaptability also had mostly positive results. To date, the satisfaction aspect of clinical decision support systems remains understudied. Aspects such as memorability, learnability, and consistency require more attention.

Introduction

Medication errors occur frequently in health care and are costly, both economically and clinically.1–4 There is evidence that up to 9.1 percent of all prescriptions contain errors, but literature on the subject varies. 5 The consequences of medication errors can be severe, impacting mortality and morbidity; an estimated 7000 deaths each year in the United States are attributed to medication errors. 1

Electronic prescribing is an opportunity to reduce the number of medication errors.6–8 Electronic prescribing or e-prescribing refers to computerized physician order entry (CPOE) with or without a clinical decision support system (CDSS). We define CPOE as “a system that enables physicians or other medical personnel to enter medical orders in an electronic system” and CDSS as “a system that supports physicians and other medical personnel in decision making.” Clinical decision support is known to improve clinical practice. 9

A study done by Gartner for the Swedish Ministry of Health and Social Affairs estimates that 100,000 inpatient adverse drug events can be prevented yearly by the use of computerized order entry and clinical decision support. According to this study, this would result in 700,000 saved bed-days and almost €300 million saved. 10

CDSSs have been proven to have positive effects on clinical practice, with safety and quality benefits being among these effects.9,11,12 However, some aspects of CDSSs, such as usability, have not yet been studied extensively. The International Organization for Standardization (ISO) defines usability as “the extent to which a product can be used by specified users to achieve specified goals with effectiveness, efficiency, and satisfaction in a specified context of use.” 13 Since usability is one of the success criteria of implementing and utilizing CDSSs, it is important to study the consensus on this criterion. Previous studies showed that usability aspects of CDSSs and the integration of these systems within e-prescribing systems still need improvement in different settings.14–16 A list of 168 instances of usability flaws of medication-related alerting functions was generated previously. This list could act as a checklist for medication-related CDSSs during their design or evaluation process. 14 However, a systematic study of the usability of medication-related CDSSs in the inpatient setting, one that indicates the current evidence regarding the effectiveness, efficiency, and satisfaction pillars of usability, is still missing.

This article intends, therefore, to summarize all relevant literature on the usability of CDSSs. Only CDSSs that assist during the medication process in the inpatient setting were considered in this review.

Methods

Definitions

Usability is defined as “the extent to which a product can be used by specified users to achieve specified goals with effectiveness, efficiency, and satisfaction in a specified context of use” by the ISO. 17 ISO has divided usability into three subcategories: effectiveness, efficiency, and satisfaction. For this study, the following definitions were used for each of these aspects as defined in ISO 9241-11:

Effectiveness: “the accuracy and completeness with which users achieved specified goals.”

Efficiency: “the resources expended in relation to the accuracy and completeness with which users achieve goals.”

Satisfaction: “freedom from discomfort, and positive attitude to the use of the product.”

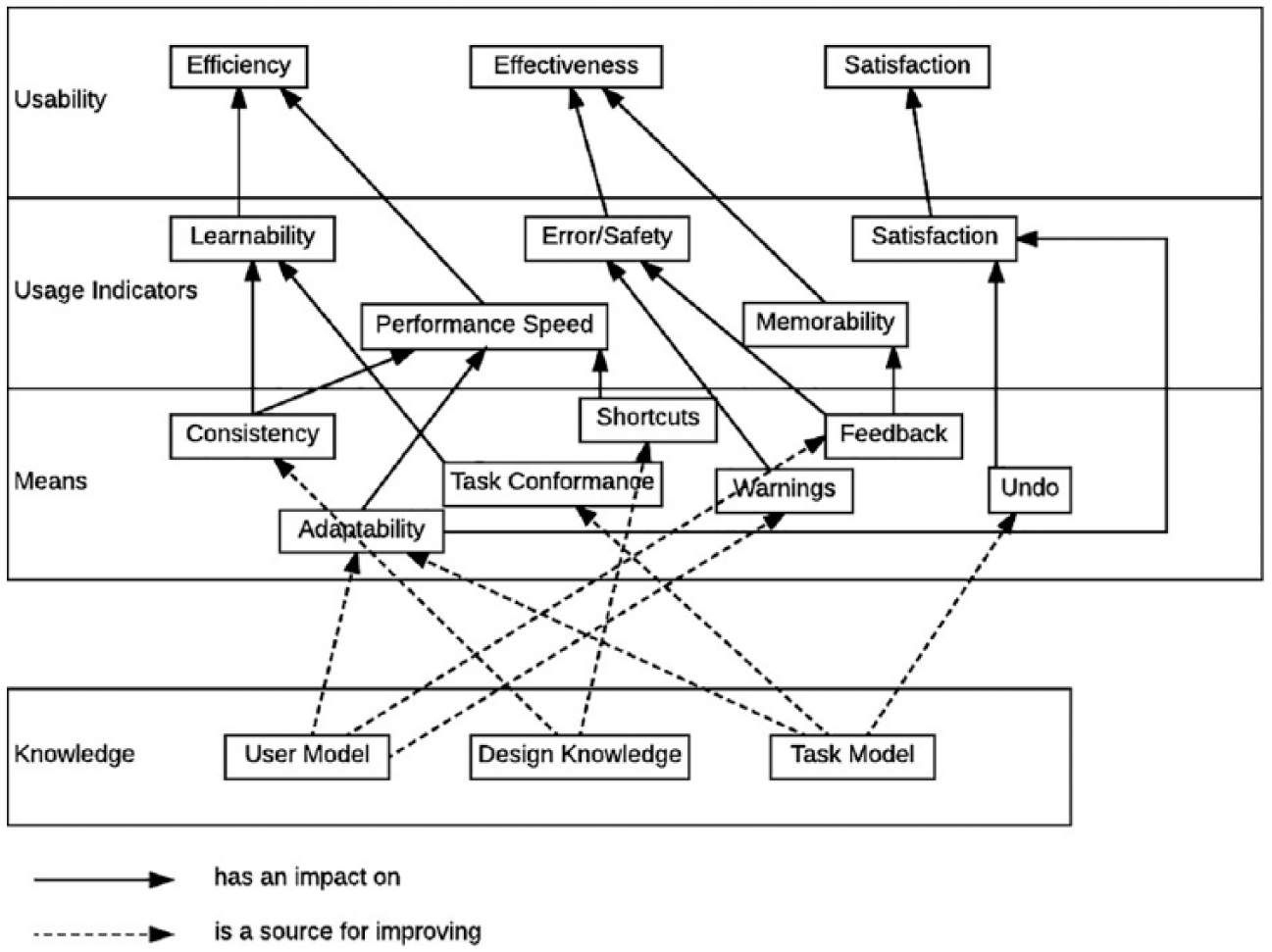

In order to provide operationalized measures of usability, Van Welie’s model was used. 18 This model consists of three related layers. The first one comprises the three aspects of usability used by the ISO, that is, effectiveness, efficiency, and satisfaction. 17 The second layer relates usage indicators to these aspects. The third layer provides means by which the usage indicators can be measured. Effectiveness has two usage indicators: errors and safety, defined as the rate of errors, and memorability, defined as retention over time. Efficiency has two usage indicators as well: learnability and performance speed. They are defined as, respectively, the time to learn and the speed of performance. Satisfaction has only one usage indicator: satisfaction, defined as the user satisfaction.

We aim to summarize the results pertaining to the three aspects of usability and to explore which of Van Welie’s usage indicators need further exploration in CDSS evaluation research.

The means in the third layer include consistency, the availability of undo operations, and the presence of feedback. The usage indicators are then analyzed to discover which usability categories in the ISO standard need further investigation.
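The three layers described above can be written down as a small nested mapping. The following is only an illustrative sketch: the names `VAN_WELIE_LAYERS`, `usage_indicators`, and `aspects_reached_by` are hypothetical, the means listed are limited to those this review names for each usage indicator, and assigning the shared efficiency means to both learnability and performance speed is an assumption made for simplicity.

```python
# Sketch of the layered model: aspect -> usage indicator -> means.
# Only names mentioned in this review are used; this is not the full model.
VAN_WELIE_LAYERS = {
    "effectiveness": {
        "errors/safety": ["warnings", "feedback"],
        "memorability": [],  # no means are named for this indicator in the review
    },
    "efficiency": {
        # Assumption: the review lists these means jointly for both indicators.
        "learnability": ["consistency", "task conformance", "adaptability"],
        "performance speed": ["consistency", "task conformance", "adaptability"],
    },
    "satisfaction": {
        "satisfaction": ["undo", "adaptability"],
    },
}


def usage_indicators(aspect):
    """Return the usage indicators (second layer) of a usability aspect."""
    return list(VAN_WELIE_LAYERS[aspect])


def aspects_reached_by(means):
    """Return the aspects whose usage indicators can be addressed by a means."""
    return sorted(
        aspect
        for aspect, indicators in VAN_WELIE_LAYERS.items()
        if any(means in listed for listed in indicators.values())
    )


print(usage_indicators("efficiency"))
print(aspects_reached_by("adaptability"))
```

A structure like this makes it easy to see that one means, such as adaptability, can contribute to more than one usability aspect.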

Data sources and inclusion and exclusion criteria

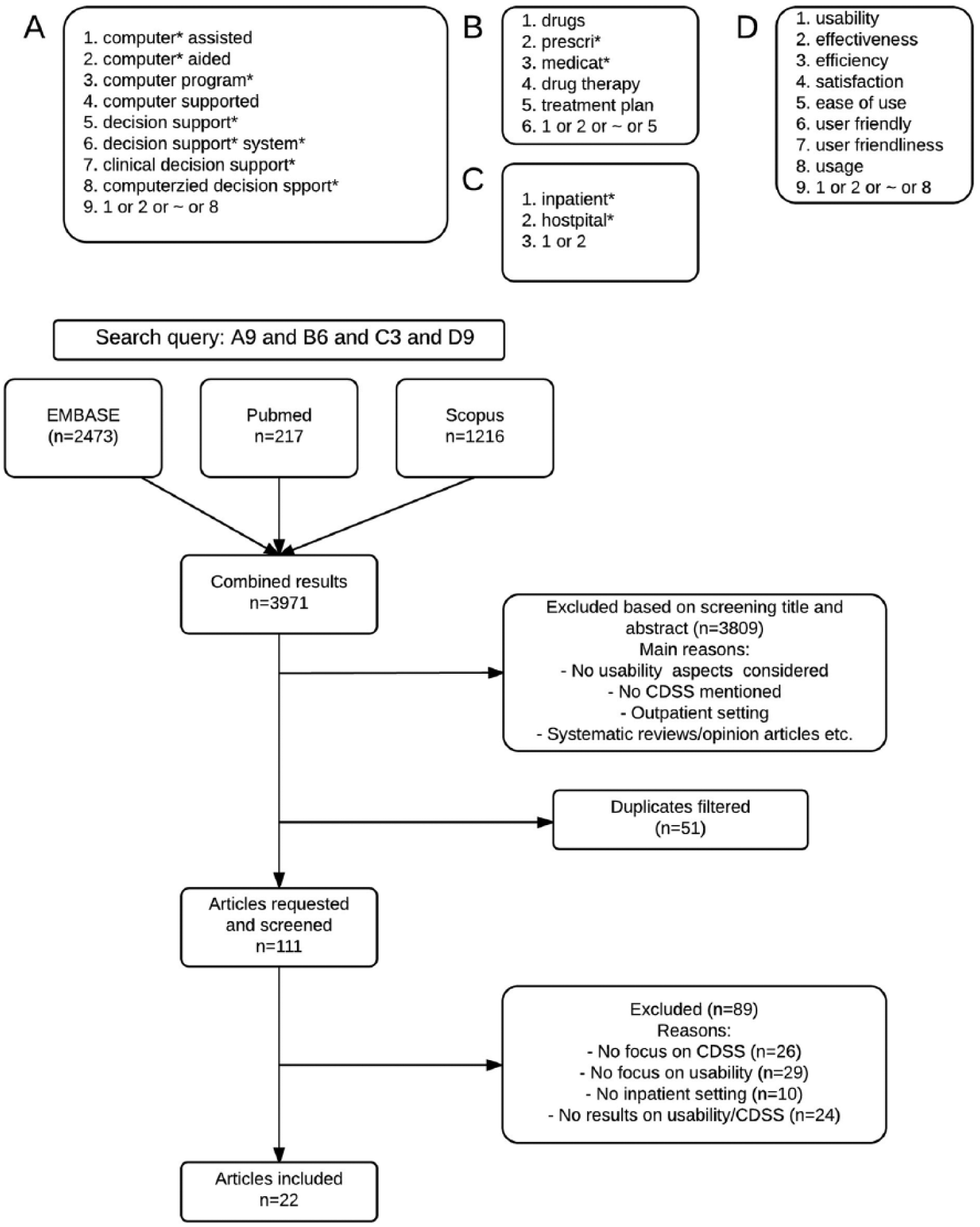

Articles were searched in Scopus®, PubMed®, and EMBASE®, published between 1 January 2000 and 1 January 2016. We filtered the results to exclude articles in non-English languages, as well as papers regarding non-medical disciplines or animals, opinion papers, congress papers, and letters. The final queries were executed on 4 February 2016. Figure 1 illustrates the search strategies that were applied for our literature search. The query we used was composed of four separate parts: the first part (A) contained keywords relating to electronic prescription and decision support, the second part (B) medication-related keywords, the third part (C) keywords for the inpatient setting, and the fourth part (D) keywords for usability. The four parts were combined using “and” statements, resulting in the final query.

Flow chart of the search queries.
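The structure of the four-part query can be sketched as follows. This is a minimal illustration only: the keyword lists are hypothetical placeholders rather than the terms actually used, the helper names `or_group` and `build_query` are invented for this sketch, and the assumption that keywords within one part are joined with OR is ours. Real query syntax also differs between Scopus, PubMed, and EMBASE.

```python
def or_group(terms):
    """Join the keywords of one query part with OR, quoting multi-word phrases."""
    quoted = [f'"{term}"' if " " in term else term for term in terms]
    return "(" + " OR ".join(quoted) + ")"


def build_query(*parts):
    """Combine the query parts with AND, as described for parts A to D."""
    return " AND ".join(or_group(part) for part in parts)


# Hypothetical placeholder keywords; the real lists are given in Figure 1.
PART_A = ["electronic prescribing", "decision support"]  # e-prescribing and CDSS
PART_B = ["medication", "drug"]                          # medication related
PART_C = ["inpatient", "hospital"]                       # inpatient setting
PART_D = ["usability", "satisfaction"]                   # usability

query = build_query(PART_A, PART_B, PART_C, PART_D)
print(query)
```

Because the parts are joined with AND, an article has to match at least one keyword from every part, which narrows the result set to articles covering all four topics.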

Articles were selected if they met the criteria of evaluating aspects of usability of CDSSs related to medication prescription in an inpatient setting. Studies that were patient-centered instead of clinician-centered, were performed in an outpatient setting, studied cost-effectiveness, or had a CPOE or e-prescribing system without CDSS were excluded from selection.

Data extraction and analysis

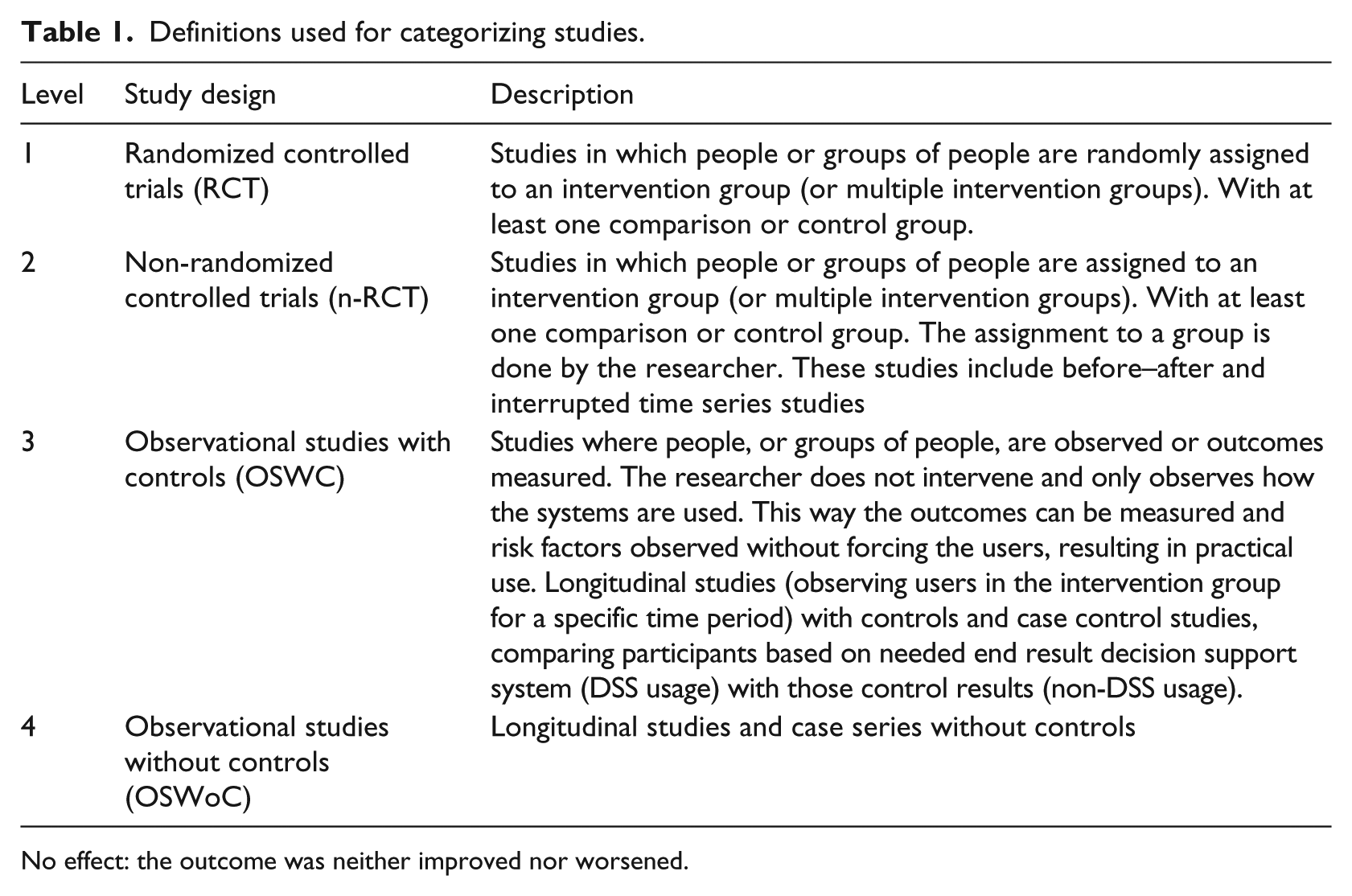

All article titles and abstracts were independently screened by two reviewers (B.K., H.A.) to select those qualified for inclusion. Any discrepancy was resolved by consensus between the two reviewers, and if needed, a third reviewer (M.A.) was consulted. Data extracted from the included articles (author, year, country, and so on), inclusion and exclusion decisions, and demographic data were organized using structured spreadsheets. Studies were categorized based on their usability aspect (effectiveness, efficiency, or satisfaction) and, if possible, further levels as defined by Van Welie et al. 18 This was done in three steps: first the general usability aspect, followed by the usage indicator, and last the means. In addition, all studies were divided into four categories according to a modified version of the hierarchy of study designs developed by the University of California, San Francisco, Stanford Evidence-Based Center, as can be seen in Table 1. We also divided the results into outcome groups based on their type of effect. The possible types of effect were:

Positive: overall, the outcome improved;

Negative: overall, the outcome worsened;

Mixed: the outcome was both improved and worsened;

No effect: the outcome was neither improved nor worsened.

Definitions used for categorizing studies.
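The four outcome groups can be expressed as a simple decision rule. The sketch below is only an illustration of the definitions above; the names `Effect` and `classify_effect` are hypothetical, and reducing each study to two yes/no judgments (did any outcome improve, did any outcome worsen) is a simplifying assumption.

```python
from enum import Enum


class Effect(Enum):
    POSITIVE = "positive"
    NEGATIVE = "negative"
    MIXED = "mixed"
    NO_EFFECT = "no effect"


def classify_effect(improved: bool, worsened: bool) -> Effect:
    """Map two reviewer judgments onto the four outcome groups."""
    if improved and worsened:
        return Effect.MIXED       # outcome both improved and worsened
    if improved:
        return Effect.POSITIVE    # overall, the outcome improved
    if worsened:
        return Effect.NEGATIVE    # overall, the outcome worsened
    return Effect.NO_EFFECT       # neither improved nor worsened


print(classify_effect(improved=True, worsened=True).value)
```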

Results

After running the final query, a combined result of 3970 articles was acquired. After screening the titles and abstracts of these articles, a total of 162 articles were selected for full text review. In all, 51 articles were discarded as duplicates. The remaining 111 articles were thoroughly read and a total of 89 articles were excluded due to a lack of focus on clinical decision support (n = 26), a lack of focus on usability (n = 29), a lack of research results on usability/CDSS (n = 24), or because the research was done in an outpatient setting (n = 10). In total, 22 articles were classified as relevant and included in the final selection.
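The selection numbers above can be checked with a few lines of arithmetic. The variable names in this sketch are our own; the counts are the ones reported in the paragraph.

```python
# Counts reported in the selection paragraph.
identified = 3970
selected_for_full_text = 162
duplicates = 51
exclusions = {
    "no focus on clinical decision support": 26,
    "no focus on usability": 29,
    "no research results on usability/CDSS": 24,
    "outpatient setting": 10,
}

read_in_full = selected_for_full_text - duplicates
excluded = sum(exclusions.values())
included = read_in_full - excluded

print(f"read in full: {read_in_full}, excluded: {excluded}, included: {included}")
```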

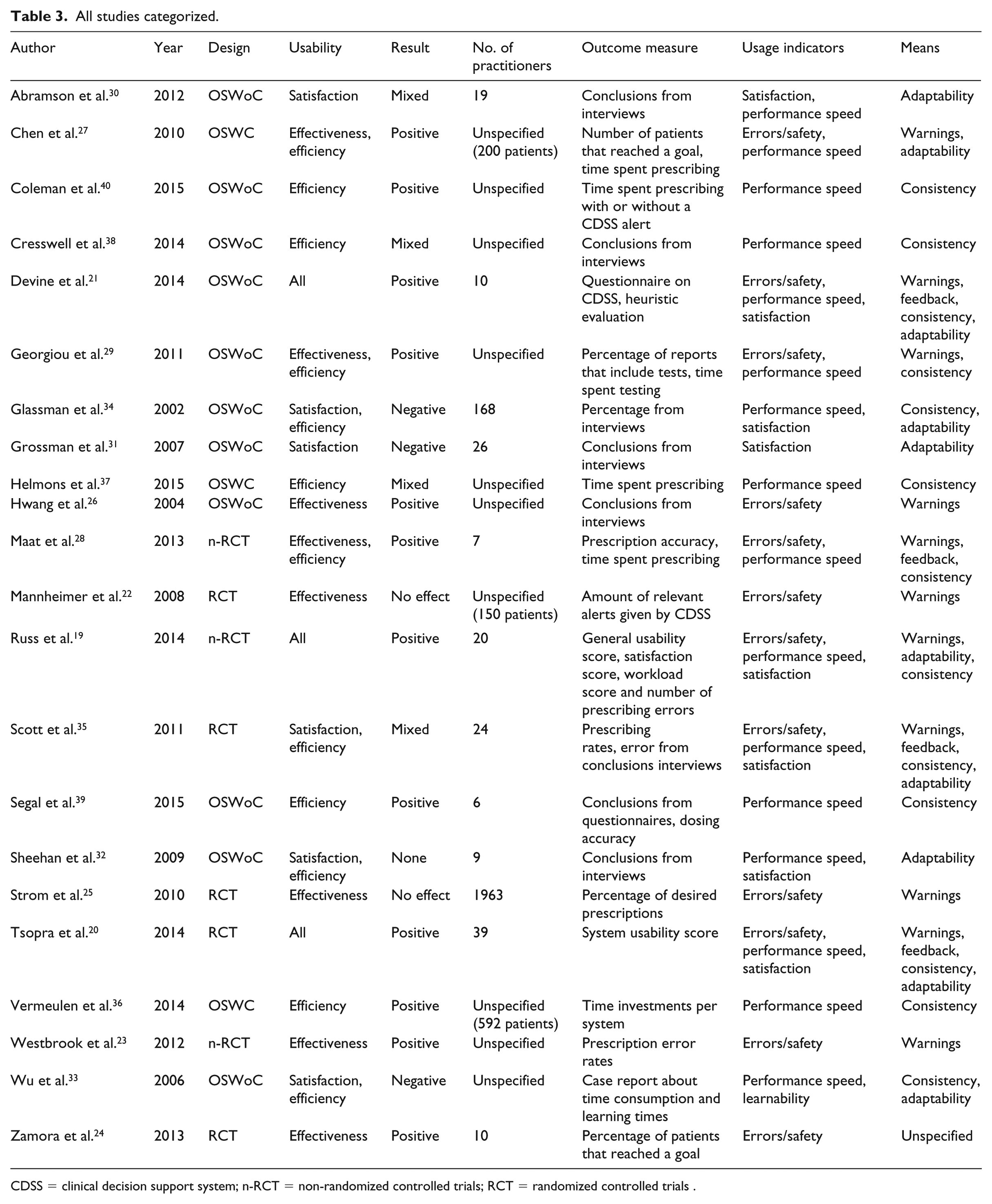

Out of the 22 studies, 9 (41%) were done in North America, 9 (41%) in Europe, 2 (9%) in Asia, and 2 (9%) in Oceania. In total, 12 studies (54%) reported a positive usability of CDSSs, while 3 (14%) concluded negative results, 3 (14%) found no effect at all, and 4 (18%) found mixed results. A total of 10 articles (45%) were observational studies without controls, 5 (23%) were randomized controlled trials, 4 (18%) were observational studies with controls, and 3 (14%) were non-randomized controlled trials. In total, 2 studies were done before 2005 and 6 before 2010. All other studies were performed in 2010 or later.

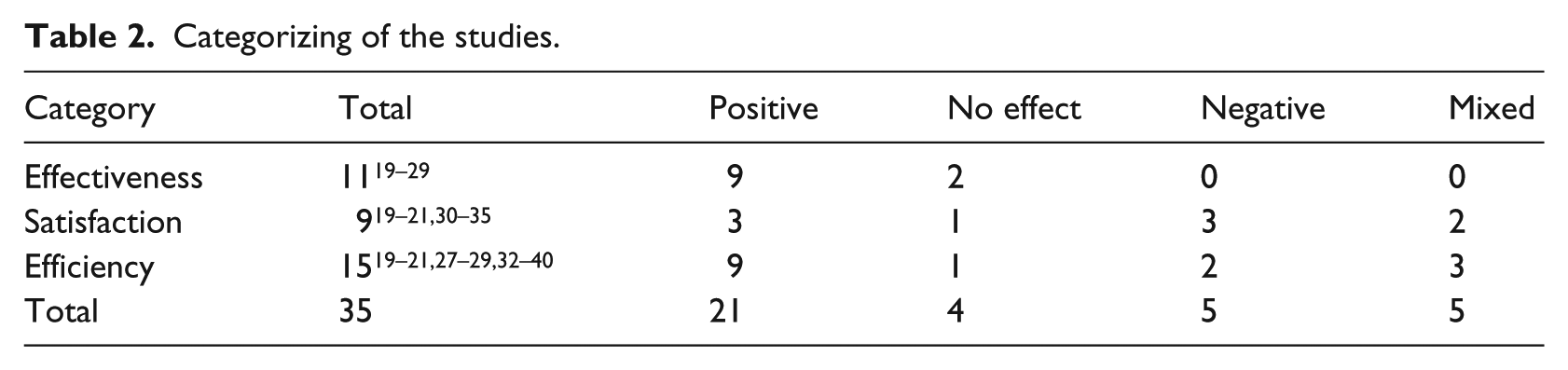

Based on their outcome, each article was categorized in one of the main aspects of usability according to ISO definitions. These results are summarized in Table 2. A total of 11 studies could be categorized in effectiveness, 15 in efficiency, and 9 in satisfaction. One should note that some studies evaluated more than one specific area of usability, so the sum of 35 is higher than the total number of included articles. This also causes certain studies to appear in the table up to three times, namely, the three studies19–21 that studied all three aspects and reported positive results for all three aspects. A complete overview of each study and its categorization is provided in Table 3. The different aspects of the usability of CDSSs in the inpatient setting related to medication will be discussed in the next sections.

Categorizing of the studies.

All studies categorized.

CDSS = clinical decision support system; n-RCT = non-randomized controlled trials; RCT = randomized controlled trials .
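The overlap between the three aspects can be made explicit with set operations. The sketch below uses the reference numbers cited for each aspect in the following subsections purely as study labels; the helper `expand` and the variable names are hypothetical.

```python
def expand(*ranges):
    """Expand inclusive (start, end) ranges into a set of reference numbers."""
    refs = set()
    for start, end in ranges:
        refs.update(range(start, end + 1))
    return refs


# Reference numbers as cited in the aspect subsections of this review.
effectiveness = expand((19, 29))
satisfaction = expand((19, 21), (30, 35))
efficiency = expand((19, 21), (27, 29), (32, 40))
aspects = (effectiveness, satisfaction, efficiency)

all_studies = effectiveness | satisfaction | efficiency
categorizations = sum(len(aspect) for aspect in aspects)
all_three = effectiveness & satisfaction & efficiency
single_aspect = {
    ref for ref in all_studies
    if sum(ref in aspect for aspect in aspects) == 1
}

print(len(all_studies), categorizations, sorted(all_three), len(single_aspect))
```

The difference between the number of categorizations and the number of unique studies comes entirely from studies that appear in more than one set.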

Effectiveness

Eleven (50%)19–29 studies investigated the effectiveness of CDSSs, out of which three (27%)19–21 studied all three aspects of usability and three (27%)27–29 studied effectiveness and efficiency. Three (27%)21,26,29 studies on effectiveness were observational studies without controls, one (9%) 27 was an observational study with controls, four20,22,24,25 (36%) were randomized controlled trials, and three (27%)19,23,28 were non-randomized controlled trials. Nine (81%)19–21,23,24,26–29 report an increase in effectiveness by using a CDSS regardless of the focus of their outcome, while two (18%)22,25 find that the effectiveness does not change. Two studies (18%) report an increase in effectiveness after improving the CDSS with usability principles in mind, while the other nine (81%) look at the difference between no CDSS and a CDSS. The latter group had no negative results but did have two articles that found no effect;22,25 the other seven were positive.

We analyzed the results based on Van Welie’s model (see Figure 2). All effectiveness studies used the usage indicator errors/safety. The second usage indicator for effectiveness, memorability, was not studied. The articles focused mostly on the right dosing of medication (36%), detecting prescription errors (36%), and helping physicians make better decisions (18%).

Layered model of usability as made by Van Welie et al. 18

The means that belong to the usage indicator errors/safety are warnings and feedback. All the articles that studied effectiveness used warnings as their means, with two of them also using feedback in addition to the warnings. The two studies that investigated feedback and warnings20,21 both (100%) reported positive results. The nine studies that looked at just warnings19,22–29 found positive results in seven (78%) cases19,23,24,26–29 and no effect in two cases22,25 (22%).

Satisfaction

A total of nine (41%)19–21,30–35 studies looked at satisfaction, of which two (22%)30,31 solely studied satisfaction. There were three (33%)19–21 studies that looked at all aspects, and four (44%)32–35 articles looked at both satisfaction and efficiency. Six (66%)21,30–34 of the studies on satisfaction were observational studies without controls, two (22%)20,35 were randomized controlled trials, and one (11%) 19 was a non-randomized controlled trial. Three studies (33%) report a positive effect, while three (33%) report a negative effect, two (22%) a mixed effect, and one (11%) reports no effect at all. Six studies obtained their results via an interview or usability survey (System Usability Scale (SUS), Computer System Usability Questionnaire (CSUQ), and Post Study System Usability Questionnaire (PSSUQ)), while the three studies that studied all aspects used case scenarios or think-aloud. Four (44%) of the nine studies had quantitative results, while five (56%) had qualitative results.

Using Van Welie’s model to further specify the usability aspects, we found that all articles used satisfaction as a usage indicator. The usage indicator satisfaction has two means: undo and adaptability. Undo as a means was not studied, while all studies used adaptability as their means.

Efficiency

Fifteen (68%)19–21,27–29,32–40 articles were included that studied efficiency. Out of those articles, five (33%) studied efficiency solely, four (27%) both efficiency and satisfaction, three (20%) looked at efficiency and effectiveness, and three (20%)19–21 studied all three usability aspects. As for study designs, two (13%) were randomized controlled trials, two others (13%) non-randomized controlled trials, four (27%) were observational studies with controls, and seven (47%) were observational studies without controls. A total of nine articles (60%) concluded that there was a positive effect on the efficiency, while one (7%) found no effect at all, two (13%) found a negative effect, and three (20%) found mixed effects.
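The percentages in this paragraph follow directly from the counts. A short sketch, with a hypothetical helper `shares`, recomputes them as whole-number shares of the 15 efficiency studies:

```python
def shares(counts):
    """Convert counts per category into rounded percentages of their total."""
    total = sum(counts.values())
    return {name: round(100 * n / total) for name, n in counts.items()}


# Counts as reported for the 15 efficiency studies.
effects = {"positive": 9, "no effect": 1, "negative": 2, "mixed": 3}
designs = {
    "randomized controlled trial": 2,
    "non-randomized controlled trial": 2,
    "observational with controls": 4,
    "observational without controls": 7,
}

print(shares(effects))
print(shares(designs))
```

Rounded shares do not always add up to exactly 100, so small deviations in such breakdowns are expected.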

By using Van Welie’s model, we further analyzed the efficiency results. One of the 15 studies 19 (7%) looked at the workload of the physician, with a positive result. The same study, along with one other 33 (13% in total), studied the usage indicator learnability. Learnability was measured by analyzing video footage 19 and by reviewing prescription times and previous uses, 33 with a positive and a negative result, respectively. The other usage indicator, performance speed, was studied in 13 studies.19–21,27–29,33,34,36–40 Nine19–21,27–29,36,39,40 (69%) found positive results, two33,34 (15%) found negative results, and two37,38 others (15%) found mixed results. Two studies that used interviews found a mixed effect 35 and no effect, 32 respectively.

The means regarding learnability and performance speed are consistency, task conformance, and adaptability. Six studies included both consistency and adaptability19–21,33–35 as their means, with three19–21 (50%) being positive, two33,34 (33%) with negative conclusions, and finally one 35 with mixed results. Seven studies investigated just consistency.28,29,36–40 They drew positive conclusions in five studies28,29,36,39,40 (71%) and mixed results in the other two37,38 cases. Last, two studies looked at only adaptability.27,32 One 32 found no effect, while the other 27 found positive results. The means task conformance was not studied.

Discussion

Main findings

Our systematic review analyzed the available literature on the different aspects of usability of CDSSs in the inpatient setting related to medication prescription. The results of the review are mixed for satisfaction, but generally positive for effectiveness and efficiency. The share of positive results for satisfaction was relatively low, even when not considering the studies with mixed results. Only 12 of the 22 included articles studied a single aspect of usability.

The effectiveness aspect was only studied by looking at Van Welie’s usage indicator errors/safety. This is remarkable, because the other usage indicator, memorability, was not studied at all. A large part (81%) of the research focusing on errors and safety was positive, with the other 18% finding no effect. This indicates that the use of a CDSS could have a positive impact on the errors and safety of medication prescription, which is in line with previous research. Three of these results were obtained, however, with systems tailored to a very specific goal. Although all three of these studies had a positive effect, generalizing them is harder. The means by which effectiveness was studied were clearly focused on warnings. This is not surprising, given the type of system studied. The studies that also included feedback were positive but few. This implies that generating warnings might be easier, whereas feedback requires more insight into the situation. Although giving feedback seems very valuable, it might be harder to implement.

Almost all studies on satisfaction consisted of interviews or questionnaires. Think-aloud, case scenarios, and other usability methods to measure satisfaction take a lot more time and are more difficult to perform, which might explain their absence. Other reasons could be that satisfaction is always subjective and personal, making it harder to study in a quantitative way, and that it might not be the top priority of researchers. Our findings show that the results were mixed and no general trend could be identified. This might be caused by the lack of coherent techniques mentioned earlier. Interestingly, only the means adaptability was used in the nine studies on satisfaction. The other, undo, was not studied at all. This could indicate either a lack of interest or simply a lack of awareness of researchers in this area.

In the category efficiency, 9 out of 15 studies (60%) found positive results. Only one study investigated the mental workload of the physician. Two studies investigated learnability, but found different results. An explanation for the fact that so few studies investigated learnability and workload aspects could be that it is harder to measure these phenomena than other aspects such as time spent using a system. Thirteen studies, including the ones that investigated learnability, studied performance speed, making it the most popular way to study efficiency by far. Although most of these studies (69%) reported an increase in efficiency, 15% reported exactly the opposite: the physician was slowed down by CDSS usage. In addition, two studies reported mixed results on efficiency. The general trend seems to be that although most users experience better efficiency, some view the system as time consuming and therefore a burden. Factors such as previous experience, amount of training time, or longer experience with that specific system could influence the efficiency results.

Another aspect to consider is the effect that a CPOE has on a CDSS, since most of the studies we included (81%) studied a CDSS in combination with a CPOE. Even if the CDSS is well designed, certain usability aspects may suffer if it is integrated in a poorly designed CPOE. Two systematic reviews done by Khajouei and Jaspers41,42 on the usability and design of CPOE systems found that the design and configuration of CPOE systems are important. One 42 found that the configuration has an impact on the ease of use, the task behavior when ordering drugs, and the medication error rate. The other 41 notes that the design of a CPOE system is a two-edged sword because it can both be positive for the user and create problems with the usability, work flow, and medication errors. We also found mixed results regarding this aspect.

The origin of the studies is also remarkable: 18 out of 22 articles were from either North America or Europe, giving our review a bias toward Western countries. This may cause our review to be of limited interest to people from different parts of the world, since they may have a different attitude toward medical systems or even implemented a different core system due to different work processes.

The way usability was studied in the included articles is remarkable: there were wide differences in how studies operationalized usability, ranging from interviews to accuracy tests and formal usability scores. Interviews and time measurements were the most popular, with accuracy tests as runner-up. More formal methods, such as usability scores and questionnaires, were used infrequently, so there is room for improvement there.

Although our review indicates that the effectiveness and efficiency aspects of the usability of CDSSs are generally positive, more research is needed regarding the different aspects of usability of these systems. Two studies19,20 investigated changes in an existing CDSS according to usability principles and found better usability results after the change, suggesting that improving the usability of CDSSs does have an effect on user satisfaction.

Van Welie’s usability model was chosen for this study because of both its thoroughness and its structure. The latter allowed us to break down usability into categories for analysis, thereby providing a general framework for this research. It should be noted, however, that a different model might have resulted in a different classification of the articles.

Comparison with other literature

There have been several (systematic) reviews in the last 15 years on clinical decision support.9,11,43–47 Some11,43,46,47 of them do study aspects of usability, but there is no review that fully reviews all aspects for a certain setting. Furthermore, the most recent review is from 2012, 43 while the others were published eight or more years ago.

Bright et al. 43 look, among other things, at efficiency and workload, but do not fully study satisfaction and effectiveness. They did not specifically study the inpatient setting and not all systems were medication related. Their results showed that although there is evidence that demonstrates positive effects of CDSSs, it is surprisingly sparse. In a study by Kaushal et al., 11 the same conclusion regarding error rates is drawn: there is evidence, but it is not convincing enough to draw conclusions. Their review does not study any other aspects of usability though, and it dates from 2003. Wolfstadt et al. 46 studied the effect of CDSSs on adverse drug events, but did not discuss general effectiveness or satisfaction and efficiency. They studied both inpatient and outpatient settings, but found most results in the inpatient setting. The authors concluded that more research is needed on this subject.

Eslami et al. 47 focused on computerized physician medication order entry systems. The study was done in the inpatient setting; however, it is mostly about CPOE systems with only little focus on the usability of these systems. The authors find positive results for satisfaction and usability but also suggest that more research is needed. Marcilly et al. 14 did a study to find usability flaws in medication-related alerting functions, which were defined as violations of usability design principles. In addition, they focused on alerting systems that supported the prescribing of medications, which were used in general hospitals or in primary care general practices. Aligned with their aim, they generated a list of flaws that were found in the literature, which could serve as a checklist for checking the usability of medication-related alerting functions during the design or evaluation process of such systems. In our study, we focused on the inpatient setting and studied the means that measure the usage indicators, which then led to generating knowledge about the level of usability aspects (effectiveness, efficiency, and satisfaction). We also investigated which measures and categories need to be given more attention in future studies regarding the usability pillars of the ISO definition.

Last, because there exists no summary of outpatient literature, a parallel study was performed for that setting. The results of that study can be found elsewhere.

Limitations and strengths

To the best of our knowledge, this is the first systematic review that studied all of Van Welie’s aspects of usability of a CDSS in the inpatient setting in detail. Another strength is the fact that our review uses mostly recently conducted research, with 73 percent of our included articles originating from 2010 or later. We used structured spreadsheets to extract data from these articles systematically.

Our review also has some known limitations. First, the heterogeneity of the results of the included articles made it impossible to do a meta-analysis. Second, although we extensively and systematically searched for articles, it is plausible that we missed studies. Furthermore, we used a mix of the ISO definitions and Van Welie et al.’s 18 usability model in order to cover usability aspects. Had we used another definition or model, we might have found other aspects. Finally, it should be mentioned that the means from Van Welie’s model are not the only possible means to improve the usage indicators; according to Van Welie et al., they were, at the time of their article, a set of appropriate means selected by the authors, and more means might be possible to define.

Conclusion

In conclusion, evidence could mainly be found for effectiveness and efficiency and showed a high rate of positive results in errors/safety and performance speed. To date, the satisfaction aspect of CDSSs regarding medication prescription in the inpatient setting remains understudied. Usability aspects such as memorability, learnability, shortcuts, and undo require more attention. Studies are still needed to generate better insight into the user model as well as the task model for these systems regarding medication prescription in the inpatient setting.

Footnotes

Author contribution

M.A., M.L., and B.K. conceived the study and study design. A.H. and B.K. carried out the search and data analysis under supervision of M.A. M.A. and M.L. coordinated the study. B.K. drafted the manuscript. All authors participated in the interpretation of data and critically read and revised the manuscript.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.