Abstract

Cancer registry data often lack complete chemotherapy and radiation therapy information. To conduct treatment disparity surveillance, we linked 2005–2009 Nebraska Cancer Registry data with Nebraska hospital discharge data. Owing to the high quality of both datasets and the proposed linkage procedure, we achieved a linkage rate of 97 percent. We demonstrate the use of the linked dataset in case finding, treatment update, and treatment surveillance. The results show that the linked dataset is likely to identify up to 5 percent of potential missed cases. We investigated the use of radiation therapy in treating colorectal and breast cancers as treatment-update examples. The linked dataset found 12 percent and 15 percent more treatment cases for colorectal and breast cancer patients, respectively.

Introduction

State cancer registries are available for all 50 US states and the District of Columbia. Incidence and mortality information from state cancer registries has been routinely used for cancer surveillance. The Centers for Disease Control and Prevention (CDC) National Program of Cancer Registries (NPCR) regularly publishes federal statistics on cancer incidence in the United States Cancer Statistics report, using combined state registry data. While previous studies using cancer registry data have made significant contributions to cancer incidence and staging surveillance,1,2 treatment information in cancer registries has rarely been used to inform the public and policy makers about treatment disparity and treatment effectiveness. As the national priority has moved from disease surveillance to eliminating disparities in cancer care,3,4 demands for cancer care surveillance have increased. In 2000, the Institute of Medicine recommended that investigators use existing data systems, such as the NPCR, to measure variations in the use of appropriate standards of cancer care and to assess care outcomes 5 to determine whether cancer patients in the United States are receiving the most effective care. However, cancer registry data do not usually have complete treatment information, making it difficult to investigate cancer care disparities using only registry data.

To overcome registry data limitations, previous studies have used three approaches to examine cancer treatment disparities. One approach uses hospital data to examine treatment disparities,6,7 but samples from selected hospitals tend to be small and the results are not generalizable. The second approach uses an enlarged sample, linking Surveillance Epidemiology and End Results (SEER) and Medicare datasets. Studies using SEER–Medicare linked datasets are able to identify detailed chemotherapy (CT) and radiation therapy (RT) treatment among those aged 65 years or older,8–12 but those not eligible for Medicare are not included. The third approach uses surveys to supplement cancer registry information. 13 However, this method is costly, which makes it economically unfeasible for surveillance purposes that require updated CT or RT information for all cancer sites. In addition, survey data may be less complete than registry data, as many physicians would not respond to a survey.

Previous studies have linked a statewide cancer registry with hospital discharge data for cancer case ascertainment 14 and for uncovering hard-to-find racial categories.15,16 Polednak 17 linked Connecticut (a SEER state) cancer registry data to hospital discharge data and expanded the linkage population to all ages. However, his purpose was to examine postmastectomy breast reconstructive surgery in the discharge data rather than to update cancer treatment in the cancer registry. To our knowledge, a data linkage strategy has not been used to identify cancer treatment information, particularly outpatient treatment. Although the SEER database has been linked to Medicare claims data to obtain treatment and treatment cost information, there is a need to study treatment patterns for non-SEER states and non-Medicare patients.

In this study, we conducted cancer treatment surveillance by linking the Nebraska Cancer Registry (NCR) with Nebraska hospital discharge data (NHDD). The intent was to develop a protocol for linking cancer registry data and hospital discharge data and to assess its feasibility and utility. In Nebraska, almost 100 percent of RT is hospital based, and an overwhelming majority of CT is administered in outpatient settings. Similar to linked SEER–Medicare data, linked NCR data and NHDD will result in a population-based data source that will include both Medicare and non-Medicare patients. In addition, the linked dataset can provide information on non-cancer-related diagnoses, detailed cancer-related treatments, and the cost of care and can therefore be used for treatment surveillance, clinical epidemiology, and health services research.

Method

NCR data

Since 1995, the NCR has continuously received the North American Association of Central Cancer Registries’ gold standard awards for quality, completeness, and timeliness. The NCR includes data on cancer patients who reside in Nebraska and/or who are diagnosed or treated for cancer in Nebraska; patient-identifiable information, such as patient ID, name, social security number, and street address; and standard data items, such as patient demographics, tumor site, first-course treatment, and treatment facility ID. Although the majority of cancer cases are registered shortly after diagnosis, case finding is an ongoing process, and it takes up to 2 years after diagnosis to get all cancer cases into the system. For this reason, we selected data from 2005 to 2009, which assured complete case ascertainment.

NHDD

NHDD are maintained by the Nebraska Hospital Association (NHA) and are based on the standard UB-04 form. For the 2005–2009 study period, we had over 13 million hospital records. NHDD include patient-identifiable information, disposition information, demographic variables, diagnostic codes, and procedure codes for all inpatient and outpatient visits to Nebraska’s 87 nonmilitary hospitals. The identifiable information in the NHDD file includes patient ID, patient name, address, and hospital ID. Hospital visits include outpatient visits for such things as CT, RT, emergency room care, and rehabilitation care. Current procedural terminology (CPT) codes used for insurance billing purposes can be retrieved to identify different treatment categories. For the purpose of augmenting outpatient treatment information, we requested CPT codes that directly indicated RT or CT. For CT procedures, the CPT codes include 96400–96549, C8953–C9415, and G0355/G0359; for RT procedures, the CPT codes include 70010–79999. 18
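As a minimal sketch of how the outpatient CPT ranges listed above could be applied, the Python snippet below tags a single procedure code as CT, RT, or neither. The function name `classify_cpt` is hypothetical and not part of the study protocol, and `G0355/G0359` is read here as two individual codes, which is an assumption.

```python
def classify_cpt(code: str) -> str:
    """Return 'CT', 'RT', or 'other' for one outpatient procedure code.

    Ranges follow the text: CT = 96400-96549, C8953-C9415, G0355, G0359;
    RT = 70010-79999. Treating G0355/G0359 as two codes is an assumption.
    """
    code = code.strip().upper()
    if len(code) != 5:
        return "other"
    if code.isdigit():
        number = int(code)
        if 96400 <= number <= 96549:
            return "CT"
        if 70010 <= number <= 79999:
            return "RT"
        return "other"
    # Alphanumeric codes: one letter followed by four digits.
    prefix, digits = code[0], code[1:]
    if not digits.isdigit():
        return "other"
    if prefix == "C" and 8953 <= int(digits) <= 9415:
        return "CT"
    if code in {"G0355", "G0359"}:
        return "CT"
    return "other"
```

A visit would then count as an RT or CT visit if any of its billed codes maps to that category.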

Inpatient procedures with an International Classification of Diseases, Ninth Revision (ICD-9) code indicating cancer treatment were requested, but specific RT or CT billing codes were not requested, because bundled diagnosis-related group (DRG) codes cannot uniquely differentiate CT and RT. For this reason, we searched only the ICD-9 procedure codes up to the sixth procedure and coded CT and RT procedures as far as possible. Specifically, we used ICD-9 procedure codes “99.25,” “17.70,” “94.25,” “94.22,” and “99.28” to capture CT-related procedures and the codes from 99.21 to 99.29 to capture RT-related procedures. 19

Linkage strategy

We considered deterministic and probabilistic linkage methods and chose the probabilistic method because the two datasets lacked a shared unique identifier (e.g. social security number). We designed the linkage process according to Newcombe’s four steps: 20 (1) data preparation, (2) matching and merging, (3) manual review, and (4) verification, and used Link Plus 2.0 for data linkage. 21 All these steps were conducted onsite at the NHA. The resultant file for the analysis had no name, address, or zip code identifiers. The Institutional Review Board (IRB) of the University of Nebraska Medical Center approved this study design with exempt status.
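The general idea behind probabilistic linkage with blocking can be sketched as follows. This is a generic Fellegi-Sunter-style illustration, not a reproduction of Link Plus internals: the field names, the m/u probabilities, the blocking key, and the cutoff value are all hypothetical.

```python
import math
from collections import defaultdict

# Hypothetical (m, u) probabilities per field:
# m = P(field agrees | true match), u = P(field agrees | non-match).
# These are illustrative values, NOT Link Plus defaults.
M_U = {
    "first_name": (0.95, 0.01),
    "last_name": (0.95, 0.005),
    "sex": (0.99, 0.5),
}

def pair_weight(a, b):
    """Sum log2 agreement or disagreement weights over the matching fields."""
    total = 0.0
    for field, (m, u) in M_U.items():
        if a[field] == b[field]:
            total += math.log2(m / u)
        else:
            total += math.log2((1 - m) / (1 - u))
    return total

def link(registry, discharge, block_key="dob", cutoff=5.0):
    """Compare only records sharing the blocking key; keep pairs above the cutoff."""
    blocks = defaultdict(list)
    for rec in discharge:
        blocks[rec[block_key]].append(rec)
    pairs = []
    for reg in registry:
        for dis in blocks.get(reg[block_key], []):
            weight = pair_weight(reg, dis)
            if weight > cutoff:
                pairs.append((reg["id"], dis["id"], round(weight, 2)))
    return pairs
```

Blocking keeps the number of comparisons manageable for a file with millions of records, while the summed weight ranks candidate pairs so that those above the cutoff can be passed to manual review.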

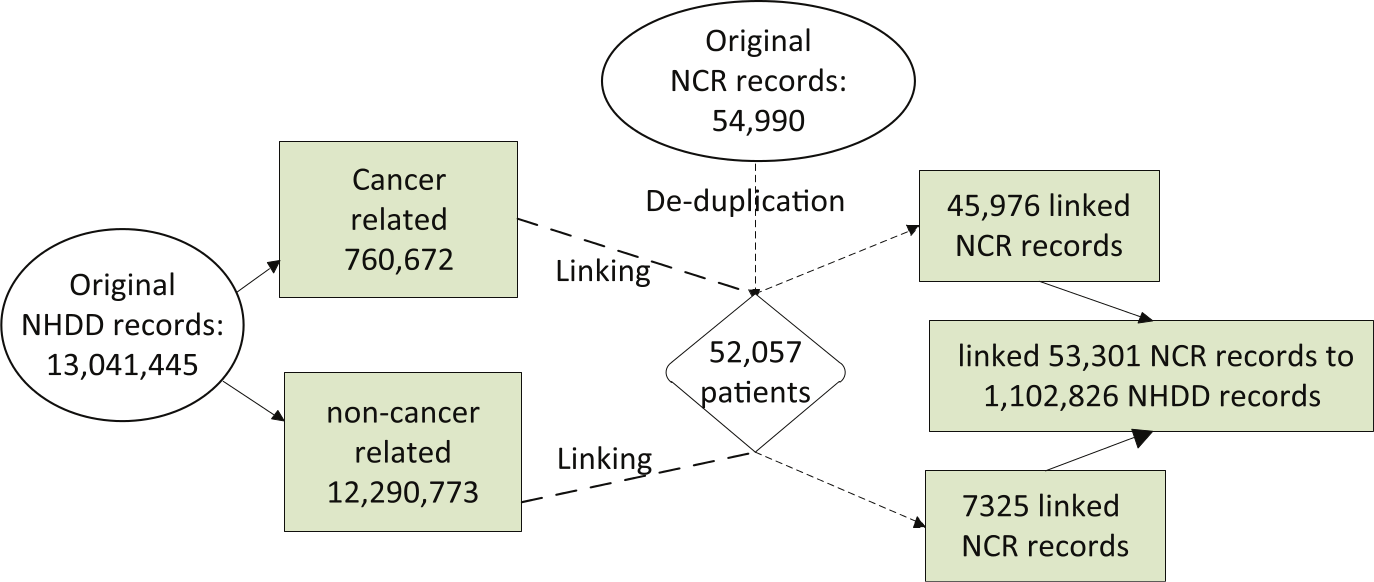

1. Data preparation. Data preparation included checking data quality, de-duplication, and parsing and standardization of the linkage variables common to both datasets. The process started with the NCR data for 1 January 2005 to 31 December 2009, with 54,990 records (Figure 1, top circle), which included both in-state and out-of-state patients. The de-duplication process was based on all linkage variables: first name, last name, date of birth, sex, resident county, resident zip code, and primary cancer site. Less than 0.1 percent of registry cases had missing linkage variables. This step resulted in 52,027 unique patients (Figure 1, diamond box).

Figure 1. Flowchart for linking NCR and NHDD records.

Since the 5-year NHDD file was extremely large, we divided it into cancer-related and non-cancer-related datasets to increase computational efficiency (Figure 1, left circle). For each record, we searched the International Classification of Diseases, Ninth Revision, Clinical Modification (ICD-9-CM) diagnostic codes up to the 10th diagnosis. 14 If any of them was in the range of 140–208 or was equal to 2386, we classified the record as cancer related. 19
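The cancer-related screen described above can be sketched in a few lines of Python. The function name `is_cancer_related` is hypothetical, and the snippet assumes that diagnosis codes arrive as strings (with or without a decimal point) whose first three digits give the ICD-9-CM category.

```python
def is_cancer_related(diagnosis_codes):
    """True if any of the first 10 diagnosis codes is in 140-208 or equals 2386.

    Assumes codes are strings such as "1749" or "174.9"; this storage
    format is an assumption, not something stated in the text.
    """
    for raw in diagnosis_codes[:10]:
        code = raw.replace(".", "").strip()
        if code == "2386":
            return True
        category = code[:3]
        if len(category) == 3 and category.isdigit() and 140 <= int(category) <= 208:
            return True
    return False
```

Records for which the function returns True would go to the cancer-related dataset and the rest to the non-cancer-related dataset.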

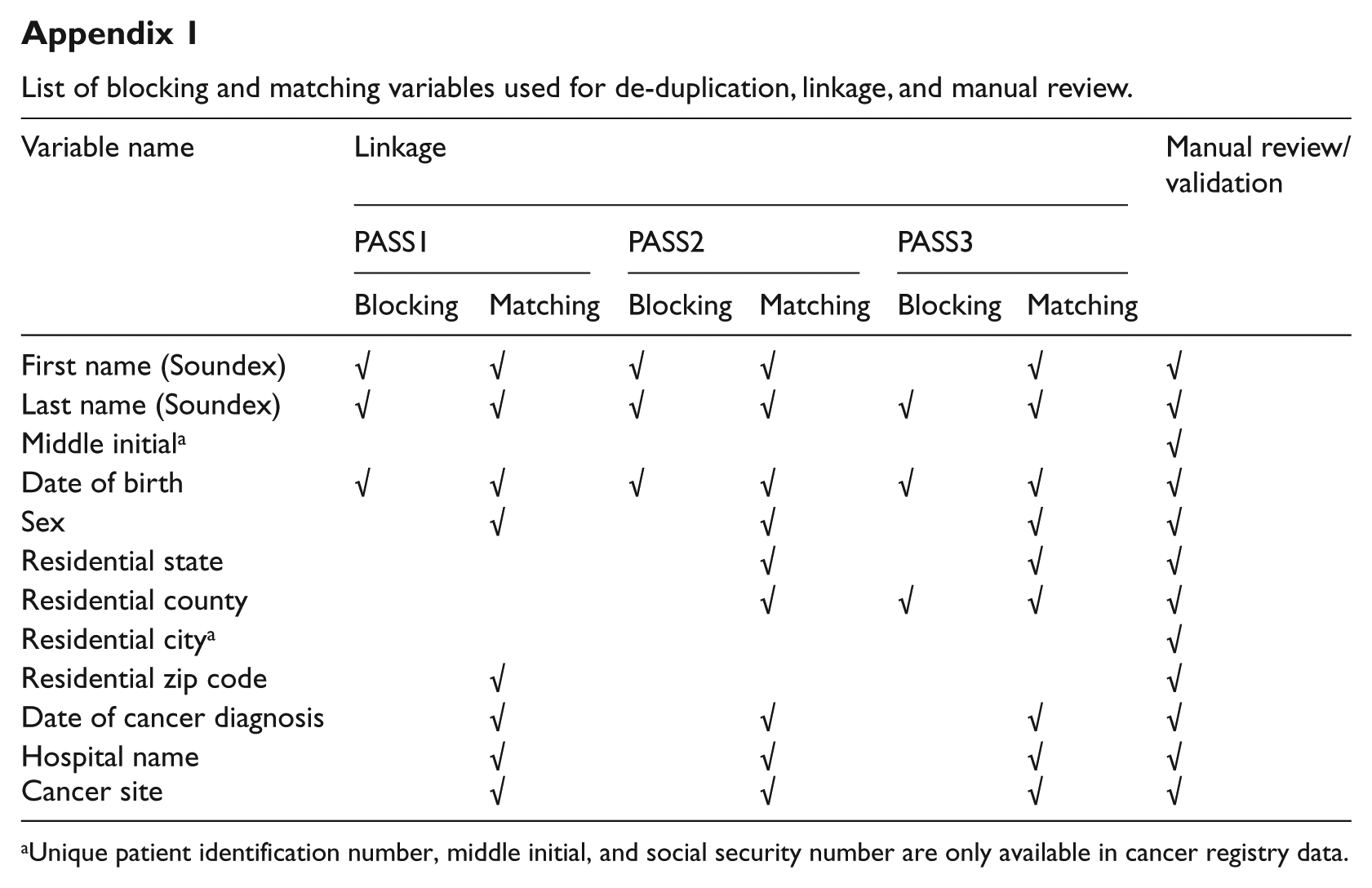

2. Matching. The cancer-related and non-cancer-related datasets were the basis for partitioning the NHDD file into mutually exclusive blocks and comparing only records within each block during the linkage process (Appendix 1). When a linkage is complete, Link Plus calculates a score (total linkage weight) for all comparisons of a case in the NCR file with a case in the NHDD file and generates a default cutoff weight above which values are considered as potential matches.21,22

3. Manual review. All potential matched pairs above the cutoff weight were reviewed manually after each linkage run. We also considered a pair as a true match if there was a minor discrepancy, such as an obvious typo in the matching variables; transposed first and last names; either the first name, the last name, or the middle initial matched with another unmatched name; variants of a first name that the Soundex did not catch; a hyphenated first name or last name; transposed birth month and day; and closeness of the date of cancer diagnosis and the date of hospital admission. Based on these criteria, two master’s-level statisticians with experience in linking different datasets carried out the review process on a rotating basis. After manual review, we had 53,301 NCR records that corresponded to 1,102,826 NHDD records (Figure 1, leftmost box).

4. Validation. To validate the potential matches among the 54,990 records, we randomly selected 400 linkage pairs for an independent manual review, because a random sample of 381 records would be sufficient at the 95 percent confidence level. Three master’s-level trained public health data analysts from the Nebraska Department of Health & Human Services (NE-DHHS) carried out the independent validation separately based on the 400 pairs and 12 variables (Appendix 1).
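The sample size quoted in the validation step can be approximated with the standard formula for estimating a proportion with a finite population correction. The sketch below assumes a 5 percent margin of error and maximum variability (p = 0.5); neither value is stated in the text, and the function name is hypothetical.

```python
def required_sample_size(population, z=1.96, margin=0.05, p=0.5):
    """Cochran sample size with finite population correction.

    z=1.96 corresponds to a 95 percent confidence level; margin and p
    are assumed values, not taken from the study.
    """
    n0 = (z ** 2) * p * (1 - p) / margin ** 2   # infinite-population size
    return n0 / (1 + (n0 - 1) / population)     # finite population correction

n = required_sample_size(54_990)
print(f"{n:.1f}")
```

Under these assumptions the result falls between 381 and 382, which is in line with the 381 records cited and comfortably below the 400 pairs actually reviewed.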

Treatment augmentation strategy

We focused on RT treatment updates for colorectal cancer (CRC) and breast cancer. We chose all CRC records as a general case study, because substantial rural and urban variation has been reported. 23 We selected American Joint Committee on Cancer (AJCC) stage II and III breast cancer patients as a specific case study, because the National Comprehensive Cancer Network recommends adjuvant RT for all those patients. 24

In order to update RT for a cancer patient, two conditions must be met: (1) the RT is for the diagnosed cancer site and (2) the linked hospital stays or visits occur after the site-specific diagnostic date. For condition (1), we selected patients whose only primary site was either breast cancer or CRC. For condition (2), we used the date of diagnosis to exclude all outpatient visits prior to the diagnosis date. Although no service dates were missing from the NHDD file, about 2.5 percent of diagnostic dates were missing from the NCR file. To preserve usable records with missing months or days, we designed an algorithm that deletes records hierarchically. When the diagnosis and treatment years were the same, records with missing months were deleted; when the diagnosis and treatment months and years were the same, records with missing calendar days were deleted. A total of 288 NCR records were deleted from the 53,301 linked records in this process.
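The hierarchical rule for partially missing diagnosis dates can be sketched as a small Python function. This is an illustration of the logic described above rather than the authors' code; the function name, the use of `None` for a missing month or day, and the three-valued return are assumptions.

```python
from datetime import date
from typing import Optional

def visit_after_diagnosis(dx_year: int, dx_month: Optional[int],
                          dx_day: Optional[int], service: date) -> Optional[bool]:
    """Decide whether a visit falls on or after the diagnosis date.

    Returns True (keep the visit), False (visit precedes diagnosis), or
    None (undecidable because a needed date part is missing: delete).
    """
    # Different years: the year alone settles the order.
    if dx_year != service.year:
        return service.year > dx_year
    # Same year: the month is needed.
    if dx_month is None:
        return None
    if dx_month != service.month:
        return service.month > dx_month
    # Same year and month: the day is needed.
    if dx_day is None:
        return None
    return service.day >= dx_day
```

A record with a missing month is therefore still usable whenever the visit year differs from the diagnosis year, which is how the rule preserves records that a blanket deletion of incomplete dates would lose.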

Results

Linkage quality

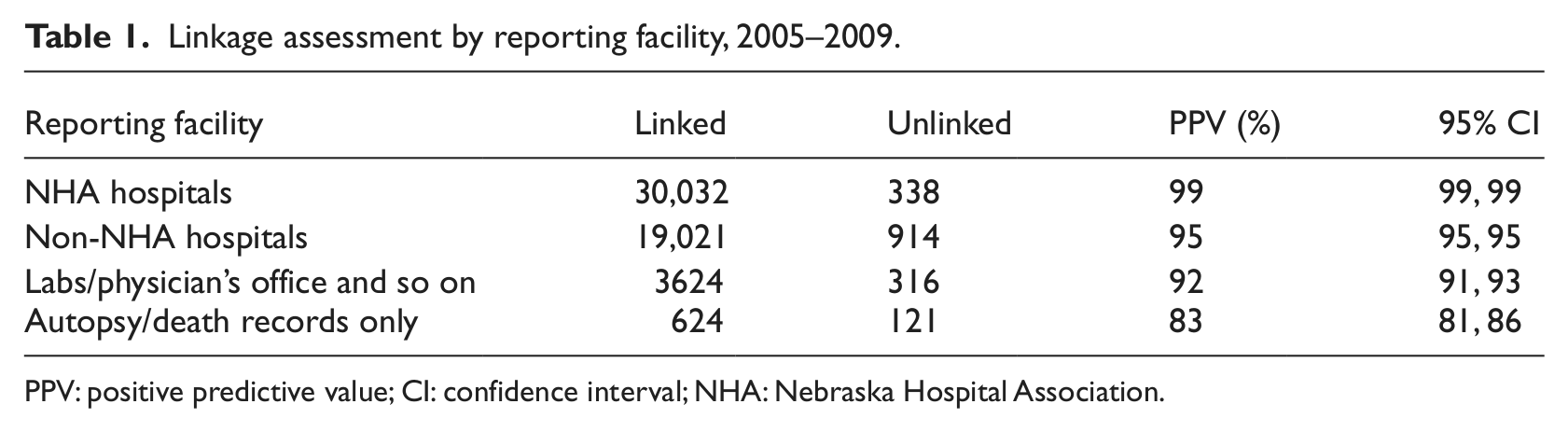

Of the 54,990 NCR records, 53,301 were linked to NHDD records with a crude linkage rate of 97 percent. The linkage rate varied by reporting sources (Table 1). The highest linkage rate, 99 percent, was from the NHA reporting hospitals. Cancer cases reported through autopsy or death certificate had a linkage rate of 83 percent. Manual reviews by three judges yielded 3, 1, and 0 false positives, respectively, resulting in an average false positive rate of 0.3 percent. These reviews as well as the results presented in Table 1 suggested that the linkage yielded very high-quality data.
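Both headline figures in this paragraph follow from simple ratios. As a worked check, assuming the false positive rate is averaged over the three reviewers' 400-pair samples:

$$
\text{crude linkage rate} = \frac{53{,}301}{54{,}990} \approx 0.969 \approx 97\%,
\qquad
\text{average false positive rate} = \frac{3 + 1 + 0}{3 \times 400} = \frac{4}{1200} \approx 0.3\%.
$$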

Table 1. Linkage assessment by reporting facility, 2005–2009.

PPV: positive predictive value; CI: confidence interval; NHA: Nebraska Hospital Association.

Potential to aid case finding

Although the main purpose of this study was to improve treatment information, identifying potentially missed cases is also important for the NCR. In the NHDD cancer-related dataset (i.e. with a cancer-related ICD-9 code), we found 19,907 person-specific records with a primary diagnosis of cancer that were not in the NCR. We put these records in the potential NCR cancer case file (PNCCF). Since person-specific records included multiple visits, primarily for outpatient care, we selected a unique record for each person in the PNCCF, and this process yielded 4270 unique patient-based records. We also deleted suspected out-of-state patients who might have sought continued outpatient care in Nebraska while traveling. We selected all out-of-state outpatients and deleted those with only one RT or CT visit, which resulted in 3593 records not captured by the NCR 2005–2009 incidence file.

However, some of the 3593 NHA records could belong to patients diagnosed prior to 2005, whose NCR records were not included in the linkage process above. We linked these records with the NCR file from 1990 to 2005 by the deterministic data linkage method, using first name, last name, sex, and birth date. This process yielded 934 patients from the early NCR file who continued their cancer-related RT after 2005. After removing these records, we had 2659 records to be further assessed for case finding.
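The deterministic step described above amounts to an exact match on a composite key. A minimal sketch, assuming records are dictionaries with the hypothetical field names shown and that light normalization (trimming and upper-casing) is acceptable before comparison:

```python
def deterministic_key(rec):
    """Exact-match key built from first name, last name, sex, and birth date."""
    return (
        rec["first_name"].strip().upper(),
        rec["last_name"].strip().upper(),
        rec["sex"].strip().upper(),
        rec["birth_date"],
    )

def split_by_prior_registry(candidates, prior_registry):
    """Separate candidates already present in the earlier registry file."""
    known = {deterministic_key(r) for r in prior_registry}
    matched = [c for c in candidates if deterministic_key(c) in known]
    unmatched = [c for c in candidates if deterministic_key(c) not in known]
    return matched, unmatched

# Counts reported in the text: 3593 candidates, 934 found in the earlier file.
remaining_for_review = 3593 - 934
```

Unlike the probabilistic pass, this approach tolerates no spelling variation, so it is quick to run but may leave some earlier patients among the unmatched records.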

Potential to improve radiation treatment information in the NCR

As mentioned earlier, we used RT for CRC as a general case study that included all linked CRC patients. Based on the method that placed a set of RT visits after the corresponding CRC diagnostic date, we had 2150 CRC patients in the linked NCR–NHDD file. Of those, 750 records in the NCR file showed CT or RT as the first course of treatment and 90 records were identified from outpatient CPT codes indicating receipt of RT after cancer diagnosis. These findings represented an improvement of 12 percent over the information captured by the NCR file. To assess rural–urban difference in CRC treatment, we divided the 90 newly found cases into metropolitan and nonmetropolitan counties. Although 60 percent of Nebraskans live in metropolitan counties, 60 percent of the augmented RT patients were from nonmetropolitan areas. This preliminary result suggested that missing treatment records in the NCR were more likely to be for rural patients than for urban patients. Although 12 percent of additional records may not seem like a large number, those records raised the overall RT treatment rate in Nebraska from 34.9 percent to 39.1 percent.
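The percentages in this paragraph can be reproduced from the three counts given (2150 linked CRC patients, 750 with treatment recorded in the NCR, and 90 newly identified from outpatient CPT codes):

$$
\frac{90}{750} = 12\%,
\qquad
\frac{750}{2150} \approx 34.9\%,
\qquad
\frac{750 + 90}{2150} = \frac{840}{2150} \approx 39.1\%.
$$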

Potential to improve radiation treatment information for public health

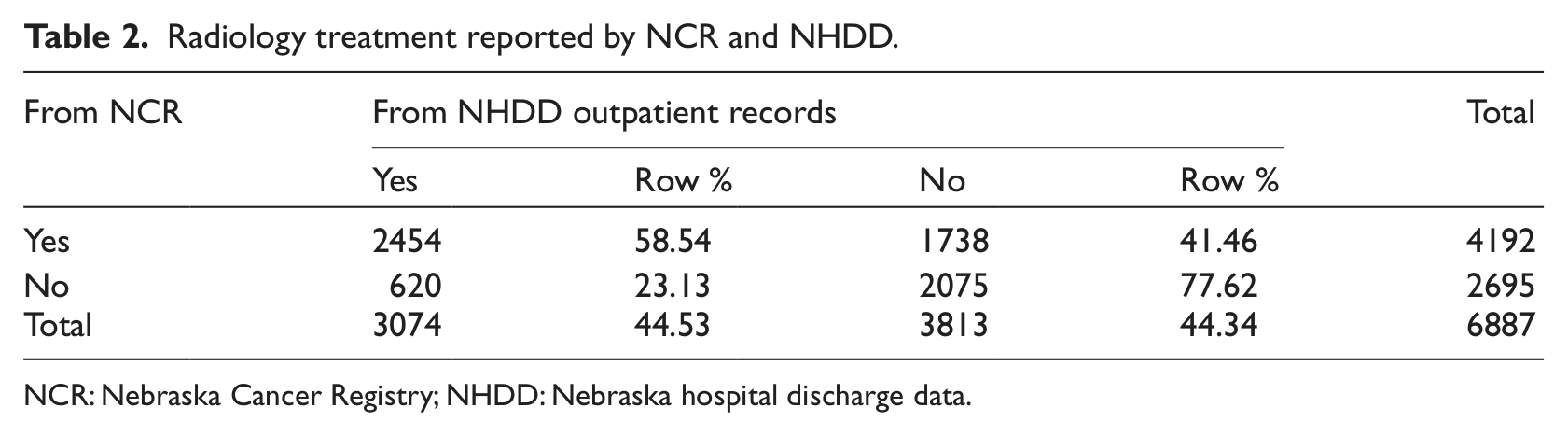

In our analysis of CRC treatment, we did not know how many cancer patients would need RT, and therefore, we could not assess whether the 12 percent additional RT records were adequate for updating CRC RT records. To investigate further, we identified a total of 6887 AJCC stage II and III breast cancer patients, who were expected to have RT (Table 2). Of the total, 2454 had RT confirmed by both the NCR and the NHDD, and 1738 were reported to have received RT by the NCR, but records for these patients were not found in the outpatient CPT codes. Of the 2695 patients not reported to have received RT by the NCR, 620 (23.0%) were found in the NHDD file. These 620 records represent a 14.8 percent improvement over the 4192 records initially shown to have received RT. After the update, a total of 69.9 percent of patients had verifiable RT.
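The breast cancer figures form a two-by-two cross-tabulation of RT status in the NCR against RT status in the NHDD. The short Python sketch below rebuilds the derived percentages from the counts in the text; the variable names are illustrative only.

```python
# Counts reported for AJCC stage II and III breast cancer patients.
both = 2454                    # RT in the NCR and confirmed in the NHDD
ncr_only = 1738                # RT in the NCR, no outpatient CPT record found
nhdd_only = 620                # RT found only in the NHDD
neither = 2695 - nhdd_only     # no RT recorded in either source

total = both + ncr_only + nhdd_only + neither
rt_ncr = both + ncr_only       # RT initially shown by the NCR
rt_any = rt_ncr + nhdd_only    # RT after the NHDD update

relative_gain = nhdd_only / rt_ncr   # improvement over NCR-only reporting
rate_before = rt_ncr / total         # RT rate before the update
rate_after = rt_any / total          # RT rate after the update

print(f"{relative_gain:.1%} {rate_before:.1%} {rate_after:.1%}")
```

The three printed values correspond to the 14.8 percent improvement and to the RT rates before and after the update.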

Table 2. Radiation treatment reported by NCR and NHDD.

NCR: Nebraska Cancer Registry; NHDD: Nebraska hospital discharge data.

Discussion

In this study, we linked NCR and NHDD data for 2005–2009. Our linkage was population based and included all age groups and all types of cancer. We used Link Plus 2.0 and followed an established procedure of data preparation, matching, manual review, and independent validation. Because the quality of both datasets was high and because we adhered to the protocol, the linkage rate was 97 percent overall. Only NCR records from autopsy or death certificates had a low linkage rate (83%), which was expected, as some individuals who died had not gone to a hospital.

Linking hospital discharge data with registry data has emerged as a major source of diagnosis and treatment procedure information.25,26 This study piloted three applications: population-based case finding, cancer site-specific treatment information enhancement, and site- and stage-specific treatment information enhancement. Our experience suggests that population-based case finding via potential false negatives (i.e. records with a cancer diagnosis in the NHDD file but not in the NCR) will require further study of a new definition of cancer cases. We identified 2659 Nebraska patients in the NHDD who had some indication of cancer treatment but who were not in the NCR file. They represented about 5 percent of the total cases from 2005 to 2009. One area that needs further clarification is outpatient care of out-of-state patients. If these patients’ primary diagnosis and treatment took place outside the state of Nebraska, and their postsurgery CT or RT outpatient care took place in Nebraska, hospital staff may be less diligent about reporting their care in the NCR than they are about reporting the care of Nebraska citizens.

Our effort to identify additional RT cases for CRC and breast cancer patients resulted in modest 12 percent and 15 percent improvements. Since all stage II and III breast cancer patients were expected to have RT, we focused on their assessment. After the update, the RT rate increased from 61 percent to 70 percent, which still left about 30 percent of the records unverified for RT. Although the increase in the treatment rate seems modest, it is consistent with a previous study that compared cancer registry data with a chart review for the patients of PacifiCare of California, which showed that the RT rate increased from 30.6 percent to 41.1 percent. 27 In addition, even though the 70 percent RT rate seems low, it was only about 8 percent below that of a chart review–based study from the AVON Center for Breast Care in Atlanta, where all breast cancer patients were below the age of 72 years and had breast-conserving surgery. 28 Since our study had no age or surgery restrictions, the lower percentage was expected. Finally, the study period covers the adoption of the hospital-based quality improvement (QI) standard for stage I, II, and III breast cancer that was endorsed by the National Quality Forum (NQF) in 2007. A recent study showed that the RT rate was not very high (76%) in the 2005–2006 period and increased to 96 percent after 2007. 29

There are several additional reasons for missing RT data in this linkage project. First, in order to ensure that outpatient RT care was cancer specific, we placed outpatient service dates for RT after cancer diagnosis. However, some dates are missing in NCR, and we might have missed a small number of cancer treatments due to date discrepancies. Second, some RT may have been coded with other outpatient procedures that we were unable to straightforwardly select. Third, we assumed that hospital-based RT treatment was captured primarily by the NCR or from the inpatient data file, as it is primarily a hospital-based registry. However, some first-course RT might have been missed by the NCR and the bundled DRG codes from the NHDD cannot uniquely identify RT. Although it is possible to uncover specific procedure codes, for both inpatient and outpatient care, doing so requires different protocols to govern the data request. Since our linkage protocol was designed to capture primarily outpatient cancer care, future studies should design a protocol that includes both inpatient and outpatient cancer treatment codes. In addition, some patients who had surgery in Nebraska hospitals may have received CT and RT out-of-state, which would not have been captured within the NHDD. Finally, we did not have a validation sample, and therefore, we were unable to tell whether the NHDD-linked file captured all RT cases. We selected all stage II and III breast cancer patients, and all of them were supposed to have had RT. However, some patients may not have followed the prescribed treatment. Although we expected that more than 70 percent of these patients would have received RT, expecting a 100 percent RT rate might be unrealistic.

A major caveat that cannot be addressed under our cancer treatment surveillance protocol is lack of hospital-based validation. In order to carry out quality indicator improvement, many hospitals started to review cancer treatments.27,28 If we could incorporate one or two hospital-based chart review processes, we could then validate our linkage and identify more detailed protocols for both inpatient- and outpatient-based billings that not only accurately reflect various RT and CT billings but also preserve confidentiality and integrity of hospital discharge data.

The limitations described above provide avenues for future studies. First, our study provided an empirical basis for further improvement of linkage protocols that may broaden the inclusion of CPT and other bundled procedure codes. Second, multiple and repeated procedures reinforced the need for improvement in diagnosis month and date completeness in the NCR. As information networks are connected throughout the state, it will be possible to develop a real-time treatment update information system, so that missed information could be checked in real time or close to real time.

In addition to the above cancer registry–specific data quality enhancement, the linked NCR–NHDD database provides access to additional information about comorbidity and service charges. For example, we can use primary to tertiary diagnosis codes to develop comorbidity indices. Since non-cancer-related hospital visits are also linked, postsurgery health service needs can be examined. Moreover, linked cancer treatment and other information will likely become a valuable asset for public health practitioners and researchers. 30 Examples of possible uses of such linked data include surveillance of treatment disparity, health outcome comparison of different treatment regimens, and population-based health policy development.

Appendix

List of blocking and matching variables used for de-duplication, linkage, and manual review.

| Variable name | Pass 1: Blocking | Pass 1: Matching | Pass 2: Blocking | Pass 2: Matching | Pass 3: Blocking | Pass 3: Matching | Manual review/validation |
|---|---|---|---|---|---|---|---|
| First name (Soundex) | √ | √ | √ | √ | √ | √ | |
| Last name (Soundex) | √ | √ | √ | √ | √ | √ | √ |
| Middle initial (a) | √ | | | | | | |
| Date of birth | √ | √ | √ | √ | √ | √ | √ |
| Sex | √ | √ | √ | √ | | | |
| Residential state | √ | √ | √ | | | | |
| Residential county | √ | √ | √ | √ | | | |
| Residential city (a) | √ | | | | | | |
| Residential zip code | √ | √ | | | | | |
| Date of cancer diagnosis | √ | √ | √ | √ | | | |
| Hospital name | √ | √ | √ | √ | | | |
| Cancer site | √ | √ | √ | √ | | | |

(a) Unique patient identification number, middle initial, and social security number are only available in cancer registry data.

Acknowledgements

The authors thank Y Chen, C Liao, and J Qin for their assistance with a random review process, Sue Nardie for editorial support, and Kevin Conway for preparing hospital discharge data.

Funding

This project was funded in part by the Centers for Disease Control and Prevention (CDC) grants for Strengthening Public Health Infrastructure for Improved Health Outcomes (5U58CD001310-02) and for the Nebraska Cancer Registry (5U58DP000811).