Abstract

Trauma centers manage an active Trauma Registry from which research, quality improvement, and epidemiologic information is extracted to ensure optimal care of the trauma patient. We evaluated the accuracy of diagnosis coding with the Relational Trauma Scoring System™ relative to the standard retrospective chart-based format. Charts from 150 patients admitted to a level I trauma service were abstracted using standard methods. These charts were then randomized and abstracted by trauma nurse clinicians with the aid of coding software. For charts scored pre-training, percent correct for the trauma nurse clinicians ranged from 52 to 64 percent, while the registrars scored 51 percent correct. After training, percent correct for the trauma nurse clinicians increased to a range of 80–86 percent. Our research has demonstrated implementable changes that can significantly increase the accuracy of data from trauma centers.

Background

Trauma centers verified by the American College of Surgeons Committee on Trauma (ACS-COT) are mandated to manage an active Trauma Registry (TR) from which research, quality improvement, and epidemiologic information can be extracted. 1 These local data are subsequently submitted to the National Trauma Data Bank® (NTDB®), the largest data collection bank for trauma research in the United States, with the primary intention of improving the quality of care in trauma centers nationwide. In addition to improving quality, the NTDB has become a source for dissemination of research findings and treatment algorithms. 2 Trauma coding is commonly based upon International Classification of Diseases, Ninth Revision (ICD-9) diagnosis codes as well as the Abbreviated Injury Scale (AIS). The ICD-9 Injury Severity Score (ICISS) has been shown to forecast trauma outcomes better than the Injury Severity Score (ISS) derived from the AIS, the long-time gold standard, 3 but it has also been criticized for multiple sources of potential error affecting the accuracy of a diagnosis (e.g. clinicians, disease type, current state of medical knowledge, and technology). 4 Potential sources of error include incorrect coding, subjective charting, and difficulty in interpreting physician data, leading to inconsistencies within the final submitted codes. 4

Data from the TR are entirely dependent upon the accuracy and completeness of the information entered, as inaccurate information on the specific nature and severity of injury has the potential to profoundly skew outcomes calculations. Additionally, the validity of this information has important implications beyond the originating institutions, as these data are reported to regional, state, and national agencies and organizations. Previous authors have demonstrated significant room for improvement due to variability in collection methods within and among institutions. 4–6 Because this information can affect the care of patients on a variety of levels, and because reliance on multiple layers of human interpretation during data acquisition and entry allows error to propagate and compound at each level, the validity of these pooled results must ultimately be called into question.

Studies in national and international settings demonstrate wide variation in charting accuracy using ICD-9, ranging from 20 to 84 percent. 4–7 In Australia, Curtis et al. reported a 74 percent error rate after a retrospective review of 100 trauma patient admissions, which resulted in identification of over US$39,000 in additional funding. The authors attributed the high error rate to the complex nature of trauma patients, extensive patient records, and poor documentation. 7 Smith found a 9 percent discordance in discharge diagnoses at their center in a prospective study of 174 re-coded and randomized charts, with errors examined to the third-digit level of ICD-9 coding. 8 It was then surmised that this error rate could be decreased by physician coding education. 9 Campbell et al.’s 10 2001 review of discharge coding accuracy in England found a median ICD-9 discharge diagnosis accuracy of 77 percent across 21 previous studies of hospital discharge codes. A group from France examined the consistency between charts coded by two users, one being the treating physician and the other an offsite physician. 5 They found high variability between coders, who matched on the primary diagnosis only 34 percent of the time.

Although problems with coding accuracy are apparent from the literature in other settings, coding accuracy has not been studied specifically in the setting of trauma. 4–6 We suspected that the coding or abstracting process at our institution could be made more consistent among our trauma coders, as an inordinate number of injuries were coded in the fourth and fifth digits as “not otherwise specified” in the final ICD-9 codes submitted, despite the availability of definitive information. Such nonspecific coding significantly alters the estimated severity. For example, the fourth and fifth digits determine whether fractures are open or closed, the length of loss of consciousness for traumatic brain injury, and whether a spinal cord injury is complete or incomplete. Furthermore, the coding and abstracting process is subject to multiple levels of possible human error prior to submission, beginning with physician documentation, including admission documentation and daily progress notes. The trauma registrar, a registered nurse, and certified specialist in trauma registries (CSTR) review these data and assign the most appropriate diagnosis codes based upon the available information. As much of the interpretative process is dependent on the registrars, variations in levels of education and clinical experience may contribute to variations in the data and selected codes. Therefore, in an attempt to improve performance and quality efforts within our institution, we hypothesized that utilizing trauma nurse clinicians (TNCs), who have more detailed clinical knowledge and who had received supplementary training in a coding and trauma scoring software system, would increase the accuracy and consistency of coded chart data as compared to the traditional registrar coding process.

Methods

The study was approved by the hospital’s institutional review board. A total of 150 patient charts were selected that had been abstracted, scored, coded, and entered into the hospital’s TR between June 2010 and June 2011. The selected charts had previously been chosen for review during an ACS-COT re-verification visit and had been abstracted and coded using a combination of HIM coding software, the TR coding system, and/or a manual look-up process, based on registrar preferences. Codes included specific injuries, co-existing disease states, and complications. Data for these charts were collected by both TNCs and registrars from the patient medical record during the hospitalization. The registrars’ trauma-specific coding experience averaged 7.7 years (range, 7–8 years). Each registrar has completed courses in medical terminology, anatomy and physiology, and AIS coding, with two of the three holding current AIS certification. This meets and/or exceeds the requirements for registrars as outlined in the Resources for Optimal Care of the Injured Patient. 1

The Relational Trauma Scoring System™ (RTSS™) is a web-based injury coding software application that allows trauma registrars and TNCs to transform trauma scoring systems (e.g. ICD-9, AIS, and ISS) into trauma codes to be submitted to the NTDB. The application included a total of 12 h of training sessions, which acted as the intervention. Instead of using registrar decision-based chart coding, the RTSS uses a prospective process to develop codes in a relational fashion. Three TNCs were selected to participate in the study. The TNCs first completed a 2-h training session that primarily covered logistical use of the program. Following this training, the TNCs were randomly assigned 75 charts to review and code using a secure web-based link to the software program. After completing the 75 charts, the TNCs participated in an instructor-led 6-week course that met for 2 h each week. This course included review of ICD-9 diagnosis coding, anatomy and physiology, chart analysis and interpretation, as well as comprehensive training on the structural methodology used by the software. After completing the training, the TNCs were randomly assigned a different set of 75 charts. Due to an error in data migration, data from 20 of the randomized charts of a single user were expunged. This was accounted for by excluding the corresponding charts coded by the other users.

In order to provide an objective decision on chart consistency, an outside coding arbiter was employed. The outside expert was an experienced nurse registrar holding both CSTR and AIS certifications, with 9 years of experience. Using a manual look-up process, the outside coding expert evaluated each chart coded by the TNCs as well as the registrars to determine whether the ICD-9 diagnoses made were correct, incorrect, lacked specificity, or were over-coded. A correct diagnosis meant that the outside expert agreed completely with the diagnosis made. An incorrect diagnosis meant that (1) the outside expert disagreed with the diagnosis and (2) the diagnosis did not fall into the categories for lacking specificity or over-coding. Lack of specificity was determined when the interpretation or coding lacked detail supported by the documentation, although the parent code category or overall concept was correct. Over-coding was determined when the interpretation, parent code, or category was correct, but the final code made reference to additional details not supported by the documentation.
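The four grades assigned by the arbiter lend themselves to a simple tally of counts and percentages per coder. The following is a minimal sketch of such a summary; the `Grade` enum, the `summarize` function, and the sample grades are hypothetical illustrations, not study data and not part of the RTSS software.

```python
from collections import Counter
from enum import Enum


class Grade(Enum):
    """Arbiter grades as described in the text (names are illustrative)."""
    CORRECT = "correct"
    INCORRECT = "incorrect"
    LACKS_SPECIFICITY = "lacks specificity"
    OVER_CODED = "over-coded"


def summarize(grades):
    """Return {Grade: (count, percent)} for one coder's graded codes."""
    counts = Counter(grades)
    total = len(grades)
    return {
        g: (counts[g], 100.0 * counts[g] / total if total else 0.0)
        for g in Grade
    }


# Hypothetical grades for one coder (illustrative only, not study data).
example = [Grade.CORRECT] * 6 + [Grade.INCORRECT] * 2 + [
    Grade.LACKS_SPECIFICITY,
    Grade.OVER_CODED,
]
summary = summarize(example)
for grade, (count, percent) in summary.items():
    print(f"{grade.value}: {count} ({percent:.0f}%)")
```

Because every code receives exactly one grade, the four percentages for a coder sum to 100.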

Statistical analysis

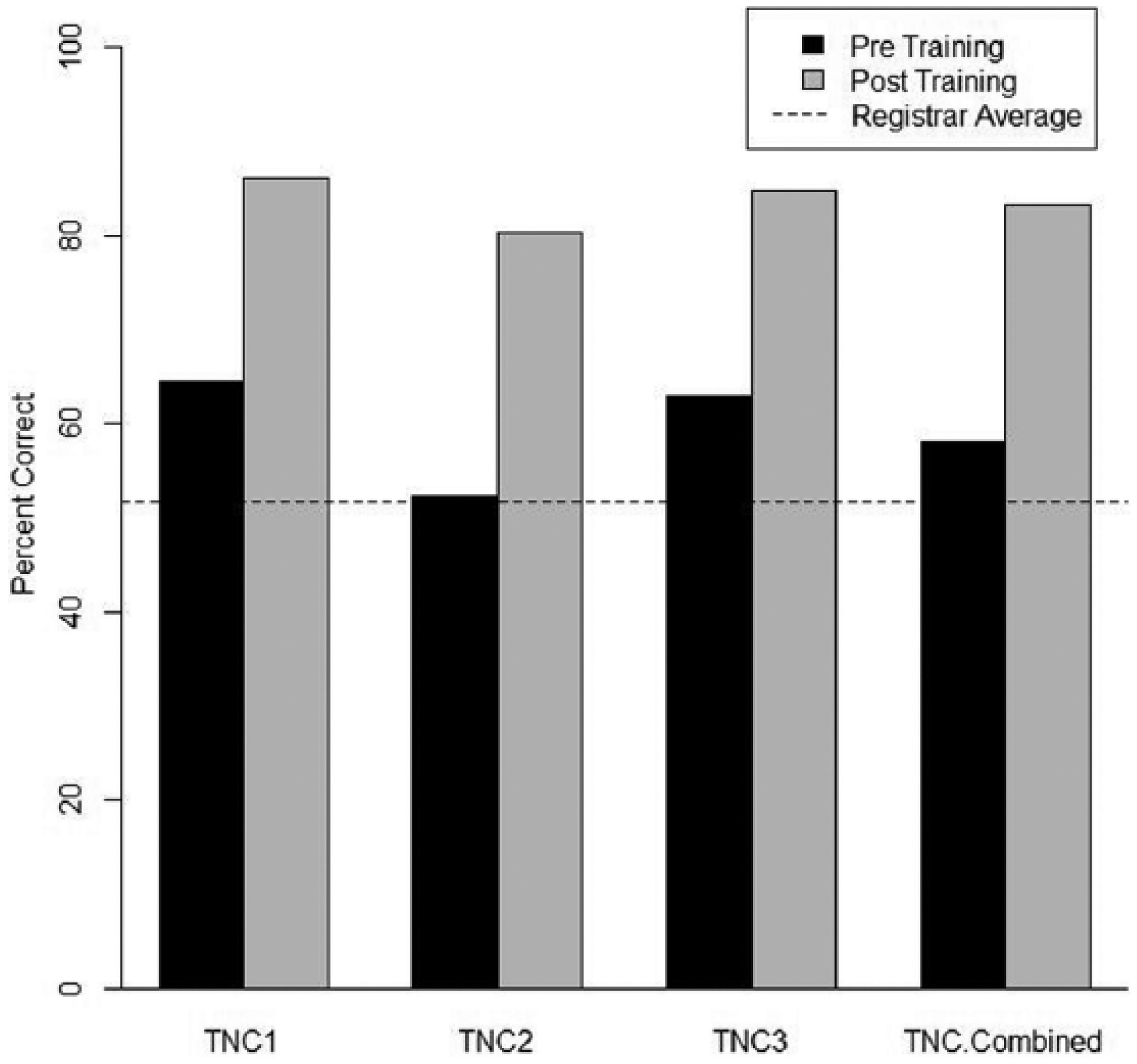

Every chart was reviewed by two of the three TNCs. The agreement between the users was calculated in two ways. First, it was calculated as the percentage of agreement across all charts pre- and post-training. Second, it was calculated as the average percent agreement per chart, so that charts with a greater number of codes were not given more weight than charts with fewer codes. The distribution of scoring by the expert was summarized using counts and percentages for each coder, as well as for all the TNCs combined. The changes in the percentage of codes scored as correct, lacking specificity, and over-coded for the charts pre- and post-training were analyzed using McNemar’s tests. The average agreement between the TNCs for each chart was also calculated pre- and post-training (Figure 1).
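The difference between the two agreement calculations can be illustrated with a short sketch. It assumes, as a simplification not stated in the text, that agreement on a chart is the number of codes both coders assigned divided by the number of codes either coder assigned. The function names and the two sample charts are hypothetical.

```python
def chart_agreement(codes_a, codes_b):
    """Return (matched, total) for one chart.

    matched: codes assigned by both coders; total: codes assigned by either.
    This definition of agreement is an assumption made for illustration.
    """
    a, b = set(codes_a), set(codes_b)
    return len(a & b), len(a | b)


def pooled_agreement(charts):
    """Percent agreement across all codes; charts with more codes weigh more."""
    pairs = [chart_agreement(a, b) for a, b in charts]
    matched = sum(m for m, _ in pairs)
    total = sum(t for _, t in pairs)
    return 100.0 * matched / total


def mean_chart_agreement(charts):
    """Average of per-chart percent agreement; every chart weighs the same.

    Assumes each chart has at least one code from at least one coder.
    """
    pairs = [chart_agreement(a, b) for a, b in charts]
    return sum(100.0 * m / t for m, t in pairs) / len(pairs)


# Two hypothetical charts, each coded by two coders (not study data).
charts = [
    (["807.03", "860.0", "805.4", "850.9"], ["807.03", "860.0", "805.4", "850.0"]),
    (["873.0"], ["873.0"]),
]
print(f"pooled: {pooled_agreement(charts):.1f}%")
print(f"per-chart mean: {mean_chart_agreement(charts):.1f}%")
```

In this example the chart with many codes pulls the pooled figure down, while the per-chart mean treats the single-code chart as an equal contributor.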

Figure 1. Registrar vs TNC accuracy, pre- and post-training.

Results

For charts scored pre-training, the percent correct for the TNCs using RTSS ranged from 52 to 64 percent, while the registrar had 51 percent correct as scored by the expert. After TNCs received training, their percent correct on the next set of charts increased to a range of 80–86 percent. The registrar, who did not receive the training, coded these same charts without using RTSS and was scored as having 52 percent correct. The change in percent correct was significant for all TNCs individually and combined (p < .0001).
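McNemar’s test, named in the statistical analysis, depends only on the discordant pairs in a paired 2 × 2 table. The sketch below computes the exact two-sided form of the test from those two counts. How observations were paired in the study is not specified here, so the function and the counts passed to it are hypothetical and are not a reproduction of the reported analysis.

```python
from math import comb


def mcnemar_exact(b, c):
    """Two-sided exact McNemar p-value from the two discordant counts.

    b: pairs scored correct before but not after.
    c: pairs scored correct after but not before.
    Under the null hypothesis, each discordant pair falls in either
    cell with probability 0.5, so min(b, c) follows Binomial(b + c, 0.5).
    """
    n = b + c
    if n == 0:
        return 1.0  # no discordant pairs, no evidence of change
    k = min(b, c)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2.0 * tail)


# Hypothetical discordant counts (illustrative only, not study data).
p_value = mcnemar_exact(2, 30)
print(f"p = {p_value:.2e}")
```

Concordant pairs (correct both times, or incorrect both times) do not enter the calculation, which is why only `b` and `c` are needed.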

ICD-9 codes graded as lacking specificity and as over-coded were analyzed similarly. Pre-training, lack of specificity ranged from 5 to 8 percent for the TNCs and was 13 percent for the registrar, and over-coding ranged from 8 to 13 percent for the TNCs and was 14 percent for the registrar. The TNCs’ over-coding dropped from a pre-training average of 11 percent to 0 percent post-training, and none of the TNCs’ codes was scored as lacking specificity post-training. The drop in lack of specificity and over-coding was also statistically significant (p < .0001) for all TNCs individually and combined. The analysis of agreement between TNCs shows that pre-training, the average agreement for a chart was 54.2 percent. After training, the agreement increased to 59.8 percent, but the difference was not statistically significant.

Discussion

In an atmosphere that values evidence-based medical practice, it is important for health-care institutions to be aware of their own coding and data collection processes. NTDB data are used strictly for research purposes and quality improvement at present, but the same complications noted here—lack of internal consistency, lack of specificity, and over-coding—have undoubtedly edged their way into other venues of coding.

We intended to decrease our lack of specificity and over-coding by comparing the registrars’ coded charts to data entered by coding-naive TNCs with assistance from the RTSS program. These data showed an increased overall accuracy of 7 percent when comparing naive TNCs to the registrars, as well as a decreased rate of lack of specificity and over-coding. The combination of clinically experienced TNCs using the RTSS with a relatively short training program thus demonstrated significantly improved data quality. Although the registrars possessed more-than-adequate experience, they were clearly at a disadvantage when compared to the TNCs using RTSS, as the clinical knowledge of the TNCs is an invaluable asset when interpreting physician notes, radiology reports, and other sources of medical jargon. In addition, although employing trauma nurses as coders is more expensive, their clinical experience, relationships with other members of the clinical team, ability to ask clarifying questions at the bedside, and knowledge of anatomy and physiology make for more accurate codes, better benchmarking against other hospitals, and better research data on which to base policies and guidelines. The RTSS program was designed to guide the user through the coding process, essentially eliminating minor decisions and possible inconsistencies bred by human error. This likely contributed to the 6 percent decrease in codes deemed to be lacking in specificity and the 3 percent decrease in over-coding. Given the improvement in coding accuracy realized by utilizing a software system and clinically based personnel, similar standardization should be considered for all reporting facilities with the goal of improving the aggregate accuracy of pooled data.

We also demonstrated significant improvement in accuracy after a relatively short training program (12 h over six sessions) consisting of education regarding medical terminology, interpreting physician writing style, and basic training on the RTSS program. The TNCs’ post-training performance improved significantly in all categories. Also notable was the complete absence of ICD-9 diagnosis codes lacking in specificity post-training. From this information, it is clear that even a small amount of training was quite beneficial to the TNCs. We demonstrated that TNCs with significantly less training were able to provide much more reliable data than the highly experienced registrar. A well-designed program addressing the challenges of chart review seems highly beneficial and would have a positive impact on the validity of NTDB data.

Using the results of our study, we have markedly improved our system for trauma data collection and submission, using only TNCs who have undergone the 12-h training session in conjunction with the RTSS program for data input. We have further increased the ability of our TNCs to communicate directly with our attending physicians by improving physical proximity and by encouraging direct contact and clarification with trauma and radiology staff. This small improvement alone has led to an easily apparent decrease in ambiguity when decoding written charts by increasing physician awareness of points of confusion, likely minimizing the uncertainty that has previously been seen in physician charting. 11 Future research may explore whether similar training would improve coding accuracy across disciplines. The results of this study may also suggest a potential cost saving. Given the increased efficiency of the TNCs using coding software, fewer nonclinical trauma department staff may be required to manage the TR, subsequently decreasing operational cost to the department. Additionally, some facilities may see financial benefits such as increased reimbursement for improved coding accuracy.

These results clearly demonstrate the need for increased attention to coding systems. It is likely that this problem permeates coding data that are collected and used in other areas of trauma medicine, such as billing and discharge diagnoses. In 1988, Hsia et al. 12 examined coding accuracy in Medicare billing with a large sample of 7050 medical records from 239 hospitals, finding an error rate of 20.8 percent in coding, with 61.7 percent of these errors benefitting the hospital. This differs from our data in that it suggests a coding bias from a hospital billing standpoint, but it demonstrates that coding error has significant downstream effects on patient care that are separate from its effects on research outcomes. It also shows that the error rate in our own trauma coding data was comparable to that of lower-intensity health-care delivery.

This study contains several inherent limitations, including the use of a single arbiter as the standard for chart accuracy; a larger number of arbiters or registrars would provide a stronger standard. Additionally, due to a technical issue during data migration, the codes derived from 20 of the charts abstracted by a single user were not recoverable. These charts were randomized prior to the data loss. As a precaution, we completely discarded the data from the corresponding cross-matched charts abstracted by other users. This decreases the power of the study but would likely have minimal effect upon the results.

Summary

Our research has shown that simple and easily implementable changes in coding and data collection processes can significantly increase the accuracy, and thereby the usability, of the data contributed to the NTDB. We first showed that trained nonclinical registrars abstracted charts significantly less accurately than coding-naive TNCs using the RTSS program. We then showed that the accuracy of these TNCs was significantly increased by a 12-h training course. Additionally, we encouraged communication among TNCs and physicians to improve data accuracy. Future research efforts will involve educating our trauma physicians to improve data specificity through more complete documentation even before the coding process begins, ideally increasing our accuracy as well as the efficiency of the coding process at our level I trauma center. In the future, it would be of value to investigate whether coding accuracy improves when coding is performed by the attending physician with assistance from a trauma coder. Comparing these outcomes may delineate other areas for possible improvement.

Footnotes

Acknowledgements

M.L.F. was responsible for the study conception and oversight. M.L.F. and N.R. were involved in study design. M.E., G.A.F., M.R., and M.L.F. were involved in the writing and discussion of both the manuscript and abstract. M.B., N.R., and A.M.W. were responsible for data analysis and interpretation. All authors contributed substantially to the revision and critical edits. This work was presented as a poster at the Trauma Quality Improvement Program Annual Meeting and Training in Chicago, Illinois, on 9–11 November 2014, and as an oral presentation at the North Texas Chapter of the American College of Surgeons Annual Meeting in Dallas, Texas, on 21 February 2015.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Stanley Seeger Surgical Fund of the Baylor Health Care System Foundation.