Abstract

A critical mass of public health practitioners with expertise in analytic techniques and best practices in comparative effectiveness research is needed to fuel informed decisions and improve the quality of health care. The purpose of this case study is to describe the development and formative evaluation of a technology-enhanced comparative effectiveness research learning curriculum and to assess its potential utility to improve core comparative effectiveness research competencies among the public health workforce. Selected public health experts formed a multidisciplinary research collaborative and participated in the development and evaluation of a blended 15-week comprehensive e-comparative effectiveness research training program, which incorporated an array of health informatics technologies. Results indicate that research-based organizations can use a systematic, flexible, and rapid means of instructing their workforce using technology-enhanced authoring tools, learning management systems, survey research software, online communities of practice, and mobile communication for effective and creative comparative effectiveness research training of the public health workforce.

Introduction

Comparative effectiveness research (CER) is a rigorous scientific approach that attempts to quantify the relative “benefits and harms of different interventions and strategies to prevent, diagnose, treat, and monitor health conditions.” 1 As a promising strategy to improve health decision making and control medical spending, CER was recently made a national priority through legislation in the United States. 1 However, public health professionals currently possess insufficient capability to appropriately leverage data and implement best CER practices, making workforce training and development in CER imperative.2,3 Innovative public health workforce training programs that properly integrate health informatics into curriculum development and evaluation can generate a sustainable infrastructure for capacity building in CER, empowering researchers with the tools they need to fully synthesize health information to enable informed and useful decision making. 4

Although federal workgroups and committees have examined workforce needs and gaps, identified requisite competencies for CER, and characterized mechanisms to support training in CER, little data exist regarding the most effective means of reaching working professionals to achieve CER capacity-building objectives.2,3 Programs that span several weeks or months and rely exclusively on traditional face-to-face classroom delivery are impractical for full-time employees or those geographically removed from the training site. However, learning can be facilitated and supported through information and communications technology (e-learning) to meet the unique needs of the public health workforce, offering unparalleled flexibility and convenience for trainees.5,6 E-learning makes course content accessible at the times most convenient to busy learners, either in real time or asynchronously,5–7 and it can be combined with face-to-face engagement (blended e-learning). Blended e-learning is becoming a pedagogical methodology of choice in academic institutions, private businesses, and allied health workforce development.8–10 Furthermore, a blended learning approach can be complemented with online evaluative approaches to efficiently package a CER curriculum that reaches a critical mass of the public health workforce and builds their capacity to address national research priorities.

The purpose of this study is to describe the development and evaluation of a blended learning CER curriculum targeting public health professionals, with four specific objectives: (1) to appraise the CER training needs of a selected group of public health professionals, (2) to design a tailored training curriculum using participant input, (3) to identify the most valuable elements of the program, and (4) to evaluate the overall perceived utility of a blended learning approach for developing CER competencies among the public health workforce.

Methods

We used a single-case study design 11 to illustrate the operational framework of a blended learning CER training program, namely, e-CER (pilot intervention), and a modified Delphi evaluation (formative evaluation) that integrated a variety of informatics and communication tools.

Context/setting

The e-CER training program was initiated as part of a larger human resource development (HRD) strategy for an interdisciplinary research collaborative group located at the University of South Florida (USF) in Tampa, Florida. The overall goal of the HRD initiative was to develop an educational environment able to bring together diverse researchers within the framework of formative research training on best practices in CER and cost-effectiveness analysis (CEA). The HRD initiative was led by the principal investigator of the research collaborative (H.M.S.) and coordinated by the lead Epidemiology/Statistical Data Analysis Manager (J.L.S.). Two program designers/evaluators (A.A.S. and M.C.N.) were assigned to develop a training intervention focused on CER, which is the product described in this article. The study was funded in part by an R01 grant from the Agency for Healthcare Research and Quality (AHRQ)/National Institutes of Health (NIH).

Participants

A panel of eight public health researchers with varying levels of professional experience (i.e. MDs, PhDs, MPHs, and graduate students) was invited to participate voluntarily in the pilot phase of the e-CER study. Participants collectively held the following professional positions at the USF College of Public Health and College of Medicine: two faculty members (a full tenured professor and an assistant professor), one research associate, four graduate and research assistants, and one executive research administrator. Participants were members of the research collaborative and did not receive additional monetary incentives for participating. Their participation encompassed continuous involvement throughout the design, development, implementation (i.e. 2–4 h of self-learning plus a 1.5 h face-to-face meeting weekly, for 15 weeks), and evaluation of the CER training program. Although this research on curricular methods (i.e. instructional techniques) did not require institutional review board (IRB) approval, participants were asked to sign an informed consent form, which acknowledged that each participant would have specific responsibilities in the program, should recognize the training needs of other participants, and would have equal rights within the learning group.

Data collection methods

Because the participants formed a heterogeneous group drawn from different public health disciplines, an innovative approach to training and evaluation was necessary to maximize use of resources, ensure the relevance and utility of the training, and use trainees’ time efficiently. In this context, integrating health informatics and communication technologies, particularly web-based learning and online assessments, could help us overcome these challenges. Additional measures were also needed to minimize the effect of power relations and ensure the validity and confidentiality of data collection, because participants were connected to the same institution (employed by the same organization as the researchers). This relationship could have affected program outcomes if traditionally proctored course evaluations or other face-to-face methodologies were used. Hence, we decided to use the Delphi technique to minimize the potential impact of power relations among individuals from the same institution. This technique is an anonymous, structured group interview method that is particularly useful for gathering consensus equitably and confidentially while avoiding power struggles among participants. These features could be enhanced with online data collection. Therefore, a participatory approach using blended e-learning and a technology-enhanced Delphi technique was identified as the optimal delivery strategy to successfully integrate a broad range of experts’ views.

Data collection occurred in three phases conducted from January through June 2011: (1) needs assessment to inform course development, (2) pilot implementation and course assessments, and (3) formative evaluation using a modified Delphi technique.

Needs assessment

Initially, we compiled core CER competencies from the published literature1,12,13 and then administered an anonymous online survey to participants to assess training needs, characterize pretraining expertise in CER, and quantify participants’ baseline perceived self-efficacy to conduct CER in practice. We also organized informal discussions with participants to identify additional issues and course delivery considerations.

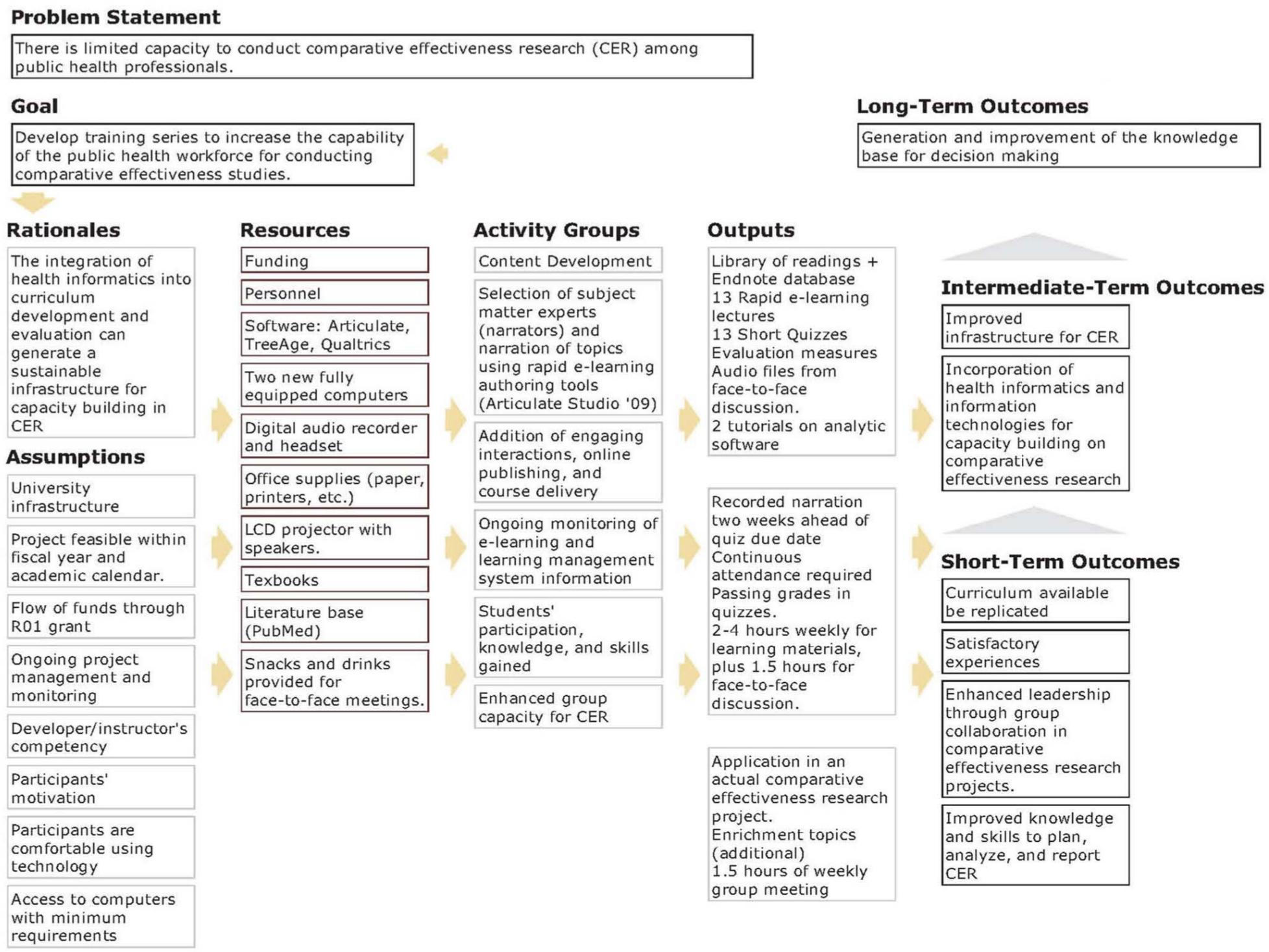

Course assessments

During course development, we designed pre- and posttests that comprehensively assessed core CER competencies; these were administered before and after the training program to measure knowledge gained. Short quizzes were also given weekly; however, grading was based on completion rather than performance. At the end of the program, we obtained input from participants in constructing the program logic model 14 using flowcharting and freely available logic model builder software. 15 The logic model was designed to identify training outcomes, the activities needed to achieve those outcomes, and the resources required to implement program operations effectively, as well as to guide the development of targeted evaluation questions.

Formative program evaluation using an e-Delphi technique

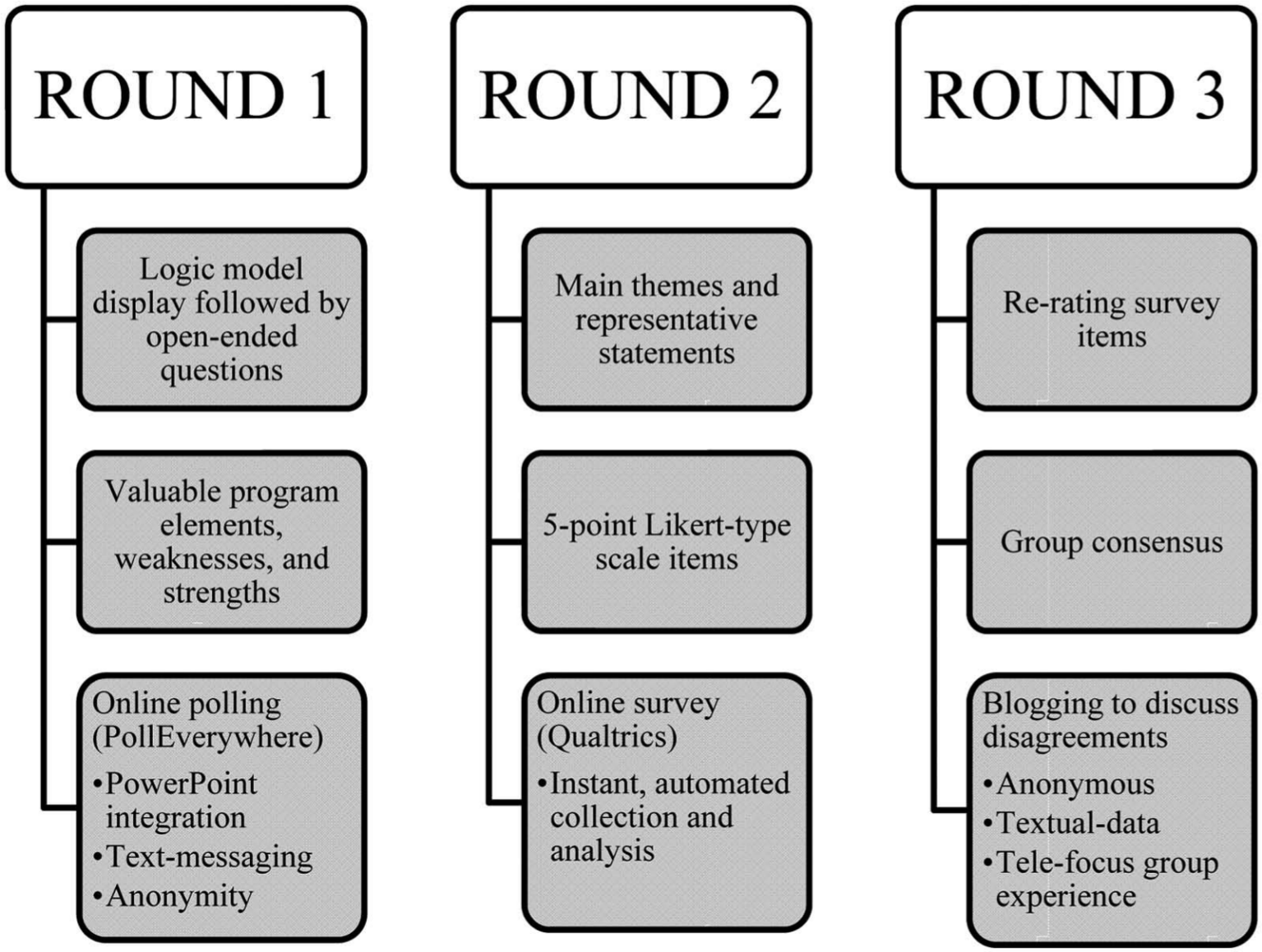

The Delphi method is a well-established mixed methods technique used with groups of experts to develop programs, forecast potential scenarios, design appropriate curricula, set priorities, and perform effective program evaluation.16–20 Key features are anonymity, iteration, feedback, and consensus. 21 The recommended composition and size of the Delphi panel vary from 5 to 10 people with expertise on a particular topic to 15–30 or more for heterogeneous groups having diverse professional backgrounds. 16 The Delphi technique comprises successive rounds of questions to gather program critiques confidentially and anonymously, avoiding undesired direct confrontation among participants. This process occurs iteratively until reasonable consensus of opinion is attained. 16 Although the classic Delphi technique is a rigorous approach for eliciting expert opinion, it is also time-consuming (up to 3–6 months to get mailed questionnaires back), demands continued participation, 22 and can be cumbersome to implement in a workplace setting. In view of these limitations of the classic Delphi technique, we devised a modified Delphi technique using a variety of digital technology tools, including the e-Delphi. 23 We integrated emailing, online survey software, text messaging, online polling, and blogging into staff meetings to assist the data collection process and facilitate ongoing participation. 23 The entire e-Delphi program evaluation consisted of three successive rounds conducted over three sessions (Figure 1).

Technology-enhanced Delphi technique.

Round 1

After displaying the logic model as a visual cue, we asked three stimulus questions: (1) “What elements of the CER curriculum were most valuable to you for enhancing your capacity to conduct CER?”, (2) “What was particularly frustrating about the CER program?”, and (3) “How can the CER curriculum be improved to increase your capacity to conduct CER studies?” We displayed these questions on-screen in a PowerPoint presentation and used Poll Everywhere software to enable anonymous text message voting. Using mobile devices, participants submitted feedback confidentially via text message(s) to a dedicated number. Responses were updated in the presentation in real time, allowing participants to consider others’ opinions.

Round 2

First-round responses were reorganized into main themes and used to develop a new online survey, created with the Qualtrics software. The survey URL was emailed to participants, who were asked to rate each item using a 5-point Likert-type scale. This process allowed participants to assess and rate the relative importance of responses provided in round 1. Results were presented graphically (vertical bars with percentages) during the next staff meeting. Anonymity and confidentiality were maintained by making the survey link open access, without HTTP referer verification, and by anonymizing responses (no personal information recorded).
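The round 2 summaries shown at the staff meeting (vertical bars with percentages) amount to a per-item frequency table. A minimal Python sketch of that tabulation follows; the function name `likert_percentages` and the ratings are hypothetical illustrations, not Qualtrics output or study data.

```python
from collections import Counter

LIKERT_LEVELS = (1, 2, 3, 4, 5)  # 1 = strongly disagree ... 5 = strongly agree


def likert_percentages(ratings):
    """Percentage of respondents choosing each level of a 5-point Likert-type item."""
    counts = Counter(ratings)
    n = len(ratings)
    # Levels nobody chose still appear, with 0.0%, so every bar is drawn.
    return {level: round(100 * counts.get(level, 0) / n, 1) for level in LIKERT_LEVELS}


# Hypothetical round 2 ratings for one item from eight anonymous respondents.
item_ratings = [5, 4, 4, 3, 5, 4, 2, 5]
print(likert_percentages(item_ratings))
# {1: 0.0, 2: 12.5, 3: 12.5, 4: 37.5, 5: 37.5}
```

Each dictionary value is the height of one vertical bar in the kind of chart described for round 2.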

Round 3

Each item was re-presented to participants along with group rating scores from round 2, and in a subsequent online survey they were asked to rerate the relative importance of each item after considering the round 2 results. The goal of this step was not only to allow participants to reconsider their own answers but also to reconcile opposing opinions and move towards group consensus (giving participants the opportunity to rerate their answers based on aggregated group responses). We then presented the results of round 3 to participants, highlighting the areas in which consensus was not achieved. In this regard, the median was used as the predefined criterion (above the median indicating considerable consensus and below the median indicating considerable disagreement). Finally, participants were invited to a blog designed specifically to elicit discussion on the reason(s) for persisting disagreements. Comments were posted using pseudonyms, and feedback was permitted over a 2-week period.

Informatics and communication technology description

To develop the e-CER content, we performed a MEDLINE 24 search to identify and obtain relevant CER literature, reference textbooks,25,26 and official reports.1,12,13,27 We organized the literature by topic area, and these materials comprised the required and recommended reading. A repository of lectures was created using PowerPoint slides and scripted for narration. We adopted a rapid e-learning approach to develop online lectures using Articulate Rapid E-Learning Studio ’09 (Articulate, New York, 2009), a powerful authoring software package. 28 This tool converts PowerPoint slides into Flash-based presentations for online viewing, adding interactions, self-assessments, and time-tracking features that maximize learners’ control. To increase trainee connectedness and facilitate CER competency retention, participants were invited to narrate a presentation of their choice. Articulate presentations were then enabled for display on commonly used browsers and internet-enabled smartphones (mobile learning).

We used Qualtrics, an industry-leading online survey research software, 29 to design survey questions for student assessments (pre- and posttests), as well as the Delphi round 2 and 3 questionnaires. Qualtrics permitted the distribution of web surveys to all program participants via email, with collected data sent immediately to a hosted secure server, where reporting and descriptive statistics were readily generated. We exported the raw data to an Excel spreadsheet for more detailed analysis. To enrich survey data, we used Google Blogger, a free online collaborative and interactive blogging space, 30 to establish a forum for expressing anonymous opinions specific to CER training aspects, particularly following the third round of the Delphi technique.

Finally, we employed an established learning management system, the Blackboard platform 9.1 (Blackboard, Inc., Washington, DC, 2010), to host and deliver all course content online. 31 Participants needed only an email account to access online content and to communicate with other students and facilitators.

Mixed data analysis

The qualitative analysis of textual data from the first round of the Delphi technique and the blog consisted of thematic analysis by two independent coders utilizing the MAXQDA software. 32 The first stage of thematic analysis involved synthesis of the open-ended responses into a small number of representative categories or themes. These were used to develop a survey for subsequent rounds of the Delphi technique (rounds 2–3). Representative comments posted to the blog were also extracted to illustrate participants’ diverse viewpoints on topics with considerable disagreement.

The needs assessment, pretest, and posttest, as well as each Likert-type response elicited through the Delphi technique, were analyzed quantitatively using descriptive statistics available in Qualtrics (frequencies, percentages, and measures of central tendency). The analysis of the Delphi questionnaires consisted of a classical Delphi analysis (median as reference). The median is the recommended cutoff value for the skewed distributions frequently observed in Delphi panels. Values equal to or greater than the median were indicative of more agreement and reflected reasonable consensus.19,33
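The classic Delphi median rule can be sketched in a few lines of Python. This is an illustrative sketch, not the authors' analysis: the item names and ratings are invented, and the default cutoff of 3 (the midpoint of the 5-point scale) is an assumption based on the median ≥3 criterion stated in the Discussion.

```python
from statistics import median


def classify_items(ratings_by_item, cutoff=3):
    """Label each item by comparing its median rating with a cutoff.

    cutoff=3 is an assumed value (scale midpoint); medians at or above it
    are read as reasonable consensus, medians below it as disagreement.
    """
    results = {}
    for item, ratings in ratings_by_item.items():
        m = median(ratings)
        results[item] = (m, "consensus" if m >= cutoff else "disagreement")
    return results


# Hypothetical round 3 ratings (n = 7 per item); not the study's data.
round3 = {
    "Case studies": [5, 5, 4, 5, 4, 5, 4],
    "Narrated lectures": [2, 4, 3, 2, 5, 2, 1],
}
for item, (m, label) in classify_items(round3).items():
    print(f"{item}: median={m}, {label}")
# Case studies: median=5, consensus
# Narrated lectures: median=2, disagreement
```

Using the median rather than the mean keeps a single extreme rating from flipping an item's label, which is the rationale the section gives for the median cutoff.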

The Delphi technique, as a consensus method, has proven very useful for forecasting possible scenarios.19,23,33,34 Hence, our case study could provide contextual information useful to program planners designing or replicating subsequent CER trainings. On the other hand, the data analyzed in our Delphi study lacked optimal statistical power (we had only eight original study participants and one dropout prior to the end of the study). Furthermore, with a small panel (e.g. fewer than 10 experts), Delphi estimates may be less precise. 35 Group bias is also possible because the selected individuals from USF cannot be considered representative of the whole population targeted by the CER training. Hence, our approach was not designed to provide generalizable findings and clearly operates under a qualitative validity framework.

Nevertheless, program decision makers who see value in our approach to integrating technology into curriculum development and evaluation may wish to scale up this project to larger audiences or to replicate our experience. However, they may feel limited in their assessment of future implications by the small sample size of our study. Hence, as an extension of the Delphi technique, we used bootstrap methods 36 with the data from the final round of the Delphi to simulate results of wider project implementation and verify the stability of the obtained estimates. Bootstrapping can be particularly useful for dealing with small samples and skewed distributions, as well as for verifying the validity of Delphi-derived data. 37

By enhancing the Delphi analysis with bootstrap simulated data, 95 percent confidence intervals (CIs) can be calculated for the median (i.e. using the percentile method). Consequently, we complemented the classic Delphi with bootstrapping, in which the data collected in our single experiment were used to simulate what the results would have been if the experiment were repeated over and over with new samples of participants. We therefore extended the estimates generated by the Delphi technique by generating 2000 bootstrap samples of expert ratings (using the SAS System, version 9.2), as an exercise to illustrate the long-term worksite implementation of blended learning in CER and to gauge the precision of the estimates generated by our small expert panel regarding the utility of e-learning for enhancing the CER capacities of public health researchers. These bootstrap samples were created by sampling with replacement from the original dataset. The method provides an alternative to large-sample techniques when asymptotic properties are not met or when the standard error of the estimate has complicated mathematical characteristics. 36 As in the classic Delphi analysis, we used the bootstrap samples to estimate the median score of 5-point Likert-type scaled items and their 95 percent CIs, because the mean is discouraged as a cutoff value owing to its sensitivity to extreme values.19,33

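Specifically, we considered strong agreement for items with median scores greater than 3. We used the following algorithm to generate B = 2000 bootstrap samples: (1) we constructed an empirical distribution function, $\hat{F}$, from the observed data, where $\hat{F}$ places probability $1/n$ on each observed data point $x_1, x_2, \ldots, x_n$ ($n = 7$); (2) we then drew a bootstrap sample $x_1^*, x_2^*, \ldots, x_n^*$ of size $n$ from $\hat{F}$, that is, by sampling with replacement from the observed ratings; (3) we computed the median of each bootstrap sample; and (4) after repeating steps (2) and (3) B times, we took the 2.5th and 97.5th percentiles of the B bootstrap medians as the limits of the 95 percent CI.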
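The percentile bootstrap for the median described in this section can be sketched with the Python standard library. The authors used SAS 9.2; this is a hedged re-expression of the technique, not their code, and the function name `bootstrap_median_ci`, the seed, and the ratings are hypothetical.

```python
import random
from statistics import median, quantiles


def bootstrap_median_ci(ratings, b=2000, seed=2011):
    """Percentile-bootstrap 95% CI for the median of a small set of Likert ratings.

    Sampling with replacement from the ratings is equivalent to drawing from the
    empirical distribution that places probability 1/n on each observation.
    """
    rng = random.Random(seed)  # fixed (hypothetical) seed so the sketch is reproducible
    n = len(ratings)
    # Draw B resamples of size n with replacement and record each resample's median.
    boot_medians = [median(rng.choices(ratings, k=n)) for _ in range(b)]
    # The 2.5th and 97.5th percentiles of the B medians bound the 95% CI.
    cuts = quantiles(boot_medians, n=40, method="inclusive")  # cut points every 2.5%
    return median(ratings), (cuts[0], cuts[-1]), boot_medians


# Hypothetical round 3 ratings for one item (n = 7); not the study's data.
ratings = [5, 5, 4, 5, 4, 5, 3]
point, (lo, hi), _ = bootstrap_median_ci(ratings)
print(f"median = {point}, 95% CI: {lo:.2f}-{hi:.2f}")
```

With seven integer ratings, every bootstrap median is one of the observed ratings, so the percentile limits typically land on scale points, much like the intervals of the form 3.00–5.00 reported in the results.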

Results

Needs assessment and piloting the curriculum

The needs assessment survey revealed that participants had collective experience in diverse research areas including epidemiology, biostatistics, social science, global health, program development and planning, health education, project management, obstetrics and gynecology, preventive medicine, pediatrics, and social marketing. Participants identified themselves as having various baseline skills in areas of data management, data linkage, statistical analysis, geospatial analysis, health services analysis, scientific research and evaluation, grant writing, project coordination, monitoring and evaluation, and teaching.

The self-assessment tool on pretraining areas of CER competence indicated that participants felt proficient in some areas of CER but lacked competence entirely in others. The group was strongest in the design and conduct of epidemiological research studies and the assessment of the impact of public health and medical interventions on health outcomes. A subset of the group also felt adept at using extant data sources and conducting statistical analyses. Conversely, the weakest area identified by participants was conducting CEA for CER studies, followed closely by policymaking in health and health care. Responses also identified management of electronic databases and advanced statistical methods, such as decision tree modeling and probabilistic sensitivity analysis, as areas where training was needed. A comparison of pretest (mean score: 31.4%) and posttest (mean score: 80.0%) results indicated a substantial increase in knowledge among participants (n = 7 for pretest; n = 6 for posttest).
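As a quick arithmetic check on the reported group means, the sketch below computes the absolute gain in percentage points and the ratio of posttest to pretest means. The helper `knowledge_gain` is hypothetical. Because the pretest (n = 7) and posttest (n = 6) groups differ, this compares group means rather than within-person change.

```python
def knowledge_gain(pre_mean, post_mean):
    """Absolute gain (percentage points) and ratio of posttest to pretest mean score."""
    return post_mean - pre_mean, post_mean / pre_mean


absolute, ratio = knowledge_gain(31.4, 80.0)  # mean scores reported above, in percent
print(f"{absolute:.1f} percentage points; posttest mean is {ratio:.2f}x the pretest mean")
# 48.6 percentage points; posttest mean is 2.55x the pretest mean
```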

Program evaluation

Program logic model

The program logic model that depicts the inputs, program operations, and expected outcomes is presented in Figure 2.

CER program logic model.

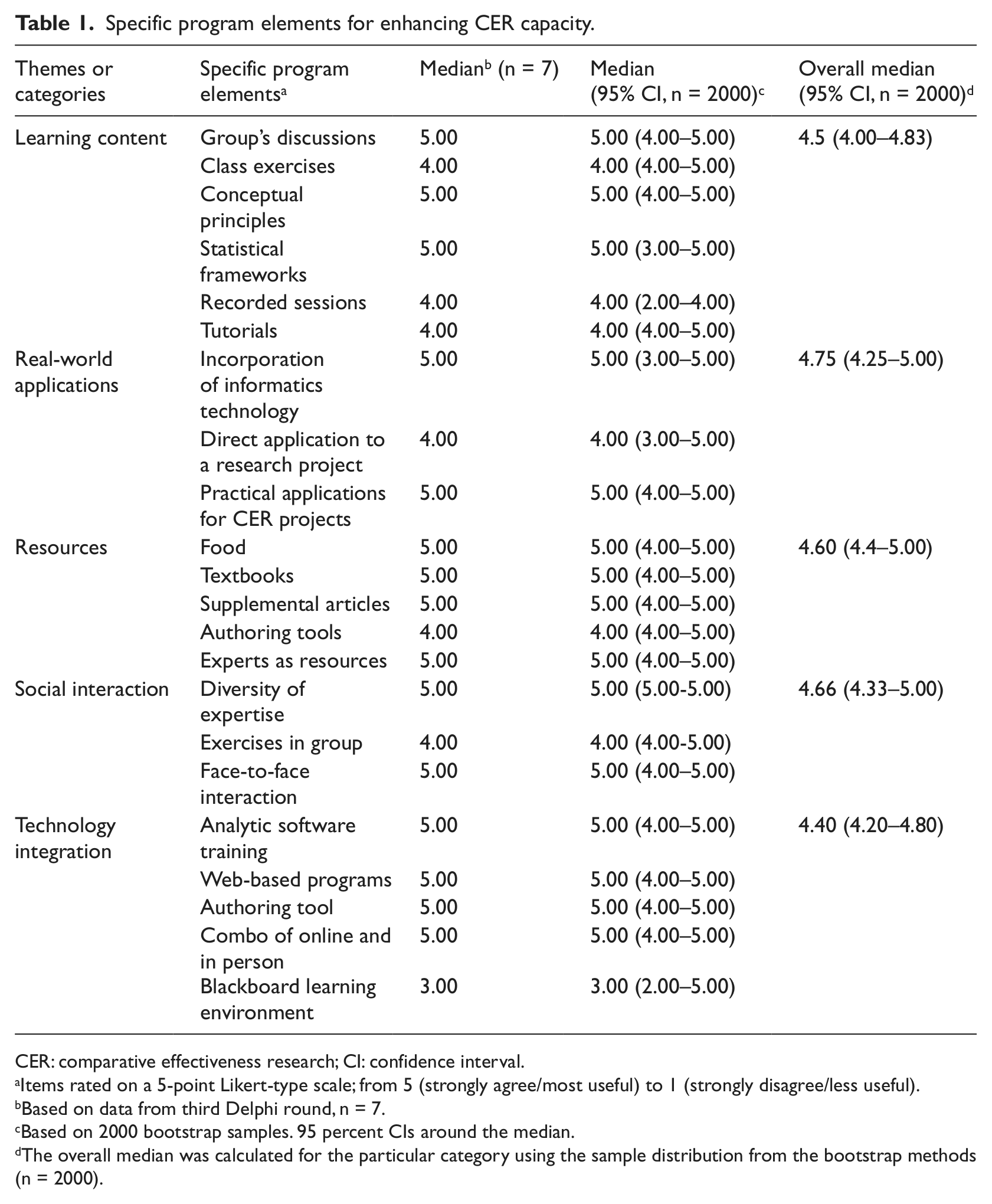

Useful elements of the training program for enhancing CER capacity

During the first Delphi round, participants generated 56 discussion issues, which were then organized into five main categories (themes) of e-learning: learning content, real-world applications, resources, social interactions, and technology integration. In addition, participants suggested ways to improve the program and indicated overall criteria to assess program utility. In the second Delphi round, participants quantitatively rated these thematic areas and their representative items. In the third Delphi round, participants rerated these thematic areas and reasonable consensus was attained.

Useful learning content and strategies included presentations on analytic frameworks for CEA, the conceptual principles of CER and CEA, and discussion of articles on CER. Participants also valued presentations and demonstrations using analytic software. For instance, one participant stated, “Something I found most useful was the presentation of TreeAge,” to which another member echoed, “Learning about the different statistical methods and frameworks was very useful to me.” Participants articulated their appreciation for real-world applications of the e-CER training, particularly the relevance of the analytic methods to their own projects, as evidenced by the following participant statement: “I particularly valued the ability to transform conceptual principles into practical applications of comparative effectiveness research.” They also expressed satisfaction with the application of information technology (IT) to solve training needs.

Participants appreciated the repository of resources for CER, such as textbook readings, journal articles, and the asynchronous availability of interactive online presentations. Face-to-face social interactions with other trainees were well-liked, but the integration of health informatics technology and distance learning into the program was equally valued. For example, one trainee expressed “I liked the use of web-based programs such as ‘Poll Everywhere’ [audience response system],” while another participant stated, “I liked the combo of online and in person platforms.”

After identifying major themes during the first Delphi round, participants were asked to iteratively quantify the strength of their support. We observed changes from round 2 (n = 8) to round 3 (n = 7); notably, some neutral responses (neither disagree nor agree) were changed to reflect a firmer stance in line with the majority of responses. This resulted in a similar overall trend but with stronger group agreement and clearer areas of disagreement. For simplicity, we present only the results of round 3 (n = 7). Subsequently, we used the bootstrap samples (B = 2000) to generate specific item medians and overall medians (main themes), which served to determine the most useful e-CER program elements to enhance CER capacity (Table 1).

Specific program elements for enhancing CER capacity.

CER: comparative effectiveness research; CI: confidence interval.

Items rated on a 5-point Likert-type scale; from 5 (strongly agree/most useful) to 1 (strongly disagree/least useful).

Based on data from third Delphi round, n = 7.

Based on 2000 bootstrap samples. 95 percent CIs around the median.

The overall median for each category was calculated from the bootstrap sampling distribution (B = 2000 samples).

Among the five main themes, we ranked the most valued program components and the specific features within those components, using the generated medians from the bootstrap samples. In order of importance, the most highly valued program components were real-world applications (median = 4.75, 95% CI: 4.25–5.00), social interactions (median = 4.66, 95% CI: 4.33–5.00), resources (median = 4.60, 95% CI: 4.40–5.00), learning content (median = 4.50, 95% CI: 4.00–4.83), and technology integration (median = 4.40, 95% CI: 4.20–4.80). The incorporation of health informatics with CER methods and practical case studies were the two most valued real-world applications. The most helpful features of social interaction were the diversity of expertise in the group and face-to-face contact. Participants named the textbook and access to other public health experts for consultation as the most appreciated resources. Group discussions of conceptual principles and statistical frameworks were the most salient aspects of learning content. Finally, the most valued parts of technology integration were features of the overall blended learning approach.
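The source does not spell out how theme-level medians were derived from item ratings. One plausible approach, assumed here, scores each participant on a theme as the mean of their ratings across that theme's items, bootstraps the median of those composite scores, and ranks themes by the result. The theme contents, item ratings, and helper names below are hypothetical.

```python
import random
from statistics import mean, median, quantiles


def theme_scores(ratings_by_item):
    """Per-participant composite: mean of that participant's ratings across a theme's items.

    Assumes every item list is ordered by the same (anonymous) participant.
    """
    return [mean(col) for col in zip(*ratings_by_item.values())]


def bootstrap_median(values, b=2000, seed=7):
    """Median of B bootstrap medians plus percentile 95% CI limits (hypothetical seed)."""
    rng = random.Random(seed)
    meds = [median(rng.choices(values, k=len(values))) for _ in range(b)]
    cuts = quantiles(meds, n=40, method="inclusive")
    return median(meds), cuts[0], cuts[-1]


themes = {
    "Real-world applications": {
        "Health informatics with CER methods": [5, 5, 4, 5, 5, 4, 5],
        "Practical case studies": [5, 4, 5, 5, 4, 5, 5],
    },
    "Technology integration": {
        "Blended approach": [5, 4, 4, 5, 4, 5, 4],
        "Learning management system": [3, 4, 5, 2, 4, 4, 5],
    },
}
# Rank themes from most to least valued by their bootstrap median.
ranked = sorted(
    ((name, *bootstrap_median(theme_scores(items))) for name, items in themes.items()),
    key=lambda row: row[1],
    reverse=True,
)
for name, med, lo, hi in ranked:
    print(f"{name}: median={med:.2f} (95% CI {lo:.2f}-{hi:.2f})")
```

Averaging within a theme before taking the median is what allows fractional theme medians (such as 4.75 or 4.66) even though individual items are rated on whole scale points.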

Areas of persistent disagreement following round 3 were voiced on the e-CER blog. For example, not everyone perceived the availability of narrated, online lectures as advantageous. One participant commented that they were only “useful when the narrator appeared to be interested in what he or she was trying to bestow upon the group,” while another participant agreed, “… [they were] very interesting when the narrator was energetic and engaged.” Participants suggested a rating system for narrators, with those rated highest recording all lectures. One participant added, “We should include more video tutorials, not just static presentations.” Participants also raised issues with the duration of online and face-to-face components stating, “Online narrations should not last more than 30 minutes” and “We need to keep group sessions within the scheduled time of 1 hour.”

Participants also disagreed on the utility of the learning management system (Blackboard), with some perceiving it as “boring” and “too complex.” Another participant seemed satisfied, offering, “I have no other experience with anything else than Blackboard. Thus, I liked it.”

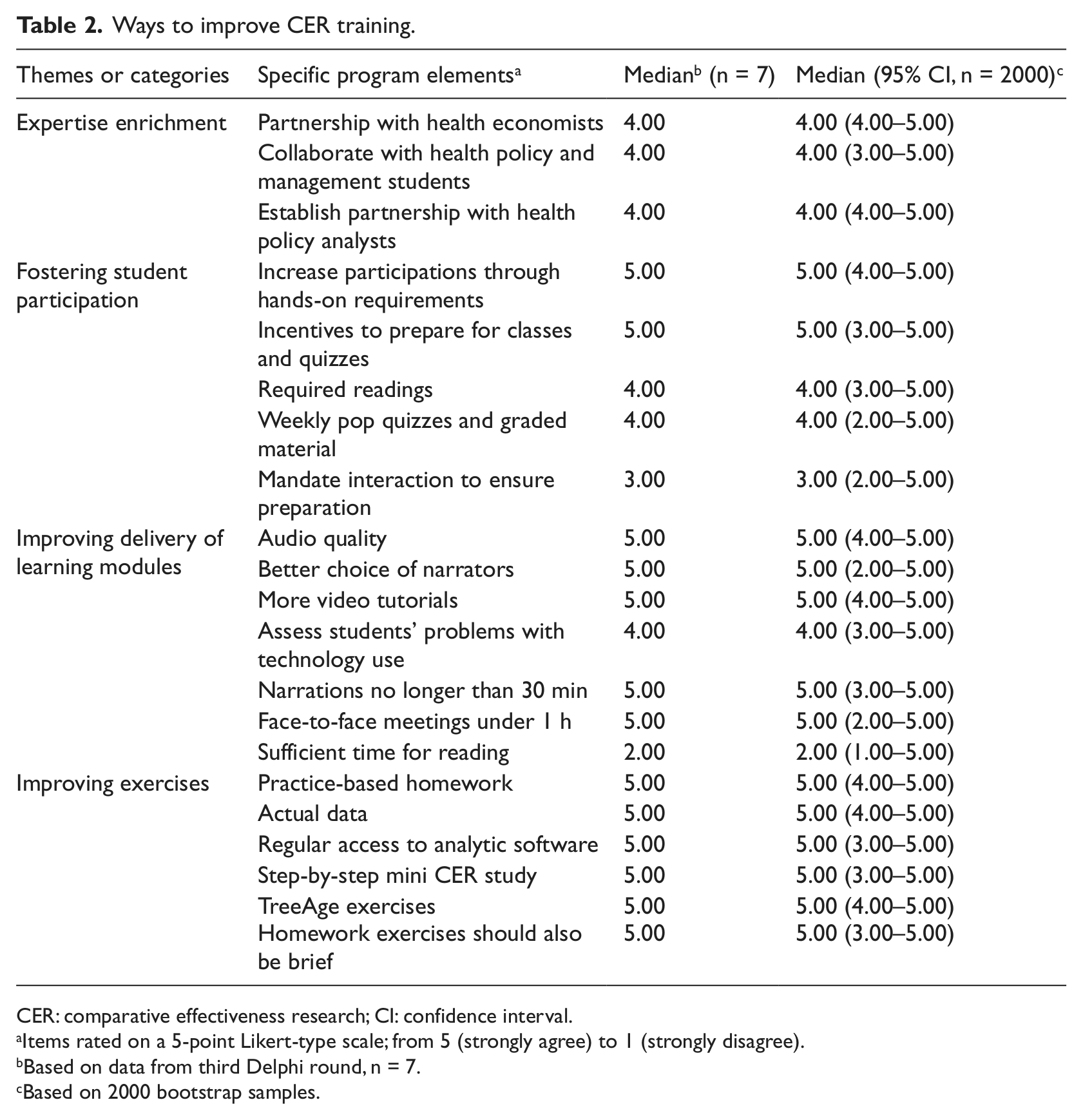

Additional suggested training improvements included enriching the professional expertise of the collaborative, enhancing participation, improving quality of online sessions, and offering additional practical exercises (Table 2). One member suggested, “We should establish a partnership with policy analysts” and another stated, “We should invite a health economist to join the team.” Suggestions for improving the participation of trainees included increasing hands-on requirements, leveraging incentive mechanisms to encourage better preparation for classes, and administration of more pop quizzes and graded assignments. One participant stated, “I think with our busy schedules, mandated activities will provide the necessary push to become an active learner.” However, this was not a universal sentiment. Another participant indicated, “I think we cannot mandate anything. This cannot be requested of people. Better to provide some incentives.” Participants also recommended more practice problems, homework assignments that use real data, and a comprehensive CER research project. Responses also indicated that trainees were sensitive to scheduling and work demands, with one participant remarking, “Homework exercises should also be brief as it is difficult to find time outside class to do exercises.”

Ways to improve CER training.

CER: comparative effectiveness research; CI: confidence interval.

Items rated on a 5-point Likert-type scale; from 5 (strongly agree) to 1 (strongly disagree).

Based on data from third Delphi round, n = 7.

Based on 2000 bootstrap samples.

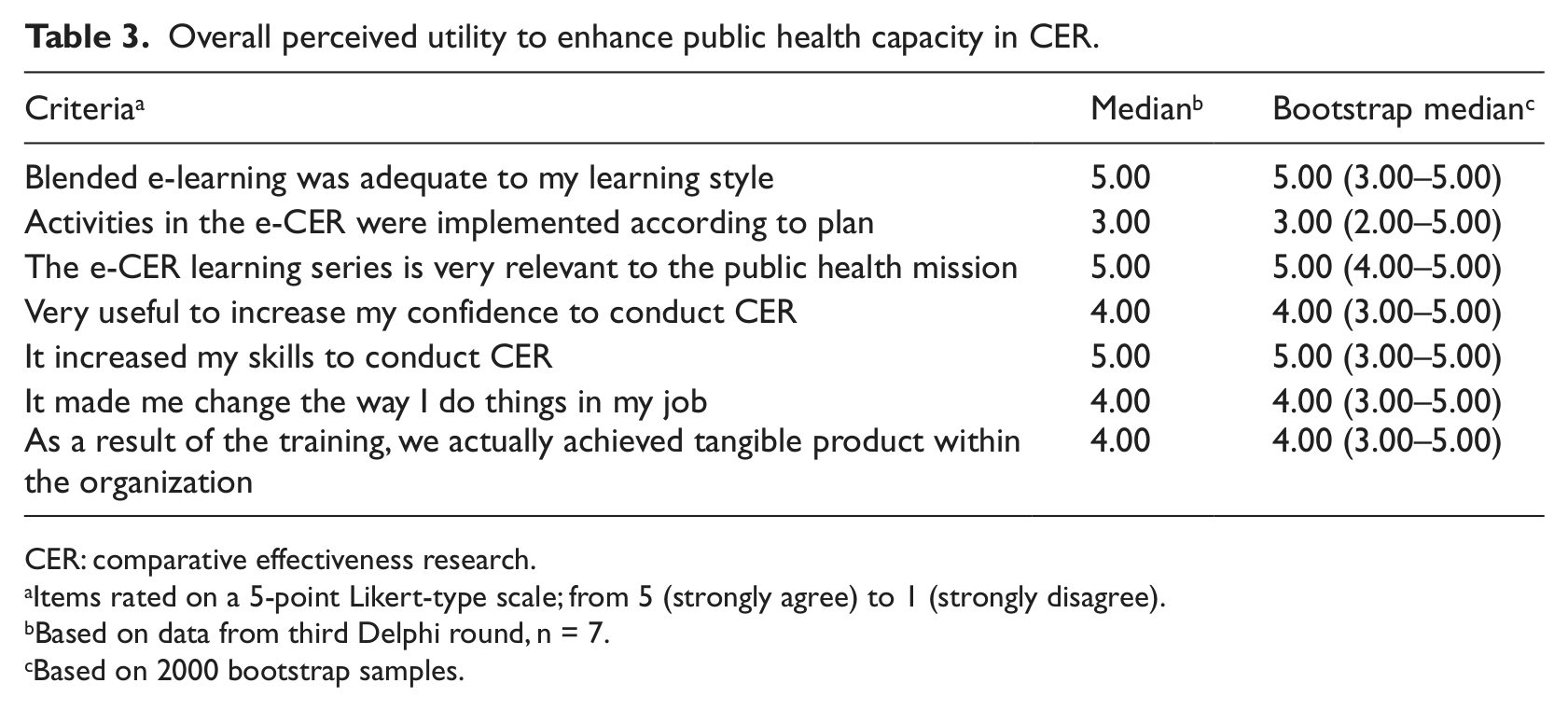

Overall utility of the blended learning program for enhancing CER competencies

Participants were asked to evaluate the potential utility of our blended learning curriculum and then to rate their agreement with representative statements (Table 3). These items focused on (1) the ability of the training curriculum to fit different learning styles, (2) the adequacy of instructional delivery methods, (3) the relevance to the public health mission, (4) the perceived increase in skills, (5) the reported behavioral change, and (6) the tangible impact for the participating research collaborative. In general, participants felt that the curriculum was relevant to the public health mission (median = 5.00, 95% CI: 4.00–5.00). Trainees also indicated that the blended approach suited their learning styles (median = 5.00, 95% CI: 3.00–5.00) and that it increased their skills (median = 5.00, 95% CI: 3.00–5.00) and confidence to conduct CER (median = 4.00, 95% CI: 3.00–5.00). They further reported the ability to achieve tangible products within the organization (median = 4.00, 95% CI: 3.00–5.00) and cited productive changes in their professional practice (median = 4.00, 95% CI: 3.00–5.00).

Overall perceived utility to enhance public health capacity in CER.

CER: comparative effectiveness research.

Items rated on a 5-point Likert-type scale; from 5 (strongly agree) to 1 (strongly disagree).

Based on data from third Delphi round, n = 7.

Based on 2000 bootstrap samples.

Discussion

This study examined the utility of a participatory, blended learning approach for CER training among the public health workforce. Our findings indicate that incorporating health informatics technology into curriculum development, implementation, and evaluation can result in a meaningful, impactful learning experience. This was evidenced by a high degree of consensus in all categories (classic Delphi median ≥3 or bootstrap 95% CI for the median within 3–5). These results suggest that a blended learning approach can be a convenient and effective educational strategy for increasing CER proficiency among the public health workforce.

Blended learning can deliver educational material effectively while providing flexible access to diverse information 5 at times most convenient to working professionals. It can also enhance interdisciplinary collaboration and facilitate knowledge sharing and decision-making processes. 38 Streamlining the content and delivery of CER workforce training fosters accountability and learning, which can increase CER proficiency and support the achievement of national research goals. 13

We integrated a variety of informatics and communication technologies not only into curriculum development but also into an innovative mixed-methods evaluation. Qualitative and quantitative data collection methods and analyses were implemented with digital technologies to answer the “what” (program elements), the “how much” (strength of group consensus), and the “why” (illustrative quotes). We first used qualitative methods, supported by freely available polling software, to identify useful program elements; we then used quantitative methods, in the form of online surveys distributed via email, to assess group consensus. Subsequent qualitative methods, conducted via an online blog, augmented and explained contradictory survey responses. These technologies enabled the successful implementation of a mixed-methods design, resulting in a systematic, credible, and transferable approach to the formative evaluation of blended e-learning on CER.39,40

Qualitative and quantitative analyses also complemented each other to yield more meaningful and robust results. For instance, the thematic analysis informed the development of the survey, which was then analyzed quantitatively. Although we attempted to counteract the limitation of a small sample size by using bootstrapping to verify the stability of the findings and to obtain interval estimates, the purpose of this study was not to generalize its findings. Instead, the aim of our case study was to illustrate a complex, real-life training situation that resulted in improved local decision making. In this particular case, the methodological soundness of the study depended on two key aspects: first, the participatory approach to curriculum development, which resulted in rapid e-learning integrated into worksite activities; and second, the democratic nature of the Delphi inquiry, which produced group-aggregated data enriched with individual perspectives, a shared understanding of the program effects, and enhanced validity (credibility and confirmability) of the evaluation results for decision making and program improvement. 40

Another advantage inherent to the Delphi technique was that valuable program elements could be assessed from the trainees' perspective without generating conflict. The integration of online polling, online survey software suites, and blogging enhanced the key features of the Delphi technique (i.e., anonymity, iteration, and feedback) by ensuring confidentiality, automating data collection and analysis, improving retention, and reducing execution time (from the traditional 3–6 months to less than a month). Our modified e-Delphi could serve as a model for systematic assessments of CER training programs and other workforce development projects.
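To illustrate how automated collection and analysis can support anonymity, iteration, and feedback, the sketch below aggregates one anonymous e-Delphi round and flags items to return to the panel. It is not the study's procedure: the function name `summarize_round`, the use of quartiles as group feedback, and the rule that items with an interquartile range above 1 are re-rated are all hypothetical assumptions, and the ratings are illustrative.

```python
import statistics


def summarize_round(responses, max_iqr=1.0):
    """Aggregate one anonymous e-Delphi round (hypothetical sketch).

    `responses` maps each item to a list of ratings with no respondent
    identifiers attached, so the summary preserves Delphi anonymity.
    Returns (feedback, rerate): group statistics to send back to the panel,
    and the items to re-rate next round under an ASSUMED dispersion rule
    (interquartile range greater than `max_iqr`).
    """
    feedback = {}
    rerate = []
    for item, ratings in responses.items():
        # Inclusive quartiles: the three cut points Q1, Q2, Q3.
        q1, _, q3 = statistics.quantiles(ratings, n=4, method="inclusive")
        summary = {
            "median": statistics.median(ratings),
            "q1": q1,
            "q3": q3,
            "iqr": q3 - q1,
        }
        feedback[item] = summary
        if summary["iqr"] > max_iqr:  # assumed rule: wide spread -> iterate
            rerate.append(item)
    return feedback, rerate


# Hypothetical ratings on the 5-point agreement scale (illustrative only).
round_responses = {
    "Curriculum fits learning styles": [5, 5, 4, 5, 5, 4, 5],
    "Delivery methods adequate": [2, 5, 3, 5, 4, 2, 5],
}
feedback, rerate = summarize_round(round_responses)
for item, s in feedback.items():
    print(f"{item}: median {s['median']}, IQR {s['q1']}-{s['q3']}")
print("Return to panel next round:", rerate)
```

Sending back only group-level statistics keeps individual responses anonymous while still giving panelists the feedback they need to reconsider divergent ratings in the next iteration.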

The public health infrastructure needs to be strengthened to close important gaps in research capabilities before the United States can improve medical decision making and reduce health-care spending. 41 Our case study demonstrated a viable approach to addressing existing CER knowledge gaps among the public health workforce by responding to the learning needs and demands of professional trainees. The methods we described allow flexible delivery of content that is adaptable to most workplaces, making wider implementation feasible; such implementation is needed to achieve national priorities.

Footnotes

Conflict of interest

The content is solely the responsibility of the authors and does not necessarily represent the official views of the AHRQ or the University of South Florida.

Funding

This study was supported by funding from the Agency for Healthcare Research and Quality (AHRQ) through a grant for the Clinically Enhanced Multi-Purpose Administrative Dataset for Comparative Effectiveness Research (Award no. 1R0111HS019997).