Abstract

Evidence suggests that the rate at which users report abuse, harassment and problematic content to platforms is very low. This article assesses the extent to which platform interfaces may contribute to discouraging the use of reporting as a remedy for online harms. Using a walkthrough method, we analyse reporting interfaces for the extent to which they may contribute to a lack of trust in reporting. The study found that reporting interfaces (1) did not provide appropriate access to platform policies or guidelines, (2) failed to provide options for dialogue, testimonials or mechanisms to report in formats supporting user wellbeing needs, (3) were consistently framed as individualising and transactional rather than brokering care or peer support, and (4) added to the opacity of platform intervention and decision-making processes. We argue that the available interfaces do not do enough to protect users from digital harms.

Introduction

Experiencing hostility, incivility and hate speech in online settings has become commonplace for users of digital communication and social media over the past decade. For example, a 2021 Pew Research Center study found that 41% of adults in the United States have experienced some form of online harassment, a tripling of the rate in five years, and that 25% of adults have experienced more severe forms of online abuse, such as threats, stalking, sexual harassment and image-based abuse (Vogels, 2021). An estimated 14% of Australian adults are subject to hate speech, and substantially more to other forms of online hostility (eSafety Commissioner, 2020), while a survey of women conducted for Amnesty International found that one-third had experienced some form of online harassment (Amnesty International, 2018a). In China, a poll of more than 2,000 social media users found that 40% had experienced online abuse, with 16% of victim-survivors experiencing suicidality as a result (Radio Free Asia, 2022). It is widely recognised that women and minorities are disproportionately targeted by serious online abuse and harassment, often including hate speech (Cinelli et al., 2021).

Increasing rates of online hostility have prompted inquiries, legislation and policy initiatives in a range of jurisdictions (Flew, 2021), concerns about wellbeing and mental health (Keighley, 2022), calls for more effective platform moderation (Gillespie, 2018), and more stringent state regulation of platforms (Christchurch Call, 2019). Responding to these calls, most major platforms have reviewed their terms of service and moderation practices, including the interfaces through which users who have been victims of, encountered or witnessed online hostility can self-report. This approach, known as “reactive moderation” (Jesutofumni, 2023), seeks to protect users who recognise harassment and abuse (and are willing to report it) by intervening after a report is made, and follows regulatory mechanisms familiar in many institutions, client services and corporate management (Suzor, 2019). Although no data are available on the gap between the extent of online abuse or harassment and the rate of reporting, it is widely recognised that the rate of reporting, alongside other forms of complaint, is very substantially lower than the incidence of abuse (Ahmed, 2021; Haslop et al., 2021; Plan International, 2020; University College London, 2021).

This paper provides a framework for understanding one potential cause of low rates of reporting online abuse: the limitations of platform reporting interfaces, and the ways in which these may engender distrust that reports will be taken seriously, through a lack of transparency and of care practices for complainants. Investigating how platforms’ reporting interfaces might condition users’ willingness to persist in reporting is a necessary first step in understanding and contextualising the widespread argument that platforms’ reporting mechanisms are “inadequate and ineffective” (Amnesty International, 2018b). That is, situating the interface in the context of understandings of abuse, harassment, hate, complaint-making and interpersonal engagement allows us to explore a linchpin factor in why users may choose not to report abuse.

The paper draws on findings from an Australian Research Council project, Addressing Online Hostility in Australian Digital Cultures, which aims to provide a comprehensive account of everyday experiences of online hostility, abuse, trolling and hate speech. The small segment of the research reported here provides an initial framework for mapping the experience of reporting abuse by analysing a small, exemplary range of platforms’ reporting interfaces available to everyday users. We begin by drawing on recent literature to summarise extant explanations of, and factors affecting, the reporting of problematic content among both victims and bystanders. We then present a summary of a ‘walkthrough’ (Light et al., 2018) of the reporting interfaces of seven popular platforms, followed by a contextualised assessment of four core elements that may affect the uptake and persistent use of reporting: (i) the decoupling of reporting from platform policy information; (ii) the failure to provide a dialogic interaction for making complaints; (iii) the individualised and transactional nature of the interface, which denies the benefits of utilising networked interactivity among victim-survivors with similar experiences for mutual and peer support; and (iv) the opacity of the reporting process and follow-up options in the interface itself. We argue that the reporting process does not take up the affordances of interactive digital communication tools to provide adequate protection and remedy for those experiencing or witnessing digital harms.

Known factors affecting the reporting of online abuse

What is reporting?

Curation and moderation have long been recognised features of online communication, from early Web 1.0 forums and listservs, pre-dating the development of contemporary platforms. Moderation is typically reactive, occurring after a post is published, since it is argued that the delays resulting from real-time human moderation stymie engagement and/or site traffic (Suzor, 2019). Reporting content already posted that is perceived as harassing or abusive (by the recipient) or otherwise problematic (by a bystander) is, of course, part of a wider cultural practice of complaint-making about other media, especially broadcast media, that is ingrained in contemporary culture in the sense of lived experience and way of life (Creed, 2003). Arguably, one of the most important distinctions between complaining to broadcast media organisations and reporting problematic online content is that digital platforms today typically have in-built, easy-to-use reporting interfaces to help manage large numbers of complaints, while earlier media forms required the use of other channels such as letter-writing or phone calls to complain.

Despite the limitations of a reactive approach, reporting directly to platforms has been offered in scholarship, policy and regulation as an effective method of combating problematic content (Sori and Vehovar, 2022). Like corporate regulation models in which infractions of industry standards are reported first to the corporation before being taken up by an external agency or regulator, platform reporting models are understood to apply at least some social pressure on actors to adapt to desired community standards (Ashktorab, 2018; Suzor, 2019). Naturally, what is perceived as harassing, harmful, offensive or problematic content in any media is subjective, diverse and varied, but the notion of recognised community standards or norms, such as the reasonable person test, has been utilised in a way that avoids having to establish rules of censorship in advance (Butler, 1997). Reporting thus relies on confidence that the report one makes will be assessed against a reasonable person standard.

Motivations for reporting and not reporting

Only one empirical study, relying on cognitive psychology, has hypothesised reasons why users report or do not report digital harms. Wong and colleagues (2016) argued that those who report online incidents undertake a cognitive appraisal of (i) the risk involved in reporting or not reporting, and (ii) their individual capacity to cope with the incident. That is, users weigh the urgency of the incident and the resources available to them to judge whether it is worth investing the time in making a report. They argue that this calculation is underwritten by a social appraisal of the normative significance of the incident, that is, the extent to which they would be rewarded or punished for reporting online issues or problematic content. Given the cultural history of complaint-making and increasing rates of online abuse, it should therefore follow that reporting online abuse would be understood to be a desirable goal. While valuable in drawing attention to individual user motivation, the study emphasises the psycho-social in a way that leaves out the context of how users ‘interface’ with reporting processes, and the potential impact on motivation of what can be considered ‘unavailable’ practices for complaint-making.

Other studies have attempted to provide the socio-cultural contexts governing motivations to report or not report. Such accounts of why users might choose not to report go beyond cognitive appraisal to underscore a range of personal and social factors affecting the decision to make a report. Scarduzio and colleagues (2021) found, for example, that users who had experienced online sexual harassment often did not report due to (1) the emotional discomfort of making a report when the perpetrator was known to them (such as a co-worker), and (2) a capacity to cope with the harassment, only reporting when they felt “fed up” with the abuse. That is, a personal threshold had to be passed before reporting online abuse or harassment became desirable. However, some researchers have also found that bystanders are more likely to report problematic content than the victim-survivors of online abuse themselves (Ashktorab, 2018; Wong et al., 2016), indicating that a sense of urgency, personal harm or frustration may not be as significant a factor in why people choose to report or not report.

Other reasons given in recent scholarship for disavowing opportunities to report online abuse and harassment include user concerns about secondary victimisation, particularly among LGBTQ+ and minority users (Asquith, 2012; Duguay et al., 2020), and the wider anecdotal sense that platform rules are haphazardly enforced (Suzor, 2019). It has also been suggested that some users who have been victimised prefer to avoid the added emotional discomfort that may result from making a report, or the risk of being negatively judged for complaining or for lacking the resilience to put up with abuse (Scarduzio et al., 2021). One study suggested that reporting was increasingly seen as a fruitless ‘struggle’ since perpetrators were becoming more strategic about their content to avoid post removals or bans (Sori and Vehovar, 2022). Finally, some scholarship has pointed to the idea that hate speech, abuse and harassment, particularly misogynistic and anti-minority speech, have become so normalised that their targets are increasingly less capable of recognising them as prohibited or as a potential breach of terms of service (e.g., Khalil, 2022; Ringrose, 2018).

Some literature has discussed the emerging option of reporting not to platforms but to statutory third parties such as police or government agencies. The use of third-party reporting, however, does not in itself explain the lower rates of reporting to platforms: it has been noted that where police have a statutory role in addressing threats, abuse and defamation, such as in the United Kingdom, they are typically perceived as a complaints setting of last resort, as overburdened, or as unlikely to take ‘online’ harms seriously (Akhtar and Morrison, 2019; Broll and Huey, 2015). Some research has also investigated reporting to other third-party institutions, such as universities. Haslop and colleagues (2021), for example, found that students who had been abused online were unlikely to report to the university or students’ union, despite being aware of this facility. Where third parties are authorised by statute or institutional rules to address complaints, then, the indicative literature suggests that they are not a competitor to reporting directly to platforms.

Broadly, the nascent literature on reporting online abuse and other problematic content suggests that under-reporting may be the result of cultural norms, such as fears of being negatively evaluated for making a report or of being seen as a person who complains vexatiously (Ahmed, 2021), or may be conditioned by a fear of secondary victimisation. As a step towards better understanding why reporting through platforms’ own frameworks occurs at rates substantially lower than the abuse itself, we begin with the hypothesis that platforms’ reporting interfaces may present a setting of affordance or disaffordance in ways which produce distrust in reporting or disenchantment with reporting processes. That is, we ask whether the built-in opportunity to report problematic content or behaviour directly to platforms positions users to regard it as an unpalatable option for making a complaint. While multiple cultural factors condition how all users act in online settings, including those who have experienced or witnessed abuse or harassment (Cover, 2023a), the language, arrangement and limitations of interfaces are themselves a significant cultural element in online practices and play a role in conditioning the motivation to report harmful or toxic content.

Walkthroughs

To develop some indicators of how the affordances and limitations of common platform reporting interfaces may condition the culture of reporting, we adapted a ‘walkthrough’ methodology focused on the arrangement and action of reporting options, interactivity and feedback potential. Walkthroughs are an innovative method of testing applications and practices to determine processes, pitfalls and potentials. Light et al. (2018) developed the walkthrough method to provide data about a digital app’s intended purpose, embedded cultural meanings and implied uses. The method has been taken up in digital ethnography as a useful starting point for user-centred investigation, providing a basis through which later to make sense of how users resist, appropriate and innovate in relation to applications and interfaces. The method recognises from the outset that the perceived purpose and arrangement of digital interfaces, and the cultural meanings that can be discerned through their language and symbolism, shape user experience. In that respect, the method is attentive to cultural approaches to emergent technologies, understanding that they shape practices while also always being a product of those practices and the meanings attached to them (Williams, 1990).

A technical walkthrough involves a researcher engaging with a digital interface and exploring its functions, potentialities and limitations in a systematic way. In the original methodology, four elements are investigated: (1) the user interface arrangement, such as the placement of buttons and links; (2) the functions and features, including mandatory and optional use requirements; (3) the textual content and tone, such as the order of menus or categories available that shape use; and (4) the symbolic representation, cultural associations and connotations of the app’s language. Although it provides indicative data for thematic and critical analysis, the method is recognised as only a first, reportable step towards knowledge on which to build subsequent qualitative research (Light et al., 2018). It was utilised in the present study to make sense of the functionality and mechanisms of reporting and complaint-making to platforms by analysing the hierarchy of menus, the textual content and tone, and the capacity for interactive engagement. The analysis was undertaken from a perspective attentive to normative complaint-making in other sectors, in order to discern meanings that can be assessed in terms of victim-survivor care as a contemporary practice in complaint handling (Ahmed, 2021).

We undertook walkthroughs of the reporting interfaces of a representative sample of seven popular platforms, noting that no platform with high user engagement reports its rate of abuse/harassment, whether by detection or by report resolution, or provides data on distinctions between reported abuse/harassment and outcomes determined post-report. Platforms were chosen for their engagement numbers among both adults and young people, based on a list of the 28 most popular platforms by user engagement provided in 2022 by Semrush (Lyons, 2022). User numbers ranged from 150 million (Discord) to 2.9 billion (Facebook) monthly active users for the first quarter of 2022. The seven chosen platforms are not the seven most popular on that list; rather, a strategic choice was made to enable comparison and range. For example, WeChat, ranked fifth by monthly active users, was excluded to avoid issues that may arise with its multiple-language accessibility derived from its Mandarin Chinese base, alongside anticipated cultural differences in reporting on a Chinese-based platform (Nash, 2023). LinkedIn, ranked at #18, was included to ensure the inclusion of a platform focused on professional employment and business, while Discord, ranked at #25, was included to ensure a platform with substantial youth usage. The remaining five are platforms in very high global use according to Semrush: Facebook and Instagram (both under the Meta umbrella), YouTube, TikTok and X (also known as Twitter), each with over one billion monthly active users, and each of which has been the subject of parliamentary or congressional inquiries in relation to their role in the quality, veracity or surveillance of content in several jurisdictions over recent years (Broadwater and Conger, 2023; House Judiciary Committee, 2018).

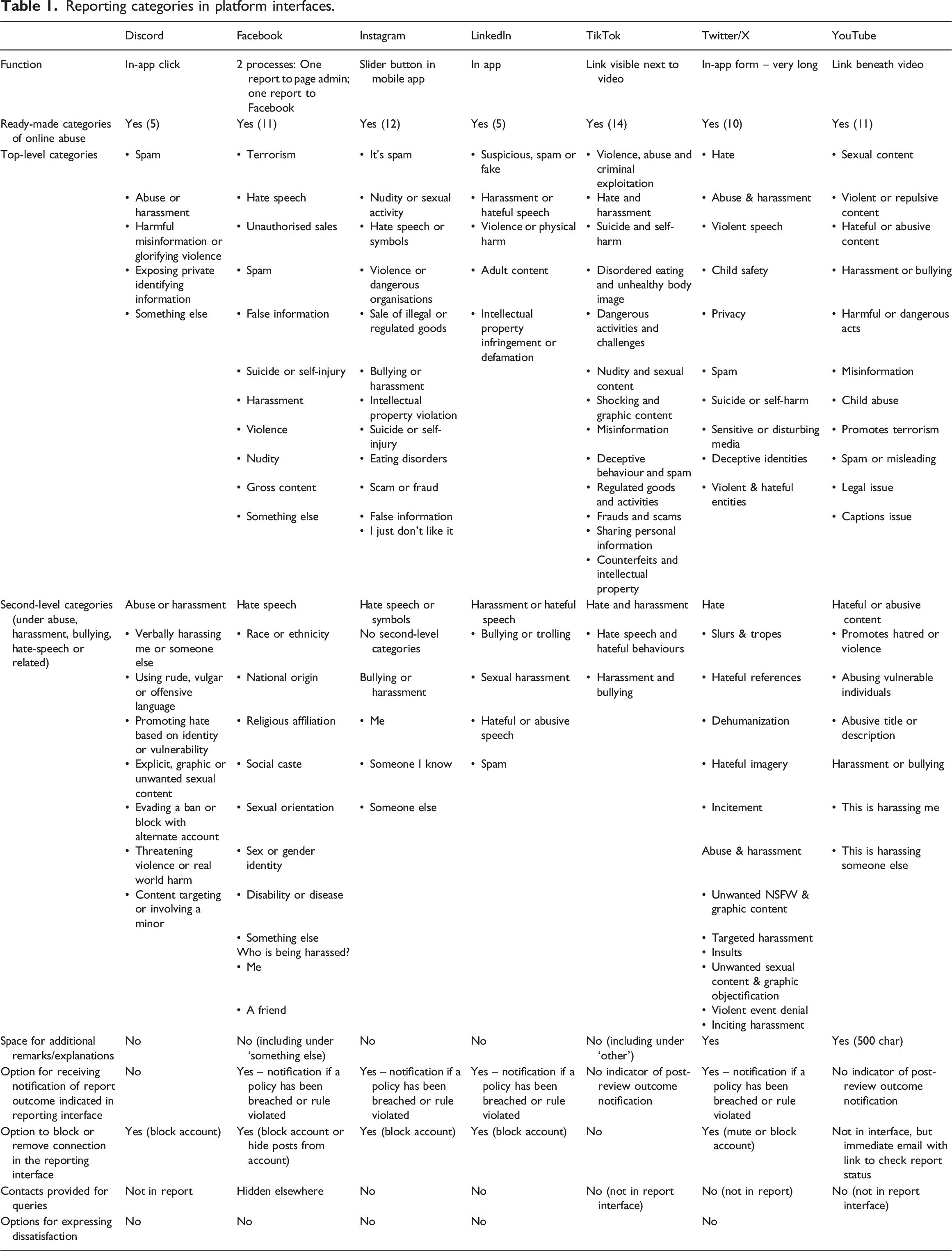

Table 1. Reporting categories in platform interfaces.

As Table 1 indicates, each of the seven platforms surveyed provided a reporting interface of pre-provided categories permitting the user to self-classify the content for which moderation or removal is sought. Across the platforms, the set of top-level categories ranged from as few as five (Discord) to as many as fourteen (TikTok). For all platforms except Discord and LinkedIn, abuse/harassment or bullying was represented as a category distinct from hate speech, indicating a separation between behaviour that is determined by content and content that may be offensive or, in some jurisdictions, unlawful. TikTok retained a first-level category of “hate and harassment” as well as “Violence, abuse and criminal exploitation”, although the latter referred only to abuse depicted in content, and not abuse of a user. Each of the platforms had second-level categories that, after abuse or hate speech was selected, would further define and categorise the problematic content. In terms of abuse/harassment, only the Meta platforms (Facebook and Instagram) and YouTube sought to determine whether the complaint was about abuse of the user or abuse of a third party. In terms of hate speech (except on Discord), some of the platforms’ second-level categories provided lists of ‘types’ of victims of hate speech, such as race and ethnicity, religious or gender identity (Meta), while others sought to categorise hate content by its form; for example, Twitter/X asked complainants whether the hate took the form of slurs and tropes, references, dehumanisation, imagery or incitement. Among the platforms surveyed, only Discord and LinkedIn included hate speech as a sub-category of abuse or harassment.

Five of the seven platforms did not allow space for additional remarks or for users to explain their choice of category: only YouTube (up to 500 characters) and Twitter/X provided an opportunity to inform moderators with additional written information. The walkthrough sought to determine whether complainants were informed, at the time of making a report, if they would receive a notification or feedback. Most of the platforms analysed, excluding YouTube and TikTok, noted after submission that the complainant would be informed, but only if there was a policy breach or rule violation. YouTube sends a separate email to the user to the same effect. The walkthrough also recorded whether the reporting interface provided a ready-made option to block or remove a connection with the user who posted the offending content: TikTok did not provide this function, while it was not applicable to YouTube.

In light of the long-standing affordances of digital interactivity and communicative ease of use, the walkthrough examined the extent of dialogue and communication between the user and the platform implied in the reporting interface, primarily (a) whether the interface provided opportunities to converse with moderators on the issue and/or to provide testimony in a user’s own words; and (b) whether there was an option to express dissatisfaction or make follow-up queries after any decision, both of which are standard practices in complaints processes. No platform provided opportunities for more than a brief description, and all required the use of the classifications to progress the complaint. No platform provided contact details in the tool itself, although in all cases a user could search for generic contact details elsewhere on the service.

Assessment

An analysis of the interface walkthrough provides us with four key indicators as to why the use of the interface may play a role in discouraging ongoing reporting of problematic content to platforms, and how the tone and function of the interface and its limitations may position users to be disinclined to see reporting as a useful remedy for addressing abuse, harassment and other problematic content. We draw here on a range of cultural theory to make sense of the indicators and obstacles, and to provide a socio-cultural context to why the interface may discourage ongoing engagement with reporting.

A: Decoupling reporting from platform policy and community guidelines

Firstly, the interface makes it difficult for a user making a serious complaint to address their concerns in alignment with the platforms’ policies, terms of service or user guidelines. None of the platform reporting interfaces provided any kind of link or URL to the platforms’ policies, complaints practices or terms of service, leaving users to search for them by opening a separate tab and/or navigating away from the complaints form. However, Twitter/X, YouTube, TikTok and Meta provided brief guidance on the meaning of each classificatory button. For example, YouTube provides brief pop-ups to define terms, such as: “Harassment or bullying: content that threatens individuals or targets them with prolonged or malicious insults.” TikTok similarly provides a pop-up with some explanation of the terms after they are clicked. The remainder presented the categories as if the terminology was self-evident to all users. The norm represented by these high-profile platforms is, then, substantially different from expectations of harassment complaint handling in both public and private sector services. In these settings there remains an expectation that complainants have easy access to policies, clarity on procedure, and knowledge of redress systems; such accessibility is considered best practice and, increasingly, a minimum standard (Brennan and Douglas, 2002; Brewer, 2007).

One risk of leaving users to determine the meaning of the classificatory terms, or to rely on cursory definitions, is the atomisation of meaning, and the failure to utilise knowledge of platform processes and guidelines to foster a community of like-minded users ‘on the same page’. When assessed through theories of community belonging, in which the flow of transparent redress information is considered a necessary component of engagement among a network base (Wenger, 1998), we can argue that the failure to provide a link to the exact policy wording, despite the simplicity of hyperlinking in these settings, positions users seeking to make a complaint in two ways. Firstly, it normativises a practice in which users may not pursue information to ensure their report fits the policy. This is presumably based on an assumption that users will not wish to wade through explanatory documentation, despite evidence to the contrary in wider complaint-making practices (Ahmed, 2021). This effectively isolates users from the information that is designed to guide community sensibilities and behaviour on that platform (Cover et al., 2024). The interface positions the user for ‘fast reaction’ in alignment with the culture of instantaneity (Papacharissi, 2015: 44) that marks the contemporary practice of interactivity in digital culture, rather than with the practices of redress and complaints management.

Secondly, it leaves users making reports in a setting in which they are ill-informed about, and disconnected from, the process. Just as few platforms are transparent about their moderation processes (Burch, 2018), users are disincorporated from the process by a normative interface that does not provide adequate information about what happens to a report, where it will go, the anticipated time to address it, how they will be informed, whether they have recourse to appeal, or how, and for how long, any information they have provided will be kept. Arguably, this disconnection from processual information may leave users disconcerted and uncertain about the value of spending time reporting to platforms; further empirical research will be needed to determine whether this lack of transparency discourages reporting.

Given the assertion that online abuse and harassment are normalised to the point that they are harder to recognise than they may have been in the earlier Web 1.0 era (Haslop et al., 2021), a stronger connection between reporting and policy, better descriptions of digital harms that are more closely aligned with platform terms of service, and other mechanisms of explanation (such as links to video descriptions) would allow platforms to add a pedagogical component to the reporting interface. That is, if platforms are to be regarded as having a responsibility in design and policy to shape the behavioural norms of users (Brown and Hennis, 2021), and if reporting is the interface that activates intervention, then using the interface to educate users who are unlikely to read, find or access community guidelines, about the platform’s expectations and the extent to which their concerns over content and behaviour are well founded, presents an important opportunity.

B: Eschewing a dialogic approach to complaint-making

The walkthrough exercise indicated that the platforms’ reporting interfaces lacked a dialogic component. This absence takes two forms: (1) the limitation of the interface to tick-box classification of the problem rather than permitting testimony in a form that suits the user or the issue, and (2) few options to provide further information or to converse with a moderator or community manager. A dialogic approach assumes that conversation, exchange and engagement are necessary to feeling part of a community, group or network (Miami Theory Collective, 1991) and to a felt sense that one is recognised as a subject within a society (Alcoff, 1995); they are also a key affordance of digital networked communication (Gilpin, 2011). Indeed, dialogue can be the difference between merely attempting to raise a concern and gaining a sense that one is being ‘listened’ to (Dreher, 2009). Despite many platforms’ public statements that their users form a community, the platform business model positions users as transactional clients (Flew, 2021) rather than as members of a networked community in the Web 1.0 model (Rheingold, 1993), and this is borne out by the non-dialogic process of the reporting interface.

As noted above, of the interfaces viewed, only two (YouTube and Twitter/X) provided text boxes for (limited) further description of the issue being reported. Whether they allowed minimal further commentary or were limited to radio-button descriptors, all interfaces restricted the possibility of ‘making a case’ about content or behaviour, particularly material that may be complex and may not fit into a simple classification system. For example, whether content that is deemed offensive but falls short of recognised definitions of hate speech is harmful is clearly open to debate, yet there is no opportunity for a user to explain why it may be harmful, to provide context, or to indicate its emotional impact.

From a user perspective, then, it can be argued that the lack of capability for providing testimony in a form that suits the content or the user (e.g., a written statement, a recorded video or a real-time conversation with a moderator) positions the user as a person performing free classifying labour for platforms and their moderation staff (Goldberg, 2018), as a necessary requirement before a complaint can be submitted. The interfaces, then, operate as part of what Sara Ahmed (2021: 6) has identified as the “institutional mechanics” which present barriers that prevent more complex, difficult-to-resolve events from being heard as complaint. Sometimes an event which is traumatic to a user can only be described through testimony, as its knowability resists “simple comprehension” (Caruth, 1996: 6). As we have learned from the many cases of sexual abuse, harassment and intimate partner violence around the world, listening to victim-survivors in ways which allow them to describe what has occurred in their own way is not merely a means to resolution but an important care practice that respects the user as a subject (Fileborn and Loney-Howes, 2019). Although platforms need to be attentive to the substantial resource costs and employee harms that would arise if moderators and intervenors were required to read reports as lengthy testimonials, the limitations of a transactional interface may not only be ineffective in some cases but may also discourage users from utilising ongoing reporting as a respectful framework for resolution.

In terms of understanding the opportunities for dialogue, exchange or engagement with platforms in the context of making a report, the walkthrough also sought to determine if any of the platforms provided opportunities to contact a moderator or platform personnel to further describe the problem, outline its urgency, describe its impact on the user or third party, provide further information, or seek follow-up or clarity. For example, we were interested in whether there was a free text contact form, or a link to an email address. While each platform provided contact details—typically a form—somewhere on the platform, none provided it as part of the reporting interface, leaving the visual and experiential impression that the act of reporting is wholly separate from contact, communication and engagement, underscoring the ways in which the interface is non-dialogic.

C: Individual, transactional reporting

A third indicator to emerge from the walkthroughs is that reporting is always an individualised transaction. Many people may, of course, report the same problematic content, such as a hate speech post; high numbers of complaints may result in its removal, and such reporting may indeed be organised through call-outs, responses to the post or an active network of users operating together. However, the reporting interface always assumes an individual user who has been harmed and/or is concerned about a post. Although it is important not to assume that a user is necessarily alone at the time of making a report, since they may in fact be well supported by family, peers, colleagues or other users (Cover, 2022), the interface positions the user to perform the act of reporting as a solitary event. That is, the reporting interface is deliberately arranged as a one-way communication from a singular user about their experience of a singular incident or item of problematic content. This is problematic in three ways: it (i) does not adequately support users who are experiencing multiple incidents or a sustained campaign of harassment; (ii) provides no context around previous reports, either about the same perpetrator or content or by the same user; and (iii) ignores opportunities to let victim-survivors know that others may have reported the same content: in short, that they are ‘not alone’.

Although the problematic content, abuse or harassment may have occurred in a very public way, the reporting process not only renders the complaint private but also facilitates secrecy. This is part of a wider organisational complaints culture which Ahmed (2021) identifies as protecting institutions rather than complainants or accused persons by erecting deliberate obstacles that “render so much of what is said, what is done, invisible and inaudible” (7). This is not to suggest that reporting and complaining should automatically be a public matter, given the substantial risk of defamation and other legal issues that may arise when another user has been wrongly accused. Rather, it is to argue that individualising reporting through privacy may forgo duty-of-care benefits, both to the complainant and to the wider digital culture, that a less individualised practice could offer, such as giving users access to discuss the issue with others who have reported the same post.

The individualisation of reporting has two potential key impacts. Firstly, it leaves the user without opportunities to involve other people in what may be a legitimate need for care through sharing (Cover, 2023b). Indeed, in the absence even of recommendations for self-care, counselling or other ordinary elements of a trauma protocol that form part of many other institutional complaints systems, the platform interfaces position users as having to manage their own self-care after abuse or harassment alone. There is growing evidence that users who have been victimised online have innovated among themselves to develop private support groups for mutual care practices (Cover, 2022). Such groups, arguably, could be sponsored more efficiently by platforms and would assist platforms to fulfil their social care responsibilities.

Secondly, this individualised, care-absent framework fails to take advantage of contemporary knowledge that recognises the significance of moving away from care as a transactional process towards the benefits of mutual, collective care. For example, Joan Tronto (2013) theorises care as effective and efficient when it goes beyond an individual or personal responsibility to manage harm through a transaction or through support-seeking, and instead relies on infrastructural supports that facilitate connection as a normative care practice. This marks a movement away from ‘caring for’ or ‘caring about’ towards ‘caring with’, understood as shared support and protection for those who have been harmed (The Care Collective, 2020). Indeed, this need has been strongly evident in the #MeToo movement, in which the shared recognition that others have been harmed in similar ways has provided a powerful therapeutic practice while raising awareness of the harms of abuse (McGowan, 2018; McRobbie, 2020). Similar evidence appears in a study seeking options for a more empathic care approach to victims of online abuse and hate speech, which noted a preference among younger users for peer support rather than resolution through reporting (Phillips, 2018). By positioning the user in a one-to-one communicative transaction with the platform, then, the reporting interface forgoes an opportunity to provide peer support, care and a sense that others may be experiencing similar harms.

None of this, of course, is to suggest that the responsibility for care should rest on victim-survivors of abuse, or that informal care practices are the best or only remedy to being victimised online. Such a perspective would align with what James Brown and Gregory Hennis (2021) have identified as a traditional libertarian view in platform governance that not only pushes responsibility for self-care back onto users but actively endorses abusers by separating their actions from their consequences. Rather, what is suggested here is that, under the ethical obligation of care towards those who have been victimised, responsibility rests with the platforms that establish and facilitate online communities. That responsibility extends to enabling the full range of care: it should not be limited to the transactional, individualised provision or recommendation of care services, but should also facilitate collective care for those who will benefit from a sense of shared victimisation, trauma and remedy. This, we argue, is a task the reporting process is well placed to enable, whether tacitly, by indicating in the interface that others have raised similar concerns about the content or behaviour reported, or by offering pseudo-anonymous opportunities to connect users who have been similarly harmed.

D: Opacity of the resolution process

Finally, the walkthrough sought to determine the extent to which reporting interfaces exacerbated existing issues of platform transparency. Platforms are regularly criticised for a lack of transparency in moderation, decision-making, the use of feed algorithms and so on (Flew, 2021; Suzor, 2019; Cover, 2023a). The walkthroughs indicated a lack of transparency about the complaints process in a number of ways. Firstly, there is processual opacity: across all platforms viewed, the interface and reporting action lack information on how reported content is assessed. While most platforms analysed in the study give some information elsewhere, such as in community guidelines documents, this information is not referenced at the time of making a report. Some wider public information exists about how reports are assessed and rated. For example, Twitter and Facebook are known to rank reported content as more harmful according to how likely it is to be seen by a greater number of others, rather than by an assessment of the harm it may have caused to the user (DeCook et al., 2022). Arguably, this is something a user may wish to know when making a report, in order to judge whether reporting is worth their time. This is not to suggest that platform policy and procedure are necessarily always opaque, but that such information is wholly absent from the interface itself, the one mechanism through which users engage with complaints and reporting.

The walkthroughs also uncovered inconsistency in whether users were told they would be provided with an outcome. While some platforms do acknowledge receipt of a report (e.g., YouTube, through a separate email to the user account), most are unclear at the time of reporting about whether a user will receive an outcome statement, what action, if any, was taken, and whether there are opportunities for appeal. It has been anecdotally reported elsewhere that those who report problematic content rarely receive further communication (Plan International, 2020). Arguably, failing to provide adequate information at the time of reporting about the process, how decisions are made, the criteria for action, the anticipated time-frame, or other reasonable expectations that would ordinarily be clarified in an institutional complaints process leaves the user who has reported in a state of “emotional discomfort” (Scarduzio et al., 2021: 72). Minimising emotional harm to a person who has reported is, again, a recognised component of support for complainants of abuse in other settings (Skoog and Kapetanovic, 2021).

Conclusion

In discussing the culture of institutional complaints, Ahmed (2021) notes that people often decide not to make a complaint because of past experiences of complaining, including navigating unfriendly processes, or because it is unclear whether resolution and remedy have been achieved, even when an outcome is reported back to the complainant. In line with this, this component of our study has sought to make sense of how reporting interfaces might condition users to be uninterested in reporting problematic online content. A walkthrough of a selection of popular platforms’ reporting interfaces has revealed an initial framework for apprehending why users may find reporting problematic or harmful content directly to platforms unhelpful or undesirable. By understanding the organisational framework of reporting offered, and limited, by interfaces, we are better positioned to make sense of the digital-cultural factors that influence the willingness and desire to report (Scarduzio et al., 2021). Although the walkthrough suggests the reporting interfaces have a very high level of ease of use (a key element any app walkthrough seeks to uncover; Light et al., 2018), their ‘usefulness’ (Wong et al., 2016) as a means of remedying abuse, harassment and harm, and of addressing problematic content or the toxicity of a digital setting, is questionable.

Walkthroughs provide a first, reportable step in making sense of how the opportunities and limitations of interfaces may encourage or discourage a culture of reporting problematic or abusive online content. The walkthrough method does, however, have limitations, including the need for further user-centric investigation to test the findings outlined above in the context of everyday use. Indeed, the next step in understanding the utility of reporting to platforms as a means of remedying abuse and harm requires further empirical research with those who have experience of reporting. This offers an opportunity to test the extent to which the interface, and the processes it represents or obscures, are key factors discouraging reporting, and how these intersect with broader user understandings of the harms of online abuse and harassment. Doing so would not merely deepen understanding of platform reporting frameworks but would also provide platforms with insights drawn from people-centric and ethnographic practices, insights that are inaccessible to platforms, designers and moderators in the context of the reports themselves.

At this stage of the research, however, and in light of the four issues that emerged from the walkthrough, it is possible to make the nascent argument that, to fulfil their social responsibility to protect their users in line with increasing community demands (Amnesty International, 2018b; Christchurch Call, 2019; Flew, 2021), platforms must invest substantially more in the following:

1. Providing policy and procedure documentation, plus better summaries (such as video explanations), to those reporting at the time of making a report, so that complainants go in informed and can more readily align their complaint with platform terms and expectations. These may then also serve as pedagogical resources for increasing public understanding of why some content and behaviour is harmful.
2. Offering options for users to present testimonials in their own preferred form (a letter, or an audio or video statement), which would create better care opportunities. This may include the option to choose between radio-button classification and alternative forms of reporting that maximise the dignity of the complainant. Given the extant problem of resourcing moderation, there may be opportunities to use artificial intelligence to summarise a user’s testimony for the benefit of moderators, drawing on the nascent AI tools that platforms deploy in content monitoring (Kissinger et al., 2021).
3. Aligning reporting frameworks with contemporary understandings of mutual care, preferences for peer support and the de-isolation of those who have been harmed. This could take the form of anonymous peer support connections or contact facilitated by an interface when a report of substantial harm has been made, alongside links to recognised support services.

Ultimately, there is a social, emotional and wellbeing cost incurred in the act of reporting abuse and harassment (Ahmed, 2021: 151) beyond the harm of toxic online behaviour or problematic content, whether or not reporting remedies the initial harm. It is therefore important that platforms minimise risks and offer more care, protection and capacity to report in diverse ways if they are to remain the key responsible party addressing the toxified digital ecology.