Abstract

With the increasing use of generative AI tools to enhance engagement on social media platforms, we are beginning to see AI-generated content contributing to digital harms, including particularly online abuse. Drawing on digital ethnography case studies, this article investigates the emergence of AI-generated ‘rage bait’ content, a severe form of trolling attempting to invoke users into adversity and outrage. AI-generated rage bait differs from other rage baiting because no human actor is involved in its creation or initial distribution. Case study examples of rage bait posts generated by AI tools are analysed. The article theorises the cultural causality of AI-generated rage bait and other digital harms, discussing how AI tools draw on extant datasets, practices and norms to further embed rage in the digital ecology. The article discusses some of the cultural and policy implications of digital harms originating from AI generators and provides a roadmap for further study.

Introduction

With the increasing use of generative AI tools to enhance engagement on social media platforms, we are beginning to see artificial and automated content contributing to digital harms, including abuse, trolling and disinformation (Liebowitz, 2025; Shoaib et al., 2023). While it is recognised that AI tools have been used by human actors to create malicious, problematic and harmful content such as ‘deepfake’ synthetic video and images (Cover, 2022a; Meikle, 2023), there are emerging examples of content that has been fully created and distributed by automated processes that, if and when generated by a human user, would be regarded as problematic, incivil or harmful. As automated content creation develops alongside the creative potential of AI tools and facilities, we encounter a gap in capability for making sense of a novel cultural, policy and communication issue in how to police, moderate and regulate automated content that may be harmful but has no malicious or reckless human user behind that content.

One emerging example is AI-generated ‘rage bait’. When created by a human user, rage bait is ordinarily considered a severe form of trolling that maliciously attempts to invoke other users into expressing outrage, thereby rewarding the user with engagement statistics that may drive income when monetised or some other reward such as the pleasure of attention. This article draws on work undertaken for the Australian Research Council project Online hostility in Australian Digital Cultures, which has been engaged in longitudinal digital ethnographies in a range of user, platform and social media settings to understand the cultural formations of increased online abuse and harassment, and the cultural conditions that give perpetrators a sense of ‘permission’ to create or share harmful, hateful or abusive content. During the project’s ethnographic study of potential digital harms on the question-and-answer forum-based platform Quora, it was uncovered that a number of rage bait questions ostensibly summoning hateful, aggressive and adversarial responses were being generated and circulated by recently introduced AI tools without the involvement, supervision or sharing by human users.

This article discusses the digital-cultural causality and implications of the automated, non-human production of rage bait. It introduces the cultural formation of rage bait as a digital harm operating within the contemporary platform attention economy. Using case studies and data drawn from the study, the article focuses on the advent of AI-generated rage bait content, discussing the context of its emergence through (a) AI’s reliance on extant datasets that normativise rage discourse, (b) the form of AI communication in mimicking common contemporary human communication and (c) the purpose of AI to generate content within the attention economy in which rage bait is most effective. The article ends with a discussion of some of the cultural and policy implications of digital harms that originate from AI generators and the further research that is needed to commence understanding the role and extent of generative AI in exacerbating digital toxification.

What is rage bait?

The emotive expression of rage has become a staple of many online forums known for hateful and harmful content and behaviour, and is widely referred to in digital and popular culture as trolling, online toxicity or hate speech (Kwong, 2025; Sheinin and Goldin, 2025). A substantial amount of online abuse, harassment and hate speech is driven by rage that has its roots in cultural disenfranchisement (Isenberg, 2016), disinformation and suspicion (Cover et al., 2022), and irrational or polarised emotive responses to otherness (Brown, 2019). That is, there is an understandable cultural formation in which a disenfranchised multitude has been positioned into the kind of enraged desperation expressed through abuse, harassment, exclusionary and injurious speech (Castaño-Pulgarín et al., 2021; Hardt and Negri, 2017), amplified by social media algorithms that normativise adversarial or enraging content as it attracts greater rates of attention and engagement (Poland, 2016; Waqas et al., 2019). Here, the business model of digital platforms exploits and encourages the free digital labour of users, sometimes through the monetisation of posts that both express and invoke rage because incivility, negative emotiveness, and the affect of enragement are more efficient in the attention economy, and therefore a more valuable commodity than phatic, passive or normative communication (Terranova, 2022).

One form of toxicity circulating on digital platforms is the product of deliberate ‘rage baiting’, in which enragement operates in a different register from the expressions of hate and adversity. Rage bait is a term that emerged in informal online discourse (Scott-Railton, 2022) to describe the manipulative practice of eliciting outrage and aggressive responsiveness to increase profile traffic, subscribers, algorithmic ranking and in some cases online revenue (Goitia-Doran, 2024; Munn, 2020). While the poster may or may not be expressing anger themselves about a topic, their content is deliberate and intentionally designed to invoke an outraged response or cause adversarial dialogue among other users (Jong-Fast, 2022). The expression riffs off the more familiar term ‘clickbait’ which is used to describe attention-grabbing links, memes and image thumbnails as part of an engagement strategy to draw online traffic to a particular site or account, often without the promised rewards of excitement, hidden truths, erotic stimulation or other ‘pleasures’ (Patten et al., 2024).

Rage baiting differs from clickbait in several key respects. Clickbait operates in a linear media pattern, encouraging users to follow a hyperlink to consume information, imagery or video content that may interest them, whereas rage bait manipulates users into responding to material, statements, images, footage or other kinds of content likely to offend or inflame. Both operate within the attention economy to garner the notice of users in a setting of attention scarcity, but rage bait is better located within formations of interactivity (Cover, 2006) by positioning users to respond, engage with the post and interact with other users. That is, rage bait drives engagement rather than traffic. Both are important registers of circulation but, depending on reward opportunities provided by platforms, response and reply engagement (including between multiple third-party users) is considered more important than passive views and readership traffic (InCahootsCo, 2025). Others may be gratified or rewarded by rage bait’s engagement in non-monetary terms, for example, if it gives the poster a sense that generating outrage serves a moral purpose (Bandura, 1999). There is some nascent evidence that non-monetised rage bait has also been deployed for political manipulation by troll farms seeking to enhance extant social divisiveness (Talbot, 2025).

A common form of rage bait is a post or video that is racist or anti-minority in a way that would fall short of community standards of acceptable civility, sometimes overt and sometimes disguised as thoughtlessness or ignorance, but is likely to produce large numbers of ‘call out’ responses from those who find the post unacceptable, often from unrelated bystanders (Obermaier et al., 2021). For example, it may involve material that is a clear insult to a population, group, way-of-life or a public figure, insult being a communication form that deliberately seeks a reaction (Eribon, 2004). Or it might draw on politically-polarised assumptions by appearing to criticise a political figure or position (e.g. posting a negative statement about United States President Donald Trump) to cause hundreds or thousands of both supporters and detractors to respond in rage – both defensiveness of, and anger towards, the president – and argue in adversity among themselves, thereby gaining substantial engagement increases. At other times, rage bait may be less political but nevertheless produce a visceral reaction among users or viewers to engage through negative replies, calling out something disagreeable, such as extremely wasteful cleaning practices or deliberately disgusting recipes, both produced under the guise of helpful ‘how to’ videos but actually designed to invoke angry responses and debate (Scott, 2025). Underlying rage bait, then, is a cynicism in that the poster may not necessarily agree with the statement or be ignorant of community standards, but they post as if they are disagreeable, extreme or ignorant because it more effectively generates responsiveness than everyday personal and phatic communication forms.

Rage bait thus is designed to arouse a response as a more severe form of trolling – in its original meaning rather than its more contemporary use to refer to any toxic online behaviour (Gorman, 2013; Volkmer, 2023). Trolling’s early formation on Usenet groups was a behaviour that attempted to catch out ‘newbies’ unfamiliar with already-settled arguments on the group (Marwick and Lewis, 2016). In the pre-platform era, it was primarily a form of pranking, often using exaggeration or falsehood to generate humour, amusement and identification among an insider group (Clarke, 2018; Hardaker, 2010). In the post-platform era, rage bait breaks with Web 1.0 collective and participatory ethics and formations of play (Rheingold, 1993). However, it also operates as a cognate of the kind of sensationalism that seeks to produce outrage (Berry and Sobieraj, 2013), drawing on the much older form of tabloid headlines. While most tabloid leads and advertisements are simply emotive to encourage readership, a subset of those have, since the 1980s, drawn on existing polarised and extremist views to generate political responses and actions (Glynn, 2000), including on topics such as migration or gender identity (Butler, 2024); what is sometimes referred to as ‘dog whistling’ (Åkerlund, 2022) that generates a culture of anger and disagreement. Rage bait in digital settings thereby combines sensationalist dog-whistling and the older, less-harmful form of trolling, extended into offensiveness and insult to generate engagement in ways which may be toxifying to the digital ecology.

As Shin et al. (2025) have noted, due to its novelty there is little scholarship to date on rage bait, despite its ubiquity as a communicative form within the wider digital ecology. They note, however, the opportunities to build on extant research on the invocation of anger in online settings as a mechanism to drive user engagement. Unlike other emotive responses, anger, they note, is characterised by its capacity to motivate people to take action, respond and actively assign blame to others. Rage bait is thus more likely than other genres of digital communication to result in engagement due to its deliberate design to encourage interactive responses through the arousal of anger, indignation and outrage. Anger invokes a sense of injury, and in socio-cultural terms establishes an injured party (Ahmed, 2004). At the same time, it makes use of the kind of gullibility driven by online speeds of engagement and the absence of careful critique prior to responding (Groh et al., 2021).

In combining the precedents of early playful trolling and the more recent growth of information-based clickbait practices, rage bait has proven an effective and efficient mechanism that draws on political and socio-cultural polarisation, adversity and hostile ‘call-out’ practices (Cover et al., 2024) as everyday communication dispositions to generate an ‘unhealthy’ form of engagement. It should be considered a form of digital harm not because of the offensiveness of the content or the motivation behind its generation, but for the effect it prompts in both individuals (feelings of offence, maliciously caused heightened negative emotion, insult on behalf of the self or others) and the wider digital ecology (toxification and unpleasantness of the online environment, increased deliberate disinformation, and distrust in the authenticity and genuineness of online content).

AI-induced rage bait: the Quora case study

A new departure that builds upon the rage bait phenomenon emerging in the AI era is that of rage bait posts that are artificially generated and circulated fully without the involvement of a human user. They are therefore without malicious or cynical intent or gratification for any user. Contrary to assumptions that AI tools may be useful in preventing harmful communication by automating moderation, guiding users or serving as exemplary posts of ethical online content (Milosevic et al., 2022), it can be said that generative AI tools are copying, repeating and expanding extant forms of problematic, harmful or toxifying ‘ways of being’ in contemporary digital and everyday culture through automated processes outside human producer supervision.

To illustrate the emergence of AI-generated rage bait, I use here a case study example drawn from the Reddit-styled question-and-answer forum Quora. Quora was established in 2009 by former Facebook employees Adam D’Angelo and Charlie Cheever, and was popular among elite tech sector employees, academics and serious amateur researchers and hobbyists (Schleifer, 2019). Notably, Quora doubled its regular monthly active users from 200 million to 400 million between 2017 and 2023 (Kumar, 2025), with the closure of Yahoo Answers in May 2021 being a likely significant contributor, arguably resulting in a substantial population change away from more intellectual topics and engaged, well-researched responses to glib opinion, unresearched statements and polarised abuse that had become familiar on Yahoo Answers (Victor, 2021). Between 2021 and 2024, Quora monetised questions in order to increase the raw resource for engagement that encourages reading, responding or answering, and comments on those answers.

This project has been conducting a longitudinal digital ethnography on digital harms that includes Quora as a key example of interactive question-and-answer prompting across a vast array of topics, collaborating with victims of online abuse and harassment in their self-care, mutual care, reporting activities and perpetration tracking (Cover, 2024; Cover et al., 2025). Following digital ethnography practices (Pink et al., 2016), the study has included sustained immersion of this investigator since 2020 into Quora spaces on select topics, conducting participatory observation of interaction, topic choice, longevity of user profiles, adaptation to the introduction of new tools and changes to site interfaces, reporting practices related to abuse, harassment, disinformation and other digital harms. Field notes and text captures subsequently coded as digital harms (abuse, harassment, hate speech, doxxing, disinformation, offensiveness, insult and deepfake image and video) have been analysed for user-perpetrator interactions, perpetrator background such as country of residence and stated career experience and education attainment, and evidence of discourse polarisation.

Given the popularity on Quora of discussions about the British royal family (history, constitutional law, sovereignty, titles and tabloid-style gossip), one key topic in which this investigator participated was that of Meghan Markle, the Duchess of Sussex, who is the centre of substantially polarised public views and a daily topic of British and North American tabloid publications (McGill, 2021). As the first mixed-race woman to marry a senior member of the British royal family, she is the target of substantial hate discourse, racist stereotyping, disinformation and expressions of violence on Quora, and those who have defended Ms Markle or corrected disinformation face significant harassment (Cover, 2022b; Ducey and Feagin, 2021). It was observed that Ms Markle’s racial background, career, philanthropy, marriage and motherhood have regularly been deployed in human-induced rage bait questions designed by anti-Meghan haters to invoke other haters into heated rants and sometimes hate speech. For example, questions that regularly referred to her title inaccurately as ‘Princess Meghan’ were assessed as rage bait because they were more likely to invoke very extreme angry responses from users who dislike her and disavow her status as the wife of a British prince. These fell into the study’s conceptualisation of normative, polarised and adversarial digital harms. It was through a routine assessment of the source of rage bait questions referring to Meghan Markle that the study stumbled upon evidence that some rage bait questions had been developed not by users but solely by Quora’s AI generator.

Quora and AI

Quora introduced its own AI tools to generate questions rather than rely on both monetised and non-monetised encouragements to users to maintain the flow of new questions as a resource to encourage traffic and engagement (and exposure to advertising), therefore more efficiently and regularly creating content. That is, rather than relying only on human users to ask and post questions, the tool automatically generates, shares and distributes new questions without any human oversight, approval or moderation. In September 2022, Quora introduced the Quora Prompt Generator focused on generating a larger volume of questions for users to answer in the face of declining questions – not as a replacement for human-generated questions but a numeric augmentation (Choudhary, 2022). Widely criticised by users in the study’s observation for non-factual, nonsensical, unrealistic or inane questions, it was enhanced in February 2023 under the name Quora Creator Tools, and is powered by GPT-4, GPT-4o and/or Anthropic Claude large language models (LLMs). Ostensibly, the enhancement was designed to help compete for traffic that may have been diverted by Google AI Overviews (powered by Gemini) which had lowered Quora on the list of top likely responses to Google search queries. Quora’s AI tools mimic user-to-user communication in an open forum setting, and do so by obscuring the AI generation from those who do not undertake a deliberate search for the account name that created the content.

The AI question bot generates new questions that it feeds automatically through algorithmic selection by email to users based on their recent topical engagement. A user can determine that a question was generated by Quora Creator Tools but only with some effort: Quora does not post the name of the user who asks any question; rather, an interested party must check the ‘question log’ through a series of clicks to find the name of the original poster (‘OP’). If the log shows it came from the Quora Creator Tools account, then the question was AI-generated and distributed. By clicking on the account, one can see the recent history of other questions, posts, comments and edits of a user account, including that of the Quora Creator Tools AI account, providing a history of several accessible hours of automatically-generated, time-stamped questions asked (approximately 3000) that can be scrolled back by hand in the form of a hyperlinked list. The list will reveal the most recent 3 hours and 15 minutes of questions asked by the AI tool. In the absence of an API enabling consistent scraping, the displayed questions can be seamlessly and quickly copied and pasted elsewhere for analysis.

Quora AI rage bait

As a question-and-answer forum, Quora has already lent itself well to general, human-induced rage bait through user questions that often encourage and amplify adversarial questions that attract a greater number of both readers and responders in accord with other platforms such as Facebook, X/Twitter and TikTok which have been found to use algorithmic feeds that amplify and favour incivil or adversarial content (Cabbuag and Abidin, 2024; Riedl et al., 2024). However, AI-generated questions are a new departure because they mimic human interrogation in a setting in which question-and-answer forum-based communication and its interfaces have been normalised.

The study was alerted to the possibility of AI-generated rage bait questions on Quora by checking the question log for the account names of ‘users’ or ‘perpetrators’ who had posted two Meghan Markle rage bait questions in June 2025:

Who are some of the most beautiful royals in history, and what makes them stand out compared to Meghan Markle?

What role did Kate Middleton's British background and family support play in her ability to adapt to royal life compared to Meghan Markle's experience?

At face value, both questions were assessed by the study as rage bait for two reasons. First, both draw on an ‘adversity between two women’ trope by calling on responders to contrast the wives of the two sons of King Charles III (Chappell and Young, 2017; Fogarty, 2012). Much like political posts in bipartisan parliamentary system countries, fictionalised or exaggerated narratives of adversity between two women, including along class or race demarcations, are a common element of rage bait posting because they encourage heavily polarised argumentation that is likely to become adversarial itself, often to the point of user-to-user aggression and harassment. Here, the questions both ‘bait’ users into either defending or admonishing Meghan by virtue of comparison with other British royals, including along racial, beauty and body-type opinions (Jha, 2015; Ward, 2021), common in relation to women public figures (Cover et al., 2024). Polarisation, as Axel Bruns (2019) has demonstrated, is not caused by digital platforms but may become normative when a topic is political or divisive, typically leading not to echo chambers and fragmentation but to engagement and heated debate.

Unsurprisingly, both of these exemplary questions resulted in the anticipated ‘enraged’ answers, generating 30 individual answers across both questions (plus additional comments and replies), comprising 7444 words of content. Approximately two-thirds of the replies have been classified as ‘rage responses’ using the study’s model of digital harms typology: disinformation, interpersonal aggression, enragement, heavy use of exclamation marks, coding of adversity between two women tropes, deliberate use of abusive nicknames and heated disagreement between users. Although the study was aware of Quora’s new AI-generation of questions, it was a significant finding that among questions that were wholly generated and circulated through an AI process without human agency were some that fit the category of rage bait.

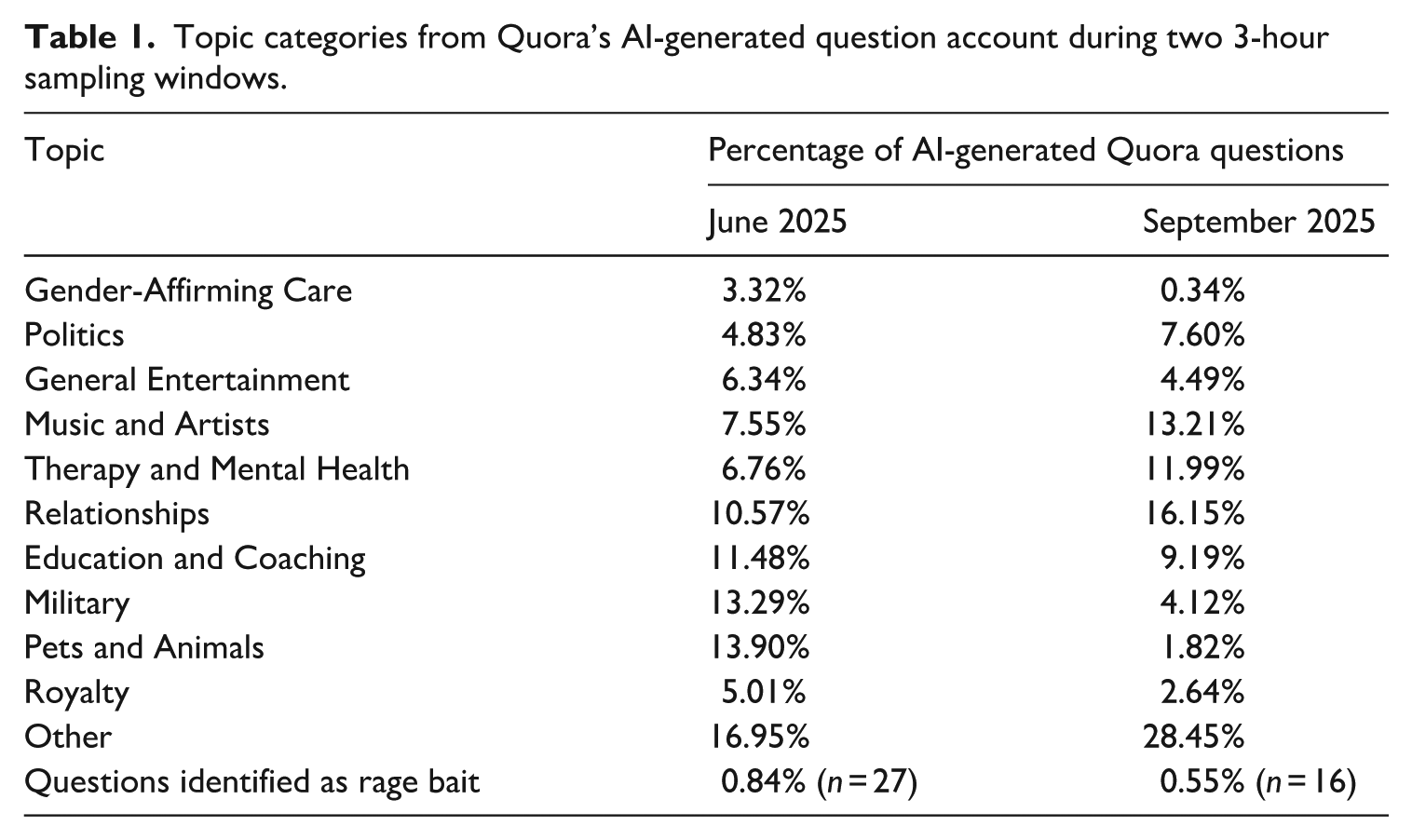

Although these two demonstrate how the platform’s use of AI tools is capable of producing rage bait content, they represent only a very small sample within a very narrow field of topical interest (British royalty). A small number of examples of AI-generated rage bait posting would ordinarily be immaterial and dismissed as anomalous. To determine the extensiveness, then, of the platform’s AI generation of rage bait posts, two assessments were undertaken of the Quora Creator Tools AI-based question generator account, one in June and one in September 2025, scraping 180 minutes each of questions automatically generated by the AI tool. The June sample piloted the capture, while the September sample followed research discovery practice to replicate and compare samples, and determine the significance and feasibility of studying AI-generated rage bait. This research showed that 3188 (June) and 2960 (September) questions were added during each 180-minute period, approximately 17 questions generated per minute. To enhance the digital ethnographic component of the study, the questions were framed and coded through a semantic search generator, organised by theme and discourse and cross-referenced with Quora’s own assigned topic categories to determine the number that may be rage bait questions. Both the June and September samples were then hand-coded by the author to check the accuracy of the generator’s coding, without a secondary reliability check. Table 1 indicates the percentage of topics, with some obvious ‘heated topics’ at the time of sampling (June 2025) including military, politics and gender-affirming care.

Table 1. Topic categories from Quora’s AI-generated question account during two 3-hour sampling windows.

In both samples, questions that were likely to be rage bait were conservatively identified, with 27 (0.84%) and 16 (0.55%), respectively. Taking the average between the two samples as a trend, this would be greater than 1200 rage bait questions generated by AI without human oversight per week. Neither 3-hour sample produced any rage bait questions specifically about Meghan Markle; however, the generator did produce some in other topic areas that the study categorised as rage bait, including the following:

Why do some people think Vijay Mallya is being unfairly labelled a thief, while others believe he should be held accountable under Indian law?

What role does disinformation play in shaping voter opinions during elections involving controversial figures like Trump?

How do you explain to kids why someone controversial and accused of wrongdoing got reelected as president?

Should teachers be held accountable for their personal opinions on social media, especially when it's about political figures like Charlie Kirk?

What process determines which honorary military titles royals like Prince Harry can keep or lose when they quit official roles?

On the basis of the examples through the digital ethnography and the numeric indicators from the two 3-hour sample windows, including particularly the range of topics and the sample of questions that would likely produce polarised, aggressive, adversarial or outraged responses, it is therefore possible to suggest that the introduction of AI generation of questions as prompts for response and engagement is very likely to consistently include those that can be classified as rage bait and therefore that harm users and the digital ecology by fostering adversarial engagement.
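The numeric indicators reported above can be reproduced with a short calculation. The following is a minimal sketch rather than the study's own tooling: it uses only the counts reported for the June and September samples, and it assumes (as the weekly estimate itself does) that the rate observed in the two 180-minute windows holds constant across a full week. The function and variable names are illustrative.

```python
# Counts reported in the article for the two 180-minute sampling windows.
SAMPLES = {
    "June 2025": {"questions": 3188, "rage_bait": 27},
    "September 2025": {"questions": 2960, "rage_bait": 16},
}
WINDOW_MINUTES = 180
MINUTES_PER_WEEK = 7 * 24 * 60


def questions_per_minute(samples, window_minutes):
    """Mean number of AI-generated questions per minute across all windows."""
    total_questions = sum(s["questions"] for s in samples.values())
    return total_questions / (len(samples) * window_minutes)


def weekly_rage_bait_estimate(samples, window_minutes):
    """Linear extrapolation of the mean rage bait count per window to one week.

    Assumption: the generation rate is constant over the week, which the
    two samples alone cannot confirm.
    """
    mean_per_window = sum(s["rage_bait"] for s in samples.values()) / len(samples)
    windows_per_week = MINUTES_PER_WEEK / window_minutes
    return mean_per_window * windows_per_week


if __name__ == "__main__":
    rate = questions_per_minute(SAMPLES, WINDOW_MINUTES)
    weekly = weekly_rage_bait_estimate(SAMPLES, WINDOW_MINUTES)
    print(f"Questions generated per minute: {rate:.1f}")
    print(f"Estimated rage bait questions per week: {weekly:.0f}")
```

The extrapolation is deliberately simple: with only two sampling windows, it indicates an order of magnitude rather than a precise weekly figure, and it would shift if generation volume varies by time of day or by news cycle.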

The next two sections of this article address the contextual factors by which we can make some nascent understanding of why AI tools may unwittingly and without any human agency produce rage bait content when designed to encourage engagement, followed by a discussion of some of the implications that AI generation of rage bait and other digital harms may have for policy and scholarship on the regulation of digital platforms using AI to automatically generate content. This is to bring the discovery of generative AI’s role in automatically producing harmful or problematic content into a digital cultures framework by making a nascent assessment of how AI tools are exacerbating the toxification of the digital environment. That is, it is important to unpack the convergence of causalities to apprehend the automated generation of problematic or harmful content as more than a ‘common-sense’ inevitability of technological change and thereby to find points of intervention and critique.

The context of rage bait causality

The ‘reasons’ for generative AI’s outputs are not always discoverable due not only to the proprietary management and massive size of an AI system’s input datasets, but also to the lack of oversight in programming and training AI tools such that it is impossible to reverse engineer the origins of AI-generated content (Wilson, 2023). That is, a neural net’s training setting differs significantly from other digital operations because it is not programmed line-by-line by human programmers but places the net in the training environment to ‘learn’ automatically. In that respect, we cannot know why an AI tool has produced specific Quora questions that are in the form of rage bait, nor can we forensically investigate causality to assign responsibility. We can, however, make sense of the likelihood by an assessment of the digital-cultural setting in which that AI tool has been trained. A digital-cultural approach acknowledges that AI is not a problem shaping effects as in technologically-determinist approaches, but its uses, values, issues and impacts (negative, positive and otherwise) emerge as with other digital phenomena in and through the extant cultural formations that include lived realities, means of communication, discourses of technological adaptation and the subjectivity of users (Cover, 2023). That is, we can form an understanding of the manner of its operation and reasonable assumptions about the general datasets used by it to learn what a question looks like and what questions will produce answers, alongside a comparative assessment of the cultural practice of interrogative interactivity.

In this respect, it is possible to theorise three contextual elements that will ‘cause’ an AI tool to produce rage bait: (a) the datasets on which that AI tool has been trained including existing questions found online such that it recognises without value deliberate rage bait questions as ordinary questions; (b) its basis in mimicking human-derived content to stand in for a human user asking such questions; and (c) the purpose of AI in the digital economy being to generate content that itself generates attention rather than guides, intervenes or demonstrates a value-based standard.

[A] The normativising force of AI datasets

Generative pretrained transformers or GPTs are LLMs trained on very large text datasets in order to produce the kind of output that can be recognised in communication. Because such a tool works through statistical probabilities (Crawford, 2021), there is a likelihood that it will reproduce that which is statistically represented in the dataset, and this may include rage bait questions. As most people today capably recognise, generative AI tools do not have a values-based intellect to judge rage bait content in their data and to thereby discard it as a model unless deliberately designed to do so. Rather, the human-induced use of rage bait in a portion of online content used by AI to determine what ‘questions’ or ‘content’ look like establishes the likelihood that a portion of generative AI’s output will also be rage bait. This ‘fact’ of AI technological formation results in two elements that guide AI tools to produce rage bait and other harmful digital content: normativities and biases.

Although there is a long tradition of media and cultural study of disciplinary ‘norms’ that establish a dichotomous boundary between an acceptable norm and an ‘abnormal’ marked by exclusion and abjection (Creed, 1993), more recent scholarship has pointed to the complex operation of normativities that emerged in the late 19th century and influenced the development of statistical administration, record-keeping, taxonomy and coding, including the coding undertaken by computer technologies. Norms in this context operate within a range represented by a distributional curve (Foucault, 2007). Such a range is built not on clustering but on proximity to a norm, allowing for diverse variation within a continuum of intelligibility. AI training on datasets does likewise: ‘questions’ suitable for Quora are plotted against a norm and can be assessed in terms of distance from that norm, thereby allowing for a percentage of outliers such as rage bait questions. While a human user or a values-based assessment may reject the utility of such questions, training on datasets’ normative range does not. This point underscores how AI functions are produced not as a sentient tool through programming code, but through its utilisation of the vast diversity in big data, assessed statistically to capture norms as range (Kilgarriff and Grefenstette, 2003). In this respect, an AI generator will produce a very large, very varied, diverse set of questions within a curve of intelligibility such that questions are recognised as questions but, by statistical necessity, they must include that which, when assessed from an ethical angle, would not necessarily be considered palatable, healthy or non-toxic. AI, of course, does not have the sentience to recognise how its knowledge base and training have been based on norms of extant data.

Norms lead to the second element: it is not just what is included in the dataset that rests on the normative distributional curve, but that which is excluded: bias. In statistical terms, bias refers to differences between a sample and a population where the sample is not truly representative – skewing the norm, an aspect of problematic data-gathering and record-keeping that can result in representations of norms experienced and felt as exclusive, discriminatory or problematically stereotypical (Crawford, 2021). LLMs carry biases from the beginning, and AI tools that draw on them are likely to generate content carrying those biases unless deliberate, human-engineered limitations are built into the programming (Manning, 2022). While many rage bait questions are designed to invoke responsiveness by knowingly representing bias, bias in this context operates differently: content is over-sampled in the training of a generator such that the generator becomes marginally more likely than the ‘norm’ to produce questions that invoke rage. This may result from deliberate training, such as over-sampling content that has generated a greater number of responses, but it is also a result of what Kissinger et al. (2021: 81) described in criticising AI for the ‘shallowness of what it learns’. This is, as Mark Coeckelbergh (2020) has indicated, an instance in which the goal of AI development to produce something beyond general intelligence is not met, and contemporary AI instead only mirrors and exacerbates some of the less-ethical and base traits of human interpersonal behaviour.
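The two elements described above can be illustrated with a deliberately simplified sketch. It assumes a hypothetical generator that does nothing more than match the category frequencies of its training data, which is far cruder than any actual LLM; the corpus counts, the category names and the `generation_share` function are all invented for illustration and are not drawn from the Quora case study. The sketch shows only that a minority of rage bait in the data yields a matching minority in the output, and that over-sampling high-response content raises that share.

```python
def generation_share(corpus_counts, weights=None):
    """Share of output per category for a purely frequency-matching generator.

    corpus_counts: hypothetical number of training questions per category.
    weights: optional over-sampling multipliers per category (default 1.0).
    """
    weights = weights or {}
    weighted = {
        category: count * weights.get(category, 1.0)
        for category, count in corpus_counts.items()
    }
    total = sum(weighted.values())
    return {category: value / total for category, value in weighted.items()}


# Hypothetical corpus: 5% of training questions are rage bait.
corpus = {"ordinary": 950, "rage_bait": 50}

# Norm as range: the outlier category is reproduced at its dataset rate.
baseline = generation_share(corpus)

# Bias: rage bait is over-sampled threefold (an assumed figure), for example
# because it attracted more responses.
oversampled = generation_share(corpus, weights={"rage_bait": 3.0})

print(baseline["rage_bait"], oversampled["rage_bait"])
```

In this toy setting the generator needs no intent, and no malicious instruction, for rage bait to appear in its output; the share follows entirely from what is in the data and how that data is weighted.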

[B] Human mimicry

Beyond the norms and biases inherent in statistical machine learning, there is the simultaneous problem of AI generators designed to actively mimic human interactivity, which may in this case include asking questions that imitate the maliciousness of human users creating content for rage bait purposes. Indeed, the purpose of generative and other AI tools has been from the beginning to mimic human communication. As Roberto Pieraccini (2021) has noted, most AI tool interfaces have been designed to give a sense of some level of anthropomorphic behaviour such that even when we know we are using an AI device we are encouraged to think of it ‘as if’ a human being. When the Quora Creator Tools account asks a question, it is deliberately designed to have the look and feel of a ‘human’ question posted to the site.

Mimicry establishes a normativisation within communication cultures because the attempt to resemble that which something or someone is not reinforces a particular way of being (Scott, 1995). That is, by mimicking the form of content production found in the datasets and training on what constitutes a question, and lacking the capacity to make an ethical judgement about the impact of a question beyond its capability to generate engagement, AI tools are not only likely to produce some amount of rage bait alongside other digital harms but also to reinforce rage bait through the naturalisation of repetition. AI-generated rage bait, then, goes beyond being the outcome of a few human actors behaving maliciously. Rather, it actively normativises this problematic form of communication by divorcing its origin from maliciousness or cynical attention-grabbing (Markham, 2013). Simone Natale (2021) identified deception as a constitutive element of AI technologies – not as cynical trickery but as a side-effect of the attempt to performatively manage interaction with human users. This deception can be seen in the form of mimicry used by Quora’s AI tools: questions that appear ‘as if’ generated by a human user, and whose AI generation a user can determine only by undertaking the necessary clicks through the question log to query the source. Such steps, of course, are unlikely in a digital-cultural setting based on speed of interaction and movement between topics (Papacharissi, 2015).

To a generator, rage bait is a normative, human manner of asking questions that is performed as repetition. AI-generated rage bait, then, should be considered neither beyond human communicative norms nor beneath human communication as something of a lesser kind. Rather, through deception it is positioned as beside human interactive communication norms, actively reinforcing and normativising rage bait from a separate, non-human angle and thereby also reinforcing its own learning for future growth of rage bait content production.

[C] AI and the attention economy

Finally, the purpose of some generative AI must be recognised: to operate within a wider framework of what we understand as the attention economy and to thereby foreground responses that capture and retain attention and attentiveness (Citton, 2017). Not all generative AI services seek to create revenue through advertising that depends on attention and engagement; some operate through subscription or combined advertising and advertising-free subscription models, and in some cases, various access levels are free and open source. Quora, however, relies on advertising revenue, and its implementation decisions therefore rest on a business model centred on encouraging and retaining attention. Here, it is reasonable to assume that the deployment of AI tools to generate questions alongside those created by human users is intentionally related to the goal of increasing the capture of attention. The attention economy is, of course, at the heart of the contemporary platform business model (Wark, 2019), whether that be attention for the purpose of advertising to users or a surveillance capitalism approach that on-sells details of that attention to other businesses. That is, while generative AI may not have attention maximisation at its core, the utilisation of AI by platforms, businesses and services is more typically aligned to strategies related to the attention economy. In place of the cost of content generation, including monetised systems that pay some high-engagement content creators, the advent of generative AI provides a lower-cost, more consistent and diverse generation of content that attracts attention, albeit in ways which have a higher environmental and cultural cost for the digital and global ecology (Crawford, 2021).

Framing generative AI tools as mechanisms serving the digital platform business model by cheaply garnering attention provides a lens through which to assess why an AI generator may produce content that produces adversarial, incivil, hostile or outraged responses. This is because we already know that such generation when conducted by human users ‘works’ to gain attention in an unhealthy way (Cefai, 2020). This is not, then, to suggest that AI tools have necessarily been trained in any deliberate way to produce only attention-grabbing content, but that in the process of persistent learning there is a risk that any AI generator is deployed in a way that learns that attention in contemporary cultures is produced through the encouragement of sensationalist adversity. As Terranova (2022: 81) has noted, the attention economy operates outside practices of mutual care, civility and the socio-psychic apparatuses that bind communities, and does so in ways which are ‘substantially destructive’. In a digital setting in which outrage mobilises and harvests attention very effectively (Han, 2017), generative AI can be assessed as risking a very substantial exacerbation of that unhealthy mode of attention generation; the Quora case study suggests this is already at play if only at the margins of its production of interrogative content.

Conclusion

Uncovering the fact that AI tools may be actively producing content such as rage bait that risks toxifying the digital ecology invokes important questions for the study of ethical digital behaviour and for regulatory policy. Both require a shift in register. Arguably, the current frameworks for digital ethics are not capable of responding to these implications, because they remain grounded in the idea of a responsible human user, both subject to psycho-social assessment and asserting rights, in some jurisdictions, of freedom of expression and thought. In terms of the cultural formations and sustainability of the digital ecology, the production of toxic, adversity-generating and rage-inducing content by AI serves not merely to add incrementally to an existing problem, but actively normativises problematic and unethical communication through reinforcement. This is not simply because it mimics problematic human communicative traits found in the datasets from which AI tools draw their knowledge and ‘learning’. Rather, it normalises rage and adversity through a repetition that does not have a name, source or population group to whom the problematic communication can be ‘assigned’ and who can be made ‘responsible’ for that communication.

There are also urgent implications for policy-makers. Policy-makers will need to consider how regulatory models can account for harmful, offensive or problematic content that does not have a direct human source. While current policy interest has been focused on intellectual property and the false assertion of authorship when AI tools generate content, regulatory questions about allowability revealed by AI generation of rage bait point to the need for alternative regulatory approaches. For example, does AI content fall under freedom of expression constitutional provisions in jurisdictions that enshrine it? At what point in AI generation is regulatory intervention warranted – the datasets on which it is trained, the limitations placed on third-party deployment, or on those users who interact with AI as if a human user? Is there a responsibility on platforms and services to separate human-generated and AI-generated content or to clearly identify the difference? As Coeckelbergh (2020: 109) aptly puts it, ‘Who is responsible for the harms and benefits of the technology when humans delegate agency and decisions to AI?’

In this context, while a human-generated post that incorporates harmful, problematic or prohibited speech has a responsible author who can be penalised either by platform policy or by relevant statutory regulations in a specific jurisdiction, there are no available policy, legal or cultural frameworks for assigning responsibility to a non-human actor, particularly when problematic content is wholly generated and distributed by AI services. When that non-human actor is effectively baiting other users into rage, the possibilities for intervention rest solely with the platform that deploys that AI service – a problematic conflict in which policing of speech is handled only by those whose business models benefit monetarily from that speech (Kaye, 2019). One potential regulatory solution that has emerged through this study is to expand the definition of digital harm beyond individual, verifiable harms to users and re-focus on the harm to the digital ecology as a setting for informed interaction (Cover, 2024). This enables a re-positioning of AI systems away from being apprehended as if they were digital platforms or conveyers of material, and instead recognises their potential role in harming the digital ecology as the setting of interaction. This has, then, implications for intervention in both design and operation.

The component of the study described in this article provides early indicators of a growing problem of AI-generated digital harms. It is limited by its nascent sample that was unexpectedly uncovered while tracking human perpetration. Further study is needed to determine the exact rate by which an AI generator produces problematic, unethical or harmful content, and across a wider range of platforms. While such investigation requires the cooperation of platforms by providing workable data by which to make genuine assessment, this study has found enough evidence in a short capture pool and case studies to suggest a novel problem in the kind of content produced by generative AI. At the same time, nascent evidence of troll farms using AI tools to automatically generate rage bait for the purposes of political manipulation is an emerging security concern that warrants urgent research built on the approach described here. Eschewing the over-simplification of comparing and contrasting human-generated and AI-generated content will be the starting point for framing the next stages of study on the cultural impact of AI content.

Footnotes

Acknowledgements

N/A

Data availability

N/A

Ethics approval and informed consent

N/A

Funding

The author disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This research was funded by the Australian Research Council Discovery Programme, DP230100870.