Abstract

The Australian regulatory framework for managing contemporary online harms emphasises the role of platforms in managing and policing infractions and has legislated civil penalties for their non-compliance. Although it importantly shifts responsibility towards previously exempt platforms, this approach contrasts with earlier regulatory options in which individual perpetrators were subject to penalties for harms they caused to others. This article discusses the regulatory framework and the ways in which outsourcing harm management to platforms not only forgoes procedural justice and due process but also misses an opportunity to shape digital citizenship through the potential pedagogies of emphasising a penal regime. We analyse platform penalisation practices and present data from a survey of Australians that demonstrates public support for government penalties for perpetrators. We argue that extending the framework to penalise perpetrators in Australia not only aligns penalisation with remedial and restorative justice approaches, but helps shape community standards and expectations for safe online behaviour.

Introduction

In regard to digital harms (online abuse, harassment, cyberbullying and disinformation), the Australian regulatory framework has been designed to address harm-minimisation through the threat of penalisation of platforms if they fail to enforce statutory obligations for the safety of Australian users. Mirroring practices adopted in recent years in European law, this approach extends the role of social media corporations in policing acceptable and unacceptable content and behaviour, while de-emphasising the role of traditional public institutions such as criminal and civil law, judiciaries and government agencies in managing and redressing harmful interpersonal behaviour and engaging in reparative justice (Kaye, 2019). Regulating platforms to manage online harms as the centrepiece of the framework misses the opportunity, we argue, to foreground other remedies, levers and prevention mechanisms to reduce perpetration, including particularly education, strategic promotion of digital citizenship among adults, interjurisdictional legal cooperation, and shaping healthy online communities through penalties for digital harms to dissuade serious, repeat offenders in Australia. That is, despite extant laws that may be used to penalise individual Australians, the regulatory framework fails to foreground these in favour of regulating platforms and does so in ways which problematically empower platforms to undertake the kind of decision-making, penalty application and redress traditionally in the hands of public institutions.

The present arrangement opens significant policy questions over how perpetrators’ liability is positioned, apprehended and addressed; whether or not platforms are up to the task of policing content and behaviour themselves given their vested interests; how outsourcing penalisation to platforms, which manage perpetration through contractual arrangements with users, places it outside the due process of common law; and the extent to which a greater public and strategically-communicated focus on existing or new penalties for Australian perpetrators might support the necessary shaping of healthy digital citizenship, the latter of which remains conceptually problematic but is nevertheless perceived as a contemporary ‘wicked problem’ (McCosker, Johns, and Vivienne, 2016). In other words, attempts to shape online behaviour that publicly emphasise only one element of regulatory intervention (platform governance) miss the value of taking up the affordances of a comprehensive, multi-sectoral and whole-of-society approach.

This article draws on research undertaken for the Australian Research Council Discovery project, which aims to provide a comprehensive account of everyday experiences of online hostility, abuse, trolling and hate speech. Using our analyses of platform policies, statutory law and survey data, the research outlined in this article provides an early account of the legislative, policy and popular frameworks in regard to re-valuing the liability of individual perpetrators of serious, repeated digital harms defined by a standard that involves due process (Suzor, 2019). We make the case that penalty practices grounded in shaping desirable normative behaviour cannot operate through statutory practices that empower platforms to manage harms, but should deploy public institutions in the civil and remedial justice activities of determining harm and communicating practice limits through a penalisation regime.

We begin with a discussion of the dominant components of the Australian regulatory framework that emphasise the penalisation of platforms to manage harmful content and behaviour, and contrast this with older Australian law that can and does apply penalties to perpetrators, arguing that it has been sidelined in contemporary online safety discourse. We follow with a brief analysis of some of the penalty mechanisms used by platforms before strategically drawing on a small component of results from a nationwide survey outlining the rates at which a representative sample of Australian adults believe that perpetrators of online abuse should be penalised by the state. We make the case that a regulatory system that includes civil penalties for users, rather than principally platforms, provides an opportunity not merely to punish but to shape digital citizenship towards the minimisation of harms in light of the shortcomings of platforms in managing behaviour effectively. Our key argument in this article is that the current regulatory framework in regard to harmful or abusive content and the harassment of other users is limited by its emphasis on platform regulation, that obliging platforms to manage perpetrators, rather than enabling more active participation of state institutions, is unjust to both victims and perpetrators themselves, and that further public discourse on penalisation should be part of the prevention, intervention and remedial discourse about online safety in Australia.

Penalties in legislation, policy and public discourse

The Australian framework for regulating digital harms principally emphasises the requirement of platforms to act against harmful content and uses the threat of civil penalties against platforms to enforce timely action. Penalising individual perpetrators is available in some laws, but is not regarded as the central component of regulation. For example, the Communications Legislation Amendment (Combatting Misinformation and Disinformation) Bill 2024, which did not proceed in the Australian parliament, sought to introduce civil penalties for any platform provider which failed to comply with any misinformation code established by the Australian Communications and Media Authority. The proposed legislation did not seek to penalise any individual user who knowingly or maliciously posted disinformation, thereby avoiding the politically sensitive question of state censorship. Instead, the proposed bill sought to achieve the same aim by requiring platforms to manage information quality and user education, or face the risk of penalisation. Similarly, the Online Safety Amendment (Social Media Minimum Age) Act 2024 (given assent December 2024), which introduced a requirement to restrict young people in Australia under the age of 16 years from accessing prescribed platforms, created civil penalties not for users, parents or third parties who enable a young person to access platform services, but for platforms which do not take ‘reasonable steps to prevent age-restricted users having accounts with the age-restricted social media platform’ (Section 63D). Most significantly, Australia's Online Safety Act 2021 enables the eSafety Commissioner to require a platform to remove material that is subject to a complaint and determined to be menacing, harassing or offensive. Failure to comply with a removal notice may leave the platform or service provider liable to a civil penalty or infringement notice.
In cases where it may be necessary to provide a removal notice to an end-user (the perpetrator rather than the platform), failure to comply may result in a formal warning only – not a penalty. The only exception given in the Act is a penalty for an end-user who fails to comply with a removal notice in regard to shared intimate images.

One of the few other notable exceptions that enables the penalisation of an individual perpetrator is the Privacy and Other Legislation Amendment Act 2024 (given assent December 2024), which provides a statutory framework to prevent the serious digital harm of doxxing (using a carriage service to make available, publish or distribute personal data of an individual such as name, image or telephone number in a way that may encourage harassment). Introduced subsequent to the widespread doxxing of Australians who had commented privately on the Israel–Palestine conflict (Hegarty, 2024), the legislation establishes doxxing as a crime for which individual perpetrators may be punished with up to 6 years’ imprisonment. This opens critical questions as to why this particular harm warrants attention to the liability of the individual, but not other harms that may occur in digital settings. It can be reasonably discerned that the application of a penal logic to this particular type of harm was intended to shape behaviour around the protection of privacy, and it therefore provides a good example of the utility of penalisation in relation to other digital harm types.

Although the emphasis of the online safety legislative framework in Australia has been on requiring platforms to manage offensive content and behaviour on behalf of the state, there are a number of statutory tools available to both Commonwealth and state governments in regard to online offences that can penalise individual perpetrators, in addition to the image-based abuse and doxxing laws described above. For example, various amendments to the Criminal Code Act 1995 created the offence of using a carriage service to cause serious harm (penalty: 7 years imprisonment), or to menace, harass or cause offence (penalty: 5 years imprisonment). Under the ordinary process of criminal law, a perpetrator will be prosecuted by having charges laid subsequent to the gathering of reasonable evidence, and the case will be heard in court, with recognised rules around evidence, witness statements and judicial decision-making on penalties applied. Prior case law (common or precedential law) built up over centuries is an important factor in determining harm and guilt, including particularly the key threshold test commonly attached to offences: what a ‘reasonable person’ would regard as a harm. Mitigating circumstances and the extent of prior offending may be taken into account in determining the penalty. Various rules, including the Evidence Act 1995, govern what information can and cannot be introduced. If the perpetrator is sentenced, an appeal may be lodged. In other words, a perpetrator of an online harm is subject to a penalty under legislation, but that penalty is determined through a transparent, public process conducted by a public institution that protects victims, protects perpetrators from over-reach, and – importantly – shapes broader community attitudes towards the offence.
Various state laws similarly criminalise acts which may include online harassment and abuse in online settings, such as Queensland's Criminal Code Act 1899 which provides that a ‘person who unlawfully stalks, intimidates, harasses or abuses another person is guilty of a crime’ (Section 359E), with penalties up to 10 years imprisonment. In most cases, although Commonwealth and state laws pre-date the 2000s platformisation of digital communication, they remain both (i) available to be used if a perpetrator can be apprehended and charges laid; and (ii) examples of extant mechanisms that enable state institutions to intervene in the perpetration of digital harms.

Significant here is that between state/territory and Commonwealth laws, there is a framework already in place to address serious cases of digital harms through a recognised, juridical process that can determine penalties on perpetrators tried in Australia. However, these robust and tested processes of determination and assignment of penalty are markedly absent from public and government discourse on online safety, do not form a significant part of any public pedagogy on the implications of harming another person online, and are marginalised by the dominant aspects of the Australian regulatory framework that oblige platforms themselves to determine and manage harms in practice, to act on behalf of the state, and to take principal responsibility for the shaping of online behaviour.

Arguing for the re-emphasis of extant legislation or new penalty processes based on recognised, long-standing legal practices is not to disavow the regulatory framework that places some responsibility on platforms. Rather, it is to argue for an extension of the dominant platform-oriented regulatory framework for three reasons. Firstly, most Australian legislation that requires platforms to act on digital harms contrasts with (and, usefully, undoes) earlier norms stemming from North American exemptions of platforms from responsibility for the content or practices of their users. These norms were embodied in the United States' 1996 introduction of Section 230 into the Communications Act 1934, an amendment which provided that platforms were not publishers and therefore would be exempt from other laws that traditionally hold a publisher responsible for disseminating problematic or defamatory material (Kosseff, 2019). Section 230 pre-dates the more recent shift in the global public mood towards recognising platform complicity in the at-scale circulation and amplification of harmful material (Flew, 2021; Suzor, 2019). Section 230 also pre-dates the reported substantial increase in online abuse and harassment over the past half-decade (Sori and Vehovar, 2022) and the recognition in recent years that offensive and hateful online content and harassing behaviour can have very serious health impacts on users (Keighley, 2022), including suicidality (Costello et al., 2019; Waqas et al., 2019). Statutory measures that contribute to re-positioning platforms as culpable and liable for harmful impacts therefore have substantial benefits and may help adjust platform business models that presently generate profit from adversity (Kaye, 2019).

Secondly, requiring platforms to take at least some responsibility for the management of perpetrators shifts some of the burden away from victims who have often had to self-manage the experience of harmful content and behaviour, whether through reporting and complaint-making, legal actions including self-resourced civil suits and defamation claims (Haslop et al., 2021) or self-care and private mutual-care practices (Cover, 2022). This is an important objective noted by the Australian government's Statutory Review of the Online Safety Act 2021 Issues paper (Department of Infrastructure, Transport, Regional Development, Communications and the Arts, 2024).

Finally, a regulatory framework that places at least some requirement on platforms for responsibility and management of digital harms is necessary given the global and interjurisdictional complexities that emerge when harms occur in an international context, for example, a user in Brazil who seriously harasses a user in Australia (Vincent, 2017). That is, charges may be laid under the Criminal Code Act 1995 leading to the penalisation of an Australian who threatens a user inside or outside the jurisdiction, but attempting to charge and penalise a user in, say, Denmark who seriously harms an Australian user creates complex extra-territorial jurisdictional concerns. In this context, the Online Safety Act and other legislation provide an important mechanism to compel remedy, and enable the Commissioner to apply civil penalties to the platform to achieve that aim.

While the regulatory framework therefore has value, it nevertheless problematically places the bulk of emphasis onto platforms to participate in forms of policing speech, content and behaviour that were previously the purview of the state (Kaye, 2019). This, of course, is not necessarily a role platform companies have sought, and it is one they increasingly reject owing to cost, including by pushing moderation activities back onto users in the case of Twitter/X and Meta (Thompson and Conger, 2025). These practices fail to deploy due process principles in the management of problematic users and, as we argue here, the dominance of platform management of harms misses an important pedagogical opportunity afforded by public knowledge of penalties.

Platform penalties

In addition to the requirement to comply with government removal notices, platforms are empowered to penalise perpetrators on their own terms, through processes that are arguably misaligned with common legal practices such as recording decisions, drawing on prior rulings and allowing appeals, representation and testimony. Platform application of moderation, take-down decisions and penalisations such as user bans has been described as haphazard, inconsistent and reactive (Suzor, 2019). As Reyman and Sparby (2021) argue, platforms approach the management of harms through enforcement of punishments that often operate outside guiding community values, and arguably have a conflict of interest given their business interests in circulating adversity that attracts engagement and attention.

We analysed the terms of service and related policies of twenty-three of the most popular platforms by user number provided in 2022 by Semrush (Lyons, 2022). User numbers ranged from 150 million (Discord) to 2.9 billion (Facebook) monthly active users for the first quarter of 2022 (Cover et al., 2024b). The primary approach to punishing users within contractual terms of service for breaches or harms is bans or usage restrictions. Platform penalisations generally fall under one of the following enforcement categories: (a) warning notices; (b) content-targeted action such as forced removal, visibility downranking or content labelling; (c) user restrictions, such as the restricted use of certain platform features, suspension or termination of accounts; and (d) monetisation restrictions, such as halting the ability to accrue revenue from content. Aside from de-monetisation, platforms themselves are not legally capable of applying a pecuniary penalty or imprisonment, unlike Commonwealth and state law.

Meta (Facebook and Instagram), for example, penalises users who commit infractions against its terms on a strike enforcement basis. A first violation results in a warning with no restrictions. Two to six strikes result in a restriction of some features. Seven strikes result in a one-day restriction from posting, commenting or creating a page. Further strikes result in additional restrictions of up to 30 days. Meta indicates that other enforcement options include content removal, restrictions on creating advertisements, the disablement of accounts, de-monetisation and reductions in page visibility. The record of strikes expires after 90 days. YouTube operates a similar strike framework, ranging from a warning to a 1-week restriction on uploading content and monetisation. A third strike within a 90-day period will result in the channel being permanently removed, while a single case of severe abuse or harm may result in immediate channel termination without warning or appeal.

Fifteen of the 23 platforms state in their service terms that they will terminate an account after repeat violations, while all others (excluding Slack, which provides no information on enforcement of policy) will temporarily suspend the account, either from access or from posting or creating content (Cover et al., 2024b). On the whole, the penalisation of users who violate terms vis-à-vis recognised digital harms is mechanised through the risk of suspension or permanent bans, usually meaning the closure of the account and/or the removal of all the account's prior content. The extent to which perpetrators are affected by an account termination or ban is likely to be variable: some users may be more attached to prior content than others, and there is little information on how platforms prevent perpetrators from establishing new accounts. In this respect, the extent to which these penalisation mechanisms shape user behaviour or the wider ecology is unknown.

There are a number of potential problems with relying on platforms to undertake these limited forms of penalisation as a means to discourage harmful content and behaviour. Firstly, it empowers platforms to judge the severity of harm on their own terms, in ways which may be influenced by business interests outside transparent due process norms, and in ways which may be out-of-step with legal definitions of harm. Many platforms, for example, build into their policies a differential threshold for addressing harmful content if it is directed towards public figures on the spurious basis that public figures are ‘newsworthy’ in ways that no statutory law would consider (Cover et al., 2024b) and may capture persons who are not reasonably recognised as public figures in other settings, such as the children of politicians or entertainers (Cover et al., 2024a). Here, the decision-making process of moderators – who may be third-party employees and required to quickly process thousands of complaints per day (Kaye, 2019) – determines perpetration not on the basis of the extent of harm, testimony, witness and prior practice as in state-based judicial processes, but on a judgement made by a moderator which falls short of the kind of due process consideration found in public institutional decision-making in relation to harms (Condis, 2021). That is, the decisions of courts and other legal processes are required to reflect statutory law, prior case law, and may be led by community standards or reasonable person arguments, whereas platforms that make decisions on who is penalised, how the penalty is applied, and the extent or length of penalisation have effectively been encouraged by regulatory frameworks to do so in ways that are unrecognisable to public institutions.

Secondly, the platform penalisation processes we analysed do not incorporate a clear and robust appeals process to investigate whether a wrongful decision has been made (Cover et al., 2025). Nor do they provide a public record of decisions in the way that public institutions are required to do, a record that builds a body of case law to guide future determinations. This demonstrates the problem of a framework based on contract law (centred on the user agreement between users and the platform) rather than administrative or civil law processes which have been built up over many centuries as a broadly public and recorded system of judgement. As former United Nations Special Rapporteur on the promotion and protection of the right to freedom of opinion and expression David Kaye (2019) has commented, the decision-making processes of platforms have enormous power to shape public discourse and the lives of users – both perpetrators and victims in the case of harm – but such important judgements occur in an entirely opaque system that may not allow for nuance, clarity, and decision-making for the benefit of the community and the protection of legally-recognised rights.

Thirdly, the lack of transparency in platforms’ own penalty processes means that penalisation is used to comply with regulatory pressures rather than either to shape standards or to partake in a remedial or restorative form of justice. For example, the use of shadow-banning by some platforms, which hides a user's content without them being informed, may penalise perpetrators but in its secrecy fails to provide any of the disciplinary, restorative and community benefits that underpin two centuries of penalisation through public institutions (Sierzputowski, 2019; Savolainen, 2022). While the practice may arguably shape the overall discourse available on the platform by reducing the risk of repetitive harmful content reaching other users’ feeds (Chen et al., 2024), it is at best a limited gestural compliance practice serving neither the goals of regulatory frameworks nor those of restorative, pedagogical and remedial justice. While the present Australian regulatory framework may help assign some responsibility back to platforms, the array of penalties used by platforms falls significantly short of the traditional purpose of state-based penalisation as a means to responsibly dissuade perpetration.

Everyday Australian views on government penalisation of perpetrators

Despite a regulatory framework that places culpability and management of penalties for violations on platforms rather than on perpetrators through public institutions, there is a discernible interest among everyday Australians in the direct penalisation and criminalisation of perpetrators of digital harms. The study commissioned a nationwide panel survey in November 2024, with 2520 representative adult participants reporting views, attitudes and experiences in relation to a range of digital harms (abuse, harassment, doxxing, deepfakes, disinformation and AI bias). Although the methods, full findings and analyses will be published elsewhere, in this article we make strategic use of one element in the survey to provide exemplary information on public attitudes towards penalisation. In the context of asking participants about their beliefs, attitudes, feelings and experiences about the increased degree of harmful and problematic online content and behaviour, we asked them to rate their view on the perceived potential effectiveness of some preventative and interventional remedies to digital harms. The list of potential remedies was drawn up through the project's ethnographies with users, lawyers, and healthcare service providers.

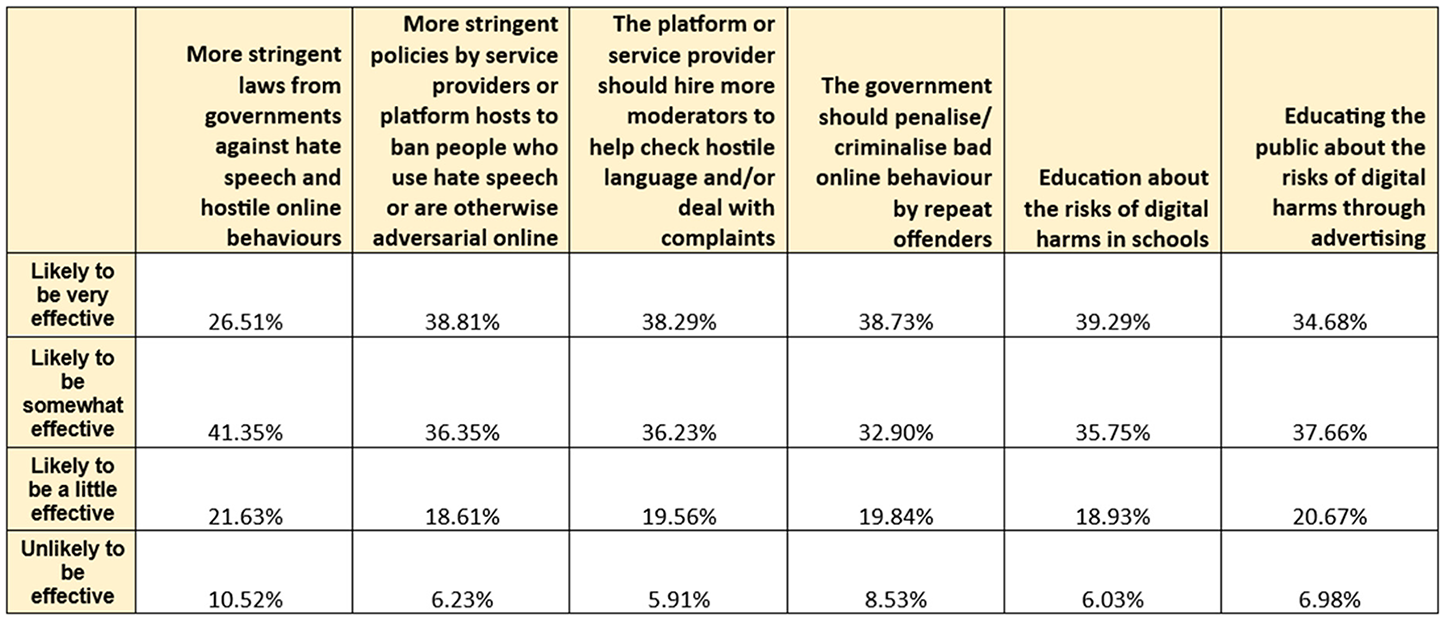

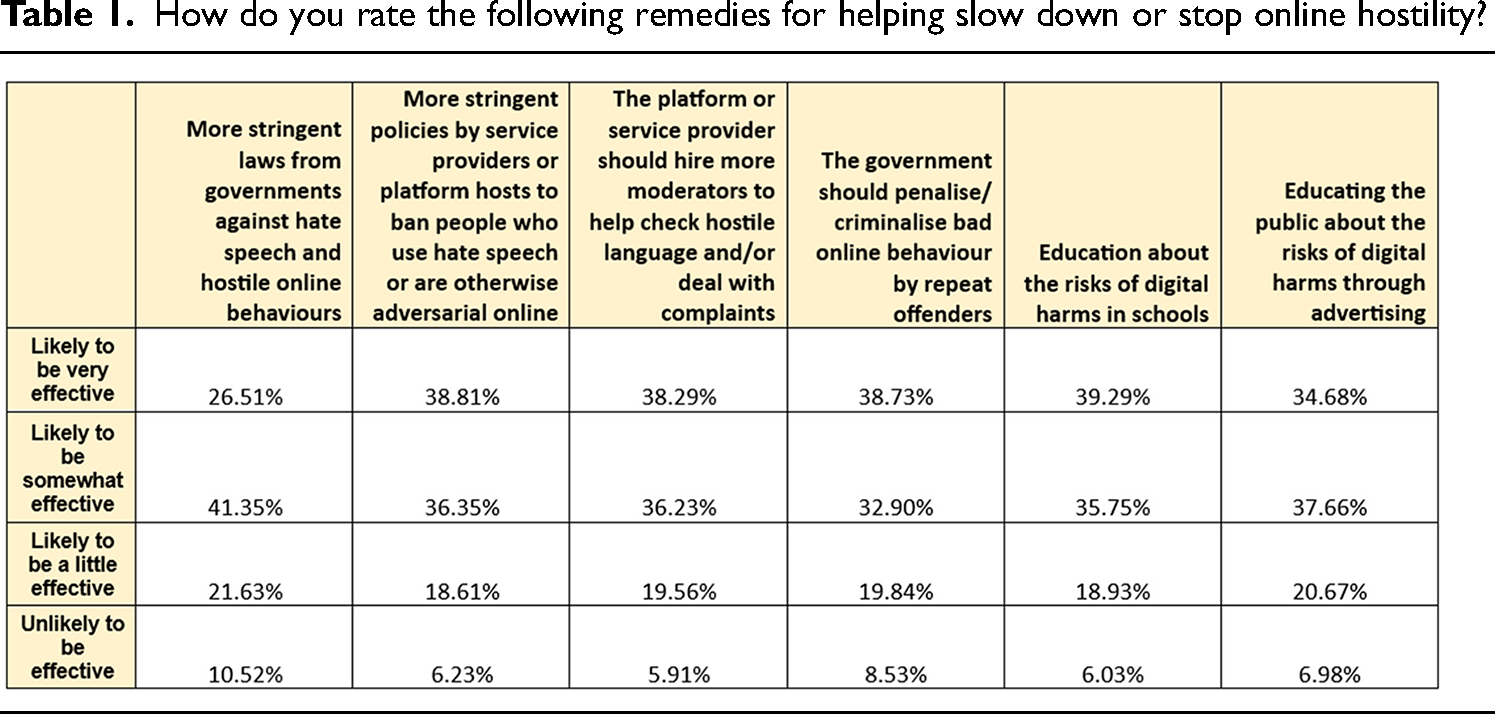

Table 1 provides a general population break-down of attitudes on the potential effectiveness of remedies ranging from regulation to education. It is notable that, of the range of remedies offered, greater than 70% of participants were in broad agreement that all remedies except more stringent laws from governments against hate speech and hostile online behaviours were likely to be very or somewhat effective. Some 71.63% of participants felt that penalising or criminalising bad online behaviour by repeat offenders was likely to be very or somewhat effective; other than education about the risks of digital harms in schools, penalisation received the highest percentage of those who believe it likely to be very effective.

Table 1. How do you rate the following remedies for helping slow down or stop online hostility?

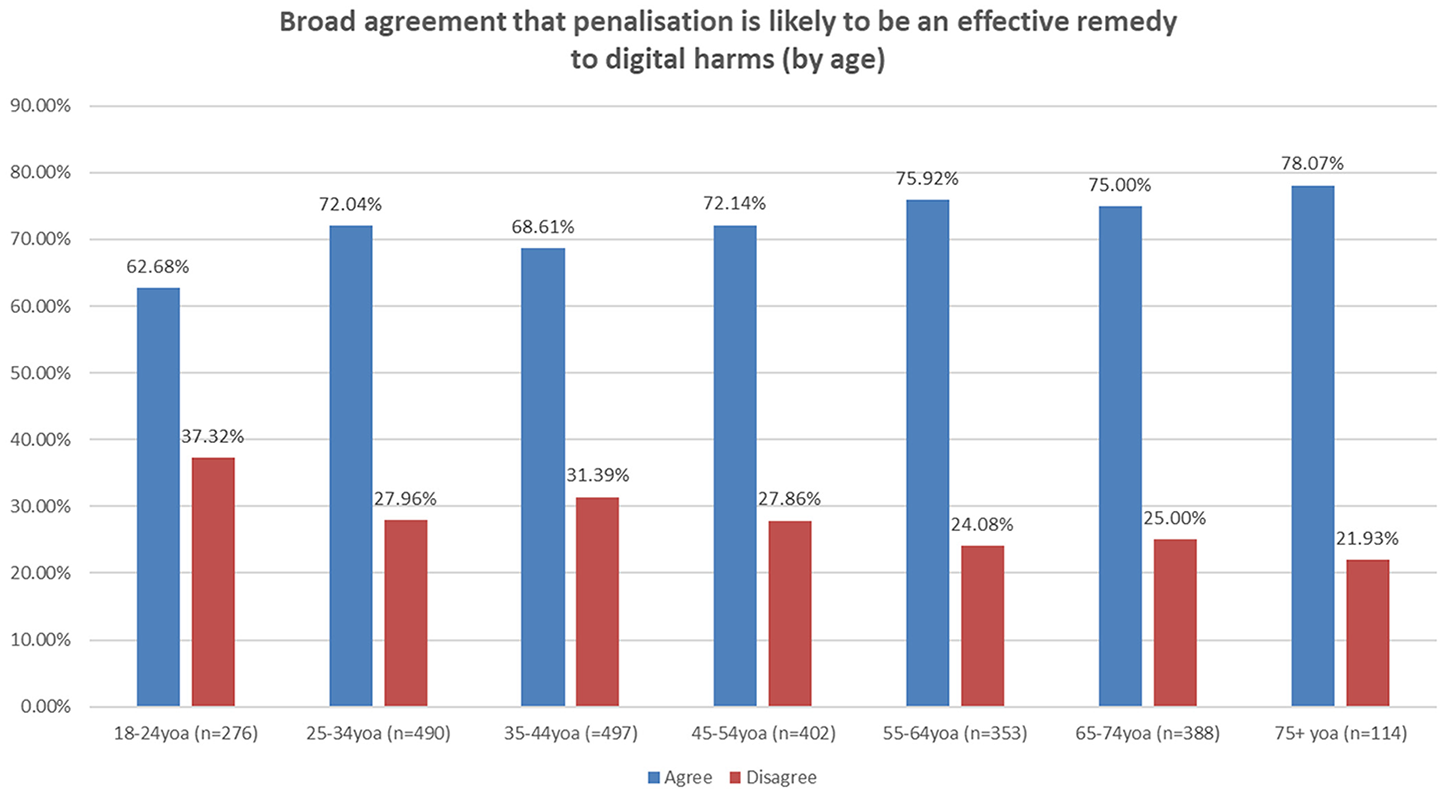

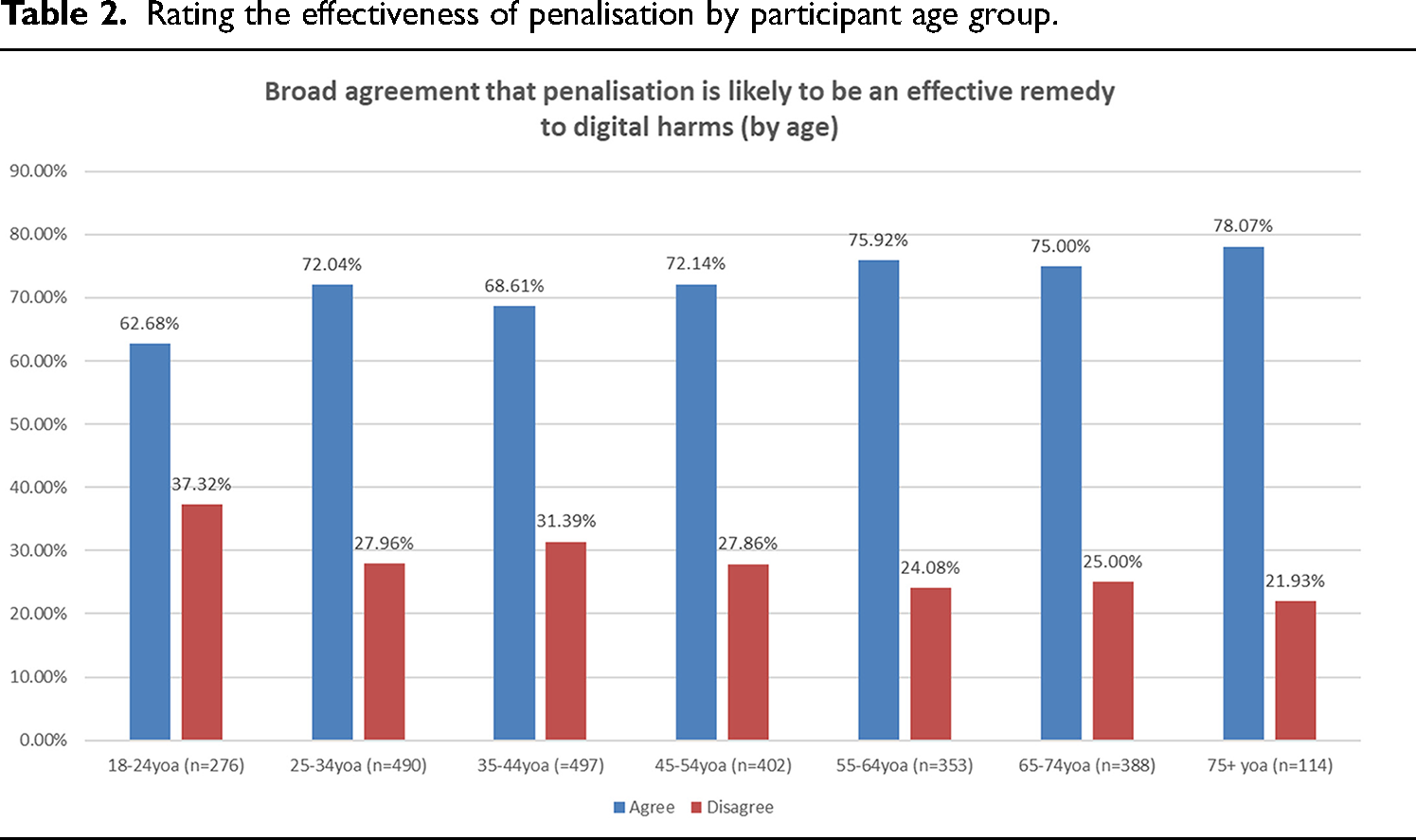

To gain some initial insights into what portion of the population feels government penalisation of bad online behaviour would be effective, we analysed responses on the penalisation remedy by participant demographics. A key, albeit expected, finding is that agreement on the effectiveness of penalisation increases with age, per Table 2. This mirrors a broader, Euro-American and Anglophone attitude to crime and policing germane to moral panics about crime as determined in the late 1970s in cultural studies: that increases in age and experience may intensify a belief in the effectiveness of punishment as a tenet of population behaviour management (Hall et al., 2017). Although all age groups were in broad agreement that penalisation was likely to be effective, agreement generally increases with age, reaching 78% among those aged 75 years or older. Older generational groups may favour penalisation in frustration at a lack of substantial prevention over the decade in which they have witnessed the disruption of online communication and its effects on democratic practices and public debate (Bennett and Livingston, 2018). However, it may also imply a problematic carceral logic of punishment (Lopez, 2022) divorced from the pedagogical benefits of penalties we discuss below.

Table 2. Rating the effectiveness of penalisation by participant age group.

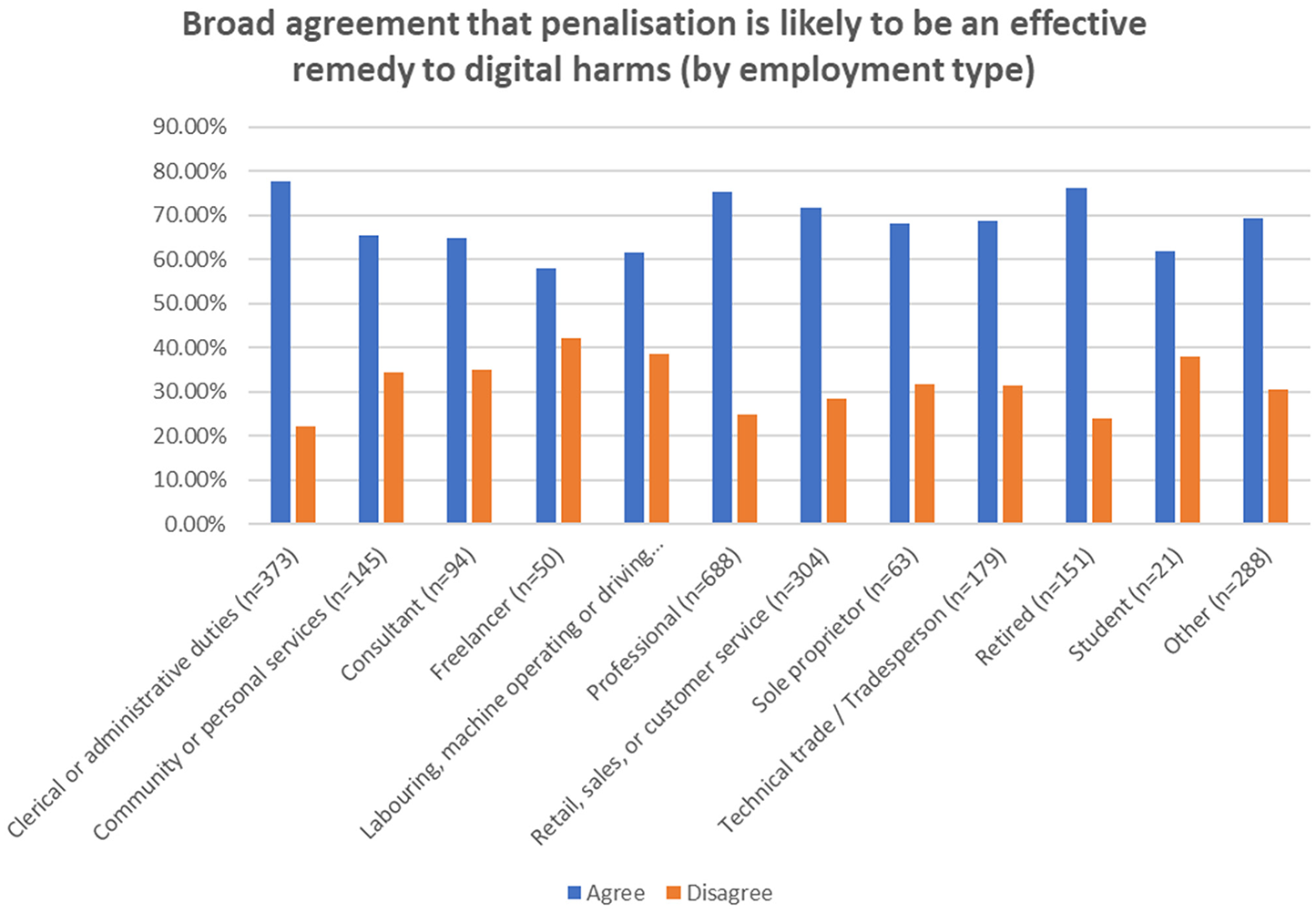

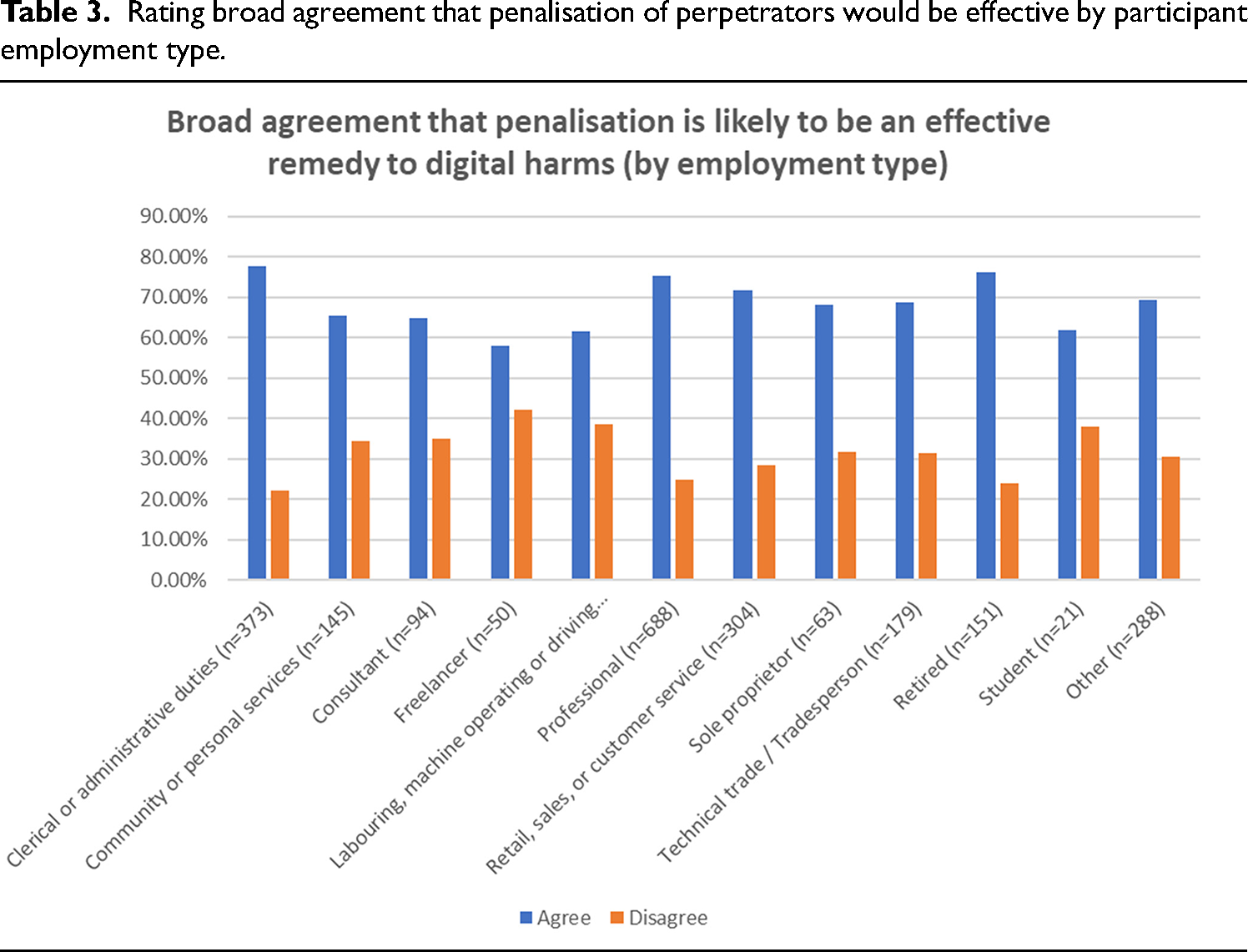

We also sought to understand whether socio-demographic factors related to employment type and education attainment may make members of the public more or less likely to support government penalisation of perpetrators of digital harms. As Table 3 demonstrates, those in white-collar professions (clerical or administrative duties; professional employment; retail, sale or customer service) were, alongside retirees, found to be 70% or greater in broad agreement that government penalties would be effective, while all others except freelancers were at least 60% in broad agreement. The lower agreement among freelancers is possibly because they are more likely to have the available time to pursue other remedies such as reporting and, with often insecure incomes, are less likely to appreciate a financial or carceral cost for perpetrators (Das, 2007).

Table 3. Rating broad agreement that penalisation of perpetrators would be effective by participant employment type.

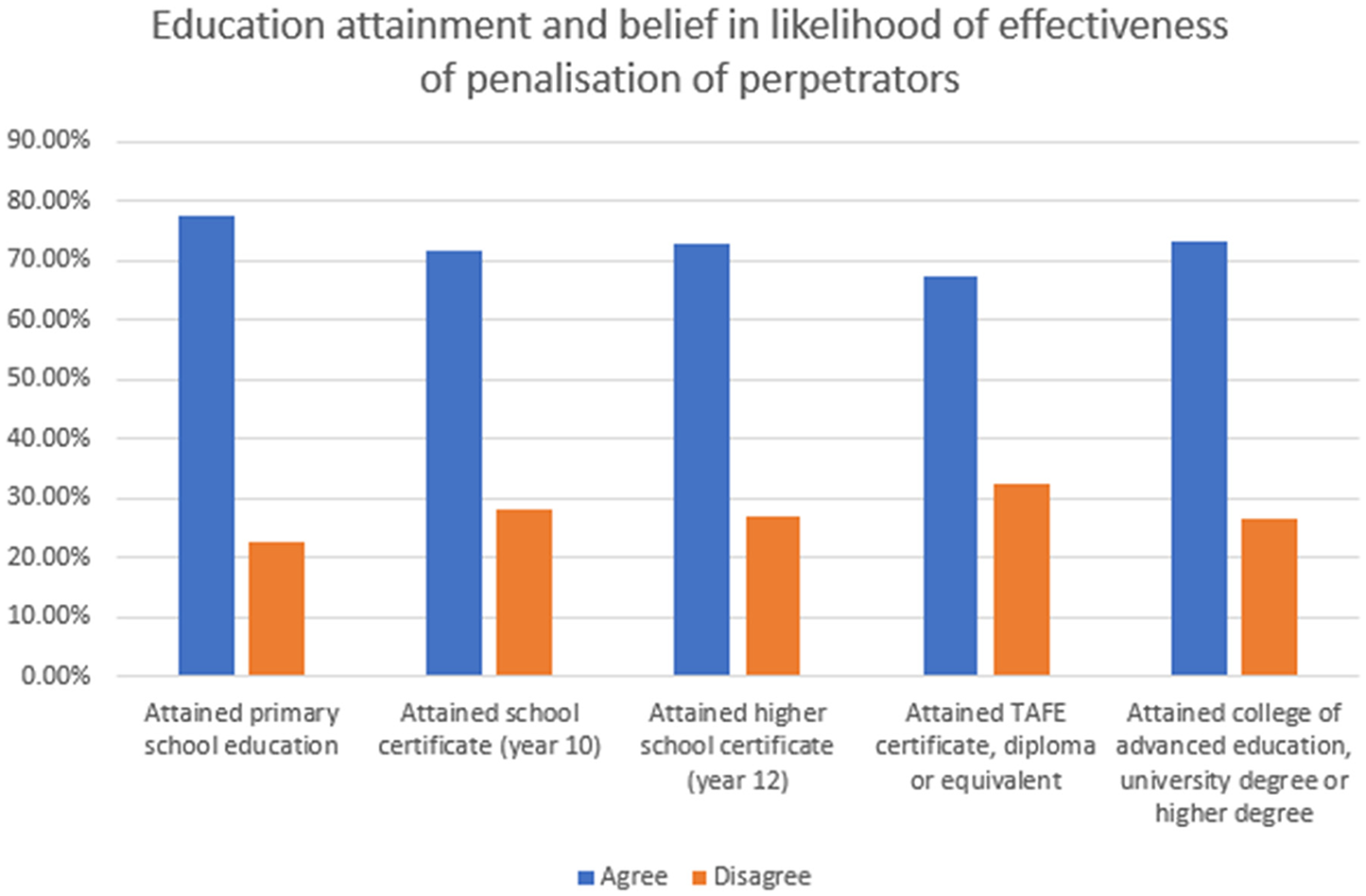

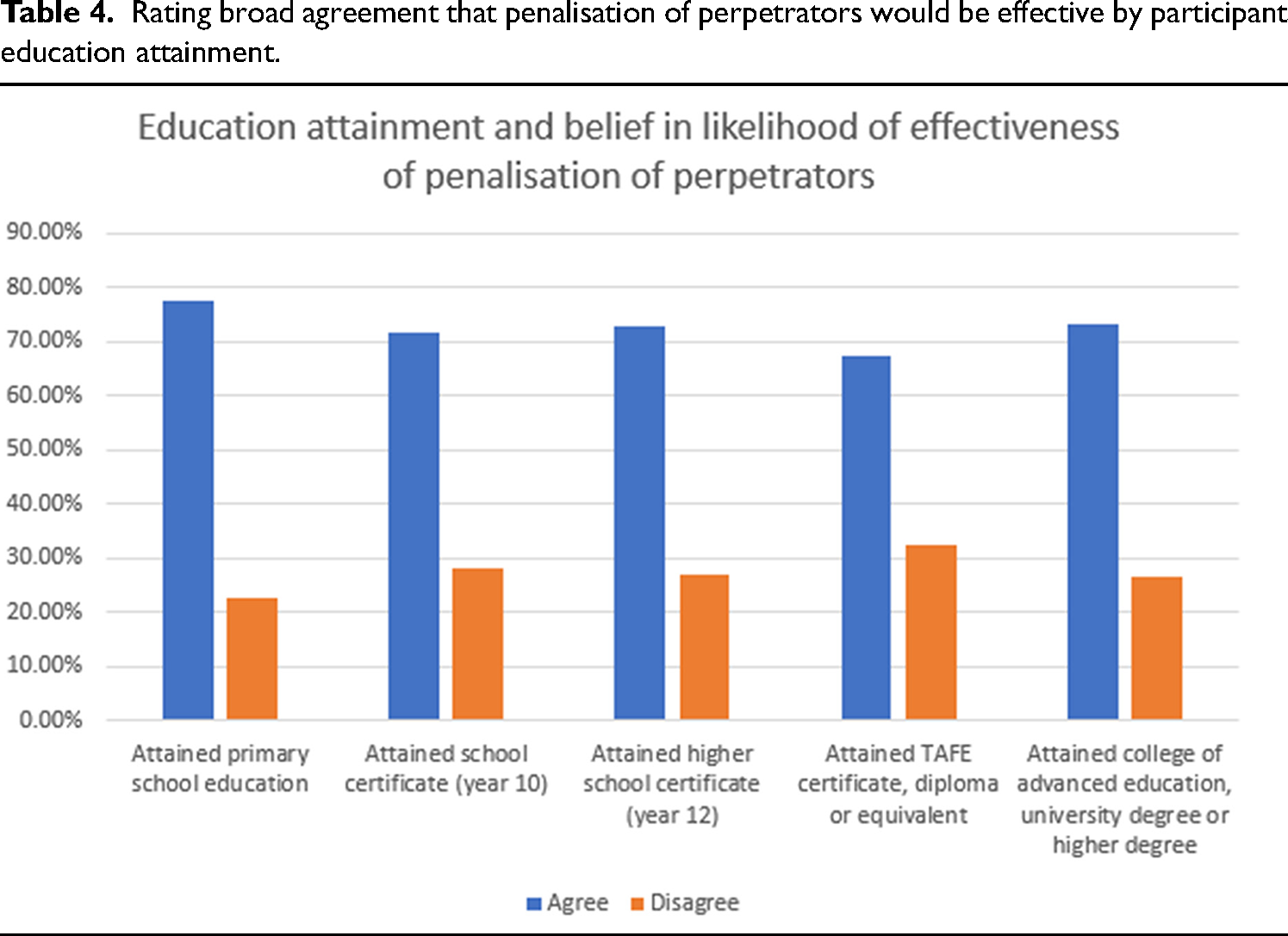

In the context of education attainment, broad agreement was – conversely – highest among those whose maximum attainment was primary school education, at 77.42%; it dropped to a steady 71% to 72% for higher levels of school and to a lower 67.54% for those who had attained a TAFE certificate, diploma or equivalent, and then rose notably to 73.30% for those with a college or university degree or higher degree. As the range was within 10 percentage points across all categories of education attainment, the polarised broad agreement with government penalisation gathered at the primary school and university education frames is not particularly meaningful, but it may indicate that those with TAFE-level education are more in favour of freedom of expression values, in which there should be no repercussions for online speech or behaviour, whereas more stringent rules and/or more critically-nuanced views may be held by the other groups in relation to education attainment (Kurti and Passer, 2023) (Table 4).

Table 4. Rating broad agreement that penalisation of perpetrators would be effective by participant education attainment.

What this small component of the study's survey points to is that, despite some minor demographic distinctions, there is a broad community belief that emphasising state-based penalties for individual perpetrators may be effective in reducing digital harms. In that respect, we would argue that the broad agreement with government-managed penalties for perpetrators of digital harms more generally is the result not only of the growing public mood for regulation of platforms (Flew, 2021) but also of a growing public frustration with platforms that are seen to be falling short in effective moderation (Phillips, 2015). Community support does not, of course, mean in itself that penalties will actually be effective. Nor does it indicate that Australia's statutory regulatory framework and its public communication should have emphasised the extant laws, common and civil law processes and institutions that enable the judgement and penalisation of individual Australian perpetrators as a key component of managing digital harms. Rather, two elements are necessary: first, further empirical research with the Australian community to determine to what extent everyday users understand and are aware of extant penalty options and their interplay with civil and administrative regulatory options for online safety; and second, a public policy discussion on the future of online safety in light of the current framework's failures that incorporates re-emphasising penalisation as a pedagogical tool alongside other remedial initiatives.

The case for penalising perpetrators

On the basis of what is increasingly recognised as the negative population health outcomes of the failure of platforms to adequately address harmful, abusive and disinformational content and behaviour (Andrejevic, 2020; Flew, 2021; Waqas et al., 2019), there is a legitimate concern that a regulatory framework centred on requiring platforms to manage digital harms is not only not working, but actively empowers them as private corporations to engage in censorship and penalisation activities traditionally in the purview of the state, and to do so without the public scrutiny, due process or review practices familiar in democratic countries (Kaye, 2019). This is to say that the educational, pedagogical and promotional aspects of statutory penalisation are made absent when regulatory frameworks focus on the empowerment of platforms to penalise users in non-transparent ways.

A re-emphasis of extant penalisation practices for individual users is complex, and the point here is not to suggest that the state should be exclusively responsible for the day-to-day management of harms, an impractical and resource-heavy task given the need, as James Porter (2021) has noted, to distinguish on a case-by-case basis between content which is annoying but harmless, and systemic, organised and sustained behaviours that cause serious hurt to individuals and groups, the need to avoid vexatious and improper use of legal processes, and the requirement to process a very high volume of cases in a complex, interjurisdictional environment in which identification of perpetrators is difficult (Vincent, 2017). The point in re-emphasising penalties as a component of online safety and its concomitant public communication is not to replace or ignore platform responsibility, nor to provide an alternative management regime. Rather, it is to utilise the knowledge of penalisation to shape better behaviour, dissuade perpetrators and engage the public in what good digital citizenship looks like, while retaining, of course, the option to prosecute serious, repeat perpetrators where platforms fail to act or where their perpetration occurs in the inter-platform ecology.

Re-emphasising penalisation, we argue, should be part of a wider multi-level and multi-sectoral cooperative practice in the regulation and promotion of online safety, good digital citizenship, healthy communication and the minimisation of harms. As Nash and Felton (2024) have argued, regulatory regimes reliant on platform reactive intervention and content controls fall short of duty of care principles that can be mechanised through safety-by-design and systems-based approaches. Rightly, they point out that the pathways to harm are complex with vulnerability to harm experienced diversely. In taking a cultural approach to communication technology, we argue that systems approaches must also consider how digital culture is shaped and regulated to normalise ethical behaviour; that is, user attitude and behaviour is as much entangled with systems as technologies and feed algorithms. In this context, a systems approach to digital regulation must incorporate the full array of education, promotion of good digital citizenship among adults, state guidance for algorithmic decision-making boundaries, requirements for platform cooperation in reactive moderation, and one element in this is the foregrounding of penalties to shape behaviour in the two registers we outline below.

Shaping behaviour

Although harsh criminal penalties, such as incarceration, are recognised as broadly ineffective in deterring serious crime (Paternoster, 2010), it remains the case in the Australian context that sanctions – typically imposed as civil financial penalties without the damaging stigma of criminal convictions – serve as a strong deterrent regulating and shaping public behaviour (Australian Law Reform Commission, 2010) while allowing due process and judicial fairness to govern. The aim of civil penalties is understood as wholly protective because the objective is the promotion of compliance through deterrence as being in the public interest, without the problems or costs of punishment, retribution or rehabilitation necessary in criminal law (Corrs, 2022). The case we are making is one of governments participating in the shaping of good digital citizenship through emphasising their role in policing serious perpetration of digital harms, much as they now do for doxxing and image-based infringements, not as an act of taking away from other perceived remedies (school digital literacy curricula; warranting safety-by-design among platforms, etc.) but to more clearly underpin desired public behaviour.

Populist perceptions of punishment are often framed by a misguided notion that penalties are utilised to extract revenge upon a perpetrator on behalf of victims or a community offended by the crime (Pratt, 2007), or to humiliate a transgressor (Anselmi, 2018). Penalty, carceral and punishment regimes have, however, been coded since the nineteenth century explicitly by the two-fold action of discipline on the one hand (reparative correction or re-education) and, on the other, shaping a social body to assess, manage, modulate and prevent particular public contraventions from occurring at rates too high for social stability (Foucault, 2007).

There are several exemplary precedents among Australian states that demonstrate the effectiveness of penalties in conjunction with other actions and encouragements to shape behaviours related to the health, wellbeing and safety of individuals and bystanders: regulations that prohibit smoking in certain public places that penalise both the individual who breaches the law and the business on whose premises that law has been breached. For example, under the state of Victoria's Tobacco Act 1987, a person who transgresses the law by smoking in an enclosed workplace, or prescribed drinking and dining spaces, is subject to a penalty determined by units which may be remediated financially or through incarceration. However, under the same law, the occupier of a premises where the infraction has occurred is also subject to a penalty of twice or more the value depending on the nature of the business. The same law also requires the occupier of a premises to take preventative and educational action by the placement of no smoking signs or else they will have committed an offence. The aim of the law is given clearly in its preamble and purpose statements: that tobacco is so injurious to the health of both smokers and affected non-smokers that the Parliament of Victoria seeks to ‘discourage’ its use to reduce ill-health and death. To achieve this aim, the legislation establishes offences that are punishable through penalties for both the offender and for those who may through negligence or a failure to discourage smoking on their premises share in the liability for permitting the offence to occur.
In the same way, a more clearly-articulated online safety framework that foregrounds both the liability of the platform (where a harm occurs or has been amplified) and the perpetrator (who has harmed, under legal definitions, another user) can more clearly articulate community standards and expectations and provide a stronger framework for addressing user behaviour in ways which are public, restorative and potentially transformative.

Anti-smoking legislation that creates penalties for smoking in prescribed public places does not, of course, fully prevent the offence because it is not possible to resource policing to the extent necessary to punish every transgressor (Boulton, 2023). However, the creation of such offences, alongside educational campaigns and price-based discouragement mechanisms (tobacco taxes), has been widely understood to contribute to emergent social norms that influence population behaviour change. This occurs by utilising penalisation not as a means of punishment but by providing a public-institutional injunction of that which is considered unacceptable (Schoenaker et al., 2018). Indeed, by underscoring its unacceptability, a clear legislative framework of penalisation has been found to be more effective than other measures such as anti-smoking advertising and price mechanisms, while shaping a reduction in smoking up-take by younger persons (Bardsley and Olekalns, 1999; Goel and Nelson, 2006). Such precedents, then, use statutory penalties not merely to punish but to articulate behavioural expectations that protect the community from unhealthy actions. By understanding digital harms through discourses of user health in light of the growing evidence of harmful content/behaviour's impact on the health of victims and bystanders (Cover, 2024; Keighley, 2022), there is an opportunity to reshape the regulatory framework to incorporate perpetrator penalties as a clear signal of the kinds of behaviours that are not tolerated because they harm the self and others.

Harms to the digital ecology

At the same time as shaping individual behaviour in regard to the perpetration of digital harms such as abuse, harassment and malicious misrepresentation, a penal framework based on the statutory creation of offences is, we argue, a factor that can re-shape the digital ecology as one based on norms other than adversity, insult, offensiveness, hostility and other forms of digital violence (Khalil, 2022; Morozov, 2013). This is not to argue for a return to a certain kind of digital citizenship framed by nostalgia for a pre-platform environment, nor to argue that the past digital culture of Web 1.0 was ever free from the violence of flame wars and bullying (Reid, 1998). Rather, it is to emphasise penalties in order to articulate more adequately what a desirable digital culture might look like in contrast to its present toxicity.

The Australian government in 2022 established Basic Online Safety Expectations, using an enabling component in the Online Safety Act 2021 that permits the Minister for Communications to set standards through the Office of the eSafety Commissioner; these were updated to reflect new legislation in 2024. The Basic Online Safety Expectations are, much like the legislative framework described above, directed principally towards platforms with the purpose of improving safety standards. Key expectations include: ensuring all users can use online services in a safe manner, that unlawful and harmful material will be minimised, and that platforms will enforce terms of service to ensure safe use. Again, the focus on platform responsibility alone does little to establish basic expectations on users, particularly in relation to form and manner of discourse, respectful engagement with other users, conforming with principles not to create malicious disinformation or deliberately misrepresent other users, or that repeated and sustained harassment and abuse are not tolerable behaviours in an online setting.

The digital ecology is, of course, more than simply a group of platforms providing services to users, but is a setting in which users and platforms interact with and across one another, in an array of competing and mutually-supportive interdependencies (Bruns, 2019). Requiring platforms as singular organisations to penalise perpetrators on behalf of the community enables perpetrators to persist in harmful behaviour across multiple domains in the ecology and often therefore to go unapprehended (Burch, 2018), including those who may harass another user with relatively mild content that fails to achieve a threshold of harm on an individual platform but is a substantially harmful form of stalking victim-survivors across multiple services (Haslop et al., 2021). Again, public institutions are the only bodies capable of building a case that addresses a perpetrator's harassment across the digital ecology, while individual platforms do not work collectively to moderate perpetration on anything other than their own sites. Just as significantly, penalisation of individual users enhances the online safety expectations through one of the key purposes of sentencing – the public denunciation of particular kinds of behaviours (Sentencing Advisory Council, 2011) – by adding to the expectations on platforms (capable of addressing individual instances of ‘content’) expectations on the ‘behaviour’ of individuals operating in the wider digital ecology.

Conclusion

In this article, we have identified a key gap in Australia's approach to the regulation of digital platforms in regard to harms, abuse and harassment – the de-emphasising of older models of penalisation and criminalisation to shape social harmony and good citizenship in favour of over-emphasising platform responsibility. Despite the benefits of the current regulatory regime towards engaging platforms to take responsibility for sharing problematic content, our study has shown that a representative sample of Australian adults feel that the government penalisation of repeat perpetrators would be an effective mechanism for addressing the rate and extent of digital harms. Arguing for a re-emphasis or expansion of state-based penalty frameworks to manage digital harms is not to promote a punitive solution to digital harms, but begins with the three existing problems we have identified in this article: (a) that a regulatory framework focused on obliging platforms to manage harms may usefully point to platform responsibilities for amplification and promotion of adversarial content and behaviour but leaves them managing – poorly – a punitive framework of bans and shadow bans; (b) that this approach is an injustice to both perpetrators and victims because it cannot incorporate recognised norms of due process; and (c) that the opacity of platform-managed action has no mechanism to shape and promote more ethical online behaviours in the form of reparative and transformative justice.

The key argument for re-emphasising perpetrator penalisation as a component of online safety regulation through extant or new civil penalties is the power to promote and communicate desirable digital citizenship as a preventative tool rather than rely on regulating platforms to manage reactive moderation. While state-based penalties cannot be deployed universally, as with other civil and criminal procedural matters, public institutions already have expertise well beyond that which can be provided by moderators acting reactively to singular issues or reports (Kaye, 2019) to ensure legality, fairness, determination in the context of testimony and, where warranted in extreme cases, a trial before peers; any penalty can thereby be determined judicially independent of government or business interests. If we are to recognise the digital ecology as a cohabited setting in which users thrive and/or are made vulnerable in interaction with others, then the serious and necessary work of shaping and limiting behaviours in order to reduce serious harms necessarily involves a wider array of procedures, interventions and preventative initiatives to underscore the importance of ethical digital citizenship, and a re-emphasis of penalisation for users is one necessary, albeit unfortunate, component.

Footnotes

Data availability statement

This research did not produce any data that is not already accessible in the public domain.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This work was supported by the Australian Research Council Discovery Programme (grant number DP230100870).