Abstract

This study investigates post-truth messaging and participatory disinformation on Twitter, focusing on the activities of Craig Kelly, a former Australian member of parliament and a key figure previously accused of spreading health misinformation in Australia during the COVID-19 pandemic. We draw on Harsin's conceptualisation of post-truth communication to analyse 4317 tweets and 5.2 million interactions with Kelly's account and his network of followers over a six-month period. Our novel empirical approach, combining coordination network analysis with a forensic qualitative approach, explores the participatory nature of online interaction, where fringe actors mobilise around Kelly's tweets. The findings demonstrate how political figures have a privileged and outsized role in public discourse, undermining scientific institutions and promoting anti-deliberative politics. This research underscores the role of participatory disinformation in the post-truth era and suggests that regulators, governments, and social media platforms work collaboratively to develop a whole-of-society framework to tackle misinformation.

Introduction

The COVID-19 pandemic has had an unprecedented public health and economic impact across the globe. As of March 2024, there have been over 774 million confirmed infections of COVID-19 in the global population, resulting in just over 7 million deaths (World Health Organisation, 2024). The World Health Organisation (WHO) stated that the coronavirus pandemic is “the first pandemic in history in which technology and social media are being used on a massive scale to keep people safe, informed, productive and connected” (Simons, 2021). However, the rapid spread of COVID-19 may have been outpaced only by the spread of unverified and potentially misleading information about the disease (van der Linden, 2022). By ‘unverified’, we mean claims, statements, or data that have not undergone a rigorous process of validation and cross-checking by credible sources or through established methods of scientific or journalistic inquiry (Kovach, 2014). Such information lacks confirmation from authoritative or expert sources and is therefore not reliable or factual, given what we currently know from longstanding or ‘sound’ processes of knowledge production (Laufer and Nissenbaum, 2023). Tedros Adhanom Ghebreyesus, the WHO's director general, in discussing the COVID-19 pandemic, stated: “We’re not just fighting a pandemic; we’re fighting an infodemic” (The Lancet Infectious Diseases, 2020).

In the context of the largest and most ambitious vaccine campaign in history, different anti-vaccination narratives emerged and continue to propagate across social media platforms with potentially significant public health impacts (Curiel and Ramírez, 2021; van der Linden, 2022). Anti-vaccination stances have existed as long as vaccines themselves, and it is acknowledged that all vaccines come with the potential for side effects even with extremely favourable risk/benefit profiles (Gallegos et al., 2022). However, vaccine hesitancy has increased and received more media coverage in response to the development of the COVID-19 vaccine. Analysis of anti-vaccination sentiment identified several specific concerns that influenced people's stance: the speed of development, the novelty of mRNA technology, the relatively high survival rate of COVID-19 (seen as reducing the necessity for vaccines), and fear of side effects (Wong et al., 2021).

When discussing public communication related to the COVID-19 pandemic and vaccine, it is important to outline a definition of misinformation and disinformation, particularly as these concepts have undergone renewed calls for definitional clarity (Wardle, 2023). One of the most cited works in the field is from Wardle and Derakhshan, who state that disinformation is false information that is “a deliberate, intentional lie and points to people being actively disinformed by malicious actors” (2018, p. 44). Meanwhile, misinformation is information that is false, but the person sharing it believes it to be true (Wardle and Derakhshan, 2018). However, in this paper we reframe these concepts through the broader context of post-truth, drawing on Harsin's (2018) framework. By situating them in post-truth, we examine broader issues relating to trust and truth-telling, and the role of populist elites and the participatory logics of social media in shaping public discourse around politicised topics such as vaccines. In doing so, we engage with the concepts of mis- and disinformation as they are pertinent to both the literature and popular discourse but pay closer attention to issues around unverified health information, a persuasive communication strategy that lacks ‘secure standards of evidence’ (Harsin, 2006, p. 86) and in turn attracts considerable attention and participation on social media.

To examine this further, we focus on the online activity of Craig Kelly, a former member of parliament. Kelly has been subject to extensive media scrutiny and previous academic work regarding the content of his social media posts, which have been described as both misinformation and disinformation (Barry and Sanchez-Urribarri, 2022; Dalzell, 2021; Australian Broadcasting Corporation, 2021). Our justification for focussing on Kelly is that – despite no longer being an elected member of parliament – he continues to be a key actor in public discussions of vaccine efficacy and health misinformation, given the influence that elite cues have on opinion formation (Zaller, 1992) and his sizeable online following. In particular, we empirically examine how this participatory dynamic between populist elites and their audiences attracts activity from accounts that could be described as ‘inauthentic’ actors. Using novel network analysis techniques, we detect coordinated activity to examine whether, and how, Kelly's activity attracts engagement from accounts involved in what platforms term as ‘coordinated inauthentic behaviour’. While Craig Kelly is a central actor in this study, we expand beyond the discussion of political elites to consider their audience and impact.

Kelly is not unique in this by any means. Looking internationally, we observe populist global leaders such as Donald Trump and Jair Bolsonaro engaging in communication tactics similar to Kelly's (Atfield, 2022; Barry and Sanchez-Urribarri, 2022). This is seen most acutely in the juxtaposition that Kelly proposes between the Australian people and their government, medical institutions, and other places of institutional and cultural knowledge. Kelly has a significantly greater audience on Twitter – now known as X – than other fringe Australian politicians and, at the time of writing, has an audience of over 123,000 followers. His elite position in the public sphere confers a privileged form of communication (Harsin, 2006) that, as we show later in this paper, strategically uses unverified or unverifiable assertions to manage audience attention at scale (Harsin, 2015). It is for these reasons that we focus on the online communications of Craig Kelly and the engagement with his tweets during the COVID-19 vaccine rollout and consider the participatory activity of his followers.

We expand on previous academic work regarding Craig Kelly and other populist political elites by analysing both Kelly's tweets and those of his most engaged followers to address the research questions outlined below. The remainder of this paper is structured as follows. Firstly, we historicise the mis- and disinformation that accompanied the COVID-19 vaccine rollout in 2021 and reactions to Craig Kelly's participation in spreading unverified and misleading narratives. Secondly, we review literature about participatory disinformation and the role of elite actors in the spread of unverifiable and potentially misleading narratives. Next, we conduct an empirical analysis of posts from Kelly's Twitter timeline in addition to the timelines of followers who engaged with his posts. The final section discusses the findings and contextualises them with respect to ongoing debates about regulation of social media and the broader political context of governing public health within post-truth conditions marked by declining trust in mainstream media, medical science, and health authorities.

How did Craig Kelly, as a member of Australian parliament, contribute to amplifying unverified and potentially misleading information on Twitter during the COVID-19 pandemic? In what ways do Craig Kelly and his audience represent the changing nature of trust in a post-truth context? Is there evidence of inauthentic engagement from Craig Kelly's audience, including bots and trolls?

Misinformation about the COVID-19 vaccine rollout in Australia

At the time of data collection in September 2021, there were three vaccines approved by the Australian Technical Advisory Group on Immunisation (ATAGI): Pfizer, AstraZeneca, and Moderna (Australian Government Department of Health, 2021). As of March 2024, Pfizer, Moderna, and Novavax vaccines are available for Australians with regularly updated boosters to reflect newer strains of COVID-19 (Department of Health and Aged Care, 2023). AstraZeneca remains approved but unavailable as other brands are preferred and recommended (Department of Health and Aged Care, 2023). Due to the logistics of the initial vaccine rollout, a majority of people over the age of 60 received AstraZeneca as their primary vaccination course (Australian Government Department of Health, 2021). The previous Therapeutic Goods Administration (TGA) recommendation for under-60s to be vaccinated with another brand was due to the small risk of thrombosis with thrombocytopenia following the AstraZeneca vaccine in this age group (Australian Government Department of Health, 2021). There were nine deaths related to the AstraZeneca vaccine in Australia following the vaccine rollout in February 2021 (Therapeutic Goods Administration, 2021). Amid the 2021 outbreak of the Delta variant of COVID-19 in Greater Sydney, ATAGI reassessed their advice for young people in hotspot areas and encouraged them to re-assess the risk/benefit profile of AstraZeneca if Pfizer was not available (Lowrey, 2021).

Misinformation actors thrived on the uncertainty around the evolving situation and rapidly updating advice related to vaccines and AstraZeneca specifically. In a 2018 study of 140 countries, 79% of the global population perceived vaccines as safe and 84% viewed them as effective (Curiel and Ramírez, 2021). We acknowledge that no vaccine is risk free and side effects may occur with all vaccinations and medication. However, anti-vaccination sentiment is higher in relation to COVID-19 vaccines, and this has been amplified by misinformation spread through social media channels (Curiel and Ramírez, 2021). A study in the United Kingdom revealed that, amongst their nationally representative sample, an increase in vaccine hesitancy was associated with lower trust in government institutions (Jennings et al., 2021). This creates a vicious cycle as governmental distrust is also associated with a greater susceptibility to misinformation (Jennings et al., 2021). Australian misinformation actors may take advantage of uncertainty to push anti-vaccination narratives alongside other fringe beliefs, which results in a further erosion of trust in institutions and public health orders.

It is in this context of increased vaccine hesitancy and institutional distrust that we undertake the present study. Previous research shows that misinformation can be spread by a range of actors in the public sphere – citizens, organisations, celebrities, bots, trolls, and elected officials, to name a few (van der Linden, 2022; Ruffo et al., 2023). However, there is a growing recognition that ‘elite’ actors in the public sphere, such as politicians, journalists, and celebrities, have a disproportionate role in ‘super spreading’ misinformation given their privileged role as opinion leaders in society (Nielsen, 2024). We now know that ‘super spreaders’ with large followings are disproportionately responsible for the spread and amplification of harmful medical misinformation across platforms (Beckett, 2021). For example, a study by the Center for Countering Digital Hate revealed that just 12 people are responsible for up to 65% of vaccine misinformation on Facebook, Instagram, and Twitter (Bond, 2021). Likewise, politicians such as Donald Trump can be considered the worst spreaders of mis- and disinformation, as measured by engagement, reach, and ability to influence the rest of the media ecosystem (Applebaum, 2020; Etling et al., 2020). This effect is arguably amplified during times of turbulence such as a pandemic. In this paper we focus on Craig Kelly – a member of Australian parliament at the time of data collection – whose practices of spreading misinformation have been widely criticised and reported upon (e.g., Australian Broadcasting Corporation, 2021; Dalzell, 2020; Dalzell, 2021; Ferguson, 2021; Gillespie and Tamer, 2022; Henderson, 2021; Karp, 2021).

Contextualising Craig Kelly’s parliamentary terms and previous media coverage of misinformation

Between 2010 and 2022, Craig Kelly was an Australian member of parliament representing the constituents of Hughes, situated in the southern suburbs of Sydney (Parliament of Australia, 2022). Kelly was initially elected as a member of the Liberal Party but left to become an independent in February 2021 (Parliament of Australia, 2022). In August 2021, Kelly was approached to be the leader of the United Australia Party (UAP) – a minor, right-wing party established by businessman Clive Palmer (United Australia Party, 2021). Prior to Kelly leaving the Liberal Party, Prime Minister Scott Morrison and his government garnered criticism for their refusal to publicly refute Kelly's online claims that undermined the government's own health advice regarding COVID-19 and vaccination (Dalzell, 2021).

Kelly has had a fraught relationship with social media platforms, due to his promotion of unverified and potentially misleading claims about public health (Macmillan and Worthington, 2021). In February 2021, Kelly was suspended from Facebook for a period of seven days due to his promotion of COVID-19 misinformation, particularly surrounding unproven treatment options, such as ivermectin and hydroxychloroquine (Ferguson, 2021). By April 2021, Kelly's Facebook page had been permanently removed from the platform due to repeated spread of unverified claims about COVID-19 (Macmillan and Worthington, 2021). Similarly, in July 2021, Kelly's Twitter account received a 7-day ‘freeze’ due to violating the platform's COVID-19 misleading information policy (Taylor and Karp, 2021).

At the time of data collection, Kelly's Twitter following was just over 60,000 and as of March 2024 it sits at over 123,000. However, despite his large following, the full extent of misinformation on his account is not clear, nor is it clear who is engaging with it and how it is being spread further into the media ecosystem. Without the answers to such questions, it remains difficult to assess the impact that Australian politicians and other opinion leaders have in amplifying unverified and potentially misleading information both domestically and to international audiences. While much discussion has recently focussed on the role of elites in spreading misinformation and influencing public opinion (Nielsen, 2024), there are few studies empirically or conceptually addressing this issue. Therefore, we embark on this study to begin addressing this gap in knowledge and explore the scale, scope, and impact of misinformation from Craig Kelly, as a former member of parliament, in the Australian Twittersphere to answer our proposed research questions.

Disinformation and participatory disinformation in a post-truth world

Social media, particularly during the COVID-19 pandemic, has been instrumental in the production and rapid spread of false or unverifiable information through affordances such as shares, retweets, and promoting visibility of high performing content (Kumar and Shah, 2018). While a preliminary definition of mis- and disinformation has already been provided, it is important to note that these concepts are becoming increasingly difficult to define (Wilde, 2022; Harsin, 2024). Contention continues amongst academics, social media platforms, and media practitioners around establishing a singular typology or definition (Kapantai et al., 2021). As noted in early work in the field from Fetzer, while misinformation is “false, mistaken, or misleading” information, disinformation is complicated by elements of intentionality and purposeful efforts to mislead, deceive, or confuse (2004, p. 234).

The increased spread of unverifiable and potentially misleading information is symptomatic of the movement towards a post-truth society. Prior to the twenty-first century, social trust was vested in “interlocking elite institutions [that] were discoverers, producers, and gatekeepers of truth”, with some examples being science, schools, and governments (Harsin, 2018, p. 3). From an epistemic perspective, post-truth does not refer to an absence of truth, but instead the increased tendency for people to reject these traditional forms of knowledge and instead embrace what they feel and believe to be truth. As described by Harsin: “Post-truth… emphasises discord, confusion, polarised views and understanding, well- and misinformed competing convictions, and elite attempts to produce and manage these “truth markets” or competitions” (2018, p. 3). This quote is emblematic of the communication styles that Craig Kelly and his audiences engage in on digital media platforms.

Craig Kelly was, at the time of data collection, a member of parliament with a privileged position and would be considered an ‘elite’ actor on social media. We can view his communication tactics as a form of attention management, a factor in post-truth politics. Proposed by Harsin (2018), attention management derives from the restructuring of communications to suit apps, platforms, and algorithms. In an age of a near constant stream of information – often incorrect, potentially misleading, or otherwise unverifiable – beamed directly to citizens, elite actors seek ways in which to manage this new economy of attention and spread their message strategically. Kelly's communications are exemplary of this modality of post-truth messaging – there is a consistent stream of propositions designed to evoke a sense of illusory truth, increasingly polarising political messages, and emotional appeals. As outlined by Harsin, “cognition in the attention economy is typically fast, emotional, and targeted with distractions” (2018, p. 13). This is a cognate form of elite political messaging that Harsin earlier described as a ‘rumour bomb’ (Harsin, 2006). Drawing on works from Berenson in the 1950s, Harsin defines rumour as a kind of persuasive messaging involving propositions that lack ‘secure standards of evidence’ (Harsin, 2006, p. 86). He argues that the speed of digital networks means that politicians can utilise the affordances of social media to ‘bomb’ the public with unsubstantiated, emotionally-driven assertions – this is indeed a viable public relations strategy that has ‘unique circulatory power’ (Harsin, 2006, p. 87).

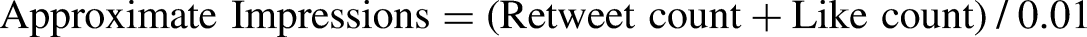

Figures 1 and 2 are examples of how Craig Kelly's messaging reflects a post-truth approach to taking advantage of and actively managing the attention economy. Figure 1 is in response to Kelly being permanently banned from Facebook for repeatedly spreading misinformation surrounding COVID-19 and the efficacy of vaccines and treatments in violation of the platform's guidelines (Taylor and Karp, 2021). In an exemplar of attention economy management, Kelly's response is emotional and a form of clickbait, accusing the platform of inhibiting freedom of speech without providing the context of the platform's policies. There is a sense of urgency in the idea that democracy is threatened by the ‘other’ (in this case Victorian police) and an attempt to elicit a reaction. This evokes Harsin's concept of mediated time compression (2006, p. 101) where elites saturate networked publics with a near-constant stream of images and emotional appeals intended to create a fiduciary – or trust-based – affective connection rather than critical understanding or time-consuming deliberation of what is being asserted.

Craig Kelly’s reaction on Twitter to being banned from Facebook.

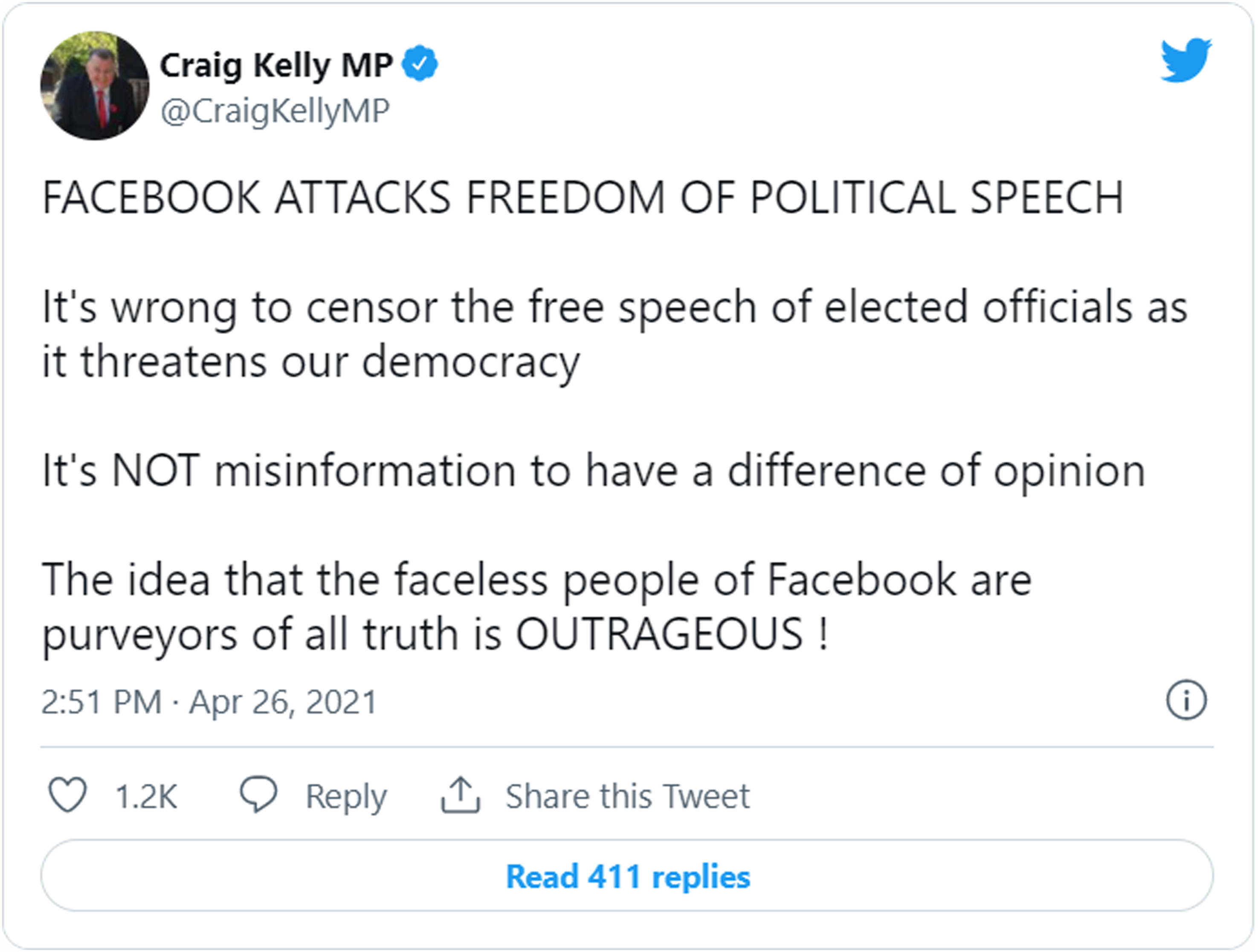

Craig Kelly’s response to a video allegedly depicting the use of excessive force by Victorian police in apprehending a person that was allegedly assumed to be attending anti-vaccination protests.

Figure 2 demonstrates an attempt to create this affective, post-truth connection between Craig Kelly and his audiences and thereby manage attention and participation. It relies on the sense of illusory truth noted earlier: the idea that if information is repeated again and again, we will come to believe it – even if it is incorrect (Fazio et al., 2015). The amplification of the idea of brutality amongst Victorian police was just one of many tweets along this theme. Figure 2 also speaks to the fast turnaround required in post-truth communications; it amplified a narrative quickly and advocated for urgent action on the issue – again, this highlights Harsin's ‘time compression’ dimension of post-truth politics in social media spaces.

Building further on the work of post-truth politics and attention management, we now explore how this is also reflected in the concept of participatory disinformation and information operations (Starbird et al., 2019). An important aspect of the communication style of Kelly and many other political elites is not only that they are able to manage attention but also promote and retain participation of audiences. Previous research discusses the idea of “strategic information operations…. [encompassing] efforts by individuals and groups, including state and non-state actors, to manipulate public opinion and change how people perceive events in the world” (Starbird et al., 2019, p. 1). Starbird et al. (2019) argue that the affordances of social media platforms provide fertile ground for information operations including mis- and disinformation to emerge. This can be seen on Twitter with retweets; this affordance allows for the rapid spread of messaging from state and non-state actors with limited ability to confirm their veracity or for traditional news media to undertake verification of sources or fact-checking in time to debunk or offer rebuttal.

Social media affordances also shape the participatory exchanges that occur between elite actors and their audiences (Starbird et al., 2023). Previous work viewed engagement between elite actors and their audiences to be either top-down or bottom-up (Benkler et al., 2018). More recent work suggests that participatory exchanges are better explained by the multi-step flow model, wherein political elites act as ‘opinion leaders’ in an iterative feedback loop that incorporates “conversation starters, active engagers, influencers, network builders and information bridges” (Starbird et al., 2023, p. 14). In our case study, Craig Kelly exemplifies this type of communication as he retweets a wide variety of internet users, ranging from other influencers and health practitioners that align with his views to his own followers.

We refer to Craig Kelly's social media posts using the terms misinformation and disinformation. This terminology is in line with how Kelly's posts have been characterised in previous work (Barry and Sanchez-Urribarri, 2022), as well as by the media and other politicians. Given his privileged role as a member of the Australian parliament, Kelly had access to the latest health advice and was well-positioned to understand the evolving scientific discourse around COVID-19 vaccines (Barry and Sanchez-Urribarri, 2022). While we do not claim to determine Kelly's intentions, our study examines how his posts are part of a broader environment of participatory disinformation, where the way unverified and potentially misleading information is received and acted upon by audiences plays a crucial role in the spread of disinformation.

Methods

Data collection

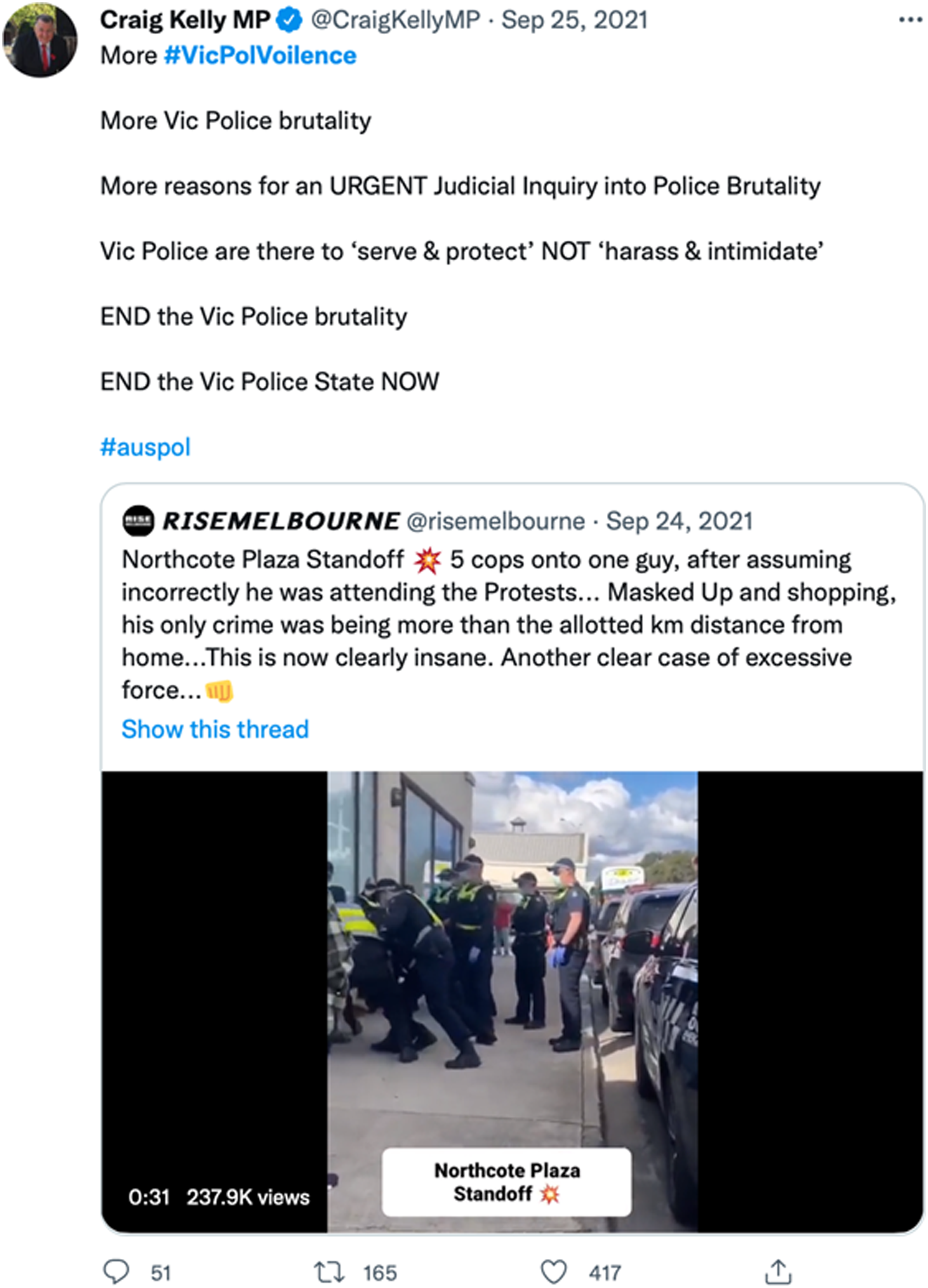

Three separate data collections were conducted for this report to answer the research questions previously outlined. Firstly, 4317 tweets from Kelly's timeline were collected via the Twitter timeline API dating from 1 May 2021 to 24 September 2021 – approximately six months of Kelly's Twitter activity (tweet volume per day shown in Figure 3). Herein, this dataset is referred to as the ‘Kelly tweets dataset’. This collection period was chosen because of limits placed by the Twitter API on the volume of data that researchers can collect, and because approximately six months of data was considered to be representative of Kelly's views on COVID-19 and vaccination. Secondly, we collected the replies and retweets of Kelly's original tweets, producing a dataset of 547,891 tweets encompassing these kinds of interactions with his account. Herein, this dataset is referred to as the ‘Kelly interactions dataset’. Additionally, May 2021 to September 2021 represented some of the most contentious discussions during the COVID-19 vaccine rollout as different demographics became eligible and there was a significant surge in cases in some states. Our sample captured this discussion from Craig Kelly and his followers.

Craig Kelly tweet volume over time (1 May 2021 to 24 September 2021).

Thirdly, ten days of tweet activity from the timelines of the accounts that interacted the most with Kelly were collected between 14 September 2021 and 24 September 2021. A total of 7063 accounts were selected on the basis of having a minimum of 10 interactions with Kelly's account, as measured by either direct replies to or retweets of Kelly's tweets. Any tweets that these accounts posted within those ten days became part of the dataset. This generated a dataset of 4,697,104 tweets, herein referred to as the ‘Timelines dataset’. This timeframe was chosen for logistical reasons; with the tweets of 7063 accounts to analyse, any longer collection window would have resulted in an unwieldy amount of data that would have been too computationally intensive to analyse given the scope of our research design.
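The account selection rule described above (at least 10 replies to or retweets of Kelly's tweets) can be illustrated with a minimal sketch. The input format, the function name `frequent_interactors`, and the toy records are assumptions made for illustration only; this is not the authors' collection code.

```python
from collections import Counter

def frequent_interactors(interactions, min_interactions=10):
    """Return accounts with at least `min_interactions` replies or retweets.

    `interactions` is assumed to be an iterable of (account_id, kind) pairs,
    where kind is "reply" or "retweet". Both kinds count equally, mirroring
    the selection rule described in the text.
    """
    counts = Counter(account for account, _kind in interactions)
    return {account for account, n in counts.items() if n >= min_interactions}

# Toy data (hypothetical account ids, not drawn from the study)
toy = (
    [("acct_a", "retweet")] * 12   # 12 interactions: selected
    + [("acct_b", "reply")] * 3    # 3 interactions: excluded
    + [("acct_c", "reply")] * 5
    + [("acct_c", "retweet")] * 5  # 5 + 5 = 10 interactions: selected
)
selected = frequent_interactors(toy)
print(sorted(selected))
```

Counting replies and retweets together is one reading of "as measured by either direct replies to or retweets of Kelly's tweets"; a stricter reading would require 10 of a single kind.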

To answer our research questions, we undertook descriptive statistical analysis of the Kelly tweets and Kelly interactions datasets, including the development of ‘impressions’ metrics imputed from the observed data – in this case, likes and retweets. At the time of data collection, impressions or tweet view counts were not available through the API. Thus, to understand the extent of exposure to Kelly's tweets, we needed to impute this using a standard quantitative heuristic as outlined in the next section.

We also used a combination of social network analysis and machine learning based bot detection. The network analysis involved applying the Coordination Network Toolkit (Graham, 2020) on the Timelines dataset to generate a network that maps coordinated retweet behaviour, known as a co-retweet network. This method derives from a technique developed specifically to detect political astroturfing on social media (Keller et al., 2020). The nodes in the co-retweet network are Twitter accounts that frequently interact with Kelly's tweets, and a link or edge between account A and account B means that both have retweeted the same tweet within 60 seconds of each other, at least twice. We used a community detection algorithm (Blondel et al., 2008) to classify the nodes in the networks into clusters that we can label and examine in further detail using a qualitative forensic analysis approach involving close reading of tweets and account profiles. By ‘qualitative forensic analysis’ we mean a systematic and detailed examination of the content within the coordinated retweet network to uncover underlying patterns, meanings, and likely motivations. This approach, like Hendrix and Morozoff's (2022) concept of ‘media forensics’ in disinformation research, involves an in-depth examination of the content of tweets, the nature of the interactions, and the characteristics of the account profiles involved in each cluster. By doing so, we take a principled qualitative approach that complements the digital methods to develop a ‘thick description’ (Geertz, 1973) of each cluster, providing a rich, detailed account that captures the complexity of social interactions and behaviours within the network.
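To make the edge definition concrete, the following is a minimal sketch of how co-retweet edges could be derived from retweet records. It is an illustration written in plain Python, not the Coordination Network Toolkit's implementation or API. The function name `co_retweet_edges`, the `(account, tweet_id, timestamp)` record format, and the reading of "at least twice" as "on at least two distinct tweets" are all assumptions.

```python
from collections import defaultdict
from itertools import combinations

def co_retweet_edges(retweets, window_seconds=60, min_co_retweets=2):
    """Build weighted co-retweet edges between accounts.

    `retweets` is assumed to be an iterable of
    (account, retweeted_tweet_id, unix_timestamp) records. Two accounts are
    linked when they retweeted the same tweet within `window_seconds` of each
    other on at least `min_co_retweets` distinct tweets (an interpretation).
    """
    # Group retweet events by the tweet that was retweeted
    by_tweet = defaultdict(list)
    for account, tweet_id, ts in retweets:
        by_tweet[tweet_id].append((ts, account))

    # For each account pair, record the distinct tweets they co-retweeted
    shared = defaultdict(set)
    for tweet_id, events in by_tweet.items():
        # Pairwise comparison is quadratic per tweet; fine for a sketch
        for (t1, a1), (t2, a2) in combinations(events, 2):
            if a1 != a2 and abs(t2 - t1) <= window_seconds:
                shared[tuple(sorted((a1, a2)))].add(tweet_id)

    # Keep only pairs that meet the minimum threshold; weight = tweet count
    return {pair: len(ids) for pair, ids in shared.items()
            if len(ids) >= min_co_retweets}

# Toy records (hypothetical accounts and tweet ids)
toy_retweets = [
    ("a", "t1", 0), ("b", "t1", 30), ("c", "t1", 500),
    ("a", "t2", 100), ("c", "t2", 120), ("b", "t2", 150),
]
edges = co_retweet_edges(toy_retweets)
print(edges)  # {('a', 'b'): 2}
```

In the toy data, accounts `a` and `b` co-retweet both `t1` and `t2` inside the window, so they form an edge of weight 2; `c` co-retweets with each of them only once and is dropped. A community detection algorithm such as the one cited above would then be run on the resulting weighted edge list.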

Assessing reach and engagement with misinformation from a political actor

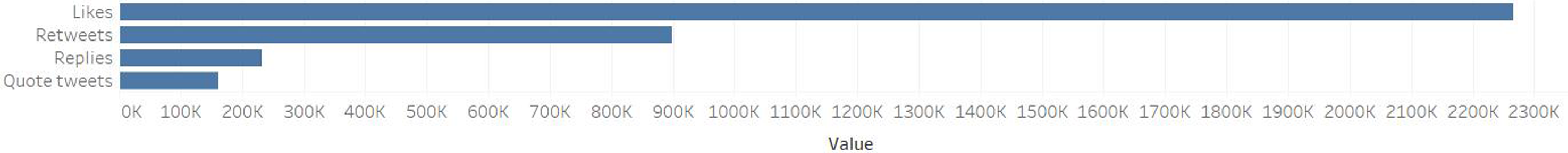

As discussed previously, Kelly's tweets have immense reach due to his privileged position as, at the time of data collection, an Australian MP and his ongoing large followership on Twitter and subsequently X. As Figure 4 shows, during the roughly six-month timeframe covered by our dataset, the Kelly tweets dataset reveals that Craig Kelly received 2,265,849 likes, 899,316 retweets from 48,390 unique accounts, 232,235 replies, and 161,103 quote tweets.

Sum of engagement metrics for Craig Kelly’s Tweets.

On Twitter, impressions were defined as the number of times a user saw a tweet, while engagement data is the sum of the retweets and likes that a tweet receives. To assess the reach of Kelly's tweets in terms of the number of impressions, we approximate impressions by dividing publicly known engagement data by a conservative estimate of an engagement rate (i.e., engagement divided by impressions) of 1%. The professional trade press on social media analytics promotes a view that an engagement rate of about 1% is a reasonable approximation for a moderately active Twitter account (The Online Advertising Guide, 2020). This approximation is derived from direct engagement with key accounts and users, and open polls where readers contributed their own personal engagement rate data. It should be noted that Twitter's successor, X, provides more transparent data regarding impressions. However, at the time of data collection and writing, impressions were not yet publicly available. As such, we have utilised the following equation to determine approximate impressions:

$$\text{Impressions} \approx \frac{\text{Engagement}}{\text{Engagement rate}} = \frac{\text{Likes} + \text{Retweets}}{0.01}$$
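As a worked illustration of this heuristic, the sketch below divides engagement (likes plus retweets) by the assumed 1% engagement rate, using the like and retweet totals quoted earlier for the Kelly tweets dataset. The function name `estimate_impressions` is hypothetical, and the resulting figure is simply what the heuristic yields for those totals, not a result taken from the paper.

```python
def estimate_impressions(likes, retweets, engagement_rate=0.01):
    """Approximate impressions as engagement / engagement_rate.

    Engagement is taken to be likes + retweets, as defined in the text.
    The default rate of 0.01 (1%) is the heuristic assumed in the text.
    """
    if not 0 < engagement_rate <= 1:
        raise ValueError("engagement_rate must be in (0, 1]")
    engagement = likes + retweets
    return engagement / engagement_rate

# Totals quoted in the text for the Kelly tweets dataset
likes_total = 2_265_849
retweets_total = 899_316

approx_impressions = estimate_impressions(likes_total, retweets_total)
print(f"Engagement: {likes_total + retweets_total:,}")
print(f"Approximate impressions: {approx_impressions:,.0f}")
```

Under these assumptions the estimate comes to roughly 316.5 million impressions. Because the estimate scales inversely with the assumed rate, a 2% rate would halve it, so the figure is best read as an order-of-magnitude indicator.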

Is there evidence of inauthentic engagement including bots and trolls?

To answer this research question, we turn to the Timelines dataset which, as described previously, contains a large-scale sample of tweets sent by accounts that frequently retweet or reply to Craig Kelly's tweets. This enables us to get a deeper understanding of who – or what – these accounts are that interact with Kelly on a regular basis. We define trolls as primarily human-controlled actors on social media platforms who act in a deceptive, destructive, and/or disruptive manner, often with an intent to harm and propagate abuse (Kim et al., 2019). On the other hand, we define social bots as computer-controlled social media accounts that often deceptively pose as humans using fabricated personas, and which seek to disrupt, bombard, and/or influence public discussions online.

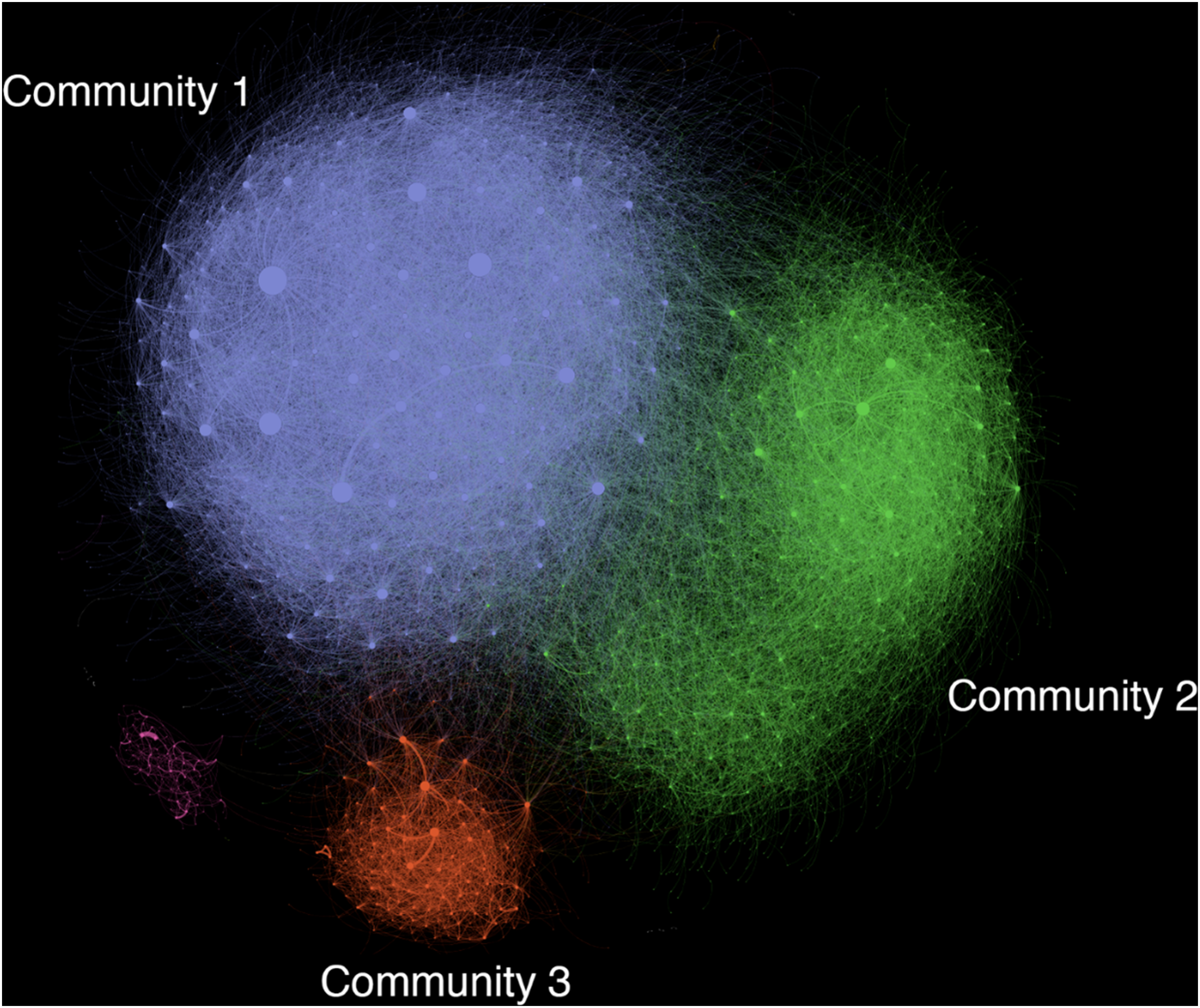

Figure 5 shows a network of coordinated retweet behaviour, known as a ‘co-retweet’ network (Graham, 2020), as described previously. Although lone trolls and bots are common, it is difficult to discover them systematically within such a large dataset. Therefore, we used a co-retweet network analysis approach to detect clusters of accounts that frequently amplify the same content at the same time. While co-retweeting often reflects genuine online campaigning such as online activism and fandom networks, it is also a signature behavioural pattern of malicious actors who coordinate for the purposes of ‘brigading’ and harassing people and/or platform manipulation, such as trying to force certain hashtags or topics to trend (Massanari, 2017). We observe three main loosely coordinated retweet communities, as shown in Figure 5. We now describe each community and the key actors within them.

Co-ordinated retweet network of accounts that frequently engage with Craig Kelly MP's Twitter account.

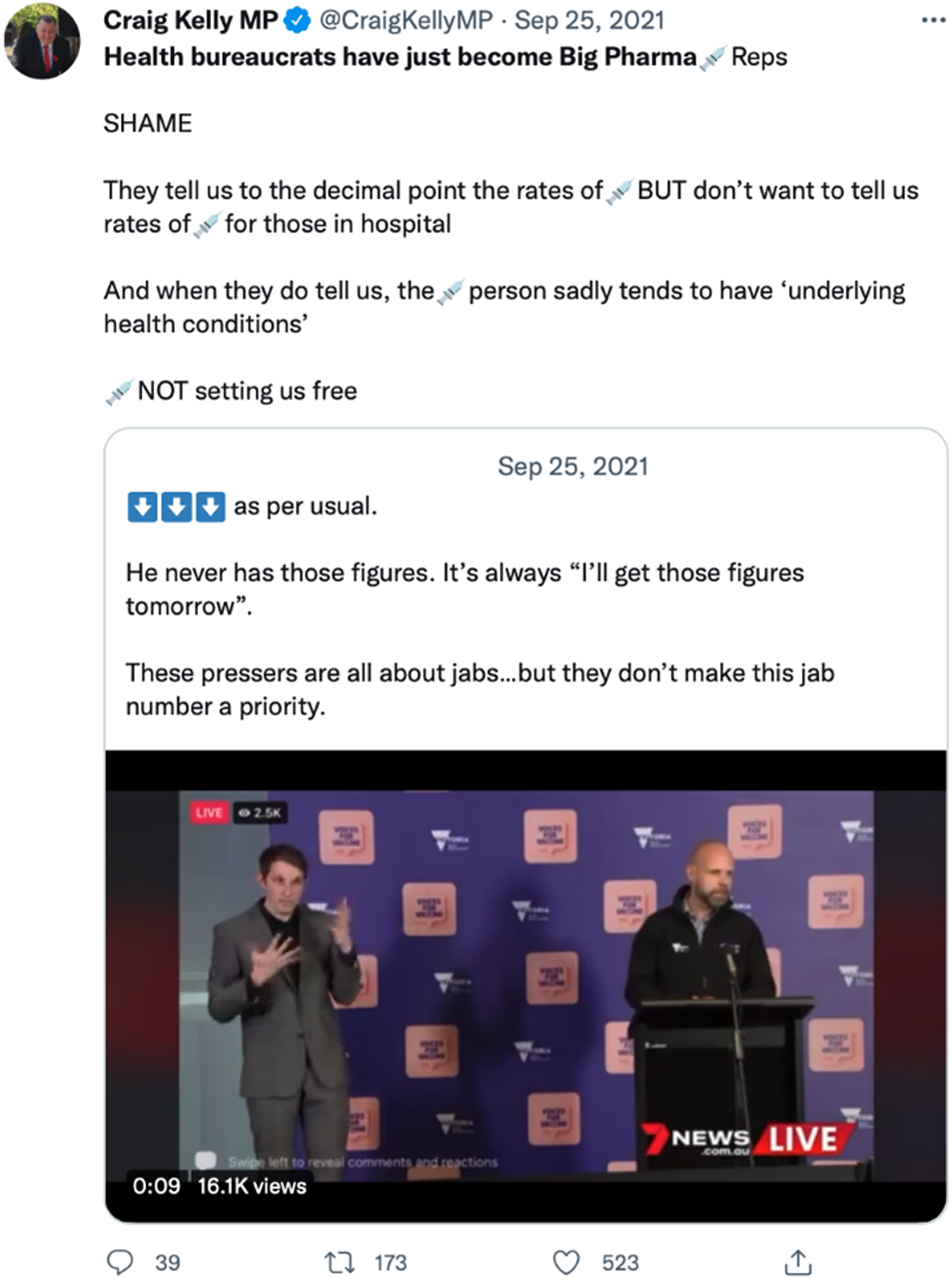

Community 1 comprises 49.9% of all nodes in the network (n = 1695) and consists of fringe far right activists. Qualitative analysis undertaken by the researchers, including close reading of posts, determined that these accounts are hyperpartisan and strongly opposed to the former Victorian Premier Daniel Andrews and the Australian Labor Party. This community shares content that undermines the efficacy of COVID-19 vaccines and amplifies narratives that promote anti-lockdown attitudes and alleged brutality by Victorian Police against lockdown protestors. They are loosely coordinated in the sense that they retweet the same content repeatedly within a short time of each other. Figure 6 shows a prototypical Craig Kelly tweet that is retweeted by Community 1. We observe that Kelly himself is quote tweeting a fringe account and framing the content to explicitly undermine the Victorian health authorities, who he argues have ‘just become Big Pharma [needle emoji] Reps [representatives]’ (Figure 6).

Craig Kelly Tweet from 25 September 2021 demonstrating an example of content shared by community 1 discussing big pharma.

Community 2 consists of 1356 nodes (39.9% of total) and is a COVID-19 anti-vaccination and anti-mandate community with a strong United States (US) and United Kingdom (UK) focus. The accounts are fringe activists, often with anonymous profiles, who cite the Australian government as an example of authoritarian rule that imposes and enforces vaccine mandates. Accounts in this network try to stoke civil unrest by tweeting about the ‘uprising’ of citizens around the world who are opposed to vaccine mandates and enforcement of lockdown measures by police. They amplify narratives that citizens around the world are fighting for ‘freedom’ against what they believe are tyrannical abuses of human rights by governments and health authorities. This evokes patterns that are emblematic of post-truth civil discourse, in that people are more inclined to believe what they feel is true, rather than what is factually correct or even acknowledging institutional forms of knowledge production (Harsin, 2018) that are ‘sound’ because they serve societal purposes and produce reliable knowledge (Laufer and Nissenbaum, 2023).

Community 3 is a coordinated bot-like network containing 216 nodes that spreads anti-Chinese Communist Party (CCP) and COVID-19 conspiracy theory content. Figure 7 shows a typical profile of accounts in Community 3, which reveals that this is a coordinated campaign called ‘Himalaya Australia Digital Soldiers’. The account shown in Figure 7 tweeted 92,100 times between March 2021 and March 2022, or an average of approximately 250 tweets per day. The profile bio information is explicitly anti-CCP and conspiratorial in tone, repeating the unsubstantiated claim that COVID-19 is a bioweapon purposely designed by the CCP.

Typical example of account profile in community 3, showing the coordinated bot-like network of the ‘whistleblower movement’.

Almost all the accounts in Community 3 have the same profile branding as the account shown in Figure 7. Indeed, these accounts are part of the ‘Whistleblower Movement’ or ‘Whistleblower Revolution’ (爆料革命), a campaign funded by billionaire Guo Wengui (also known as Miles Guo, amongst other aliases), a Chinese businessman in exile in the United States (Hilgers, 2018). Guo has ties to Trump's political strategist Steve Bannon and together they have been criticised for masterminding a sprawling network of online disinformation that spreads baseless conspiracy theories, anti-CCP sentiment, anti-vaccination rhetoric, and false allegations of election fraud in the 2020 US Presidential election (Whalen et al., 2021). This billionaire-backed information operation has been reported on previously by the Australian Strategic Policy Institute (ASPI) in relation to state-aligned disinformation relating to the Hong Kong protests (Uren et al., 2019). The specific operation discovered in Community 3 goes by the name ‘澳喜特战旅’ (Aoxi Special Forces Brigade). These accounts post and amplify content in English and Chinese, and they specifically amplify Australian right-wing accounts including Craig Kelly. Revisiting Figure 2, we observe an example of a tweet authored by Kelly which, according to our analysis, was amplified and retweeted in a coordinated manner by accounts within Community 3. Although he is not the focus of their retweet activity, Kelly's tweets about social unrest and advocating against lockdowns are picked up by these bot-like accounts to serve their own purposes and narratives.

Discussion and conclusion

We have demonstrated that Craig Kelly was a central part of a participatory disinformation campaign on Twitter and a key driver of attention to, and participation around, unverified and misleading health information within this network. His tweets were amplified and strategically addressed by a variety of communities, some of which are engaging in inauthentic activity but more generally in post-truth communication. Our findings suggest that Twitter, and its successor X, has become a space that is poorly suited for democratic exchanges of information – and for deliberation – but instead is heavily influenced by users competing for attention and battling algorithmically against clickbait, trolling, and low-quality content (Harsin, 2018). This, in combination with Kelly's promotion of unverified medical and vaccine-related information while a member of parliament, is potentially damaging to public health and serves to erode trust in scientific and institutional authority. In this way, it represents an anti-deliberative, public relations-driven form of attention management that largely precludes the possibility of correction or verification. Moreover, it contributes to what Harsin describes as the fiduciary aspects of post-truth politics that eschews trust in society-wide authoritative truth-tellers in favour of populist micro-truth-tellers (2018) such as Kelly.

This privileged communication approach is anti-deliberative in the sense that audiences are bombarded with a near-constant stream of emotive imagery and text invoking the idea that citizens are at war with government and established institutions of the state and inviting them to take action and mobilise. Kelly's Twitter account was centrally important to his political strategy while still a member of parliament and continues to serve as a platform to gain a larger audience – this PR-driven form of political messaging thus has a life of its own well beyond the term of office. Our findings demonstrated that Kelly's tweets containing misinformation about COVID-19 and lockdown measures (Dalzell, 2021; Australian Broadcasting Corporation, 2021; Taylor and Karp, 2021) were amplified by his audience to reach up to hundreds of millions of screens. While Twitter is comparatively a small platform in terms of user population, it has a disproportionately important role in the global media ecology because of its focussed concentration on influential actors in the public sphere – journalists, politicians, celebrities, and news media (Fournier-Tombs, 2022). Kelly's Twitter (now X) account is therefore a uniquely important node in the expansion of the post-truth condition in Australian political discourse, in part because of the affordances of platforms such as Twitter that privilege attention and speed over veracity or quality of content.

As our findings show, Kelly's social media activity strategically addresses and attracts a highly engaged audience of predominantly far-right activists, anti-vaxxers, and conspiracy theorists who help to mobilise and amplify these post-truth narratives. This affords the opportunity for such fringe actors to take dangerous ideas and ‘trade up the chain’ (Marwick and Lewis, 2017) by trying to get the attention of mainstream media, celebrities and politicians, to make these beliefs appear more credible and reach a broader audience. As Graham et al. (2021) argue, tagging influential actors in the public sphere by mentioning them in tweets constitutes a form of ‘reverse agenda setting’, where fringe actors who are on the periphery exploit the affordances of social media to gain the attention of elites who then amplify their messages to mainstream audiences. Kelly already broadcasted to a mainstream audience given that he has spread misinformation in parliamentary speeches (Dalzell, 2021), which resulted in a reprimand by the former Prime Minister Scott Morrison (Henderson, 2021). This combination of political privilege and social media virality means that Kelly's impact on the spread of unverified and potentially misleading content is singularly important.

Contrary to the ‘hypodermic needle’ model of communication (Bineham, 2009), in which misinformation sent by political elites ‘injects’ belief changes in those exposed to it, our study identifies a more nuanced and consequential model of influence. Our findings suggest that politicians such as Craig Kelly both frame and are strategically drawn upon as resources by coordinated mis- and disinformation campaigners. Our discovery of large-scale coordinated communities of anti-vaccination and anti-lockdown far right activists shows how Kelly's tweets are heavily quote tweeted and retweeted within these communities to build up ‘evidence’ to support their conspiracy theories. At the same time, Kelly actively retweets and quote tweets members of these communities who are otherwise at the fringe of online discussions, driving their messaging closer to the core of mainstream attention. This evokes Starbird's elaboration of the feedback loop (Starbird, 2021) between political elites and their audiences comprised of fringe and civil society actors. In this way, politicians such as Kelly exploit the audience interplay and affordances of social media to frame issues for their audiences, who then strategically take up, modify, and act upon these frames. In turn, this generates viral content that captures the attention of political elites, reinforcing the frame and building a sense of shared grievance through repetition and illusory truth (Harsin, 2018), a form of post-truth communication.

Starbird's notion of ‘participatory disinformation’ (2021) hereby usefully characterises the exchanges we observe in our analysis whereby coordinated communities of anti-vaxxers, conspiracy theorists, far right activists, and even billionaire-backed foreign information operations are positioned in a participatory feedback loop with politicians such as Craig Kelly. This highlights another key discussion point of our study: it is evident from analysing these practices that Kelly is a key node in a participatory disinformation feedback loop. Both Kelly's messaging and that of his audience adopt and repurpose domestic disinformation from the United States, in particular rhetoric that undermines trust in medical and liberal democratic authority. The messaging has all the hallmarks of US-style conspiratorial disinformation, as defined in the literature (Starbird, 2021): that a shadowy cadre of ‘Big Pharma’ elites are hiding the truth about vaccine harm and have captured political parties; that democratically elected governments are tyrannical and should be divested of political authority; and consequently, that civil unrest and political violence are warranted. The January 6 Capitol riots are a spectre that haunts our analysis in this paper, given the similarity between what we observe in our analysis and the dynamics and messaging during the 2020 US Presidential election. Allowing this disinformation and these participatory dynamics to play out largely unchecked on Twitter provides a breeding ground where extremism festers and takes root.

We emphasise the importance for social media platforms to remain cognisant of the power of state actors and political elites when implementing moderation practices, as the privileged positions they occupy can lend credibility and provide large-scale exposure to otherwise fringe ideas that would remain on the periphery of public discourse. Our analysis, informed by findings in this paper and supported by broader literature on the global shift towards far-right and populist governments (Allen, 2023; Torner and Ivaldi, 2023), suggests that the affordances of social media may contribute to outcomes that are not in the interest of population health and the normative ideals of liberal democracy. Craig Kelly's account at the time of data collection was a forerunner to the concerning anti-establishment rhetoric that now exists on X, the successor to Twitter. The platform in its current iteration is representative of anti-public sphere discourse with the algorithmic highlighting of misinformed content and ‘shadow banning’ of authentic voices (Davis, 2021).

Whilst no longer a member of parliament, Kelly has provided an exemplar of how politicians as elite actors leverage their own trust-based authority in the public sphere to spread mis- and disinformation on social media well beyond their term of office. We recommend that social media platforms consider specialised rules and policies along with targeted, locally contextualised moderation to mitigate the consequences of politicians’ participatory interplay with audiences and mainstream media. In 2021, the Digital Industry Group Inc (DIGI) developed a voluntary Code of Practice on Misinformation and Disinformation for social media platforms, of which Twitter was one of eight signatories, to outline the steps they were taking to reduce mis- and disinformation. However, in November 2023, X (formerly known as Twitter) was removed as a signatory after committing a serious breach of the code by failing to respond to a complaint (Taylor, 2023). The complaint was related to X's removal of a feature which allowed users to report posts as mis- or disinformation (Taylor, 2023). In addition to the voluntary code of practice, the Australian Government is, at the time of writing, considering public submissions to inform a potential new bill which proposes new powers for the Australian Communications and Media Authority to combat misinformation.

An example of locally relevant moderation practices would be a collaboration between Australian regulators such as the Australian Communications and Media Authority and social media platforms to develop enforceable industry codes to address domestic disinformation of this nature. We also acknowledge that moderation needs to tread a careful line between harm reduction and freedom of speech. Case studies in domestic disinformation such as Craig Kelly are especially pertinent because Australian politicians are afforded protection for authorised electoral content (Office for the Minister for Communications, 2023). Future research should investigate how regulators and policymakers can re-evaluate the scope and mandate of industry codes, to recognise, intercept and take action to prevent anti-deliberative forms of political discussion that result from the participatory feedback loop of politicians, elites, and their audiences on social media.

Footnotes

Acknowledgements

We would like to thank Gavin Xun Zhou from the RMIT for his valuable insights and feedback on this paper. We would also like to thank the Digital Media Research Centre for their ongoing support. We also acknowledge the support of the Australian Centre of Excellence in Automated Decision-Making and Society (ADM + S) where Graham is an Associate Investigator.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This work was partially funded by the Australia Institute's Centre for Responsible Technology, Canberra.

Timothy Graham receives funding from the Australian Research Council for his Discovery Early Career Researcher Award (DE220101435), ‘Combatting Coordinated Inauthentic Behaviour on Social Media’.
