Abstract

While service industries like healthcare and elder-care increasingly use technology to address staff shortages, its potential to support consumers experiencing vulnerability remains under-explored and under-utilized. For instance, elderly people who are experiencing vulnerability due to language loss or reduced proficiency in communicating in their national language can be supported by voice-based interfaces, such as robots, that can communicate with them in their native regional language. However, responses to such regional language adaptations, as well as the potential managerial benefits of tailoring service offerings to consumers with specific linguistic needs, remain unclear. This study begins to explore this area and reveals that elderly consumers prefer to interact with robots in their regional language rather than in the national language. We also find that caregivers are willing to pay a 30% premium for a companion robot that can use a regional language, and that regional language capabilities foster trust in robots in the general population, too. The theoretical contribution of the study draws from linguistics to show that these positive effects arise because regional synthetic speech is processed more fluently. The study also highlights human-likeness as a crucial boundary condition, as regional language adaptations unexpectedly backfire when delivered with machine-like voices. For service organizations, regional adaptations in voice-based interfaces present a unique opportunity to better serve linguistically vulnerable consumers while also fostering more positive attitudes in the general population, provided there is a high degree of human-likeness.

Introduction

Over the past decades, technology has radically changed the way consumers interact with firms and frontline employees at the various points of contact along the customer journey (Ostrom et al. 2021). In particular, service industries like healthcare and elder-care are increasingly using technology to address staff shortages, which the World Health Organization (2023) projects will amount to a shortfall of up to 10 million healthcare workers worldwide by 2030. Yet these technological advancements also come with their own challenges, as not all consumers are equally equipped to benefit from them. Some consumers, and especially the elderly, can feel socially excluded and inadequately skilled when having to interact with technology, experiencing vulnerability, which is defined as a “dynamic state of powerlessness and susceptibility to harm” (Hermann, Yalcin Williams, and Puntoni 2023).

However, at the same time, technology can also serve as an ally that assists and supports consumers on their service journeys. Voice-based interfaces, for instance, offer a promising solution to make services more accessible because they enable more natural interactions with organizations. Consumers can engage with these systems in ways similar to interacting with human employees, but without the time and effort limitations typically associated with human staff (Hermann, Yalcin Williams, and Puntoni 2023; Zierau et al. 2022). An example of a voice-based interface that is increasingly used is the embodied robot, which is defined as “information technology in a physical embodiment, providing customized services by performing physical as well as nonphysical tasks with a high degree of autonomy” (Becker, Efendić, and Odekerken-Schröder 2022; De Keyser et al. 2019; Jörling, Böhm, and Paluch 2019, p. 405; van Doorn et al. 2017).

One important advantage of voice-based interfaces is their versatility regarding language. This enables seamless interaction with diverse populations across linguistic boundaries, one example being the fluency of Google’s voice assistant in regional Indian languages (Chahal and Mahajan 2024). Such versatility can be of particular importance for consumers experiencing linguistic vulnerability, such as migrants or members of linguistic minorities, as well as multilingual elderly people who over time experience age-related loss (attrition) of later-acquired languages (Hummert, Gartska, and Shaner 1995). A consequence of this attrition is that these elderly people retreat to their first language, which may be a regional language, especially given demographic patterns in which older adults remain concentrated in rural areas where regional languages are still dominant. Multilingual robots can therefore not only be a necessary precondition for the communication that is crucial for service provision but can also accommodate consumers experiencing linguistic vulnerability, such as elderly consumers with language loss.

However, insight into how such consumers react to regionally adapted voice-based interfaces lags behind the rapidly advancing technological developments. In general, even though it has long been accepted that language is pivotal for smooth communication and successful interactions on the service frontline, prior research has paid remarkably little attention to language use in human-to-human interactions (Holmqvist, Van Vaerenbergh, and Grönroos 2017), and even less to the use of synthetic language by bots (Guha et al. 2021). While the previously established preference for being served in one’s native language by humans (Holmqvist and Van Vaerenbergh 2013) likely transfers to robotic service, it is unclear whether this also holds for regional language. Here, regional language refers to a language that is spoken in a specific geographical region (in daily communication, literature, media, and education) and may differ significantly from the country’s official national language in terms of syntax, semantics, and morphology (Carlson and McHenry 2006). 1

So far, research on the potential benefits of enabling bots to speak regional languages is very limited (Guha et al. 2021), and what research there is appears inconclusive, with robots and virtual agents imbued with regional speech being viewed as warmer and more likeable, and yet at the same time as less competent (Kühne, Rosenthal-von der Pütten, and Krämer 2013; Kühne et al. 2024; Lugrin et al. 2020; Sievers and Russwinkel 2023; Torre et al. 2018). Adding to the risk of regional language implementations, regional language capabilities could raise consumer expectations regarding the robot’s human-like abilities, creating the risk of a backfire effect if the robot fails to meet these expectations (Torre and Le Maguer 2020). In general, a robot speaking regional language could be perceived as more human-like, potentially increasing its eeriness or triggering feelings of discomfort (Kühne et al. 2024).

These contradictory and inconclusive findings pose a dilemma for managers. While many companies express a commitment to social objectives such as inclusion and equitable service delivery (Hermann, Yalcin Williams, and Puntoni 2023), such uncertain benefits for potentially small consumer segments, and the above-highlighted risks, may make the business case of serving customers in their regional languages difficult to justify. Moreover, the quality of execution is critical, as has been seen with negative user responses to AI misunderstanding localized language (Chahal and Mahajan 2024), all of which adds to the development costs of effective regional language adaptations.

To address this tension, our research examines whether regional language adaptations foster or rather jeopardize consumers’ trust in a robot and their willingness to pay for it, and whether such adaptations backfire when there is a mismatch with a robot’s human-likeness. We thereby examine the potential of regional language adaptations to foster both inclusion and value creation, in particular in elder-care settings, in which three of our five studies are situated. In elder-care, there is a growing need for robots to speak regional languages, due to the combined challenges of increasing staff shortages and the growing population of elderly people experiencing language loss and consequent vulnerability.

Importantly, we not only show that elderly consumers prefer to interact with a robot in their regional language rather than in the national language but also that potential caregivers are willing to pay a premium of 30% for a companion robot that speaks the regional language of their elderly family member. Regional adaptations also show benefits in the general population, which trusts a robot speaking the regional language more than its national-language speaking counterpart because regional synthetic speech is processed more fluently by consumers with a background from the same region. However, we also highlight an important boundary condition: the robot must have human-like features, such as human-like morphology and voice. Moreover, regional language adaptations can even backfire when the robot’s voice does not resemble a human voice, because the fluency of processing the regional language is undermined when the language is relayed in a machine-like voice.

For service organizations, integrating regional adaptations into voice-based interfaces presents a unique opportunity to better serve elderly consumers experiencing linguistic vulnerabilities and their caregivers, while fostering more positive attitudes in the general population, too. Such adaptations can also be viewed in the light of a growing recognition that design decisions carry ethical and inclusivity implications (Lobschat et al. 2021; Skarli 2021). Yet we also caution that the benefits from regional language adaptations are contingent on the technology having human-like features.

Summing up, the key contributions of this research are as follows:

(1) To explore the effect of regional language adaptations on the extent to which consumers prefer, and trust, a robot. This research responds to calls in the literature for more insights into the “benefits of bot/service robots with accents or dialects” (Guha et al. 2021, p. 36). In doing so, it bridges three fields that until now have been considered as being distinct—speech technology, linguistics, and leveraging service technology to mitigate consumer vulnerability—and develops and empirically tests a novel theoretical framework building on all three fields.

(2) To shed light on how processing fluency—an under-researched construct from the field of linguistics (Alter and Oppenheimer 2008b; Dragojevic 2020) that up until now has only been applied to human speech—can shape attitudes toward technology-based services using synthetic language, and the willingness to pay for it.

(3) To establish perceived human-likeness as an important boundary condition for reaping the benefits of regional language adaptations. As an important theoretical implication, this research shows that when applying the fluency principle to synthetic speech, the human-like qualities of the voice and of the device must be considered as well.

Our framework is tested in five studies, two of which are field studies involving real interactions between a human respondent and an embodied robot, where we also observe actual behavior. This study set-up responds to calls in the literature to investigate how clients react to “robots in the wild” instead of in the controlled environment of a laboratory (Jung and Hinds 2018).

Robots and Language Use on the Organizational Frontline

Language clearly matters in interpersonal communication at the organizational frontline (Holmqvist, Van Vaerenbergh, and Grönroos 2017). However, the literature is still scarce on how language style (Kumar et al. 2022) and language use, whether human or synthetic, affect the consumer’s experience at the various contact points along the customer journey (Kim and Park 2023). Previous research does show that, with some exceptions (Holmqvist et al. 2019), consumers fluent in more than one language prefer to be served in their native language, whether by robots (e.g., Salem, Ziadee, and Sakr 2014) or human frontline employees (e.g., Holmqvist and Van Vaerenbergh 2013). One reason for this is the stronger emotional response triggered by one’s native language, as evidenced by more emotion-related brain activity (Huang and Rau 2023).

For elderly individuals experiencing second-language attrition, being addressed in their native language is particularly important. The gradual decline in the retention of second-language vocabulary and a diminishing grasp of grammatical structures leads such individuals to increasingly rely on their native language (first-language reversion) and to encounter challenges in utilizing their second language (Hummert, Gartska, and Shaner 1995). When they are no longer able to navigate in an environment where the second language is dominant, the elderly can experience a state of vulnerability, and feel powerless and socially excluded. Moreover, they can experience loneliness and be susceptible to risks and (unintentional) harm due to misunderstandings with caregivers. Voice-based interfaces with fine-grained speech technology delivered in a regional language hold great promise in overcoming the issues arising from such language attrition.

Yet findings in prior literature on how such regional adaptations are received are inconclusive, ranging from their having no effect, to regional speech positively enhancing the robot’s perceived social skills and warmth, to negative effects where a robot speaking in a regional language was viewed as being less competent and trustworthy than one speaking the national language (Kühne, Rosenthal-von der Pütten, and Krämer 2013; Kühne et al. 2024; Lugrin et al. 2020; Sievers and Russwinkel 2023; Torre et al. 2018). In human-to-human service encounters, humans speaking a regional language were viewed as less competent, unless the fit with the buyer’s own dialect was high (Mai and Hoffmann 2011). Therefore, implementing regional language in voice-based interfaces can also come with a downside.

Do Consumers Prefer Robots Speaking Their Regional (vs. National) Language?

For our theorizing, in line with previous literature on voice-based interfaces in service provision, we draw on insights from the field of linguistics (e.g., Zierau et al. 2022). Literature in the field of linguistics suggests that processing fluency is an important determinant in forming favorable or unfavorable language attitudes. Processing fluency is defined as the “ease or difficulty listeners experience processing a person’s speech” (Dragojevic 2020, p. 159). When a listener engages in the cognitive task of speech processing, the effort that is required to complete that task produces a corresponding metacognitive experience that can range from highly fluent (effortless) to highly disfluent (effortful) (Alter and Oppenheimer 2008b). One source of disfluency can be the perceptual difficulty that can arise if the speech is not in one’s native tongue (Kim and Park 2023).

According to the feelings-as-information theory, people use such metacognitive experiences as a heuristic for evaluating the stimulus itself. As highly fluent, easier processing is a more pleasant experience than disfluent processing, individuals tend to infer that the stimulus is more trustworthy, familiar, or preferable—not because of its objective qualities, but because of the positive affect associated with fluent processing (Alter and Oppenheimer 2008a; Schwarz et al. 2021; Schwarz and Clore 1983, 2003). Due to lower linguistic fluency, non-native speakers of English have been shown to provide lower ratings of US hotels than native speakers and indicate a lower willingness to pay for their services (Kim and Park 2023). Conversely, fluent processing has been found to increase the liking of, and preference for, advertisements, products, and brands (Schwarz et al. 2021).

While the previous literature has so far only examined the processing fluency of human speech (e.g., Dragojevic and Giles 2016), we expect that the fluency principle can also be applied to synthetic speech spoken by an embodied robot. For speakers of a regional language, synthetic regional speech should be more fluently processed, requiring less effort than processing national-language synthetic speech, which in turn should positively affect consumer attitudes and preferences.

Such positive effects of regional language use on attitudes and preferences can also be theoretically grounded in social categorization and intergroup processes. Research on human-to-human interactions shows that conversing in a regional language can lead to the speaker being categorized as a member of the listener’s in-group when both originate from the same region (Mai and Hoffmann 2011), resulting in a more positive evaluation. Previous literature on human–robot interactions has shown that robots presented as in-group members (e.g., based on ethnicity or team assignment) similarly elicit more positive reactions (e.g., Vanman and Kappas 2019). However, we expect that the degree to which regional speech can generate a strong or enduring in-group categorization in human–robot interactions is limited. This is because, unlike human speakers, robots lack the biographical grounding—such as being born, raised, and socialized within a specific region—that imbues regional language with social meaning. As a result, the in-group categorization likely remains fragile or superficial, as the robot may be perceived as lacking the categorical legitimacy required for genuine in-group membership (Hornsey and Hogg 2000).

Another theory relevant for the effects of robot regional speech on consumer attitudes and preferences is the “uncanny valley theory” (Mori, MacDorman, and Kageki 2012), which suggests that more human-like robots—that is, robots that resemble a human in embodiment, behavior, and/or voice—elicit a sense of eeriness. So in our context, the robot’s ability to speak the regional language could increase its perceived human-likeness and, as a consequence, evoke unsettling, negative downstream responses. However, so far, there is no direct empirical evidence for this effect (Kühne et al. 2024).

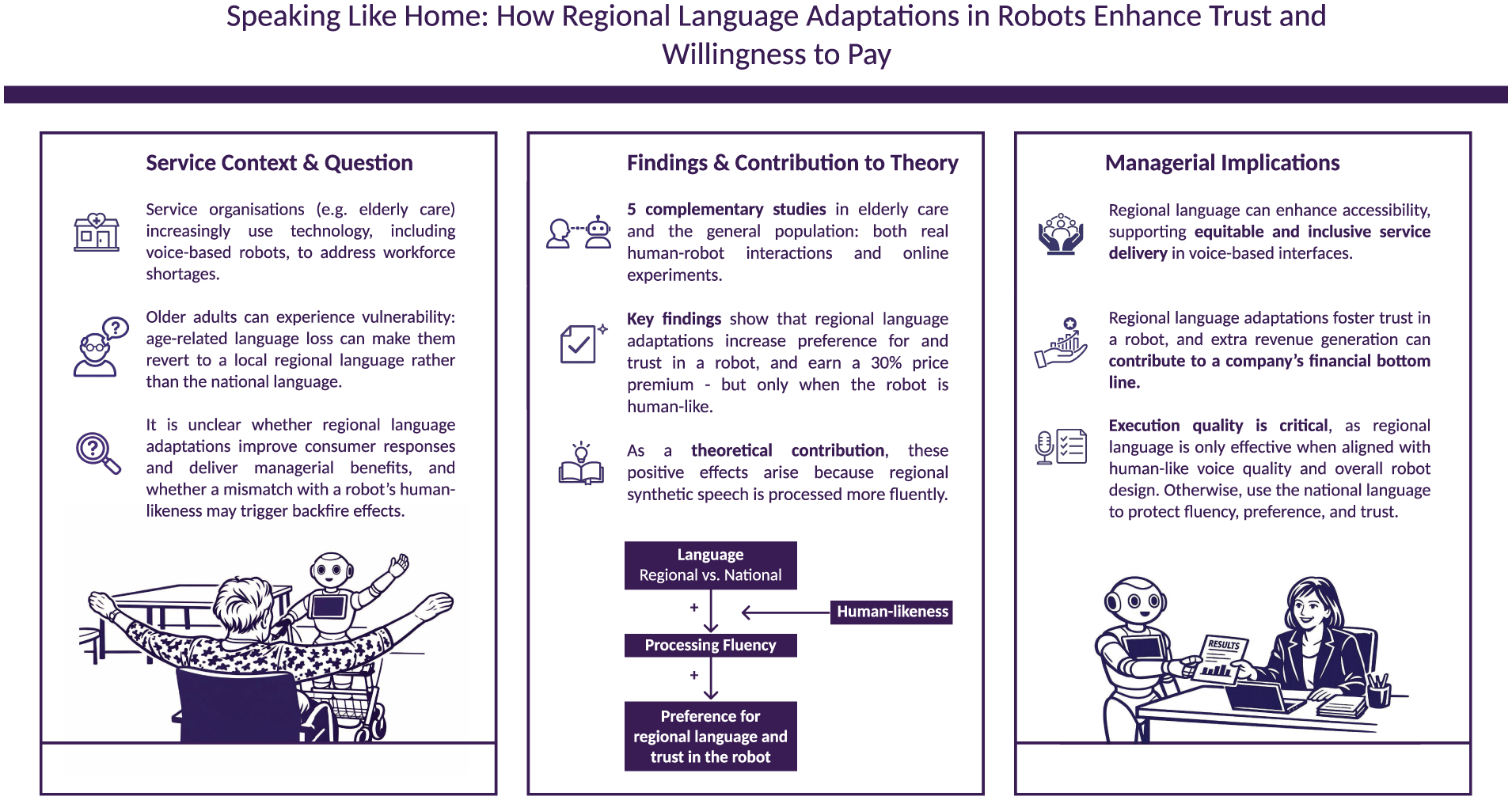

We therefore focus on processing fluency as the core explanatory mechanism in our framework (see Figure 1). Building on the feelings-as-information theory that metacognitive experiences are used as a source of information like any other source, we expect that the higher processing fluency of regional language compared with that of national language positively affects consumer attitudes and preferences (Dragojevic 2020; Schwarz et al. 2021). As managerially relevant service outcomes, we focus on consumers’ preference and willingness to pay for a robot with regional language capabilities, which are crucial elements in any business case for regional language adaptations, in addition to meeting the social objective of more inclusive service delivery (Hermann, Yalcin Williams, and Puntoni 2023). Building on the findings of Kim and Park (2023), which have related higher linguistic fluency to a higher willingness to pay for hospitality services, we expect speakers of a regional language to pay a premium for a robot with regional language adaptation.

Figure 1. Conceptual model.

Moreover, we examine trust as a fundamental aspect of communication, in particular in a healthcare environment, and as an important prerequisite to make interactions with machines accepted and successful (e.g., Huang and Rust 2021; Torre et al. 2018). Given that processing fluency elicits positive affect (Kim and Park 2023; Schwarz and Clore 1983, 2003), it also should lead to higher levels of trust when a robot speaks the person’s regional language rather than the national language. It is therefore hypothesized:

H1: Consumers (a) prefer a robot speaking their regional (vs. national) language, and (b) trust it more.

H2: Synthetic speech in a consumer’s regional (vs. national) language is more fluently processed, resulting in higher consumer preference and trust.

Does Anthropomorphism Ease Processing of Regional Synthetic Speech?

One important element affecting the acceptance of a robot is whether it prompts anthropomorphic interpretations, whereby people attribute human-like mental states—such as emotions or intentions—to the robot (Epley, Waytz, and Cacioppo 2007; Rothstein et al. 2021). A key factor in shaping such anthropomorphic interpretations, or anthropomorphism, is the human-likeness of the robot’s sensory and behavioral cues. In terms of embodiment, this relates to the extent to which the robot replicates human morphology and expressive capabilities, such as proportionate limbs, eye gaze, facial expressions, and smooth movement (Castro-González, Admoni, and Scassellati 2016; Lee and Hahn 2025; van Pinxteren et al. 2019). A human-like synthetic voice approximates natural human speech in prosody, intonation, and emotional tone, facilitating anthropomorphic interpretations.

Previous literature has extensively studied the role of robots’ human-likeness on acceptance, yielding mixed results (for an overview, see Blut et al. 2021). On the one hand, as mentioned above, anthropomorphic interpretations can trigger a sense of eeriness, negatively affecting trust in the robot (Mende et al. 2019; Mori, MacDorman, and Kageki 2012). On the other hand, research has found that consumers respond with increased trust, intention to use, and enjoyment to robots with human-like behavioral characteristics because they can relate easily to them and bond with them. A robot voice that sounds human, rather than robotic or monotonous, has been found to increase perceived fluency and engagement in human–robot interaction (Li, Broz, and Neerincx 2023; van Pinxteren et al. 2019).

Whether eliciting anthropomorphism enhances or jeopardizes the potential positive effects of regional speech adaptations has so far not been examined. The literature investigating accents in embodied robots speculates that robots that master accents may raise consumer expectations regarding the robot’s human-like abilities, and warns that any potential positive downstream effects will be undermined if the robot does not meet these expectations (Torre and Le Maguer 2020). Therefore, if the design of a robot does not align with these expectations—for example, it has a machine-like appearance and/or a robotic voice—this may jeopardize the positive effects of imbuing robots with regional speech on consumer preference and trust.

Moreover, a voice that does not resemble the human voice is also likely to undermine processing fluency. Similar to listening to foreign-accented speech (Dragojevic 2020; Dragojevic and Giles 2016), hearing a less familiar machine-like voice communicate in the consumer’s regional language may neutralize any expected increase in processing fluency over communicating in the national language. We therefore expect the positive downstream consequences on processing fluency, preference, and trust of a robot communicating in a consumer’s regional language to be reduced or lost when the robot lacks features facilitating anthropomorphism, such as a human-like voice or human-like embodiment.

H3: The effects of a robot speaking a regional (vs. a national) language on a) processing fluency and b) consumer preference and trust are mitigated when the robot is machine-like (vs. human-like).

Studies on Service Robots Speaking Regional Language

Empirical Overview

We test our hypotheses in a series of field and online studies. Studies 1 and 2 are field studies, conducted in an elder-care facility and a public building, respectively. Study 1 shows that elderly people prefer to do activities with a robot that speaks in their regional (vs. national) language. Study 2 shows that regional language adaptations also lead to advantages in the general population, and reveals that a robot speaking the consumer’s regional language is trusted more. It also sheds light on the mechanism underlying this preference and shows that regional synthetic speech is more fluently processed. Studies 3a and 3b, also situated in an elder-care setting, show that caregivers of elderly family members are potentially willing to pay more for a companion robot that speaks a regional language only when the robot is human-like. Study 4 shows that regional language adaptations may even backfire in the general population when the synthetic voice lacks human-like qualities. 2

For all of the studies, we recruited speakers of the local regional language because a regional language is not understood well by non-speakers, as it differs from the national language in terms of syntax, semantics, and morphology. Details on the generation of the regional and national speech can be found in Web Appendix A.

Study 1: Elderly People Prefer Activities With a Robot Speaking Their Regional Language

Study 1 is a within-subjects field experiment conducted in an elder-care facility located in Europe. We employed a within-subjects design because previous research has established that within-participants manipulations, in which participants experience changes in fluency, are more powerful than between-participants manipulations (Schwarz et al. 2021).

Data Collection

The participants were 12 residents of the care facility (ages 80–93 years; eight women, four men) who were able to speak the regional language. This relatively small sample size is comparable to the sample sizes of other studies conducted in the elder-care field, and reflects the challenges of gaining access to this population (e.g., Looije, Neerincx, and Cnossen 2010; Shanks et al. 2025). With help from an in-house supervisor and the care facility’s records, we screened the residents according to their ability to participate and to speak the regional language.

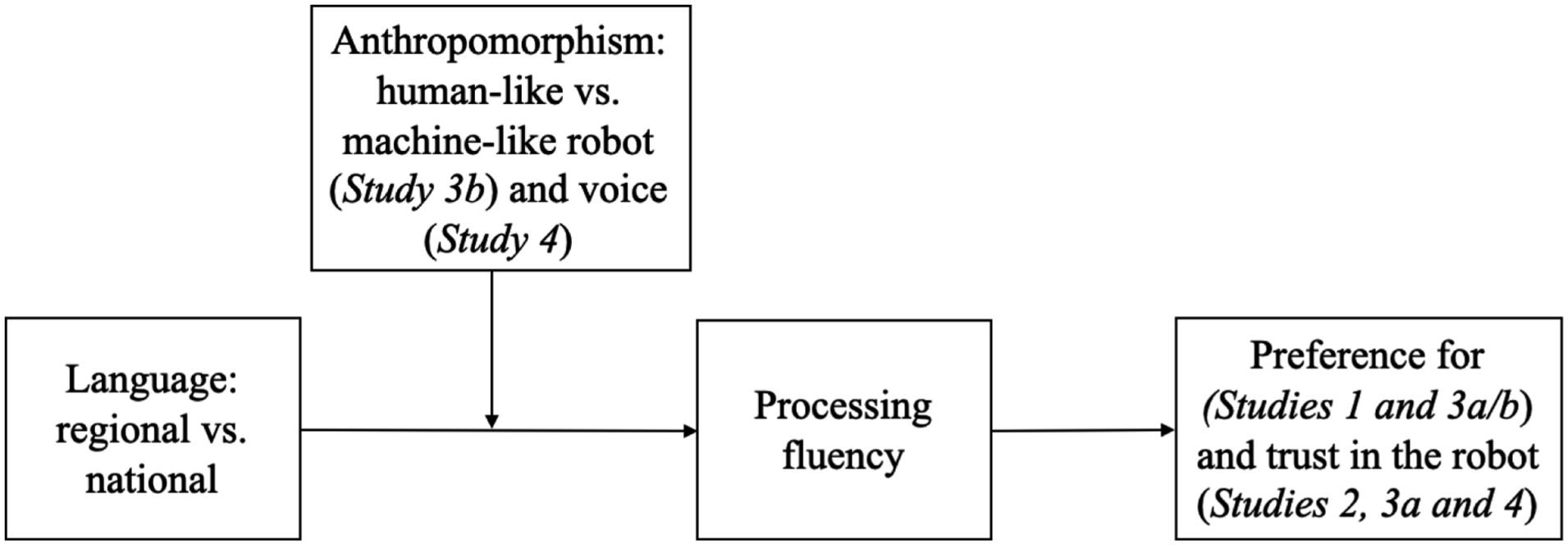

The study took place in a separate room in the facility where respondents interacted with the embodied robot “Pepper.” We remotely controlled the robot (the Wizard of Oz technique), with the programmer (i.e., the wizard) directing the robot out of the field of view of the participant during the interaction with the robot. When participants entered the room, they were seated in front of the robot or stayed seated in their wheelchairs (Figure 2). The robot introduced itself in the national language and explained that the participant could choose between two activities, one undertaken in the regional language and one in the national language, and instructed them how to indicate their preferred activity.

Figure 2. Human–robot interaction in Studies 1 (top) and 2 (middle), and Study 3 stimuli (bottom).

For several months in advance, we had worked with a programmer to develop four activities that the robot could undertake with the elderly clients: exercising to music, listening to a story, doing a quiz, or singing a song, with each lasting around 2 minutes. In designing these activities, we solicited input from healthcare professionals and adapted the activities based on their feedback. The translation from the national to the regional language was done by a teacher of regional language courses. We selected two different songs, one in the national language, and one in the regional language. Apart from the verbal communication, we also programmed the robot’s head movements and gestures as nonverbal communication cues that fitted the activities (e.g., “raise your arm”) and the scripted text.

Participants in two consecutive rounds made a choice between two activities, one offered in the regional language, the other in the national language. We randomized the combinations of all four activities and the order of using the regional or national language. Our sample of 12 participants therefore translates to 24 choices being made. After the activities, a member of the research team conducted a short interview with the participant to gather measures on the participant’s ability to understand (

Results

As expected, whether the robot offered the activity in the regional language or the national language influenced the chosen option. The regional language was preferred for 70.8% of the choices (national language for 29.2% of the choices), a significant difference according to a binomial test (exact Clopper-Pearson 95% confidence intervals (CI) of 48.9% to 87.4% for the regional language;
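
The exact binomial test and the Clopper-Pearson interval reported for Study 1 can be approximated with a short sketch. It rests on several assumptions that are not stated in this form in the text: that 70.8% of 24 choices corresponds to 17 regional-language choices, that the null proportion is 0.5, and that the 24 choices are treated as independent. The function names are illustrative. Because the reported p-value is not shown above, the sketch computes both a one-sided and a two-sided value without claiming which one was used.

```python
from math import comb


def binom_pmf(k, n, p):
    """Probability of exactly k successes in n Bernoulli(p) trials."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def upper_tail(k, n, p):
    """P(X >= k) for X ~ Binomial(n, p)."""
    return sum(binom_pmf(i, n, p) for i in range(k, n + 1))


def lower_tail(k, n, p):
    """P(X <= k) for X ~ Binomial(n, p)."""
    return sum(binom_pmf(i, n, p) for i in range(0, k + 1))


def _root_increasing(f, lo=0.0, hi=1.0, iters=100):
    """Bisection for the root of a function that increases on [lo, hi]."""
    for _ in range(iters):
        mid = (lo + hi) / 2
        if f(mid) < 0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def clopper_pearson(k, n, alpha=0.05):
    """Exact (Clopper-Pearson) confidence interval for a binomial proportion."""
    # Lower bound: the p at which P(X >= k | p) equals alpha / 2.
    lower = 0.0 if k == 0 else _root_increasing(
        lambda p: upper_tail(k, n, p) - alpha / 2)
    # Upper bound: the p at which P(X <= k | p) equals alpha / 2.
    upper = 1.0 if k == n else _root_increasing(
        lambda p: alpha / 2 - lower_tail(k, n, p))
    return lower, upper


# Assumed counts: 17 of 24 choices (about 70.8%) for the regional language.
K_REGIONAL, N_CHOICES = 17, 24

if __name__ == "__main__":
    p_one_sided = upper_tail(K_REGIONAL, N_CHOICES, 0.5)
    # With a null proportion of 0.5 the distribution is symmetric,
    # so doubling the tail gives the two-sided exact p-value.
    p_two_sided = min(1.0, 2 * p_one_sided)
    ci_low, ci_high = clopper_pearson(K_REGIONAL, N_CHOICES)
    print(f"one-sided p = {p_one_sided:.3f}, two-sided p = {p_two_sided:.3f}")
    print(f"95% CI = [{ci_low:.3f}, {ci_high:.3f}]")
```

The interval bounds are found by bisection on the binomial tail probabilities, which avoids any dependency beyond the standard library; a statistics package would typically obtain the same bounds from beta quantiles.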

Robustness Check

To assess whether the preference for the regional language could be explained by participants’ regional language proficiency (Kühne et al. 2024), we conducted a logistic regression using self-reported proficiency (average of understanding and speaking,

Discussion

Study 1 confirms that during an actual human–robot interaction, in a field study in an elder-care setting, senior citizens were more likely to engage with a robot when it spoke their regional language rather than the national language, as we had predicted in H1a. In contrast to prior literature (Kühne et al. 2024), the preference for regional language was not affected by participants’ regional language proficiency, probably because all of the participants had rather high proficiency. Study 2 generalizes the effects of regional language to the general population and to trust as another important service outcome, and sheds light on processing fluency as the underlying mechanism.

Study 2: Trust in an Embodied Robot Speaking Regional Language

Study 2 was a field study conducted in a public building in a medium-sized European city. Unlike in Study 1, we employed a between-subjects design, and respondents again interacted with the real embodied robot “Pepper,” which spoke either the local regional language or the national language.

Data Collection

We conducted our study in the multifunctional area of the building, and invited visitors approaching that area to participate. They were told that they could talk to the robot in either the national language or the local regional language. Given the expected number of visitors and the computational capacity required, generating real-time text-to-speech output in both languages was not feasible. We therefore generated answers to six questions in both languages and integrated these into the robot via a custom-made platform.

The participants had a short conversation with the robot, one person at a time and in the self-selected regional or national language (see Figure 2). 3 The six questions they could ask the robot were shown on two posters: one in the regional language, the other in the national language. As soon as the robot recognized a human face, it started introducing itself in the selected language, and the participants could ask the robot the six questions in whatever order they wished. The experimenter positioned behind the robot unobtrusively triggered the sensor (e.g., patted the head of the robot, touched a finger) matching with the answer to the question that was being asked. In addition to the verbal communication, we also programmed head movements and gestures as nonverbal communication cues. 4 After interacting with the robot, the participants were asked to fill in a short survey assessing how the interaction was experienced, as well as providing socio-demographic information and an indication of their proficiency in the regional language.

Measures

Trust in the robot was assessed with two items (e.g., “The robot is trustworthy,” 1 = “fully disagree,” 7 = “fully agree”; De Wulf, Odekerken-Schröder, and Iacobucci, 2001,

Sample

A total of 115 respondents participated in the survey (101 complete surveys; 51 females). Of the 101 respondents with complete surveys, 48 spoke to the robot in the regional language and 53 in the national language. The respondents opting for the regional language indicated having better regional language capabilities than those choosing the national language (understand

Results

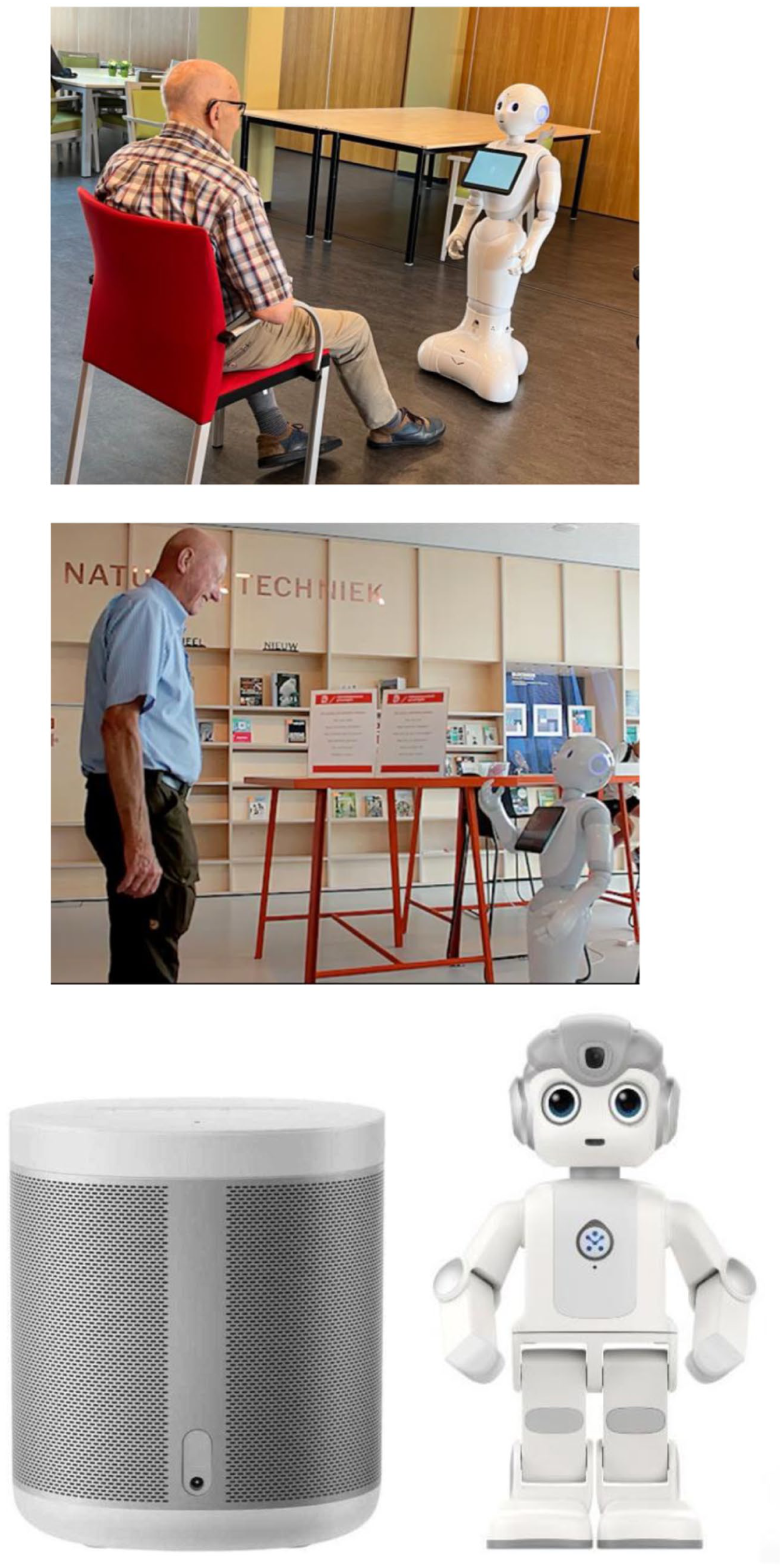

Trust in the Robot

An ANOVA of the effect of language (regional vs. national language) on trust in the robot revealed a marginally significant higher level of trust if the robot spoke the regional language rather than the national language, confirming H1b (

Study 2 results.

Processing Fluency

An ANOVA of the effect of language (regional vs. national language) on processing fluency showed that the robot speaking the regional language was associated with higher processing fluency than the robot speaking the national language (

Eeriness

Respondents perceived the robot speaking the regional language as more eerie than the robot speaking the national language (

Mediation Analysis

A mediation analysis revealed that processing fluency fully mediated the relationship between the language the robot used (regional vs. national) and trust in the robot (Hayes 2015, Model 4;

Robustness Check

Regional language proficiency (average of the four items, Cronbach’s alpha = .91) did not moderate the relationship between the language the robot used and trust in the robot, nor processing fluency (Hayes 2015, Model 1) (trust: interaction = −.09, 95% CI: −0.86, 0.68; processing fluency: interaction = .14, 95% CI: −0.16, 0.45).
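The conclusion that proficiency does not moderate either relationship rests on both 95% confidence intervals spanning zero. A minimal sketch of that reading, using only the interaction estimates and interval bounds reported in the paragraph above (the helper name `ci_excludes_zero` is ours for illustration and is not part of the PROCESS macro):

```python
def ci_excludes_zero(lower: float, upper: float) -> bool:
    """Return True when a confidence interval lies entirely on one side of zero."""
    return lower > 0 or upper < 0


# Interaction terms reported for the robustness check: (estimate, CI lower, CI upper).
interactions = {
    "trust": (-0.09, -0.86, 0.68),
    "processing fluency": (0.14, -0.16, 0.45),
}

results = {}
for outcome, (estimate, lower, upper) in interactions.items():
    results[outcome] = ci_excludes_zero(lower, upper)
    verdict = "moderation" if results[outcome] else "no evidence of moderation"
    print(f"{outcome}: b = {estimate:+.2f}, 95% CI [{lower}, {upper}] -> {verdict}")
```

Both intervals contain zero, so neither interaction term can be distinguished from no moderation at the 5% level.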

Discussion

Studying a real-life interaction between a real robot and a human respondent, Study 2 showed that robots that speak the regional (vs. national) language are trusted more because the regional speech is processed more fluently, confirming H1b and H2. Interestingly, the robot was perceived as more eerie when it spoke the regional (vs. the national) language, which in turn (marginally) decreased trust. Given these inconclusive results, the goal of Studies 3 and 4 is to explore the role of anthropomorphism in more depth.
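The mediation logic behind this conclusion (language affects processing fluency, which in turn affects trust) can be illustrated with a bootstrapped indirect effect. The sketch below is not the PROCESS macro used in the study and does not use the study's data: the data are synthetic, the path coefficients (1.0 and 0.8) are arbitrary assumptions, and all function names are hypothetical.

```python
import random
from statistics import mean


def slope(x, y):
    """OLS slope of y on a single predictor x."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    return sxy / sxx


def partial_slope(m, x, y):
    """Coefficient of m when y is regressed on m and x (via residualization on x)."""
    bm, by = slope(x, m), slope(x, y)
    mx, mm, my = mean(x), mean(m), mean(y)
    res_m = [mi - mm - bm * (xi - mx) for mi, xi in zip(m, x)]
    res_y = [yi - my - by * (xi - mx) for yi, xi in zip(y, x)]
    return sum(a * b for a, b in zip(res_m, res_y)) / sum(a * a for a in res_m)


def indirect_effect(x, m, y):
    """a * b: effect of x on m, times effect of m on y holding x constant."""
    return slope(x, m) * partial_slope(m, x, y)


def bootstrap_ci(x, m, y, draws=1000, seed=1):
    """Percentile 95% bootstrap interval for the indirect effect."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(draws):
        idx = [rng.randrange(n) for _ in range(n)]
        estimates.append(
            indirect_effect([x[i] for i in idx], [m[i] for i in idx], [y[i] for i in idx])
        )
    estimates.sort()
    return estimates[int(0.025 * draws)], estimates[int(0.975 * draws) - 1]


# Synthetic data only: 0 = national language, 1 = regional language.
rng = random.Random(42)
language = [i % 2 for i in range(200)]
fluency = [4.0 + 1.0 * x + rng.gauss(0, 1) for x in language]  # assumed a-path
trust = [2.0 + 0.8 * f + rng.gauss(0, 1) for f in fluency]     # assumed b-path, no direct path

ab = indirect_effect(language, fluency, trust)
low, high = bootstrap_ci(language, fluency, trust)
print(f"indirect effect = {ab:.2f}, 95% bootstrap CI [{low:.2f}, {high:.2f}]")
```

An interval that excludes zero is the usual criterion for concluding that the indirect path is present; the synthetic data here contain no direct path from language to trust, which mirrors the full-mediation pattern described for Study 2.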

Study 3: Willingness to Pay for Regional Adaptation on Human-Like Robots

Inspired by real-life companion robots such as ElliQ, we investigate people’s willingness to pay for a monthly subscription for a care robot as a managerially relevant outcome variable in Studies 3a and 3b. We apply a proxy decision-making method (Convey, Holt, and Summers 2018) and elicit potential caregivers’ willingness to pay for having a social care robot in an elderly family member’s home. To generalize beyond one regional language, we select Flemish, a variety of Dutch that is primarily spoken in the northern region of Belgium and has distinct phonological, lexical, and grammatical features.

Study 3a: Willingness to Pay for Regional Adaptation

Data Collection

Study 3a applies a 2-cell (language: regional vs. national) between-subjects design. We recruited 152 (150 requested) Belgian participants on Prolific Academic (71 females; 4 did not disclose). Most respondents were between 18 and 29 years old (48%), with 29.6% between 30 and 39, 13.2% between 40 and 49, 5.3% between 50 and 59, and 4% older than 60 years. While our participants skew somewhat younger than the average informal caregiver, younger family members are often strongly involved in the adoption of care technology (Berridge and Wetle 2020). We excluded 11 participants who indicated they were not native speakers of either Dutch or Flemish, and two incomplete cases, leaving a sample of 139 participants.

Procedure and Measures

Respondents were asked to imagine being a caregiver for an elderly family member. This family member still lives independently but requires in-home care. One of the care professionals involved in supporting this family member suggests using a care robot to assist with daily activities and provide reminders for medication, appointments, and personal care. You visit a local home care store and see the following care robot.

Respondents saw a picture of a social care robot, and were asked to listen to the following text spoken either in the regional language or the national language: Hello! I am a care robot, and I can support people with their daily activities. I can help structure the day by providing reminders for medication, appointments, and personal care. I can also tell a story, make jokes, or offer a listening ear. I can play music, and you can play games or do quizzes with me.

We assessed the respondents’ willingness to pay for a monthly subscription to use the robot for an elderly family member, using a slider scale from 0 to €100 (

As potential competing underlying mechanisms, we assessed novelty (“I have not seen a care robot like this before,” “This care robot is novel”;

Willingness to Pay for the Robot

An ANCOVA of the effect of language (national vs. regional) on willingness to pay for a monthly subscription for the robot for an elderly family member to use, controlling for age and gender as in the following Study 4, revealed a higher willingness to pay for the care robot when the robot spoke the regional language (

Trust in the Robot

Another ANCOVA on trust in the robot revealed that potential caregivers would trust the robot more if it spoke the regional (vs. national) language, in line with Study 2 and H1b (

Processing Fluency

Moreover, processing fluency was marginally higher when the robot spoke the regional (vs. national) language (

Eeriness of the Robot

The eeriness of the robot does not significantly differ depending on the language it speaks (

Mediation Analyses

We conducted serial mediation analysis to examine whether processing fluency and trust mediated the relationship between robot language (regional vs. national) and willingness to pay for the robot (Hayes 2015, Model 6), again with age and gender as covariates. The regional (vs. national) language was marginally more easily processed, increasing trust and willingness to pay for the robot (

To rule out any potential alternative mechanisms, we conducted a series of parallel mediation analyses with eeriness, novelty, perceptions of in-group membership, expectancy violation, perceived warmth, social presence, and identity affirmation as additional mediators of the relationship (Hayes 2015, Model 4); these constructs did not mediate the effects (eeriness:

Robustness Check

Including regional language proficiency as a moderator reveals that willingness to pay for the robot speaking the regional language marginally levels off with higher language proficiency (see Web Appendix B).

Study 3b: The Effect of Anthropomorphism on Willingness to Pay for Regional Adaptation

Data Collection

Study 3b investigates whether anthropomorphism impacts consumer reactions to a robot speaking the regional language, and applies a 2 (Language: Regional vs. National) × 2 (Type of Robot: Human-Like vs. Machine-Like) between-subjects design. Via Prolific Academic, we recruited 200 (200 requested) Belgian participants who did not take part in Study 3a (93 females; 3 did not disclose). Most respondents were between 18 and 29 years old (57%), with 30.5% between 30 and 39, 9% between 40 and 49, 1.5% between 50 and 59, and 2% older than 60 years. We removed 14 participants who indicated they were not native speakers of either Dutch or Flemish, and there were two incomplete cases, leaving a sample of 184 participants.
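The sample sizes reported for Studies 3a and 3b follow directly from the recruitment and exclusion counts. A small sketch of that bookkeeping, assuming (as the reported totals imply) that the non-native and incomplete cases do not overlap; the function name is ours:

```python
def final_sample(recruited: int, non_native: int, incomplete: int) -> int:
    """Participants remaining after removing non-native speakers and incomplete cases."""
    return recruited - non_native - incomplete


study_3a = final_sample(recruited=152, non_native=11, incomplete=2)
study_3b = final_sample(recruited=200, non_native=14, incomplete=2)

# Age-group shares reported for Study 3b, in percent.
age_shares_3b = [57.0, 30.5, 9.0, 1.5, 2.0]

print(f"Study 3a: n = {study_3a}")
print(f"Study 3b: n = {study_3b}")
print(f"Study 3b age shares sum to {sum(age_shares_3b):.1f}%")
```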

Procedure and Measures

We used the same scenario as in Study 3a, yet respondents saw a picture of a social care robot with either human-like morphology or a machine-like appearance to elicit different levels of anthropomorphism (see Figure 2).

We again assessed respondents’ willingness to pay (

An ANOVA of the effect of type of robot (human-like vs. machine-like), language (national vs. regional), and their interaction revealed a marginal effect of language on regional language proficiency (F(1,183) = 3.84;

Manipulation Check

An ANOVA of the effect of type of robot (human-like vs. machine-like) on its perceived human-likeness showed the expected patterns (

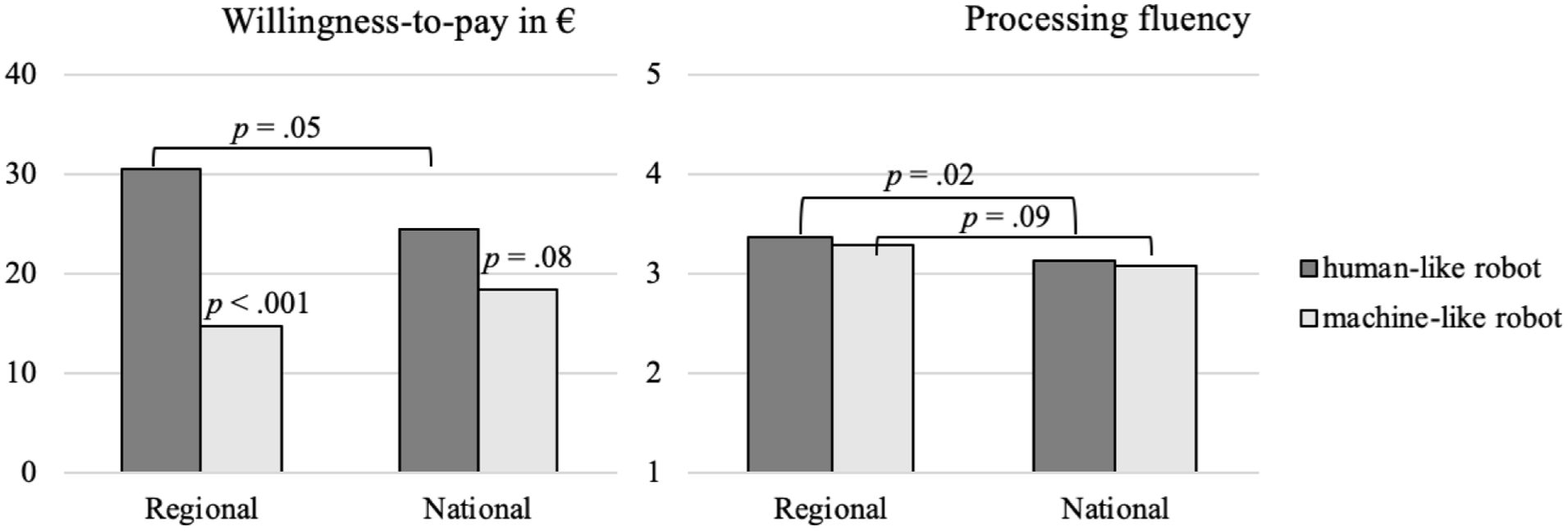

Willingness to Pay for the Robot

An ANCOVA of the effect of the type of robot (human-like vs. machine-like), language (national vs. regional), and their interaction on willingness to pay for a monthly subscription for the robot, controlling for age and gender, showed a significant interaction effect (F(1,183) = 4.71;

Contrasts revealed that, in line with our expectations and supporting H3b, participants were willing to pay more for the human-like robot speaking the regional (vs. national) language (

Interestingly, willingness to pay more than doubled when the robot speaking the regional language was human-like (vs. machine-like;

Study 3b results.

Processing Fluency

An ANCOVA of the effect of robot type (human-like vs. machine-like), language (national vs. regional), and their interaction on processing fluency, again controlling for age and gender, revealed only a significant main effect of language (F(1,183) = 8.00;

Eeriness of the Robot

An ANCOVA of the effect of the type of robot (human-like vs. machine-like), language (national vs. regional), and their interaction on the eeriness of the robot, with age and gender as covariates, did not reveal significant differences (main effects: language F(1,183) = 2.64;

Moderated Mediation Analysis

We conducted a moderated mediation analysis to examine whether processing fluency mediated the relationship between robot language (regional vs. national) and willingness to pay for the robot, and whether the type of robot (human-like vs. machine-like) moderated this relationship (Hayes 2015, Model 7), again with age and gender as covariates. The results revealed that for the human-like robot, the regional (vs. national) language was more easily processed, increasing willingness to pay for the robot (

Robustness Check

Mirroring Study 3a’s results, willingness to pay for the regional-language speaking robot marginally levels off with higher language proficiency (Web Appendix B).

Discussion

Using willingness to pay for a monthly subscription to a care robot for an elderly family member as a managerially relevant outcome, Studies 3a and 3b show that consumers are willing to pay a premium of around 30% (3a: 32%; 3b: 28%) for regional-language adaptation. Yet crucially, this benefit only holds if the robot has a human-like, and not machine-like, appearance, as predicted by H3b. Willingness to pay more than doubles if a regional-language speaking robot has a human-like appearance, showing strong support for the combination of regional language and human-like appearance.
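The premium figures above are relative differences in mean willingness to pay between the regional and national conditions. The sketch below shows that calculation; because the cell means are not reproduced in this section, the euro amounts are hypothetical placeholders chosen only to illustrate the formula, while the 32% and 28% figures are the ones reported above.

```python
def relative_premium(wtp_regional: float, wtp_national: float) -> float:
    """Relative premium of the regional over the national condition."""
    if wtp_national <= 0:
        raise ValueError("national willingness to pay must be positive")
    return (wtp_regional - wtp_national) / wtp_national


# Hypothetical monthly willingness-to-pay means in euros (NOT the study's cell means).
hypothetical_regional = 33.0
hypothetical_national = 25.0
premium = relative_premium(hypothetical_regional, hypothetical_national)

# Premiums reported for Studies 3a and 3b.
reported = {"3a": 0.32, "3b": 0.28}
average_reported = sum(reported.values()) / len(reported)

print(f"hypothetical premium: {premium:.0%}")
print(f"average reported premium: {average_reported:.0%}")
```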

In Study 3b, females report a significantly higher willingness to pay for a care robot than do males. A possible explanation for this is that women more often assume caregiving responsibilities and so place more value on any care technology supporting them in these tasks. It is also notable that in Study 3b, the differences in processing fluency between a human-like robot and a machine-like robot are not that large, which therefore does not lend support to H3a. One reason why we do not find strong effects of robot human-likeness on processing fluency may be that we look at the robot embodiment, and not at the human-likeness of the voice. Therefore, in Study 4, we vary the human-likeness of the robot’s speech.

Study 4: The Effect of Human-Like Speech on Trust in a Robot Speaking Regional Language

Study 4 applies a 2 (Language: Regional vs. National) × 2 (Type of Voice: Human-Like vs. Machine-Like) between-subjects design to investigate the human-likeness of the synthetic speech as an important boundary condition for trusting a care robot. In addition to the human-like regional and national speech used in Studies 1 and 2, we created a more machine-like version of the synthetic voice by increasing pitch and adding echo. These acoustic modifications reduce the voice’s perceived human-likeness and elicit lower levels of anthropomorphism.

Data Collection

Power analysis using G*Power (v 3.1; Faul et al. 2009) (power of .80 and an alpha error probability of .05) yields a sample of 402 to detect a small to medium-sized effect comparable with the effect sizes obtained in Study 2, which uses the same outcome variables and regional language (

For the evaluation of the regional language-speaking robot, we recruited participants who spoke the regional language via a local cultural center dedicated to promoting the use of that regional language. All communication at this center is in the local regional language. The center distributed the link to our survey via their website, and via their Facebook page. To encourage participation, we enrolled respondents into a lottery to win one of five €25 vouchers for a large national online retailer. 199 respondents finished the regional-language survey (113 females; 14 did not disclose). Of these, 96 respondents were exposed to the robot with the machine-like voice, and 103 to the robot with the same human-like voice as used in Studies 1 and 2. Most respondents were between 18 and 29 years old (41.7%), with 12.1% between 30 and 39, 13.6% between 40 and 49, 21.6% between 50 and 59, and 11.0% older than 60 years. Our final sample consisted of 400 respondents. Chi-square tests reveal that gender (chi-square = 4.02;

Procedure and Measures

Respondents watched a video of a robot speaking in either the regional language or the national language, either with the same human-like voice we used in Studies 1 and 2, or with the new more machine-like version. In the video, the robot introduced itself as a care robot that can make people feel less lonely by telling a story or a joke and listening to them. The robot then invited respondents to participate in the survey.

Trust in the robot (Cronbach’s alpha = .86;

Results

Manipulation Check

An ANCOVA of the effect of the type of voice (human-like vs. machine-like) on perceived human-likeness, with age and gender as covariates, shows that, as anticipated, the human-like voice is perceived to be more human than the machine-like voice (

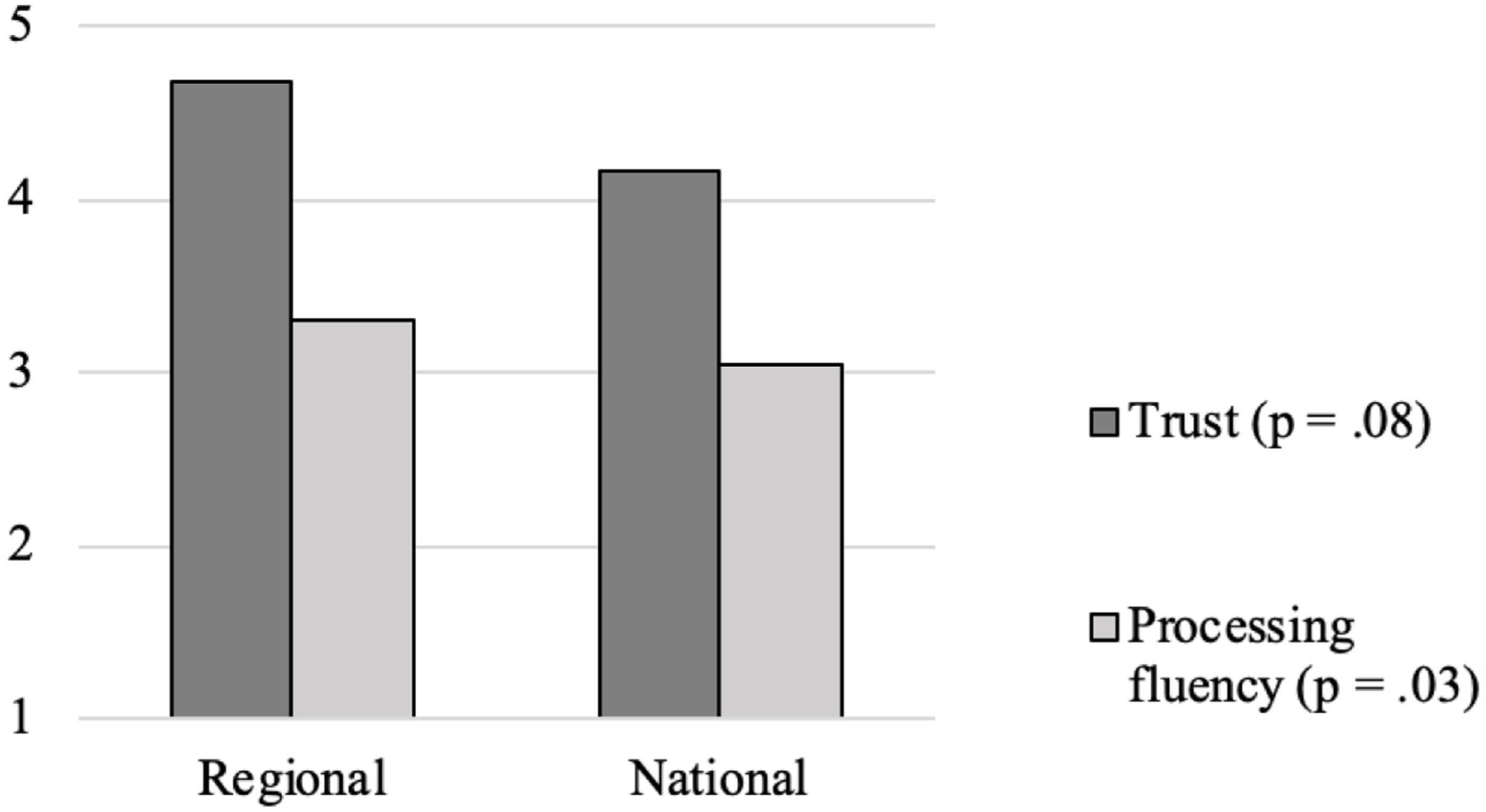

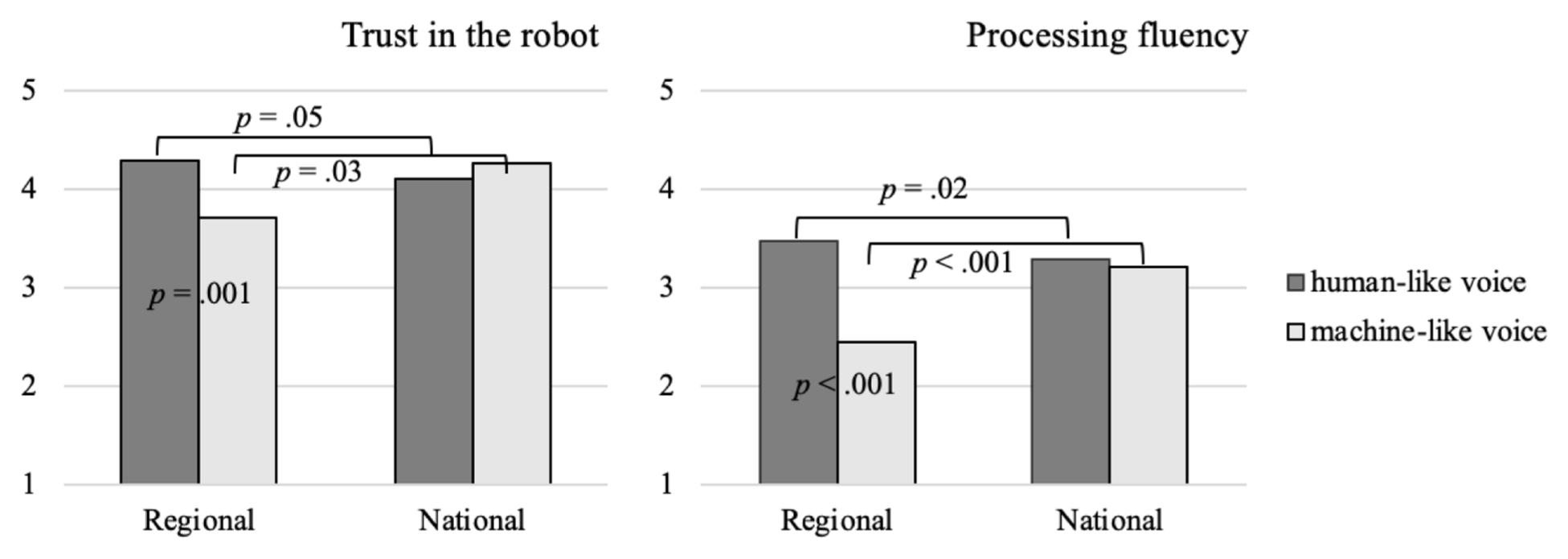

Trust in the Robot

An ANCOVA of the effect of the type of voice (human-like vs. machine-like), language (national vs. regional), and their interaction on trust in the robot, with age and gender as covariates, shows a significant interaction effect (F(1,399) = 9.27;

Contrasts reveal that, in line with the results of Study 2, participants trusted the robot with the human-like voice more when it spoke the regional language rather than the national language (

Study 4 results.

Processing Fluency

An ANCOVA of the effect of the type of voice (human-like vs. machine-like), language (national vs. regional), and their interaction on processing fluency, with age and gender as covariates, reveals significant main effects of the type of voice (F(1,399) = 68.80;

For the machine-like voice, we found the opposite effect. Participants processed the regional-language machine-like voice less fluently than the national-language machine-like voice (

Eeriness of the Robot

An ANCOVA of the effect of the type of voice (human-like vs. machine-like), language (national vs. regional), and their interaction on the eeriness of the robot, with age and gender as covariates, only revealed a significant effect of language (national language: M_human-like = 3.69,

Mediation Analysis

We conducted a mediation analysis to examine whether processing fluency mediated the relationship between robot language (regional vs. national) and trust in the robot, and whether the type of robot voice (human-like vs. machine-like) moderated this relationship (Hayes 2015, Model 7), with gender and age as covariates. The results revealed that the regional-language human-like voice was more easily processed than its national counterpart, increasing trust in the robot (

We introduced eeriness as a parallel mediator of the relationships, and found that when the voice is human-like the lower eeriness of the regional language translates into higher trust (

Robustness Checks

Including language proficiency as an additional moderator of the relations reveals that respondents with high language proficiency react particularly negatively to a regional-language-speaking robot with a machine-like voice, in terms of both trust and processing fluency (see Web Appendix C for more detail).

Given the differences between the two samples, we employed stratified propensity score matching using respondents’ age, gender, education level, and work experience in health care as predictors (Austin 2011; Rosenbaum and Rubin 1983). This yielded 372 matched pairs. A moderated mediation analysis (Hayes 2015, Model 7) with the propensity-matched sample confirmed our prior result that the regional-language human-like voice was more easily processed than its national counterpart, increasing trust in the robot (

Discussion

Study 4 reinforces the important boundary condition regarding the effects of equipping a robot with regional language adaptations revealed by Study 3, and shows that the positive effects of having a robot speak the regional language only hold when the robot’s voice is human-like, confirming H3a and H3b. Unexpectedly, we find a backfire effect of regional language when the voice is machine-like, implying that a machine-like voice speaking the national language is processed more fluently than one speaking the regional language, and so is trusted more. Interestingly, when the robot’s voice is human-like, the regional-language-speaking robot’s lower sense of eeriness supports the positive effects of fluency on trust, whereas in Study 2, the robot speaking the regional language was perceived as eerier.

General Discussion

The use of robots is urgently needed to ease pressing staff shortages in healthcare and elder-care services (Becker, Efendić, and Odekerken-Schröder 2022). Moreover, robots as voice-based interfaces enable a more natural interaction on the organizational frontline, which can reduce service access barriers. Powered by improvements in speech technology, such voice-based interfaces can feature a variety of language options, including regional languages. Equipped like this, robots can enhance inclusivity and communicate seamlessly with diverse populations across linguistic boundaries. This is an important advantage for consumers experiencing linguistic vulnerability, such as multilingual elderly people experiencing second-language loss who are now struggling to navigate an environment where that language is dominant. This technology holds similar promise for use with migrants or members of linguistic minorities who struggle with monolingual digital services. However, while speech technology develops at speed, the questions of how consumers react to synthetic speech that is adapted to their region of origin, and whether there are any benefits for companies in doing so, remain largely unanswered.

(Elderly) Consumers Prefer a Robot Speaking Their Regional Language and Trust It More

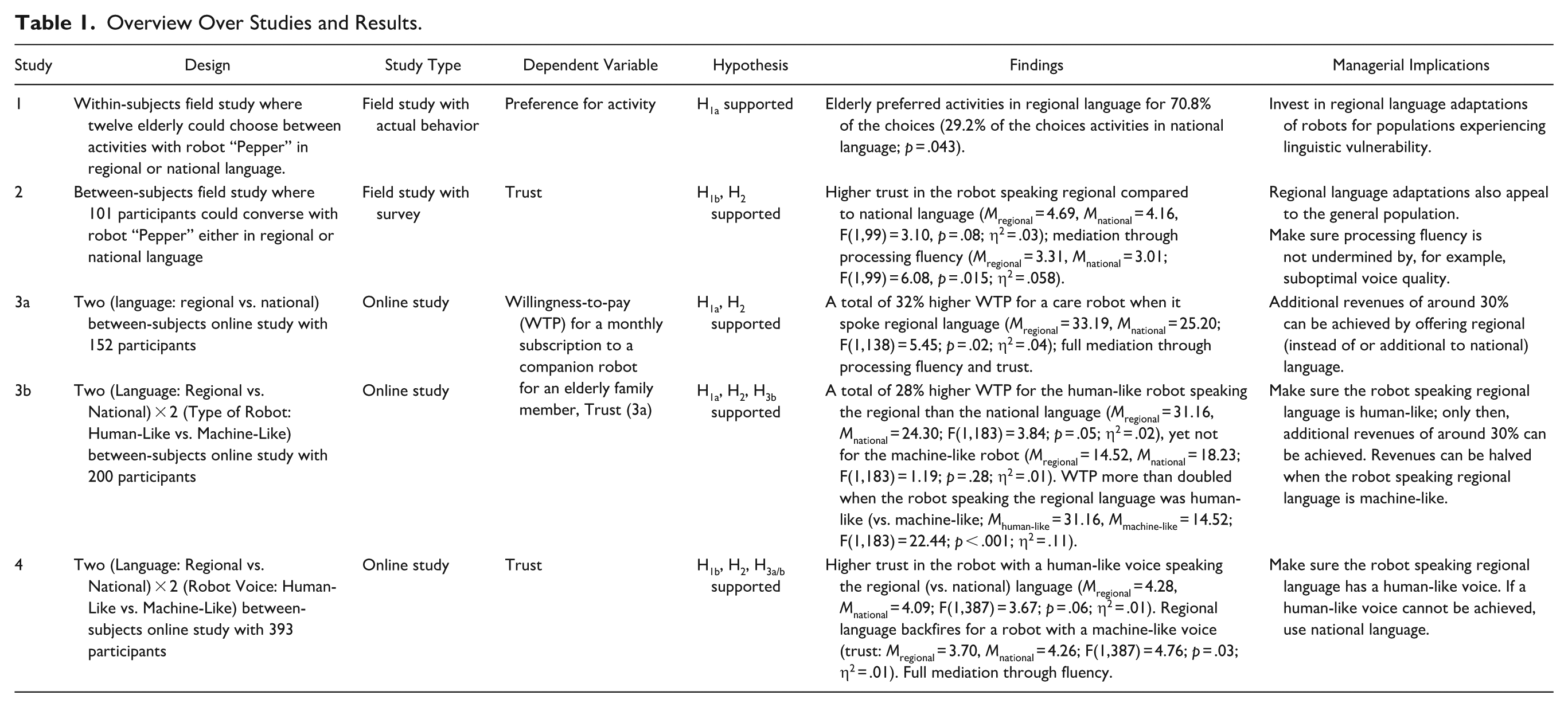

In three of our five studies, we show that elderly consumers prefer robot interactions where the regional language is spoken rather than the national language, and that their potential caregivers are willing to pay a premium of 30% for a companion robot that speaks a regional language (see Table 1 for an overview of the studies and their results). Multilingual robots can therefore be a viable way to create more inclusive service interactions and to accommodate consumers who experience vulnerability due to language loss (Hermann, Yalcin Williams, and Puntoni 2023).

Overview of Studies and Results.

Going beyond elderly consumers as a target group for care robots, in two of our studies, we observe similar benefits of regional language adaptations in the general population, with a (care) robot speaking the regional language being trusted more than its national-language-speaking counterpart. This sheds light on the potential benefits of enabling bots to speak in regional languages, an area where insights have until now been scarce or conflicting, and one in which more study is therefore sorely needed (Guha et al. 2021). The findings also represent an important advancement in knowledge at the intersection of the fields of service marketing, technology acceptance in the service frontline, corporate digital responsibility, and linguistics. For service companies, the results show that regional language adaptations can be beneficial beyond the traditional target population: the (often small) segments of elderly people who are experiencing linguistic vulnerability. Interestingly, higher regional language proficiency did not translate into a willingness to pay more for the robot with regional language capabilities, perhaps because this segment views these capabilities as a necessary standard feature rather than an add-on.

. . . Because Synthetic Regional Language Spoken by a Robot Is More Easily Processed

The reason behind the positive effect of regional language adaptations is that consumers find synthetic speech in their regional language easier to process, which—in line with the previous literature showing the advantage of more fluent processing in human-to-human communication (Dragojevic 2020)—translates into positive downstream effects in human–robot interactions as well. We advance theory by showing that, in line with the feelings-as-information theory (Kim and Park 2023; Schwarz and Clore 1983, 2003), the metacognitive experience of easy processing translates into positive downstream effects for robot speech, as it does for human speech. However, even though language-processing fluency, as we show, critically shapes attitudes toward the adoption of technology-based services, and even willingness to pay for them, this area has so far hardly been considered in service research.

But: The Robot Must Be Human-Like

However, there is one important prerequisite in order for the benefits of using regional language in a robot to materialize: the robot’s appearance and voice must be human-like.

As an important theoretical implication, this research identifies eliciting anthropomorphism as a key factor for maximizing the benefits of regional language adaptations through processing fluency. While prior studies have only focused on language use or human-likeness in isolation, our findings underscore the importance of the interplay of these factors (e.g., Li, Broz, and Neerincx 2023; Lugrin et al. 2020). For service companies investigating the business case for serving customers in their regional language, Study 3b shows that it is only when the robot is human-like that additional revenues of around 30% can be potentially achieved by offering a regional language instead of, or in addition to, the national language.

Even more critically, revenues can be halved when the robot speaking the regional language is machine-like, and in Study 4, we even see an unexpected backfire effect when the regional language is being spoken with a more machine-like robot voice. This insight can perhaps explain why findings in the previous literature have so far been mixed. Moreover, advancing on prior literature on anthropomorphism and processing fluency (e.g., Li, Broz, and Neerincx 2023), we show that the robot’s embodiment is less important in facilitating the processing of regional speech than the human-likeness of the speech itself, with Study 3b showing that the robot’s embodiment did not significantly affect processing fluency, whereas in Study 4, a machine-like voice undermined processing fluency.

In particular, those who are highly proficient in a regional language are sensitive to processing fluency being undermined, for instance by being addressed in a machine-like voice. This sensitivity also has the potential to undermine the insights of Kühne et al. (2024) that those who are highly proficient in a regional language trust a robot more. Taken together, our results convincingly show that robot human-likeness, both in voice and embodiment, matters more when the robot speaks a regional language, potentially adding to the development costs of regional adaptations.

Outcomes regarding the impact of regional language on eeriness are mixed. Study 2 found that a greater sense of eeriness experienced when the robot spoke the regional language translated into (marginally) lower trust, whereas in Study 4 the regional-language-speaking robot was perceived as being less eerie, reinforcing the positive effect of fluency on trust—yet only when the robot’s voice was human-like. These differences are probably due to the different stimuli used (live interaction with an embodied robot versus video stimuli, respectively), and we also note that Studies 3a and 3b did not find the use of regional language to have any effect on creating a sense of eeriness. Clearly, more insights are needed on the interplay between regional language adaptations and the uncanny valley effect (Mende et al. 2019; Mori, MacDorman, and Kageki 2012).

Managerial Implications

Our findings offer promising implications for organizations considering using regional languages in their voice-based interfaces, such as robots. Regional language adaptations not only enhance accessibility for consumers experiencing linguistic vulnerability, mitigating their state of powerlessness, but also support broader organizational goals related to equitable and inclusive service delivery for linguistically diverse consumer populations (Hermann, Yalcin Williams, and Puntoni 2023). At the same time, such adaptations can foster trust among the general population and support a company’s financial bottom line.

In our research, regional language use that increases processing fluency led to a 30% higher willingness to pay for a monthly subscription for a companion robot for an elderly family member, yet only when the robot was human-like, making regional language adaptation and human-likeness an ideal combination. Given that the underlying mechanism (more fluent processing) should be the same, we also expect our results to generalize to voice-based interfaces other than robots. As using a machine-like voice for communicating in a regional language backfires and undermines processing fluency and trust, managers are well-advised to opt for a robot speaking the national language if a human-sounding regional version is technically not feasible. Therefore, our study reinforces that the execution quality of regional adaptations is critical (Chahal and Mahajan 2024), which adds to the development costs. However, these additional costs need to be weighed against the ethical imperative to design for inclusion, rather than reinforcing existing exclusions through digital systems.

It is important, though, for managers to note that, because we exposed participants to the regional-language-speaking robot only if they self-identified as being fluent in the language, our conclusions apply only to regional synthetic speech that matches the background of the human listener. Therefore, robots and other voice-based interfaces should only use regional language when it matches the listener’s background. Practically, it would be advisable to let consumers self-select the language in which they wish to communicate.
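The two practical recommendations above (let consumers self-select the language, and fall back to the national language when no human-sounding regional voice is available) can be expressed as a simple decision rule. The following is an illustrative sketch only; the type and function names are hypothetical and do not describe any system used in the studies.

```python
from enum import Enum


class Language(Enum):
    NATIONAL = "national"
    REGIONAL = "regional"


def choose_output_language(user_choice: Language, human_like_regional_voice: bool) -> Language:
    """Honor the consumer's self-selected language, but use the regional language
    only when a human-like regional voice is available; otherwise fall back to
    the national language."""
    if user_choice is Language.REGIONAL and human_like_regional_voice:
        return Language.REGIONAL
    return Language.NATIONAL


# A consumer who selects the regional language gets it only with a human-like voice.
print(choose_output_language(Language.REGIONAL, human_like_regional_voice=True).value)
print(choose_output_language(Language.REGIONAL, human_like_regional_voice=False).value)
print(choose_output_language(Language.NATIONAL, human_like_regional_voice=True).value)
```

The rule never imposes the regional language on a consumer who did not choose it, in line with the point that the findings apply only to speech matching the listener's background.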

We found evidence in one study that females were willing to pay more for a care robot than men were, reflecting that women disproportionately carry the burden of unpaid and often invisible care work and are more frequently exposed to the limitations of current caregiving technologies. However, rather than seeing women as a target group to extract value from, managers would be better served by treating women as critical stakeholders who can highlight unmet design needs and contribute to making technology more inclusive.

Finally, our insight that consumers’ more favorable reaction to a stimulus that is more fluently processed also applies to synthetic speech means that managers need to avoid any factors that might undermine processing fluency. That is, a bot should speak at an appropriate volume and speed, with optimal audio quality, and in sentences that are easy to follow.

Limitations of This Research and Avenues for Future Research

The limitations of our study open up further avenues for research. First, future research could identify other factors that increase or undermine the processing fluency of synthetic speech from a robot or other voice-based interface, such as the speed at which the bot speaks, or the complexity of the sentences or the vocabulary used. Second, the inconclusive results we obtained on the effect of regional speech on the sense of a robot being eerie point to a need to investigate how processing fluency and the uncanny valley effect are interrelated.

Third, not all of our studies were conducted among elderly consumers or their caregivers, given the difficulty in gaining access to these groups. More empirical work among these important target groups of care technology is needed. Fourth, we did not investigate consumer reactions to accented synthetic speech. Future research could investigate whether accented synthetic speech comes with the same advantages in processing fluency, trust, and likelihood to engage with a robot as we have shown exist for synthetic regional speech. Fifth, our studies were restricted to speakers of the selected local regional language, which precluded the possibility of examining alternative theoretical accounts, such as potential negative stereotype biases. This limitation might be overcome if future research exposes speakers with a different language background to accented synthetic speech. Sixth, the studies used one particular voice-based interface, an embodied robot. It will be important to confirm that the positive effects of adapting synthetic speech to consumers’ regions of origin also generalize to other interfaces, such as non-embodied chatbots or avatars.

Seventh, Study 3 employed a sample that skewed younger than the demographic profile of the average informal caregiver. While participants were explicitly instructed to adopt the role of a caregiver for an elderly relative, and such a proxy decision-making method is a well-established strategy in service research (Convey, Holt, and Summers 2018), it raises valid concerns about ecological validity. Therefore, we recommend that future studies include a more demographically representative caregiver population or adults with direct caregiving experience to enhance external validity.

In summary, we have shown that regional language adaptations in voice-based interfaces hold enormous promise. They contribute to more equitable service design by enabling service provision beyond linguistic barriers, while also supporting a company’s financial bottom line by increasing potential consumers’ willingness to pay, fostering more positive attitudes, and enhancing trust.

Supplemental Material

Supplemental material, sj-docx-1-jsr-10.1177_10946705261432142 for Speaking Like Home: How Regional Language Adaptations in Robots Enhance Trust and Willingness to Pay by Jenny van Doorn and Giovanni Telussa in Journal of Service Research

Footnotes

Acknowledgements

The authors are grateful to Martijn Wieling, Nanna Hilton, Frank Hopwood, Tonny Romensen, Koen Buiten, Eveline Schmidt, and Wietse de Vries for their invaluable support in generating the synthetic voices and supporting the data collection.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Dutch Research Council (NWO) as project “Is a dialect speaking robot more easily accepted by the elderly?”, grant number NWA.1228.191.092. The views expressed in this manuscript are the sole responsibility of the author, and they do not necessarily reflect the views of NWO.

Supplemental Material

Supplemental material for this article is available online.