Abstract

This study examined whether altering the metaphor, or visual skin, of a cognitive assessment game affects its psychometric properties. BrainTagger is a suite of serious games used to assess executive functions such as processing speed, response inhibition, cognitive flexibility, and working memory. While the original version used a Whackamole metaphor, user feedback prompted the development of an alternative Gardening version with identical mechanics but a more mature visual theme. Using a within-subject design, 109 undergraduate participants completed both versions of four BrainTagger games. Correlation analyses revealed significant relationships between versions in all four domains: working memory (r = .38), response inhibition (r = .36 for d-prime, r = .75 for median correct RT), cognitive flexibility (r = .37), and processing speed (r = .61). However, the speed-accuracy trade-off in working memory performance was sensitive to the metaphor change, likely because the task drew on either the phonological loop or the visuospatial sketchpad depending on the version. These findings suggest that while game metaphors can be adapted to suit user preferences without broadly compromising assessment validity, specific cognitive domains, such as working memory, may be more vulnerable to metaphor-driven variance. Implications for the design and validation of cognitive assessment tools are discussed.

Introduction

Cognitive assessment tools are widely used to screen for impairments in executive functions such as working memory, inhibition, and processing speed. Traditional methods such as the Montreal Cognitive Assessment (MoCA; Nasreddine et al., 2005) and the Mini-Mental State Examination (MMSE; Folstein et al., 1975) are clinically validated but limited by their paper-and-pencil format, time demands, and insensitivity to short-term changes in cognitive status. In contrast, serious games offer a promising avenue for scalable, repeatable, and engaging cognitive screening, especially in aging populations and long-term care environments.

BrainTagger is a suite of cognitive games designed to measure executive functions through short, self-administered tasks embedded in a game format (Urakami et al., 2021). Initially developed using a Whackamole metaphor—where players press a button to “whack” cartoon moles—the games were generally well received. However, older adult users in retirement and long-term care homes have occasionally described the Whackamole theme as too “childish.” In response, an alternate version was developed using a Gardening metaphor, where users dig up weeds or select flowers instead of hitting moles, while keeping the underlying cognitive task structure intact (Iglar et al., 2023).

This study investigates whether changing the visual metaphor of a cognitive game alters its psychometric properties. Specifically, it examines whether two versions of the same game—identical in task demands and motor responses but differing in metaphor—produce similar performance outcomes, and whether metaphor changes introduce unintended variability in cognitive assessments.

Background

Serious games for cognitive assessment aim to strike a balance between clinical rigor and user engagement. When properly designed, these games can improve compliance, support self-administration, and broaden accessibility (Anastasi & Urbina, 1997). However, their validation poses unique challenges: features that enhance playability can increase individual performance variability and reduce task purity (Zhang & Chignell, 2020). This trade-off underscores the need for rigorous psychometric validation of game-based tools against established benchmarks.

Traditional cognitive tests like the MoCA and MMSE are commonly used to diagnose conditions ranging from mild cognitive impairment to dementia. Some serious games have been validated against these tools (e.g., Liu et al., 2023), while others, including BrainTagger, have undergone construct-level validation by aligning game performance with standardized psychological tasks targeting specific cognitive domains (Hu et al., 2023; Tong et al., 2021).

Despite increasing use of visual and thematic “skins” in cognitive games, there is limited research on how these sensory components influence performance. Bipp et al. (2024) conducted a meta-analysis of 44 studies comparing game-based cognitive assessments to traditional tests. They reported a moderate overall correlation (r = .30) and called for further investigation into how game mechanics and aesthetics affect assessment validity. For instance, “Penguin Explorer” by Cognifit uses an animated penguin to measure inhibition and spatial planning, yet little if any evidence has been provided on how the game’s theme may affect cognitive outcomes. Similarly, Ganiti et al. (2018) demonstrated that auditory feedback can significantly impact engagement and performance in digital environments, suggesting that sensory design choices can shape how users experience and interpret cognitive tasks.

Given the lack of empirical data on the impact of metaphor changes, this study evaluates whether modifying a game’s visual skin alters the measurement properties of four BrainTagger tasks targeting core executive functions. It uses a within-subject design to control for individual differences and directly compares performance on Whackamole and Gardening versions of each task.

Method

Participants and Recruitment

Participants were 109 second-year undergraduate engineering students at a major North American university. Recruitment occurred through their enrollment in a mandatory laboratory course, and participation in the study was embedded within the course activities. Students completed cognitive assessment games as part of their regular coursework and were informed that their laboratory data could be used for research purposes subject to consent. Students who declined to allow their data to be used were excluded from analysis. The final sample consisted of 57 female students, 51 male students, and one student who preferred not to disclose their gender. No monetary compensation was provided; however, students received course credit for completing the laboratory activities, which included gameplay and the submission of a corresponding laboratory report. This study was reviewed and approved by the University of Toronto Research Ethics Board (Protocol #43771).

Game Suite

TAG-ME quick and PLANT-ME quick: Measure cognitive speed via response latency to target appearances (moles or weeds).

TAG-ME only and PLANT-ME only: Assess response inhibition (a Go/No-Go task using moles with hats, or flowers, as distractors).

TAG-ME bigger and PLANT-ME bigger: Stroop tasks measuring cognitive flexibility.

TAG-ME again and PLANT-ME again: N-back tasks assessing working memory through recall of numbers (TAG-ME) or flower colors (PLANT-ME).
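The logic of an N-back task can be illustrated with a short sketch. This is not the BrainTagger implementation: the function name `nback_targets` and the example sequences are hypothetical, and the sketch assumes only that a trial counts as a target when its stimulus matches the one shown n trials earlier (the Results refer to a 3-back).

```python
from typing import Hashable, List, Sequence


def nback_targets(stimuli: Sequence[Hashable], n: int = 3) -> List[bool]:
    """Flag each trial whose stimulus matches the one shown n trials earlier.

    Hypothetical helper for illustration; not taken from the BrainTagger code.
    """
    if n < 1:
        raise ValueError("n must be a positive integer")
    # The first n trials can never be targets because nothing precedes them by n.
    return [i >= n and stimuli[i] == stimuli[i - n] for i in range(len(stimuli))]


# Made-up sequences: numbers (as in the Whackamole skin) and colors (Gardening skin).
number_trials = [4, 7, 2, 4, 9, 2, 4]
color_trials = ["red", "blue", "red", "blue", "pink"]

number_targets = nback_targets(number_trials, n=3)
color_targets = nback_targets(color_trials, n=2)
```

The same function handles numbers and colors because only equality between stimuli matters, which mirrors the claim that the two skins share one task structure.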

Each game included 30 trials. Participants played the four games in the sequence presented above, with the option to stop at any time. Reaction times, accuracy, false alarms, and other types of responses were automatically recorded. The trials featured randomized visual stimuli. In the cognitive speed and response inhibition games only, the appearance duration of the characters (either moles or weeds) was adaptive: characters vanished more quickly when participants responded rapidly and remained visible longer when participants struggled to hit them in time.

Each game had two skin variants representing different metaphors: a Whackamole version and a Gardening version. Despite differences in visual presentation, the underlying task demands remained the same across skins. Participants were required to make decisions (e.g., whether to hit a target) and execute corresponding motor responses (e.g., pressing a button) in both versions. Figure 1 shows the TAG-ME quick (Whackamole) version used to assess cognitive speed, where players needed to quickly “whack” moles as they appeared. Figure 2 shows the corresponding PLANT-ME quick (Gardening) version, where players rapidly “dig up” weeds in a garden setting.

Figure 1. Screenshot of the TAG-ME quick (Whackamole) version for cognitive speed assessment.

Figure 2. Screenshot of the PLANT-ME quick (Gardening) version for cognitive speed assessment.

While the visual narrative and theme changed between the two versions, the cognitive processing and motor responses required by the players were designed to be equivalent, allowing for a focused evaluation of metaphor effects on task performance.

Procedure

The study was embedded into a 5-week laboratory assignment for a second-year undergraduate engineering course. Students participated remotely using their own computers, responding via keyboard (keys Q, W, E, A, S, D) to six corresponding holes displayed on the screen in the Whackamole (“TAG-ME”) and Gardening (“PLANT-ME”) versions of the games.

At the start of the first lab session, students received a website link to generate a unique four-digit participant number and a Google Form link to access the study materials. After entering their participant number, participants reviewed an overview of the experiment, provided informed consent, and completed demographic questions (age and gender).

Following the first session, students participated in four weekly gameplay sessions, each focused on a different cognitive task (cognitive speed in the second session, response inhibition in the third, cognitive flexibility in the fourth, and working memory in the fifth). Each week, students received a game site link through the course platform and were required to complete the assigned cognitive tasks within 48 hr.

Statistical Analysis

The primary aim of the analysis was to compare cognitive performance across different metaphor versions (Whackamole vs. Gardening) for each cognitive function. Data were screened for completeness and outliers. For each game, paired-sample t-tests were conducted to assess differences in performance (e.g., reaction time, accuracy) between the two metaphor conditions. Effect sizes (Cohen’s d) were calculated to estimate the magnitude of differences. Additionally, descriptive statistics (means and standard deviations) were reported for all major variables. An alpha level of .05 was used to determine statistical significance.
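As a minimal sketch of the paired comparison described above, the following computes a paired-sample t statistic and an effect size for one measure. The source does not state which variant of Cohen's d was used; this sketch assumes d_z (mean difference divided by the standard deviation of the differences). The function name and the reaction times are hypothetical, and obtaining a p value would additionally require the t distribution (for example from a statistics package).

```python
import math
from statistics import mean, stdev
from typing import Sequence, Tuple


def paired_t_and_dz(x: Sequence[float], y: Sequence[float]) -> Tuple[float, int, float]:
    """Return (t, degrees of freedom, Cohen's d_z) for paired observations.

    Assumption: d_z is used as the effect size; the paper does not specify
    which form of Cohen's d was computed.
    """
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must be paired and contain at least two observations")
    diffs = [a - b for a, b in zip(x, y)]
    n = len(diffs)
    mean_diff = mean(diffs)
    sd_diff = stdev(diffs)  # sample standard deviation (n - 1 denominator)
    if sd_diff == 0:
        raise ValueError("t and d_z are undefined when all differences are identical")
    t = mean_diff / (sd_diff / math.sqrt(n))
    d_z = mean_diff / sd_diff
    return t, n - 1, d_z


# Made-up median reaction times (ms) for five participants in each skin.
whackamole_rt = [520.0, 480.0, 610.0, 555.0, 590.0]
gardening_rt = [500.0, 470.0, 580.0, 560.0, 575.0]
t_stat, df, d_z = paired_t_and_dz(whackamole_rt, gardening_rt)
```

Because each participant contributes a score in both conditions, the test operates on within-person differences, which is what the within-subject design is meant to exploit.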

Results

Correlation Between TAG-ME and PLANT-ME Versions

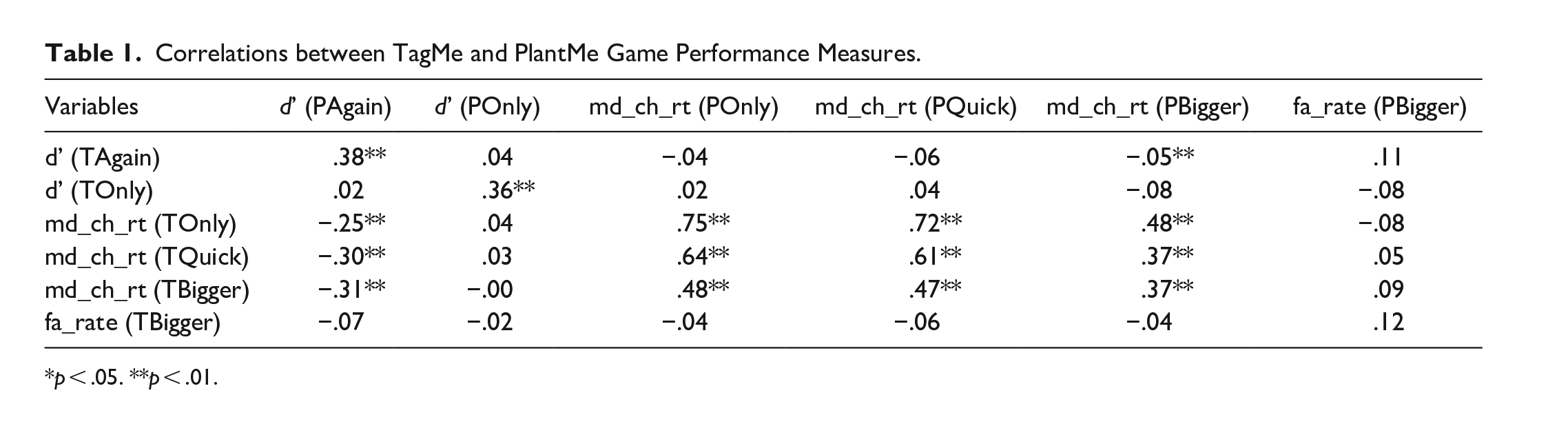

To evaluate whether changing the game metaphor impacted the measurement of cognitive constructs, we calculated correlations between performance measures from the Whackamole (first letter “T” for TAG-ME) and Gardening (first letter “P” for PLANT-ME) versions across four cognitive domains. Table 1 summarizes the correlations between performance metrics across the two versions. Variable names combine the version prefix with the game name (e.g., TQuick, POnly), and the suffix “RT” denotes reaction time; the remaining names can be inferred from the discussion of the tabular results.

Table 1. Correlations between TAG-ME and PLANT-ME Game Performance Measures.

*p < .05. **p < .01.

Significant positive correlations were observed for key tasks. Performance on the working memory task (3-Back) showed a moderate correlation between versions (d’ between TAgain and PAgain, r = .38, p < .01), indicating reasonable preservation of the construct. Response inhibition tasks also converged across versions, with a moderate correlation for d-prime accuracy (TOnly and POnly, r = .36, p < .01) and a strong correlation for reaction times (TOnly RT and POnly RT, r = .75, p < .01).
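For readers unfamiliar with d-prime, the sketch below shows the standard signal detection computation, d' = z(hit rate) − z(false alarm rate). The source does not describe how extreme rates of 0 or 1 were handled; the log-linear correction used here (adding 0.5 to each count and 1 to each total) is an assumption, as are the function name and the example counts.

```python
from statistics import NormalDist


def d_prime(hits: int, misses: int, false_alarms: int, correct_rejections: int) -> float:
    """Sensitivity index d' = z(hit rate) - z(false alarm rate).

    Assumption: a log-linear correction keeps both rates strictly between
    0 and 1 so that the inverse normal CDF is always defined.
    """
    if hits + misses == 0 or false_alarms + correct_rejections == 0:
        raise ValueError("need at least one target trial and one non-target trial")
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)
    z = NormalDist().inv_cdf  # inverse of the standard normal CDF
    return z(hit_rate) - z(fa_rate)


# Made-up counts for one participant on one game.
sensitive = d_prime(hits=18, misses=2, false_alarms=2, correct_rejections=18)
at_chance = d_prime(hits=10, misses=10, false_alarms=10, correct_rejections=10)
```

Higher values indicate better discrimination between targets and distractors, and a value of zero corresponds to chance-level responding.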

Cognitive flexibility tasks showed significant correlations on reaction time measures (TBigger RT and PBigger RT, r = .37, p < .01), although no significant relationship was observed for accuracy measures. Similarly, cognitive speed tasks were strongly correlated between versions (TQuick RT and PQuick RT, r = .61, p < .01).

These results suggest that despite changes in the surface metaphor, the core cognitive constructs assessed by each game remained relatively stable.
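The cross-version correlations reported above are Pearson coefficients between paired scores. A minimal sketch of that computation follows, together with the usual conversion of r to a t statistic for testing against zero. The function names and the data are hypothetical, and the number of complete pairs behind each reported coefficient is not stated in the text, so n = 109 in the last line is only an upper-bound assumption.

```python
import math
from typing import Sequence, Tuple


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation between paired scores from the two game versions."""
    if len(x) != len(y) or len(x) < 3:
        raise ValueError("need at least three paired observations")
    mx = sum(x) / len(x)
    my = sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined when one variable is constant")
    return sxy / math.sqrt(sxx * syy)


def r_to_t(r: float, n: int) -> Tuple[float, int]:
    """t statistic and degrees of freedom for testing r against zero."""
    if n < 3 or abs(r) >= 1:
        raise ValueError("requires n >= 3 and |r| < 1")
    df = n - 2
    return r * math.sqrt(df / (1.0 - r * r)), df


# Made-up reaction times (ms) for the same participants in each skin.
t_quick_rt = [410.0, 455.0, 390.0, 520.0, 480.0, 445.0]
p_quick_rt = [430.0, 470.0, 400.0, 500.0, 505.0, 450.0]
r = pearson_r(t_quick_rt, p_quick_rt)

# Assumption: all 109 participants contributed a complete pair of scores.
t_stat, df = r_to_t(0.36, 109)
```

A high r indicates that participants keep a similar rank order across skins; it does not show that mean performance is equal, which is the question the paired comparisons address.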

Influence of Cognitive Speed Across Tasks

Cross-domain analysis showed that reaction times from the cognitive speed tasks correlated with performance in other cognitive domains, particularly in the Gardening version. PQuick reaction time was strongly related to response inhibition (TOnly RT, r = .72) and cognitive flexibility (TBigger RT, r = .47) in the Whackamole version. Similarly, TQuick RT correlated significantly with response inhibition (POnly RT, r = .64) and cognitive flexibility (PBigger RT, r = .37) in the Gardening version. Working memory performance in the Gardening version also correlated negatively with cognitive speed measures (ranging from −.25 to −.31), suggesting a greater influence of speed on accuracy for the working memory games.

Discussion

The results demonstrate that changing the game metaphor from Whackamole to Gardening preserved the measurement of cognitive speed, response inhibition, and cognitive flexibility. Moderate to strong correlations between TAG-ME and PLANT-ME versions suggest that executive function assessments can remain valid despite surface design changes, provided that core task demands are maintained.

However, working memory tasks appeared more sensitive to metaphor changes. Reaction time measures in the Gardening version correlated more strongly with cognitive speed tasks, indicating that players may have adopted a more speed-oriented strategy when interacting with the gardening-themed 3-Back task. This suggests that metaphor changes introducing different perceptual features (e.g., matching flower colors rather than numbers) may subtly alter cognitive engagement, particularly for tasks involving sustained memory or attentional control.

These findings have two key implications. First, surface changes such as metaphor shifts can enhance user engagement without undermining validity for many executive functions. Second, tasks targeting complex cognitive processes like working memory require closer scrutiny, as metaphor-driven changes in user strategy could affect assessment outcomes.

Limitations in this study include the use of a student sample, which limits generalizability to older or clinical populations. Future research should replicate these findings across diverse user groups and explore other types of design variation. Experimental manipulations explicitly targeting speed-accuracy trade-offs may also clarify how metaphor influences cognitive strategies.

Conclusion

This study examined the effects of changing game metaphors on cognitive assessment outcomes using the BrainTagger suite. Overall, results demonstrated that shifting from a Whackamole to a Gardening metaphor preserved the measurement of core executive functions, including cognitive speed, response inhibition, and cognitive flexibility. Moderate to strong correlations between versions indicated that surface-level design changes can be implemented without substantially compromising validity.

However, working memory tasks showed greater sensitivity to metaphor changes. In particular, players may have engaged different memory strategies when interacting with numbers versus flower colors, potentially affecting how information was encoded, stored, and retrieved. This aligns with prior research suggesting that numerical stimuli typically engage the phonological loop, while color-based visual stimuli recruit the visuospatial sketchpad subsystem of working memory (Baddeley, 1992). This shift in underlying memory strategies could account for the stronger associations observed between cognitive speed and working memory accuracy in the Gardening version.

These findings highlight the importance of validating any thematic or visual changes in cognitive assessment games, especially for tasks involving complex memory processes. Future work should explore how different types of stimuli influence cognitive strategies and extend these investigations to broader populations to ensure assessment reliability across diverse contexts.

Footnotes

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: BrainTagger is co-owned by Mark Chignell, two other off-campus researchers, and the University of Toronto. In the future, a company may be formed and BrainTagger may earn revenues. At present, however, BrainTagger is freely available for research use, and the main motivation of our research is to collect scientific results associated with the use of the BrainTagger cognitive assessment games.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Development of the BrainTagger games was funded by grant number IA-2021-211 “Target Acquisition Games for Measurement and Evaluation (TAG-ME) of Detailed Brain Function” from the Connaught Fund. Additional support was provided by the University of Toronto work study program.