Abstract

Working memory (WM) is a fundamental cognitive function, yet different tasks may assess distinct aspects of WM. We investigated the relationship between self-directed selection from memory sets and performance on the N-back task. One hundred nine students participated in the study (57 females, 51 males, one other). The self-directed memory task measure was significantly but modestly correlated with the hardest (3-back) level of the N-back task (r = .29), possibly because of a compressed (high) range of WM ability in our engineering student sample. Participants also played other games assessing cognitive speed, response inhibition, and cognitive flexibility. The gamified N-back task (3-back level) was significantly correlated with the gamified numerical Stroop task, whereas our gamified self-directed memory selection task was not. This raises the possibility that self-directed memory selection is a purer measure of working memory than the N-back task, because its performance involves less cognitive flexibility (as exemplified by the numerical Stroop task).

Introduction

Working memory (WM) is a fundamental cognitive function that enables the temporary storage and manipulation of information necessary for performing cognitive tasks such as comprehension, reasoning, and learning (Baddeley & Hitch, 1974). While numerous paradigms have been developed to measure WM, different tasks often assess distinct cognitive processes, such as maintenance, updating, inhibition, or strategic control (Nyberg & Eriksson, 2016).

Two widely studied WM paradigms are the N-back task and self-directed memory selection tasks. The N-back task is externally paced and primarily targets continuous updating of memory representations, while self-directed memory tasks involve internally paced, strategic encoding and recall (Kane et al., 2007; Ritakallio et al., 2024). Despite both being considered WM tasks, the degree to which these paradigms measure overlapping constructs remains unclear. Additionally, from a psychometric perspective, the concept of task purity—the extent to which a task isolates a single cognitive function without contamination from others, such as processing speed or cognitive flexibility—has received increasing attention, particularly in the development of gamified cognitive assessments (Bipp et al., 2024).

Cognitive tasks with greater task purity are generally preferred when the goal is to evaluate a specific cognitive ability in isolation. However, gamification, while improving engagement and accessibility, may introduce construct-irrelevant variance that reduces task purity. In this study, we explore the construct overlap and task purity of two WM measures implemented as serious games within the BrainTagger suite: a self-directed memory selection task (TAG-ME Pick) and a multi-level N-back task (TAG-ME Again). By examining their correlations with each other and with other executive function tasks—response inhibition, cognitive flexibility, and processing speed—we aim to assess which task better isolates core WM processes.

Background

Working memory is commonly conceptualized as comprising multiple interrelated processes, including the updating of memory contents, inhibition of irrelevant information, and strategic maintenance over time (Miyake et al., 2000; Novick et al., 2020). The N-back task has become one of the most widely used tools for assessing WM updating. It requires participants to monitor a sequence of stimuli and respond when the current stimulus matches the one presented N positions earlier. The N-back task can be run at several levels of difficulty (e.g., 1-back, 2-back, and 3-back) and adapted to different presentation modes (verbal, spatial, etc.). However, it has also been criticized for lacking specificity, because performance is influenced by factors such as processing speed, sustained attention, and inhibitory control (Burgess et al., 2011; Meule, 2017).
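The N-back matching rule can be made concrete with a short sketch. It labels which positions in a stimulus stream are targets for a given N, then sorts a participant's responses into the four signal-detection outcomes on which sensitivity scores are built. The function names and the boolean response representation are hypothetical. This is a generic illustration, not how BrainTagger registers responses internally.

```python
from collections.abc import Sequence


def nback_targets(stimuli: Sequence[int], n: int) -> list[bool]:
    """Mark each position whose stimulus matches the one n positions earlier.

    The first n positions can never be targets, because there is no
    stimulus n steps back to compare against.
    """
    return [i >= n and stimuli[i] == stimuli[i - n] for i in range(len(stimuli))]


def score_responses(targets: Sequence[bool], responded: Sequence[bool]) -> dict[str, int]:
    """Classify every trial into one of the four signal-detection outcomes."""
    counts = {"hits": 0, "misses": 0, "false_alarms": 0, "correct_rejections": 0}
    for is_target, did_respond in zip(targets, responded):
        if is_target and did_respond:
            counts["hits"] += 1
        elif is_target:
            counts["misses"] += 1
        elif did_respond:
            counts["false_alarms"] += 1
        else:
            counts["correct_rejections"] += 1
    return counts


# Example: a 2-back stream of digits (hypothetical data).
stream = [3, 7, 3, 3, 5, 3]
print(nback_targets(stream, 2))  # positions 2 and 5 match the digit two steps back
```

The same stream yields different targets at different N, which is how one stimulus set can support the 1-back, 2-back, and 3-back difficulty levels.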

Self-directed memory tasks, by contrast, reflect more naturalistic memory strategies. These tasks require individuals to select items from a memory set one at a time, in an order of their own choosing, without reselecting any item they have already chosen. This method engages internal metacognitive processes, such as prioritization and self-monitoring, which are common in real-world memory demands but may not be well captured by externally driven paradigms like the N-back task (Gajewski et al., 2018; van Dam et al., 2015). Importantly, the degree of overlap or divergence between such strategic memory tasks and traditional WM measures has not been thoroughly examined in gamified formats.

Gamification of cognitive assessments offers advantages in scalability, user engagement, and ecological validity, especially for applications in aging populations or non-clinical environments (Urakami et al., 2021). However, it also introduces variability in how tasks are perceived and performed, potentially reducing psychometric precision. Recent meta-analyses suggest that the average correlation between game-based and traditional cognitive assessments is modest (r = .30), highlighting the need to evaluate construct validity and task purity in these new formats (Bipp et al., 2024).

To address these gaps, our study investigates the degree of correlation between a gamified self-directed memory selection task and a gamified N-back task. We also examine their independence from other cognitive domains using correlational analyses with inhibition, flexibility, and speed tasks. This approach allows us to evaluate both the shared variance (construct overlap) and the unique variance (task purity) of each WM task.

Method

Participants

A total of 109 second-year undergraduate students (57 female, 51 male, 1 undisclosed) from a major North American university participated in this study as part of a mandatory Industrial Engineering laboratory course. Participation in the cognitive assessment activities was embedded within course requirements. Students were informed that their anonymized gameplay data could be used for research purposes contingent upon consent. Only data from students who provided this consent were included in the final analysis. No monetary compensation was provided; however, students received course credit for completing the laboratory exercises, which included game participation and a written lab report. This study was reviewed and approved by the University of Toronto Research Ethics Board (Protocol #43771).

Materials and Procedure

Participants played four working-memory-related gamified cognitive assessment tasks from the BrainTagger suite (Urakami et al., 2021): the three difficulty levels of an N-back game and a self-directed memory selection game. They also played additional games targeting other cognitive domains. These games were completed in a fixed sequence during the lab period. Each game consisted of 30 trials, and gameplay was logged automatically, including metrics such as reaction time (RT), accuracy, and false alarm rate. The working memory tasks were:

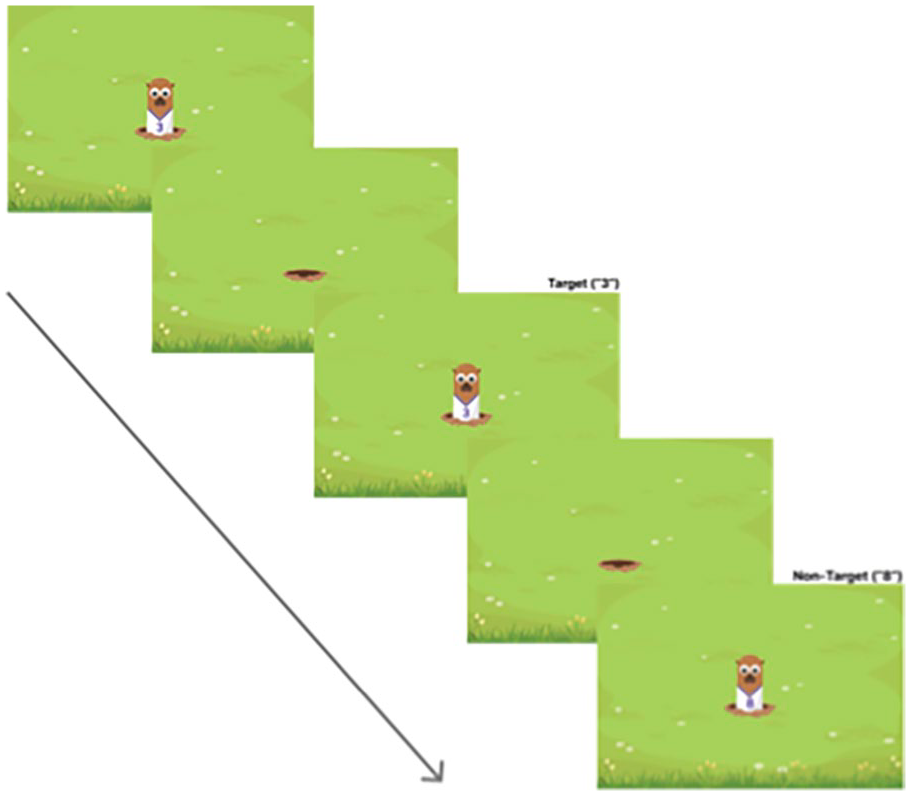

Working memory N-back task (TAG-ME Again: Easy, Medium, Hard): As shown in Figure 1, this task implemented 1-back, 2-back, and 3-back conditions using numeric stimuli to assess working memory updating and maintenance. Performance was quantified using d′ (d-prime, a signal-detection sensitivity index) and other derived metrics.
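As a reminder of how a sensitivity index like d′ is typically computed, the sketch below takes the standard signal-detection definition d′ = z(hit rate) − z(false-alarm rate), where z is the inverse of the standard normal CDF. The text does not state which correction for extreme rates BrainTagger applies. This sketch assumes the log-linear correction, one common convention, which adds 0.5 to each count and 1 to each total. The function name is hypothetical.

```python
from statistics import NormalDist


def d_prime(hits: int, misses: int, false_alarms: int, correct_rejections: int) -> float:
    """Signal-detection sensitivity d' = z(H) - z(FA).

    ASSUMPTION: uses the log-linear correction (add 0.5 to each count and
    1 to each total) so that hit or false-alarm rates of exactly 0 or 1 do
    not produce infinite z-scores. BrainTagger's own scoring may differ.
    """
    z = NormalDist().inv_cdf  # inverse standard normal CDF
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)
    return z(hit_rate) - z(fa_rate)


# Example with a hypothetical 30-trial block: 10 targets, 20 non-targets.
print(round(d_prime(hits=9, misses=1, false_alarms=2, correct_rejections=18), 2))
```

Higher d′ means better discrimination of targets from non-targets, independent of a participant's overall tendency to respond. This is why d′ is usually preferred over raw accuracy for N-back scoring.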

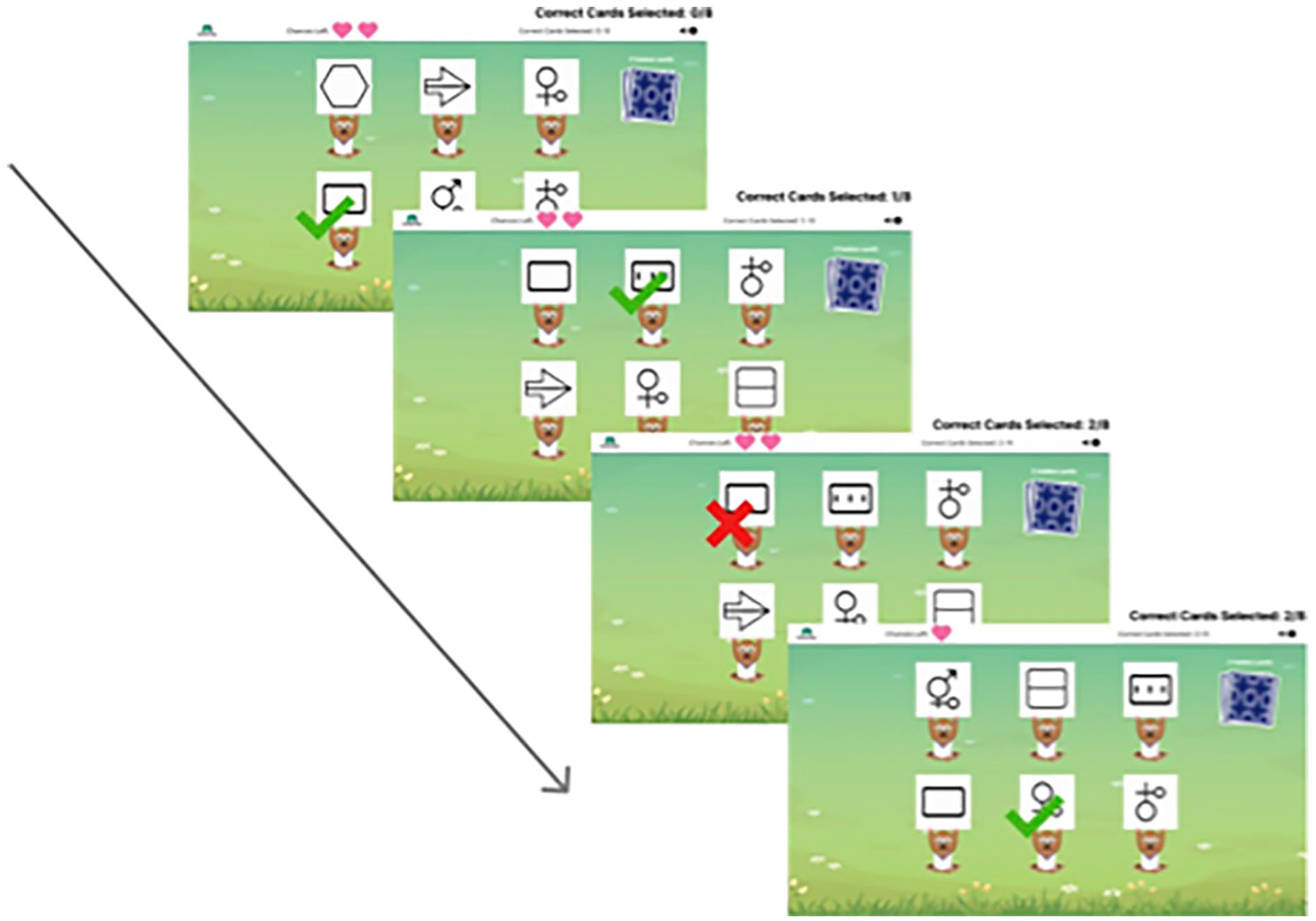

Self-directed memory selection task (TAG-ME Pick): As shown in Figure 2, this task assessed strategic memory encoding. Participants were shown a set of items to be remembered and had to pick the items one at a time, without repeating any item. Recall accuracy determined the maximum memory level achieved (MaxLevel), i.e., the size of the largest memory set that participants could select from without repetition.

Figure 1. Sequence from the TAG-ME Again (1-back) game.

Figure 2. Sequence from the TAG-ME Pick game, a self-directed memory selection task.
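The MaxLevel score described for TAG-ME Pick can be sketched as follows. The sketch rests on several assumptions. At each level the participant must make as many picks as there are items in the set. A level counts as passed only if no item is picked twice. MaxLevel is the largest set size passed. The data layout (a mapping from set size to the sequence of picked item labels) and the function names are hypothetical. The game's actual progression and stopping rules are not described in the text.

```python
from collections.abc import Hashable, Mapping, Sequence


def passed_level(set_size: int, picks: Sequence[Hashable]) -> bool:
    """ASSUMPTION: a level is passed when the participant makes set_size picks
    and never picks the same item twice (the no-repetition rule)."""
    return len(picks) == set_size and len(set(picks)) == set_size


def max_level(attempts: Mapping[int, Sequence[Hashable]]) -> int:
    """Return the largest set size completed without a repeat (0 if none)."""
    passed = [size for size, picks in attempts.items() if passed_level(size, picks)]
    return max(passed, default=0)


# Hypothetical session: icons are labeled by name; the 5-item level fails
# because "sun" is picked twice.
session = {
    3: ["sun", "moon", "star"],
    4: ["sun", "moon", "fire", "star"],
    5: ["sun", "moon", "sun", "fire", "star"],
}
print(max_level(session))
```

Because picks are self-ordered, the score reflects how well participants keep track of their own prior selections rather than how they respond to an externally paced stream.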

Additional tasks used to assess other executive functions (and cognitive speed) included:

Inhibition task (TAG-ME Only): A Go/No-Go task evaluating participants’ ability to inhibit responses to specific stimuli.

Numerical Stroop task (TAG-ME Bigger): A measure of cognitive control and flexibility using congruent and incongruent trials.

Cognitive speed task (TAG-ME Quick): A simple reaction-time-based task measuring general processing speed.
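The congruent/incongruent distinction in a numerical Stroop task can be illustrated with a short sketch. The text does not describe TAG-ME Bigger's trial format. This sketch therefore assumes, hypothetically, that each trial shows two digits that differ in both numeric value and on-screen size, and that the participant picks the numerically larger digit. A trial is congruent when the numerically larger digit is also physically larger. The record fields and function names are illustrative only.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class StroopTrial:
    # Hypothetical trial record; field names are illustrative only.
    left_value: int
    right_value: int
    left_size: float   # on-screen size of the left digit
    right_size: float  # on-screen size of the right digit
    rt_ms: float       # reaction time for this trial
    correct: bool


def is_congruent(t: StroopTrial) -> bool:
    """Congruent when the numerically larger digit is also physically larger.

    ASSUMPTION: values and sizes always differ (no neutral trials).
    """
    return (t.left_value > t.right_value) == (t.left_size > t.right_size)


def condition_mean_rts(trials: list[StroopTrial]) -> dict[str, float]:
    """Mean RT over correct trials, split by congruency.

    Requires at least one correct trial in each condition.
    """
    congruent = [t.rt_ms for t in trials if t.correct and is_congruent(t)]
    incongruent = [t.rt_ms for t in trials if t.correct and not is_congruent(t)]
    return {"congruent": mean(congruent), "incongruent": mean(incongruent)}
```

Separate congruent and incongruent RT summaries of this kind correspond to the two TAG-ME Bigger measures that appear in the task purity analysis.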

Statistical Analysis

Correlational analyses were used to examine the relationship between performance on the self-directed memory task (TAG-ME Pick) and the three versions of the N-back task (TAG-ME Again). We further assessed task purity by examining the degree of independence between these two working memory tasks and the other executive function tasks (inhibition, cognitive flexibility, and processing speed). Greater independence from unrelated cognitive domains (i.e., lower pairwise correlations) was interpreted as indicative of higher task purity.

Results

Correlation Between Working Memory Tasks

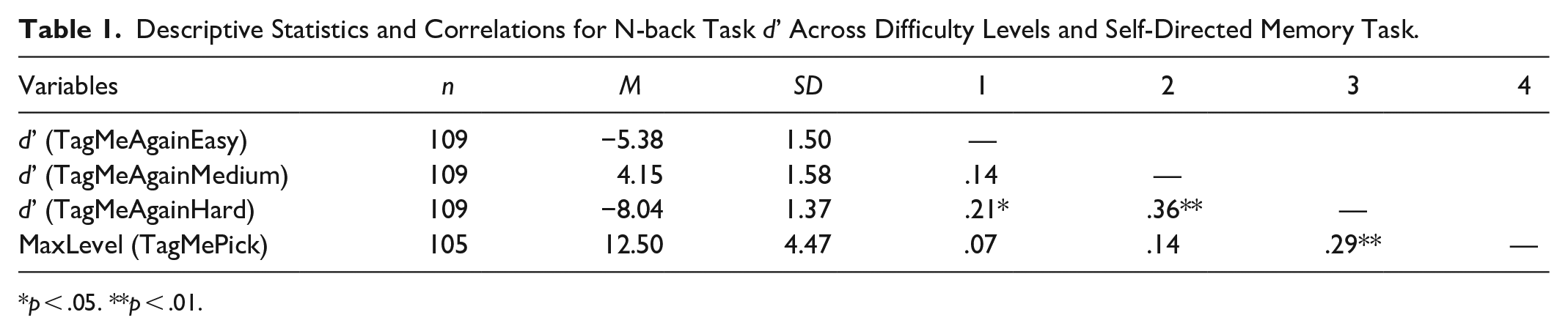

To assess the relationship between the two WM tasks—self-directed memory selection (TAG-ME Pick) and the N-back task (TAG-ME Again)—we conducted Pearson correlation analyses across three difficulty levels of the N-back task (Easy = 1-back, Medium = 2-back, Hard = 3-back). As shown in Table 1, the self-directed memory task (measured by MaxLevel achieved) showed a significant positive correlation only with the Hard (3-back) version of the N-back task, r = .29, p < .01. Correlations with the Easy and Medium versions were nonsignificant. This finding suggests that, for an engineering student population, where WM would generally be expected to be high, self-directed memory performance is associated with N-back performance only under high cognitive load (i.e., the 3-Back condition), where strategic control and memory updating demands are greatest.

Table 1. Descriptive Statistics and Correlations for N-back Task d′ Across Difficulty Levels and Self-Directed Memory Task.

*p < .05. **p < .01.
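The significance of the r = .29 correlation between MaxLevel and the 3-back condition can be checked by hand with the usual t-test for a Pearson correlation (df = n − 2). This assumes that all 109 participants contributed to the correlation. The per-analysis n is not reported in the text.

```latex
t = \frac{r\sqrt{n-2}}{\sqrt{1-r^{2}}}
  = \frac{0.29\sqrt{107}}{\sqrt{1-0.29^{2}}}
  \approx \frac{3.000}{0.957}
  \approx 3.13
```

This value exceeds the two-tailed critical value of roughly 2.62 for df = 107 at α = .01, consistent with the reported p < .01.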

Task Purity Analysis

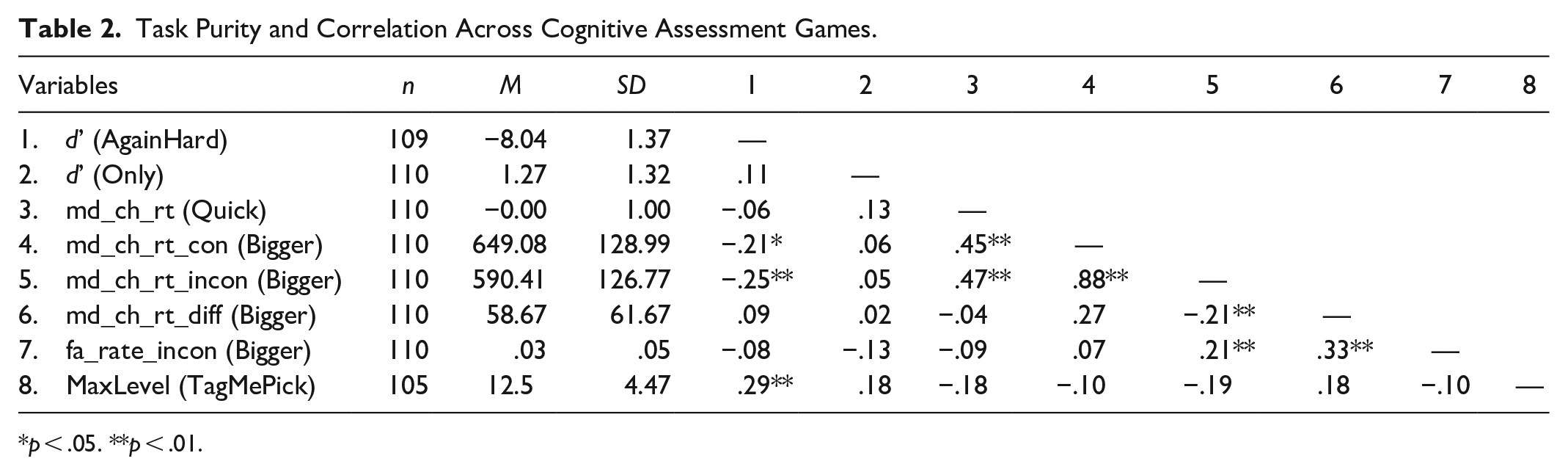

To evaluate task purity, we examined how each WM task correlated with other executive function tasks—specifically, inhibition (TAG-ME Only), cognitive flexibility (TAG-ME Bigger), and processing speed (TAG-ME Quick). As shown in Table 2, the 3-back N-back task significantly correlated with congruent reaction times (r = −.21, p < .05) and incongruent reaction times (r = −.25, p < .01) in the Stroop-like TAG-ME Bigger task.

Table 2. Task Purity and Correlation Across Cognitive Assessment Games.

*p < .05. **p < .01.

In contrast, the self-directed memory task exhibited no significant correlations with any of the non-WM tasks, suggesting it may more specifically measure WM processes. These results provide evidence in support of the hypothesis that the self-directed task has greater task purity than the N-back task in this gamified assessment context.
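To put the size of these correlations in context, the t-test for a Pearson correlation, t = r√(n − 2)/√(1 − r²), can be rearranged to give the smallest |r| that reaches significance. The calculation assumes that each correlation used all 109 participants (df = 107), since the per-analysis n is not reported. It uses approximate two-tailed critical t values of 1.98 (α = .05) and 2.62 (α = .01).

```latex
r_{\text{crit}} = \frac{t_{\text{crit}}}{\sqrt{t_{\text{crit}}^{2} + (n-2)}}, \qquad
\alpha = .05:\ \frac{1.98}{\sqrt{1.98^{2} + 107}} \approx .19, \qquad
\alpha = .01:\ \frac{2.62}{\sqrt{2.62^{2} + 107}} \approx .25
```

Under that assumption, the 3-back correlations in Table 2 (r = −.21 and r = −.25) sit just past the .05 and .01 thresholds, respectively. They are therefore significant but modest in size.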

Discussion

This study compared two gamified working memory tasks, TAG-ME Again (N-back) and TAG-ME Pick (self-directed memory selection), to evaluate their construct overlap and task purity. While both are designed to assess working memory, only the Hard (3-back) N-back condition showed a significant correlation with the self-directed task. This suggests that, in this undergraduate engineering student sample, N-back performance shares variance with self-directed memory selection only under high cognitive load.

An important factor potentially contributing to these differences is the nature of the stimuli used in the two tasks. In the N-back task, participants monitor and respond to numeric digits displayed on moles—an abstract and uniform stimulus set. In contrast, the self-directed memory task that we used presented graphic icons (e.g., squares or pictorial stimuli reminiscent of alchemical symbols) from which participants selectively chose items to encode and later recall. These differences in stimulus modality and complexity may influence the strategies participants use. The visual distinctiveness of stimuli may facilitate chunking or categorical encoding, while numeric stimuli may engage more domain-specific rehearsal or comparison processes.

Task purity analyses further support the distinction between these tasks. TAG-ME Again Hard was significantly correlated with cognitive flexibility metrics from the Stroop-like task (TAG-ME Bigger), indicating that it draws on at least one executive process beyond memory updating. TAG-ME Pick, however, showed no significant correlations with inhibition, flexibility, or processing speed measures, suggesting it provides a more isolated measure of working memory—particularly strategic encoding and maintenance.

These findings align with previous critiques that the N-back task, despite its popularity, is not process-pure (Burgess et al., 2011; Meule, 2017). Our results extend this literature by showing that self-directed memory selection, when implemented as a gamified task, may reduce cross-domain contamination and better reflect individual differences in memory control strategies (Ritakallio et al., 2024).

Limitations of this study include the use of a student sample and reliance on the BrainTagger games rather than other cognitive assessment game frameworks. However, past research has demonstrated that BrainTagger games are valid in terms of correlations with standard psychological tasks for working memory (Hu et al., 2025) and response inhibition (Tong et al., 2021).

Conclusion

Our findings suggest that although both the N-back and self-directed memory selection tasks measure aspects of working memory, they differ substantially in the cognitive processes they engage. These differences may be due in part to stimulus presentation format (numbers in the N-back task versus visual icons in the self-directed task), which may shape the cognitive strategies participants use during task performance.

Critically, the self-directed task demonstrated greater task purity, exhibiting no significant correlations with other executive functions such as inhibition or flexibility. This makes it a promising alternative for researchers and clinicians seeking to isolate core working memory processes without confounding influences. As digital cognitive assessments gain momentum, identifying tasks that are both engaging and focused on a specific psychometric construct remains essential.

Future research should explore how variations in stimulus type and task framing affect working memory performance across diverse populations, and whether self-directed tasks can serve as more sensitive indicators of real-world cognitive function.

Footnotes

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: BrainTagger is co-owned by Mark Chignell, two other off-campus researchers, and the University of Toronto. In the future it is possible that a company may be formed and that BrainTagger may earn revenues. But at present BrainTagger is freely available for research use and the main motivation of our research is to collect scientific results associated with the use of the BrainTagger cognitive assessment games.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Development of the BrainTagger games was funded by grant number IA-2021-211 “Target Acquisition Games for Measurement and Evaluation (TAG-ME) of Detailed Brain Function” from the Connaught Fund. Additional support was provided by the University of Toronto work study program.