Abstract

The United Kingdom’s Defence Science and Technology Laboratory (Dstl) has developed an innovative military cognitive task battery titled Interactive Measures of Performance and Assessment of Cognitive Tasks (IMPACT). The purpose of the tool is to support the objective assessment of cognitive task performance and task demand across a range of military system types, use cases and experimental settings. IMPACT consists of six reconfigurable, generic military tasks and an intuitive user interface for the experimenter to configure the tool and collect data. Initial validation of the IMPACT tool has been undertaken and a number of early adopters have used the tool to support trials, system development and experimentation. The validation results show that the six individual IMPACT tasks broadly stimulate the associated cognitive attributes, as mapped during the design and development of the tool and as measured by subjective assessment techniques, psychophysiological monitoring, and performance measures. IMPACT continues to be refined in response to feedback from the validation exercise and early adopters of the tool.

Background

There are very few reconfigurable batteries of cognitive tasks orientated towards the wide variety of tasks undertaken by military personnel. The most widely recognised and commonly used across defence is the National Aeronautics & Space Administration’s (NASA) Multi-Attribute Task Battery-II (MATB-II), which is specifically aircrew focused (Comstock and Arnegard, 1992).

A lack of military orientated task batteries often means that experimental work must rely on tasks that are not well aligned to the experimental setting or scenario, or that are unfamiliar to participants. This reduces the immersive experience and may detract from the training exercise or wider study. Dstl determined that there was a need to develop an objective performance battery designed around a generic set of military orientated tasks (Sabine and Thompson, 2024).

Design

To fill this gap, Dstl has undertaken a programme of work to develop a tool to support the objective assessment of cognitive task performance and task demand across a range of military system types, use cases and experimental settings. The tool, titled the Interactive Measures of Performance and Assessment of Cognitive Tasks (IMPACT), uses a suite of computer-based, military themed, cognitively orientated tasks to capture human performance data (reaction time, accuracy).

The tool is versatile and easy to use and the task performance data captured enables the level of cognitive task performance and cognitive demand placed on users to be assessed. The tool can be employed in a variety of settings including simulations, laboratories, and naturalistic environments such as military exercises on training areas, or during equipment trials. The tool also includes an intuitive user interface for the experimenter to configure the tool and collect data.

The tasks selected for IMPACT are indicative of a range of typical military cognitive tasks and were chosen for their familiarity to military users and to maintain face validity and immersion in the experimental construct or exercise being undertaken.

How and Why Would You Use IMPACT?

IMPACT is intended to be used in two main applications:

As a primary task to measure the impact of an independent variable on cognitive performance. For example, participants would complete an IMPACT task at various times after the administration of an intervention, and any differences in objective task performance (e.g., reaction time, accuracy) would be used to provide insight into the effectiveness of the intervention.

As a secondary (or dual) task, where the participant’s performance on the IMPACT task provides an insight into the level of “spare capacity” available. As resources are diverted away from the IMPACT task in order to maintain primary task performance, performance on the IMPACT task deteriorates.
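The secondary-task logic above can be made concrete with a small calculation. The sketch below is illustrative only: the function name `dual_task_cost` and the sample reaction times are hypothetical and are not part of IMPACT or the study data. It expresses the slowing of IMPACT task responses under dual-task conditions as a percentage of single-task (baseline) performance, which is one common way of indexing spare capacity.

```python
from statistics import mean

def dual_task_cost(baseline_rts, dual_rts):
    """Percentage increase in mean reaction time from single- to dual-task conditions."""
    if not baseline_rts or not dual_rts:
        raise ValueError("both conditions need at least one reaction time")
    base = mean(baseline_rts)
    dual = mean(dual_rts)
    return 100.0 * (dual - base) / base

# Hypothetical reaction times in seconds (assumed values, not study data).
baseline = [0.50, 0.55, 0.45]           # IMPACT task performed alone
with_primary_task = [0.60, 0.66, 0.54]  # IMPACT task performed alongside a primary task

cost = dual_task_cost(baseline, with_primary_task)
print(f"Dual-task cost: {cost:.1f}%")
```

A larger positive cost suggests that less spare capacity was available while the primary task was being performed. The same calculation could be applied to accuracy, with the sign reversed.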

Development of IMPACT

Seventeen candidate military tasks were identified through discussion with serving Military Advisors (MAs) embedded within Dstl. These tasks were selected so that anyone who has completed basic military training would be familiar with them, even if they do not form part of their day-to-day military role (Sabine, 2022).

From the candidate tasks identified, six were selected by the Dstl project team based on the anticipated cognitive properties that each task encompasses, overall balance of tasks and the most likely, near term applications of the tool. The tool architecture is designed to allow additional tasks to be added in future should they be required.

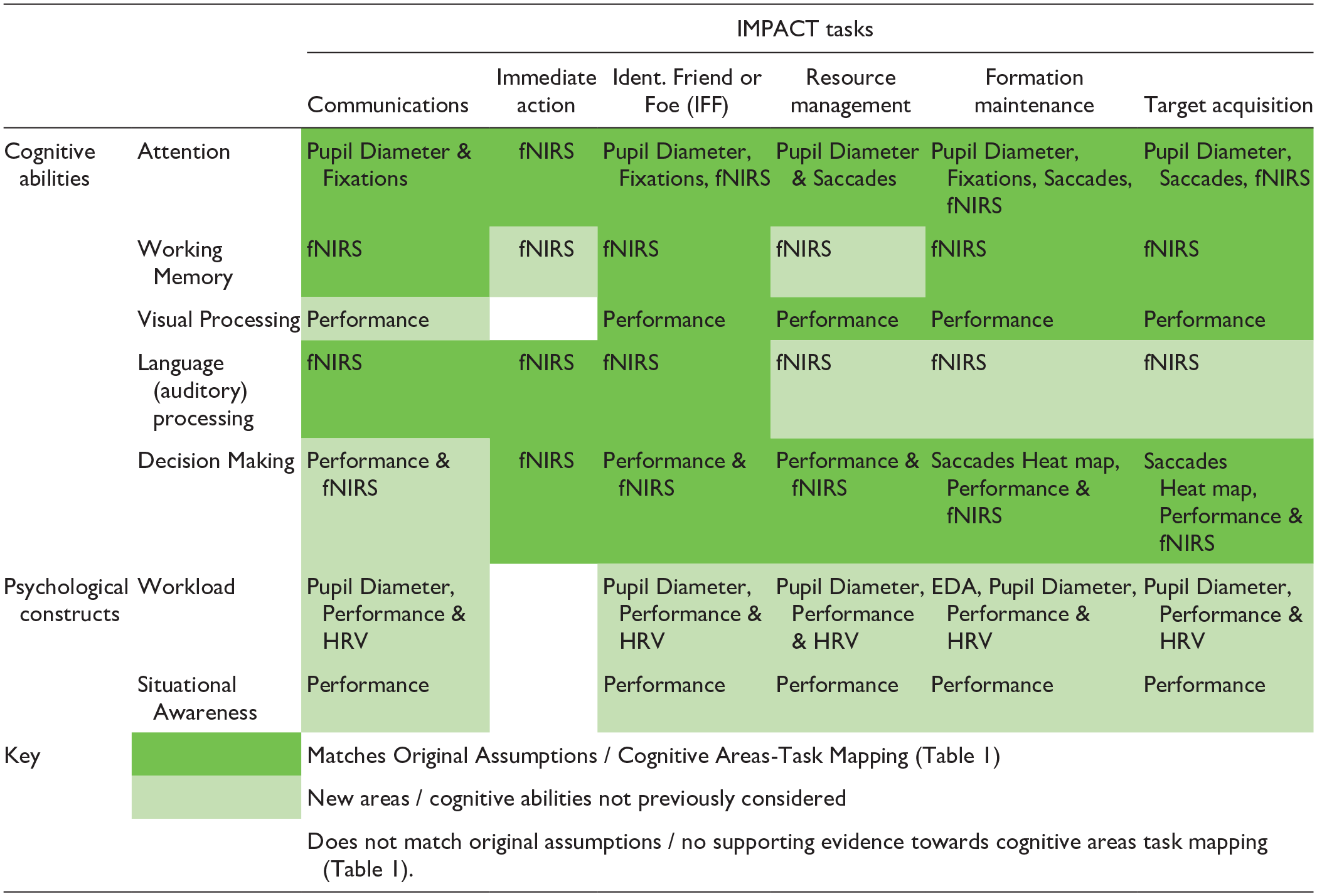

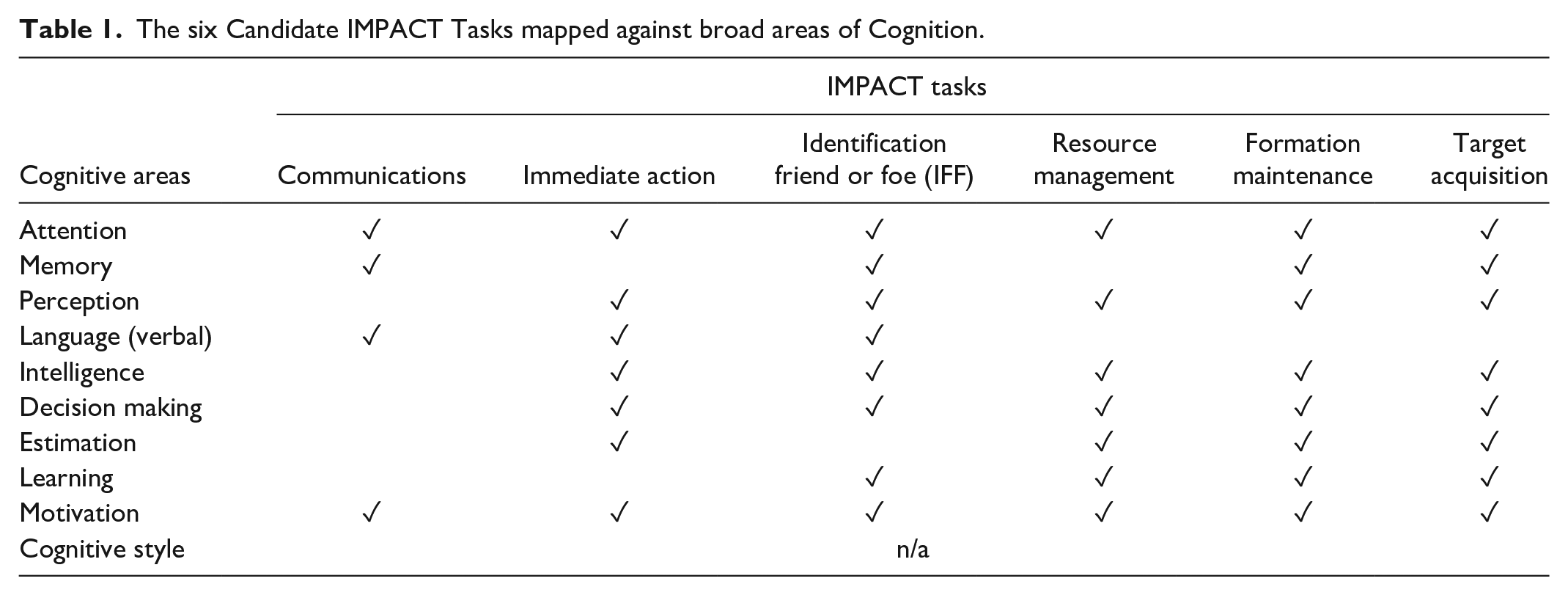

Each of the six IMPACT tasks is intended to stimulate multiple areas of cognition. Table 1 shows the “Cognitive Area-Task” mapping between each task and various cognitive elements, based upon the work of Tatlock et al. (2015).

Table 1. The six Candidate IMPACT Tasks mapped against broad areas of Cognition.

Later in this paper, details are presented of a study undertaken to explore how successfully the six IMPACT tasks challenge these individual cognitive areas.

Through open competition, Frazer-Nash Consultancy were selected to undertake the design and development of IMPACT. An Agile development methodology was adopted, consisting of multiple design sprints, each focusing on different aspects of the tool.

Overview of the Six IMPACT Tasks

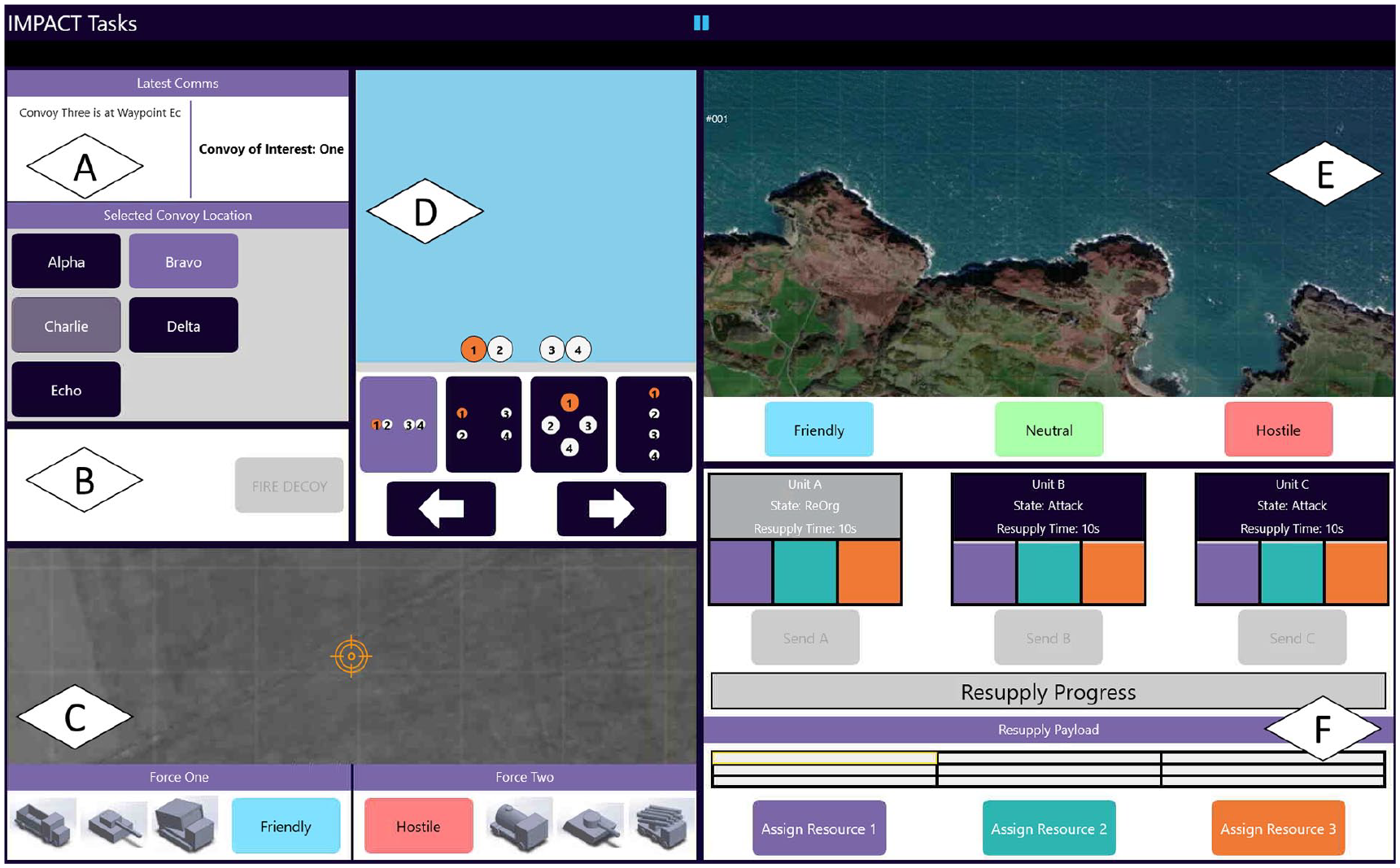

The communications task (Figure 1a) is representative of a text and audio communications application. The participant is presented with textual and auditory messages about convoys and their locations, which they have to monitor for a specific convoy of interest and note the reported location using the controls provided. Response time and accuracy are recorded as measures of task performance.

Figure 1. All six individual IMPACT tasks. (A) Communications Task, (B) Immediate Action Task, (C) Target Acquisition Task, (D) Formation Maintenance Task, (E) IFF Task and (F) Resource Management Task.

The immediate action task (Figure 1b) is a simple alert-response task where the participant, when presented with a visual (and optionally an audible) alert, must respond with the press of an acknowledgement button. There are six pre-set alert types a researcher may choose from, as well as the option to create bespoke alerts. Response time and accuracy are recorded.

The target acquisition task (Figure 1c) presents the participant with an airborne surveillance style, side scrolling image with targets overlaid. These targets must be detected, selected, assessed against reference images and then classified correctly using the controls provided. The number of targets selected and the number identified (correctly or incorrectly) are recorded.

The formation maintenance task (Figure 1d) gives the participant control over a formation of four uncrewed vehicles in a top down scrolling game environment. The participant must change the formation shape and move it laterally to neutralise mines and avoid sand bars, as they advance down the screen. The number of mines neutralised and uncrewed vehicles remaining are recorded as measures of task performance.

The IFF task (Figure 1e) presents the participant with visual representations of radar tracks together with an auditory message instructing them how to classify the track (friendly, neutral or hostile). The user must select the right track on screen and use the provided controls to correctly classify it. Response time and accuracy are recorded.

The resource management task (Figure 1f) presents the participant with a visual representation (in the form of bar graphs) of the amount of three different types of resource that three military units have available. These units consume resources at different rates and the participant must use the controls provided to select the quantity of the three resources to supply and which unit to provide it to. A range of resource performance scores may be measured from the task interaction data recorded (e.g., maintaining sufficient supplies).

Using IMPACT

IMPACT will run on most modern laptops, desktops and tablets and supports a range of user input devices. Experimenters can choose to present a single task, or any combination of multiple tasks (including all six at once), depending on display size and task legibility. The experimenter can resize and reposition each task according to need. Task load can be adjusted for intensity and complexity, enabling cognitive demands to be tailored to suit the experiment. Once designed, a configuration can be exported and loaded onto other devices as required. The tool includes task instructions and ‘practice runs’ to allow participants to familiarise themselves with each task before data capture, thereby reducing confounding learning/practice effects. None of the six tasks require any prior knowledge or training.

A key design feature of IMPACT is the simplicity of the experimenter’s user interface. It does not require any knowledge of how to edit code, as has been a necessity for similar products like the NASA MATB-II. IMPACT provides an intuitive form-based configuration interface for the experimenter. In addition, the data captured during experimental runs are easy to download and process, so that insights can be derived quickly. Task performance data are automatically written to a .csv file that can be imported into popular analysis software such as Microsoft Excel. These data are time-stamped from the system clock to enable mapping against external temporal events. No participant data is retained in the IMPACT tool itself; it is saved externally in a user definable location on the host device.
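As an illustration of how time-stamped .csv output of this kind might be processed outside a spreadsheet, the following Python sketch summarises reaction time and accuracy before and after an external event. The column names (`timestamp`, `task`, `response_time_ms`, `correct`), the timestamp format and the sample rows are all assumptions made for the example; the actual IMPACT export layout is not described here and may differ.

```python
import csv
import io
from datetime import datetime

# Hypothetical export: real IMPACT column names and timestamp format may differ.
SAMPLE_CSV = """timestamp,task,response_time_ms,correct
2023-06-01T10:00:01.250,immediate_action,420,1
2023-06-01T10:00:05.900,immediate_action,510,1
2023-06-01T10:00:12.100,immediate_action,780,0
2023-06-01T10:00:18.400,immediate_action,690,1
"""

def load_trials(csv_text):
    """Parse CSV rows into dictionaries with typed fields."""
    trials = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        trials.append({
            "time": datetime.fromisoformat(row["timestamp"]),
            "task": row["task"],
            "rt_ms": float(row["response_time_ms"]),
            "correct": row["correct"] == "1",
        })
    return trials

def summarise(trials):
    """Return (mean reaction time in ms, proportion correct), or None if empty."""
    if not trials:
        return None
    mean_rt = sum(t["rt_ms"] for t in trials) / len(trials)
    accuracy = sum(t["correct"] for t in trials) / len(trials)
    return mean_rt, accuracy

def split_at_event(trials, event_time):
    """Split trials into those before and those at or after an external event."""
    before = [t for t in trials if t["time"] < event_time]
    after = [t for t in trials if t["time"] >= event_time]
    return before, after

trials = load_trials(SAMPLE_CSV)
# Hypothetical external event, e.g. the onset of a stressor logged by another system.
event = datetime.fromisoformat("2023-06-01T10:00:10.000")
before, after = split_at_event(trials, event)
print("before event:", summarise(before))
print("after event:", summarise(after))
```

Because the timestamps come from the system clock, any external log used for alignment would need to share, or be corrected to, the same clock.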

Researcher End User Engagement

At the start of the development phase a workshop was held with the prospective community of IMPACT users within Dstl. This included participants from across the human sciences professions and other interested parties. The feedback provided was useful in the development of user requirements for the tool. Further feedback was gathered from MAs involved in the initial design concept, which was fed back into subsequent sprints. Towards the end of the development phase the prospective IMPACT user community in Dstl was re-engaged for further feedback, together with beta testing, to inform the final stages of tool development.

Validation

Through open competition, Thales UK were selected by Dstl to undertake an independent validation of the IMPACT tool. Validation, in the context of this work, has focused upon construct validity and the gathering of evidence to support or challenge the relationship between the IMPACT tasks and various aspects of cognition (as presented in Table 1). A favourable ethical opinion via the established Ministry of Defence JSP 536 Ethical Review process was received prior to commencement. Representative military end users (N = 35) took part in the study in June 2023.

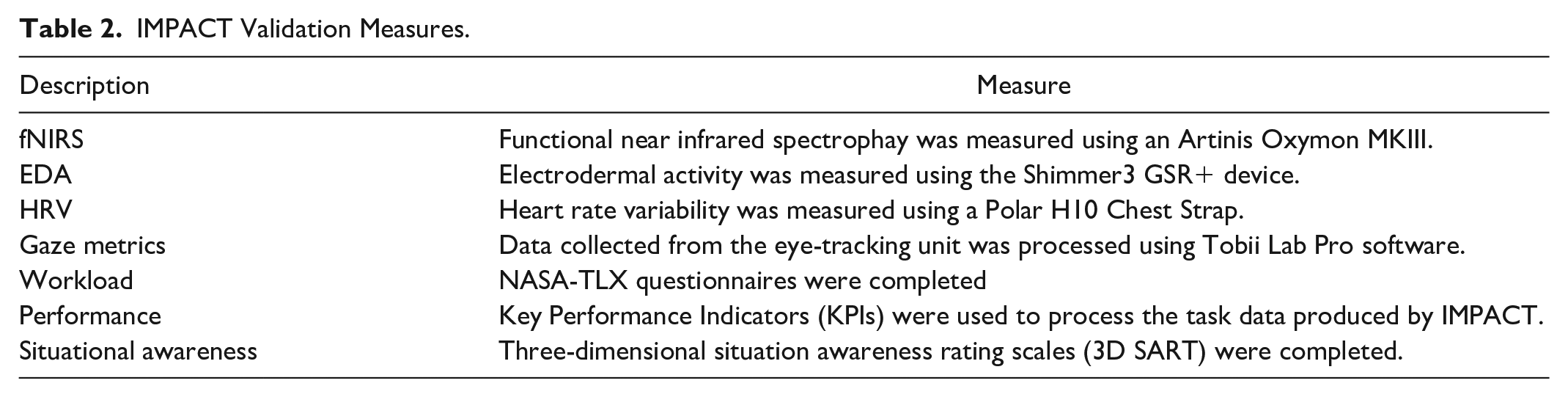

Thales developed an experimental protocol using a multi-method approach to validation (Table 2). Participants’ performance on each IMPACT task was recorded and compared with the relevant validation measures, which included subjective assessment techniques, psychophysiological monitoring and performance measures (Sturgess et al., 2024). Each IMPACT task was evaluated individually and task presentation order was counterbalanced. Each task was performed twice by each participant, with the difficulty of the task adjusted to simulate high and low task load conditions.

Table 2. IMPACT Validation Measures.
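The counterbalancing of task presentation order can be illustrated with a short sketch. The counterbalancing scheme actually used in the study is not stated here, so the balanced Latin square (Williams design) below is an assumption chosen for illustration, and the function names are hypothetical. For an even number of tasks, this construction places each task once in every serial position and has each task immediately follow every other task exactly once across the set of orders.

```python
TASKS = [
    "Communications",
    "Immediate Action",
    "Target Acquisition",
    "Formation Maintenance",
    "IFF",
    "Resource Management",
]

def balanced_latin_square(n):
    """Build an n x n balanced Latin square (Williams design); n must be even."""
    if n < 2 or n % 2:
        raise ValueError("this construction requires an even n >= 2")
    # First row follows the pattern 0, 1, n-1, 2, n-2, ...
    first, low, high = [0], 1, n - 1
    take_low = True
    while len(first) < n:
        if take_low:
            first.append(low)
            low += 1
        else:
            first.append(high)
            high -= 1
        take_low = not take_low
    # Each later row shifts every entry of the first row by one (mod n).
    return [[(item + row) % n for item in first] for row in range(n)]

def task_order(participant_index, tasks=TASKS):
    """Assign a task order by cycling through the rows of the square."""
    square = balanced_latin_square(len(tasks))
    row = square[participant_index % len(tasks)]
    return [tasks[i] for i in row]

for participant in range(3):
    print(participant, task_order(participant))
```

When the number of participants is not a multiple of six, some orders are necessarily used once more than others. The order of the high and low task load conditions within each task would need to be counterbalanced separately.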

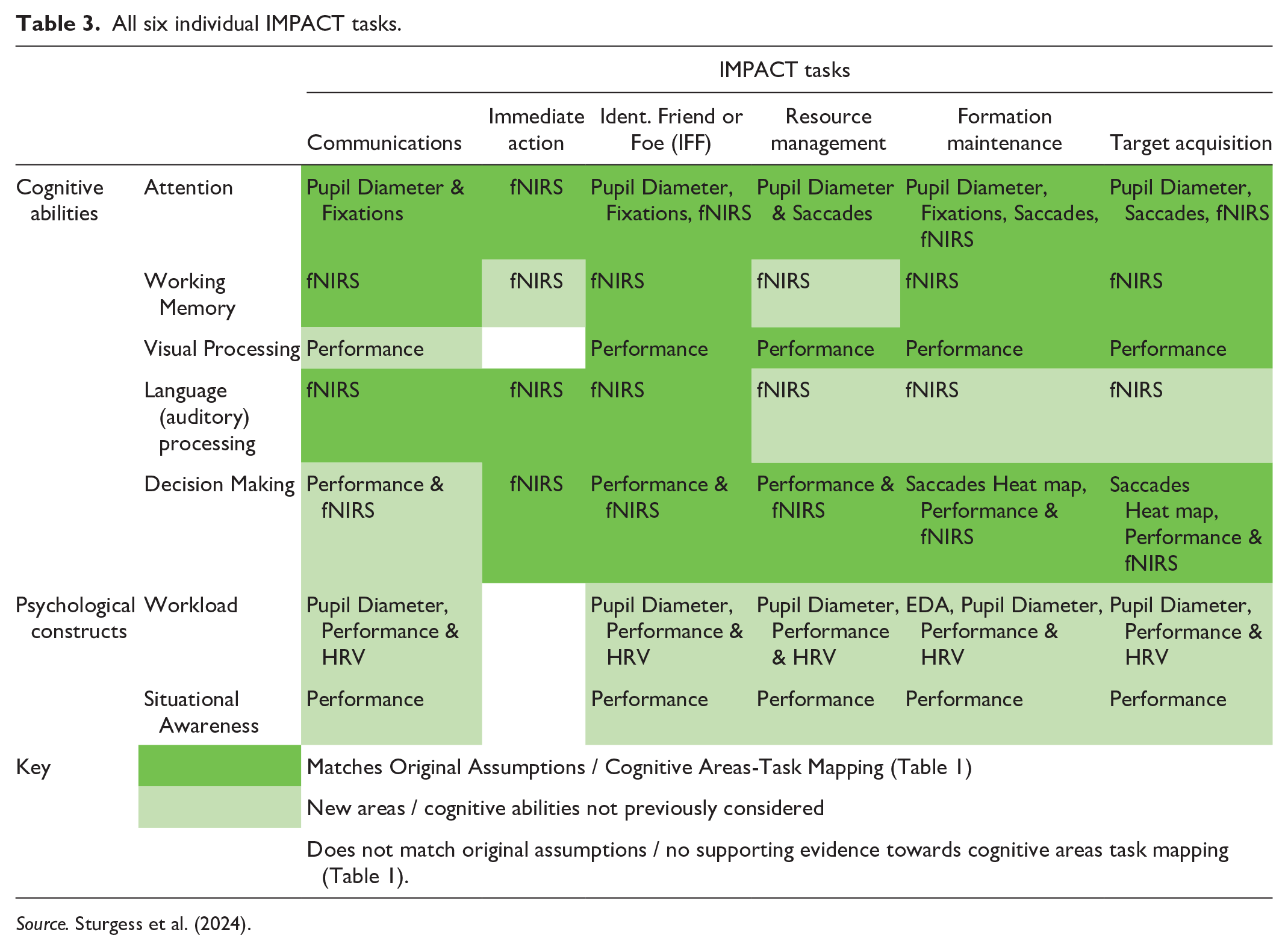

As part of the validation study, Sturgess et al. (2024) re-worked and consolidated the original “cognitive factor-task” mapping (as illustrated in Table 1). This consolidation was undertaken in order to achieve an effective measurable alignment between IMPACT tasks, the consolidated cognitive areas, and the techniques (Table 3) used to assess these elements. This re-working, presented in Table 3, splits the Cognitive Areas into underpinning “Cognitive Abilities” and “Psychological Constructs.” Table 3 also presents the key results of the study, indicating where supportive (or not) evidence was collected regarding links between IMPACT tasks and different areas of cognition, as measured by other techniques.

Table 3. All six individual IMPACT tasks.

Source. Sturgess et al. (2024).

The results of this study (Table 3) show that differences in task loading led to observable differences in subjective, performance and psychophysiological behaviour. In particular, the primary measure, cortical activity in different areas of the brain (as measured by fNIRS), changed as a consequence of manipulating task load. These brain regions are typically associated with working memory, decision-making, language, intelligence, perception and attention (Carlén, 2017).

These results (Table 3) indicate that IMPACT largely performs as designed, stimulating multiple aspects of cognition and driving cognitive demands, and that the IMPACT tasks broadly meet or exceed the anticipated cognitive attribute-task relationships. This provides construct validation evidence for the mapping first proposed in Table 1 (Sabine, 2022).

In addition to, and concurrent with, the validation work presented here, a number of projects have utilised IMPACT to support their research. This provided useful feedback on the usability of the tool in a research-based context. However, validation is an ongoing process and more research is needed to explore the tool’s ability to elicit different behavioural outcomes when used more widely by different participant samples and within different experimental designs. It is considered that this is best achieved through real-world application and exploitation of IMPACT by early adopters.

Conclusion

The combination of early experimenter feedback and validation findings indicates that the IMPACT tool has significant potential to support future defence trials and experimentation where cognitive load and/or cognitive task performance are of interest. However, it is important to recognise that further testing using IMPACT is required to (a) fully understand its capabilities and (b) build upon this evidence base to both build and maintain user confidence in the ability of the tool to accurately measure what it claims to measure (Sturgess et al., 2024).

There is ample scope for future development of the tool with many stakeholders already interested in adopting and developing IMPACT within Dstl. Dstl are currently exploring the release pathways for IMPACT into the wider defence and academic community.

Acknowledgements

Dstl would like to thank Frazer-Nash Consultancy, Thales UK and early adopters within Dstl and industry for their contributions to the design, development, and assessment of IMPACT to date. Particular thanks go to: Stuart Pullin, Samia Abdi, James Waller, Tom Dalby, Dr Heather Taylor, Leon Singleton, John Sweet, Dr Samson Palmer, Dr Vicky Steane, Erinn Sturgess, Dr Mark Chattington.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

© Crown copyright (2024), Dstl. This information is licensed under the Open Government Licence v3.0. Where we have identified any third party copyright information you will need to obtain permission from the copyright holders concerned. Any enquiries regarding this publication should be sent to: