Abstract

Considering human motivation, is it more effective to assign work tasks to shop floor workers or let them choose among a set of open tasks? This paper reports the results of a field experiment conducted with a manufacturer that uses wearable devices to distribute shop floor tasks. Drawing on theories of human motivation, we analyze 66,233 machine status reports and 31,429 work tasks completed in two manufacturing plants in Germany and Italy. We rely on a Difference-in-Differences approach in a 13-week-long field experiment. The results show that allowing workers to choose their next task yields no aggregate productivity difference but reveals heterogeneous behavioral effects. We find that workers respond more slowly to tasks (response time), but once accepted, tasks are completed faster (completion time). We discuss the potentially canceling effects and uncover evidence for behavioral mechanisms such as social loafing. This study has significant consequences for production managers in charge of implementing choice-based digital task assignment systems.

Introduction

Distributing tasks among workers is a central problem in production and operations management. Previous research has often examined task choice and assignment from an operations research perspective, utilizing models for task assignment (Ernst et al., 2006; Lewis et al., 1998), task reassignment and worker collaboration (Işik et al., 2021), flexible versus fixed assignment systems (Zavadlav et al., 1996), or dynamic task assignment protocols (Lim and Wu, 2014). While these contributions have made valuable progress in the field of task assignment research, they assume that workers do not interpret their work assignments or consider their own agency and proceed directly to task completion. However, it is important to acknowledge that humans are not machines (Donohue et al., 2020; Shunko et al., 2018; Tan and Staats, 2020). In this paper, we investigate the behavioral effects of granting workers the autonomy of

The manufacturing company we cooperate with has transformed its organization of shop floors from bounded areas operated by a small team of workers each, as is common in manufacturing, into a more free-roaming skill-based organization. The introduction of industrial smartwatches enabled this change. The manufacturer codified workers’ skills and communicated open work tasks via the smartwatches to idle and

We conduct a field experiment in two factories involving real operators working in real manufacturing operations. We investigate the effects of granting workers autonomy in selecting their tasks, rather than having tasks delegated to them. The system initially assigns production tasks to available and suitably skilled workers on the shop floor once sensors detect a task, such as a machine interruption, in real time. Our study focuses on unscheduled machine interruptions (breakdowns), which are among the most critical tasks in many automated manufacturing settings. To examine whether offering a choice improves performance, we analyze 66,233 machine status reports and 31,429 completed work tasks across two manufacturing plants of a Tier 2 supplier in the automotive industry in Germany and Italy. We compare productivity before and after the treatment in one plant and compare the results with a control group in the other plant using a Difference-in-Differences (DiD) approach. In addition to overall

Our results indicate that implementing autonomy through the provision of choice in digitalized task assignment systems, such as the one we study, has no significant effects on overall productivity. Two behavioral responses primarily drive this null effect: Workers take longer to respond to new tasks, but complete them more quickly once they are accepted. In our study, the increase in response time is larger than the decrease in completion time, which prolongs the total time to complete a task overall. Post hoc analyses further reveal that the treatment affects workers’ task choice behavior, increases the intervals between interruptions—a beneficial effect—and may lead to shirking and social loafing. These findings offer guidance for scholars and managers in designing human–machine interfaces amid the digital transformation of manufacturing. Additionally, we contribute to the broader discourse on autonomy—an essential construct in foundational theories of human motivation—by highlighting that it improves some but not all dimensions of worker performance in the context of manufacturing systems supported by wearable technology.

We extend prior research by strengthening the integration of worker behavior into the scholarly discussion of task assignment and delegation, particularly using new technology like wearables (Franke et al., 2024; Kwasnitschka et al., 2024). Previous studies have rarely documented effects on worker behavior and have also not explored shifts from one task assignment system to another in a field environment, particularly in the manufacturing sector. Therefore, the behavioral and socio-technical consequences of such changes in production systems—such as transitioning from relatively low autonomy task delegation to relatively high autonomy task choice—are underrepresented in the current literature. Simultaneously, studies have indicated that worker autonomy is one of the most significant variables in work organization affected by innovative technologies in manufacturing (Cagliano et al., 2019).

Literature Review and Hypothesis Development

Task choice is a form of autonomy, which refers to the capacity to self-organize one’s experience and behavior (Deci and Ryan, 2000). According to Self-Determination Theory, a fundamental theory of human motivation, autonomy is a basic requirement for an individual’s well-being and personal growth (Deci and Ryan, 2000). The theory also includes two other requirements, competence and relatedness, which represent independent and innate intrinsic factors of human motivation. Studies argue that individuals who experience high autonomy and feel in control of their outcomes, such as by making their own choices, feel higher intrinsic motivation. Conversely, when individuals perceive their environment as controlling, intrinsic motivation diminishes (Deci et al., 1989). In our manufacturing context, where shop floor workers have fixed salaries, the intrinsic perspective is important for performance. Additionally, Self-Determination Theory posits that supporting autonomy can yield other positive outcomes, such as improved well-being and psychological growth (Deci and Ryan, 2000; Patall et al., 2008) and performance (Moller et al., 2006).

Human motivation has been extensively studied in management and organizational research (Deci et al., 2017; Van den Broeck et al., 2016), although its application in production and operations management is relatively limited. However, the concepts of behavioral autonomy and self-determination are not entirely new to operations management. The literature has addressed various problems using the theory such as worker recruiting for inventory auditing (Ta et al., 2021), the performance of call center employees (Ashkanani et al., 2022), and effects of motivation on supplier selection (Franke et al., 2022; Roehrich et al., 2017) and project management (Chandrasekaran and Mishra, 2012; Haas, 2006; Sarafan et al., 2022).

Previous studies have differentiated various facets of autonomy, including choosing work methods, scheduling work, and establishing evaluation criteria (Breaugh, 1985). Our study primarily relates to autonomy in terms of choice over work scheduling. Workers can either choose what task to do next or a system delegates the next task to them (Breaugh, 1985). Task

A few studies have contributed insights that are closely relevant to our study. Raveendran et al. (2021) suggested that choice outperforms the traditional delegation of tasks to workers by managers. They found that choice has an advantage, particularly when workers have specialized skills but narrow responsibilities and tasks are modular and challenging to plan. Additionally, Baldwin and Clark (2006) demonstrated that when task division is transparent, self-selected work increases the likelihood that workers will choose to contribute rather than free-ride. Moreover, a laboratory experiment by Kamei and Markussen (2023) showed that democratic task assignments can enhance efficiency in work teams. Furthermore, the autonomy of choice also involves the evaluation of different options. In this regard, Basu and Savani (2017) contributed to the discussion around choice by showing that individuals make better decisions and engage in deeper cognitive processing when they view options simultaneously rather than sequentially. Despite these beneficial effects of autonomy, research has also shown that highly autonomous workers, such as gig workers, often struggle to identify with their employers and seek ways to increase identification (Ai et al., 2023).

Some valuable studies have been published on the effect of choice, but Baldwin and Clark (2006) and Basu and Savani (2017), for instance, contributed from software development and consumer choice settings, respectively. It remains unclear whether these findings directly apply to other contexts and types of decisions, such as decisions regarding open work tasks in a production setting, which occur at a much higher frequency. An emerging sub-stream in operations that matches the high frequency of production is order picking. In this stream, De Lombaert et al. (2025) provide a relevant and recent framed field example that stands in contrast to Raveendran et al. (2021) or Kamei and Markussen (2023), suggesting that the choice of tasks does not contribute to operational efficiency beyond improved worker perceptions. At the same time, a similar study in the same context has been shown to deliver positive performance effects (Chen et al., 2024). Interestingly, recent field research shows that previous informal agreements among pickers can be contradicted by introducing new choice regimes, which ultimately reduces workers’ satisfaction (Westphal et al., 2025).

Besides the growing research in order picking, the healthcare sector has also focused on autonomy, such as allowing staff to batch patients. Studies point out that this hurts performance in hospitals (Feizi et al., 2023; Hossain and List, 2012). Relatedly, it has been shown that performance may be reduced when doctors prefer simple over complex tasks (Ibanez et al., 2018; Kc et al., 2020). Both studies find that favoring easy tasks impedes learning, which can lead to adverse performance outcomes. The role of learning in task choice is complex. Unlike these studies in healthcare, our study is set in a more standardized and repetitive production context with less potential for learning.

Overall, the research on autonomy in task choice is growing but inconclusive. The field does not consistently weigh the benefits and possible risks of allowing workers to choose. Our field experiment adds to this discussion. In our setting, we propose that workers may alter their behavior when granted the autonomy to review and choose tasks. Specifically, our study posits that granting the freedom of choice should enhance several work outcomes, in line with prior research from other contexts. Interestingly, we were not able to universally support this optimistic prediction and discuss how the idiosyncratic manufacturing environment using wearables affects the results.

We contribute to the nascent behavioral operations literature on task choice (e.g., Chen et al., 2024; Westphal et al., 2025) with evidence on the role of worker autonomy in a unique, digitalized production environment. In our context, the concept of autonomous choice is particularly relevant. The ability of workers to choose tasks, facilitated by the smartwatch system, aligns with the notion of self-organization that characterizes autonomy (Deci and Ryan, 2000). However, in practice, digital systems often still exhibit controlling features in the workday of shop floor employees. Thus, our study examines the effects of increasing worker autonomy through task choice in manufacturing. We examine different regimes of choice: One system offers workers an overview of pending tasks to choose from, while another fully automates task allocation by assigning tasks directly to workers.

Hypotheses

Our study proposes differences between two distinct work task assignment systems that we refer to as delegation and choice. Delegation implies that a digital system automatically matches open tasks and workers by assigning each worker to

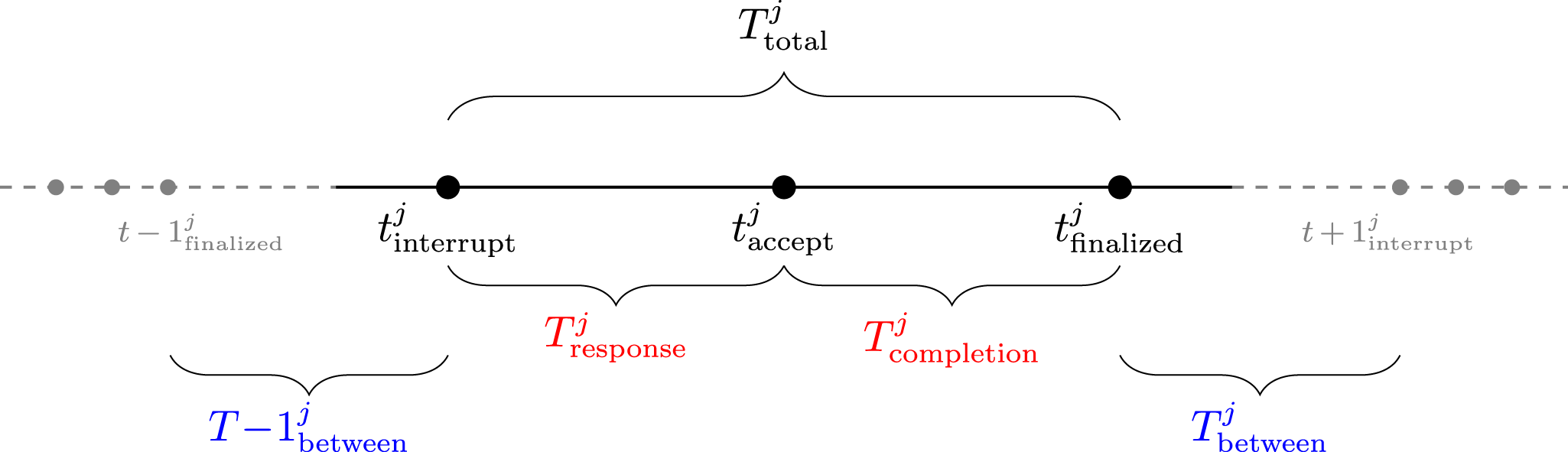

Self-Determination Theory has also been suggested to have implications for individuals’ task performance (Williams and Luthans, 1992). In our context, we conceptualize task performance in terms of response time and completion time. Response time refers to the interval from a task’s creation, at which point it is immediately posted in the system, until a worker accepts it. Completion time refers to the time required to complete a task once it has been accepted.
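The two time measures defined above can be illustrated with a minimal sketch. The field names (`created_at`, `accepted_at`, `completed_at`) and the example timestamps are assumptions made for illustration; the text does not describe the actual schema of the smartwatch system.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Task:
    # Hypothetical field names; the real task log schema is not given in the text.
    created_at: datetime    # task is created and immediately posted in the system
    accepted_at: datetime   # a worker accepts the task on the smartwatch
    completed_at: datetime  # the worker finishes the task


def response_time_s(task: Task) -> float:
    """Seconds from task creation until a worker accepts it."""
    return (task.accepted_at - task.created_at).total_seconds()


def completion_time_s(task: Task) -> float:
    """Seconds from acceptance until the task is completed."""
    return (task.completed_at - task.accepted_at).total_seconds()


def total_time_s(task: Task) -> float:
    """Total time to resolve a task: response time plus completion time."""
    return response_time_s(task) + completion_time_s(task)


# Made-up example: posted 15:46:00, accepted 15:47:30, completed 15:52:30.
example = Task(
    created_at=datetime(2024, 1, 8, 15, 46, 0),
    accepted_at=datetime(2024, 1, 8, 15, 47, 30),
    completed_at=datetime(2024, 1, 8, 15, 52, 30),
)
print(response_time_s(example), completion_time_s(example), total_time_s(example))
```

Because the total is the sum of both components, a slower response can offset a faster completion, which is why the two measures are analyzed separately.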

We hypothesize that transitioning from delegation to choice will impact response time by reducing the time a task remains waiting until acceptance by a worker. According to Self-Determination Theory, individuals who have the freedom to choose develop a higher level of intrinsic motivation for their actions (Patall et al., 2008). When individuals feel autonomous and are intrinsically motivated, they demonstrate proactive engagement with the tasks they encounter (Grant et al., 2011). Once workers have the autonomy to choose from a transparent set of options, the positive effects can manifest in terms of proactive behavior, including faster responses to pending work tasks. This argument aligns with studies that have demonstrated how autonomy increases the likelihood of proactive behavior contributing to task performance outcomes (Kim et al., 2009).

In contrast to the choice scenario, proactivity is not feasible in the delegation scenario, where the task assignment system prescribes specific tasks to workers and expects compliance. This system, in fact, actively suppresses proactivity and self-guided initiative on the factory floor. Consistently, a lack of autonomy can also cause secondary adverse effects on proactivity, as employees tend to focus on the negative aspects of a controlling situation, which impedes them from taking quick action (Bledow et al., 2022). By contrast, the choice scenario can potentially reduce the response time by dismantling controlling aspects of work that consume workers’ cognitive resources (Bledow et al., 2022). Consequently, it seems more likely that workers would promptly begin working on pending tasks in the choice scenario compared to the delegation scenario. We hypothesize:

H1: Enabling workers to choose tasks more autonomously (choice) will result in shorter response time compared to delegating single tasks to them (delegation).

Existing research indicates that workers with the freedom to choose tasks aligned with their personal preferences tend to exert greater effort overall (Iyengar and Lepper, 2000; Patall et al., 2008). Moreover, employees tend to demonstrate more prosocial behaviors when granted autonomy (Donald et al., 2021), and autonomy enhances the impact of beneficial personality traits on job performance (Barrick and Mount, 1993). In our study, we contribute to this perspective by incorporating the aspect of skill into our arguments.

In our context, workers only have visibility of the tasks on the smartwatch that are aligned with their assigned codified skill. Codified skills reduce each worker’s competencies to binary indicators, allowing integration into digital systems. However, beyond these codified representations, workers also possess tacit knowledge about their level of task mastery on the factory floor. Workers choose tasks based on personal preferences among those for which they are deemed qualified according to the codified skill set. These preferred tasks may be seen as more enjoyable or interesting to workers, leading them to apply themselves with greater intensity, resulting in reduced processing time. Consistently, research suggests that workers derive satisfaction from utilizing their most developed skills, which can trigger a sense of flow, prompting them to prioritize these tasks first (Kamei and Markussen, 2023; Spreitzer et al., 2005). This alignment between skills and tasks should reduce the completion time. As a result, we anticipate that the mechanisms described above will decrease the time required to resolve machine interruptions on the shop floor. Based on this rationale, we hypothesize:

H2: Enabling workers to choose tasks more autonomously (choice) will result in shorter completion times compared to delegating single tasks to them (delegation).

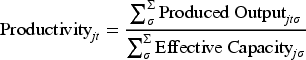

In addition to probable effects on the response time and completion time, we argue that the choice scenario will enhance productivity. In our research context, productivity is determined by the ratio between the actual number of units produced and the expected production volume. The expected volume represents the effective capacity, taking into account planned downtime, such as machine setup times. We contend that increased autonomy will yield benefits for workers’ intrinsic motivation, as they feel more in control and self-efficacious when they receive information about all pending tasks. This is expected to improve productivity.
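The productivity measure described above can be written compactly. The notation below is an assumption introduced for illustration, not the authors' own symbols.

```latex
\[
\text{Productivity} \;=\; \frac{\text{actual number of units produced}}{\text{expected production volume}},
\]
% Expected production volume is the effective capacity, i.e. net of planned
% downtime such as machine setup times.
% Illustrative numbers only: 450 units produced against an expected volume
% of 500 units gives a productivity of 450 / 500 = 0.9.
```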

As in other highly automated field settings, productivity can be difficult to attribute to individuals, as it can only be accurately measured at the final stage of production (Senoner et al., 2022). The underlying assumption in our theorizing is, therefore, that operator behavior has a meaningful influence on machine output. Several empirical studies have shown that operator behavior can directly affect productivity (Blanes i Vidal and Nossol, 2011; De Leeuw and Van Den Berg, 2011; Hossain and List, 2012). Especially in settings where workers are consistently working in one factory hall, as in our case, individual effort, attention, and motivation are likely to manifest in machine-level outcomes. Thus, improvements in machine performance can serve as a reasonable proxy for the behavioral impact of our intervention, allowing us to theorize at the individual level while testing at the operational machine level.

We expect that offering choice will have favorable effects in manufacturing, particularly through increased motivation. Along the lines of earlier theoretical work (e.g., Langfred and Moye, 2004), we expect that providing autonomy via choice will lead workers to experience improved psychological states of responsibility and flow. Related empirical studies have indicated that higher autonomy also leads to an expansion in the breadth of activities completed by employees (Morgeson et al., 2005). This implies that in the choice scenario, workers will not only respond with increased effort but also realize additional pooling benefits on the factory floor as interruptions can then be addressed by a more flexible workforce with overlapping roles and skills. In general, workers are also likely to exert greater effort as a form of reciprocation for the provision of choice (Eisenberger et al., 2001). In summary, we expect that autonomy contributes to an overall increase in productivity on the shop floor based on beneficial psychological states, role breadth, and worker reciprocation. Thus, we hypothesize:

H3: Enabling workers to choose tasks more autonomously (choice) will result in higher productivity compared to delegating single tasks to them (delegation).

Study Design

Our study utilizes a pre-post assessment of human behavior and system performance in a controlled field environment. We conducted the study in two comparable production facilities in Italy and Germany. The geographical distance helped us avoid contagion effects during the treatment (Ibanez and Staats, 2019). First, we established their comparability based on field knowledge to ensure that both groups share similar processes (i.e., molding) and the high automation level typical in the hosting company. Second, we analytically verified that both groups are comparable (see “Parallel Trends Assumption” in Section 6). As a result, we identified and verified two similar groups and subsequently allocated the treatment between them randomly, as in a coin toss. We randomize at the plant level to reduce spillovers that can threaten internal validity (Gao et al., 2023). Combining randomness with in-depth field knowledge, this stratified procedure prevents possible randomization biases that can occur when allocating the experimental conditions randomly across groups that are not comparable or not representative of the target population (Ibanez and Staats, 2019). The randomization assigned the better-performing group to the treatment. This makes it more difficult to detect performance improvements and renders our estimates more conservative overall.

Beyond reducing randomization biases, our quasi-experimental approach using stratification has various additional strengths, such as increasing the relevance of research and strengthening collaboration between scholars and practitioners (Grant and Wall, 2009). Moreover, conducting a field experiment enables us to test our hypotheses within an authentic production environment, enhancing validity and realism (Morales et al., 2017). Furthermore, our participants consist of actual production workers (i.e., “targeted sample”), as opposed to alternative participant sources such as student pools, which can introduce limitations in research designs (Eckerd et al., 2021). In addition, all actions and decisions made by participants in our study have real consequences rather than being hypothetical, and we observe objective operational outcome variables, both of which are recommended features for rigorous experiments (Bachrach and Bendoly, 2011).

We conducted our study in collaboration with a global manufacturing firm that serves as a supplier for the automotive industry, specializing in producing complex metal parts. Our host company is a large supplier with an annual turnover of approximately USD 9 billion. The main production process involves three steps from press molding via thermal treatment to further mechanical treatments. Automation plays a significant role in the molding and mechanical treatment steps. Thermal treatment is a more straightforward and comparably less technology-driven process, in which parts are treated with heat in large furnaces. For our study, we focus on the molding process. The molding production area in each plant comprises approximately 50 machines, operating in three main states: Producing parts, planned downtime for setup or idling, and unplanned downtime, primarily due to interruptions. Interruptions may arise due to a shortage of raw materials, a full finished goods buffer, or technical malfunctions like robotics collisions or damaged tools. Our study centers explicitly on these machine interruptions, as they represent a significant source of machine downtime within the factory.

Workers in our digitalized production environment do not roam the production area to spontaneously identify and resolve problems, as would be the case in non-digitalized production settings. Instead, machines and sensors communicate with a central system, which matches tasks to available and suitably skilled workers in real-time. The initial delegation system simultaneously notifies all available and adequately skilled workers by directly prompting them on their wearable smartwatches. The tasks are pushed to the smartwatch following the first-in-first-out principle. Once a worker accepts a task, it disappears from the smartwatches of other workers, and the tasked worker does not receive further prompts until the task is completed. Online Supplement A provides impressions from the digitized shop floor. In our field experiment, all system features remain constant except for the presentation of tasks to workers. The following section describes the treatment in detail.

Treatment

The treatment involves modifying the work allocation for the treatment group, transitioning from unsolicited prompts displayed on workers’ smartwatches (delegation) to presenting a list of available tasks for active selection (choice). Like the previous system, each worker’s list only includes tasks corresponding to their codified skills, and a task can appear on lists of multiple workers simultaneously. When one worker picks a task, it disappears from others’ watches, as in the prior system setup. Therefore, other features of the assignment process remained unchanged during the experiment, with the treatment depicted in Figure 1 as the sole variation.

Overview of

The left-hand picture illustrates the delegation scenario. The smartwatch displays a direct prompt about an interruption at Palletizer 3175 at 15:46—a task that aligns with the worker’s skill profile. The interruption describes a blockage of the chain conveyor. In this scenario, workers view one task at a time and face significant implicit pressure to accept. While rejection is technically possible, acceptance is generally expected. This reflects limited autonomy in the sense of Self-Determination Theory. The right-hand picture shows the choice scenario where the smartwatch lists all pending production tasks that match the worker’s skill profile. Other pending tasks can be viewed by scrolling up and down. This scenario reflects high autonomy.

Our experimental procedure included two main phases. In the first phase, production was carried out using the initial delegation system. This system had been initially implemented 17 months earlier. To mitigate the impact of exogenous influences, we limited the pretreatment phase to the 10 weeks preceding the delegation-choice system change (see Online Supplement B for sensitivity analyses concerning the pretreatment period length). The post-treatment phase encompassed the three weeks following the system change, allowing for a comparison of the two periods and further minimizing any exogenous effects. We cannot cover a longer post-treatment period since the company decided to implement the new system across all its locations. Thus, the field experiment in our main analysis spans a total of 13 weeks. We note that our relatively short post-treatment period is comparable in length to other field experiments in production facilities (Hossain and List, 2012), and we also conducted an interrupted time series analysis over 20 weeks as additional support (see Online Supplement C).

The system change was communicated from management to the shop floor through team leaders, shift meetings, and printed one-page summaries, following the standard practice in the factory. The information was disseminated one week prior to the actual change to ensure sufficient advance notice and to comply with the company’s standard practices. On the day of the system change, two researchers attended all shift handover meetings to address any questions or concerns related to the new system. Therefore, our study does not involve deception but also does not explicitly disclose the hypotheses of our research (Eckerd et al., 2021). We took care to avoid providing instructions on whether or how the workforce should modify their work routines or behaviors in response to the change. We emphasized that the research team had no financial interests in the outcomes, minimizing demand effects through desirability (Eckerd et al., 2021). Previous studies have demonstrated that only strong and deliberate signals can trigger significant demand effects (De Quidt et al., 2018), which we carefully avoided in our procedures.

We mitigated possible Hawthorne effects by regularly conducting observational visits before the manipulation, which helped establish a low power distance between the external researchers and the workers. Consequently, the presence of researchers was not a novel or surprising situation for the workers, minimizing the potential triggering effect. We analytically examine this aspect in Section 6. No incentives were provided during the experiment to avoid altering the existing reward structure. As our study involved administering the treatment as an objective variation of parameters, we did not perform a traditional manipulation check, as recommended by Lonati et al. (2018).

Empirical Strategy

Evaluation Strategy

We distinguish between three variables that the treatment is expected to affect. First, we consider

To emphasize the sequential nature of interruptions—where new ones may occur following the resolution of a previous task—we define the interval between two consecutive interruptions as

All the measures described above are directly observed, following recommended practices for operations management experiments (Bachrach and Bendoly, 2011). Finally, we note that the three dependent variables belong to two data sets. The task-related variables (i.e., response time and completion time) were obtained from a data set that tracks all tasks using the smartwatch technology. The productivity variable was obtained by extracting hourly objective output data recorded by the machines (i.e., machine status report data).

Data Strategy

For our main analysis, we collected task-related data over a 13-week period, comprising 31,429 tasks, along with unit output data at the machine level based on 66,233 machine status reports, covering both the treatment and control groups. We show more detailed descriptive statistics in Section 5. To fully leverage the richness of our datasets, we conduct our analysis at the most granular level available for both datasets—namely, the machine, task, and hourly level.

First, we incorporated dummy variables to distinguish between the three shifts and their respective workforce in the regression equation. Second, we included the current workload as a variable to capture the effects of varying demand. The workload was calculated as the ratio between the net total number of active machines (excluding machines undergoing planned downtime) and the clocked hours of workers. Third, we account for the daily interruptions each machine experiences, as recorded exclusively in our machine status report dataset, as well as the number of users notified per task, which is available only in the task data.
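As a minimal sketch of the workload control described above, the ratio can be computed as follows. The function and parameter names are hypothetical, and the time window over which worker hours are clocked is an assumption, since the text does not specify it.

```python
def workload(total_machines: int,
             planned_downtime_machines: int,
             clocked_worker_hours: float) -> float:
    """Ratio of net active machines to clocked worker hours.

    Net active machines exclude those in planned downtime, as in the text.
    All names here are illustrative, not taken from the original system.
    """
    if clocked_worker_hours <= 0:
        raise ValueError("clocked_worker_hours must be positive")
    net_active = total_machines - planned_downtime_machines
    return net_active / clocked_worker_hours


# Illustrative numbers only: 50 machines, 5 in planned downtime, 90 clocked hours.
print(workload(50, 5, 90.0))
```

A higher value indicates more running machines per hour of available labor, and therefore higher demand on each worker.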

We processed the task data captured by the smartwatch system and the machine data collected from the manufacturing execution system before the analysis. In the machine data, which served as the basis for testing H3, we excluded all records related to planned or unplanned maintenance. This exclusion was crucial as our aim was to measure the system performance of different task assignment systems in handling interruptions, rather than assessing the performance of maintenance activities. Additionally, we excluded weekends as they do not reflect normal operational conditions. For instance, workers take on a range of tasks that are not recorded by the system, like cleaning the machines or preparing setups. The task dataset, which formed the basis for testing H1 and H2, contained information on machine interruptions that were resolved by workers. Similarly, we excluded production days with reduced working hours and weekends from this dataset.
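The day-level exclusions described above (weekends and production days with reduced working hours) could be applied along the following lines. This is a sketch under assumptions: the record layout with a `day` key and the set of reduced-hours days are hypothetical, and the maintenance exclusion applied to the machine data is not shown.

```python
from datetime import date


def is_weekend(d: date) -> bool:
    """True for Saturday (5) and Sunday (6) under date.weekday() numbering."""
    return d.weekday() >= 5


def drop_excluded_days(records, reduced_hours_days=frozenset()):
    """Keep only records from regular working days.

    `records` is a list of dicts with a 'day' key (hypothetical layout).
    `reduced_hours_days` is a set of dates with reduced working hours.
    """
    return [
        r for r in records
        if not is_weekend(r["day"]) and r["day"] not in reduced_hours_days
    ]


# Made-up records: Friday, Saturday, Sunday, and a Monday with reduced hours.
records = [
    {"day": date(2024, 1, 5)},
    {"day": date(2024, 1, 6)},
    {"day": date(2024, 1, 7)},
    {"day": date(2024, 1, 8)},
]
kept = drop_excluded_days(records, reduced_hours_days={date(2024, 1, 8)})
print([r["day"].isoformat() for r in kept])
```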

We replaced the upper and lower

Identification Strategy

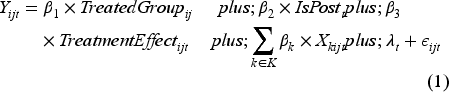

To examine the impact of the choice system on our dependent variables, we employ a DiD estimation strategy. The treatment group received the new choice system, while the control group continued to operate as before, such that we set an index for the group as

DiD is a well-established econometric approach, for instance, used to assess the effect of policies (Card and Krueger, 2000). It has also been successfully applied to issues related to operations management (Lu and Lu, 2017; Pierce et al., 2015; Staats et al., 2017; Tan and Netessine, 2020). In our study, we leverage machine IDs to distinguish between machines in the treatment and control groups in order to assess the effect of the choice system on productivity. To analyze completion time and response time, we use task IDs to determine whether a task was completed under treatment or control conditions. Specifically, we estimate the following baseline regression at the machine (or task) level to evaluate the impact of the choice system on productivity, completion time, and response time:

We estimate this regression using both the task-level data—where we assess the treatment effect on response and completion time—and the machine status report data, where we examine the treatment effect on productivity. All dependent variables are measured on a continuous scale. In the following section, we describe additional components incorporated to tailor our parsimonious regression model to the challenges posed by our panel dataset.
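To make the logic of the DiD comparison concrete, the following minimal sketch computes the textbook two-group, two-period estimate from cell means. It is not the regression used in this study, which additionally includes fixed effects and control variables; the function name, the record layout, and the toy numbers are illustrative assumptions.

```python
from statistics import mean


def did_estimate(records):
    """Two-by-two DiD from (treated, post, outcome) records.

    Returns (treated post - treated pre) - (control post - control pre).
    """
    cells = {(t, p): [] for t in (False, True) for p in (False, True)}
    for treated, post, outcome in records:
        cells[(treated, post)].append(outcome)
    m = {key: mean(values) for key, values in cells.items()}
    return (m[(True, True)] - m[(True, False)]) - (m[(False, True)] - m[(False, False)])


# Toy response times in seconds (invented for illustration, not study data).
toy = [
    (True, False, 100.0), (True, False, 110.0),    # treatment group, pre
    (True, True, 180.0), (True, True, 190.0),      # treatment group, post
    (False, False, 100.0), (False, False, 120.0),  # control group, pre
    (False, True, 110.0), (False, True, 130.0),    # control group, post
]
effect = did_estimate(toy)  # (185 - 105) - (120 - 110) = 70.0
```

In a regression framing, the same quantity corresponds to the coefficient on the interaction between the group indicator and the post-treatment indicator.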

Descriptive Findings

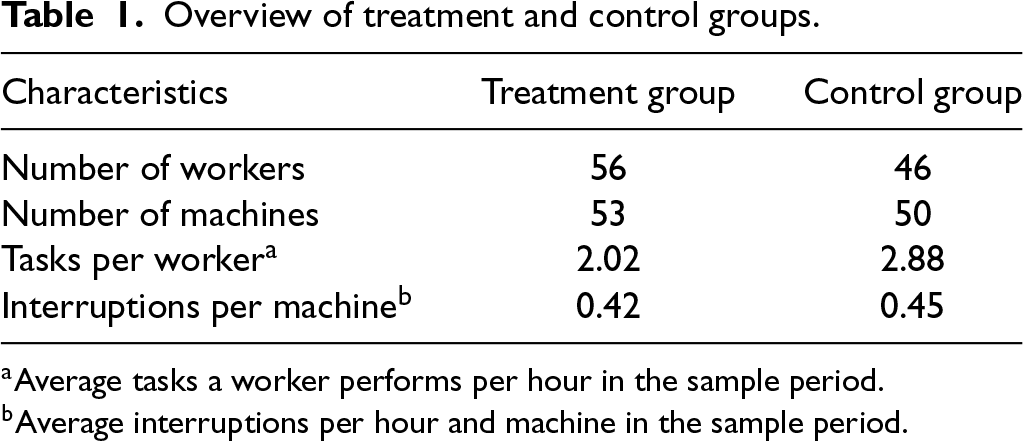

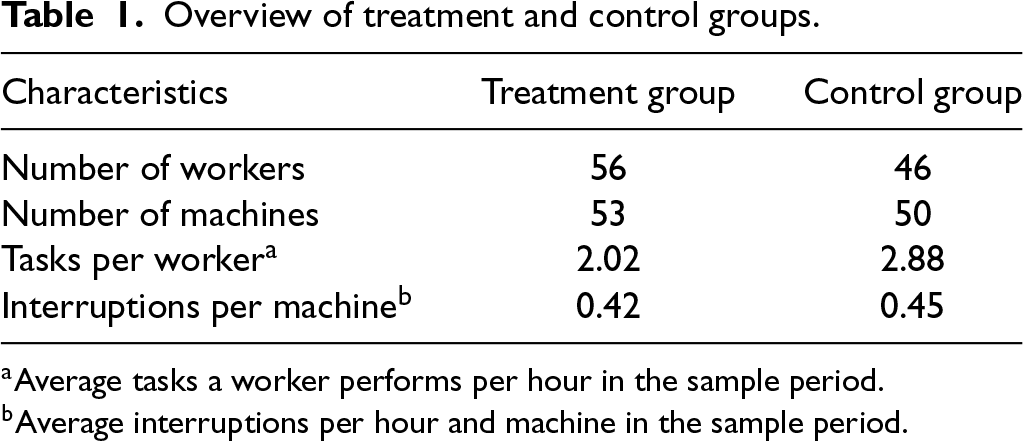

Before presenting our DiD results, we report descriptive statistics as shown in Table 1. The treatment and control groups have a similar number of workers and machines, as well as comparable averages in interruptions per machine and tasks per worker. Structural similarity is a key factor supporting the validity of the parallel trends assumption underlying the DiD approach. We further assess this assumption in Section 6.1 and Online Supplement E. Additional descriptive insights on our key variables are provided in Online Supplement F.

Overview of treatment and control groups.

Average tasks a worker performs per hour in the sample period.

Average interruptions per hour and machine in the sample period.

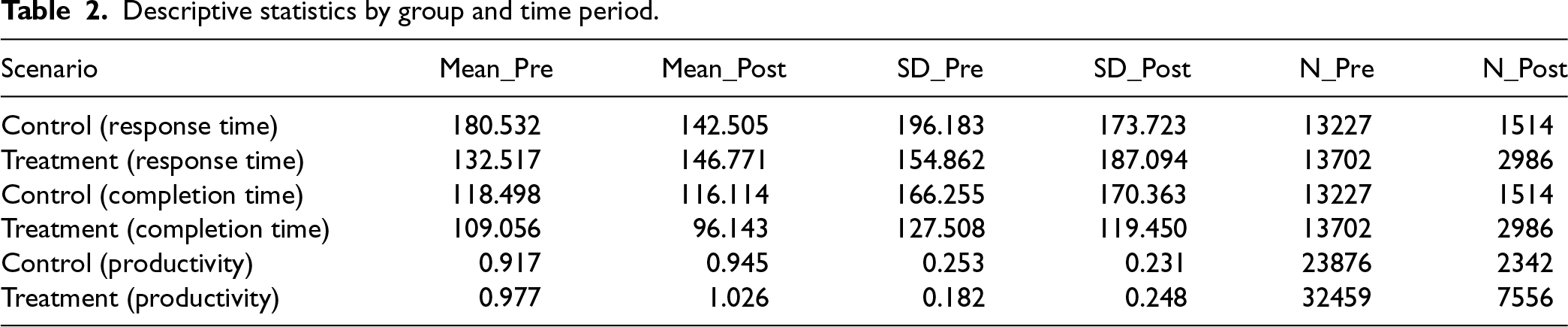

Moreover, to provide a more granular comparison between the treatment and control groups over the entire treatment period, we present summary statistics for the dependent variables—response time, completion time, and productivity—in Table 2. We observe that the mean response and completion times are slightly higher in the control group than in the treatment group. Regarding machine productivity, we note that the average value is higher in the treatment group compared to the control group. Overall, the treatment group performs better, which makes further improvement more difficult and our results more conservative.

Descriptive statistics by group and time period.

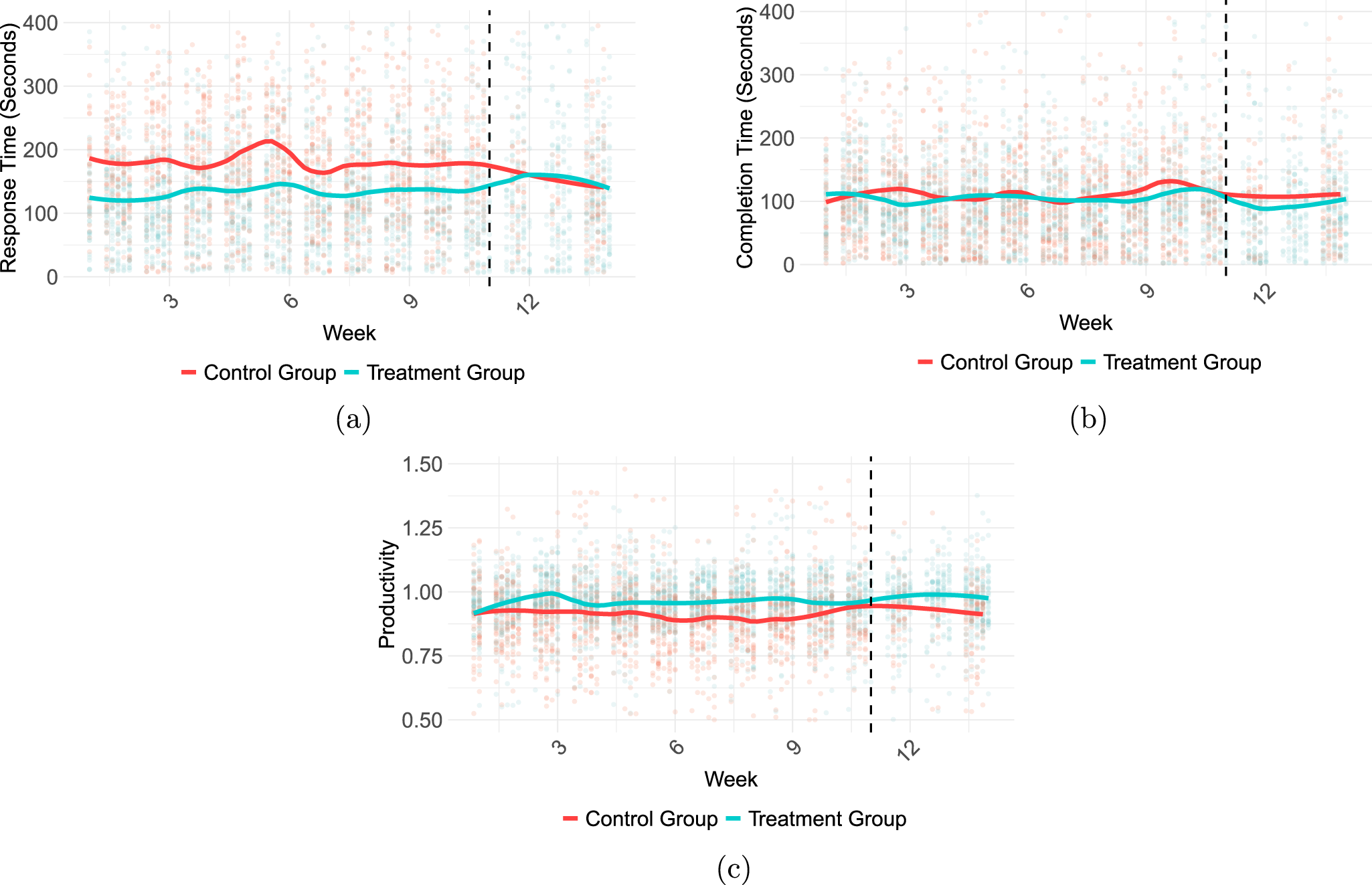

We illustrate the development of our dependent variables in Figure 2. These charts display daily and machine-specific response times (a), completion times (b), and productivity (c), along with a locally weighted and smoothed trend line for each group across the study period. Visual inspection of the moving averages suggests that both groups exhibit similar patterns prior to the treatment. As our assessment is executed at the machine level rather than the group level, assessing stationarity solely through trends on the group level is not the ideal strategy. In other words, Figure 2 cannot support robust conclusions on stationarity or the comparability of the groups. Instead, we address stationarity using statistical tests on the machine level in Online Supplement G to preclude random walk time series. Other robustness checks underlining the validity of our DiD setup are later discussed in detail.

Dependent variables time series. (a) Response time (sec); (b) Completion time (sec); (c) Productivity.

Effect on Response Time

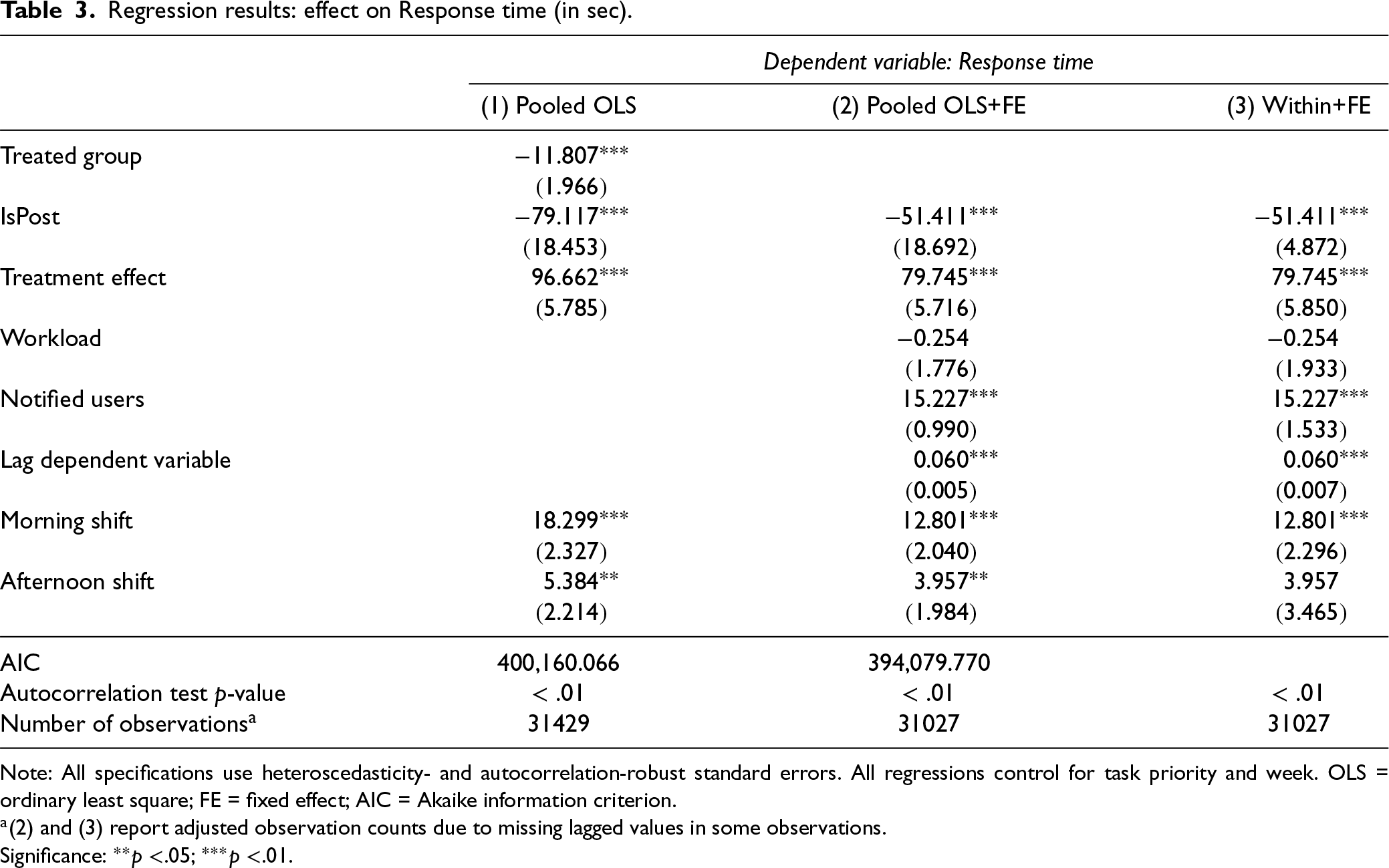

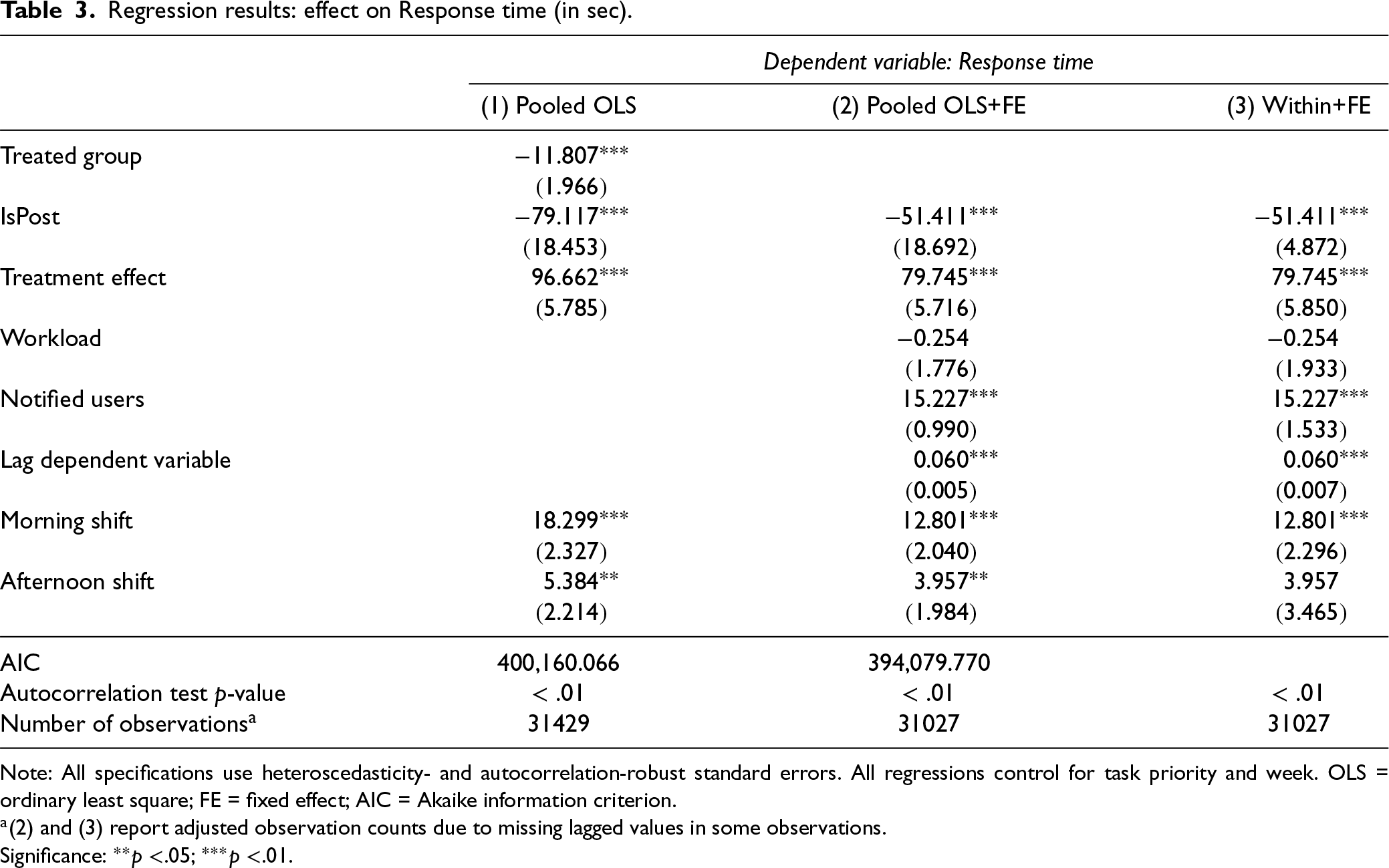

Table 3 presents the treatment effects of the choice system on response time in the treatment group relative to the control group (DiD). All reported specifications include time fixed effects (FEs) at the week level and at the shift level to account for general trends affecting both groups over time and within each day.

Regression results: effect on Response time (in sec).

Note: All specifications use heteroscedasticity- and autocorrelation-robust standard errors. All regressions control for task priority and week. OLS = ordinary least square; FE = fixed effect; AIC = Akaike information criterion.

(2) and (3) report adjusted observation counts due to missing lagged values in some observations.

Significance levels: .05; .01.

In specification (1), we employ a parsimonious model without control variables, using pooled OLS estimation that ignores the panel structure (treating all observations as independent) and includes only a group dummy. In (2), we estimate a pooled OLS model with machine-specific FEs, implemented via dummy variables for each machine, and additional control variables. In (3), we estimate an FE panel model at the machine level using the within estimator, again with all control variables. This controls for time-invariant unobserved heterogeneity at the machine level. Across all specifications, we observe a positive treatment effect, indicating that workers take longer to respond to a new task. In other words, allowing workers to choose their next task increases the time tasks remain unaccepted. Additionally, we find that the number of notified users affects response time: informing more users leads to longer response times. This indicates that social loafing may play a role in the factory.
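As an illustration of the within estimator used in specification (3), the sketch below demeans a single regressor and the outcome by machine before computing the slope, so that any time-invariant machine-level intercept drops out. It is a simplified, single-regressor sketch under our own assumptions: the actual specification also includes control variables, time FEs, and robust standard errors, and the function name and toy data are hypothetical.

```python
from collections import defaultdict


def within_slope(rows):
    """Slope of y on x after subtracting machine-specific means.

    rows: iterable of (machine_id, x, y).
    """
    groups = defaultdict(list)
    for machine_id, x, y in rows:
        groups[machine_id].append((x, y))

    sxy = 0.0
    sxx = 0.0
    for observations in groups.values():
        x_bar = sum(x for x, _ in observations) / len(observations)
        y_bar = sum(y for _, y in observations) / len(observations)
        for x, y in observations:
            sxy += (x - x_bar) * (y - y_bar)
            sxx += (x - x_bar) ** 2
    if sxx == 0.0:
        raise ValueError("x does not vary within any machine")
    return sxy / sxx


# Toy panel: y = machine intercept + 2 * x, with intercepts 10 (A) and 50 (B).
toy_panel = [
    ("A", 0.0, 10.0), ("A", 1.0, 12.0), ("A", 2.0, 14.0),
    ("B", 3.0, 56.0), ("B", 4.0, 58.0), ("B", 5.0, 60.0),
]
slope = within_slope(toy_panel)  # recovers the common slope of 2.0
```

Because the two machines differ both in their intercepts and in their typical values of x, a pooled regression on the raw data would mix the machine-level difference into the slope; the demeaning step removes it.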

To address autocorrelation, we conduct Breusch-Godfrey– tests for models (1) and (2), and a panel-specific Breusch-Godfrey– version for model (3). The

Effect on Completion Time

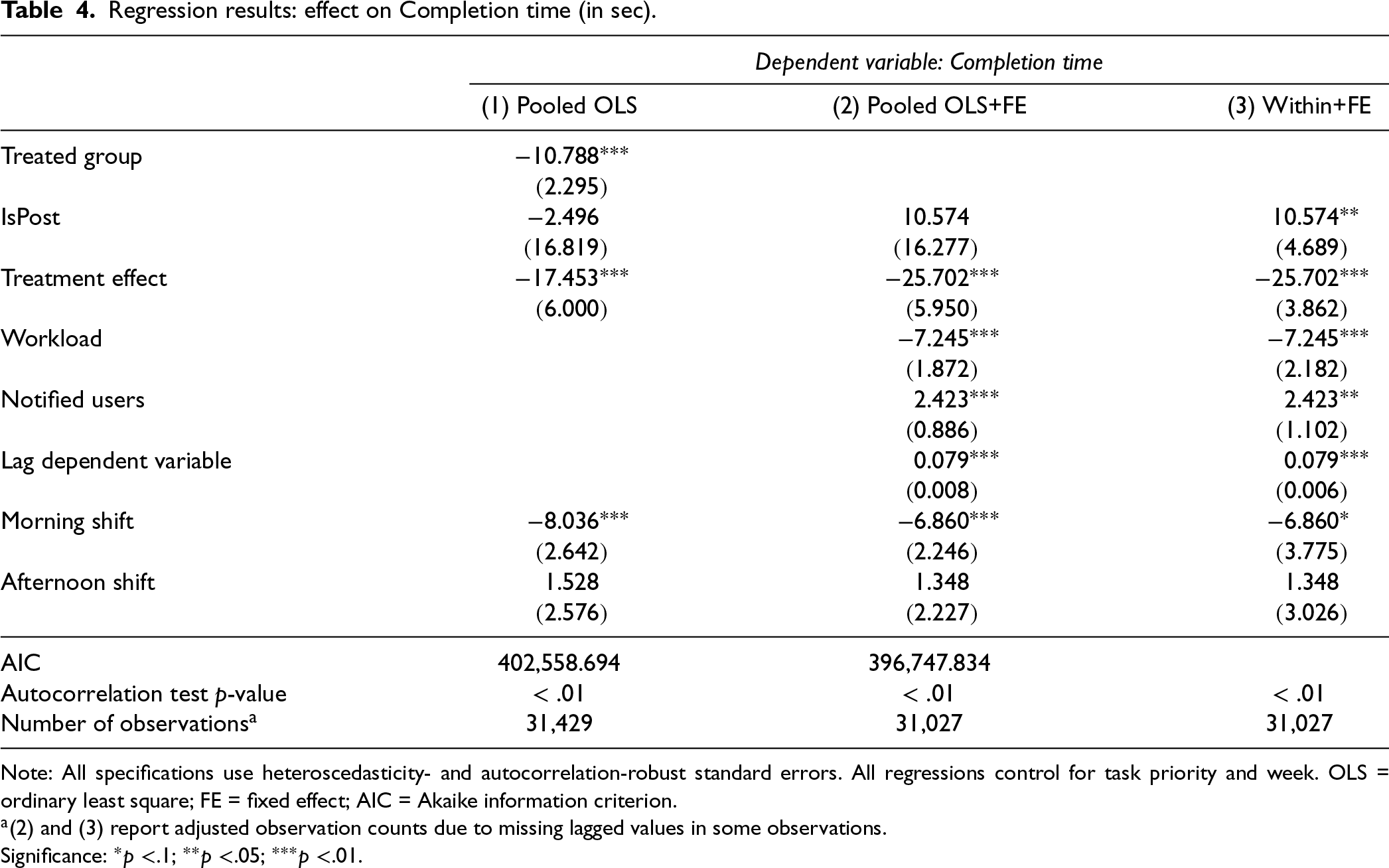

In Table 4, we present the regression results for our second dependent variable, completion time, following the same structure as before: (1) a simple pooled OLS model, (2) a pooled OLS model with FE and controls, and (3) a within-panel model with FE and controls.

We find that the treatment is associated with a decrease in completion time. This suggests that giving workers the ability to choose tasks autonomously leads to faster completion of accepted tasks. In our post hoc analyses, we further explore the behavioral mechanisms behind these results.

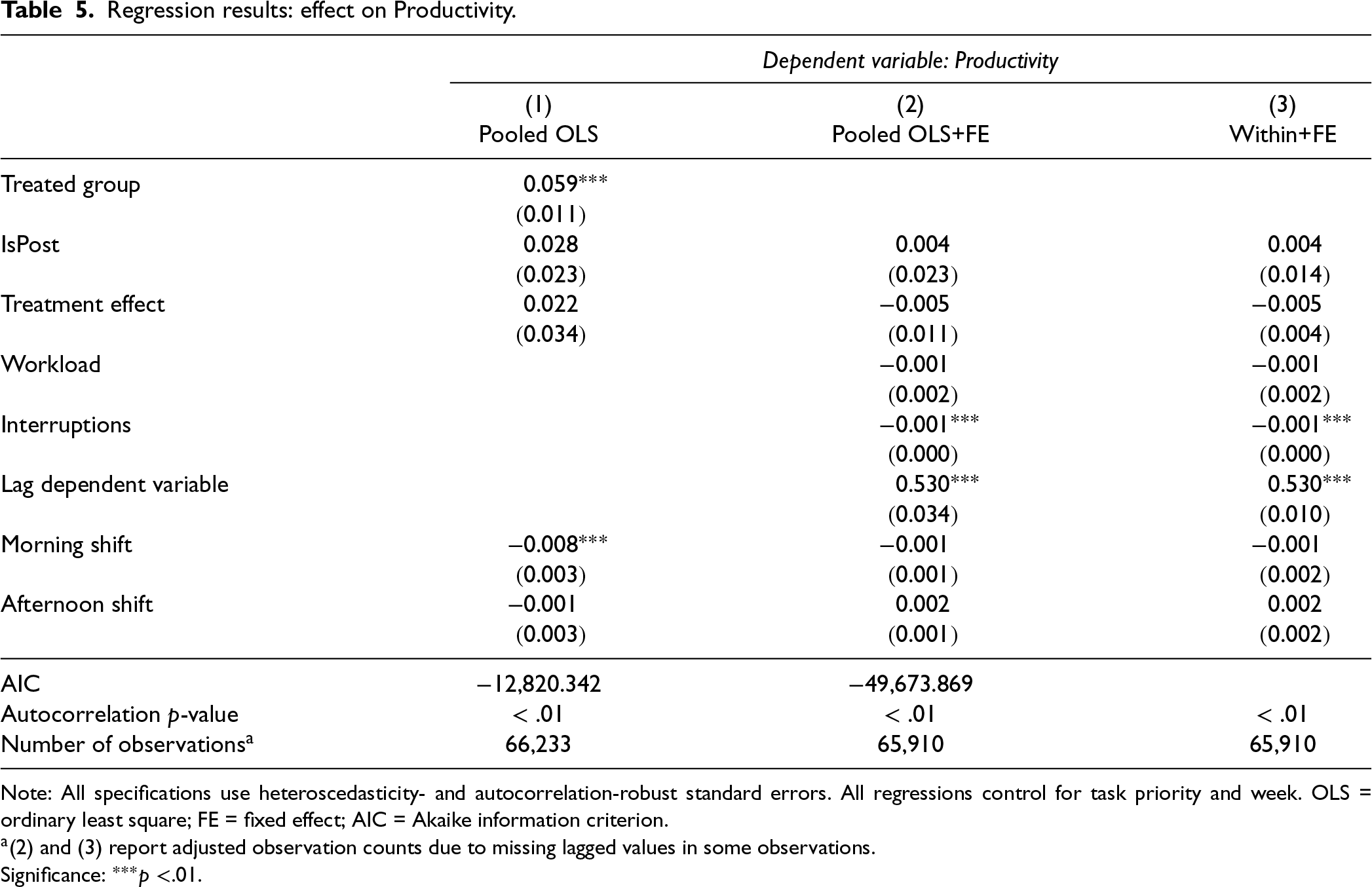

Effect on Productivity

Instead of analyzing task-level data, which we used in the two previous regressions, we now focus on our second dataset, that is, machine status report data at the machine level. As in other studies (Senoner et al., 2022), the productivity of single tasks is not measurable in our highly automated context. We employ the same specification design as in the previous regressions using (1) a pooled OLS without controls, (2) a pooled OLS with FE and controls, and (3) a within-panel model with FE and controls (see Table 5). We do not find a significant aggregate effect of the treatment on overall machine productivity. This suggests that competing behavioral effects may have canceled each other out, resulting in the overall null effect. We explore this further in several post hoc analyses.

In summary, introducing the choice system increases workers’ response time when selecting tasks—a potentially undesirable outcome from a management perspective. However, task completion time decreases after implementing the new system—an advantageous effect. Ultimately, the total time from the occurrence of the interruption to its final completion increases, as the increase in response time exceeds the decrease in completion time. In total, we find no significant impact on machine productivity. We next examine the robustness of these results before exploring underlying mechanisms through post hoc tests.
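The net effect on total task duration can be illustrated with a back-of-the-envelope calculation. The sketch below uses the point estimates stated in the Conclusion (response time increases by 80 s, completion time decreases by 26 s) and assumes, as a simplification of ours, that total duration is the sum of response time and completion time. The function name is hypothetical, and the result is only an illustration, not a separately estimated coefficient with its own standard error.

```python
def net_duration_change(response_delta_s: float, completion_delta_s: float) -> float:
    """Change in total task duration, assuming duration = response + completion."""
    return response_delta_s + completion_delta_s


# Point estimates as stated in the Conclusion: +80 s response, -26 s completion.
net_change = net_duration_change(80.0, -26.0)  # 54.0 seconds longer in total
```

Under these assumptions, the gain in completion time offsets roughly one third of the additional response time.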

Robustness Checks

Below, we report the basic robustness checks for a field experiment. These include detailed testing of the parallel trends assumption, examining a potential Hawthorne effect, and performing a placebo test. In the Online Supplement, we additionally provide an extensive set of advanced robustness checks, which further increase the confidence in our findings. Specifically, we test the sensitivity of our findings regarding different pretreatment periods (Online Supplement B), run an interrupted time series analysis to exclude any influence of the control group (Online Supplement C), run alternative parallel trends tests (Online Supplement E), examine the stationarity of our data (Online Supplement G), run regressions on data without removing outliers (Online Supplement H), assess endogeneity using a Generalized Method of Moments approach (Online Supplement I), use an artificial control group based on different pretreatment data (Online Supplement J), and conduct a Bayesian analysis for better uncertainty quantification (Online Supplement K).

Parallel Trends Assumption

The credibility of the DiD approach hinges on the assumption of parallel trends, positing that treated and control groups would have followed similar performance trajectories absent the intervention. We support this assumption by drawing on detailed knowledge of both groups’ production processes (see Section 4.3) and the pretreatment trends of the dependent variables in Figure 2. The figures show that the treated and control groups follow comparable trends before the intervention.

To formally test the assumption of parallel trends, we analyze whether the relative performance gap between the two groups changed over time before the treatment. Specifically, we estimate an interaction between group differences and a linear time trend that we include into the pooled OLS with FE (2) for all dependent variables using only the pretreatment period. A significant interaction would suggest diverging trends pretreatment. However,

Hawthorne Effect

The Hawthorne effect is a potential confounding factor that could distort the true impact of the treatment on worker performance. It refers to a temporary shift in performance due to workers’ awareness of being monitored (Landsberger, 1957). To minimize the potential Hawthorne effect, we asked the company management to maintain a “business as usual” approach, avoiding any unusual or excessive attention from management on the shop floor. During the study, only team leaders who were regularly present on the shop floor represented management. Additionally, during the treatment phase, the research team limited their visits to the day of system implementation to address any questions or concerns, thereby reducing possible Hawthorne effects.

The system change was announced one week before implementation, consistent with standard practice in the company. If a Hawthorne effect were present, we would expect performance changes starting at the announcement. To assess this, we analyzed the two weeks prior to the treatment, distinguishing between the preannouncement and postannouncement weeks. Using the pooled OLS with FE and controls regression model (2) where we include higher-order time effects (see Online Supplement E) and autocorrelation- and heteroscedasticity-robust standard error estimation, we find no significant differences between treatment and control groups:

Placebo Tests

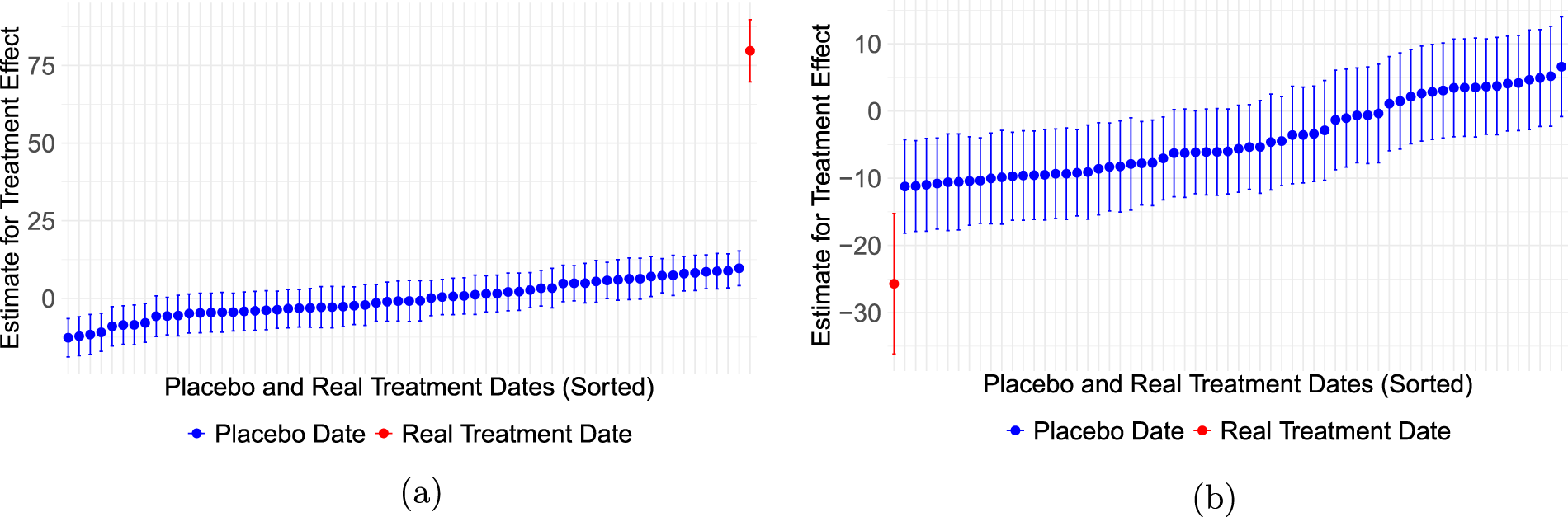

We mitigate the possibility of identifying false-positive outcomes in our investigation (Bertrand et al., 2004). For that purpose, we run a placebo test guided by the approach in Pierce et al. (2015), re-allocating the treatment to 60 randomly chosen dates from the pretreatment period that are not the actual treatment date. If the hypothetical “treatments” showed an effect, it would indicate a possible false positive in the main hypothesis test. We run the procedure using our pooled OLS with FE estimation (2) for all our dependent variables and maintain the same length of the pre- and post-treatment period also in our placebo tests to obtain comparable standard errors for all simulated coefficients.
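The placebo procedure can be sketched as follows. This is a simplified illustration under our own assumptions: it uses a two-by-two comparison of cell means instead of the pooled OLS with FE (2), a symmetric window of equal pre- and post-length around each date, and invented toy data. All function and variable names are hypothetical.

```python
import random
from statistics import mean


def did(records, cut_day, window):
    """Two-by-two DiD around cut_day using `window` days before and after.

    records: iterable of (day, treated, outcome).
    """
    cells = {(t, p): [] for t in (False, True) for p in (False, True)}
    for day, treated, outcome in records:
        if cut_day - window <= day < cut_day + window:
            cells[(treated, day >= cut_day)].append(outcome)
    m = {key: mean(values) for key, values in cells.items()}
    return (m[(True, True)] - m[(True, False)]) - (m[(False, True)] - m[(False, False)])


def placebo_estimates(records, true_day, n_placebos, window, seed=0):
    """DiD estimates at randomly drawn pretreatment dates.

    Only dates whose full window ends before the real treatment date qualify.
    """
    first_day = min(day for day, _, _ in records)
    candidates = list(range(first_day + window, true_day - window + 1))
    rng = random.Random(seed)
    dates = rng.sample(candidates, min(n_placebos, len(candidates)))
    return {d: did(records, d, window) for d in sorted(dates)}


# Toy data: 40 days, treatment starts on day 30 and adds 80 s for treated units.
toy = [
    (day, treated, 100.0 + (80.0 if treated and day >= 30 else 0.0))
    for day in range(40)
    for treated in (False, True)
]
placebos = placebo_estimates(toy, true_day=30, n_placebos=60, window=5)
real_effect = did(toy, 30, 5)
```

In the noise-free toy data, every placebo estimate is zero while the estimate at the real date equals the built-in shift of 80 s. With real data, the placebo estimates form a reference distribution against which the estimate at the actual treatment date can be compared.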

Figure 3 presents the results for the significant treatment effect on response and completion time. Each point represents the treatment estimate along with its 95% confidence interval, ranked by size. The response time plot clearly indicates that a strong treatment effect appears only at the actual treatment date. Similarly, the completion time plot shows a much stronger effect at the real date compared to the placebo dates, though the difference is less pronounced than for response time. For completeness, we also run the placebo test for productivity, which demonstrates that the real treatment date aligns consistently with the placebo treatments when no significant effect is present (see Online Supplement L).

Placebo Test - 60 dates from the pretreatment period and the real treatment date. (a) Response time (sec); (b) Completion time (sec).

After assessing how the provision of choice affects our dependent variables using different analysis approaches, we study the underlying mechanisms driving these outcomes. Specifically, we move beyond examining task choice and productivity at the machine level toward more detailed analyses, particularly at the individual worker level, to uncover behavioral mechanisms. In our post hoc analysis, we emphasize the following primary pathways through which our intervention impacts productivity:

We first demonstrate that workers change their choosing behavior and that

Effect on Task Choice Behavior

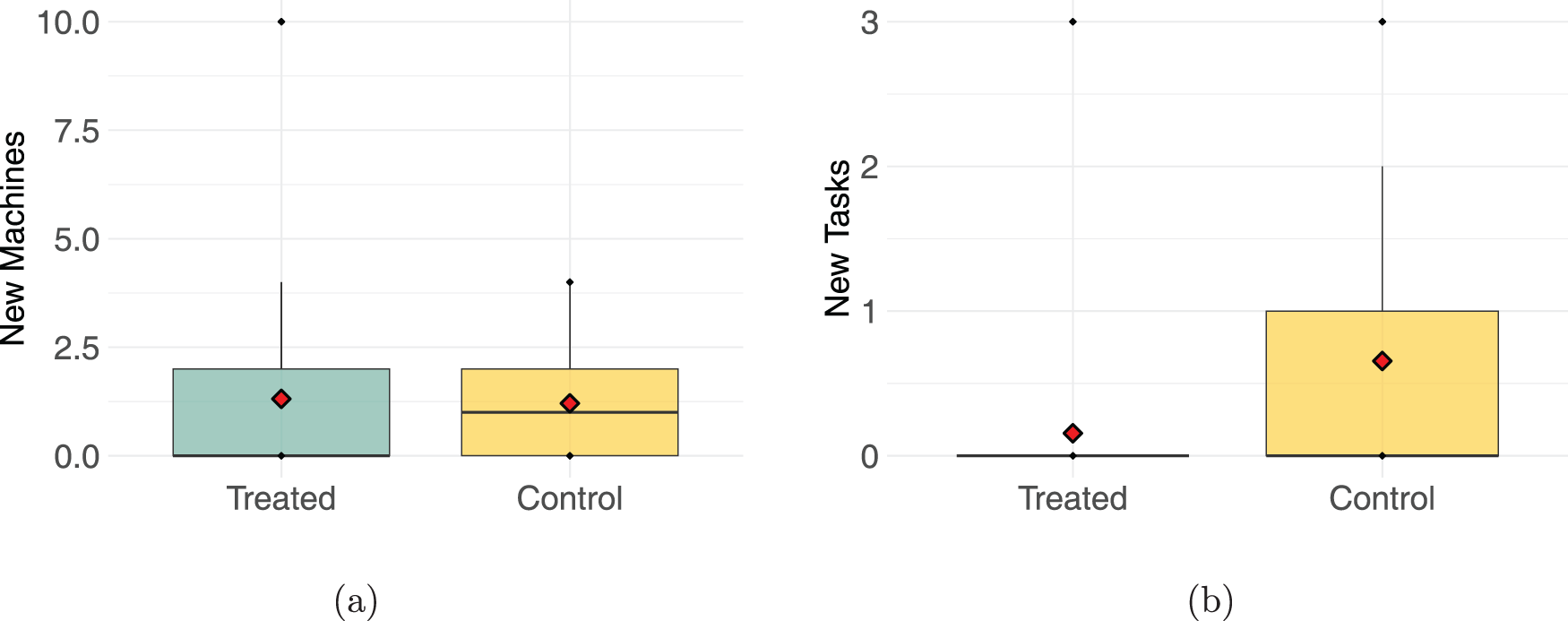

We examine whether workers tend to select particular tasks or machines when given a choice. For each worker, we identify the machines they operated and the tasks they performed before the treatment. With that information, we can test how often workers in the treatment and control groups addressed unfamiliar tasks or machines they had

Comparison of new machines and new tasks handled after treatment. (a) New machines; (b) new tasks. Note: One-sided Wilcoxon tests show a pre-post increase in new machines operated for both groups (

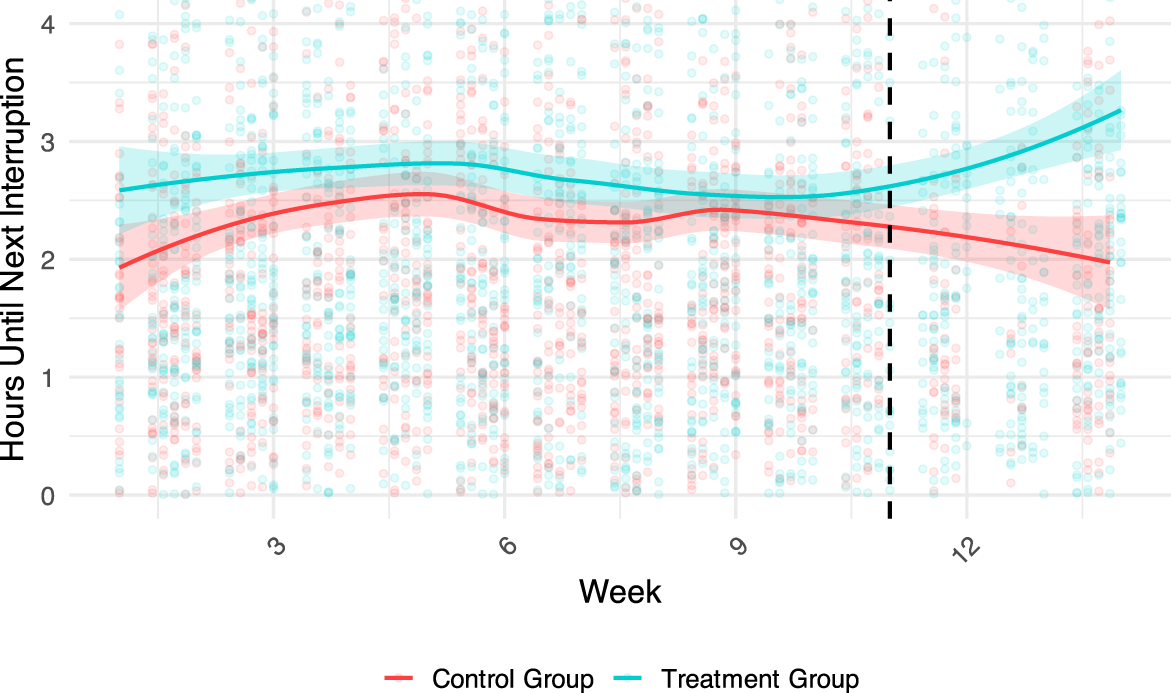

In a next step, we investigate whether this task choice behavior in favor of established abilities and past experience impacts the quality of work. Specifically, we assess whether it affects the time between interruptions, which would indicate that workers are finding more long-lasting solutions to problems. We find that the time until the next interruption occurs—

Time between machine interruptions.

Figure 5 suggests that employees who are given a choice complete tasks with more care or draw on richer past experience, reducing the risk of further interruptions. Increasing the length of periods that machines run without interruption should increase productivity. Table 5 shows that the effect of interruptions on productivity is highly significant, although low in magnitude. We argue that this is particularly driven by

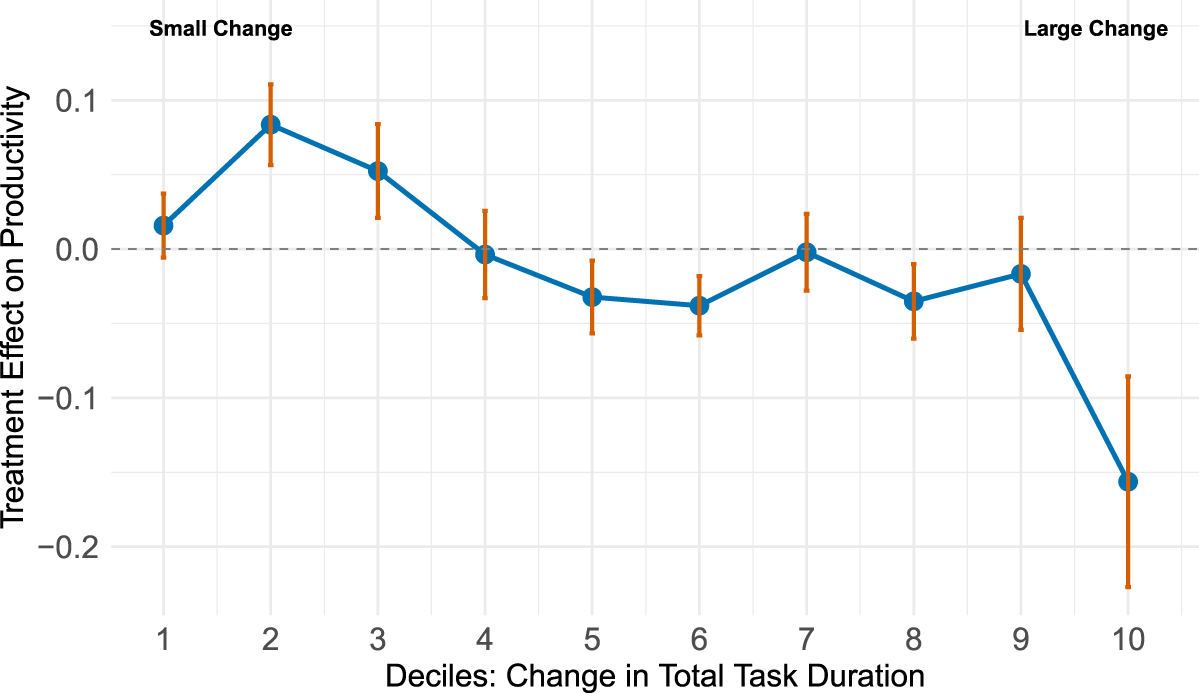

In our next post hoc test, we assess whether the treatment effect on machine productivity varies depending on the average

Comparison of treatment effect depending on the change in total duration. Note: Deciles are defined by the total change in task duration between the pre- and post-period. We run ten panel regressions (specification 3) on the decile-specific samples and report the corresponding treatment effects.

Regression results: effect on Completion time (in sec).

Note: All specifications use heteroscedasticity- and autocorrelation-robust standard errors. All regressions control for task priority and week. OLS = ordinary least square; FE = fixed effect; AIC = Akaike information criterion.

(2) and (3) report adjusted observation counts due to missing lagged values in some observations.

Significance:

Regression results: effect on Productivity.

Note: All specifications use heteroscedasticity- and autocorrelation-robust standard errors. All regressions control for task priority and week. OLS = ordinary least square; FE = fixed effect; AIC = Akaike information criterion.

(2) and (3) report adjusted observation counts due to missing lagged values in some observations.

Significance:

In other words, the longer tasks remain unresolved, the stronger the negative effect on productivity. This is not unexpected, but we see that choice has enabled workers to de-prioritize a small fraction of tasks, and these tasks have a strong negative effect on productivity. This challenge seems to be at least partly due to social loafing, which is made possible by the choice system. Table 3 shows that for every additional worker who is notified about an interruption, the response time increases by 15 s. This is a strong sign of social loafing, as workers can observe the number of present coworkers in our factory setting. Thus, negative productivity effects occur when the total task duration increases significantly. This indicates that our treatment caused heterogeneous effects, which our Bayesian findings in Online Supplement K illustrate in more detail.

Our post hoc findings can be summarized as follows. First, recall from Table 3 that workers spend more time selecting their tasks due to the introduction of the treatment. Second, we find that when workers are allowed to select their tasks, they tend to choose those they are already experienced in (Figure 4), extending the time until subsequent interruptions occur (Table 5). Third, we see that allowing workers to choose only their preferred tasks leads to a faster completion of the respective tasks (Table 4).

The partly contradictory effects may explain the absence of a discernible net impact on productivity at a broader level (Table 5). Nevertheless, Figure 6 reveals heterogeneous treatment effects on productivity, in particular a decrease in productivity when machines are neglected for prolonged durations. Arguably, these machines are consistently unpopular with the workforce. In the following, we derive implications on how to address this challenge in a choice-based system.

Our field experiment examines a change in a modern production system that leverages innovative smartwatch technology to distribute work tasks. The implementation of smartwatches has enabled the company to undergo a fundamental transformation of the shop floor organization, which raises a new question for managers: How should workers be tasked with new work? Should they receive tasks directly onto personal devices—like smartwatches—in a

Our findings partly support and partly oppose the assumption of Self-Determination Theory that increased autonomy generally leads to an improvement in performance outcomes (Deci and Ryan, 1980, 1985; Ryan and Deci, 2000). Our findings suggest that introducing autonomy into a digitalized production system through the provision of choice is a double-edged sword: Workers take longer to select a task (i.e., an increase in response time), but once they do, they execute it faster (i.e., a decrease in completion time). In our context, where tasks are relatively short in duration, these opposing effects result in no net impact on overall productivity. This raises the question: What are the underlying mechanisms behind this effect?

An interesting observation is that the share of time workers spend on machines they have not previously used increases after the treatment in both the treatment and control groups (see Figure 4(a)). However, workers in the treatment group only completed tasks they had previously performed on these new machines (Figure 4(b)). In other words, when given autonomy through the provision of choice, workers tend to choose tasks they are most familiar with, but do not mind which machine is interrupted. This behavior has implications for both response time and completion time.

We observe that workers complete tasks faster when given a choice. This aligns with prior work showing that motivation increases based on the self-affirmation that workers draw from using their core skill set (Kamei and Markussen, 2023; Spreitzer et al., 2005). Furthermore, workers who stick with what they did previously, as discussed above, seem to find longer-lasting solutions for machine interruptions (see Figure 5). As encouraging as this result is for practice, it also bears a conceptual challenge for research. We cannot fully disentangle whether we observe an improvement purely based on stronger motivation, or purely based on matching stronger individual experience/skills to the problem, or both. Research on Self-Determination Theory and work motivation emphasizes the inextricable link between intrinsic motivation, autonomy, and task-related skills and performance (e.g., Deci et al., 2017). This inherent connection can only be disentangled in highly controlled laboratory studies (Kamei and Markussen, 2023). Nevertheless, Weibel et al. (2010) support our motivation-based view by showing that motivation has a particularly positive effect on more straightforward tasks—those that are easiest for workers to perform. Figure 5 in our post hoc analyses shows exactly that: A positive effect on the time between machine interruptions caused by workers doing what they previously did. After all, we as scholars must also ask ourselves whether it is meaningful to disentangle motivation and skill matching, given that corporate management cannot isolate them in A/B tests on the shop floor.

Consistent with Self-Determination Theory, we find benefits of granting autonomy in completion time, but the task choice behavior we observe also has negative consequences: It significantly slows down workers’ responses to pending tasks. This result is in conflict with our earlier theorizing based on motivation. The increase in response time may be attributed to two factors. First, the need to identify a specific option, such as a familiar task (see Figure 4), likely increases information gathering (e.g., scrolling through a list) and processing demands (e.g., evaluating which tasks one knows well). This may also help explain the mixed findings in the literature on the relationship between autonomous choice and responsiveness: Laboratory research has shown that relatively simple sequencing choices improve reaction times (Ziv and Lidor, 2021). In contrast, in our real-world field experiment, the information-processing demand for making a choice was relatively high. This underscores the relevance of information richness associated with choices in future research and managerial practice (Chernev et al., 2015). The increase in richness is particularly relevant for production and operations management, as digitalization significantly increases the density and richness of operational information in manufacturing with the goal of empowering shop floor employees (Leyer et al., 2019). Therefore, our study should motivate further research into the right level of information presented during choice, particularly in other contexts that require even swifter and more accurate responses, such as healthcare or disaster relief.

A second factor that may contribute to the increase in response time is that workers may wait for tasks they are already familiar with. This behavior could be interpreted as a sign of social loafing, as suggested by the significant positive effect of the number of users notified (one of our control variables) on the response time (see Table 3). The more workers receive the same task, the longer it takes for any one of them to accept it. In our study context, workers can anticipate how many colleagues will be notified for a task, as they are familiar with each other’s skill profiles and can directly observe the number of workers on the current shift. This highlights a key insight from our study: If production managers increase workers’ choices, they should also anticipate potential social loafing effects.

Future research could address this challenge by exploring ways to preserve autonomy while mitigating its downsides. For example, as suggested by Lount Jr and Wilk (2014), publicly displaying individual workers’ response times may help reduce social loafing when workers can choose their next task. This points to the emerging discussion on social loafing in operations, which has highlighted that the ability of workers to observe one another is a crucial factor (Cantor and Jin, 2019; Do et al., 2018; Wang and Zhou, 2018). Since behavioral transparency is not always feasible in crowded and highly automated factory halls, as in our study, future field interventions in digitalized factories should consider displaying a “busy status” to other workers as a potential remedy for social loafing. This form of public information bears a lower risk of negative psychological consequences than displaying an average response time, as suggested in the literature.

Another unexpected finding from our study is the null effect on productivity. Taking all results together, it is probable that the individual effects of slower response and faster completion cancel each other out. Since the factory has a dedicated workforce, unlike in gig work or freelancer settings, we can reasonably assume that worker behavior contributes to productivity changes (see also Figure 6). Longer response times lengthen, and shorter completion times shorten, the overall time it takes for an interruption to be resolved (see Table 3 and Table 4). Another possible explanation is the treatment factory’s high workload and high performance level (see Figure 2(c)). Naturally, a high-performing factory will experience more difficulty improving further. Additionally, taking into account previous research on the impact of workload on efficiency (e.g., Kc and Terwiesch, 2009; Oliva and Sterman, 2001), our study provides evidence that choice does not enhance overall system performance when workers are already operating at peak capacity. This finding aligns with the work of De Lombaert et al. (2025), who were also unable to document overall performance gains in an order picking setting. Our study offers some support for what De Lombaert et al. (2025) suspected as a possible explanation in their study: “this [task choice] only brings about an additional ponderation time. Potentially, the latter is compensated by work pace improvements induced by the former” (p. 799). Our study thus offers empirical evidence that complements De Lombaert et al. (2025), who did not measure response time. However, the findings around task choice are still mixed and far from reaching a conclusion. For example, Kc et al. (2020) have shown that altering task choice patterns under high workload can even have negative performance consequences. The overall mixed findings suggest that the workload may serve as an important moderator for the effect of choice on overall performance. Future laboratory or simulation studies may be able to trace this relation more closely, as they are better equipped to run sensitivity analyses using different configurations of operational variables, such as utilization.

In conclusion, our study does not fundamentally question the prevailing perspective of Self-Determination Theory that increased autonomy generally leads to improved performance outcomes (Deci and Ryan, 1980, 1985; Ryan and Deci, 2000). However, we add nuance to the literature that points out that autonomy alone does not improve all dimensions of performance (De Lombaert et al., 2025; Gonzalez-Mulé et al., 2016). Future research should develop and test hybrid designs that find a middle ground between choice and delegation.

Implications for Managers

This study offers several practical implications. First, managers considering the introduction of task choice autonomy should be aware that increased choice opportunities may come with side effects—such as longer response times—that can offset potential performance gains when work tasks are as short as in our context. Production managers may mitigate these delays by reducing the number of task options presented, thereby lowering the cognitive load on workers. This means that boundary-less pooling in the factory should remain a vision for large facilities that bear high risks of choice overload. Instead, investing effort into carefully designing partnering areas for pooling can pay off by increasing efficiency (Jordan and Graves, 1995).

Second, autonomy in digital task assignment systems may lead to increased social loafing. Our study has shown that when more employees see a task in a choice-based system, tasks wait longer for processing. The research on social loafing in operations is still emerging. Future studies in the field can try limiting the number of workers notified about a given task, displaying workers’ busy/available status, or displaying an average response time per person. For example, notifying fewer workers may create a greater sense of responsibility compared to notifying many workers simultaneously.

Finally, our treatment not only showed potential for improved task performance (i.e., faster completion times) but also increased workers’ satisfaction with the task allocation process, as measured in an auxiliary survey. While we acknowledge that this survey is anecdotal considering the single item we used, it still points to significant potential in terms of worker well-being and satisfaction. Satisfaction is of significant managerial relevance since positive employee attitudes and behaviors have a causal positive effect on later business outcomes (Koys, 2001). This should not be left out of consideration in digitalization efforts in manufacturing.

In summary, what do these implications mean for the future design of production systems and workers’ autonomy in task choice? Our study informs some preliminary conclusions: Production managers may address the behavioral side effects, such as social loafing, for example by reducing the number of displayed task options per worker or limiting how many workers are prompted. Managers can also mitigate side effects by combining delegation and choice, thus leveraging the advantages of both approaches. For instance, if response times increase for specific tasks, managers might temporarily switch from a choice-based system to delegated task assignment for those tasks. Finally, with smartwatch systems like the one used in our study, managers can gain deeper insights into their workforce’s task-choice behavior. These insights can potentially support targeted cross-training and may help prevent unnecessary overspecialization. Future research can explore these and other system design directions.

Conclusion

In this study, we analyze a dataset consisting of 66,233 machine status reports and 31,429 work tasks collected across two plants of a manufacturing company. Using a DiD approach, we estimated the effect of granting workers the freedom to choose their next work task from a list of open tasks rather than tasks being assigned to them one after the other. Our findings showed that providing a choice in task allocation has mixed implications for performance outcomes. Specifically, we observed that productivity measured on a machine level was not affected, but the response time increased by 80 s when workers were given a choice. At the same time, the time to complete a task was reduced by 26 s. Therefore, our results support the notion that managers should consider granting more autonomy when responsiveness is of lesser importance. However, our study also emphasizes the importance of carefully assessing how increased autonomy impacts the complexity of workers’ decision-making processes and their tendency to rely on others to do the work. We call for future studies to examine this link further.

Limitations and Future Research

Our study is subject to several limitations. First, our treatment group performed at a relatively high productivity level. This makes our results more conservative, but it could also explain why we did not document additional performance gains. Notably, other recent studies also found no such improvements (De Lombaert et al., 2025). Second, workers’ skills will never be perfectly codified in a digital system. Human skill levels are opaque and not directly measurable in most practical contexts. As a result, our study cannot fully trace how well the prior delegation system had matched tasks to workers. We therefore cannot fully disentangle whether the benefits of the choice system that we measure stem from earlier misconfigurations of the delegation system, from the motivational mechanisms proposed by our theory, or from both. Other field studies face the same challenge: Pasparakis et al. (2021) assign the lead during order picking either to a robot (delegation) or to the human (choice) and measure a composite effect of motivation and possible differences in the picking sequence that humans can introduce. The only way to disentangle these effects is a laboratory study based on the simplest of tasks that anyone can perform, such as counting. Such treatments have been examined in prior research (Kamei and Markussen, 2023). However, the factory managers of our partner company would not allow their systems to randomly ignore workers’ decisions and potentially frustrate them in a real-world setting. We thus complement the earlier evidence with data from the field, accepting that we cannot fully isolate motivation and matching in our context. Such trade-offs are typical of experimental design choices (Eckerd et al., 2021).

Our study focuses on troubleshooting breakdowns. These tasks were fairly brief, and the adverse effect on response time was not compensated by improvements in completion time. This led to a net increase in the overall duration of a task. Future research could explore whether these effects persist in contexts where completion times are substantially longer than response times. Such settings could include scheduled maintenance, repair, and overhaul tasks on complex machinery in aviation or setups in the chemical industry, which often require a full shift or several days to complete.
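As a rough back-of-the-envelope check, assuming that the two point estimates reported in the Conclusion (response time +80 s, completion time −26 s) can simply be added and ignoring their statistical uncertainty, the net change in total task duration under choice would be approximately:

```latex
\Delta T_{\text{total}} \approx \Delta T_{\text{response}} + \Delta T_{\text{completion}}
= (+80\,\text{s}) + (-26\,\text{s}) = +54\,\text{s}
```

The symbols are illustrative shorthand introduced here, not notation from the analysis. Under the same additivity assumption, the sign of the net effect would flip only in settings where the saving in completion time exceeds the added response delay, which is why tasks with much longer completion times are a natural next test.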

Another limitation relates to the task characteristics in our field experiment. Specifically, workers had control over only a small portion of the value chain, and their work did not have a visible impact on the work of others. Control and visibility have been suggested as necessary conditions for autonomy to have a positive effect (Wall et al., 1986), and their absence may thus further explain the null results in our study and in prior research (De Lombaert et al., 2025). Consequently, we call for additional research that conducts similar experiments on tasks with different job characteristics to ascertain the generalizability of our results.

Last, our study is confined to batch-type production and relies on the implemented smartwatch system. We encourage future research to explore different production types, such as assembly lines or job shop production, as well as the effects that arise when workers instead use devices that are not worn on the body, such as smartphones or tablets. Such work can deepen our understanding of autonomy in digitally enabled production systems and open new avenues for exploring how rapidly proliferating technologies, such as wearables, may shape, moderate, or even redefine the role of autonomy on the shop floor.

Supplemental Material

Supplemental material, sj-pdf-1-pao-10.1177_10591478251400469 for Give Me a Choice! A Field Experiment on Task Choice Enabled by Wearables by Daniel Kwasnitschka, Henrik Franke, Richard von Maydell and Torbjørn Netland in Production and Operations Management

Footnotes

Acknowledgments

Part of this research was conducted at the Joint Research Center of the European Commission. The contents of this publication do not necessarily reflect the position or opinion of the European Commission. The authors would like to thank the editors and the anonymous reviewers for their valuable comments and constructive suggestions, which greatly improved the quality of this manuscript. We also gratefully acknowledge the support of the company for providing access to data and resources that made this research possible.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.

How to cite this article

Kwasnitschka D, Franke H, von Maydell R and Netland T (2025) Give Me a Choice! A Field Experiment on Task Choice Enabled by Wearables.
