Abstract

Given the rapid rise of (generative) artificial intelligence (AI) and the global proliferation of AI-generated misinformation, research on how deceptive narratives are created, disseminated, and addressed has become increasingly urgent. This case study investigates emerging trends in fact-checking practices related to AI-generated misinformation across Brazil, Germany, and the United Kingdom. Adopting a mixed-methods approach that combines a quantitative content analysis of 136 verification articles (2023–2024) and 13 expert interviews, this study (1) maps emerging trends in AI-generated misinformation, (2) examines current detection methods and debunking strategies employed by fact-checkers, and (3) explores how fact-checkers assess the spread of AI-generated misinformation and respond to its challenges. While the overall scope of verified AI-generated misinformation remains limited, the findings underscore its growing potential to disrupt the information ecosystem. The results revealed notable country-specific variations in topics, targets, intentions, AI elements, generative models, detection methods, and debunking strategies.

Introduction

The rapid development and widespread use of artificial intelligence (AI) technologies are transforming public communication by enabling new ways of producing content, enhancing distribution through personalization, and fostering dynamic audience engagement (Sarısakaloğlu, 2025). However, these advancements also bring challenges, particularly the rise of misinformation that disrupts the information landscape. AI-driven tools enable content manipulation and automate misinformation campaigns, further challenging journalistic verification efforts (Vizoso et al., 2021). Shortly after the release of generative AI models like OpenAI’s ChatGPT (November 2022) and Midjourney V5 (March 2023), deceptive AI-generated content, including fabricated text, hyper-realistic images, audio, and videos, began to circulate globally (Zhou et al., 2023).

The accessibility of generative AI tools (e.g., GPT-4, BERT, DALL·E, Stable Diffusion), which do not require technical expertise such as programming skills, allows individuals to create and spread textual, auditory, and visual misinformation across domains such as politics, entertainment, and science. This democratization lowers barriers for malicious actors, increasing misinformation risks and reducing information quality. AI technologies are often employed to generate synthetic media that escalates propaganda, weakens informational ecosystems, and spreads false beliefs (Baele & Brace, 2024; Jungherr & Schroeder, 2023). Freedom House noted that generative AI has been used in 16 countries to erode trust, influence voting, target opponents, and manipulate public discourse (Funk et al., 2023).

While misinformation has been widely studied, research on AI-generated forms, such as deepfakes, remains in its early stages (Hameleers et al., 2022; Simon et al., 2023; Vaccari & Chadwick, 2020). Some argue that concerns about AI-generated misinformation reflect a “moral panic” lacking empirical evidence (Jungherr & Schroeder, 2021). Existing research often focuses on single-country cases or cross-national comparisons between countries with strong resilience to misinformation. In contrast, less attention is given to cross-national analyses of contexts where populist rhetoric, the absence of Public Service Broadcasters, and heavy reliance on social media increase susceptibility to deceptive narratives, with AI playing a more pervasive role (Humprecht et al., 2020; Rodríguez-Pérez & García-Vargas, 2021).

The dual dangers of public deception and declining trust in media content (Corsi et al., 2024) underscore the need to study the dynamics of synthetic media across contexts. Researchers must consistently track and critically assess the evolving synthetic media landscape. While studies have explored individual facets of AI-generated misinformation (Chu-Ke & Dong, 2024; Kreps et al., 2022; Zhou et al., 2023), a systematic, comparative quantitative content analysis across different media systems remains absent. Addressing this gap, our study offers a case-based analysis of AI-generated misinformation detected by prominent fact-checking organizations and fact-checking units operating within global news agencies across Brazil, Germany, and the United Kingdom (UK), three countries that differ significantly in their levels of misinformation resilience (Humprecht et al., 2020). To complement the insights gained from the quantitative content analysis, we conducted 13 expert interviews with fact-checkers, adding depth to our understanding of current detection practices and challenges in verification.

Against this backdrop, this study (1) maps trends by examining key aspects of verification articles, including topics, origins, targets, intentions, elements, and generative models; (2) synthesizes findings from fact-checkers’ articles to identify detection methods and debunking strategies; and (3) explores how fact-checkers assess the spread of AI-generated misinformation and the ways they address its challenges. In doing so, it offers insights into current fact-checking practices and provides a foundation for further research on detection methods in an increasingly complex information landscape.

Understanding misinformation and disinformation

In 2016, “fake news” became a buzzword during the US presidential election, largely due to its use by Donald Trump, sparking a rise in misinformation studies (Madrid-Morales & Wasserman, 2021). Egelhofer and Lecheler (2019) defined fake news as a genre imitating journalism and as a political label to discredit the media. Tandoc et al. (2018) categorized fake news into satire, parody, fabrication, manipulation, advertising, and propaganda based on truthfulness and intent to deceive. Scholars now distinguish particularly between misinformation, defined as unintentional inaccuracies, and disinformation, referring to intentional falsehoods (Wardle & Derakhshan, 2017); although neither is a new phenomenon, their current scale can significantly undermine public discourse (UNESCO, 2023).

Hameleers (2023) refined the definition of disinformation as a deliberate, context-specific act of deception involving the creation, manipulation, fabrication, or decontextualization of information to mislead and maximize the creator’s benefit. His definition highlights six key elements: intentionality, context dependence, covert techniques, manipulation, benefit maximization, and the goal of misleading. Motivations behind spreading falsehoods can vary. Politically motivated disinformation can destabilize foreign states, legitimize radical agendas, or enhance a politician’s image (Bennett & Kneuer, 2024). Ideologically driven disinformation seeks to persuade audiences to adopt specific values and beliefs, rather than influencing electoral outcomes or disrupting democratic systems. Financially motivated disinformation, such as online fraud or health-related falsehoods, often aims to provoke fear or anxiety, driving individuals to purchase products or services promoted by the perpetrators (Hameleers, 2023).

Since our study aims to analyze not only verification articles that address intentionally false or misleading content (e.g., chatbots impersonating legitimate payment services to steal credit card details and IDs) but also those cases involving wholly or partially false information shared with unclear or unintentional motives (e.g., misunderstood satire, parodies, or internet jokes), we adopt the term misinformation as an umbrella concept, following O’Connor and Weatherall (2019). Using misinformation in this sense acknowledges the practical challenges of reliably assessing intent and allows for a broader categorization that encompasses both unintentional inaccuracies and deliberate falsehoods, going beyond the narrower scope of disinformation.

An infocalypse of AI-generated misinformation: Deepfakes as a catalyst for deceptive content creation

AI technologies have significantly impacted the dynamics of misinformation, providing individuals with powerful tools to create convincing falsehoods that spread rapidly, thereby undermining traditional fact-checking and information validation methods (Zhou et al., 2023). While no universal definition of AI exists, it can broadly be defined as software or hardware systems designed to simulate human intelligence and behavior (Sarısakaloğlu, 2025). These systems function in physical or digital environments by perceiving their surroundings, processing structured and unstructured data, assessing options, and selecting the most effective actions to achieve specific objectives (European Commission, 2019; Sarısakaloğlu & Löffelholz, in press). Recent advancements in AI often rely on machine learning (ML) techniques, such as deep learning, which employ multi-layered neural networks to analyze data, identify patterns, and improve performance through iterative learning (de Vries, 2020; Jungherr & Schroeder, 2023; Mitchell, 2013). A key development in this context is the emergence of generative AI models, driven by transformer-based deep neural networks (DNNs) like large language models (LLMs), which have substantially lowered the barrier to fabricating convincing content and amplifying falsehoods at scale.

For textual misinformation, natural language processing (NLP) systems enable machines to understand and generate human language convincingly. For visual misinformation, computer vision, which focuses on processing and interpreting visual data, is used to create and manipulate images and videos for deceptive purposes. Additionally, AI supports the automation of social bots, which are software-driven non-human accounts that autonomously distribute (mis)information and engage in activities such as liking, commenting, sharing, and participating in discussions (Tucker et al., 2018). These bots are trained on extensive datasets derived from genuine user interactions, enabling them to create synthetic profiles that mimic real accounts and adapt their behaviors to resemble human responses (Hameleers, 2023).

In addition to using techniques that artificially amplify and imitate authentic social media interactions, actors spreading misinformation employ other strategies to enhance the plausibility of false information. These tactics aim to increase the credibility of falsehoods, making them appear factual. Whittaker et al. (2020) use the term synthetic media as an umbrella for “all automatically and artificially generated or manipulated media” (p. 91). This includes synthesized audio, virtual reality, and highly sophisticated digital imagery creation, with deepfakes causing the most concern.

Deepfakes are generated using ML techniques, where DNNs are trained on large datasets to modify neuron connections (de Vries, 2020; Kalpokas, 2021; Whittaker et al., 2020), producing convincing video, audio, and images that manipulate public figures’ speech, behavior, and expressions (Jacobsen & Simpson, 2023). Photo deepfakes swap faces or bodies in images, sometimes altering features such as the mouth or eyes (Farid et al., 2019). Audio deepfakes replicate voices from audio recordings or text. Video deepfakes alter faces or body movements and synchronize them with audio to create the illusion that a person said or did something they never actually did (Farid et al., 2019; Whittaker et al., 2020).

However, not all AI-generated content is deceptive, nor is all deceptive content produced by AI. Fact-checkers and studies emphasize that most deception still relies on decontextualization (Cazzamatta, 2025b; Hameleers, 2024). Hameleers (2024) found decontextualized footage used deceptively in the context of the Russia-Ukraine War and Israel-Palestine conflict. A study on synthetic media spread on X (Corsi et al., 2024) concluded that while most synthetic media is non-political or created for comedic purposes, deepfakes targeting political figures raise concerns about AI misuse (Hameleers et al., 2022; Vaccari & Chadwick, 2020).

The advancements in synthetic media have profound implications. A report by Deeptrace (Ajder et al., 2019) states that synthetic media has reshaped the perception of audiovisual content, challenging its traditional role as a trusted source of evidence. Research indicates that synthetic media significantly influences interaction patterns in online environments (Baele & Brace, 2024), blurring the lines between fact and fiction, eroding trust in media authenticity, and complicating information verification. This phenomenon challenges journalism’s role in delivering accurate and reliable information, posing risks to public trust and the integrity of communication systems (de Vries, 2020).

Journalism's response: Fact-checking tools for detecting and debunking synthetic media

Journalism, defined as “a set of transparent, independent procedures aimed at gathering, verifying, and reporting truthful information of consequence to citizens in a democracy” (Craft & Davis, 2016, p. 34), is vital for democratic discourse. However, its role as a facilitator of informed public debate is increasingly challenged by misinformation. In response, fact-checking initiatives have emerged to verify political statements, rumors, and public claims against evidence-based standards (Graves, 2022). While internal fact-checking has always been part of journalism, the spread of misinformation has amplified the need for post-publication verification. This has led to the growth of fact-checking organizations as independent NGOs, civil society entities, and newsroom extensions (Cazzamatta, 2025b; Graves & Cherubini, 2016). These organizations debunk misinformation across platforms and reduce its circulation (Bélair-Gagnon et al., 2023), helping to strengthen democracy by promoting transparency, accountability, and informed public discourse (Amazeen, 2013; Singer, 2018).

While fake news reflects “the collapse of the old news order and the chaos of contemporary public communication” (Waisbord, 2018, p. 1868), the rise of AI-generated misinformation exacerbates this disruption. Because such content distorts information and complicates verification, journalism faces increasing pressure to maintain its credibility and provide reliable news (Thomson et al., 2022). This shift threatens journalism’s role as a democratic safeguard, raising critical questions about the future of news reporting and public communication in an AI-dominated era (Sarısakaloğlu, 2024).

To tackle these challenges, journalists and fact-checkers now use advanced tools for verifying images, texts, videos, and websites (Brandtzaeg et al., 2018; Dierickx et al., 2023; Vizoso et al., 2021). News agencies like Agence France-Presse (AFP) have developed AI-supported verification tools, including Vera.ai and WeVerify (AFP, 2024). These organizations possess significant resources, enabling them to employ programmers or journalists with technological expertise (Cazzamatta, 2025b; Lewis & Usher, 2014). The InVid-WeVerify plugin for detecting synthetic media and deepfakes, though still in beta version and not yet publicly accessible, is currently available to researchers and fact-checkers (Vera.AI, 2024). Several detectors, including True Media, Hive, AI or Not AI, Hugging Face, and Neuraforge, use ML and forensic analysis to detect AI-generated deceptive content. However, the effectiveness of fact-checking in combating AI-generated misinformation remains a subject of ongoing debate (Cazzamatta & Sarısakaloğlu, 2025; Dierickx et al., 2024). While fact-checking can play a crucial role in identifying and correcting false claims, its impact is often limited by the speed and scale at which AI-generated content spreads. Moreover, these systems may fail to identify new AI-generated media designed to bypass detection, and they typically offer probabilistic rather than definitive conclusions (Jacobsen & Simpson, 2023; Wittenberg et al., 2024).

NLP technologies enable automated fact-checking by processing verifiable statements, such as transcriptions and translations of political discourse across live broadcasts, online media, and social networks. These statements are cross-referenced with databases of verified claims and compared with credible sources and data. Fact-checking organizations like Full Fact in the UK are leveraging AI technologies to scale their operations. Additionally, AI-driven tools help distribute corrective information to audiences exposed to misinformation through various channels (Full Fact, 2024; Graves, 2018).

Building on the previous theoretical discussion, we pose the following three research questions:

Method

These three questions were addressed through a mixed-methods approach, combining quantitative content analysis of AI-generated misinformation trends with qualitative expert interviews with fact-checkers from Brazil, Germany, and the UK. To answer RQ1, which explored trends in AI misinformation creation, we examined the topics most frequently associated with such misinformation, the common sources and targets, and the intentions behind its creation or dissemination. Additionally, we identified the specific AI techniques (e.g., image manipulation, audio, deepfakes) and generative models commonly used to produce deceptive content. For RQ2, we synthesized insights from verification articles to assess how fact-checkers detect synthetic content, the methods they use to verify AI-generated material, and strategies used to debunk falsehoods. Finally, we conducted qualitative expert interviews to address RQ3.

Selection of countries

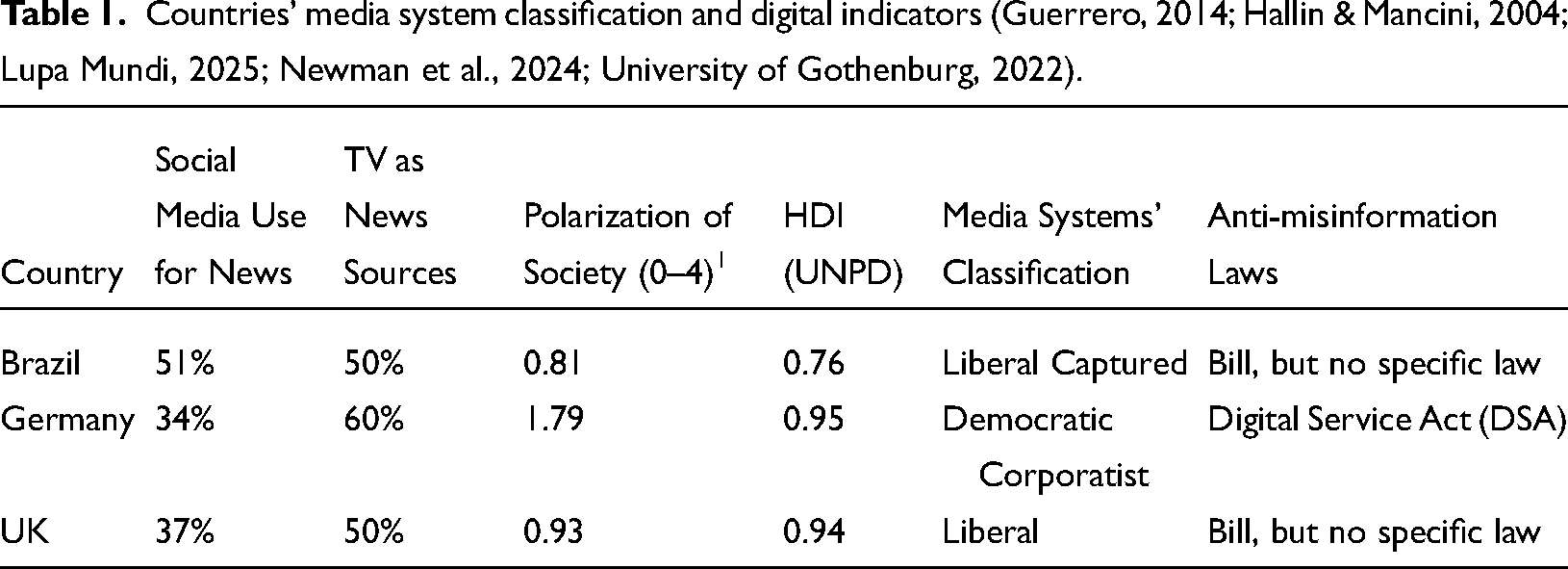

The spread of misinformation, the use of AI detection, and fact-checking practices are shaped by media systems and political contexts. Our approach followed Norris (2009, p. 322), who aimed “to identify common regularities that prove robust across widely varied contexts.” Our country selection was guided by media system characteristics and digital indicators. Media system typologies reflect varying levels of journalistic professionalism and regulatory traditions, or the absence thereof, which influence the online information environments in which fact-checkers operate. Germany represents the democratic corporatist model, while the UK exemplifies the liberal model (Hallin & Mancini, 2004). To expand the analysis beyond Western democracies, we included Brazil, one of the largest democracies in the Global South. Despite some similarities with the Mediterranean model, Brazil has also been classified as “liberal captured” (Guerrero, 2014), characterized by concentrated media markets, aggressive deregulation, regulatory inefficiencies, weak professional standards, and patronage-driven practices.

Media system traditions are also reflected in regulatory framework practices. For instance, Germany is the only country in our study governed by the Digital Services Act (DSA), which mandates content moderation, imposes sanctions on platforms, and establishes a regulatory framework for platform governance, aligning with the European tradition of legal regulatory frameworks and independent oversight (Ferreau, 2024). In contrast, the UK lacks a specific legal framework to address misinformation. While the Online Safety Act mentions misinformation, its focus is primarily on establishing an advisory committee to assist Ofcom and revising media literacy policies, rather than directly regulating content moderation (Full Fact, 2023). In Brazil, the absence of comprehensive content moderation laws outside electoral periods has prompted the Supreme Federal Court to intervene and require platforms to take action against harmful misinformation (Tomaz, 2023). Brazil’s media system remains largely unregulated and lacks independent oversight (Stroppa & Napolitano, 2020). Similarly, the country’s legal framework for tackling online misinformation relies primarily on judicial oversight, as evidenced by the Superior Electoral Court’s monitoring efforts during election periods.

Acknowledging the influence of digital factors on misinformation (Humprecht et al., 2020), we also accounted for variables such as social media usage for news, societal polarization, and Human Development Index (HDI) (see Table 1). For example, in countries where social media use for news surpasses television consumption and where political polarization is pronounced, citizens are more likely to encounter AI-generated misinformation (Humprecht et al., 2020). Similarly, HDI may help explain the prevalence of economically motivated online scams, particularly in less economically stable societies. Despite these considerations, the selection of countries was constrained by language, time, and funding limitations. Overall, we consider the selection appropriate for identifying consistent patterns that hold across diverse contexts, as it reflects a range of factors including different media systems, varying degrees of regulatory traditions, specific laws addressing misinformation, patterns of social media use for news, levels of societal polarization, and differences in HDI.

Countries’ media system classification and digital indicators (Guerrero, 2014; Hallin & Mancini, 2004; Lupa Mundi, 2025; Newman et al., 2024; University of Gothenburg, 2022).

Selection of organizations and data collection

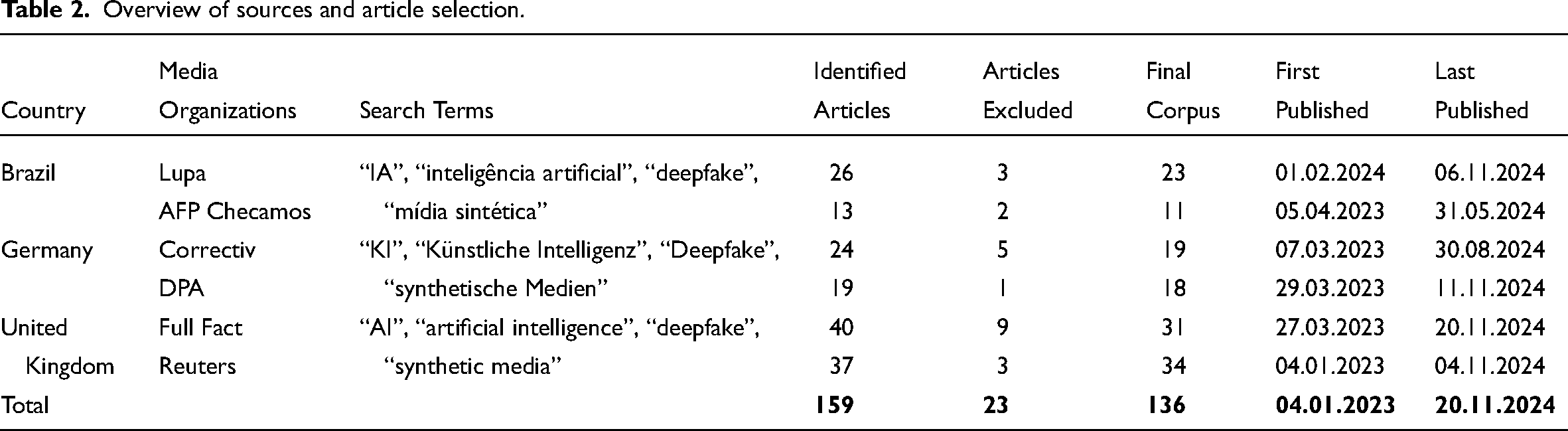

In each selected country, we included the following leading independent organizations and the verification desks of global news agencies: Lupa and AFP Checamos in Brazil, Correctiv and DPA in Germany, and Full Fact and Reuters in the UK. These organizations possess extensive experience with fact-checking automation and AI detection, including participation in initiatives such as the Vera.AI project, which enables effective monitoring of national and international online landscapes.

To examine how these media organizations responded to AI-generated misinformation, we aligned our data collection with a key turning point: the release of ChatGPT (built on GPT-3.5) by OpenAI in November 2022, marking a significant advance in the rise of generative models. We collected data for our quantitative content analysis from January 2023 to November 20, 2024, prior to the start of coding. Using the Feeder extension, we gathered links from fact-checking organizations focused on deepfakes and AI-generated falsehoods.

The search for verification articles was conducted using the keywords: “AI”, “artificial intelligence”, “deepfake”, and “synthetic media”, including their German and Portuguese translations. To avoid bias in interpretation, all articles were analyzed in their original language. We focused on articles featuring an (allegedly) content creation-related AI element. Articles addressing general AI developments, explanatory pieces, reports, opinion articles, investigative journalism, AI detection guides, inaccurate claims about AI, or unrelated rumors were excluded. We also excluded cases involving editing techniques like Photoshop or cheapfakes. This resulted in a total of 136 articles related to AI-generated or allegedly AI-generated misinformation, as detailed in Table 2.

Overview of sources and article selection.

The data reflect deceptions selected by fact-checkers for their potential to cause harm, go viral, or remain relevant due to audience concerns about authenticity, rather than all content on social media. While the number of articles may appear modest, it constitutes a full census of all relevant items published by the selected fact-checking organizations and fact-checking units embedded in international news agencies within the defined study period, covering not only domestic cases but also international issues. This suggests that AI-generated misinformation remains an emerging phenomenon, consistent with findings from previous research on the topic (Corsi et al., 2024). Nevertheless, the fact that such cases are already being flagged and verified suggests a growing awareness and urgency among fact-checkers to address this evolving threat.

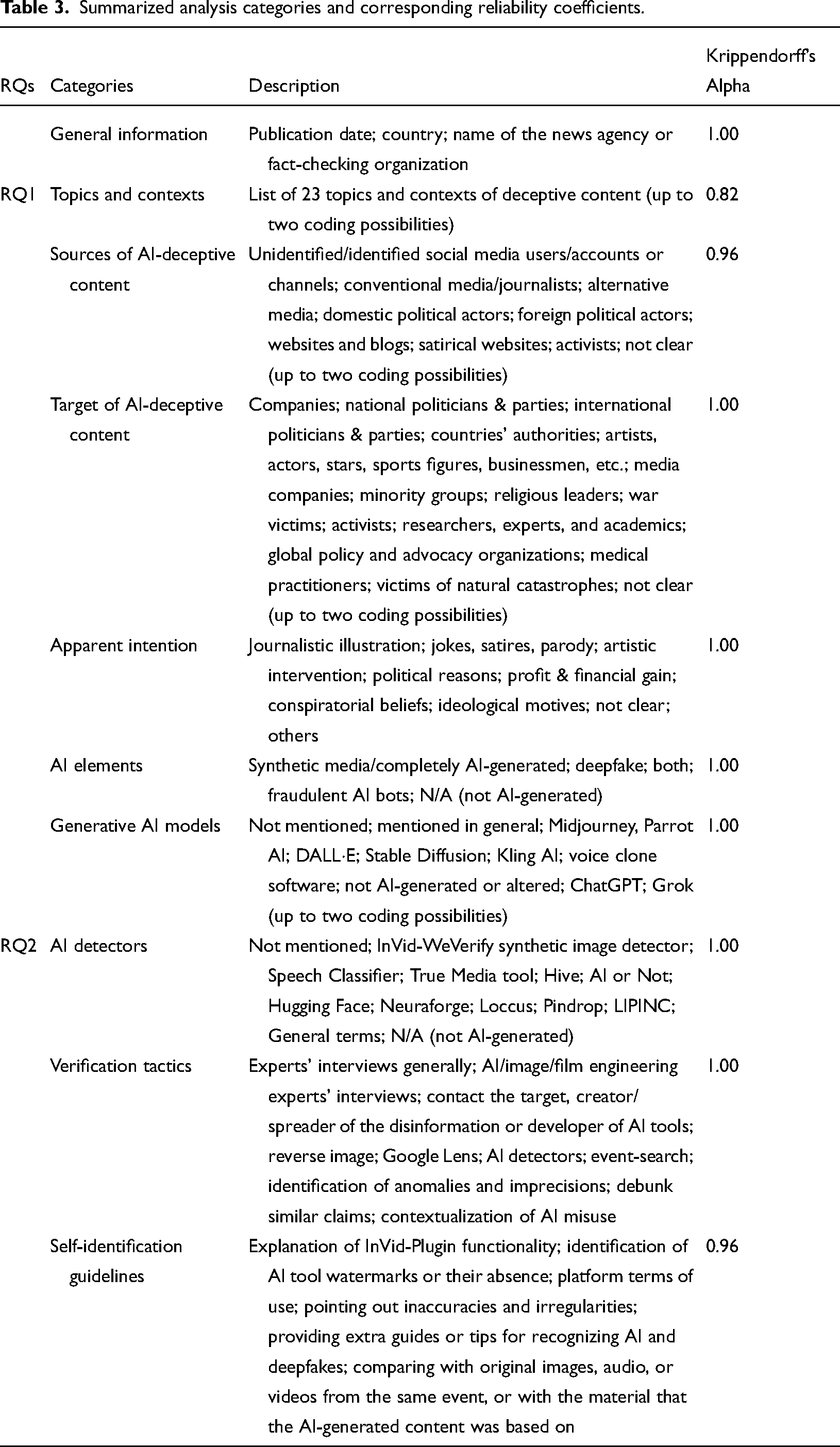

Category of analysis, pre-test, reliability, and data analysis

To provide a comprehensive analysis, we first conducted a preliminary reading of the entire sample to identify common topics of AI-generated deceptive content, the AI tools used, and the detection methods employed. The process of identifying topics in misinformation involved meticulous coding based on a predefined category list developed during a pre-test. When the topic and context of misinformation diverged significantly, both aspects were coded, allowing up to two topics per item. For example, an AI-generated image depicting police kneeling before a Muslim man was categorized under religion due to its religious component. However, since the image circulated during mass protests in the UK in 2024, both the religious topic and the protest context were incorporated into the analysis. After this pre-test, we developed the codebook to target the categories outlined in Table 3. For a detailed description of the categories, refer to the Supplemental Material. The authors first conducted a pre-test to enhance coder agreement and subsequently coded the entire dataset themselves. Inter-coder reliability was assessed using Krippendorff’s alpha, based on a sample of 50 articles, constituting over one-third of the total articles analyzed.

Summarized analysis categories and corresponding reliability coefficients.
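To make the reliability check more concrete, the following is a minimal sketch of Krippendorff’s alpha for nominal categories, computed from a coincidence matrix. It is an illustration under stated assumptions, not the authors’ actual procedure: the function name `krippendorff_alpha_nominal`, the input layout (one list of coder labels per article), and the toy labels are all hypothetical.

```python
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(units):
    """Krippendorff's alpha for nominal data.

    `units` is a list with one entry per coded item; each entry is the list
    of labels assigned by the coders (None marks a missing judgment).
    This input layout is an assumption made for illustration.
    """
    coincidences = Counter()
    for labels in units:
        labels = [v for v in labels if v is not None]
        m = len(labels)
        if m < 2:
            continue  # an item coded only once cannot be paired
        # Every ordered pair of judgments within an item contributes 1/(m-1).
        for i, j in permutations(range(m), 2):
            coincidences[(labels[i], labels[j])] += 1.0 / (m - 1)

    # Marginal totals n_c and the overall number of pairable judgments n.
    totals = Counter()
    for (c, _k), weight in coincidences.items():
        totals[c] += weight
    n = sum(totals.values())

    # Nominal metric: any mismatch counts as full disagreement.
    observed = sum(w for (c, k), w in coincidences.items() if c != k)
    expected = sum(totals[c] * totals[k]
                   for c in totals for k in totals if c != k)
    if expected == 0:
        return float("nan")  # undefined when only one category is ever used
    return 1.0 - (n - 1) * observed / expected


# Hypothetical toy data: two coders, four articles, one disagreement.
toy_codes = [
    ["politics", "politics"],
    ["politics", "scam"],
    ["scam", "scam"],
    ["scam", "scam"],
]
print(round(krippendorff_alpha_nominal(toy_codes), 3))
```

In practice, alpha would be computed separately for each codebook category on the reliability subsample, which is why a table of coefficients per category is a natural way to report it.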

Correspondence analysis was used to investigate similarities and differences among categories and trends across countries. This method visualizes cross-table patterns, highlighting deviations between observed and expected values based on standardized residuals. Categories with similar deviations are grouped, identifying patterns and differences. Variables near the center are less distinctive, while the proximity of row (e.g., topics) or column labels (e.g., countries) indicates their similarity after appropriate normalization. The relationship between variables (countries × topics) is assessed using two metrics: (a) distance from the origin, indicating distinctiveness, and (b) the angle between lines connecting variables to the origin. Greater distances and narrower angles indicate stronger associations. The visualization highlights relative deviations, not absolute values. For more details, see Beh and Lombardo (2014) or Greenacre (2017). Corresponding residual tables are in the Supplemental Material.
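The logic of correspondence analysis can be sketched in a few lines of NumPy. The example below is a hedged illustration rather than the analysis pipeline used in this study: the function name `correspondence_analysis` and the toy contingency table are hypothetical, and the residuals are computed as Pearson residuals, (observed − expected) / √expected, which is one common definition of standardized residuals and is assumed here.

```python
import numpy as np


def correspondence_analysis(table):
    """Simple correspondence analysis of a two-way contingency table.

    Returns Pearson residuals, principal coordinates for rows and columns,
    and the principal inertias (squared singular values).
    Assumes every row and column has a non-zero total.
    """
    counts = np.asarray(table, dtype=float)
    n = counts.sum()
    proportions = counts / n
    row_mass = proportions.sum(axis=1)
    col_mass = proportions.sum(axis=0)

    # Expected counts under independence of rows and columns.
    expected = n * np.outer(row_mass, col_mass)
    residuals = (counts - expected) / np.sqrt(expected)

    # Dividing by sqrt(n) gives the matrix whose SVD defines the CA axes.
    u, sigma, vt = np.linalg.svd(residuals / np.sqrt(n), full_matrices=False)

    # Principal coordinates: distance from the origin reflects distinctiveness.
    row_coords = (u * sigma) / np.sqrt(row_mass)[:, None]
    col_coords = (vt.T * sigma) / np.sqrt(col_mass)[:, None]
    return residuals, row_coords, col_coords, sigma ** 2


# Hypothetical toy table: rows are topics, columns are countries.
toy_table = [
    [12, 3, 4],
    [2, 9, 8],
    [3, 7, 10],
]
residuals, row_coords, col_coords, inertias = correspondence_analysis(toy_table)
print(np.round(residuals, 2))         # positive = over-represented cell
print(np.round(row_coords[:, :2], 2))  # first two axes, as plotted in a biplot
```

The first two columns of `row_coords` and `col_coords` are what a two-dimensional map displays. Because the coordinates are built from residuals, such a map shows deviations from the expected distribution, not absolute frequencies.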

Expert interviews as a complementary method

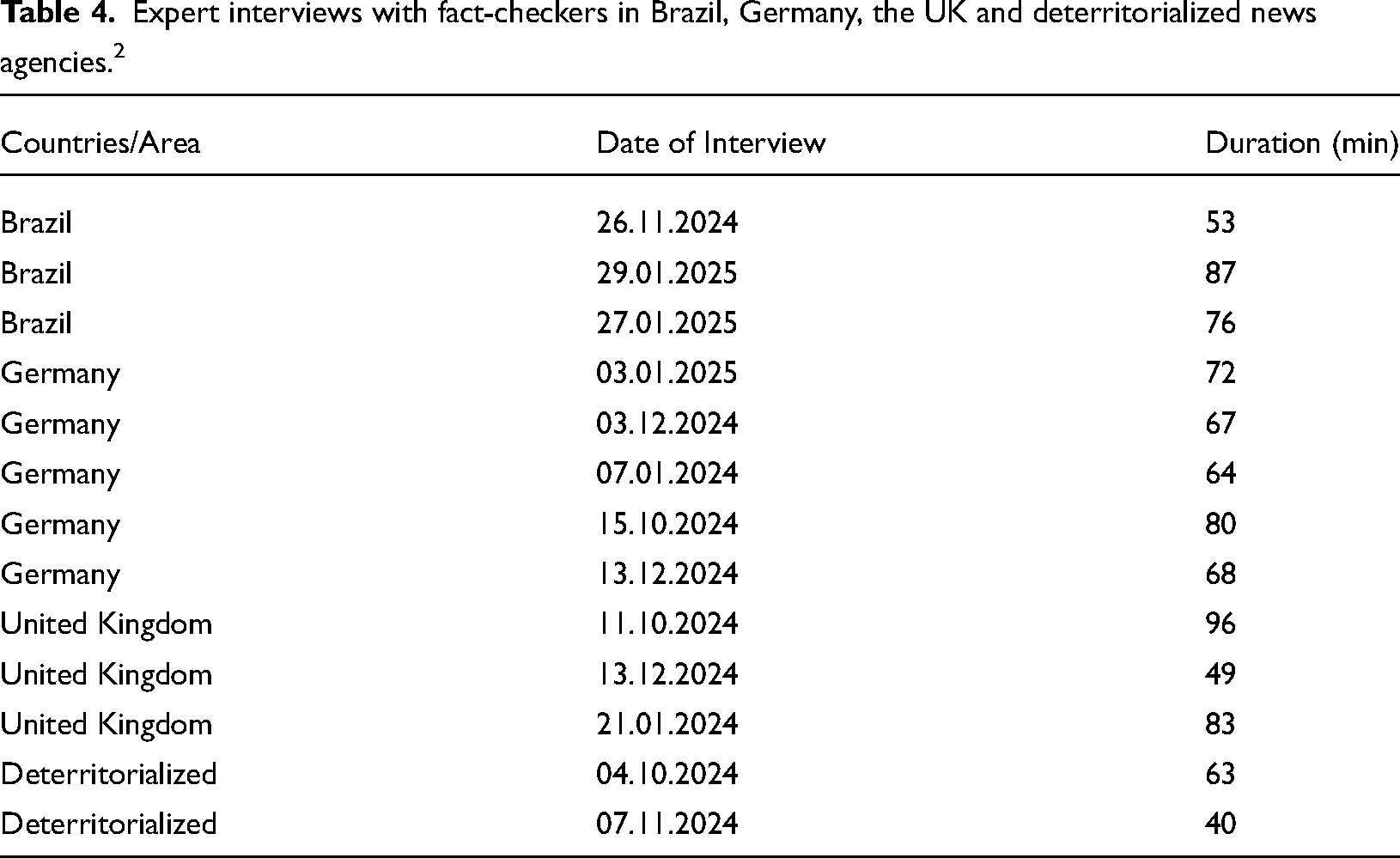

To address our third research question and complement the findings from the quantitative content analysis, we conducted 13 expert interviews with fact-checkers between October 2024 and January 2025, using the video conferencing tool Webex. These experts combine technical, procedural, and interpretive knowledge informed by broader societal contexts (Bogner, 2002; Gläser & Laudel, 2010). Unlike open-ended interviews focused on personal narratives, expert interviews emphasize institutional contexts and shared organizational knowledge (Meuser & Nagel, 2002). Interviewees comprised representatives from both the organizations analyzed in the content analysis for each country and additional fact-checking entities.

The interview guidelines were developed within a broader research project examining influences at the individual (micro), organizational (meso), and systemic (macro) levels. For this article, the focus was on AI-related developments, in particular the perceived rise of AI-generated misinformation, the challenges of verifying such content, and the reliability of existing detection models. Table 4 details the distribution of countries, interview dates, and conversation lengths. To ensure confidentiality and comply with the European Union’s General Data Protection Regulation (GDPR), all interviews were anonymized. After transcription, we conducted a qualitative textual analysis using NVivo software. The analysis was guided by the third research question and relevant theoretical frameworks, employing a flexible and iterative interpretive approach.

Expert interviews with fact-checkers in Brazil, Germany, the UK, and deterritorialized news agencies.

Using an inductive, hierarchical coding approach (Tracy, 2013), we initially identified recurring patterns and core themes within the interview transcripts. Initial codes, such as the everyday challenges of debunking AI-generated misinformation, were grouped into broader thematic categories (e.g., the reliability of detection models, reliance on traditional journalistic practices). In the subsequent phase, we refined these categories by developing sub-themes until achieving theoretical saturation (Tracy, 2013). Although qualitative research of this nature is not designed for statistical generalization, it provides valuable insights into the operational conditions and adaptive strategies of fact-checkers. These findings serve to complement the results of the quantitative content analysis presented earlier.

Findings

Trends in the creation and dissemination of AI-generated misinformation (RQ1)

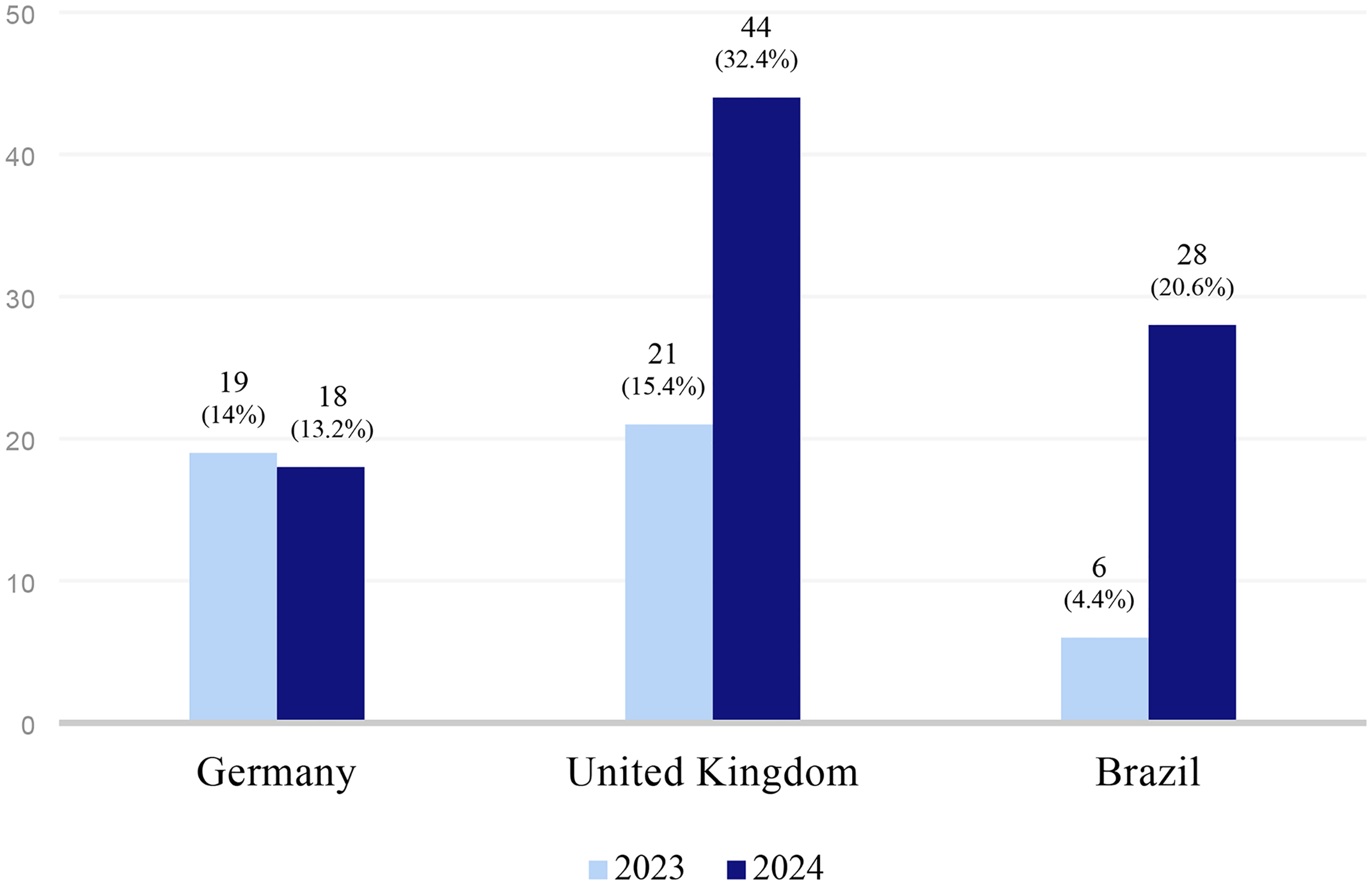

The UK, which exhibits the highest level of societal polarization among the countries (see Table 1), recorded the highest number of cases, representing almost half of the analyzed articles (47.8%). From 2023 to 2024, the prevalence of AI-generated misinformation in the UK more than doubled (see Figure 1). This increase is likely linked to the political crisis that emerged after the killing of children in Southport, followed by widespread misinformation on social media about the suspect’s identity, political radicalization, and the spread of far-right riots across the country (Bayley, 2024; Cadwalladr, 2024). Moreover, the general election in the UK, which marked the end of 14 years of Conservative government, also appears to have contributed to the spike in AI-generated misinformation.

In contrast, Germany, which exhibits the lowest levels of societal polarization and has specific legislation against misinformation (see Table 1), accounted for just over a quarter of the detections (27.2%), with a relatively stable distribution between 2023 and 2024. The comparatively lower circulation of AI-generated misinformation may also be attributed to the absence of major destabilizing events, such as political crises, that often act as catalysts for the spread of misinformation. Brazil recorded the lowest share, accounting for one-quarter (25.0%) of cases. Nevertheless, the number of detections grew significantly between the two years. Given that AI developments are typically driven by the Global North (Sarısakaloğlu, 2025), Brazil may have initially required additional time in 2023 for these technologies to gain traction. However, by 2024, during the Brazilian municipal elections, AI-generated misinformation saw a significant increase. The adoption of such technologies for creating deceptive content also correlates with Brazil’s highest rate of social media use for news and societal polarization among the selected nations. A striking finding is the significant increase in the total number of detections across the three countries, rising from 46 articles in 2023 to 90 in 2024. This spike suggests an increased adoption and popularization of AI by malicious actors, alongside heightened awareness of AI-generated misinformation and enhanced detection capabilities among media organizations.

Topics of AI-generated misinformation

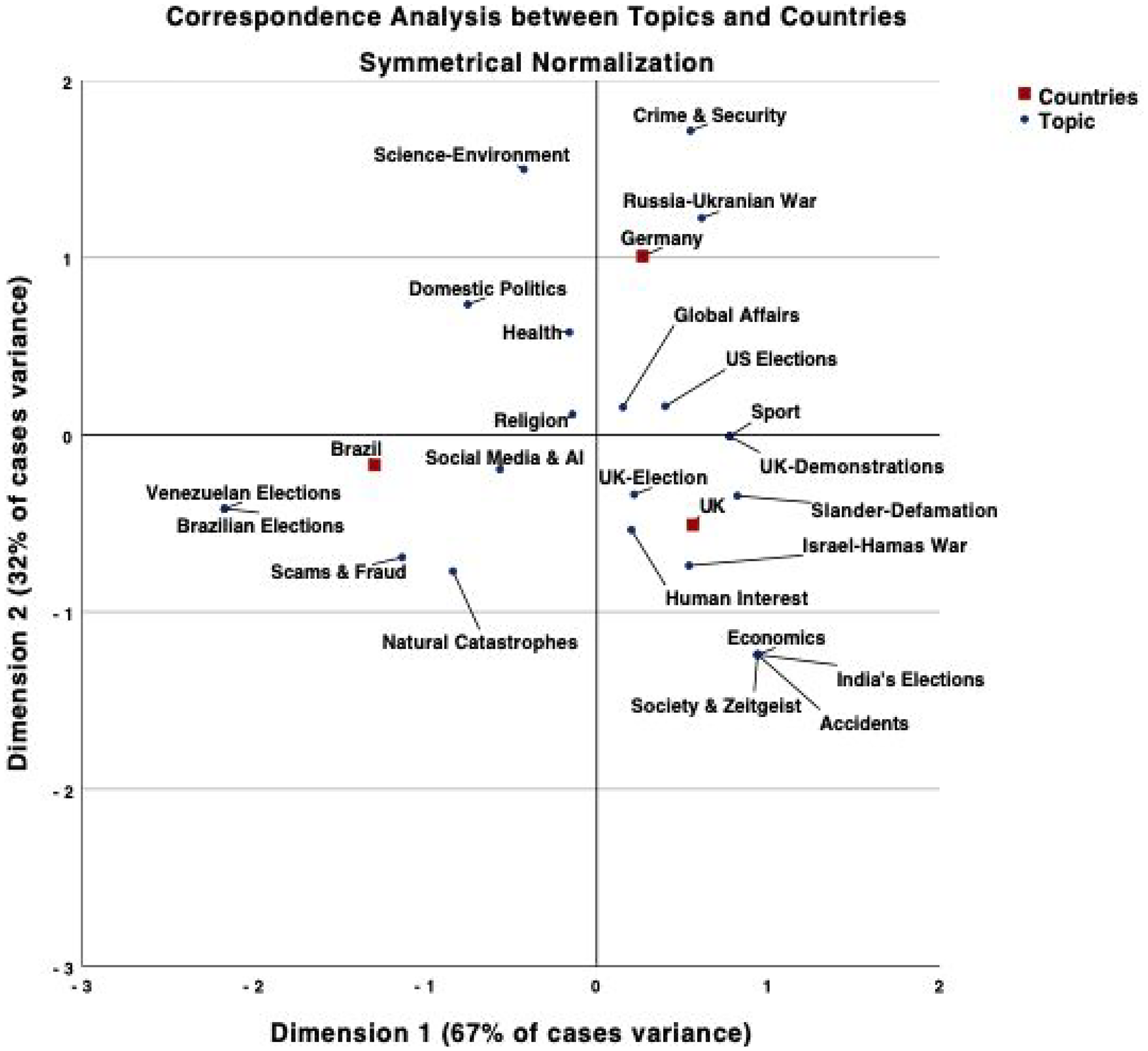

Among the 23 identified topics, AI-generated misinformation targeting public figures and politicians emerged as the most prevalent topic (n = 33; 12.1%), highlighting its strategic use to delegitimize, slander, and defame influential individuals or institutions (see targets below). Domestic politics accounted for 7.7% (n = 21), demonstrating the vulnerability of governance and political discourse to manipulation (see Supplemental Material). The US election ranked third, comprising 6.3% (n = 17) of topics, followed by international affairs (n = 15; 5.5%) and human-interest stories, such as emotionally charged cultural, gossip, and entertainment narratives (n = 14; 5.1%). Additional topics included deceptive content involving prominent figures, such as Elon Musk, and narratives targeting media and social media platforms (n = 11; 4.0%) (Figure 2).

Distribution of AI-generated misinformation by countries 2023–2024 (n = 136).

When analyzing the topics across countries, we observed that Germany and the UK, positioned in the upper and lower right quadrants of Figure 2, are more similar to each other than to Brazil. Brazil is positioned in the lower left quadrant and is notably distinct due to AI-generated misinformation disproportionately targeting the Brazilian municipal elections (sr = 4.2), scams and frauds (sr = 2.5), natural catastrophes (sr = 1.7), the Venezuelan elections (sr = 1.5), and issues related to social media and AI (sr = 1.4). The higher incidence of AI-generated scams and frauds in Brazil may be linked to broader structural vulnerabilities, including economic instability, weaker enforcement of digital regulations, and lower levels of digital literacy, which are often reflected in the country’s HDI (see Table 1).
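The standardized residuals (sr) reported throughout this section compare each observed cell count with the count expected if topic and country were independent. The following is a minimal sketch of that calculation, assuming Pearson residuals of the form (O − E)/√E; the exact variant used in the study is not stated here. The function name `pearson_residuals` and all counts are hypothetical and serve only as illustration.

```python
from math import sqrt

def pearson_residuals(table):
    """Return Pearson residuals (O - E) / sqrt(E) for a contingency table.

    `table` is a list of rows of observed counts. E is the count expected
    under independence: row_total * column_total / grand_total.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)
    residuals = []
    for row, r_tot in zip(table, row_totals):
        res_row = []
        for observed, c_tot in zip(row, col_totals):
            expected = r_tot * c_tot / grand_total
            res_row.append((observed - expected) / sqrt(expected))
        residuals.append(res_row)
    return residuals

# Hypothetical counts (NOT the study's data): rows = topics,
# columns = Brazil, Germany, UK.
counts = [
    [9, 1, 2],    # topic A, concentrated in the first country
    [5, 10, 15],  # topic B
    [6, 9, 13],   # topic C
]
sr = pearson_residuals(counts)
for topic, row in zip("ABC", sr):
    print(topic, [round(v, 2) for v in row])
```

A positive residual marks a topic that occurs more often in a country than independence would predict, a negative one a topic that occurs less often. By a common rule of thumb, absolute values of roughly 2 or more are read as notable departures.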

Correspondence analysis between topics of AI-generated misinformation and countries.

The significant volume of AI-generated misinformation targeting the Brazilian municipal elections is understandable, given that it was the largest municipal election in the country’s history, encompassing 5569 municipalities (TSE, 2024). Similar to the previous presidential elections (Cazzamatta et al., 2024), memoranda of understanding were signed with platforms such as TikTok, LinkedIn, Facebook, WhatsApp, Instagram, Google, Kwai, and Telegram to implement measures mitigating the negative effects of electoral misinformation (TSE, 2024). Brazil is also distinctive for its high incidence of AI-generated online frauds and scams, including deepfakes of prominent doctors, actors, and singers purportedly promoting ineffective health products, as well as fake government websites and chatbots designed to steal credit card and personal identification numbers. While AI-generated online scams are also present in the UK (2.3%; sr = −0.6), their higher prevalence in Brazil may be better understood by considering contextual vulnerabilities. Lower digital literacy and economic insecurity, compounded by Brazil’s lower HDI of 0.76 (compared to 0.94 in the UK and 0.95 in Germany), increase susceptibility to online fraud by limiting individuals’ ability to recognize deceptive content and access resources for digital resilience.

The Brazilian online environment uniquely features AI-generated misinformation related to natural catastrophes, particularly following the heavy rains in southern Brazil in 2024. These floods severely impacted the region, inundating the airport and stadium, causing numerous deaths and disappearances, and displacing many citizens. Within this context, AI-generated images purportedly depicting bodies floating in the streets circulated. AI-generated misinformation involving the Venezuelan election, although not widespread, occurred only in Brazil, likely due to the country’s geographic proximity and regional relevance. Brazil also differs in the extent of misinformation involving social media and AI developments, shaped by the conflict between Elon Musk and the Brazilian Supreme Court. This dispute led to the suspension of X for several weeks and was instrumentalized by far-right actors. For example, AI-generated images depicted Musk embracing the Brazilian flag, portraying him as a defender of freedom of expression and an opponent of alleged censorship. Similar AI-generated creations of Musk appeared in Germany and the UK, with some falsely claiming that he had developed an idealized girlfriend using AI.

In Germany and the UK, several instances of AI-generated misinformation were linked to specific political or geopolitical events. Notably, the 2024 US presidential election featured more than expected in Germany (sr = 0.6) compared to the UK (sr = 0.3) or Brazil (sr = −1.1), based on standardized residuals. This prominence likely stems from the election’s potential impact on Germany’s domestic and foreign policies, including issues related to NATO, multilateral cooperation, climate protection, and energy security, particularly given Germany’s dependence on Russian gas and its ongoing commitment to supporting Ukraine (Hasselbach, 2024). It is no coincidence that AI-generated misinformation related to the Russia–Ukraine conflict appeared more than expected in Germany (sr = 2.3) compared to the UK (sr = −0.6) and Brazil (sr = −1.5), as indicated by standardized residuals and position in Figure 2. Of the three countries, Germany is geographically closest to the conflict. Interestingly, in the case of the Israel–Hamas War, AI-generated misinformation emerged more than expected in the UK (sr = 1.3) compared to Germany (sr = −0.9).

Apart from the Israel–Hamas War, the UK stands out for its AI-generated misinformation related to the UK elections, which resulted in a Labour government after 14 years of Tory dominance (sr = 0.4). Additionally, AI-generated misinformation emerged in response to the mass demonstrations that followed the far-right instrumentalization and incitement of violence after the Southport killing (sr = 0.5). These events appear to have led to an increase in AI-generated misinformation aimed at slandering and defaming political figures (sr = 2.3), a trend also reflected in the UK’s high societal polarization. Finally, although less frequent, India’s election (sr = 0.8) also prompted AI-generated misinformation in the UK, likely due to historical reasons and the colonial past. These findings highlight how misinformation campaigns capitalize on moments of political or military significance to influence public opinion.

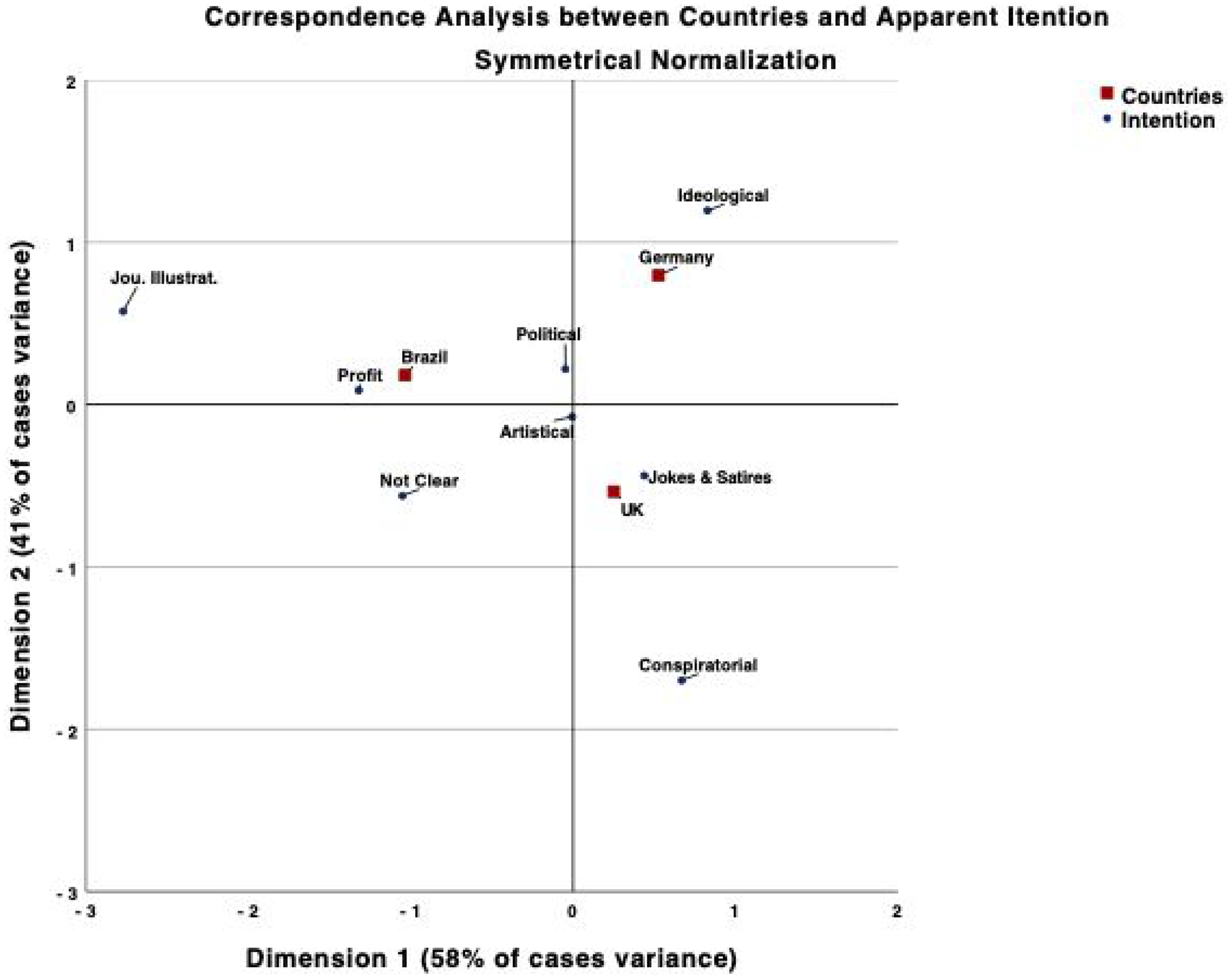

Sources of AI-generated misinformation and apparent intention

When analyzing the origins of AI-generated misinformation, up to two sources were recorded for each instance. Unidentified social media accounts accounted for half of the cases (n = 73; 50%), underscoring significant challenges in tracing AI-generated content to its originators and raising concerns about accountability and transparency (Cazzamatta, 2024). The second-largest category, identified social media users or accounts, was reported in 36.3% (n = 53) of cases. Some fact-checkers may avoid discrediting social media users to prevent increasing their visibility or interacting with potentially inauthentic accounts, which could generate sufficient engagement to evade platform detection of fraudulent behavior (Cazzamatta, 2024). An analysis of the apparent intentions behind these social media accounts (see Figure 3) shows that unidentified sources predominantly disseminated AI-generated misinformation for political (28.2%; sr = 0.3) and ideological purposes (36.4%; sr = 0.9). In contrast, identified accounts are primarily associated with jokes and satire (22%; sr = 0.5) and artistic interventions (75%; sr = 3.6) (see Supplemental Material).

Correspondence analysis between apparent intentionality and countries.

Other categories include unclear sources (n = 10; 6.8%), creators linked to conventional media outlets or journalists (n = 3; 2.1%), and websites and blogs related to AI, such as Emergent Mind or ‘Jim the AI Whisperer’ (n = 3; 2.1%). Regressive alternative media (Cazzamatta, 2025a), satirical websites, and activists collectively make up less than 3% of the recorded cases, reflecting their minimal role in the dissemination of AI-generated misinformation.

Across all three countries, the analysis revealed that social media accounts, both identified and unidentified, are the primary vehicles for AI-generated misinformation (Brazil: n = 35; 94.6%; Germany: n = 35; 83.3%; UK: n = 56; 83.6%). In contrast, conventional media, websites, and other sources played a minimal role across all regions.

Creators of AI-generated misinformation demonstrate varied motivations for producing and disseminating deceptive content. Despite the methodological challenges of assessing intentionality (Hameleers, 2023), analyzing these apparent intentions provides critical insights. Political motives, primarily aimed at delegitimization or mobilization, are the most prevalent, accounting for 45.6% of the 136 analyzed verification articles across all three countries. The second most common category involves “jokes, satires, and parodies” (n = 34; 25%), with the UK displaying the highest reliance on humor and satire, reflecting its tabloid media culture (Esser, 1999). Ideological motives, which do not necessarily aim to influence electoral outcomes but instead propagate specific ideas, are the third most prevalent and particularly prominent in Germany (n = 7; 18.9%). In contrast, Brazil exhibits a strong focus on profit-driven disinformation, largely due to the significant use of AI-generated content in online scams and fraud schemes (see Figure 2).

These trends are illustrated in Figure 3. Political motives, positioned near the origin of the graph, are relatively indistinct, as they appear similarly across all countries. Brazil stands out for its profit-oriented and financially driven motivations, which occur more frequently than expected (sr = 2.2). The UK, on the other hand, is notable for satirical provocations (sr = 1.4), while Germany demonstrates a strong emphasis on ideological goals (sr = 2.3).
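The reading of the correspondence map above rests on one property: a category lies near the origin when its distribution across countries resembles the overall distribution. The sketch below illustrates this by computing the chi-square distance between each row profile and the average profile, which corresponds to the distance from the origin in principal coordinates (with three countries there are at most two dimensions). The counts and the function name `distance_from_origin` are hypothetical and are not taken from the study.

```python
from math import sqrt

def distance_from_origin(table):
    """Chi-square distance of each row profile from the average profile.

    In correspondence analysis this equals a row's distance from the
    origin in the full-dimensional principal-coordinate space.
    """
    grand_total = sum(sum(row) for row in table)
    col_mass = [sum(col) / grand_total for col in zip(*table)]
    distances = []
    for row in table:
        row_total = sum(row)
        profile = [cell / row_total for cell in row]
        d2 = sum((p - c) ** 2 / c for p, c in zip(profile, col_mass))
        distances.append(sqrt(d2))
    return distances

# Hypothetical counts (NOT the study's data): rows = apparent intentions,
# columns = Brazil, Germany, UK.
intentions = ["political", "profit", "satire"]
counts = [
    [15, 17, 30],  # spread roughly like the overall totals
    [10, 1, 1],    # concentrated in one country
    [4, 6, 24],    # concentrated in another country
]
distances = distance_from_origin(counts)
for name, d in zip(intentions, distances):
    print(f"{name}: {d:.2f}")
```

In this illustrative table, the evenly spread category ends up closest to the origin, while the categories concentrated in a single country lie farther out, mirroring how political motives appear indistinct and profit-driven or satirical motives stand out.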

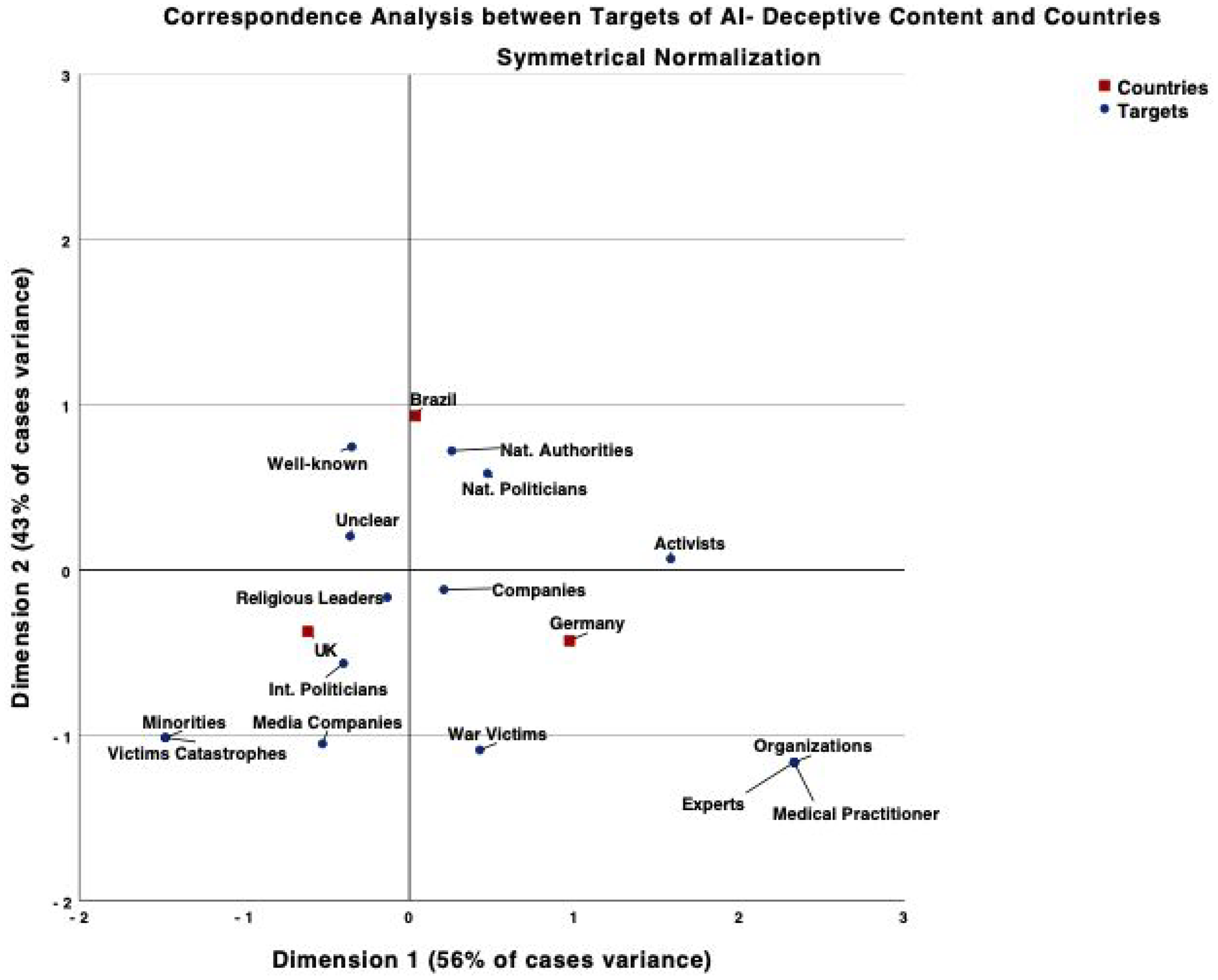

Targets of AI-generated misinformation

The most frequent targets are international politicians and parties, representing more than one-quarter of all cases (n = 44; 27.8%), followed by national politicians and parties, with nearly one in six instances (n = 28; 17.7%) focusing on domestic political contexts. The third most common targets are public figures, such as artists, actors, celebrities, and business figures (n = 22; 13.9%). Other notable targets include companies such as McDonald’s and football clubs (n = 15; 9.5%), as well as country authorities (n = 8; 5.1%). Media companies and religious leaders are less frequently targeted. Across all three countries, political figures, both international and national, emerged as the most frequent targets of AI-generated deceptive content, with the UK recording the highest number of cases (n = 34; 49.3%), followed by Germany (n = 19; 45.2%) and Brazil (n = 19; 40.4%). This trend suggests the strategic use of misinformation to undermine political systems and public trust in political figures.

Guided by correspondence analysis (Figure 4), Brazil emerges as notably distinctive for its AI-generated misinformation targeting national politicians (sr = 2.3), national authorities (sr = 1.4), and prominent individuals such as businesspeople and celebrities (sr = 2.3) more frequently than expected. The extensive municipal elections (see above) likely contributed to the heightened focus on national politicians as targets of deceptive messages. National authorities, in this context, specifically refer to judges and members of the Brazilian Supreme Court, highlighting their efforts to compel Elon Musk to comply with national laws and appoint a representative in the country, an example of broader tensions between tech companies and nation-states. Lastly, celebrities and athletes are frequent targets of deepfakes used to promote counterfeit products and fraudulent deals.

Correspondence analysis between countries and targets of AI-generated misinformation.

In the UK, in turn, AI-generated misinformation targeted international politicians (sr = 1.4), minorities (sr = 0.8), media companies (sr = 0.8), and religious leaders (sr = 0.1) more than expected (refer to Supplemental Material). This is linked to the UK’s focus on the Israel–Hamas War and the killing in Southport, which was instrumentalized by far-right groups. These events prompted mass demonstrations and the spread of disinformation regarding the identity and origins of the attacker, specifically targeting minorities and religious leaders. Finally, Germany stands out for misinformation targeting companies (sr = 0.5) and war victims (sr = 0.6) related to the Russia–Ukraine War more than expected.

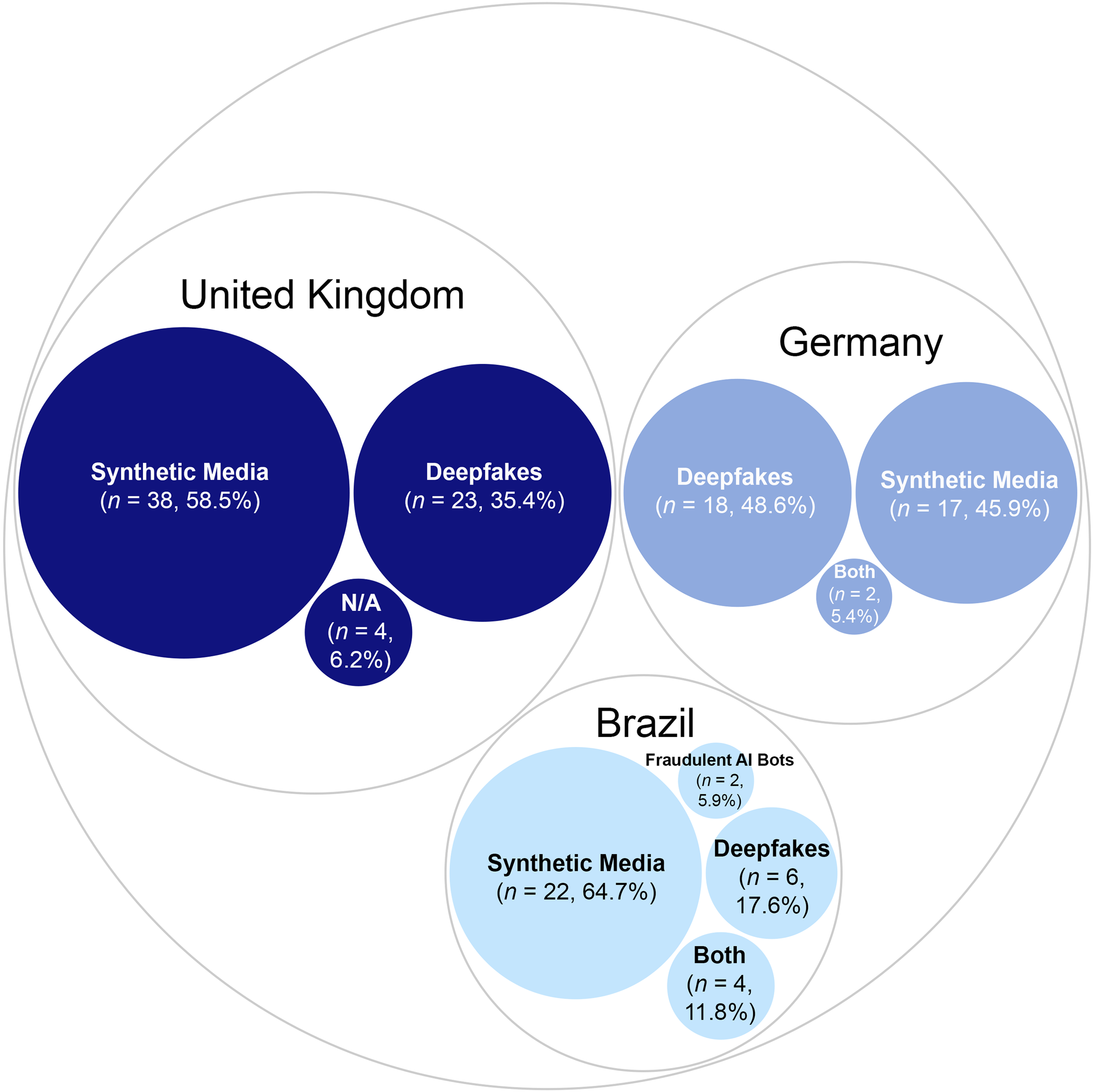

AI elements and generative AI models used

When examining the AI elements and generative models used to create deceptive content, certain technologies dominate while others play a niche role. Synthetic media, which consist entirely of AI-generated content, are the most frequent tactic, accounting for just over half (n = 77; 56.6%) of the 136 analyzed articles. They are followed by deepfakes, which represent slightly more than one-third (n = 47; 34.4%) of the cases and involve sophisticated digital manipulation of images, video, or audio using AI tools to mimic public figures. Together, these two forms of AI-generated deceptive content dominate the disinformation landscape across the three countries (see Figure 5).

AI elements used to create deceptive content by country.

Notably, the UK is the only country with four cases categorized as ‘N/A’ for AI elements, all involving false claims that content was AI-generated. Fraudulent AI bots designed to impersonate services like banks, tax authorities, and credit card systems to steal user information were identified exclusively in Brazil, in two out of 34 verification articles.

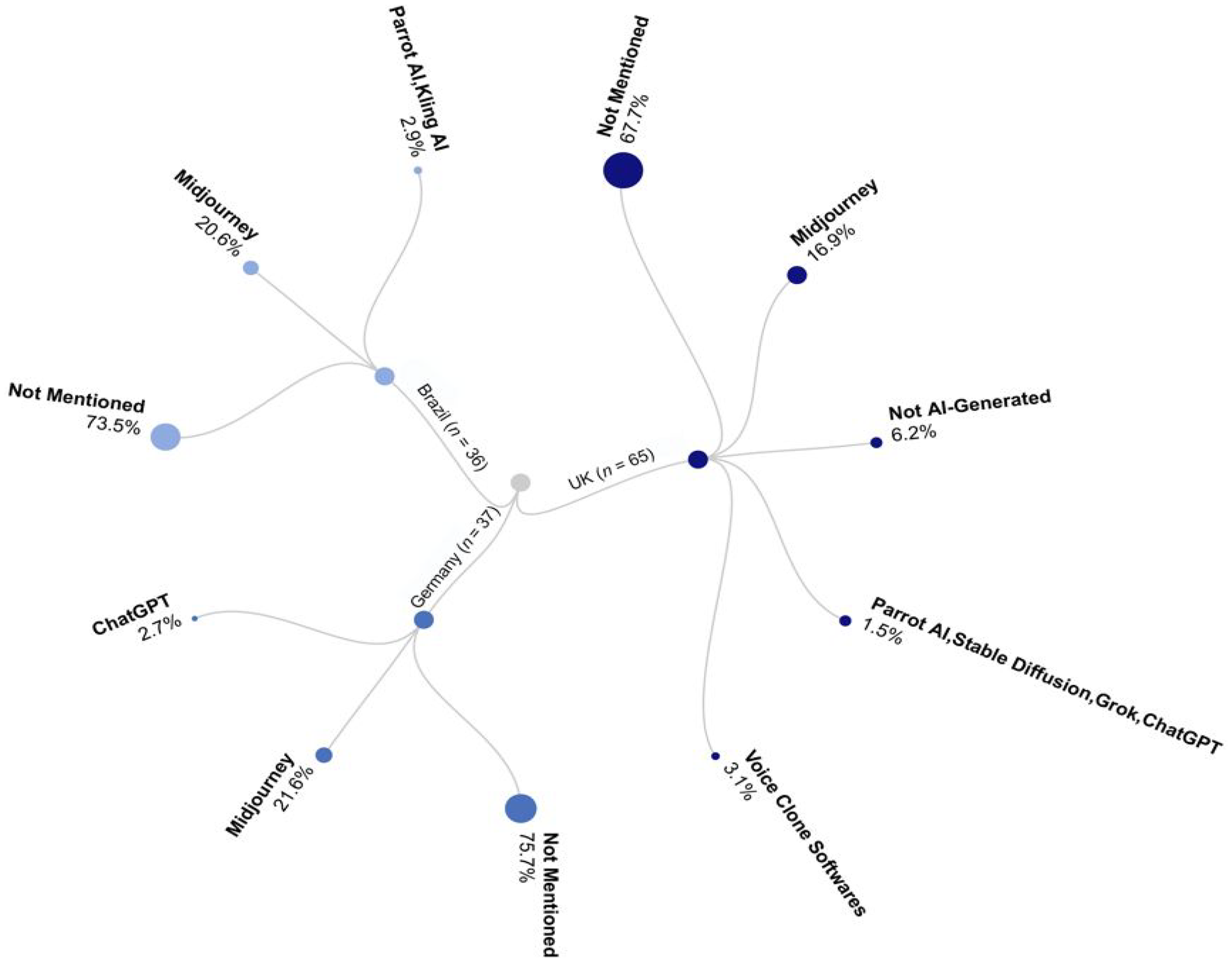

In the majority of cases (n = 97; 71.3%), the generative model used was not specified, reflecting a lack of transparency or difficulty in tracing the origin of AI-generated material. Among identifiable models, Midjourney is most frequently used (n = 26; 19.1%), likely due to its user-friendly interface and ability to generate high-quality synthetic images and visuals with minimal expertise. The UK demonstrates a broader range of generative models for creating falsehoods (see Figure 6), incorporating models such as voice cloning software, Parrot AI, Stable Diffusion, and Grok, among others. This diversity is likely enabled by the country’s advanced digital infrastructure, though this technological advantage is often exploited for harmful purposes. In contrast, Germany takes a more cautious approach to emerging technologies, prioritizing privacy and data protection (Płóciennik, 2021). This risk-averse culture may slow technological adoption compared to the UK and Brazil. These findings suggest a more aggressive embrace of innovation in the UK and Brazil, driven by rapid progress but creating opportunities for AI misuse in deceptive content.

Generative AI models used in creating misinformation by country.

Detection methods and debunking strategies for AI-generated misinformation (RQ2)

To address our second research question, we examined the detection methods and debunking strategies used by fact-checkers to identify misinformation, extracting insights from verification articles to provide an overview of practiced methods and strategies in detecting and combating AI-generated misinformation.

The findings indicate that more than three-quarters (n = 118; 77.1%) of verification articles did not specify the tools used to detect misinformation. AI detectors are likely not widely utilized due to their limited accuracy, as they provide probabilistic rather than definitive conclusions (Jacobsen & Simpson, 2023; Wittenberg et al., 2024). Moreover, during the period analyzed, AI-generated misinformation, despite improvements, generally lacked quality and could often be debunked by identifying image inaccuracies and synchronization peculiarities. Among the named tools, True Media Social and Hive (both with 5.2%) were the most commonly used. The second most frequently mentioned tool was “AI or NOT” (3.3%), followed by InVid-WeVerify (2.0%). Although employed less frequently, tools like InVid-WeVerify remain valuable in specific contexts, such as verifying video content and detecting manipulations, making them particularly useful for visual media verification. Other detection tools, including Speech Classifiers (e.g., by Eleven Labs), Hugging Face, Neuraforge, and LIPINC, were each mentioned only once.

Notably, fact-checkers in Brazil showed significantly higher adoption of AI detection tools (42%) compared to the UK (15.1%) and Germany (10.8%). This highlights regional disparities in approaches to combating AI-generated misinformation and suggests that greater skepticism in the UK and Germany toward detection tools reflects their higher levels of journalistic professionalism. Overall, these findings reflect the nascent nature of the phenomenon, with tools for detecting AI-generated misinformation not yet widely adopted or consistently applied.

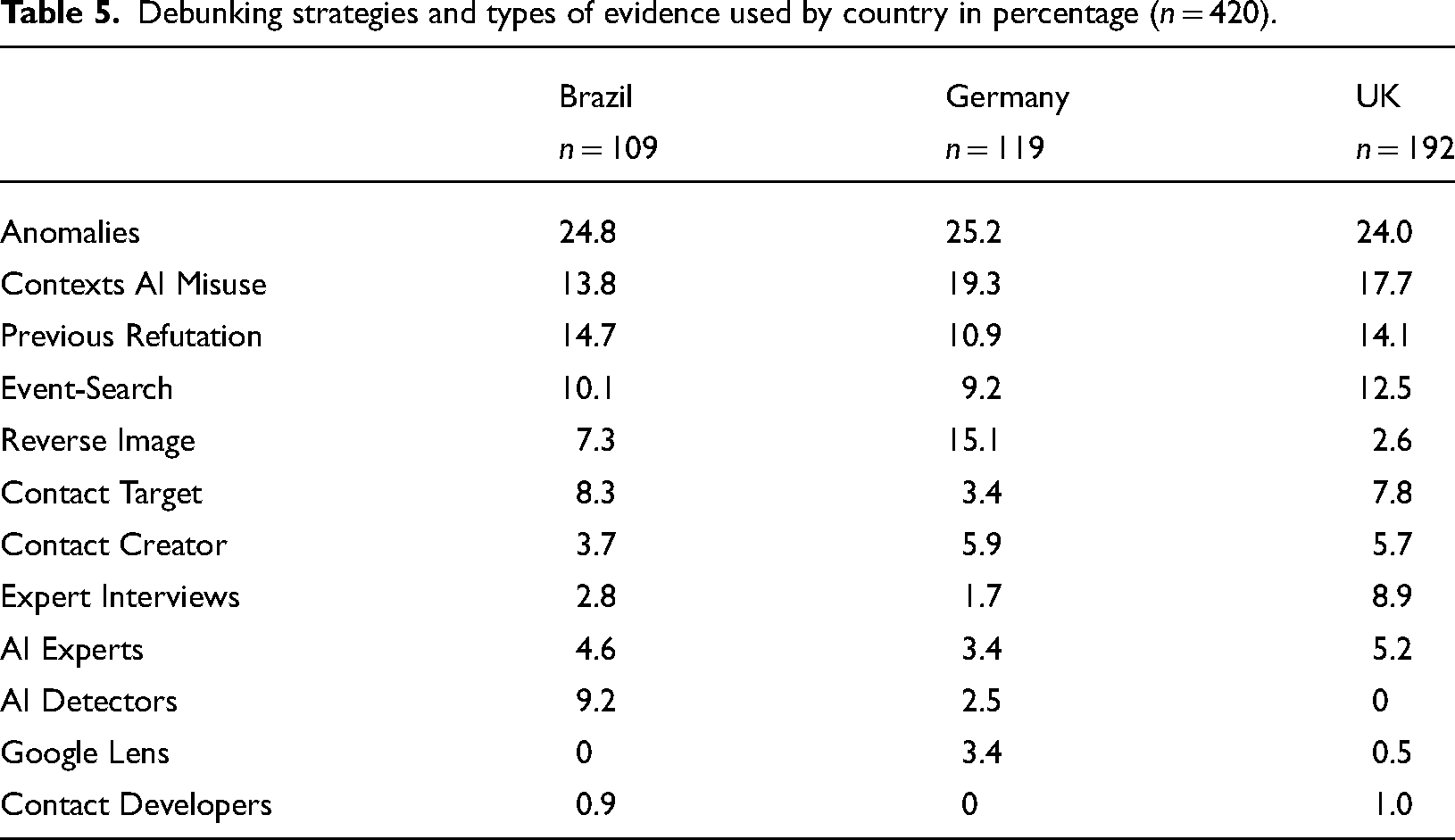

When examining further debunking strategies, the most frequently used method is highlighting anomalies in images or videos, accounting for almost one-quarter of the cases (n = 103; 24.5%). This strategy was widely used across Brazil, Germany, and the UK (see Table 5), reflecting a strong reliance on identifying visual inconsistencies to combat AI-generated misinformation. The second most common strategy involved contextualizing and explaining AI misuses (17.1%; n = 72). Demonstrating that fact-checkers have already refuted similar claims accounts for 13.3% (n = 56), leveraging the repetitive nature of misinformation. Another prominent strategy is sourcing evidence documented in news reports or online spaces, such as official social media accounts (n = 46; 11.0%).

Debunking strategies and types of evidence used by country in percentage (n = 420).

Visual content verification remains a primary focus, with tools such as Reverse Image Search (n = 31; 7.4%) used to trace altered images and videos. Fact-checkers also consulted the targets of AI-generated deceptive content, including affected companies, organizations, or individuals (n = 28; 6.7%), highlighting the importance of verifying facts directly with stakeholders. Less common strategies include expert interviews and contacting the creators or spreaders of AI-generated content, both appearing in 5.2% (n = 22) of cases. Dedicated AI detection tools are used in only 3.1% (n = 13) of cases, while Google Lens and contacting AI developers were rare strategies, accounting for 1.2% (n = 5) and 0.7% (n = 3), respectively. These findings demonstrate a strong reliance on traditional verification methods, such as visual anomaly detection and contextualization, while the use of AI-specific tools remains limited. This gap represents an opportunity to expand the adoption of advanced technologies to enhance debunking strategies.
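The percentages for debunking strategies are shares of all coded strategies (n = 420), not of the 136 articles, presumably because a single article can apply several strategies. As a rough check, the sketch below recomputes the shares from the counts reported in the text. The short category labels and the helper `share` are illustrative, not the study's codebook names.

```python
# Counts of debunking strategies as reported in the text (n = 420 coded
# strategies in total; categories not itemized in the text are omitted).
TOTAL_CODED = 420
strategy_counts = {
    "highlighting visual anomalies": 103,
    "contextualizing AI misuse": 72,
    "citing earlier fact-checks": 56,
    "evidence from news or online sources": 46,
    "reverse image search": 31,
    "consulting targets": 28,
    "expert interviews": 22,
    "contacting creators or spreaders": 22,
    "AI detection tools": 13,
    "Google Lens": 5,
    "contacting AI developers": 3,
}

def share(count, total=TOTAL_CODED):
    """Percentage share of `count` in `total`, rounded to one decimal."""
    return round(100 * count / total, 1)

shares = {name: share(n) for name, n in strategy_counts.items()}
for name, pct in shares.items():
    print(f"{name}: {pct}%")

# Coded strategies that the running text does not itemize.
unlisted = TOTAL_CODED - sum(strategy_counts.values())
print("not itemized in the text:", unlisted)
```

The itemized counts sum to fewer than 420, so the remainder belongs to categories that appear only in Table 5.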

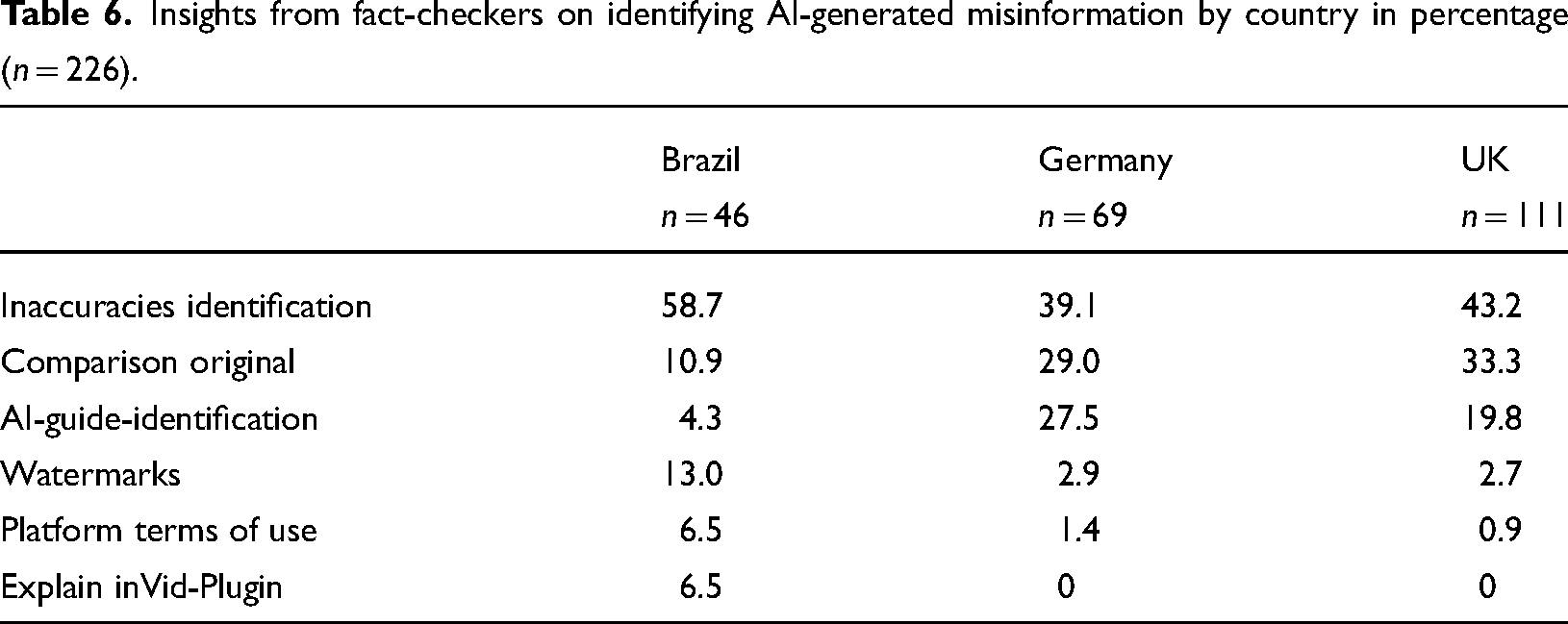

Insights from fact-checkers on the self-identification of AI-generated misinformation

The findings revealed key insights provided by fact-checkers to identify AI-generated misinformation. The most common advice points to inaccuracies in images or videos, such as object distortions, unnatural shadows, mismatched body movements, or incoherent text, accounting for nearly half (n = 102; 45.1%) of the 226 cases. The second most common approach involves comparing manipulated content with original material from the same event or source, comprising just over a quarter (n = 62; 27.4%) of cases. This method is particularly effective when original material is available for side-by-side comparisons to identify discrepancies. These two methods were also the most frequently mentioned across all countries (see Table 6).

Insights from fact-checkers on identifying AI-generated misinformation by country in percentage (n = 226).

Fact-checkers often included external links to guides for identifying AI-generated and deepfake content (n = 43; 19%), more commonly in Germany (27.5%) and the UK (19.8%) than in Brazil (4.3%), again reflecting higher levels of journalistic professionalism in these European countries. These guides help readers recognize synthetic content and navigate deceptive AI materials. Another approach explained platform terms of use for AI-generated misinformation (n = 5; 2.2%), ranging from 0.9% in the UK to 13% in Brazil. This highlights the role of platform mechanisms in understanding the origin and spread of misinformation. Less frequently used methods include pointing to watermarks with AI tool names (n = 11; 4.9%), primarily by Brazilian fact-checkers, and explaining the workings of the InVid Plugin (n = 3; 1.3%).

Fact-checkers’ assessment of AI-generated misinformation and debunking strategies (RQ3)

To complement our findings, we conducted 13 interviews with fact-checkers operating in Brazil, Germany, the UK, and deterritorialized news agencies. Without exception, all interviewees reported a noticeable increase in AI-generated falsehoods circulating online over the past two years, particularly following the US election. However, they noted that the volume remains smaller than anticipated, and the available evidence is still largely anecdotal. As one interviewee stated, “For us, it’s anecdotal evidence, I would say, but we see these a lot.” Another fact-checker explained, “There’s been lots of talk about AI-generated content, but it hasn’t played as significant a role in disinformation in our experience, or in the experience of other fact-checkers we’ve spoken to. I’d say that changed somewhat after the US election, we began seeing a lot of AI-generated images, and not just humorous ones, like a cat with five legs, which are irrelevant for us.”

To some extent, these findings align with our content analysis, which indicates a clear trend of increasing AI-generated falsehoods. However, the overall volume remains limited. As one interviewee noted, “It’s obviously present, but only a small proportion of the content we encounter is focused on political disinformation. We expected a greater presence of AI-generated disinformation during events such as the US elections or the European elections earlier this year, but that was not the case.”

Even in the context of elections, the prevalence of AI-generated content was lower than anticipated. While there was a rise in some countries holding elections, the overall volume fell short of their expectations. Consistent with our content analysis, the majority of interviewed fact-checkers working in Brazil specifically noted a rise in the use of AI-generated content for online fraud. Numerous scams have been reported, with significant financial losses affecting the public. According to the interviewees, the most pressing issue currently associated with AI in Brazil is its role in enabling the proliferation of scams, which disproportionately harm lower-income populations. Given that Brazil has only a few decrees regulating misinformation during elections and no specific legislation addressing misinformation more broadly, fact-checkers expressed frustration with Meta’s lack of accountability. One interviewee explained in the context of AI-generated scams: “Meta could have identified that this video had already been posted and classified as false by dozens of agencies, but these are sponsored posts, and Meta profits from both the ads and the scams. Even though they claim to take action, it’s clear they don’t—the ads are still there.”

Another interviewee referred to a high-profile case involving Brazilian TV personality Pedro Bial, whose image was exploited via deepfake technology to promote a medical product, reportedly for hair loss. Despite repeatedly reporting the incident to Meta, the platform failed to take action, prompting Bial to file a lawsuit. The interviewee noted: “It has become a trend to use the faces of well-known figures to promote products, such as formulas or supplements.”

The quality of AI-generated problematic content and the limitations of detection tools

There was broad agreement across countries regarding the still-limited, though rapidly improving, quality of AI-generated content. As one interviewee noted, “The AI tools still don’t understand the laws of physics, so you can spot mistakes.” Others seemed to agree: “They are still quite easy to spot. So, they are not that good yet, but they probably will be better, and that is also worrying.” Some improvements in deception have already been identified: “The development of generative AI tools for images and videos is far more advanced than last year. The issues, such as the presence of four or six fingers on a hand, are no longer the most significant problems with the latest generation of tools. However, they are not yet sophisticated enough that we cannot still detect the fakes,” noted another interviewee.

Regarding the available tools to identify AI-generated content, fact-checkers are experimenting with them but acknowledge that they are insufficient: they rely on probabilities and remain unreliable. One interviewee pointed out several reasons for skepticism. First, there is always a chance that the image is not AI-generated. Second, the detection tools are often inaccurate. Third, for readers, these tools are just another algorithm, lacking credibility. Other fact-checkers also expressed skepticism toward detection tools, preferring instead to consult experts. As one noted, “I’m very skeptical because I upload the same image three times and always receive a different result.” Another added, “We are journalists; we didn’t develop these tools.” Fact-checkers with expertise in machine learning highlighted the challenges posed by varying image compression formats. One explained: “If your models have been trained only on JPEG images, they may struggle to detect forensic traces in other formats—[such as WebP or HEIC]—because most forensic methods have been overwhelmingly developed based on JPEG data over many years.”

As a result, fact-checkers use these tools as a supplementary measure (see Table 5 of content analysis) but primarily rely on logic and observation, pointing out mistakes in the picture or explaining why the image, video, or even voice cannot be real, though the latter is more challenging. Concerns regarding AI-generated audio were also raised by other fact-checkers: “The more accurately AI-generated audio mimics the original voice—especially in the case of imitation—the harder it is to detect. Sometimes, it’s a voice that only exists within the AI system, yet it still sounds completely human, a voice that doesn’t exist in real life. So, today, audio concerns me more than other forms of AI.”

This phenomenon is particularly concerning in the context of online scams, as identified in Brazil, where human-like voices can be exploited by malicious actors in the operationalization of scams. Accordingly, traditional journalistic methods were cited as an essential complement to AI detection models. Fact-checkers noted that deepfakes involving politicians or celebrities are relatively easier to verify, because they can be corroborated against public records, existing images, news coverage, or confirmed through official agencies or public relations representatives. As one interviewee stated, “There are many other ways to figure it out.” Nonetheless, the situation becomes more complex with the rising sophistication of AI-generated content, especially in the context of distant or under-documented events such as the war in Gaza. As one fact-checker observed, “This might become increasingly difficult in the future, especially when there are no alternative images available and the origin of the content is unclear.”

Discussion and conclusion

This study addressed critical gaps in research on the detection and dissemination of AI-generated misinformation by identifying key trends across Brazil, Germany, and the UK, focusing on selected variables such as topics, origins, targets, intentions, elements, and generative models (RQ1). It also synthesized fact-checkers’ insights into detection methods and debunking strategies for AI-generated misinformation (RQ2). Finally, this study assessed fact-checkers’ perceptions of the rise in AI-generated misinformation and its verification challenges (RQ3). To achieve this, we analyzed 136 verification articles published between 2023 and 2024 and conducted 13 expert interviews with fact-checkers. To collect the data, we employed a broad search strategy designed to capture diverse and relevant verification cases. Nevertheless, the study faces several limitations.

As is common in case study research, the scope of our sample is restricted: in each country, we included one independent fact-checking organization and one fact-checking unit embedded within a prominent news agency, selected for their involvement in AI developments and detection methods. Therefore, the findings of this study should be interpreted as illustrative of the fact-checking practices of the selected organizations rather than representative of the broader fact-checking landscape in Brazil, Germany, and the UK. Despite these constraints, the study makes an important contribution to the emerging field of AI-generated misinformation research. It offers empirical insights into current trends in fact-checking practices and lays the groundwork for future research on scalable detection strategies and comparative media responses. The relatively small number of identified articles is a noteworthy finding. Despite the increasing prevalence of AI-generated content, only a limited amount has been explicitly flagged as misinformation by fact-checkers to date. This aligns with previous research (Corsi et al., 2024). Our interviews suggest a more cautious outlook: despite the rising number of AI-generated falsehoods, the incidence remains lower than fact-checkers anticipated. While the uneven quality in AI-generated content remains noticeable, fact-checkers believe that detection will become increasingly challenging as generative models continue to improve. It must be noted that our focus is specifically on AI-generated misinformation addressed by fact-checkers, who typically intervene when content has already gained significant traction or poses potential political, economic, or health risks.

Our analysis found key trends in AI-generated misinformation. In the UK, the country with the highest level of societal polarization, such content primarily targeted domestic politics and public figures, especially during the 2024 US election, the Israel–Hamas War, and the UK election. In Germany, it focused on geopolitical issues, particularly the Russia–Ukraine War, reflecting foreign policy concerns. The UK saw more AI-generated misinformation on the Israel–Hamas War than Germany, likely due to Germany’s strict regulations on antisemitism and hate speech. The UK’s comparatively lenient approach (Coe, 2022) and the absence of specific laws against misinformation may have facilitated the circulation of AI-generated content. In Brazil, AI-generated misinformation surged during the 2024 municipal elections, alongside increased fraud and scams. The increased occurrence of AI-generated scams and frauds in Brazil could be attributed to underlying structural weaknesses, such as economic instability, insufficient enforcement of digital regulations, and lower digital literacy levels, which are frequently mirrored in the country’s HDI. AI-generated content featuring Elon Musk appeared in both the UK and Brazil to manipulate public opinion, particularly during Musk’s conflict with Brazil’s Supreme Court. Overall, AI-generated disinformation thrives in polarized elections, contentious domestic politics, and international crises.

Research suggests that while political deepfakes and synthetic media do not always mislead directly, they create uncertainty, eroding trust in news shared on social media. This decline in trust can reduce civic cooperation and increase news avoidance (Vaccari & Chadwick, 2020). AI’s ability to manipulate content further underscores the complex relationship between information credibility and AI-generated news (Morosoli et al., 2025). AI-generated falsehoods are mainly spread by identified or anonymous social media accounts. Political motives, focusing on delegitimization and mobilization (Hameleers, 2023), are dominant, while ideological goals are more evident in Germany. The UK is characterized by humor and satire in AI-generated falsehoods, reflecting the tabloid culture prevalent in its media systems, whereas Brazil is distinguished by profit-driven misinformation, particularly in scams and fraud.

AI-generated misinformation primarily targets political figures, with international and national politicians comprising nearly half of all instances. The UK accounts for the largest share of such cases, in which deceptive content is used to destabilize political systems. Public figures and companies are also frequent targets, often exploited to promote scams and counterfeit products. In Brazil, the main targets are national politicians, Supreme Court judges, and celebrities, a pattern driven by political tensions during municipal elections and disputes with tech companies. In the UK, minorities and religious leaders are targeted, linked to far-right narratives surrounding the Southport killing and mass demonstrations. In Germany, companies and war victims are targeted, particularly those associated with the Russia–Ukraine conflict, reflecting the country’s geopolitical concerns.

Our analysis shows that synthetic media and deepfakes dominate, with synthetic media being the most common. The UK uniquely features cases where content is falsely attributed to AI, reflecting the “liar’s dividend” phenomenon (Christopher, 2023), where actors exploit AI ambiguity to falsely claim content was AI-generated. These claims obscure accountability and leverage AI-related uncertainty. The proliferation of deepfakes further allows individuals, especially politicians with weak commitments to truth, to dismiss evidence of wrongdoing as fabricated (Kalpokas, 2021). Brazil is notable for fraudulent AI bots that impersonate official services in scams. For a significant portion of deceptive content, the AI model used is not identified, complicating efforts to trace its origin. Midjourney is the most frequently identified model, while the UK shows a broader range of tools, such as voice cloning software, Grok, Stable Diffusion, and Parrot AI. These findings align with the UK’s advanced digital infrastructure and competitive AI sector (European Commission, 2024).

In response to RQ2, the analysis revealed that many verification articles lack clear documentation of detection tools, likely due to the limited accuracy of current AI detection technologies. Among the tools mentioned, True Media Social and Hive were the most commonly used, though their adoption was limited. Fact-checkers in Brazil made greater use of AI detection tools than those in Germany and the UK. This points to regional differences in the reliability and transparency of available AI tools and underscores broader concerns about the trustworthiness and accountability of such technologies (Porlezza, 2023). Given the emerging nature of AI detection technologies, fact-checkers primarily rely on traditional debunking strategies, such as identifying visual anomalies in images and videos. This suggests that, while AI detection tools are underutilized, visual content verification and contextual approaches remain key in combating AI-generated misinformation.

Consistent with our content analysis, fact-checkers reported a noticeable rise in AI-generated misinformation over the past two years, especially following the US election. However, they noted that the volume of such content remains lower than anticipated, with much of the evidence being anecdotal, which partially explains the relatively low number of articles identified in our content analysis. In Brazil, AI-generated content is frequently used in scams, leading to significant financial losses, particularly among lower-income groups. Fact-checkers have expressed frustration over the lack of regulatory action and Meta’s failure to address reported scams. As for content quality, AI-generated falsehoods remain relatively easy to detect, though advancements in generative tools are making detection more difficult. Fact-checkers are generally skeptical of current detection tools, preferring to rely on traditional journalistic methods, expert consultation, and logic to verify content. Additionally, concerns were raised about AI-generated audio, particularly its role in scams. As AI tools continue to improve, verifying such content, particularly in under-documented contexts, is anticipated to become increasingly challenging.

These insights from fact-checkers can offer valuable guidance for journalism, helping to safeguard information integrity and public trust in the AI era. By identifying key trends in fact-checking practices and building on existing debunking strategies, the findings can help journalists better navigate the challenges posed by AI-generated misinformation. Given the growing difficulty for the public to discern truth from falsehood, and the labor-intensive nature of manual fact-checking, journalists are increasingly integrating AI tools to verify and fact-check text, images, audio, and video content in the newsgathering and distribution process (Sarısakaloğlu, 2025). Nonetheless, journalists must acquire technical skills to effectively use these tools for detecting and debunking misinformation (Diakopoulos & Johnson, 2021; Thomson et al., 2022). This includes understanding how AI technologies work and recognizing AI-generated misinformation, enabling journalists to critically assess information and maintain their role as defenders of truth. Training programs based on identified trends in AI-generated misinformation could empower journalists to evaluate information independently and resist manipulation, fostering informed and resilient journalism. However, scholars caution that relying on AI for fact-checking may not necessarily increase public trust in information quality. Instead, the proliferation of competing AI systems could lead to “information insecurity” and “epistemic relativism,” undermining confidence in factual discourse (Jungherr & Schroeder, 2023, p. 169).

Further research is needed to explore how AI tools can be designed and deployed to strengthen trust, enhance transparency, and promote a shared understanding of facts without worsening disinformation challenges. Future analysis should track advancements in AI technologies and assess changes in topics, targets, and detection methods. Additionally, interviews with journalists could reveal practical challenges, identify gaps in existing tools and strategies, and guide more effective solutions to combat AI-generated misinformation. Experimental studies could further examine which formats of debunking are most effective, while ethical and legal questions surrounding synthetic content present another critical area for investigation as technologies continue to advance.

Meta’s recent decision to discontinue its Third-Party Fact-Checking Program may significantly impact the reach of unidentified and unlabeled AI-generated falsehoods, increasing exposure to AI-generated disinformation. This is especially concerning in countries like Brazil, where social media plays a central role in news consumption. Reducing external oversight could exacerbate the spread of deceptive content, highlighting the need for further investigation into its implications for public trust and media integrity. In this context, it is also important to explore how audiences perceive and respond to AI-generated misinformation, particularly in environments marked by polarization or low institutional trust. Understanding these audience responses can help identify vulnerabilities in information ecosystems and guide the development of more effective countermeasures, including tailored media literacy initiatives. Finally, future research should address a key limitation of this study by expanding the geographical scope to include other countries with different media systems, digital infrastructures, or political environments, thereby enabling broader generalizations.

Supplemental Material

Supplemental material, sj-pdf-1-emm-10.1177_27523543251344971 for AI-Generated Misinformation: A Case Study on Emerging Trends in Fact-Checking Practices Across Brazil, Germany, and the United Kingdom by Regina Cazzamatta and Aynur Sarısakaloğlu in Emerging Media

Footnotes

Acknowledgment

Both authors contributed equally to this research and share first authorship.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was partly supported by the Deutsche Forschungsgemeinschaft (grant number CA 2840/1-1; Project Number 8212383).

Ethical statement

All interviews were anonymized in compliance with the European General Data Protection Regulation (GDPR). To protect participants’ identities, the names of the organizations were omitted, and we refrained from numbering the interview quotes. This precaution was taken to prevent any potential cross-referencing with the content analysis that might lead to contextual identification of individual participants.

Supplemental material

Supplemental material for this article is available online.
