Abstract

In recent years, the ‘digital resurrection’ of the deceased through Deepfake technology has become accessible to the general public and has prompted profound ethical debate. While the academic literature focuses primarily on regulatory and political implications, the public reception of digital resurrection remains largely unexplored. Furthermore, much of the public discourse around Deepfakes centres on their malicious applications, despite the potential for digital resurrection to serve prosocial purposes. This study focuses on an Israeli campaign called ‘Listen to My Voice’ (2021), which ‘resurrected’ women murdered by their partners, having them ‘tell’ the stories of the abusive relationships that preceded their deaths. An analysis of viewer responses shows that the campaign elicited strong reactions straddling the line between technology and ethics. Quantitatively, most comments addressing the resurrection supported the use of Deepfakes, citing the importance of the cause and the impact of the messaging. Qualitatively, two key themes emerged. The first, technical theme indicated that visceral realism sparked both emotional connections and fundamental misunderstandings of the technology itself. The second, ethical theme highlighted a tension between the desire to protect autonomy and justifications for posthumously using an individual’s likeness for positive aims. The findings suggest that the technical attributes of Deepfakes, particularly their tangibility, significantly shape their ethical reception, a phenomenon we term ‘the ethics of technological misunderstanding’.

Keywords

Introduction

In November 2021, on the International Day for the Elimination of Violence Against Women, an unprecedented campaign was launched in Israel, where more than 200,000 women are affected by intimate partner violence (IPV) each year (The Israel Women’s Network, 2024). The campaign ‘Listen to My Voice’¹ used artificial intelligence (AI)-powered synthetic video technology, that is, ‘Deepfakes’, in which ‘images, videos, and audio clips are merged to create a hyper-realistic video that can feature a person doing or saying something they never did’ (Murphy & Flynn, 2022, p. 1), to synthetically ‘resuscitate’ five Israeli women who were murdered by their partners. In the campaign’s videos, these women ‘narrate’ their experiences in the first person, shedding light on the abusive relationships they endured and ‘providing’ viewers with insights into the early steps that might prevent the cruel and tragic fate that befell them. Each synthetic video is approximately two and a half minutes long. The victims featured in the videos represent a cross-section of Israeli society: a woman of Ethiopian origin, an Israeli Arab, Ashkenazi and Sephardic Jews, and religious and secular women. They ranged in age from 23 to 70 years. A sixth video combining excerpts from all five videos was also produced. In addition to the campaign website, a dedicated Facebook page was created, on which the videos were posted alongside other posts offering information about the phenomenon, signs of problematic relationships and contact information for victims.

The campaign garnered significant public attention and elicited varied responses. While it received the Innovation Award of the Israeli Marketing Association (The Israeli Marketing Association, 2021), some viewers expressed unease at witnessing the manipulation of deceased individuals (Yadlin & Morse, 2021). This debate is intertwined with the broader discourse on the ethical boundaries and permissible applications of AI and synthetic media, as these technologies continue to advance and challenge the distinction between authenticity and artificiality (Whittaker et al., 2020).

The campaign’s Facebook page sparked a fierce debate on ethical issues related to this unique project. Through a combination of quantitative and qualitative content analyses of comments posted on the campaign’s Facebook page, the present study aims to shed light on the attitudes of the Israeli public regarding Deepfake resuscitation, focusing especially on the ethical parameters it establishes. The central research question guiding our investigation is: How did the Israeli public react online to the campaign, and what were the associated emotional reactions, concerns and expressions of approval and disapproval it received?

An extensive, interdisciplinary literature has explored the ethical implications of Deepfakes, covering both their broader applications and the specific context of Deepfake resuscitation (de Ruiter, 2021; Diakopoulos & Johnson, 2021; Meskys et al., 2020; Morse, 2024). Much of this literature relies on attitude surveys that assess people’s predispositions towards the technology. However, there is a noticeable scarcity of research investigating real-world applications of this technology and the public reactions they elicit in real time.

Furthermore, the prevailing body of academic work on the public acceptance of Deepfakes predominantly addresses their use in commercial or politically motivated, often malicious, contexts (Mihailova, 2021; Whittaker et al., 2021). Notably, the ‘Listen to My Voice’ campaign represents a pioneering effort, on a global scale, to harness Deepfake technology for prosocial purposes. Apart from this campaign, we know of only one other prosocial synthetic video: a 2020 video that digitally ‘brought back to life’ a victim of the 2018 Parkland school shooting to advocate for gun safety legislation (Diaz, 2020). This prompts pertinent questions: Does the altruistic orientation of this initiative influence public reception? Will the serious concerns expressed in public discourse about this new technological application be alleviated or eliminated when the technology is applied for a positive purpose, to a critical social issue involving matters of life and death?

Exploring the reactions elicited by the campaign within the Israeli public, the degree of legitimacy it receives and the boundaries of what is permissible and prohibited in its context may contribute to a deeper comprehension of the social assimilation process of Deepfake resuscitation. This understanding is particularly valuable as Deepfakes are rapidly evolving in sophistication and will eventually be undetectable to the untrained eye (Maras & Alexandrou, 2019).

Literature Review

Intimate Partner Violence

IPV is a critical problem that occurs along many dimensions, takes many forms and arises under a range of conditions (Cismaru et al., 2010). The Centers for Disease Control and Prevention (CDC) defines IPV as ‘physical, sexual, or psychological violence perpetrated by one romantic or intimate partner toward another’ (Breiding et al., 2015).

Physical violence involves a range of behaviours, from minor violence (e.g., slapping or kicking) to severe violence (e.g., use of a weapon). Sexual violence involves coercing or forcing someone to take part in sexual acts against their will. When a person uses coercive tactics to limit another person’s agency or autonomy, or engages in expressive aggression (such as name-calling or threats of violence), these acts are considered forms of psychological violence (DeJonghe et al., 2008).

IPV occurs in all settings and among all socio-economic, religious and cultural groups (WHO, 2012) and is estimated to affect around 37% of women and 30% of men in their lifetime (Smith et al., 2017). Recent data from U.S. crime reports suggest that about one in five homicide victims is killed by an intimate partner. These reports also found that over half of female homicide victims in the United States are killed by a current or former male intimate partner (CDC, 2022). IPV also tends to increase during times of crisis: the incidence of physical IPV in 2020, during the COVID-19 pandemic, was 1.8-fold higher than in 2017–2019 (Moreira & Da Costa, 2020).

A similar trend characterises Israel, the setting of the current research. In 2022, the Israeli Police opened 16,500 cases for offences of violence and threats between spouses. Excluding cases where complaints were registered by both men and women, in 89% of the cases the victims were women. In 98% of the cases of sexual offences between spouses, the victims were women. In 2022, 23 women were murdered in Israel, and in 2023, the number rose to 27 (Kfir, 2023).

You Only Live Twice: Death in the Digital Age

The emergence of digital technologies has brought about significant changes not only in the way we live but also in how we cope with and process death. While death is characterised by a clear separation between the living and the dead, with the latter physically absent from the former, in the digital age we experience a form of ‘digital immortality’ that allows us to interact with the digital remains of the deceased, which can be experienced as an eternal presence of the dead in cyberspace (Meese et al., 2015; Savin-Baden, 2022).

One of the main contributors to this ‘digital immortality’ is the technological affordance of persistence that characterises online media and social networks. As individuals leave behind a ‘digital legacy’ comprising their online activities (i.e., texts, photos and videos), the digital self persists beyond natural death (Mielczarek, 2018). Facebook, for example, memorialises the profiles of deceased users by default when not instructed otherwise. Users can add a legacy contact to their account who will be able to manage the account after the user has died. Alternatively, users can contact Facebook and request to be added as the legacy contact of a deceased person if evidence is presented (such as a will or court order). Legacy contacts can request that a user account be memorialised. With this practice, Facebook is positioning itself as both a site and a steward for digital remains for an indefinite period of time (Kasket, 2019). Consequently, the accounts of the deceased often become virtual ‘congregation’ spaces, and new practices of encountering death have emerged (Brubaker et al., 2019; Morse, 2024).

The above examples highlight the constant presence of the dead in cyberspace, which becomes a memorial site for their pre-death symbolic representation (Nansen et al., 2014). However, recent developments in generative AI, such as Deepfakes and voice conversion technologies (Lee et al., 2023), take this situation one step further, allowing not only the preservation or reproduction of existing representations of the deceased but also their digital ‘resuscitation’ (Savin-Baden et al., 2017).

AI and Synthetic Resuscitation of the Deceased

While the manipulation of images, audio, or video is certainly not a new phenomenon, the increasing sophistication of machine-learning algorithms, which can easily manipulate multimedia content, is generating more believable synthesised media (Harbinja et al., 2023; Masood et al., 2023). One of the most prominent visual products of synthesised media is Deepfakes, a portmanteau of ‘deep learning’ and ‘fake’, referring to applications that merge, combine, replace and superimpose images and video clips to create fake videos that appear authentic (Maras & Alexandrou, 2019).

Technically speaking, Deepfakes are designed to use common characteristics learned from a collection of existing images to determine how to simulate other images with those characteristics (Kietzmann et al., 2020). Deepfakes usually rely on trained generative neural network architectures, in which a target’s information can be superimposed on an original photo or video to alter it. Other machine-learning techniques, such as generative adversarial networks (GANs), can be applied so that the image or video is iteratively refined: a generator network produces candidate fakes while a discriminator network tries to tell them apart from real images, pushing the generator towards ever more convincing synthesised media (Wang et al., 2023).

Two key features of Deepfakes follow from this description.

First, Deepfake products are highly believable, convincing and realistic. This has led researchers and practitioners to express concerns over the malicious potential of this technology, ranging from blackmail and intimidation to sabotage, harassment, defamation (Whittaker et al., 2020), revenge porn (Karasavva & Noorbhai, 2021; Öhman, 2020; Whittaker et al., 2020), identity theft and bullying (Maras & Alexandrou, 2019; Whittaker et al., 2020). Deepfakes are considered an extreme, highly sophisticated form of misinformation (de Ruiter, 2021; Floridi, 2018; Slater & Rastogi, 2022; Vaccari & Chadwick, 2020) and propaganda (Kietzmann et al., 2020; Langguth et al., 2021; Maras & Alexandrou, 2019) and, as such, pose a threat to the credibility of online information and even undermine the basic epistemic value of images and video (Khan et al., 2023; Rini, 2020). In the context of IPV, Deepfake technology is potentially extremely harmful, as perpetrators can use it to threaten, blackmail and abuse victims by creating embarrassing, incriminating, sexual or other highly convincing synthetic videos featuring their partners (Lucas, 2022). Can a technology based on lies, deception and fraud be used for good? Is it even ethically possible to harness such a potentially destructive innovation for prosocial campaigns?

Second, Deepfakes do not require the consent, participation or awareness of the person whose image and voice are used (Fletcher, 2018; Whittaker et al., 2020). Given the high volume of digital media created during one’s lifetime, Deepfakes can be produced from publicly available images (Roberts, 2023). Hence, they can be used even postmortem, allowing for the creation of holograms, audio messages and videos of the deceased doing or saying things they never did or said while alive.

Indeed, in addition to the widespread use of Deepfakes for humorous, pornographic or political videos of living persons, ‘Deepfake resuscitation’, in which a deceased victim is digitally brought back to life to advocate for an issue related to the cause of their death (Lu & Chu, 2023), has grown significantly in recent years. For example, the Shoah Foundation deployed its Dimensions in Testimony project, in which holograms of Holocaust survivors interact with audiences and tell the survivors’ personal stories (Morse, 2024); MyHeritage launched its Deep Nostalgia project, allowing customers to animate still images of dead relatives and create short moving GIFs of their ancestors (Morse, 2024); at the Salvador Dalí Museum in St. Petersburg, Florida, a life-size talking avatar of the surrealist artist, generated from archival footage, takes selfies with museum visitors (Mihailova, 2021); and Kanye West made headlines in 2020 with his birthday present to Kim Kardashian, a hologram of her late father, Robert Kardashian, created using Deepfake technology (Harbinja et al., 2023).

Deepfake resuscitation has potential for historical preservation, education and digital archiving, as the above examples show. Academic research has also indicated that Deepfakes yield emotional and immersive experiences. For example, Kolb and August Kranzlmüller (2021) used Deepfake technology to create records that digitally preserve Holocaust survivors’ experiences, and found that most users said they could relate to the virtual human they interacted with and that the answers they received included interesting details that supported their learning about the Holocaust. However, this technology has also met with considerable backlash, with critics questioning whether such digital recreation is appropriate, ethical or simply distasteful.

Ethical Considerations Regarding Synthetic Resuscitation

The ability to recreate deceased individuals through emerging technologies raises profound ethical considerations that touch upon the fundamental values of consent, privacy and the preservation of one’s legacy. The pivotal issue of consent is at the forefront of these ethical deliberations. The act of rekindling the characteristics of a deceased person through AI prompts a critical inquiry into whether explicit consent was granted for such posthumous recreation during their lifetime. Within this complex ethical terrain, the involvement and decision-making authority of the deceased individual’s family or legally designated representatives play a decisive role in determining the appropriateness of engaging in such recreation (Hutson & Ratican, 2023).

Moreover, respect for the deceased transcends consent issues and is deeply rooted in fundamental moral values upheld by various cultures. Many cultures expressly prohibit the use of a deceased individual’s body or likeness in ways that may run counter to their interests and rights (Lu & Chu, 2023). Given that the recreated digital persona might inadvertently express views or take actions that the deceased would not have endorsed during their lifetime (Hutson & Ratican, 2023), the deployment of Deepfakes to ‘resuscitate’ the deceased may be perceived as a more extensive form of exploitation. This presents a distinctive ethical challenge concerning the preservation of the integrity and memory of the deceased, and the assurance that their legacy remains shielded from inadvertent distortions.

As presented above, ethical challenges extend to questions of misuse of the technology. Because synthetic media are often indistinguishable from authentic content (Fletcher, 2018), the potential for unauthorised and malevolent use of Deepfakes in digital resuscitation poses yet another ethical quandary. These technologies can be harnessed to fabricate false narratives, mislead individuals and potentially tarnish the reputation of both the deceased and the living (Westerlund, 2019). In this regard, Deepfake resuscitation also challenges established notions of authenticity, credibility and verifiability, and raises concerns about the propagation of misinformation.

Research Contribution

As described above, there is a growing body of literature on the ethical aspects related to the postmortem use of a person’s digital assets. However, little attention has been paid to the new technological practice of synthetic resuscitation and its ethical and social implications. Moreover, there is a noticeable dearth of studies that have empirically examined individuals’ specific perceptions regarding this subject. The vast majority of these studies emphasise the concerns accompanying synthetic media that are associated with political and malicious use of this technology.

In the current study, we seek, for the first time, to provide empirical data on audience responses to an actual campaign that uses this technology for prosocial purposes: the Israeli ‘Listen to My Voice’ campaign, which aims to raise awareness of the prevalence and tragic consequences of IPV by ‘reviving’ women who were killed by their partners.

This research will shed light on the emotional responses, sentiments, concerns and accolades elicited by the campaign. Focusing on a campaign with a non-controversial, positive social context will allow us to focus on the audience’s attitude and social acceptance related to the use of Deepfake technology per se (and not only in the functional aspects), thereby addressing a crucial gap in the current academic literature. Practically, this research has broader implications for the ethical boundaries of AI applications in society and offers insights into the responsible and ethical use of AI technologies, especially as they become more accessible and affordable.

Methods

The study examined reactions to the ‘Listen to My Voice’ campaign and asked what emotional reactions, concerns and expressions of approval and disapproval it elicited.

To this end, we conducted a quantitative and qualitative content analysis of Facebook comments posted in response to the Facebook posts of the campaign videos. The choice to analyse Facebook responses rather than other platforms like YouTube or Instagram relates to the dominance of this platform in Israel in general and in the context of the campaign in particular. According to data from the Israel Internet Association, in the first quarter of 2021—the year in which the ‘Listen to My Voice’ campaign was launched—the rate of Facebook users in Israel stood at 79.9% compared to 60.7% for Instagram (Israel Internet Association, 2021). Although the percentage of YouTube users is higher—86.4% according to the same survey—the number of responses to the campaign on YouTube was significantly lower (only dozens compared to hundreds), and therefore only Facebook responses were analysed.

To prepare for the research and to anchor the findings in a broader context, we interviewed the two creators of the campaign: entrepreneur Shiran Melmedovski Somech and advertising professional Hila Salmon. The interviews were conducted jointly with both creators via Zoom due to COVID-19 restrictions. They were used to prepare the study materials and are not part of the analysis.

Quantitative Content Analysis

The data for the quantitative content analysis consisted of all of the comments posted in response to the campaign’s Facebook post featuring the video that presented all of the victims together (n = 507).² Comments were manually copied and saved locally for analysis. Because no analytic framework existed for this novel type of campaign, the researchers created a coding scheme derived from the literature on the social and moral aspects of discussions around synthetic resuscitation, content manipulation and synthetic media.

All categories referring to the content of the comments were derived from the literature on digital resurrection (see Brubaker et al., 2019; Fletcher, 2018; Hutson & Ratican, 2023; Lu & Chu, 2023; Morse, 2024; Savin-Baden et al., 2017; Westerlund, 2019; Whittaker et al., 2020), particularly focusing on the ethical dilemmas that this technology raises. The coding scheme included the following categories, all coded as binary yes/no unless stated otherwise:

Author characteristics

Comment author religion (Jewish/Arab/Other-Unknown)

Comment author gender (Woman/Man/Other-Unknown)

(Dis)Approval of the use of the technology for the purposes of the campaign

Does the comment include an expression of support for the use of Deepfake technology in the campaign? (E.g., ‘Important project. Well done!’)

Does the comment include an expression of objection to the use of Deepfake technology in the campaign? (E.g., ‘This is horrific, it doesn’t matter what the cause is’.)

Emotional reactions

Does the comment include an expression of shock or extreme distress? (E.g., ‘Shocking’, ‘Chilling’, ‘I’m torn’.)

Does the comment refer to one’s personal story? (E.g., ‘I was there’, ‘as someone who got out of a violent relationship’.)

Sentiment towards the campaign (negative [e.g., ‘Have you lost your mind? Get rid of this demonic thing and be ashamed of yourselves. Crazy people’]; positive [e.g., ‘Wow, amazing, both technologically and emotionally. Thank you!’]; mixed-ambivalent [e.g., ‘Oh my Godddddd, it’s shocking and hard to watch even though the message is very important’]; neutral-irrelevant [e.g., ‘It’s as if she is alive. May her memory be blessed’]).

Ethical/Legal considerations

Does the comment reference a claim that the cause of the campaign justifies the means? (support vs. rejection, e.g., ‘This campaign is very tough, but overall what is even tougher is domestic violence. As much as it evokes difficult emotions, for me, the end justifies the means’, ‘I disagree, this campaign is horrific no matter what’.)

Does the comment relate to the issue of victims’ (non) consent to be included in the campaign? (e.g., ‘if the families approved—I think it is OK’.)

Does the comment include concerns about victims’ respect or dignity in the context of the campaign? (e.g., ‘It is inappropriate to desecrate them’.)

Does the comment relate to the legality of the campaign or the legal questions arising from the campaign? (e.g., ‘Is it legal to use their images like that?’.)

Campaign effectiveness

Does the comment refer to an argument on emotional numbness in society (with respect to the increasing threshold required for emotional stimuli)? (e.g., ‘apparently we are all just numb and need to be shocked to talk about violence’.)

Does the comment include a reference to the campaign’s effectiveness in promoting its messages? (e.g., ‘This is one of the toughest and strongest things that has been done here lately. This needs to be broadcast on TV now!’)

Sociotechnical angles

Does the comment feature a ‘technological determinism’ approach? (e.g., ‘In 20 years, there will be virtual 3D avatars of the deceased, but with no soul. There will be a world of soulless robots here’.)

Does the comment refer to the blurred distinction between real and fake in the context of Deepfakes in general, or the campaign specifically? (e.g., ‘It is so difficult to watch…it is like they are here’; ‘Some people would think this is an illustration […] after all, how can a murdered person speak?’)
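To make the binary structure of this scheme concrete, the sketch below shows one possible way to store a single coded comment for later tallying. It is a hypothetical illustration, not the instrument used in the study: the class name `CodedComment`, the field names and the validation rules are our own assumptions, mapped onto the categories listed above. The end-justifies-means code is stored as a direction (`"support"` or `"reject"`, or `None` when not referenced) because the scheme distinguishes support from rejection.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional

# Hypothetical representation of one coded comment; all names are illustrative.

class Sentiment(Enum):
    NEGATIVE = "negative"
    POSITIVE = "positive"
    MIXED = "mixed-ambivalent"
    NEUTRAL = "neutral-irrelevant"

RELIGIONS = {"Jewish", "Arab", "Other-Unknown"}
GENDERS = {"Woman", "Man", "Other-Unknown"}
ETHICAL_FIELDS = ("end_justifies_means", "consent", "respect_dignity", "legality")

@dataclass
class CodedComment:
    text: str
    # Author characteristics
    author_religion: str = "Other-Unknown"
    author_gender: str = "Other-Unknown"
    # (Dis)approval of the technology; both may be True for the same comment
    supports_use: bool = False
    objects_to_use: bool = False
    # Emotional reactions
    shock_or_distress: bool = False
    personal_story: bool = False
    sentiment: Sentiment = Sentiment.NEUTRAL
    # Ethical/legal considerations; None means the claim is not referenced
    end_justifies_means: Optional[str] = None  # "support" or "reject"
    consent: bool = False
    respect_dignity: bool = False
    legality: bool = False
    # Campaign effectiveness
    emotional_numbness: bool = False
    effectiveness: bool = False
    # Sociotechnical angles
    technological_determinism: bool = False
    real_fake_blurring: bool = False

    def __post_init__(self) -> None:
        # Reject values outside the categories defined in the scheme.
        if self.author_religion not in RELIGIONS:
            raise ValueError(f"unknown religion code: {self.author_religion}")
        if self.author_gender not in GENDERS:
            raise ValueError(f"unknown gender code: {self.author_gender}")
        if self.end_justifies_means not in (None, "support", "reject"):
            raise ValueError("end_justifies_means must be None, 'support' or 'reject'")

    def ethical_codes(self) -> List[str]:
        """Names of the ethical/legal categories this comment was coded for."""
        return [name for name in ETHICAL_FIELDS if getattr(self, name)]
```

For example, `CodedComment("Important project. Well done!", supports_use=True, sentiment=Sentiment.POSITIVE)` records a supportive comment with no ethical codes, while an invalid category value raises a `ValueError` at entry time rather than surfacing later as a miscount.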

The purpose of this preliminary analysis of the comments on the joint video featuring all the victims was to identify which parameters in the coding scheme were most relevant and significant and which were marginal. After the first round of intercoder reliability testing, the coding scheme was revised, and some of the final categories were added.

Coders’ training

Three research assistants coded the comments (n = 507). The coders were trained by the researchers according to the coding scheme, and repeated training sessions were held for categories with less than 90% reliability, until 90% inter-rater agreement was reached in the third round.
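The study reports a 90% agreement threshold for three coders without specifying how agreement across the three was aggregated. The sketch below assumes one common convention, mean pairwise percent agreement on a single binary category; the function names and the toy codes are hypothetical. Simple percent agreement does not correct for agreement expected by chance, so chance-corrected indices such as Krippendorff’s alpha are often reported alongside it.

```python
from itertools import combinations
from typing import Sequence

def pairwise_percent_agreement(codes_by_coder: Sequence[Sequence[int]]) -> float:
    """Mean percent agreement over all coder pairs for one binary (0/1) category."""
    if len(codes_by_coder) < 2:
        raise ValueError("need at least two coders")
    n_items = len(codes_by_coder[0])
    if n_items == 0 or any(len(c) != n_items for c in codes_by_coder):
        raise ValueError("all coders must code the same, non-empty set of comments")
    pair_scores = []
    for a, b in combinations(codes_by_coder, 2):
        # Count comments on which this pair of coders gave the same code.
        matches = sum(x == y for x, y in zip(a, b))
        pair_scores.append(matches / n_items)
    return 100 * sum(pair_scores) / len(pair_scores)

def needs_retraining(codes_by_coder: Sequence[Sequence[int]], threshold: float = 90.0) -> bool:
    """True if the category falls below the agreement threshold."""
    return pairwise_percent_agreement(codes_by_coder) < threshold

# Hypothetical codes from three coders for ten comments on one category.
coder_a = [1, 0, 1, 1, 0, 0, 1, 0, 1, 0]
coder_b = [1, 0, 1, 1, 0, 1, 1, 0, 1, 0]  # differs from A on one comment
coder_c = [1, 0, 1, 1, 0, 0, 1, 0, 1, 0]  # identical to A

score = pairwise_percent_agreement([coder_a, coder_b, coder_c])
print(f"{score:.1f}% agreement; retrain: {needs_retraining([coder_a, coder_b, coder_c])}")
# prints "93.3% agreement; retrain: False"
```

With these toy codes, two coder pairs agree on 9 of 10 comments and one pair on all 10, so the mean agreement is about 93.3%, just above the threshold; a single further disagreement by coder B would push it below 90% and flag the category for another training round.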

Qualitative Content Analysis

The data for the qualitative content analysis consisted of a purposive sample of comments and replies. For this stage of analysis, we created a corpus composed only of comments that explicitly referred to the synthetic resuscitation of the victims (N = 214). These comments were collected from all six official campaign video posts on Facebook: the five videos that each featured one victim and told her personal story, and the joint video presenting all five victims together, which combined excerpts from the five individual videos.

We first mapped the sample by coding each comment as expressing support for and/or objection to the use of Deepfake technology in the campaign; a comment could express support, objection, both or neither. Then, following the thematic analysis approach of Braun and Clarke (2006), we analysed this sample of comments, including all of the replies they received (N = 280), to learn about users’ perceptions of synthetic resuscitation and its social implications.
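Because support and objection were coded independently, a single comment can fall into both groups, so the share of supportive comments and the share of objecting comments need not sum to 100%. The sketch below illustrates this tallying logic on hypothetical toy data; the function names are our own and the numbers are not the study’s.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple

def stance(supports: bool, objects: bool) -> str:
    """Collapse the two independent binary codes into one mutually exclusive label."""
    if supports and objects:
        return "both"
    if supports:
        return "support only"
    if objects:
        return "objection only"
    return "neither"

def stance_summary(coded: Iterable[Tuple[bool, bool]]) -> Dict[str, float]:
    """Percentages for each code on its own and for each exclusive combination."""
    coded = list(coded)
    n = len(coded)
    if n == 0:
        raise ValueError("no coded comments")
    support_any = sum(1 for s, _ in coded if s)
    object_any = sum(1 for _, o in coded if o)
    breakdown = Counter(stance(s, o) for s, o in coded)
    summary = {
        "support (any)": 100 * support_any / n,
        "objection (any)": 100 * object_any / n,
    }
    summary.update({label: 100 * count / n for label, count in breakdown.items()})
    return summary

# Hypothetical toy data: (supports_use, objects_to_use) for five comments.
toy = [(True, False)] * 3 + [(False, True), (True, True)]
print(stance_summary(toy))
```

Here the one comment coded for both support and objection is counted in each of the first two shares (80% and 40%), which is why they exceed 100% together, while the exclusive labels ("support only", "objection only", "both") partition the sample.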

Results

Quantitative analysis revealed that the campaign mostly elicited extreme spontaneous emotional reactions among users: no less than 49.5% of the comments included an expression of shock or extreme distress. The vast majority of them consisted of just a short word or phrase, such as ‘Painful’, ‘Difficult’, ‘Wow’, ‘Creepy’, ‘Shocking’, ‘Horrifying’, ‘Terrible’, ‘Gut punch’, ‘Heart breaking’, ‘Difficult to watch’.

However, the prevalence of the other categories in the coding scheme was almost negligible. Of the comments in the quantitative sample, 7.1% (36 of 507) referred to the claim that the cause of the campaign (its social value) justifies the means (the use of Deepfake technology). Of these, only two (0.4% of the sample) rejected this claim; the rest supported it. A further 4.9% (25 comments) claimed that the campaign was effective. Only 1% of the sample referred to emotional numbness in society as requiring extreme measures to shock people. Victims’ consent was almost never raised: only one comment related to the question of the victims’ consent, and only two comments claimed that the campaign did not respect the victims. Only four comments referred to the blurred distinction between real and fake.
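The percentages above are simple proportions of the full quantitative sample (n = 507). The short check below recomputes them from the counts reported in the text; the dictionary labels are our own, and the 1% figure for emotional numbness is omitted because its underlying count is not reported.

```python
SAMPLE_SIZE = 507  # all comments on the joint-video post

# Comment counts as reported in the text; labels are ours.
reported_counts = {
    "end justifies the means (any reference)": 36,
    "rejects the end-justifies-means claim": 2,
    "campaign is effective": 25,
    "victims' consent": 1,
    "lack of respect for victims": 2,
    "real/fake blurring": 4,
}

def prevalence(count: int, n: int = SAMPLE_SIZE) -> float:
    """Share of the sample, in percent, rounded to one decimal place."""
    return round(100 * count / n, 1)

for label, count in reported_counts.items():
    print(f"{label}: {count}/{SAMPLE_SIZE} = {prevalence(count)}%")
```

This reproduces the reported 7.1%, 0.4% and 4.9%, and shows that the consent, respect and real/fake categories each account for less than 1% of the comments (0.2%, 0.4% and 0.8%, respectively).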

In terms of sentiment, the vast majority (75%) of comments expressed neutral sentiment towards the campaign, followed by positive sentiment (18%) and negligible proportions of negative (4%) and mixed (3%) sentiment.

The results clearly demonstrate that users were emotionally triggered by the campaign. However, since these expressions of distress and shock are very general and it is impossible to understand with certainty what they refer to (is it a reaction to the ‘resuscitation’ of the victims? to their murder? to the message conveyed?), we decided to conduct the qualitative analysis on the comments referring specifically to the synthetic resuscitation of the victims (use of Deepfakes for the campaign) in all six video posts, as described in the Methods section.

For this sample (n = 214), responses supporting the use of Deepfake technology in the campaign far outnumbered opposing responses (75% vs. 25%, respectively). Responses expressing a complex view that both supported and opposed the use of Deepfakes for the campaign were negligible, at 1%. Overall, the comments were largely supportive of the technology in the context of the campaign.

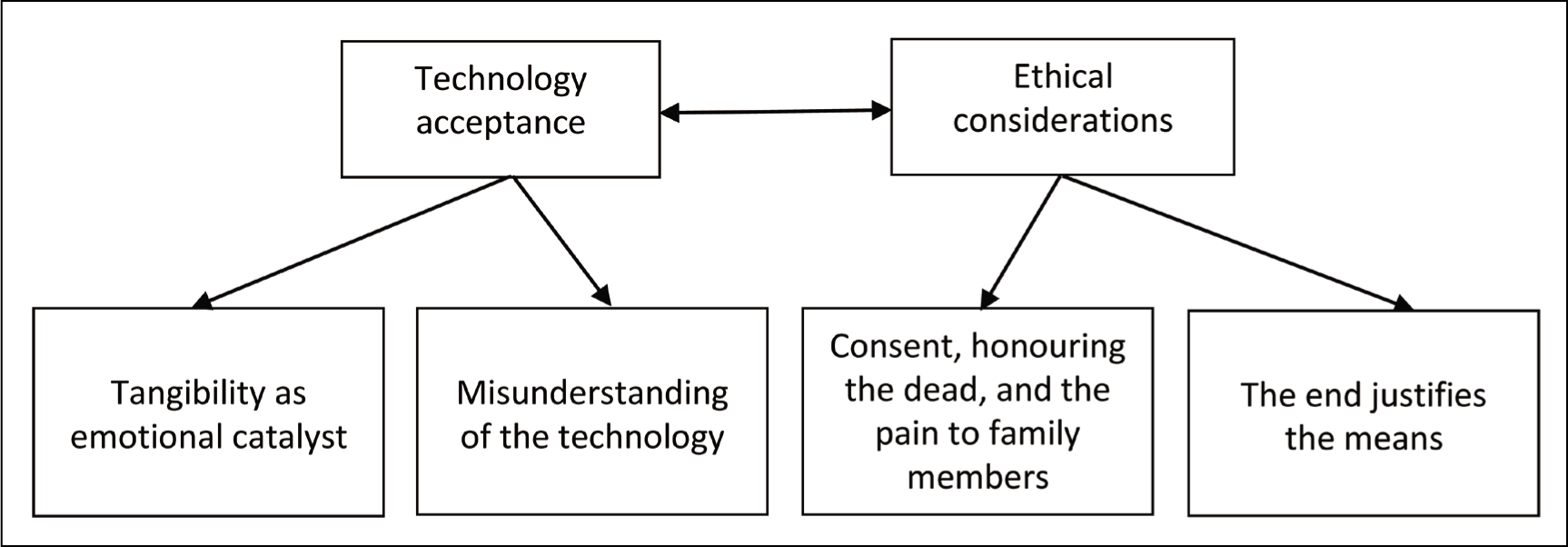

The qualitative analysis sought to reveal users’ perceptions of synthetic resuscitation for prosocial purposes. It revealed two main themes: technology acceptance (tangibility as an emotional catalyst and lack of understanding) and ethical considerations (questions of consent, honouring the dead, the pain to family members and whether the end justifies the means), as illustrated in Figure 1.

Figure 1. Thematic Tree of Users’ Perception of Synthetic Resuscitation for Prosocial Purposes as Expressed in User Comments.

Technology Acceptance

Tangibility as an Emotional Catalyst

The tangibility of the videos was addressed in various comments, mainly in the context of the blurring of the boundary between life and death, which was highly unsettling to commenters and evoked intense emotional responses. Respondents shuddered at the seemingly live presence of the victims, even though they knew this was impossible: ‘It is not the blood that cries out from the grave; it is they themselves who are doing it. Chills’, ‘It is creepy to see that. Michal [one of the victims, the authors] died alive’, ‘They penetrate the heart, it’s as if they are alive’. One respondent even symbolically interpreted the synthetic ‘resuscitation’ of the victims as a ‘resuscitation’ of the survival instinct of women in the cycle of violence: ‘Shocked, wow, how the design has improved dramatically, moving to tears, unbelievable, as if they have come to life, to warn those who suffer from violence, to wake them up from their coma, to stand up and [file a] complaint’. Similarly, several respondents explained that the videos allowed them to experience the victims and their stories more personally and deeply: ‘I didn’t know her personally, but somehow hearing this feels like we knew’, wrote one respondent, while another wrote: ‘I feel a lump in my heart and throat when I see the faces and voices of those murdered by their partners. Heartbreaking’. A third wrote in the same vein: ‘When you see the videos, you feel the pain more’.

Misunderstanding of the Technology

In some cases, the videos were so tangible that users did not realise they were synthetic and believed they were seeing footage of the victims themselves. Some viewers simply did not understand how synthetic resuscitation works; they were clearly unfamiliar with the technology and confused about its technical aspects. Many respondents expressed surprise and even genuine confusion that the victims were ‘talking’, and offered various, mostly incorrect, explanations for this dissonance. One respondent wondered whether the woman in the video was the victim or an actress: ‘Shocking story, I just did not understand: is the woman in the video the real Esther or someone who looks like her? What’s going on here?’ Other respondents understood that these were the victims themselves and not look-alikes, but believed that the videos had been recorded before their deaths, as in the following responses: ‘Sorry for the ignorance, I ask in all seriousness: were they murdered? So how do they talk? Did they know they would be murdered?’ and ‘Oh my God! did she record herself before? It’s crazy!! May her memory be a blessing’. The lack of understanding of the technology was also reflected in the perception that the victims were still alive. For example, one respondent addressed the victim in the second person, affirmed her words and even asked her a question about her abusive partner, without realising that he had already murdered her with a pistol shot (even though this is stated in the video): ‘You are amazing, an outstanding woman of, you are doing an important service, hope that your voice will be heard. What is up with your monstrous partner? It’s important that you inform everyone about his punishment’. Another respondent asked the victim to send him a private message. Almost all of these responses were answered by other users, who pointed out the mistake (‘Are you waiting for an answer from her?! She was murdered’) and explained the technology that enabled the ‘resuscitation’ of the victims. Although these users appeared more knowledgeable, their answers suggest that synthetic media is still a concept that is not sufficiently clear to the general public. No respondents used the term ‘synthetic media’, and only a few used ‘Deepfakes’. Only one respondent defined the technology in a relatively detailed and precise manner, as ‘the technology of animating her image and voice [the campaign did not involve synthesising the voices of the victims. Instead, professional actresses were hired to dub the videos—the authors] that they edited specifically for the project’, while the rest used a variety of terms, the vast majority of which were general and vague, and some incorrect. Prominent examples include ‘Computer’, ‘computer work’, ‘computer software’, ‘special software’, ‘effect made to her photo […] A computer game they created for the photo’, ‘an app programmed to look alive and real-time’, ‘dubbing’, ‘Photoshop’, ‘hologram’ and ‘simulation. It’s not her’.

After receiving an answer to their questions about the characters appearing in the videos and realising that this was a digital ‘resuscitation’, some respondents expressed shock and genuine surprise: ‘Whattttt she was murdered in the enddddd I didn’t think so. May her memory be a blessing’, wrote one respondent, while another kept asking even after receiving an answer: ‘Is this really her and they made a recording?’, ‘What, is this a picture and they made a video?’ This may reflect a lack of technological understanding, but in some cases respondents attributed their difficulty in understanding how the videos were produced to the videos’ tangibility: ‘It’s so tangible, I don’t understand how a computer program did such work. How frightening’, one comment read, while others added: ‘This seems so real’, ‘Can’t believe it, so real!’

Ethical Considerations

Consent, Honouring the Dead and the Pain to Family Members

As noted in the quantitative section, one-quarter of the responses expressed opposition to the campaign. Thematic analysis shows that this objection was usually justified by three interrelated moral considerations: the absence of consent from the victims to the production of the videos, the dishonouring of the dead and the pain inflicted on the families. First, some respondents raised a fundamental consideration: the women featured in the campaign had not consented to participate, to have their faces and personal stories used in the campaign and published at scale, or to be digitally resuscitated. These responses expressed discomfort and even resentment towards the creators of the campaign: ‘I am uncomfortable that her image was used without her permission. […] Maybe she would not have agreed’. ‘What is it like to use someone who is not alive and speak for him? REALLY CHUTZPAH [audacious—the authors]’, ‘THE USE OF DEEPFAKE […] With the face of a woman who died in order to convey a message, without the possibility of obtaining her consent, it is bad and despicable’. The harshest reactions related to a complementary aspect of the ‘resurrection’ of the victims: the desecration of the dead. According to these respondents, using images of dead people, all the more so without their consent, degrades them and their memory: ‘You fell on your head, this video is blasphemy of God and desecration of the memory of the murdered, kick out this satanic thing and shame on you crazy’, ‘Simply desecrating the honor of the deceased according to religion. There are other, more dignified ways to do that’.

Respondents also expressed empathy for the victims’ family members and concern about the feelings that watching the victims would evoke: ‘Nice idea but does it seem good for their families to see something like this? A bit of morality and consideration’, ‘Shocking video. We watched the video, and it was heartbreaking. How would the families watch it, it’s a shame, it’s better to delete this video’. These respondents expressed a moral consideration directed at a relatively narrow group, the victims’ family members, and tried to put themselves in their place.

The End Justifies the Means

The three arguments mentioned above (not obtaining consent from the victims, desecrating their memory and harming the families) often provoked backlash from other users who sided with the creators of the campaign. The prevailing argument among supportive respondents was that ‘the end justifies the means’. According to one respondent,

As long as there is no disrespect and desecration of the dead and with the approval of the families, I don’t see any problem here. In contrast, it is a matter of saving lives. Yes, it’s not easy to watch, but if it prevents the next murder, then the project is welcome.

Similarly, another respondent claimed, ‘This is a very original and powerful way to convey an important message. […] There is nothing they would like more than to send a message and prevent the next murder’. At the same time, some respondents thanked the victims’ families for agreeing to participate in the campaign despite the emotional difficulty: ‘How important is the message!! Thank you to the families who approved the publication along with great pain’, ‘Hug to the families who agreed to have their blood cry out! With their deaths they commanded us to live! Listen!’ In other words, even these respondents acknowledged that the campaign is tangible and may cause emotional difficulty for both viewers and victims’ families, but held that the public goal of raising awareness of dangerous relationships justifies it. In the words of one respondent, ‘what is wrong with the campaign? Shocking you? Excellent’.

Discussion and Conclusion

This study analysed public reactions to a prosocial campaign that employed Deepfake technology to digitally resuscitate five Israeli women, victims of domestic violence, who were murdered by their partners. By examining Facebook comments in response to the campaign videos, we sought to understand viewers’ attitudes, emotions and ethical considerations related to the use of Deepfake technology in the campaign. Our study contributes to the growing literature on digital death, synthetic resuscitation and the ethical implications of Deepfake technology.

Bearing in mind the predominant reputation of Deepfake technology and its alarming malicious potential as a destructive medium based on lies, deception and fraud (de Ruiter, 2021; Floridi, 2018; Karasavva & Noorbhai, 2021; Khan et al., 2023; Kietzmann et al., 2020; Langguth et al., 2021; Lucas, 2022; Maras & Alexandrou, 2019; Öhman, 2020; Rini, 2020; Slater & Rastogi, 2022; Vaccari & Chadwick, 2020; Whittaker et al., 2020), this study aimed to investigate audience acceptance of the technology when it is used for good, and the ethical considerations that accompany such use.

The study employed a mixed-methods approach, combining quantitative content analysis with qualitative thematic analysis to better understand users’ reactions to the campaign. We found that most comments expressed shock or distress, and relatively few explicitly referred to the technology or to the synthetic resuscitation of the victims.

Among the comments that referred to the technology, most supported the use of Deepfake technology for the campaign and justified it with the importance of the cause and the effectiveness of the message.

Responses that focused on the technology used in the campaign fell into two main categories: responses that focused on the vividness of the campaign, which provoked intense and conflicting emotions of unease on the one hand and emotional connection on the other, and responses that expressed confusion and even distress about the campaign. For many users, Deepfake technology is unfamiliar, and they were perplexed about its technical aspects. To some, the videos were so tangible that they simply did not understand what they were watching.

Reactions focused on ethical considerations dealt mainly with questions related to the victims and their consent, their dignity, which might have been compromised, and the pain inflicted on their families as they were exposed to the videos. These discussions sometimes included the argument that the end justifies the means, that is, that the urgent, prosocial, potentially life-saving purposes of the campaign justify using the technology in a way that may be disrespectful, non-consensual and painful for the families.

The analysis of user comments on the campaign provides several takeaways.

The Ethics of Technological Misunderstanding

Given that Deepfake technology is still new, it is unsurprising that a non-negligible group of users struggled to understand it. This could well be a manifestation of digital inequality and a novel technological literacy challenge (Castilla et al., 2018). We may expect this literacy skill, that is, the ability to distinguish between reality and synthetic media, or at least the awareness that certain video content may be synthetic, to develop over time. However, as the technology advances, synthetic content will become harder to identify, and we are presumably facing a future in which the boundary between reality and synthetic media grows ever more blurred. Content creators must be acutely aware of the possibility that audiences, even highly sophisticated, digitally savvy ones, will simply not get it.

The literature concerning the ethics of misunderstanding usually focuses on the interpretation of the content of messages by audiences, such as misunderstanding of humour or cynicism (Edwards et al., 2017), emotional cues (Marin, 2022), the tone of the message (Edwards et al., 2017), or the subtext (Marin, 2022), especially in intercultural contexts (Graziadei, 2017; Kekki, 2020).

In the case of Deepfake campaigns like ‘Listen to My Voice’, the misunderstanding stems from the medium itself: from how the content was produced and the technology that creates it. This raises an ethical question about the responsibility of content creators to disclose the mechanism behind the content and to emphasise its fakeness, making it visible and undeniable. In doing so, creators would distinguish their campaigns from the more prevalent forms of Deepfake content, in which every effort is made to conceal the fake and prevent viewers from discovering its synthetic origin.

Prosocial Purposes Are Not Exempt from the Ethics of Consent and Dignity

Even in a prosocial campaign, the key features of Deepfake technology, namely its tangibility and the fact that it does not require the consent or awareness of the individuals featured in the content, were at the centre of the debate about the campaign’s ethical challenges. Commenters expressed concerns about the dignity of the victims, the fact that the victims could not give their consent to be involved in the project, and the implications for their families. In other words, ‘digital immortality’, which enables interaction with the digital remnants of the deceased and can be perceived as a manifestation of the deceased’s eternal presence in cyberspace (Meese et al., 2015; Savin-Baden, 2022), also raises public concerns about the privacy of the deceased.

In this context, it is interesting to compare these reactions with the views of the campaign’s creators. Before designing the study, the researchers held a preliminary interview with the two creators, who were asked about the reservations they encountered when they started working on the project and about the ethical considerations that occupied them during production. They stated that obtaining the families’ consent and cooperation was the basic condition for including a victim in the campaign. They approached several families of IPV victims. Some families found it too difficult to participate, and those victims were not included in the campaign; only victims whose families were interested and consented to participate were included. Contact with the families was maintained throughout production, and the videos were first shown to the families at a private, intimate event before the campaign was launched. The texts spoken by the victims, the choice of words and the level of detail disclosed about the victims and their deaths were all developed in consultation with the families, to preserve the victims’ dignity and to ensure that the texts represented the victims as their families saw them. These production guidelines are not disclosed on the campaign website or on the campaign’s Facebook page. A more transparent, detailed account of the ethical and sensitive approach taken in producing the campaign might have benefited it and reassured viewers about how the victims and their families were treated during production. The creators stated that the responses to the campaign were very positive: viewers expressed shock and distress but were very supportive. Indeed, our analysis shows that the responses were mostly supportive of the project, but they also raised concerns about the implications for the victims and their families.

Synthetic Resuscitation Ethics is Relative to the Purpose

Notwithstanding the above conclusions, commenters also engaged with the purpose of the campaign, arguing that the end justified the means, including the synthetic resuscitation of real victims. These comments reflect an approach that weighs the risks and implications against the campaign’s value and contribution to significant social causes. This approach implies that Deepfake technology in general, and synthetic resuscitation in particular, are not inherently destructive. It is possible to harness the technology for noble, important, well-intended social purposes and even to save lives, as long as the production is well designed, ethical considerations are carefully observed and the creators, producers and distributors are highly sensitive to all involved, the deceased and the living.

Limitations and Future Research Directions

This study analysed reactions to a new form of production that used synthetic video creation for a prosocial campaign by analysing user comments posted on a dedicated Facebook page. The topic of the campaign is highly disturbing and evokes strong emotional reactions. The use of Deepfake technology and the synthetic resuscitation of victims further complicate the emotional and mental processes that the content provokes, as well as the ethical and social discussion that emerges as a result. User comments can provide only a preliminary, somewhat basic picture of user acceptance of the campaign. Future research could delve into the more complex reactions, considerations and discussions the project provokes by conducting focus groups or individual interviews with users after exposure to the campaign, enquiring into their reactions and perceptions regarding the use of Deepfakes for prosocial purposes.

The campaign was deliberately inclusive in representing victims from almost all groups in Israeli society. However, an analysis of user comments cannot provide all the relevant information about the identity of the commenters. We expect that users from different social and ethnic groups in Israel differ in their views on the legitimacy of the campaign and the considerations that should be weighed. Views on the dignity of the deceased and of women, the legitimacy of openly discussing IPV and presenting images of victims, and the use of digital technology to create a synthetic ‘afterlife’ differ according to culture, religious affiliation and conservatism. Future examinations of responses to these and similar questions should pay special attention to including diverse social groups and to the differences in views that may reflect cultural, religious and ethical gaps between them.

In summary, the results of this study suggest that users may struggle to comprehend the campaign and the technology behind it, reflecting digital inequality and new literacy challenges. Commenters were concerned about the victims’ dignity and consent and about the impact on their families. These findings raise ethical questions and suggest several recommendations for content creators. Creators must recognise that even digitally savvy audiences might not understand the content. Furthermore, creators should offer greater transparency and a detailed explanation of the ethical approach taken in producing the campaign, to reassure viewers about the treatment of victims and families. The technology can be used for noble, socially beneficial purposes and even save lives, provided the production is well designed, ethical considerations are strictly observed and all parties, both deceased and living, are treated with sensitivity.

Footnotes

Acknowledgements

The authors would like to thank Shani Levy, Shaked Weiss, Reut Izbizki and Dafna Shaulson for their assistance in conducting the research.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.