Abstract

A polishing robot is an automated system in which the robot drives an end effector that holds the polishing tool and finishes the workpiece efficiently. To keep the actuator contact force stable in an automatic robotic polishing system, this paper establishes an impedance control model and proposes a reinforcement learning algorithm for learning the robot's impedance control parameters. The influence of the impedance parameters (inertia M, damping B, stiffness K) on control performance is analyzed by numerical simulation, and optimized impedance parameters are then obtained. Because it requires few iterations and uses data efficiently, the reinforcement learning algorithm is well suited to constant-force tracking. Within the reinforcement learning algorithm, a combination of the dynamic matching method and the linearization method is proposed to predict the output distribution of the state, which greatly improves the cost-function-based evaluation of the strategy, and the impedance parameters corresponding to the optimal strategy are obtained. Finally, a steam turbine blade is taken as the polishing test part. The average roughness at the selected points of the test part is 0.302 μm after polishing, compared with 1.151 μm before polishing, which verifies the feasibility of the proposed impedance control method.

Introduction

In the face of increasingly demanding machining requirements and complex polishing tasks, robots are widely used in surface polishing. 1 Yousedizadeh et al. 2 designed an automatic online trajectory generator based on operation intention detection. Their impedance controller enables the robot to comply actively, ensuring stable force during processing and the safety of the operator. Du et al. 3 developed an automatic polishing system with a flexible end effector that controls the contact force accurately.

To suppress the strong vibration generated by the rotating robot during polishing, Dong et al. 4 proposed a method for detecting and controlling the contact force with internal sensors, which suppresses strong interference well. Ding 5 proposed a hybrid force and position control method for polishing parts with complex surfaces, which achieves stable force control and accurate position control. Zhang et al. 6 proposed a control system that realizes constant-force polishing with industrial robots in mass production.

The idea behind robot impedance control is to establish a dynamic relationship between the force at the robot's end and its position deviation, so that the end force is controlled by controlling the robot's joint displacement. 7 Because impedance control is robust and simple to implement, researchers at home and abroad have worked on applying it in the robot industry.8–10 However, traditional impedance control cannot track force accurately. Robot polishing is complex and its process information is costly to acquire, which suits reinforcement learning, since its learning process needs relatively little information.

In recent years, with the rapid development and application of machine learning algorithms, the combination of robot impedance control strategies with machine learning has advanced further. A reinforcement learning algorithm can find high-return strategies through the reward function alone, that is, by trial and error, which offers a new way for robots to achieve complex autonomous compliance control. Reinforcement learning algorithms can also adjust the motion state of the robot dynamically.11–13 Du et al. 14 proposed a variable admittance control method based on fuzzy reinforcement learning. By dynamically adjusting the variable virtual damping term in the admittance controller, the position control accuracy of the robot is enhanced and the required energy is reduced. Li et al. 15 proposed a learning-based variable impedance control method for robots. Simulation experiments show that the force control task can be learned successfully within several interactions, which makes the method suitable for force-sensitive tasks.

At present, impedance controllers for industrial robots are mainly used in drilling, grinding, deburring, and simple assembly. Existing research on and applications of force control strategies, at home and abroad, do not yet solve the problem of machining small parts with complex curved surfaces. Therefore, a turbine blade is taken as the polishing object in this paper. A robot impedance control strategy based on a reinforcement learning algorithm, and its application to the surface polishing process, are studied. An experimental robot surface polishing system is designed and verified.

The paper is organized as follows. Sec. 2 presents the impedance control method of the polishing robot and the influence of the impedance control parameters on control performance. In Sec. 3, the state-transition probability model of the impedance-controlled robot is established with a Gaussian process model, and the output distribution of the model is predicted by the improved algorithm combining dynamic matching and linearization. Sec. 4 verifies the feasibility of the algorithm by simulation and experiment. Sec. 5 summarizes the benefits and limitations of the study and potential directions for future research.

Process analysis of surface polishing robot

Modeling of surface polishing robot

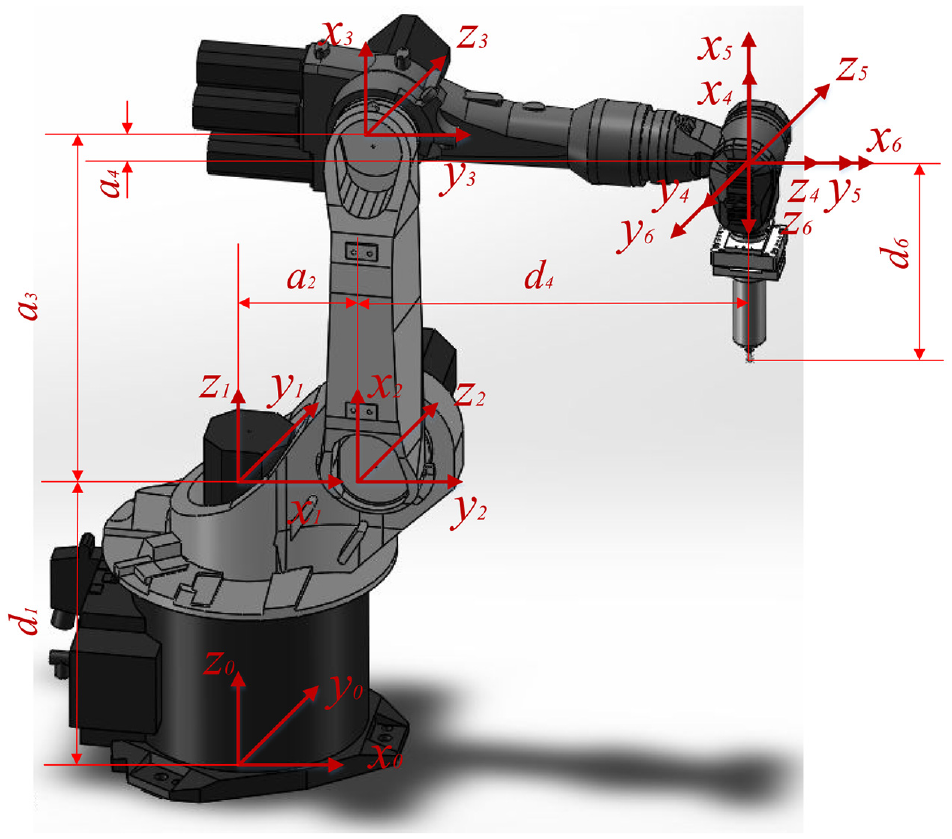

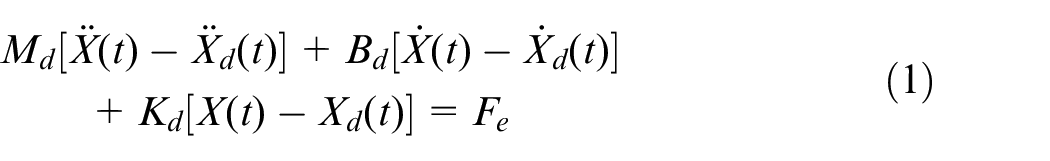

The joint coordinate system of the surface polishing robot is established in Figure 1, and the parameters of the polishing robot are listed in Table 1, where

Joint coordinate system of polishing robot.

Motion parameters of polishing robot.

The linkage model and motion model of the robot are established by the D-H method. The forward kinematics is solved and analyzed. The inverse kinematics is solved algebraically, and the multiple groups of inverse solutions are optimized. The robot motion model is simulated in the MATLAB Robotics Toolbox, which verifies the correctness and rationality of the kinematics analysis. 16

Impedance control method of surface polishing robot

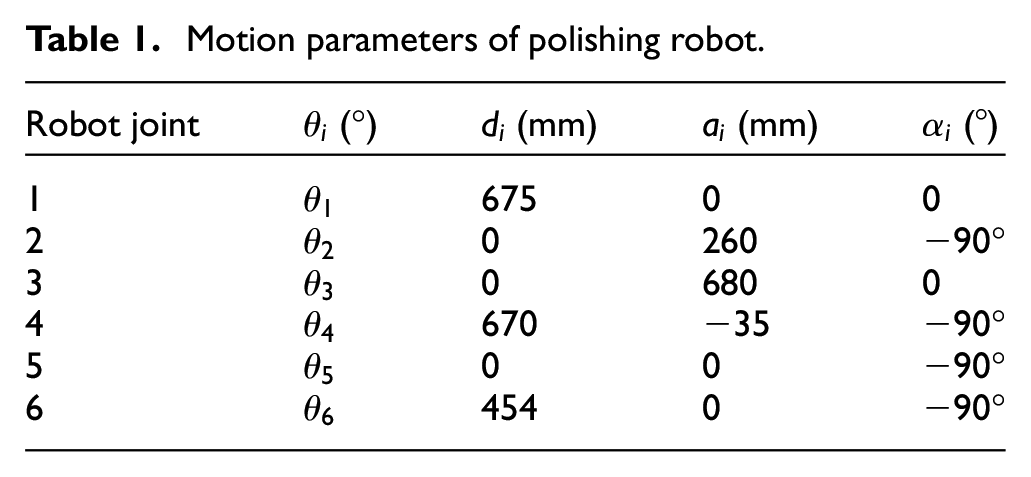

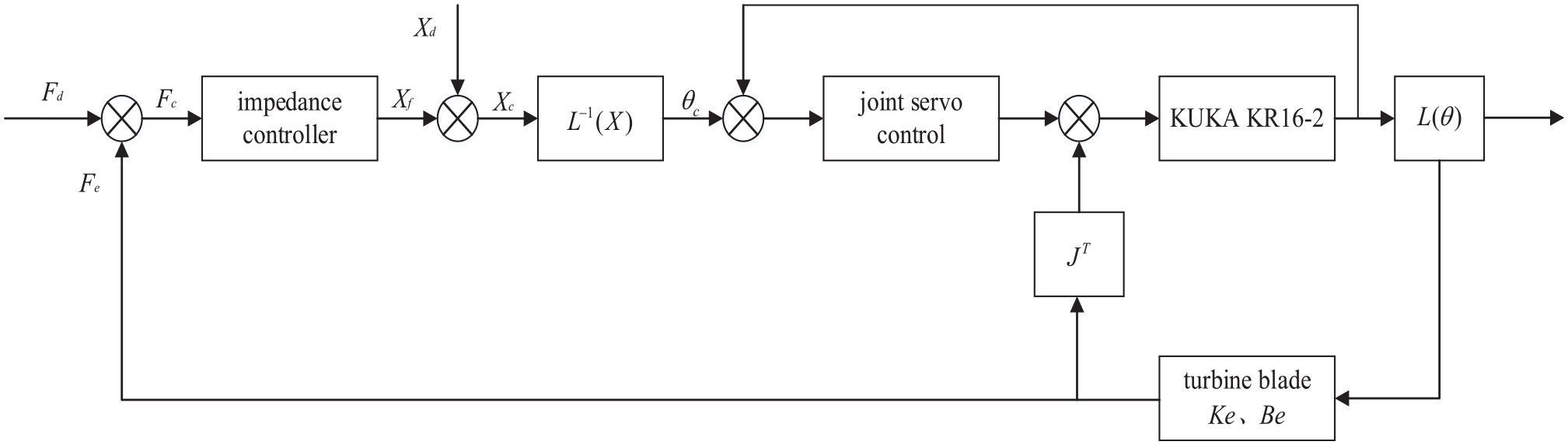

The robot can dynamically adjust the polishing trajectory in the polishing coordinate system according to the end contact force. The purpose of impedance control is to establish a dynamic response model relating robot position and contact force for industrial robots. Impedance control methods can be divided into position-based impedance control and moment-based impedance control. 17 In actual robot polishing, the position-based method is simple and robust, so it is adopted in this paper. Impedance represents the relationship between the end contact force and the trajectory deviation of the robot. Its mathematical expression is:

Where

The corrected position satisfies the dynamic relationship between the force and position of the robot. To track the polishing force, the force deviation replaces the contact force in the impedance expression. The position correction generated by the impedance controller ensures that the robot maintains a constant normal contact force throughout blade polishing. The position-based impedance control flow chart is shown in Figure 2.
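The force-tracking idea described above can be illustrated with a minimal one-axis simulation. This is only a sketch under stated assumptions, not the paper's Simulink model: the environment is assumed to be a linear spring of stiffness `k_e`, the inner position loop is assumed to track the corrected position perfectly, the target impedance is assumed to take the common form M·ë + B·ė + K·e = f_d − f (with e the position correction), and the values of `M`, `B`, `K`, and `k_e` are hypothetical. Only the 5 N desired force is taken from the experiments reported later in the paper.

```python
def simulate_contact_force(M, B, K, f_d=5.0, k_e=5000.0,
                           x_r=0.0, x_e=0.0, dt=1e-3, steps=2000):
    """Simulate one axis of position-based impedance control.

    Assumed model (not taken from the paper):
      M*e'' + B*e' + K*e = f_d - f,  commanded position x = x_r + e,
      contact force f = k_e * max(x - x_e, 0) (linear-spring environment).
    Returns the list of contact forces, one per time step.
    """
    e = 0.0   # position correction produced by the impedance controller
    v = 0.0   # rate of change of the correction
    forces = []
    for _ in range(steps):
        x = x_r + e                        # corrected (commanded) position
        f = k_e * max(x - x_e, 0.0)        # measured contact force
        a = (f_d - f - B * v - K * e) / M  # target impedance dynamics
        v += a * dt                        # semi-implicit Euler integration
        e += v * dt
        forces.append(f)
    return forces


def steady_state_force(K, f_d=5.0, k_e=5000.0, x_r=0.0, x_e=0.0):
    """Closed-form steady-state force of the assumed model above."""
    return k_e * (K * (x_r - x_e) + f_d) / (K + k_e)
```

In this assumed model, calling `simulate_contact_force(1.0, 200.0, 0.0)` settles at the desired force, while a nonzero target stiffness such as `K=500.0` leaves a steady-state force error, which `steady_state_force` predicts in closed form.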

Position-based impedance control flow chart.

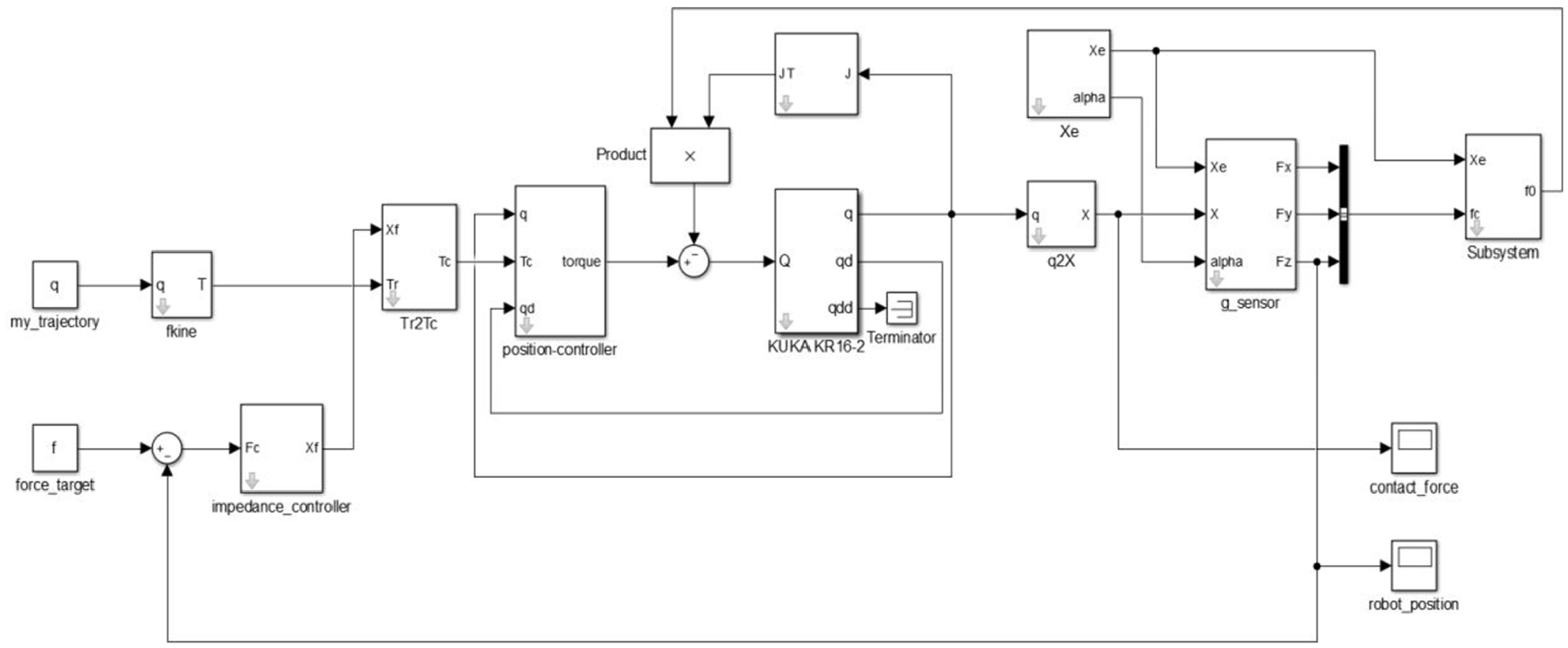

Because the robot moves in three-dimensional space, the impedance simulation model of the surface polishing robot is built in Simulink from the impedance formula and the motion model of the robot, as shown in Figure 3.

Simulation model of surface polishing robot impedance control.

Influence of impedance control parameters on control performance

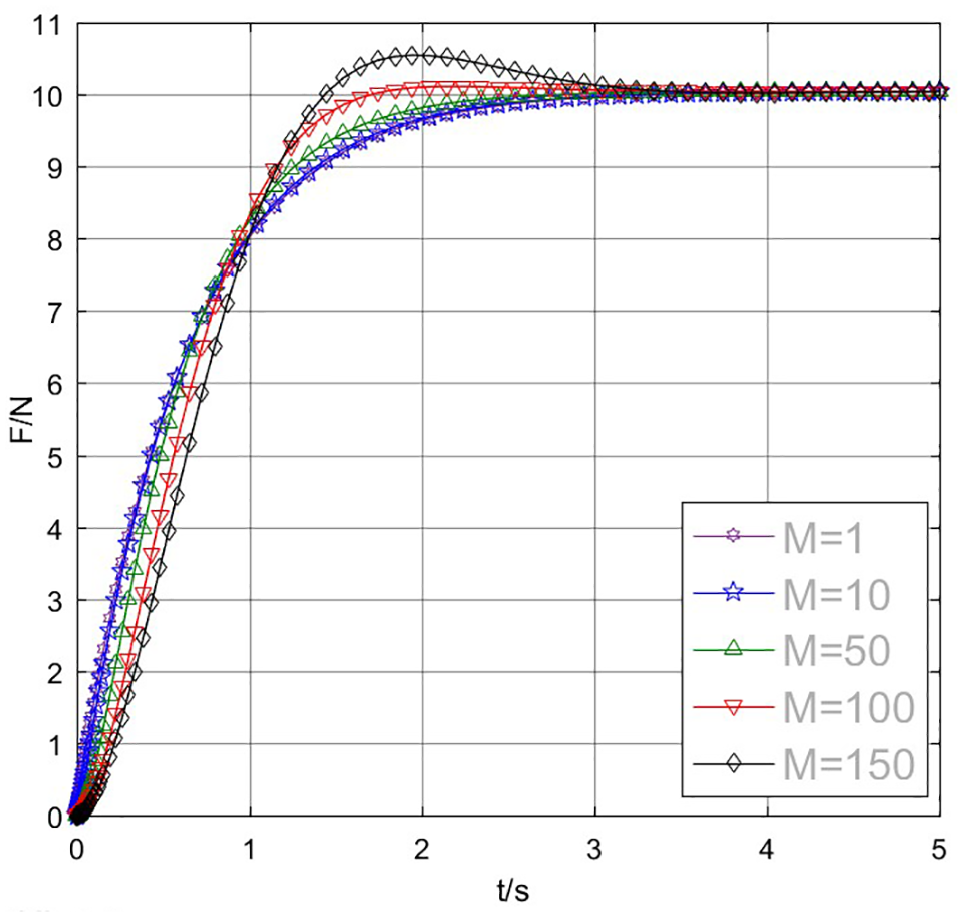

Suitable target impedance parameters can improve the robustness of the control system and the adaptability to the environment for robot impedance control. In order to study the influence of three target parameters (inertia

The simulation curve of contact force with time is shown in Figure 4. When damping parameter

Influence of inertial parameters on system performance.

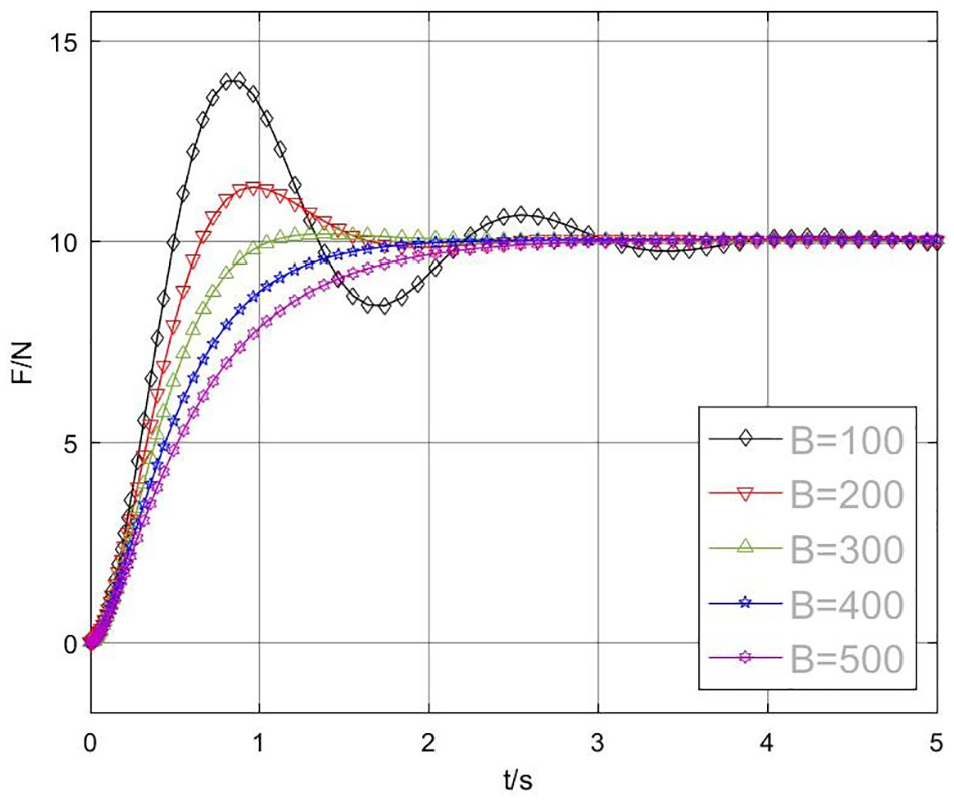

The simulation curve of contact force with time is shown in Figure 5. When inertia parameter

Influence of damping parameters on system performance.

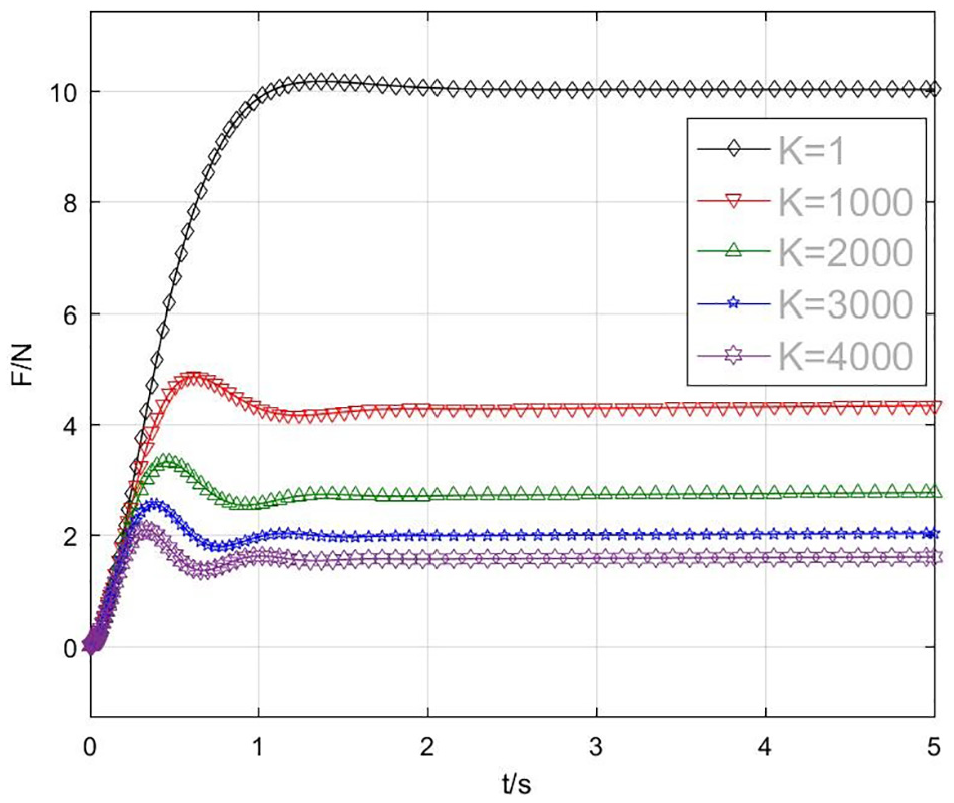

The simulation curve of contact force with time is shown in Figure 6. When inertia parameter

Influence of stiffness parameters on system performance.

In Figure 4, the change of inertia parameter

In Figure 5,

In Figure 6, the stiffness parameter

The steady-state error of the system contact force mainly depends on the target stiffness parameters
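The qualitative trends discussed in this section can be cross-checked with the closed-form characteristics of a second-order system. The sketch below assumes a one-axis target impedance M·ë + B·ė + K·e = f_d − f in contact with a linear-spring environment of stiffness `k_e`; this model and all numeric values are illustrative assumptions, not the parameters used in the paper's simulations.

```python
import math


def contact_dynamics(M, B, K, k_e):
    """Natural frequency (rad/s) and damping ratio of the assumed closed loop.

    With f = k_e * e during contact, the assumed impedance becomes
    M*e'' + B*e' + (K + k_e)*e = f_d, a standard second-order system.
    """
    stiffness = K + k_e
    omega_n = math.sqrt(stiffness / M)
    zeta = B / (2.0 * math.sqrt(M * stiffness))
    return omega_n, zeta
```

Under these assumptions, increasing `M` lowers both the natural frequency and the damping ratio (slower response, more overshoot), while increasing `B` raises the damping ratio and suppresses overshoot.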

Parameter optimization of impedance control based on reinforcement learning

Learning process of impedance parameters based on reinforcement learning

Reinforcement learning is mainly used to solve sequential decision problems. 18 The improved reinforcement learning algorithm for impedance control is divided into three layers. The bottom layer uses the existing data to learn the state-transition probability model. Its input is the state from Experiment I (including the polishing force and position, the desired polishing force and position, and the corresponding impedance parameters), and its output is the state-transition probability model of the system, fitted by a Gaussian process. The middle layer predicts the output state distribution from the model, that is, the probability distribution over state-action pairs as the state at time t transfers to the state at time t + 1. The top layer is responsible for strategy evaluation and optimization. The cost function is used to evaluate the strategy over the long-term polishing process, and a gradient-based strategy search method is used to obtain the optimal strategy and impedance control parameters.

First, the state-transition probability model of the algorithm is established from the sample data. Because the machining process is uncertain, a Gaussian process model is used to describe the state-transition probability of the robot polishing process. The Gaussian process is completely specified by the mean function

A Gaussian process model usually assumes, as its prior, that the training samples are normally distributed with zero mean, so that a unique Gaussian process model is determined by the covariance matrix. The covariance function is the kernel function of the state-transition probability model. Because the time period and the variance of the contact force should be as small as possible during robot polishing, the prior mean function of the Gaussian model is set as
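The role of the Gaussian process as a probabilistic transition model can be sketched with a small NumPy regression example. This is a generic illustration, not the paper's model: it assumes a zero prior mean, a squared-exponential kernel, and fixed hyperparameters, and the function names (`se_kernel`, `gp_fit`, `gp_predict`) are hypothetical. In the setting described here, the inputs would hold the state and impedance parameters and the targets would hold the next state.

```python
import numpy as np


def se_kernel(A, B, length_scale=1.0, signal_var=1.0):
    """Squared-exponential kernel matrix between the rows of A and B."""
    sq_dist = (np.sum(A**2, axis=1)[:, None]
               + np.sum(B**2, axis=1)[None, :]
               - 2.0 * A @ B.T)
    return signal_var * np.exp(-0.5 * np.maximum(sq_dist, 0.0) / length_scale**2)


def gp_fit(X, y, noise_var=1e-4, **kern):
    """Precompute the Cholesky factor and weights for a zero-mean GP."""
    K = se_kernel(X, X, **kern) + noise_var * np.eye(len(X))
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    return {"X": X, "L": L, "alpha": alpha, "kern": kern}


def gp_predict(model, X_new):
    """Posterior mean and variance of the latent function at X_new."""
    K_s = se_kernel(model["X"], X_new, **model["kern"])
    mean = K_s.T @ model["alpha"]
    v = np.linalg.solve(model["L"], K_s)
    prior_var = np.diag(se_kernel(X_new, X_new, **model["kern"]))
    var = prior_var - np.sum(v**2, axis=0)
    return mean, np.maximum(var, 0.0)
```

The predicted variance is what makes the model useful for planning under uncertainty: it is small near the training data and returns to the prior variance far from it.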

Improved predictive output distribution function

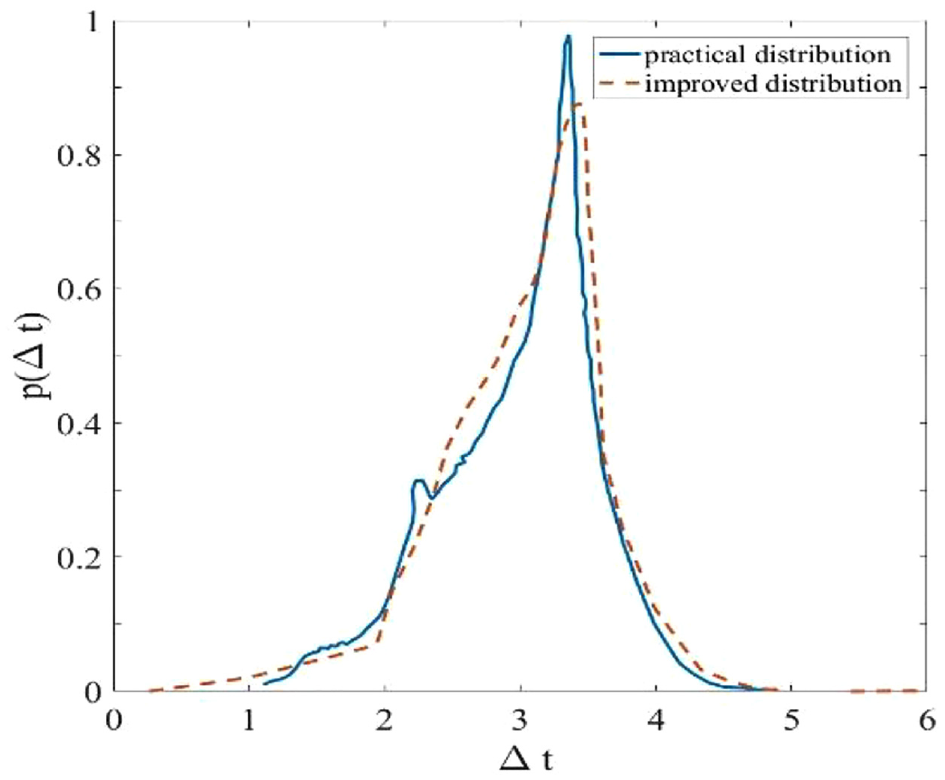

There are two methods for predicting the output distribution: the dynamic matching method and the linearization method. The dynamic matching method predicts better when the probability is low, while the linearization method is better suited to predicting the distribution of high-probability states. 20 Because the two methods perform differently for different probabilities, an algorithm combining them is proposed, which uses a regulating factor to balance the two prediction methods. In the early stage, empirical data are lacking, so the dynamic matching method is used. In the middle stage, the algorithm has accumulated experience and needs to predict the distribution characteristics accurately, so the linearization method is more suitable. In the later stage, the dynamic matching method is used again to ensure that the output distribution accurately follows the actual state. The improved algorithm flow is as follows:

Step 1: Calculate the action distribution of the previous state

Step 2: Calculate the state-action joint distribution of the previous state

Step 3: Calculate adjustment factor according to the characteristic points and probability accumulation of historical state

Step 4: If

Step 5: When using the linearization method, in order to avoid the large prediction difference between the dynamic matching method and the linearization method, the prediction correction is added to the prediction results,

Step 6: Calculate

Step 7: Calculate distribution
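To make the difference between the two prediction methods concrete, the sketch below propagates a Gaussian input through a simple nonlinear function with each of them. It rests on two assumptions that the text does not confirm: that the "dynamic matching method" corresponds to moment matching (computing the exact mean and variance of the output and fitting a Gaussian to them), and that f(x) = sin(x) is an acceptable stand-in for the learned transition model. The regulating factor and the prediction correction of the improved algorithm are not reproduced here.

```python
import math


def propagate_linearization(mu, var):
    """First-order Taylor expansion of f(x) = sin(x) around the input mean."""
    mean_out = math.sin(mu)
    var_out = math.cos(mu) ** 2 * var
    return mean_out, var_out


def propagate_moment_matching(mu, var):
    """Exact mean and variance of sin(x) for x ~ N(mu, var)."""
    mean_out = math.exp(-0.5 * var) * math.sin(mu)
    # E[sin^2 x] = (1 - E[cos 2x]) / 2, with E[cos 2x] = exp(-2 var) * cos(2 mu)
    second_moment = 0.5 * (1.0 - math.exp(-2.0 * var) * math.cos(2.0 * mu))
    return mean_out, second_moment - mean_out ** 2
```

For a narrow input distribution the two predictions nearly coincide, whereas for a wide input the linearization overestimates the output variance of this bounded function. A gap of this kind is what a correction between the two methods would have to absorb.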

The regulatory factor

The correction factor is:

In the expression of adjustment factor,

The effect of the improved algorithm.

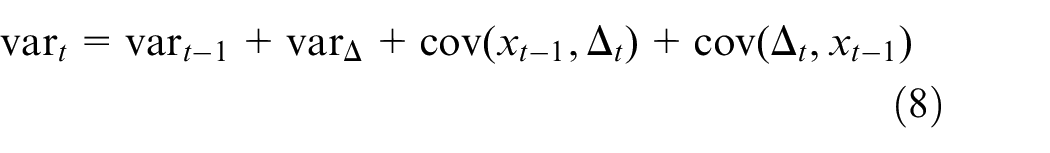

After the output distribution

The distribution of all subsequent states is obtained by predicting the output distribution. An index that represents the pros and cons of a strategy need aggregating all state distributions with the corresponding cost function to evaluate each subsequent state. The index of the evaluation strategy is as follows.
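As an illustration of how such an index can be aggregated, the sketch below sums the expected immediate cost over a sequence of predicted Gaussian state distributions. The form of the cost is an assumption: a saturating cost 1 − exp(−(x − target)² / (2·width²)) is used because its expectation under a Gaussian has a closed form, and the paper's actual cost function may differ. The names `expected_saturating_cost` and `strategy_index` are hypothetical.

```python
import math


def expected_saturating_cost(mu, var, target, width):
    """E[1 - exp(-(x - target)^2 / (2 width^2))] for x ~ N(mu, var)."""
    s2 = width ** 2 + var
    scale = math.sqrt(width ** 2 / s2)
    return 1.0 - scale * math.exp(-0.5 * (mu - target) ** 2 / s2)


def strategy_index(state_means, state_vars, target, width):
    """Sum of expected immediate costs over all predicted states."""
    return sum(
        expected_saturating_cost(m, v, target, width)
        for m, v in zip(state_means, state_vars)
    )
```

With a target force of 5 N, a predicted force trajectory whose means approach 5 N with shrinking variance yields a smaller index than one that stays far from the target, which is the property a gradient-based strategy search relies on.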

Simulation experiment and analysis

A steam turbine blade is taken as the polishing test part to verify the effect of the impedance control method. During the polishing process, the end of the robot arm is in contact with the rigid surface of the blade profile to be machined. Assuming that the damping and rigidity of the blade rigid surface to be machined are

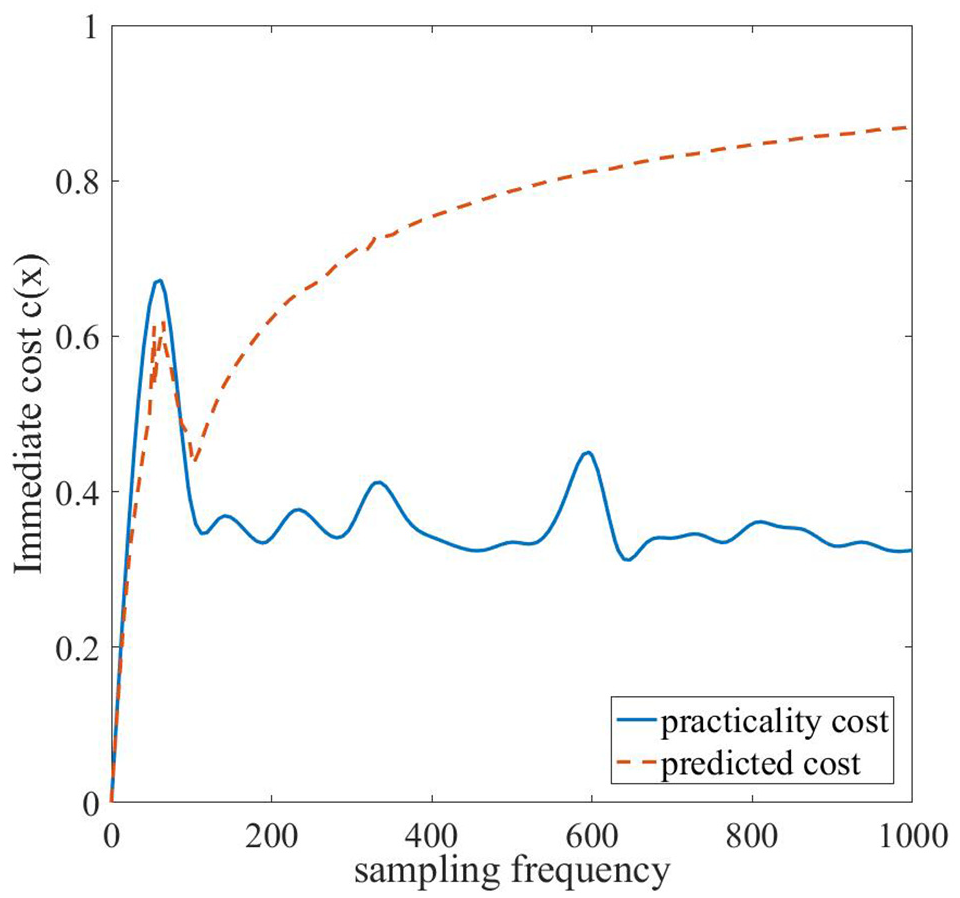

In the first experiment, 1000 sets of initial sample data were provided to the algorithm. These sample data were collected in an experiment with fixed impedance parameters, that is to say,

Flow chart of experiment process.

The pseudo-code of the impedance control parameter learning process based on reinforcement learning is as follows.

    ImpedanceControlParameterLearning()
        var init
        while
            UpdateDataset()
            TrainingDataset()
            establishProbabilisticDynamicModel()
                // Status values and parameter input values are included in X.
                // Y is the training target value.
                train sample X, Y
                get
            ImprovedPredictionAlgorithm()
            CostFunction()
            GradientBasedStrategySearchMethod()
            getImpedanceController()
            getRobotMotionParameters()
        end

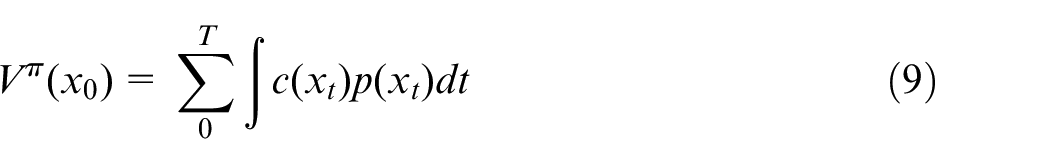

Impedance parameter adjustment process

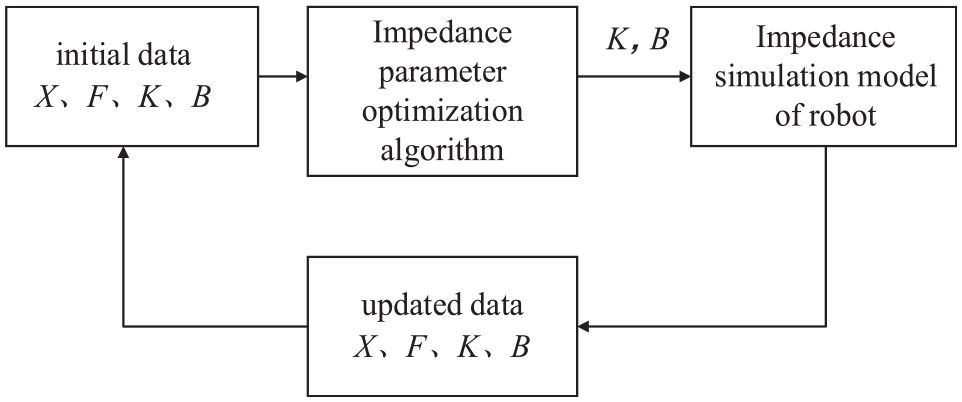

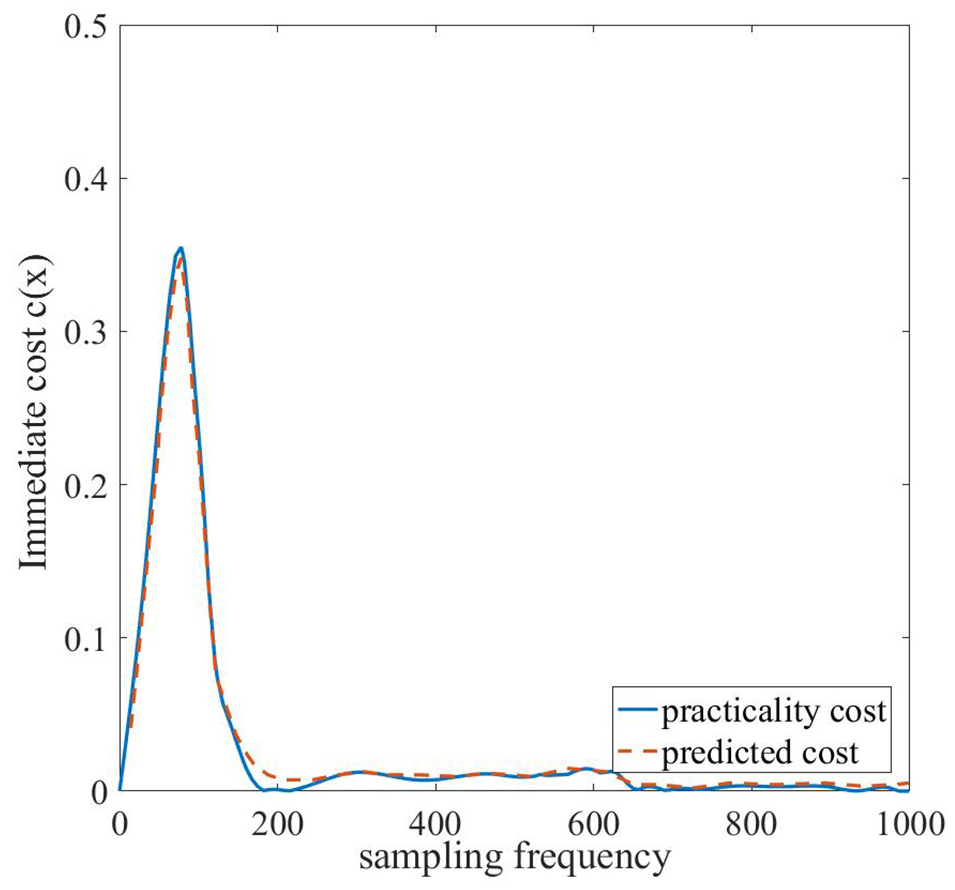

In this section, a reinforcement learning-based method for adjusting the robot impedance parameters is proposed. The method trains a reinforcement learning agent on the relationship between changes in the end force and the position of the robot, combines this with the expected output (expected force and expected position), and ultimately determines appropriate impedance parameters. The value of the cost function reflects the difference between the current state and the expected state: the smaller the cost, the closer the current state is to the expected state. The immediate cost during the first experiment is shown in Figure 9. Because this is the first learning run and data and information still have to be accumulated, the predicted cost deviates greatly from the actual cost.

Immediate cost function value of the first experiment.

Figure 10 shows the change process of the immediate cost in the seventh experiment. Compared with the first experiment, the actual cost of the seventh experiment has been accurately predicted. It shows that the trained algorithm can accurately predict the system state and parameters. As the experiment goes on, the difference between the predicted cumulative cost and the real cumulative cost becomes smaller and smaller.

Immediate cost function value of the seventh experiment.

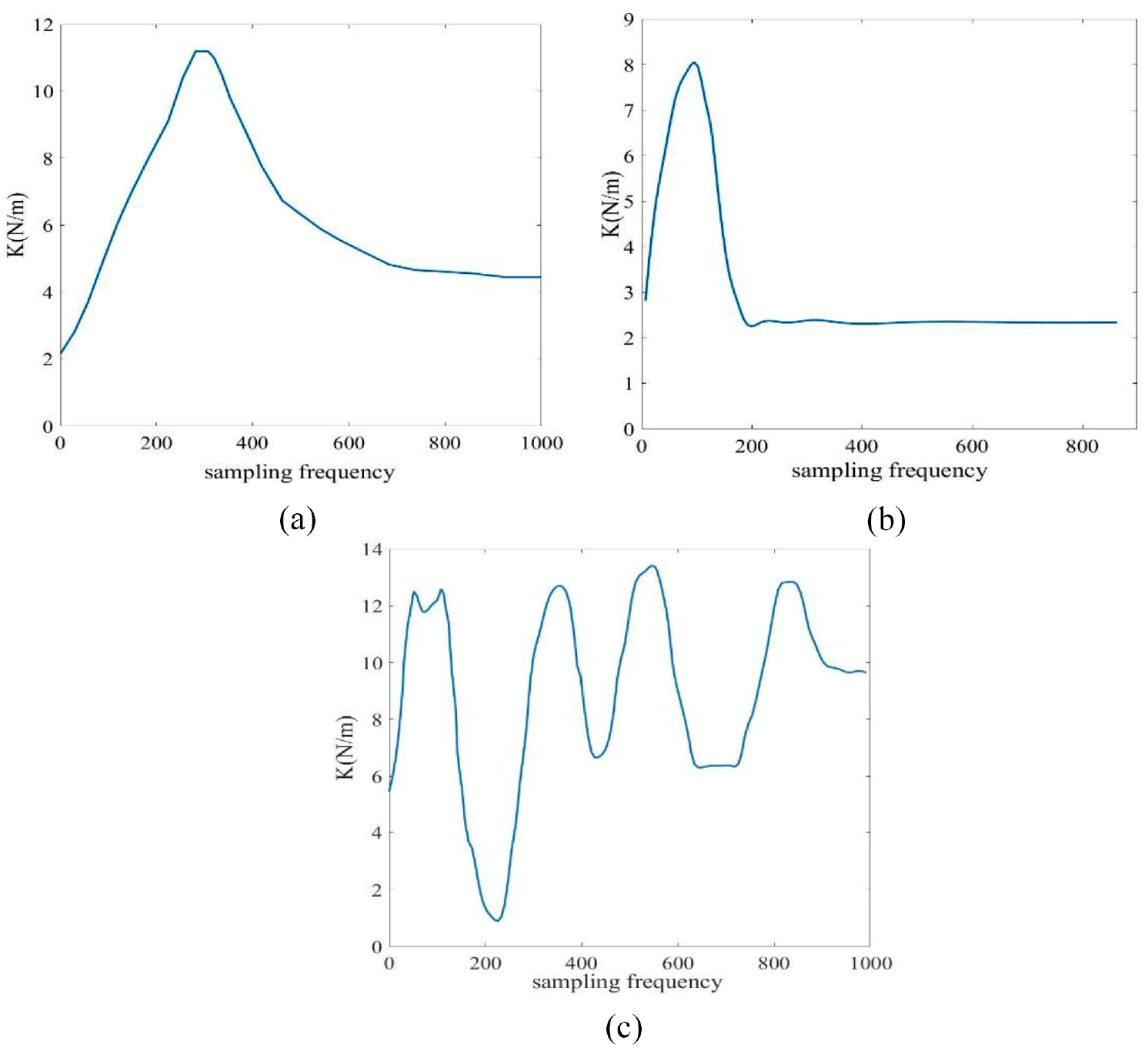

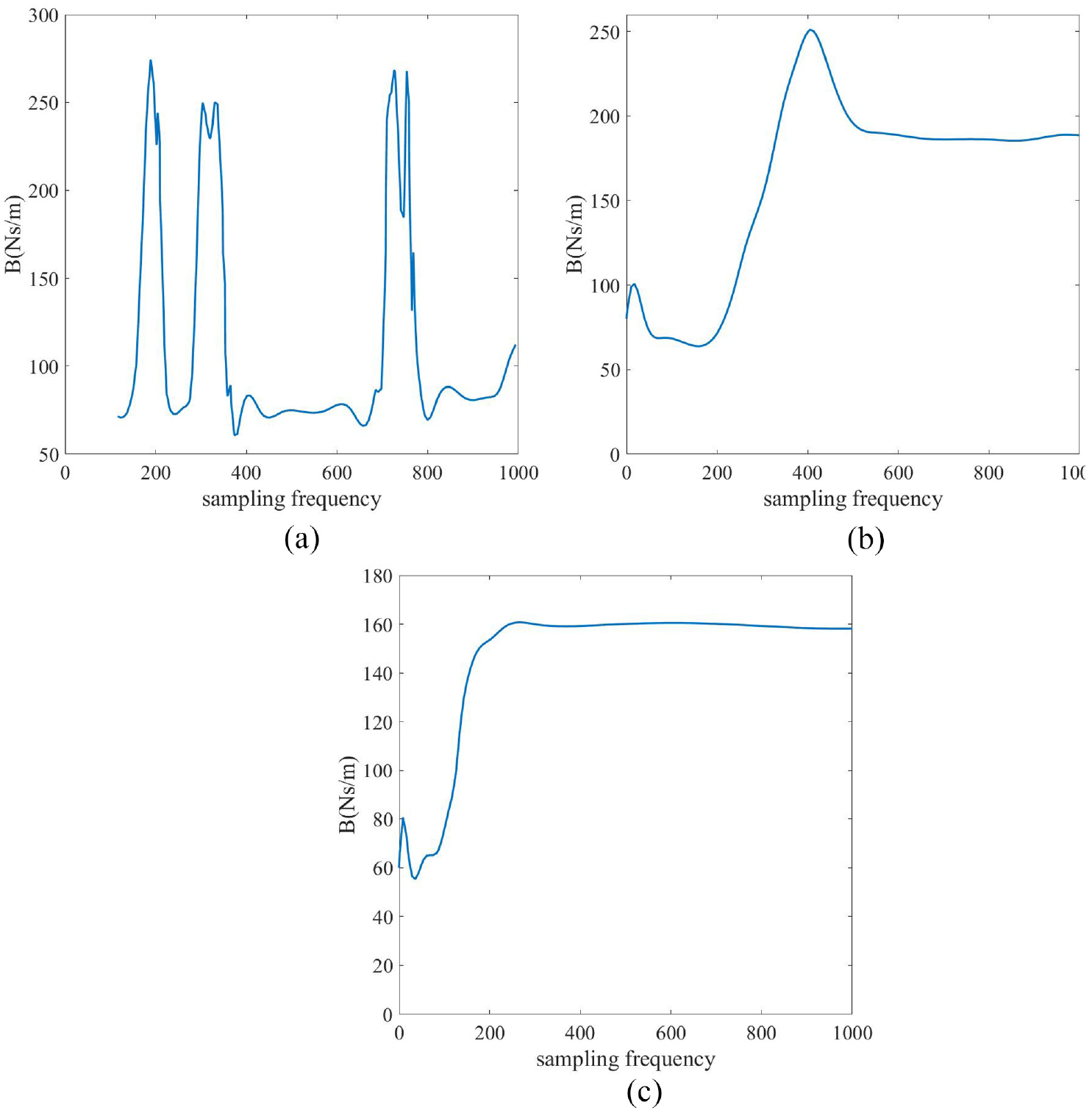

Figure 11 shows the stiffness coefficient variation curve in the first, seventh, and twentieth iteration process. The variation curve of the damping parameter is shown in Figure 12. It reveals that the prediction ability of impedance parameters is gradually improved with the increase of learning times, and the parameters are constantly changing. After the seventh iteration, the appropriate impedance parameters are obtained. By adjusting the stiffness and damping parameters dynamically, the stability and flexibility of the system are ensured. In the twentieth experiment, optimal impedance parameters of the robot system are obtained, which are

The change process of stiffness coefficient: (a) the first iteration, (b) the seventh iteration, and (c) the twentieth iteration.

The change process of damping coefficient: (a) the first iteration, (b) the seventh iteration, and (c) the twentieth iteration.

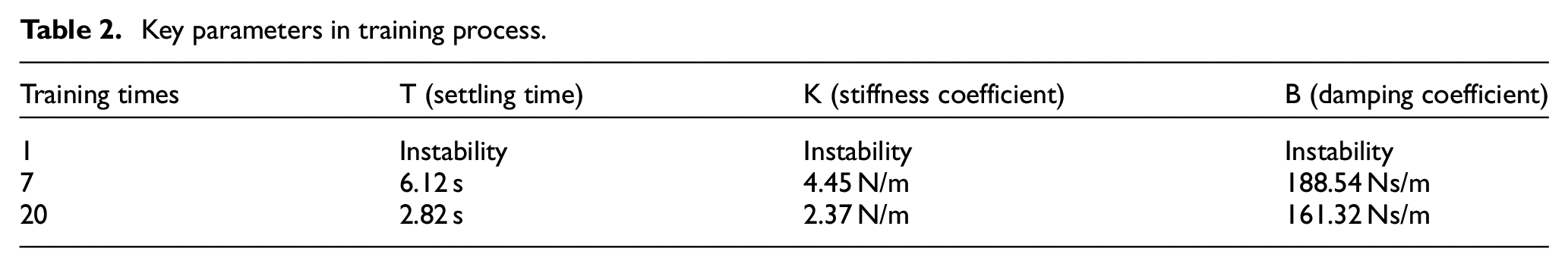

The key data to obtain stable impedance parameters in the process of parameter training are listed in Table 2. With the progress of learning, the system also has the ability to predict parameters and stabilize quickly.

Key parameters in training process.

Simulation analysis

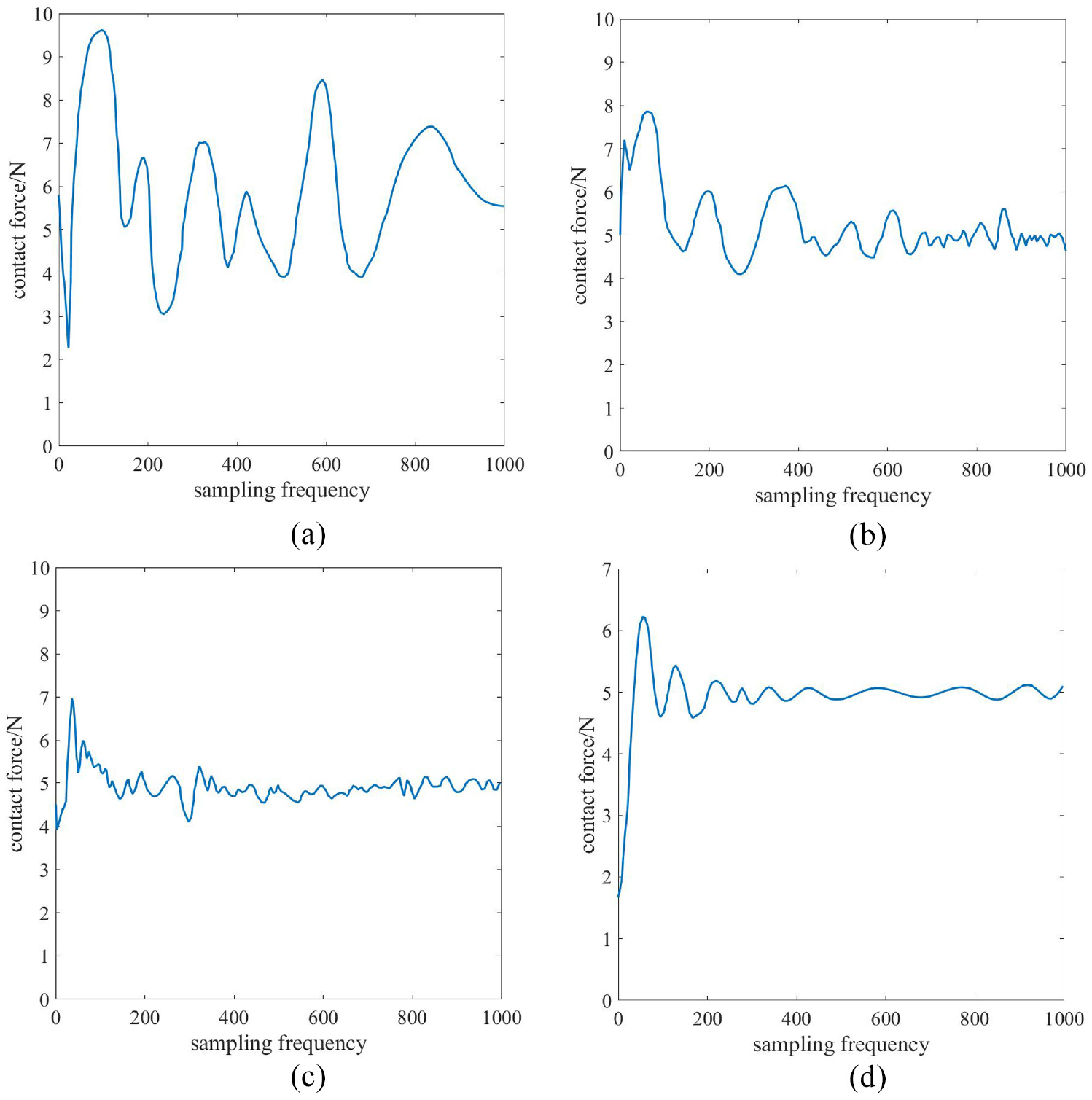

The force curves during the learning process are shown in Figure 13. They illustrate that the controller achieves stable and rapid force tracking after learning. Trials 1–6 failed because of excessive overshoot or steady-state error. On the seventh trial, the force control task was completed successfully.

The learning process of force control: (a) the first learning, (b) the sixth learning, (c) the seventh learning, and (d) the twentieth learning.

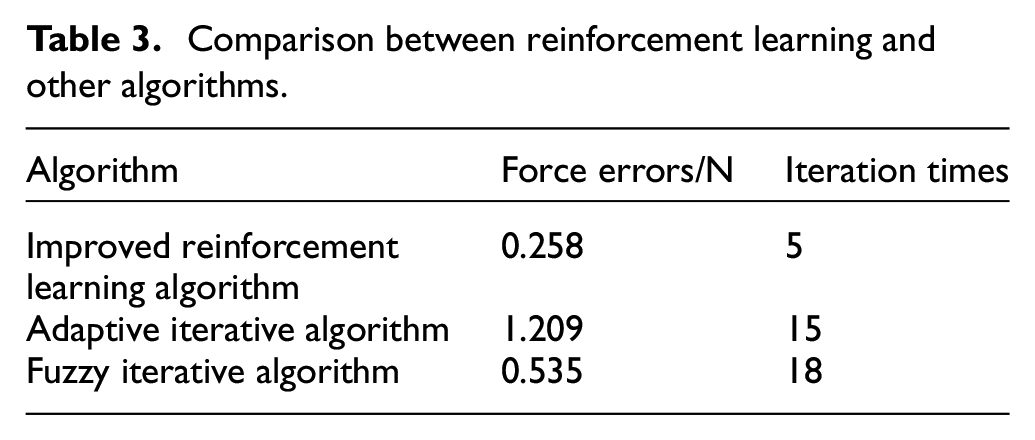

The simulation experiment shows that, through rapid adjustment, the mechanical arm of the polishing robot can track the force stably after contacting the blade surface. After seven learning iterations, the manipulator can adjust its end position quickly, so that the end contact force stabilizes at the desired force in a shorter time. At that point the force control performance is close to stable, and the force settles quickly to the expected value without overshoot. After 20 learning iterations, the optimal control strategy is obtained and the cumulative cost is at its minimum. The end contact force of the manipulator then needs only 0.76 s to stabilize. Table 3 compares the reinforcement learning-based impedance parameter optimization algorithm with a fuzzy iterative algorithm 21 and an adaptive iterative algorithm. 22 The reinforcement learning-based algorithm outperforms the fuzzy iterative algorithm in terms of the number of iterations and the force error.

Comparison between reinforcement learning and other algorithms.

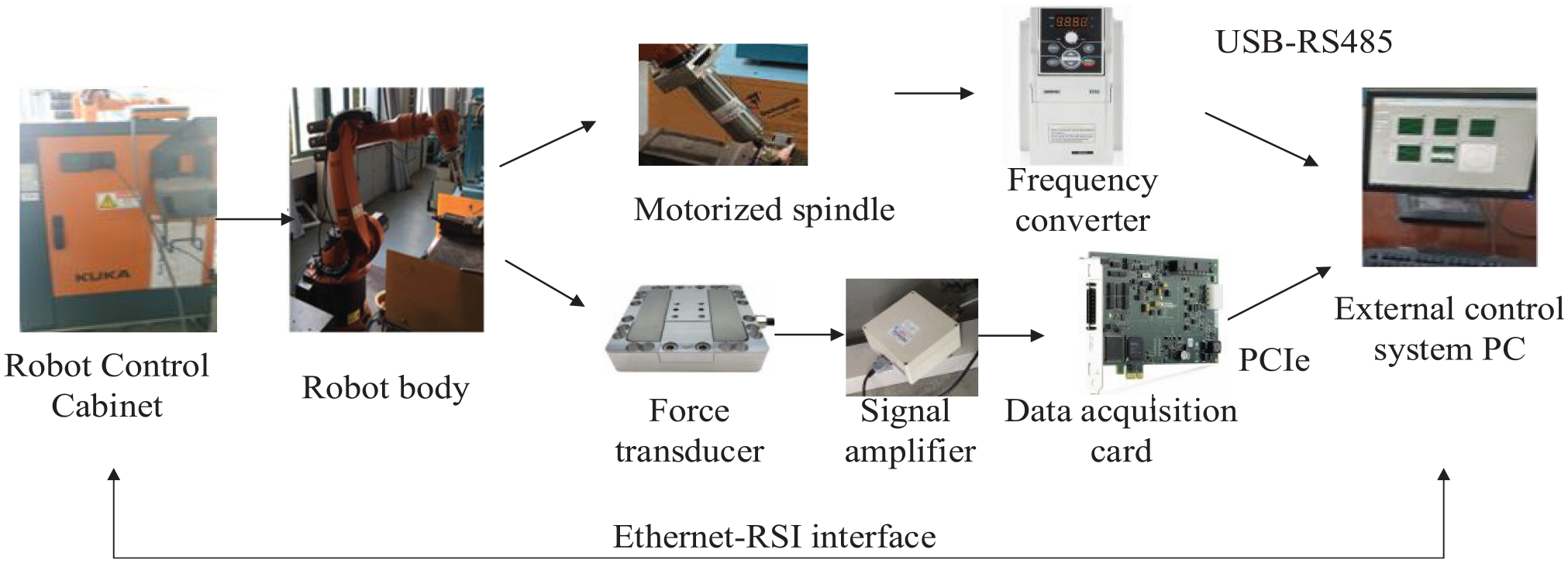

Experimental study

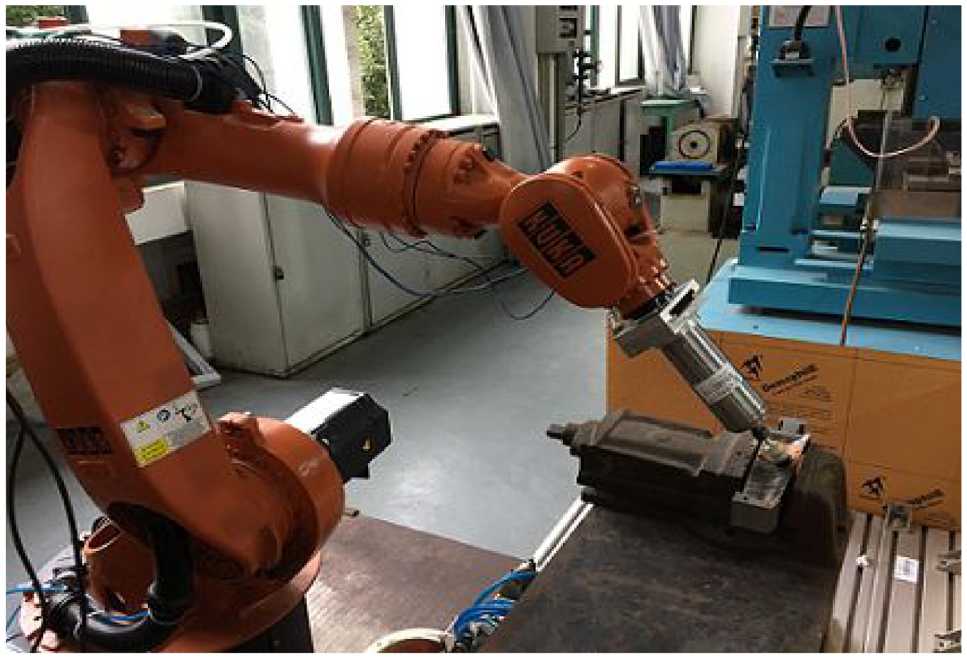

In this section, a polishing system for steam turbine blades is designed. The hardware of the experimental system comprises a KUKA KR16-2 industrial robot, the robot controller, external measurement equipment (an ME3DT120 three-dimensional force sensor), signal acquisition equipment (a signal amplifier and a PCIe-6320 signal acquisition card), the polishing device (connector, motorized spindle, and polishing tool), and a host computer, as shown in Figure 14. The hardware system drives the robot motion, collects the force signal, and drives the motorized spindle. The software system adopts a client/server (C/S) architecture. The robot's movement is controlled by an instruction program written in KRL, and parameters such as the end position of the robot are fed back to the host computer in real time. By processing the collected force signal together with the actual pose of the robot, the host computer calculates the position compensation value using the algorithm. The compensation value is transmitted to the robot through the KUKA-RSI Ethernet interface, so as to achieve stable control of the end polishing force and position.

System structure of surface polishing robot.

To verify the effect of impedance control, machining experiments with and without impedance control are carried out on the designed experimental system. The machining parameters are selected as follows. 23 A spherical polishing head is used, with a head diameter of 12 mm and a tool rod diameter of 3 mm. The spindle speed is 6000 r/min, the spindle feed rate is 2 mm/s, and the expected contact force is 5 N. The optimized impedance parameters are entered in the control software.

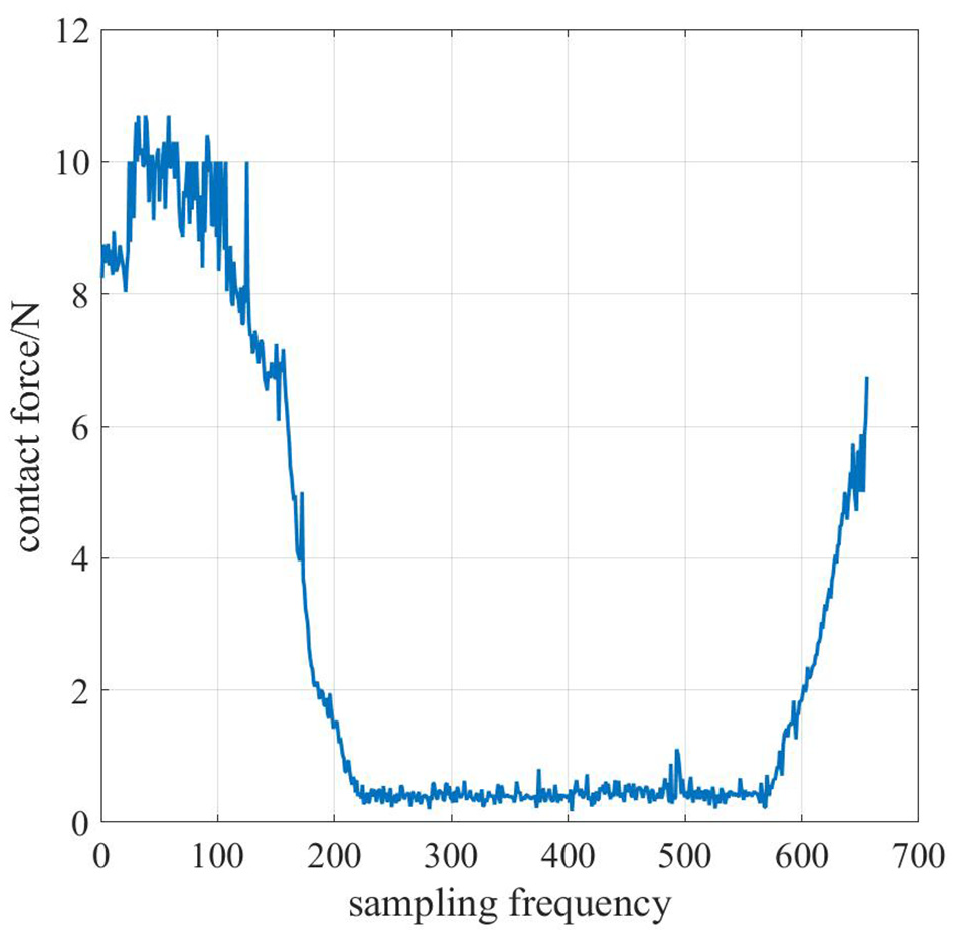

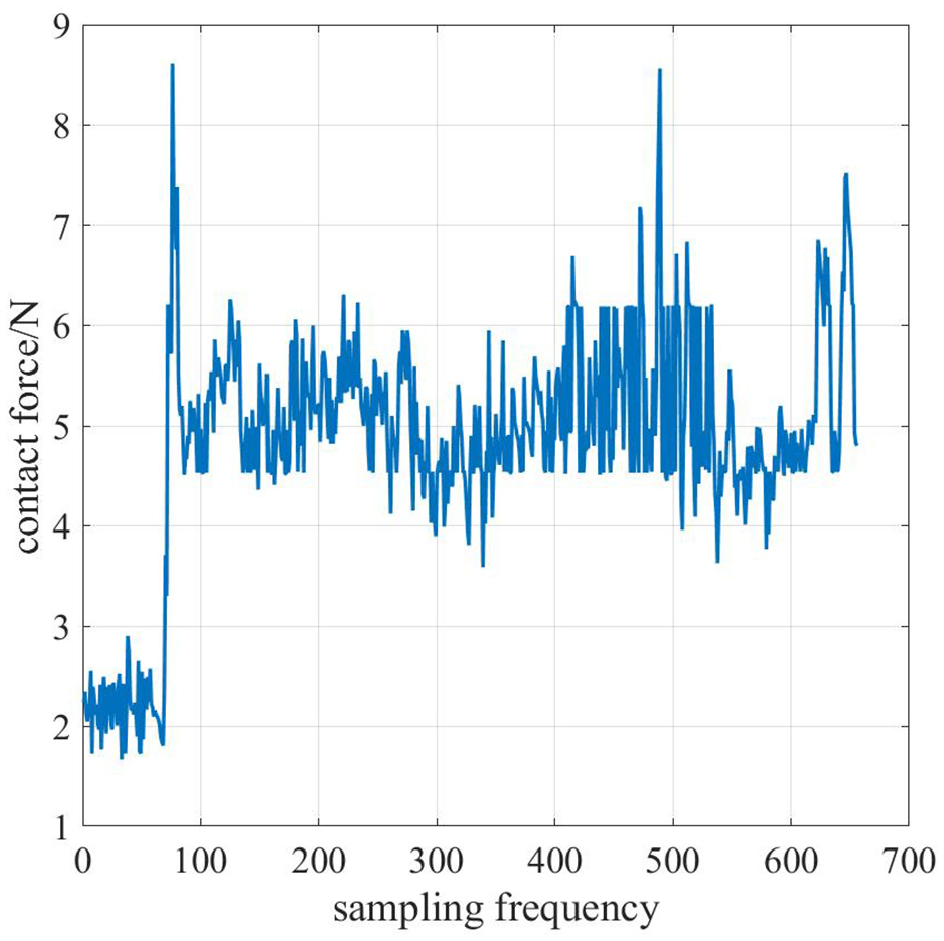

The robot is controlled to move from the zero position to the designated position in the impedance control experiment, and a constant reference force of 5 N is given for tracking. Firstly, the force tracking experiment is carried out without impedance control, and the planned trajectory is directly imported for processing. Then the impedance control is added to the system, and the initial impedance parameters in the polishing experiment are set according to the previous simulation results. Finally, the impedance parameters are adjusted according to the actual effect. Figure 15 is the contact force without impedance control. Figure 16 shows the change of contact force when the impedance parameters

Contact force without impedance control.

Contact force with impedance control.

Without impedance control, the contact force is unstable. The robot always follows the planned trajectory, because the cutter location point is not adjusted according to the contact force. With impedance control, when the polishing tool first contacts the workpiece, the contact force does not reach the expected value because of workpiece error, clamping error, measurement error, and so on. As impedance control proceeds, the contact force mostly remains within

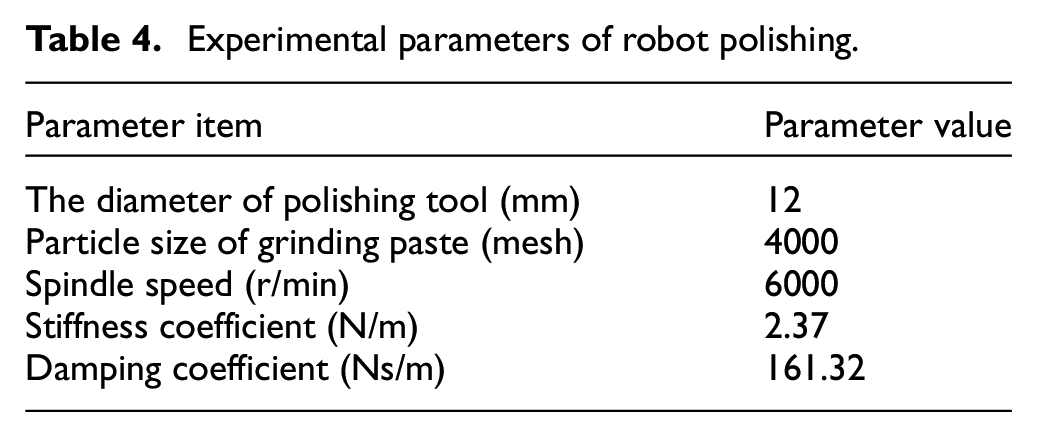

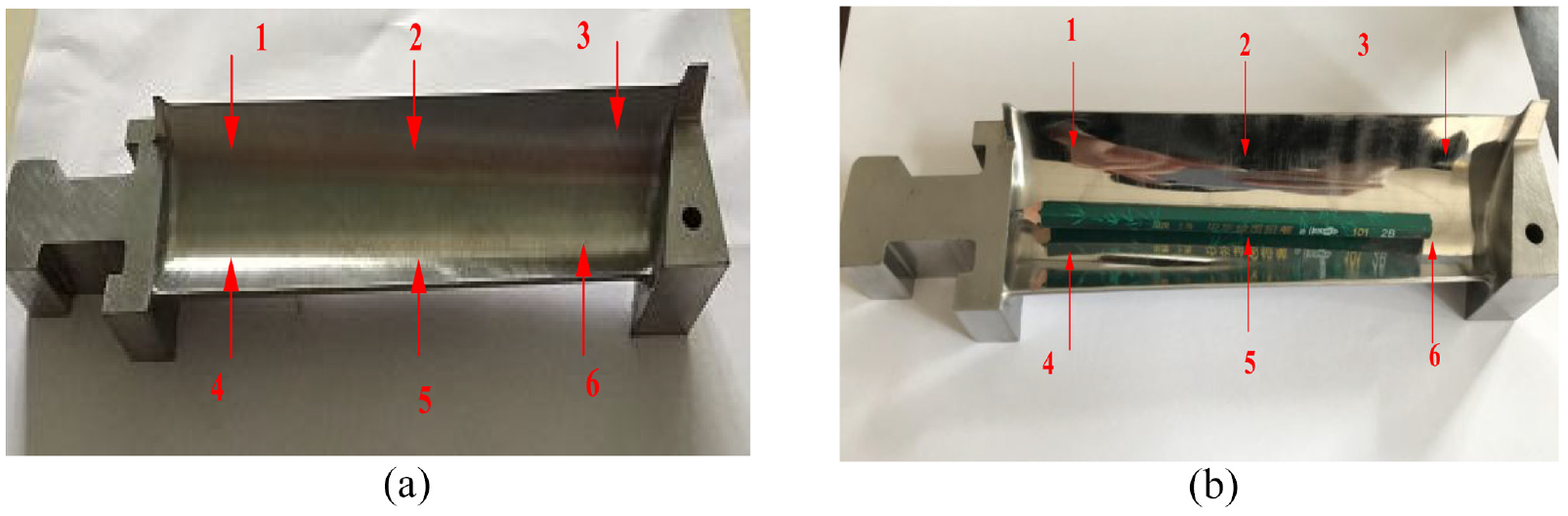

In the polishing experimental system, the inner arc surface of the steam turbine blade is polished to verify the effect of the impedance control algorithm and the control parameter optimization method of the polishing robot. Spherical wool felt combined with grinding paste is used to polish the semi-polished steam turbine blade. The polishing experiment of the steam turbine blade is shown in Figure 17. The main experimental parameters are listed in Table 4.

Polishing experiment of steam turbine blade.

Experimental parameters of robot polishing.

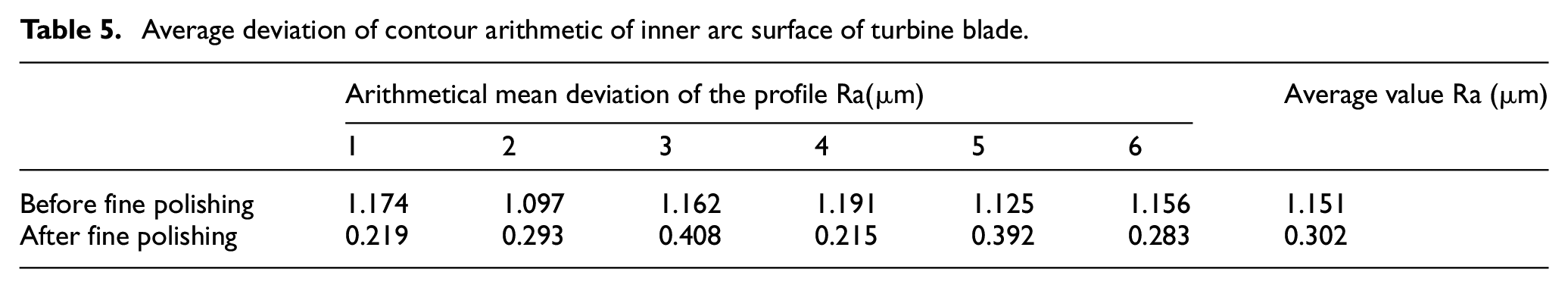

The surface quality of the inner arc surface of the steam turbine blade before and after polishing is shown in Figure 18. A TIME210 instrument is used to measure roughness. The values at six roughness measurement points before and after polishing are averaged to obtain the arithmetic mean deviation (Ra) of the inner arc surface contour of the steam turbine blade, listed in Table 5. The average surface roughness of the six measuring points is 1.151 μm before fine polishing and 0.302 μm after fine polishing, with no obvious texture, which meets the Ra = 0.8 μm requirement for the inner arc surface of the steam turbine blade. This verifies the feasibility of the proposed algorithm and the designed polishing system.
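For reference, the relative reduction in average roughness implied by these two measurements can be worked out directly. The percentage below is derived here from the reported values and is not a figure stated in the measurements themselves.

```latex
\frac{Ra_{\text{before}} - Ra_{\text{after}}}{Ra_{\text{before}}}
  = \frac{1.151\,\mu\text{m} - 0.302\,\mu\text{m}}{1.151\,\mu\text{m}}
  = \frac{0.849}{1.151}
  \approx 0.738
```

That is, the average roughness falls by roughly 74%, and the final value of 0.302 μm lies well below the 0.8 μm requirement.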

Effect before and after fine polishing of inner arc profile of turbine rotor blade: (a) before fine polishing and (b) after fine polishing.

Average deviation of contour arithmetic of inner arc surface of turbine blade.

Conclusion

The impedance control of a surface polishing robot and a parameter optimization method based on reinforcement learning are studied in this paper. The impedance control state-transition model of the surface polishing robot is established with a Gaussian process. An improved algorithm combining the dynamic matching method and the linearization method is used to predict the output distribution of the model, and the actual output error is kept within 20%. The improved reinforcement learning algorithm is used to train the impedance parameters, and the optimal impedance parameters are obtained and added to the control set. The feasibility of the algorithm is verified by simulation experiment. The experimental results show that the average roughness at the selected points of the steam turbine blade after polishing is 0.302 μm, which verifies the accuracy of the algorithm and the feasibility of the experimental system.

However, some aspects still need to be improved and studied. For example, the impedance control method for polishing force is studied for only one type of blade, so the generality of the algorithm is limited. In follow-up work, more types of steam turbine blades will be studied. The algorithm will be investigated in greater depth, and more sample data will be obtained to broaden the scenarios in which the algorithm is applicable.

Footnotes

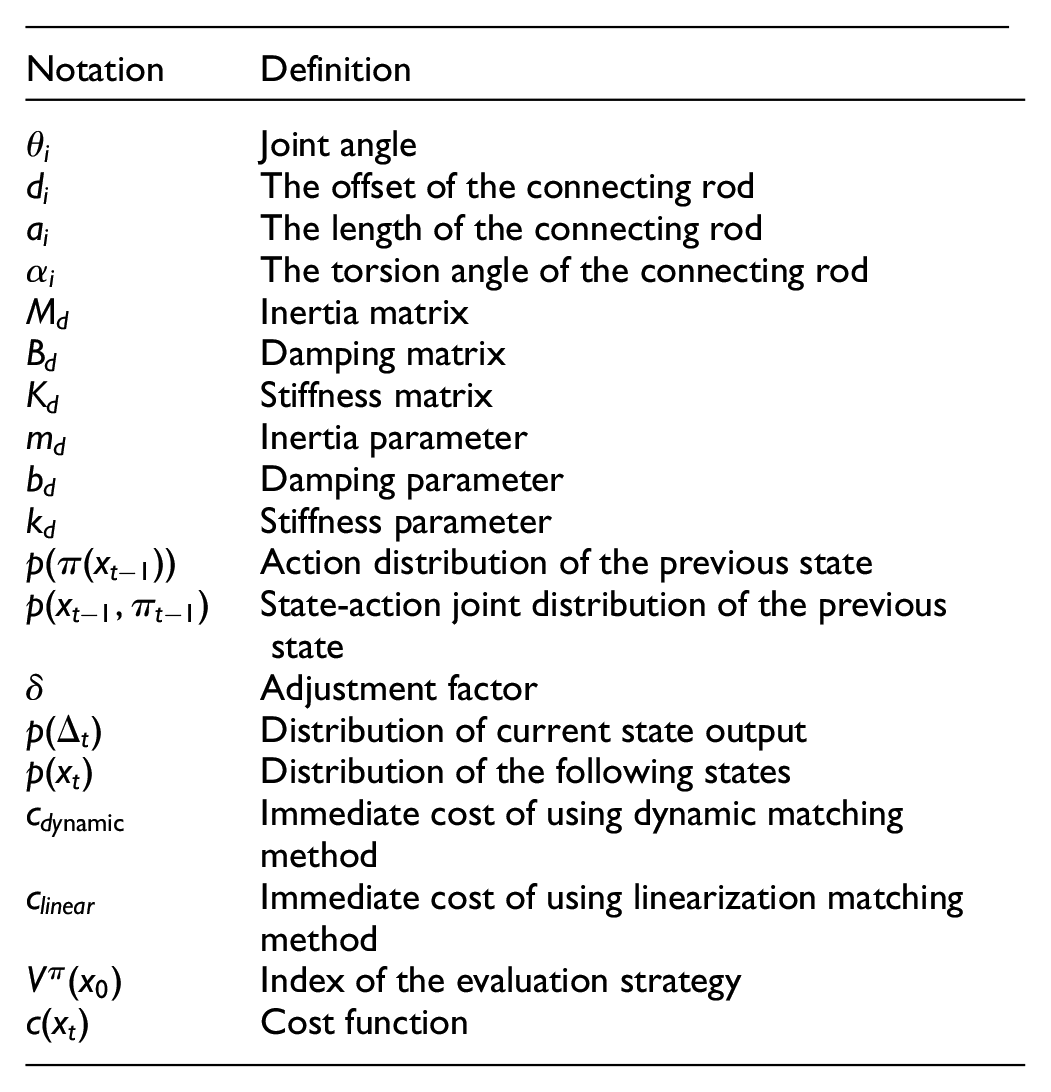

Appendix

| Notation | Definition |
|---|---|
| | Joint angle |
| | The offset of the connecting rod |
| | The length of the connecting rod |
| | The torsion angle of the connecting rod |
| | Inertia matrix |
| | Damping matrix |
| | Stiffness matrix |
| | Inertia parameter |
| | Damping parameter |
| | Stiffness parameter |
| | Action distribution of the previous state |
| | State-action joint distribution of the previous state |
| | Adjustment factor |
| | Distribution of current state output |
| | Distribution of the following states |
| | Immediate cost of using the dynamic matching method |
| | Immediate cost of using the linearization method |
| | Index of the evaluation strategy |
| | Cost function |

Author Contributions

Yufeng Ding (Associate Professor, School of Mechanical and Electrical Engineering, Wuhan University of Technology) proposed the impedance control method for the surface polishing robot and the theoretical framework. JunChao Zhao (Master, School of Mechanical and Electrical Engineering, Wuhan University of Technology) built the simulation model of surface polishing robot impedance control and carried out the simulation experiments. Xinpu Min (Master, School of Mechanical and Electrical Engineering, Wuhan University of Technology) optimized the impedance control parameters using the reinforcement learning algorithm and carried out related experiments.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study received financial support from National Natural Science Foundation of China (E51875429): Research on the theory and method of product manufacturing quality control in cloud manufacturing environment.

Code availability

Some models and code generated or used during the study are proprietary or confidential in nature and may only be used with restrictions (e.g. the code can only run in KUKA robot system).