Abstract

Previous research has highlighted that encounters with deepfakes induce uncertainty, skepticism, and mistrust among audiences. In this study, we relate perceived deepfake exposure to media cynicism. Deepfakes shake users’ sense of reality, increasing a need to rely on epistemic authorities, such as journalistic media, while raising fears of manipulation. Based on uncertainty management theory, we propose that two “epistemic virtues” moderate the relationship between deepfake exposure and media cynicism: self-efficacy and intellectual humility. In a survey of 1421 German internet users, we find that perceived deepfake exposure positively relates to media cynicism. Intellectual humility does not dampen this relationship. Deepfake detection self-efficacy may be more harmful than helpful in preventing media cynicism. We discuss these findings in the context of research indicating that users tend to overestimate their ability to detect deepfakes and the challenges the novel deepfake technology poses to audience trust in a digital information ecosystem.

Introduction

In 2017, an anonymous Reddit user named “deepfake” created a forum for sharing pornographic content created using face-swapping technology (Somers, 2020). Since then, the emerging deepfake technology has captured the interest and imagination of researchers and practitioners alike (Godulla et al., 2021). As a compound of the terms “deep learning” and “fake,” deepfakes portray manipulated, fictive content based on existing audiovisual recordings. Hence, deepfake technology enables users to “create audio and video of real people saying and doing things they never said or did” (Citron & Chesney, 2019, p. 1753). Today, deepfakes are often associated with “fake news” as they may mislead audiences (Godulla et al., 2021). Concerns about “fake news,” or rather mis- and disinformation, are prevalent in today’s public discourse (Altay, 2023).

Indeed, deepfakes pose new challenges to audiences—primarily due to the high quality of their manipulations (Lee & Shin, 2022) and audiences’ deeply rooted willingness to believe audio-visual content (Fehrensen & Täubner, 2019). Therefore, experts and lay audiences alike are concerned about the potential of deepfakes to deceive the public (De Ruiter, 2021; Fehrensen & Täubner, 2019), to be used to spread propaganda and misinformation (Neudert & Marchal, 2019), and to disrupt political processes (Ikenga & Nwador, 2024). Initial studies indicate that audiences, in fact, struggle to correctly identify deepfakes (Bray et al., 2023; Thaw et al., 2020) and that exposure to deepfakes induces a sense of uncertainty, skepticism, or even mistrust (Hameleers & Marquart, 2023; Ternovski et al., 2022; Vaccari & Chadwick, 2020). However, some argue that skepticism may actually be beneficial, as citizens are required to remain vigilant in the digital media environment (Tsfati, 2003).

Objective

In this study, we examine the relationship between perceived deepfake exposure and media cynicism. Media cynicism is characterized as a generalized rejection and denunciation of news media (Jackob, Schultz, et al., 2019). It is related to political disaffection, belief in conspiracy theories, and news disengagement (Cappella & Jamieson, 1997; Jackob, Jakobs, et al., 2019) and is therefore generally understood as an unambiguously destructive political attitude (Quiring et al., 2021). We propose that perceived deepfake exposure relates to media cynicism because deepfakes unsettle the established reliance on the veracity of audio-visual content (Jacobsen & Simpson, 2023). Deepfake technology thus induces a sense of uncertainty. As a result, individuals need to rely more on epistemic authorities, such as journalistic media, to uncover the truth. However, deepfake technology also triggers fears of manipulation (Bendahan Bitton et al., 2024), which may especially affect those skeptical of the intentions of established media.

Based on uncertainty management theory (Brashers, 2001), we propose that both deepfake detection self-efficacy and intellectual humility moderate the relationship between perceived deepfake exposure and media cynicism because they are likely to alleviate anxiety in the face of uncertainty. Beebe (2024) describes “epistemic autonomy,” that is, relying upon oneself in one’s reasoning, judgment, and decision making, as a virtue in the face of a complex, turbulent, and challenging information environment. Deepfake detection self-efficacy is an approximation of epistemic autonomy in the present context. Self-efficacy describes an individual’s belief in their ability to perform a task (Bandura, 1982), here the ability to detect a deepfake. Media literacy interventions, thus, often aim to bolster self-efficacy (Jeong et al., 2012). However, Beebe (2024) further points out that “epistemic autonomy” alone may lead to an overreliance on one’s capability to navigate the information environment (i.e., overconfidence and overreliance on heuristics). He therefore proposes intellectual humility as a second, complementary epistemic virtue. Intellectual humility entails recognizing the limitations in one’s personal beliefs and one’s capability to independently assess the veracity of information (Leary et al., 2017). Those high in intellectual humility, therefore, are willing to seek out additional information and rely on epistemic authorities, for example, in the context of identifying misinformation (Koetke et al., 2022).

This study is based on an online survey of German citizens (quotas defined for gender, age, and educational attainment), conducted in October 2022 (n = 1421). Our study contributes to the state of research on deepfakes by highlighting the relationship between perceived encounters with deepfakes and media cynicism. We examine the role of detection self-efficacy and intellectual humility and analyze how they moderate the link between perceived deepfake exposure and media cynicism. We find that deepfake self-efficacy can strengthen rather than dampen the relationship between perceived deepfake exposure and media cynicism. This speaks to previous research indicating that users tend to overestimate their ability to detect deepfakes. Intellectual humility, instead, does not protect individuals from heightened levels of media cynicism. We argue that some populations, such as those on the political right, may be especially vulnerable to the deleterious influence of deepfakes on audience trust due to high levels of pre-existing media cynicism. We conclude by discussing how the emergence of deepfake technology poses new challenges to audience trust in a digital information ecosystem.

Literature Review

Perceived Deepfake Exposure and Media Cynicism

Media cynicism is defined as a generalized rejection and denunciation of news media (Jackob, Schultz, et al., 2019). While skepticism towards media can be a healthy stance of an engaged citizenry (Tsfati, 2003), cynicism is “an exclusively negative, highly sentencing, and destructive attitude” (Quiring et al., 2021, p. 3500) that manifests itself in a rejection of institutions. Cynicism towards media is likely to correlate with an oppositional stance, belief in conspiracy theories, political disaffection, a rejection of news, or news disengagement (Cappella & Jamieson, 1997; Jackob, Jakobs, et al., 2019; Quiring et al., 2021). To understand the potential relationship between (perceived) exposure to deepfakes and media cynicism, it is necessary to more closely examine the cynicism construct.

Cynicism typically arises in dyadic relationships characterized by uncertainty and a need to rely on another despite a lack of trust (Smith & Pope, 1990). It has been studied extensively in the context of organizational uncertainty (e.g., in the context of organizational change) or during intimidating experiences such as police encounters (cf., Dean et al., 1998; Regoli, 1976). Cynicism has been described as a psychological coping mechanism (Lutz et al., 2020). Developing an attitude of cynicism may alleviate stress induced by the need to rely on another despite uncertainty and a lack of trust (Andersson, 1996; Dean et al., 1998). Cynicism implies a heightened level of mistrust, even antagonism, that is, an assumption of ill will (Almada et al., 1991; Mills & Keil, 2005). It does, however, provide meaning and is helpful in navigating a challenging environment (Brashers, 2001).

When considering the relationship between perceived exposure to deepfakes and media cynicism, two causal paths could be argued. First, those cynical towards media may be more likely to suspect that videos encountered online are AI-generated and thus report higher levels of deepfake exposure. There are no previous studies examining the antecedents of perceived deepfake exposure; however, hostile media perceptions have been linked to perceived exposure to “fake news” or misinformation (Halpern et al., 2019; Hameleers, 2025). Second, encountering deepfakes online could induce a sense of cynicism in an individual. While the present study is based on cross-sectional data and, thus, correlational evidence, its conceptual argument rests on this latter proposition. Cynicism is thus conceptualized as a state (cf., Andersson, 1996; Dean et al., 1998) that can vary given different levels of deepfake exposure.

While no previous study has examined the relationship between deepfake exposure and media cynicism, experimental evidence shows that deepfake exposure can induce a sense of uncertainty, skepticism, or mistrust. Ternovski et al. (2022) found that “informing participants about deepfakes did not enhance participants’ ability to successfully spot manipulated videos” but, instead, induced them to “disbelieve any political video they were shown as part of the experiment – real or fabricated” (Ternovski et al., 2022, p. 3). This finding is in line with Vaccari and Chadwick’s (2020) study where the most notable effect of exposing participants to deepfakes was a rise in uncertainty. They conclude that “deepfakes may not necessarily deceive individuals, but they may sow uncertainty” (Vaccari & Chadwick, 2020, p. 9). Hameleers and Marquart (2023) found that just labeling a video as a deepfake reduces its credibility, regardless of the veracity of the label. Ahmed (2021) finds that social media users encountering deepfakes grow more skeptical towards news on social media in general.

We propose that perceived exposure to deepfakes positively relates to media cynicism, as the deepfake technology induces a deep sense of uncertainty, which can at the same time increase one’s reliance on journalistic media to report the truth, but also induce fears of manipulation especially among those skeptical of the intentions of established media. Encounters with deepfakes can trigger a general sense of insecurity and uncertainty (Bendahan Bitton et al., 2024). Deepfakes call into question the veracity of audio-visual media, which have long been held to be especially reliable (Hameleers et al., 2023; Lewandowsky et al., 2017; Vaccari & Chadwick, 2020). Jacobsen and Simpson (2023, p. 2) posit that “deepfakes arguably represent a rupture in society’s trust of the image.” Deepfakes open new opportunities for manipulating (audio-visual) media content (Jacobsen & Simpson, 2023). Awareness of deepfakes therefore has the potential to more deeply affect audiences’ attitudes towards news than previous instances of misinformation. Some may fear that the deepfake technology will allow media to distort the truth and mislead the public more easily than ever before. We propose the following:

H1: Perceived exposure to deepfakes is positively related to media cynicism.

The Role of Self-Efficacy

Uncertainty management theory (Brashers, 2001) explores how individuals cope with experiences of uncertainty to alleviate feelings of anxiety—for example, by seeking additional information. “Uncertainty exists (…) when people feel insecure in their own state of knowledge or the state of knowledge in general” (Brashers, 2001, p. 478). We propose that the relationship between exposure to deepfakes and media cynicism is moderated by what Beebe (2024) describes as “epistemic autonomy,” that is, reliance upon oneself in one’s reasoning, judgment, and decision making. We focus on deepfake detection self-efficacy to approximate the concept of epistemic autonomy in the context of exposure to deepfakes.

Studies have explored audiences’ abilities to detect deepfakes, mostly finding that they struggle to reliably recognize a deepfake (e.g., Lewis et al., 2022; Thaw et al., 2020). Yet, audiences exhibit ample confidence in their ability to do so. Bray et al. (2023) find that in their sample, study participants’ detection accuracy was rather low (less than 50%). However, the authors point out that participants expressed high confidence in their choices regardless of actual detection accuracy. Köbis et al. (2021, p. 1) even titled their study in line with these findings: “People cannot detect deepfakes but think they can.” Confidence in one’s ability to detect a deepfake does not seem to be reliably related to actual detection accuracy (Lewis et al., 2022). In the context of climate change misinformation, Doss et al. (2023) found that perceived ability to detect deepfakes did not consistently relate to actual deepfake detection. However, we propose that confidence in one’s ability to detect deepfakes—which we conceptualize as deepfake detection self-efficacy—may play a role in the relationship between perceived deepfake exposure and media cynicism.

Bandura (1982, p. 122) describes self-efficacy as a personal judgment of “how well one can execute courses of action required to deal with prospective situations.” Notably, self-efficacy is distinct from concepts such as skills, which refer to an individual’s actual capability of performing a task (cf., Hargittai & Hinnant, 2008). In this study, we thus do not attempt to assess audiences’ actual detection capability but their confidence in this capability. Hopp (2022, p. 229) proposes the concept of fake news self-efficacy: “that is, confidence in one’s ability to identify factually incorrect current events information.” We apply the self-efficacy concept to the detection of deepfakes.

We propose that detection self-efficacy moderates the relationship between perceived deepfake exposure and media cynicism. An individual with high confidence in their ability to detect a deepfake should experience less insecurity and uncertainty when navigating their information environment (cf., Compeau & Higgins, 1995; Kim & Glassman, 2013; Wijayanti et al., 2025). Also, they should be less unsettled by the potential lack of veracity of audio-visual media. As a result, they are likely to perceive less of a threat of manipulation and less of a dependency on journalistic media to reveal the truth. Detection self-efficacy, thus, serves as a psychological resource, a buffer against the need to resort to cynical beliefs to alleviate anxiety in the face of uncertainty (Brashers, 2001). Conversely, a lack of self-efficacy will make individuals more susceptible to uncertainty, mistrust, a sense of powerlessness, and fears of manipulation. We propose the following:

H2: Detection self-efficacy negatively moderates (weakens) the relationship between perceived exposure to deepfakes and media cynicism.

The Role of Intellectual Humility

A sense of self-confidence in one’s ability to discern the truth can be considered an “epistemic virtue”: it can motivate a quest for truth (Beebe, 2024). However, it can also lead individuals to overestimate this ability, as empirical studies on audiences’ abilities to accurately identify deepfakes illustrate (Doss et al., 2023; Köbis et al., 2021; Lewis et al., 2022). Beebe (2024) therefore argues that, aside from self-confidence, another complementary and critical epistemic virtue is intellectual humility.

Intellectual humility is a concept increasingly explored in the context of mis- and disinformation studies (Bowes & Fazio, 2024). It has been defined as “recognizing that a particular personal belief may be fallible, accompanied by an appropriate attentiveness to limitations in the evidentiary basis of that belief and to one’s own limitations in obtaining and evaluating relevant information” (Leary et al., 2017, p. 793). We propose that intellectual humility moderates the relationship between perceived deepfake exposure and media cynicism because it serves as a psychological resource that alleviates the stress of uncertainty and protects individuals from jumping to conclusions (Bland, 2024; Rieger et al., 2024). Those high in intellectual humility recognize and embrace uncertainty rather than experiencing it as an anxiety-inducing burden. They seek out epistemological authorities rather than questioning or mistrusting their motivations (Marie & Petersen, 2024).

Studies show, for example, that individuals high in intellectual humility are eager to seek more information (Gorichanaz, 2022) and less likely to rely on heuristics (Bowes et al., 2023; Zmigrod et al., 2019). In the context of mis- and disinformation, those high in intellectual humility are motivated to engage in behavioral processes to counter misinformation (e.g., fact-checking and seeking alternative opinions; Koetke et al., 2022) and are thus less susceptible to fake news (Bowes & Tasimi, 2022). Gross and Balaban (2024) show that the effect of a debunking intervention on conspiracy beliefs was moderated by intellectual humility. Intellectual humility negatively relates to conspiratorial thinking (Bowes & Tasimi, 2022). Those high in intellectual humility should therefore be less likely to generally mistrust institutions, such as journalism, or reflexively ascribe them questionable intentions. Marie and Petersen (2024) argue that intellectual humility implies a willingness to rely on others for information. This trusting propensity should safeguard against cynical beliefs, which are characterized by mistrust. To summarize, we propose the following:

H3: Intellectual humility negatively moderates (weakens) the relationship between perceived exposure to deepfakes and media cynicism.

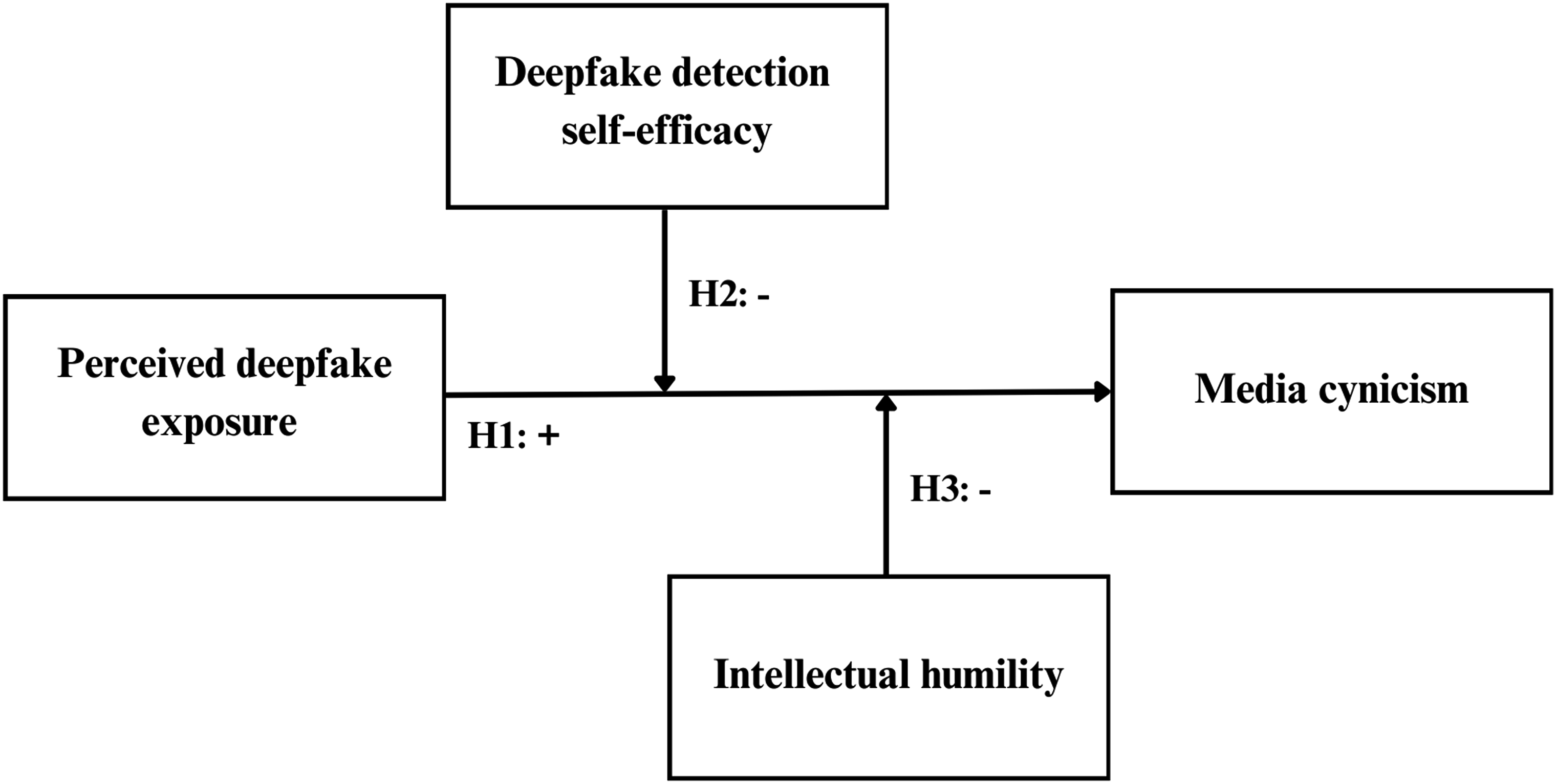

To summarize, we address the following research question (RQ): What are the roles of detection self-efficacy and intellectual humility in the relationship between perceived deepfake exposure and media cynicism? Figure 1 presents the overall research model.

Figure 1. Research model.
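The research model can be read as a moderated regression. The following is a sketch in illustrative notation, which is an assumption and not the notation of the analysis reported in this study: MC denotes media cynicism, DE perceived deepfake exposure, SE detection self-efficacy, IH intellectual humility, and C_k the control variables.

$$
\mathrm{MC}_i = b_0 + b_1\,\mathrm{DE}_i + b_2\,\mathrm{SE}_i + b_3\,\mathrm{IH}_i + b_4\,(\mathrm{DE}_i \times \mathrm{SE}_i) + b_5\,(\mathrm{DE}_i \times \mathrm{IH}_i) + \sum_k c_k\,C_{ik} + \varepsilon_i
$$

Under this reading, the two moderation hypotheses correspond to negative product-term coefficients, b_4 < 0 and b_5 < 0, while the hypothesized positive relationship between exposure and cynicism corresponds to b_1 > 0 in the model without product terms.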

Methods

Sample

We use data collected from an online survey in Germany to address the research question. An online survey was chosen because it facilitates access to participants, supports high response rates, and suits a subject that concerns a phenomenon occurring online (Gever, 2024; Ndiinee & Gever, 2025). The survey was fielded in October 2022. A certified market research institute provided access to the participants, who were invited by email to respond and received a small monetary compensation upon completion. In total, 1664 respondents completed the survey. Quotas were defined on the sample composition in terms of age, gender, and state of residency within Germany to ensure equivalence with the overall population. The data set was adjusted so that only respondents who fully completed the questionnaire were considered for data analysis (1421 respondents). The average age of respondents was 48 years, with 49.3% of them male and 50.7% female. Just under half of the respondents (44.6%) had a monthly net income of between 1500 and 3000, while about a quarter each reported higher or lower levels of income. Furthermore, 46.9% of respondents had a low, 28.6% a medium, and 24.5% a high educational attainment level, measured by the highest educational qualification obtained by the respondents (low: no qualification, still at school, and lower or intermediate secondary school leaving certificate; medium: general higher education entrance qualification; high: university degree and doctorate).

Measures

At the beginning of the questionnaire, respondents were presented with a short definition of what is considered a deepfake. Additionally, they were provided with a deepfake example of voice actor Boet Schouwink using deepfake technology to create a simulation of the actor Morgan Freeman (Diep, 2021). After watching the video, all respondents were asked how often they thought they had contact with a deepfake video online in the last 12 months (never, one to three times, four to six times, seven to nine times, more than nine times). We focus on perceived exposure to deepfakes for several reasons. First, previous studies show that participants struggle to accurately identify deepfakes. Actual deepfake exposure, therefore, is not necessarily strongly related to perceived exposure. Second, the prevalence of deepfakes in the information environment is low and therefore difficult to observe. Third, we are interested in how perceived dangers emanating from deepfakes relate to a generalized attitude towards media. Such a relationship is dependent upon subjective threat perceptions more than on objective deepfake exposure. Thus, we do not claim that self-reported deepfake exposure is a valid measure of actual deepfake exposure.

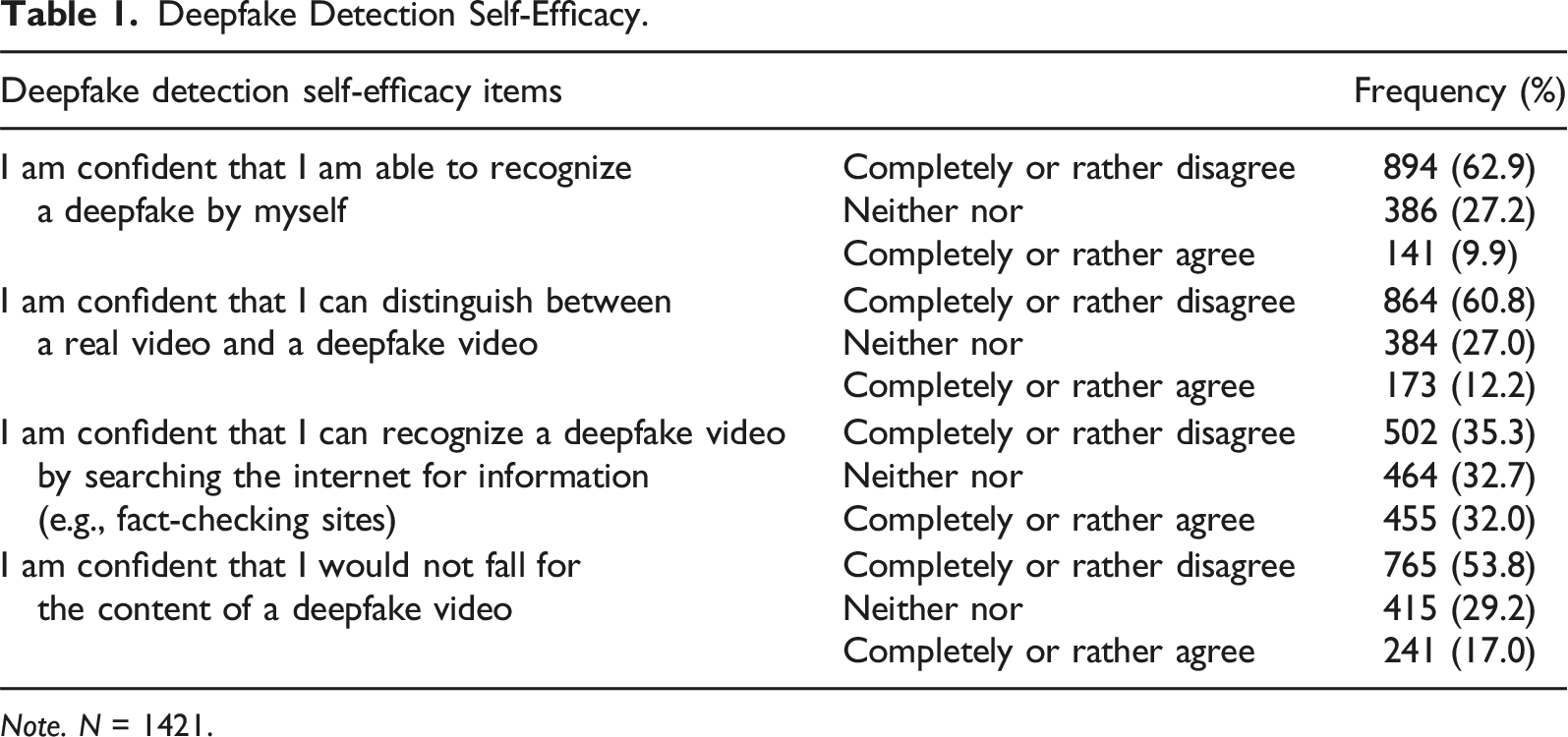

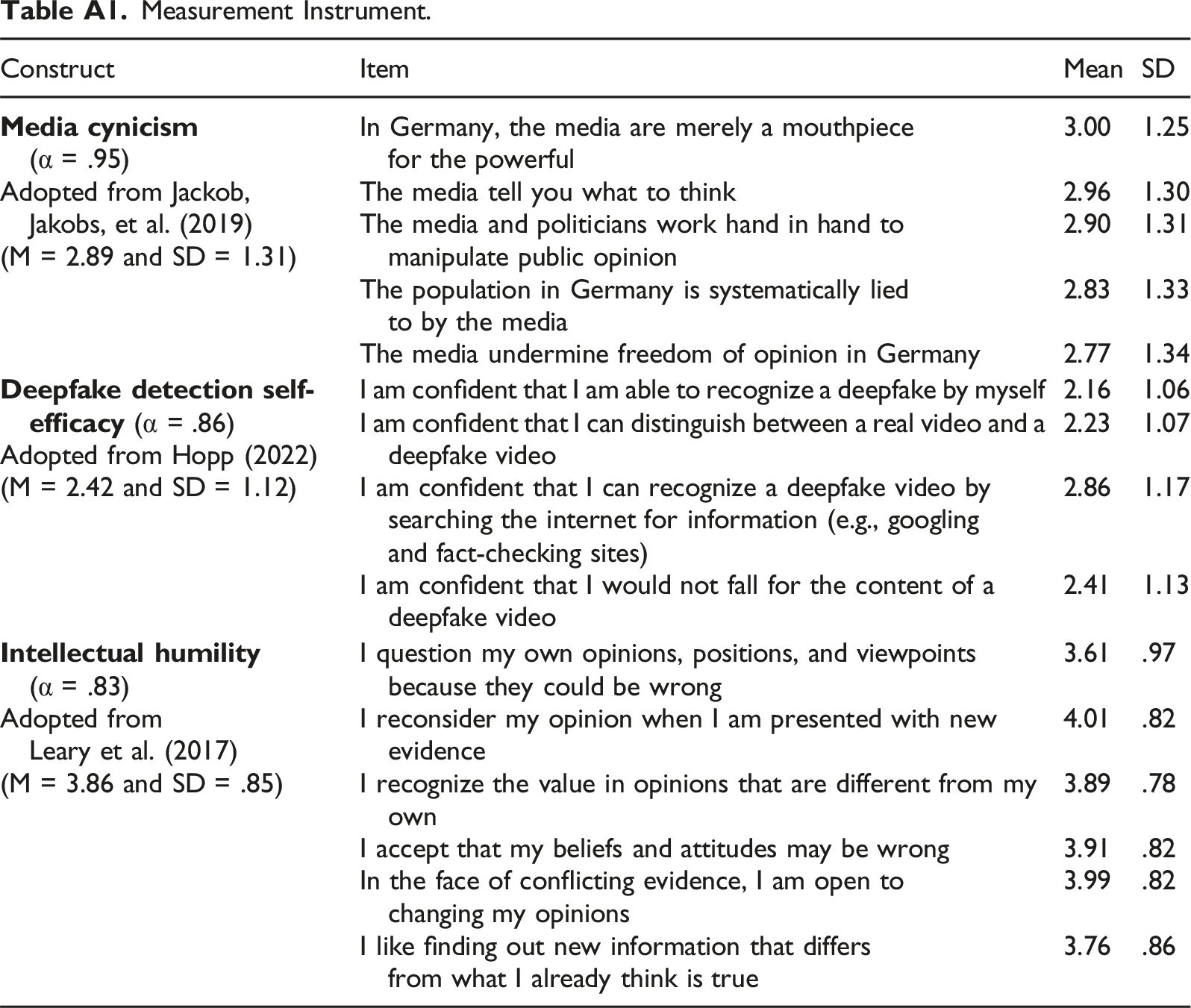

The media cynicism scale developed by Jackob, Jakobs, et al. (2019) was included to determine the respondents’ attitudes towards the media and was measured using five items (e.g., the population in Germany is systematically lied to by the media). All items were assessed on a five-point Likert scale (do not agree at all to fully agree). A scale for the measurement of deepfake detection self-efficacy was developed for the purposes of this study based on Hopp (2022). Its four items were assessed on a five-point Likert scale (do not agree at all to fully agree): (1) I am confident that I am able to recognize a deepfake myself, (2) I am confident that I can distinguish between a real video and a deepfake video, (3) I am confident that I can recognize a deepfake video by searching the internet for information (e.g., fact-checking sites), and (4) I am confident that I would not fall for the content of a deepfake video. Intellectual humility was measured following Leary et al. (2017, p. 795) based on a five-point Likert scale (do not agree at all to fully agree). For an overview of all items used in the measurement of media cynicism, deepfake detection self-efficacy, and intellectual humility, please see Appendix Table 1.

The study included a number of control variables. Digital skills were assessed following Hargittai and Hsieh (2012): participants were asked to rate their knowledge of six internet terms such as PDF, Cache, or Spyware (five-point Likert scale from not good at all to very good). Self-reported skills were included to contrast them with self-efficacy. Participants were queried on their frequency of use of internet applications (e.g., information services, messaging services, and online banking) and social media (e.g., Facebook, YouTube, and TikTok). Both were measured using a six-point Likert scale (never to several times a day). Those with high levels of internet and social media use can be expected to encounter more deepfakes, but also to be less skeptical towards news encountered online (Ahmed, 2021).

Political orientation was measured by asking the respondents for their party preference in the next federal election, providing the six most popular parties in Germany, listed from left- to right-wing, and the option of not giving an indication or naming a different one. Political orientation was measured because previous studies have shown individuals with right-wing orientation to be more prone to believe and share misinformation and display higher levels of media cynicism.

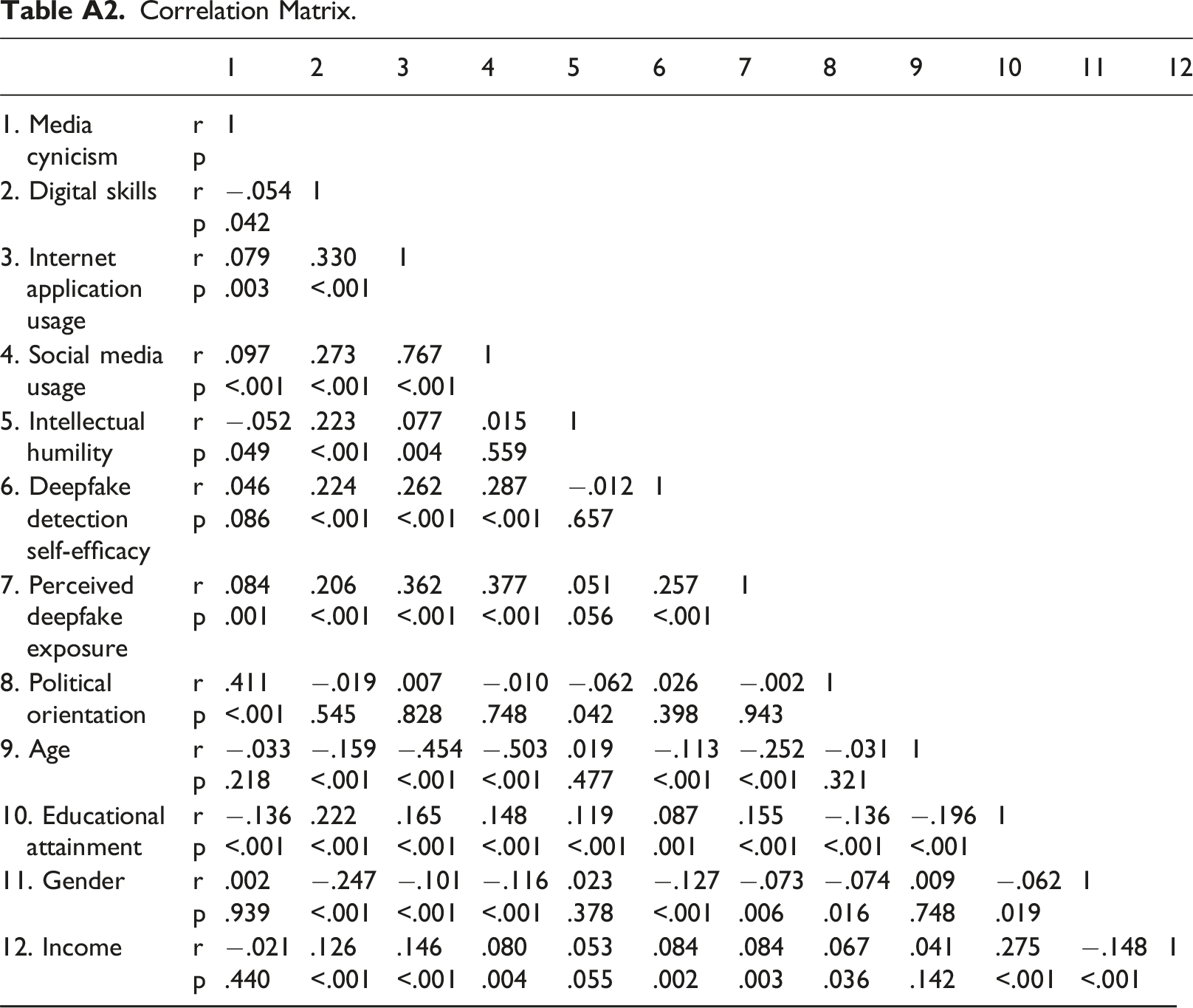

Mean Indices

To assess respondents’ media cynicism, self-efficacy, intellectual humility, digital skills, internet application usage, and social media usage, mean indices were calculated for each construct. A reliability analysis was conducted to determine the internal consistency of the developed deepfake detection self-efficacy scale. As Cronbach’s alpha shows, the internal consistency of the scale is high (α = .86). Descriptive and statistical data analyses were conducted using the software SPSS Statistics 2021 (version 28.0.1.0). Furthermore, a correlation matrix of all variables is presented in Appendix Table 2. The analysis helps to rule out problems of multicollinearity. It also helps to separate out the relationships between the main predictors of the regression analyses. Finally, all requirements for the linear regression were checked and fulfilled (linearity, outliers, independence of residuals, multicollinearity, and equality of variance).
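To illustrate the two computations named above, the following minimal sketch calculates a mean index and Cronbach's alpha in Python. It is not the procedure used in this study, which relied on SPSS; the function names and the response data are made up for demonstration.

```python
import numpy as np

def mean_index(items):
    """Row-wise mean across the items of one construct (respondents x items)."""
    return np.asarray(items, dtype=float).mean(axis=1)

def cronbach_alpha(items):
    """Cronbach's alpha: k/(k-1) * (1 - sum of item variances / variance of sum score)."""
    x = np.asarray(items, dtype=float)
    k = x.shape[1]
    item_var_sum = x.var(axis=0, ddof=1).sum()   # sum of the k item variances
    total_var = x.sum(axis=1).var(ddof=1)        # variance of the summed scale
    return k / (k - 1) * (1 - item_var_sum / total_var)

# Hypothetical answers of five respondents to four five-point items
# (invented values, not data from the survey).
responses = [
    [1, 2, 1, 2],
    [2, 2, 3, 2],
    [3, 3, 3, 4],
    [4, 5, 4, 4],
    [5, 4, 5, 5],
]

index = mean_index(responses)
alpha = cronbach_alpha(responses)
print(index)
print(round(alpha, 2))
```

Alpha approaches 1 when the items move together across respondents, which is why a high value is read as evidence that the items can be averaged into a single index.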

Results

Descriptive Data Analysis

Deepfake Detection Self-Efficacy.

Note. N = 1421.

Predictors of Media Cynicism

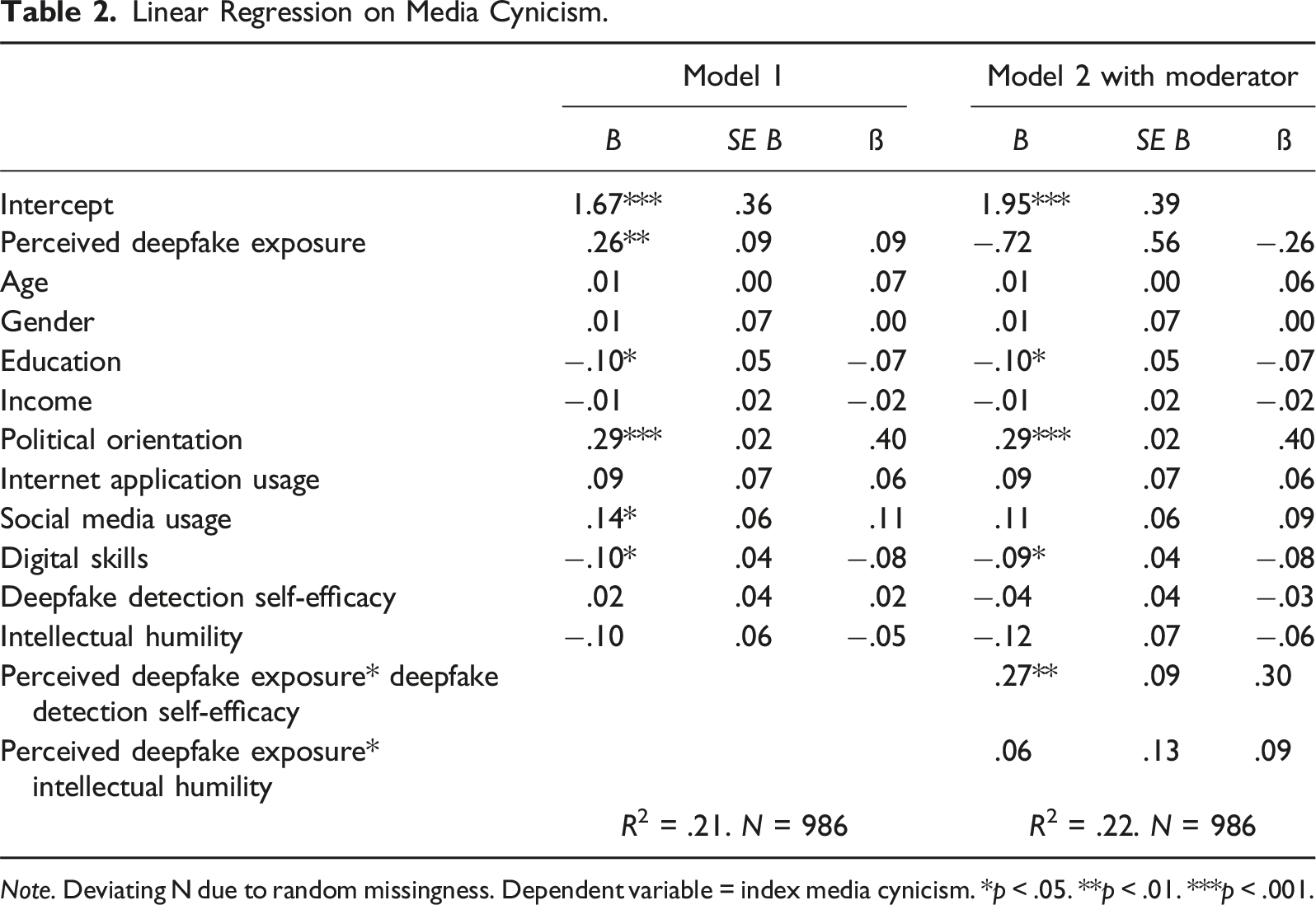

Linear Regression on Media Cynicism.

Note. Deviating N due to random missingness. Dependent variable = index media cynicism. *p < .05. **p < .01. ***p < .001.

Results show that perceived exposure to deepfakes is a significant positive predictor of media cynicism, confirming H1. Social media usage is also positively related to media cynicism, but internet application usage is not. Those on the political right exhibit higher levels of media cynicism. Additionally, digital skills are shown to be significantly negatively related to media cynicism. Finally, those with lower levels of educational attainment report more media cynicism. The regression model explained a total of 21% (adjusted R² = .20) of the variance in media cynicism (F(11, 974) = 23.67; p < .001).

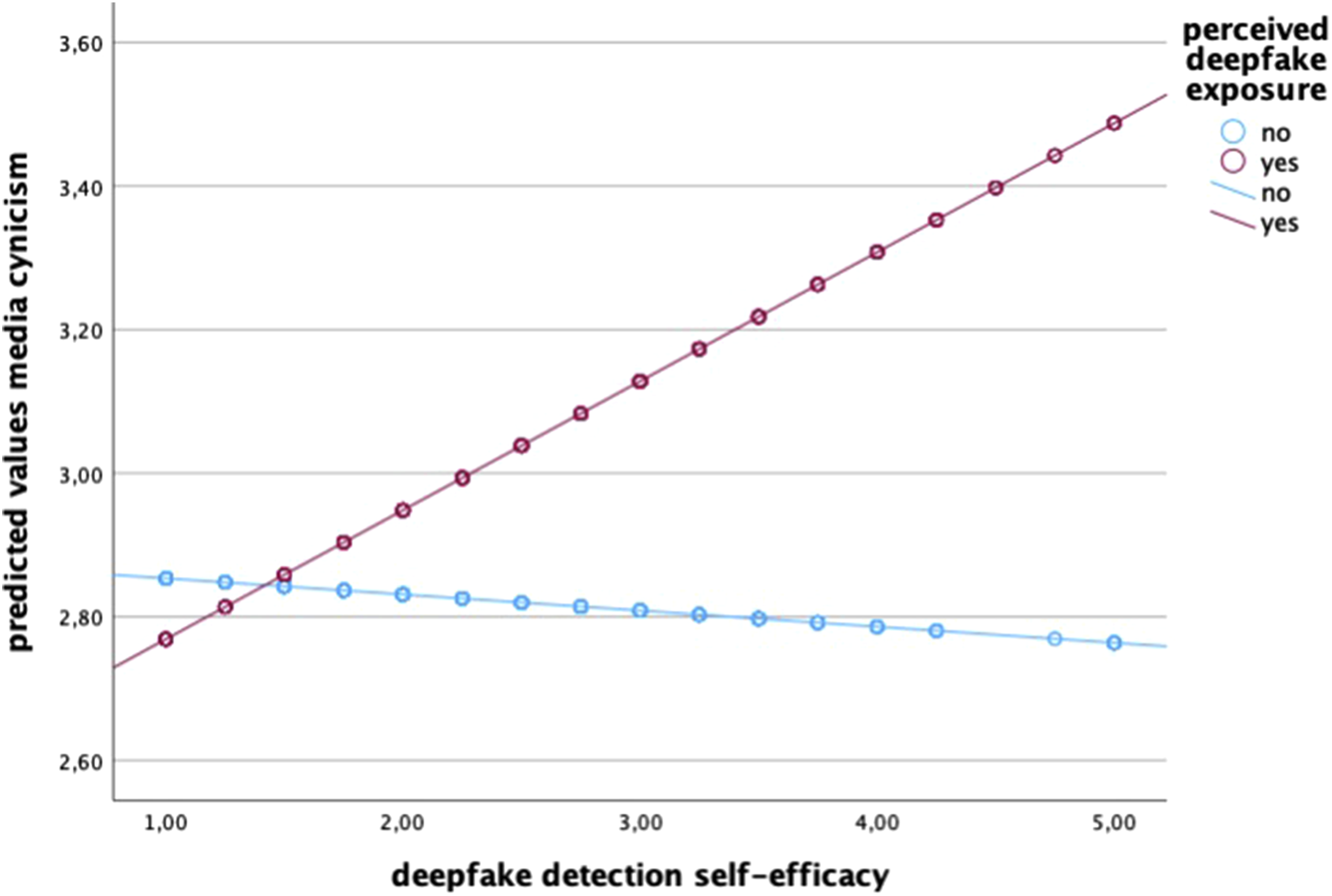

To test moderating effects (H2 and H3), the interaction terms were included in Model 2 of the regression (F(13, 972) = 20.88; p < .001). In the case of detection self-efficacy, the significant positive interaction indicates that the higher the perceived deepfake detection self-efficacy, the stronger the positive effect of perceived deepfake exposure on media cynicism (see Figure 2). We reject H2, as we had predicted a negative moderation but instead found a significant positive moderation. For further analysis of the interaction patterns, Johnson–Neyman intervals were examined to identify the moderator values separating significant from non-significant effects. Results show a positive effect on media cynicism for individuals with higher self-efficacy. At deepfake self-efficacy values of 2.24 or higher, which about 60% of the respondents possess, the effect of deepfake exposure on media cynicism becomes significant and grows stronger (b = .17, SE = .08, p = .05).

Figure 2. Interaction between perceived deepfake exposure and detection self-efficacy on predicted media cynicism.
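The logic of probing an interaction can be sketched as follows. This is a minimal illustration on synthetic data, not a reproduction of the analysis reported here: the variable names, the simulated coefficients, and the use of 1.96 as an approximate critical value are all assumptions. In a model with a product term, the slope of the predictor at moderator value m is b1 + b3·m, and its standard error follows from the coefficient covariance matrix; the Johnson–Neyman approach looks for the values of m at which this slope crosses the significance threshold.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 1000

# Synthetic stand-ins for predictor and moderator (not survey data).
exposure = rng.integers(1, 6, n).astype(float)   # values 1..5
efficacy = rng.uniform(1, 5, n)

# Simulated outcome with a built-in positive interaction (illustration only).
cynicism = (2.0 + 0.05 * exposure + 0.02 * efficacy
            + 0.10 * exposure * efficacy + rng.normal(0, 1, n))

# Design matrix: intercept, predictor, moderator, product term.
X = np.column_stack([np.ones(n), exposure, efficacy, exposure * efficacy])
beta, *_ = np.linalg.lstsq(X, cynicism, rcond=None)

# Ordinary least squares covariance matrix of the coefficients.
resid = cynicism - X @ beta
sigma2 = resid @ resid / (n - X.shape[1])
cov = sigma2 * np.linalg.inv(X.T @ X)

def conditional_effect(m):
    """Slope of the predictor at moderator value m, with its standard error."""
    slope = beta[1] + beta[3] * m
    se = np.sqrt(cov[1, 1] + 2 * m * cov[1, 3] + m ** 2 * cov[3, 3])
    return slope, se

for m in (1.0, 3.0, 5.0):
    slope, se = conditional_effect(m)
    # 1.96 is a large-sample approximation of the two-sided 5% critical value.
    print(f"moderator={m:.1f} slope={slope:.3f} se={se:.3f} "
          f"significant={abs(slope / se) > 1.96}")
```

Scanning a fine grid of moderator values with `conditional_effect` and noting where the significance flag changes gives a numerical approximation of the Johnson–Neyman boundary.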

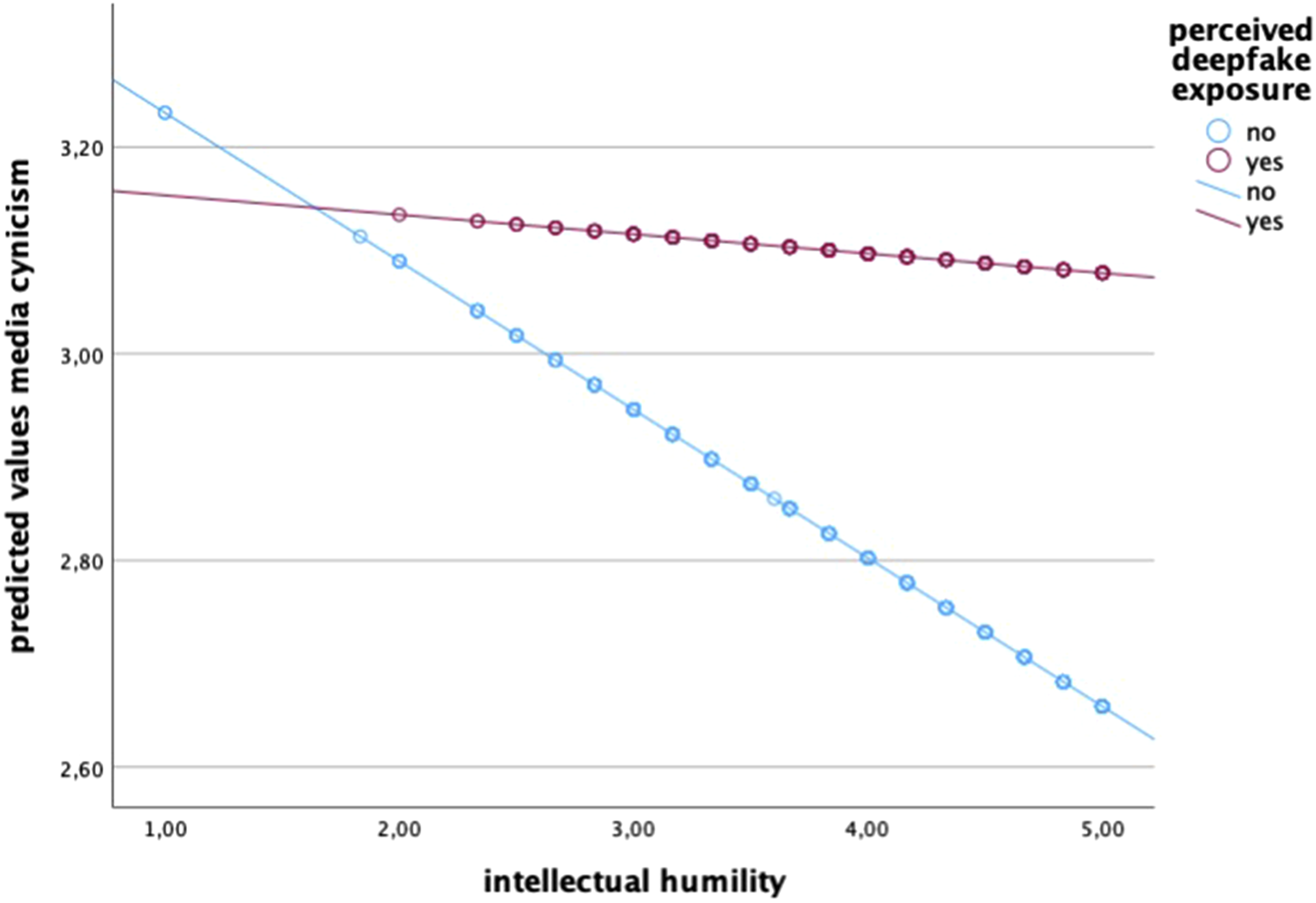

For the interaction term of perceived deepfake exposure and intellectual humility, no significant moderating effect was found (see Figure 3). Hence, a person’s intellectual humility has no direct influence on media cynicism and no moderating effect on the relationship between deepfake exposure and media cynicism. We therefore reject H3.

Figure 3. Interaction between perceived deepfake exposure and intellectual humility on predicted media cynicism.

Discussion

This study examines the relationship between perceived deepfake exposure and media cynicism, as well as the role of two “epistemic virtues” (Beebe, 2024), self-efficacy and intellectual humility, in moderating this relationship.

First, we find that in our sample of German citizens, perceived deepfake exposure is low. More than three quarters of respondents do not believe that they have encountered a deepfake within the last 12 months. Numerous previous studies have found that individuals struggle to correctly identify a deepfake, however (Bray et al., 2023; Thaw et al., 2020), so our focus is on perceived exposure and our study does not speak to the actual prevalence of deepfakes in German citizens’ information diet. Similarly, this study does not examine deepfake detection skills, but rather self-efficacy, that is, participants’ confidence in their ability to correctly identify a deepfake. Here, we find that participants in our study rate their own capability to identify a deepfake as quite low. More than 60% do not believe they can distinguish a real from a fake video, and a majority believe that they could be deceived by a deepfake.

These descriptive findings are of interest because previous studies have confirmed overall low deepfake detection skills in diverse populations (Lewis et al., 2022; Thaw et al., 2020), so participants’ self-assessment is likely to be realistic. In other words, we find little evidence for widespread overconfidence. Also, while previous research on misinformation perceptions has found strong evidence of third-person effects (Altay & Acerbi, 2023), we find that participants consider themselves vulnerable to deception by deepfakes. We explain this finding in light of the observation that audio-visual media have traditionally been ascribed high levels of veracity and reliability (Hameleers et al., 2023; Vaccari & Chadwick, 2020). New technological possibilities of altering audio-visual material, including the depiction of real human beings, may therefore fundamentally shake audiences’ confidence and induce a sense of anxiety (Jacobsen & Simpson, 2023; Lee et al., 2023) that goes beyond the effects of purely textual or even visual misinformation, at least as long as the deepfake technology is still largely unfamiliar to the public.

Turning to our first hypothesis, we find that perceived deepfake exposure is indeed positively related to media cynicism. This finding is of interest as previous studies have focused on confusion, mistrust, or skepticism (Hameleers & Marquart, 2023; Ternovski et al., 2022; Vaccari & Chadwick, 2020), which may all be considered problematic, especially from the perspective of reliable sources. Some argue, however, that skepticism towards media content, especially content encountered online, may actually be a healthy characteristic of an engaged citizenry (Tsfati, 2003). Few would claim that about media cynicism, which is defined as a generalized rejection and denunciation of news media (Jackob, Schultz, et al., 2019). It relates to political disaffection, belief in conspiracy theories, or news disengagement (Cappella & Jamieson, 1997; Jackob, Jakobs, et al., 2019; Quiring et al., 2021). We propose that perceived deepfake exposure relates to media cynicism due to the novelty of the technology, which may induce a general sense of uncertainty, and—among some—even anxiety (cf., Brashers, 2001). This uncertainty necessitates a greater reliance on, for example, journalistic media to distinguish truth from untruth. Skepticism towards these media institutions may evolve into cynicism under these conditions of uncertainty, powerlessness, and mistrust.

With regard to the role of self-efficacy, we find that our proposed measure targeted at deepfake detection appears suitable for capturing the phenomenon. It adds to previous attempts to capture detection self-confidence (Bray et al., 2023; Lewis et al., 2022). We find that detection self-efficacy does indeed moderate the relationship between perceived deepfake exposure and media cynicism (H2), but rather than weakening this relationship, it strengthens it: we find a positive moderation effect. Those more confident in their detection capabilities are more likely to develop a sense of media cynicism when encountering deepfakes. This finding is puzzling from the perspective of self-efficacy research and uncertainty management theory, as self-efficacy tends to induce self-confidence, lower insecurity, and bolster trust (Bandura, 1977; Compeau & Higgins, 1995; Hopp, 2022). However, this may not apply to a novel technology like deepfakes that relatively few users have encountered. We find that perceived deepfake exposure positively correlates with detection self-efficacy. It is possible that those who have more experience with deepfakes, and thus believe they have a better grasp of the novel technology, are more aware and/or more wary of its potential impact on the digital information ecosystem. Possibly those more confident in their ability to identify deepfakes are also more worried about the potential for powerful institutions, political or media, to abuse this new technology. Certainly, our findings warrant further investigations into these relationships.

Given this somewhat surprising role of detection self-efficacy, the role of intellectual humility could be considered all the more important. As Beebe (2024, p. 1) warns: “epistemic autonomy without intellectual humility leads to increased belief in misinformation, conspiracy theories and pseudoscience and decreased trust in scientific experts.” However, we do not find that intellectual humility relates to media cynicism in the regression model, nor does it moderate the relationship between perceived deepfake exposure and cynicism (H3). Appendix Table 2 shows that intellectual humility positively correlates with digital skills (r = .223, p < .001) and educational attainment (r = .119, p < .001). Both relate negatively to media cynicism in the regression model. At the bivariate level, intellectual humility also shows a weak negative correlation with media cynicism (r = −.052, p = .049). Intellectual humility and detection self-efficacy are not correlated. In their meta-analytic review of intellectual humility in the context of misinformation, Bowes and Fazio (2024) find that effect sizes tended to be quite small. So intellectual humility, while generally helpful in dampening the deleterious effects of misinformation exposure or conspiratorial thinking according to previous research (Bowes & Tasimi, 2022; Gross & Balaban, 2024; Koetke et al., 2022), does not dampen the relationship between perceived deepfake exposure and media cynicism when controlling for digital skills and education.
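As a rough plausibility check on bivariate correlations such as those in the appendix, a two-sided p-value can be approximated from a correlation coefficient and the sample size via the Fisher z-transformation. The sketch below assumes the full sample of N = 1421 for every pair (pairwise sample sizes are not reported here) and uses the rounded coefficients, so it can only approximate the tabulated values. The function name `approx_p_from_r` is hypothetical.

```python
import math


def approx_p_from_r(r: float, n: int) -> float:
    """Approximate two-sided p-value for H0: rho = 0 (Fisher z, normal approximation)."""
    z = math.atanh(r) * math.sqrt(n - 3)
    return math.erfc(abs(z) / math.sqrt(2))


N = 1421  # assumption: full sample used for every pairwise correlation

p_humility_cynicism = approx_p_from_r(-0.052, N)
p_humility_skills = approx_p_from_r(0.223, N)

print(f"humility x cynicism: p ~ {p_humility_cynicism:.3f}")
print(f"humility x digital skills: p ~ {p_humility_skills:.2e}")
```

Under these assumptions, the correlation between intellectual humility and media cynicism sits close to the conventional .05 threshold, whereas the correlation with digital skills falls far below .001. With a sample of this size, even very small correlations can approach statistical significance, which is worth keeping in mind when interpreting the matrix.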

It should be noted that we present data from a cross-sectional study that did not apply an experimental design. Further evidence from experimental or longitudinal studies would be necessary to move beyond correlational insights and explore causal relationships. Specifically, we did not explore alternative potential pathways. As noted above, cynical views could potentially induce a heightened sense of deepfake exposure. While this study is based on a sizeable sample, both perceived deepfake exposure and self-efficacy were low, as was media cynicism on average. It would be worthwhile to further examine the relationships highlighted in this study by comparing populations with low and high pre-existing levels of media cynicism. In our sample, too few participants reported high levels of media cynicism and/or perceived deepfake exposure to allow for such comparisons. Finally, future studies could focus on vulnerable populations, especially those already leaning towards cynical attitudes or high in institutional mistrust.

Conclusion and Recommendations

To summarize, this study contributes to the emerging state of research on audience engagement with deepfakes by highlighting that perceived deepfake exposure may not only induce uncertainty, skepticism, and mistrust but even positively relate to media cynicism. Users’ self-confidence in detecting deepfakes, which is most prevalent among social media savvy users with high digital skills and more experience with deepfakes, does not weaken but rather strengthens this relationship. Intellectual humility, by contrast, does not significantly moderate the relationship between perceived deepfake exposure and media cynicism. We propose that these findings highlight users’ general sense of uncertainty when encountering the novel deepfake technology, especially with regard to the reliability of audio-visual media.

We find media cynicism to be more prevalent among those with high social media usage, low digital skills, low education, and those on the political right. Among these groups, a further fanning of cynical attitudes in the context of exposure to deepfakes can be seen as especially problematic. Our findings may thus be helpful for the development of targeted interventions, such as warning labels or media literacy training. However, they also speak to concerns that microtargeting deepfakes (for example, to those already low in institutional trust) may increase their cynicism (Dobber et al., 2020).

Our findings also indicate that more technologically savvy or experienced users may be better able to reflect on the dangers of deepfake technology. Educating the public about deepfake technology may thus not immediately alleviate user anxiety or even cynical attitudes. Previous studies have shown that anti-misinformation interventions like warning labels, attempts at “inoculation,” or fact-checks tend to increase the salience of misinformation and can trigger a general sense of skepticism or mistrust towards news (van der Meer et al., 2023; Van Duyn & Collier, 2019). It is unclear if these influences will wane over time as users grow more accustomed to deepfakes and better able to understand their impact on media and the public discourse. For the time being, it is likely that the proliferation of deepfake technology will contribute to a general deterioration in media trust and a more generally skeptical stance of audiences in the digital information ecosystem (Altay et al., 2023; Lewandowsky et al., 2017).

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study is supported by Sächsische Aufbaubank; 100607645.

Appendix

Measurement Instrument.

| Construct | Item | Mean | SD |
|---|---|---|---|
| Media cynicism. Adopted from Jackob, Jakobs, et al. (2019) (M = 2.89 and SD = 1.31) | In Germany, the media are merely a mouthpiece for the powerful | 3.00 | 1.25 |
| | The media tell you what to think | 2.96 | 1.30 |
| | The media and politicians work hand in hand to manipulate public opinion | 2.90 | 1.31 |
| | The population in Germany is systematically lied to by the media | 2.83 | 1.33 |
| | The media undermine freedom of opinion in Germany | 2.77 | 1.34 |
| Deepfake detection self-efficacy. Adopted from Hopp (2022) (M = 2.42 and SD = 1.12) | I am confident that I am able to recognize a deepfake by myself | 2.16 | 1.06 |
| | I am confident that I can distinguish between a real video and a deepfake video | 2.23 | 1.07 |
| | I am confident that I can recognize a deepfake video by searching the internet for information (e.g., googling and fact-checking sites) | 2.86 | 1.17 |
| | I am confident that I would not fall for the content of a deepfake video | 2.41 | 1.13 |
| Intellectual humility. Adopted from Leary et al. (2017) (M = 3.86 and SD = .85) | I question my own opinions, positions, and viewpoints because they could be wrong | 3.61 | .97 |
| | I reconsider my opinion when I am presented with new evidence | 4.01 | .82 |
| | I recognize the value in opinions that are different from my own | 3.89 | .78 |
| | I accept that my beliefs and attitudes may be wrong | 3.91 | .82 |
| | In the face of conflicting evidence, I am open to changing my opinions | 3.99 | .82 |
| | I like finding out new information that differs from what I already think is true | 3.76 | .86 |

Correlation Matrix.

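| Variable | | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1. Media cynicism | r | 1 | | | | | | | | | | | |
| | p | | | | | | | | | | | | |
| 2. Digital skills | r | −.054 | 1 | | | | | | | | | | |
| | p | .042 | | | | | | | | | | | |
| 3. Internet application usage | r | .079 | .330 | 1 | | | | | | | | | |
| | p | .003 | <.001 | | | | | | | | | | |
| 4. Social media usage | r | .097 | .273 | .767 | 1 | | | | | | | | |
| | p | <.001 | <.001 | <.001 | | | | | | | | | |
| 5. Intellectual humility | r | −.052 | .223 | .077 | .015 | 1 | | | | | | | |
| | p | .049 | <.001 | .004 | .559 | | | | | | | | |
| 6. Deepfake detection self-efficacy | r | .046 | .224 | .262 | .287 | −.012 | 1 | | | | | | |
| | p | .086 | <.001 | <.001 | <.001 | .657 | | | | | | | |
| 7. Perceived deepfake exposure | r | .084 | .206 | .362 | .377 | .051 | .257 | 1 | | | | | |
| | p | .001 | <.001 | <.001 | <.001 | .056 | <.001 | | | | | | |
| 8. Political orientation | r | .411 | −.019 | .007 | −.010 | −.062 | .026 | −.002 | 1 | | | | |
| | p | <.001 | .545 | .828 | .748 | .042 | .398 | .943 | | | | | |
| 9. Age | r | −.033 | −.159 | −.454 | −.503 | .019 | −.113 | −.252 | −.031 | 1 | | | |
| | p | .218 | <.001 | <.001 | <.001 | .477 | <.001 | <.001 | .321 | | | | |
| 10. Educational attainment | r | −.136 | .222 | .165 | .148 | .119 | .087 | .155 | −.136 | −.196 | 1 | | |
| | p | <.001 | <.001 | <.001 | <.001 | <.001 | .001 | <.001 | <.001 | <.001 | | | |
| 11. Gender | r | .002 | −.247 | −.101 | −.116 | .023 | −.127 | −.073 | −.074 | .009 | −.062 | 1 | |
| | p | .939 | <.001 | <.001 | <.001 | .378 | <.001 | .006 | .016 | .748 | .019 | | |
| 12. Income | r | −.021 | .126 | .146 | .080 | .053 | .084 | .084 | .067 | .041 | .275 | −.148 | 1 |
| | p | .440 | <.001 | <.001 | .004 | .055 | .002 | .003 | .036 | .142 | <.001 | <.001 | |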