Abstract

Combating harmful misinformation about pharmaceuticals on social media is a growing challenge. The complexity of health information, the role of expert intermediaries in disseminating information, and the information dynamics of social media create an environment where harmful misinformation spreads rapidly. However, little is known about the origins of this misinformation. This article explores the processes through which health misinformation from online marketplaces is legitimized and spread. Specifically, across one content analysis and two experimental studies, the authors investigate the role of highly legitimized influencer content in spreading vaccine misinformation. By analyzing a data set of social media posts and the websites where this content originates, the authors identify the legitimation processes that spread and normalize discussions about vaccine hesitancy (Study 1). Study 2 shows that expert cues increase the perceived legitimacy of misinformation, particularly for individuals who generally have positive attitudes toward vaccines. Study 3 demonstrates the role of expert legitimacy in driving consumers’ sharing behavior on social media. This research addresses a gap in the understanding of how pharmaceutical misinformation originates and becomes legitimized. Given the importance of communicating vaccine information effectively, the authors outline key challenges for policy makers.

In modern societies, individuals must navigate a web of complex health systems and information to make the right decisions about their health (World Health Organization [WHO] 2013). Health professionals increasingly have to communicate accurate information in an “information marketplace” where legitimate health information sources compete with a growing tide of misinformation (Scott et al. 2020). Although misinformation touches almost every area of health care, its most high-profile contemporary manifestation is vaccine misinformation (Cornwall 2020). The COVID-19 pandemic has had a significant impact on the global pharmaceutical industry (Sarkees, Fitzgerald, and Lamberton 2020). Yet even before COVID-19, social media's role in disseminating vaccine misinformation was recognized as a major public health challenge (Lahouati et al. 2020), alongside increased skepticism toward traditional modes of health communication (Smith and Reiss 2020). This has made pharmaceutical companies aware of the need to address these deficits in health literacy and tackle the “infodemic” of misinformation (Tyler 2021). Despite attempts by social media firms to tackle vaccine misinformation (Cellan-Jones 2020), it has become endemic, with estimates that more than 100 million Facebook users have been exposed to some form of it (Johnson et al. 2020). The filter bubbles created by social media's private spaces have brought misinformation together with groups linked by institutional distrust (Jamison et al. 2020; Smith, Cubbon, and Wardle 2020). Although there is a growing body of research on how vaccine misinformation spreads through social media (e.g., Featherstone et al. 2020; Jamison et al. 2020; Lahouati et al. 2020), our understanding of how it starts and how it is legitimized remains limited.

Health care professionals play an important role in validating health information, because individuals rely on these experts to interpret complex information and place a high level of trust in them (Burki 2019). Much popular online health content is written by health professionals with the characteristics of influencers (Ngai, Singh, and Lu 2020), in that they build a social media audience that they influence by leveraging their credentials (De Veirman, Cauberghe, and Hudders 2017). Just as influencers play a key role in generating engagement on social media, the rise of self-publishing through marketplaces such as Amazon gives individuals the ability to spread misinformation in book form. Despite the wide availability of free online content, consumers still perceive books as a source of credible, in-depth expert knowledge (Perrin 2016). When this content reaches social media, its reach is magnified, feeding into the complex digital information environment in which individuals look for information to make health-related decisions (Ashfield and Donnelle 2020; Guess et al. 2020).

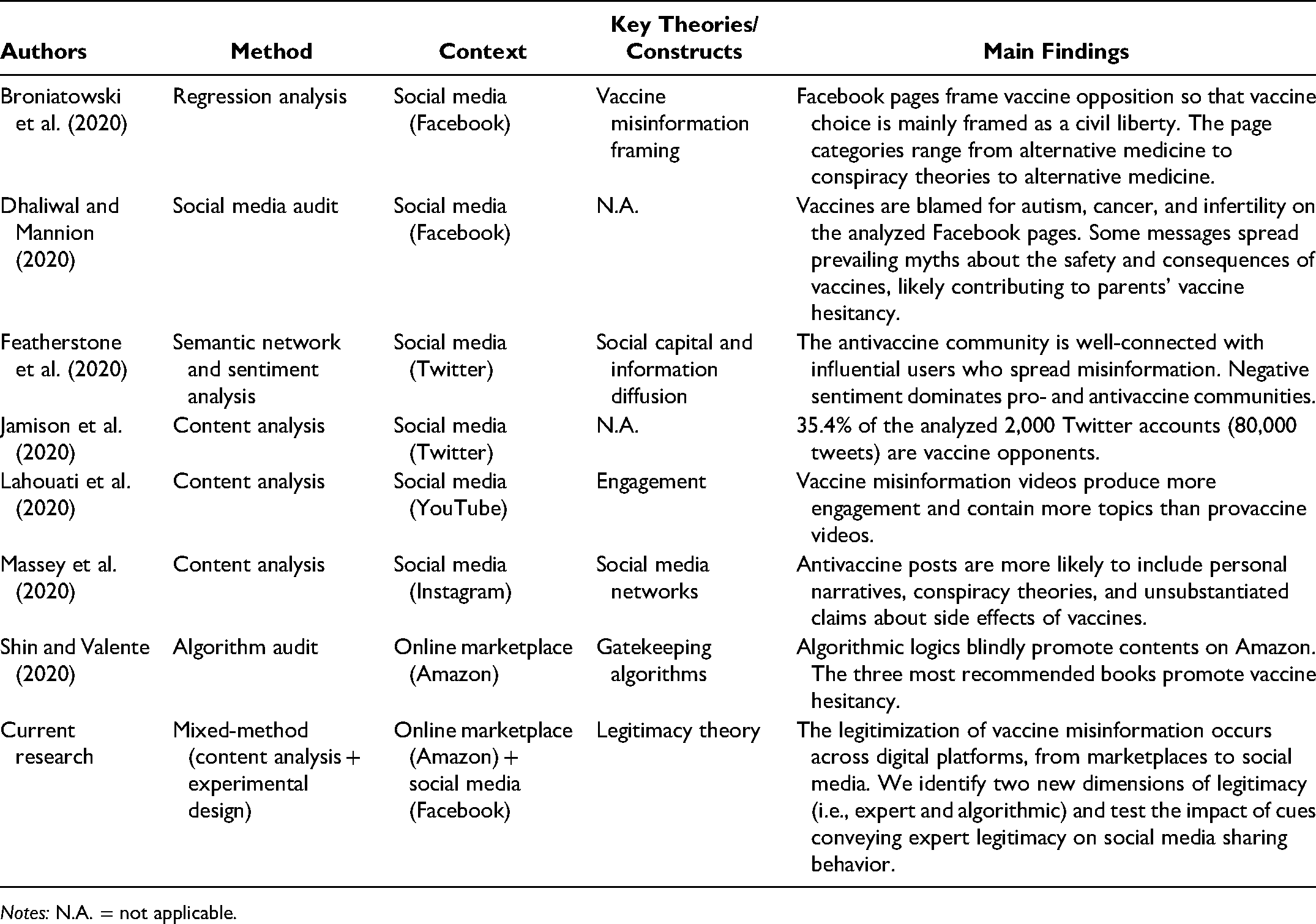

Despite scholarly interest in the spread of vaccine misinformation on social media, there is limited research into the origins of this misinformation and its legitimization processes (Table 1). This article addresses this gap by exploring how highly legitimized influencer material, available through online marketplaces in the form of books, enables the spread of vaccine misinformation. Specifically, we aim to investigate how the legitimation processes of vaccine misinformation occur.

Table 1. Key Contributions to Social Media Vaccine Misinformation.

To understand these processes fully, we investigate legitimation at a more granular level, addressing the following research questions:

This article adopts a mixed-methods approach to explore the processes through which vaccine misinformation is legitimized and spread from online marketplaces to social media. Using a large data set of Facebook posts and Amazon book descriptions, we analyze the role of influencer sources in seeding misinformation. We then test the findings of this qualitative phase empirically in two online experiments that analyze the effect of specific cues on the perceived legitimacy of vaccine misinformation and on consumers’ sharing behavior on social media.

This article makes several contributions. First, we believe it is the first study to explore the origins of vaccine misinformation and to identify how such harmful health misinformation is legitimized. We identify two novel legitimacy dimensions (expert and algorithmic legitimacy) that shed light on the overall legitimation process of vaccine misinformation. Second, we add to the literature on misinformation sharing by identifying the cues that confer legitimacy on vaccine misinformation and evaluating their impact on sharing behavior. Moreover, we find that the legitimizing effect of expert cues is stronger for individuals with low levels of vaccine hesitancy and holds only for misinformation in book form. Finally, we discuss potential policy interventions to mitigate the impact and spread of vaccine misinformation.

The article is structured as follows. We first review the literature about health misinformation, social media, and legitimacy and introduce Study 1, the content analysis. The next section features the hypothesis development and the description of experimental Studies 2 and 3. Finally, we provide a general discussion and implications for both theory and policy.

Theoretical Background

Health Misinformation and Social Media

Social media has evolved from its original focus on social interaction into a key platform for disseminating news and information (Tandoc, Lim, and Ling 2018). Alongside this shift, there have been widespread concerns about the spread of misinformation through social media platforms, where algorithmic biases mediate and influence the promotion of content, polarizing users around specific narratives and fueling the spread of misinformation (Del Vicario et al. 2019).

Health information is a key use case for online information searches and a major source of social media content (Fernández-Luque and Bau 2015). However, health information is complex and requires a high level of information-processing capability to interpret correctly. For instance, research on pharmaceutical advertising, particularly direct-to-consumer advertising of over-the-counter medication (Byrne et al. 2013), has shown that conflicting advice and claims in consumer-focused pharmaceutical advertising impede consumer understanding (Krishna and Thompson 2021). To ensure effective communication, health information is often mediated by health professionals, and a high level of trust in these professionals is key to the effective functioning of health systems. The availability of online information can erode trust in “official” health information and in intermediary health professionals (Park and Shin 2020). Above all, misinterpreting health information can have serious consequences, requiring widespread efforts by both policy makers and pharmaceutical companies to ensure that accurate information about pharmaceutical products is communicated effectively to consumers.

Health care misinformation spreads because, although patients have broad access to health information via the internet, few have the capability to interpret and evaluate it correctly (Bolton and Yaxley 2017). By its nature, expert health knowledge may be comprehensible only to other trained health professionals (Lian and Laing 2004). Online information consumption and the direct cocreation of health knowledge through social media interactions typically occur in an environment where expert knowledge is disintermediated. Combined with the amount of misinformation that social media enables (Vosoughi, Roy, and Aral 2018), this creates an environment in which misinformation can spread rapidly. Health misinformation covers the full spectrum of health treatments and public health issues, including autism, antibiotics, cancer treatments, and alcohol use (Chou, Oh, and Klein 2018), the safety of e-cigarettes (Martinez et al. 2018), and Ebola (Jones and Elbagir 2018). However, while all forms of health misinformation are potentially harmful, few have the broad societal impact of misinformation about vaccines.

Misinformation and Vaccine Hesitancy

Public health professionals widely accept vaccines as the most effective public health intervention to prevent diseases (Piltch-Loeb and DiClemente 2020). However, despite public health success over several decades in expanding vaccination programs, there have been growing concerns over declining vaccination rates. Explanations for this decline include underestimating the risk of illnesses as their prevalence decreases, difficulties accessing and distributing vaccines in poorer countries, and a lack of public confidence in vaccines (WHO 2013). Even before the COVID-19 pandemic, hesitancy toward vaccines was on the rise, and the subsequent behavioral impact was considered one of the major public health challenges of our time (WHO 2019).

Opposition to vaccines, or skepticism about their efficacy, has existed for as long as vaccines have been proposed as a way to limit the public health impact of infectious diseases. Protests against compulsory smallpox vaccination in nineteenth-century England were led by groups who saw the vaccination campaign as an attack on individual autonomy (Berman 2020). This historical example has much in common with contemporary vaccine hesitancy: vaccine rejection is driven by social and psychological factors, with vaccines serving as a proxy for wider fears over social control (Leask 2020).

The term “vaccine hesitancy” covers a spectrum of skepticism about vaccines that extends beyond outright refusal. Themes identified in COVID-19 vaccine misinformation, for example, include harmful intent by governments or pharmaceutical firms, doubt among scientists, and civil liberties issues (Broniatowski et al. 2020). Embedded in these contradictory messages is the goal of generating a public debate around the effectiveness of vaccines. This framing of misinformation as a matter of debate and individual choice aligns with the idea of cocreating health care and empowering consumers to make appropriate health decisions (Frow, McColl-Kennedy, and Payne 2016).

Social media has transformed vaccine misinformation’s ability to reach a global audience (Johnson et al. 2020). Antivaccine movements that were once local are now global because vaccine-related content is well-suited to spread through social media. The same dynamics that make social media such a powerful driver of opinion also drive the dissemination of harmful information. Researchers have explored how vaccine misinformation spreads on YouTube (Lahouati et al. 2020), Twitter (Featherstone et al. 2020), and Facebook (Jamison et al. 2020), mostly originating from individuals who share their personal experiences, conspiracy theories, or unsubstantiated claims regarding the harmful effects of vaccines (Massey et al. 2020). Even on a platform where vaccine misinformation represents a small fraction of the content, algorithmic biases can propagate this misinformation (Shin and Valente 2020) through the presence of “filter bubbles” that expose users only to ideas that they already agree with (Jamison et al. 2020). The persuasive influence of these misinformation narratives (Argyris et al. 2020), combined with the affordances created by digital technologies to spread information widely (e.g., through bots; Wawrzuta et al. 2021), creates the conditions for the massive reach of misinformation on social media.

Legitimation of Expert Information

Meyer and Scott (1983) define legitimacy in terms of the level of cultural support available to an organization. We adopt this definition and consider both the legitimacy of misinformation and the likelihood of sharing it to be embedded in the level of cultural support available to the ideas underlying the misinformation. There have been several attempts to identify the dimensions of legitimacy, many of which distinguish socially determined and symbolic forms of legitimacy from more normative, rule-based notions of legitimacy (Zott and Huy 2007). Reflecting our focus on information rather than organizations, our theoretical lens draws on Suchman’s (1995) three dimensions of cognitive, pragmatic, and moral legitimacy. Spreaders of misinformation face a particular “liability of credibility,” which limits their access to normative forms of legitimation. Legitimation techniques that make use of symbols, that is, “any thing, event or phenomenon to which members attribute meaning in their attempts to comprehend the social fabric in which they are enmeshed” (Brown 1994, p. 862), can be powerful because they provide collective frames of reference. Recent literature on conceptualizing legitimacy has distinguished between normative and professional legitimacy (Deephouse et al. 2017), capturing the key role that professional identities and cues play in legitimation processes.

Professional identities enable individuals to navigate uncertainty when service quality is hard to evaluate (Lian and Laing 2004), as is particularly the case in health care. However, the notion of professionalism is rapidly changing. Increasingly, embodied trust in professionals is being replaced by a system in which trust is enforced through regulatory controls (Spendlove 2018). This “enforceable trust” (Ferlie 2010) rests on an encoded set of professional regulations that govern concepts of expertise. Activities that undermine the process through which information on health products is legitimized can increase the misinterpretation of health information.

In recent years, there has been increased interest in how expert judgments, whether in the media or in online reviews, influence consumer decision making (Clauzel, Delacour, and Liarte 2019). Given the nearly infinite amount of information available online, expert judgments reduce cognitive load when consumers make decisions. However, judgments of expertise are themselves based on evaluations of the legitimacy of the material. For those who want to propagate misinformation, establishing the legitimacy of their message is therefore critical (Di Domenico et al. 2020). For health information, the ability to leverage professional identities marks the boundary between legitimate health advice and informal “lay” knowledge.

Method

To identify sources of misinformation from influencers, we focused on Amazon. We chose Amazon as the context of our investigation because it is the most visited e-commerce site in the United States, with 2.44 billion monthly visits in September 2020 (Sabanoglu 2020). Through its market leadership position in both physical and digital book sales, Amazon plays a key role in the dissemination of information. To date, only a limited number of studies have recognized Amazon as a source of misinformation (e.g., Shin and Valente 2020). We believe that product search motivation on Amazon is driven by information seeking based on the need for advice—expert advice, in the case of health care. This makes Amazon an appropriate starting point for identifying expert misinformation.

A key methodological issue is determining what counts as misinformation. In selecting material for this analysis, we focused on whether the books promoted beliefs based on vaccine hesitancy. Specifically, we adopted the WHO (2013) position, widely accepted among public health professionals, that vaccines significantly reduce the risk of infectious diseases. Information promoting the blanket idea that vaccines are not effective was therefore coded as misinformation. In carrying out this analysis, we initially used only the books’ front and back covers and the descriptive material made available by the publisher on the Amazon sales page. Because the promotion of vaccine hesitancy is a core selling point of these books, it was not difficult to allocate each book to the appropriate category.

The data analysis comprises two stages. First, we adopt a qualitative approach to examine how vaccine misinformation is legitimized within and between the two platforms, analyzing a data set of descriptions of 28 Amazon books containing vaccine misinformation and 649 Facebook posts linking directly to these books on Amazon. Second, we adopt an experimental approach to test the findings of the qualitative study empirically. A total of 870 U.S. consumers participated in this experimental phase, across three pretests and two main experiments.

Study 1: Establishing Legitimacy of Vaccine Misinformation

To explore how vaccine misinformation is legitimized within and across marketplaces and social media, we adopted a qualitative approach and employed a content-analysis technique (Hsieh and Shannon 2005). Study 1 was approved by the University of Portsmouth Faculty Ethics Committee (reference number: 2020/48/DIDOMENICO). The approved materials included a description of our research plan and data collection protocol. The study draws on two types of data. First, we collected descriptions of 28 vaccine misinformation books available on Amazon, identified through the following search protocol on Amazon’s online bookstore.

We selected the “Vaccinations” Best Seller category on the U.S. Amazon site because books in this category have a high degree of discoverability. For example, when searching for terms such as “vaccine books” or “books about vaccines,” these are prominent search results.

Following previous research (Shin and Valente 2020), we analyzed the top 100 best-selling books in the category daily for two weeks, from November 20, 2020, to December 4, 2020. Because these algorithmically generated lists change regularly, we selected for analysis every book that appeared in the list at any point during this two-week period. For each book, we reviewed the title, cover material, and accompanying descriptive text. Because the covers and descriptions of some antivaccine books resemble those of legitimate health information, in some cases we had to review the first chapter to establish which category a book fit into. After we eliminated duplicates (different formats of the same book), the sample consisted of 63 paperback and hardcover books, 28 of which were classified as vaccine misinformation books.
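The pooling and deduplication step of this protocol can be illustrated with a short Python sketch. It is a minimal illustration under stated assumptions, not the authors’ tooling: each daily snapshot is assumed to be a list of listings that carry a hypothetical `work_id` shared by all formats of the same book, and the misinformation labels stand in for the manual classification described above. All names and example data are hypothetical.

```python
from typing import Dict, Iterable, List, NamedTuple, Set


class Listing(NamedTuple):
    """One entry in a daily Best Seller snapshot (hypothetical structure)."""
    work_id: str  # assumed identifier shared by all formats of the same book
    title: str
    fmt: str      # e.g. "Paperback" or "Hardcover"


def pool_daily_lists(snapshots: Iterable[List[Listing]]) -> Set[str]:
    """Return every book that appeared in any daily top-100 snapshot.

    Keying on work_id collapses different formats of the same book into one entry.
    """
    pooled: Set[str] = set()
    for snapshot in snapshots:
        pooled.update(listing.work_id for listing in snapshot)
    return pooled


def misinformation_subset(pooled: Set[str], manual_codes: Dict[str, bool]) -> List[str]:
    """Keep books that manual coding flagged as vaccine misinformation."""
    return sorted(book for book in pooled if manual_codes.get(book, False))


# Hypothetical two-day example: "b1" appears in two formats, "b2" on both days.
day_1 = [Listing("b1", "Book One", "Paperback"),
         Listing("b1", "Book One", "Hardcover"),
         Listing("b2", "Book Two", "Paperback")]
day_2 = [Listing("b2", "Book Two", "Paperback"),
         Listing("b3", "Book Three", "Hardcover")]

pooled = pool_daily_lists([day_1, day_2])
print(len(pooled))  # 3
print(misinformation_subset(pooled, {"b1": True, "b2": False, "b3": True}))  # ['b1', 'b3']
```

Taking the union of all daily lists, rather than a single day’s list, mirrors the protocol’s aim of capturing any book that appeared at any point in the window. Books missing from the coding dictionary default to not being misinformation, a conservative choice made only for this sketch.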

We then focused on the Facebook posts used to share the Amazon links to the selected books. For each book, we searched for Facebook posts containing its Amazon link from the year of the book’s publication until December 4, 2020. The posts were identified using CrowdTangle, which tracks public (nontargeted, nongated) content from influential verified Facebook profiles, Facebook pages, and Facebook public groups, accounting for a sample of approximately 2% of all Facebook content. Using the CrowdTangle tools on this data set, it is possible to assess when something was posted, from which page or account, and how many interactions (likes or comments) it had earned at any given point in time (see https://www.crowdtangle.com/resources). The tool has been increasingly adopted in social media research, particularly in assessing the spread of health (Ayers et al. 2021) and political (Giglietto et al. 2020) misinformation.

We considered only the first occasion on which information was shared, to establish (1) how misinformation is legitimized on Amazon, through the analysis of book descriptions; (2) how misinformation is transferred and legitimized by Facebook users; and (3) how legitimization occurs between the two digital platforms.

Data Collection and Analysis

The first data set consists of the Amazon descriptions (5,594 words in total) of the 28 books identified as misinformation and listed in the “Vaccinations” Best Seller category on Amazon U.S. The second data set consists of Facebook posts containing Amazon links to the five most shared books from this sample of 28 misinformation books. We initially retrieved 873 Facebook posts; after removing duplicates and non-English posts, 649 remained.
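The reduction from retrieved to retained posts can likewise be sketched in Python. The source does not specify how duplicates or post language were determined, so this sketch assumes, hypothetically, that each exported post carries a language code and that a duplicate is a post whose case- and whitespace-normalized text links to the same book URL as an earlier post. The field names and example.com URLs are placeholders, not a CrowdTangle export schema.

```python
from typing import Iterable, List, NamedTuple, Set, Tuple


class Post(NamedTuple):
    """A retrieved Facebook post (hypothetical fields, not a CrowdTangle schema)."""
    post_id: str
    text: str
    book_url: str
    language: str  # assumed pre-assigned language code, e.g. "en"


def clean_posts(posts: Iterable[Post]) -> List[Post]:
    """Drop non-English posts and duplicates, keeping the first occurrence."""
    seen: Set[Tuple[str, str]] = set()
    kept: List[Post] = []
    for post in posts:
        if post.language != "en":
            continue  # non-English posts are excluded
        # Assumed duplicate rule: same normalized text pointing at the same book link.
        key = (" ".join(post.text.lower().split()), post.book_url)
        if key in seen:
            continue
        seen.add(key)
        kept.append(post)
    return kept


posts = [
    Post("1", "Must read!", "https://example.com/book-x", "en"),
    Post("2", "must   READ!", "https://example.com/book-x", "en"),  # duplicate of post 1
    Post("3", "¡Léelo!", "https://example.com/book-x", "es"),        # not English
    Post("4", "Banned by the media", "https://example.com/book-y", "en"),
]
print([p.post_id for p in clean_posts(posts)])  # ['1', '4']
```

Keeping the first occurrence of each duplicate is consistent with considering only the first occasion on which information was shared, although the actual deduplication rule used in the study may differ.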

For the analysis, we adopted the three dimensions of Suchman’s (1995) legitimacy framework: (1) cognitive legitimacy, (2) pragmatic legitimacy, and (3) moral legitimacy. Two further dimensions emerged from the field data. The corpus was content-analyzed using QSR NVivo 12 software, following the coding process of previous studies in the field (Paschen, Wilson, and Robson 2019). First, two researchers independently coded 150 randomly selected Facebook posts and 10 Amazon book descriptions into the legitimacy dimensions identified in the literature. The researchers then reviewed their coding decisions together until agreement was reached. Next, they discussed and compared the coding dimensions identified from the data and extracted commonalities between them. Definitions and examples were developed for each new coding dimension, enabling us to revise the initial list of coding scheme categories. The remaining data were then coded using this enriched coding scheme. The final coding results were analyzed in NVivo, and the overall rate of agreement between the two coders was 87% (Cohen’s κ = .75).
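Cohen’s κ corrects raw percentage agreement for the agreement two coders would reach by chance: κ = (p_o - p_e) / (1 - p_e), where p_o is the observed share of units on which the coders agree and p_e is the chance agreement implied by each coder’s category frequencies. The Python sketch below is a simplified illustration, not a reproduction of NVivo’s agreement calculations, which may be defined differently: it assumes each unit receives exactly one legitimacy dimension, and the example labels are hypothetical.

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(coder_a: Sequence[str], coder_b: Sequence[str]) -> float:
    """Cohen's kappa for two coders who each assign one nominal category per unit."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same non-empty set of units")
    n = len(coder_a)
    # Observed agreement: share of units where both coders chose the same category.
    p_o = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: product of the two coders' marginal frequencies, summed over categories.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / (n * n)
    if p_e == 1:
        return 1.0  # degenerate case: both coders used one identical category throughout
    return (p_o - p_e) / (1 - p_e)


# Hypothetical codes for ten posts (not data from the study).
coder_a = ["cognitive", "cognitive", "moral", "expert", "pragmatic",
           "expert", "algorithmic", "moral", "cognitive", "expert"]
coder_b = ["cognitive", "pragmatic", "moral", "expert", "pragmatic",
           "expert", "algorithmic", "cognitive", "cognitive", "expert"]
print(round(cohens_kappa(coder_a, coder_b), 2))  # 0.74
```

In this toy example the coders agree on 8 of 10 posts (80%), but because chance agreement is 0.23, κ is lower, at about 0.74. This is why κ is usually reported alongside raw percentage agreement.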

Findings of Study 1

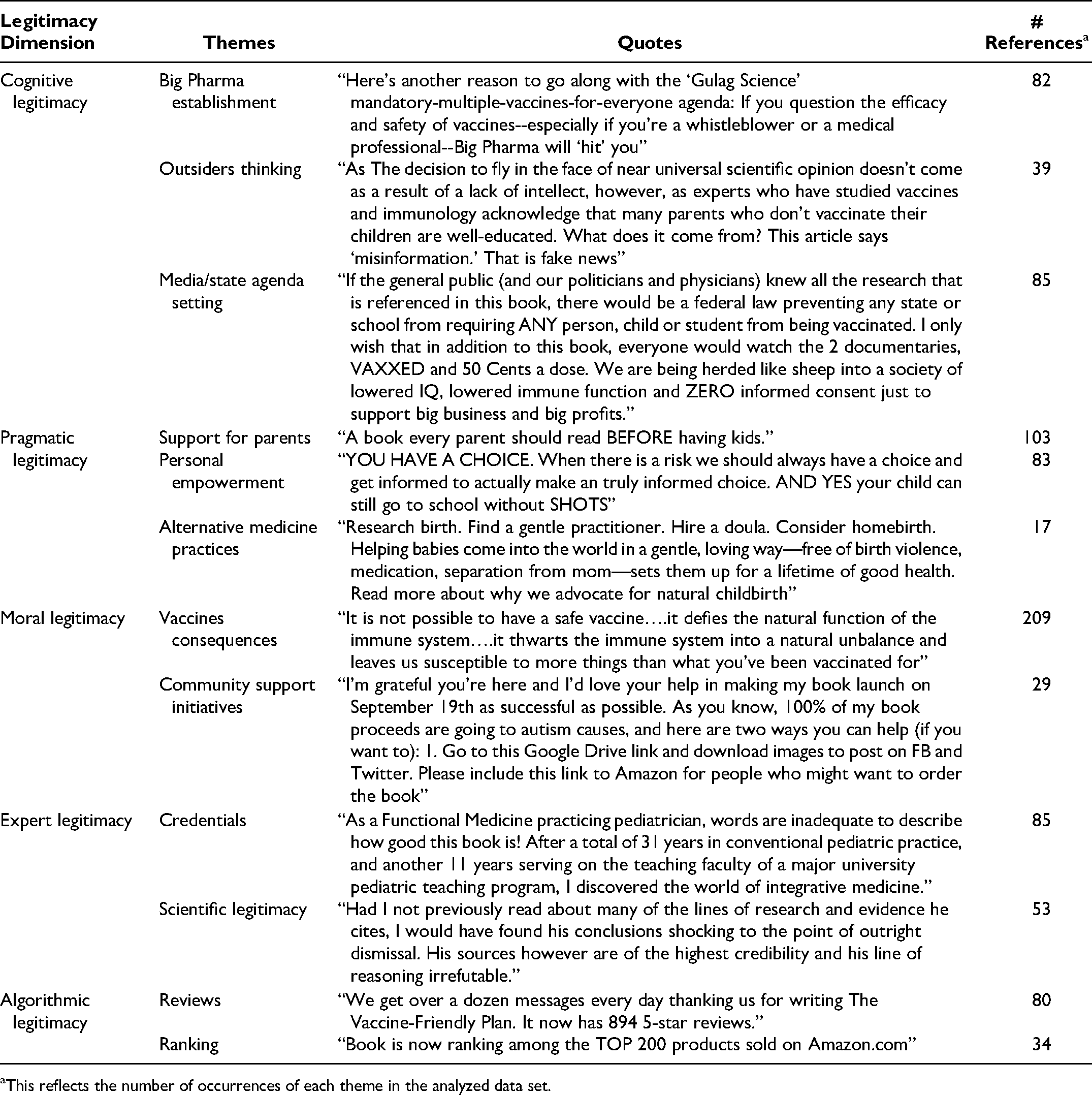

In addition to the three dimensions of legitimacy identified through the literature (i.e., cognitive, pragmatic, and moral legitimacy), we identified two new dimensions: expert and algorithmic legitimacy. The analysis also yielded 12 subthemes across the five dimensions, which enabled us to better understand the determinants of each type of legitimacy. Table 2 lists the themes identified, with examples of references and quotes.

Table 2. Summary of Findings of Study 1.

Note: Figures reflect the number of occurrences of each theme in the analyzed data set.

Cognitive legitimacy

In the context of vaccine misinformation, cognitive legitimacy is conveyed through narratives reporting a conspiratorial view of the world that blames the pharmaceutical establishment, the media system, and the government for promoting a vaccine agenda against individuals’ well-being. Specifically, these institutional entities are often alleged to be hiding the harmfulness of vaccines for economic gain. In this sense, cognitive legitimacy emerges when the information provided by the titles helps readers perceive the world as less chaotic by finding correlations between entities and events that harm the population. We identified three themes for cognitive legitimacy. The theme “Big Pharma Establishment” captures cases where legitimacy is conferred by attacking the medical and pharmaceutical establishment in two ways. First, pharmaceutical companies are claimed to be hiding the real results of vaccine studies in order to promote vaccines. Second, the pharmaceutical establishment is accused of adopting criminal practices toward doctors (including some of the books’ authors) who “bravely” uncover its misconduct. These claims are reinforced through links to external sources.

Similar power dynamics also apply to the second theme of cognitive legitimacy, “Media–State Agenda Setting.” Statements accuse the media of hiding the truth by not giving any coverage to the antivaccine books. In this theme, cognitive legitimacy is elicited by exposing secret plots and conspiracies implemented by both the media system and the government against individuals’ freedom and well-being. By achieving cognitive legitimacy, these books fulfill the needs of people who feel powerless (Abalakina-Paap et al. 1999) and lack sociopolitical control or psychological empowerment (Bruder et al. 2013). Such individuals are more likely to embrace conspiracy theories to find meaning in an apparently meaningless environment (Douglas, Sutton, and Cichocka 2017).

The last theme of cognitive legitimacy, “Outsider Thinking,” refers to claims that prominent doctors (the authors of the books or Facebook posts) are independent of the power systems (“Big Pharma,” the media, and the government). These “outsiders” are portrayed as defenders of the right to access relevant information. Here, cognitive legitimacy is achieved by identifying an outsider system of thinking and by praising the individuals who dare to fight censorship to provide the needed vaccine information. This is complemented by attacks on out-group individuals, which aim to maintain a positive image of the self and the in-group (Douglas, Sutton, and Cichocka 2017). In this theme, too, cognitive legitimacy is achieved through the dynamics of conspiracy theories, which may be recruited defensively to relieve the self or in-group of a sense of culpability for their disadvantaged position (Cichocka et al. 2016).

Pragmatic legitimacy

Pragmatic legitimacy involves direct or indirect exchanges between an organization and its audience in which organizational action visibly affects the audience’s well-being (Suchman 1995). In this context, pragmatic legitimacy rests on claims about how reading the books will benefit readers and guide them toward an informed decision about vaccines. We identified three themes for pragmatic legitimacy. In the theme “Support for Parents,” pragmatic legitimacy emerges when readers positively evaluate the practical information about vaccines that the books present. Most books target pregnant women and/or new parents.

Alongside parenting suggestions, the books are recommended as a route to “Personal Empowerment.” Empowerment refers to the notion of abandoning the mainstream health world and embracing the alternative truth proposed by the books, which suggests that the choice of vaccinating children should be up to parents (and not doctors). Moreover, the books confer pragmatic legitimacy in that they represent a way for individuals to rebel against mainstream medical culture and take back control of their bodies (and lives). Finally, pragmatic legitimacy is evoked by praising the benefits of alternative medicine practices such as naturopathic and holistic treatments, which have already been identified as potential reasons for the spread of misinformation (Chou, Oh, and Klein 2018). These practices are described as a means to detoxify the body and provide long-term health to counter mainstream medical practices such as vaccines.

Moral legitimacy

Moral legitimacy indicates a positive normative evaluation of the organization and its activities, reflecting judgments about whether the organization’s activities are “the right thing to do” and promote social welfare (Suchman 1995). In the context of vaccine misinformation, moral legitimacy is conferred mainly by “revealing” the allegedly dangerous composition of vaccines and their claimed consequences, such as autism and autoimmune diseases. We identified two themes for moral legitimacy. The first is “Vaccine Consequences.” Many statements describe vaccines as a treatment that is unnecessary for the population’s well-being and that can lead to autism or autoimmune diseases in vaccinated children. Statements coded in this theme feature emotionally charged content designed to engage the audience emotionally. The moral dimension of legitimacy is conveyed mostly by stories shared by parents whose children developed an illness after being vaccinated. Sharing personal emotions and information, as well as evoking autobiographical memories and personal stories, enables users to become active participants (Van Laer et al. 2014) and may help create a strong connection with others in the conversation (Dessart and Pitardi 2019). From this perspective, the authors of the books invoke a moral duty to report these stories, which, in their view, are often hidden or silenced by the mainstream health care establishment. The second theme, “Community Support Initiatives,” covers activities that the authors or their supporters offer to help their communities (e.g., autism communities). Here, moral legitimacy is conveyed by the authors’ philanthropic activities, such as pledging donations to charities and family support programs, which, in turn, may stimulate book sales.

Expert legitimacy

We identified two new dimensions that are specific to the marketplace context of this study. The first, expert legitimacy, is evoked by presenting the books’ authors as experts in their fields, mostly pediatricians and medical doctors. We identified two main themes for expert legitimacy. “Credentials” are displayed widely and prominently as evidence of the authors’ medical qualifications. Even when an author’s qualification was a doctorate unrelated to health care, it was still perceived as relevant. In this case, legitimacy is conveyed through the idea of providing “expert judgments,” which are known to strongly influence consumer decision making (Clauzel, Delacour, and Liarte 2019). The second theme stems from the use of scientific literature to support arguments, highlighting the important role that “Scientific Legitimacy” plays in judgments of the quality of evidence, even by nonscientific members of the public. However, while the public may be aware that scientific evidence plays a role in determining effective health care outcomes, there is less awareness of how this process actually works. In some cases, the authors cited papers published in lower-quality journals or in journals that may lack a clear peer review process. In other cases, the book authors’ summaries of papers did not reflect the papers’ core content. The misrepresentation of “scientific” evidence, or the promotion of poor-quality evidence, provides the scaffolding on which the expert legitimacy of vaccine misinformation is built. This is particularly relevant given that professional advice on public health rests on the same form of legitimation.

Algorithmic legitimacy

The second new dimension is algorithmic legitimacy, derived from the logic behind the inclusion of a particular book in Amazon’s algorithmically determined Best Seller categories or book reviews. Because algorithms select the information displayed, their output has a considerable impact on consumers’ attitudes and behaviors (Epstein and Robertson 2015) and, more importantly, on the perceived credibility of information (Westerwick 2013). In this way, algorithmic legitimacy confers credibility on vaccine misinformation books and promotes their social acceptance when information about these books spreads on social media. Two main themes contribute to algorithmic legitimacy. The first, “Reviews,” includes claims reporting the support that the book has received from other readers, especially in the form of positive reviews on Amazon. Communicating the number and positive valence of the reviews serves two goals for book authors. First, it shows the audience that other readers have appreciated the information in the book. Second, it stimulates further audience engagement with the book on the Amazon marketplace, generating more sales and more reviews. In turn, higher sales and more reviews drive algorithmic recommendations, giving the book more visibility in the marketplace. The second theme is “Ranking,” where legitimacy is stimulated by showing how successful the book has been on Amazon, in terms of purchase volume and regular appearance on Amazon's Best Seller list. This theme is not limited to Amazon rankings but also includes references to the number of followers that the authors, or groups supporting them, have on other digital platforms (e.g., Facebook). Here too, algorithms play an important role in determining the popularity of social media pages and groups: the more users follow a page, the more the page is recommended to other users. Therefore, a Facebook page’s number of followers could be seen as confirmation of the legitimacy that algorithms confer on that page.
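The reinforcing loop described above (reviews and sales lift a book's rank, rank brings exposure, and exposure yields further reviews) can be made concrete with a deliberately simple, deterministic toy model. This is a sketch under stated assumptions, not Amazon's ranking algorithm, which is proprietary and was not examined in this study: the function `simulate_rank_feedback`, the starting review counts, the share of impressions per rank position, and the review conversion rate are all hypothetical.

```python
def simulate_rank_feedback(reviews, position_weights, impressions=1000,
                           review_rate=0.01, rounds=10):
    """Toy popularity-ranking loop (hypothetical; not any real platform's algorithm).

    Each round, items are ranked by their current review count, each rank
    position receives a fixed share of impressions, and a fixed fraction of
    those impressions turns into new reviews.
    """
    reviews = [float(r) for r in reviews]
    for _ in range(rounds):
        # Rank once at the start of the round (highest review count first).
        order = sorted(range(len(reviews)), key=lambda i: -reviews[i])
        for position, item in enumerate(order):
            reviews[item] += impressions * position_weights[position] * review_rate
    return reviews


start = [105, 100, 95]         # hypothetical starting review counts
weights = [0.6, 0.3, 0.1]      # hypothetical share of impressions by rank position
end = simulate_rank_feedback(start, weights)   # [165.0, 130.0, 105.0]
share_before = start[0] / sum(start)           # leader's share of reviews: 0.35
share_after = end[0] / sum(end)                # leader's share of reviews: 0.4125
```

In this toy model, three books that start almost level end with the top-ranked one holding a clearly larger share of all reviews purely because of its position. It illustrates how popularity signals can accumulate independently of content, not how any real marketplace weights them.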

How Is Vaccine Misinformation Legitimized Within and Between the Online Marketplace and the Social Media Platform?

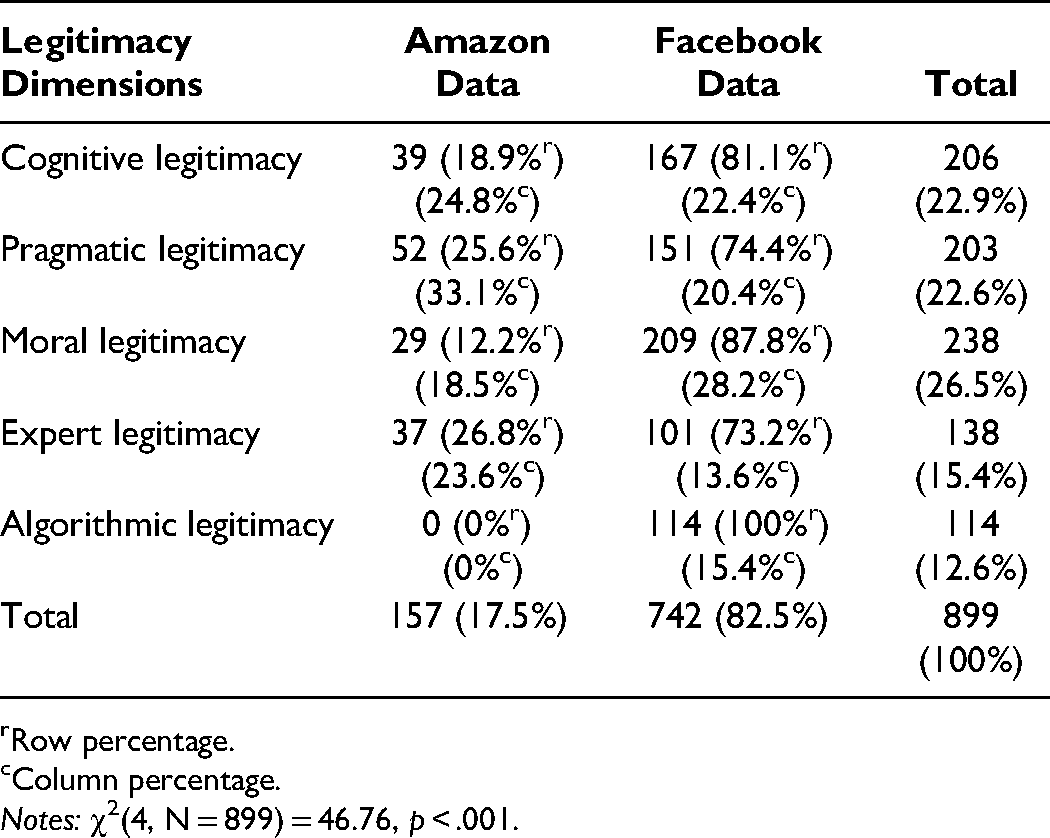

From the analysis, we counted the coding references for each dimension of legitimacy in the two data sets (see Table 3).

Table 3. Coding References of Amazon and Facebook Data Sets.

Notes: Cells report row percentages and column percentages.
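Because Table 3 reports coding references as both row and column percentages, it may help to recall how the two differ. Assuming rows are dimensions of legitimacy and columns are data sets, a row percentage divides a cell by its row total (how one dimension's references split between Amazon and Facebook), whereas a column percentage divides by its column total (how one data set's references split across dimensions). The minimal sketch below uses an invented 2 × 2 table; the counts and the function name `row_col_percentages` are illustrative assumptions, not the study's data.

```python
def row_col_percentages(counts):
    """Return (row_pct, col_pct) for a 2-D list of nonnegative counts."""
    row_totals = [sum(row) for row in counts]
    col_totals = [sum(col) for col in zip(*counts)]
    # Row percentage: each cell as a share of its row total.
    row_pct = [[100 * c / row_totals[i] for c in row]
               for i, row in enumerate(counts)]
    # Column percentage: each cell as a share of its column total.
    col_pct = [[100 * c / col_totals[j] for j, c in enumerate(row)]
               for row in counts]
    return row_pct, col_pct


# Hypothetical counts (NOT the study's): rows = two dimensions, columns = [Amazon, Facebook]
counts = [[30, 10],
          [20, 40]]
row_pct, col_pct = row_col_percentages(counts)
# row_pct: [[75.0, 25.0], [33.3..., 66.6...]]  (each row sums to 100)
# col_pct: [[60.0, 20.0], [40.0, 80.0]]        (each column sums to 100)
```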

These results shed light on the extent to which each dimension of legitimacy is elicited and reveal different mechanisms at work in the descriptions of the misinformation books on the Amazon website (the starting point) and in the Facebook posts containing the Amazon links (the end point).

In the marketplace (i.e., Amazon), pragmatic legitimacy is the most frequently evoked dimension. It is followed by the cognitive dimension, conveyed through narratives that defend individuals’ interests against Big Pharma and governments’ lobbying activities, which is closely connected to the moral dimension of legitimacy. Authors then use expert legitimacy to establish their professional authority, or the authority of the author of the foreword included in the book description, thus strengthening the credibility of the book.

The analysis of Facebook posts provides another perspective. Here, the most frequently conveyed dimension of legitimacy is moral. Unlike the Amazon book descriptions, which are written by the book authors, Facebook posts are shared by readers, who find moral utility in the community-supporting activities associated with purchasing the books. Vaccine misinformation books are also legitimized through the cognitive dimension, which is closely linked to the moral dimension. Facebook users then employ expert legitimacy through credentials to increase the trustworthiness of their posts and of the promoted misinformation. Finally, algorithmic legitimacy emerges from the Facebook data set: consumers on Facebook use Amazon ranking positions and positive reviews to praise vaccine misinformation books and their authors and, ultimately, to confer authority on and increase the reliability of their posts. This reveals the potential impact of the marketplace on the legitimization of vaccine misinformation books on social media.

Vaccine Misinformation Legitimacy Framework

Drawing from the insights of this content analysis, we propose a conceptual framework of the legitimation process of vaccine misinformation titles (see Figure 1). The framework identifies the different dimensions of legitimization and delineates the connections between them, showing how legitimacy develops from the marketplace to the social media platform.

Figure 1. The vaccine misinformation legitimization framework.

Cognitive, moral, and pragmatic dimensions of legitimacy

Legitimacy along these dimensions stems directly from the contents of the books and originates from the books’ descriptions on Amazon or from social media posts. Three types of legitimacy, namely, cognitive, moral, and pragmatic, combine to give meaning to vaccine misinformation. These types of legitimacy are closely related to one another. In particular, the theme regarding vaccine consequences

Expert dimension of legitimacy

The legitimation process suggests the adoption of symbols and credentials of legitimate health information purveyors to enable misinformation to be evaluated by the same criteria as highly credible information. Expert legitimacy arises from the descriptions of titles on Amazon and is then transferred to social media, where it is used as a means to reinforce and validate the rigor of the information provided. As represented in Figure 1, the cognitive, moral, and pragmatic dimensions of legitimacy are embedded in the expert dimension. These dimensions are directly generated by the book content and reinforce each other. In this sense, expert legitimacy confers trustworthiness on the vaccine misinformation, as it appears to come from knowledgeable health care professionals. This validation might occur either before or after the action of the cognitive, moral, and pragmatic dimensions.

Algorithmic dimension of legitimacy

In contrast to the previous dimensions, algorithmic legitimacy is not strictly related to the content of misinformation; rather, it is granted by algorithmic logics that blindly popularize the content on the online marketplace. The algorithmic dimension of legitimacy possesses a temporal component. It represents the last step of the process and emerges exclusively from the social media data set. Therefore, the algorithmic dimension of legitimacy, represented by the top right circle in Figure 1, is separate from the other interconnected dimensions. The success that the titles have in the marketplace, in terms of positive reviews and rankings, is the ultimate confirmation of the appropriateness, usefulness, and trustworthiness of information contained in the books, thus reinforcing their social acceptance.

Hypothesis Development

Building on the results of our qualitative investigation of the vaccine misinformation legitimization process, the following experimental studies aim to empirically test the effect of the first newly identified level of legitimacy (i.e., expert legitimacy) on consumers’ evaluation of misinformation and sharing behavior. We decided to focus on expert legitimacy because (1) it is the first level that directly impacts the legitimacy of misinformation and (2) while algorithmic legitimacy is automatically generated from interest-based machine logics, expert legitimacy is created by people who leverage their knowledge to spread misinformation. As a result, any policy implications might be more direct and effective in mitigating this phenomenon.

Credentials as Expert Cues

In Study 1, we found that authors’ credentials, displayed as medical qualifications, represent one cue conveying expert legitimacy, as they confer credibility and trustworthiness on authors. Building on this finding, we are primarily interested in assessing the impact of expert cues on the perceived legitimacy of the books. Legitimacy is a measure of the consumers’ level of acceptance of the firm (Randrianasolo and Arnold 2020) and, thus, justifies the presence of its products on the market. The role of experts in the legitimization processes is important, as expertise confers validity cues to consumers who assess the legitimacy of an organization (Bitektine and Haack 2015). When the information to evaluate is highly complex, consumers may experience uncertainty during the evaluation process due to their lack of experience in the subject (Humphreys and Carpenter 2018). Consequently, expertise can be even more influential in alleviating consumers’ cognitive load (Durand, Rao, and Monin 2007). Expert judgments are key factors affecting consumers’ evaluation in a variety of industries (Clauzel, Delacour, and Liarte 2019). For experience goods such as books, expert judgments can provide consumers with signals of the quality of the product (Kovács and Sharkey 2014), reducing consumer uncertainty. Thus, we hypothesize that expert cues (communicating expert legitimacy) increase consumers’ perceptions of the legitimacy of a book. Formally,

Moderating Role of Vaccine Hesitancy

In the context of our investigation, we suggest that vaccine hesitancy acts as a boundary condition in the development of legitimacy perceptions for vaccine misinformation.

Vaccine hesitancy reflects consumers’ concerns about the decision to get vaccinated (Salmon et al. 2015) and results in delays in acceptance or refusal of vaccines despite availability (Shapiro et al. 2018). Vaccine hesitancy may result from individuals’ evaluation of vaccine safety (MacDonald 2015). However, context-specific elements that revolve around historical, sociocultural, economic, and political factors play a key role in influencing attitudes toward vaccines (Larson 2016). Today, the rapid spread of vaccine misinformation on social media fuels the expansion of vaccine hesitancy, lowering individuals’ willingness to trust scientific institutions and the pharmaceutical industry (Dhaliwal and Mannion 2020; Wardle and Singerman 2021). This also emerges from the findings of Study 1, which show that antivaccine misinformation spans topics ranging from conspiracy theories about the pharmaceutical establishment to distrust of the government. Given the mistrust toward the medical establishment embedded within the antivaccine movement, we expect the presence of expert cues to have a different impact on legitimacy perceptions depending on individuals’ level of vaccine hesitancy. Specifically, we suggest that the authors’ credentials will lead to higher legitimacy perceptions for individuals with low levels of vaccine hesitancy (i.e., with positive attitudes toward vaccines). By contrast, for highly hesitant individuals, the authors’ medical credentials will be detrimental to perceptions of the books’ legitimacy. Formally,

The Mediating Role of Legitimacy

Perceived legitimacy is auxiliary to other processes, such as attitude formation and, potentially, behavior (Zelditch 2001). Higher levels of legitimacy may be associated not only with more positive evaluations of an organization (Randrianasolo and Arnold 2020) but also with the development of trust and credibility (Chari et al. 2016). Trust is a fundamental component in the health information environment, and those sharing information must establish their legitimacy in the field to gain influence and an audience. These dynamics also apply to spreaders of misinformation, who leverage their personal expert legitimacy to increase the legitimacy of the information and, in turn, stimulate the sharing of the contents on social media. Formally,

Study 2: Assessing the Impact of Expert Cues on Perceived Legitimacy

Study 2 examines the effect of expert cues on perceived legitimacy (H1) and the moderating influence of vaccine hesitancy on this relationship (H2). Both Studies 2 and 3 were approved by the University of Portsmouth Faculty Ethics Committee (reference number: BAL/2020/50/DIDOMENICO). The approved materials included a description of our research plan, recruiting materials, informed consent documents, and an interview guide.

Participants, Design, and Procedure

A total of 191 U.S. consumers (women = 54.5%; Mage = 35.19 years, SD = 12.12 years) recruited from the Prolific online panel participated in the study. We implemented a two-cell (expert cues: presence vs. absence) between-subjects experimental design. Our manipulation consisted of including (vs. excluding) the medical credentials of the authors (e.g., M.D., Dr.). Respondents read a scenario describing a situation in which they received a link from a Facebook group to one of the books considered in our study. The stimuli then showed a fictional marketplace search page displaying the book's cover and the author and book descriptions. To develop our stimuli, we selected four of the 28 books analyzed in Study 1. To counterbalance the possible influence of factors such as cover vividness, book title, and book content on our dependent variables, we included all four books in the experiment, resulting in four stimuli for each condition. Participants were randomly assigned to one of the conditions and presented with only one book. Responses were then aggregated across the four books to obtain the final measures of the relevant variables. Prior to the main experiment, we pretested the trustworthiness of the fictional marketplace and the effectiveness of the credentials manipulation. Web Appendix A provides details about the pretests.

First, respondents expressed their views on vaccines through a seven-point Likert vaccine-hesitancy scale (α = .96; Shapiro et al. 2018), which served as a moderator in this study. After viewing the stimuli, they rated the author's expertise on a five-item seven-point Likert scale (α = .98; Ohanian 1990) and the perceived legitimacy of the book on a five-item seven-point Likert scale (α = .93; Chung, Berger, and DeCoster 2016). We also included two control variables in the survey instrument: we asked whether respondents had prior knowledge of the book shown in the scenario (binary choice: yes/no), and we measured their involvement with the topic (vaccines) through a three-item seven-point Likert scale (α = .90; Jiménez, Gammoh, and Wergin 2020). Finally, respondents answered demographic questions (age and gender).
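The α values reported above are Cronbach's alpha coefficients of internal consistency. For k items, α = k/(k − 1) × (1 − sum of the item variances / variance of the summed score). The sketch below computes it with Python's standard library on four invented respondents answering three seven-point items; the responses and the function name `cronbach_alpha` are hypothetical and unrelated to the study's data.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for a list of item-score columns (one list per item).

    Uses sample variances (n - 1 denominator) for items and for the total score.
    """
    k = len(items)
    if k < 2:
        raise ValueError("alpha needs at least two items")
    # Total (summed) score for each respondent across all items.
    totals = [sum(scores) for scores in zip(*items)]
    item_var_sum = sum(variance(column) for column in items)
    return k / (k - 1) * (1 - item_var_sum / variance(totals))


# Hypothetical responses: three items, four respondents (columns are respondents).
items = [
    [7, 5, 3, 1],
    [6, 5, 3, 2],
    [7, 4, 2, 1],
]
alpha = cronbach_alpha(items)  # 48/49, about 0.98
```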

Results

First, we checked the effectiveness of our manipulation. The results of a one-way analysis of variance (ANOVA) revealed that participants in the presence-of-cues condition rated the author's expertise as higher (Mpc = 5.54) compared with those in the absence-of-cues condition (Mac = 4.42; F(1, 190) = 26.29,

Effects on perceived legitimacy

A one-way ANOVA on perceived legitimacy showed that individuals perceived a higher level of legitimacy in the presence-of-cues condition (Mpc = 4.52) than in the absence-of-cues condition (Mac = 3.96; F(1, 189) = 7.26,

To test the moderating effect of vaccine hesitancy on the relationship between expert cues and perceived legitimacy, we performed a moderation analysis using the PROCESS Model 1 macro (Hayes 2021) with 10,000 bootstrapped samples. Age (b = −.001, n.s.), gender (b = −.62, 95% confidence interval [CI95] = [−1.01, −.22]), and involvement with the topic (b = .37, CI95 = [.16, .58]) were added to the model as control variables. Excluding the control variables from the model did not significantly change the estimated effects. The overall moderation model is significant (F(6, 184) = 7.56,
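As a reading aid, PROCESS Model 1 is an ordinary least squares regression with a product term. Writing X for expert cues (assumed coded 0 = absent, 1 = present), W for vaccine hesitancy, Y for perceived legitimacy, and C_1 to C_3 for the control variables (age, gender, involvement), the estimated model and the conditional effect of expert cues at a given level of W can be sketched as follows; the symbols and the 0/1 coding are our notation, not necessarily the authors'.

```latex
\begin{aligned}
\hat{Y} &= i_Y + b_1 X + b_2 W + b_3 XW + g_1 C_1 + g_2 C_2 + g_3 C_3 \\
\theta_{X \rightarrow Y}(W) &= b_1 + b_3 W
\end{aligned}
```

In this notation, H2 amounts to a nonzero b_3 such that θ is positive at the low-hesitancy end of the scale and negative at the high-hesitancy end. The six predictors (X, W, XW, and three covariates) match the numerator degrees of freedom of the reported F(6, 184), and 191 − 6 − 1 = 184 gives the denominator.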

Study 3: The Role of Legitimacy on Social Media

Study 2 confirmed the role of expert cues in increasing legitimacy perceptions and showed how vaccine hesitancy modulates this relationship. Study 3 builds on these results and tests the validity of these relationships in a social media context. The study also includes a behavioral measure and examines the mediating role of legitimacy in driving consumers’ sharing behavior on social media (H3). Finally, the study tests whether the identified effects change as a function of the type of book (factual vs. misinformation). Previous studies have shown that antivaccine misinformation is shared more widely than factual vaccine information (Xu 2019) and is generally more appealing to individuals than provaccine information (Schmidt et al. 2018). Whereas Studies 1 and 2 focused only on vaccine misinformation books, this study examines whether the persuasive effects of expertise and perceived legitimacy are reduced in the case of factual vaccine information.

Participants, Design, and Procedure

A total of 399 U.S. consumers recruited from the Prolific online panel (women = 42.6%, nonbinary = 3%; Mage = 38.38 years, SD = 14.69 years) participated in the study. The online study used a 2 (expert cues: present vs. absent) × 2 (type of book: factual vs. misinformation) full factorial between-subjects experimental design, with vaccine hesitancy measured (rather than manipulated) as a third factor.

Respondents read a scenario asking them to evaluate one sponsored Facebook post promoting a recently published book. The stimuli then showed a fictional sponsored Facebook post displaying the book's cover, the author's name, and a short description of the book (e.g., “

Results

A one-way ANOVA confirmed the effectiveness of the expert cues manipulation in increasing the authors’ perceived expertise (Mpc = 5.26 vs. Mac = 4.31; F(1, 398) = 43.52,

Effects on perceived legitimacy and sharing behavior

First, we performed a one-way ANOVA to assess the impact of expert cues on legitimacy. The results confirmed the significant effect of expert cues on consumer perceived legitimacy (F(1, 398) = 4.52,

To test our main assumption (H3) that the legitimacy triggered by expert cues will result in sharing behavior, we performed a simple mediation analysis using PROCESS Model 4 (10,000 bootstrapped samples; Hayes 2021). In the model, expert cues served as our independent variable, perceived legitimacy as the mediator, and sharing choice as the dependent variable. The results showed a significant effect of expert cues on perceived legitimacy (b = .32, CI95 = [.24, .62]) and a significant indirect effect through perceived legitimacy (b = .44, CI95 = [.02, .89]). Moreover, expert cues were no longer a significant predictor of sharing choice when controlling for perceived legitimacy (b = −.41, CI95 = [−1.00, .18]), which indicates a fully mediated model. The logistic regression model was statistically significant (χ2(2) = 27.307,
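In a simple mediation model with a binary outcome, the mediator is regressed on X by OLS (M = i_M + aX), and because sharing choice is binary, Y is regressed on X and M by logistic regression (logit Pr(Y = 1) = i_Y + c′X + bM). The indirect effect is the product ab, judged significant when its bootstrap confidence interval excludes zero. The sketch below re-implements that logic in NumPy purely as an illustration: it is not the PROCESS macro, it omits covariates, uses a plain percentile interval, and runs on simulated data; all function names and simulation parameters are our own assumptions.

```python
import numpy as np


def ols_coefs(X, y):
    """Ordinary least squares coefficients for design matrix X."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta


def logit_coefs(X, y, iters=25):
    """Logistic regression coefficients via Newton-Raphson (no regularization)."""
    beta = np.zeros(X.shape[1])
    for _ in range(iters):
        p = 1.0 / (1.0 + np.exp(-X @ beta))
        weights = p * (1.0 - p)
        hessian = X.T @ (X * weights[:, None])
        gradient = X.T @ (y - p)
        beta = beta + np.linalg.solve(hessian, gradient)
    return beta


def indirect_effect(x, m, y):
    """a * b: a from OLS of M on X, b from logistic regression of Y on X and M."""
    ones = np.ones_like(x)
    a = ols_coefs(np.column_stack([ones, x]), m)[1]
    b = logit_coefs(np.column_stack([ones, x, m]), y)[2]
    return a * b


def bootstrap_indirect(x, m, y, n_boot=1000, seed=0):
    """Point estimate and 95% percentile bootstrap interval for a * b."""
    rng = np.random.default_rng(seed)
    n = len(x)
    draws = np.empty(n_boot)
    for k in range(n_boot):
        idx = rng.integers(0, n, size=n)  # resample cases with replacement
        draws[k] = indirect_effect(x[idx], m[idx], y[idx])
    lo, hi = np.percentile(draws, [2.5, 97.5])
    return indirect_effect(x, m, y), (lo, hi)


# Simulated data (NOT the study's): x = expert cues (0/1), m = legitimacy, y = shared (0/1).
# Y depends on X only through M, i.e., full mediation by construction.
rng = np.random.default_rng(42)
n = 400
x = rng.integers(0, 2, size=n).astype(float)
m = 4.0 + 0.5 * x + rng.normal(0.0, 1.0, size=n)
y = (rng.random(n) < 1.0 / (1.0 + np.exp(-(-4.0 + 0.8 * m)))).astype(float)
estimate, (lo, hi) = bootstrap_indirect(x, m, y)
```

With these simulated parameters the true indirect effect is 0.5 × 0.8 = 0.4 on the log-odds scale, so the interval should lie above zero; with real data, the interval, not the point estimate, carries the inference.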

To examine whether the relationship among expertise, perceived legitimacy, and sharing behavior differs as a function of the type of book (misinformation vs. factual), we first performed a two-way ANOVA on perceived legitimacy. Both expert cues (F(1, 398) = 5.04,

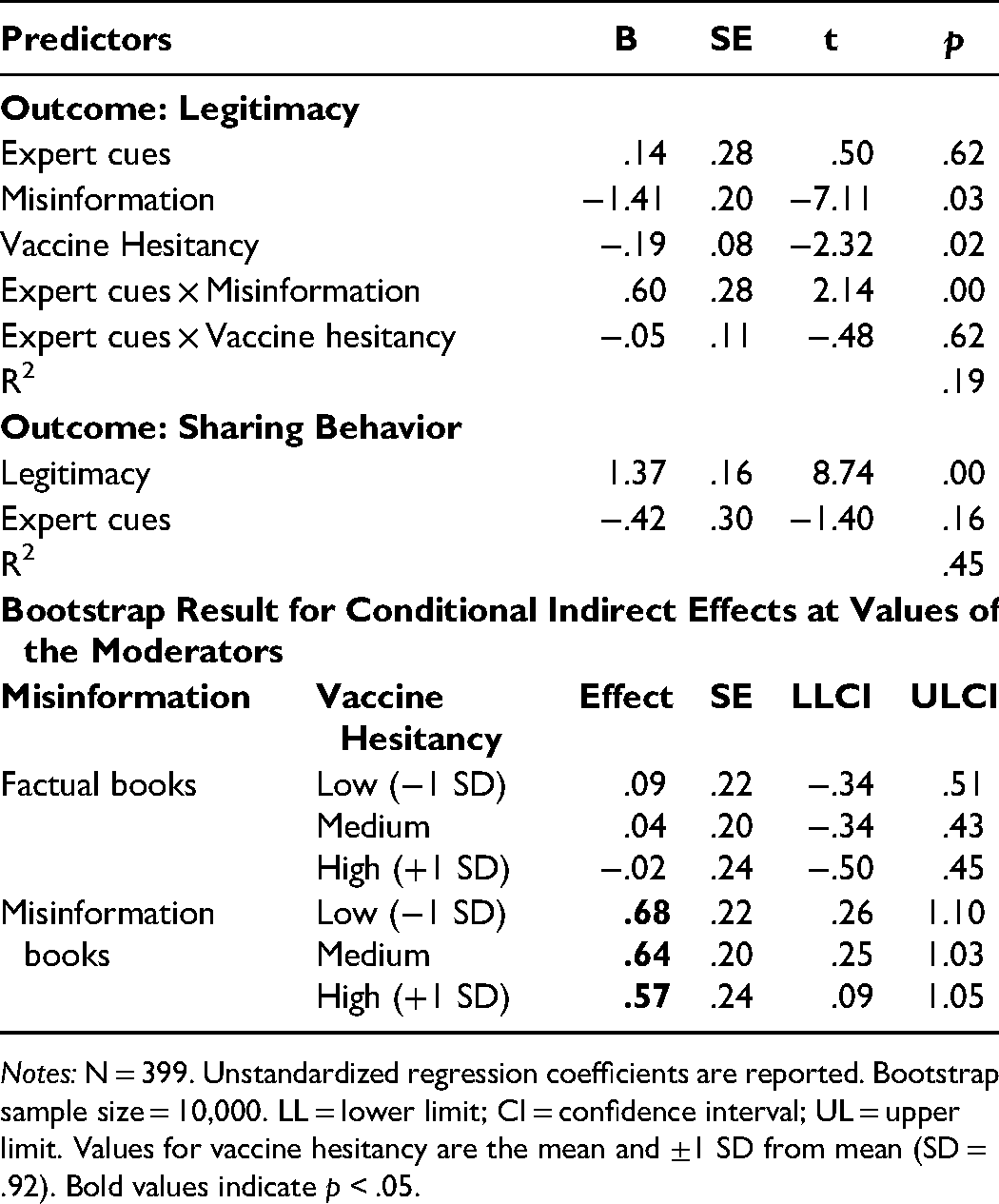

We then conducted an additive moderated mediation analysis (Model 9, 10,000 bootstrapped samples; Hayes 2021) to simultaneously test the previous relationships on sharing behavior. In the model, we included both the type of book and individual differences in vaccine hesitancy as moderators. Age (b = −.004, n.s.), gender (b = −.14, n.s.), and involvement with the topic (b = .33, CI95 = [.25, .45]) were added as control variables. The interaction between expert cues and misinformation was significant (b = .60, CI95 = [.05, 1.14]), but the interaction between expert cues and vaccine hesitancy was not (b = −.05, CI95 = [−.27, .16]). The indirect effect of expert cues on sharing behavior through legitimacy was significant only for vaccine misinformation books (see Table 4). Moreover, this effect was strongest for individuals with low levels of vaccine hesitancy (VH = 7, b = .68, CI95 = [.26, 1.10]) and decreased for higher levels of vaccine hesitancy (VH = 4.9, b = .57, CI95 = [.09, 1.05]). The logistic regression model was statistically significant (χ2(2) = 27.307,
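PROCESS Model 9 extends simple mediation by letting two moderators act additively on the first stage (X to M). With X for expert cues, Z for type of book, W for vaccine hesitancy, M for perceived legitimacy, Y for the binary sharing choice, and C_j for the control variables (symbols are our notation), the two equations and the conditional indirect effect can be sketched as follows.

```latex
\begin{aligned}
\hat{M} &= i_M + a_1 X + a_2 Z + a_3 W + a_4 XZ + a_5 XW + \textstyle\sum_j g_j C_j \\
\operatorname{logit} \Pr(Y = 1) &= i_Y + c' X + b M + \textstyle\sum_j h_j C_j \\
\omega(Z, W) &= (a_1 + a_4 Z + a_5 W)\, b
\end{aligned}
```

In this notation, the expert cues × misinformation interaction reported above (b = .60) corresponds to a_4 and the expert cues × vaccine hesitancy interaction (b = −.05) to a_5. Because the model is additive, each moderator shifts the indirect effect by a constant amount (a_4 b and a_5 b, Hayes's indices of partial moderated mediation) while the other moderator is held fixed.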

Testing Additive Moderated Mediation (Study 3).

These findings confirm that expert cues are significant predictors of sharing behavior through legitimacy only for vaccine misinformation books and not for factual ones. Moreover, although we did not find an interaction effect of expertise and vaccine hesitancy, the results show that the indirect effect of expert cues through legitimacy is magnified when individuals display low levels of vaccine hesitancy (values higher than 6.19).

General Discussion

The spread of vaccine misinformation poses a serious threat to public health (Lahouati et al. 2020). The dissemination of this content is amplified and facilitated by social media, yet little is known about how this process starts and how it is legitimized. Across three studies, the findings show how misinformation presented by purported experts travels and acquires legitimacy across digital platforms. First, in our qualitative investigation, we identified how vaccine misinformation legitimacy is developed within and between the marketplace and the social media platform. Specifically, we showed how legitimacy is first established in the marketplace through cognitive, moral, and pragmatic dimensions; then magnified by what we defined as expert legitimacy; and finally strengthened by the algorithmic dimension on the social media platform. Second, in two separate experimental studies, we tested the impact of expert legitimacy conveyed by expert cues on legitimacy perceptions and social media sharing behaviors. Study 2 focused on legitimacy development and showed the positive effect of expert cues on the perceived legitimacy of misinformation books while assessing the crucial moderating role of vaccine hesitancy. Specifically, we found that the presence of expert cues increases perceptions of misinformation books’ legitimacy in individuals who display a positive attitude toward vaccines, whereas it decreases the same legitimacy perceptions in individuals with high levels of vaccine hesitancy. This result highlights the important role of prior beliefs in information evaluation and legitimacy perceptions. Vaccine-hesitant individuals are likely to distrust science (Hornsey, Lobera, and Díaz-Catalán 2020). This distrust affects their information processing, lowering the perceived legitimacy of vaccine-related content when such content is shared by individuals who are part of the (nontrustworthy) medical establishment.
Conversely, individuals who are generally supportive of vaccines, and thus of science (Leask 2020), trust the expertise of those who share vaccine-related medical information. As such, the legitimation of vaccine misinformation through expertise identified herein could potentially increase overall levels of vaccine hesitancy, posing threats to public health. Study 3 analyzed these relationships in the context of social media and examined whether they hold for both vaccine misinformation books and factual books. The results showed that expert cues drive social media sharing behavior through legitimacy. Moreover, we found that the persuasive effect of expertise through legitimacy is significant only for misinformation books, not for factual books. Contrary to our expectations, we did not find a significant interaction between expert cues and vaccine hesitancy in Study 3. However, it is important to note that vaccine hesitancy in this study was generally lower than in Study 2 (M = 6.19, SD = .92 vs. M = 5.92, SD = .87; higher scores indicate more positive attitudes toward vaccines), which means that Study 3 respondents generally displayed a more positive attitude toward vaccines. This may be because the two studies were conducted at different times and public debate around vaccines intensified markedly in the last months of 2021.

Theoretical Implications

The research contributes to both the misinformation and legitimacy literature in several ways.

First, by building on Suchman’s (1995) legitimacy framework, this study expands previous literature by identifying two new dimensions of legitimacy that amplify the dissemination of health misinformation on social media. The study shows how expert legitimacy, conveyed by the use of medical qualifications and scientific evidence, and algorithmic legitimacy, developed through rankings and reviews, are the two types of legitimacy that ultimately confer credibility and trustworthiness on misinformation.

Second, the research contributes to the understanding of the legitimization process of harmful health misinformation. Specifically, it proposes a conceptual framework that delineates how legitimacy is established in the interplay among the five dimensions and flows from the marketplace to the social media platform. Whereas previous research on misinformation focused on the factors that can enhance legitimacy perceptions (Kim and Dennis 2019) and increase the diffusion of misinformation on social media (Allcott and Gentzkow 2017), this study adopts a broader perspective and identifies the relationships between the five types of legitimacy (Deephouse et al. 2017) and the path through which legitimacy is developed. The study sheds new light on the fabrication of legitimacy in marketplaces, showing how it occurs through the allure of “hidden truths” and credentials magnified by algorithm-driven logics (Di Domenico et al. 2020).

Third, the study contributes to the literature on misinformation by demonstrating that misinformation in online marketplaces can facilitate the dissemination of health misinformation through legitimation processes. Whereas the majority of previous studies in the misinformation literature have analyzed the dissemination process within social media (Del Vicario et al. 2019), this research is among the first in the marketing field to focus on other online information channels, identifying reputable marketplaces such as Amazon as one of the starting points of the spread of vaccine misinformation. Moreover, we demonstrate that the relationship among expertise, legitimacy, and sharing behavior does not hold for factual information, providing a novel contribution that furthers the understanding of the elements that may facilitate vaccine misinformation sharing.

Finally, we shed light on the impact of vaccine hesitancy on individuals’ legitimacy perceptions of misinformation. Whereas previous studies have mainly tested the impact of misinformation on the development of vaccine hesitancy (Dhaliwal and Mannion 2020), we adopted vaccine hesitancy as a factor modulating perceived legitimacy and showed that the use of credentials increases perceptions of the legitimacy of misinformation books in individuals with positive attitudes toward vaccines.

Implications for Policy Makers

Addressing misinformation is a difficult challenge for policy makers, who must walk a fine line between regulation and censorship (Edwards 2022). While the European Union attempts to legislate to monitor platforms directly, such as through the proposed Digital Services Act, there are no federal regulations that enable the removal of social media content in the United States (Pradhan 2021).

This article identifies forms of misinformation that have largely escaped existing regulatory control. While there is consensus over the need for platforms to address harmful “fringe” perspectives, the question of misinformation and scientific or professional expertise is more contested (Coombes and Davies 2022), as was seen, for example, in the dispute between the

First, although policy makers focus on individual platforms, such as Facebook and WhatsApp, this approach ignores the complexity of online information flows between platforms. In this study, high-salience misinformation is legitimized through one platform (an online book marketplace), which in turn increases its spread through another platform (a social media site). The kinds of policy pressure applied to Facebook to encourage self-regulation around misinformation could also be effective on other platforms. This approach does not imply censorship or removal of content but uses existing techniques, such as labeling and limiting algorithmic promotion, to hinder discoverability. Where the threat of regulatory pressure is present, particularly with health-related misinformation, social media platforms have shown themselves willing to self-regulate. The same recommendations apply to the regulation of online marketplaces. Though overlooked in the past, their algorithms govern the promotion of products on the marketplace, applying logics that can boost the legitimacy and dissemination of harmful content.

Second, policy makers can address the role of professional accreditation in underwriting this legitimacy. The forms of misinformation identified in this article represent a misuse of credentials, such as the use of medical credentials by physicians who have been banned from practicing or the use of nonmedical credentials (e.g., a doctorate in a nonmedical subject) to imply medical expertise. Legal tools that could be used already exist. In the United States, Title 42 of the Code of Federal Regulations (2013) specifies credentialing requirements to ensure the safety of patients. In the European Union, professional credentialing is governed by Directive 2005/36/EC on the recognition of professional qualifications (European Union 2005). To address the role of credentialing in the spread of misinformation, platforms could be more active in verifying credentials when they are used to legitimize information. This approach would help ensure the correct use of credentials, delegitimize misinformation and mitigate its spread through digital environments, and build on the verification mechanisms that platforms currently use to address information quality. Because the impact of credentials on legitimacy perceptions of vaccine misinformation is strongest among individuals who hold positive attitudes toward vaccines, we urge policy makers to plan appropriate communication campaigns to educate and warn vaccine-supportive individuals about the risks of misleading credentialing.

Conclusion, Limitations, and Future Research

Mistrust in vaccines is as old as vaccines themselves, and this mistrust helps spread misinformation. However, the combination of social media and the wide availability of highly legitimized forms of misinformation has accelerated its diffusion. This study has identified how these forms of misinformation are legitimized and spread through social media and provides important policy implications for addressing vaccine misinformation. Despite these contributions, we acknowledge some limitations of our article that future research can address. Although the use of CrowdTangle in academic research is growing, the tool currently tracks a limited number of Facebook pages and accounts. Further research could assess the magnitude of the phenomenon by creating a more comprehensive data set of social media posts and extending this analysis to other social media platforms. We suggest that legitimation through credentials leads to sharing behavior on Facebook for vaccine misinformation content. Future research could examine whether these effects hold in other social media contexts and for different types of misinformation. Moreover, we showed that algorithmic logics for displaying products in online marketplaces could facilitate the legitimization and spread of misinformation. This raises open questions from a business ethics perspective. To what extent are companies responsible for the spread of misinformation? Should they limit the autonomy of algorithms and, in general, artificial intelligence in making business decisions? To what extent, then, is business ethics compatible with business purposes? We leave the answers to these lingering questions to future research.

Supplemental Material

sj-pdf-1-ppo-10.1177_07439156221103860 - Supplemental material for Marketplaces of Misinformation: A Study of How Vaccine Misinformation Is Legitimized on Social Media

Supplemental material, sj-pdf-1-ppo-10.1177_07439156221103860 for Marketplaces of Misinformation: A Study of How Vaccine Misinformation Is Legitimized on Social Media by Giandomenico Di Domenico, Daniel Nunan and Valentina Pitardi in Journal of Public Policy & Marketing

Footnotes

Joint Editors in Chief

Maura L. Scott and Kelly D. Martin

Special Issue Editors

Matthew E. Sarkees, M. Paula Fitzgerald, and Cait Lamberton

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

References
