Abstract

This paper tackles the issue of health and well-being misinformation, the dissemination of false or misleading information related to medical treatments, diseases, mental health, nutrition, exercise or general well-being, propagated by social media influencers. It investigates the virality of misinformation posts by TikTok and Instagram influencers, exploring users’ appraisals and their sharing tendencies. Grounded in social influence and cognitive appraisal theories (CAT), three online experimental studies dissect the dynamics of virality, user comments and their effects on perceived deception, parasocial interaction and sharing intent. The results of Study 1 demonstrate that heightened post virality reduces perceived deception, fostering stronger parasocial connections and sharing intentions. Conversely, lower virality levels heighten deception perceptions. In Study 2, critical comments are shown to enhance deception in high virality posts, whereas supportive comments are shown to enhance the indirect effect of low virality posts on sharing intentions via deception and parasocial interaction. The study contributes by demonstrating how social influence theory and CAT together explain how likely social media influencer misinformation posts are to be shared, depending on their virality and user responses, and which consumer appraisals explain this effect. It also provides directions for how marketers can tackle this issue.

Introduction

During the 2020 pandemic, journalist Sarah Wilson drew attention to the perplexing coalition between wellness advocates and the alt-right QAnon community, a far-right political conspiracy group that employs digital tactics to disseminate misinformation, including about COVID-19 (Wilson, 2020). While misinformation predates digital platforms, the internet’s speed and scale have accelerated its spread (Guess & Lyons, 2020). Approximately 22% of North American consumers have encountered false information from celebrities, with 19% encountering misleading product details (Watson, 2022). Social media influencers, individuals with substantial online followings and engagement (Kapitan et al., 2022; Kay et al., 2023; Weismueller et al., 2020), are often implicated in disseminating misinformation (Lee, 2017; Taylor, 2023). This is concerning because consumers exposed to posts by such individuals may be more likely to reshare this information, increasing the spread of misinformation. Health and beauty-related misinformation poses particular concerns (Hudders & Lou, 2023; Liotta, 2023), with the potential for such information to put individuals at risk of serious harm or even death.

Yet, the downside of influencers, and in particular their sharing of health-related misinformation, is underexplored in marketing literature (Hudders & Lou, 2023), despite the risks posed by promoting harmful health trends such as anorexia (Syed-Abdul et al., 2023) and consumption of food or beverages harmful to physical health (De Jans et al., 2022). Given the rising prevalence of misinformation, and the potential for influencers to share it, this research aims to address the current lack of understanding of how to combat this issue by investigating the influence of virality and user comments on social media influencers’ misinformation posts, and how these factors shape consumer appraisals and the likelihood of further sharing misinformation.

Virality, the sum of user interactions with online content, serves not only as an effectiveness metric, but also as a signal of message quality and acceptance (Alhabash et al., 2015). It is a crucial factor in the spread of influencers’ misinformation on social media, as the virality of social media content increases the spread of information (Visentin et al., 2021), shapes perceptions of social norms and increases the likelihood of offline behaviours (Calabrese & Zhang, 2019). However, current marketing literature often either considers what makes content ‘go viral’ or what subsequently happens after content has achieved a critical viral mass, not what occurs at the initial stages of virality and how the persuasiveness of misinformation may also increase. To provide new insight, this research aims to explore how different stages and speeds of virality, defined as low and high virality, impact users’ perceptions of misinformation shared by social media influencers through the lens of social influence theory. Social influence theory is a psychological framework that explains the ways in which people are influenced by those around them, emphasising the power of social interactions and group dynamics such as those which may be present on social media through aspects such as ‘likes’. Based on social influence theory, this research predicts it is likely that high virality posts will lead to significantly higher consumer sharing intentions due to this serving as a level of social proof. Thus, the first aim of this research is to explore how different stages of virality, indicated by likes, shape user responses to social media influencers’ misinformation posts.

When analysing the spread of misinformation, it’s vital to consider how consumers respond to user comments on influencers’ posts. Studies indicate that supportive or critical user comments can significantly affect online content persuasiveness and perceived credibility (Colliander, 2019; Naab et al., 2020). For example, users may challenge (‘You are so fake!’) or praise (‘You are so inspiring!’) influencer posts. However, research on social media influencer marketing dynamics, especially concerning virality and user comments on misinformation posts, is limited. User comments’ influence may depend on a post’s virality. In highly viral posts, substantial social proof may diminish the impact of both positive and negative comments on an influencer’s social media post. Conversely, in less viral posts, where social proof is scarce, user comments could significantly shape consumers’ perceptions. A study by Hudders et al. (2022) offers some initial insights by exploring how influencers’ responses to negative comments bolster perceptions of credibility. However, this research does not explore misinformation propagation by social media influencers or investigate potential differences between supportive and critical user comments and their impacts on content persuasiveness in combination with virality. The second aim of this study is, therefore, to be among the first to empirically test whether critical comments diminish the influence of social media influencer content on users’ sharing intentions, and whether affirmative comments amplify or diminish the persuasiveness of a social media influencer’s misinformation post.

If the virality of a social media influencer’s misleading post and user comments influences users’ intent to share such misinformation, understanding the underlying mechanisms becomes pertinent. The current study employs cognitive appraisal theory (CAT) to investigate how deception and perceived parasocial relationships serve as primary and secondary appraisals, and thus shape users’ responses to influencer misinformation. Prior research highlights deception (Li et al., 2020; Lim et al., 2020; Riquelme et al., 2016) and parasocial interactions (Shan et al., 2020) as explanatory factors for online responses and influencer interactions. However, these factors have often been treated in isolation. In contrast, this study integrates deception and parasocial relationships, utilising CAT for a comprehensive understanding of users’ psychological reactions to influencer-generated misinformation and proposing that they function as primary and secondary appraisals. Thus, the third aim of the study is to explore the mediating roles of deception and parasocial relationships in the relationship between influencer misinformation and user sharing intentions.

Through three online experimental studies investigating influencers sharing misinformation on social media and consumers’ corresponding responses, this research reveals that high virality decreases deception and enhances parasocial connection with the influencer, which boosts sharing intentions. Study 2 suggests critical comments enhance perceptions of deception on highly viral posts, which in turn could work to counter misinformation effects. Conversely, positive comments can indirectly enhance sharing intentions on low virality posts. By combining social influence theory and CAT, this research elucidates how consumers are influenced by social interactions on social media influencer misinformation posts and how they evaluate them. These results offer valuable insights for marketing practice. This study serves as a crucial building block in comprehending and addressing the multifaceted challenges posed by misinformation shared by influencers.

Theoretical background and hypothesis development

Health and well-being misinformation and social media influencers

Social media platforms are frequently criticised for facilitating the spread of false or misleading information, known as misinformation, on a variety of global health issues (Bode & Vraga, 2018). Misinformation is defined as false information or content that has not been created with the intention to harm, which sets it apart from disinformation - false information that is intentionally created to cause harm, such as fabricated content and manipulation campaigns (Wardle & Derakhshan, 2017). Despite this distinction, misinformation can still have serious consequences for individuals and society. The World Economic Forum identifies large-scale digital misinformation as one of the main risks facing modern society (Howell, 2013). Misinformation about health and well-being topics and products can mislead individuals into making harmful decisions, such as taking ineffective or even dangerous treatments, ignoring proven medical advice or adopting unhealthy lifestyle practices (Suarez-Lledo & Alvarez-Galvez, 2021; Wang et al., 2019). This can have severe consequences, especially during public health crises, where quick and accurate information can mean the difference between life and death (Greene & Murphy, 2021).

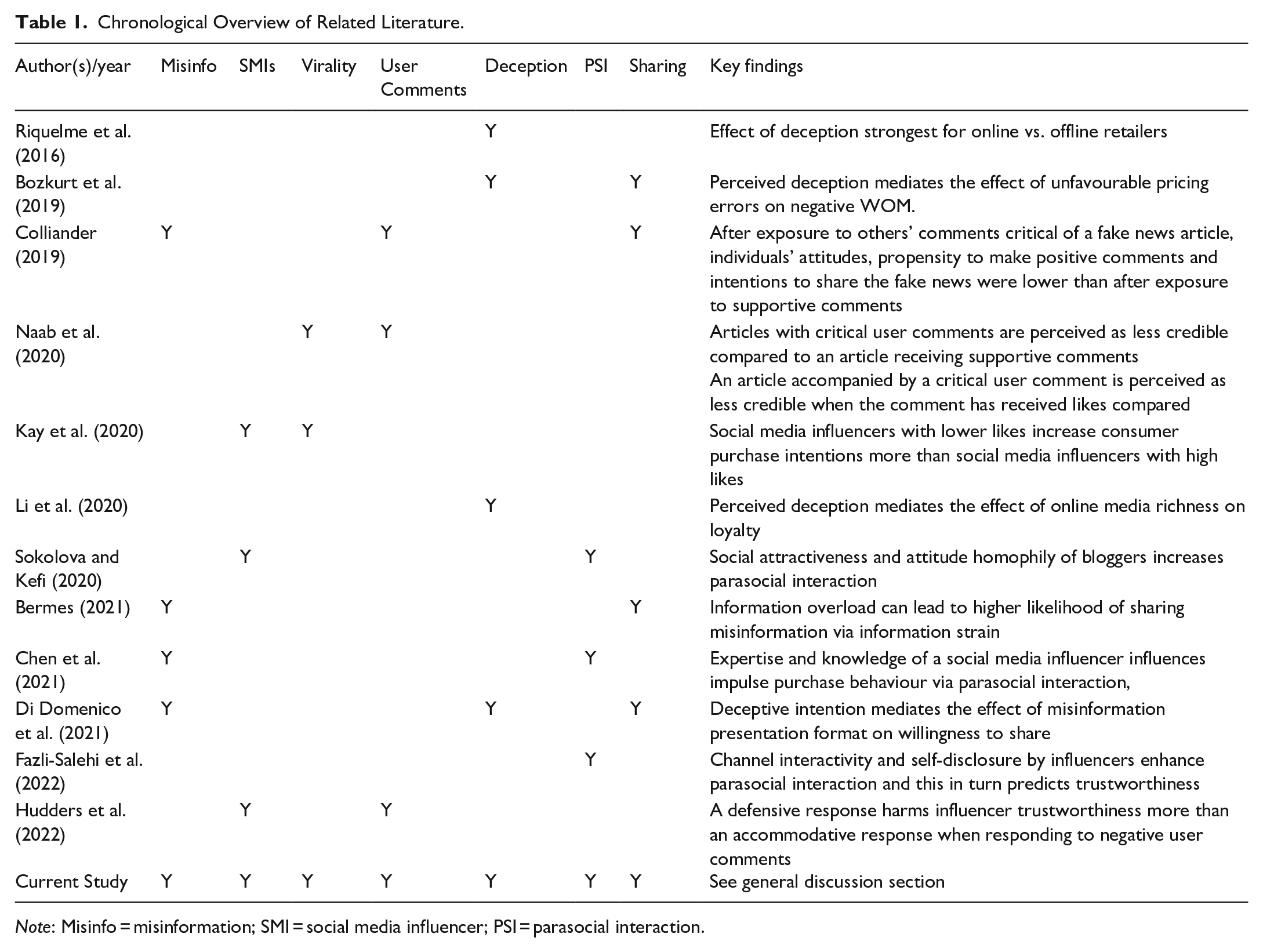

Social media influencers have become potent purveyors of misinformation and wield significant influence in today’s digital landscape, particularly in the realm of health and well-being (Harff et al., 2022). When social media influencers share misinformation about health and well-being topics and products, it can have significant negative impacts on their followers (Hudders & Lou, 2023). However, there is relatively limited research shedding light on how misinformation shared by social media influencers affects users, even though potentially explanatory concepts such as virality, user comments, deception, parasocial interaction and online sharing have been considered in other domains (see Table 1). The current research is thus, as highlighted in Table 1, one of the first to synthesise the streams of literature on these concepts using social influence theory and CAT, shedding light on the effect of social media influencers’ misinformation posts on consumers.

Table 1. Chronological Overview of Related Literature.

Note: Misinfo = misinformation; SMI = social media influencer; PSI = parasocial interaction.

Virality via ‘likes’ and social media influencer misinformation

For the current study, virality refers to the degree to which social media content, such as a post or video, becomes popular and is spread rapidly through online sharing and engagement. Online sharing and engagement is indicated by metrics such as likes, shares and comments. Virality is a key consideration for numerous disciplines which examine social media, including marketing (Tellis et al., 2019) and information systems (Wang et al., 2019). While informative, this virality literature considers what initially makes something ‘go viral’ as opposed to what may subsequently happen after social media content achieves viral status. Further, the majority of this literature has yet to consider misinformation shared by social media influencers, which is thus a key consideration of the current study.

Specifically, the current research considers the viral metric of ‘likes’ (Pletikosa & Michahelles, 2013), which social media influencer literature also treats as an important metric that shapes follower persuasion, as a basis for categorising influencer size (e.g. macro-influencers, micro-influencers; Kay et al., 2020) or as an outcome in relation to engagement (Karagür et al., 2022). Furthermore, liking a post is commonly perceived as requiring less effort (being passive as opposed to active) compared to other forms of engagement, such as sharing and commenting (Schivinski et al., 2016). Consequently, ‘likes’ arguably represent a more substantial portion of the audience’s engagement than comments or shares. From a theoretical perspective, liking also aligns well with social influence theory, which suggests that individuals tend to conform to group opinions. Thus, when consumers encounter a post with numerous likes, they are more likely to conform to the popular opinion and engage with the content in a similar manner. In contrast, shares and comments don’t offer as concrete an indication of support or approval of social media content and thus have a weaker alignment to social influence theory, which guides the current research. Despite likes being a key mechanism for the virality of social media content and social media influencers, how ‘likes’, as a metric of virality, influence users’ appraisals of health and well-being misinformation is relatively unknown.

Virality and social influence theory

To explain why virality will impact the persuasiveness of influencers when posting misinformation, the current research draws upon social influence theory (Kelman, 1974), which has previously been used in influencer studies (Ouvrein et al., 2021; Tafesse & Wood, 2022) and other social media studies (Brison & Geurin, 2021) to theoretically explain why attributes, such as likes, on social media posts signify popularity or social approval and subsequently act as a key mechanism which enhances users’ sharing intentions.

Social influence theory suggests individuals will modify their beliefs, attitudes or behaviours due to interactions with others who have similar interests (Brison & Geurin, 2021). Therefore, the current research posits that sharing intentions are strongly influenced by the virality of a post. When an influencer misinformation post has high virality, it is more likely to be seen by a large audience and can potentially influence a greater number of people. This, according to social influence theory, can result in a higher level of sharing intentions because individuals are more likely to engage with content that is already popular or has a high level of social proof, indicated by high likes (virality), and thus greater credibility as opposed to deception. Indeed, studies in social media support such propositions (Seo et al., 2019). For instance, Seo et al. (2019) show that increased likes enhance the credibility of a social media post. In further support, Kim outlines that high virality metrics, such as likes, lead to greater message persuasion and behavioural intentions of social media users. Thus, based upon the prior discussion of existing research and theorising based on social influence theory, it is hypothesised that:

H1. High virality (vs. low virality) of a misinformation post by an influencer leads to greater social media user sharing intentions.

Virality of social media influencer misinformation versus factual information

The present study aims to investigate whether the impact of virality on influencer posts varies depending on whether they contain misinformation or factual information. Research has shown that the effectiveness of misinformation differs from that of factual information. For instance, Sui et al. (2023) demonstrate that normative news frames, news stories which present facts on the basis of conflict, human interest and morality, are more influential for misinformation posts than for factual posts. In addition, Zhou et al. (2021) find that persuasive and emotional factors play a critical role in the spread of misinformation.

A potential reason why misinformation requires additional support, such as virality, to be believed and shared in comparison to factual information, is that factual information is grounded in reality. It can be verified by objective sources and evidence, or it can rely on consumers’ pre-existing knowledge or awareness. This inherent persuasiveness obviates the need for additional enhancement through factors like virality. However, by its very nature misinformation lacks the backing of objective sources to verify the claims being made (Wardle & Derakhshan, 2017). As a result, misinformation may not easily have credibility. To bridge this credibility gap, misinformation may require social proof provided through virality. Social proof, in the form of likes on a post, may reinforce the likelihood of belief in misinformation, making it more difficult for individuals to critically evaluate the accuracy of the information due to the perception that others have already made this assessment as indicated by a like. Indeed, research shows that social proof through likes or other viral metrics has led to consumers engaging in irrational behaviours such as panic buying during COVID-19 (Naeem, 2021). Naeem’s (2021) findings specifically show social media generated social proof and shaped a panic buying reaction to products that weren’t necessarily low in supply.

Given this argument and the prior research indicating that misinformation often requires alteration or manipulation to enhance its persuasiveness due to its lack of a factual basis, we contend that virality will have a greater impact on misinformation than on factual information posted by an influencer. Therefore, the current study hypothesises that the impact of virality on the spread of misinformation will be greater than its impact on factual information posted by influencers.

H2. High virality will significantly enhance sharing intentions for misinformation influencer posts compared to factual information.

User comments to influencer misinformation and social influence theory

In this study’s context, following social influence theory, another form of social proof, user comments on social media influencer misinformation posts are considered. Leveraging the work of Colliander (2019) and Naab et al. (2020), the current study conceptualises two types of user comments added to misinformation posted by an influencer, supportive and critical.

Supportive refers to users’ comments which affirm either the misinformation (content) or the influencer (poster/endorser). Such comments may increase perceived credibility (reduce deception), as social influence theory suggests they function as a form of informational influence whereby individuals conform or change their beliefs because they believe others have accurate knowledge (Hazari et al., 2023; Kelman, 1958). In further support, supportive comments from users may encourage further conformity in combination with ‘likes’ from the perspective of social influence theory, whereby individuals further change their attitudes because they desire to be liked and fit in (Hazari et al., 2023; Kelman, 1958). In the case of the current research, supportive comments from users may occur because users are strong fans or followers of an influencer, or alternatively through bandwagoning after seeing others engaging in praise or positive reinforcement of the misinformation post of a social media influencer.

The literature also shows supportive comments to be important in increasing the sharing of misinformation. Colliander (2019) demonstrates that intentions to share fake news are enhanced when users are exposed to other users’ supportive comments. In the current research, it is contended that supportive comments might not notably impact low virality posts, given their low social influence base. Instead, as high virality posts garner substantial engagement and attention, the addition of positive comments may further enhance their impact as another form of social proof. High virality posts therefore stand to gain more from supportive comments, because these build on the social proof already established by high likes.

H3a: Supportive comments on high virality posts will significantly increase sharing intentions.

The second type of user comment on influencer misinformation is critical, defined as users commenting to challenge the correctness or truth of the information contained in an influencer’s post. Critical comments may occur in the case of social media influencers sharing misinformation when users try to correct or voice disagreement and thus stop the spread of misinformation. Critical comments on low virality posts are unlikely to affect the level of perceived deception and subsequent sharing intentions due to the posts’ limited social proof. Conversely, in highly viral posts, a critical comment could significantly reduce the likelihood of followers further sharing misinformation posted by influencers. Through the lens of social influence theory, a critical comment signals social undesirability and normative incorrectness, diminishing the probability of the post being shared further and mitigating additional potential virality. Such perceptions might deter open engagement due to perceived deceit.

In line with the prior discussion on the basis of social influence theory, the literature underscores the influential role of critical comments, akin to negative word-of-mouth, on individuals’ behaviour (East et al., 2008). Recent studies on misinformation on social media lend support for considering critical user responses, demonstrating that ‘attacking’ (challenging) the source of fake news reduces message credibility (Vafeiadis et al., 2020). Research on social media influencers also supports a critical user response to misinformation posts, with research by Xiao (2023) showing that negative comments underneath YouTube videos of influencers lead users to see the video as less trustworthy. This is further supported by the study of Naab et al. (2020), who demonstrate how critical user comments decrease the perceived credibility of news articles. Thus, for critical comments the following is hypothesised:

H3b: Critical comments on high virality posts will significantly lower sharing intentions.

Consumer appraisals of influencer misinformation

While the previous discussion and theorising have explained why it is likely that users are influenced by the virality of social media influencers’ posts, it is unclear how they appraise this information. The appraisal of the information helps explain why a viral post may be more likely to be shared by social media users. Social influence theory primarily focusses on the likelihood of virality and user comments impacting sharing intentions, without considering how consumers evaluate the misinformation posted by influencers. To address this limitation, this study also draws on CAT and specifically the Transactional Model of Stress (Lazarus & Folkman, 1984). By integrating CAT with the social influence theory outlined in the previous hypotheses, this research aims to unravel the intricacies of social media dynamics, providing insights into the persuasive mechanisms at play and how consumers evaluate and respond to these situations. This approach illuminates the complex interplay between social influence and consumers’ cognitive appraisals, shedding light on why individuals are inclined (or not) to share misinformation. Consequently, this comprehensive framework offers a nuanced understanding of the profound impact of social media influencers’ misinformation posts. Using CAT, this paper demonstrates how users assess the (lack of) deception of misinformation shared by social media influencers, and how this in turn influences connections with the influencer (parasocial interaction) within the theory’s consideration of primary and secondary appraisals. Specifically, the concepts of deception and parasocial interaction are theorised as being primary and secondary appraisals, respectively, which is discussed and justified next.

Primary appraisal-deception

According to CAT, primary appraisal involves assessing whether a stimulus, such as a post by a social media influencer, is irrelevant, positive or stressful (Lazarus & Folkman, 1984). Of particular interest is the latter aspect, the stressful appraisal, in which a situation poses a potential threat or harm to an individual’s well-being or goals. This aspect serves as the central focus of the current research, because feeling stressed is a common reaction when individuals are faced with conflicting influences (Frank, 2018; Moschis, 2007). In this research, such conflict arises when social proof and persuasion from influencers are at odds with the accuracy of the information being presented. This internal conflict can lead to significant cognitive strain, making it challenging to navigate the situation effectively. To investigate how users appraise misinformation posts by social media influencers, the current study examines the concept of deception, which is considered integral to understanding (un)ethical marketing practices including those on social media (Small et al., 2019). When a social media influencer’s misinformation post gains limited virality, it might be perceived as a potential threat, and thus as stressful, through the lens of CAT (Lazarus & Folkman, 1984). This is because the conflict between the limited social proof and the accuracy of the information may be more apparent. Consequently, this perception would likely lead to a heightened sense of deception. On the other hand, if a misinformation post by an influencer spreads widely and gains high virality, the perceived threat might diminish. The threat might be reduced as the social proof outweighs the influence of the potential factual accuracy of the information, which in turn results in a lower level of perceived deception due to the limited conflict and subsequent stress of evaluating the social proof against the factual grounding of the information.

Indeed, significant research supports the potential for deception to be a primary appraisal of a social media influencer’s misinformation post. Riquelme et al. (2016), for instance, demonstrate how deception is a key driver of consumers’ satisfaction with an online retailer. In further support, Ketron’s (2016) study demonstrates that perceived deception mediates the relationship between consumer cynicism and purchase persuasion. Transferring this empirical support from the prior studies to the current study on misinformation and social media influencers, deception may be necessary to study how users first evaluate a viral misinformation post. However, from the theoretical perspective of CAT (Lazarus & Folkman, 1984), once an individual has appraised the situation in terms of stressfulness, or deception in the case of the current study, they move on to a secondary appraisal, which this study considers as parasocial interaction.

Secondary appraisal-parasocial interaction

Secondary appraisals refer to the process by which an individual assesses their personal resources, abilities and coping ability (Lazarus & Folkman, 1984). In the current research, the focus is on the assessment of personal resources, considered as the perceived parasocial interaction with a social media influencer (Yap & Lim, 2023). Research demonstrates that social media influencers and their communities can provide followers with a sense of social support (a resource) as well as inspire and motivate personal growth (Kim & Chan-Olmsted, 2022), which demonstrates the potential for parasocial interaction to be a secondary appraisal of influencer misinformation posts. In the current study, if a user perceives the primary appraisal as being stressful (deceptive) and posing a threat to their well-being, their secondary appraisal might encompass an assessment of diminished personal resources concerning their parasocial interaction and connection with the influencer. Conversely, when the primary appraisal is less stressful or threatening (lower levels of deception), it is more probable that the user will perceive a heightened level of parasocial interaction in accordance with CAT (Lazarus & Folkman, 1984). As a practical example, consider a skincare post from a beauty influencer garnering numerous likes from followers. These likes lower the level of deception perceived by other followers, facilitating a deepening of the connection between the influencer and consumers, thereby enhancing parasocial interaction.

Social media influencer literature also provides empirical support for parasocial interaction playing a mediating role (Shan et al., 2020; Yuan & Lou, 2020), as would be expected if it functions as a secondary appraisal under CAT. Shan et al.’s (2020) influencer marketing study demonstrates that parasocial interaction mediates the relationship between self-influencer congruence and endorsement effectiveness. Similarly, Yuan and Lou (2020) find that parasocial interactions mediate the relationship between perceived fairness of the influencer and followers’ interests in the promoted products.

Thus, based upon the prior theorising in accordance with CAT and discussion of relevant literature relating to deception and parasocial interaction, the following is hypothesised:

H4. Deception and parasocial interaction will function as primary and secondary appraisals and mediate the relationship between virality and sharing intentions.
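One common way to formalise a sequential (serial) mediation hypothesis of this kind is as a set of three linear regressions. The notation below is an illustrative assumption and is not taken from the paper: $X$ is the virality condition (low vs. high), $M_1$ is perceived deception (primary appraisal), $M_2$ is parasocial interaction (secondary appraisal) and $Y$ is sharing intention.

```latex
\begin{align}
M_1 &= i_1 + a_1 X + e_1 \\
M_2 &= i_2 + a_2 X + d_{21} M_1 + e_2 \\
Y   &= i_3 + c' X + b_1 M_1 + b_2 M_2 + e_3 \\
\text{serial indirect effect} &= a_1 \, d_{21} \, b_2
\end{align}
```

Under the theorising above, high virality would lower deception ($a_1 < 0$), deception would lower parasocial interaction ($d_{21} < 0$) and parasocial interaction would raise sharing intentions ($b_2 > 0$), so the product $a_1 d_{21} b_2$ would be positive.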

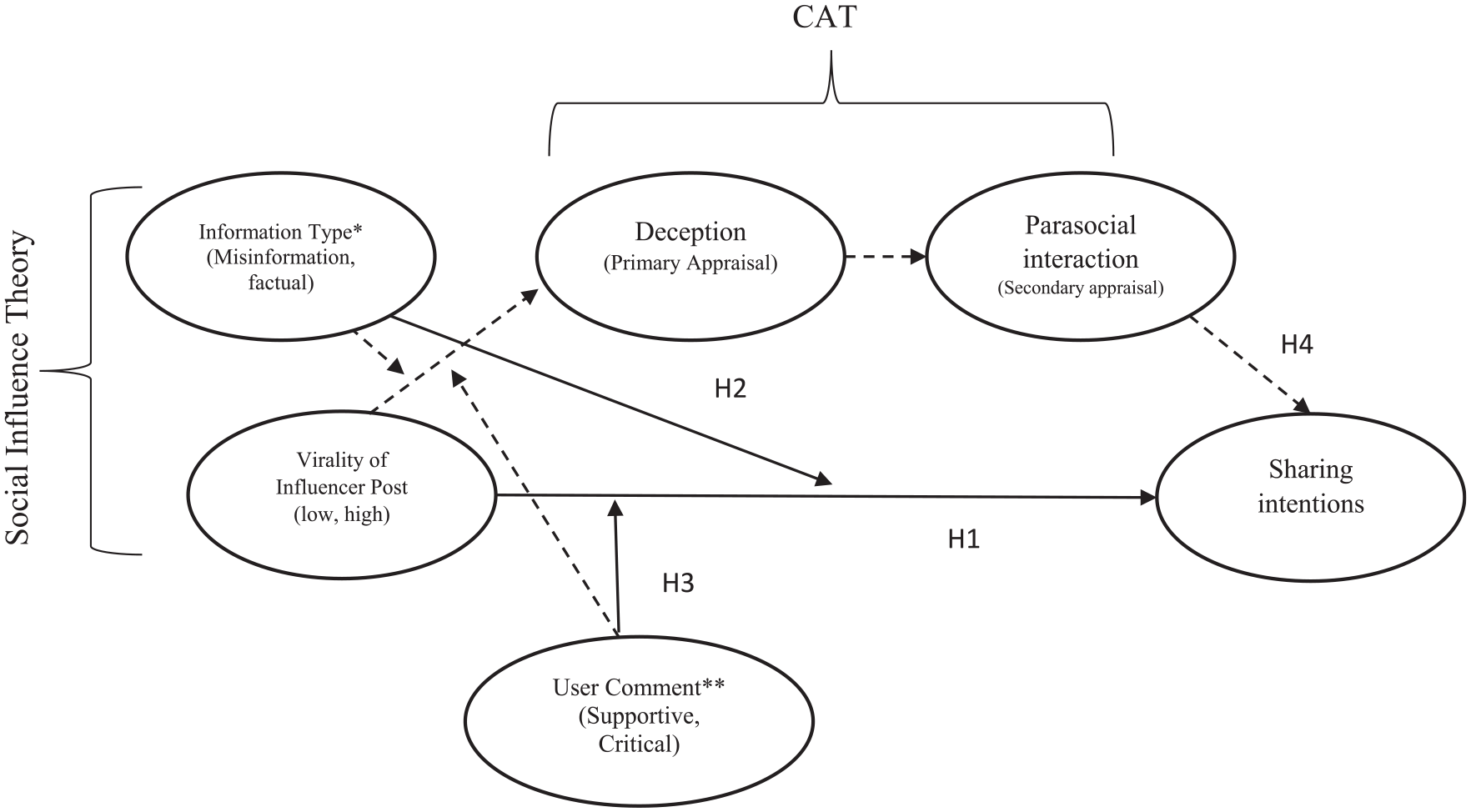

A summary and illustration of the constructs and the hypotheses previously presented can be seen in Figure 1.

Figure 1. Conceptual model and hypothesised relationships.

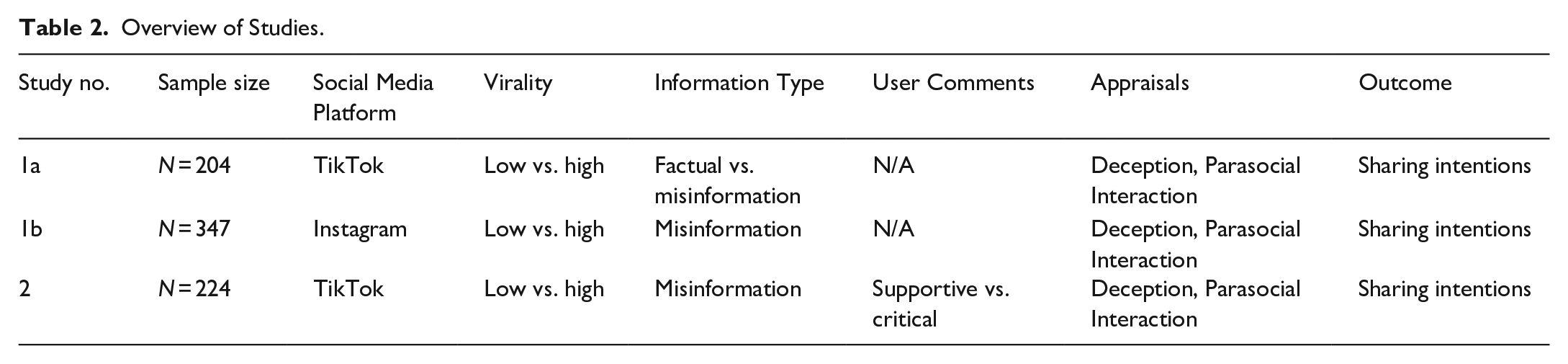

Overview of studies

To test the proposed conceptual model and hypothesised relations presented in Figure 1, three experimental studies were conducted in the current research. Study 1 consisted of two experiments. Study 1a compared the impact of virality on factual and misinformation TikTok posts by a fictional social media influencer to establish how virality is key to misinformation. Study 1b sought to confirm these findings in another social media setting, namely Instagram, and to ensure that the gender of the influencer did not have a significant impact. Having confirmed that virality was key for misinformation, Study 2 then considered how user comments could either enhance or detract from the impact of social media influencer misinformation virality. Table 2 provides a summary of the studies.

Table 2. Overview of Studies.

Study 1a: Virality and information type on TikTok

Study 1a considered the impact of virality and information type, seeking to establish whether misinformation shared by social media influencers is impacted more by virality than factual information is.

Method

Sample and stimuli

A total of 236 Australian participants were initially recruited from Prolific, an online crowdsourcing platform used in prior influencer studies (e.g. Hudders & De Jans, 2022) and randomly assigned to one of the four experimental conditions in the 2 (virality: slow likes vs. fast likes) × 2 (information type: misinformation vs. factual) between-subjects experiment. Six participants did not complete the full survey instrument. According to the ethics approval for this study, these incomplete responses did not imply consent for their data to be used and were therefore excluded. The remaining responses were evaluated based on completion time and the potential presence of common method bias. For example, responses that showed a high level of agreement (five out of five) for intentions to share, yet also indicated a similar score for deception, were excluded due to concerns about the quality and attention given to these responses. After identifying such cases, an additional 30 participants were removed from the dataset. This process resulted in a total of 204 (52.9% female; 40.7% aged 25–34 years) usable responses that were retained for analysis.
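The screening rule described above can be sketched in a few lines. This is a minimal illustration, not the authors' procedure: the `Response` fields, the 1 to 5 scale, the `ceiling` of 5 and the `tolerance` of 1 scale point used to operationalise "a similar score" are all assumptions, and the completion-time check is omitted because no threshold is reported.

```python
from dataclasses import dataclass


@dataclass
class Response:
    participant_id: str
    completed: bool             # finished the full survey instrument
    sharing_intention: float    # assumed 1-5 agreement scale
    perceived_deception: float  # assumed 1-5 agreement scale


def is_usable(r: Response, ceiling: float = 5.0, tolerance: float = 1.0) -> bool:
    """Return True if a response passes both screening steps."""
    # Step 1: incomplete responses do not imply consent and are dropped.
    if not r.completed:
        return False
    # Step 2: maximum sharing intention combined with a similarly high
    # deception score is treated as contradictory (hypothetical rule).
    contradictory = (
        r.sharing_intention >= ceiling
        and abs(r.sharing_intention - r.perceived_deception) <= tolerance
    )
    return not contradictory


def screen(responses: list[Response]) -> list[Response]:
    """Keep only the responses that pass the screening rule."""
    return [r for r in responses if is_usable(r)]


# Fictional rows for illustration only.
sample = [
    Response("p1", True, 5.0, 5.0),   # contradictory pattern
    Response("p2", True, 2.0, 4.0),
    Response("p3", False, 3.0, 3.0),  # incomplete
    Response("p4", True, 5.0, 1.5),
]
kept = screen(sample)
print([r.participant_id for r in kept])  # ['p2', 'p4']
```

Making the rule explicit in this way means the exclusion criteria can be applied identically across studies and reported alongside the data.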

About 47.1% of the sample indicated they were employed full-time, 41.7% indicated their highest completed level of education was a Bachelor’s (undergraduate) degree. A sensitivity power analysis conducted using G*Power revealed that the sample size could detect an effect size of d = 0.25 at the alpha = .05 and power = 0.95 levels.

The study explored simulated live video content on TikTok, with a fictional 20-year-old female influencer (hired actor) presenting either accurate information or health-related misinformation regarding gluten-free diets. All videos were of the same length (60 s) to ensure consistency, and the hired actor maintained consistent positioning, background and placements within each video. The selection of TikTok was driven by its viral, concise video format and engaging nature, often featuring entertaining dances or skits. Despite its popularity among more than one billion global users, especially youth (Ling et al., 2022), TikTok’s inclination towards viral content has led to the propagation of misinformation. The platform’s limited moderation, rooted in upholding free speech principles, contributes to this challenge (Ling et al., 2022).

TikTok users frequently seek health guidance on the platform, despite its non-specialised nature (Song et al., 2021). Given their vulnerability to misinformation, particularly within the social media landscape (Harff et al., 2022), employing a fictional influencer in a controlled experimental environment holds merit. This approach ensures precise control over the influencer’s actions and message content, facilitating consistency across TikTok posts and manipulated variables. Consequently, the method permits hypothesis testing and variable isolation while minimising confounding influences arising from inconsistent influencer-driven content design and delivery. Drawing from influencer marketing research (Hudders & De Jans, 2022; Kay et al., 2020), the use of fictional influencers aligns with established methods, bolstered by prior empirical support.

To operationalise factual versus misinformation for the current study the following was undertaken. The script for the misinformation video was developed based on the key arguments outlined in the bestselling (and later proven to be without scientific basis) book Grain Brain (Perlmutter, 2016). This book outlined that a gluten-free diet is not just beneficial for those afflicted with celiac disease, but for everyone to avoid chronic disease and ensure better health and well-being. The factual script was based largely on the peer-reviewed article that refuted the claims made in Grain Brain (Nash & Slutzky, 2014).

To operationalise virality at two levels, slow likes and fast likes, the speed at which the like counter on the TikTok video increased, and the total it reached, were manipulated. This is consistent with the work of Seo et al. (2018), who also manipulated likes on static stimuli to indicate varying levels of social proof for viral ads, which is theoretically consistent with the current research and its intent for the stimuli.

On TikTok, the like counter refers to the number of times viewers have tapped the heart icon on a video, indicating their appreciation or approval of the content. In the fast likes condition, the number of likes surged rapidly from the outset of the video, creating an illusion of instant and large social proof. In contrast, in the slow likes condition, the like counter increased at a more gradual pace, giving the impression of social proof, but not to the same extent or at the same speed as in the fast likes condition.

Procedure and measurement development and validation

Participants began the experiment by clicking on a link to a Qualtrics survey on Prolific. Participants were then asked questions to collect demographic data before being assigned to view one of the experimental conditions (a TikTok video). Participants were instructed ‘Please watch the following TikTok. After the video has completed, an arrow button will appear which will allow you to proceed. Please ensure you watch the video to the end’. Following viewing of the assigned stimulus, participants were asked questions relating to deception, parasocial relationships and sharing intentions, which were adapted from previously validated scales in the literature. Specifically, deception was measured using the four-item scale of Riquelme et al. (2016) and shown to be reliable and valid in the current study (loadings = 0.886–0.917, α = .955). The measurement for parasocial relationships utilised the eight-item scale adapted from Masuda et al. (2022), which was also established as reliable and valid (loadings = 0.775–0.922, α = .929). For intentions to share, three items were used (‘I would share this post with others on social media’, ‘it is likely I would share this post on social media’ and ‘I would not share this post on social media’ [reverse scored]), with the latter item being dropped due to a low factor loading (0.53) that did not meet the desirable threshold of 0.7 within the literature. Its exclusion resulted in an improvement in reliability (Hair et al., 2010). Once it was dropped, the remaining two items were found to be reliable and valid and were combined to create an index score for sharing intentions (loadings = 0.97–0.98, α = .95). For the covariate of FOMO (fear of missing out), nine scale items were adapted from the study of Zhang et al. (2020) (loadings = 0.70–0.85, α = .91), whereas for attitude homophily four items were adapted from the study of Bu et al. (2022) (loadings = 0.89–0.90, α = .91). These measures and controls for FOMO and attitude homophily were used as both have been shown to be important explanatory factors in social media and influencer-related studies (Zhang et al., 2020).
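The reliability coefficients (α) reported above are Cronbach's alpha values. As a minimal sketch of how such a coefficient is computed from item-level responses, the following Python function may be useful. The function name and the small response matrix are hypothetical and are not drawn from the study data.

```python
import numpy as np


def cronbach_alpha(items):
    """Cronbach's alpha for a respondents-by-items matrix of scale scores."""
    items = np.asarray(items, dtype=float)
    n_items = items.shape[1]
    if n_items < 2:
        raise ValueError("Cronbach's alpha needs at least two items")
    # Sample variance of each item, and of the summed scale score
    item_variances = items.var(axis=0, ddof=1)
    total_variance = items.sum(axis=1).var(ddof=1)
    return (n_items / (n_items - 1)) * (1 - item_variances.sum() / total_variance)


# Hypothetical responses from four participants on a two-item scale
example_responses = [[1, 2], [2, 1], [3, 4], [4, 3]]
print(cronbach_alpha(example_responses))
```

Alpha rises as the items covary more strongly relative to their individual variances, which is why removing a poorly loading item can improve it.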

Results

Virality and sharing intentions

To assess H1, a one-way ANOVA assessing the impact of virality (low vs. high) on sharing intentions was conducted. The ANOVA (F = 4.99, p = .026; ηp2 = .025) demonstrates participants in the high virality condition had significantly higher sharing intentions (M = 1.69, SD = 1.35) than the low virality condition (M = 1.33, SD = 0.87) thus supporting H1.
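For readers wishing to relate an F statistic to the accompanying effect size, partial eta squared can be recovered from F and its degrees of freedom. The sketch below is illustrative only. The function name and the example values are assumptions, as the error degrees of freedom are not reported in the text.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared, SS_effect / (SS_effect + SS_error), expressed via F."""
    if df_effect <= 0 or df_error <= 0:
        raise ValueError("degrees of freedom must be positive")
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# Hypothetical inputs chosen for illustration; not the study's statistics
example = partial_eta_squared(f_value=5.0, df_effect=1, df_error=195)
print(round(example, 3))
```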

Mediation analysis

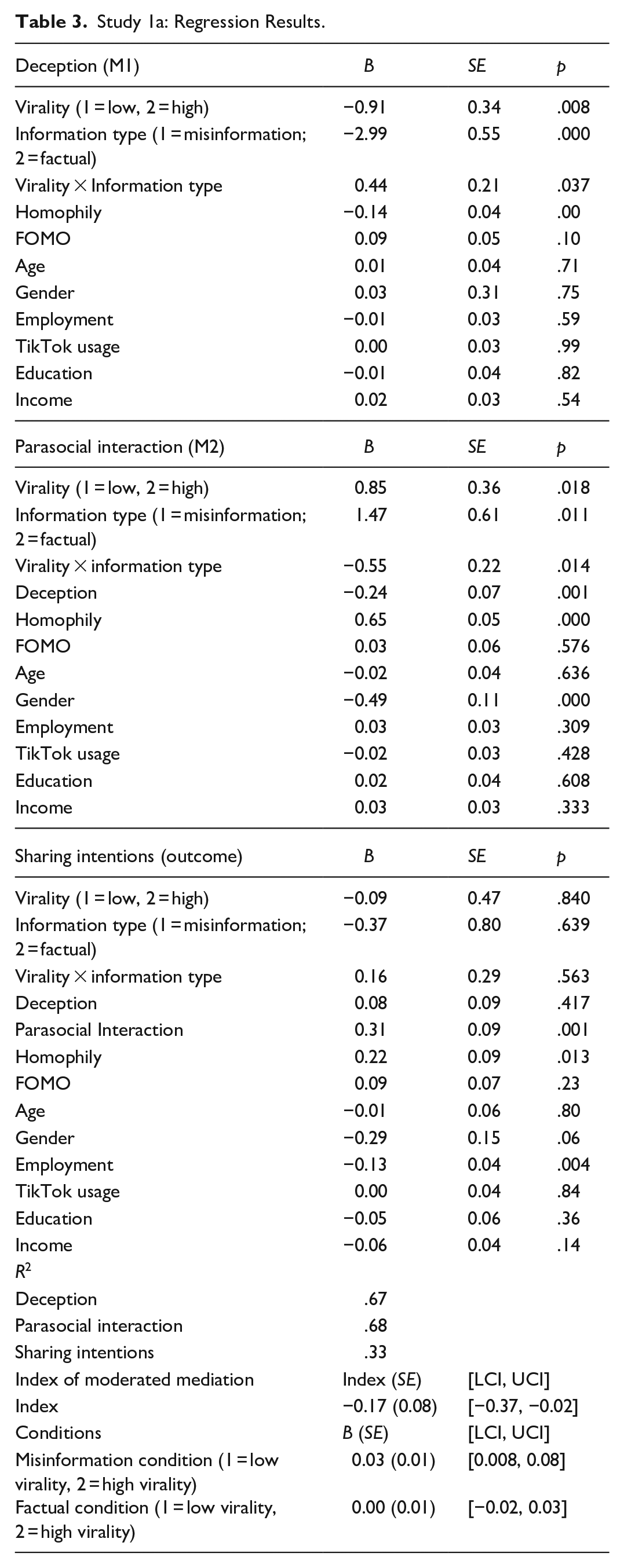

The data was then assessed using the SPSS Process Macro extension, specifically Model 85 with 5,000 bootstrapping samples and a 95% confidence interval. For the analysis, the independent variable was virality (scored as 1 = Low virality, 2 = high virality), the moderator was information type (scored as 1 = misinformation, 2 = factual), the mediators were deception and parasocial interaction and the outcome variable was sharing intentions. To ensure aspects of the sample did not impact the observed relationships, age, gender of the participant, income, employment and usage of TikTok were controlled for as covariates within the analysis.

The results demonstrate a significant index of moderated mediation (index = −0.17, SE = 0.08, 95% CI [−0.37, −0.02]; see Table 3). The indirect effect in the misinformation condition is shown when high virality is present (B = 0.03, SE = 0.01, 95% CI [0.008, 0.08]). Whereas, in the factual condition, the indirect effect is non-significant (B = 0.00, SE = 0.01, 95% CI [−0.02, 0.03]).

Study 1a: Regression Results.

The results demonstrate that low virality is likely to induce significantly higher perceptions of deception (B = −0.91, SE = 0.34, p = .007), whereas high virality is likely to result in significantly lower perceptions of deception. Further, the results demonstrate a significant interaction between virality and information type (B = 0.43, SE = 0.20, t = 2.10, p = .036). Specifically, the results show that for misinformation, virality is significantly likely to alter perceptions of deception; in particular, low virality is more likely to have higher levels of reported deception (B = −0.46, SE = 0.15, t = −3.06, p = .002). For factual information, by contrast, virality is shown to not significantly alter perceptions of deception (B = −0.03, SE = 0.14, t = −0.21, p = .82). Thus, the results support H2, suggesting that the higher the virality, the lower the perceived deception in cases where misinformation is posted, whereas virality has no influence for factual posts. Next, deception is shown to significantly decrease parasocial interactions (B = −0.44, SE = 0.10, t = −4.35, p < .001). The results also show that parasocial interactions significantly increased sharing intentions (B = 0.33, SE = 0.09, t = 3.41, p < .001).

The index of moderated mediation, which indicates the differing strength of the indirect effect of virality on sharing intentions via the mediators of deception and parasocial relationships, is significant, as indicated by the confidence interval not passing through zero (index = −0.03, SE = 0.02, 95% CI [−0.09, −0.003]).

The results show that for the misinformation condition, the indirect effect of virality on sharing intentions via deception and parasocial interactions is significant and strongest for high virality as opposed to low virality (B = 0.03, SE = 0.02, 95% CI [0.009, 0.008]). Whereas, for factual posts, the effect is significantly weaker and non-significant suggesting virality does not change likelihood of sharing intentions (B = 0.00, SE = 0.01, 95% CI [−0.02, 0.03]). This provides further support for H2 and the importance of virality for shaping the impact of misinformation as opposed to factual information posts by influencers. H4 is also supported demonstrating deception and parasocial relationships as primary and secondary appraisals explaining user responses to influencer misinformation posts. The model produced an R2 of .67 for deception, .69 for parasocial interaction, and .33 for sharing intentions.

Rival model

As one of the main theoretical assumptions of the current study is that deception and parasocial interaction function as primary and secondary appraisals, respectively, a rival model was tested to further assess the robustness of this assumption. In this rival model, the ordering of their positions as mediators was reversed. The results of this rival model testing demonstrate that parasocial interaction and deception could not function in the reverse order as primary and secondary appraisals. The index of moderated mediation is non-significant (index = 0.01, SE = 0.01, 95% CI [−0.01, 0.05]), and the direct effect of deception on sharing intentions is also non-significant (B = 0.08, SE = 0.09, p = .41). Thus, this provides further evidence, in line with the supported hypotheses from the original model, of the theorised mediation pathway of deception and parasocial interactions based on primary and secondary appraisals, in accordance with CAT.

Study 1b: Virality and misinformation on Instagram

Sample, stimuli, and procedure

A total of 357 Australian consumers recruited via Prolific participated in a pre-registered experiment. This oversampling of 357, exceeding the planned 300 responses in the pre-registration, was a strategy by the researchers to ensure that there were sufficient high-quality responses for analysis after excluding low-quality data responses. The experiment in Study 1b differed from that of Study 1a in that it was a 2 (virality: high vs. low) × 2 (influencer gender: male vs. female) design. Moreover, in contrast to TikTok being used as the social media context in Study 1a, Study 1b was set in the context of Instagram to examine the generalisability of results observed in the previous study. The inclusion of influencer gender as a manipulation within the experiment was also used to assess and determine (or rule out) whether influencer gender may impact the observed relationships in Study 1a. For consistency with Study 1a, the stimuli related to misinformation regarding gluten-free diets. To operationalise high and low virality, we manipulated the number of likes on the posts (1,183 in the low virality condition and 11, 1183 in the high virality condition), consistent with Kay et al.’s (2020) study of macro and micro influencers.

As per the pre-registration of the study, participants were removed when they exhibited completion times below 3 min and were identified as having potential common method bias issues. After this stage, 10 participants were identified for removal from the dataset, leaving 347 participants’ data for analysis (Mage = 34.01, Female = 55%). A post hoc G*Power test revealed that a sample size of 347 would be adequate to detect an effect size of d = 0.025 at the alpha = .05 and power = .99 levels.

Participants were first presented with a series of questions relating to their use of Instagram and beliefs regarding social media in general. Following this, participants were randomly exposed to one of the four experimental conditions: (1) high virality/male, (2) low virality/male, (3) high virality/female, (4) low virality/female. Participants were instructed to examine the Instagram post for 10 s before proceeding to answer questions related to the stimuli and concepts of interest to the study. The same measures for FOMO (loadings = 0.70–0.80, α = .90), attitude homophily (loadings = 0.86–0.92, α = .90), deception (loadings = 0.77–0.84, α = .83), parasocial interaction (loadings = 0.76–0.86, α = .88) and sharing intention (loadings = 0.98–0.98, α = .96) used in Study 1a were employed in Study 1b.

Results

Virality and sharing intentions

We undertook a one-way ANOVA examining virality (low vs. high) and sharing intentions. The variables of attitude homophily, FOMO, education, income, age, gender, employment and Instagram use were controlled for as covariates, consistent with the testing in Study 1a. In addition, as the current experiment also manipulated the gender of the influencer, this was controlled for to observe and rule out its potential competing influence on explaining the effect virality has upon reported levels of sharing intentions. The ANOVA is significant (F = 7.39, df = 1, p = .006, ηp2 = .025), with participants in the high virality condition reporting significantly higher sharing intentions (M = 1.73, SD = 1.44) than participants in the low virality condition (M = 1.27, SD = 0.68).

We also conducted a two-way ANOVA to further eliminate the impact of the influencer’s gender, controlling for the same remaining factors as in the prior one-way ANOVA. The main effect of gender was non-significant (F = 0.029, df = 1, p = .866, ηp2 = .000), as well as the interaction of gender and virality (F = 2.09, df = 1, p = .149, ηp2 = .007), further suggesting that the influencer’s gender did not offer a significant alternative explanation for the effect of virality on sharing intentions.

Mediation analysis

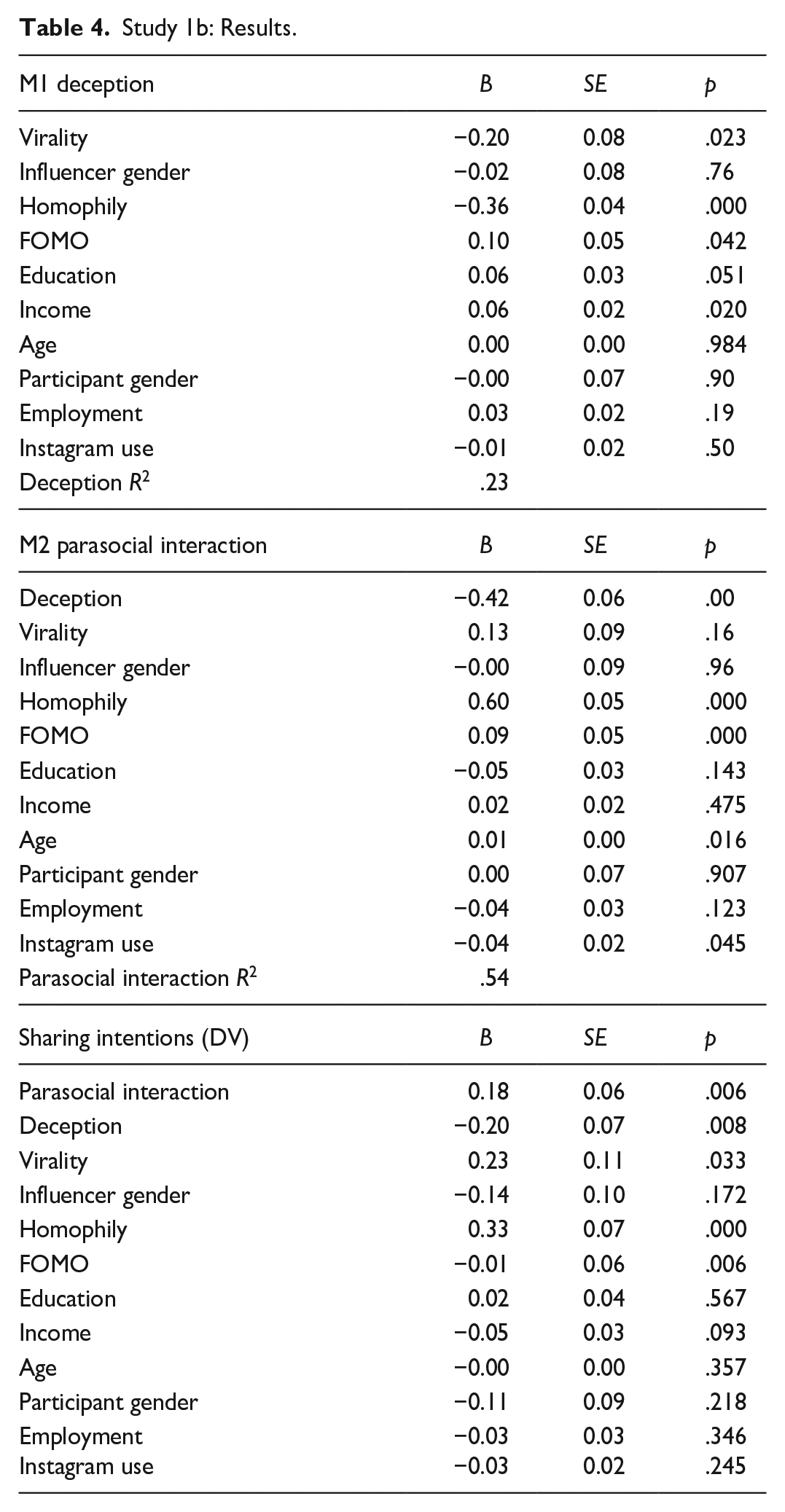

The data were then assessed using Model 6 of the SPSS Process Macro extension, with 5,000 bootstrapping samples and a 95% confidence interval. For the analysis, the independent variable was virality (scored as 1 = low virality, 2 = high virality), the mediators were deception and parasocial interaction and the outcome variable was sharing intentions. Consistent with the ANOVA, the same variables were controlled for as covariates. The indirect effect of virality on sharing intentions via deception and parasocial interaction is significant (B = 0.01, SE = 0.01, 95% CI [0.001, 0.043]). A summary of the results is provided in Table 4.
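The logic of a bootstrapped serial indirect effect can be sketched as follows. This is a minimal illustration in Python on simulated data and is not the Process macro used in the study. The function names, the simulated path values and the omission of covariates are all assumptions made for the example.

```python
import numpy as np


def ols_coefs(predictors, outcome):
    """Least-squares coefficients for outcome ~ predictors, intercept first."""
    design = np.column_stack([np.ones(len(outcome)), predictors])
    coefs, *_ = np.linalg.lstsq(design, outcome, rcond=None)
    return coefs


def serial_indirect(x, m1, m2, y):
    """Serial indirect effect X -> M1 -> M2 -> Y as a product of three paths."""
    a1 = ols_coefs(x, m1)[1]                              # X -> M1
    d21 = ols_coefs(np.column_stack([x, m1]), m2)[2]      # M1 -> M2, given X
    b2 = ols_coefs(np.column_stack([x, m1, m2]), y)[3]    # M2 -> Y, given X and M1
    return a1 * d21 * b2


def bootstrap_ci(x, m1, m2, y, n_boot=5000, seed=0):
    """95% percentile bootstrap interval for the serial indirect effect."""
    rng = np.random.default_rng(seed)
    n = len(x)
    estimates = np.empty(n_boot)
    for i in range(n_boot):
        idx = rng.integers(0, n, size=n)  # resample participants with replacement
        estimates[i] = serial_indirect(x[idx], m1[idx], m2[idx], y[idx])
    lower, upper = np.percentile(estimates, [2.5, 97.5])
    return lower, upper


# Simulated data only: path values below are hypothetical, not study estimates
sim = np.random.default_rng(42)
n_obs = 300
virality = sim.integers(1, 3, size=n_obs).astype(float)   # 1 = low, 2 = high
deception = -1.0 * virality + sim.normal(size=n_obs)
parasocial = -0.8 * deception + sim.normal(size=n_obs)
sharing = 0.6 * parasocial + sim.normal(size=n_obs)

print(serial_indirect(virality, deception, parasocial, sharing))
print(bootstrap_ci(virality, deception, parasocial, sharing, n_boot=1000))
```

An indirect effect is treated as significant when the bootstrap interval excludes zero, which is the criterion applied to the confidence intervals reported here.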

Study 1b: Results.

Study 1: Discussion

The results of Study 1 demonstrate that virality significantly shaped users’ responses to misinformation influencer posts, but not factual posts. Specifically, the results demonstrated that high (low) virality for misinformation posts reduced (increased) perceived deception. Further, the results support the theorising of the proposed model based on CAT, with deception and parasocial relationships being shown to be explanatory mediators (appraisals) of the virality-sharing intentions relationship. The robustness of these results across different social media platforms, as well as gender of influencers, is demonstrated in Study 1b. What was not considered in Study 1a or 1b was the impact of negative and positive comments on the persuasiveness of misinformation posts by influencers (H3); this is considered next in Study 2.

Study 2: Virality and user comments on TikTok

In addition to validating the findings in relation to H1, Study 2 builds on Study 1 by exploring the impact of user comments on influencer misinformation and virality, thus assessing H3. For Study 2, factual information (H2) is not considered, as evidence from Study 1a suggests sharing intentions do not vary based upon virality with such information.

Method

Sample and stimuli

The same procedure as Study 1a was used for Study 2 with 244 Australian participants initially recruited from Prolific. A total of 11 of these participants did not complete all survey measures and, as such, implied consent could not be determined; their data were therefore not used. As in Study 1, the data were then assessed for speed of response and potential common method bias, particularly where responses were illogical (high scores for both deception and sharing intentions). About 10 further responses were then excluded from analysis, resulting in a total of 224 usable responses (38.6% of the sample identified as being between the ages of 25–34 years, 50% identified as female). A sensitivity power analysis conducted using G*Power revealed that the sample size could detect a moderate effect size of d = 0.25 at the alpha = 0.05 and power = 0.96 levels.

In relation to the stimuli, the same female influencer as per Study 1 was used as well as the same misinformation topic and justification provided for the misinformation. The experimental design of the current study focussed solely on misinformation and was a 2 (low virality vs. high virality) × 2 (critical vs. supportive user comment) design. The same measurement scales for deception (loadings = 0.70–0.80, α = .75), parasocial interaction (loadings = 0.70–0.90, α = .89), sharing intentions (loadings = 0.90–0.91, α = .77), attitude homophily (loadings = 0.88–0.93, α = .90) and FOMO (loadings = 0.76–0.86, α = .93) used in Study 1 were again used for Study 2 and were again established as reliable and valid.

Results

Virality, comment type and sharing intentions

A two-way ANOVA assessing the impact of virality (low vs. high) and user comments (supportive vs. critical) on sharing intentions was conducted. The main effect for virality is significant (F = 7.83, df = 1, p = .006; ηp2 = .035), demonstrating that participants in the high virality condition had significantly higher sharing intentions (M = 1.40, SD = 1.00) than those in the low virality condition (M = 1.17, SD = 0.68), thus providing evidence supporting H1 in line with the prior results of Study 1. The interaction effect between virality and user comments is non-significant (F = 2.49, df = 1, p = .190, ηp2 = .011), thus not providing evidence to support H3. However, the moderating effects of comment type are further examined in the mediation analysis to determine whether comment type influences other aspects of the conceptual model. For example, a moderated indirect effect relationship may exist (Hair et al., 2021), where the indirect effect (but not the direct effect) is significant only under specific comment types.

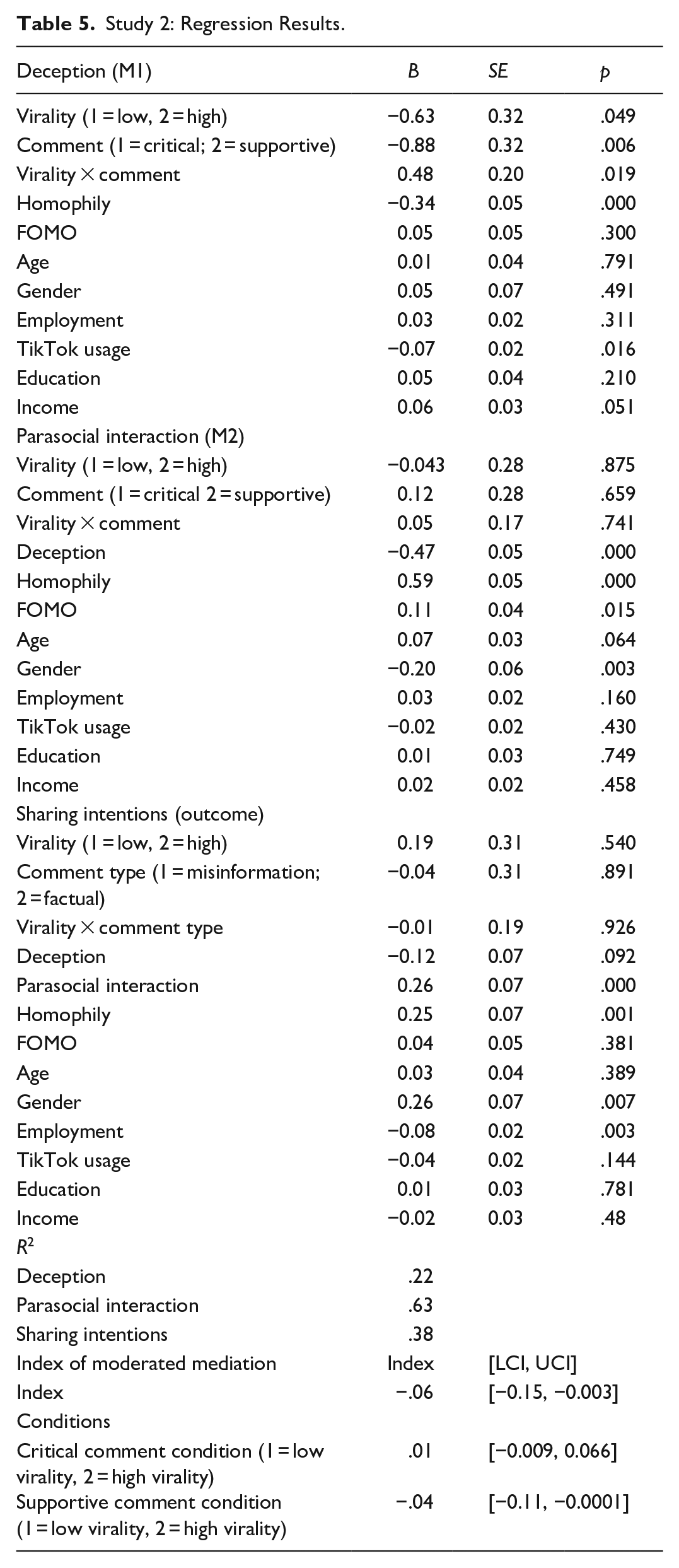

Mediation analysis

The results demonstrate that low virality was likely to produce significantly higher levels of perceived deception than high virality (B = −0.63, SE = 0.32, t = −1.97, p = .049), confirming the results of Study 1. The results also show that a critical comment from a social media user, as opposed to a supportive comment, was likely to lead to higher levels of perceived deception (B = −0.88, SE = 0.32, t = −2.73, p = .006). A significant interaction effect is observed between virality and user comments (B = 0.48, SE = 0.20, t = 2.36, p = .019). Critical comments significantly enhance deception for high virality posts (B = 0.26, SE = 0.13, p = .047), which, while not directly testing H3, provides some evidence of the impact critical comments have upon high virality misinformation. In contrast, supportive comments produce no significant difference in levels of deception across the virality conditions (B = −0.15, SE = 0.14, p = .276). Deception decreases parasocial interaction (B = −0.47, SE = 0.05, t = 8.07, p < .001). Parasocial interaction is shown to increase sharing intentions (B = 0.26, SE = 0.07, t = 3.44, p < .001).

The index of moderated mediation is significant (index = −0.06, SE = 0.04, 95% CI [−0.15, −0.003]). Thus, while the ANOVA results presented previously show that a supportive comment did not directly enhance sharing intentions, the results of the moderated mediation provide evidence that this occurs indirectly via lower deception and higher parasocial interaction. This also evidences a form of mediation which Hair et al. (2021, p. 142) describe as ‘indirect-only mediation’, with this indirect effect being stronger only under particular conditions of the moderator. Specifically, supportive comments enhance the indirect effect of low-virality posts on sharing intentions (B = −0.04, SE = 0.03, 95% CI [−0.11, −0.0001]). In the case of critical comments, a non-significant indirect effect of the virality of the post (B = 0.01, SE = 0.01, 95% CI [−0.0009, 0.064]) is observed. The model produced an R2 of .21 for deception, .63 for parasocial interaction and .34 for sharing intentions (see Table 5).
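To make the index of moderated mediation more concrete, it can be approximated from the rounded coefficients reported above. The sketch below assumes the index for the serial pathway is the product of the virality × comment interaction on deception, the deception to parasocial interaction path and the parasocial interaction to sharing intentions path. The text does not state this formula, so both the formula and the function name should be read as assumptions.

```python
def serial_index_of_moderated_mediation(interaction_on_m1, m1_to_m2, m2_to_y):
    """Product of the first-stage interaction and the two downstream paths."""
    return interaction_on_m1 * m1_to_m2 * m2_to_y


# Rounded Study 2 coefficients as reported in the text
index = serial_index_of_moderated_mediation(
    interaction_on_m1=0.48,   # virality x comment type -> deception
    m1_to_m2=-0.47,           # deception -> parasocial interaction
    m2_to_y=0.26,             # parasocial interaction -> sharing intentions
)
print(round(index, 2))
```

Because the inputs are rounded, the product is only an approximation of the reported index, and the bootstrapped confidence interval cannot be recovered this way.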

Study 2: Regression Results.

Study 2: Discussion

The findings from Study 2 indicate that critical comments can counteract high virality misinformation posts by heightening their level of deception. In comparison, supportive comments in low virality conditions are found to reduce their deception and, through mediation analysis, indirectly influence sharing intentions. Therefore, the results seem to indicate that an escalation in social proof, like combining high virality and a supportive comment, does not inevitably result in a higher probability of misinformation being shared. Rather, a supportive comment may only elevate the likelihood of sharing misinformation when social proof is low, as evidenced in the low virality condition.

General discussion

This research sought to understand the impact of virality on the spread of misinformation by social media influencers and likely further sharing by users, how user comments impacted this relationship and the mediating roles of deception and parasocial interaction. The implications of the findings for theory and practice based upon the prior theorising in the study as well as the empirical results are subsequently discussed.

Theoretical implications

The current research has illustrated how integrating social influence theory (Kelman, 1974) and CAT (Lazarus & Folkman, 1984) provides a comprehensive framework for understanding the intricacies of misinformation dissemination by influencers and subsequent sharing behaviours among consumers. Exploring the interaction between these theories unveils valuable insights into the circumstances under which misinformation or influencers become persuasive (virality and comments), and the cognitive responses (deception and parasocial interaction) that elucidate why this happens, thereby offering a more holistic understanding of the phenomenon.

The current research is one of the first to theoretically and empirically explore how virality affects social media influencers and misinformation, addressing the need for greater understanding of the darker aspects of social media influencers (Hudders & Lou, 2023). While previous studies have highlighted the persistent spread of health and well-being misinformation (Suarez-Lledo & Alvarez-Galvez, 2021; Wang et al., 2019), few have delved into the involvement of social media influencers in this phenomenon or why such content becomes ‘viral’ and continues to be shared (recall Table 1). Consistent with social influence theory, this research shows how increases in virality reduce perceptions of deception regarding social media influencer misinformation and subsequently increase intentions to share by users. Importantly, the study provides evidence that this impact of virality is specific to misinformation posts rather than factual ones. A significant theoretical implication of this finding for misinformation and social media influencer research is how social influence theory elucidates the self-perpetuation of social media influencers’ misinformation virality as users continue to share the information.

This study examines how user comments impact influencer posts and misinformation spread, aligning with social influence theory. While influencer response strategies have been explored previously (Xiao, 2023), limited literature exists on how user comments, whether positive or negative, affect influencer content persuasiveness. Research on comment effects mostly extends beyond misinformation and influencer contexts (Colliander, 2019; Naab et al., 2020). This study pioneers investigating both positive and negative user responses to influencer-driven misinformation and their impact on sharing intentions. By revealing how comment strategies affect user evaluations and sharing tendencies, this study contributes to understanding influencer response strategies (Xiao, 2023). Positive comments foster engagement with influencer-driven misinformation, while negative comments heighten perceptions of deception. However, the influence of user comments varies under different virality conditions. The findings suggest that when one social influence aspect is present (high virality or a supportive comment), misinformation is perceived as less deceptive and potentially shared. Additionally, a ceiling effect might exist where additional social influence (combining high virality and supportive comments) does not amplify misinformation impact or sharing likelihood. Moreover, pairing high virality with a critical comment seems to diminish misinformation effects, heightening its perceived deception. This research therefore offers novel insights into social influence intricacies in misinformation and social media, showing how user interactions modify misinformation persuasiveness.

This research contributes by exploring the mediating roles of deception and parasocial interaction. Drawing on CAT, it elucidates the psychological process of primary and secondary appraisals, revealing why users share misinformation posts by social media influencers. While prior studies highlighted deception (Ketron, 2016; Riquelme et al., 2016) and parasocial interaction (Shan et al., 2020; Yuan & Lou, 2020), few considered both factors together in examining social media influencers and misinformation. This study proposes and validates deception and parasocial interaction as primary and secondary appraisals, respectively, made by users exposed to an influencer’s post. It confirms and extends the validity of CAT in understanding appraisals, stressing the interplay between primary and secondary appraisals in grasping users’ intentions after exposure to misinformation or influencer posts. Thus, future research should explore users’ primary and secondary appraisals within CAT to comprehensively understand the psychological mechanisms behind sharing health misinformation by social media influencers.

Practical implications

The study underscores critical practical implications for managing the proliferation of misinformation, particularly when wielded by social media influencers, and highlights the roles of marketers and policy makers in this context. To effectively counter the dissemination of false information, it is imperative to intervene during the initial stages of virality. The research demonstrates that once a post gains significant virality, it becomes increasingly difficult to raise questions or doubts, making early action paramount.

This study underscores the critical necessity for the swift deployment of response teams skilled in promptly identifying misinformation. While social media platforms already have such teams in place, there is evidence of external entities and partnerships, such as the RMIT and ABC partnership (https://www.rmit.edu.au/about/schools-colleges/media-and-communication/research/public-communication-research-and-advisory-services/projects/fact-check), actively engaged in fact-checking political claims, public figures and advocacy groups, due to the overwhelming prevalence of misinformation. These external teams play a pivotal role in preventing the escalation of misinformation and should receive adequate funding and support. By allocating resources to enable these teams to identify false claims early and respond promptly, they can effectively mitigate the potential damage caused by viral misinformation. This research emphasises that the effectiveness of these teams lies in their ability to act before misinformation reaches a level of virality that becomes nearly impossible to curb.

Considering these findings, it is imperative for marketers and policy makers to adopt a proactive and preventive approach. Educational campaigns with slogans such as ‘Question It, Verify It: Empowering Minds, Debunking Lies!’ aimed at the community should actively encourage these essential aspects. Such campaigns and the crafting of their slogans and messages play a vital role in fostering community self-regulation of misinformation and promoting critical thinking among individuals and collectively to facilitate calling out this anti-social behaviour. By posing challenging and critical questions promptly when misinformation surfaces, individuals can unite as a community to help effectively regulate misinformation and prevent its rapid spread.

This research highlights consumers’ susceptibility to sharing misinformation from social media influencers, amplifying its potential spread. It stresses the importance of educating consumers on responsible sharing practices. Marketers and policymakers have crucial roles in this effort, urging individuals not only to be critical consumers but also responsible disseminators of information. Promoting a culture of cautious sharing is vital. Slogans like ‘Think Twice, Share Nice: Be Sure Before You Share’ can empower consumers to critically assess their sharing behaviour. Targeting the act of sharing emerges as a significant strategy. While consumers may believe or act on misinformation, they may refrain from sharing it if they are discerning. This approach can effectively curb misinformation spread, fostering a more informed and responsible online community.

Limitations and future research directions

While this research exhibits notable strengths, several limitations also present opportunities for future investigation. The primary focus of this research on health misinformation, given its substantial societal and economic implications, invites the need for exploring whether the observed result patterns can be replicated across other pertinent subjects vulnerable to misinformation, such as politics (propaganda), finance (investment advice) and science and technology (climate change). Investigating the significance of misinformation virality across these contexts becomes pivotal, considering variations in topic complexities, emotional engagement and levels of understanding that could influence the spread of misinformation.

This study marks an initial endeavour into examining influencer-driven misinformation diffusion on social media. The insights derived herein hold potential for extending analogous misinformation patterns to different social media platforms. Acknowledging the distinct user demographics, profiles, functionalities and features inherent to each platform, it becomes imperative to assess how these factors might validate or modify the current findings. Thus, investigating misinformation propagation across diverse platforms can offer valuable insights, unveiling commonalities and disparities in influencer-mediated misinformation dissemination strategies. Another intriguing avenue for future exploration entails considering a wider range of comments which users may post on a social media influencer misinformation post. The current study considered supportive and critical comments in line with prior literature (Colliander, 2019; Naab et al., 2020), but it is also likely that in the marketplace other types of comments are posted or exist. For instance, future studies could consider neutral comments or even the integration of different emotion-laden language into comments to offer further insights.

Although the current study has furnished evidence to uphold its hypotheses, exhibiting strong internal validity and ability to replicate across social media platforms (Instagram and TikTok) and influencer models, its external validity could be augmented by future research. Subsequent studies could utilise algorithms to identify influencers disseminating misinformation and who have fostered enduring relationships with their followers. Investigating the volume of virality and the types and combinations of comments in such real-world settings would extend the current research and offer insights into how these dynamics precisely manifest in real-world interactions.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was funded by the YES Research Program 0980027715, Queensland Department of Environment and Science, Youth Engaged in Sustainability Research Program.