Abstract

Background

Several patient-reported outcome measures are available to monitor headache impact, but are they reliable in real-life clinical practice?

Methods

Two patient-reported outcome measures with identical questions (HALT-90 and MIDAS) were administered together at each clinical visit to a series of patients treated with monoclonal antibodies for migraine, and intra-individual agreement was evaluated using intraclass correlation coefficients (ICCs).

Results

Our sample included 92 patients, 92.4% female, 45 years old on average. Moderate (0.50 to 0.75) and even poor (<0.50) ICCs were observed in at least one evaluation for all but the first item of these patient-reported outcome measures. Over time, missing data became more frequent and no learning effect was detected.

Discussion

We observed intra-individual variation in reliability when answering patient-reported outcome measures, which persisted across repeated applications, and a decrease in the motivation to respond. Both findings should alert clinicians to these additional challenges in real-life clinical practice.

Introduction

Patient-reported outcome measures (PROMs) (1) capture data on relevant life and health goals, such as physical/social functionality, quality of life or symptom burden, as reported directly by the patient and without interpretation by others. PROMs are crucial in health and technology assessment (1) and are used to value specific outcomes in clinical trial protocols (2). PROM development is complex: it is based on a conceptual framework of a medical condition, involves patients and stakeholders, and considers all practicalities (cultural/language adaptation, data collection method, administration, size, scoring, recall period, weighting of items/domains, burden etc.). Psychometric assessment of the resulting instrument is mandatory (2).

Health care providers are then asked to integrate PROMs into everyday workflow, and clinicians are challenged to apply them in the evaluation of therapeutic interventions, especially those aiming for symptom and/or functionality improvement, as in headache disorders. PROMs were recently included in guidelines to support continuation of monoclonal antibody treatment for migraine (3).

PROMs are valuable yet bewildering instruments because, in clinical practice, they are often applied inadequately, out of context or in non-validated populations (4). In headache, most available PROMs lack a clear focus and robust psychometric evaluation, and the majority have not demonstrated the ability to detect real change over time, which is essential to real-life application (5). Any headache doctor is aware of the difficulty of obtaining relevant data for diagnosis and impact evaluation during clinical interviews, as patients' perception is influenced by recall bias towards severe or recently occurring attacks (6). It is very difficult for patients to sum up relevant information when describing recurrent, fluctuating, variable and unpredictable events such as those of primary headaches. Diagnostic and impact calendars are a recommended strategy to overcome some of these limitations in clinical practice (7) and trials (8), but even those are frequently found to be incomplete or inconsistent. So, can we trust PROMs to be reliable for headache impact monitoring in real-life clinical practice?

Methods

A real-life evaluation of patients treated with monoclonal antibodies for migraine is routinely performed in our center every 3 months and includes self-completion of a fixed sequence of eight short PROMs. Most are headache related (HALT-30, HALT-90, HURT and MIDAS), but we also include a global impression of change, an anxiety questionnaire and a global quality of life questionnaire. We ask patients to complete the PROMs on site after the visit, but they may complete them online if they prefer. The online link is also sent by email after video consultations.

HALT-90 (9) (the second PROM presented) and MIDAS (10) (the seventh) are two PROMs that ask exactly the same questions with slightly different wording. We evaluated intra-individual agreement between the two instruments in four sequential evaluations in all patients treated for at least 3 months in our center, by calculating intraclass correlation coefficients (ICCs) with a two-way mixed-effects model (absolute agreement type) (11) for each item and for the total score. We expected to find good (0.75 to 0.90) to excellent (>0.90) agreement and also a learning effect from the first to the fourth evaluation.
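As an illustration of the agreement measure described above, the following is a minimal sketch of an absolute-agreement ICC computed from a two-way ANOVA decomposition, together with the interpretation bands used in this report. It is not the authors' analysis code: the single-measure form of the coefficient is an assumption (the text does not state whether single or average measures were used), the function names are hypothetical, and the example answers are invented for illustration only.

```python
from typing import Sequence


def icc_absolute_agreement(ratings: Sequence[Sequence[float]]) -> float:
    """Single-measure, absolute-agreement ICC from two-way ANOVA mean squares.

    ratings[i][j] is the answer given by patient i on instrument j
    (for example, column 0 = a HALT-90 item, column 1 = the matching MIDAS item).
    Assumption: single-measure form; the source does not specify this.
    """
    n = len(ratings)          # number of patients with complete pairs
    k = len(ratings[0])       # number of instruments being compared
    if n < 2 or k < 2:
        raise ValueError("need at least 2 patients and 2 instruments")
    if any(len(row) != k for row in ratings):
        raise ValueError("every patient needs one answer per instrument")

    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]

    # Sums of squares for patients (rows), instruments (columns) and residual.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    # Absolute agreement: systematic differences between instruments
    # (ms_cols) count against agreement, unlike a consistency-type ICC.
    denom = ms_rows + (k - 1) * ms_error + (k / n) * (ms_cols - ms_error)
    if denom == 0:
        raise ValueError("ICC is undefined when the answers have no variance")
    return (ms_rows - ms_error) / denom


def classify_icc(icc: float) -> str:
    """Map an ICC to the bands used in the text.

    The text gives 0.75 as the upper limit of 'moderate' and the lower limit
    of 'good'; assigning exactly 0.75 to 'good' is an assumption.
    """
    if icc < 0.50:
        return "poor"
    if icc < 0.75:
        return "moderate"
    if icc <= 0.90:
        return "good"
    return "excellent"


# Invented example: days reported on one item by five hypothetical patients,
# first on HALT-90, then on MIDAS, at the same visit.
example_pairs = [[3, 3], [0, 1], [10, 7], [5, 5], [2, 4]]
example_icc = icc_absolute_agreement(example_pairs)
print(round(example_icc, 2), classify_icc(example_icc))
```

The absolute-agreement form is the relevant one here because the two instruments ask the same question, so a patient who consistently reports two more days on one form than on the other should lower the coefficient rather than be treated as perfectly consistent.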

Results

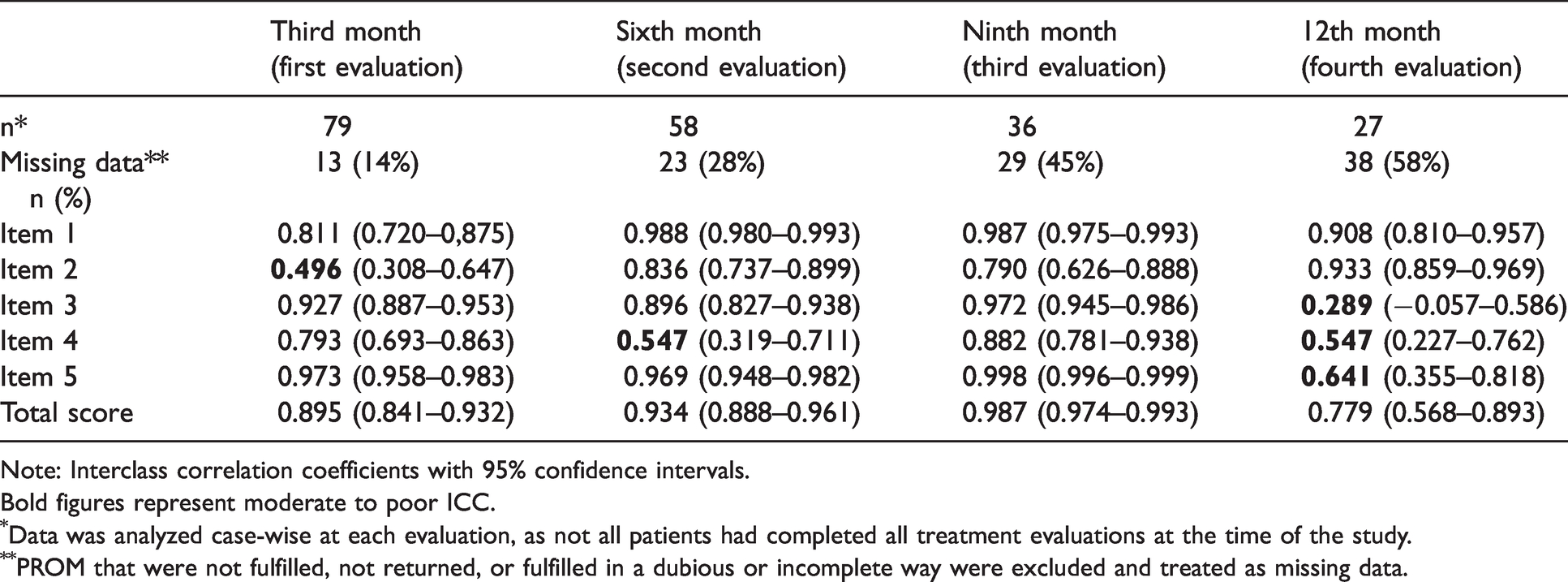

Ninety-two patients were included, 85 (92.4%) female, with a mean age of 45.0 ± 10.7 years, ranging from 19 to 69 years. Forty patients (43.5%) had chronic migraine and 48 (52.2%) had medication overuse; 50 (54.3%) were treated with erenumab and the remaining 42 with fremanezumab. ICCs were mostly excellent or good, yet some were moderate (0.50 to 0.75) or even poor (<0.50), and the learning effect was unclear. Missing PROM data increased from 14% to 58% from the first to the last evaluation (Table 1).

Table 1. Intraclass correlation coefficients between HALT-90 and MIDAS items and total score.

Note: Intraclass correlation coefficients (ICCs) with 95% confidence intervals. Bold figures represent moderate to poor ICCs.

Data were analyzed case-wise at each evaluation, as not all patients had completed all treatment evaluations at the time of the study.

PROMs that were not completed, not returned, or completed in a dubious or partial way were excluded and treated as missing data.

Item 4 had moderate reliability in two evaluations, which may point to interpretation issues arising from the different wordings (9,10). In the fourth evaluation, items 3, 4 and 5 had poor or moderate reliability and wider confidence intervals, despite the improvement expected from a learning effect, probably because of the smaller sample at that evaluation. The lowest reliability was observed for item 3 (days of household work lost) in the last evaluation.

Discussion

Contrary to what could be expected, the data presented suggest that patients may answer differently when prompted with the same question a few minutes apart as part of PROM evaluations.

This brief analysis was not designed to explore the reasons for the variation in reliability between these identical questionnaires in repeated applications, nor does it intend to lessen their value as PROMs. We aim to expose limitations inherent to any PROM application, such as interpretation difficulties, educational status, personality traits, motivation, secondary gain (e.g. access to treatment), ability to concentrate, pain status and available time. These factors influence the quality of the data in real life, where the environment and patient selection differ from the clinical trial scenario. Clinicians have to assume that their view of patient data in real life is biased. The marked increase in missing PROM data from the first to the fourth evaluation may reflect decaying motivation and commitment to complete the PROMs as time goes by, something that is also commonly seen with the use of diaries and calendars in clinics. In this study, it might have been amplified by the COVID-19 pandemic, which increased the fear of staying at the hospital longer and prompted an increase in video consultations. As a result, many patients had to actively check their email for the link to complete the PROMs online after the visit, something that is easy to postpone and then forget.

Implementing PROMs in real life has additional challenges, such as choosing the ideal set of PROMs to provide useful information while keeping the evaluation short and not bothersome, and ensuring that patients complete them adequately, which may require the help of an assistant or nurse and result in extra costs. From a more conceptual perspective, it is very challenging to define clinically meaningful change in PROM results, and even to comprehensively capture patients' experiences in disorders that involve a multitude of concepts, as was recently shown in a systematic review (12). Also, accepting PROMs as endpoints may be problematic because achieving one goal does not mean that the patient's problem is solved: on reaching a milestone, patients' priorities in health goals change, and another PROM may be needed to capture them (13). All these constraints limit the use and utility of PROMs, yet relying only on headache days is clearly insufficient to monitor headache and migraine treatment effects. Composite scores have been suggested to help in clinical decision making (14), yet no consensus on monitoring is in place. Patient empowerment and involvement in decision making are essential. However, although PROMs might be good instruments for group evaluations, one may question whether their reliability is sufficient for use as decision-making instruments at an individual level in real-life clinical practice, especially in complex disorders without biomarkers, such as headache disorders.

Clinical implications

Clinical monitoring of real-life migraine patients with PROMs is less reliable than expected. PROMs are pivotal in outcome evaluations of headache disorders yet show limitations when used in real-life clinical settings.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Author contributions

RGG was responsible for study design, data collection, data analysis, and manuscript drafting and revision. AGO was responsible for statistical advice and manuscript revision.

Availability of data and material

Additional data will be made available to interested researchers upon request.

The manuscript has not previously been posted on any preprint server.