Abstract

Background:

Continuing education is intended to facilitate clinicians’ skills and knowledge in areas of practice, such as administration and interpretation of outcome measures.

Objective:

To evaluate the long-term effect of continuing education on prosthetists’ confidence in administering outcome measures and their perceptions of outcomes measurement in clinical practice.

Design:

Pretest–posttest survey methods.

Methods:

A total of 66 prosthetists were surveyed before, immediately after, and 1–2 years after outcomes measurement education and training. Prosthetists were grouped as routine or non-routine outcome measures users, based on experience reported prior to training.

Results:

On average, prosthetists were just as confident administering measures 1–2 years after continuing education as they were immediately after continuing education. In all, 20% of prosthetists, initially classified as non-routine users, were subsequently classified as routine users at follow-up. Routine and non-routine users’ opinions differed on whether outcome measures contributed to efficient patient evaluations (79.3% and 32.4%, respectively). Both routine and non-routine users reported challenges integrating outcome measures into normal clinical routines (20.7% and 45.9%, respectively).

Conclusion:

Continuing education had a long-term impact on prosthetists’ confidence in administering outcome measures and may influence their clinical practices. However, remaining barriers to using standardized measures need to be addressed to keep practitioners current with evolving practice expectations.

Clinical relevance

Continuing education (CE) had a significant long-term impact on prosthetists’ confidence in administering outcome measures and influenced their clinical practices. In all, approximately 20% of prosthetists, who previously were non-routine outcome measure users, became routine users after CE. There remains a need to develop strategies to integrate outcome measurement into routine clinical practice.

Background

The use of standardized outcome measures has increasingly become an expectation in the provision of clinical care.1–3 Nevertheless, professionals across a range of health disciplines have reported challenges integrating outcomes measurement into their daily routines.2,4–8 A lack of fundamental knowledge regarding measure selection, administration, and/or interpretation has been often cited as a primary barrier to clinical use of outcome measures.2,9–11 Education specific to outcomes measurement has been advocated as a means to increase practitioners’ familiarity with available measures and promote their use in clinical care.2,3,11–14 However, outcomes measurement training has only recently become an educational requirement for Orthotics and Prosthetics (O&P). For O&P practitioners whose professional training did not include outcomes measurement, continuing education (CE) courses are a way to acquire the knowledge and skills necessary to use outcome measures in clinical practice. 3

CE is generally acknowledged as an effective means to alter practitioners’ attitudes, knowledge, and skills.15–17 For example, CE focused on developing health professionals’ research skills was shown to increase experience and confidence in performing activities like finding literature, reviewing literature, and analyzing study results. 18 CE therefore seems well suited to addressing both the philosophical and practical barriers to use of outcome measures in clinical care.19–21 Despite the potential for CE to positively affect outcomes measurement practices in O&P, little evidence exists to demonstrate that focused outcomes measurement education and training can facilitate practitioners’ use of standardized outcome measures.

In a prior study, 5 we developed a mixed-format CE course to familiarize prosthetists with administration of two physical performance measures that have been advocated for use with prosthetic patients. Prosthetists were trained to administer the measures using didactic and interactive teaching strategies, provided with videos and written materials for later reference, and encouraged to incorporate the new knowledge into their daily clinical practices. Those who attended the course were surveyed about their confidence in administering the measures before and immediately after training. Results showed that prosthetists’ confidence improved significantly with the provided CE. 5 While the observed short-term effects of this course are encouraging, the long-term effectiveness of outcomes measurement CE (i.e. whether focused training can lead to increased confidence and use of measures) is unknown.

The primary aim of this research was to determine whether practitioners’ confidence in administering outcome measures was retained long-term after CE. Second, we wanted to assess if practitioners’ use of outcome measures had changed, relative to before they took the CE course. Finally, we wanted to assess participants’ perceptions of outcomes measurement, so as to identify barriers that may still exist after the need for knowledge is addressed through CE. We hypothesized that practitioners who routinely used the selected outcome measures after CE would maintain confidence in their ability to administer them. We also hypothesized that prosthetists would use the selected performance measures with patients more often after the CE course, as compared to before training.

Methods

Prosthetists who participated in outcomes measurement courses 5 were surveyed 1–2 years after training to assess their perceived ability to administer performance-based outcome measures included in the CE course. Furthermore, prosthetists were asked about their perceptions of the benefits and barriers to outcomes measurement. All 79 prosthetists who completed a CE course in the prior study 5 were sent the follow-up survey. All study procedures were reviewed and approved by a University of Washington Institutional Review Board.

Training

Details regarding recruitment, training, and evaluation procedures were previously reported. 5 Briefly, CE courses were designed to educate prosthetists on the rationale, administration, and interpretation of two performance-based measures: the Timed Up and Go (TUG) 22 and the Amputee Mobility Predictor (AMP). 23 The 3-h in-person course included didactic (i.e. presentation, written instructions, video, and discussion), demonstration, and role-play instructional techniques. Didactic instruction included basic outcomes measurement theory, rationale for using each measure with specific patients, and strategies for administering measures in clinical settings. Prosthetists practiced setting up, assessing, scoring, and interpreting the measures, while study investigators provided verbal and tactile feedback. For example, investigators demonstrated with prosthetists how to position the patient for testing and apply the appropriate amount of force to the patient’s sternum in the nudge test (item 10 on the AMP). Each prosthetist also received written instructions and videos to allow them additional exposure to the content after the course. 24

Surveys

Custom surveys were developed by the investigators to evaluate prosthetists’ perceptions regarding outcome measures at three points: immediately before training (pre-training, Online Appendix 1), immediately after training (post-training, Online Appendix 2), and 1–2 years after training (follow-up, Online Appendix 3). All three surveys asked, “How confident are you in your current ability to administer the TUG or AMP?” and “Please estimate the time it currently takes you to administer the TUG or AMP.” The follow-up survey asked practitioners to report how often they used the TUG, AMP, or other measures with patients. The survey also asked practitioners about their perceptions of practical and philosophical issues related to use of outcome measures in clinical practice (e.g. perceived benefits and barriers). Benefits and barriers listed in the survey were similar to surveys provided to health professionals in prior studies.2,6 Pre- and post-training surveys were administered in person before and immediately after the course. Follow-up surveys were administered by paper or computer, according to each prosthetist’s preference. Paper surveys were mailed with a prepaid return envelope. Computer surveys were administered using open-source WebQ software. Non-respondents were contacted by email and/or phone to complete the follow-up survey.

Analysis

Prosthetists’ responses from the follow-up survey were compared to their responses from the pre- and post-training surveys to assess the long-term effectiveness of the outcomes measurement CE. Data were analyzed using SAS 9.3 (SAS Institute, Cary, NC) to generate descriptive results and PASW Statistics v18.0 (SPSS, Chicago, IL) to perform statistical comparisons between groups. As in our prior study, 5 prosthetists were classified and grouped as “routine users” and “non-routine users” of standardized outcome measures. Routine users were defined as prosthetists who responded that they “often” or “always” used the TUG, AMP, or other outcome measure with their patients, while non-routine users were those who responded “never,” “rarely,” or “sometimes.” Differences in demographic and professional characteristics between prosthetists who completed the CE course in the prior study and the subsample included in this study were assessed with one-sample Wilcoxon signed-rank tests and one-sample binomial or chi-square goodness-of-fit tests. Differences between routine and non-routine users in this study were assessed with Wilcoxon Mann–Whitney and two-sided Fisher’s exact tests. Differences between prosthetists’ post-training and follow-up level of confidence and administration time were evaluated using the Wilcoxon signed-rank test. The threshold for statistical significance was set at α = .05 and adjustments were made for multiple comparisons, as appropriate. Descriptive statistics were used to compare routine and non-routine users’ perspectives about the benefits and barriers to using outcome measures in clinical practice.
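The grouping rule and one of the between-group tests named above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the SAS or PASW procedures used in the study: the function names `classify_user` and `fisher_exact_two_sided` are hypothetical, and the two-sided p-value uses the common convention of summing the probabilities of all tables that are no more likely than the observed one.

```python
from math import comb

# Response categories as described in the text.
ROUTINE_RESPONSES = {"often", "always"}
NON_ROUTINE_RESPONSES = {"never", "rarely", "sometimes"}


def classify_user(response: str) -> str:
    """Map a frequency-of-use response to 'routine' or 'non-routine'."""
    answer = response.strip().lower()
    if answer in ROUTINE_RESPONSES:
        return "routine"
    if answer in NON_ROUTINE_RESPONSES:
        return "non-routine"
    raise ValueError(f"unrecognized response: {response!r}")


def fisher_exact_two_sided(a: int, b: int, c: int, d: int) -> float:
    """Two-sided Fisher's exact p-value for the 2x2 table [[a, b], [c, d]].

    Sums the hypergeometric probabilities of every table with the same
    margins whose probability does not exceed that of the observed table.
    """
    row1, row2 = a + b, c + d
    col1 = a + c
    denom = comb(row1 + row2, col1)

    def prob(x: int) -> float:
        # Probability that the top-left cell equals x, given fixed margins.
        return comb(row1, x) * comb(row2, col1 - x) / denom

    observed = prob(a)
    lowest = max(0, col1 - row2)
    highest = min(row1, col1)
    # A small relative tolerance guards against floating-point ties.
    p_value = sum(
        p for p in (prob(x) for x in range(lowest, highest + 1))
        if p <= observed * (1 + 1e-9)
    )
    return min(1.0, p_value)
```

For example, `classify_user("Often")` yields `"routine"`, and a 2×2 table of made-up counts such as `fisher_exact_two_sided(3, 0, 0, 3)` compares a categorical characteristic between the two groups.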

Results

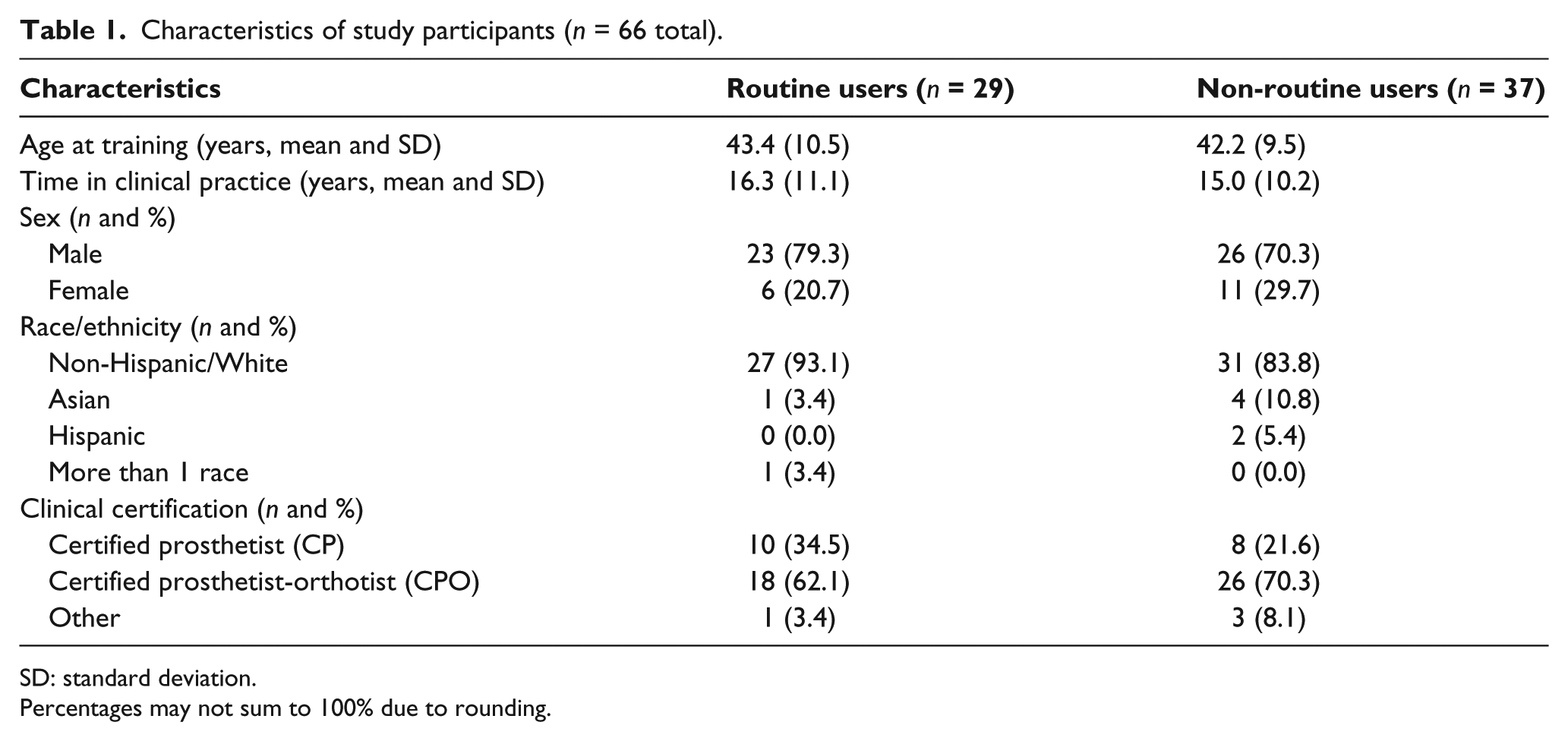

A total of 66 prosthetists from the prior study 5 completed the follow-up survey (83.5% retention rate) and were included in this analysis (Table 1). Time to follow-up ranged from 1.11 to 2.42 years (M = 1.78 years, standard deviation (SD) = 0.37). There were no significant differences in age (Z = −0.514, p = .607), years in practice (Z = −0.493, p = .622), sex (p = .845), race/ethnicity (p = .843), education (p = .655), or clinical certification (p = .829) between prosthetists who completed the post-training and follow-up surveys. Fewer than half of the prosthetists (43.9%, n = 29) reported “often” or “always” using outcome measures and were subsequently classified as routine outcome measure users at follow-up; the rest (56.1%, n = 37) were classified as non-routine users. There were no significant differences in age (Z = 0.323, p = .747), years in practice (Z = 0.363, p = .717), sex (p = .572), race/ethnicity (p = .311), education (p = .232), or clinical certification (p = .537) between routine and non-routine users (Table 1). Most practitioners (71.2%, n = 47) remained consistent in their reported use of outcome measures between the pre-training and follow-up surveys. However, 13 prosthetists (19.7%) changed from non-routine to routine users and 6 prosthetists (9.1%) changed from routine to non-routine users. Thus, the percent of respondents classified as routine users was higher at follow-up (43.9%, n = 29 of 66) compared to pre-training (38.0%, n = 30 of 79).
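The retention and classification percentages in the paragraph above follow directly from the reported counts. The sketch below recomputes them as a consistency check; the variable names and the helper `pct` are illustrative, and only counts stated in the text are used.

```python
def pct(part: int, whole: int) -> float:
    """Percentage of `whole` represented by `part`, rounded to one decimal."""
    return round(100 * part / whole, 1)


# Counts reported in the text.
enrolled = 79            # completed a CE course in the prior study
followed_up = 66         # completed the follow-up survey
routine_pre = 30         # routine users before training (of 79)
routine_followup = 29    # routine users at follow-up (of 66)
unchanged = 47           # same classification at both time points
to_routine = 13          # non-routine -> routine
to_non_routine = 6       # routine -> non-routine

# The three change categories should account for every respondent.
assert unchanged + to_routine + to_non_routine == followed_up

summary = {
    "retention": pct(followed_up, enrolled),
    "routine_at_followup": pct(routine_followup, followed_up),
    "non_routine_at_followup": pct(followed_up - routine_followup, followed_up),
    "unchanged": pct(unchanged, followed_up),
    "became_routine": pct(to_routine, followed_up),
    "became_non_routine": pct(to_non_routine, followed_up),
    "routine_pre_training": pct(routine_pre, enrolled),
}

for label, value in summary.items():
    print(f"{label}: {value}%")
```

Note that the pre-training and follow-up proportions use different denominators (79 and 66, respectively), so the comparison between them is descriptive.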

Table 1. Characteristics of study participants (n = 66 total).

SD: standard deviation.

Percentages may not sum to 100% due to rounding.

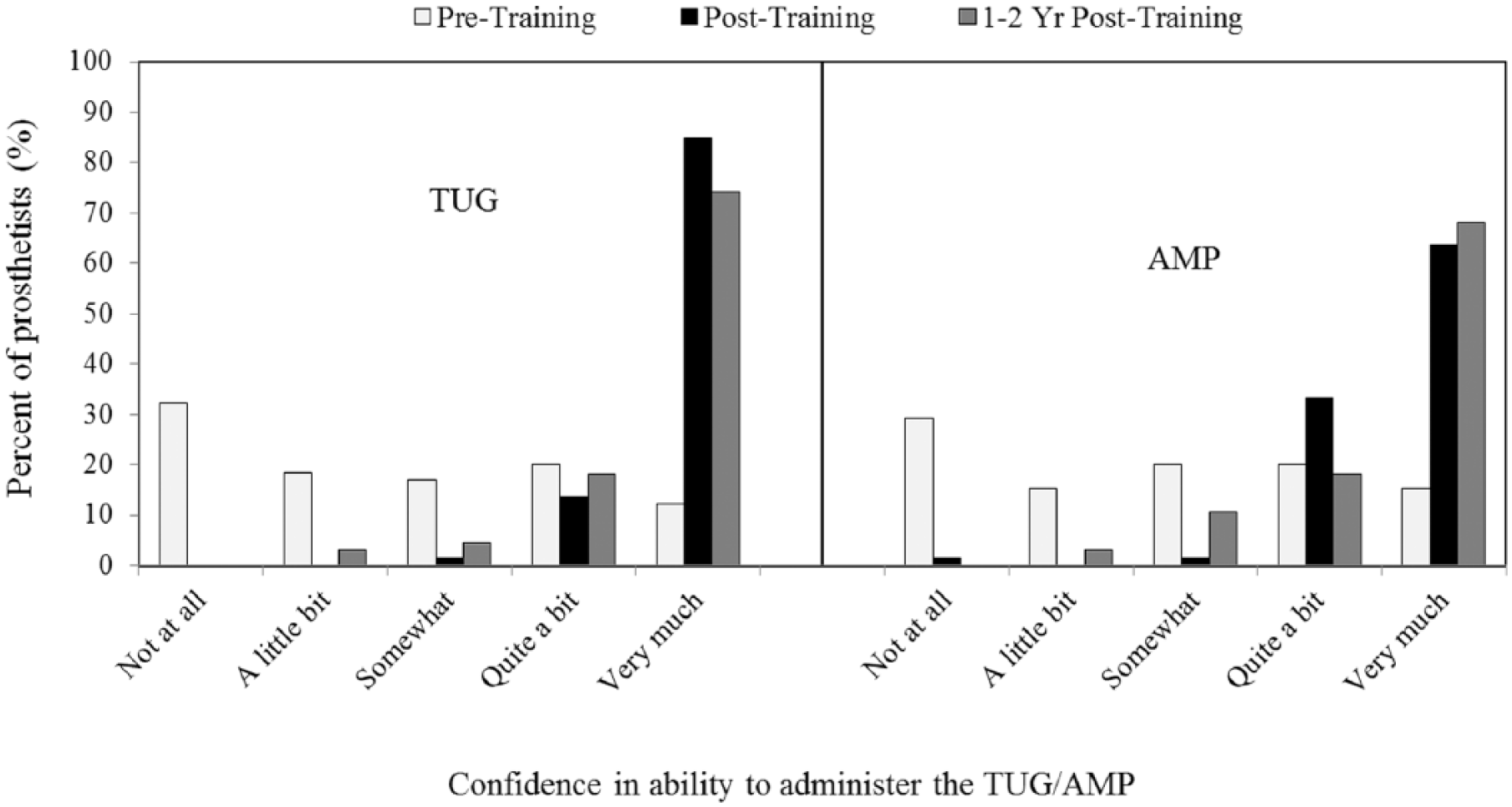

As described in the prior study, prosthetists reported significantly increased confidence in administering both the TUG (Z = −6.506, p < .0001) and AMP (Z = −6.331, p < .0001) immediately after CE was provided (Figure 1). 5 There were, however, no statistically significant changes in confidence in administering the TUG (Z = −1.874, p = .061) or AMP (Z = −0.726, p = .468) between post-training and follow-up. While some prosthetists (28.8%, n = 19) reported less confidence with one or both measures at follow-up as compared to post-training, most (63.2%, n = 12 of 19) changed by only one category (e.g. they reported being “quite a bit” confident at follow-up, compared to being “very much” confident after CE). Also, the large majority of prosthetists who reported being less confident with either measure at follow-up (89.5%, n = 17 of 19) were classified as non-routine users.

Figure 1. Prosthetists’ reported confidence in administering the TUG and AMP before training, after training, and at 1.8 ± 0.4 years after training.

Prosthetists reported that the TUG required slightly more time to administer at follow-up (4.6 ± 3.5 min) than at post-training (4.2 ± 3.2 min, Z = −0.526, p = .599). Conversely, the AMP required slightly less time to administer at follow-up (19.5 ± 9.4 min) compared to post-training (20.6 ± 7.1 min, Z = −1.726, p = .084). Although it generally took prosthetists about four times longer to administer the AMP than the TUG, more prosthetists routinely used the AMP with patients (i.e. 24.2% routinely used the TUG; 40.9% the AMP). Prosthetists’ use of both measures was markedly increased at follow-up, relative to pre-training (i.e. only 3.0% and 16.7% of prosthetists routinely used the TUG and AMP, respectively, prior to training). Even more prosthetists indicated that they intended to routinely use the TUG and AMP with future patients (35.4% and 47.7%, respectively).

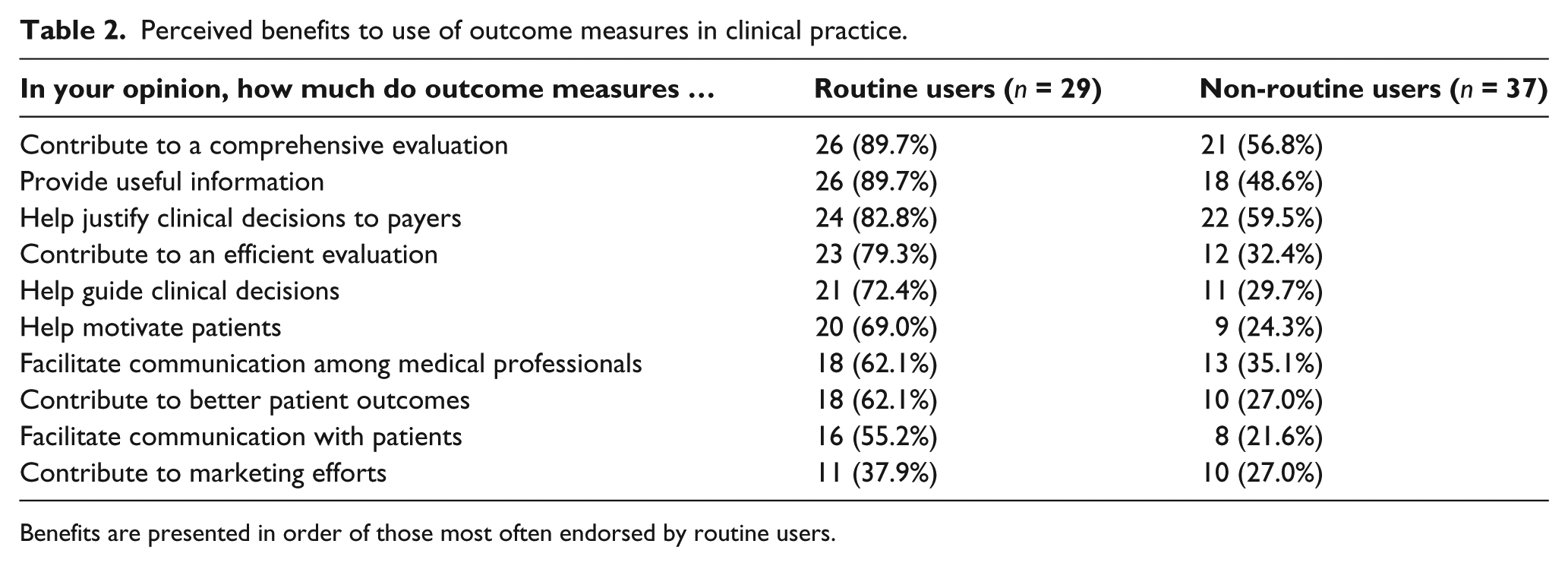

Prosthetists’ opinions about the benefits of outcome measures varied (Table 2). Most routine users (89.7%, n = 26 of 29) responded that outcome measures “quite a bit” or “very much” contributed to a comprehensive evaluation, whereas fewer non-routine users (56.8%, n = 21 of 37) responded accordingly. The largest difference between routine and non-routine users was the belief that outcome measures contribute to an efficient patient evaluation (i.e. 79.3% (n = 23 of 29) of routine users endorsed this statement compared to only 32.4% (n = 12 of 37) of non-routine users). The smallest difference between groups was the belief that outcome measures contribute to marketing efforts (37.9% (n = 11 of 29) of routine users compared to 27.0% (n = 10 of 37) of non-routine users). Fewer than 60% of non-routine users agreed “quite a bit” or “very much” with any of the listed benefits. The benefit most endorsed by non-routine users (59.5%, n = 22 of 37) was that outcome measures “help justify clinical decisions to payers.”

Table 2. Perceived benefits to use of outcome measures in clinical practice.

Benefits are presented in order of those most often endorsed by routine users.

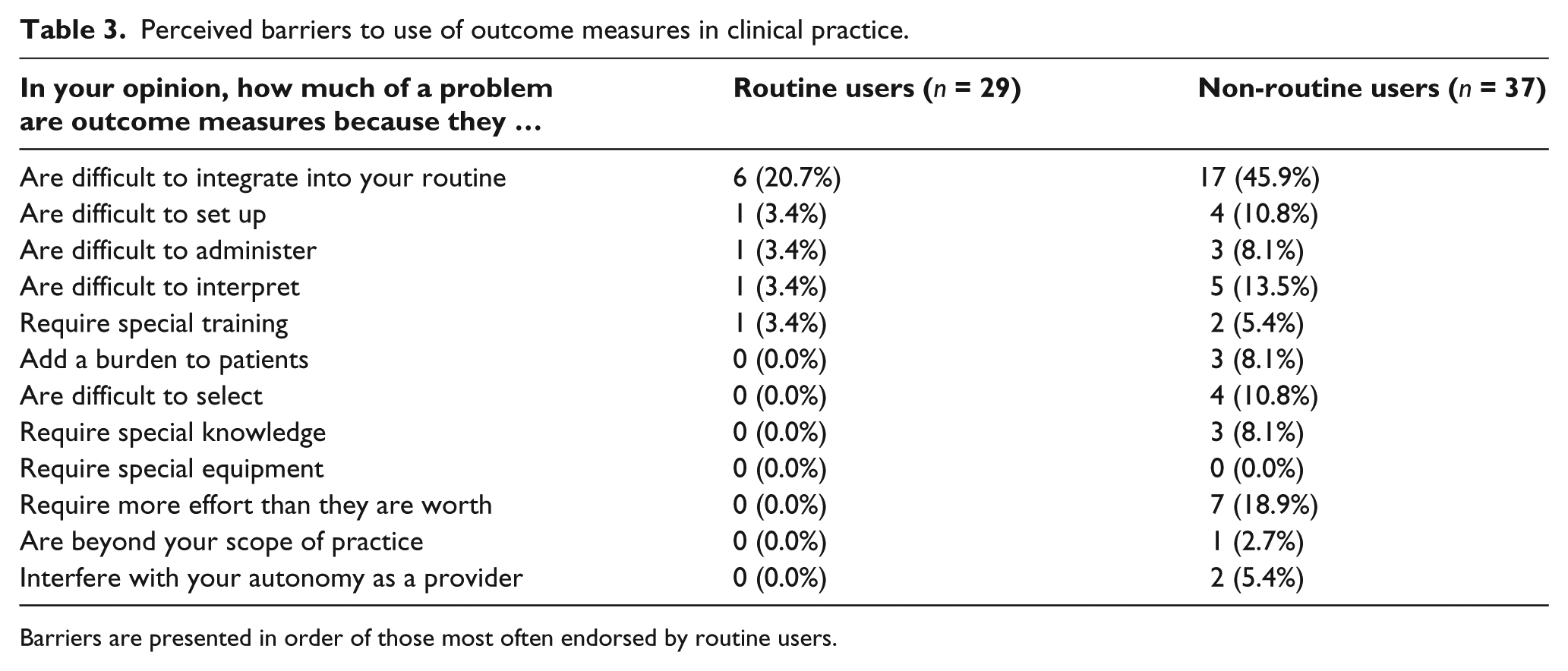

Prosthetists’ opinions of barriers to use of outcome measures varied as well (Table 3). Non-routine users reported more barriers than routine users. While relatively few routine users (20.7%, n = 6 of 29) indicated that outcome measures were “quite a bit” or “very much” difficult to integrate into their routine, more (45.9%, n = 17 of 37) non-routine users responded similarly. Finally, a modest number of non-routine users (18.9%, n = 7 of 37) reported that outcome measures require more effort than they are worth, while no routine users identified this issue as a barrier.

Table 3. Perceived barriers to use of outcome measures in clinical practice.

Barriers are presented in order of those most often endorsed by routine users.

Discussion

Results of this study showed that outcomes measurement CE had both an immediate and long-term effect on prosthetists’ confidence and ability to administer performance-based measures. As a group, prosthetists were as confident administering the TUG and AMP at follow-up as they were immediately after CE (1.78 ± 0.37 years before). CE also positively affected clinical practices, in that prosthetists reported using the measures more often with clinical patients and planned to use them with future patients. Overall, the proportion of prosthetists in this study who were routinely using standardized measures at follow-up (43.9%) was similar to the 26.0%–47.9% reported by other health professionals.2,6,8 However, requirements for functional outcomes reporting recently implemented by the US Centers for Medicare & Medicaid Services have likely increased use above these previously reported levels in fields such as physical therapy, occupational therapy, and speech pathology that are subject to these requirements. 25 Functional outcomes reporting using standardized outcome measures is not yet required in O&P, but may be in the future.

Results also showed that prosthetists’ perspectives of the benefits of outcome measures varied. Most routine outcome measure users endorsed nearly all of the benefits listed in our survey. Non-routine users largely endorsed only two: that outcome measures “help to justify clinical decisions to payers” and “contribute to a comprehensive evaluation.” This consensus among routine and non-routine users regarding the use of measures for justification is also supported by the finding that prosthetists more often used the AMP than the TUG. Although administration of the TUG requires less time, the AMP may be used to support determination of patients’ functional level and to justify provision of appropriate prosthetic componentry to payers. 26 That non-routine users believed outcome measures could “help perform a comprehensive patient evaluation,” yet chose not to apply them in practice, suggests that the benefit may not justify the effort required to implement their use in routine practice. This conclusion is partially supported by the finding that almost 20% of non-routine users reported outcome measures “require more effort than they are worth,” while none of the routine users perceived effort as a barrier. Furthermore, although both groups acknowledged outcome measures were difficult to integrate into daily routines, a larger proportion of non-routine users (45.9%) endorsed this barrier compared to routine users (20.7%). The perception of value, in light of the difficulties and burdens of measurement, appears to reflect a significant philosophical difference between routine and non-routine outcome measure users in this study.

Fundamental differences in attitudes toward outcomes measurement were also observed in the numbers of prosthetists in each group who endorsed other benefit statements. For example, nearly twice as many routine users as non-routine users (relative to the size of each subsample) agreed that outcome measures “provide useful information.” That one-third of the prosthetists in the study, including several routine users, elected not to endorse this statement is also interesting. One reason that so many prosthetists felt outcome measure information was not useful to clinical practice may be the relative lack of population-specific normative data (e.g. typical scores from large numbers of individuals grouped by age, sex, level, and/or etiology of amputation) for most measures suited to prosthetic patients, including the AMP and TUG. 1 Normative data (or norms) are critical to interpreting scores obtained with a measure as they allow practitioners to more effectively compare each patient’s score with similar individuals. A scarcity of norms has been suggested as a reason for therapists’ tempered use of outcome measures. 11 Prosthetists may also question the value of outcomes measurement, when it is not directly reimbursed under current prosthetic payment policies. Similarly, compensation has been cited as a barrier to therapists’ use of outcome measures. 14

Overall, 1.4–2.8 times more routine users than non-routine users agreed with the benefit statements listed in the survey. These results suggest that positive attitudes or perceptions toward outcomes measurement might facilitate the use of outcome measures in clinical practice. Conversely, negative attitudes or perceptions may inhibit the use of measures. Such a finding is consistent with other investigators who reported that health professionals’ positive and negative attitudes toward outcomes measurement may be a facilitator and a barrier, respectively, to use of outcome measures.2,6,9,11,14 Thus, it would appear that strategies to educate and inform practitioners about the value of outcomes measurement, like CE provided in the prior study, 5 may have the potential to positively and sustainably affect clinical practices. Similarly, efforts to address negative perceptions about measurement of clinical outcomes may help to eliminate or mitigate barriers to outcome measure use.
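The relative endorsement figures discussed above are ratios of the proportion of routine users to the proportion of non-routine users who agreed with each benefit statement. The sketch below recomputes that ratio for the three benefits whose counts are given in the Results text; the dictionary keys and the helper `endorsement_ratio` are illustrative, and benefits reported only in Table 2 are not included.

```python
ROUTINE_N = 29       # routine users at follow-up
NON_ROUTINE_N = 37   # non-routine users at follow-up

# (routine endorsers, non-routine endorsers), as reported in the Results.
endorsements = {
    "comprehensive evaluation": (26, 21),
    "efficient evaluation": (23, 12),
    "marketing efforts": (11, 10),
}


def endorsement_ratio(routine_yes: int, non_routine_yes: int) -> float:
    """Ratio of endorsement proportions (routine / non-routine)."""
    routine_share = routine_yes / ROUTINE_N
    non_routine_share = non_routine_yes / NON_ROUTINE_N
    return routine_share / non_routine_share


ratios = {
    benefit: round(endorsement_ratio(*counts), 2)
    for benefit, counts in endorsements.items()
}

for benefit, ratio in ratios.items():
    print(f"{benefit}: {ratio} times as many routine users (proportionally)")
```

Because the two groups differ in size, the ratio is taken over proportions rather than raw counts.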

The barrier most commonly endorsed by both routine and non-routine users in this study was integration of outcome measures into clinical routines. As relatively few prosthetists endorsed other barriers, specific challenges to integration remain unclear. It may be that busy clinicians lack the time needed to effectively integrate outcomes measurement into their routines. Time is widely regarded as a principal barrier to outcome measure use by many health professionals.2,6–9 It is similarly perceived as a critical barrier to other professional behaviors, including implementing learning from CE 27 and performing evidence-based practice. 28 Thus, efforts to make outcomes measurement more efficient (e.g. use of brief instruments or computerized adaptive testing methods 29 ) may help to facilitate integration. However, it is also acknowledged that barriers related to time can (and should) be addressed through changes in organizational culture, structure, and support. 21 For example, clinic owners or administrators can help to develop and sustain an environment where outcomes measurement is valued by allowing practitioners time to use outcome measures with patients, providing clinicians and staff opportunities to attend outcome measure training, and using information obtained from outcome measures to guide business or personnel decisions. The extent to which such activities, in combination with CE, affect positive changes in prosthetists’ use of outcome measures should be considered a priority for future study.

Limitations

Several limitations of this study should be noted. First, this study included a small number of prosthetists, relative to the number of professionals in the field (i.e. there are about 3600 prosthetists in the United States 30 ). Prosthetists in the prior study 5 may also have been motivated to learn about outcome measures. It is therefore possible that our sample does not reflect the experiences or perceptions of prosthetists nationwide. Second, not everyone in the original study 5 responded to the follow-up survey. It is possible that responses from non-respondents would have affected results. However, the effects of non-response are believed to be negligible, as loss-to-follow-up was lower than the 20% attrition often considered to be an indicator of bias in longitudinal studies,31,32 and similar numbers of prosthetists originally classified as routine and non-routine users in the original study 5 were lost to follow-up (i.e. 8 and 7, respectively). Furthermore, no significant differences were found between prosthetists in the prior study and the subsample included here (i.e. there appears to be no systematic bias among those who completed each survey). Finally, the CE provided in the prior study 5 was focused on the use of performance-based measures. Thus, the long-term effectiveness of CE reported here may not apply to other forms of outcome measures, like self-report. Additional work would be needed to assess if CE related to selection and use of self-report measures can facilitate their use in clinical practice.

Conclusion

Focused CE has a long-term effect on prosthetists’ confidence in using outcome measures with their clinical patients. Prosthetists generally agree that outcome measures offer benefits to professional practice. However, prosthetists also perceive numerous barriers to routine use of standardized outcome measures. As expected, benefits are perceived most by those who routinely use outcome measures, and barriers are most prevalent among those who do not. Overall, results of this study suggest that CE courses have the potential to change clinicians’ practices related to implementation of outcomes measurement, but efforts to address remaining barriers are still needed.

Footnotes

Author contribution

B.J.H. helped with the study concept and design. S.E.S., S.J.M., and I.G. helped in data collection. R.S., S.E.S., and B.J.H. conducted analysis and interpretation of data. B.J.H., S.E.S., and R.S. helped in drafting the manuscript. S.J.M., I.G., and R.G. helped with critical revision of manuscript for important intellectual content. B.J.H. obtained funding.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical approval

All study procedures were reviewed and approved by the University of Washington Institutional Review Board.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Research reported in this publication was supported by the Eunice Kennedy Shriver National Institute of Child Health and Human Development of the US National Institutes of Health (NIH) under award number HD-065340. The content is solely the responsibility of the authors and does not necessarily represent the official views of the NIH.
