Abstract

Background:

Systematic reviews of scientific literature are valuable sources of synthesized knowledge. Systematic review results may also be used to inform readers about challenges inherent to an area of research, guide future research efforts, and facilitate improvements in evidence quality.

Objectives:

To identify methodological issues that affected the overall level of scientific evidence reported in a contemporary systematic review and to offer suggestions for enhancing publications’ contribution to the overall evidence.

Study design:

Secondary analysis of a systematic review.

Methods:

Publications included in a systematic review related to microprocessor-controlled prosthetic knees were analyzed with respect to established methodological quality criteria. Common issues were identified and discussed.

Results:

Internal validity was commonly affected by variable comparison conditions, limited justification of accommodation time, potential fatigue and learning effects, lack of blinding, small sample sizes, limited evidence of measurement reliability, subject attrition, and limited descriptions of selection criteria. Similarly, external validity was affected by limited descriptions of the study sample, indeterminate representativeness, and suboptimal description of the interventions.

Conclusion:

Results suggest that efforts to address methodological limitations, educate evidence consumers, and improve research reporting are needed to advance the quality and use of evidence in the field of prosthetics.

Clinical relevance

Critical analysis of the strengths and limitations of publications included in a systematic review can inform evidence consumers and contributors about challenges inherent to a field of research. Results of this analysis suggest that efforts to address identified limitations are needed to enhance the overall level of prosthetics evidence.

Background

Systematic reviews (SRs) are critical appraisals of primary sources of information (e.g. original research studies) on a specific health-care topic.1,2 They are considered to be valuable sources of information, particularly for clinicians who may be unable to allocate the time needed or possess the skills and/or resources required to find, critically evaluate, and synthesize evidence in an ever-growing body of knowledge. 1 SRs are also recognized to be valuable research tools, as they may contain information needed to generate and support hypothesis development, estimate sample sizes, and inform future research efforts.1,2

A characteristic common to SRs is a rigorous appraisal of the methodological strengths and limitations present within each publication included in the review. Such appraisals are based on established methodological criteria included in checklists, forms, or other evaluation tools.3–6 SR authors typically use these criteria to score (i.e. rate) each publication to determine its individual contribution to the overall level of evidence. Results of the critical appraisal can also be used to explore methodological issues that generally affect the body of literature. Although in-depth discussions of the methodological issues that commonly affect the reviewed publications are generally beyond the scope of a SR, they may be useful to inform research consumers (e.g. students, clinicians, researchers, and providers) about challenges related to the topic of interest (e.g. prosthetic interventions) and to advance future research efforts by offering suggestions to mitigate or eliminate issues in future publications.

To illustrate this application of a SR, we performed a secondary analysis of 27 publications included in a SR of outcomes associated with use of microprocessor-controlled prosthetic knees (MPKs) and nonmicroprocessor-controlled prosthetic knees (NMPKs) among individuals with transfemoral limb loss. 7 This specific SR was selected as a matter of convenience and was intended to provide salient examples of identified methodological issues and actionable solutions to address them via the design, conduct, and/or dissemination of the research. The purpose of this secondary analysis was to review and discuss issues related to methodological quality that affected the overall level of scientific evidence reported in a SR on a contemporary topic in prosthetics research (i.e. MPKs). It was believed that such an analysis would (1) identify methodological issues that are present in prosthetics research publications, (2) illustrate challenges present in conducting prosthetics research, and (3) inform future efforts to disseminate prosthetics research evidence.

Methods

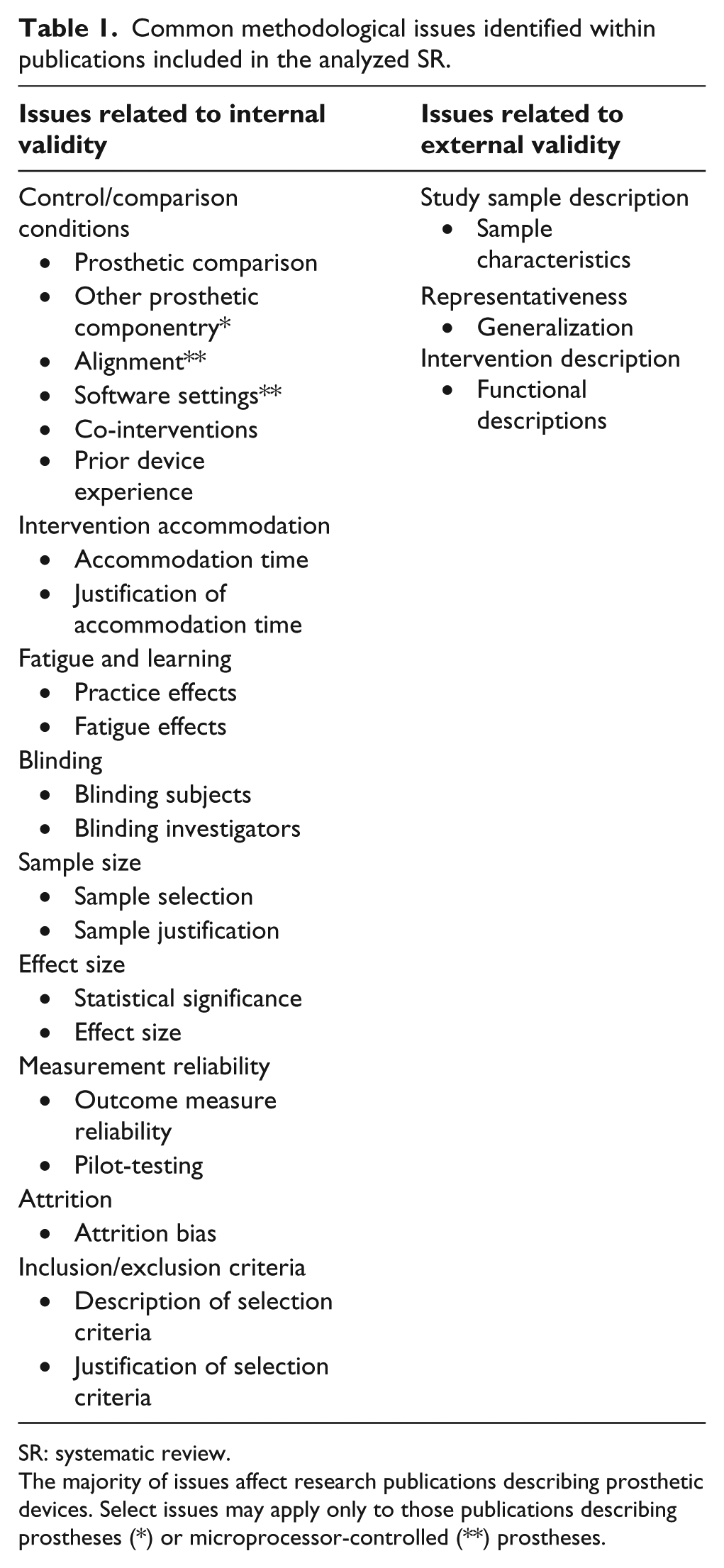

The 27 publications included in the SR were critically appraised by the authors to identify issues that commonly affected methodological quality and publications’ contribution to the overall level of evidence. 7 Checklists of potential threats to internal and external validity were used to assess each publication and categorize the identified issues (Table 1). 8 Internal and external validity have been described as critical components of methodological quality.9–11 As in the original SR, assessment of methodological quality was based upon the extent to which criteria were addressed (i.e. described) in each publication. 7 Critical appraisal of the publications found that they were often missing content needed to address the specified methodological criteria. Below, we describe each criterion, review issues related to the criterion that were identified in the SR, and offer suggestions to address these criteria in future studies and/or publications.

Table 1. Common methodological issues identified within publications included in the analyzed SR.

SR: systematic review.

The majority of issues affect research publications describing prosthetic devices. Select issues may apply only to those publications describing prostheses (*) or microprocessor-controlled (**) prostheses.

Results

Internal validity

Internal validity is the degree to which the relationship between the independent variable (e.g. prosthetic knee type) and dependent variables (e.g. walking speed, energy expenditure, quality of life) is free from confounders that may affect the study outcomes. 12 Elimination or control of confounding factors enhances the quality of the study and allows investigators to draw sound conclusions about the effectiveness of the intervention. The presence of confounding factors limits confidence in the outcomes and introduces alternative explanations for the measured results. Issues that commonly affected the internal validity of the SR publications, including control/comparison conditions, intervention accommodation, fatigue and learning, blinding, sample size, effect size, measurement reliability, attrition, and selection criteria, were identified. 7

Control/comparison conditions

Effective evaluation of an intervention requires that reasonable and appropriate comparison conditions be formed. To establish strong causal links between an intervention and associated outcomes, distinct intervention and comparison conditions should be formed. Furthermore, effects of an intervention are best demonstrated when only one feature (e.g. the prosthetic knee) differs between groups or conditions. In practice, variations among subjects, interventions, and/or testing conditions (i.e. confounding factors) often exist and may adversely affect investigators’ ability to detect and report changes in outcomes due to the applied intervention. Multiple issues related to control/comparison conditions, including the prosthetic comparison, other prosthetic components, prosthetic alignment, software settings, co-interventions, and prior experiences, were identified in the analysis.

Prosthetic comparison

The NMPK comparison condition described in the reviewed publications often varied within or among studies. The NMPK was most often a noncomputerized knee that was functionally comparable to the studied MPK. For example, five publications13–17 that described studies of the Intelligent Prosthesis (Endolite, Miamisburg, OH, USA) included comparison to a pneumatic swing-phase control NMPK. Similarly, publications that described studies of the C-Leg (Ottobock, Minneapolis, MN, USA),18–25 Rheo (Össur Americas, Foothill Ranch, CA, USA), 26 or Adaptive Knee (Endolite) 27 included comparison to a hydraulic swing-and-stance phase control NMPK. In 11 of the reviewed publications,28–38 the specific NMPK devices used by study subjects varied or were not specified. While standardization of the comparison prosthesis may require additional resources (i.e. time and cost) or may result in increased attrition if study subjects cannot tolerate or acclimate to the knee, it strengthens any causal relationship that may be inferred between the applied intervention and the measured outcomes.

Other prosthetic componentry

Differences in other prosthetic components (i.e. prosthetic sockets or feet) may affect study outcomes. For example, selection of a foot with a soft heel (e.g. a Solid Ankle Cushioned Heel (SACH) foot) can markedly shift the ground reaction force and affect stability of a prosthetic knee in loading response compared to a foot with a firm heel. 39 Publications included in the analysis varied in their descriptions of prosthetic componentry between groups or conditions. Most publications indicated that the investigators controlled for changes to the prosthetic socket by using the same socket or creating a duplicate socket for both interventions. However, few publications described use of the same prosthetic foot across conditions. Investigators’ decisions to change feet across conditions were likely due to requirements established by manufacturers that only select feet be used with their prosthetic knees (often for warranty purposes). However, change from one foot to another may require revisions to alignment of the prosthesis and introduce another variable into the experiment. Although evidence suggests that small alignment perturbations do not significantly affect outcomes for individuals with transtibial limb loss, 40 effects of foot alignment on individuals with transfemoral limb loss are unknown and should not be discounted. In six publications,19–21,31,32,37 the investigators attempted to minimize the impact of foot changes by using one of the same functional category. While this decision reflects typical clinical practice, a preferable alternative for future studies may be to use the manufacturer-recommended foot in both the control and intervention conditions. Unfortunately, such changes in study protocol will require additional resources and may not be a practical solution in all situations.

Alignment

Alignment of the components of interest (i.e. the prosthetic knee) may also affect measured outcomes and should be considered when establishing comparison conditions. For example, evidence suggests that mal-alignment of the prosthetic knee significantly increases the rate of oxygen consumption at walking speeds faster than normal. 18 Furthermore, suboptimal alignment of a MPK may produce gait deviations 41 that could affect outcomes (e.g. spatial symmetry, joint angles, joint moments, and joint power) included in the reviewed publications.13,17,19,23,27,29,31 A majority of publications reported that investigators had controlled or managed the alignment of the prosthesis in some way. In such cases, investigators indicated that alignment was performed by a certified prosthetist,14,17,19–23,25,29,37 based upon manufacturer’s recommendations,26,32,38 or derived from patient feedback and observational gait analysis.20,37 Five studies18,23,25,37,38 described a method of quantifying the alignment of the prosthesis with a clinical alignment tool. 42 However, only one study reported specifics as to the alignment that was ultimately achieved. 29 This is a complex and potentially important issue, as manufacturers’ knee alignment recommendations differ. Maintaining alignment across testing conditions may improve the internal validity of the study, but is not generally clinically desirable. Rather, documentation of the alignment process and the final alignment achieved may be desirable to better understand how prosthetic alignment influences outcomes or to explain potential outliers in the sample. It is acknowledged that including such detail in a scientific publication may be impractical due to word count restrictions. An alternative to including this information in a manuscript may be to provide such information in an online appendix.

Software settings

Another feature of contemporary prosthetic devices that has received little attention in the literature is adjustable software settings. Like alignment, software settings are modified by a prosthetist based on observational gait analysis and subjective feedback from the user. Manufacturer-provided software often allows the prosthetist to adjust the damping characteristics of a MPK at different phases of the gait cycle. As such, contribution of these settings to the function of the knee should not be ignored. Authors of one publication 20 noted a broad range of MPK software settings preferred by their study prosthetist and subjects. However, whether programmed settings were controlled (or standardized across subjects) was not described in any of the reviewed publications. Furthermore, none provided details regarding obtained settings or how they were selected by the study prosthetists. It may be desirable in future research to describe and study the impact of software settings on device performance and associated user outcomes. Furthermore, the influence of these settings on subject performance and satisfaction should not be ignored. If the knee is not set properly, it may not function as it should, and hence, the outcomes reported may not be optimal or accurate.

Co-interventions

Simultaneous interventions may also confound results of a study. Several of the publications reviewed in the SR indicated that subjects received both the intervention condition (i.e. a MPK) and physical therapy.24,27,32 While provision of therapy with a new component reflects common clinical practice, it may challenge understanding of the impact of a singular independent variable (i.e. a MPK) on the dependent variable of interest (i.e. the selected outcome). This issue raises an interesting scientific question as to the impact of physical conditioning through physical therapy versus the provision of advanced prosthetic technology in the rehabilitation of individuals with lower limb loss. Similarly, use of walking aids such as a cane or walker (although excluded in the SR) may confound outcomes associated with prosthetic interventions. Much like combined provision of physical therapy and a prosthesis, the interaction of conventional walking aids and prosthetic technology has not been well studied in the literature. Until such information is available, it may be desirable to restrict application of co-interventions or to verify that the co-interventions have no effect on the outcomes of the study (e.g. by comparing subjects with and without the applied co-intervention). An alternative approach would be to employ statistical models (e.g. multivariate linear regression) that can control for the effect of co-interventions in the analysis.

Prior device experience

Subjects’ previous experiences may positively or negatively affect their ability to use a studied intervention. For example, subjects who previously used NMPKs with hydraulic swing control may experience different outcomes after transition to an MPK with hydraulic swing control than subjects who previously used weight-activated locking control. These prior experiences may confound the results of a study. The variety of NMPKs used by subjects prior to the research28–38 may have influenced their ability to acclimate to the MPK in addition to affecting their measured outcomes. Observed variances in acclimation times reported by investigators who allowed subjects to individually acclimate to the MPKs may be attributed to subjects’ prior experiences with similar control strategies.25,31 As no evidence yet exists to explain how experience influences the transition to a new prosthesis, additional research is needed in this area. Until more is known about the effects of prior experience, it may be desirable to standardize subjects’ experience (e.g. by restricting participation to subjects with/without prior experience).

Intervention accommodation

Providing subjects with adequate time to accommodate to an intervention is important to ensure proper function prior to measurement. If subjects have not properly acclimated to using the intervention, outcomes may be attenuated or uncharacteristically variable due to users’ unfamiliarity with the device. Furthermore, interventions measured without adequate accommodation may reflect suboptimal situations that are not indicative of long-term use. In select situations, short-term accommodation may be of interest and shortened accommodation times may be desired. However, in all cases, investigators should ideally select and justify the period of accommodation provided to study subjects. In the reviewed publications, descriptions of accommodation time ranged from “ample time to acclimatize to each knee” 26 to 39 weeks. 25 While most of the reviewed publications reported an accommodation period, only five16,19,25,31,37 offered an explanation for the selected time. Four authors16,19,31,37 justified the period by citing a single-subject case study 43 that reported 1–3 weeks was adequate to establish a consistent gait pattern after transition to a new prosthetic knee. However, investigators who allowed individuals to accommodate to a MPK based upon established performance and/or safety criteria reported that subjects typically required much longer accommodation times (i.e. between 14 ± 10 and 18 ± 8 weeks) to adjust to the new device.25,31 Although there likely exists no “correct” accommodation time for all prosthetic users, description and justification of the selected period may be needed to place the measured outcomes in context. Furthermore, additional research is needed to explore stability of the many outcomes of interest (e.g. energy expenditure, activity, ambulation over uneven terrain) studied in individuals with transfemoral limb loss. Study investigators may also wish to consider the scientific and clinical implications of selecting fixed, variable, long, or short accommodation times. Additional research is likely needed to inform when it is most appropriate to assess the function of a particular prosthetic component and to better understand the potential limitations associated with assessing interventions too early.

Fatigue and learning

Outcomes may also be influenced by confounding factors unrelated to the interventions, including those introduced by a selected test protocol. The general effect of fatigue and learning on performance during physical testing is well documented.44,45 Evidence of increased physical performance by persons with lower limb loss across tests has prompted investigators to voice concerns about practice effects.46,47 Although limited evidence about the effects of fatigue on physical performance in persons with lower limb loss is available, it is logical to assume that fatigue similarly has the potential to affect study outcomes. Only seven publications13,14,16–18,20,28,30 described a protocol that addressed potential fatigue effects. For example, most publications did not indicate whether subjects were allowed to rest between tests. As fatigue may negatively impact physical performance, measured outcomes may not have entirely reflected the intended condition (i.e. a changed prosthetic knee joint) when rest was not provided. Similarly, publications rarely described practice effects (i.e. learning) that may be associated with administered tests. The potential for practice effects was most commonly noted when novel, ad hoc tests or surveys were administered. Testing effects can be minimized through selection of outcome measures resistant to practice effects and/or development of protocols to minimize fatigue and learning effects. Such considerations are especially important when interventions are assessed only one time (e.g. a before-and-after study) and influence of these effects cannot be easily assessed. Randomization of the test order is insufficient to prevent either fatigue or learning, but may balance those effects across subjects. For example, fatigue effects may be mitigated by allowing subjects to rest and return to a baseline condition before additional testing is conducted. Likewise, learning effects may be minimized by allowing subjects to practice before testing. Application of strategies to address potential fatigue and/or learning effects during study development (and subsequent publication of study results) can ultimately enhance the quality of the study and the resultant evidence.
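As a minimal sketch of the test-order randomization mentioned above, the following Python example assigns each subject an independently shuffled sequence of tests. The subject identifiers, test names, seed, and function name are hypothetical and were not drawn from any of the reviewed publications. Consistent with the discussion above, such randomization may balance fatigue and learning effects across subjects but does not prevent them.

```python
import random


def randomized_test_orders(subject_ids, tests, seed=2024):
    """Return an independently shuffled test order for each subject."""
    rng = random.Random(seed)  # fixed seed so the allocation can be reproduced
    orders = {}
    for subject_id in subject_ids:
        order = list(tests)  # copy so the master list is left unchanged
        rng.shuffle(order)
        orders[subject_id] = order
    return orders


# Hypothetical subjects and tests, invented for illustration only
subjects = ["S01", "S02", "S03", "S04"]
tests = ["level walking", "stair descent", "ramp descent"]

orders = randomized_test_orders(subjects, tests)
for subject_id, order in orders.items():
    print(subject_id, order)
```

Recording the seed (or the resulting allocation) in the study protocol would allow the test order to be reported alongside the results.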

Blinding

One of the tenets of traditional experimental design is blinding of both the subject and the researcher to the intervention (i.e. a “double-blind” design). Blinding controls for potential placebo effects among the subjects and prevents the researcher from treating the data differently for each intervention. Blinding promotes internal validity and allows for greater certainty that the intervention, and not the subjects’ or investigators’ perception of the intervention, produced the measured outcome. 48 Blinding may be particularly important when studying “new” technologies (such as MPKs) because the novelty of the intervention may bias individuals toward a desired outcome. None of the publications described blinding the study participants during testing, and only one 17 described blinding the investigators to the intervention during analysis. While blinding may seem to be an easy threat to address, there are often unique challenges to blinding subjects to prosthetic interventions. Practical issues often arise when attempting to compare a device that requires battery charging (e.g. MPK) to one that does not (e.g. NMPK). For example, most MPKs require the user to avoid certain environmental conditions (e.g. exposure to water or sand), while NMPKs may not have as stringent requirements. Despite the challenges, attempts at blinding should be considered. For example, blinded analysis of the data by different investigators than those who collect the data may help to mitigate investigator bias. For studies that alternate interventions within a daily session, use of a cosmetic covering could serve to blind the subjects (and investigators) to the intervention under study. Studies that occur over a longer period may require more complex solutions. For example, a cosmetic covering combined with a placebo power source may provide the means to more effectively compare a NMPK and MPK. Clearly, such blinding solutions increase the cost and complexity of a protocol, but the resultant evidence may be stronger if blinding is attempted and described in the publication.

Sample size

Determination and description of an appropriate sample size are important for scientific, economic, and ethical reasons. 49 Scientifically, it is desirable that a study include a sufficient number of subjects so as to detect hypothesized differences in studied outcomes. If the sample is too large, significant differences of limited scientific or clinical importance may be detected and reported. If the sample is too small, important differences may be deemed statistically nonsignificant. An oversized study may also use more resources than are required to obtain the desired outcomes, whereas an undersized study may exhaust resources before the desired knowledge is obtained. Finally, an undersized experiment may expose subjects to potentially harmful interventions without a reasonable likelihood of advancing knowledge. Similarly, oversized studies may unnecessarily expose additional subjects to risks associated with the intervention. 50 An exception to providing a priori sample size determination may be exploratory research (e.g. pilot studies), where little is known about expected outcomes and estimating sample size requires additional data. However, description and justification of the number of subjects to be included in a study is generally desirable. Sample sizes reported in the reviewed publications ranged from 1 24 to 368 36 subjects. Observational studies generally included more subjects (i.e. mean = 90 subjects) and experimental studies included fewer (i.e. mean = 9 subjects). Overall, more than half of the publications reviewed included 10 or fewer subjects. None of the publications reviewed provided an explanation for the desired or obtained sample size. Small sample sizes are common to many areas of rehabilitation research, including prosthetics. 51 Reasons for small samples in prosthetics research include highly specialized and expensive interventions (e.g. MPKs, myoelectric hands), relatively low incidence of conditions of interest (e.g. bilateral amputation, amputation due to birth defects), and limited access to select populations (e.g. people with high-level amputations, wounded warriors). To address concerns of sample size, it may be advisable to consider “small N” (i.e. single-case) study designs that are better suited to small samples. 51 Similarly, investigators may consider multi-institutional collaborations to access larger, more diverse patient populations. Furthermore, inclusion of a targeted sample size and associated justification is recommended to allow readers to better interpret significant and nonsignificant differences between test conditions, 52 particularly if other methodological issues (e.g. attrition) are present. Reporting desired and obtained sample sizes may also aid efforts to plan future research studies.
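To illustrate how a targeted sample size might be justified a priori, the sketch below applies the common normal-approximation formula for comparing the means of two independent groups, n per group = 2((z_{1-α/2} + z_{1-β})/d)², where d is the standardized effect size the investigators hope to detect. The choice of formula, the default α and power, and the function name are assumptions made here for illustration; they are not methods reported in the reviewed publications, and within-subject (e.g. crossover) designs would require a different calculation.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate subjects per group for a two-sided, two-group comparison of means.

    Uses the normal approximation, which slightly underestimates the
    sample size that an exact t-test calculation would give.
    """
    if effect_size <= 0:
        raise ValueError("effect_size must be positive")
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)  # critical value for two-sided alpha
    z_beta = standard_normal.inv_cdf(power)           # quantile for the desired power
    return ceil(2 * ((z_alpha + z_beta) / effect_size) ** 2)


# Illustrative standardized effect sizes (conventional "small", "medium", "large")
for d in (0.2, 0.5, 0.8):
    print(f"d = {d}: about {n_per_group(d)} subjects per group")
```

Even under these simplified assumptions, the required sample grows rapidly as the anticipated effect shrinks, which helps to place studies of 10 or fewer subjects in context.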

Effect size

Effect size is an estimate of the strength of the relationship between variables or the extent to which populations of interest differ. 12 In contrast to traditional test statistics (e.g. t-tests) that describe the probability that measured differences are real (i.e. not due to chance), effect sizes describe the magnitude of the measured differences. Thus, documentation of both effect sizes and inferential test statistics allows readers to assess the extent to which the intervention changed the outcome of interest as well as the likelihood that the intervention produced the change. 12 Effect size is also useful for conducting power and meta-analyses in secondary analyses of the literature. 53 Among the reviewed publications, only one 22 reported effect size. Given the relative ease of calculating effect size and its potential contribution to evidence-based practice, 54 its inclusion in future publications is suggested. Reporting effect size is recommended by many journals, including those that adhere to the American Psychological Association (APA) guidelines. 55 Although effect size is not presently required by most biomedical or rehabilitation journals, its inclusion has been increasingly advocated.54,56 Ideally, future publications will include both statistical significance and effect size so as to allow readers to better interpret study outcomes.
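As one example of how readily an effect size can be computed, the sketch below calculates Cohen's d for two independent samples using the pooled standard deviation. Cohen's d is only one of several effect size measures, and its selection here is an assumption for illustration. The walking-speed values are invented and do not come from any of the reviewed publications.

```python
from math import sqrt
from statistics import mean, stdev


def cohens_d(group_a, group_b):
    """Cohen's d for two independent samples, using the pooled standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    var_a, var_b = stdev(group_a) ** 2, stdev(group_b) ** 2
    pooled_sd = sqrt(((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2))
    return (mean(group_a) - mean(group_b)) / pooled_sd


# Hypothetical self-selected walking speeds (m/s), invented for illustration only
mpk_speed = [1.20, 1.35, 1.10, 1.28, 1.22]
nmpk_speed = [1.10, 1.25, 1.05, 1.15, 1.12]

d = cohens_d(mpk_speed, nmpk_speed)
print(f"Cohen's d = {d:.2f}")
```

For repeated-measures designs, in which the same subjects are tested in both conditions, an effect size based on the paired differences may be more appropriate than the independent-samples form shown here.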

Measurement reliability

Tools and techniques that provide consistent and reproducible measurements are desirable so as to have confidence that conclusions drawn from data collected after an intervention are appropriate. Evidence of measurement reliability57,58 indicates that the same methods and measures could be used at other times, by other investigators, with other subjects, and/or in other settings with similar results. Without such justification, readers may question the reliability of the instruments and, by extension, any conclusions drawn from their use. Measurement reliability is necessary to accurately monitor individuals over time, estimate appropriate sample sizes, and evaluate individual differences or changes in outcome. 58 Reliability concerns are particularly warranted in cases where ad hoc instruments are used to evaluate outcomes of interest. As these instruments have no published history, they may warrant scrutiny as to their ability to provide reliable information. Among the publications reviewed, most described instruments (e.g. motion analysis cameras, force plates) known to be generally reliable. However, few publications provided data or references to substantiate reliability of the methods or measures being used. As evidence of common outcome measures’ reliability is increasingly available, 59 it would seem appropriate to obtain, include, and/or cite evidence of instruments’ reliability in the publication. Additionally, a number of publications in the SR described use of ad hoc instruments, but did not include evidence of their reliability.15,22,31,32,36,37 Results obtained from novel instruments could be strengthened by pilot-testing the measures and reporting evidence of their reliability. Providing or citing such evidence may strengthen readers’ confidence in the results and enhance the overall quality of the publication.

Attrition

Attrition, the loss of study subjects over the study period, is often a concern in scientific research. Although limited attrition of study subjects (i.e. less than 5%) is generally viewed as acceptable, relatively high levels of subject attrition (i.e. more than 20%) have been described as a serious threat to internal validity.60–63 Attrition may affect study power, confound relationships between exposure and outcome, and limit generalizability of the study outcomes. 60 Relatively high or unexplained attrition in experimental trials may also indicate methodological issues in applying the intervention or challenges with conducting the testing protocol. The presence and management of attrition is additionally important as it may impact the statistical analyses conducted by the investigators. Most of the reviewed publications reported less than 20% attrition. However, publications rarely described differences between subjects recruited into the study and those who completed it. As such, it was often impossible to assess attrition, and it could only be assumed that all subjects completed the study. Attrition in those studies where recruitment and completion details were provided ranged from 9.5% 32 to 72.0%. 21 Seven publications reported attrition rates higher than 20%.17,19–22,30,36 Reasons for high attrition included time commitment, 21 medical issues unrelated to the intervention, 21 safety concerns, 30 prosthetic fitting problems, 21 and nonresponse. 36 Notably, all of the reviewed publications described per-protocol methods to analyze collected data (i.e. only subjects who completed studies were included in analyses). Although attrition is an inevitable occurrence in longitudinal research, reporting and/or accounting for loss to follow-up may be desirable in prosthetics research. It has been recommended that in cases where attrition is present, baseline characteristics of the study sample as well as characteristics of subjects who have been analyzed be reported so as to allow readers to assess potential attrition bias and its effect on study findings. 61 It has also been advocated that investigators examine differential attrition between groups to assess risk of bias. 62 Additionally, analysis techniques exist for including data from subjects who have not completed the study. 64 Use of intention-to-treat analyses may be preferable in cases where attrition may affect study outcomes. Strategies such as those noted above may mitigate concerns of attrition in future publications.
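The attrition thresholds described above can be applied with simple arithmetic when both the number of subjects enrolled and the number completing a study are reported. The sketch below is illustrative only: the function names, the enrollment figures, and the "intermediate" label for rates between 5% and 20% are assumptions introduced here rather than terms used in the cited literature.

```python
def attrition_rate(enrolled, completed):
    """Proportion of enrolled subjects who did not complete the study."""
    if enrolled <= 0 or not 0 <= completed <= enrolled:
        raise ValueError("require enrolled > 0 and 0 <= completed <= enrolled")
    return (enrolled - completed) / enrolled


def classify_attrition(rate):
    """Label an attrition rate using the 5% and 20% thresholds noted in the text."""
    if rate < 0.05:
        return "generally acceptable"
    if rate > 0.20:
        return "serious threat to internal validity"
    return "intermediate"  # label assumed here; the text does not name this range


# Hypothetical study: 20 subjects enrolled, 15 completed
rate = attrition_rate(enrolled=20, completed=15)
print(f"Attrition = {rate:.1%} ({classify_attrition(rate)})")
```

Such a calculation is possible only when recruitment and completion numbers are both published, which underscores the value of reporting them.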

Selection criteria

Inclusion and exclusion criteria dictate selection of individuals who are eligible to participate in a study (i.e. the target population). Description and justification of appropriate selection criteria can not only improve the internal validity of a study but also make a study more feasible, reduce cost, and serve ethical purposes.65,66 Inclusion and exclusion criteria also help to establish the authors’ desired uniformity (i.e. heterogeneity or homogeneity) of the study sample. Finally, description of study selection criteria, in conjunction with a description of the recruited sample, informs the generalizability of the results to the target population.67,68 Selection criteria described in the reviewed publications included statements about subjects’ age, gender, etiology of limb loss, time since limb loss, previous prosthetic treatments, co-morbidities, level of limb loss, and/or activity level. However, fewer than half of the publications included details of both inclusion and exclusion criteria.21–23,25,27,30,31,35,37 Additionally, inclusion and exclusion criteria were generally not well described across the reviewed body of literature. Inclusion criteria were often vague or minimal (e.g. they did not include factors that are needed to generalize study results to persons with lower limb loss, such as amputation etiology). Exclusion criteria were often defined as the opposite of the inclusion criteria rather than distinct reasons why candidate subjects might not be selected for participation. Descriptions of the recruited sample, absent selection criteria, were also common. Improved descriptions of target and sample study populations have previously been recommended to enhance readers’ ability to interpret and generalize results from clinical trials. 68 Justification of selection criteria has also been advocated as a means to facilitate appropriate interpretation of research evidence. 69 Provision of such detail in prosthetics research publications may similarly be warranted.

External validity

External validity is the degree to which the study findings (i.e. the measured relationship between the independent and dependent variables) can be generalized outside of the experiment. 12 That is, external validity reflects the extent to which similar results would be found for other participants, with other interventions, in other locations, and/or at different times than those included in the study.70,71 External validity is therefore strongest when the study includes individuals, interventions, and conditions present in “real-world” settings (e.g. a prosthetics clinic). Although external validity criteria have been historically omitted or underrepresented in appraisals of research publications (as compared to issues related to internal validity), their role in facilitating use of research evidence in clinical practice is increasingly recognized.72,73 Overall, few methodological issues related to external validity were identified across the publications included in the SR. However, several issues that affected the external validity of the publications, including sample description, representativeness, and intervention description, were identified. 7

Sample description

A description of the study sample is needed to determine the degree to which subjects recruited for the study matched a specified target population (or other persons of interest). The sample description may also be used to assess the uniformity (i.e. heterogeneity or homogeneity) of the study sample. In conjunction with the described inclusion and exclusion criteria, description of the study sample can be used to determine the representativeness of the results. 67 If description of the sample characteristics is inadequate or unclear, readers cannot determine whether other persons (e.g. a specific patient) might experience outcomes similar to those obtained in the study. Although most publications included in the SR provided basic descriptions of the recruited study sample (i.e. age and cause of amputation), reporting of the study sample was often limited. Commonly omitted were characteristics recognized to be clinically important when assessing candidacy for prosthetic components, 74 such as subjects’ activity or functional level and time since limb loss. Conversely, sample descriptions sometimes included characteristics of arguable scientific or clinical value, such as the side of limb loss (i.e. left or right).13–15,26,30,32,36 Of more interest than side of amputation may be the side relative to subjects’ pre-amputation limb dominance. 75 Given the aforementioned variation in sample descriptions across the body of literature, it may be desirable to establish recommendations for reporting sample characteristics. 72 Inclusion of elements such as age, etiology of amputation, time since amputation, prosthetic experience, activity level, and level/length of amputation in future publications may both enhance the methodological quality of the evidence and further its use in clinical practice. Furthermore, subject characteristics (e.g. renal disease, joint conditions, visual impairment) that affect prosthetic use and may also affect select outcomes should be described, if appropriate. 76

Representativeness

To most effectively generalize study findings to individuals beyond those in the study, the recruited sample should be representative of a target population. The information needed to support representativeness is typically provided through a clear description of both the target and sample population characteristics, and by recruiting a sample that adequately represents the target population. 67 Other factors that affect representativeness include sample size, 77 recruitment processes, 78 response rate, 79 and attrition of study subjects. 60 Representativeness of subjects across the reviewed body of literature was largely limited. Few publications21,23,25,30,31,35,37 included the details needed to assess sample representativeness (i.e. descriptions of target and recruited sample). Determination of representativeness was also challenged by issues related to sample size and attrition described previously. While small samples and moderate attrition may be expected, given the relative cost and complexity of prosthetic research, representativeness could be enhanced through improved descriptions of targeted and sample populations. Providing such information to readers may improve the degree to which studies’ results may be generalized and applied.

Intervention description

Thorough descriptions of treatments and/or devices included in a study are needed to replicate their use in clinical practice and future research.80,81 Rehabilitation interventions are recognized as challenging to describe in publications because of their complexity and customization to an individual. 82 Furthermore, important differences in design, function, or behavior of a component (e.g. a specific prosthetic knee) may be unknown to readers without first-hand knowledge of or experience with that device. Adequate descriptions are therefore needed to allow readers to understand the results and appropriately generalize the findings. Publications included in the SR were reviewed to determine whether functional descriptions of the studied prosthetic knees were provided. Although authors generally included or cited details about the interventions, specific descriptions of the knees’ capabilities were limited or absent in six publications.23–25,30,35,37 One possible reason for excluding this information may be the word limits applied by most scientific journals. In such situations, citing publications that provide detailed descriptions of the components like MPKs83–86 may be preferable. An alternative approach may be to provide details in an online appendix, a feature increasingly available to publication authors. Such strategies may help to provide the necessary details without negatively affecting the publication word count.

Discussion

This secondary analysis of a published SR 7 was undertaken to examine methodological issues common to the included publications. It was hoped that review of identified issues would inform research consumers of challenges inherent to prosthetics research. Additionally, the authors believed that consideration and discussion of potential solutions might inform future studies and improve the overall level of evidence reported in subsequent publications. Although the focus of those studies included in the analyzed SR was outcomes associated with use of MPKs among individuals with transfemoral limb loss, the methodological issues identified extend to other areas of prosthetics research.

A recent SR of biomechanical and/or physiological parameters used to study persons with lower limb loss found no “A-level” (i.e. methodologically strong) evidence among 89 reviewed studies. Methodological issues identified by the authors included blinding and sample size, two issues that were also identified in this analysis. 87 Similarly, authors of a recent SR on the safety and efficiency of MPKs noted that numerous variables, including accommodation time and standardization of the control condition, were poorly controlled. Of the 18 publications included in that review, only one study was rated to be of high methodological quality. 88 Another review of biomechanical outcomes following partial foot amputation included 28 articles. The authors similarly reported a “range of methodological issues affecting these investigations” (p. 6). Randomization, heterogeneity of the population, and description of the study subjects were identified as issues that affected the reviewed publications. 89 Authors of a Cochrane Review of foot–ankle prostheses noted that the overall methodological quality of 29 reviewed publications was “moderate.” The authors identified numerous methodological issues that also appeared in this analysis, including description of the intervention, blinding, acclimation time, attrition, and effect size. 90 A subsequent publication expanded the Cochrane Review to include studies of prosthetic feet, knees, and sockets. Of the 41 articles reviewed there, only 4 were identified as “A-level.” The authors again noted that inadequate blinding, description of study subjects, control of prosthetic componentry, accommodation time, attrition, and statistical analysis were common. 91 Similarities among these reviews and the present analysis suggest that the identified methodological issues are not unique to research related to MPKs and apply to prosthetics research in general.

A potential limitation to this analysis is the assessment tool selected to evaluate the reviewed body of literature. Study quality checklists of established methodological criteria developed by the American Academy of Orthotists and Prosthetists (AAOP) were used to appraise the internal and external validity of the included publications. 8 The 18 items included in the internal validity checklist and the 8 items included in the external validity checklist formed the basis for the review criteria, and hence the issues identified in this analysis. Therefore, identification of methodological issues described in this analysis may have been limited to those included in this specific appraisal tool. However, it should be noted that the other prosthetics reviews described earlier used a variety of methodological assessment tools, including the AAOP checklists, 8 an 11-item scale developed for the Physiotherapy Evidence Database,92,93 and a 13-item scale developed for conducting Cochrane Reviews of prosthetic interventions. 91 The issues identified in this analysis were also discovered by other quality assessment tools, which lends support to the claim that the challenges identified here are indeed common to prosthetics research.

Given a growing reliance upon scientific evidence for clinical practice decisions and policy determinations, the limited methodological quality of the literature (appraised using established standards of literature review) related to lower limb prosthetics is concerning. Without adequate evidence to support the use of prosthetic interventions and technologies with appropriate patients, providers may be challenged to justify their prescription and provision. Such concerns are not unique to prosthetics and have been identified in other areas of rehabilitation. 94 It has been suggested that rehabilitation research possesses inherent characteristics that impede the development of quality evidence, as measured by classical evidence-ranking systems. These include complexity and diversity of patient characteristics, emphasis on participation in life activities, small sample sizes, difficulty or impossibility of blinding, impractical or unethical control conditions, co-interventions from use of assistive technologies, and relatively low funding levels.51,95 It could be easily argued that similar traits apply to prosthetics research. As such, examination of efforts undertaken by related fields to address these concerns may be warranted.

One potential means to address concerns of evidence quality in prosthetics literature is to educate readers, funding agencies, and policy-makers as to the important characteristics and challenges inherent to this field. Position statements95,96 and technical briefs97,98 by the National Center for the Dissemination of Disability Research (NCDDR) have provided guidance to research consumers regarding the challenges and solutions to disability research evidence. Similar efforts to describe challenges in prosthetics research may help to redefine or clarify the standards used to appraise and disseminate prosthetics evidence. This suggestion should not be interpreted as encouragement to “lower the bar” for definitions of quality in prosthetics research, but rather to help define how prosthetics research is appropriately and fairly evaluated in the proper context. Indeed, it is critical that “rather than suggesting that [a health profession] deserves special treatment and different “evidence”, we need to focus on ensuring that the relevant questions are answered and evaluated with the most appropriate, corresponding research questions” (p. 227). 99 Educating research consumers as to how existing standards apply to this body of research is expected to be more productive than suggesting they do not apply.

In addition to refining, enhancing, or clarifying standards for assessing methodological quality in prosthetics research, it is the responsibility of those within the field to aspire to improve the quality of research conducted. Many of the issues identified in this analysis (and the other cited evidence reviews) are fundamental to any type of scientific investigation. The issues raised, in most cases, are not insurmountable or even impractical to overcome. Careful considerations in the design, execution, and dissemination of future research studies can greatly enhance the quality of the results obtained from them. In addition to suggestions made in this analysis, recommendations for ways to broadly improve the quality of research across medical disciplines are available. 97 Attention to methodological design and conduct of studies has shown increased quality of literature in other allied health professions, 100 and such efforts may be similarly successful when applied to prosthetics research. Ultimately, any evidence derived from a body of literature will be based on the conduct of the primary studies. 99 It is therefore the responsibility of those within a field to ensure that the “best evidence” be generated through best scientific and clinical practices.

Finally, efforts to improve the quality of reporting research may also help to enhance the level of evidence related to prosthetic interventions. Criticisms of the quality of scientific writing in health and rehabilitation literature have been well documented 101 and have prompted the development of scientific reporting guidelines for different types of research designs.98,102 Existing reporting standards include Preferred Reporting Items for Systematic Reviews and Meta-analyses (PRISMA),103,104 Consolidated Standards of Reporting Trials (CONSORT),105–107 Transparent Reporting of Evaluations with Nonrandomized Designs (TREND), 108 Strengthening the Reporting of Observational Studies in Epidemiology (STROBE), 109 and Standards for Reporting of Diagnostic Accuracy (STARD). 110 These guidelines are believed to be responsible for improvements in evidence quality in the general biomedical literature over the last decade.102,111 Given the observed variations in reporting related to prosthetics research, it may be appropriate to adopt or adapt these standards for reporting research in this field. Reporting guidelines such as these are increasingly being adopted by rehabilitation journals, but have not yet been adopted by prosthetics and orthotics journals. 112

The observations and recommendations described here are made with recognition that the methodological quality of prosthetics literature has notably advanced in the last 15 years. This is evidenced by the changing proportion of publications in the analyzed SR that received a methodological rating of “low” quality. Between 1995 and 2005, all reviewed publications received a “low” rating. In the most recent half-decade (i.e. 2005–2010), only 64% of the reviewed publications received a “low” rating. 7 While quality evidence is admittedly becoming more common, a lack of “high” quality evidence and enduring prevalence of “low” quality literature suggest that research consumers may welcome changes in education, implementation, and/or reporting of prosthetic research. Such efforts may not only facilitate changes in the overall quality of prosthetics evidence but also enhance consumers’ ability to apply it in clinical practice.

Footnotes

Author contribution

All authors contributed equally in the preparation of this manuscript.

Declaration of conflicting interests

The authors declare that there is no conflict of interest.

Funding

This analysis and review was supported by a US Department of Education Grant (H235J060001) to the American Academy of Orthotists and Prosthetists and a Center of Excellence Grant (A4883C) from the Department of Veterans Affairs, Rehabilitation Research and Development.