Abstract

Generative artificial intelligence (GenAI) is rapidly entering engineering workflows, raising new questions about liability, safety, and professional ethics. This study examined how 48 students across five engineering disciplines interpreted these issues in discipline-specific GenAI failure case studies created with ChatGPT-4o. Using a deductive qualitative approach, 192 written responses were coded against a 32-item taxonomy of ethical and professional considerations. Across disciplines, students consistently prioritised control and oversight and decision-making under risk and uncertainty. In contrast, no responses addressed data ownership, power and hegemony, or language fluency. Disciplinary differences were evident: mechatronic and electrical engineering students identified a broader set of considerations, including data collection and use, equity and accessibility, and environmental impacts. These findings support aligning curriculum and assessment with explicit requirements for documenting and validating the use of GenAI, designing for appropriate human oversight, and implementing discipline-specific learning activities that address data governance, equity, and environmental implications throughout the engineering lifecycle.

Introduction

Artificial intelligence has long been used in engineering through tools such as optimisation algorithms, predictive modelling, and automated control systems. 1 Generative artificial intelligence (GenAI) represents a distinct category focused on producing new content rather than analysing existing data. 2 This study specifically examines GenAI because its role as a creative and decision-support tool introduces ethical and professional considerations that differ from those associated with traditional AI in engineering practice.

The integration of GenAI into engineering practice represents a transformative shift that is reshaping how engineers approach design, analysis, and decision-making.3,4 As GenAI tools become increasingly sophisticated and accessible, their adoption in engineering workflows has accelerated, presenting both unprecedented opportunities for innovation and complex new challenges related to professional responsibility, ethical decision making, and risk management.5–7 This evolution demands a proactive response from the engineering profession and, critically, from the higher education institutions tasked with preparing graduates for the future.

Engineering education has historically struggled to keep pace with rapid technological advancement, 8 and the emergence of GenAI intensifies this challenge. 9 Traditional pedagogical approaches, particularly in the crucial domains of ethics and professional practice, often rely on established case studies to bridge the gap between theory and practice.10,11 While the case study methodology is a recognised and effective pedagogical tool that gives students opportunities to apply theoretical knowledge to practical scenarios, its traditional implementation faces significant resource constraints in higher education and struggles to keep up with the rapid adoption of new technology.12,13 The development of high-quality, relevant cases requires extensive faculty time and industry collaboration to ensure authenticity. Furthermore, the value of well-known historical cases is often undermined because established analyses are readily available online, potentially limiting students’ development of independent ethical reasoning.14,15

The challenge is compounded by the diverse nature of the engineering profession itself. Different engineering disciplines encounter GenAI integration in distinct ways; civil engineers may use GenAI for structural optimisation, electrical engineers for smart grid management, and mechatronic engineers for autonomous systems design. Each application gives rise to a unique set of ethical considerations and professional responsibility challenges that a one-size-fits-all educational approach may fail to address adequately.16,17 There is a pressing need to develop educational strategies that are not only current but contextually relevant to the specific domains students are preparing to enter.

This study addresses this knowledge gap through a novel methodological approach that leverages GenAI itself as a tool to generate discipline-specific case studies for engineering management education. By utilising ChatGPT 4o to create scenarios involving GenAI-related failures across five engineering disciplines, civil, mechanical, electrical, mechatronic, and agricultural, this research presents both a pedagogical innovation and a systematic method for investigating student ethical reasoning. In doing so, the study offers discipline-differentiated findings that not only provide directly meaningful insights for mechanical engineers but also highlight important cross-disciplinary differences, helping all engineering fields recognise and anticipate the varied ethical and professional challenges GenAI introduces. Because modern engineering work is increasingly multidisciplinary, these findings also offer value in supporting more integrated, collaborative, and system-wide approaches to responsible GenAI adoption across the profession.

Through the deductive mapping of student responses against a comprehensive framework of 32 ethical and professional considerations, the study provides the first systematic documentation of how engineering students from different disciplines perceive and prioritise the challenges of GenAI. The findings offer crucial, evidence-based insights that can inform the evolution of both educational practice and professional development standards. To achieve this, the paper seeks to answer the following research questions:

What are the most prevalent ethical implications identified by engineering students when analysing a GenAI-related engineering failure?

How do these identified implications vary across different engineering disciplines (Agricultural, Civil, Electrical, Mechanical, and Mechatronic)?

Which specific implications do students associate with the distinct management functions of liability, safety, professional conduct, and ethics?

Literature review

Assessing ethics in engineering education

Traditional approaches to engineering ethics education have relied on professional codes and case studies to instil values of integrity, accountability, and public responsibility.8,11 Professional codes such as the Engineers Australia framework and the National Society of Professional Engineers (NSPE) Code of Ethics position the protection of public safety, health, and welfare as engineers’ primary duty, thereby anchoring expectations for professional conduct and liability-aware practice.18,19 However, despite this clear professional mandate, ethics instruction is often perceived by students as abstract or secondary to technical learning, thereby limiting its impact on decision-making in real-world risk environments.

A multi-level review by Martin et al. 20 shows that these shortcomings persist across individual, institutional, and cultural layers of engineering programs. At the classroom level, they identify ongoing misalignment between ethical frameworks, learning outcomes, and assessment tasks; at broader levels, ethics is frequently siloed rather than embedded within mainstream engineering work. This fragmentation helps explain why graduates may understand ethical principles in theory yet struggle to apply them when safety, uncertainty, and competing project pressures generate genuine professional dilemmas.

Literature on process safety education reinforces the need to assess ethics through the lens of hazard, risk, and accountability. Mkpat et al. 21 argue that safety reasoning, including hazard identification, risk management, and safety culture, must be integrated systematically across curricula, because ethical responsibility in engineering is inseparable from preventing harm in complex systems. Guerrero-Pérez 22 similarly frames process safety as an urgent curricular priority, noting that disasters and near-misses are not only technical breakdowns but also ethical and legal failures with significant societal consequences. These contributions highlight that assessing ethics in engineering education should extend beyond knowledge of codes toward evaluating students’ capacity to anticipate unsafe outcomes, justify defensible choices under uncertainty, and act in accordance with professional duties where public welfare and liability converge.

Generative AI and the future of engineering education

GenAI amplifies many of the challenges identified in the previous sections. Its adoption in engineering is accelerating, with applications ranging from design optimisation to predictive analytics and real-time decision support. 23 The integration of GenAI across civil, mechanical, and electrical engineering raises complex questions about liability, safety, and professional conduct. 24 Yet, as Hagendorff 25 observes, the pace of adoption has outstripped the development of discipline-specific ethical frameworks. This mismatch underscores the urgency of equipping future engineers with the capacity to engage critically with GenAI's implications.

In higher education, students report both enthusiasm and concern regarding GenAI tools. Chan and Hu 26 and Otto et al. 27 show that while students value efficiency and personalisation, they worry about academic integrity, loss of critical thinking, and diminished ownership of learning. Bittle and El-Gayar 28 further highlight risks to academic integrity, calling for frameworks that balance technological innovation with ethical safeguards. Camarillo et al. 29 explored how critically reviewing GenAI outputs affects engineering students’ ethical learning and judgment. After evaluating GenAI-generated case studies, students demonstrated improved ability to identify potential bias and recognise a wider range of societal and environmental concerns often absent from traditional textbook analyses. Quince and Nikolic 7 demonstrate that while students often identify core issues such as transparency and accountability, they struggle to engage with systemic concerns such as equity, data ownership, and power dynamics. They also highlight that without explicit scaffolded learning interventions, the depth of student GenAI ethics awareness is limited.

Pedagogically, GenAI offers new opportunities. By generating discipline-specific scenarios, GenAI can extend traditional scenario-based assessment, offering scalable and contextually relevant tasks. This builds directly on McMartin et al.'s 30 work, suggesting that GenAI can enhance realism and efficiency in ethics education. At the same time, however, overreliance on GenAI tools risks narrowing students’ problem-solving strategies and reducing opportunities for creative and critical thinking.

The challenge, then, is to balance innovation with responsibility. While constructive alignment, authentic assessment, and scenario-based methods provide useful foundations, they must be reimagined to incorporate GenAI. This involves embedding human oversight, transparency, and accountability as non-negotiable values, while also addressing systemic issues of equity and power. Without this, engineering education risks reproducing the same ethical blind spots that currently limit professional practice.

Pedagogical approaches: Case studies, scenarios, and authentic assessment

In response to these gaps, educators have developed pedagogical approaches that more explicitly integrate ethics and professional reasoning into learning activities. Constructive alignment, which ensures coherence between intended learning outcomes, teaching activities, and assessment, has been widely promoted in higher education. 31 While constructive alignment is a curriculum design framework, it is underpinned by principles of constructivism, which is a broad pedagogical perspective that views learning as an active process in which students build new understanding based on prior knowledge and experience. 32 Studies show that students perceive aligned curricula as more engaging and meaningful for their learning.33,34 Such approaches can support the integration of ethical reasoning into mainstream engineering subjects, avoiding its treatment as an isolated topic.

In parallel, the literature highlights a set of approaches that bring ethical and professional reasoning into realistic learning contexts. Case-based approaches draw on real or realistic past events to support analysis and judgement; scenario-based approaches use hypothetical situations to explore potential decisions and their consequences; and authentic assessment tasks require students to apply disciplinary knowledge in ways that resemble professional practice. Although these approaches differ in emphasis, they share a focus on situated reasoning and have been used to help students engage with the kinds of dilemmas they may encounter in engineering work.

Case-based learning is particularly prominent in engineering ethics education. Raju and Sankar 35 argue that case studies provide a way to situate professional dilemmas in real-world contexts, enabling students to grapple with technical and ethical trade-offs simultaneously. Similarly, Yadav et al. 12 demonstrate that case teaching fosters deeper engagement and improved transfer of learning compared to traditional lecture-based formats. Toogood 36 further develops a model of engagement principles that explains how students interact with case studies, underscoring the importance of accessibility, relevance, and opportunities for reflection. While case-based methods are widely used, Runeson and Höst 37 caution that poorly designed case studies risk superficial engagement. They provide methodological guidelines for developing rigorous multi-case approaches in engineering and software education, which can enhance the validity of case-based pedagogy. These include defining clear objectives, research questions, and a theoretically grounded frame of reference at the outset, ensuring that the case and units of analysis are appropriately selected, and planning multiple data sources to enable triangulation.

Scenario-based learning offers another valuable tool. In contrast to case studies, scenario-based tools present hypothetical or projected situations that focus on specific decision points or conceptual challenges, encouraging students to explore possible outcomes. McMartin et al. 30 show that scenario assignments can effectively assess students’ ability to integrate technical, ethical, and teamwork considerations. Unlike static case studies, scenarios simulate the ambiguity of real practice and require students to plan processes rather than simply propose solutions. This aligns with the calls of Herzog et al. 38 to embed emotional and contextual dimensions into ethics education, thereby better preparing students for uncertainty and complexity.

Authentic assessment has also gained traction as a means to both preserve academic integrity and promote employability. Authentic assessment refers to tasks that mirror the kinds of activities, challenges, and decision-making processes students encounter in professional practice. Rather than relying on traditional examinations, authentic assessments require learners to apply knowledge in realistic contexts. 39 Because these tasks demand situated judgement and integrated disciplinary reasoning, they are also less easily completed through generative AI tools. Emerging studies suggest that authentic, process-oriented assessments can mitigate GenAI-related risks to academic integrity by emphasising performance, justification, and iterative engagement rather than the simple production of text. 40 As such, authentic assessment is increasingly promoted both for its contribution to employability skills and for its role in fostering integrity within AI-enabled learning environments. Sotiriadou et al. 41 show that authentic tasks reduce opportunities for misconduct while supporting skill development. This finding aligns with Kong et al.'s 42 concerns about contract cheating, indicating that well-designed assessments can simultaneously address integrity and learning quality. Tshai et al. 43 further highlight how project-based and problem-based approaches can simulate professional practice, preparing students for real-world dilemmas.

Methodology

This study was conducted within a third-year engineering management course at the University of Southern Queensland, Australia, during September–November 2024. The course is a compulsory subject for undergraduate engineering students and includes a mix of third- and fourth-year students from various disciplines, including Civil (21), Electrical (11), Mechanical (6), Mechatronic (4) and Agricultural (4). Two submissions could not have a discipline or case study identified. Ethics approval was obtained from the University of Southern Queensland.

Assessment design

This study investigates the changes and ethical implications derived from students’ work on a single, unsupervised assessment. The assessment took the form of a traditional case study in which students responded to four questions, reproduced below, about the liability, safety, professional conduct and ethical considerations in the case study. The case studies focused on the ethical, safety, professional conduct and legal considerations of utilising GenAI tools in engineering practice. Students in each discipline (civil, electrical, mechanical, mechatronic or agricultural) were provided with a case study specific to that discipline.

What legal defence is available for such a technical failure in reference to common law and statute? And would arbitration or mediation be preferred for resolving technical disputes? Does the use of generative AI change legal liabilities? Is there a reasonable legal argument for the building contractor, project manager and/or engineer to be partly liable for damages and to face a potential criminal negligence charge resulting from the fatal technical failure due to the use of generative AI? Support your case with legal reasoning and case law. Explain why it is critically important for a professional engineer to comply with a code of ethics and professional conduct and why would applying this code develop and reinforce public trust along with compulsory registration of professional engineers, relate this to the rapidly evolving usage of Generative AI. Safety can be defined as ‘freedom from dangers and risks’. To what extent can any occupational environment be completely free of dangers and risks? Expand on the perceived dangers and risks of using generative AI in engineering practice.

Case study development

To address the limitations of traditional case study development, this research utilised GenAI to create novel, fictitious scenarios that incorporated GenAI into engineering practice. The creation of scenarios was undertaken as there are currently limited public case studies of engineering practice where GenAI has been incorporated. This was also a design choice to leverage the capabilities of GenAI to develop equitable case studies across disciplines within hours, and not days. Five case studies were generated using ChatGPT 4o, each tailored to a specific engineering discipline: civil, mechanical, mechatronic, electrical, and agricultural. The decision to use ChatGPT 4o was informed by an initial comparison of its performance against Gemini and Copilot on the task of generating an analytically deep, constructively aligned, and repeatable case study across disciplines. Quality was evaluated based on three criteria: (1) the plausibility and coherence of the case narrative, (2) the extent to which the output demonstrated constructive alignment between outcomes, activities, and assessment, and (3) the model's ability to generate discipline-equitable versions of the case without major prompt modification. Copilot demonstrated strong repetition but lacked depth and nuance in the generated cases. Gemini produced greater depth but was inconsistent across attempts and did not reliably support questions that could be answered equitably across the five disciplines. ChatGPT-4o produced the most coherent and transferable case, maintained alignment with the pedagogical framework, and generated outputs that required minimal additional prompting, leading to its selection for this study. To ensure the case studies were constructively aligned with the course, the generation prompt included the course learning outcomes, the four assessment questions, and the marking scheme. The prompt can be seen below.

“File 1 contains the 7 course learning outcomes for the unit. File 2 contains the 4 questions we are asking the students to answer. File 3 contains the marking scheme/guide for the 4 questions in File 2. Based on these files create an incident that incorporates the use of generative AI into engineering practice in the agricultural engineering domain. The incident will be required to have a fatality. Please make this indecent 200 words minimum. It should allow students to sufficiently answer all the questions in File 2 and can be assessed by the marking Scheme in File 3. The case study will be required to allow for a demonstration of all 7 of the course learning outcomes in File 1. I do not need anything generated other than the incident description which needs to be 350 words minimum.”

While the same prompt was used for each discipline, minor variations in formatting and content were observed in the generated outputs. The authors reviewed and edited each case study to ensure consistency, confirming that each followed the structure of the example below and that all required subtopics were present. External moderation was then undertaken by engineering management academics and five separate discipline experts. In addition to content validation, a full assessment of the constructive alignment between the case study, intended outcomes and marking criteria was undertaken. An example of a case study can be seen below.

Context

On a typical day in a bustling urban construction project, an electrical engineering team was finalising the installation of a complex electrical grid system for a high-rise building. The project, led by a renowned engineering firm, heavily integrated Generative AI (GenAI) in its design and operational procedures to enhance efficiency, predict potential faults, and streamline workflow. The GenAI was employed to optimise the electrical load distribution, foresee maintenance needs, and provide real-time data analytics to prevent any technical failures. However, during the critical phase of the electrical grid commissioning, a catastrophic failure occurred. A transformer exploded, causing a severe electrical fire that rapidly spread throughout the building's lower floors. The incident resulted in the tragic death of an on-site engineer who was performing final inspections. The GenAI system had failed to predict the overload condition that led to the explosion, raising immediate concerns about its reliability and the extent of its role in engineering decision-making.

Legal and ethical implications

The investigation revealed that the GenAI had misinterpreted data due to an unforeseen anomaly in the input parameters, which went undetected by the engineers relying on the AI's recommendations. This raised significant legal questions about liability and responsibility. Under common law, the parties involved—namely the building contractor, the project manager, and the engineer—faced potential criminal negligence charges. The use of GenAI complicated the legal landscape, as it introduced new variables into the determination of fault and liability.

Technical and professional responsibility

This incident highlighted the critical importance of adhering to professional codes of ethics and conduct. Engineers must ensure the safety and reliability of their designs and be vigilant about the limitations of emerging technologies like GenAI. The public trust in engineering professionals relies heavily on their commitment to these ethical standards, especially with the increasing integration of AI in engineering practices.

Risk management and safety concerns

The failure underscored the inherent risks in any occupational environment, emphasising that absolute safety is unattainable. The perceived infallibility of GenAI systems can lead to over-reliance and complacency, posing significant risks. A reassessment of risk management practices is crucial, considering the additional layer of complexity introduced by GenAI. Safety protocols must evolve to incorporate these technologies, ensuring that human oversight remains paramount.

Resolution and future directions

To address the legal disputes arising from this incident, arbitration or mediation may be preferred to a prolonged court battle, offering a more efficient resolution to technical disputes. Furthermore, this case calls for a thorough review of GenAI applications in engineering, advocating for stricter regulations and more comprehensive testing before deployment in critical infrastructure projects.

Data analysis

A deductive qualitative analysis approach was used to analyse the student responses. The analytical framework consisted of a 32-item catalogue of implications of GenAI use, previously developed and published by Quince and Nikolic. 7 The aim of the framework was to provide a holistic, practice-oriented taxonomy of the kinds of considerations that users must weigh when deciding whether, when, how, and how much GenAI should be used. The 32 implications are not organised hierarchically; rather, each item represents an independent consideration that may arise in different combinations depending on context.

In the previous study, 7 the 32-implication framework was used as an analytical tool to examine how students recognised the social, economic and environmental implications of GenAI. Student submissions were analysed by identifying which of these implications appeared in their work. The framework, therefore, functioned as a coding guide that enabled the researchers to determine the extent to which students could identify implications and to compare their awareness across different parts of the assessment.

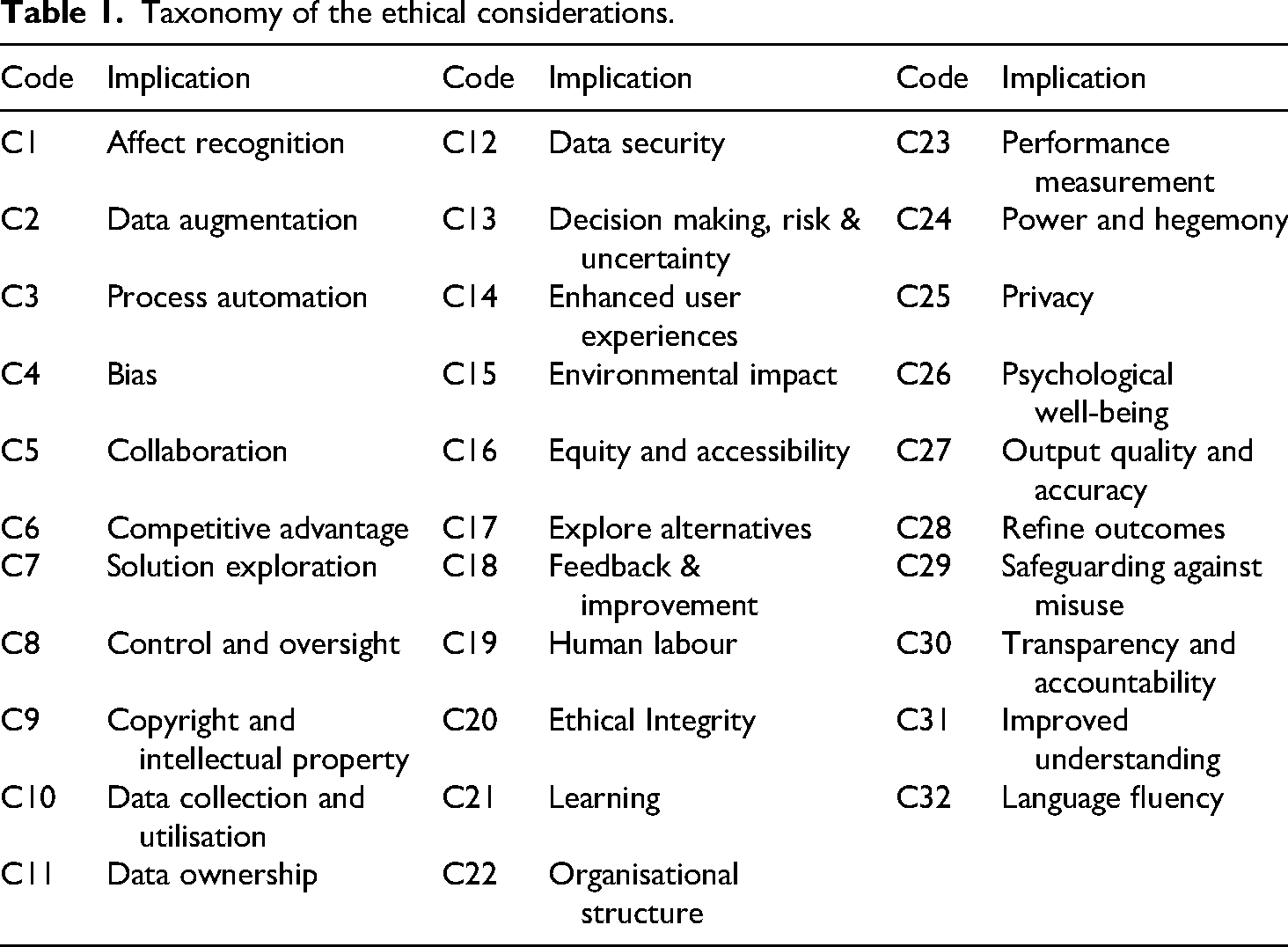

As the taxonomy was originally developed in 2023, it was reviewed prior to this study to ensure alignment with emerging concerns relating to liability, safety, professional conduct and ethics. To confirm its completeness, the 32-item catalogue was compared with four systematic literature reviews on the ethical implications of GenAI.2,25,28,44 No additional categories were identified, and the validated framework used in this study is presented in Table 1.

Table 1. Taxonomy of the ethical considerations.

Each student's response was mapped to the 32-item taxonomy, while key phrases were extracted to provide a qualitative assessment of the students’ work. Each of the four responses was mapped separately for each student, which yielded a total of 192 individual responses (48 students × 4 responses). The coded data were then aggregated to calculate the frequency of each implication. This allowed us to identify the most prevalent themes overall, examine patterns within each engineering discipline, evaluate how the implications related to the specific questions students were answering, and compare these findings with the results of the previous study. 7
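The aggregation step described above can be illustrated with a minimal sketch. It assumes each coded response is stored as a set of taxonomy codes keyed by student and question; the student identifiers, the code assignments, and the function names `unique_codes_per_student` and `prevalence` are hypothetical and are not taken from the study's data or tooling.

```python
from collections import Counter

# Hypothetical coded data: each (student, question) response maps to the set of
# taxonomy codes a coder assigned to it. IDs and codes are illustrative only.
coded_responses = {
    ("S01", "liability"): {"C8", "C13", "C30"},
    ("S01", "safety"): {"C8", "C27"},
    ("S02", "liability"): {"C13", "C30"},
    ("S02", "ethics"): {"C8", "C13", "C20"},
}


def unique_codes_per_student(responses):
    """Union the codes across a student's responses so each code counts once per student."""
    per_student = {}
    for (student, _question), codes in responses.items():
        per_student.setdefault(student, set()).update(codes)
    return per_student


def prevalence(responses):
    """Return {code: percentage of students who raised it at least once}."""
    per_student = unique_codes_per_student(responses)
    counts = Counter(code for codes in per_student.values() for code in codes)
    n_students = len(per_student)
    return {code: round(100 * n / n_students, 2) for code, n in counts.items()}


result = prevalence(coded_responses)
```

Counting each code once per student, rather than once per response, is one plausible reading of the "unique count for each implication per student" used for the overall prevalence figures.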

The analysis was structured to investigate student perceptions at four distinct levels:

Overall Thematic Prevalence: Each student's data was assessed to identify which of the 32 themes were discussed across all four of their responses. This provided a unique count for each implication per student, revealing the most and least prominent themes in the cohort's collective thinking regarding GenAI in engineering practice.

Comparison of Thematic Mapping: The second analysis compared the unique identification of ethical implications with the previous study by Quince and Nikolic. 7

Disciplinary Variations: The third level of analysis disaggregates the data by the students’ engineering discipline based on the selected case study (civil, electrical, mechanical, mechatronic, and agricultural). This comparative analysis reveals how perceptions and identified risks vary between cohorts, highlighting potential discipline-specific concerns.

Question-Specific Implications: The fourth level examines the prevalence of themes within each of the four assessment questions: liability, safety, professional conduct, and ethics. This provides a granular understanding of which specific implications students associate with each distinct management function.
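The disaggregation by discipline and by assessment question can be sketched in the same way. The example below is illustrative only: the record layout, the sample records, and the helper names `students_per_group` and `group_counts` are assumptions introduced here, not the study's actual analysis pipeline.

```python
from collections import defaultdict

# Hypothetical records: (student, discipline, question, codes assigned).
records = [
    ("S01", "Civil", "liability", {"C8", "C30"}),
    ("S01", "Civil", "safety", {"C8", "C13"}),
    ("S02", "Electrical", "liability", {"C30"}),
    ("S02", "Electrical", "safety", {"C13", "C10"}),
    ("S03", "Civil", "safety", {"C13"}),
]


def students_per_group(records, key_index):
    """Map each group value (discipline or question) to {code: set of students}."""
    grouped = defaultdict(lambda: defaultdict(set))
    for record in records:
        student, codes = record[0], record[3]
        group = record[key_index]
        for code in codes:
            grouped[group][code].add(student)
    return grouped


def group_counts(records, key_index):
    """Count the unique students who raised each code within each group."""
    grouped = students_per_group(records, key_index)
    return {
        group: {code: len(students) for code, students in codes.items()}
        for group, codes in grouped.items()
    }


by_discipline = group_counts(records, key_index=1)  # disciplinary variations
by_question = group_counts(records, key_index=2)    # question-specific implications
```

Because the disciplines differ considerably in size, such counts would normally be read alongside the number of students in each group rather than compared directly.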

Limitations

The findings of this study should be interpreted in light of several limitations. First, the research was conducted with a cohort of 48 students from a single engineering management course at one Australian university. As such, the generalisability of these findings to broader student populations or to practising engineers may be limited, as different educational contexts and levels of professional experience could yield different perceptions. Furthermore, the small sample size and the categorical structure of the data mean that inferential statistical analysis was not suitable. Second, the study utilised fictitious case studies generated by a single GenAI model, ChatGPT 4o. While designed for realism and consistency, these scenarios may not elicit the same depth or type of response as an analysis of a real-world engineering failure. The specific framing and nuances of the GenAI-generated text could have also influenced the direction of student thinking.

Results

Overall thematic prevalence

The frequency analysis revealed a core set of ethical considerations that were easily recognised among the student cohort. Two themes were identified by every participant: Control and oversight (C8) and Decision making, risk & uncertainty (C13). Students consistently asserted that ultimate human responsibility is non-negotiable. One student stated, “The use of GenAI does not absolve the engineer of their professional responsibilities”, while another emphasised the practical need for “human controlled monitoring and fail safes to reduce the risk of catastrophe when there are errors within the AI”.

This was closely followed by Output quality and accuracy (C27) and Transparency and accountability (C30), each identified by 97.92% of students. The responses indicated a strong understanding that GenAI outputs require rigorous validation. As one student noted, “engineers that using GenAI must ensure its outputs are carefully verified and meet safety standards”. Another elaborated on this point, stating, “The engineer's task when using Generative AI will be to understand and validate the Generative AI's output, ensuring that the highest level of ethics and professionalism is maintained”. The need for transparency was linked directly to public trust, with one response highlighting that a lack of transparency “can lead to issued of trust and accountability”. Other highly prevalent themes included Process automation (C3) at 83.33%, Psychological well being (C26) at 75.00%, and both Ethical integrity (C20) and Organisational structure (C22) at 70.83%.
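As a worked check on the percentages above, the following sketch recovers the student counts they imply for a cohort of 48. The counts are inferred from the reported percentages and are not stated in the text; the function names are illustrative.

```python
N_STUDENTS = 48  # cohort size reported in the study

# Percentages as reported in the text, keyed by taxonomy code.
reported = {
    "C27": 97.92,
    "C30": 97.92,
    "C3": 83.33,
    "C26": 75.00,
    "C20": 70.83,
    "C22": 70.83,
}


def implied_count(percentage, n=N_STUDENTS):
    """Recover the whole-number student count implied by a reported percentage."""
    return round(percentage / 100 * n)


def as_percentage(count, n=N_STUDENTS):
    """Convert a student count back to a percentage rounded to two decimals."""
    return round(100 * count / n, 2)


implied = {code: implied_count(pct) for code, pct in reported.items()}
```

Under this assumption, 97.92% corresponds to 47 of 48 students, 83.33% to 40, 75.00% to 36, and 70.83% to 34, and each count converts back to the reported percentage.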

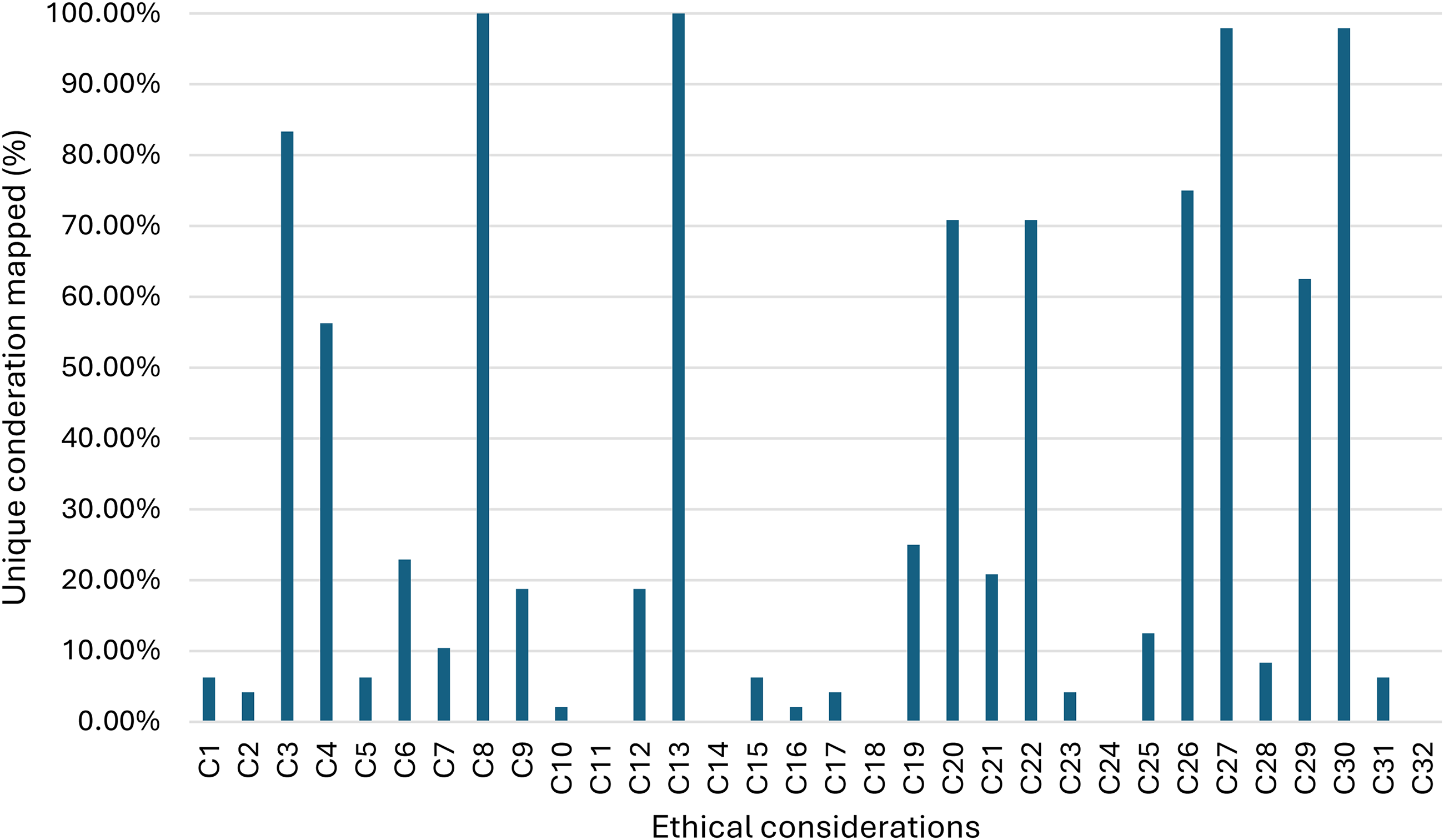

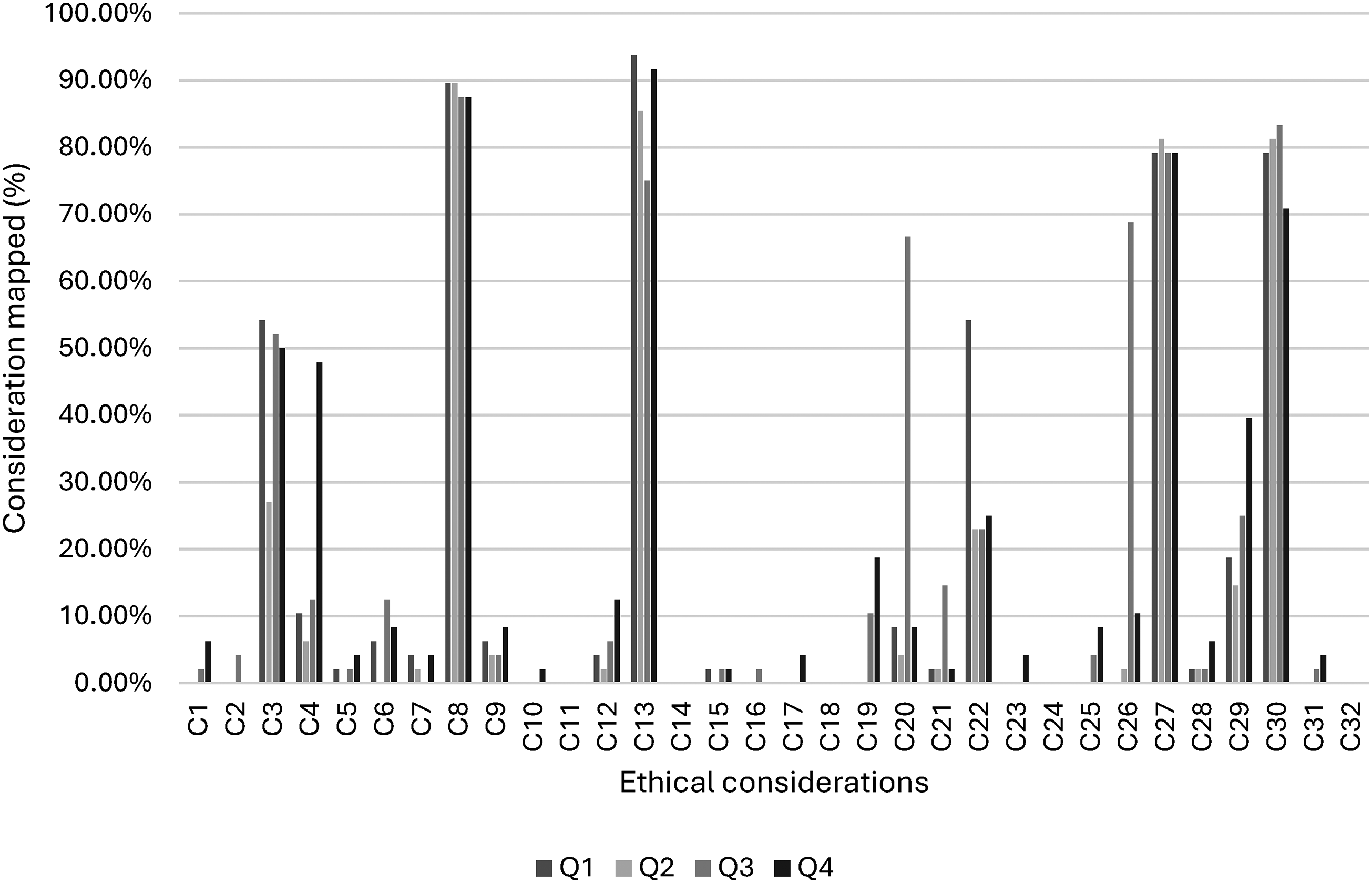

In contrast, several themes were entirely absent from the student responses. Five implications showed a 0.00% frequency: Data ownership (C11), Enhanced user experiences (C14), Feedback & improvement (C18), Power and hegemony (C24), and Language fluency (C32). Other themes were rarely mentioned, including Data collection and utilisation (C10) and Equity and accessibility (C16), each with a frequency of 2.08%. The full frequency distribution is presented in Figure 1.

Figure 1. Unique student identification of ethical considerations.

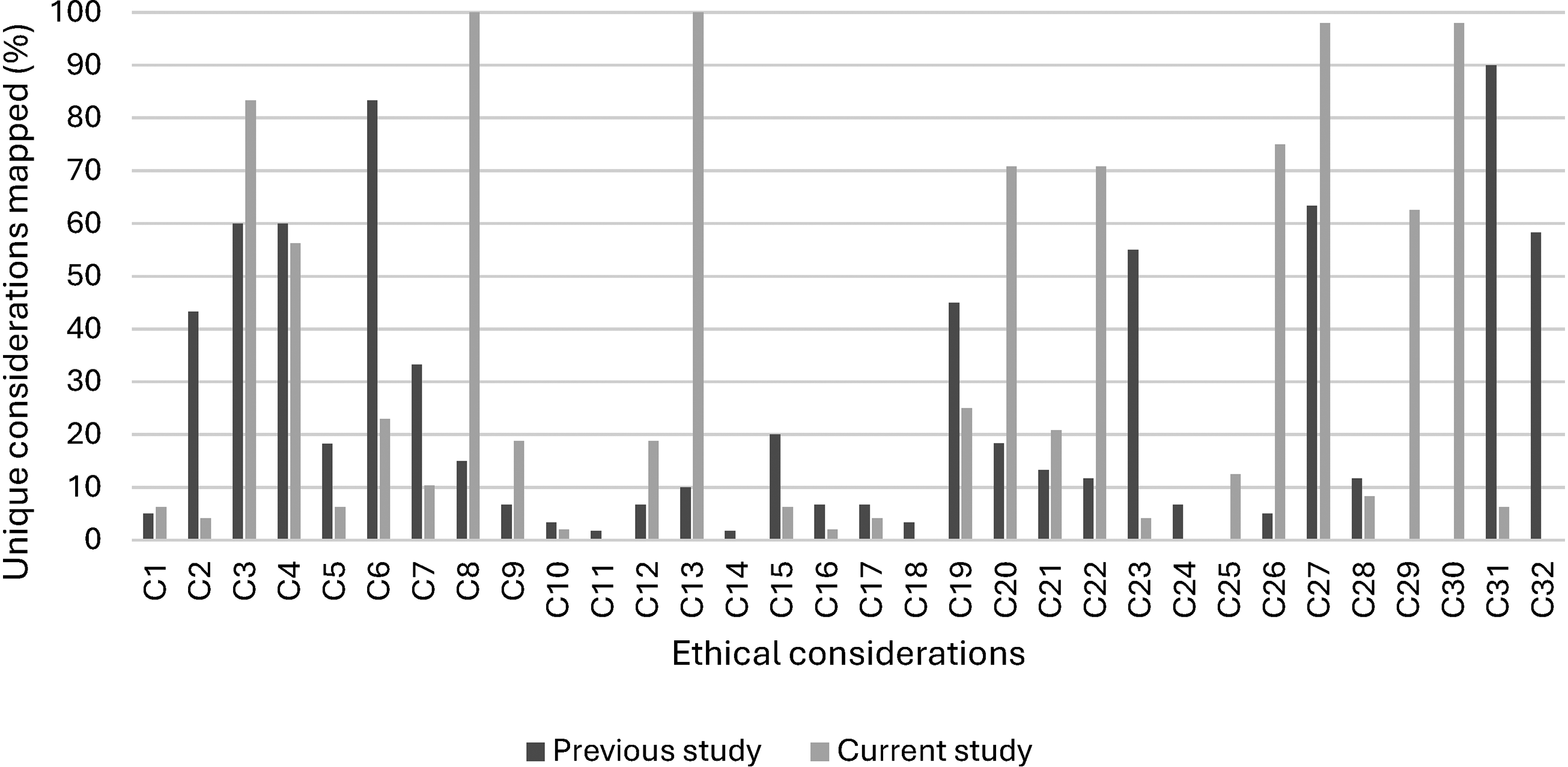

Comparison of thematic mapping

To investigate how assessment framing shapes students’ ethical reasoning, the current study was compared with a previous study on GenAI in engineering (Figure 2). The previous study 7 examined ethical implications through broad social, economic, and environmental lenses, encouraging students to reflect on sustainability, societal responsibility, and economic impact with limited background scaffolding. In contrast, the current study focused on the ethical dimensions of directly integrating GenAI into engineering practice, providing an explicit connection to the implications, drawing attention to professional accountability, reliability, and risks associated with automation.

Figure 2. Comparison of ethical themes identified between previous and current studies.

In the earlier study, where students reflected on GenAI through social, economic, and environmental perspectives, responses clustered strongly around themes such as sustainability, societal responsibility, and economic cost, as shown in higher frequencies for categories C3, C4, C6, C7, C20, C22, C27, and C30. By contrast, in the current study, which examined ethical implications tied directly to the integration of GenAI into engineering practice, a different set of themes emerged more prominently. Categories C8, C13, C19, C26, C28, and C29 displayed much higher frequencies.

Analysis by discipline

When the data is disaggregated by discipline, the analysis reveals both a consistent core of shared concerns and notable variations in the breadth of issues identified. A foundational set of ten themes was identified by students across all five disciplines: Agricultural, Civil, Electrical, Mechanical, and Mechatronic engineering. These universally acknowledged themes included Control and oversight (C8), Decision making, risk & uncertainty (C13), Output quality and accuracy (C27), and Transparency and accountability (C30). This indicates a strong cross-disciplinary consensus on the primary operational and ethical responsibilities of engineers using GenAI. This shared foundation was captured in one student's statement that “Compliance with code of ethics and professional conduct is essential for engineers to maintain public trust, ensure accountability and addressing the challenges posed by the tools like GenAI”.

However, notable differences emerged at the periphery. Students from Mechatronic and Electrical engineering demonstrated the broadest range of considerations. Mechatronic engineering students were the only cohort to identify implications such as Data collection and utilisation (C10) and Equity and accessibility (C16). Similarly, Electrical engineering students were unique in identifying Environmental impact (C15). This broader perspective from students in data-centric disciplines was reflected in comments regarding system vulnerabilities, with one student noting the “risk of cyber crime with generative AI which can effect on breach of customer's information confidentiality”.

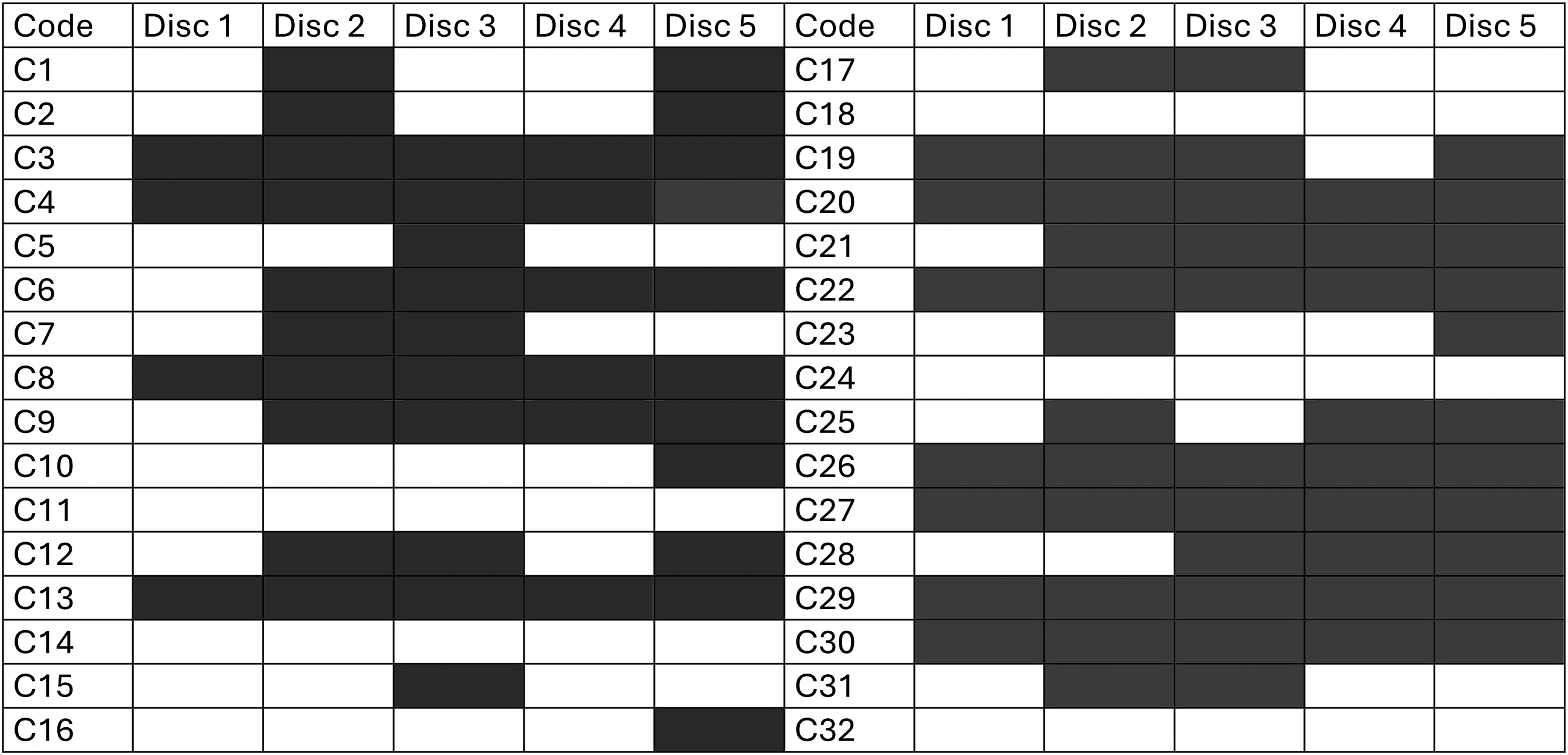

Conversely, some perspectives were notably absent from specific disciplines. For example, the theme of Human labour (C19) was identified by students in all disciplines except for Mechanical engineering. Students from other cohorts raised points such as how automation “create dependency and make employee lazy and less attentive to increase their skills”, a consideration that did not appear in the responses from the mechanical engineering students. The full distribution, showing which themes were identified by at least one student within each discipline, is detailed in Figure 3.

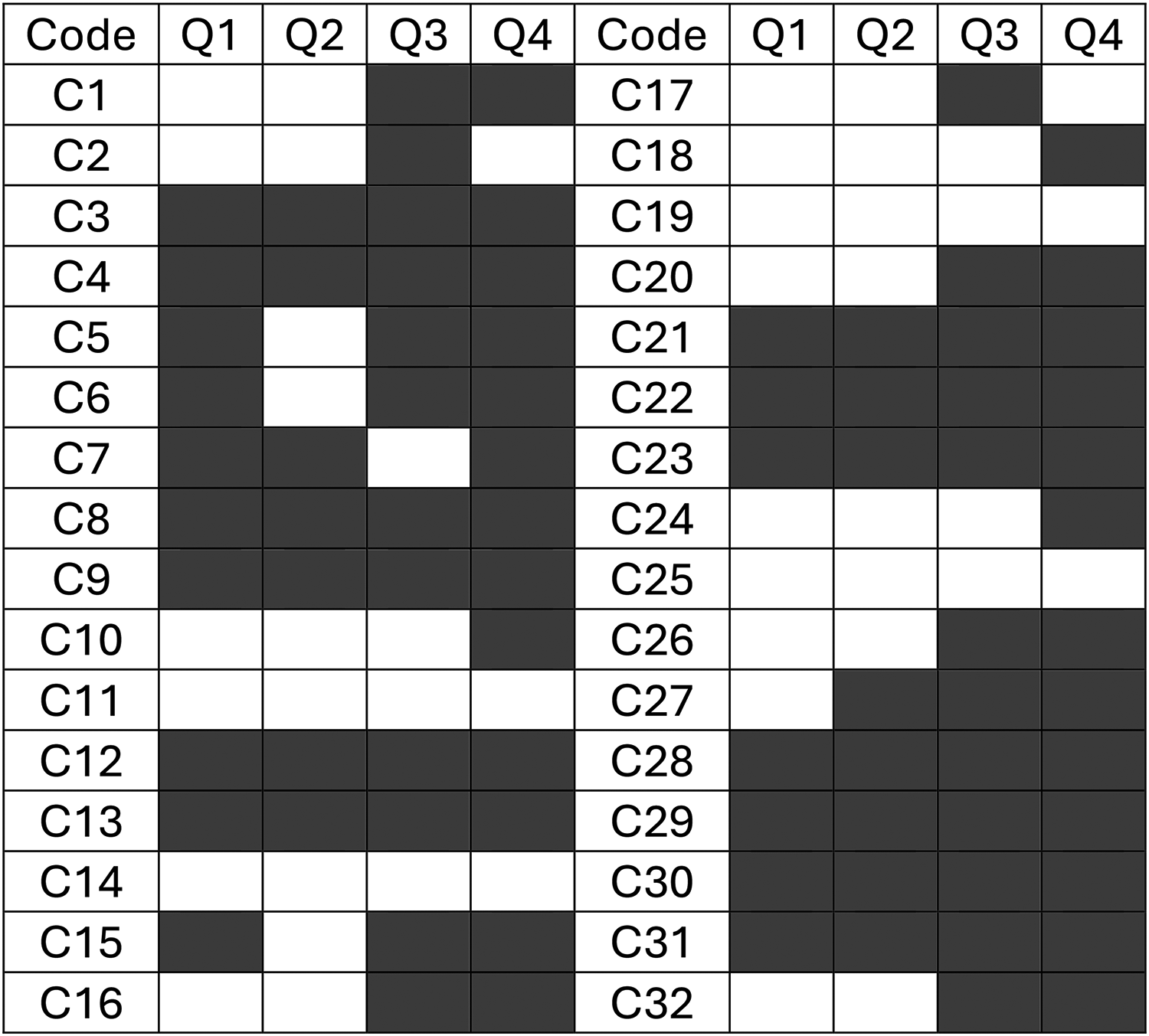

Figure 3. Distribution of themes identified by at least one student within each discipline. Disc 1 is agricultural, disc 2 is civil, disc 3 is electrical, disc 4 is mechanical, disc 5 is mechatronic. Grey cells indicate that the theme was identified.
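The discipline comparison amounts to set operations over a theme-by-discipline presence matrix. The sketch below is illustrative only: it encodes just the themes named in the text above (not the full 32-item distribution), so the shared set it returns contains the four named core themes rather than all ten. The variable and function names are hypothetical.

```python
DISCIPLINES = ["Agricultural", "Civil", "Electrical", "Mechanical", "Mechatronic"]

# Partial data: only themes explicitly named in the text, not the full figure.
themes_by_discipline = {d: {"C8", "C13", "C27", "C30"} for d in DISCIPLINES}
themes_by_discipline["Mechatronic"] |= {"C10", "C16"}  # raised only by Mechatronic
themes_by_discipline["Electrical"] |= {"C15"}          # raised only by Electrical
for d in DISCIPLINES:
    if d != "Mechanical":                              # C19 absent from Mechanical
        themes_by_discipline[d].add("C19")

# Themes identified by at least one student in every discipline.
shared = set.intersection(*themes_by_discipline.values())


def unique_to(discipline):
    """Themes raised in this discipline and in no other."""
    others = set().union(
        *(t for d, t in themes_by_discipline.items() if d != discipline)
    )
    return themes_by_discipline[discipline] - others


def missing_from(theme):
    """Disciplines in which no student raised the given theme."""
    return [d for d in DISCIPLINES if theme not in themes_by_discipline[d]]


print("Shared:", sorted(shared))
print("Unique to Mechatronic:", sorted(unique_to("Mechatronic")))
print("Unique to Electrical:", sorted(unique_to("Electrical")))
print("C19 missing from:", missing_from("C19"))
```

The same pattern could be applied to the by-question comparison by replacing disciplines with Q1 to Q4.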

Analysis by question

The analysis of themes by assessment question reveals that the management function being considered significantly influenced the scope of students’ thinking. While a consistent set of core themes underpinned all four areas, the questions related to ethics and professional conduct (Q3) and safety (Q4) elicited a much wider range of considerations than those focused on liability (Q1 and Q2), as demonstrated by Figure 4. A core group of thirteen themes was identified in response to all four questions. This universal set included themes such as Control and oversight (C8), Decision making, risk & uncertainty (C13), Output quality and accuracy (C27), and Transparency and accountability (C30). This suggests students view these issues as foundational to all aspects of engineering management in a GenAI context.

Figure 4. Influence of assessment question focus on the breadth of themes identified.

The questions on liability (Q1 and Q2) prompted responses that were tightly focused on legal and professional culpability. When considering liability, students concentrated on assigning responsibility for failure, with one stating, “Since the defect did exist when it was designed, the designer is held liable”. This narrow legal focus meant that themes such as Human labour (C19) and Privacy (C25) were not raised in response to either liability question.

In contrast, the question on professional conduct and ethics (Q3) prompted a broader discussion. It was in this context that students raised concerns about Equity and accessibility (C16) and Data augmentation (C2). The centrality of professional standards to this question was evident in student responses, with one noting, “Compliance with code of ethics and professional conduct is essential for engineers to maintain public trust, ensure accountability and addressing the challenges posed by the tools like GenAI.”

Similarly, the question on safety and risk (Q4) elicited the widest range of themes overall. It was the only context where students identified implications for Data collection and utilisation (C10), the need to Explore alternatives (C17), and Performance measurement (C23). Students linked the technical aspects of safety to broader social confidence, with one response articulating this as the public's need for “confidence that sufficient ai systems are in place to prevent dangerous situations from occurring”. The same five themes remained absent across all four questions: Data ownership (C11), Enhanced user experiences (C14), Feedback & improvement (C18), Power and hegemony (C24), and Language fluency (C32). The full distribution of themes by question is detailed in Figure 5.

Figure 5. Distribution of themes identified across assessment questions. Grey cells indicate that the theme was identified.

Discussion

The findings of this study provide a detailed map of how engineering students perceive the integration of GenAI into their future profession. The results indicate that while students have a strong grasp of the immediate operational and technical risks, prioritising themes of control, oversight, and output accuracy, their consideration of broader, systemic implications is less developed. This reinforces the findings of Quince and Nikolic 7 that students need pedagogical guidance to discover and understand the wide spectrum of ethical considerations. Recent GenAI studies in higher education reveal a similar pattern, in which students typically focus first on the obvious, practical risks (such as accuracy, misuse, and oversight) and are less likely to notice broader ethical and social consequences unless teaching draws these out.26,45 Constructivist learning theory helps explain why. In this view, students do not develop a deep ethical understanding just by being exposed to GenAI; they build it through guided, student-active learning that makes hidden impacts visible.46,47 GenAI-focused pedagogy papers likewise argue that scaffolded, discussion-rich and reflective activities are what help students move past immediate technical concerns to examine GenAI's broader professional and societal implications in depth. 48 This distinction between the tangible and the abstract in students’ ethical reasoning is significant. It suggests that while current educational and professional frameworks may effectively instil core principles of engineering diligence and accountability, they may not yet fully equip emerging engineers to grapple with the wider societal and systemic consequences of disruptive technologies like GenAI. Exploring this perceptual gap is crucial for understanding how to better prepare the next generation of engineers for the complex realities of their practice.

The results identify a tension in AI usage. Process automation (C3) was identified by 83.33% of students, signalling that they see GenAI as operationally useful in their workflows (i.e., a support/assist tool). However, both Control and oversight (C8) and Decision making, risk & uncertainty (C13) were identified by 100% of students, and student quotes stressed that GenAI does not remove engineers’ responsibilities and must be monitored. Together, these suggest that students frame GenAI as an aid under human supervision, not a replacement. The tension between benefits and risks is identified and supported in many student studies. For example, a study of university students by Chan and Hu 26 found them to be broadly enthusiastic about integrating GenAI tools like ChatGPT into their studies and future careers, but also concerned about over-reliance on AI and its impact on the value of education, a theme that remains constant in more recent studies. 27

An important insight from the findings is what students did not raise. The complete absence of Data ownership (C11), Power and hegemony (C24), and Feedback and improvement (C18) suggests students are thinking about GenAI mainly at the level of immediate use. This use is characterised by accuracy, misuse, and oversight, rather than by a view of GenAI as a sociotechnical system shaped by data rights, institutional control, and ongoing governance. This pattern is consistent with GenAI studies in higher education showing that students tend to foreground practical and integrity-related risks, while giving less spontaneous attention to structural issues like data provenance, market power, or longer-term societal impacts unless curricula make these visible.49,50 At the same time, research on critical GenAI literacy argues that these broader concerns are learnable through deliberately constructed and reflective pedagogies that focus on data justice, power relations, and the full life cycle of GenAI systems.51,52 Supporting this, some studies find that when students are explicitly prompted to think beyond tool performance, they do articulate wider issues such as privacy, bias, and social consequences. 53

Despite the small sample size, the results suggest that students from different disciplines gravitate towards different ethical implications. For example, civil and electrical students differed on several specific items. The notion that students’ views on GenAI can vary by discipline or other variables, such as demographics, has become apparent in recent literature. For example, a large U.S. university study by Kim et al. 54 surveyed 982 students and 76 faculty and uncovered similar differences. Their study found that student and instructor attitudes were positive overall, but that differences emerged across disciplines and gender: male STEM majors expressed significantly more positive views on GenAI than female students in non-STEM fields. This underscores the importance of considering disciplinary culture. Students in technical fields may be more eager adopters, whereas others show more caution, highlighting a possible risk of using a one-size-fits-all approach, as comfort and enthusiasm can differ by field. At the same time, cross-disciplinary studies are still growing, and our work addressing engineering-specific differences helps fill this gap in the literature.

Industry perceptions suggest that GenAI will play an increasing role in the workforce, and upskilling will be required. 23 Engineering accreditation and national bodies highlight the importance of engineering ethics and responsible practice. As GenAI tools enter engineering workflows, it is imperative that ethical and responsible AI use is adhered to. Daniel and Xuan 24 note that traditional codes of ethics can accommodate AI; for example, the Institution of Chemical Engineers (IChemE) code already emphasises transparency, integrity, and accountability, which align well with responsible AI principles (like explainability and fairness). Our findings on students’ emphasis on transparency of AI processes and accountability for AI-driven decisions echo these points. In practice, this means an engineer using ChatGPT or a design-AI should document how the AI was used, verify its outputs, and remain answerable for the results, just as they would for any tool. Students need to experience and demonstrate ethical practice, and pedagogical frameworks such as the Project-work Artificial Intelligence Integration Framework, which holds ethics at the centre of all interactions, can support this. 55

The consequences of not engaging with such practice can be severe. Several clauses of professional conduct are relevant here, 56 including that engineers must “approve, sign, or seal only work products that have been prepared or reviewed by them or under their responsible charge”, “only take credit for professional work they have personally completed” and “provide attribution for the work of others.” These examples highlight that the uncritical use of AI can breach multiple engineering ethics policies. These clauses align directly with the student responses: every student identified Control & oversight (C8) and Decision making, risk & uncertainty (C13), and nearly all students (97.92%) emphasised Output quality & accuracy (C27) and Transparency & accountability (C30), expecting the engineer (not the model) to own the work product, the outcomes and the validity.

Conclusions

This study provides an evidence-based map of how engineering students conceptualise GenAI in practice. These findings reinforce the importance of a multidisciplinary perspective, as differences across engineering fields, including mechanical engineering, offer valuable insight for developing more coherent and collaborative approaches to responsible GenAI use.

Across all disciplines, students demonstrated a strong focus on human responsibility. The universal emphasis on Control & oversight (C8) and Decision-making, risk & uncertainty (C13), combined with near-unanimous calls for Verification (C27) and Transparency (C30), frames GenAI as an assistive tool that must operate under accountable professional judgment. However, the complete absence of themes like Data ownership (C11), Power & hegemony (C24), and Feedback & improvement (C18) signals significant blind spots regarding systemic, socio-technical, and life-cycle governance concerns.

The assessment's framing also had a discernible impact. Discipline-specific case studies tended to foreground operational reliability and legal accountability, shifting student focus away from the broader social and environmental lenses highlighted in prior work. Furthermore, prompts addressing ethics, professional conduct, and safety fostered richer thematic coverage than those focused on liability, suggesting that thoughtful assessment design can effectively scaffold wider ethical reasoning.

The implications for education and practice are significant. To address these findings, educators should embed oversight by design into curricula, requiring explicit documentation of AI use, validation steps, and accountability lines within engineering assessments and capstone projects. It is also essential to broaden the curriculum with activities that expose students to data governance, equity, power dynamics, and environmental externalities to complement their existing focus on control and accuracy. Instruction can be further enhanced by differentiating by discipline, leveraging the natural proclivities of specific cohorts while establishing core, cross-disciplinary competencies in responsible AI. Finally, assessment strategies should evolve to evaluate process over output, using authentic, scenario-based tasks to assess students’ decision-making rationale, their management of uncertainty, and their engagement with post-deployment feedback loops.

Footnotes

Acknowledgments

The authors acknowledge the use of generative AI tools (ChatGPT-4o) in brainstorming, drafting, and refining sections of this manuscript. All outputs were critically reviewed and edited by the authors to ensure accuracy and integrity.

Declarations

The authors received no financial support for the research, authorship, and/or publication of this article. The authors declare that they have no competing interests. This study received ethics approval from the University of Southern Queensland Human Research Ethics Committee (HREC).

Data availability statement

The data generated and analysed during this study are not publicly available due to ethics approval restrictions.