Abstract

We transformed an introductory mechanical engineering (ME) project course guided by theories of learning and evaluated the impact of this transformation. The course is the first opportunity for students to make design decisions to meet requirements and build something. In this Part I paper we present the development of a new instrument, the “design practices assessment” (DPA), to measure how well the students learned the intended design skills. The assessment instrument is based on the specific design practices and their entailing decisions an engineer makes. It is a valid way to measure the mastery of mechanical engineering design practices and hence can serve as a template for assessment in other engineering design courses. We present the theoretical framework underlying the DPA, then explain the structure and development, followed by the explanation of the scoring system, measures of validity, and results from its use to measure student learning.

Theoretical framework

Traditional metrics, such as course grades or self-efficacy surveys, when used in project design courses, often fail to capture the nuanced competencies required in more authentic project-based design learning environments. 1–3 To effectively measure a student's development in project-based courses, one must capture not only the acquisition of knowledge but also the application of skills in real-world contexts. 1 Currently, educators and researchers focus on course performance assessments, peer evaluations, self-assessments, development and use of rubrics, and reflective journals or reports as methods to evaluate student learning in project-based settings. 2–4 These tools collectively can provide a holistic view of student learning by encompassing both the cognitive and affective domains. Bailey and Szabo 5 review different assessment approaches, including the strengths and weaknesses of many. Among the common weaknesses, they list a lack of focus on process and a high labor demand. In addition, these various approaches often have the further weaknesses that (1) there is no way to comparatively measure a student's incoming preparation and hence their full development; and (2) they are usually quite course specific, so they do not allow a standardized assessment that compares learning across different courses and institutions and hence the effectiveness of different teaching methods. They also primarily focus on the general process of engineering design, rather than specifically mechanical engineering design, although admittedly there is substantial overlap. Here we present the development and application of a new assessment tool designed to measure the mechanical engineering design skills of individual students taking an introductory project course in mechanical engineering design. Our approach is related to that of Bailey and Szabo, 5 in which they present students with a flawed hypothetical design and have them critique it.
While our assessment shares those general features, it has several differences. Ours is specifically focused on mechanical engineering rather than general engineering design and has gone through multiple tests of validity, avoiding the problems Bailey and Szabo note with the validity of their assessment. Unlike the approach in that work, the design flaws we use are known student errors, compiled from student course work, which likely contributes to the validity of our assessment in accurately capturing student thinking.

The “design practices assessment” (DPA) is based on assessment design as described in the definitive NRC book, “Knowing What Students Know”, 6 and in reference 7.

As stated in reference 6, “Every assessment, regardless of its purpose, rests on three pillars: 1) a model of how students represent knowledge and develop competence in the subject domain, 2) tasks or situations that allow one to observe students’ performance, and 3) an interpretation method for drawing inferences from the performance evidence thus obtained.” Here is how we developed the model of student competence, which is the assessment construct of the DPA. As discussed in detail below, a comprehensive model was formulated starting with the decision processes observed in skilled engineers and stated in the engineering design literature, and then systematically mapping those decisions onto the learning goals for the particular introductory mechanical engineering design course. This mapping guided the creation of tasks that allowed us to evaluate student performance in making the relevant set of design decisions. Developing competency in making these decisions was among the course learning goals. These tasks were also shaped by extended observation of prior student course work and further refined through extensive pilot testing and interviews with instructional teams, past students, and prospective students, as well as other education researchers, following the best practices discussed in references 6 and 7.

The scoring system to evaluate student performance on the DPA was developed by systematically looking at the range of student performance and creating a scoring system that would reflect this range appropriately. The validation process matched that called for in reference 6: “Validation tasks that tap relevant knowledge and cognitive processes, often lacking in assessment development, is another essential aspect of the development effort. Starting with hypotheses about the cognitive demands of a task, a variety of research techniques, such as interviews, having students think aloud as they work problems, and analysis of errors, can be used to analyze the mental processes of examinees during task performance. Conducting such analyses early in the assessment development process can help ensure that assessments do, in fact, measure what they are intended to measure.” There were multiple rounds of expert content validation, pilot testing and interviews with students, observation of student work and errors in design tasks, and finally the comparison of student DPA results with a student artifact produced in the class, the “photo essay”, which was to illustrate their design process.

The construct measured by the DPA was established through several stages of development. As presented by ABET, 8 engineering design is a decision process. “Engineering design involves identifying opportunities, developing requirements, performing analysis and synthesis, generating multiple solutions, evaluating solutions against requirements, considering risks, and making trade-offs.” This definition was our starting point. However, we then made this more detailed and specific by mapping these elements onto the specific set of decisions that Price et al. 9 had identified in their study of the problem-solving process of scientists and engineers. These decisions were mapped onto and suitably reworded to match the objectives of the course being assessed. Those course objectives were determined by the combination of observing the course in operation, examining the instructional materials, and interviewing the instructor. Many of the decisions in this context particularly applied to prototyping of prospective designs, which was an important feature of the course. Most of the decisions given match what is seen in the engineering design literature10,11 although these decisions provide greater specificity. We also included some communication decisions and reflection decisions in our list. Although not explicitly included in the ABET criteria for the engineering design section, these are covered in other sections of the criteria. They are explicit or clearly implied elements elsewhere in the engineering design literature.

The prototyping decisions are largely a subset of those found in reference 12. They are a subset because that reference includes some decisions involved in carrying the prototyping process through to a manufactured product, which are not relevant to our context. The resulting set of decisions was then grouped into four general categories of practices, as shown below, based on the work of Salehi. 13 These categories, while different from those listed in ABET or other engineering design literature, largely overlap those. They have the advantage that they map more naturally onto the time ordering of the assessment tasks.

Good engineering design involves making a number of decisions, with the quality of those decisions reflecting the person's skill. 4 Making those decisions well involves recognizing what factors and information are relevant to the decision and then making good judgements based on that information. Expert engineers are distinguished by their ability to make good decisions in complex and uncertain environments. According to Price et al. 14 experts are adept at recognizing patterns and using mental predictive frameworks to help in their decision-making. Their mental predictive frameworks possess a well-organized knowledge base, allowing them to draw connections between different pieces of information and focus on the most relevant aspects of a problem. Thus, these design problem-solving decisions are at the heart of the design and development of an engineering project, and correspondingly, at the heart of the DPA. The four design practices and their entailing decisions measured by DPA are as follows:

Problem definition & inspiration practice including the design decisions:

What are the requirements of the project? Do your proposed solutions match the requirements and goals? What are the important features and information needed? What are the general “types” of solution which could be implemented? What existing mechanisms for accomplishing similar functions should be examined? What is the feasibility and reliability of each potential solution? What approximations or simplifications are appropriate? How can the problem be simplified to make it easier to solve?

Prototyping practice including the design decisions:

How can the system be broken down into subsystems? For each subsystem prototype, what are the driver questions? For each subsystem, which is/are potential “show-stopper(s)”? How will success be defined for each driver question? Which driver question should be answered first, second, third…? What resources will be used? What resources might be needed? What is the testing plan, plan for what to prototype, how to test the prototype, and plan milestones? The plan should be complete. It will not be perfect and that's OK. When the prototype is built and tested, what is learned?

Integrate or iterate practice including the design decisions:

Do the results make sense based on the designer's intuition and experience? How do the results compare with the driver question metrics for success? Should the designer iterate on the prototype or are components ready to be integrated to build the final product? Is the solution optimized, or are there parts of it that should be changed and improved? What are potential failure modes of the solution?

Representation of findings practice including the design decisions:

Having completed prototyping and initial building stages, based on designer's experience, are there additional things they wish they knew or skills they wish they had to further this design? How are results being organized and communicated? How can the prototyping process be improved? What features of this design might be useful in another situation? Examining the plan for communicating the prototyping effort, is anything missing? Is the communication suitable for the intended audience?

Assessment format and testing

The DPA is based on having the student participant evaluate and provide feedback on a tentative design structured around the decisions listed above. The DPA's premise is that the process of evaluating a design and providing feedback involves making very similar decisions to those involved in actually creating a design. Thus, the quality of evaluation and feedback that a person provides on a tentative design provides a valid measure of their design skills. This premise is supported by the fact that much of the design process involves the engineer evaluating their own or peers’ potential designs, looking for flaws and possible improvements. 8

The reason for this format for the DPA, rather than requiring participants to produce a design of their own, is that this approach improves the standardization and consistency in the analysis, as well as efficiency in assessment implementation. If participants are called upon to produce an original design, the assessment will demand more time to complete. The results will also be extremely varied, with a great variety of good and bad features. It is very difficult to achieve a reliable and consistent scoring method across such variation. That extensive variation also makes the scoring very time consuming. When participants are instead evaluating a given design which has a number of known deficiencies and opportunities for improvement, it is possible to have a scoring rubric that is easy to apply and gives a consistent measure reflecting the quality of the design decisions they are making. The rubric only has to capture which deficiencies they notice out of a consistent set, and whether or not they note the desirable improvements. In the DPA, the participant being assessed is presented with a scenario where they are paired with an imaginary student on a project. This imaginary student asks for feedback from the participant at various stages of their design. The participant is shown a “flawed example” (the imaginary student's work), which contains several mistakes, omissions or shortcomings. The participant is to provide feedback on this example. The first questions are open response and ask the participant to provide feedback to their imaginary partner, listing the shortcomings they notice, including mistakes, missing items or desired improvements. Following those open-response questions, on separate subsequent survey pages, they are given a set of Likert-scale questions that ask them to rate their level of satisfaction on the topic of each of the shortcomings embedded into the flawed example.
The various shortcomings contained in the flawed example form the basis of the scoring rubric. The participants cannot go back and modify their open-response answers after seeing the Likert questions.

This dual question format allows the assessment to distinguish between three levels of participant skill: (1) those who did not need prompting to identify a shortcoming, (2) those who could identify a shortcoming once prompted to think about the particular aspect of the design, and (3) those participants who, even when directly prompted, did not identify a shortcoming.
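The three levels can be written as a small classification rule. The sketch below is illustrative only and is not the DPA scoring itself: the names `SkillLevel` and `classify`, the integer coding of Likert responses, and the `tolerance` cutoff used to decide whether a prompted rating counts as recognizing the shortcoming are all assumptions introduced here.

```python
from enum import Enum


class SkillLevel(Enum):
    UNPROMPTED = 1      # noted the shortcoming in the open response
    PROMPTED = 2        # recognized it only in the Likert question
    NOT_IDENTIFIED = 3  # did not recognize it even when prompted


def classify(mentioned_in_text: bool, likert: int, desired: int,
             tolerance: int = 1) -> SkillLevel:
    """Classify one participant's handling of one embedded shortcoming.

    `tolerance` is a hypothetical cutoff: a Likert rating within this
    distance of the desired rating counts as recognizing the shortcoming.
    """
    if mentioned_in_text:
        return SkillLevel.UNPROMPTED
    if abs(likert - desired) <= tolerance:
        return SkillLevel.PROMPTED
    return SkillLevel.NOT_IDENTIFIED
```

The order of the checks mirrors the survey flow: the open response is examined first because participants cannot revise it after seeing the Likert questions.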

An important element of the assessment design was to base it on actual student work and common shortcomings in that work. We wanted the example design presented to participants in the assessment to be relatable and at an appropriate level to fully engage the participants within the available time allocation of the assessment. To achieve that we analyzed the actual student course deliverables from prior offerings of the introductory design course and collected the most common shortcomings. We created the initial version of the assessment using a combination of pictures, drawings and written reflections from past students. The assessment flawed example was the aggregate of the work of many past students.

One limitation to this assessment design is that it is quite limited in its measure of creativity. However, measurements of engineering creativity are inherently difficult. There is some measure of creativity in the open-ended question asking what the hypothetical partner could have done better, as this provides students with the opportunity to offer creative solutions beyond those which are given to them to evaluate. However, we admit that this does not extensively probe student creativity.

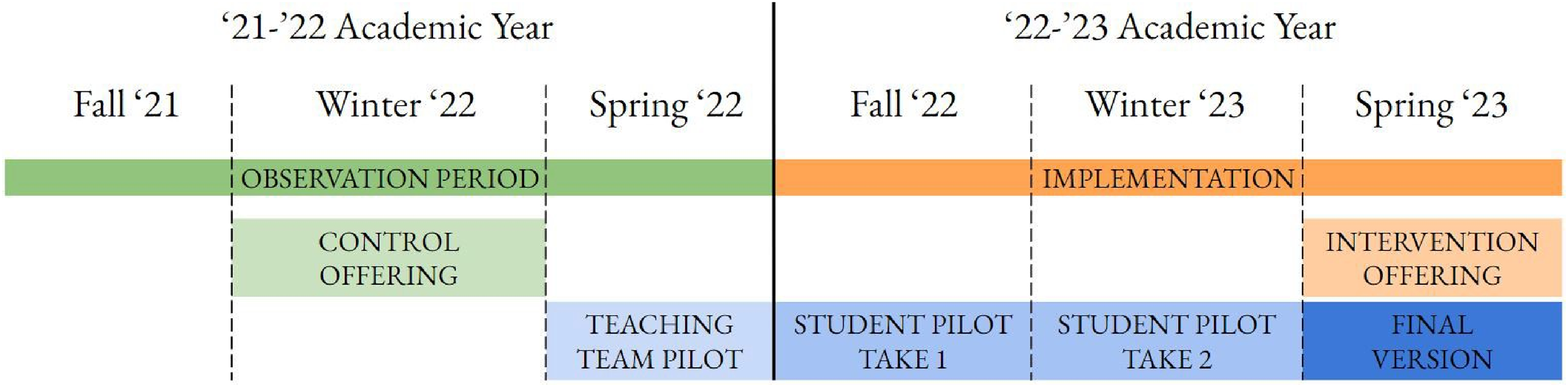

To develop the assessment, we followed several steps as shown in Figure 1. Steps (1), (2), and (3) are discussed above. We (1) observed an introductory mechanical design course taken by all mechanical engineering and product design undergraduates at Stanford University and collected student artifacts produced in the course; (2) identified the learning outcomes the instructors had for the course, both implicit and explicit, and mapped them to the previously identified practices of engineering design; (3) created the assessment based on the targeted engineering design practices; and (4) pilot tested and refined the DPA with course instructors, teaching assistants, and then students.

Figure 1. Timeline of the development of the course design in which assessment was used.

Development cycles

At each stage of testing, the assessment was refined on the basis of the results, including revising both the content/wording and the methods to administer and incentivize students to engage fully with the assessment. Think-aloud interviews and pilot testing followed the procedures described in reference 7. The same assessment was given as both a pre-course and post-course assessment. While students generally know little at the pre-course administration, they do have some ideas about engineering design and so are not completely ignorant, and there was a substantial range of incoming skills across the class, as shown below.

We believe that there is little impact of having the same design example used in the pre- and post-course assessments. Our previous experience with pre- and post-course assessments has shown that by the end of a course students barely even remember taking a pre-course assessment that did not count towards their grade. Also, in this course students learn a great deal that is relevant to the DPA, as shown below. That learning will dominate their responses compared to any thinking they may carry over from the pre-course assessment.

Pilot testing with the course teaching team

The first testing of the assessment happened prior to the Spring of the 2021–22 academic year. First, the assessment was given to the past instructors of the course (4 in total) who reviewed and commented on it. The assessment was then pilot tested by having it completed by the teaching assistants from the Product Realization Lab at Stanford University (17 in total), most of whom had taught in introductory mechanical design courses. During a follow up meeting, we collected their comments and suggestions regarding the assessment based on their teaching experiences. This provided “expert validity”, as these course experts agreed that the assessment questions accurately probed the skills of interest.

Pilot testing with students – round 1

This pilot testing of the assessment had students in the Introduction to ME course complete it twice, first as a pre-course assessment (60 in total) and second as a post-course assessment (64 in total) in the Spring 2022 offering of the course. Student responses were examined to see whether students were interpreting and hence answering questions as intended, and whether the assessment was probing the full range of student competencies. This was followed by think-aloud interviews with five students, asking them for their interpretation of the questions and reasons for their answers. While this showed that the topics targeted were appropriate and common student shortcomings were properly identified, some issues were revealed. First, some of the vocabulary used was found to be ambiguous and so was clarified. Second, it was discovered that the level of student engagement changed dramatically between the pre and post versions. From the extent of the student answers and measure of time spent, it was clear that students took much more care when answering the questions on the pre-course version than they did on the post-course version. The post-course assessment was given as a complete/incomplete assignment for a small amount of credit done after the final project was turned in, so the course was essentially completed. Based on this, we developed a different way to administer the post-test to improve student engagement.

Pilot testing with students – round 2

The second round of pilot testing with students was carried out with students in the same course in the winter 2023 quarter offering. The pre-course assessment (73 students) administration remained the same, but the post-course assessment was administered during Zoom meeting sessions with course teaching assistants present. 57 students took part. Each meeting was composed of four students and the teaching assistant, lasted for 20 min, and happened a week before the final project was due. The student responses were evaluated as in the previous pilot testing. Based on measures of time spent on each question and overall quality of the responses, the level of engagement was satisfactory, and this showed that 20 min was a suitable amount of time to complete the DPA. This does emphasize that for a standard assessment to be valid, how it is administered is important. Following this administration of the assessment, about 40 students were interviewed on their interpretation of the questions, including the drawings shown, and their thinking behind their answers. These served as validation interviews, which references 6 and 7 stress are an important and often neglected step in assessment development. This led to further small refinements in the language.

Final version

The final version of the assessment produced after the testing and refinement process is available in Appendix G. It is divided into three sections, all framed around one general prompt:

Imagine you have been paired with a student from your lab to respond to a design challenge. The challenge is as follows:

“You must build a desktop M&M dispenser for your lab's snack area.

The dispenser must (1) be able to hold a bag's worth of M&Ms, and the releasing mechanism must (2) be spring loaded, and (3) use rotary motion to release a single M&M.”

This prompt was followed by a set of questions, organized into three sections, each centered around particular mechanical design problem-solving Practices:

First section: Problem definition and benchmarking; Second section: Prototyping;

Third section: Representation & Iterate or integrate. This third section combined the two practices identified above to reduce the instrument length. The sections are described in detail below.

Problem definition and benchmarking

The first section is labelled “Brainstorming phase” as the meaning of this label was clearer to students than the terms “Problem Definition and Benchmarking”. The first question prompt reads:

After your first meeting, you agree to provide each other with complete documentation of your design process and the rationale behind the choices you made. The purpose of this documentation is to fully describe the reasoning behind choosing a mechanism and design which you will prototype together. You receive the following document.

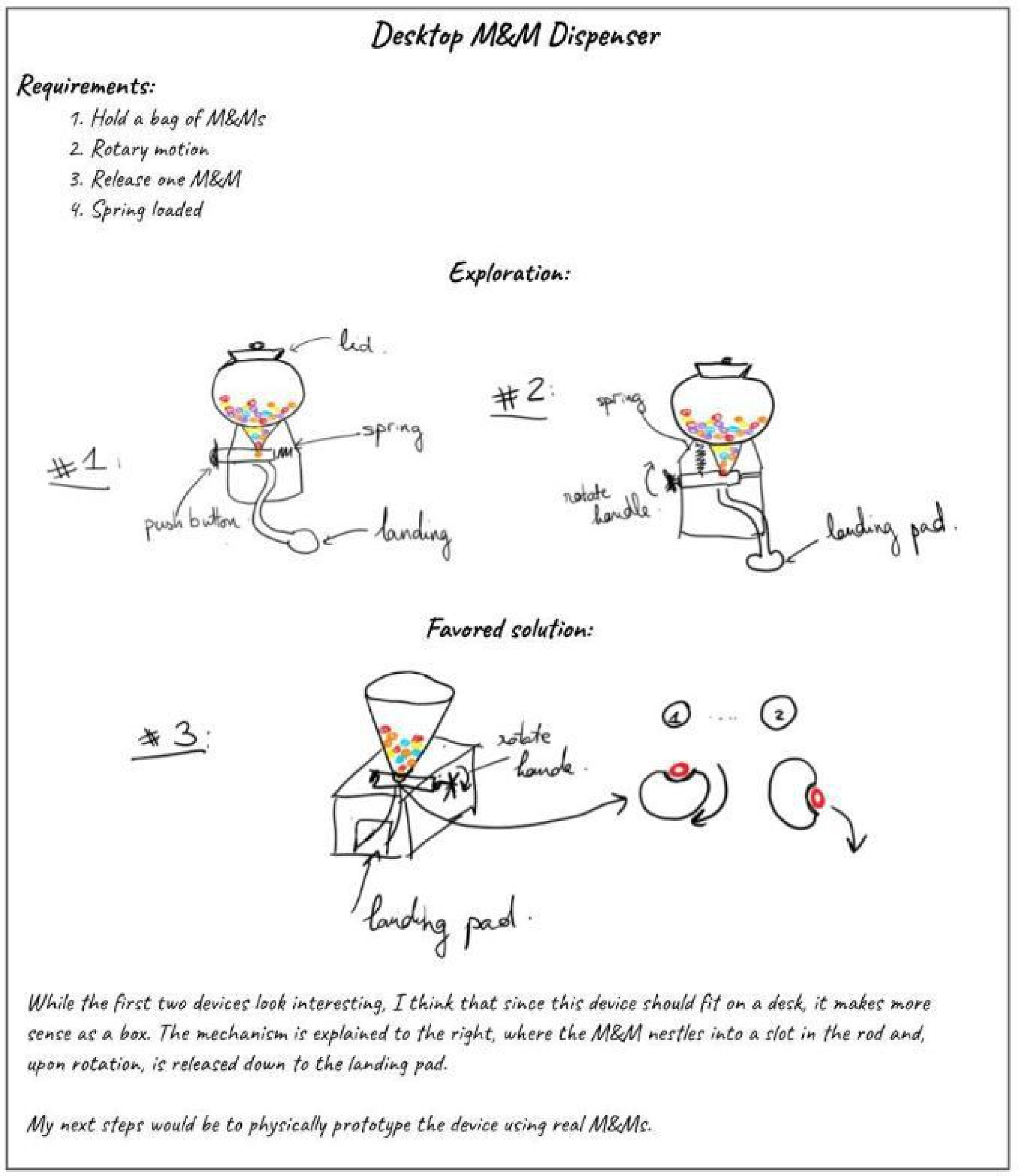

The document that follows, as shown in Figure 2, contains several common shortcomings:

The imaginary student merely copies down the requirements of the project without exploring them further. The requirements of the project are not fully met by the proposed solutions. The exploration of potential solutions is shallow and inspired by a narrow set of existing solutions. The justification for selecting the favored solution does not make sense. There is no organization or ranking of specific criteria which make up the solutions as they relate to the requirements. The solutions do not break down the full device into interacting subsystems. While there is a little further explanation provided with the favored solution, it does not provide enough information to flag potential pitfalls or issues.

Figure 2. Problem definition and benchmarking section flawed example: Deliverable provided to the participant for analysis.

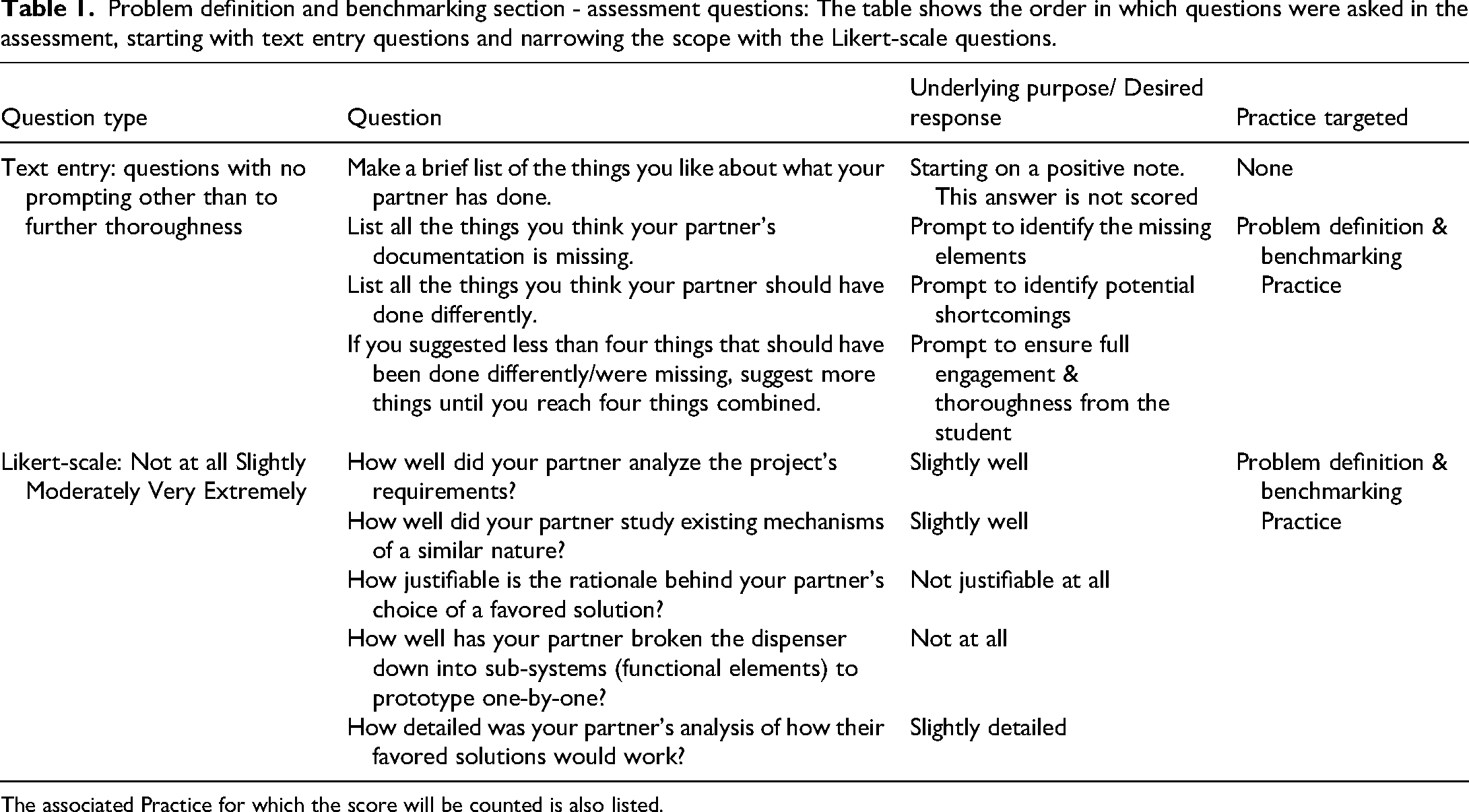

The questions for this portion of the assessment are derived from the above common shortcomings and are listed below (Table 1).

Table 1. Problem definition and benchmarking section - assessment questions: The table shows the order in which questions were asked in the assessment, starting with text entry questions and narrowing the scope with the Likert-scale questions.

The associated Practice for which the score will be counted is also listed.

Prototyping section

The second section of the assessment is labelled “Prototype planning”. The prompt reads as follows:

After some fruitful rapid prototyping, you and your partner have decided on a design inspired by an existing gumball machine. You’ve split the work such that your partner will prototype the dispensing subsystem and you will prototype the funnel, exit chute, and housing.

You’ve agreed on a common interface for attaching the funnel and exit paths.

Your partner sends you their prototyping plan and asks for your feedback. They specifically ask that you be as detailed as you can be in your critique.

The purpose of this plan is for them to think through and get as much done as possible ahead of going into the lab to laser cut, 3D print, and assemble the subsystem prototype

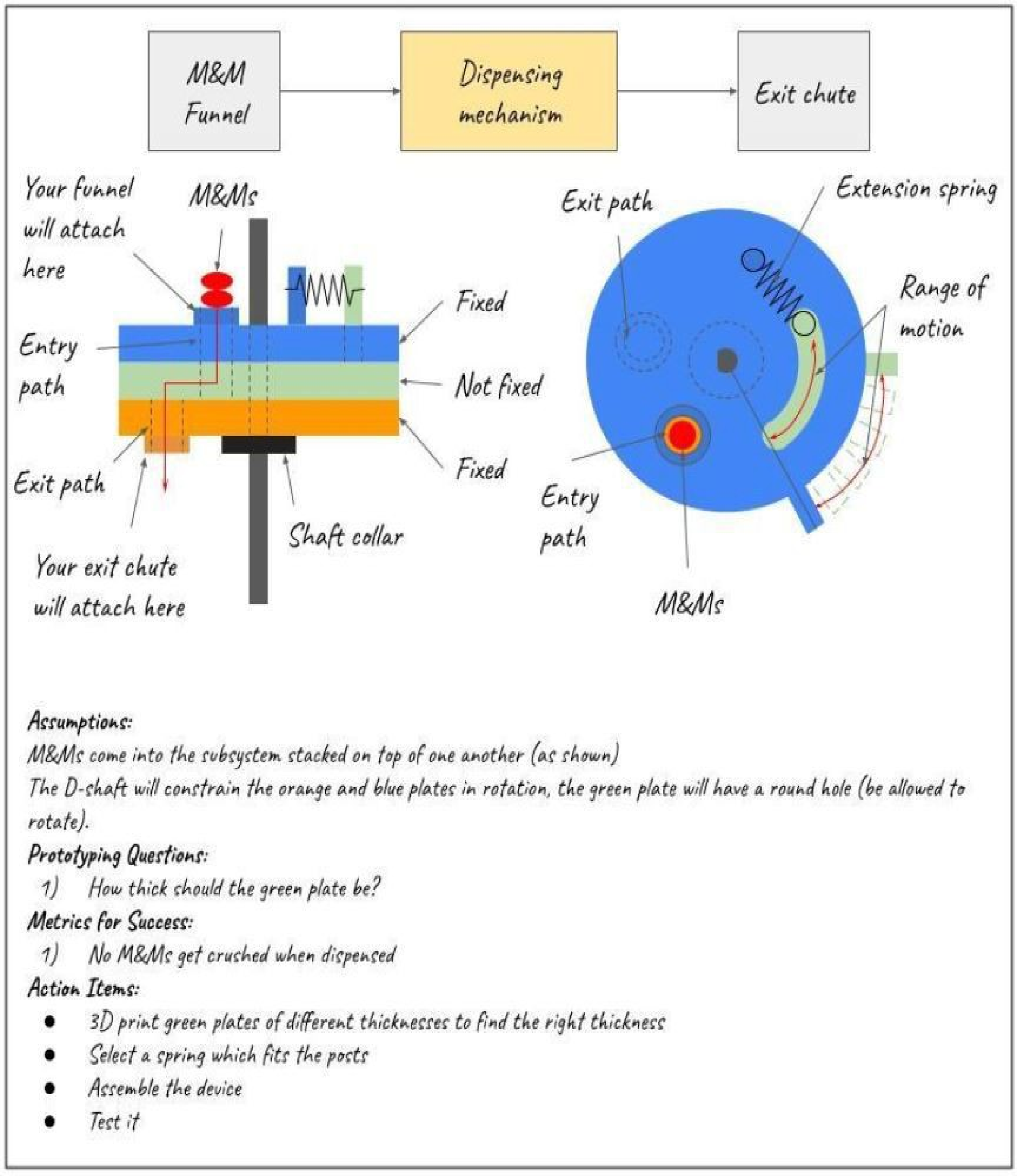

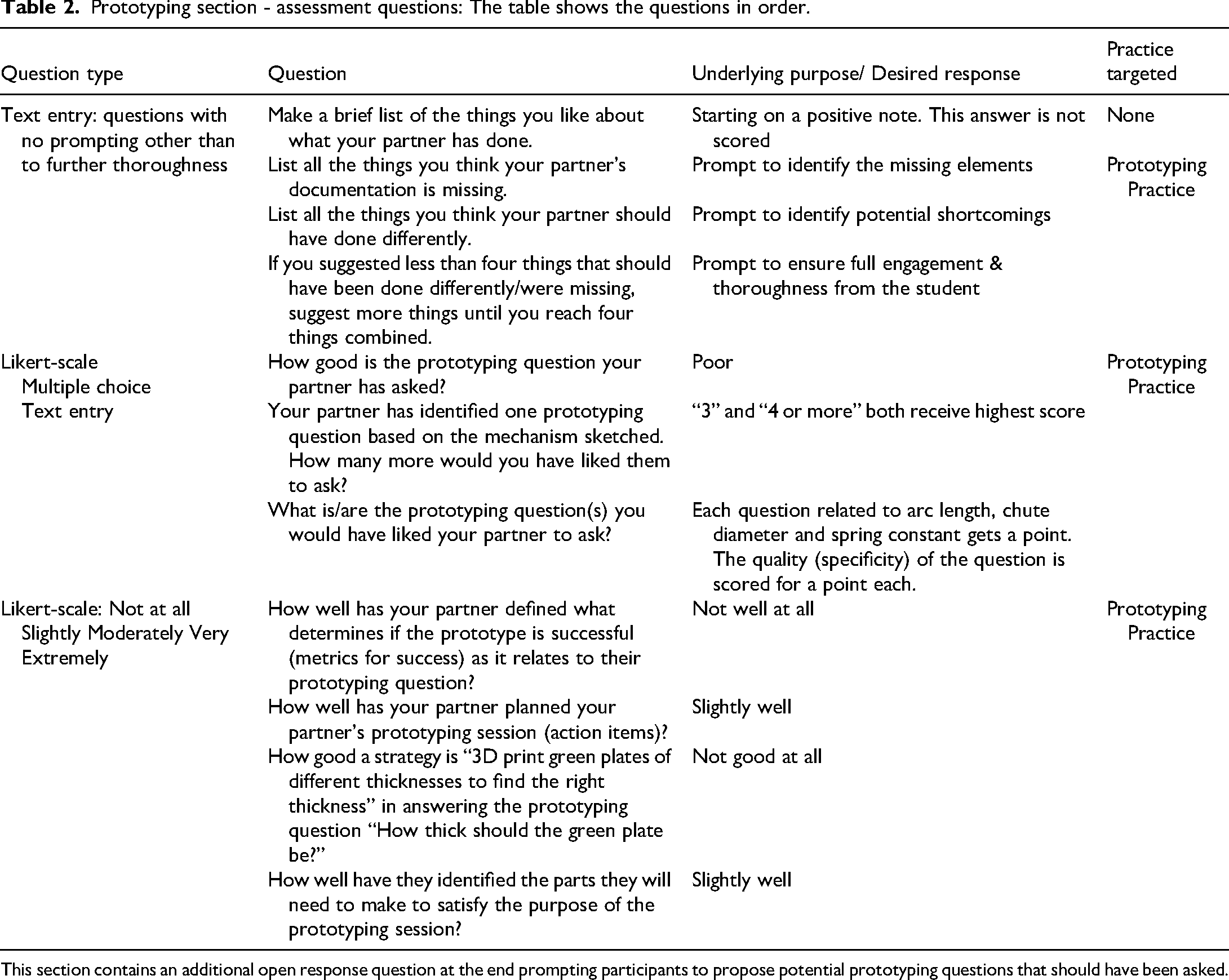

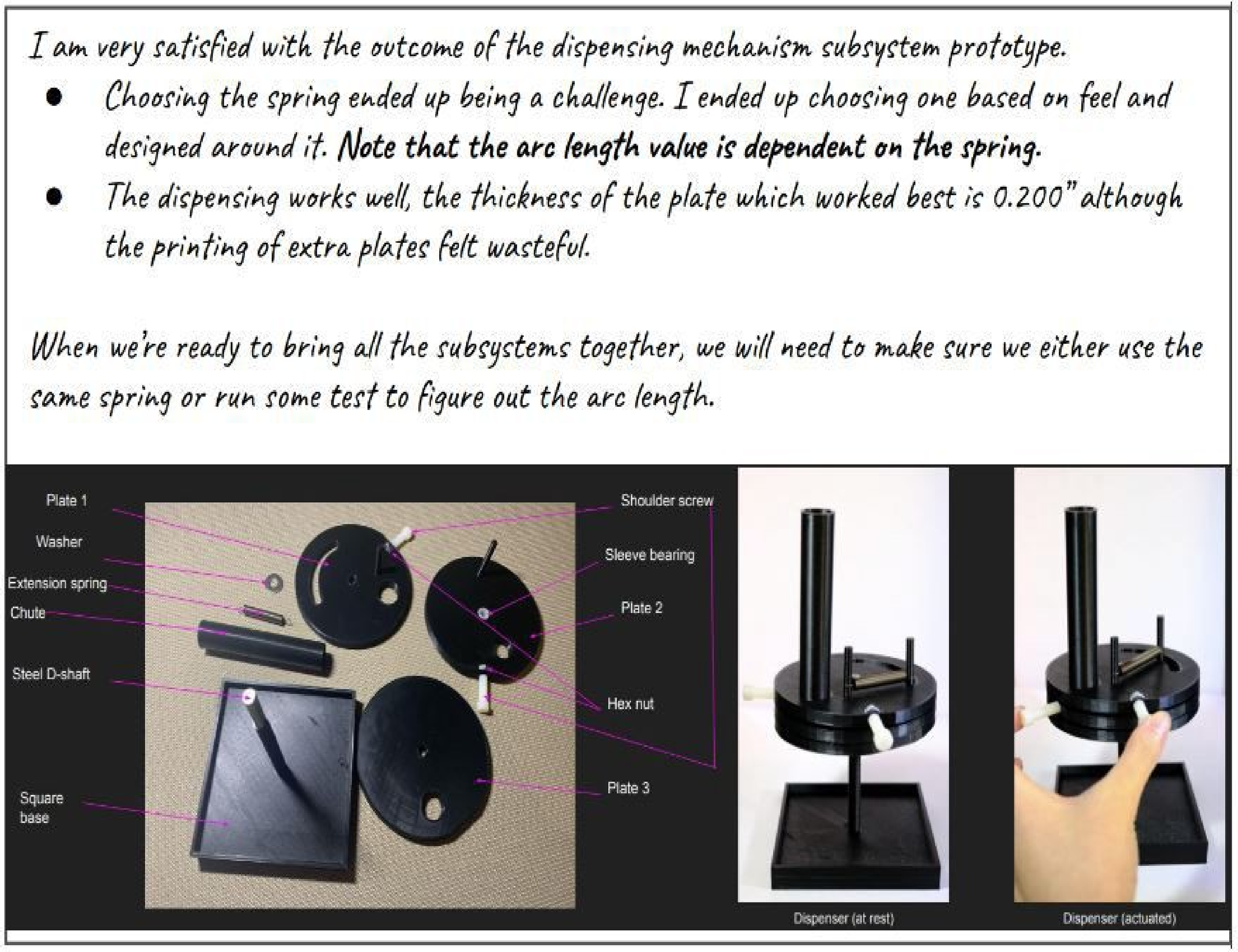

The document which follows the prompt is shown below and contains several common shortcomings:

The prototyping question should be more specific, asking for a range as an answer. More prototyping questions should be asked (spring specifications, arc length). The metrics for success should be more specific and targeted at the prototyping question(s). There are no rough dimensions or sense of scale. The student wants to 3D print the plate to determine the correct thickness, which will be inefficient. The criteria for spring selection are not adequate. There is no detailed testing plan or forward thinking as it relates to testing and representing the findings (Figure 3).

Figure 3. Prototyping section flawed example: Deliverable provided to the participant for analysis.

The questions for this section are listed in Table 2, starting with broad free response questions followed by targeted Likert scale questions. Participants are not given the option of going back to change previous answers.

Table 2. Prototyping section - assessment questions: The table shows the questions in order.

This section contains an additional open response question at the end prompting participants to propose potential prototyping questions that should have been asked.

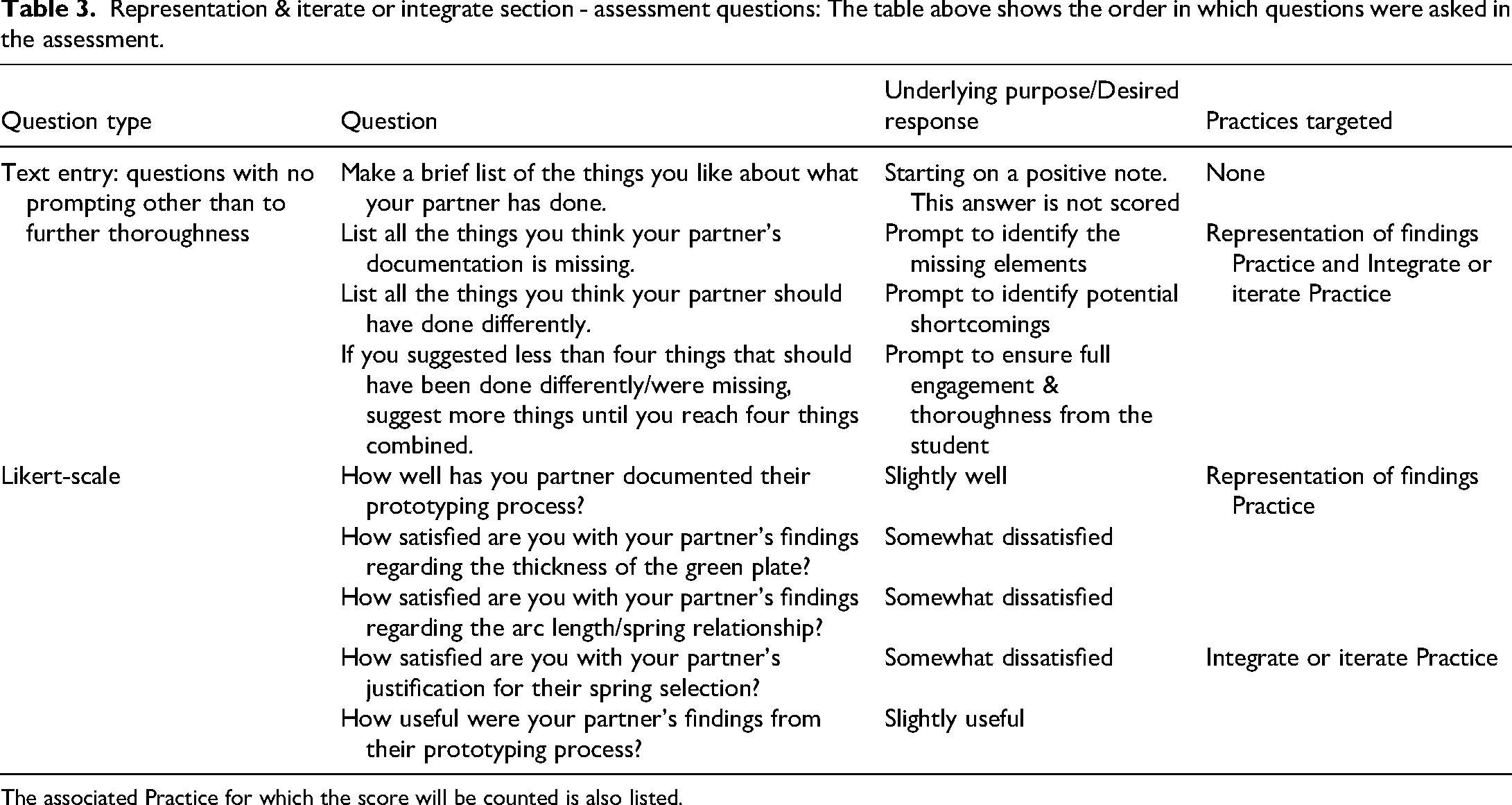

Representation & iterate or integrate

The third section is labelled “Testing and Representation of findings”. The prompt reads as follows:

After some time, you hear back from your partner. They have finished the prototyping process. They send you the following and ask you to provide feedback as you did with their prototyping plan.

They tell you the purpose of this slide is to document the result of their prototyping of the dispensing mechanism subsystem. The slide is meant to convey the relevant information they learned as a result of their prototyping and testing.

The document which follows the prompt, shown in Figure 4, contains the following common shortcomings:

There are no results shown for tests performed to determine the plate's thickness. The justification for selection of the spring is not adequate. There are no results or process shown for how the arc length was determined. There is no reflection or forward planning based on lessons learned. There is not enough information provided as to how things were done. There is no tolerance on the plate thickness. The documentation shows an outcome rather than a set of findings as part of a repeatable process.

Figure 4. Representation & iterate or integrate section flawed example: Deliverable provided to the participant for analysis. The deliverable is embedded with a set of common shortcomings which are targeted in the scoring rubric for the representation & iterate or integrate section.

As in the first two sections, there are open response questions followed by Likert scale questions, as shown in Table 3.

Table 3. Representation & iterate or integrate section - assessment questions: The table above shows the order in which questions were asked in the assessment.

The associated Practice for which the score will be counted is also listed.

Scoring

The assessment is scored separately for the text entry responses and the Likert-scale answers. The scoring was done by two researchers to confirm interrater reliability. The two scorers were one author (FK) and a second researcher who had previously been a teaching assistant in the introductory ME design course.

Text entry scoring

The rubric used to score the text entry responses was developed by the two scorers. The rubric items were binary questions, each targeting a common shortcoming. First, the scorers selected two participant entries which were deemed to be of high quality. This determination was made based on the agreement between the common shortcomings included in the examples and the participants’ answers. The scorers worked together to evaluate those participants’ text entries. Using one participant's responses first, they refined the rubric. They then scored the second high-quality response, discussing the interpretation together.

Next, the scorers randomly selected sets of five pre- and post-course participant responses and scored them independently. An inter-rater reliability score for each rubric item is included in the tables of the text entry rubrics below. An inter-rater reliability agreement of 90 percent was achieved for each set of rubrics. A single scorer then scored the rest of the responses using the rubrics. Scores were obtained for each of the four mechanical design problem-solving practices, and for the overall assessment.
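As an illustration of how agreement on a binary rubric item can be computed, the sketch below assumes simple percent agreement; the text does not state which reliability statistic was used, so this choice is an assumption. The function name `percent_agreement` and the example scores are hypothetical.

```python
def percent_agreement(scores_a, scores_b):
    """Return the percentage of responses on which two raters agree."""
    if len(scores_a) != len(scores_b):
        raise ValueError("both raters must score the same responses")
    if not scores_a:
        raise ValueError("no scores to compare")
    matches = sum(a == b for a, b in zip(scores_a, scores_b))
    return 100.0 * matches / len(scores_a)


# Hypothetical binary scores from two raters for one rubric item
# across ten responses (1 = shortcoming mentioned, 0 = not mentioned).
rater_1 = [1, 0, 1, 1, 0, 0, 1, 1, 0, 1]
rater_2 = [1, 0, 1, 0, 0, 0, 1, 1, 0, 1]
agreement = percent_agreement(rater_1, rater_2)  # raters differ on one response
```

Percent agreement does not correct for chance agreement; a chance-corrected statistic could be substituted without changing the rest of the workflow.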

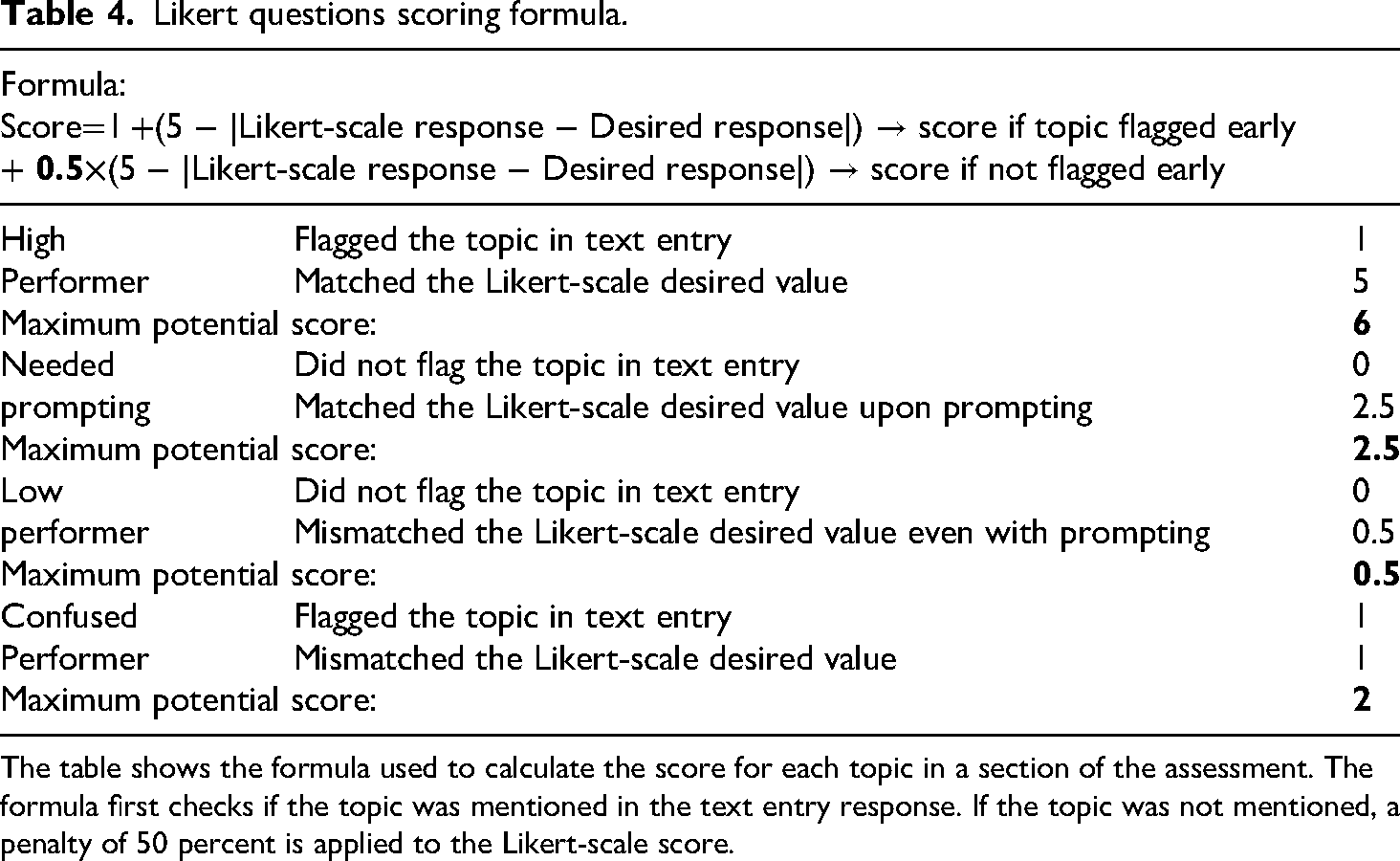

Likert-scale response scoring

For each of the Likert questions, a “desired” Likert-scale response was agreed upon by the two scorers based on the quality of the work presented by the imaginary student to the participant of the assessment. A correct answer received 5 points, with the score dropping according to the distance on the Likert scale between the participant response and the desired response, according to 0.5 × (5 − |Likert-scale response − Desired response|). This choice of scoring weight and breakdown for the Likert-scale questions, as shown in Table 4, was determined by having the two raters first look at a number of student responses and rank them for quality, and then seeing what Likert scoring would best reproduce the observed rankings.

Table 4. Likert questions scoring formula.

The table shows the formula used to calculate the score for each topic in a section of the assessment. The formula first checks if the topic was mentioned in the text entry response. If the topic was not mentioned, a penalty of 50 percent is applied to the Likert-scale score.

The score for each section was determined using the formula shown in Table 4. This rewarded participants for noting a specific deficiency with no prompting, i.e., in the text-entry portion of the assessment. If the participant recognized the deficiency only after prompting, i.e., when it was listed in a Likert-scale question asking them to evaluate a particular aspect of the work, they would receive a lower score. Finally, if a participant did not recognize the deficiency even with prompting, they would receive the lowest score.
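The scoring logic described above can be sketched as follows. This is one reading of the description, not a reproduction of Table 4: it assumes integer-coded Likert responses, a base score of 5 minus the distance from the desired response, and a 0.5 multiplier applied when the topic was not mentioned in the text entry. The function and parameter names are hypothetical.

```python
def likert_topic_score(response: int, desired: int,
                       mentioned_in_text: bool,
                       max_points: float = 5.0) -> float:
    """Score one Likert topic under the assumptions stated above."""
    # Points fall by one for each Likert step away from the desired response.
    base = max_points - abs(response - desired)
    # Assumed 50 percent penalty when the topic was missing from the
    # participant's open-response text.
    multiplier = 1.0 if mentioned_in_text else 0.5
    return multiplier * base


# Illustrative cases with a desired response of 1 (hypothetical values):
unprompted = likert_topic_score(1, 1, mentioned_in_text=True)
prompted = likert_topic_score(1, 1, mentioned_in_text=False)
missed = likert_topic_score(5, 1, mentioned_in_text=False)
```

Under these assumptions the three cases reproduce the intended ordering: noticing the deficiency unprompted scores highest, recognizing it only when prompted scores lower, and missing it even when prompted scores lowest.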

Scoring rubrics

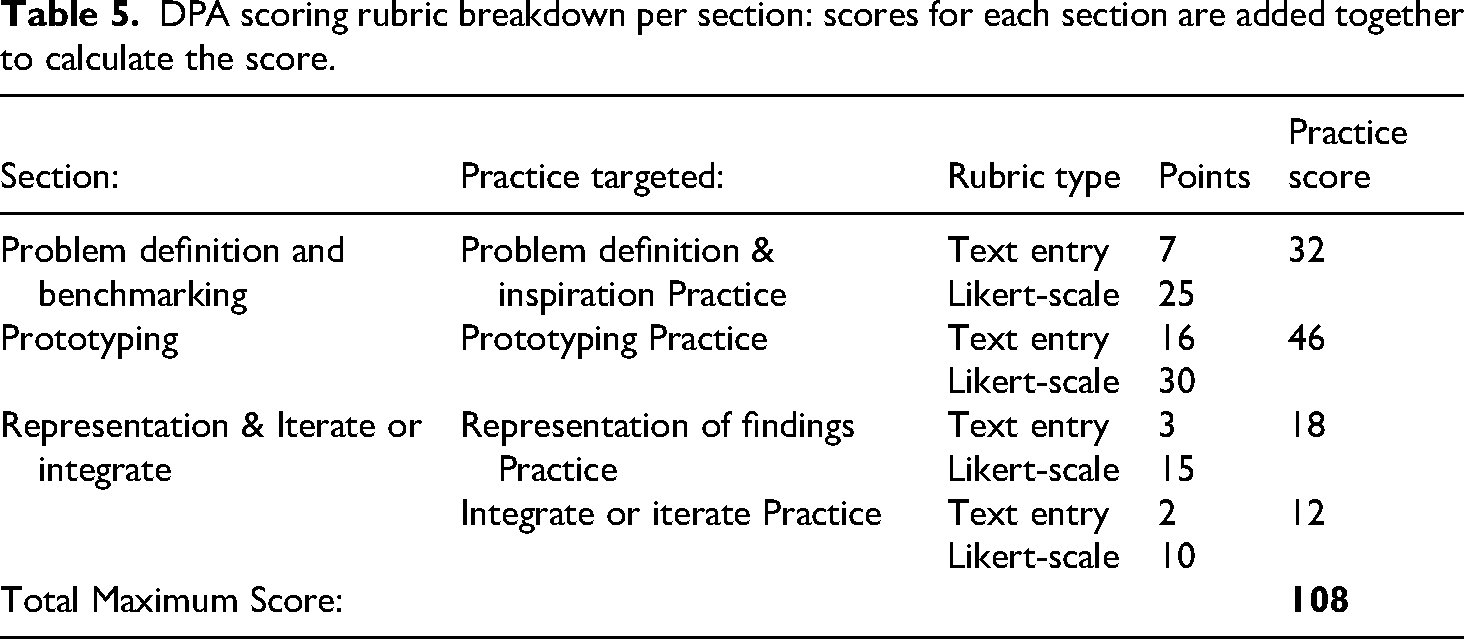

A similar scoring rubric was created for each of the three sections of the DPA as shown in Table 5.

Table 5. DPA scoring rubric breakdown per section: scores for each section are added together to calculate the score.

A score for each Practice was calculated based on the questions answered. The representation of findings practice and integrate or iterate practice scores are separately determined based on particular questions contained in the third section of the DPA.

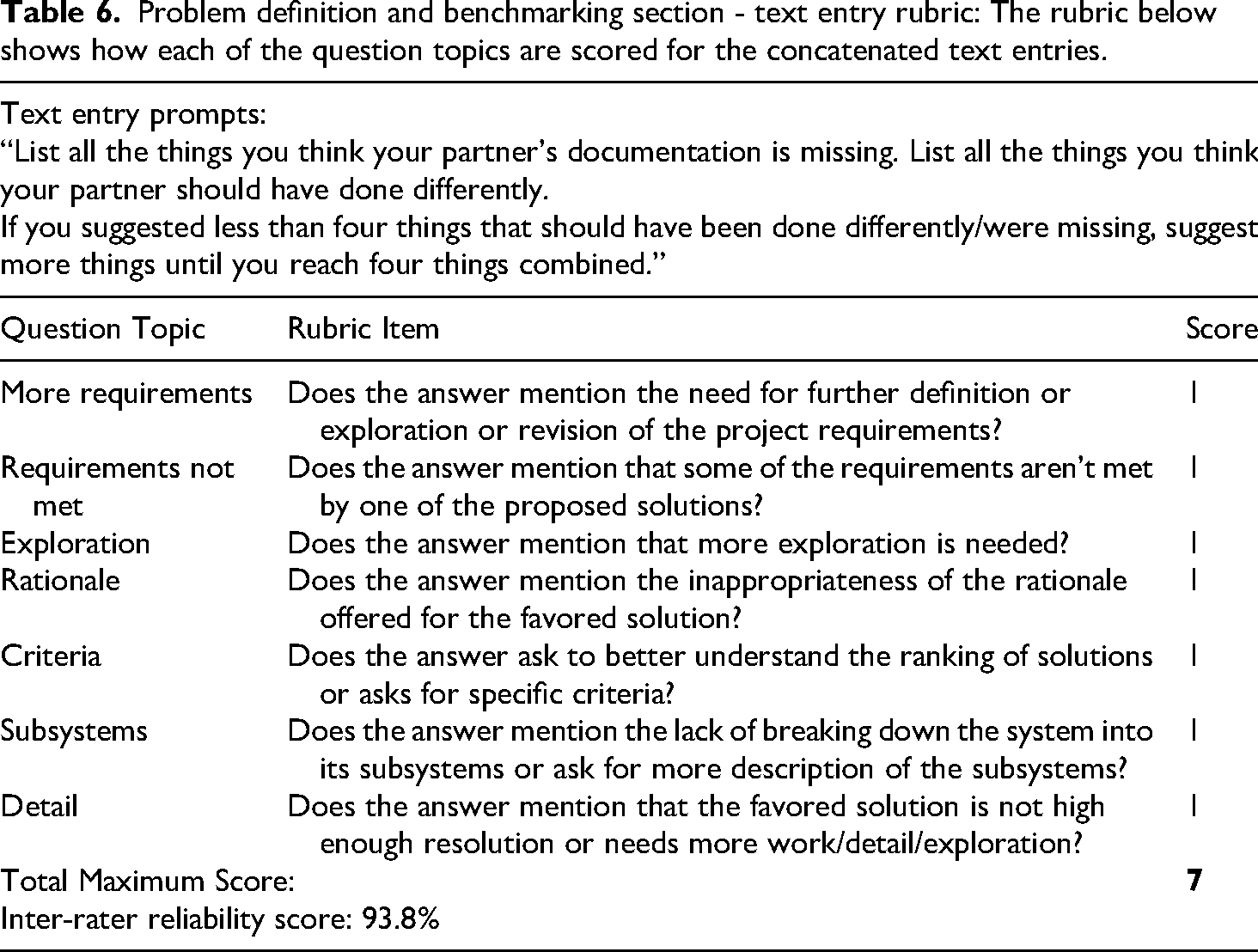

Problem definition and benchmarking section

The answers to the first three questions were concatenated into a single text field and scored as per the rubric in Table 6, where each rubric item is worth one point. An example answer with its scores is shown in the Appendix.

Table 6. Problem definition and benchmarking section - text entry rubric: the rubric shows how each of the question topics is scored for the concatenated text entries.

Some of the Likert-scale questions could cover more than one shortcoming in the design process. For example, one question was “How well did your partner analyze the project's requirements?” A good open-ended response to this could include both stating that the requirements were not met and stating that the requirements listed were not sufficient. If a student mentioned either of these, they were given the scoring multiplier for their Likert question response.

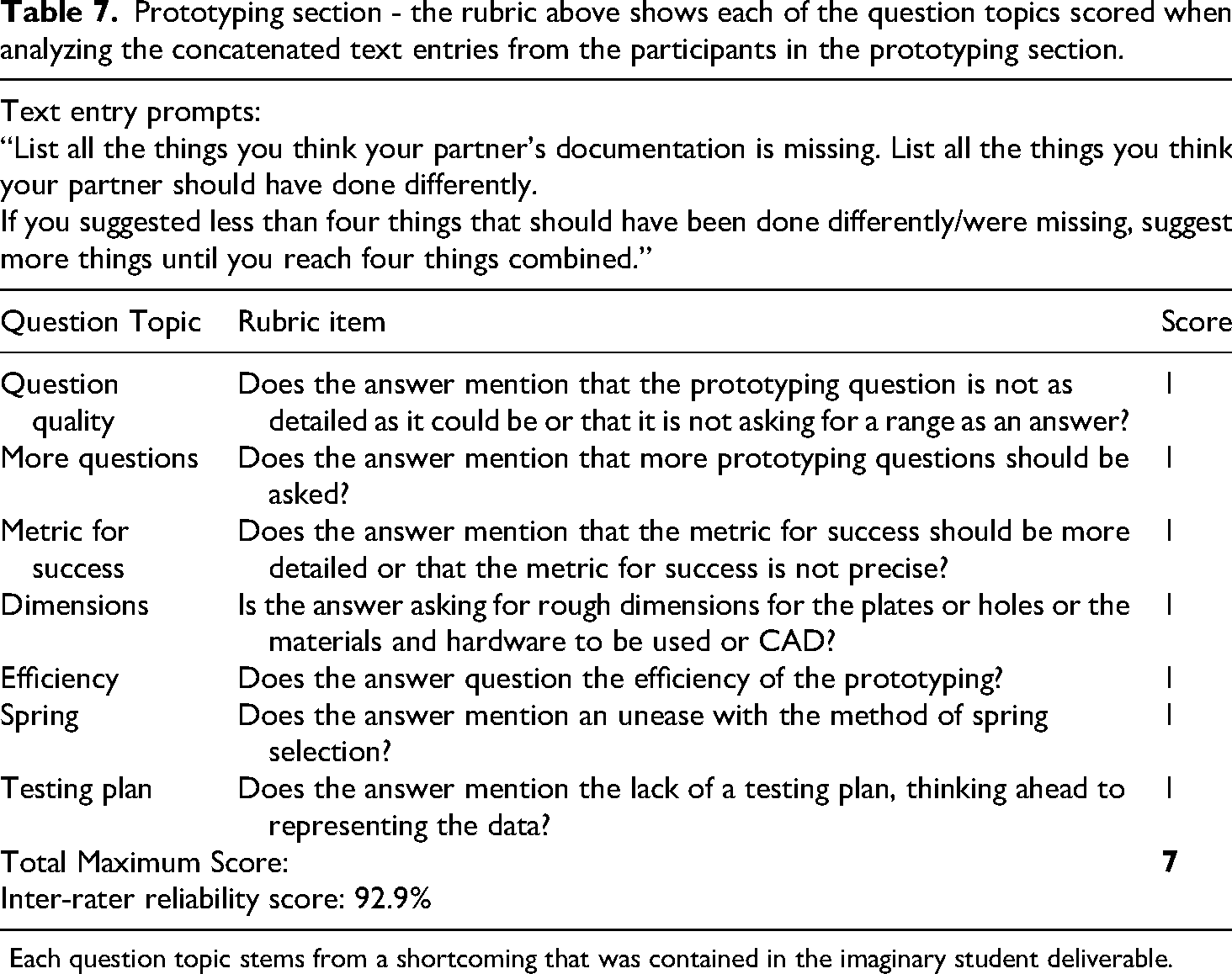

Prototyping section

Open response

The answers to the first three questions were concatenated into a single text field and scored with the rubric in Table 7. Each rubric item is worth one point. An example answer with its score is shown in the Appendix.

Table 7. Prototyping section - text entry rubric: the rubric shows each of the question topics scored when analyzing the concatenated text entries from the participants in the prototyping section.

Each question topic stems from a shortcoming that was contained in the imaginary student deliverable.

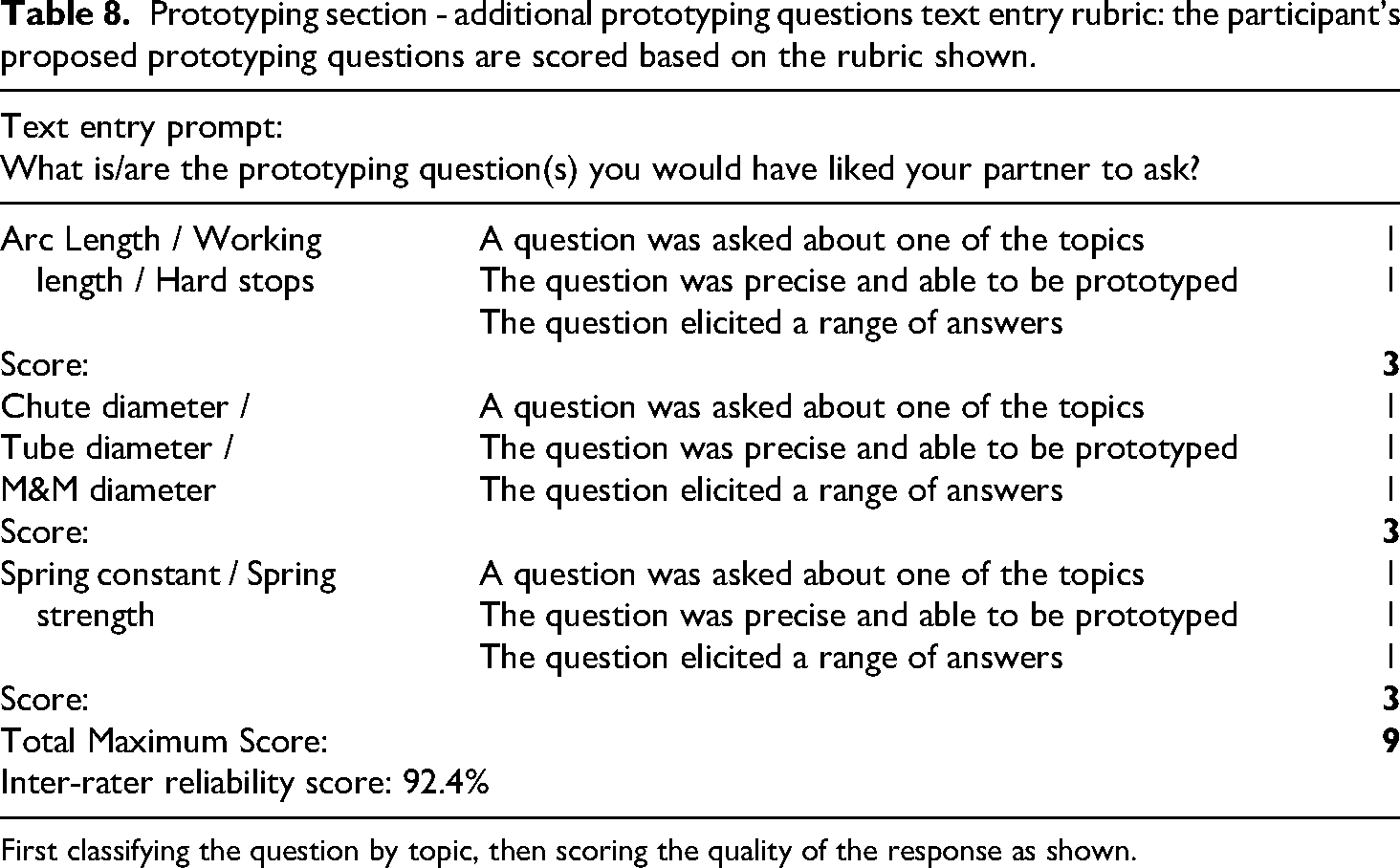

Additional prototyping questions text entry

Directly after the Likert questions in this section were the open response questions:

“Your partner has identified one prototyping question based on the mechanism sketched. How many more would you have liked them to ask?” “What is/are the prototyping question(s) you would have liked them to ask?”

The answer was scored according to the rubric in Table 8.

Table 8. Prototyping section - additional prototyping questions text entry rubric: the participant's proposed prototyping questions are scored by first classifying each question by topic and then scoring the quality of the response as shown.

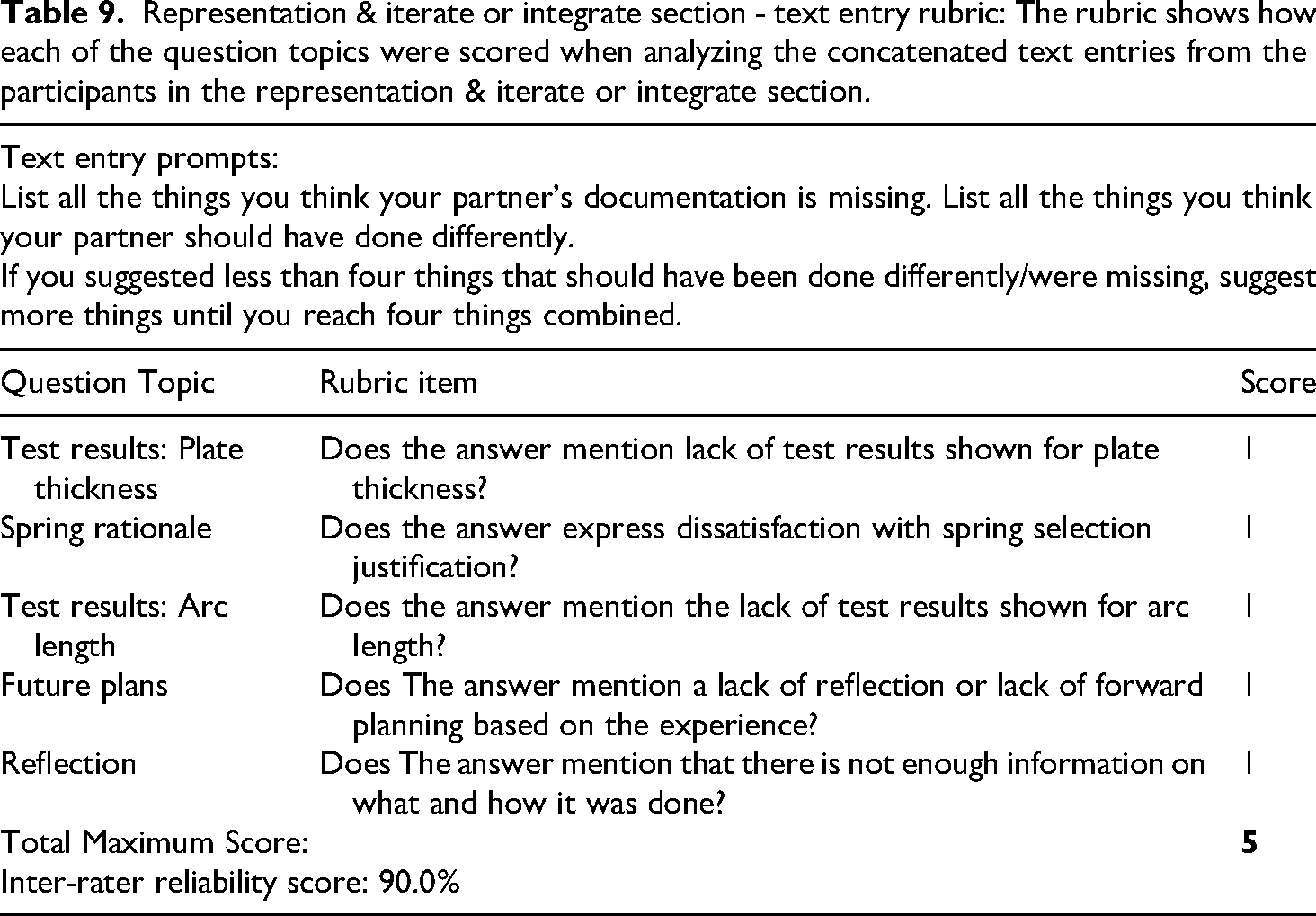

Representation & iterate or integrate section

The answers to the first three questions were concatenated into a single text field and scored as per the rubric in Table 9, where each rubric item is worth one point.

Table 9. Representation & iterate or integrate section - text entry rubric: the rubric shows how each of the question topics was scored when analyzing the concatenated text entries from the participants in the representation & iterate or integrate section.

The maximum potential scores for each Practice are 42 points for the problem definition & inspiration Practice, 46 points for the prototyping Practice, 12 points for the representation of findings Practice, and 18 points for the integrate or iterate Practice. The reason for this difference in points is that, in the assessment, the problem definition & inspiration Practice and the prototyping Practice are evaluated at the planning stage, where there are more options for what to do. The representation of findings and integrate or iterate Practices are assessed after the physical device is made, so there are fewer things to suggest or give feedback on.

Statistical analysis. We calculated Cronbach's alpha for the student scores on the four practices and obtained 0.60. This indicates that the practices evaluated by the DPA are distinct but somewhat related, which is a desirable result for such an assessment. The difficulty index for the four practices on the post-test scores was 0.61 for problem definition, 0.55 for prototyping, 0.72 for representation, and 0.55 for iterate or integrate. This shows that the questions are of reasonable difficulty, with little indication of a ceiling effect. When the DPA was given as a pre-test, the scores were much lower, as expected and as shown in Figure 6.
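For readers who want to reproduce these two statistics on their own data, a minimal sketch follows. It uses the standard definition of Cronbach's alpha and assumes that the difficulty index of a multi-point practice score is the mean score divided by the maximum possible score; the text does not state which definition was used. The function names and the small data set in the usage lines are hypothetical.

```python
from statistics import mean, variance


def cronbach_alpha(scores):
    """Cronbach's alpha for rows of per-student scores (one column per practice)."""
    k = len(scores[0])  # number of components, e.g. the four practices
    # Sample variance of each component across students.
    item_variances = [variance([row[i] for row in scores]) for i in range(k)]
    # Sample variance of each student's summed score.
    total_variance = variance([sum(row) for row in scores])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


def difficulty_index(practice_scores, max_score):
    """Assumed definition: mean score as a fraction of the maximum possible score."""
    return mean(practice_scores) / max_score


# Hypothetical scores for three students on two perfectly correlated components.
alpha_demo = cronbach_alpha([[1, 1], [2, 2], [3, 3]])
# Hypothetical scores on a practice whose maximum is 42 points.
difficulty_demo = difficulty_index([21, 42], max_score=42)
```

With real data, each row would hold one student's four practice scores, and each practice's difficulty index would use its own maximum score.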

Validation

There were two forms of validation for the Design Problem Assessment: content validity and concurrent validity. The content validity was based on a combination of our expert analysis of the design process and the decisions involved in it, an analysis of the learning goals for the introductory ME course, and the determination, from our own analysis and from pilot testing with ME instructors and teaching assistants, that the DPA questions were accurately probing the set of design decisions.

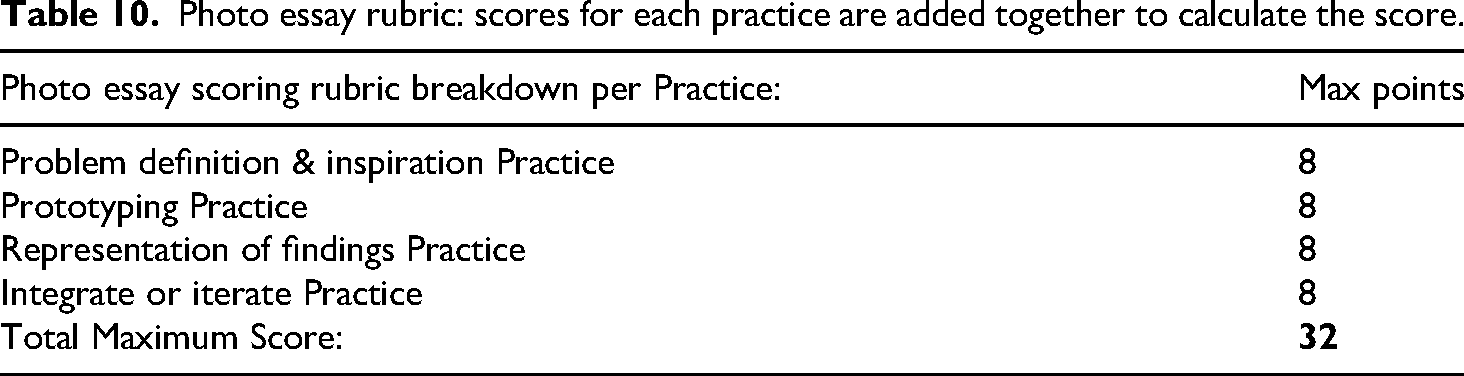

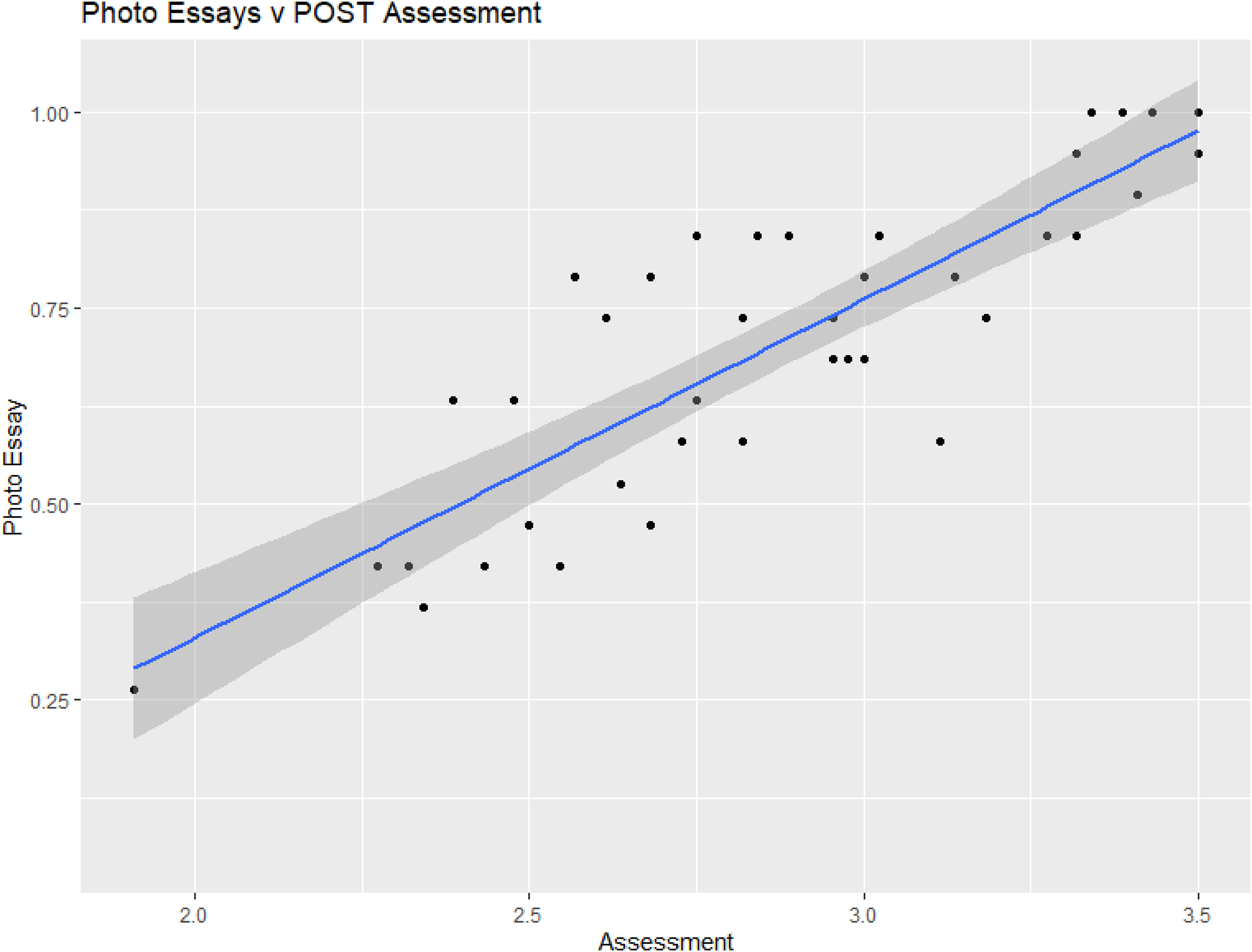

For concurrent validation, we compared performance on the DPA with how students performed on a “photo essay”. In this introduction to ME course, the students turned in a photo essay at the completion of the course. This photo essay was a poster on which they were asked to completely document their design, prototyping, and build process for the project they completed. The photo essays were scored through a rather laborious process in which two raters iteratively developed and tested a scoring rubric, shown in Table 10, that they felt reflected the goals of the course and the practices of good engineering design.

Table 10. Photo essay rubric: scores for each practice are added together to calculate the overall score.

Although we believe the photo essay does provide a reasonably good assessment of design skills, and it has the advantage of being directly related to a design product, it has several drawbacks relative to the DPA: it provides less detail, it is more work to score, it cannot be used on a pre- and post-course basis to examine student growth, and the grading consistency is likely not as high. Nevertheless, as a test of the validity of the DPA, we compared each student's score on the photo essay with their score on the DPA.

The comparison is shown in Figure 5. The two scores are very strongly correlated (r = 0.84, t(37) = 9.31, p < 0.0001, 95% CI [0.71, 0.91]). These results show that a student's performance on the DPA is an effective proxy for that student's performance on the photo essay.
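As a consistency check on the reported statistics, the t-value and confidence interval can be recomputed from the correlation coefficient and the sample size. The sketch below assumes n = 39 students (implied by the 37 degrees of freedom) and a Fisher z-transformation for the interval; the text does not say how the interval was obtained, and the function name is hypothetical.

```python
import math
from statistics import NormalDist


def correlation_summary(r: float, n: int, confidence: float = 0.95):
    """Return the t-statistic and a Fisher-z confidence interval for Pearson's r."""
    # t-statistic for testing r against zero, with n - 2 degrees of freedom.
    t_value = r * math.sqrt(n - 2) / math.sqrt(1 - r ** 2)
    # Fisher transformation: atanh(r) is approximately normal with SE 1/sqrt(n - 3).
    z = math.atanh(r)
    standard_error = 1 / math.sqrt(n - 3)
    z_crit = NormalDist().inv_cdf(0.5 + confidence / 2)
    lower = math.tanh(z - z_crit * standard_error)
    upper = math.tanh(z + z_crit * standard_error)
    return t_value, (lower, upper)


# Reported r, with n inferred from t(37).
t_value, (ci_low, ci_high) = correlation_summary(0.84, 39)
```

Using the rounded r = 0.84, the interval rounds to the reported [0.71, 0.91], while the t-value comes out near 9.4 rather than exactly 9.31; a difference of that size is what one would expect from rounding r to two decimals.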

Figure 5. Overall photo essay scores versus post-course DPA scores. The shaded area represents the 95% confidence interval.

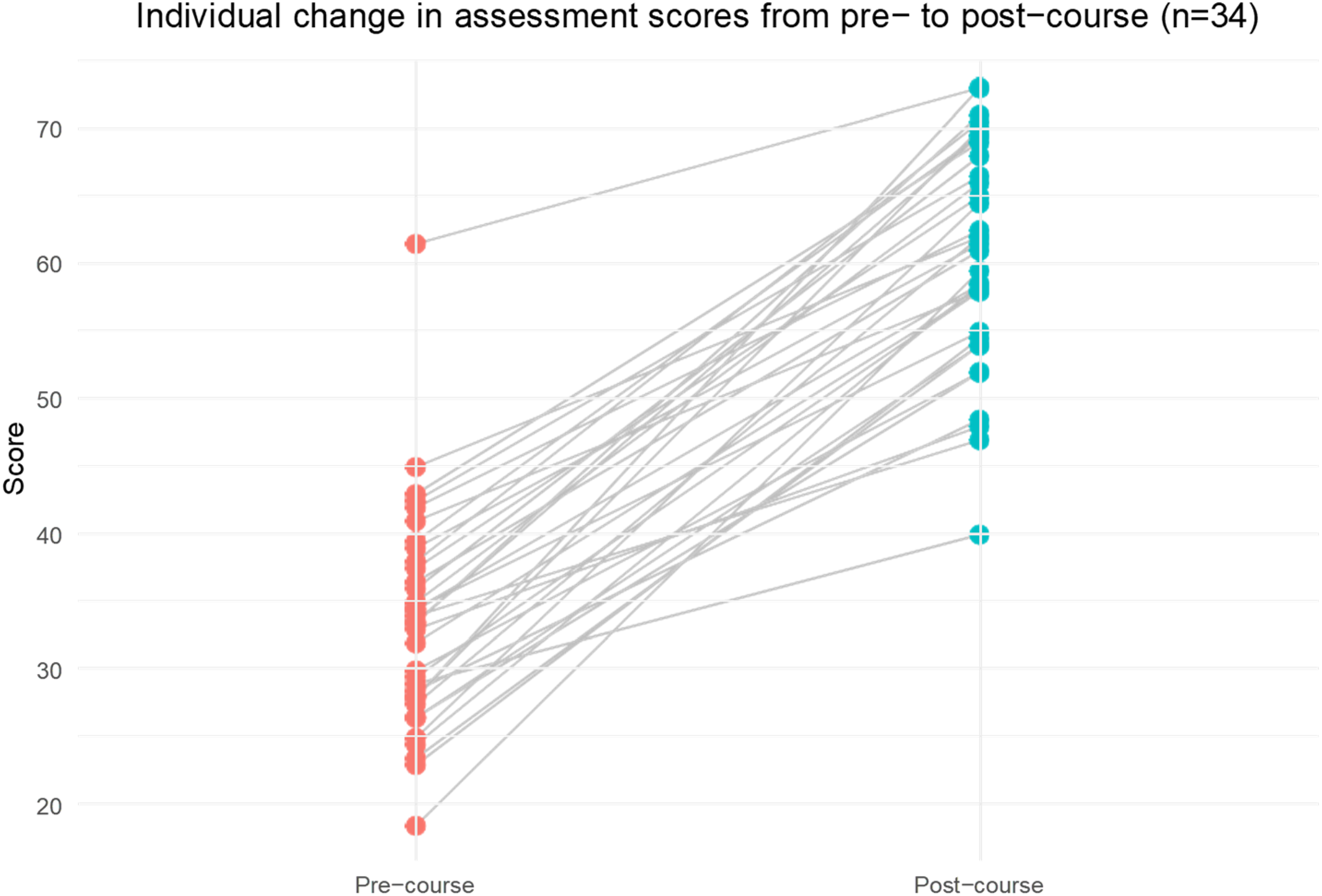

Figure 6. Individual DPA scores pre-course vs. post-course: slope graph showing the individual progression of student DPA scores from pre- to post-course.

Examples of use

To illustrate the value of the Design Problem Assessment, we show its use to measure student progress from before they started the course to after its completion. The average DPA score went from 34 (1.4) before the course to 61 (1.5) after. This course involved a number of instructional innovations that are too extensive to be explained here and will be discussed in a future paper. The course was redesigned using ideas from cognitive science, particularly the approaches of “deliberate practice” and “preparation for future learning”.

Individual progress on overall DPA scores in pre-course & post-course

Figure 6 illustrates the individual changes in DPA scores from pre-course to post-course for each student. As shown in the graph, every student's score increased following the intervention. The upward trend of each individual line signifies that the intervention had a uniformly positive impact on all students, and it further validates the DPA as an assessment that captures student development as it relates to the course's learning outcomes.

We also observed that all demographic groups for which there might be equity gaps (female, first-generation low-income, and historically marginalized ethnic groups) showed the same improvements on the DPA from pre- to post-test as the majority students.

Measuring individual design practices

The DPA also allows students to be assessed on their skill in each of the four design practices and on how the course changed those skills. In this case, students improved by substantial and statistically indistinguishable amounts in each of the four practices, so the per-practice comparison provided no additional information.

Discussion

This work presents a new assessment instrument, the Design Problem Assessment, or DPA for short. The DPA is suitable for measuring the skills of individual students in project-based introductory mechanical engineering design courses. It is based on the idea, found in the ABET criteria and other engineering design literature, that engineering design is a decision-based process, and it is one of the few assessments that operationalize those targeted criteria for introductory students. It also gives those criteria greater specificity by articulating the specific decisions relevant to engineering design in a project-based mechanical engineering design course and probing students’ ability to make those decisions well. Because students critique the design work of a hypothetical partner, the assessment is practical for students to complete in a relatively short period of time (20 min) and is straightforward and efficient to score. The development followed the best practices identified in the assessment literature, and the instrument has undergone extensive testing and refinement to ensure that it validly measures the skills of interest. It has been used on a pre- and post-course basis to show considerable learning gain in a well-designed course. With minor modifications, the DPA should be suitable for use in other mechanical engineering design courses.

Conclusion and implications

We have presented the development of the Design Problem Assessment (DPA) as an assessment tool suitable for use with students taking or having taken a project-based mechanical design course. This instrument identifies important engineering practices and provides an efficient and effective way to measure a learner's skill in each of them. It can be used as a formative assessment tool to measure students’ preparation and progress during a course, or for summative assessment to gain a rigorous measure of the learning achieved in a course. Although targeted toward an introductory project design course, it could be readily adapted for use in a variety of engineering design courses. The relevant engineering practices remain the same, and the same prompts and general approach of having students troubleshoot a flawed design process would remain valid. The primary modification needed would be to choose example designs and flaws appropriate to the course and its intended learning outcomes. For more advanced courses this would generally mean greater complexity.

Supplemental Material

Supplemental material, sj-docx-1-ijj-10.1177_03064190251396099 for A novel assessment of mechanical engineering design skills by F Krynen, S Salehi and C Wieman in International Journal of Mechanical Engineering Education

Footnotes

Acknowledgements

We gratefully acknowledge the assistance of L’Nard Tufts with the assessment scoring.

Student participation in this work was by written consent, and the research was approved by the Stanford University Institutional Review Board as protocol #48785.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Howard Hughes Medical Institute through an HHMI Professor grant to C. Wieman.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The individual data contained in this article cannot be shared to comply with student privacy requirements.

Supplemental material

Supplemental material for this article is available online.

References
