Abstract

Journalology is a form of bibliometrics that assigns relative value to journals based on the scientific influence of their published articles. 5 A frequently used metric for rating scientific journals is the impact factor (IF). The IF is calculated from citation index data in Web of Science™ (Thomson Reuters). For example, the 2015 IF for Veterinary Pathology is the total number of citations in 2015 to Veterinary Pathology articles published in 2013–2014 divided by the total number of citable articles published in 2013–2014. Put simply, this metric is the average number of citations received in a given year per article published over the previous 2 years.
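The calculation described above can be sketched in a few lines of Python. This is only an illustration of the ratio as stated in the text: the function name `impact_factor` and the counts used in the example are hypothetical and are not actual Web of Science data for any journal.

```python
def impact_factor(citations_in_year: int, citable_items_prior_two_years: int) -> float:
    """Return the 2-year impact factor as described in the text.

    citations_in_year: citations received in the IF year (e.g., 2015) to
        articles published in the two preceding years (e.g., 2013-2014).
    citable_items_prior_two_years: citable articles published in those
        same two preceding years.
    """
    if citable_items_prior_two_years <= 0:
        raise ValueError("the number of citable items must be positive")
    return citations_in_year / citable_items_prior_two_years


# Hypothetical counts, chosen only to illustrate the arithmetic.
example_if = impact_factor(citations_in_year=300, citable_items_prior_two_years=150)
print(f"Impact factor: {example_if:.1f}")
```

Because the result is a simple ratio, a few heavily cited articles in the numerator can raise the IF even when most articles receive few citations.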

The IF can serve as an indicator of a journal’s scientific influence; however, the scope of the IF’s significance has extended much further than originally intended. 5,9,10 For instance, some institutions use the IF of scientists’ publications to make hiring decisions or to evaluate faculty for promotions and bonuses. 6,10 Likewise, rankings of academic institutions can be based on the IF of the institution’s publications, and several institutions have established minimum IF thresholds for the journals in which their scientists may publish. Furthermore, funding agencies have used IFs to screen and rank scientists and their grant applications. 7 Because of increasing pressure and haste to publish in high-IF journals, the incidence of faulty and nonreproducible science being published is worrisome. Evidence for this is seen in the direct correlation between the journal IF and the retraction index (a measure of retraction frequency), meaning that higher IF journals also have a higher retraction rate. 4

The impetus to publish in high-IF scientific journals has reached a point where it has become detrimental to science. 3 This atmosphere has also spawned several mechanisms for gaming IF scores, ranging from efforts to manipulate citation data to overt editorial pressure on authors to cite articles in their respective journals. 12 In recent years, regulations have been enacted to constrain these manipulations; even so, the continued lack of transparency (and reproducibility) in IF calculations warrants concern.

As a counterbalance to this “impact factor mania,” a reformation has started to emerge. Recently, several Nature group journals have stopped publishing impact factor metrics exclusively on their websites. Instead, these journals have begun promoting more diverse metrics to highlight their accomplishments, including a new metric called the 2-year median. 1 Similarly, editors of the American Society for Microbiology journals have recently decided to remove IF content from their journals’ websites so as to bring the focus back to publishing high-quality science. 3
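To see why a median-based metric might be promoted alongside the IF, the following minimal sketch contrasts a mean with a median for the same set of articles. It assumes the 2-year median is the median number of citations received in a given year by articles published in the previous 2 years; the text does not define the metric, and the per-article citation counts below are hypothetical.

```python
from statistics import mean, median

# Hypothetical citations received in one year by 10 articles published
# in the previous 2 years; one article is cited far more than the rest.
citations_per_article = [0, 0, 1, 1, 2, 2, 3, 4, 6, 41]

# Mean-based figure (IF-like): sensitive to the single highly cited article.
two_year_mean = mean(citations_per_article)

# Median-based figure: the citation count of the "typical" article.
two_year_median = median(citations_per_article)

print(f"mean = {two_year_mean}, median = {two_year_median}")
```

In this hypothetical set, the mean is three times the median because a single article accounts for most of the citations, which is the kind of skew a median is less sensitive to.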

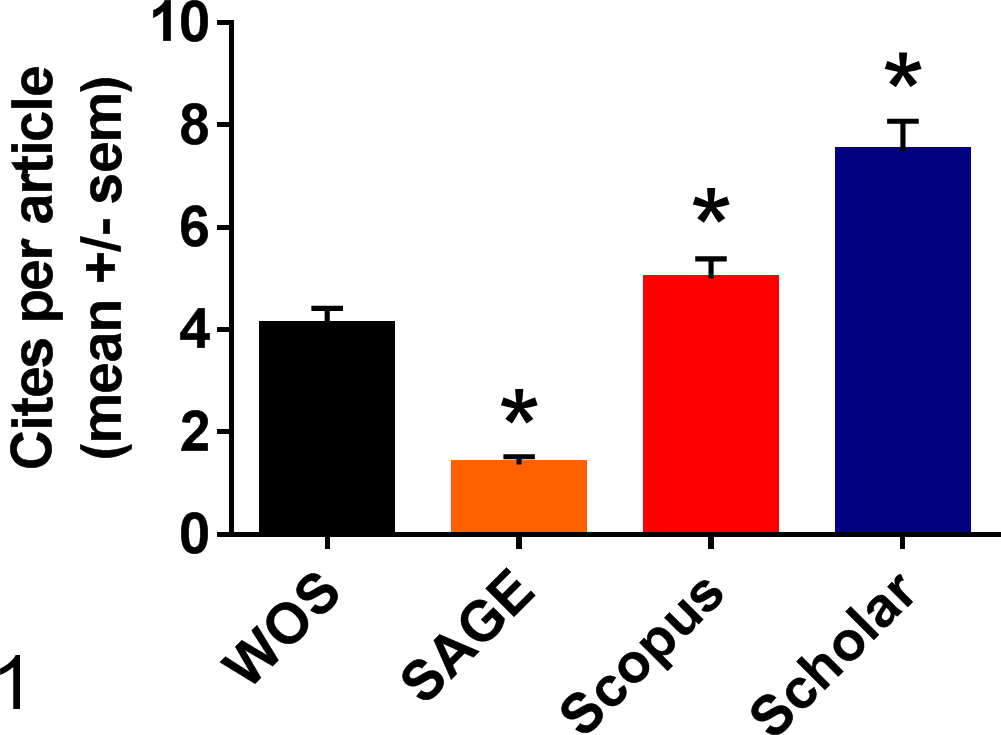

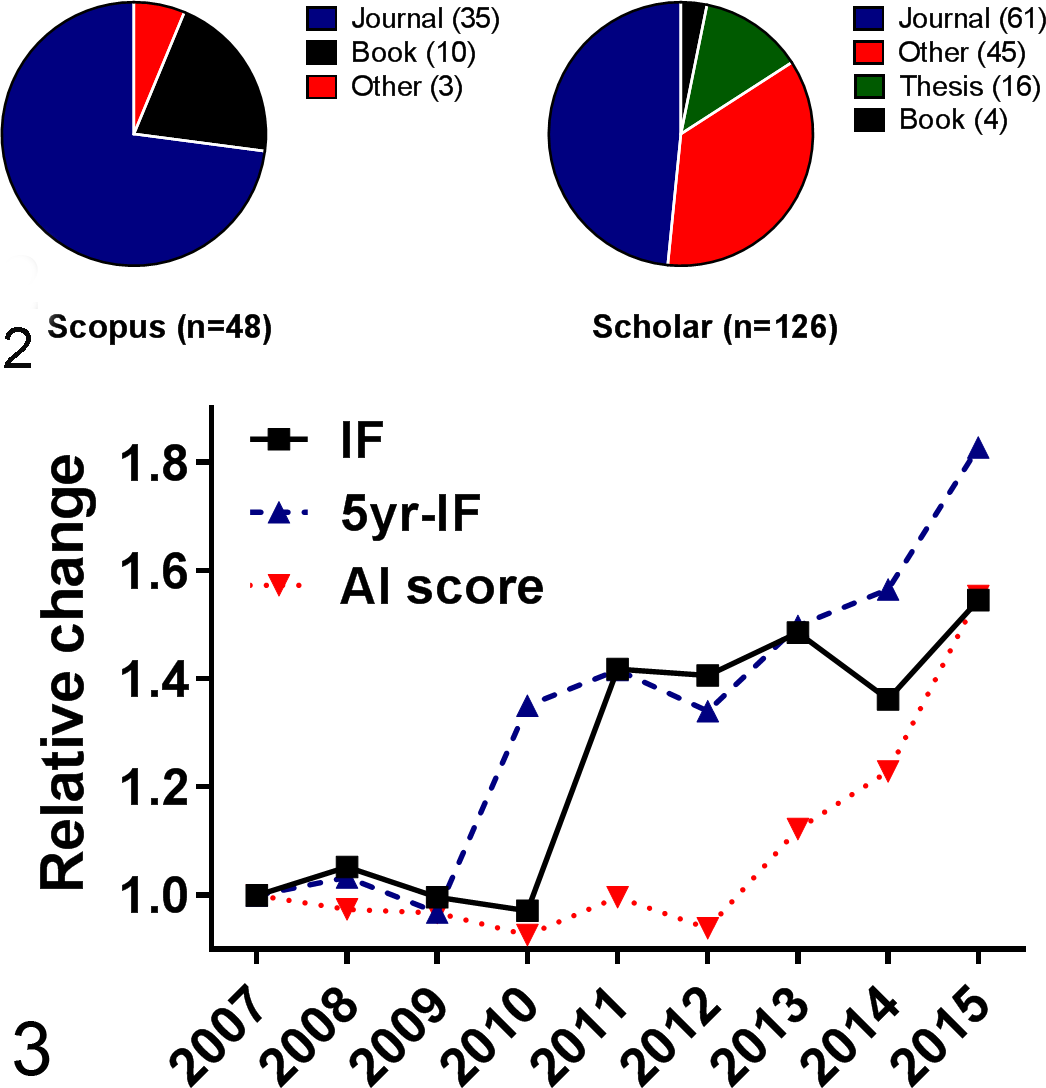

With regard to Veterinary Pathology (IF = 2.1 in 2015), how does the IF potentially affect the journal and what other options are available? The IF can affect the quality and scope of manuscript submissions. If the IF falls below a certain threshold (∼2.0), some of our peer pathologists could not submit their manuscripts to the journal without incurring a penalty from their home institution. Similarly, a low IF might decrease the receipt of good-quality manuscripts from investigators, and the converse is true as well. Strategic decisions by the editorial board on how to guide and improve the journal include discussions regarding journal metrics such as the IF. One should keep in mind that the IF is a single metric, based on 2 years of data from 1 citation index. Citation counts from other citation indices with broader and larger databases than Web of Science reveal that the impact of Veterinary Pathology is notably larger than first perceived (Figs. 1, 2). In addition, editors often identify published articles that have received several citations so they can recruit more papers of that type, but this approach can depend on the source of citation index data. For instance, evaluation of articles from the first issue of Veterinary Pathology in 2015 (assessed October 6, 2016) shows that the most highly cited article differs among Scopus (Twenhafel et al, 11 n = 9 citations), Web of Science (Baseler et al, 2 n = 9 citations), and Google Scholar (Sabattini et al, 8 n = 18 citations). Using multiple citation indices could provide a broader view of the journal’s successes.

Figure 1. Citations of Veterinary Pathology articles (n = 225; mean ± standard error of the mean) published in 2013 and 2014 from Web of Science (WOS), SAGE, Scopus, or Google Scholar (Scholar) databases. Data were assessed July 2016. *P < .0001, Dunn’s multiple comparison test versus WOS.

Figure 2. Sources of citations for Veterinary Pathology articles from Volume 1, 2013, that were found in Scopus or Google Scholar (Scholar) but not Web of Science (WOS) databases. Data were assessed August 2016. Note that “other” sources were typically non-English sources.

In closing, we offer some rhetorical questions to stimulate discussion. Do we use the IF as a solitary metric (as many journals still do) to gauge or validate the success of Veterinary Pathology, even though it may not optimally represent our global impact? Do we diversify the use of journal metrics (Fig. 3), diversify our sources of citation index data, or focus our definitions of impact on comparison with peer group journals, among which Veterinary Pathology is already in the top 10% (13th of 138 journals, Veterinary Sciences, www.jcr.incites.thomsonreuters.com)? These and similar discussions have occurred in the recent past, but given current events and the changes on the horizon, an active and open dialogue may be warranted for the readership and stakeholders of Veterinary Pathology.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.