Abstract

Studies regularly demonstrate how well intelligent agents (IAs) can support humans and that they are superior to humans in some areas. Given that some tasks will likely remain unsuitable for even the most intelligent machines in the medium term, work in hybrid teams of humans and IAs, in which the capabilities of both are effectively combined, will most likely shape the way we work in the coming decades. In an abductive study, we investigate an early example of hybrid teams, consisting of humans and a conversational IA that aims to improve health behavior or change personality traits. Based on our empirical insights and our literature review on transactive memory systems theory, we theorize the Transactive Intelligent Memory System (TIMS) as a new vision of collaboration between humans and IAs in hybrid teams. Our empirical evidence shows that IAs can develop a form of individual and external memory and that hybrid teams of humans and IAs can realize joint systems of transactive memory, a competence that current literature ascribes only to humans. We further find that whether individuals view IAs merely as external memory aids or as part of their team’s transactive memory is moderated by the task’s complexity and knowledge intensity, as well as by the IA’s ability to complete the task. This theorizing helps to better understand the role of IAs in future team-based work processes. Developers of IAs can use TIMS as a tool for requirements formulation to prepare their software agents for collaboration in hybrid teams.

Keywords

Introduction

Artificial intelligence already accomplishes complex and knowledge-intensive tasks, for example, programming (Mollick, 2022; Nguyen and Nadi, 2022), chip design (Zhang et al., 2021), and medical surgery (Parums, 2021). Growing evidence suggests that intelligent agents (IAs) in the workplace serve as more than just tools used by humans. IAs are intelligent systems that act in an operational environment and aim to achieve the most favorable outcomes, based on objectives set by their developers (Russell and Norvig, 2021: 22). Current technical progress suggests that IAs can operate as actors able to join the workforce, similar to humans joining a team (Seeber et al., 2020; Siemon et al., 2020; Tarafdar et al., 2023). Research has explored the implications of this technology for individuals and organizations (e.g., Huysman, 2020; Lyytinen et al., 2021; Shollo et al., 2022; Willcocks, 2020). The current technological state, however, indicates that IAs can also support team-level work, e.g., through automated task allocation, speech transcription, and translation (Dwivedi et al., 2023). Such support is highly desirable in today’s interconnected workplaces, where workers need a high degree of innovativeness and creativity and must mutually draw on other team members’ knowledge. Some organizations have begun to leverage these capabilities to support executive meetings (Bort, 2017; Mishra, 2022) or assist courts in their rulings (Chen et al., 2022). Commercial services have already emerged that offer automatic meeting transcription and summarization (e.g., Otter.ai¹), writing support (e.g., QuillBot²), and question-answering systems for knowledge-intensive tasks (e.g., ChatGPT³, Perplexity⁴). Current societal challenges, such as an aging population, skill shortages, and humans’ desire for meaningful tasks, reinforce the need for IAs that contribute to knowledge work in teams (Riemer and Peter, 2020; Willcocks, 2020).
Yet, to leverage this technology, research and practice need to understand how humans effectively work together with IAs in hybrid teams, and vice versa. By hybrid teams, we mean teams in which at least two humans and at least one IA interact and jointly contribute to accomplishing a task (we consider one human interacting with one or more IAs an individual-level interaction).

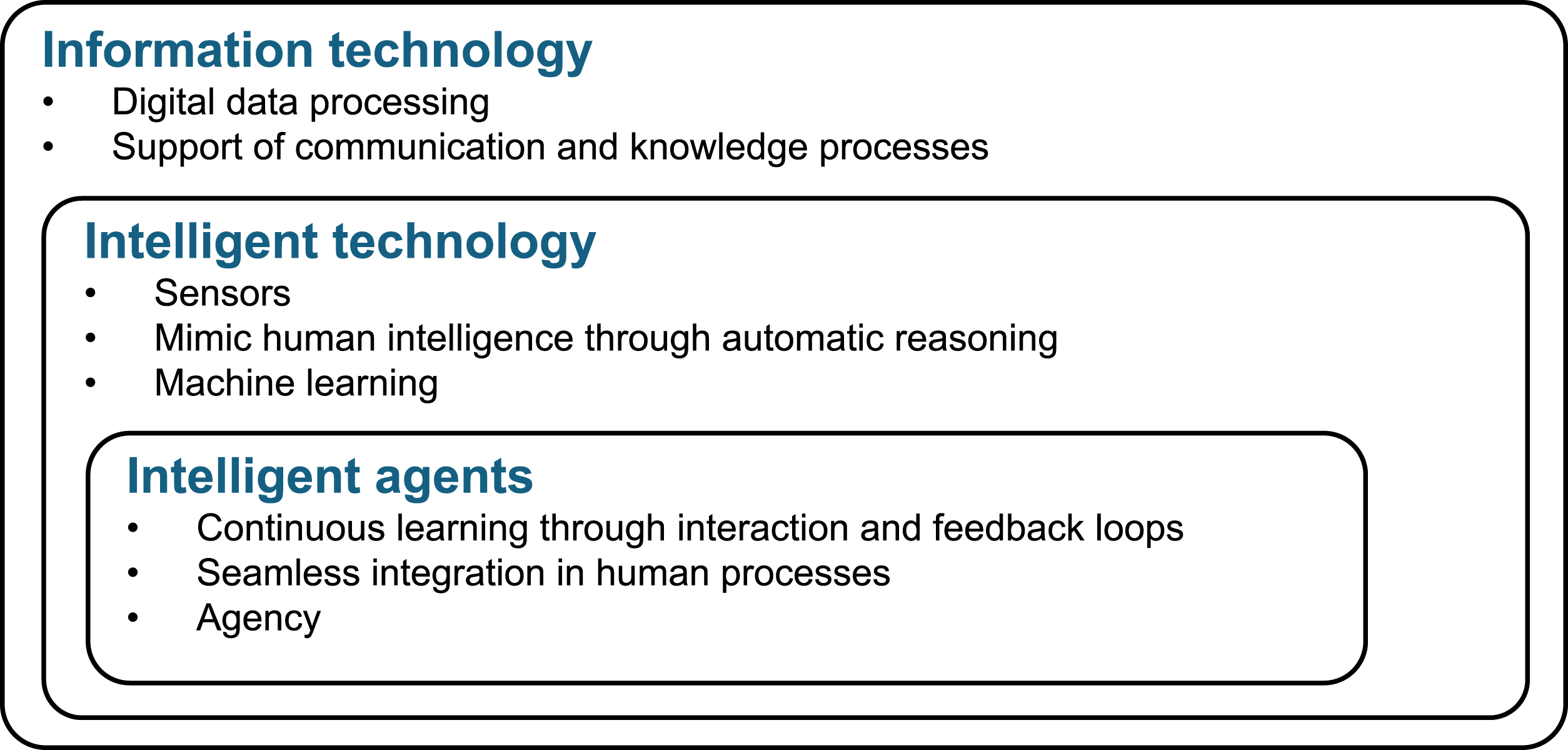

We attribute today’s proliferation of IAs to three characteristics. First, IAs can process large amounts of data in a short time and automatically deduce patterns from these data, leveraging methods from the field of machine learning (ML). Earlier types of information technology (IT), such as expert systems, by contrast, had to be programmed with explicit instructions. These instructions described, in a rule-based way, what was to be done in which case and thereby restricted the field of operation, because (human) programmers have limited resources to explicitly program rules for many situations. Systems based on ML, however, consist of instructions on how to recognize new rules from data and can thereby also discover new distinctions, some of which were previously unknown to humans (Berente et al., 2019; Shollo et al., 2022). The inferences drawn from ML-detected patterns, which are often probabilistic in nature, allow IAs to act and solve tasks in situations that were not explicitly envisioned beforehand⁵ (Faraj et al., 2018). The Otter.ai service, for example, can create a transcript and summary of a meeting on a new topic, even if its developers did not have this particular topic in mind when they created the tool. This is possible because today’s speech recognition and text generation models are trained on huge and diverse datasets; speech recognition models, for instance, learn from a wide variety of audio recordings to extract words across different pronunciations and dialects. Second, IAs can automatically make decisions and perform actions by applying rules to data independently of humans, which the literature refers to as “automated decision-making” (Shrestha et al., 2019), “agency” (Ågerfalk, 2020), and even “autonomy” (Hu et al., 2021). These abilities to learn and act independently of humans challenge established assumptions about how users interact with technology (Schuetz and Venkatesh, 2020), for example, regarding the direction of the user-artifact relationship and mechanisms of control and monitoring.
Third, IAs are, as we understand them, seamlessly integrated into human environments, with few technical barriers to use. For example, they come with natural language interfaces, like chatbots used in customer support or voice assistants that control home automation systems. This seamless integration of IAs into human environments takes place not only at the individual level but also at the team level, for example, when the widespread collaboration tool “Slack” identifies colleagues talking about similar topics and suggests involving them in a conversation on the premise that this enhances human teamwork (Woyke, 2018). A similar example is the automatic assignment of responsibilities for incoming inquiries, which can be delegated to Slack (Slack, 2023). While current artificial intelligence applications often focus on one of these three characteristics (e.g., pattern detection systems in business analytics frequently have no agency, and conventional industrial robots act independently but do not learn from data), IAs realize all three together. Hence, our study focuses on IAs as one type of intelligent technology.

The emergence of IAs in organizations, for example, in the form of conversational IAs with natural language interfaces, calls current assumptions about knowledge work in teams into question: In hybrid teams, not only humans but also IAs might contribute to a team’s memory and interact to accomplish knowledge work. IAs, in fact, possess increasingly humanlike capabilities (Schuetz and Venkatesh, 2020), which allow them to be involved in different knowledge processes, like gathering, storing, and retrieving information. Salesforce’s CEO, for example, is reported to have included a conversational agent in quarterly analysis meetings to obtain an opinion on business performance that is not affected by human prejudice (Bort, 2017). Slack integrates ML-based large language models to automatically summarize chat conversations for team members entering a chat or to extract customer opinions from product reviews (Slack, 2023). The information systems (IS) field has started to examine different aspects of interaction between humans and IAs (Huysman, 2020). However, current research assumes that contributions of IAs take place at the level of individual users or developers (e.g., Grønsund and Aanestad, 2020; Schuetz and Venkatesh, 2020; van den Broek et al., 2021), at the level of organizations (e.g., Coombs et al., 2020; Günther et al., 2017; Shollo et al., 2022), or even at the level of entire economies (Brynjolfsson et al., 2017). Beyond that, we find that IAs can also interact with humans in hybrid teams, a field that remains largely unexplored. The lack of studies on team-level IA interaction, and on the technical competencies IAs need to carry out such work, is surprising, given that teamwork is an important approach to coping with the intricate, dynamic, and knowledge-intensive work of today’s challenging business environment (Choi et al., 2010; Salas et al., 2008; Zhang et al., 2011).

This study therefore draws on the literature on knowledge work in teams and investigates an early example of an IA that works together with multiple humans as part of a team. Following an abductive logic (which we explain in our method section), we explore the newly emerging shared memory structures, which Wegner (1986), drawing on psychological notions, described as the “group mind,” in hybrid teams of humans and IAs (which we see as an anomaly relative to human-only teams). We focus on the interaction and knowledge processes at the team level and pursue the research question:

How can hybrid teams of humans and intelligent agents carry out knowledge work and use each other as mutual memory aids to form a group mind?

To answer this question, we conducted an in-depth qualitative case study on IAs, more specifically on intelligent conversational agents that help to improve the health, lifestyle, and personality of individuals. Our case is a software platform for digital health interventions called MobileCoach that assists patients, health professionals, and other actors in health- and lifestyle-related activities. Instances of that platform are IAs that go well beyond operating as data processing and communication tools, as they act independently within hybrid teams. The critical case that we selected is an early yet well-suited example of IAs that possess all three characteristics outlined above together (pattern detection, automatic decision-making and acting, and seamless integration in work environments). Hence, it offers a unique opportunity to investigate how IAs interact with humans to form hybrid teams. Our abductive analysis led us to systematically review the literature on, and to expand, the theory of transactive memory systems (TMS), which is the core theory of the group mind of human teams.

Classical TMS explains how individuals use different memory types and processes to pursue knowledge work and collaborate to achieve effective outcomes (Wegner, 1986, 1995). In contrast, our study demonstrates that hybrid care teams use each other as memory aids and can jointly develop a new form of TMS, which we describe as Transactive Intelligent Memory Systems (TIMS). In this context, humans consider and use IAs as part of their transactive memory when an IA’s task mastery is sufficient for the task’s complexity, and otherwise as external memory. Likewise, IAs can establish a novel form of memory that is comparable to the individual human memory known from TMS. This form differs from the traditional technical understanding of memory attributed to technology so far, in which memory is used as a synonym for a tool’s storage capability rather than an intellectual memory. According to our new conceptualization, IAs can also possess a new form of knowledge, which is comparable to the tacit knowledge of humans. Hence, IAs can complement and augment humans in knowledge work and mitigate skill shortages and productivity gaps in many modern economies (cf. Willcocks, 2020). This new conceptualization of hybrid teams helps to systematically study collaboration modes in these groups of humans and IAs. The concept also allows formulating requirements for the effective design of IAs so that they can participate in such collaborations.

Before we give a detailed account of our study, the next section briefly summarizes the literature on knowledge work in organizations and introduces the theory of TMS. In the third section, we describe our case study and abductive research approach. We present our findings in the fourth section and discuss their implications for research and practice in the fifth section. We close the paper with a summary of the study’s contributions.

Theoretical background

In the following, we summarize literature on knowledge work in organizations, particularly in teams, and give an overview of studies that investigated classical IT as well as intelligent technologies supporting knowledge work and teamwork. This section closes with a brief introduction to TMS theory, which emerged in our abductive study as the most promising theoretical lens for explaining situations in which IAs join teamwork.

Knowledge work in teams

Humans engage in teamwork to pursue the intricate, dynamic, and knowledge-intensive work that characterizes today’s challenging business environment (Choi et al., 2010; Salas et al., 2008; Zhang et al., 2011). Hence, organizational knowledge work relies on the exchange of knowledge within teams in which individuals collaborate to accomplish a task (Davis and Naumann, 1997; Drucker, 1994; Frenkel et al., 1995; Schultze, 2004). Team members develop and use a common base of knowledge and experience that supports them in establishing jointly valued goals (Kitaygorodskaya, 2006; Klimoski and Mohammed, 1994; Salas et al., 2008).

Humans who work in teams need a different skill set from those who work individually. While technical competencies (i.e., substantive knowledge, skills, and abilities related to the team’s objective) are, according to Larson and LaFasto (1989: 62), “the minimal requirement on any team,” interpersonal competencies enable knowledge exchange and the creation of a shared group mind (Larson and LaFasto, 1989; Sapsed et al., 2002).

Teams can solve tasks that individual workers cannot, particularly because team members use each other as sources of knowledge and inspiration, form a joint group mind (Wegner, 1986), and distribute cognition within organizations (e.g., Hutchins, 1995; Lehner and Maier, 2000; Nelson and van Osch, 2017; Nevo and Wand, 2005). Team members can share, for example, their tacit knowledge, which is hard to articulate (McAdam et al., 2007; Nonaka and von Krogh, 2009; Polanyi, 1958), with team members in their close collaboration network. While team members exchange a significant portion of knowledge to enable efficient teamwork (Hutchins, 1995), essential knowledge and skills remain heterogeneous (Zhang et al., 2020) and unevenly distributed within the team (Choi et al., 2010).

IS research has spent considerable effort investigating how IT can support knowledge work in teams. This research can be categorized into the design and effective use of IT for coordination, collaboration, and communication (Borghoff and Schlichter, 2000). Studies demonstrate that these IT systems, for example, help team members to communicate and collaborate face to face (Zhang et al., 2011) as well as virtually (Fang et al., 2022; Hansen et al., 2019) and serve as individual and collective knowledge repositories (Oshri et al., 2008). However, these IT systems operate in a relatively narrow problem space in which developers had to implement every function in software code. Users of knowledge repositories (e.g., expert systems and wikis) had to manually enter codified knowledge in a well-defined format (Schuetz and Venkatesh, 2020).

Intelligent technology support of knowledge work in teams

The proliferation of intelligent technology in organizations—as it happens, for example, with collaborative robots (Sowa et al., 2021), intelligent automation in knowledge and service work (Coombs et al., 2020), and decision support (Shollo et al., 2022)—significantly alters knowledge work in teams (Schuetz and Venkatesh, 2020). Scholars describe constellations where humans and IAs work together as “human–autonomy teams” (Endsley, 2017), “hybrid intelligence” (Akata et al., 2020), “hybrid knowledge work” (Oeste-Reiß et al., 2021), “metahuman systems” (Lyytinen et al., 2021), and “human-artificial intelligence hybrids” (Fabri et al., 2023; Rai et al., 2019). Given our focus on teamwork, we adopt the term hybrid teams.

These studies point to various capabilities in which intelligent technologies differ from traditional IT systems, as we illustrate in Figure 1. While IT systems in general offer data processing functionalities and can be used to support knowledge processes, the subcategory of intelligent systems consists of more dynamic variants, which can monitor their environment through sensors and employ rules and reasoning facilities to make inferences (Schuetz and Venkatesh, 2020). Several applications in the field of artificial intelligence, to which intelligent technology and its subcategories belong, aim to mimic human intelligence (Chollet, 2019). This materializes in the form of robots, natural language interfaces, and ML algorithms that dynamically extract patterns from data and thereby learn from the environment (Endsley, 2017). ML allows intelligent technologies to adapt to a broader variety of conditions than classical IT systems, as they learn rules from the variety and depth of empirical data. Moreover, ML techniques can be used to discover patterns in data, which can be a source of novel knowledge that is generated independently of humans (Berente et al., 2019) but can sometimes be hard to understand (Berente et al., 2021).

Exemplary characteristics of system categories illustrating that intelligent agents are instances of the broader categories.

As we see them, IAs are a class of systems within the field of intelligent technology that, in addition to possessing the characteristics of their parent classes, combine natural language interfaces (e.g., chatbots and voice assistants) with ML, which enables their seamless integration into human environments. Important for this is the capability of automatic reasoning and decision-making, which gives them agency (Russell and Norvig, 2021). This means that IAs can act independently of humans. For example, IAs can participate in markets and automatically bid in auctions (Herrmann and Masawi, 2022), and they can recognize prediction errors in suboptimal forecasts and update their models accordingly. Systems in this category can thus, to some extent, compensate for failures that they make in operation and improve over time (Endsley, 2017). These capabilities distinguish IAs from other types of intelligent systems because “these systems now can change their behavior [and act] without direct human intervention” (Ågerfalk, 2020: 5).

A vivid discourse has emerged around the organizational applications of IAs. Yet, studies currently focus on three units of analysis. The first stream focuses on the interaction of humans and IAs at the level of individual users or developers (e.g., Endsley, 2017; Fügener et al., 2021; Grønsund and Aanestad, 2020; Kane et al., 2021; Lebovitz et al., 2021; Schuetz and Venkatesh, 2020; van den Broek et al., 2021). These studies examine, for example, the potential uses of IAs to support business processes (Wilson and Daugherty, 2020), the automation potential of tasks (Vimalkumar et al., 2021), and issues related to the inscrutability of ML models (Berente et al., 2021), but they do not center on the cognitive processes within a team’s group mind, which are the focus of the present study. A second stream focuses on the level of organizations (e.g., Benbya et al., 2021; Berente et al., 2021; Coombs et al., 2020; Günther et al., 2017). Research topics include value creation processes (Shollo et al., 2022) and new potentials for knowledge generation (Sturm et al., 2021). A third stream studies entire economies (e.g., Brynjolfsson et al., 2017) and assesses the economic impact of IAs and other intelligent technologies. A connected discourse on the negative workforce implications of automation through IAs (Frey and Osborne, 2017; Manyika et al., 2017) has recently turned into a research agenda for IS to better understand the meaningful use of intelligent technologies (Huysman, 2020; Riemer and Peter, 2020; Willcocks, 2020, 2021), which motivates our study. In addition to contributions of IAs at the individual, organizational, and macroeconomic levels, we argue that intelligent technologies in the form of IAs can also benefit humans at the team level because their aforementioned new capabilities differ from those of traditional IT.

Transactive memory systems (TMS) theory

To explain the complex interactions and knowledge-sharing mechanisms within teams, Wegner (1986, 1995) proposed the theory of TMS. The theory conceptualizes the development of the group mind with its processes, information structures, and the inferred knowledge in teams. TMS builds on the premise that individual human memory is limited and that we therefore use other individuals as external memory aids. TMS thus describes the set of individual memory systems together with the communication and interaction that take place between the individuals who hold this memory. Thereby, a collective system (i.e., the transactive memory) emerges. The development and exchange of knowledge constitute the key component of TMS (McAdam et al., 2007). As a sub-unit of organizational memory (Jackson and Klobas, 2008), TMS explains how team-specific interaction mechanisms and joint knowledge work are transferred to organization-wide activities and thus result in higher work efficiency (Nevo and Wand, 2005).

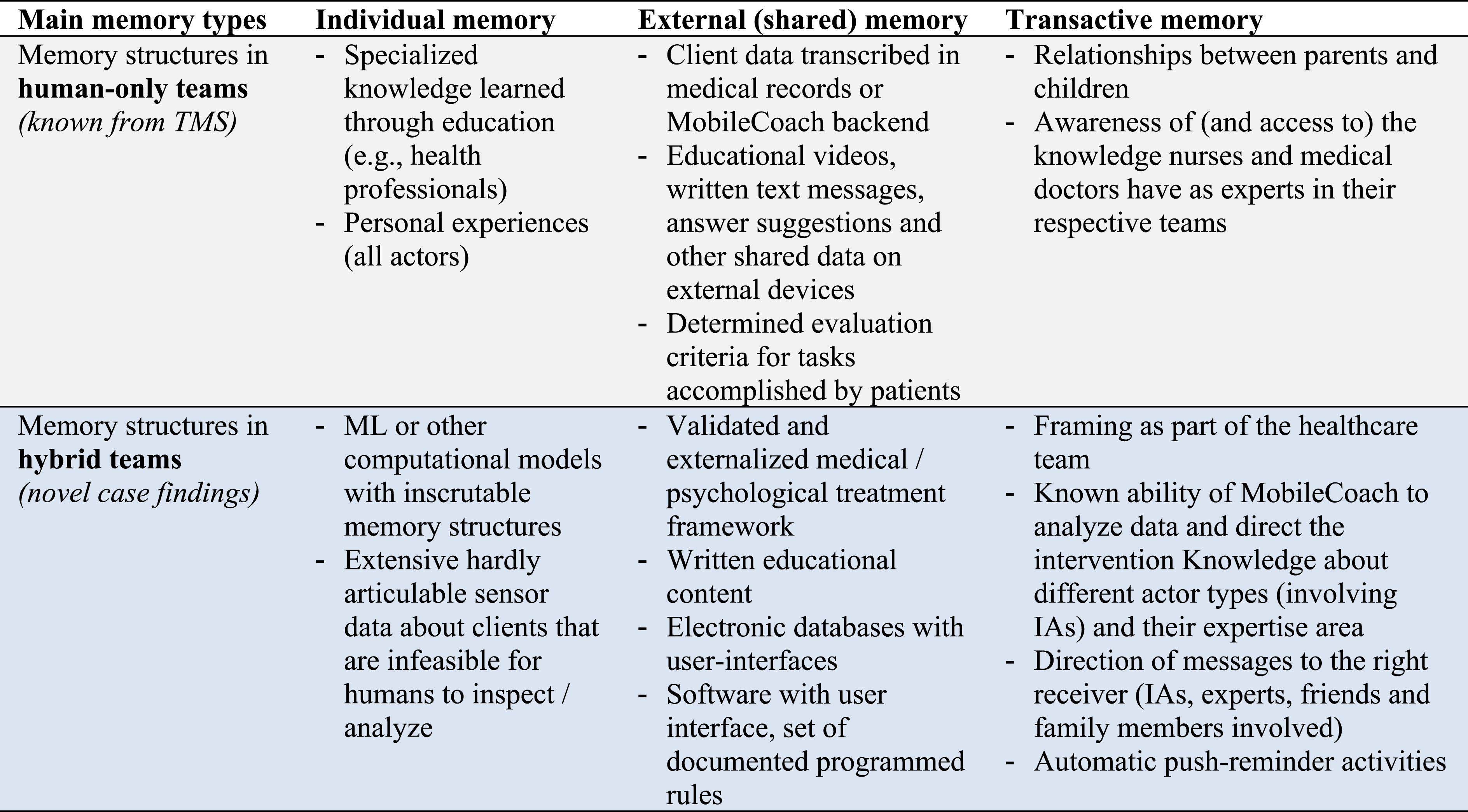

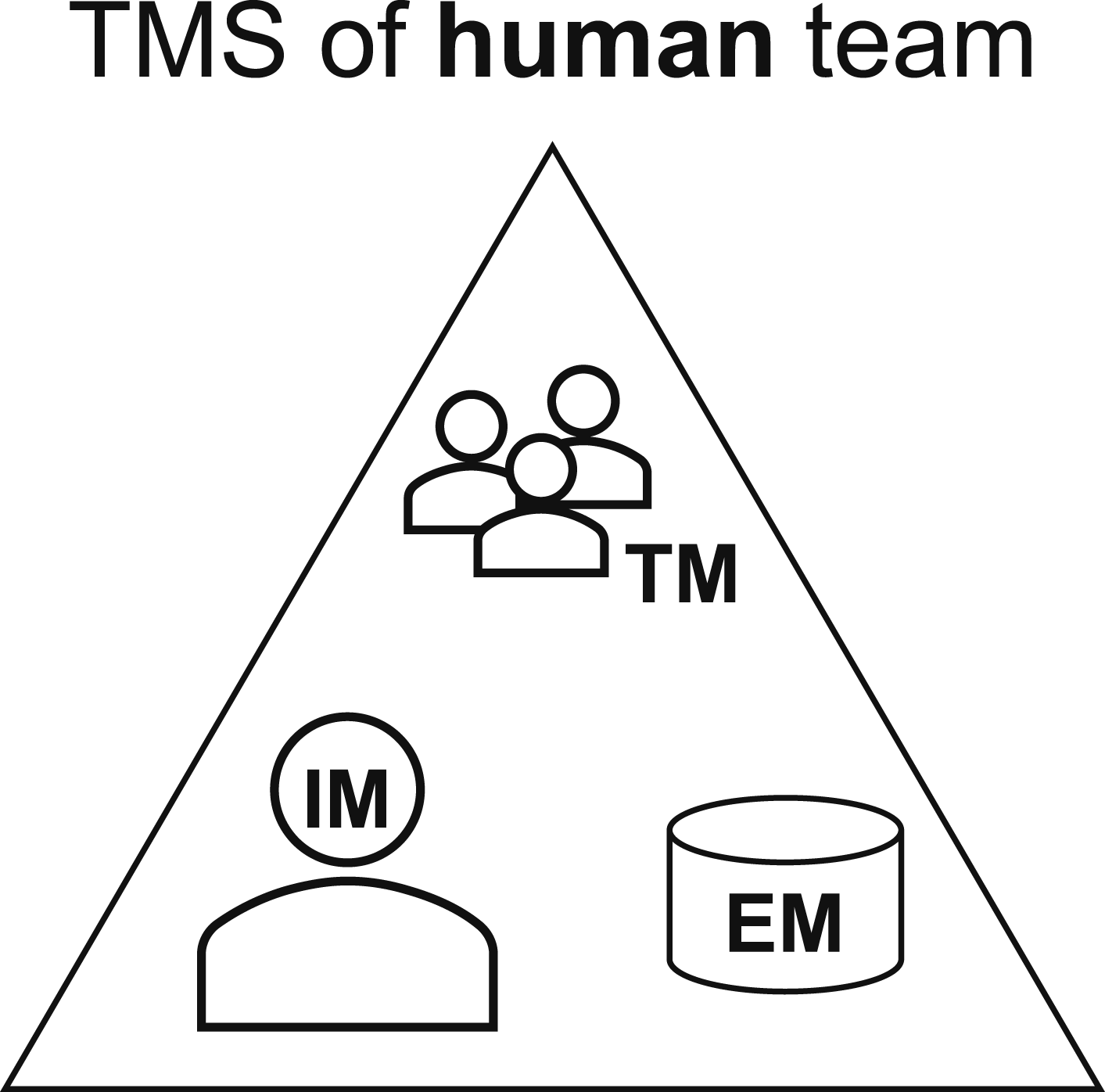

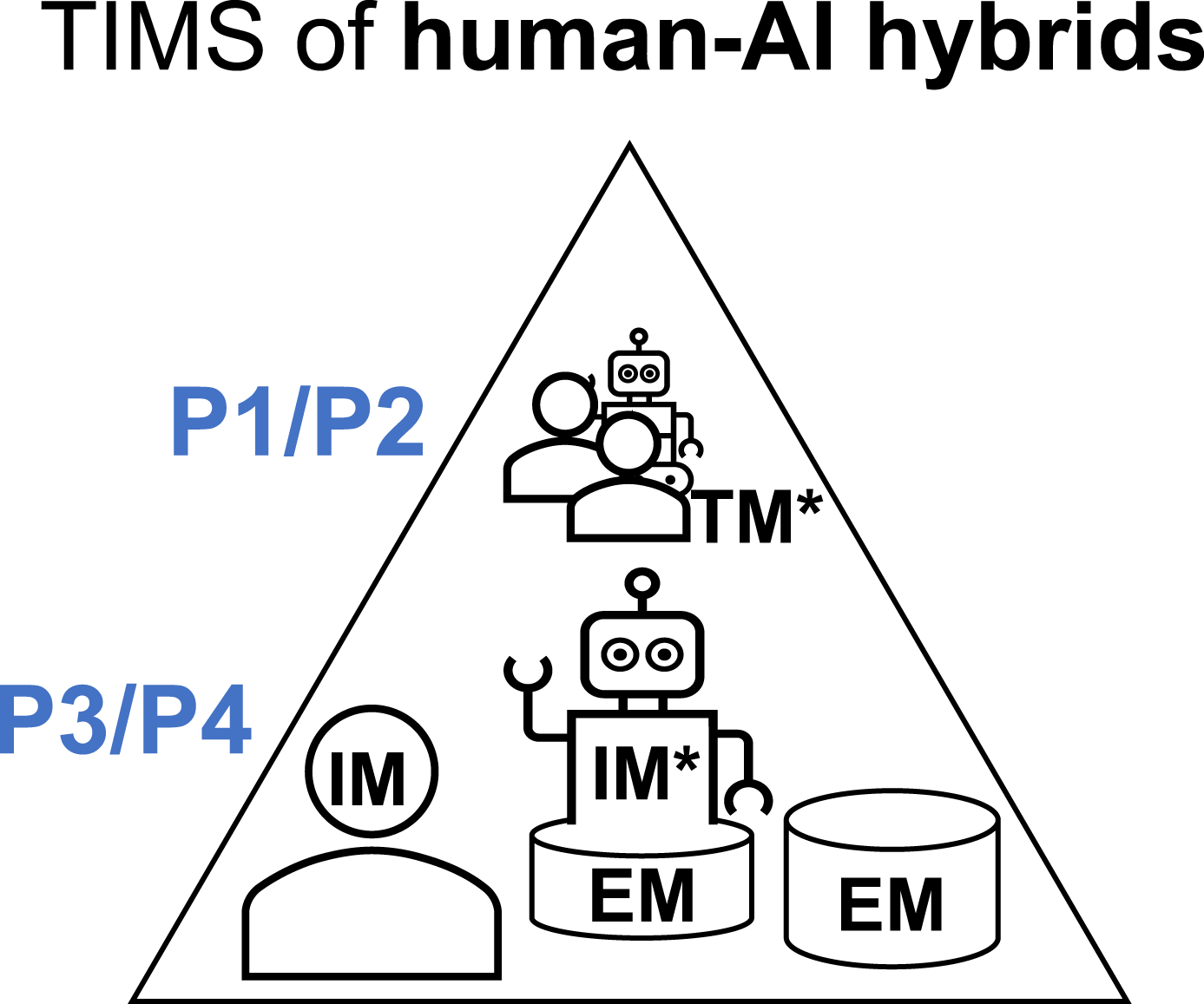

In detail, Wegner (1986, 1995) distinguishes between three main memory types (see Figure 2). With the individual memory (1), individuals encode, store, and retrieve information as part of their own information processing. Through the encoding and storage of information and knowledge in external storage systems (e.g., shared documents, databases, or intranets accessible to the team members), the external memory (2) emerges, while the directory to the stored knowledge is held in people’s individual memory. Transactive memory (3) occurs once other individuals and experts serve as external memory aids for each other, allowing the establishment of a collective and complex storage system for knowledge in which different persons collaborate to handle information and knowledge in the form of transactions. External memory aids simply represent storage objects that individuals use in addition to their individual memory to store information and knowledge. The literature distinguishes between human memory (memory) and machine memory (storage). The content of a technical memory must be triggered externally, and the memory itself is considered a delimited system that can be switched off as needed; human memory, in contrast, develops as a self-contained, autonomous process that cannot be externally deactivated and is in constant use. While humans have no control over the internal location of content in their memory, this location can be predetermined to a certain extent in technical memory. Content in human memory is retrieved by the person who owns it, while machine memory is not tied to a specific person (Lehner, 2021). Transactive memory, by contrast, emerges through using other individuals in the team as memory aids and knowing that another person can help to obtain this knowledge.
Simultaneously, a higher-order meta-memory evolves in the minds of individuals (Nevo et al., 2012), which stores meta-knowledge about the available knowledge topics and the directions for accessing them (Wegner, 1995). Consequently, individuals serve as mutual external memory aids and can benefit from the expertise and knowledge of other individuals in the team. This results in the development of a shared network of “who knows what” (Lewis and Herndon, 2011; Wegner et al., 1985). Encoding, storage, and retrieval of information and inferred knowledge are the three main transactive processes (e.g., Argote and Guo, 2016; Jackson and Klobas, 2008; Wegner, 1986).

Conceptualization of transactive memory systems with individual memory (IM), external memory (EM), and transactive memory (TM).
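To make the three memory types and the “who knows what” directory more concrete, the following minimal Python sketch models them as simple data structures. It is an illustrative toy model under our own simplifying assumptions, not an implementation taken from Wegner’s work or from this study; the names `Member`, `Team`, `encode`, and `retrieve` are hypothetical. For simplicity, the directory is shared by the whole team, whereas in TMS each individual holds their own meta-memory.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class Member:
    """A team member holding an individual memory (IM): topic -> content."""
    name: str
    individual_memory: Dict[str, str] = field(default_factory=dict)


@dataclass
class Team:
    """Hypothetical toy model of a transactive memory system (TMS)."""
    members: Dict[str, Member] = field(default_factory=dict)
    # External memory (EM): shared storage such as documents or databases.
    external_memory: Dict[str, str] = field(default_factory=dict)
    # Meta-memory of "who knows what": topic -> name of the responsible expert.
    # Simplification: one shared directory; in TMS each member holds their own.
    directory: Dict[str, str] = field(default_factory=dict)

    def add(self, member: Member) -> None:
        self.members[member.name] = member

    def encode(self, topic: str, content: str, expert: Optional[str] = None) -> None:
        """Encoding and storage: give content to an expert, or file it in EM."""
        if expert is None:
            self.external_memory[topic] = content
        else:
            self.members[expert].individual_memory[topic] = content
            self.directory[topic] = expert  # record who knows this topic

    def retrieve(self, asker: str, topic: str) -> Optional[str]:
        """Retrieval: own IM first, then the expert in the directory, then EM."""
        own = self.members[asker].individual_memory
        if topic in own:
            return own[topic]
        expert = self.directory.get(topic)
        if expert is not None:
            # A transaction: the asker uses another member as a memory aid.
            return self.members[expert].individual_memory.get(topic)
        return self.external_memory.get(topic)


if __name__ == "__main__":
    team = Team()
    team.add(Member("physician"))
    team.add(Member("psychologist"))
    team.encode("therapy_plan", "weekly sessions", expert="psychologist")
    team.encode("consent_form", "signed")  # no expert: stored externally
    print(team.retrieve("physician", "therapy_plan"))
    print(team.retrieve("physician", "consent_form"))
```

In this sketch, retrieval first checks the asker’s own memory, then follows the directory to another member (the transactive step), and only then falls back to external storage; this ordering is one plausible reading of the theory, not a claim made in the TMS literature.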

When realizing TMS, individuals rely not only on articulable explicit knowledge, which is stored in databases (McAdam et al., 2007), but also develop tacit knowledge, which is only implicitly available in the minds and memories of individuals. This form of knowledge, which is hard to articulate and difficult to formalize, also requires consideration in the collaboration processes of individuals in teams and workplace settings (McAdam et al., 2007).

Prior studies on TMS, which we identified and analyzed in our systematic literature review,⁶ highlight several shortcomings of traditional TMS theory and formulate associated research opportunities. Several studies point out aspects in which TMS does not sufficiently explain knowledge processes in groups of individuals (e.g., Ariff et al., 2011; Chang, 2005; Choi et al., 2010; Lewis and Herndon, 2011). Criticism includes that TMS research has mainly focused on face-to-face teams, temporary teams, or student teams, while teams in virtual work environments and larger collectives in organizations receive little research attention. Cao and Ali (2018) also argue that TMS research has widely ignored the role of creativity for task fulfillment quality in teams. Other authors emphasize the importance of continuous research on TMS theory to meet the demands of continuously changing work environments and technological advances (e.g., Chang, 2005). Moreover, the influence of technology on group knowledge processes is understudied. For example, Ali-Hassan and Nevo (2016) highlight the insufficient ability of traditional IT systems to integrate the domain expertise and communication skills of individuals. Similarly, Simeonova (2018), who examines TMS in the context of Web 2.0 applications, stresses the importance of interactive systems (as opposed to traditional technologies) for effective knowledge management in organizations and the need for further research on TMS in this area. With the advent of IAs, we see work environments significantly altered, which calls for a reassessment of TMS. While existing research has formulated potential benefits of big data and ML for TMS (McFadzean, 2017), we found no study examining our observation that IAs can become members of hybrid teams. This is surprising, given that a study by Sparrow et al. (2011) indicated that humans include online search engines as part of their individual transactive memory.
Thus, it seems reasonable that teams also include IAs in their joint transactive memory.

In summary, traditional TMS theory focuses on human teams and provides a conceptualization of the memory structures and necessary processes. However, conventional TMS theory falls short of providing descriptive models of how these complex structures are concretely implemented in today’s dynamic work environments. Given recent technological developments, including the expanded capabilities of intelligent technologies that go beyond purely tool-based functions, it is crucial to empirically examine how TMS needs to align with these developments and to provide first descriptive approaches to this novel phenomenon.

Method

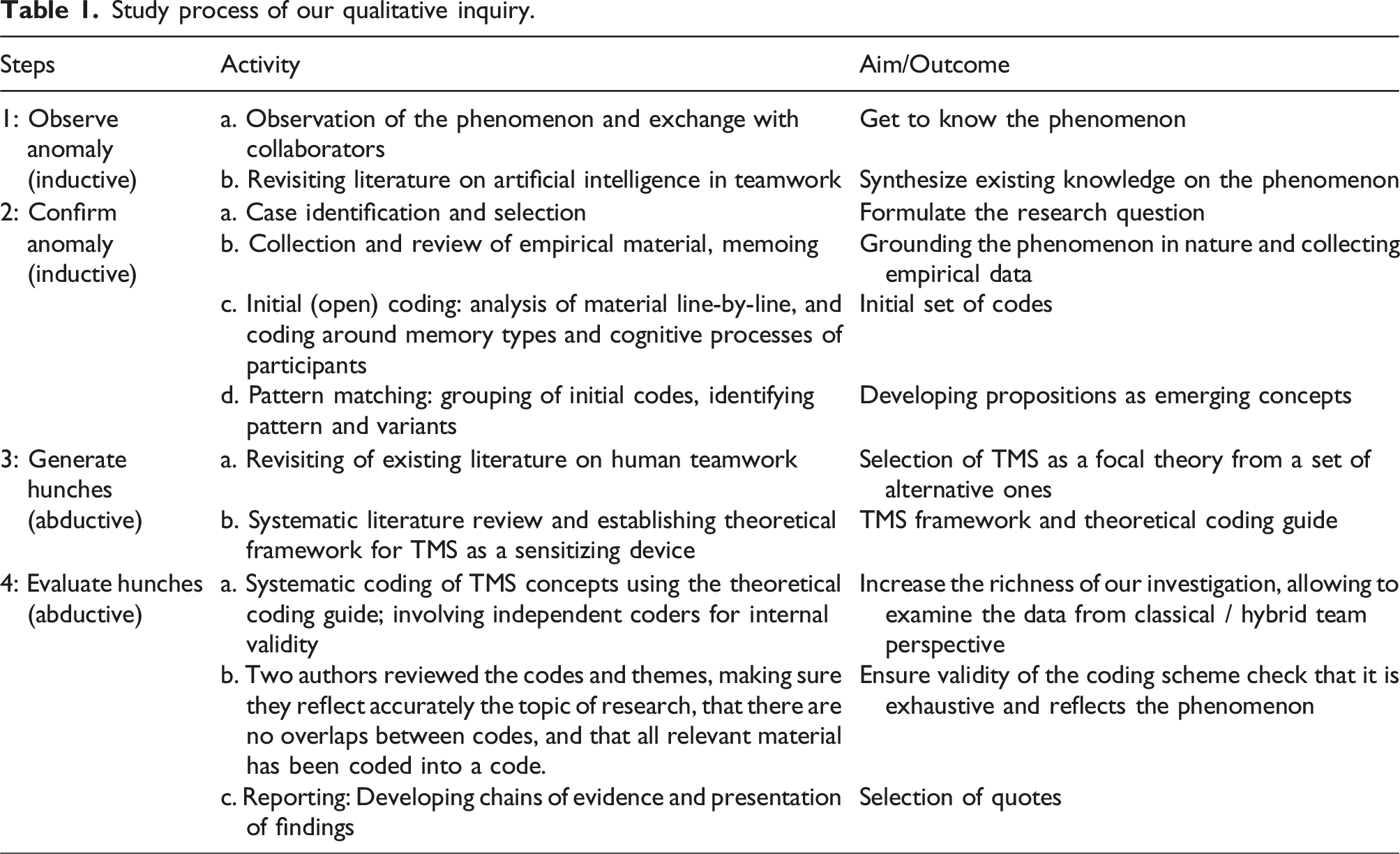

Starting from the observed anomaly that some IAs enter human teamwork (i.e., a practical phenomenon that current theory cannot explain), we selected an early example of a situation in which IAs join teams of humans and thus form hybrid teams: the software platform MobileCoach for digital health interventions, whose instances are intelligent conversational agents (i.e., IAs with all three characteristics: pattern detection, automatic decision-making and acting, and seamless integration in work environments) that aim to improve the health, lifestyle, and personality of individuals. We carried out an in-depth qualitative case study (Yin, 2018), which allowed us to combine fieldwork, observations, and desk research to gain deep insights into the functioning of TMS in the context of hybrid teams. In the sense of “systematic combining” (Dubois and Gadde, 2002: 129), the case study approach enabled us to follow an abductive logic, which is well suited to studying anomalies and allows us to leverage inductive as well as deductive reasoning. The approach also allows us to capture the breadth of the theory and the richness of the application context to ultimately advance theory (Lee and Baskerville, 2003). The case study uncovered agreements and differences with existing TMS theory. With abductive reasoning (Reichertz, 2019; Sætre and Van de Ven, 2021; Zamani and Pouloudi, 2021), we developed the construct of Transactive Intelligent Memory Systems (TIMS) as an extension of classical TMS. In doing so, we moved back and forth between empirical discovery and theory to expand our understanding of both the theory and the empirical phenomenon (Dubois and Gadde, 2002). As part of our abductive process and the generation of different ideas (i.e., hunches), we conducted a systematic literature review to rethink the theoretical insights and sensibly recombine them with our case findings.
We examined interactions and knowledge sharing mechanisms within hybrid teams, as well as factors that facilitate effective implementation of the group mind.

Study process of our qualitative inquiry.

Observation of the anomaly (step 1)

We observed on multiple levels the anomaly that IAs are starting to enter teamwork. First, at a personal level, two of the authors independently found, in 2019 when we started this project, that the contemporary conception of teamwork could not explain what happens to human teams when IAs can perform intellectual activities at a level comparable to humans. Sætre and Van de Ven (2021: 586) point to the “important role of collaborators and co-authors” in the process of abduction. Second, we substantiated this anomaly at the level of the scientific literature by conducting a literature review on artificial intelligence in teamwork. This broad search led us to several theories as possible explanations, which we consider later (in step 3a) as “hunches” (Sætre and Van de Ven, 2021). Finally, we searched for other practical examples in which intelligent systems enter teamwork and found, for instance, the Salesforce and Slack scenarios that we used to motivate this article.

Case selection and description (step 2a)

As an empirical case, we selected the open-source software platform MobileCoach

7

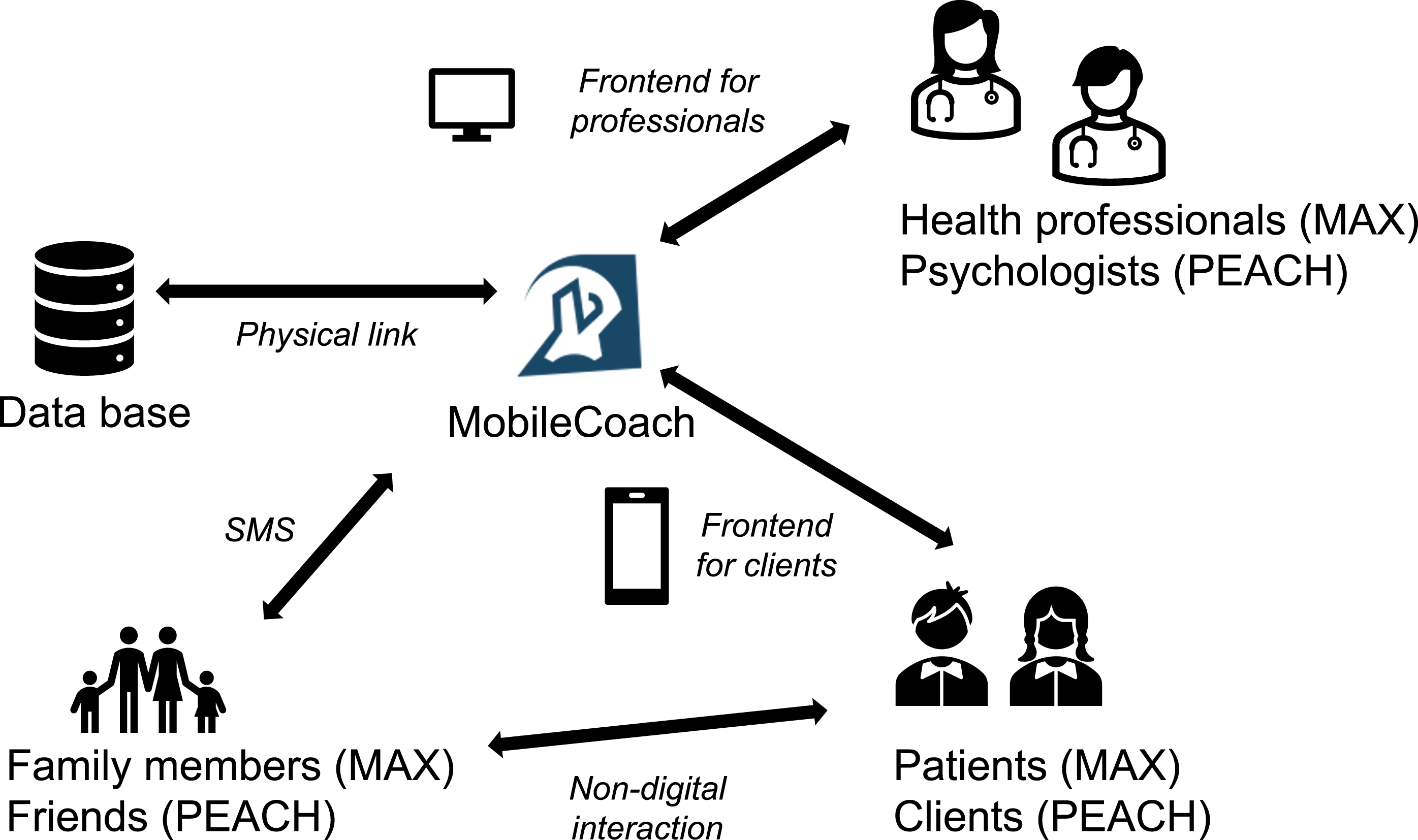

(Filler et al., 2015; Kowatsch et al., 2017) with two of its conversational IA instances that supports interdisciplinary teams of experts in their efforts to change people’s health, lifestyle, and personality. Achieving such changes in the lives of individuals requires an interdisciplinary team of clinical or psychological experts, that work together. The study site, with its two instances, is a critical case (Yin, 2018: 49) because we had the opportunity to study teamwork in a knowledge-intensive field with the participation of an IA actor as part of the (health care) intervention team. The case is an early, yet predestinated, example of a situation where IAs enter human teamwork because the IAs exhibit all three characteristics: ML models are used to detect pattern, for example, in smartphone usage data to predict the best point in time to send messages to the users of the IA so that they mostly reacts to it (e.g., while commuting, after a call, and during breaks). The IAs exhibit a high degree of agency as they acted as an intermediary between clients/patients, health professionals/psychologists, parents/supporting family members, as we illustrate in Figure 3. Thus, the IAs guided all team members in the care team through the respective intervention, reminded, for example, for upcoming activities and tasks to perform, and educational resources. The IA made a high number of respective decisions independently of humans (e.g., timing of messages, sending messages to the respective team member, and more than 99% of conversations with the child patients). Finally, the IA was tightly integrated in the everyday life of its patients / clients as it was an application on the personal smartphone and, thus, very accessible. Relations between MobileCoach and team members.

The fact that IAs joined human care teams allows us to study new team constellations in their breadth and depth, given our unique access to members of the research group that developed and evaluated the platform and the IA instances in several clinical and non-clinical studies. These first-hand insights enabled our abductive investigation.

The choice of the case was further theoretically guided by four perspectives on knowledge-intensive work (Remus, 2002: 116): process, knowledge, employee, and task. The core domains involved in our case (medicine and psychology) are knowledge-intensive fields. From a process perspective, the treatment process can become highly complex and specific because experts from different professions as well as patients or clients collaborate in the care team. From a knowledge perspective, the processed knowledge is, due to its intellectual nature, highly complex and strongly context-dependent, has a high turnover, and is often difficult to access. From an employee perspective, team members must be innovative and creative, and they must demonstrate a certain degree of autonomy and expertise to make decisions in critical situations. From a task perspective, medical and psychological treatments are characterized by long learning periods for the involved actors and by continuous communication.

The MobileCoach platform enabled several IA instances that realize different types of interventions to alter human behavior. When we started the study, two instances, MAX and PEACH (which we introduce below), met our selection criteria and analysis objective (i.e., targeting knowledge-intense teamwork). We consider these two instances as dependent embedded units of the overall case study (Scholz and Tietje, 2002; Yin, 2018: 48). This means that our findings related to the overarching MobileCoach platform apply to its instances as well. Moreover, findings related to the two instances may, under comparable circumstances, apply to other instances not considered in this study (e.g., a finding related to a particular predictive model can be expected to also occur when a similar (future) predictive model is used in another MobileCoach instance).

Study site 1: Interventions related to asthma (MAX)

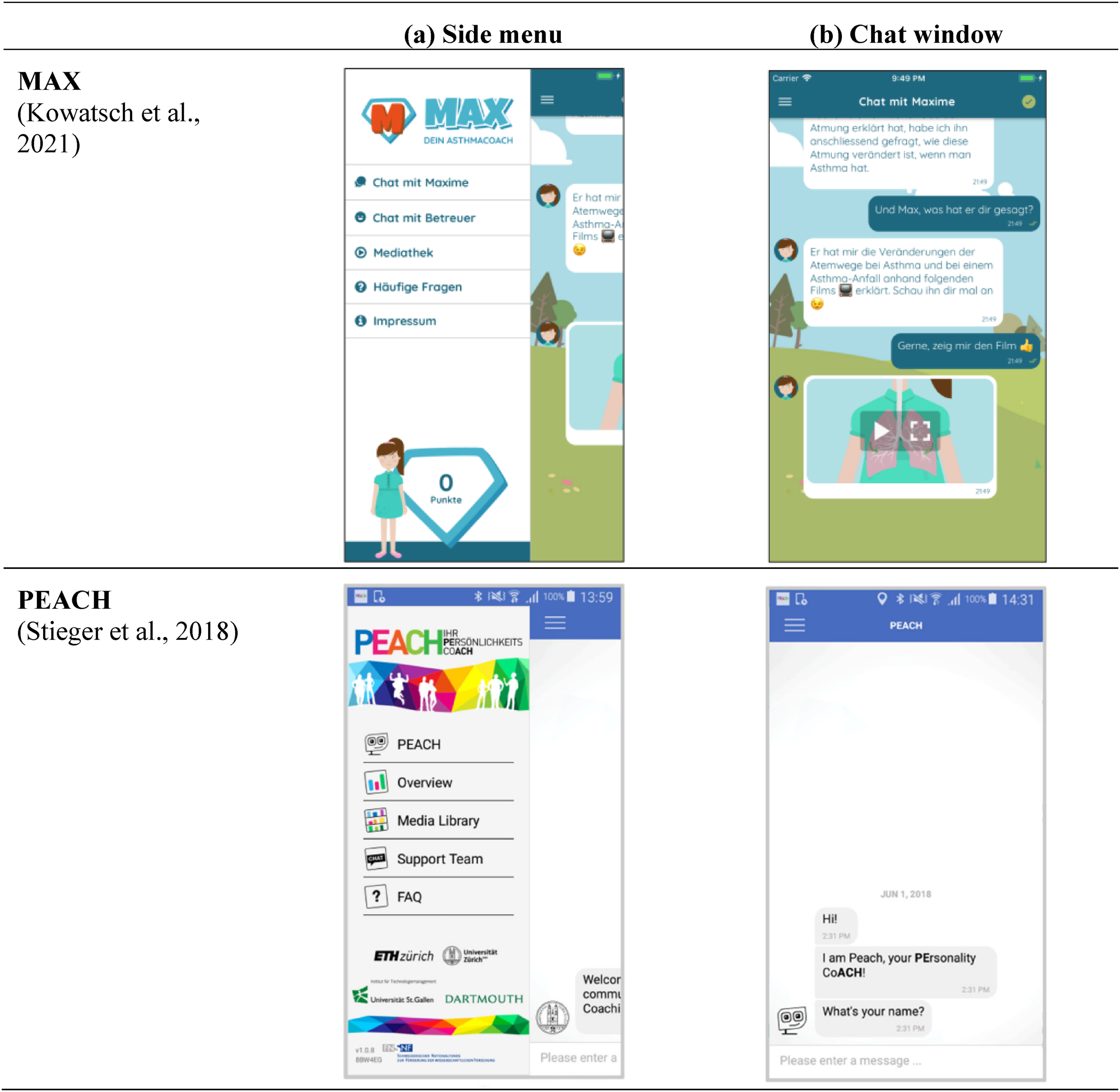

At the core of this study is the MobileCoach instance MAX, which was an IA that realized a digital health intervention to treat asthma among 10–15 years old patients. The IA coordinated the joint efforts of the healthcare team. The unique feature of this application was the integration of child patients, their parents, and health professionals (e.g., medical doctors and asthma experts) in a single environment. Interactive elements (e.g., videos, quizzes, and reminders) were combined with a chatbot system, as well as gamification elements to increase treatment adherence (see screenshots in Figure 4). The application received three Media Awards at the WorldMediaFestival (2018 and 2019) and was validated in a medial study (Kowatsch et al., 2021). As an extension to this IA, the research group examined ML methods to detect nocturnal coughs to count them as a measurable biological marker, which refer to a measurable feature of a clinical condition to inform and examine therapeutic interventions (Barata et al., 2020; Tinschert et al., 2017). Screenshots of the intelligent agent instances (all images released under Creative Commons CC-BY-NC-ND 4.0).

Study site 2: Interventions to change personality traits (PEACH)

The second study site centers on PEACH (PErsonAlity CoacH), an IA that helped individuals change personality traits, that is, decrease or increase their neuroticism, conscientiousness, extraversion, openness, or agreeableness (Rüegger et al., 2020; Stieger et al., 2018, 2021). Interested participants (clients) could sign up for a ten-week conversational agent-based coaching intervention for intentional personality change (screenshots in Figure 4). As part of the intervention, clients set and reviewed daily goals regarding the personality trait they had selected to change, completed short questionnaires, and could access daily film clips and educational material as well as a dashboard with indicators on their behavior. The features that made this study site relevant are the interactive materials, the agency of the IA, and the fact that researchers examined ML methods to predict a user’s state of receptivity (i.e., learning from user behavior to send reminders at suitable times) as well as a user’s personality and its change (e.g., to tailor the intervention to the client).

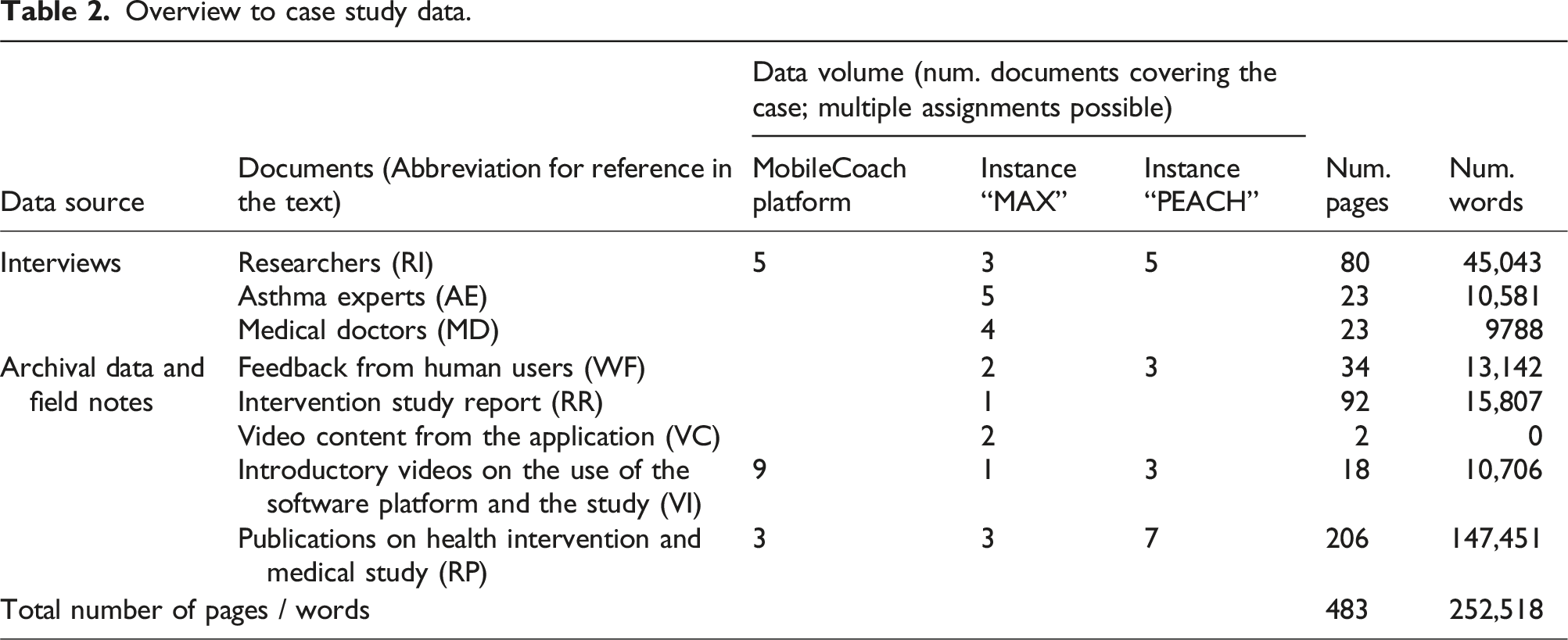

Research data collection (step 2b)

Overview of case study data.

Data analysis (step 2c–3a)

We conducted several rounds of analysis of our case study data. In the second step of our abductive approach, we started with initial (open) coding in the style of Glaser (1978) to confirm the anomaly. We analyzed the data line by line and coded observations that describe the assemblage of individuals and the IA (Akhlaghpour et al., 2013; Orlikowski and Iacono, 2001) and related phenomena. In this analysis, we found evidence of different memory types and cognitive processes among human and IA participants. To further confirm our observations, we grouped our initial codes into broader themes in a second coding round. To this end, two members of the author team first analyzed the coded segments independently and subsequently discussed them jointly to resolve any differences and disagreements that emerged in our interpretation. In doing so, we refined the coding, added further codes, and merged conceptually similar codes into main categories as emerging concepts (Miles and Huberman, 1994).

Based on these emerging concepts and our initial literature analysis (see step 1), we started, in the third step of our approach, to formulate several alternative hunches as plausible explanations for the anomaly (Sætre and Van de Ven, 2021). The formulation of hunches was mainly driven by empirical data and by theoretical ideas from our initial literature analysis, and it was supplemented by discussions within the author team and with other colleagues. To evaluate each hunch, we revisited the existing literature on teamwork in the respective theoretical domain. Appendix B lists the identified alternative hunches as an interim result of this third step.

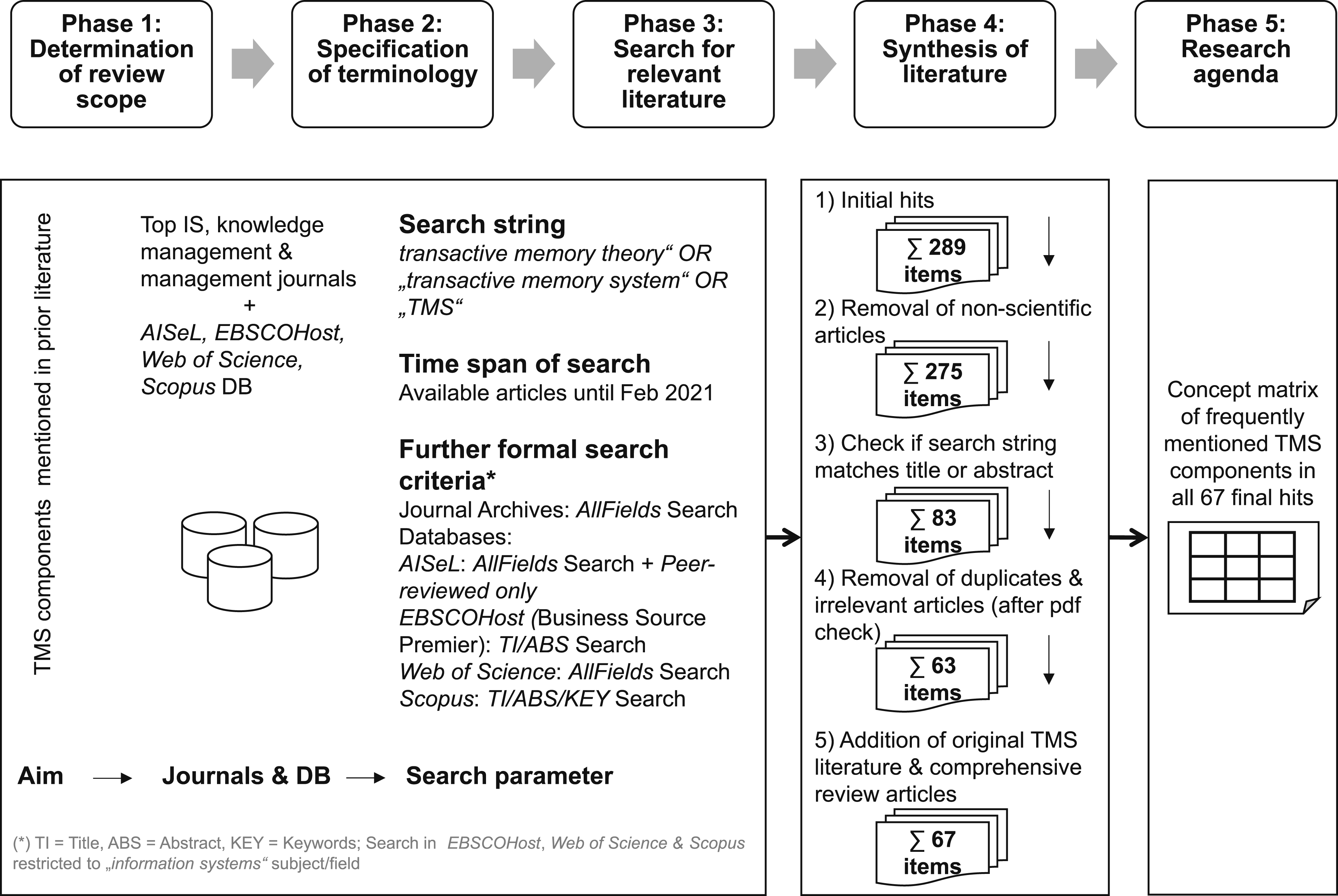

Establishing a theoretical framework on transactive memory systems (step 3b)

TMS emerged as the most suitable theoretical frame for our abductive reasoning. To obtain a comprehensive picture of the state of the art, we systematically reviewed leading IS and (knowledge) management journals and conferences on TMS (following vom Brocke et al., 2009; Webster and Watson, 2002) to identify key theoretical concepts. We also checked whether studies had already investigated TMS in the context of artificial intelligence. Figure 5 visualizes our literature review approach, which was organized in five analysis phases. Appendix C contains our full literature review procedure, a list of the core journal websites and further scientific databases covering additional relevant journals and conferences that we included, and the detailed concept matrix with all analyzed papers and their synthesis according to TMS.

Literature review process.

As part of the terminology specification (phase 2) and literature search (phase 3), we queried the journal archives and the databases using the search string “transactive memory system” OR “transactive memory theory” OR “TMS” 8, considering all English-language articles published up to mid-2022. We specified no limiting search parameters, except that we included only peer-reviewed articles. In the AIS electronic library, we searched all fields; in EBSCOHost, Scopus, and Web of Science, we searched titles, abstracts, keywords, and the IS subject field, depending on the search options available in each database. We restricted these three databases to the field of IS because they also cover a considerable amount of non-IS-specific content that is irrelevant to our study focus.

Our systematic literature search yielded 289 initial hits, which we synthesized in phase 4 of our literature review process. By focusing on research papers (i.e., removing other forms of contributions such as editorials, errata, and notes), we reduced this list to 275 items and manually reviewed their titles and abstracts for the occurrence of our keywords. This step further reduced the list to 83 articles, whose full texts we read in detail to judge their relevance. We considered an article relevant to our study if at least one of the following criteria was met: First, the study gives a detailed account of its understanding of TMS or refers, within the main body of the article, to at least one of the main TMS components described by Wegner (1986, 1995). Second, the study does not merely mention TMS as an outlook or in the limitations or future work sections. As a result of this synthesis, we retained a final list of 67 articles as relevant for developing a theoretical coding guide on TMS theory based on a concept matrix (phase 5).
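The screening funnel described above can be checked with a few lines of arithmetic. The following Python sketch is purely illustrative: the stage labels are our paraphrases, and only the counts (289, 275, 83, 67) are taken from the text.

```python
# Records remaining after each screening stage (counts from the text;
# stage labels are paraphrased).
STAGES = [
    ("initial database hits", 289),
    ("research papers only", 275),
    ("title and abstract screening", 83),
    ("full-text relevance check", 67),
]

def screening_funnel(stages):
    """Return (stage, remaining, excluded) for every stage after the first."""
    return [
        (name, remaining, previous - remaining)
        for (_, previous), (name, remaining) in zip(stages, stages[1:])
    ]

for name, remaining, excluded in screening_funnel(STAGES):
    print(f"{name:<30} kept {remaining:>3}, excluded {excluded:>3}")

retained = STAGES[-1][1] / STAGES[0][1]
print(f"retained overall: {retained:.1%}")  # prints: retained overall: 23.2%
```

The largest cut (192 records) happens at title and abstract screening, which is where the keyword-occurrence check is applied.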

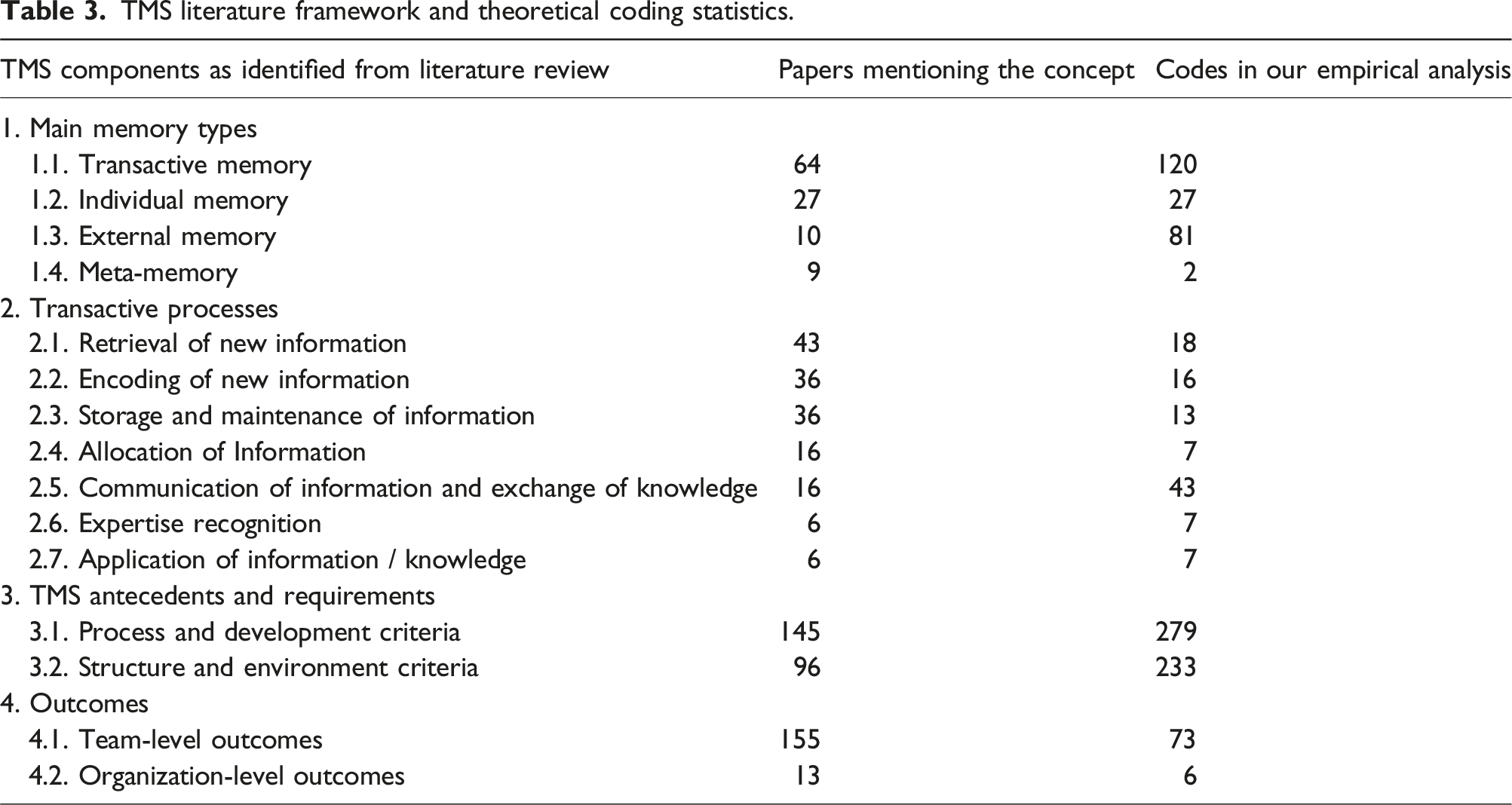

TMS literature framework and theoretical coding statistics.

Theoretical coding and abductive reasoning (step 4)

In our third round of analysis, we used the theoretical coding guide (overview in Table 3) and searched our data for occurrences of TMS components. In this coding procedure, we found components that TMS theory has not considered so far because they are specific to hybrid teams. These codes served to abductively extend the coding guide and develop the TIMS framework, which we present below. Appendix D provides the detailed coding guidelines and additional exemplary quotes from the data. In the whole qualitative data analysis, which we performed with the software MAXQDA, we analyzed 483 pages of case study data and assigned codes to 1813 text segments.

Findings

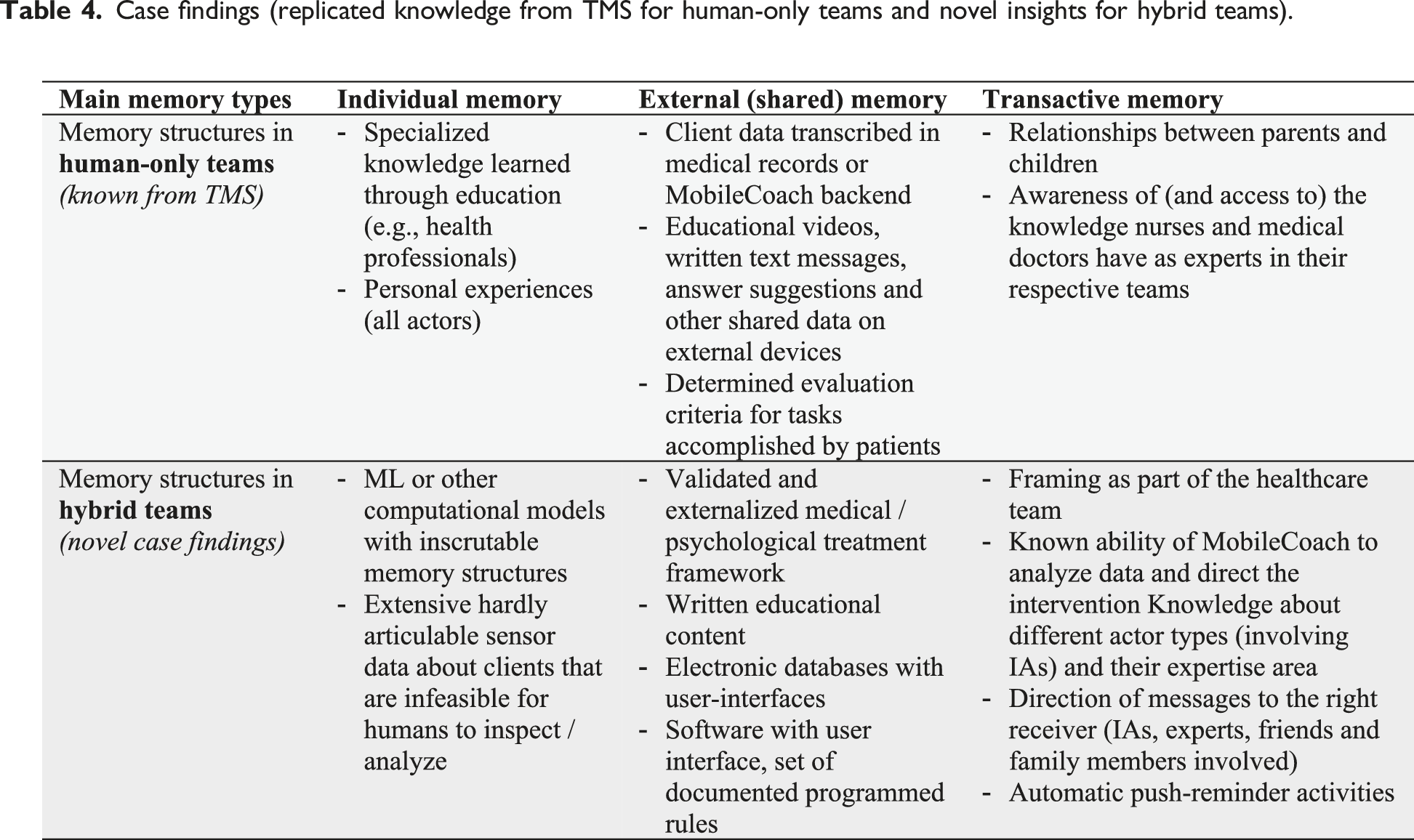

Case findings (replicated knowledge from TMS for human-only teams and novel insights for hybrid teams).

Individual memory

Our findings revealed that all members of the hybrid team (i.e., the human actors and IAs) in both study sites encoded, stored, and retrieved information in their individual memory as part of their information processing. Classical TMS theory is limited in explaining these capabilities of IAs in teamwork, given that the theory only considers humans as actors.

Individual memory of humans

Health professionals held plenty of knowledge (acquired through their extensive education and experience) that they recalled when consulting and treating patients. They stored this knowledge in their individual memory. Patients possessed some knowledge of and experience with their disease, which they kept in their individual memories. The child patients learned how “to perform inhalation independently” (MD4 10) and acquired additional knowledge about their medical condition. Yet, the processing capabilities of patients were limited: they could only partly remember circumstances and problems they had already faced and had to rely on external memory aids. The PEACH smartphone application was seen as a tool to store and retrieve personal development goals, e.g., simple reminders on a client’s everyday activities (e.g., goal setting and achievement).

Individual memory of IAs

Similar to humans’ individual memory, IAs accumulated information through task execution and data processing, building their own individual memory. The smartphone applications collected data through sensors or questionnaires in the chat environment (e.g., mood and environmental conditions). Much of that information was either not directly relevant to humans in its original representation (e.g., technical data structures or log files), hardly accessible to humans (e.g., because it was hidden in technical environments), or at least often hard to interpret.

ML algorithms “learned” rules from data, for example, in the case of cough detection using microphone data or the models that estimated the state of receptivity, that is, the moment when the smartphone user is most likely to react to a notification of the IA. For that purpose, the smartphone application continuously recorded and analyzed smartphone sensor data, made automatic distinctions, and initiated respective actions (e.g., sending a message or counting a nightly cough). This information processing happened without any human notice or interaction. The IA itself became an active member of the team, possessing skills and abilities comparable to those of humans, rather than being merely a means to store and compute. The internal rules of the ML models were inscrutable to humans (e.g., an artificial neural network consists of learned numerical weights on the connections between its units, and a support vector machine is defined largely by its support vectors). Even when analyzed in detail, the more advanced and complex models could be understood only by a small group of knowledge workers with highly specialized skills. Regarding the use of the ML models in the IAs, we found that care team members had no need to inspect the models. An ML researcher explained this with an analogy: “Imagine my eyes look at something concrete, calculations are made, or the calculations when my ears elicit a noise. I am not even aware of what they do, am I?” (RI2)
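To make the idea of a receptivity estimate concrete, the following Python sketch uses a deliberately simple stand-in: a smoothed per-hour response rate computed from a notification log. It is not the ML model used in the MobileCoach instances, which drew on smartphone sensor data; the log format, the function names `estimate_receptivity` and `best_hour`, the Beta(1, 1) prior, and the allowed hours are hypothetical assumptions.

```python
from collections import defaultdict

def estimate_receptivity(log, alpha=1.0, beta=1.0):
    """Smoothed response rate for each hour of the day (hypothetical stand-in).

    log: iterable of (hour, responded) pairs, hour in 0..23, responded a bool.
    alpha, beta: pseudo-counts of a Beta prior, so that hours without any
    notifications fall back to alpha / (alpha + beta) = 0.5.
    """
    sent = defaultdict(int)
    answered = defaultdict(int)
    for hour, responded in log:
        sent[hour] += 1
        answered[hour] += int(responded)
    return {h: (answered[h] + alpha) / (sent[h] + alpha + beta) for h in range(24)}

def best_hour(log, allowed_hours=range(8, 21)):
    """Return the allowed hour with the highest estimated receptivity."""
    scores = estimate_receptivity(log)
    return max(allowed_hours, key=lambda h: scores[h])

# Hypothetical log: (hour of notification, whether the user reacted)
log = [(8, False), (8, False), (12, True), (12, True), (12, False), (18, True)]
print(best_hour(log))  # 18
```

A realistic receptivity model would condition on context (e.g., whether the user is commuting or has just ended a call) rather than on the hour alone; the smoothing merely keeps rarely sampled hours from dominating.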

Other manifestations of the IA’s individual memory were computational models that used implemented procedures to analyze data. Although the programmers knew these models, other individuals did not want to see or comprehend them in detail. The psychologist behind the PEACH intervention, for example, pointed to a traffic light component in the app that helped participants check their status regarding their goals: “This algorithm calculated whether participants were still heading in the right direction … they got a traffic light in green indicating: ‘Hey, you're on the right track’, red meant: ‘You are kind of introverted although you want to become extrovert.’” (RI2) Similarly, there was no functionality to inspect all database entries on individual users and their behavior (e.g., timestamps of digital coach usage, achieved points in tasks) because much of that information was purely technical. In addition, users could skip “adaptive reminders” (RI5) for tasks, but these tasks remained important. In the PEACH intervention, such tasks were simply re-integrated into the next day’s conversation with the client, likely without the client noticing that this was a task skipped in an earlier session.
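The traffic light logic quoted above can be read as a comparison between the direction of the observed change and the direction the client wants. This Python sketch is a hypothetical reconstruction: the interview only mentions green and red, and the function name, the score scale, and the `tolerance` parameter are our assumptions, not the PEACH implementation.

```python
def traffic_light(baseline, current, target_direction, tolerance=0.0):
    """Map a client's trait change to a traffic-light color (hypothetical).

    baseline, current: trait scores on an assumed common scale.
    target_direction: +1 if the client wants to increase the trait, -1 to decrease it.
    tolerance: assumed slack that still counts a small setback as "on track".
    """
    if target_direction not in (1, -1):
        raise ValueError("target_direction must be +1 or -1")
    # Positive progress means the score moved in the desired direction.
    progress = (current - baseline) * target_direction
    return "green" if progress >= -tolerance else "red"

# A client who wants to become more extraverted but whose score dropped:
print(traffic_light(baseline=3.0, current=2.5, target_direction=1))  # red
```

Multiplying by the target direction lets one rule cover both "increase" and "decrease" goals.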

External memory

From a memory perspective, our data confirm TMS theory in that individuals use IAs as external memory in the form of a collectively accessible knowledge repository. Yet, according to our data, IAs use external memory as well.

External memory of humans

As humans’ capabilities to process and analyze a long history of detailed time series data (e.g., regular intakes of medication, times of inhalation for each patient) were limited, they used many forms of shared external memory. In addition to classical forms of external memory (e.g., notes and medical records), the digital coach was often cited as a tool that stored additional data on a patient. Examples include the chat protocols and the status of patients’ learning progress (learning sessions they had already completed). The digital coach also supported the evaluation of the inhalation videos with a standardized criteria catalog: “the assessment was really well organized. ... standardized criteria helped not to forget anything, and you don't have to think again about how to write it.” (AE1) Complementarily, a functionality allowed storing notes about the ratings of inhalation videos. Patients or clients could access shared learning resources like videos, explanations, and quizzes to test their knowledge. In the PEACH intervention, for example, “there were ten weeks filled with content. There was an interaction every day in the morning and evening … you didn't always have to answer but there was a push message twice a day.” (RI3) Another form of external memory that all human actors could access was the conversation text and any written feedback on tasks, such as an inhalation task. In some situations, the agent offered only predefined answer options in a conversation. While clients found them “partly funny,” they complained about the limits of these answer options, and one client said “sometimes, cramped small talk combined with a slow dialog was a little frustrating.” (WF5)

These situations, in which the IA was used to store and retrieve information (e.g., diary entries, simple reminders, and learning content), can be clearly explained by classical TMS theory, in which humans use technical tools as individual or shared external memory aids. The same applies to situations in which the technical limitations of the intelligent agent became visible.

External memory of IAs

From the perspective of the digital coach, plenty of information was also stored as shared external memory, for example, in databases or as program code. One important element was the carefully created content of the whole intervention. For example, MAX was built on an asthma comic that was a validated medical instrument for child patients. This comic was digitized into learning videos and related content with the help of a method-didactic expert and graphics professionals, followed by a final approval by medical doctors. PEACH was similarly effortful to design and implement. Based on an “intervention framework that was derived from psychotherapy research” (RP2), multiple researchers technically instantiated the digital coach: “the content was developed by psychologists … from the University of Zurich, … a doctoral student from the marketing department at ETH Zurich wrote the dialogues.” (RI3)

Although technical data was mostly stored in a human-readable format and accessible to different team members, it was not necessarily used by the entire team. The program code and the data structures in a database, for example, were hidden from users of the application but accessible to developers and to the software itself. Despite the careful and effortful design of the digital coach, its technical content was usually accessed only by the digital coach software to deliver the intervention. Human users (patients, clients, and health professionals) did not use this codified information and, in principle, had no direct access to the databases.

Transactive memory

The existence of transactive memory is known to enhance group performance by allowing members to rely on each other’s specialized knowledge, which improves coordination, accelerates learning, and makes problem-solving more efficient. In our study, we observed that the IA itself became active in the transactive memory system, in addition to situations where it acted as a shared external memory aid. To do so, the IA independently accumulated knowledge, made calculations, and drew logical conclusions. In these situations, the IA leveraged all the characteristics outlined before, namely, pattern recognition, agency, and seamless integration into everyday environments. The IA thereby exceeded the function of a mere shared database and became an active part of the team, so that other members involved it in the team’s transactive memory. More specifically, the IA became involved in creating the group mind and became a team member itself in that it developed its own knowledge, memory structures, and expertise. Others could draw on this expert knowledge through communication mechanisms. Just like a human actor in this role, the IA was also able to encode, store, and retrieve the other experts’ knowledge. The following four examples document such situations.

First, the digital coach was “framed as a social actor with an own personality” (RI6) that played an active part in the health care team in the MAX intervention. To realize this, the conversations with the digital coach were natural and anthropomorphic, including “communication pattern [users] employ with friends or family members. … Giving the chatbot a name, … the role of the physician’s assistant, and … visual appearances make him humanistic, with jokes and emojis” (RI1). This was also reflected in clients’ comments; for example, one stated that “the way PEACH spoke to me was like a human being” (WF4). In addition, there was “a separate chat channel with the chatbot on eye level” (RI6). Although human actors knew that they were interacting with a chatbot, they perceived it as a human assistant and thus as part of the team, which increased trust. The IA was “a single point of contact that orchestrates and communicates relevant information to the outside world and, above all, also engages in team building. … parents worked together with the young patients on these issues and their health literacy. A strong - we speak here of a ‘working alliance’ - was built between them and the agent” (RI6).

Second, users quickly embraced the agentic nature of the digital coach and began to rely on the IA as if it were a human coach. Both digital coaches had high acceptance among their users, and the chatbot system could handle almost all chat interactions with the patients. For example, “99.5% (15,078 out of 15,152) [of conversational turns] took place between patients and the MAX conversational agent, and only 0.5% (74 out of 15,152) occurred between patients and health care professionals.” (RP5) Beyond this, “digital coaching has the positive effects that your personal coach is available 24 hours a day” (RI6), and the coach played an active part in the intervention by sending push notifications whenever patients, health professionals, or supporting family members needed to perform an intervention or activity. In the PEACH intervention, twice-a-day notifications were embedded in the intervention: “if you did not complete an important interaction on a certain day, ... then [this task] was just embedded into the conversation flow again later, the next day and so on. Until it was done” (RI2). The MAX intervention, by contrast, gave “the patient full control over the timing. … During onboarding … and at the end of the first session, the digital coach asked: ‘When do we want to meet again?’ Of course, with few constraints, but you could say ‘tomorrow,’ for example.” (RI1)

Third, from the perspective of an IA, the digital coach remembered who the supervising family member in the MAX intervention was (the mother, father, sister, etc.). It used this information to prompt the child patient to interact with them (physically) and sent the supporting family member an SMS with a call to action. The digital coach also triggered more complex non-digital interactions between human participants. For example, multiple people were asked to work together (e.g., to play a game), or a family member was instructed to record a video of the child patient inhaling so that health care professionals could assess the performance after the video was uploaded. Moreover, the cough detection ML models were able to “differentiate coughs of different persons” who were sleeping in the same room (RI3). By storing and processing this information internally or externally, the IA itself became an expert possessing relevant exclusive knowledge that physicians in the team could consult before initiating further action. Where this functionality works reliably, hybrid teams can thereby include IAs in their transactive memory.

Finally, the intervention could take different directions based on the users’ preferences. As part of the PEACH intervention, “In the beginning, participants could choose one out of nine different goals. Each was a personality trait [which they want to focus on in the intervention] and then they were allowed to choose whether to increase or reduce it, except for emotional stability, which could not be reduced.” (RI3) Later, the digital coach again relied on the client to formulate concrete goals: “After a few weeks, the participants had to ... set themselves a goal, which was then simply text. ... For example: ‘When I get home in the evening, I'll work on my homework for an hour.’ … This was controlled by a self-contrast. ... then simply assessed in the evening whether they had done what they had set out to do.” (RI3) Thus, the digital coach relied on its users to complete the interventions, but these interactions were carefully and purposefully embedded into the overall intervention.
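The PEACH behavior described in the second example, where a skipped task “was just embedded into the conversation flow again later, the next day and so on. Until it was done” (RI2), amounts to a carry-over queue. The following Python sketch illustrates that logic under our own assumptions; the function `plan_days`, the task labels, and the rule that carried-over tasks precede new ones are hypothetical, not taken from the PEACH code.

```python
def plan_days(tasks_per_day, completed):
    """Simulate carrying skipped tasks into the next day's conversation.

    tasks_per_day: list where entry d holds the new tasks scheduled for day d.
    completed: set of (day, task) pairs that the client actually completed.
    Returns, for each day, the tasks the coach presents that day.
    """
    carry_over = []
    schedule = []
    for day, new_tasks in enumerate(tasks_per_day):
        todays = carry_over + list(new_tasks)  # skipped tasks come first (assumption)
        # Anything not completed today is re-embedded tomorrow.
        carry_over = [task for task in todays if (day, task) not in completed]
        schedule.append(todays)
    return schedule

days = [["set goal", "quiz 1"], ["quiz 2"], []]
done = {(0, "set goal"), (1, "quiz 2"), (2, "quiz 1")}
for day, tasks in enumerate(plan_days(days, done)):
    print(day, tasks)
# 0 ['set goal', 'quiz 1']
# 1 ['quiz 1', 'quiz 2']
# 2 ['quiz 1']
```

A task that is never completed keeps being carried over until the simulated intervention ends; how the real system handled that case is not reported.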

Interaction, transactive processes, and outcomes

As further support for the existence of the diverse memory types, we found evidence that humans and IAs alike performed transactive processes such as encoding, storage, retrieval, allocation, and communication of information across the different memory types. Moreover, using IAs in the different memory types led to additional outcomes and added value. Much empirical evidence for this was already presented above, when we showed the existence of different memory types among individuals and IAs. In addition, we found novel interactions between patients/clients and medical/psychological professionals that would not have taken place without the IA: “There are taboo subjects that patients often refuse to talk about during consultations - sex and asthma, for example. ... The patient can suddenly talk about a topic that [the AI] brings up in a very relaxed way.” (RI1) Another aspect is treatment adherence, that is, the extent to which a patient follows a physician’s prescription. Health professionals pointed to the new possibilities to incentivize medication intake or to document the health status. An asthma expert liked the gamification elements: “The reward system is very child oriented” (AE2). Overall, many patients/clients expressed a positive assessment of the IA. One child patient wrote, for example, that “the cooperation with you [MAX] and the accompanying person” (WF1) was great.

Discussion and implications

Our study demonstrated that the three main types of memory (individual, external, and transactive) exist not only among humans (as we know from TMS theory) but also in hybrid teams that include IAs (see Table 4). We also found evidence that humans include IAs in the team’s joint transactive memory, which is also evident in the transactive knowledge processes. As classical TMS theory falls short in explaining the existence of these memory types among IAs and the inclusion of IAs in human transactive memory, we suggest a conceptualization that describes the group mind of hybrid teams. The TIMS framework that we suggest below is a foundation for further research on hybrid teams of humans and IAs and offers guidelines for the development and implementation of IAs that are intended to collaborate with humans in hybrid teams.

Theoretical implications

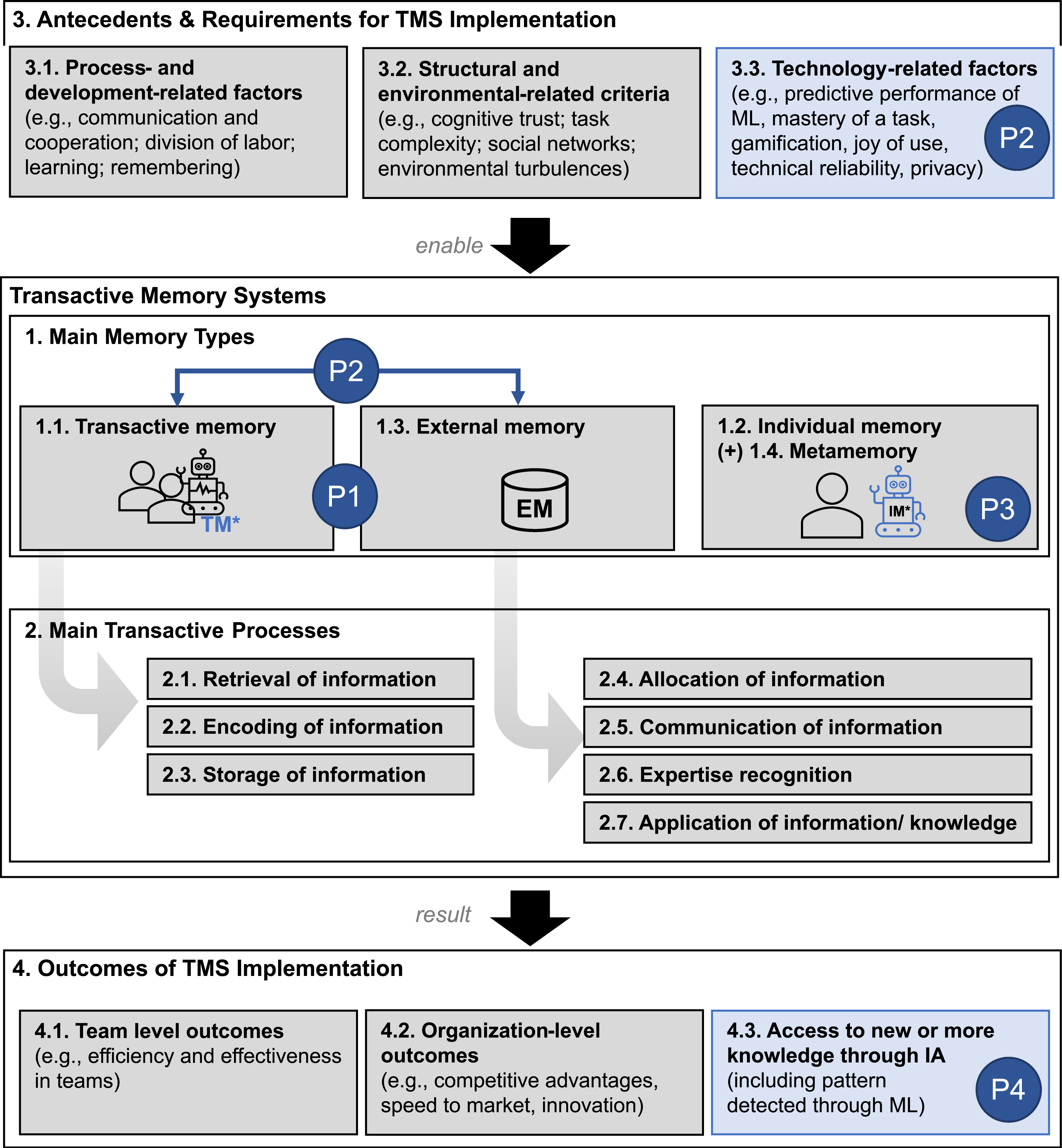

Figure 6 gives an overview of our proposed change to the conceptualization of traditional TMS theory, together with our research propositions. Our in-depth qualitative case study uncovered further antecedents, requirements, and outcomes, which we summarize in our abductively generated TIMS conceptualization.

Conceptualization of transactive intelligent memory systems in hybrid teams with the different memory types (IA-specific memory types are denoted with *) and our research propositions (in blue).

IAs are part of a team with different memory types

Classical TMS considers IAs to be technical resources and, thus, part of individuals’ shared external memory, similar to databases or corporate wikis. Yet IAs have capabilities that partly exceed human information processing (e.g., handling huge amounts of data in a short time or detecting patterns in data that humans cannot identify). Sparrow et al. (2011) already demonstrated that humans can consider search engines a form of external and transactive memory when they face difficult questions. Our study extends this line of reasoning to hybrid teams and to the context of IAs. Such IAs exhibit more capabilities than search engines (i.e., learning, automatic decision-making and acting, and seamless integration into everyday activities), and they are increasingly present in organizations (e.g., Bort, 2017; Chen et al., 2022).

Our findings suggest a dual role of IAs. As external memory, humans used the digital coach to retrieve data they knew was stored there. Health professionals, for example, accessed patient-related data (chat protocols, answers from quizzes, etc.), stored notes about inhalation videos, and used a criteria catalog for the formal evaluation of inhalation videos. Beyond that, the digital coach was considered part of the joint transactive memory, for example, when health professionals retrieved latent patient-related data hidden in sensor signals and behavioral data (i.e., digital biomarkers). Patients and clients accessed learning and intervention materials that were targeted micro-interventions, which a human expert would usually have to select. The digital coach tailored content for specific users (e.g., invited supporting family members to help with tasks or games), and ML models were able to distinguish coughs from different people. The same applies when a team relies on the online service Otter.ai to transcribe and summarize meetings and to save the relevant presentation slides for the organizational memory, and its members trust the capabilities of this service based on previous positive experiences. Consequently, IAs can become part of a team and reliably take over certain tasks, for example, by making processes more efficient, uncovering new contexts, or taking over other people’s tasks, thus increasing team satisfaction. Nevertheless, there is still a large number of tasks that humans need to carry out. We postulate: P1: Humans (a) use IAs as shared external memory, but they also (b) include them in the transactive memory processes that emerge in teams.

Moderators for the choice between memory types

Continuing this conceptualization, the automation potential of tasks stands out as a condition under which humans include IAs in their transactive memory (rather than treating them solely as external memory aids). The automation potential is determined by the complexity of tasks (Campbell, 1988; Vimalkumar et al., 2021; Wood, 1986) and by a machine’s ability to master them (Traumer et al., 2017). Unlike repetitive tasks, which can already be automated today, knowledge-intense activities that involve creativity or coordination efforts (as in the arts, education, and politics) will likely leave IAs only a subordinate role. ML applications, for example, have demonstrated that they can identify important medical events (e.g., unplanned hospital readmission or prolonged stays) from extremely large data sets of electronic health records (Rajkomar et al., 2018). A human, or even a group of humans, could not effectively carry out this cognitive labor. The ability of IAs to cope with many heterogeneous tasks is limited, since an “artificial general intelligence” seems unlikely (Aleksander, 2017; Chollet, 2019), given that artificial intelligence systems can hardly capture the whole context in which they are used (Denning and Arquilla, 2022). In the case of predicting medical events, a model trained to predict hospital readmission will probably not be capable of carrying out the activities necessary to prevent the readmission of patients. Hence, we formulate the following two propositions: P2a: Humans consider IAs as shared external memory when an agent’s level of capability is not high enough to handle the complexity of the whole knowledge work activity. P2b: Humans consider IAs as an active part of their teams’ transactive memory when an agent’s level of capability to solve a knowledge work activity is equal to or better than that of humans.
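For developers who want to use the propositions as a requirements heuristic, P2a and P2b can be read as a simple decision rule. The Python sketch below is one possible operationalization under strong assumptions: capability and complexity are scored on a shared 0 to 1 scale, and the IA counts as part of transactive memory only if it both handles the task’s complexity and matches human capability. Neither the scale nor the combination of the two conditions is part of the propositions themselves, and the names `IARole` and `expected_role` are hypothetical.

```python
from enum import Enum

class IARole(Enum):
    EXTERNAL_MEMORY = "shared external memory"
    TRANSACTIVE_MEMORY = "part of transactive memory"

def expected_role(ia_capability, human_capability, task_complexity):
    """Hypothetical reading of P2a/P2b; all inputs on an assumed 0..1 scale."""
    handles_task = ia_capability >= task_complexity     # negation of P2a's condition
    matches_humans = ia_capability >= human_capability  # P2b's condition
    if handles_task and matches_humans:
        return IARole.TRANSACTIVE_MEMORY
    return IARole.EXTERNAL_MEMORY

# Made-up scores for a capable chatbot on a complex conversation task:
print(expected_role(ia_capability=0.9, human_capability=0.8, task_complexity=0.85).value)
# part of transactive memory
```

A binary rule is a simplification; as discussed below, the case data point to a continuum rather than a strict switch between the two roles.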

Our case offered evidence for both propositions. Situations in which human teams included IAs in their joint transactive memory required a high mastery of the task by the IA and a significant knowledge intensity of the task. This was the case in the chatbot conversation, which is a complex activity and was almost fully automated (only 0.5% of the conversational turns in the asthma coach required experts’ involvement). Health professionals appreciated the IA’s ability to independently generate plain-text feedback from their formal rating of inhalation videos, which allowed human actors to utilize the expertise and knowledge of IAs. Examples outside our case study also demonstrate that the capabilities of artificial intelligence systems can exceed human capabilities, for instance when they beat human players even in strategic games (META et al., 2022) or serve as sources of creativity (Teubner et al., 2023).

We also found situations in which the knowledge intensity was high but the IA showed little or no mastery (e.g., the rating of inhalation videos itself), as well as situations with simple tasks (e.g., setting and retrieving goals). In these situations, humans considered the IA merely a database. Patients and clients also reduced the IA to an external memory aid when the conversational agent offered too little freedom for answering questions (e.g., when only a limited set of answer options was available). These findings suggest that task complexity and knowledge intensity, together with the IA’s ability to master the task, moderate whether an IA is considered an external memory aid or part of a team’s transactive memory.

While some reminders appeared to be mere egg-timer functions that users could set (e.g., when the IA asked, “when shall we meet again?”), others became part of daily routines (e.g., setting and checking daily goals). Reminder timing even went so far that ML was used to schedule reminders at times when the user was particularly receptive to messages. This example shows that the relation between knowledge intensity (i.e., task complexity), the IA’s mastery of a task, and the type of memory is a matter of degree: humans consider IAs in one function or the other along a continuum.

In addition to knowledge intensity and task mastery, we found that further antecedents and requirements of classical TMS apply to teams of humans and IAs. We observed, for example, that cognitive trust, learning and remembering, and credibility (already known from TMS theory) apply as well. This became evident when health professionals were needed in the onboarding phase to create trust in the IA. A medical doctor said that “otherwise it is like a black box and that could be difficult in terms of trust” (MD2). In the smartphone app, trust was established through age-appropriate avatars. For hybrid teams, we found additional antecedents that are relevant to establishing transactive memory (e.g., the fun, gamification, and motivation that come with using the app).

New form of IA-bound memory and knowledge

Our data showed that IAs that participate in TMS also form a type of individual memory. This new form of memory consists of knowledge that these IAs hold to handle an activity and that is hardly accessible to humans. Thus, we postulate that an IA may involve a human colleague to benefit from their knowledge and expertise. This evidence suggests the following proposition: P3: IAs can possess a new form of memory, comparable to the individual memory known from TMS.

The most prominent manifestation of such individual memory is information that ML algorithms, particularly deep neural networks, accumulate and that humans cannot interpret. In the literature so far, computer systems are not attributed an individual memory; they are regarded as mere storage facilities for data (Lehner, 2021: 161). With IAs, however, ML procedures extract rules from data and generate data structures that humans partially fail to comprehend. There is a current debate in the literature on the inscrutability of ML models, especially when these models are intended to support managerial decisions (Berente et al., 2021; Shollo et al., 2022). In this context, studies report acceptance issues (e.g., Lebovitz et al., 2022), confusion about the type of explanation required (Miller, 2019), or caution against a lack of causality (e.g., Rudin, 2019). Yet, the ML models used in the context of IAs are usually developed to support the agency of IAs and not (or less so) to support human decision-making. We therefore find it unsurprising that our interviewees did not see the inscrutability of the ML models as a challenge: they were interested in the outcome or action of the IA rather than in the internal rules of the ML model. The concept of transactive memory can help explain this finding, given that humans delegate certain complex analyses to the IA by considering it part of their transactive memory. This is what Baird and Maruping (2021) describe as “agentic IS artifacts.” Such situations are analogous to the findings of Sparrow et al. (2011), in which people did not remember the explicit result of a web search but remembered where they had found relevant results, which the authors regard as a form of transactive memory.

Similarly, the internal memory of humans and the knowledge stored therein are not accessible to other individuals, and sometimes individuals themselves are unaware of possessing a specific knowledge item. Knowledge that humans hold in their memories but can hardly articulate is described as tacit knowledge (Nonaka and von Krogh, 2009; Polanyi, 1958). When IAs are good at a task, they draw not only on explicit knowledge but also on hardly articulable, vast, and complex knowledge accumulated by ML algorithms (Dourish, 2016; Faraj et al., 2018), which constitutes a black box for humans (Guidotti et al., 2018).

Our findings suggest that IAs, in addition to explicit knowledge, also create a new form of hardly articulable and formalizable tacit knowledge, which is developed and used by ML methods and is difficult for humans to grasp (e.g., how to detect coughs or the state of receptivity using sensor data from smartphones). We refer to this as IA-bound knowledge. IAs develop and apply this knowledge independently and detached from humans (e.g., documented ML results, analytical conclusions, or error messages coded by the technology). Thus, we postulate: P4: IAs develop (a) explicit knowledge but also (b) a new form of knowledge, similar to the tacit knowledge of humans, which others can access but hardly interpret.

A framework for transactive intelligent memory systems (TIMS)

Given the limitations of classical TMS and the empirical findings of our study, we expanded the TMS framework (i.e., our theoretical coding guide) that we derived from the literature and illustrated the result as our conceptualization of TIMS in Figure 7. The elements in blue represent new components that emerged in our study as relevant for hybrid teams. The grey elements stem from the original TMS perspective, which we identified in our literature review. Together, both types of elements constitute TIMS, which helps researchers systematically study collaboration modes in hybrid teams.

Figure 7. Components of TIMS (differences from classical TMS theory and propositions P1-P4 in blue).

Practical implications for effective TMS implementation in hybrid teams

Our theorizing suggests three managerial implications. First, teams can be complemented by IAs. This requires organizations to establish appropriate structures to build suitable team compositions and to make the new technology accessible not only to individuals but also to teams. This effort requires establishing an organizational culture that fosters the adoption of IAs. Second, tasks in hybrid teams should be assigned to members based on task characteristics rather than on prejudice or existing practices. Finally, TIMS helps organizations formulate knowledge management strategies that account not only for humans but also for IAs as actors. Thereby, organizations can describe new job roles for humans as well as IAs that consider their respective strengths and shortcomings.

For effective IA implementation, three requirements support the design of hybrid teams that build TIMS. First, an intuitive and reliable user interface that encapsulates the underlying technology of IAs is needed (understandable design, ease and joy of use, user motivation to use intelligent technologies), given that users need to accept the tool as an autonomous partner; this also implies reliability of the whole technology stack (e.g., a stable Wi-Fi connection). Second, to address the dynamic nature of collaboration in hybrid teams, the user interface must be interactive and become more human-like. Artificial intelligence technology can already partially imitate human behavior, which is discussed as “affective computing” (Picard, 2000). The literature has already pointed to the relevance of emotions (fear, joy, sadness, etc.) and their link to knowledge creation (Fteimi et al., 2021). The degree of humanness that the user interface of a technological partner exhibits depends largely on the human actors and the instructions they pass to the technology (e.g., in the form of training data from which algorithms can learn). Third, a clear understanding of the role of IAs in establishing TIMS in hybrid teams is needed. This includes their capabilities (e.g., task specification for the algorithm) as well as human limits in tasks (e.g., biases). Organizations must also consider implementing organizational forgetting mechanisms in IAs, similar to the mechanisms that reinforcement learning algorithms realize.

Limitations and future research directions

We see three limitations of our study. First, we used TMS to explore how knowledge work is carried out in hybrid teams. Yet TMS (including our TIMS conceptualization) is complex, and it hardly allows studying group interactions in isolation from individual interactions between people and IAs. Future studies should, for example, employ longitudinal research designs and focus explicitly on TMS processes to further corroborate the TIMS construct. Second, the TMS components and the framework we set up consider elements that our literature review (in the IS and knowledge management fields) and the case study uncovered; some TMS components that are studied outside this scope may be missing. Third, we examined the topic using an application that already exists, not what future applications may accomplish.

Our work paves the way for follow-up studies that validate our concept in further application fields and with other types of IAs. When continuing research with the TIMS conceptualization, an interesting tie-in is the exploration of the metamemory construct and the extent to which it exists in an IA or is exclusive to humans. Future research can also focus on establishing coordination and communication mechanisms between human and IA actors, on concretizing tasks and expertise for each actor, and on the technological and human readiness for this kind of collaboration, as well as on failure tolerance.

Summary and study contribution

This study explored knowledge work in hybrid teams of humans and IAs. Our abductive approach involved a systematic literature review and an in-depth study of a critical case: an early empirical example in which IAs join a hybrid team. We propose the construct of TIMS, which describes how humans and IAs can work together, even on comparable cognitive levels for certain tasks. Our empirical data demonstrated that IAs can, similarly to humans, develop a new form of individual and external memory and can be part of joint transactive memory. Whether people view IAs merely as external memory aids or as part of their teams’ transactive memory is moderated by the task’s complexity and knowledge intensity, as well as by the IA’s ability to complete the task. Our proposed construct provides a foundation for further investigating hybrid teams and understanding the mechanisms that are necessary to implement TIMS efficiently. Developers of IAs can use TIMS as a tool for requirements specification to prepare their software agents for use in hybrid teams. We also deduced three requirements for such systems that enable the formation of TIMS.

In the end, our study contributes an empirical argument to the current discourse on the much-feared “robo-apocalypse” (Willcocks, 2020, 2021). We suggest that IAs should not be seen as actors that replace humans. Rather, collaboration with IAs is an opportunity that enables both actors (human and machine) to complement each other and thus to overcome the weaknesses of one with the capabilities of the other. Currently, various tasks, especially innovative and creative tasks or tasks that heavily depend on emotions, are still restricted to humans. This was particularly evident in our analysis in areas where health care professionals had to be involved. The implementation of algorithms, the initial task-triggering process, and a substantial part of the interpretation tasks currently remain in human hands.

Supplemental Material

Supplemental Material - The group mind of hybrid teams with humans and intelligent agents in knowledge-intense work

Supplemental Material for The group mind of hybrid teams with humans and intelligent agents in knowledge-intense work by Konstantin Hopf, Nora Nahr, Thorsten Staake and Franz Lehner in Journal of Information Technology

Footnotes

Acknowledgments

We greatly thank Tobias Kowatsch, Marcia Nissen, Mirjam Stiger, and other colleagues from the Center for Digital Health Interventions at the University of Zurich, University of St. Gallen, and the ETH Zurich, Switzerland, for providing extensive insights into, and interview data from, research projects around the MobileCoach platform that enabled us to conduct the case study analysis in this article.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding