Abstract

We present a corpus investigation of the influence of first language – second language (L1–L2) typological similarity on the acquisition of the L2 English article. We consider item-level typological similarity in terms of the availability of an article in the L1, but also broader typological similarity in terms of the linguistic distance between L1 and L2, as captured by a variety of lexical, morphosyntactic and phonological measures of linguistic distance. We analyse the accuracy of use of the definite and indefinite English articles in around 0.5 million writings by learners from 11 typologically diverse L1s. The data are sampled from an open access English as a foreign language (EFL) corpus, EFCAMDAT. Our results indicate that L1 influence arises from a combination of item-level L1–L2 differences, that is, the availability of an article in the L1, and broader properties of the L1 grammar, as captured by linguistic distance measures. The results indicate that it is the availability of the definite article in the L1 that predicts article omission in L2 English, for both the definite and indefinite articles. This finding supports the generative typological distinction between determiner phrase (DP) and noun phrase (NP) languages, indicating that whether the L1 has a definite article, and hence a DP, predicts the use of bare nominals in the L1 and, consequently, article omission in L2 English.

I Introduction

The similarity between learners’ first (i.e. native) language (L1) and second language (L2) is commonly seen as a central factor modulating the influence of the L1 on the L2. This basic observation goes back to contrastive analysis but has survived in all current theoretical approaches to crosslinguistic influence (Abbas et al., 2021; Ellis, 2006; Ionin and Montrul, 2010; Jarvis, 2000; Lago et al., 2021; Odlin, 1989; Schwartz and Sprouse, 1996; Westergaard, 2021). Importantly, similarity is crucial for demonstrating ‘crosslinguistic performance congruity’ (Jarvis and Pavlenko, 2008), that is, for showing that L1 influence stems from identifiable properties of the L1 rather than from other aspects of L2 learning, or even from cultural and educational factors that may correlate with a given L1.

A common manifestation of crosslinguistic influence is the transfer of an item (feature, form, structure) from L1 to L2. The more similar L1 and L2 are, the more likely transfer is (Jarvis and Pavlenko, 2008; Kellerman, 1983). Transfer can facilitate learning: the more similarities L1 and L2 share (e.g. cognates, functional morphemes), the more comprehensible the L2 input is for the learner (Kellerman, 1983), leading to faster learning. By contrast, when L1 and L2 share few similarities, learning proceeds more slowly, with learners producing errors and potentially avoiding challenging structures in their production (Schachter, 1974). L1–L2 similarity can involve specific structures or morphemes (e.g. articles, tense–aspect markers, question particles) or more abstract features (e.g. definiteness). Within the generative literature, similarity relates to the settings of linguistic parameters, which are tied to abstract features capturing crosslinguistic variation. For example, the availability of the (formal) person feature broadly distinguishes languages with systematic agreement (Russian, German, Italian, Arabic etc.) from languages without agreement (Chinese, Japanese, Korean etc.) (Roberts, 2019).

There is substantial empirical evidence that the availability of similar or congruent items in the L1 facilitates L2 acquisition (Chrabaszcz and Jiang, 2014; Jarvis and Pavlenko, 2008; Murakami and Alexopoulou, 2016). Recently, the increasing availability of big learner data from assessment and teaching institutions has enabled a focus on typological effects beyond individual L1s. For example, Murakami and Alexopoulou (2016) considered the accuracy of six L2 English morphemes in the written component of Cambridge Assessment exams from the Cambridge Learner Corpus (Nicholls, 2003). They calculated accuracy in supplying the relevant morpheme in obligatory contexts in 11,893 exam scripts across proficiency levels from learners of seven L1s (German, French, Spanish, Korean, Japanese, Turkish, Russian). They showed that the availability of a congruent morpheme in the L1 leads to higher accuracy in the use of the corresponding morpheme in L2 English, across several L1s and across proficiency levels.

Shifting the focus beyond individual features and items, Schepens et al. (2015, 2020) demonstrated compellingly that the linguistic distance between the L1 and L2/L3 Dutch predicts spoken proficiency scores in state examinations of Dutch as an additional language (Ln). Using lexical, morphological and phonological measures of linguistic distance, based on similarities between the L1 and Dutch in cognates, morphemes and sounds, they showed that the larger the linguistic distance between L1 and Ln Dutch, the lower the learners’ proficiency scores. Importantly, this effect remains significant when other variables are controlled (e.g. years of education, quality of education in country of origin, age of arrival, length of residence, and gender). Their combined measures of linguistic distance capture 28%–69% of the variance in Ln attainment scores.

Not only is linguistic distance predictive of scores in Dutch proficiency tests, but van der Slik et al. (2017) found that it can also predict learning progress over time. Test scores improve over time (i.e. as length of residence increases) for learners whose L1s are of equal or higher morphological complexity than Dutch. Strikingly, the scores of learners whose L1s are of lower morphological complexity worsen over time. Crucially, this work relied on big data, specifically test scores obtained by thousands of immigrants taking the official test of Dutch as a second language (known as ‘STEX’). Such databases enable the investigation of a large number of typologically diverse languages; Schepens et al. (2015), for example, examined 56 different L1s, so that the effect of linguistic distance could be reliably evaluated.

In this article, we build on the work of Murakami and Alexopoulou (2016) and Schepens et al. (2015, 2020) with the aim of understanding the potential interplay between similarity involving individual items/features and similarity involving broader typological features (as measured by linguistic distance measures). Schepens and colleagues established the effect of linguistic distance on broad acquisition outcomes like speaking proficiency, while Murakami and Alexopoulou (2016) showed that the availability of a congruent element in the L1 influences the accuracy of the corresponding L2 morpheme across a range of typologically diverse L1s (e.g. Turkish, Russian, Korean). The question, then, is whether the effect of linguistic distance on broad outcomes is simply the aggregate of individual L1–Ln similarities and differences, with broader typological features of the L1 playing no role in the acquisition of individual items. Alternatively, broader typological properties of the L1 may influence the acquisition of individual items, like L2 English morphemes, over and above the availability of a congruent item in the L1. A broader range of typological L1–L2 similarities (e.g. word order, agreement patterns) may make the input more comprehensible overall and thus (indirectly) facilitate the acquisition of individual features. Crucially, it has been known since Greenberg (1967) that typological features are not randomly distributed across languages; rather, their distribution shows correlations and implicational relations. For example, definite articles are generally found in systems with a count/mass distinction rather than in systems based on classifiers (Chierchia, 1999). We therefore hypothesize that broader typological similarities between L1 and L2 will affect the acquisition of individual items.

Within the generative second language acquisition (SLA) literature, this hypothesis is consistent with the full transfer hypothesis, according to which L1 parameter settings transfer to the initial state of the L2 (Schwartz and Sprouse, 1996). To the extent that such typological correlations and implicational relations are captured by linguistic distance measures, it is reasonable to expect linguistic distance to affect the acquisition of individual features, beyond the availability of the relevant individual feature in the L1.

We focus on the L2 acquisition of the English article as a case study to investigate whether L1–L2 linguistic distance influences the acquisition of this individual morpheme beyond the (known) facilitative effect of an article in the L1. Following up on Murakami and Alexopoulou (2016), we examine learners’ accuracy in their use of the definite and indefinite articles, specifically their accuracy in obligatory contexts for articles. We sample writings from learners from 11 L1 backgrounds from the EF Cambridge Open Language Database (EFCAMDAT) and examine the effect of L1 type (whether an article is available in the L1) and of L1–L2 linguistic distance, using a variety of lexical, phonological and syntactic distance scores.

II Background

In the typological literature, linguistic distance is used to establish phylogenetic relations between languages (see reviews in Longobardi and Guardiano, 2009; Ruhlen, 1991; Trask, 2000). Here, we use linguistic distance as a measure of L1–L2 similarity and a way to operationalize L1–L2 congruency. The dominant approach to measuring linguistic distance relies on the number of cognates shared by a pair of languages and on the degree of phonological and orthographic difference between translation equivalents, for example using Levenshtein distances (Schepens et al., 2011). Such lexico-statistical approaches have been successfully exploited for the automated measurement of linguistic distance and have been complemented by approaches based on word distributions or n-grams (see Gamallo et al., 2017). A variety of measures are available for lexical (e.g. Gray and Atkinson, 2003), morphosyntactic (e.g. Dunn et al., 2011) and phonological distance (e.g. Atkinson, 2011), as well as for syntactic distance based on generative syntactic parameters (Ceolin et al., 2020). These measures can support treelike models of language family relations (e.g. Cysouw, 2013; Schepens et al., 2013, 2015).

Adapted for SLA research, linguistic distance measures allow us to investigate L1 typological effects on broad outcomes, like L2 proficiency, and to assess the relative contribution of different subdomains and features (lexical, phonological, morphological) to such outcomes. For instance, Schepens et al. (2020) show that, for phonological distance, sub-categorical properties (i.e. phonological features) are stronger predictors of Ln proficiency scores than sound categories. They also showed that a combination of lexical, morphological and phonological distance measures is necessary to explain the variance in proficiency scores. It remains an open empirical question whether similar effects of linguistic distance can be obtained for the acquisition of individual features, or whether such effects can only be detected in broad outcomes.

In this study we investigate the L2 English article. Articles are among the more difficult morphemes in L2 acquisition (DeKeyser, 2007) and show strong crosslinguistic influence (Ionin and Montrul, 2010; Murakami and Alexopoulou, 2016), in that the availability of an article in the L1 facilitates article acquisition in the L2. However, while the effect of L1 type in terms of the presence or absence of an article is clearly established, variation within each type of language cannot currently be evaluated. For example, among article-less L1s, it is not known whether Korean or Japanese learners find L2 English articles more challenging than Russian learners; similarly, among L1s with articles, it is not clear whether Arab learners are less accurate than French or German learners of English. The descriptive data in Murakami (2016), presented in Figure 3, suggest some differences within each type of L1: for example, at lower proficiency levels, Japanese and Korean learners appear to be less accurate than Russian and Turkish learners; similarly, Spanish learners appear to be less accurate than German and French learners. However, Murakami and Alexopoulou (2016) do not establish what effect, if any, linguistic distance might have.

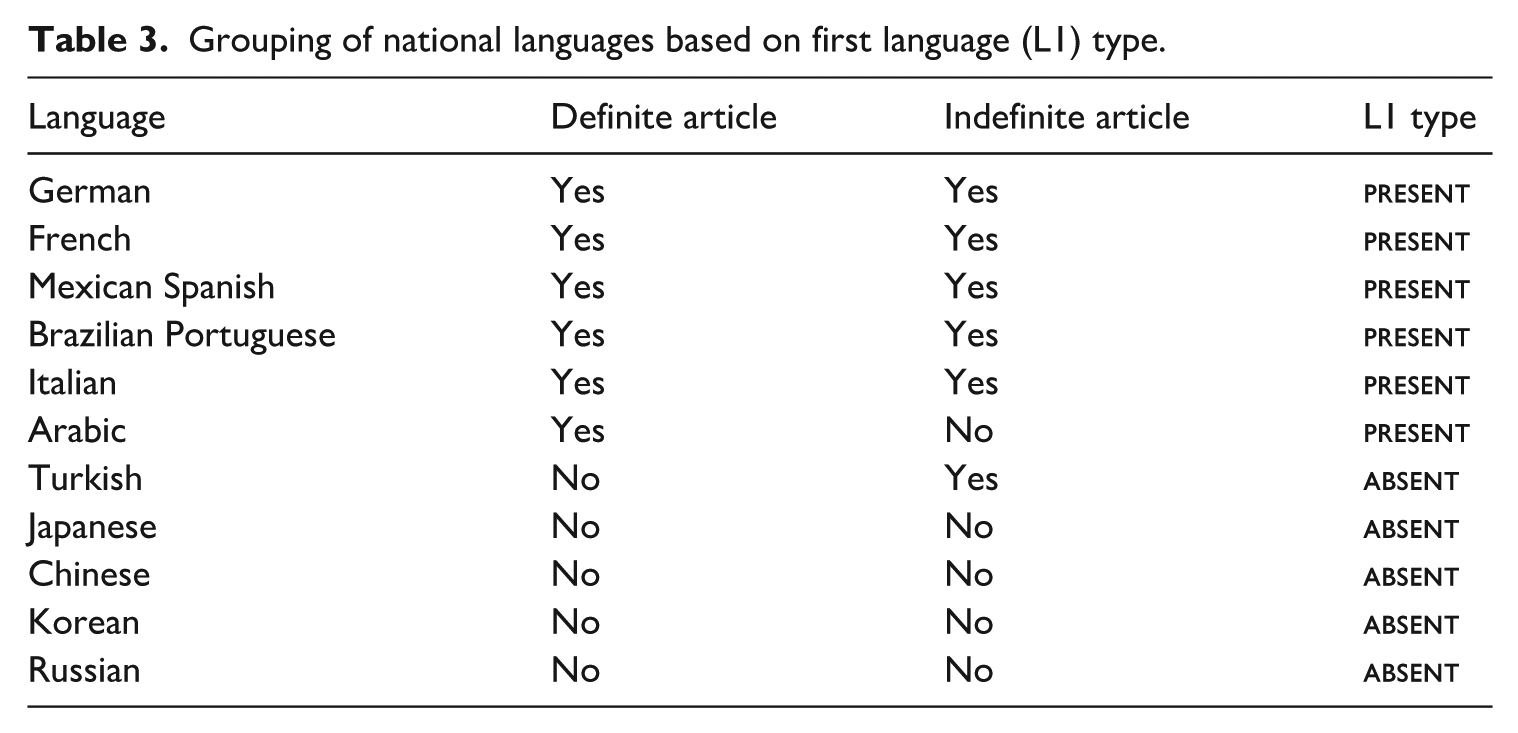

Another reason for focusing on the article is that, in comparison to other L2 morphemes, it is relatively straightforward to ascertain whether a language (L1) has a definite article. By contrast, English verbal morphemes tend to conflate agreement, tense and aspect features, which often makes the correspondence between congruent items less straightforward crosslinguistically. Nevertheless, languages lacking a definite article may have an indefinite article or a numeral that can be considered congruent with the indefinite article (Dryer, 2013). The classification of languages could, therefore, be based on the definite article only or on both articles. Current syntactic accounts suggest that only the definite article is relevant for language classification, since it is the definite article that is associated with a distinct functional projection, the Determiner Phrase (DP) (Alexiadou et al., 2007). Lack of a DP predicts the use of bare nouns as arguments, for both definite and indefinite nominals, irrespective of whether a language has an indefinite article or numeral. Syntactic accounts, therefore, predict that learners from L1s lacking a DP will show high omission rates for both the definite and the indefinite article, even when the L1 has an indefinite article or numeral. This prediction has been empirically confirmed (Ionin and Montrul, 2010). However, it is important to examine whether the absence of a definite article influences the acquisition of the indefinite article in a larger sample of languages, and to compare this effect with possible linguistic distance effects.

III The current study

The goal of the present study is to examine further the role of L1–L2 typological similarity in crosslinguistic influence focusing on the acquisition of the articles in L2 English. Our research questions are summarized below:

Research question 1: Is learner accuracy in the use of the L2 English definite and indefinite articles linked to: (a) the availability of a congruent item in the L1 (i.e. definite and indefinite articles)? (b) solely the availability of a definite article in the L1? (c) the linguistic distance between the learners’ L1 and L2 English? (d) the learners’ proficiency?

Research question 2: Does the L1 impact on the acquisition of the definite and indefinite article similarly, i.e. does the availability of a definite/indefinite article and linguistic distance have a similar impact on both articles?

We follow current syntactic assumptions¹ and hypothesize that the availability of a DP is the crucial L1 property influencing the acquisition of both articles, irrespective of the availability of an indefinite article or numeral; we therefore analyse both the definite and the indefinite article to test this hypothesis. Specifically, in light of previous empirical studies (e.g. Murakami and Alexopoulou, 2016), we predict that learners whose L1 has a definite article will show higher accuracy in their use of both the definite and the indefinite article than learners whose L1 does not. We further expect an additional effect of linguistic distance: greater L1–L2 similarity should correlate with higher accuracy scores. We also expect all learners to improve their accuracy with proficiency; in other words, proficiency will be a strong predictor of accuracy.

We examined the accuracy in the use of articles in the writings of learners of English as a foreign language (EFL) from a teaching environment. We sampled writings from 11 L1s: German, French, Italian, Brazilian Portuguese, Spanish, Arabic, Russian, Turkish, Chinese, Japanese and Korean. This sample allowed us to consider a typologically diverse set of L1s, five of which lack a definite article (Russian, Turkish, Japanese, Korean, Chinese), while six do have a definite article (German, French, Italian, Brazilian Portuguese, Spanish, Arabic).

IV Method

1 The EFCAMDAT corpus

We sampled the EF Cambridge Open Language Database (EFCAMDAT) (Alexopoulou et al., 2017), an open access corpus of English as a foreign language available at https://ef-lab.mmll.cam.ac.uk/EFCAMDAT.html. EFCAMDAT consists of learner writings submitted to Englishtown, the online school of EF (Education First), an international school of English as a foreign language. Data collection was completed in 2013. The Englishtown curriculum offered in 2013 was organized into 16 teaching levels, each with eight teaching units or lessons. At the end of each lesson there was an open-ended writing task, which learners had to complete and submit to Englishtown in order to progress to the next unit. Each writing was corrected and graded by an EF teacher; learners could move on to the next unit if they received a satisfactory grade, otherwise they repeated the writing task. While writing their answer, learners may have consulted the preceding lesson, the model answer that accompanies each writing prompt, or any other external resource.

There is a total of 128 writing tasks across the 16 EF teaching levels. The curriculum is strongly communicative, and the writing prompts include a variety of descriptive, narrative and argumentative tasks, such as writing a review for a restaurant, completing a story, writing an email to a colleague, contributing to a forum discussion, or giving a set of instructions on how to play a game. Each writing task specifies its length, ranging from 20–40 words at Level 1 to 150–180 words at Level 16. The curriculum is standardized, so all learners across different countries complete the same writing tasks.

Around 66% of the EFCAMDAT writings contain EF teachers’ corrections of spelling, grammatical errors, lexical choices etc. Each writing is associated with a randomly generated identification number for the learner submitting it, the EF teaching level, a topic-id code corresponding to one of the 128 writing prompts, the national language (NL) and the date of submission. NL captures the learner’s nationality together with their country of residence; thus, a Japanese national accessing Englishtown from Brazil is not included in the corpus. NL has been used as a proxy for L1 in previous research (Murakami and Alexopoulou, 2016). It is an imperfect proxy, as it does not capture learners’ multilingualism (e.g. a German learner who is also a heritage speaker of Turkish); nevertheless, it has been shown to be a reliable one (Murakami and Alexopoulou, 2016).

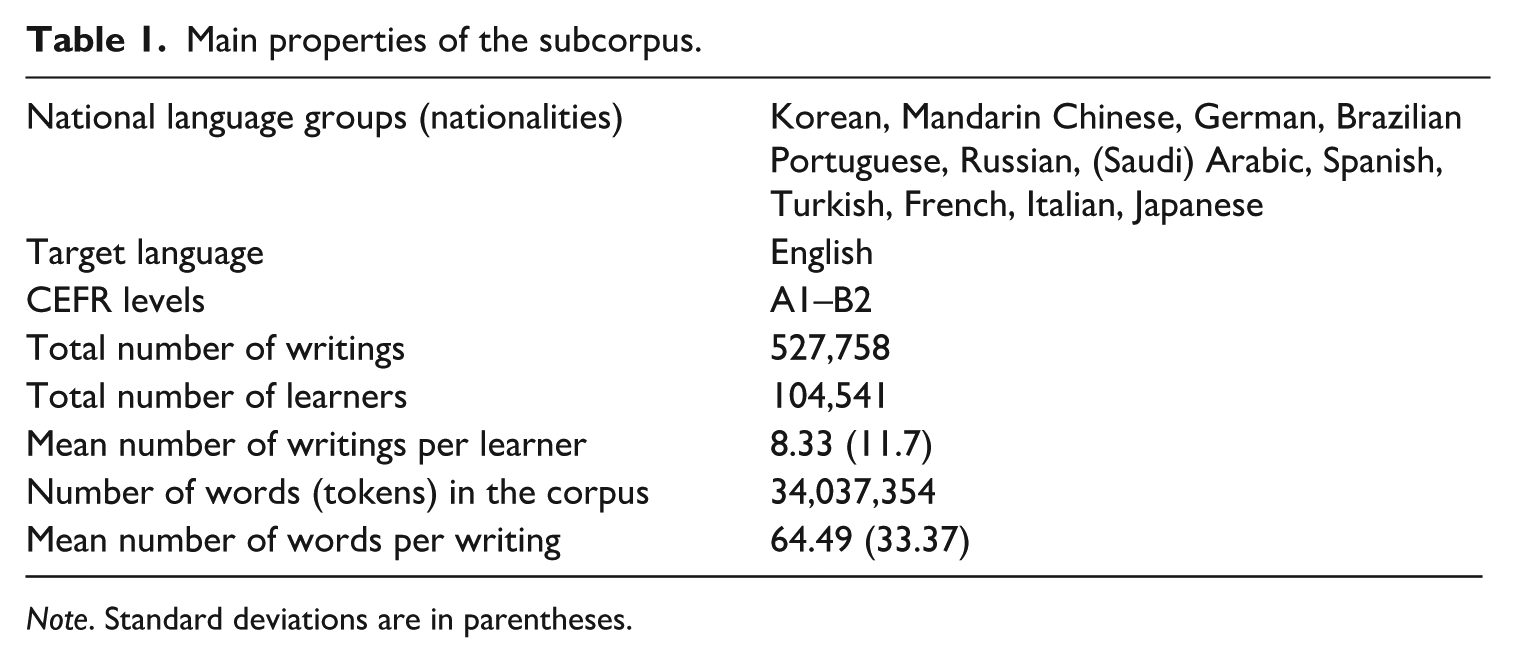

In this study, we used a subset of EFCAMDAT consisting of 527,758 writings (henceforth, scripts) by 104,541 learners, totalling 34 million word tokens (for the number of learners and writings in each teaching level and NL group, see Appendix 1 in the supplemental material). To construct this subcorpus, we selected only the scripts that had teacher corrections, so that for each script we have both the learner’s original writing and the teacher-corrected version.

Our teacher-corrected subcorpus was then cleaned following the process described in Shatz (2020); Appendix 2 in the supplemental material details the cleaning process. Following data cleaning, we annotated the learners’ original and teacher-corrected writings with part-of-speech (POS) tags from the Penn Treebank Project (Marcus et al., 1993). The POS tags were used to identify noun phrases and definite and indefinite articles in the learners’ original and teacher-corrected writings. To identify obligatory contexts for the articles, we used the parsed version of the error-corrected texts and targeted article-related POS tags. To do so, we developed R scripts using the dplyr package version 1.0.5 (Wickham et al., 2022) and the stringr package (Wickham, 2022).

2 Target L1 groups and proficiency

We included 11 NL groups: Korean, Chinese, German, Brazilian Portuguese, Russian, (Saudi) Arabic, Spanish, Turkish, French, Italian, and Japanese. As mentioned earlier, NL is a proxy for L1 that crosses nationality with country of residence. Thus, NL Chinese comprises learners living in Mainland China and Taiwan, for whom we assume Mandarin Chinese as the relevant L1. NL Arabic comprises learners from Saudi Arabia, while NL Spanish comprises learners from Mexico and Spain. These NL groups were selected to enable comparisons across typologically diverse languages while ensuring sufficient numbers of teacher-corrected writings. Table 1 presents the general properties of the cleaned subcorpus, which is available at https://ef-lab.mmll.cam.ac.uk/EFCAMDAT.html.

Main properties of the subcorpus.

Note. Standard deviations are in parentheses.

Regarding proficiency, we sampled from Englishtown levels 1–3, 4–6, 7–9 and 10–12, which correspond to Common European Framework of Reference (CEFR) levels A1, A2, B1 and B2, respectively; we excluded the higher levels, corresponding to C1, because of a marked decrease in the number of writings. Appendix 1 in the supplemental material provides further information on the distribution of learners and error-corrected writings across NL groups and CEFR levels.
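The level-to-CEFR correspondence described above amounts to grouping EF levels in blocks of three. A minimal Python sketch; the function name `ef_level_to_cefr` is hypothetical and is not part of EFCAMDAT or its metadata.

```python
def ef_level_to_cefr(level: int) -> str:
    """Map an Englishtown teaching level (1-12) to the CEFR band used in the sample."""
    if not 1 <= level <= 12:
        # Levels above 12 (C1 and beyond) were excluded from the sample.
        raise ValueError(f"EF level {level} is outside the sampled range 1-12")
    bands = ["A1", "A2", "B1", "B2"]
    # Each CEFR band spans three consecutive EF levels: 1-3, 4-6, 7-9, 10-12.
    return bands[(level - 1) // 3]


print([ef_level_to_cefr(level) for level in (1, 5, 8, 12)])
```

Integer division keeps the mapping to a single expression, and the explicit range check makes the exclusion of levels above 12 visible.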

3 Accuracy measure and data extraction

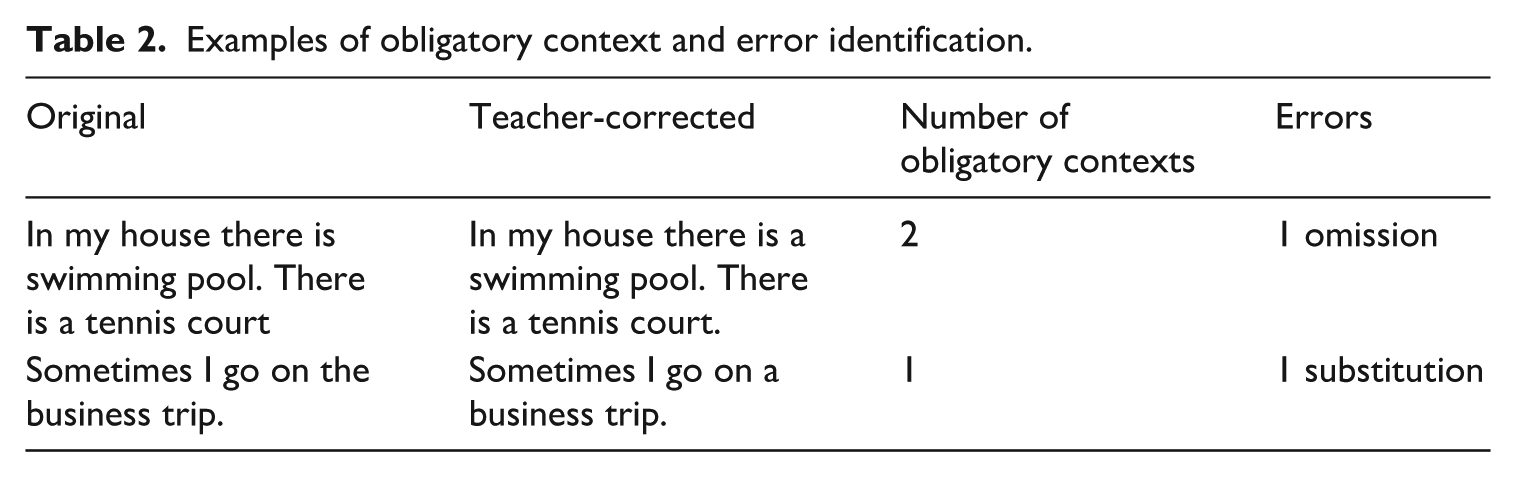

To measure learners’ article accuracy in obligatory contexts, we calculated the ratio of correct uses to obligatory contexts (see also Murakami, 2016). This accuracy measure corresponds to suppliance in obligatory context (SOC); it is related to target-like use scores (Pica, 1983) but does not penalize overuse. To obtain the number of correct uses, we exploited the EFCAMDAT teacher-corrected writings. As mentioned earlier, our cleaned subcorpus contains two versions of each learner writing: the raw text submitted by the learner to Englishtown and the version corrected by the teacher. We operationalized as obligatory contexts all contexts where a definite or indefinite article was used in the teacher-corrected version of a script. We then compared the teacher-corrected scripts with the original scripts submitted by the learners in order to identify omission and substitution errors. Whenever the original and corrected scripts matched, an instance of correct article use was recorded. In case of a mismatch, two types of discrepancies were noted: (1) a missing article in the learner’s text where the teacher used an article, and (2) an incorrect article in the learner’s text where the teacher used a different article (‘a’ instead of ‘the’ or vice versa). Each discrepancy was coded as an error, and its type was coded as omission (1) or substitution (2). The examples in Table 2 illustrate this process. (For an example of a full original annotated script, see Appendix 3 in the supplemental material.)
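The comparison between original and teacher-corrected scripts can be sketched as a token alignment. The sketch below is written in Python rather than the authors’ R, uses the standard-library `difflib.SequenceMatcher` as a stand-in alignment method, and assumes pre-tokenized text; the function names are hypothetical. Following the coding scheme above, every article in the corrected text is an obligatory context, a matching article is a correct use, a learner token of another article in the same aligned slot is a substitution, and anything else is an omission. a/an variants are collapsed because such errors were counted as accurate.

```python
from difflib import SequenceMatcher

ARTICLES = {"a", "an", "the"}


def normalise(token):
    """Lower-case a token and treat 'an' as 'a' (a/an errors count as accurate)."""
    token = token.lower()
    return "a" if token == "an" else token


def classify_article_contexts(original, corrected):
    """Count correct uses, omissions and substitutions of articles.

    Every article in the teacher-corrected tokens is an obligatory context;
    the learner's original tokens are aligned against the corrected ones.
    """
    orig = [normalise(t) for t in original]
    corr = [normalise(t) for t in corrected]
    counts = {"correct": 0, "omission": 0, "substitution": 0}
    matcher = SequenceMatcher(a=orig, b=corr, autojunk=False)
    for op, i1, i2, j1, j2 in matcher.get_opcodes():
        for k in range(j1, j2):
            if corr[k] not in ARTICLES:
                continue  # not an obligatory article context
            if op == "equal":
                counts["correct"] += 1
            elif op == "replace":
                # Pair the corrected article with the learner token in the same
                # relative position; a different article there is a substitution.
                paired = i1 + (k - j1)
                if paired < i2 and orig[paired] in ARTICLES:
                    counts["substitution"] += 1
                else:
                    counts["omission"] += 1
            else:  # "insert": the teacher added an article the learner left out
                counts["omission"] += 1
    return counts


def soc_score(counts):
    """Suppliance in obligatory context: correct uses / obligatory contexts."""
    contexts = counts["correct"] + counts["omission"] + counts["substitution"]
    return counts["correct"] / contexts if contexts else float("nan")


learner = "The man saw the cat in garden".split()
teacher = "The man saw a cat in the garden".split()
counts = classify_article_contexts(learner, teacher)
print(counts, soc_score(counts))
```

Here the first ‘The’ is a correct use, ‘the’ corrected to ‘a’ is a substitution, and the article added before ‘garden’ is an omission, giving an SOC of 1/3 for this toy pair.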

Examples of obligatory context and error identification.

Regrettably, we could not include article overuse errors, that is, the use of ‘a’ or ‘the’ where no article is needed (e.g. ‘drink a milk’). We excluded such errors from our analysis mainly because of the technical difficulty of automatically identifying obligatory contexts for no article. To identify obligatory contexts in the teacher-corrected scripts, we used the article POS labels; bare nominals are not associated with a distinct POS label, which makes their identification via POS labels less straightforward. Thus, our accuracy score captures suppliance in obligatory context (SOC) and not target-like use (TLU).
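For reference, the two measures are conventionally defined as follows, with TLU following Pica (1983). Overuse (suppliance in non-obligatory contexts) enters only the TLU denominator, which is why excluding overuse errors restricts the analysis to SOC.

```latex
\mathrm{SOC} = \frac{n_{\text{correct suppliance in obligatory contexts}}}{n_{\text{obligatory contexts}}}
\qquad
\mathrm{TLU} = \frac{n_{\text{correct suppliance in obligatory contexts}}}{n_{\text{obligatory contexts}} + n_{\text{suppliance in non-obligatory contexts}}}
```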

To identify the obligatory contexts for the articles, we targeted article-related POS labels in the error-corrected scripts. We developed R scripts to identify obligatory contexts in the error-corrected text, to compare corrected and original writings in order to identify errors and error types, and to count each type of error in the error-corrected writings.
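Targeting article-related POS labels can be illustrated with a small filter. This is a Python sketch rather than the authors’ R code; it assumes the text has already been tagged with Penn Treebank tags and represented as (word, tag) pairs, and the name `article_positions` is hypothetical. Because the Penn Treebank tagset has no dedicated article tag (articles share DT with other determiners), the word form is checked as well.

```python
ARTICLES = {"a", "an", "the"}


def article_positions(tagged_tokens):
    """Return the indices of articles in a list of (word, Penn Treebank tag) pairs.

    Articles share the DT tag with determiners such as 'this' or 'some',
    so both the tag and the word form are checked.
    """
    return [
        i
        for i, (word, tag) in enumerate(tagged_tokens)
        if tag == "DT" and word.lower() in ARTICLES
    ]


tagged = [("The", "DT"), ("teacher", "NN"), ("gave", "VBD"), ("me", "PRP"),
          ("this", "DT"), ("book", "NN"), ("and", "CC"), ("an", "DT"), ("apple", "NN")]
print(article_positions(tagged))
```

In this example ‘this’ carries the DT tag but is skipped, so only the two articles are returned as obligatory contexts.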

Our measure of accuracy relies on the accuracy of the teacher corrections as well as on that of the R scripts we developed to retrieve learner errors and compute SOC scores. We therefore evaluated the teacher corrections and our scripts against a manually annotated gold standard; Appendix 4 in the supplemental material explains the manual error annotation procedure in detail. First, a trained annotator and the third author analysed the same 55 scripts and reached an inter-annotator agreement of over 97%. They then analysed 55 scripts each, identifying a total of 370 errors in the use of articles. Overall, they reached an agreement of over 88% with the original teachers’ error annotation, indicating high reliability.
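The agreement figures above are raw percentage agreement. A minimal Python sketch, assuming both annotators’ labels are aligned item by item (for instance one label per obligatory context); the function name and label values are hypothetical. Note that raw agreement does not correct for chance agreement, as chance-corrected statistics such as Cohen’s kappa do.

```python
def percent_agreement(labels_a, labels_b):
    """Percentage of items on which two annotations assign the same label."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("annotations must be non-empty and aligned item by item")
    matches = sum(a == b for a, b in zip(labels_a, labels_b))
    return 100 * matches / len(labels_a)


annotator = ["omission", "correct", "substitution", "correct"]
teacher = ["omission", "correct", "omission", "correct"]
print(percent_agreement(annotator, teacher))
```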

4 Data analysis and variables

Since our focus, learners’ accuracy in article use, is the proportion of correct article uses among the obligatory article contexts in each writing sample, we adopted generalized linear mixed models with a binomial distribution and a logit link function. The outcome variable was accuracy in each obligatory context (1 if the article was used correctly; 0 if it was omitted or substituted). We constructed two models. The first investigated whether the availability of definite and indefinite articles in learners’ L1s, along with proficiency, predicts their accuracy in article use. The second examined whether L1–L2 English linguistic distance affects article accuracy.
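As an illustration only, the first model could be written as below, where y_i is the accuracy (1 or 0) in obligatory context i and j[i] is the learner who produced it. The random-effects structure is not reported in this section: the single by-learner random intercept u_j is an assumption, as is the L1 type × article type interaction, which reflects the stated aim of testing whether L1 effects vary by article type. In the second model, the L1 type term would be replaced by a linguistic distance score.

```latex
\operatorname{logit}\bigl(\Pr(y_i = 1)\bigr) = \ln\frac{\Pr(y_i = 1)}{1 - \Pr(y_i = 1)}
  = \beta_0 + \beta_1\,\mathrm{L1type}_{j[i]} + \beta_2\,\mathrm{Level}_i + \beta_3\,\mathrm{Article}_i
  + \beta_4\,\mathrm{L1type}_{j[i]} \times \mathrm{Article}_i + u_{j[i]},
\qquad u_j \sim \mathcal{N}(0, \sigma_u^2)
```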

We, therefore, considered four predictors of learner accuracy in article use: L1 type, linguistic distance, EF teaching levels, and article type.

a L1 type

A two-level categorical variable indicating whether learners’ L1 associated with our NL groups has a definite article (

Grouping of national languages based on first language (L1) type.

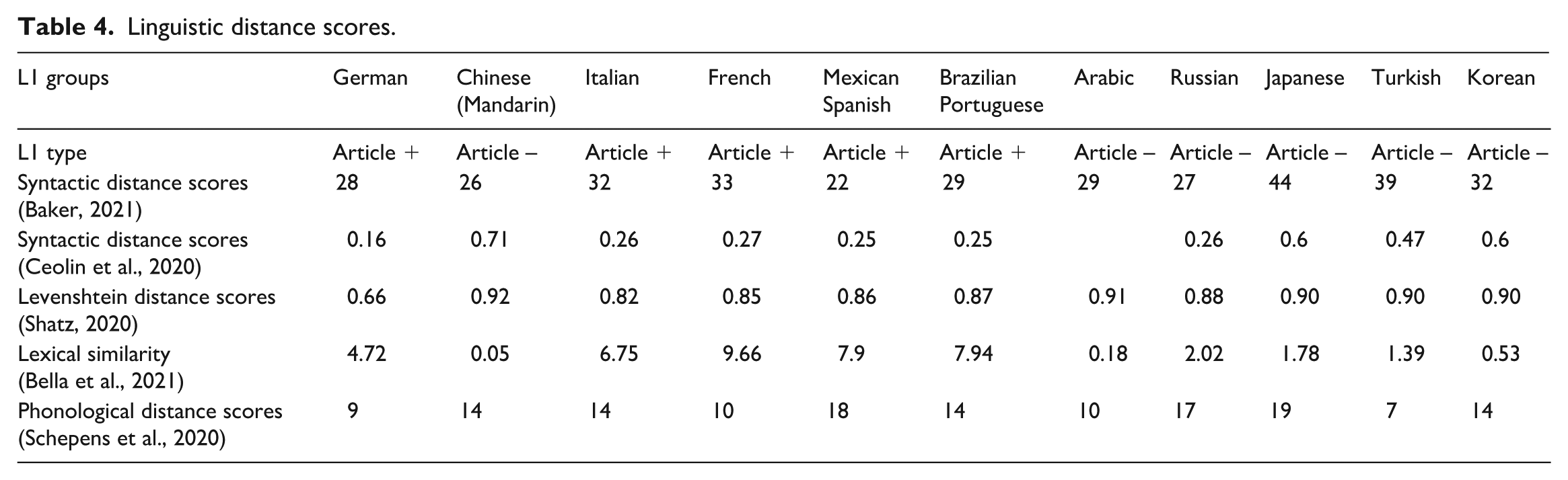

b Linguistic distance

These are continuous variables measuring phonological, lexical and morphosyntactic distance. We calculated crosslinguistic lexical distance using two measures. First, we used Levenshtein distance, a commonly used measure of lexical distance. Levenshtein distance scores correlate with expert cognacy judgements (e.g. Schepens et al., 2013), with linguistic distance measures based on morphological features (e.g. Schepens et al., 2020) and with various psycholinguistic measures, including lexical knowledge (e.g. De Wilde et al., 2010). We calculated Levenshtein distance scores by taking L1–L2 word pairs and computing the minimum number of character substitutions, insertions and deletions needed to transform one string into the other. We then divided this number by the length of the longer word (in number of letters). For the English–German pair ‘blue’ and ‘blau’, for example, the Levenshtein distance score is 0.50, because two character transformations are needed and the word length is 4. The smaller the score, the closer the two languages. The measures were based on an extended Swadesh list of 38 concepts shared by all the national languages in our sample.
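The normalized Levenshtein score described above can be sketched in a few lines of Python using the standard dynamic-programming recurrence. How the 38 word-pair scores are aggregated into a single language-level score is not stated here; averaging them in `language_distance` is an assumption, and all function names are hypothetical.

```python
def levenshtein(s: str, t: str) -> int:
    """Minimum number of single-character substitutions, insertions and deletions."""
    prev = list(range(len(t) + 1))  # distances from the empty prefix of s
    for i, cs in enumerate(s, start=1):
        curr = [i]
        for j, ct in enumerate(t, start=1):
            curr.append(min(
                prev[j] + 1,               # delete cs
                curr[j - 1] + 1,           # insert ct
                prev[j - 1] + (cs != ct),  # substitute (free if characters match)
            ))
        prev = curr
    return prev[-1]


def normalised_levenshtein(s: str, t: str) -> float:
    """Levenshtein distance divided by the length of the longer word."""
    longest = max(len(s), len(t))
    return levenshtein(s, t) / longest if longest else 0.0


def language_distance(word_pairs):
    """Assumed aggregation: mean normalised distance over translation pairs."""
    return sum(normalised_levenshtein(a, b) for a, b in word_pairs) / len(word_pairs)


print(normalised_levenshtein("blue", "blau"))
```

For ‘blue’ and ‘blau’ this reproduces the 0.50 of the worked example: two substitutions divided by a word length of 4.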

Second, we used the lexical similarity scores provided by Bella et al. (2021). These scores capture the number of similar words, based on cognacy, in the contemporary lexicons of pairs of languages. The similarity score is a single number between 0 and 100; larger scores indicate a higher number of cognates shared by two languages. The wordlists used for comparison were drawn from large, general, contemporary lexicons. Each score is accompanied by a confidence rating that depends on the size of the lexicons over which it was calculated. All 11 L1 groups in this study had high confidence ratings.

We also used the measures of phonological similarity developed by Schepens et al. (2020), which capture how many sound categories two languages share. Specifically, this measure considers three aspects: new sounds (present in English but not in the learners’ L1), missing sounds (present in the L1 but not in English), and different sounds (different sound categories between the L1 and English) (Schepens et al., 2020).
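The first two components can be pictured as set differences between sound inventories. The Python sketch below is purely illustrative: the inventories are toy examples, not real phoneme inventories, the function name is hypothetical, and the sketch does not reproduce the ‘different sounds’ component or how Schepens et al. (2020) combine the components into a score.

```python
def new_and_missing_sounds(l1_inventory, english_inventory):
    """Split two sound inventories into 'new' and 'missing' sound categories.

    new:     sounds present in English but absent from the L1
    missing: sounds present in the L1 but absent from English
    """
    l1, english = set(l1_inventory), set(english_inventory)
    return english - l1, l1 - english


# Toy inventories for illustration only.
english_toy = {"p", "b", "v", "θ", "ð"}
l1_toy = {"p", "b", "v", "x"}
new, missing = new_and_missing_sounds(l1_toy, english_toy)
print(sorted(new), sorted(missing))
```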

Finally, for morphosyntactic distance, we used the syntactic distance scores provided by Baker and Roberts (see Baker, 2021) and Ceolin et al. (2020). Both studies used the parametric comparison method proposed in Longobardi and Guardiano (2009). The unit of measurement is the generative syntactic parameter, which may be set positively or negatively for a given feature, e.g. a positive setting of the word-order parameter for head-final order, or a negative setting for head-initial order. Baker (2021) used 88 generative morphosyntactic parameters and provided distance scores for 33 languages, considering the positive or negative setting of features such as person, tense, evidentiality, passive voice, the locus of feature realization, and word order and movement parameters (e.g. verb–object ordering, wh-movement). Ceolin et al. (2020) focused on syntactic distance in the nominal domain. Extending Longobardi and Guardiano (2009), they used 94 parameters capturing crosslinguistic variation in nominal syntax and provided scores for 69 Eurasian languages.³
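Parametric comparison can be sketched as follows, under stated assumptions. Each language is encoded as a string of parameter values over ‘+’, ‘-’ and ‘0’, where ‘0’ marks a parameter that is neutralized or not set. As we understand the method of Longobardi and Guardiano (2009), parameters neutralized in either language are skipped and the distance is the share of differences among the remaining comparable parameters. The encoding and function name are hypothetical, and the published scores cited in this section may be computed or scaled differently.

```python
def parametric_distance(settings_a: str, settings_b: str) -> float:
    """Differences / (identities + differences) over comparable parameters.

    Each argument is a string over '+', '-', '0' giving one language's
    parameter settings; '0' (neutralized/unset) parameters are skipped.
    """
    if len(settings_a) != len(settings_b):
        raise ValueError("both languages must be coded for the same parameters")
    identities = differences = 0
    for a, b in zip(settings_a, settings_b):
        if a == "0" or b == "0":
            continue  # not comparable: neutralized in at least one language
        if a == b:
            identities += 1
        else:
            differences += 1
    compared = identities + differences
    if compared == 0:
        raise ValueError("no parameters are set in both languages")
    return differences / compared


print(parametric_distance("+-+0", "++-+"))
```

In the example, the fourth parameter is skipped, one of the remaining three matches and two differ, giving a distance of 2/3.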

Table 4 summarizes the linguistic distance measures between English and the 11 L1s considered in this study. As can be seen, the lexico-semantic scores (Bella et al., 2021) capture the distance between the Romance languages and English very well, but are less successful at capturing the closeness of German to English, and of Russian, as an Indo-European language, to English. Levenshtein distance scores seem to distinguish very well among the Indo-European languages, but show no distance differences among Turkish, Korean, Chinese and Japanese. The syntactic distance scores provide more variability across all languages in our sample, including the non-Indo-European languages. According to the nominal syntax measures, Chinese is the language most distant from English (0.71), followed by Korean and Japanese (0.6) and then Turkish (0.47). Unfortunately, no nominal syntax distance score is available for Arabic. The Baker (2021) syntax scores also show variability among the non-Indo-European languages. One notable difference from all other linguistic distance scores is that Mandarin Chinese has a low distance score from English (28), comparable to German (26) and the Romance languages. This is probably because Chinese shares its basic word order with English (except in the nominal domain) and lacks agreement, which resembles the impoverished agreement system of English.

Linguistic distance scores.

c EF teaching levels

We based proficiency on Englishtown EF teaching levels 1–12 (mean 4.38, SD 2.84), corresponding to CEFR levels A1–B2. The value indicates the proficiency level of the lesson for which a writing is submitted by the learner; indirectly, this value captures the learners’ proficiency levels at the time of writing, which could be at the start of their learning programme or moving on from a preceding level.

d Article type

Article type is a categorical variable with two levels: definite (reference level) and indefinite. We extracted a total of 2,774,915 obligatory contexts for the two article types, of which 37.77% were indefinite and 62.22% were definite. We included article type in our models to examine specifically whether the effects of L1 type and linguistic distance scores on accuracy vary as a function of article type.

V Results

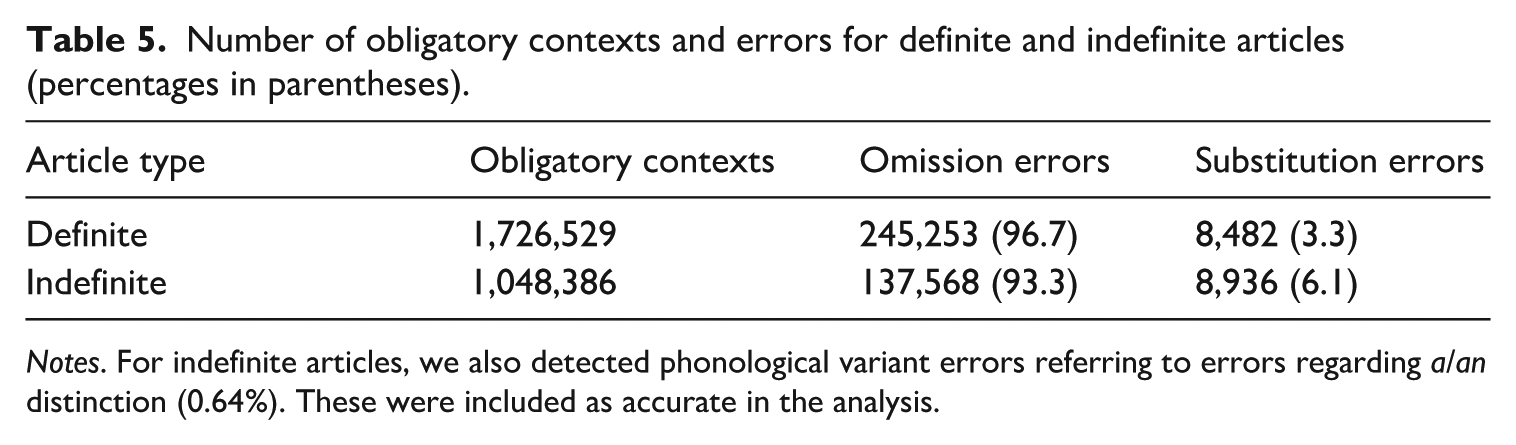

1 Distribution of errors in obligatory contexts

Table 5 shows that the obligatory contexts for the definite article outnumber those for the indefinite article by approximately 1.7 to 1, giving learners considerably more opportunities to use the definite article. In both contexts, omission errors represent the vast majority of errors, with substitution errors just over 6% in indefinite contexts, roughly double the 3.34% found in definite contexts. Because obligatory contexts are unequally distributed across the two articles, the definite article accounts for a much higher number of errors than the indefinite article.

Number of obligatory contexts and errors for definite and indefinite articles (percentages in parentheses).

Notes. For indefinite articles, we also detected phonological variant errors, i.e. errors in the a/an distinction (0.64%). These were counted as accurate in the analysis.
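A minimal sketch of how suppliance in obligatory context (SOC) can be scored under the convention in the note above, where a/an phonological-variant errors count as accurate. It assumes that each obligatory context has already been labelled with one of four hypothetical outcome codes, and that omissions and substitutions both count as inaccurate; the codes and the function are illustrative, not the study's annotation scheme.

```python
from collections import Counter

# Hypothetical outcome codes for an annotated obligatory context.
CORRECT = "correct"
OMISSION = "omission"
SUBSTITUTION = "substitution"
AN_VARIANT = "a/an variant"  # e.g. "a apple"; counted as accurate

ACCURATE_CODES = {CORRECT, AN_VARIANT}


def soc_score(outcomes: list[str]) -> float:
    """Proportion of obligatory contexts scored as accurate (SOC)."""
    if not outcomes:
        raise ValueError("SOC is undefined without obligatory contexts")
    counts = Counter(outcomes)
    accurate = sum(counts[code] for code in ACCURATE_CODES)
    return accurate / len(outcomes)


# Ten hypothetical contexts: seven correct, one a/an slip, one omission, one substitution.
sample = [CORRECT] * 7 + [AN_VARIANT, OMISSION, SUBSTITUTION]
print(soc_score(sample))  # 0.8
```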

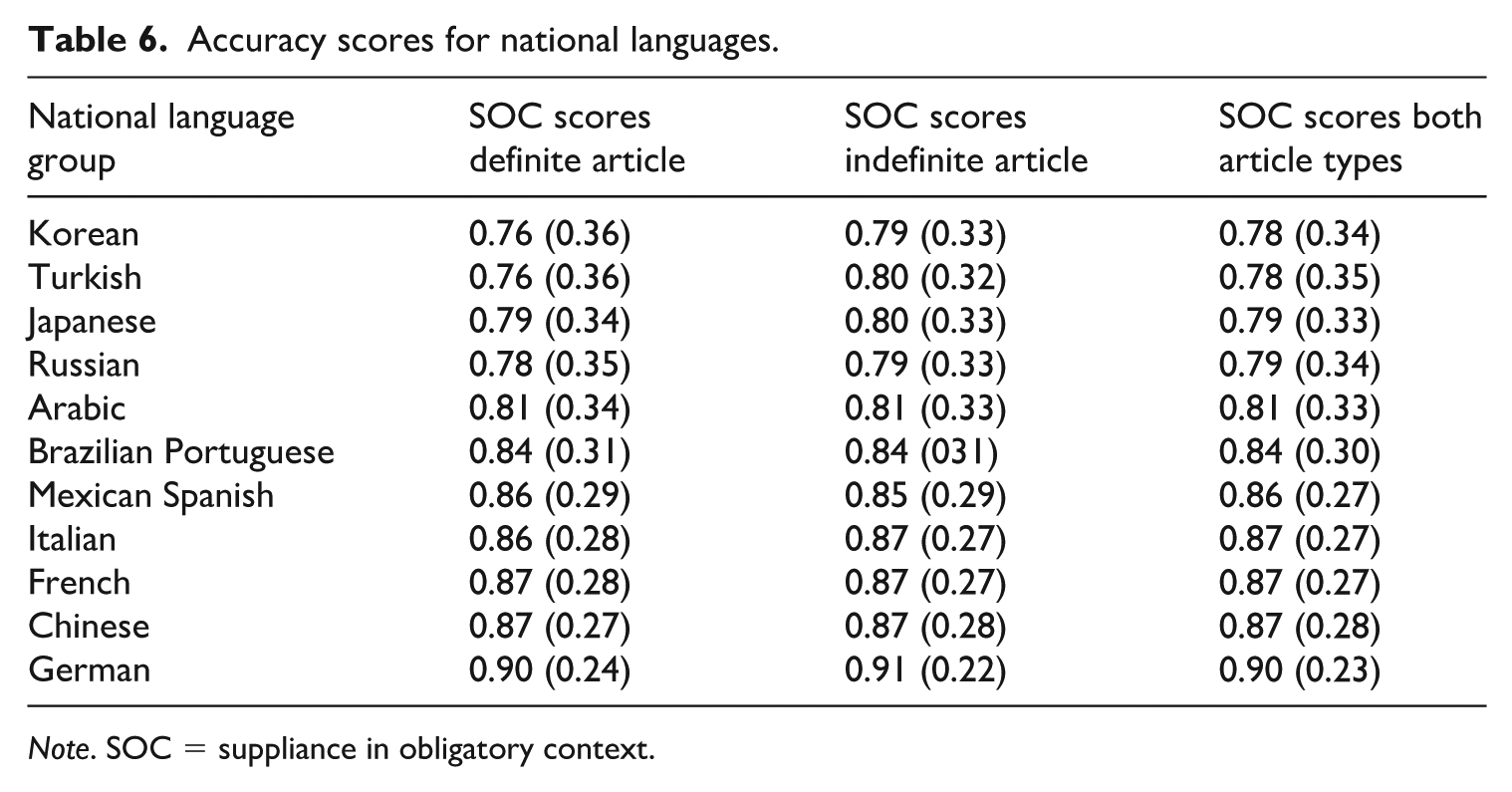

2 The effects of national languages on accuracy

Table 6 shows the mean accuracy scores of suppliance of the indefinite and definite articles in obligatory contexts across all EF teaching levels for each NL group, ranked from lowest to highest accuracy score. We generally observe high accuracy, ranging from 78% (SD = 34) to 90% (SD = 23). Looking at the rightmost column, we can observe that the lowest scores belong to NL groups without definite articles in the corresponding L1s (

Accuracy scores for national languages.

Note. SOC = suppliance in obligatory context.

Turning to the influence of the indefinite article, we observe that the availability of an indefinite article in the L1 does not seem to affect article accuracy in the L2. For example, Turkish learners, whose L1 has an indefinite article, do not seem to do better than Japanese or Korean learners, whose L1s lack one. At the same time, the unavailability of an indefinite article in Arabic does not lead to lower accuracy with the indefinite article for Arab learners. Crucially, Arab learners show higher accuracy with the indefinite article than, e.g. Turkish learners (in fact, all learners in the

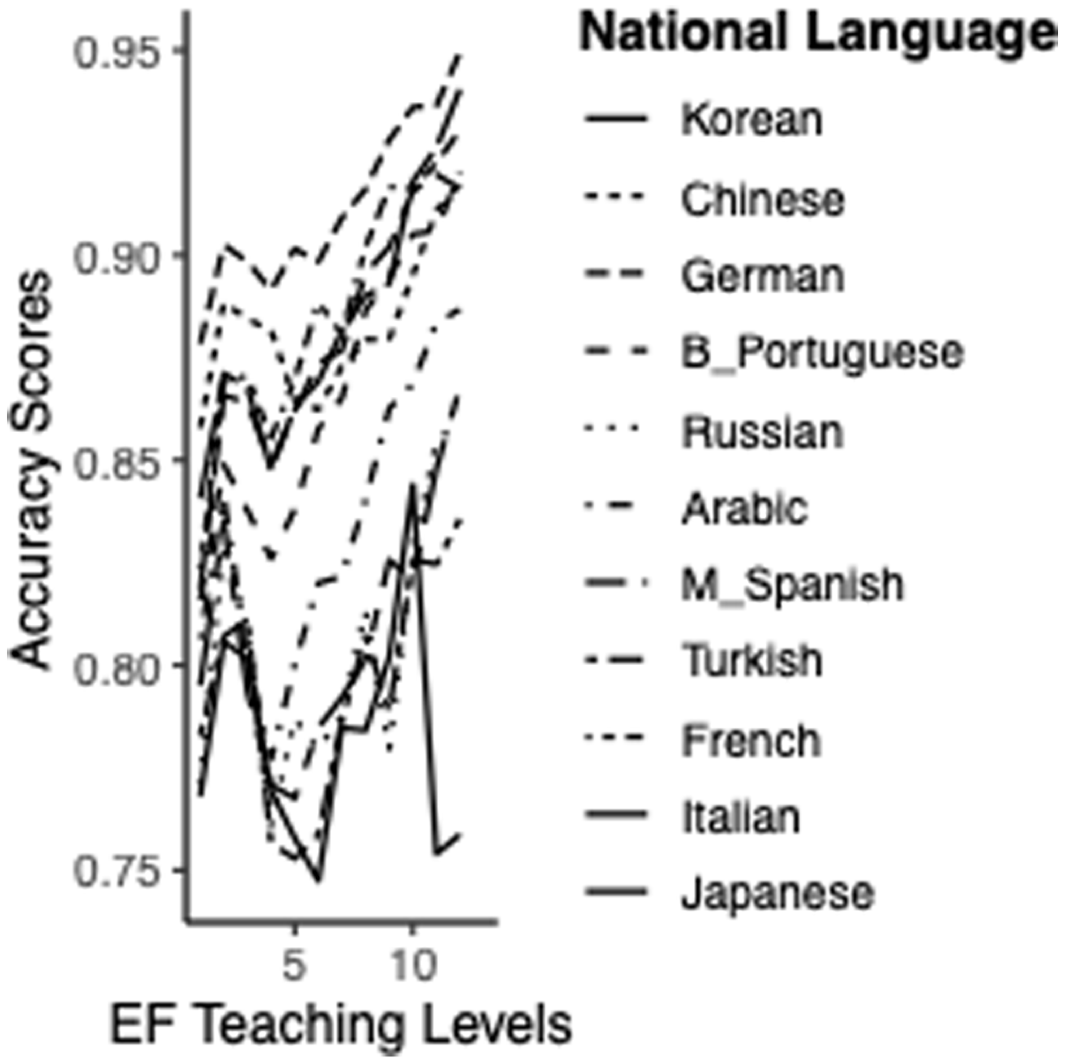

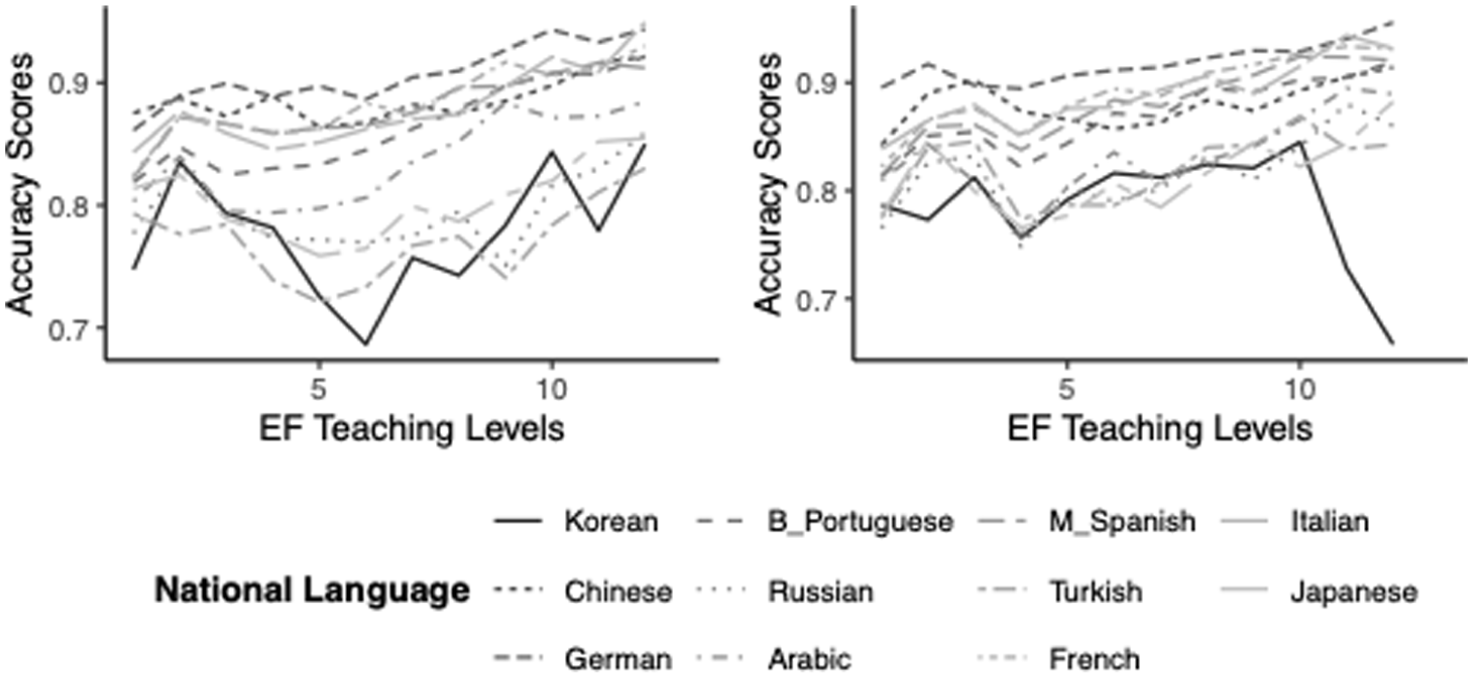

To facilitate comparisons between NL groups, Figures 1 and 2 show the average accuracy scores for both definite and indefinite articles across EF teaching levels for all NL groups. As expected, accuracy improves with proficiency. At the same time, the NL differences present at beginner EF teaching levels are still present at the end of the intermediate teaching levels (the highest proficiency points in our data), suggesting that proficiency cannot overturn NL effects, at least up to intermediate levels. Figure 1 shows the highest and lowest SOC scores in each NL group across EF teaching levels. Moreover, it shows that

Developmental trajectories of accuracy scores across national language groups.

Developmental trajectories of accuracy scores across national language groups for definite articles (left) and indefinite articles (right).

Figure 2 shows accuracy across EF teaching levels per national language group, separately for the definite and indefinite article. The patterns are similar for the two articles, though Arab learners seem to cluster with the

3 Model development

We built two generalized linear mixed models with a binomial distribution to address our research questions, using the lme4 package (Bates et al., 2015) in R (Version 4.3.2; R Core Team, 2023). We calculated the p-values using the lmerTest package (Version 3.1-3; Kuznetsova et al., 2017). We constructed multiple models and compared them using Akaike Information Criterion (AIC) values to identify the most plausible model. For model selection, we opted for a forward-selection approach (see James et al., 2013): we began with the simplest model and added predictors incrementally, retaining them only if they improved model goodness-of-fit (see also Murakami, 2016). For both models, we first built an unconditional model that included only random intercepts.
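The forward-selection procedure can be sketched generically. The Python below is a minimal illustration under stated assumptions, not the authors' R code: `fit` is a hypothetical callback standing in for fitting a binomial mixed model (for example with lme4's `glmer`) with a given set of fixed effects, returning the log-likelihood and the number of estimated parameters. A candidate predictor is kept only if it lowers AIC = 2k − 2 ln L.

```python
from typing import Callable, Sequence


def aic(log_likelihood: float, n_params: int) -> float:
    """Akaike Information Criterion: 2k - 2 log L (lower is better)."""
    return 2 * n_params - 2 * log_likelihood


def forward_select(candidates: Sequence[str],
                   fit: Callable[[list[str]], tuple[float, int]]) -> list[str]:
    """Greedy forward selection of fixed effects by AIC.

    `fit` (hypothetical) fits a model with the given fixed effects, random effects
    held constant, and returns (log-likelihood, number of parameters).
    """
    selected: list[str] = []
    best = aic(*fit(selected))  # start from the unconditional model
    remaining = list(candidates)
    while remaining:
        scored = [(aic(*fit(selected + [c])), c) for c in remaining]
        new_aic, term = min(scored)
        if new_aic >= best:  # stop once no remaining term lowers AIC
            break
        best = new_aic
        selected.append(term)
        remaining.remove(term)
    return selected


# Toy usage: invented log-likelihood gains per hypothetical predictor.
GAINS = {"ef_level": 50.0, "article_type": 3.0, "phonological_distance": 0.5}


def toy_fit(terms: list[str]) -> tuple[float, int]:
    return -1000.0 + sum(GAINS[t] for t in terms), 3 + len(terms)


print(forward_select(list(GAINS), toy_fit))
```

In the toy run, adding the third hypothetical predictor would raise AIC, so selection stops after two terms.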

To control for individual variation, we included by-learner and by-national-language random intercepts. The by-learner intercepts accounted for variation in article accuracy across individual learners, while the by-national-language intercepts captured overall accuracy differences among native language groups. Additionally, both models included a by-national-language random slope for article type, representing variation across national language groups in the accuracy difference between the two article types. This implies that some learners, depending on the characteristics of their native languages, may learn to use articles more quickly than others. In Model 1, we investigated whether L1 type, alongside EF teaching levels, predicts learners’ accuracy in their use of definite and indefinite articles. As fixed effects, Model 1 included L1 type (

4 The effects of L1 type on article use accuracy

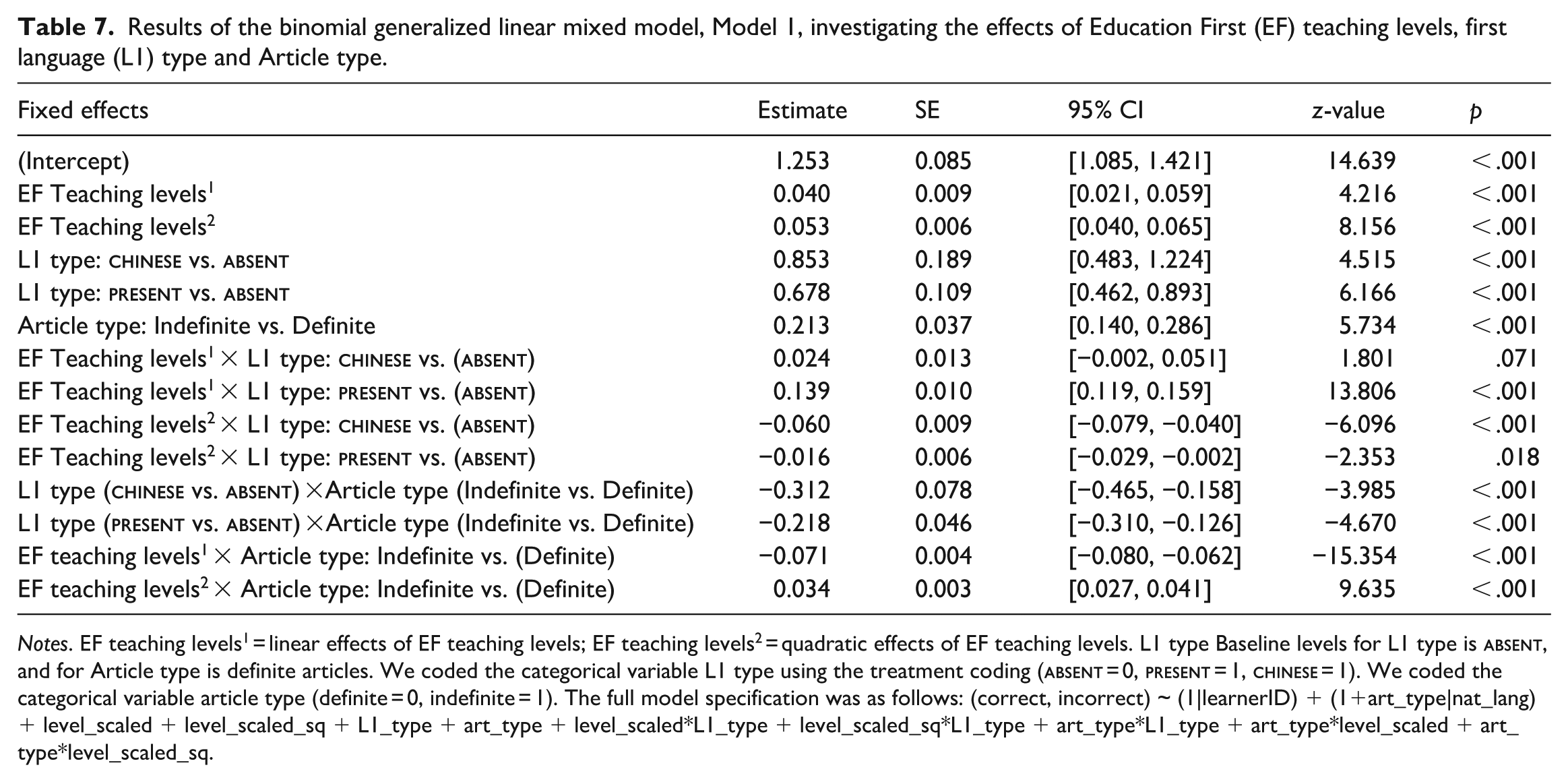

The results are presented in Table 7. The VIF scores of L1 type, EF teaching levels (including linear and quadratic effects) and article type did not indicate any problems with multicollinearity (Fox and Weisberg, 2019; all VIF scores < 1.40). As predicted, the main effect of L1 type was significant. Learners in both

Results of the binomial generalized linear mixed model, Model 1, investigating the effects of Education First (EF) teaching levels, first language (L1) type and Article type.

Notes. EF teaching levels1 = linear effects of EF teaching levels; EF teaching levels2 = quadratic effects of EF teaching levels. L1 type Baseline levels for L1 type is

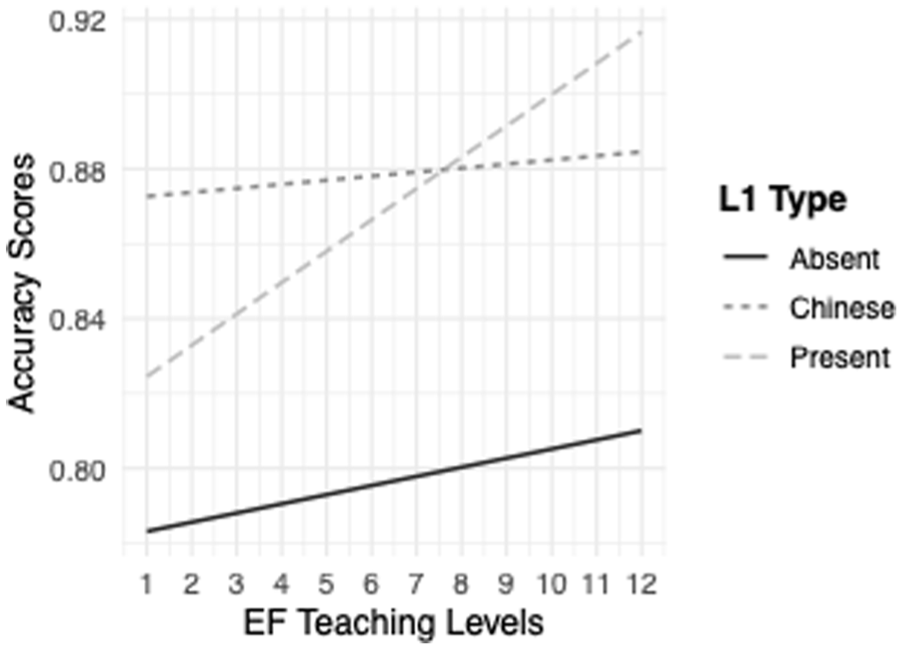

To decompose the significant interaction between L1 type and EF teaching levels, we employed the emtrends function in the emmeans package in R (Lenth, 2024) to obtain the simple slopes for EF teaching levels, including both linear and quadratic effects, for each level of L1 type. The linear effect of EF teaching levels on article accuracy was significantly lower for learners in the

Interaction between first language (L1) type (

The quadratic effects of EF teaching levels on article accuracy were significantly higher for learners in the

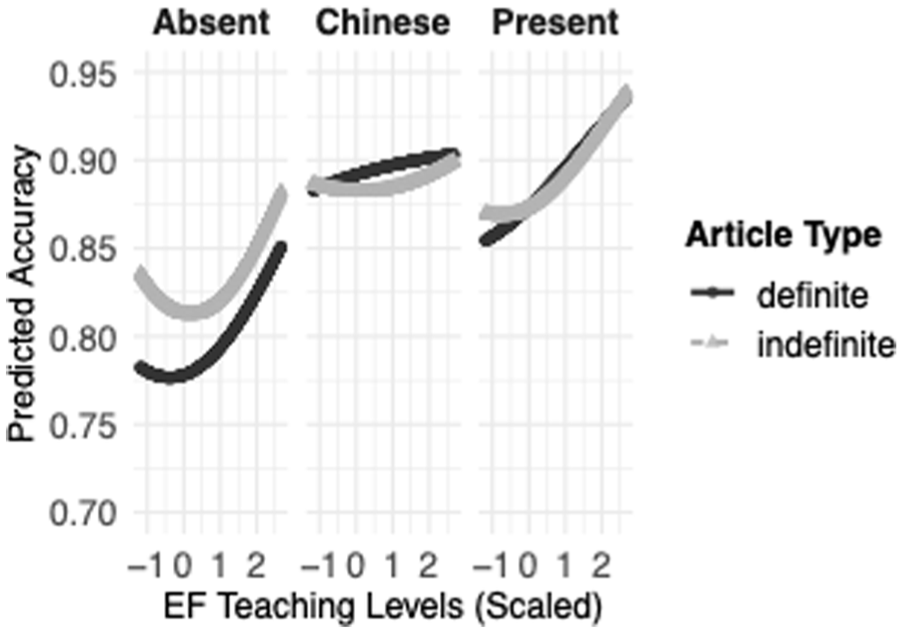

Predicted accuracy by Education First (EF) teaching levels (linear and squared) and article type for each first language (L1) type.

We deconstructed the significant interaction between article type and EF teaching levels. The linear effect of EF teaching levels on article accuracy was higher for definite articles compared to indefinite articles, Estimate = 0.072, SE = 0.004, z = 15.418, p < .001 (0.1523–0.0802 = 0.072). However, the quadratic effect of EF teaching levels showed a different pattern. As EF teaching levels increased, the improvement in article accuracy accelerated more for indefinite articles, indicating a non-linear relationship in which learners’ accuracy with indefinite articles improved at an increasing rate with higher EF teaching levels, Estimate = −0.034, SE = 0.003, z = −9.635, p < .001 (0.027−0.062). Figure 4 illustrates the interaction effect, incorporating both linear and quadratic effects of teaching levels.

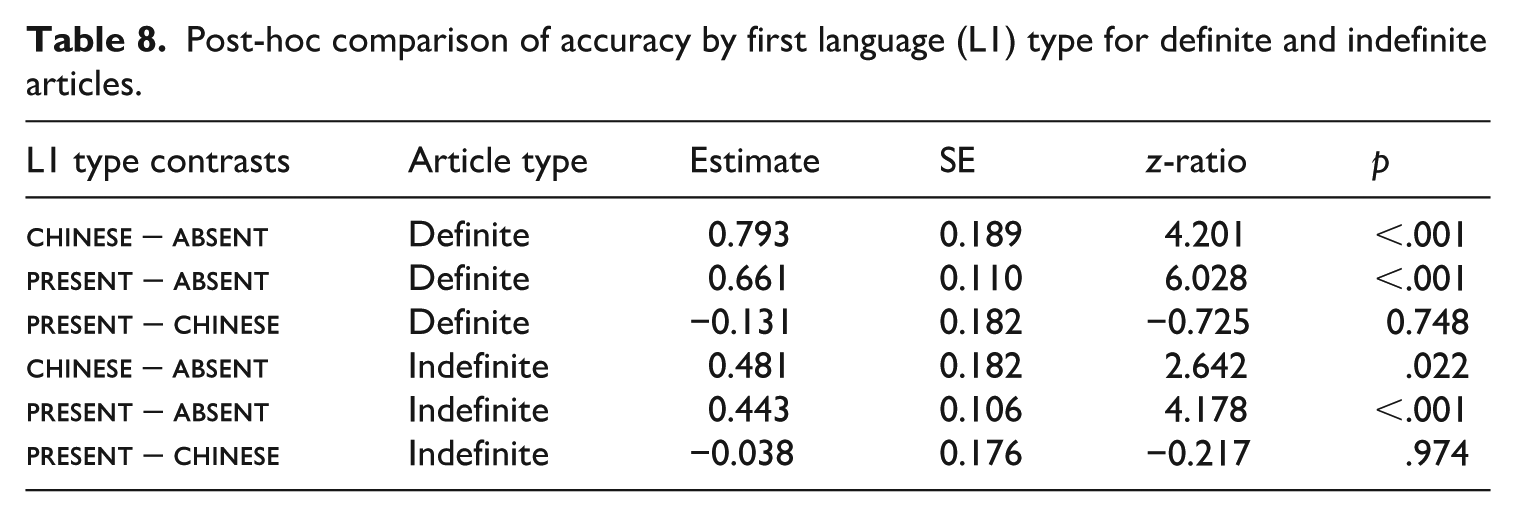

To gain a clearer understanding of the interaction between L1 type and article type, we ran a series of post-hoc tests on least-squares means using the emmeans package in R (Lenth, 2024), with Tukey adjustments for multiple comparisons. The results indicated that the learners in both the

Post-hoc comparison of accuracy by first language (L1) type for definite and indefinite articles.

5 The effects of linguistic distance on article use accuracy

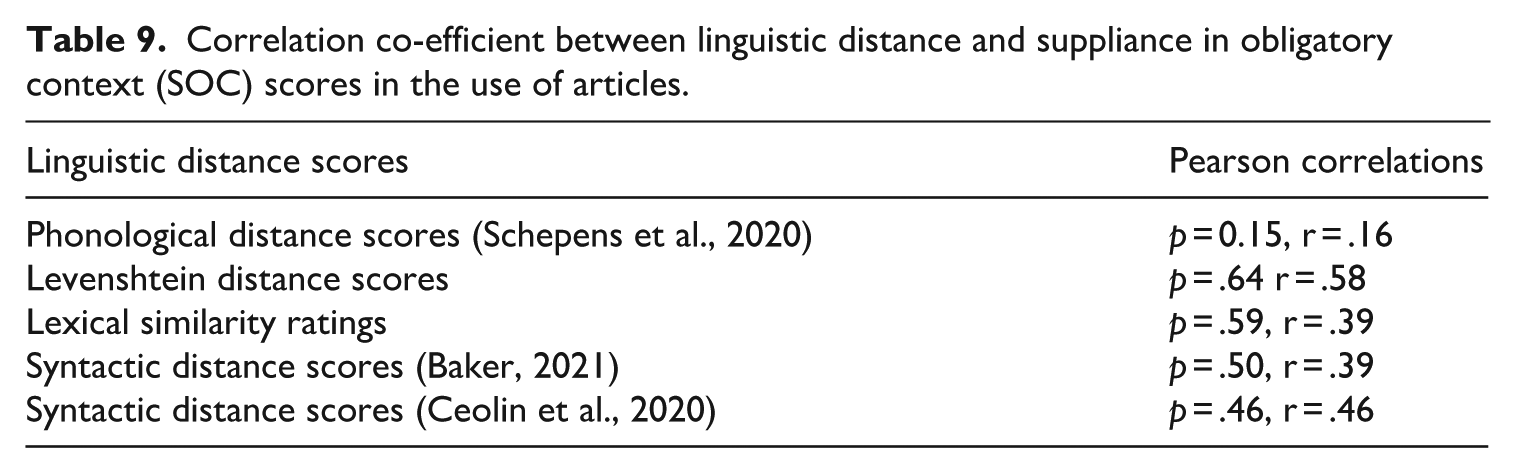

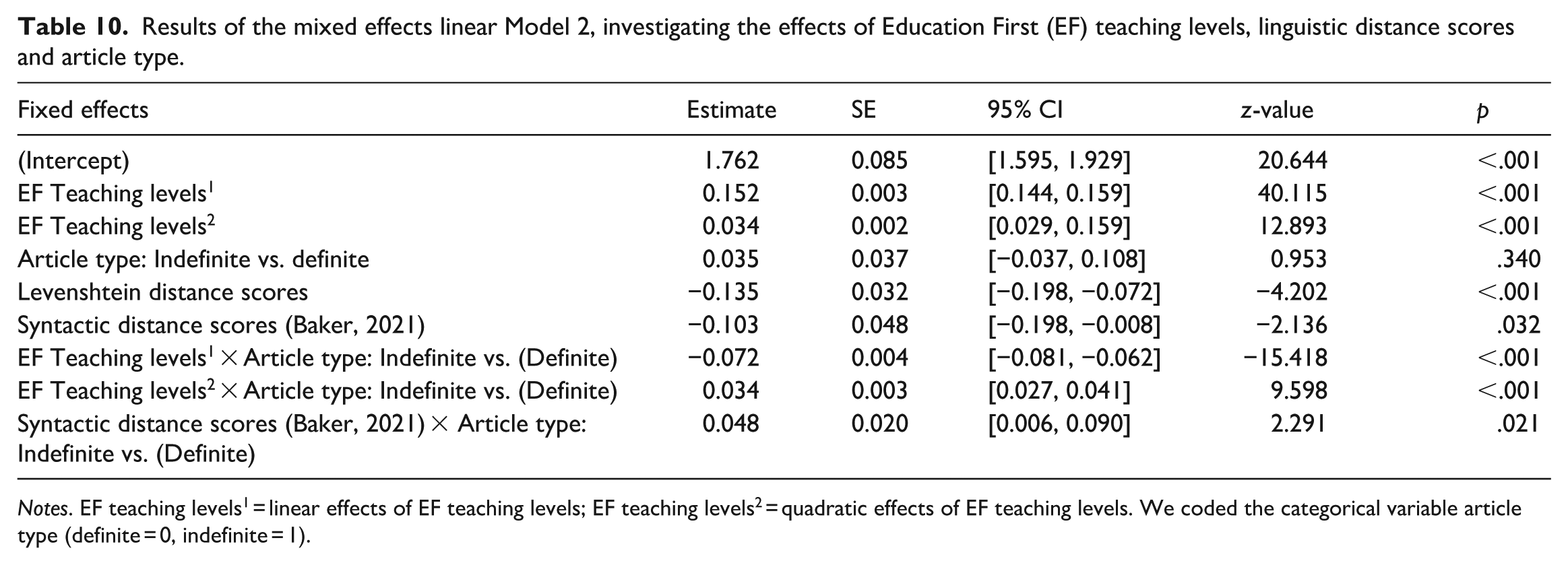

Table 9 shows correlations between linguistic distance scores and accuracy. As can be seen, there is a medium-to-strong correlation between Levenshtein distance and accuracy, medium-sized correlations for the syntactic distance scores, and essentially no correlation with the phonological scores. We constructed generalized linear mixed models with a binomial distribution to investigate the effects of linguistic distance scores on the accuracy of article use. In this model (Model 2), national languages are represented by their linguistic distance scores, as shown in Table 4. The VIF scores of EF teaching levels, article type, Levenshtein distance scores and the Baker (2021) syntactic distance scores did not indicate any problems with multicollinearity (VIF scores < 1.40). As we aimed to analyse the effect of linguistic distance, we decided not to include L1 type in the second model. As in Model 1, the final model included a by-learner random intercept, a by-L1 random intercept and a by-L1 random slope for article type. As fixed effects, Model 2 included EF teaching levels with linear (i.e. EF teaching levels1) and quadratic (i.e. squared, EF teaching levels2) effects, article type (INDEFINITE or DEFINITE), Levenshtein distance scores and the Baker (2021) syntactic distance scores. The phonological scores and Ceolin et al.’s (2020) nominal syntactic distance scores did not enter the final version of the model, as these predictors did not improve the model’s goodness-of-fit. The final model also included two interaction terms: EF teaching levels × article type, and Baker (2021) syntactic distance scores × article type.
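Variance inflation factors like those reported for Models 1 and 2 can be computed by regressing each predictor on the others. This numpy sketch implements the basic single-column VIF, VIF_j = 1 / (1 − R_j²). Note that the R tooling cited (Fox and Weisberg, 2019) can also report generalized VIFs for multi-column terms such as factors or polynomials, which this sketch does not handle. The simulated predictors are purely illustrative.

```python
import numpy as np


def vif(X: np.ndarray) -> np.ndarray:
    """Variance inflation factor for each column of predictor matrix X.

    X must not contain an intercept column; one is added for each auxiliary regression.
    """
    n, p = X.shape
    out = np.empty(p)
    for j in range(p):
        y = X[:, j]
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        coef, *_ = np.linalg.lstsq(others, y, rcond=None)
        resid = y - others @ coef
        r2 = 1.0 - (resid @ resid) / np.sum((y - y.mean()) ** 2)
        out[j] = 1.0 / (1.0 - r2)
    return out


rng = np.random.default_rng(0)
independent = rng.normal(size=(500, 3))  # three unrelated simulated predictors
print(vif(independent))  # values close to 1
collinear = np.column_stack([independent[:, 0],
                             independent[:, 0] + 0.1 * rng.normal(size=500)])
print(vif(collinear))  # large values signal multicollinearity
```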

Correlation coefficients between linguistic distance and suppliance in obligatory context (SOC) scores in the use of articles.
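For readers who want to compute correlations like those in Table 9, a Pearson coefficient can be calculated directly. This plain-Python sketch assumes Pearson's r over paired values (for instance, one distance score and one mean SOC score per L1); the numbers below are hypothetical, not the study's data.

```python
import math
from typing import Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical per-L1 values (NOT the study's data): distance vs mean SOC.
distance = [0.2, 0.4, 0.6, 0.8]
soc = [0.90, 0.86, 0.83, 0.80]
print(round(pearson_r(distance, soc), 3))  # negative: greater distance, lower accuracy
```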

The results are presented in Table 10. Both the linear and quadratic effects of EF teaching levels were significant, showing that the accuracy of article use was higher for learners of higher proficiency. Turning to linguistic distance, both Levenshtein distance and the Baker (2021) syntactic distance scores had significant main effects on article accuracy. In other words, learners in the L1 groups with lower Levenshtein and Baker (2021) syntactic distance scores, such as German, used articles more accurately than learners in the L1 groups with higher distance scores, such as Turkish and Korean. The main effect of article type was not significant.

Results of the binomial generalized linear mixed model, Model 2, investigating the effects of Education First (EF) teaching levels, linguistic distance scores and article type.

Notes. EF teaching levels1 = linear effects of EF teaching levels; EF teaching levels2 = quadratic effects of EF teaching levels. We coded the categorical variable article type (definite = 0, indefinite = 1).
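Linear and quadratic terms for EF teaching levels can be coded as raw powers (level and level squared), which are strongly correlated, or as orthogonal polynomial contrasts in the style of R's `poly()`, which are uncorrelated by construction. The text describes the quadratic term as squared; the numpy sketch below shows the orthogonal alternative for comparison, as an illustration rather than a description of the models actually fitted. The toy data (three texts per level) are hypothetical.

```python
import numpy as np


def orthogonal_poly(x, degree: int = 2) -> np.ndarray:
    """Orthonormal polynomial contrasts for x, similar in spirit to R's poly(x, degree).

    Returns an (n, degree) array whose columns (linear, quadratic, ...) are centred,
    mutually orthogonal and of unit length.
    """
    x = np.asarray(x, dtype=float)
    powers = np.vander(x - x.mean(), degree + 1, increasing=True)  # columns 1, x, x^2, ...
    q, r = np.linalg.qr(powers)
    q = q * np.sign(np.diag(r))  # orient each column like its raw power
    return q[:, 1:]  # drop the constant column


levels = np.repeat(np.arange(1, 13), 3)  # EF teaching levels 1-12, three texts each (toy data)
raw_r = np.corrcoef(levels, levels ** 2)[0, 1]
ortho_r = np.corrcoef(orthogonal_poly(levels).T)[0, 1]
print(f"raw level vs level^2: r = {raw_r:.3f}; orthogonal terms: r = {ortho_r:.3f}")
```

Orthogonal coding keeps the linear and quadratic terms from competing for the same variance, which is one way of keeping VIF values low when both terms enter a model.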

We deconstructed the significant interaction between article type and EF teaching levels. The linear effect of EF teaching levels on article accuracy was higher for definite articles than for indefinite articles, Estimate = 0.072, SE = 0.004, z = 15.418, p < .001 (0.152 − 0.080 = 0.072). However, indefinite articles showed a stronger quadratic trend: as EF teaching levels increased, the improvement in article accuracy accelerated more for indefinite articles, indicating a non-linear relationship, Estimate = −0.034, SE = 0.003, z = −9.598, p < .001 (0.034 (definite) − 0.069 (indefinite) = −0.036).

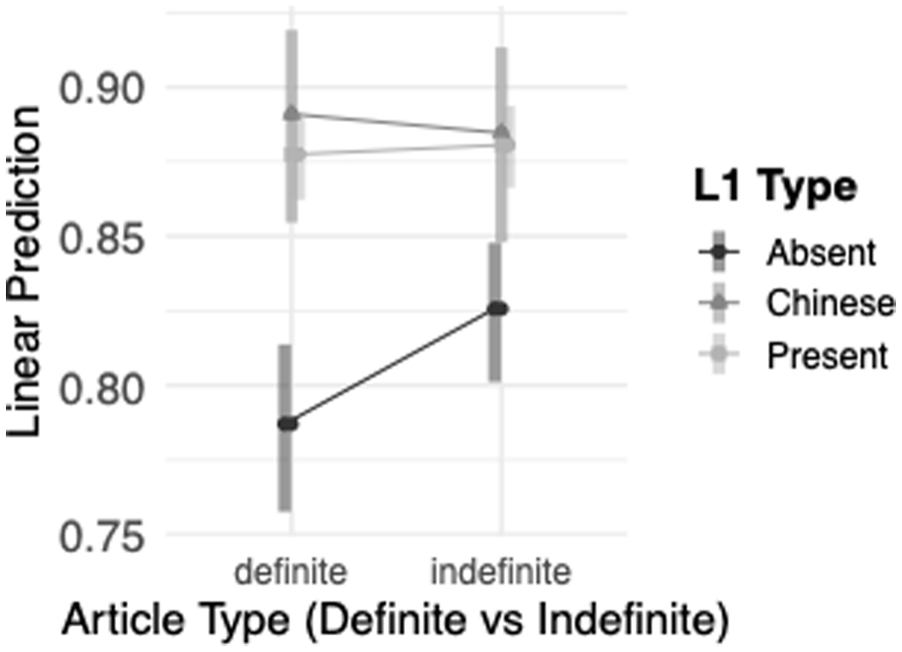

We also deconstructed the significant interaction between article type and Baker (2021) syntactic distance scores. As the scores increase, accuracy for both article types appears to decrease slightly. The difference in trends between the two article types is significant, with definite articles showing a larger decrease in accuracy than indefinite articles, Estimate = −0.048, SE = 0.021, z = −2.291, p = .02 (−0.103 − (−0.055) = −0.048).
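The contrast and its p-value can be checked from the quantities reported above. In this minimal Python sketch, the contrast is the definite slope minus the indefinite slope, the Wald statistic is the contrast divided by its standard error, and the two-sided p-value uses the standard normal distribution. Small discrepancies with the reported z arise because the inputs are rounded to three decimals.

```python
import math


def slope_contrast(slope_definite: float, slope_indefinite: float) -> float:
    """Difference between two simple slopes (definite minus indefinite)."""
    return slope_definite - slope_indefinite


def wald_z(estimate: float, se: float) -> float:
    """Wald z statistic: estimate divided by its standard error."""
    return estimate / se


def two_sided_p(z: float) -> float:
    """Two-sided p-value for z under a standard normal reference distribution."""
    return math.erfc(abs(z) / math.sqrt(2.0))


diff = slope_contrast(-0.103, -0.055)  # simple slopes of Baker (2021) distance
z_approx = wald_z(diff, 0.021)         # about -2.29 from rounded inputs
p = two_sided_p(-2.291)                # p-value for the reported z
print(f"contrast = {diff:.3f}, z = {z_approx:.2f}, p = {p:.3f}")
```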

VI Discussion

Below we summarize our main findings:

A higher number of definite obligatory contexts (approximately 1.7 definite contexts for each indefinite one).

Overall high accuracy in suppliance of the articles, ranging from 75% to 90% correct suppliance in obligatory contexts.

Errors predominantly involve omission, with substitution errors below 6% of all errors.

The presence of a definite article in learners’ L1 predicts both their accuracy in L2 article usage and the extent of their improvement with proficiency. Specifically, learners in the

When teaching levels are considered in quadratic terms, a more nuanced picture emerges regarding the progress learners make with proficiency. Thus, as can be seen in Figure 5, learners from the

Learners from the

Interaction between article type (definite, indefinite) and first language (L1) type (

We begin with the observation that, overall, our learners show relatively high levels of accuracy, ranging between 75% and 90%. By comparison, accuracy in Murakami and Alexopoulou (2016) ranged between 65% and 93% for beginner and intermediate levels of proficiency. The higher accuracy of learners in our study can be explained by the fact that Murakami and Alexopoulou (2016) reported results from the Cambridge Learner Corpus, a corpus of high-stakes exams. By contrast, the learners in our study produced their writings as part of their learning in an open setting with access to resources. Their higher accuracy is therefore not surprising. It is worth noting that even a 15%–20% error rate is highly noticeable for articles, since each sentence may contain a couple of obligatory article contexts. As we mentioned in our methodology section, our manual evaluation indicated that the teacher corrections were reliable, so the higher accuracy is not due to teachers missing learner errors.
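The point about noticeability can be made concrete. Assuming, for illustration, that errors occur independently across contexts and that a sentence contains two obligatory article contexts, the chance that a sentence contains at least one article error is 1 − (1 − e)², where e is the error rate. The Python sketch below computes this; both assumptions are simplifications.

```python
def p_sentence_with_error(error_rate: float, contexts: int = 2) -> float:
    """Probability of at least one article error among `contexts` obligatory contexts,
    assuming errors are independent and equally likely in every context."""
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    return 1.0 - (1.0 - error_rate) ** contexts


for rate in (0.15, 0.20):
    print(f"error rate {rate:.0%}: {p_sentence_with_error(rate):.1%} of sentences affected")
```

Under these assumptions, roughly 28% to 36% of such sentences would contain at least one article error.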

Our results confirm earlier findings that article omission is the main error type, that accuracy improves with proficiency, and that the availability of an article in the L1 leads to higher accuracy in the use of the L2 English articles. One important limitation of the current study is that we were not able to consider overgeneralization errors. However, a comparison with Murakami and Alexopoulou (2016) and Derkach and Alexopoulou (2023), who include overgeneralization errors and calculate TLU scores, shows that omission is the predominant type of error, while the comparison between TLU and SOC scores does not change the overall picture of L1 influence on L2 accuracy (Murakami and Alexopoulou, 2016).

Higher proficiency does not appear to close the accuracy gap between the

The frequency distribution of the two target articles, definite vs. indefinite, did not seem to play any role in learner accuracy. If it did, we would expect corresponding differences in the accuracy of the two articles, with the definite article potentially showing higher accuracy given its higher frequency. The analysis showed that within the

Regarding L1 influence, L1 article type (

We should acknowledge that our distinction between NP and DP languages (see Bošković, 2008) oversimplifies and abstracts away from theoretical debates regarding the DP/NP distinction and the role of the definite article in assuming a DP (see, among others, Franks and Pereltsvaig, 2004; Gillon and Armoskaite, 2015; Crisma et al., 2020; Kornfilt, 2017, 2018; Köylü, 2021; Lyutikova and Pereltsvaig, 2015; Progovac, 1998; Salzmann, 2020). Further investigation of finer-grained distinctions in the use of the articles in different contexts (e.g. regarding specific or generic nouns) might well need to draw on more nuanced typological distinctions. However, our current findings do lend support to a broad typological distinction between NP and DP languages.

We should also note that, while our results are consistent with the generative hypothesis that languages vary in allowing DPs or bare NPs as arguments, alternative hypotheses cannot be excluded. For example, it could be argued that the definite article has a prominent discourse function and that the acquisition of the indefinite article is somehow dependent semantically on the acquisition of the definite article, making the definite article a better predictor of L2 accuracy. Note, however, that the learners from the

Chinese learners did not confirm the prediction that the absence of an article in the L1 leads to lower accuracy in the L2. In fact, Chinese learners showed accuracy which was higher than the average accuracy in the

Our data also indicated broader typological effects on the accuracy of L2 English article use. Within the

The correlation between linguistic distance scores and accuracy demonstrates that the effect of the L1 on L2 articles goes well beyond the availability of an article/DP in the L1 and suggests influence from a broader range of typological properties. Thus, with respect to our main research question, namely whether L2 accuracy is influenced solely by the availability of an article in the L1 or also by broader L1 properties, our study indicates that broader L1 properties play a role.

Linguistic distance scores had a small effect in the regression model, with Ceolin et al.’s (2020) nominal syntactic distance scores not reaching significance. This is not surprising in a model that includes proficiency as a predictor. After all, all learners improve with proficiency. Crucially, L1 influence captures variation among learners of the same proficiency. It is therefore to be expected that proficiency is a stronger predictor of accuracy than linguistic distance in the regression model.

While linguistic distance scores capture the broader typological effects, they do not tell us which L1 properties and features are implicated and how they might affect the acquisition process. Since the acquisition of articles involves the syntax–semantics interface as well as discourse and information structure, it is reasonable to expect that a range of other properties might influence acquisition and underpin the typological effects: for example, other properties of the nominal system (e.g. whether the L1 has grammatical number and a count/mass distinction, encoding of specificity and case marking etc.), as well as broader properties (e.g. word order, tense and aspect marking, information structure etc.). Further qualitative empirical research is needed to reveal what underpins these broader typological effects. However, the present study has demonstrated that such broader typological effects are indeed part of the L1 influence on L2. These effects might also, partially, explain why many advanced learners will have difficulty surpassing the 90% accuracy threshold in their use of articles (see Murakami and Alexopoulou, 2016; Lardiere, 2008), as they suggest that the acquisition of one morpheme depends on a broader range of features.

VII Conclusions

Our study provides evidence that L1 influence arises from the combination of item level L1–L2 differences, e.g. in the availability of an article, as well as broader properties of the L1 grammar. It also shows that L1 influences overall accuracy as well as the rate of accuracy improvement at different proficiency levels. It also provides support for the generative typological distinction between DP and NP languages (Bošković, 2008), indicating that it is the availability of a definite article and a DP that predicts the use of bare nominals in the L1 and, accordingly, article omission in L2 English. Our study also highlights the use of big data for SLA research, which has enabled the study of 11 typologically diverse languages, a sample of languages that is much broader than what is available to the typical lab-based or field-work SLA studies. In this way, corpus studies based on data from educational institutions can complement the standard experimental developmental SLA studies.

At the same time, our study underscores the need for further empirical research, particularly qualitative studies, to gain a more comprehensive understanding of how linguistic distance affects the acquisition of individual L2 items. Future research should pinpoint which specific properties influence acquisition and, crucially, how similarity and transfer might lead to negative transfer, potentially hindering rather than facilitating acquisition. An example of this is the transfer of generic interpretations of the definite article from Romance languages to L2 English (see Ionin and Montrul, 2010). To fully grasp the nature of L1 influence in relation to the typological properties of L1 and L2, it is crucial to synthesize insights from targeted developmental studies with broader patterns revealed by large-scale corpus analyses.

Supplemental Material

sj-docx-1-slr-10.1177_02676583251395876 – Supplemental material for The influence of L1 typology on the acquisition of the L2 English article: A large-scale corpus study

Supplemental material, sj-docx-1-slr-10.1177_02676583251395876 for The influence of L1 typology on the acquisition of the L2 English article: A large-scale corpus study by Doğuş Öksüz, Theodora Alexopoulou, Kateryna Derkach and Ianthi Maria Tsimpli in Second Language Research

Footnotes

Acknowledgements

The authors would like to thank Thomas Hammond for his support in manual error annotation.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project is funded by the Leverhulme Trust Research Project Grant ‘Linguistic Typology and Learnability in Second Language’ (RPG-2018-123) and a grant by EF Education First supporting the EF Lab on Applied Language Learning.

Open Badges Statement

Data Availability Statement

No data were collected from participants for this article; we analysed pre-existing data from the EF Cambridge Open Language Database.


