Abstract

The Canadian English Language Proficiency Index Program (CELPIP) is a computer-delivered test for English language proficiency, primarily used for Canadian immigration purposes. This review begins by contextualizing the test’s use as an immigration gatekeeping instrument, followed by an overview of its underlying construct and the four test components: listening, reading, writing, and speaking. We then appraise the test in terms of its accessibility, reliability, validity, authenticity, and impact. While we appreciate the “Canadian-ness” of the test, the user-friendly computer-based test delivery, and the accessible approach to sharing scoring criteria, we also identify several shortcomings regarding transparency in scoring, attention to interactional competence, and attention to research on test impact. We close with a brief commentary on the use of such tests for selecting and controlling immigrants.

Introduction

The Canadian English Language Proficiency Index Program (CELPIP) is a computer-delivered English test designed to assess the functional language proficiency required for communications in Canada in social, educational, and workplace settings. It was developed by Paragon Testing Enterprises (hereafter “Paragon”), originally established as a for-profit affiliate of the University of British Columbia. In 2021, Paragon was acquired by the Canadian subsidiary of Prometric, a U.S. company.

CELPIP is available in more than 140 designated centers worldwide. As stated on the Paragon website, CELPIP tests are accepted by several government immigration programs, professional organizations, colleges, universities, and employers. According to the CELPIP Annual Report of 2022 Test Takers (Prometric Canada, 2023a), 91.4% of the test takers took the CELPIP for Canadian immigration purposes. Paragon has a service agreement with the Government of Canada (specifically, Immigration, Refugees and Citizenship Canada [IRCC]) to deliver arm’s length third-party language testing services for economic immigration and citizenship. In addition to the CELPIP, at the time of writing only one other English test is being used for this purpose: the IELTS (General Training). Two French tests are currently designated: TEF Canada (Test d’évaluation de français) and TCF Canada (Test de connaissance du français).

To preface this review, it is important to contextualize the test’s use as a gatekeeping instrument within Canadian immigration policy. We then proceed to the body of the review, which includes a description of the test incorporating critical comments, followed by a more in-depth appraisal organized by the key assessment qualities of accessibility, reliability, validity, authenticity, and impact.

This review has been informed by official and publicly available information from Paragon and Prometric Canada as well as our experiences taking the two free practice tests that are currently available on the website. According to communications with Prometric Canada, these CELPIP practice tests are retired versions of CELPIP tests, subject to the same pre-testing and item performance analysis process as operational CELPIP versions. In addition, the first author registered for and took one official test version during the preparation of this review. Therefore, we bring both a language assessment specialist perspective and a test taker perspective to this review.

Context of test use

Since 1971, the official federal government position on immigration has been informed by the Canadian Multiculturalism Policy (Trudeau, 1971) and the Canadian Multiculturalism Act (1988). These policies explicitly promote the preservation of immigrant communities’ cultures and languages (Brosseau & Dewing, 2018). Central to this government position is that the immigration process does not discriminate based on race, class, ethnicity, gender, religion, or other personal factors (Dauvergne, 2016).

There are several immigration pathways into Canada (leaving aside discussions of Quebec, which has a separate system), but for those without family ties or refugee status, skilled immigration through the points-based Comprehensive Ranking System (CRS) is the primary option. The point system (originally introduced in 1967) gives potential immigrants a score based on their level of education, age, work experience, and official language proficiency levels. This point system carries the implication of preferences for specific groups, namely, high-skilled, employable, and wealthy applicants, suggesting a mismatch between liberal multiculturalism policies and more neoliberal commercial aims (Haque, 2017).

According to the Immigration and Refugee Protection Regulations (2002), a skilled worker must demonstrate their abilities in either English or French to fulfill the language requirement, through one of the tests mentioned earlier. These test scores are aligned to the Canadian Language Benchmarks (CLB) framework of reference for English or its French equivalent, the Niveaux de compétence linguistique canadiens (NCLC). The minimum language level to be placed in the candidate pool for consideration by IRCC varies from CLB 4 for reading and writing plus CLB 5 for speaking and listening, to a CLB 7 (CELPIP level 7) in all four language areas (speaking, listening, reading, and writing). In addition, it is possible to get additional points by demonstrating language abilities over CLB 5 with an additional test in the other official language (IRCC, 2022). There are not many options for applicants to increase their points in the short term; therefore, there is significant incentive and competitive pressure for applicants to take language tests multiple times to boost their total scores and rank as high as possible in the candidate pool.

Test overview

CELPIP underlying construct

CELPIP comprises two tests. The CELPIP-General test assesses test takers’ English listening, reading, writing, and speaking skills and is officially designated by IRCC for permanent residence applications (the first step toward citizenship). The CELPIP-General LS test assesses test takers’ English listening and speaking skills only (but shares the same structure as CELPIP-General). CELPIP-General LS is officially designated for citizenship applications in cases where a language test was not provided at the permanent resident stage, and for some provincial and national professional designations. CELPIP-General takes about 3 hours to complete, while CELPIP-General LS takes only about 1 hour. In this review, we focus on CELPIP-General.

Administration and reporting

CELPIP-General is delivered in person at the computer stations of Paragon test centers. All participants start and complete the test at roughly the same time and complete the test in one sitting.

Test takers can access their score report online in 4 to 5 calendar days. Test takers can also apply for a re-evaluation of some or all sections of the test within 6 months of the test date. The score report is a brief one-page document that indicates the test taker’s personal information, test information, and CELPIP levels for each section.

Test components

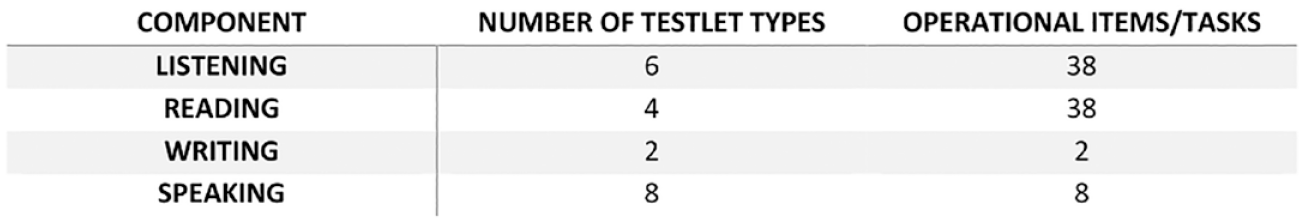

The CELPIP Test Review Report I (Paragon Testing Enterprises, 2021) provides an overview of the structure of CELPIP-General (see Figure 1). In addition to operational items, every test administration includes a number of non-scored items to assist with ongoing content development and validation.

Figure 1. Structure of CELPIP-General.

Listening

The Listening section of the test is about 50 minutes long, with six different audio or video tasks. The tasks include, for example, listening to daily life conversations, news items, or discussions. These are divided into shorter sections of less than a minute played only once followed by one or more four-option multiple-choice questions. The response options are written but the prompt for each item is only provided orally once. The test taker cannot review questions before hearing the recordings. The recordings that we listened to are generally slower than authentic speech, with few fillers, with exceptionally clear pronunciation and slightly exaggerated intonation. The discussions are slightly faster and more authentic than the other tasks, with occasional pauses, fillers, and false starts, but they still have a scripted feel. Here is an example of the beginning of a conversation in Practice Test A (Paragon Testing Enterprises, 2022a):

Hi. Thanks for coming to Mattress and Bedding World. How may I help you?

Hi! I’d like to buy a set of pillows.

Sometimes recordings are accompanied by pictures, which represent the context of the recording but do not provide information to help answer the questions. As observed in the Reading section (see below), the questions appear to tap into a relatively varied set of subskills, from listening for details to determining the main idea to making inferences.

Reading

The Reading section lasts about 55 minutes, with four texts of about 10 minutes each plus time for instructions and transitions. The texts range from everyday practical documents like diagrams and schedules with visual support to correspondence, journalistic pieces, and quasi-academic texts (the practice test described interesting scientific facts about dragonflies). The longest text in this section was under 400 words.

As with the Listening section, the navigation for the Reading section did not present difficulties and included a short familiarization question before beginning. The texts are presented on the left and the questions on the right, with a scrolling feature to keep everything on the same page and a timer in the corner to help with time management for each text.

Writing

The Writing section consists of two tasks of 150 to 200 words each. This section is designed to last 53 minutes, divided roughly in half between the two tasks. The first task is similar to the first writing task of the IELTS General, except it is called an email instead of a letter. The second task consists of responding to a one-question survey followed by a justification of the response. Notably, in addition to a word counter, the CELPIP-General Writing section also has a spell-check function.

Speaking

The last section in the test is Speaking, which lasts about 20 minutes. It has eight monologic tasks where test takers record their responses, each lasting from 60 to 90 seconds. Tasks include describing pictures, giving advice, expressing opinions, and making difficult decisions. Before each task, test takers are given a practice question and preparation time before speaking. There is a countdown timer function in the speaking test that may help the test takers feel more in control of their time. However, further research is needed to examine how the countdown function may influence test takers’ behaviors or performance. Please refer to practice tests A and B on the CELPIP website for more detailed information on listening, reading, writing, and speaking (Paragon Testing Enterprises, 2022a, 2022b).

Appraisal of CELPIP-General

Accessibility

There is some evidence of attention to the accessibility of CELPIP-General. According to the test fairness framework introduced by Kunnan (2004), a test should provide test takers with ample opportunity to learn the test content and prepare, ensure it is financially affordable, be accessible in terms of distance, accommodate physical and learning disabilities appropriately, and ensure that test takers are well-informed about the test-taking equipment and procedures. In the case of CELPIP-General, prospective test takers have access to free sample tests, videos, online information sessions, interactive lessons, webinars, workshops as well as paid study materials (ranging from $20 to $225 CAD). A detailed description of test day information is also available on the website including documents to bring and test day rules and procedures. The CELPIP-General currently costs $280 CAD in Canada, whereas the IELTS General costs $345 CAD (although international pricing varies). Currently, the CELPIP-General is available in 140 test centers worldwide, 97 of which are in Canada. This means a number of test takers may face the inconvenience and additional cost of traveling to another city or even another country to take the test. However, one advantage of the CELPIP-General is that it can be completed in one sitting. As to accommodations, a range of options are available for candidates with disabilities, such as private test rooms, additional time (25%, 50%, and 100%), specialized rating for speech issues, test forms for the visually impaired, use of assisted listening devices, reader, and scribe. As previously mentioned, apart from the word count feature, the Writing section of CELPIP-General incorporates a spell-check function for all test takers, potentially resulting in fewer glaring mistakes when compared to tests without a spell-check function. This inclusion can be perceived as an effort to promote fairness by accommodating individuals with conditions such as dysgraphia who may require spell-check assistance.

According to Paragon’s website, the number of CELPIP test centers in overseas locations is on the rise. We surmise that this access to international growth was the motivation for Paragon’s recent decision to merge with a US-based conglomerate. This also means that there is no longer a Canadian-owned test for Canadian immigration and citizenship purposes.

Reliability

Internal consistency for Listening and Reading sections

The Listening and Reading sections of CELPIP-General are assessed by selected-response questions and are machine-scored. Until recently, there has been limited publicly available evidence pertaining to the reliability of CELPIP-General, with the exception of descriptive statistics in test review reports shared by Paragon and Prometric published annually since 2018. To assess internal consistency, Cronbach’s alpha was reported for the Listening (.89) and Reading (.88) sections administered in 2022 (Prometric Canada, 2023a).
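For readers less familiar with the statistic, the following minimal Python sketch shows how Cronbach’s alpha is conventionally computed from a matrix of dichotomously scored item responses. The function name `cronbach_alpha` and the toy response data are illustrative assumptions; they are not drawn from CELPIP data and do not represent the procedure actually used by Prometric Canada.

```python
from statistics import pvariance


def cronbach_alpha(scores):
    """Return Cronbach's alpha for a list of test takers' item scores.

    `scores` is a list of rows, one per test taker; each row holds that
    person's score on every item (here 1 = correct, 0 = incorrect).
    """
    n_items = len(scores[0])
    if n_items < 2:
        raise ValueError("alpha requires at least two items")
    # Variance of each item across test takers.
    item_variances = [
        pvariance([row[i] for row in scores]) for i in range(n_items)
    ]
    # Variance of the total (sum) scores across test takers.
    total_variance = pvariance([sum(row) for row in scores])
    return (n_items / (n_items - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical responses: five test takers, four items.
toy_responses = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [0, 0, 0, 0],
]
alpha = cronbach_alpha(toy_responses)
print(round(alpha, 2))
```

Alpha rises as items covary more strongly relative to their individual variances, which is why values near .90, as reported for the 2022 Listening and Reading sections, are conventionally read as indicating high internal consistency.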

Rater agreement for the Writing and Speaking sections

The Writing and Speaking sections are rated with in-house rubrics through an online rating system by a team of raters in Canada. Paragon provides a rater training program that includes initial and ongoing training, monitoring, and feedback. The Writing section employs double rating and “benchmark rating,” with a third rater providing the final score if the initial raters disagree. Test takers’ speaking performances are evaluated by three raters. If discrepancies arise, two additional raters re-evaluate the response. CELPIP 2022 rater agreement was 90.9% for writing and 90.0% for speaking, indicating a high consistency among raters (Prometric Canada, 2023a).
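The agreement figures above are percentages, but the information reviewed here does not specify whether they refer to exact or adjacent agreement. As a rough illustration only, the Python sketch below computes both for two raters; the function names and the ratings are hypothetical and do not reproduce the calculation used in the annual report.

```python
def exact_agreement(rater_a, rater_b):
    """Percentage of responses on which two raters assign the same level."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("rating lists must be non-empty and equally long")
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return 100.0 * matches / len(rater_a)


def adjacent_agreement(rater_a, rater_b, tolerance=1):
    """Percentage of responses on which ratings differ by at most `tolerance`."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("rating lists must be non-empty and equally long")
    close = sum(abs(a - b) <= tolerance for a, b in zip(rater_a, rater_b))
    return 100.0 * close / len(rater_a)


# Hypothetical levels assigned by two raters to the same ten responses.
ratings_a = [7, 8, 9, 10, 6, 7, 8, 9, 5, 11]
ratings_b = [7, 8, 9, 9, 6, 7, 8, 9, 5, 11]

print(exact_agreement(ratings_a, ratings_b))
print(adjacent_agreement(ratings_a, ratings_b))
```

Because adjacent agreement is always at least as high as exact agreement, knowing which definition underlies a reported figure matters for interpreting it.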

Although the scoring rubric is proprietary and not publicly available, some interpretations of the writing and speaking scale dimensions can be inferred by examining the performance standards and level descriptors available on their website (Prometric Canada, 2023b). Parallel to the CLB, the CELPIP descriptors describe typical speaking situations (e.g., presenting information to authority figures in the workplace). The performance standards also specify criteria related to each performance level, including content/coherence, vocabulary, task fulfillment, and less typically, “readability” for writing and “listenability” for speaking. “Readability” includes a focus on accuracy in terms of its effect on comprehensibility, in addition to grammatical and syntactic complexity and variety, paragraphing, and cohesive elements. “Listenability” is the parallel criterion in speaking, which considers accuracy, complexity, variety, and fluency, but it appears to overlap with other criteria. For instance, in the first writing task drafting an email, if the test taker does not produce an email format, it is unclear whether test takers would be penalized based on task fulfillment, “readability,” or both.

An additional resource we encountered on the official website is the CELPIP score comparison chart (Paragon Testing Enterprises, 2022c). This document focuses on the Writing and Speaking sections, encompassing level descriptors, sample responses, and response analyses for each level. In comparison to the performance standards (Prometric Canada, 2023b) document, which solely outlines the rating criteria for each level, the level descriptors within the score comparison chart provide detailed information to elucidate the anticipated performance standards, which is likely to be more accessible and comprehensible for test takers. Notably, we believe the inclusion of sample responses is useful, as they showcase authentic test taker performances across all 12 proficiency levels, all engaged in the same tasks. For example, in the Speaking section, a comprehensive range of 12-level performances is presented for two tasks: giving advice and describing pictures. We believe that these samples provide prospective test takers with an idea of what to anticipate while also gauging their own proficiency along the 12 CELPIP-General levels by comparing their own abilities to the examples. Response analyses are also available for each sample response with details, but more could be added to explain how evaluation criteria are applied.

Validity

Validation research on CELPIP-General

Paragon has been involved in several research and development initiatives, evidenced through in-house research reports, a 2015 research partnership with the University of British Columbia (including a Paragon UBC Professorship in Psychometrics and Measurement), and an awards and grants program for professional researchers and graduate students.

The CELPIP-General was developed with reference to the same underlying theoretical orientation as the CLB, Bachman and Palmer’s (1996, 2010) theoretical model of communicative language ability (Centre for Canadian Language Benchmarks [CCLB], 2012). Validation research on CELPIP-General is being carried out to collect evidence to support the intended test use (English proficiency required for successful communication in social, educational, and workplace contexts in Canada as defined by IRCC). As per the CELPIP Test Review Report I (Paragon Testing Enterprises, 2021), Doe et al. (2019) investigated the language use and communication challenges faced by 23 Canadian new immigrants in entry-level workplace settings. The study identified the essential competencies related to workplace communication and aligned them with the CLB and the CELPIP-General LS (listening and speaking) levels of test performance.

One notable research program has involved the collection of response process-based validity evidence (Zumbo, 2007; Zumbo & Chan, 2014). Process-based validity has been identified as an important source of score validity (American Educational Research Association [AERA] et al., 2014; Messick, 1995; Zumbo & Hubley, 2017). For instance, work by Wu et al. (2018) suggests that test takers often employ processes and strategies associated with comprehending meanings, such as summarizing key information. Also, Chen et al. (2020) found that the variation in test takers’ scores can be predicted by whether test takers used construct-relevant strategies.

There are several research areas that could be further explored to offer additional evidence that CELPIP-General is appropriate for its intended use. These additional possibilities are summarized below.

Lack of assessment of interactional competence

A broad definition of interactional competence is the ability to collaboratively engage and co-construct purposeful and meaningful interactions, taking into account sociocultural and pragmatic dimensions of the speech context (Galaczi & Taylor, 2018). As previously mentioned, the purpose of CELPIP-General is to evaluate test takers’ English abilities in everyday situations, which inevitably encompasses interactional competence as a part of the target language use domain. Nevertheless, interactional competence is only captured in a limited way in CELPIP-General. The writing and speaking topics may somewhat resemble real-life language use, such as replying to an email, giving advice, comparing, persuading, or expressing opinions, but these tasks do not involve any interaction based on language comprehension as they only require test takers to respond to a single question or a picture. Particularly in speaking tasks, test taker abilities such as topic management, turn management, interactive listening, visual behavior, and so on are neglected (see Galaczi & Taylor, 2018). In fact, the current approach of all computer-mediated speaking assessments can be viewed as essentially monologic and unidirectional (Iwashita et al., 2021). This representation of speaking may help reduce costs for test takers but must be acknowledged as a constraint on the construct representation of speaking in this test.

This lack of attention to interactional competence can be explained by the fact that both the CLB and the CELPIP are grounded in the model of communicative language ability originally described by Bachman (1990) and subsequently expanded upon by Bachman and Palmer (1996, 2010), which does not contain interactional competence.

Lack of assessment at advanced levels

From our experience taking the practice tests, the topics seem to represent a variety of typical daily interactions and activities, in line with the description of the underlying construct as “functional” English. However, the test assigns scores corresponding to Levels 10 and above on the CLB, indicative of sophisticated communication in professional settings. We did not find sufficient evidence suggesting substantial content coverage at these advanced levels of performance. From a construct coverage perspective, it would be preferable to limit the score range of CELPIP-General to that best represented by the test content. However, this would go against IRCC’s requirements for language tests to align to CLB levels all the way to CLB 12. This is one example of how political considerations may conflict with adherence to best testing practices.

Authenticity

The “Canadian-ness” of CELPIP-General

The Paragon website states that CELPIP-General is a test of “general English,” defined as the “ability to function. . .in a variety of everyday situations, such as communicating with co-workers and superiors in the workplace, interacting with friends, understanding newscasts, and interpreting and responding to written materials.” Promotional materials emphasize how CELPIP-General represents authentic communication situations in Canada. The CELPIP website includes testimonies from test takers who appreciate the real-life relatable nature of the test, with situations typical to what they experience every day in Canada.

Given this description of the construct, when we took the practice test, we explored not only the general or functional nature of the test content, but also its “Canadian-ness,” in terms of its cultural and/or linguistic elements. We looked and listened specifically for distinct markers of the Canadian language, including spelling, lexis, and pronunciation. We did not notice any uses of words that would have marked Canadian as opposed to American spelling (e.g., realize, color) except in the listening transcripts (which utilized American spellings and are not visible to test takers). In the Writing section, both British/Canadian and American spellings are accepted. The content was distinctly Canadian in nature (e.g., with references to Canadian places, political parties, etc.) but local cultural knowledge would not be necessary to understand the task demands—which is reasonable given the international test-taking population. The accents of speakers are also recognizably Canadian, with evidence of some typical elements such as the cot-caught merger (which also exists in some American varieties of English) and Canadian Raising (the raising of vowels in the diphthongs /ay/ and /aw/ before voiceless consonants). One especially Canadian element we identified was “eh” as an all-purpose tag question (e.g., “That was good, eh?” “My directions didn’t help, eh?”).

The frequency of audio playback in the Listening section

The decision to use single-play is not justified by the test development team. The arguments supporting either single or double play on theoretical grounds are generally inconclusive (Jones, 2013). For instance, one argument for single play is that people typically hear an utterance only once in real life; while people can ask for repetition during conversation, exact repetition is rare (Buck, 2001). On the other hand, people can generally replay online materials multiple times (Murray, 2007; Sun & Chen, 2016). As highlighted by Holzknecht and Harding (2023), “the decision to play a listening text once or twice in a listening assessment setting is not trivial.” The decision should be made with a balance between assessment purpose and “the prevalence of repeated listening in the target language use (TLU) domain” (p. 472). As a large-scale proficiency test, the ground for the decision to use single play, and whether the anxiety induced by single play is balanced by construct considerations, warrants examination. There have been other discussions on the frequency of audio playback in the Listening section. For instance, playing the audio a second or even more times may significantly change the task difficulty (Brindley & Slatyer, 2002) and item discrimination (Borroughs, 2002), and its effects may vary depending on the test taker’s ability (Chang & Read, 2006).

Our intention is not to criticize the choice of single-play in the Listening section of CELPIP-General. Instead, our goal is to elucidate the intricacies inherent in this decision-making process and advocate for additional information to substantiate such choices.

Impact

A critical consideration for test developers revolves around the potential educational, social, and political implications of their assessments (Cheng, 2014; Hamp-Lyons, 1997; Kunnan, 2004). CELPIP-General is primarily used as a gatekeeping instrument within Canadian immigration policy and is a crucial determinant of whether test takers can immigrate to Canada and establish a new life. Given the stakes, it is surprising that neither Paragon’s nor Prometric’s annual reports discuss the broader impact of CELPIP.

Only two washback studies have been conducted by Paragon. In the first, Zumbo (2023) focused on the effectiveness of the test preparation program, adopting a Bayesian statistical framework to estimate the magnitude and associated uncertainty of washback effects resulting from test preparation. The second study evaluated consequential validity by assessing how accurately CELPIP scores classify test takers into the proficiency levels described by the CLB (Chen et al., 2021). Assigning a lower CELPIP score to a candidate whose true proficiency is at or above CLB 7 denies both the candidate and their family a fair opportunity for immigration. Conversely, scoring a candidate higher than they should be scored leads to unfairness for other eligible candidates and necessitates additional resources to aid the individual in settling in Canada. The reported results suggest that CELPIP test scores demonstrate high classification accuracy and exhibit low rates of false-negative and false-positive outcomes at critical proficiency levels.
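To make the notions of classification accuracy, false negatives, and false positives concrete, the following Python sketch tallies them at a single cut-off. It is a simplified illustration under strong assumptions: the “true” levels are treated as known (in practice they are unobservable and must be estimated), the data are invented, and the function `classification_summary` is hypothetical rather than the method of Chen et al. (2021).

```python
def classification_summary(true_levels, assigned_levels, cut=7):
    """Tally pass/fail classification outcomes at a given cut-off level.

    A false negative is a test taker whose true level meets the cut but
    whose assigned level does not; a false positive is the reverse.
    """
    if len(true_levels) != len(assigned_levels) or not true_levels:
        raise ValueError("level lists must be non-empty and equally long")
    n = len(true_levels)
    correct = false_pos = false_neg = 0
    for true, assigned in zip(true_levels, assigned_levels):
        truly_meets = true >= cut
        classified_meets = assigned >= cut
        if truly_meets == classified_meets:
            correct += 1
        elif classified_meets:
            false_pos += 1
        else:
            false_neg += 1
    return {
        "accuracy": correct / n,
        "false_positive_rate": false_pos / n,
        "false_negative_rate": false_neg / n,
    }


# Hypothetical true and assigned levels for ten test takers.
true_clb = [6, 7, 7, 8, 5, 9, 7, 6, 10, 7]
assigned = [6, 7, 6, 8, 5, 9, 7, 7, 10, 7]

summary = classification_summary(true_clb, assigned, cut=7)
print(summary)
```

In this toy example, one test taker at CLB 7 is placed below the cut (a false negative) and one at CLB 6 is placed at it (a false positive), which are the two error types whose consequences are described above.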

Beyond these two studies, there appears to be a dearth of washback studies on CELPIP from the perspectives of test takers, policymakers, and other stakeholders. Such perspectives are essential, considering the impact of these tests on the test candidates and the potential invisible borders or walls that these tests may inadvertently create (Polanco & Zell, 2017; Winter, 2018).

Final thoughts

We have appreciated the opportunity to conduct an in-depth analysis of a test that can play such a large role in the journeys of migrants to Canada. There is a lot to appreciate about CELPIP-General, including the variety of subskills elicited by the tasks, the authenticity of the language use situations represented in these tasks, the user-friendly computer-based test delivery, and the extensive support materials offered for preparation. We appreciate the accessible approach to sharing scoring criteria and rating processes with test takers. For a relatively new test on the scene, there is a commitment shown to the importance of conducting regular research and engaging with the international language testing community.

CELPIP-General is designed to evaluate the functional language proficiency required for effective communication in Canada specifically. The most distinctive characteristic is the “Canadian-ness” of the test, evident in the content chosen and the accents in the Listening section. Meanwhile, some aspects need attention, such as the lack of transparency of the scoring procedure; the possibility of introducing elements related to interactional competence and to advanced levels of test takers; and the insufficient attention given to research on test impact on various stakeholders.

Comparisons to the other major test used for this purpose, the IELTS General Training, are inevitable. As mentioned earlier, the benefits of CELPIP-General include the cost, the fact that it can be completed in one sitting, and the Canadian content and accents. Benefits of IELTS include its long-established program of research, its wider availability for test takers, and its use of an in-person interview task. These comparisons are the subject of much online debate among immigration applicants. During our investigation of CELPIP-General, we came across a number of videos and webpages created by test takers who shared their experiences and provided tips for prospective candidates. These videos and webpages often involve comparisons between CELPIP-General and IELTS. We wonder whether testing companies take test takers’ perceptions of test difficulty into account in their decision making. There is great potential in future validation research to incorporate test takers’ perspectives.

A larger question that goes beyond an examination of this single test is whether the cut-off scores of CELPIP-General for each immigration path, and their equivalent levels on the CLB, are indeed appropriate as a representation of the minimum language ability required for an immigrant to be successful in society, which is the stated construct according to IRCC. If so, then additional points should not be given in the CRS system for language test scores higher than the established cut-offs. There would be no ethical justification for encouraging potential immigrants, many with limited resources, to take any language test multiple times in an attempt to get more points in an immigration system even though they have already met the language requirements. This could be seen as a form of language test misuse with far-reaching consequences. We therefore suggest that future research be conducted to investigate these relationships between test scores and language policies in immigration, which impact so many lives.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.