Abstract

Test preparation has garnered considerable attention in second language (L2) education due to the significant implications that successful performance on a language test may have for academic advancement, future career opportunities, and immigration prospects. Meanwhile, an overemphasis on test preparation has been criticized for encouraging the cultivation of construct-irrelevant test-taking strategies at the expense of developing general language proficiency. To systematically explore how test preparation has been investigated in the literature, we conducted a scoping review of 66 studies on L2 test preparation. Specifically, this study examined the key characteristics of publications on test preparation, the main themes explored, the study and participant characteristics, as well as the essential aspects of their research methodologies. The results of this review revealed various trends in the literature on L2 test preparation, such as the exclusive focus on English as the target language, the lack of diversity in stakeholders as participants, the dominance of international language tests, and the paucity of experimental studies that utilize advanced statistical techniques. In addition to interpreting the results of our analysis, we discuss the implications of this scoping review and outline several directions for future research on test preparation.

Keywords

Introduction

Overview and definitions

Language tests are widely used for a broad range of purposes around the globe. Driven by expanding globalization and the internationalization of education, there has been a steady increase in the number of international students, migrant workers, and people seeking permanent immigration who are required to demonstrate their proficiency in the target language through standardized language tests (Yu & Green, 2021). Successful performance on such tests depends to a large degree on the type, amount, and quality of test preparation activities and practices (Knoch et al., 2020). As a result, test preparation plays an essential role in language education and, in the case of some Asian countries, has become “a massive enterprise” and “a powerful industry” (Ross, 2008, p. 7). In South Korea, for example, up to 2% of the gross national product is reportedly spent on learning English and preparing for English language tests (Hincks, 2015).

In the literature, test preparation is also referred to as coaching (Hu & Trenkic, 2021; Messick, 1981), teaching to the test (Menken, 2006; Popham, 2001), and test-wiseness or test-taking strategy training (Yu & Green, 2021). J. Ma (2017) argued that the term “test preparation” is more inclusive and neutral because it can be applied to various practices both in and outside of class. Furthermore, unlike the terms “coaching” and “teaching to the test,” which are often associated with cramming, test-deviousness strategies (Cohen, 2021), and other questionable practices aimed at inflating test scores, “test preparation” carries no negative connotations.

Recognized as a complex, multidimensional construct, test preparation is also considered a contentious phenomenon (Yu et al., 2017; Yu & Green, 2021) and has been dubbed “a double-edged sword” (Cheng & Doe, 2013, p. 19). On the one hand, pedagogically sound test preparation practices can help second language (L2) learners increase their overall language proficiency. On the other hand, improper practices can encourage the use of test-deviousness strategies and other tricks to artificially boost L2 learners’ test scores, especially in high-stakes testing contexts. Crocker (2006), for instance, claims that “[n]o activity in educational assessment raises more instructional, ethical, and validity issues than preparation for large-scale, high-stakes tests” (p. 115). In essence, test preparation remains the subject of ongoing debate about its impact on the development of language proficiency, the validity of test scores, instructional practices, and equity and fairness in language assessment (Gebril & Eid, 2017; J. Ma, 2017; Yu et al., 2017; Yu & Green, 2021).

Types of test preparation

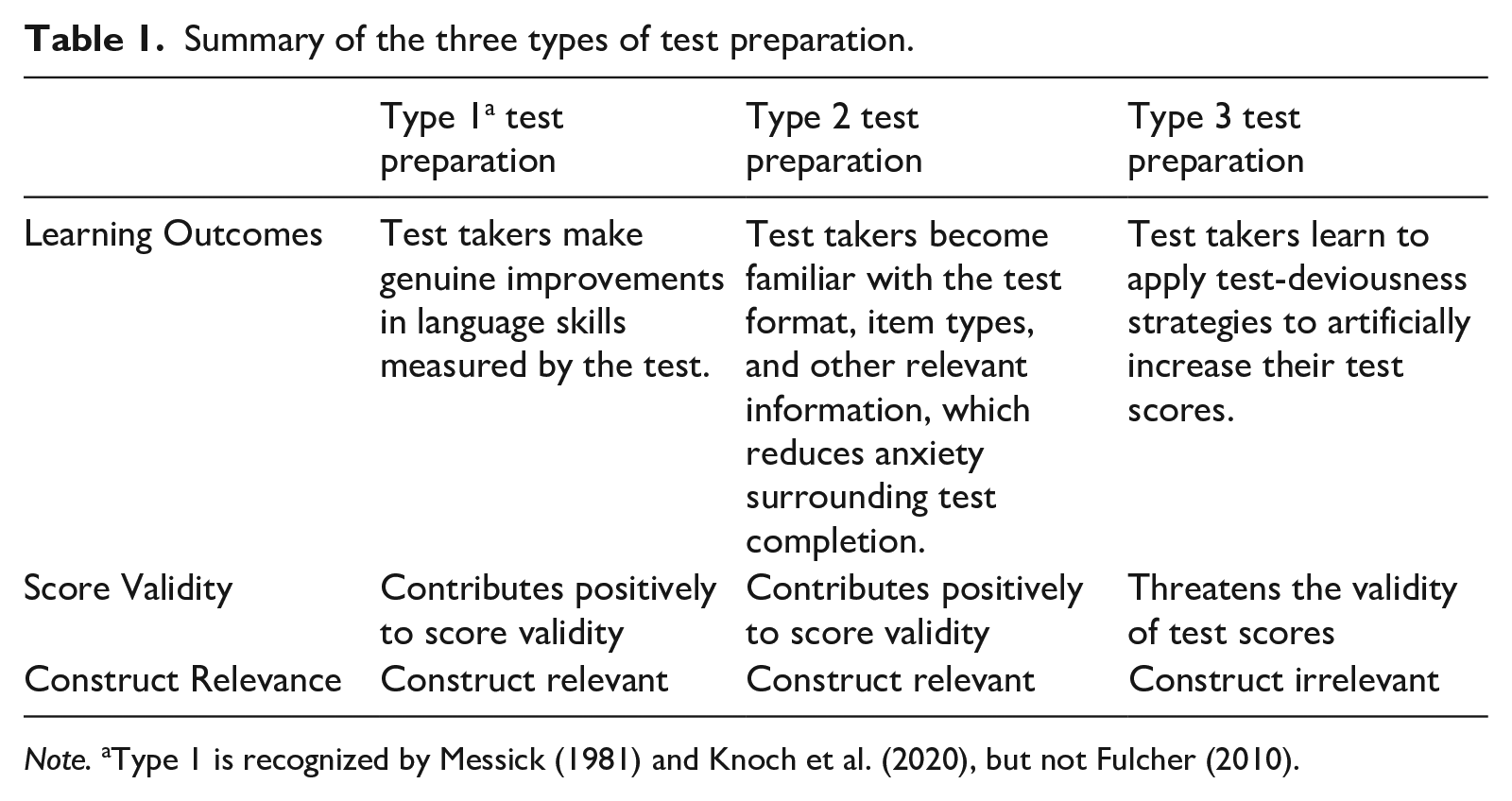

Messick (1981) distinguishes three main types of test preparation (which he refers to as coaching) according to their outcomes: test preparation leading to “genuine improvements” in the measured skills, test preparation resulting in “enhanced test-taking sophistication,” and test preparation focusing on the development of “heightened test-taking artifice” (p. 1). The first type of test preparation develops the language skill(s) measured by the test, thus contributing to the validity of scores. The second type aims to familiarize test takers with the test format and reduce their anxiety, which can also be viewed as having a positive effect on the validity of test scores. Finally, the third type emphasizes the development of test-deviousness strategies (such as using various tactics to guess the correct answer) to artificially inflate test takers’ scores, which poses a clear threat to the validity of such scores. In sum, while the first two types of test preparation can be viewed as construct-relevant, the last type is a source of construct-irrelevant variance. Knoch et al. (2020) refer to these three types of test preparation as Type 1, Type 2, and Type 3, respectively.

A similar classification of test preparation types is provided by Fulcher (2010, p. 288). According to Fulcher, two main types of test preparation are available to test takers. The first type introduces L2 learners to the test, its format, item types, and other information that learners may need to understand the structure of the test and the procedure for completing it. This type appears to correspond to Type 2 in Messick’s (1981) classification. Fulcher’s (2010) second type of test preparation aims to help L2 learners achieve higher scores by teaching them how to apply test-deviousness strategies (Cohen, 2021) rather than by supporting the development of their language skills (similar to Type 3 in Messick, 1981). Unlike the first type, the second type of test preparation has been criticized for incentivizing “shadow education” (i.e., private tutoring; Yung, 2015) and promoting unethical practices that introduce construct-irrelevant variance (Haladyna et al., 1991; Hamp-Lyons, 1998).

Overall, the classifications above largely overlap: Messick (1981) and Knoch et al. (2020) distinguish three types of test preparation, whereas Fulcher (2010) recognizes only two of them, corresponding to Types 2 and 3 (see Table 1 for a summary).

Summary of the three types of test preparation.

Note. ᵃType 1 is recognized by Messick (1981) and Knoch et al. (2020), but not Fulcher (2010).

Research on test preparation

Test preparation in L2 contexts is commonly examined as part of washback research (Alderson & Hamp-Lyons, 1996; Allen, 2016a, 2016b; Di Gennaro, 2017; Green, 2007; Tsang & Isaacs, 2022; Wall & Alderson, 1993). Defined as the impact of a test on teaching and learning (Green, 2007), the concept of washback (or backwash) in language testing is inextricably tied to test preparation. Specifically, various aspects of test preparation, including the content, learning practices, teaching methods, as well as learners’ and teachers’ cognitive (e.g., beliefs) and affective (e.g., motivation) processes, are significantly shaped and affected by the test that L2 learners prepare to take (Cheng et al., 2015; Green, 2007; J. Ma, 2017).

As J. Ma (2017) and Yu and Green (2021) suggest, there are two main strands of research on test preparation. The first strand comprises studies investigating the processes that underlie test preparation, such as the types of test preparation practices that prospective test takers engage in both in class and outside of class (Knoch et al., 2020; J. Ma, 2017), the pedagogical approaches used by teachers to help learners with test preparation (Clark & Yu, 2022a; Irvine-Niakaris & Kiely, 2015), characteristics of test preparation materials (Wall & Horák, 2011), and participants’ attitudes and perceptions related to test preparation (Xie, 2015; Zhan & Wan, 2016). The second strand concerns research on the effectiveness and products of test preparation. In this strand, researchers have examined how different test preparation practices affect test scores (Lertcharoenwanich, 2022) and the development of language proficiency (Minakova, 2020).

Empirical evidence suggests that test preparation can be affected by a broad range of variables, including teacher-related and context-specific ones (see Gebril & Eid, 2017; Green, 2013). Test preparation has also been shown to have a profound impact on both learning and teaching (Stoneman, 2006), and this impact can be positive or negative (Yu & Green, 2021). While the importance of test preparation is widely recognized, the ongoing debate about its impact on curriculum and instruction, together with the variety and complexity of the factors involved, warrants a more in-depth examination of test preparation practices. Furthermore, despite the large number of studies in this area, concerns have been raised about the methodological limitations of existing research on test preparation (e.g., Xie, 2015), including a notable scarcity of quantitative approaches and a heavy emphasis on observational and perceptual studies of participants’ beliefs, perceptions, and preferences regarding different aspects of test preparation, including its effectiveness and impact. In addition, most studies appear to have explored test preparation for high-stakes English language proficiency tests such as IELTS and TOEFL. These concerns and observations point to the need for a comprehensive, evidence-based synthesis of published research on test preparation that also scrutinizes the methodological aspects of existing studies. Such a review would help clarify the extent, range, and nature of research on test preparation and identify overarching trends and patterns in the methodologies used across studies. Because, to our knowledge, no systematic review of the literature on test preparation in L2 contexts has been conducted to date, we conducted a scoping review, a type of systematic review that “summarizes substantive and methodological features of primary studies on a particular topic” (Chong & Reinders, 2022, p. 3). Our scoping review aimed to answer the following research questions (RQs):

RQ1: How much research on test preparation is there? What are the characteristics of publications on test preparation in L2 contexts?

RQ2: What are the main themes explored in primary studies on test preparation?

RQ3: What are the study and participant characteristics in primary studies on test preparation?

RQ4: What types of research paradigms, research designs, data collection methods, data collection instruments, and data analytic methods are used in primary studies on test preparation?

Methodology

Research design overview

This study presents a scoping review of the current state of knowledge on test preparation in L2 contexts. In conducting the review, we followed the guidelines for systematic reviews in Macaro (2020) and Newman and Gough (2020), as well as the methodological framework for qualitative research synthesis described in Chong and Reinders (2020) and Chong and Plonsky (2021). In reporting it, we also followed the American Psychological Association (APA) Journal Article Reporting Standards for qualitative meta-analyses (Levitt et al., 2018) and the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) statement (Page et al., 2021).

Research team description

The research team is led by a principal investigator (Ruslan Suvorov) who specializes in language testing and assessment. Three research collaborators (Shanshan He, Anne-Marie Sénécal, and Laura Stansfield) are currently pursuing doctoral studies in education, with expertise and interest in language testing and assessment research. Two of the four researchers (Shanshan He and Ruslan Suvorov) have previous experience with conducting and publishing a systematic review in this field.

Study selection

Inclusion and exclusion criteria

The initial step of this scoping review involved establishing a set of inclusion and exclusion criteria to guide the selection of studies and ensure the review’s quality.

The primary studies included in the review met the following inclusion criteria:

examined test preparation as part of washback (or backwash) for L2 tests;

involved participants in second or foreign language contexts;

reported research across the methodological spectrum (i.e., quantitative, qualitative, and mixed methods);

were published in any year;

were disseminated as journal articles, chapters in edited volumes, research reports, or unpublished doctoral dissertations available in repositories such as ProQuest (following Macaro’s [2020] guidelines);

had undergone peer review (with the exception of doctoral dissertations, which are not peer-reviewed publications).

We excluded studies with the following characteristics:

explored test preparation in contexts unrelated to washback (or backwash) for L2 tests;

included participants outside of a second or foreign language learning context;

were disseminated through other types of publications (e.g., non-empirical studies, test reviews, literature reviews, meta-analyses, editorials, master’s theses, and published abstracts);

were not subjected to peer review.

Search strategy, screening, and eligibility assessment

We followed the PRISMA guidelines (Page et al., 2021) to identify possible studies, screen them, and assess them for eligibility based on our inclusion and exclusion criteria. To guide the study search, various combinations of the following search terms were used: washback, backwash, test preparation, coaching, language assessment, language test, and language exam. We selected these specific search terms through an iterative process of identifying the keywords, synonyms, and closely associated terms used in research on language test preparation. The final search string was as follows: (“test preparation” OR “coaching” OR “washback” OR “backwash”) AND (“language test*” OR “language exam*”).
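To make the logic of the Boolean search string concrete, the sketch below applies it to short title strings in Python. It is an illustration under stated assumptions, not a reproduction of how ERIC or LLBA actually parse queries: the names `SEARCH_GROUPS`, `term_to_regex`, and `matches_search_string` are hypothetical, matching is assumed to be case-insensitive, quoted phrases are assumed to require consecutive words, and a trailing `*` is assumed to be a truncation wildcard (so `test*` matches test, tests, and testing).

```python
import re

# Hypothetical encoding of the search string:
# ("test preparation" OR "coaching" OR "washback" OR "backwash")
#   AND ("language test*" OR "language exam*")
# Each inner list is an OR-group; the groups are combined with AND.
SEARCH_GROUPS = [
    ["test preparation", "coaching", "washback", "backwash"],
    ["language test*", "language exam*"],
]


def term_to_regex(term):
    """Convert a quoted search term into a case-insensitive regex.

    Assumption: a trailing '*' truncates the word, matching zero or more
    additional word characters (e.g., 'exam*' -> exam, exams, examination).
    """
    parts = []
    for word in term.split():
        if word.endswith("*"):
            parts.append(re.escape(word[:-1]) + r"\w*")
        else:
            parts.append(re.escape(word))
    # Words of a phrase must appear consecutively, separated by whitespace.
    return re.compile(r"\b" + r"\s+".join(parts) + r"\b", re.IGNORECASE)


def matches_search_string(text, groups=SEARCH_GROUPS):
    """Return True if the text matches at least one term in every OR-group."""
    return all(
        any(term_to_regex(term).search(text) for term in group)
        for group in groups
    )


titles = [
    "Washback of a high-stakes language test on classroom teaching",
    "Coaching for language examinations: a case study",
    "Test preparation in mathematics classrooms",
]
for title in titles:
    print(matches_search_string(title), "-", title)
```

Under these assumptions, the first two titles match both groups, whereas the third matches only the first group (it contains no "language test*" or "language exam*" phrase) and is therefore not retrieved.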

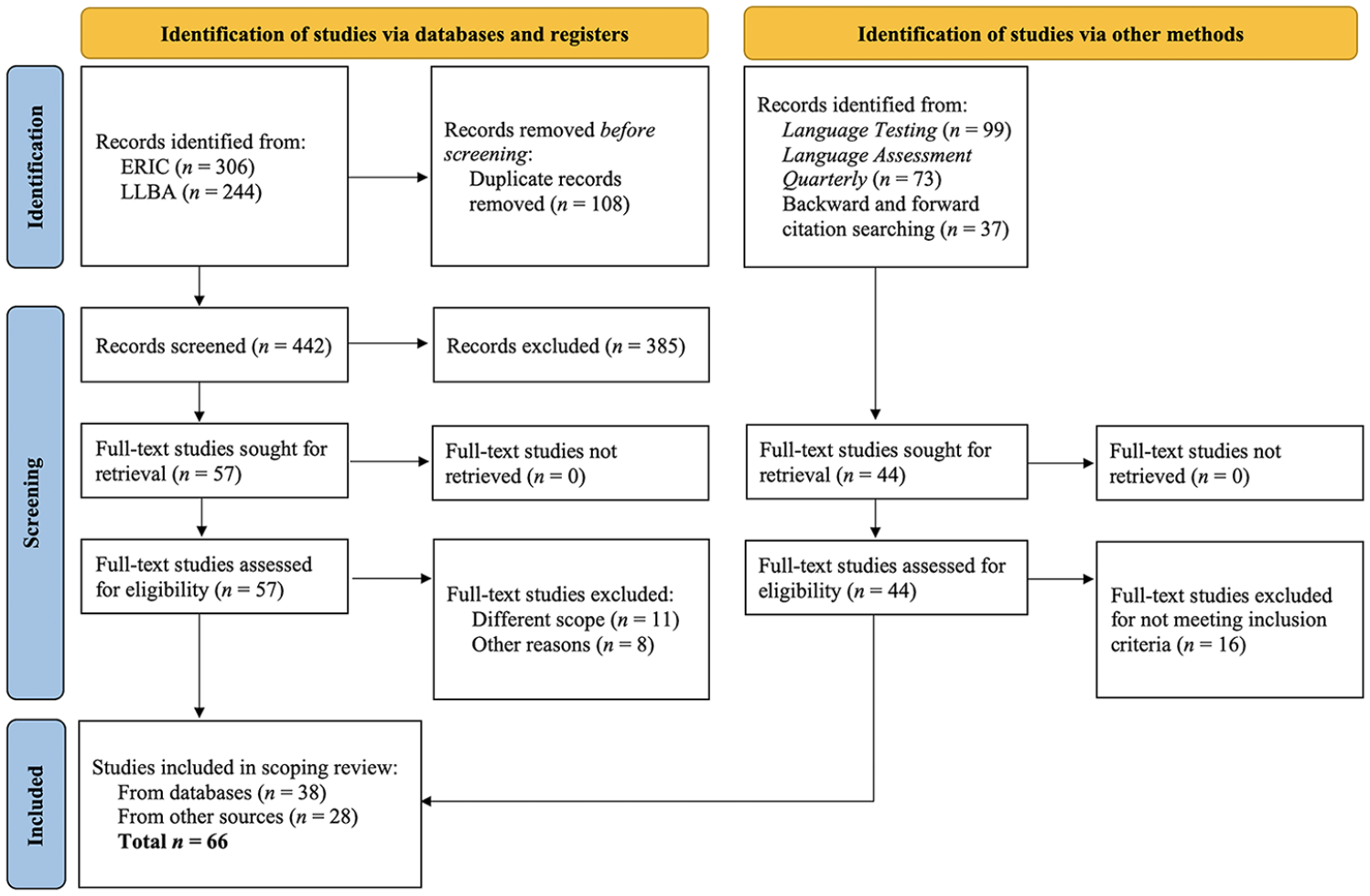

To identify potentially relevant studies, we used three types of searches: database searches, journal searches, and forward and backward citation searches. First, two researchers from the team ran the search string in the Education Resources Information Center (ERIC) and Linguistics and Language Behavior Abstracts (LLBA), the two databases most commonly used for research syntheses in applied linguistics (In’nami & Koizumi, 2010). Limited to peer-reviewed records, the ERIC and LLBA searches yielded a total of 550 records (see the PRISMA flowchart in Figure 1). These records were imported into Covidence, a web-based application for managing systematic reviews (Veritas Health Innovation, 2024), and 108 duplicates were removed. The two researchers independently screened the titles and abstracts of the remaining 442 records in Covidence, agreeing on 93% of them; the remaining 7% (i.e., 31 records) were discussed until consensus was reached. After the initial screening, the two researchers retrieved 57 full-text studies and assessed them for eligibility, excluding a further 19 studies that did not meet the inclusion criteria.

PRISMA flowchart for study selection.

In addition to the database search, the research team searched the journals Language Testing and Language Assessment Quarterly and conducted backward and forward citation searches. These journals were chosen for their prominence in the field and their history of publishing research on test preparation. The backward citation search involved examining the reference lists of the studies selected from the databases, whereas the forward citation search was conducted via Google Scholar. The journal and citation searches yielded 44 additional studies. After screening their full texts, the research team excluded a further 16 studies that did not meet the inclusion criteria. As shown in Figure 1, the final dataset comprised 66 studies: 38 identified through the database search and 28 identified through the journal and citation searches. All four researchers assessed and agreed on the eligibility of each study in the final list during weekly meetings held over several months.
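The study flow summarized in Figure 1 can be checked with simple arithmetic. The Python sketch below uses only the counts reported in this section; the variable names are illustrative. Because the reported 93% agreement is a rounded value, the number of records discussed is recovered by rounding, which is an assumption.

```python
# Counts reported in the text.
db_records = 550            # ERIC + LLBA, peer-reviewed records only
duplicates = 108            # removed in Covidence
screening_agreement = 0.93  # title/abstract screening agreement (rounded)
full_text_db = 57           # full texts retrieved after screening
excluded_db = 19            # excluded at full-text eligibility assessment
other_records = 44          # from journal and citation searches
excluded_other = 16         # excluded after full-text screening

screened = db_records - duplicates
# Records requiring discussion; rounding is needed because 93% is itself rounded.
disagreements = round(screened * (1 - screening_agreement))
included_db = full_text_db - excluded_db
included_other = other_records - excluded_other
total_included = included_db + included_other

print(f"Records screened on title/abstract: {screened}")
print(f"Records discussed to reach consensus: {disagreements}")
print(f"Included from databases: {included_db}")
print(f"Included from journal and citation searches: {included_other}")
print(f"Total included studies: {total_included}")
```

Running it reproduces the figures in the text: 442 screened records, 31 discussed records, 38 and 28 included studies from the two search routes, and 66 studies in total.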

Data extraction and synthesis

The extraction and synthesis of the data comprised three main steps. First, we conducted a preliminary round of data extraction using an initial list of 30 variables of interest, organized into four categories that corresponded to the RQs: “Bibliographic information” (RQ1), “Themes” (RQ2), “Participant and study characteristics” (RQ3), and “Research design and analysis” (RQ4; see Appendix 1). In the context of this study, variables were defined as “observable attributes or properties of the world that take on different values” (Epstein & Martin, 2004, p. 321). We generated this initial list of variables based on the RQs guiding our scoping review. To extract the data, we used a collaborative online Excel spreadsheet hosted in OneDrive, which we used throughout the extraction and synthesis process and which ultimately became the coded dataset. The four researchers divided the 66 selected primary studies among themselves and independently extracted from each study the data relevant to each variable. To ensure consistency, the extraction process was discussed regularly during weekly meetings.

Since the primary focus of this scoping review was to uncover which key topics have received attention in the literature on test preparation and how they have been investigated, we primarily examined the Introduction and Methods sections of the published works. In cases where information was not explicitly stated in these sections, we consulted other sections of the studies to locate the missing information. For example, details about statistical tests were sometimes found in the Results section.

In the second step, we refined the preliminary list of variables of interest by rephrasing, merging, and/or modifying some of them to better fit the RQs guiding the study. The final list consisted of 22 variables: 4 variables in the “Bibliographic information” category, 1 in “Themes,” 10 in “Participant and study characteristics,” and 7 in “Research design and analysis.”

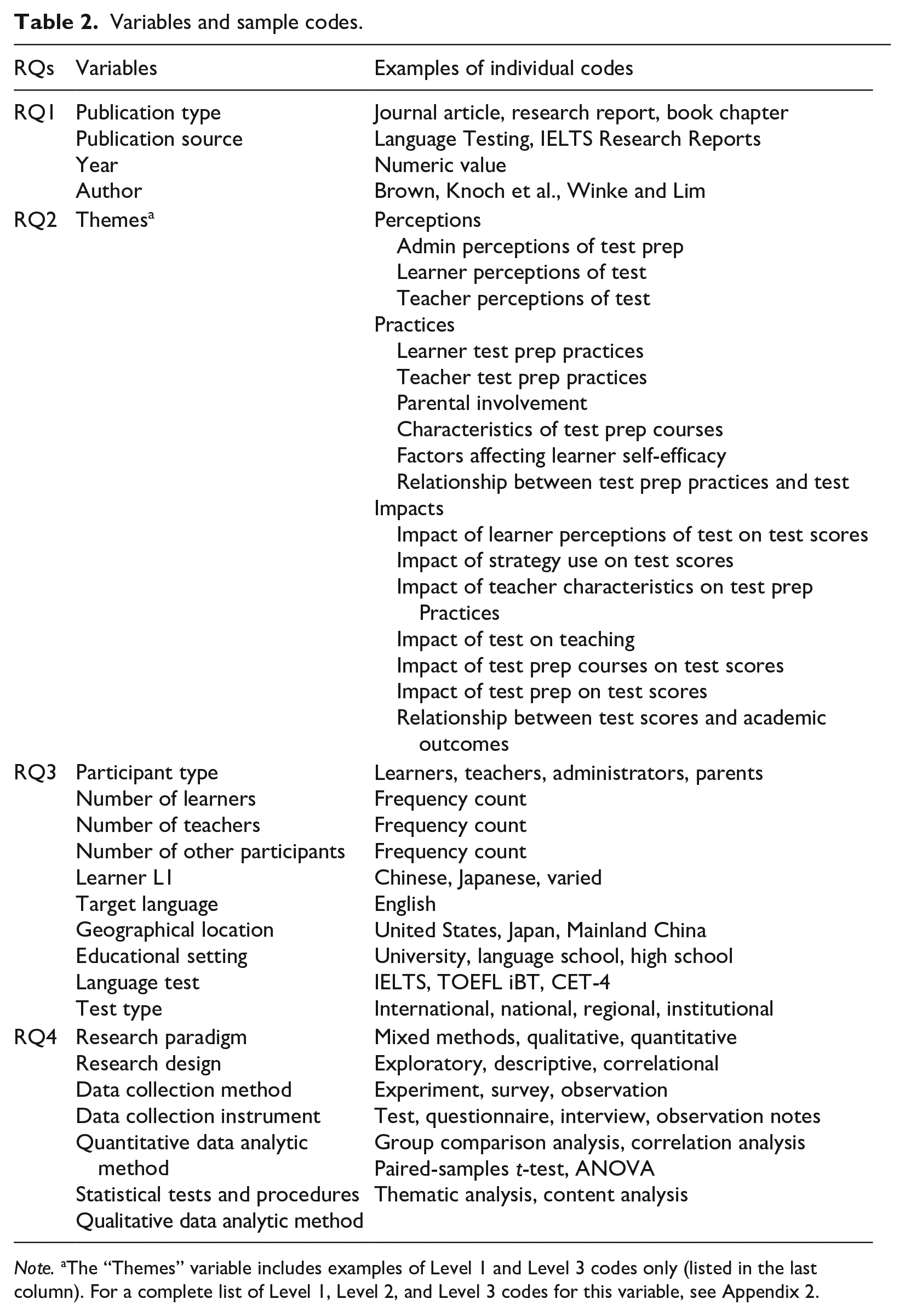

After revising and finalizing the variables and extracting all the relevant data, we proceeded to data synthesis, which entailed coding the data (i.e., Step 3). At this step, two types of coding were used (Saldaña, 2021). First, the data for all four variables in the “Bibliographic information” category, as well as the data for some variables in the “Participant and study characteristics” category, were coded using attribute codes. We used attribute coding because it is appropriate for providing basic descriptive information such as the year of publication or the number of participants (Saldaña, 2021). To code the data for the remaining variables, we used thematic coding because this type of coding is used to identify patterns and themes in the extracted data.

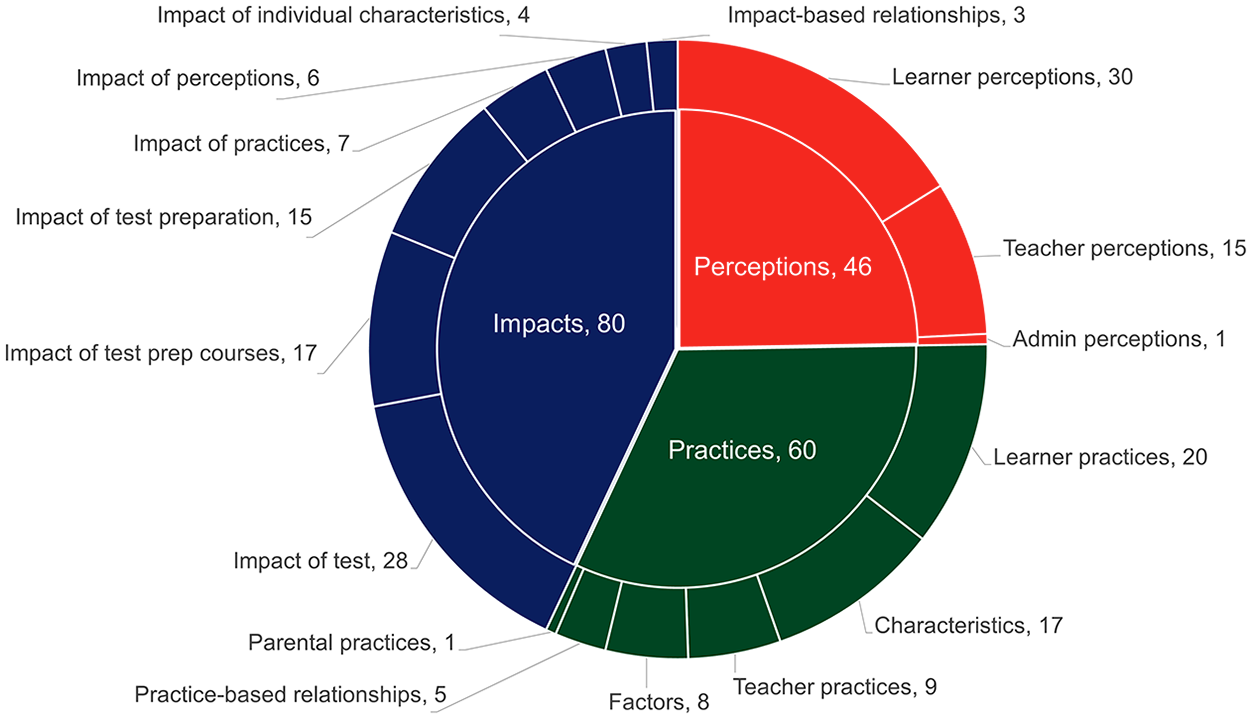

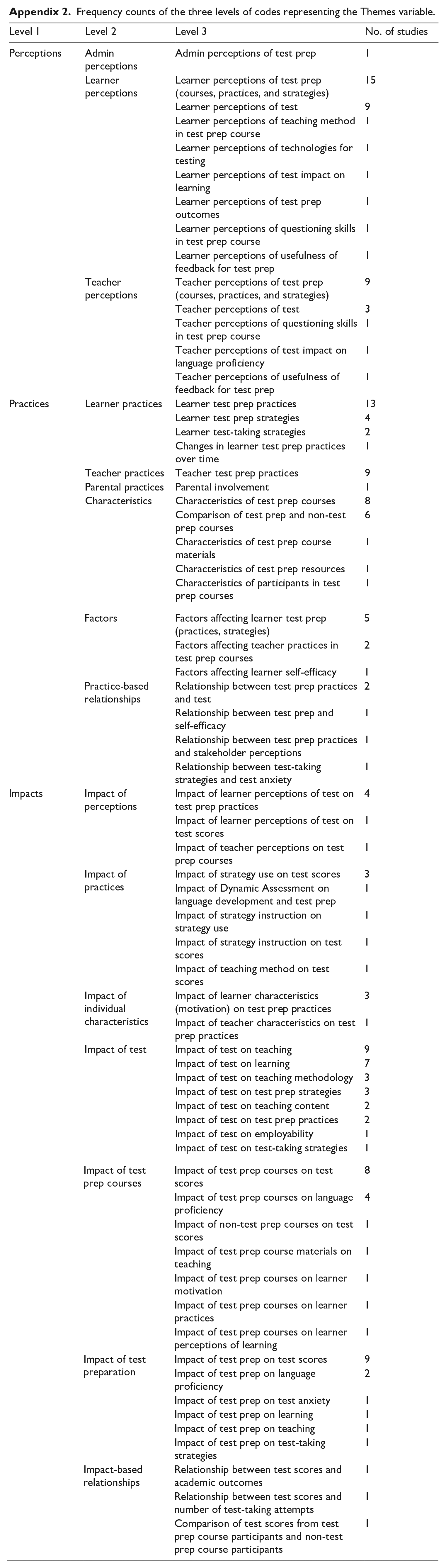

We used one level of codes for all variables. The only exception was the “Themes” variable, for which we used three levels of codes to reflect the complexity of themes identified in the included studies (see Appendix 2). The first level of codes was based on Hughes’s (1993) classification of the washback components into participants, process, and products (also adopted by J. Ma, 2017). Using this classification, we created the following three codes to represent three broad groups of themes (henceforth Level 1 themes): perceptions (corresponding to Hughes’s participants), processes (same as in Hughes), and impacts (Hughes’s products). The second level of codes was used for the sub-types of Level 1 themes (e.g., administrator perceptions, learner perceptions, and teacher perceptions within the Level 1 theme “Perceptions”), thus providing a more nuanced categorization of perceptions, processes, and impacts. Finally, the third level of codes was used for highly refined and specific themes within each Level 2 theme (e.g., “learner perceptions of test impact on learning” or “teacher perceptions of test”). The final list of variables with sample codes is provided in Table 2.

Variables and sample codes.

Note. ᵃThe “Themes” variable includes examples of Level 1 and Level 3 codes only (listed in the last column). For a complete list of Level 1, Level 2, and Level 3 codes for this variable, see Appendix 2.
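The three-level coding scheme for the “Themes” variable can be pictured as one record per coded theme in a study, with one field per level. The Python sketch below is a hypothetical illustration: the studies (S01 to S03) are invented, the Level 2 label “impact of test preparation” is a placeholder, and the function `summarize_level1` is not part of the authors’ workflow. It shows one way to obtain the two kinds of counts reported for Level 1 themes in the Results: the number of distinct Level 2 themes coded per study (“instances”) and the number of studies in which a Level 1 theme occurs. Treating an instance as a distinct study and Level 2 pair is an assumption.

```python
from collections import defaultdict

# Hypothetical coded records: (study, Level 1, Level 2, Level 3).
# Level labels follow the scheme described above; the studies are invented.
coded = [
    ("S01", "Perceptions", "learner perceptions",
     "learner perceptions of test preparation"),
    ("S01", "Perceptions", "teacher perceptions", "teacher perceptions of test"),
    ("S01", "Impacts", "impact of test", "impact of test on teaching"),
    ("S02", "Impacts", "impact of test", "impact of test on teaching"),
    ("S02", "Impacts", "impact of test preparation",
     "impact of test preparation on test scores"),
    ("S03", "Processes", "learner practices", "learner test preparation practices"),
]


def summarize_level1(rows):
    """Count Level 2 instances and studies for each Level 1 theme.

    Assumption: an 'instance' is a distinct (study, Level 2 theme) pair, so a
    Level 2 theme coded several times in one study is counted once.
    """
    level2_instances = defaultdict(set)  # Level 1 -> {(study, Level 2)}
    studies = defaultdict(set)           # Level 1 -> {study}
    for study, level1, level2, _level3 in rows:
        level2_instances[level1].add((study, level2))
        studies[level1].add(study)
    return {
        level1: {"level2_instances": len(level2_instances[level1]),
                 "studies": len(studies[level1])}
        for level1 in studies
    }


for level1, counts in summarize_level1(coded).items():
    print(level1, counts)
```

Keeping the two counts separate explains why a Level 1 theme can show more instances than studies. In this toy example, Impacts has three Level 2 instances spread across two studies.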

The finalized 22 variables were divided among the four researchers, with each researcher coding the data for 4–6 variables. To ensure the quality and reliability of coding, we double-coded part of the data, as explained below. Considering the importance and complexity of the “Themes” variable (RQ2), which had three levels of codes, 100% of the associated data were double-coded. For all other variables, resource constraints led us to double-code only 11% of the data. This proportion was informed by Loewen and Plonsky (2015), who recommend double-coding 10–20% of qualitative data. During the data synthesis phase, we held weekly meetings to discuss any discrepancies between coders and reach agreement on all assigned codes, following Smagorinsky (2008) and Vorobel et al. (2021). The full set of variables and coded data is available as supplementary material in Appendix C.
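The double-coding procedure can be sketched in Python as drawing a reproducible random subset of studies for a second coder, computing simple percent agreement, and listing the items to resolve at a meeting. This sketch rests on several assumptions. The functions `draw_double_coding_sample`, `percent_agreement`, and `disagreements` are hypothetical. The study is assumed to be the sampling unit, and the sample size is rounded up (8 of 66 studies, slightly above 11%). The text does not state how the 11% was sampled. The coders’ research-paradigm codes are invented.

```python
import math
import random


def draw_double_coding_sample(study_ids, proportion=0.11, seed=2024):
    """Randomly select at least `proportion` of studies for double-coding.

    Assumptions: the study is the sampling unit, and the sample size is
    rounded up; the fixed seed makes the draw reproducible.
    """
    k = math.ceil(len(study_ids) * proportion)
    rng = random.Random(seed)
    return sorted(rng.sample(study_ids, k))


def percent_agreement(codes_a, codes_b):
    """Percentage (0-100) of items to which two coders assigned the same code."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("Both coders must code the same non-empty set of items.")
    matches = sum(a == b for a, b in zip(codes_a, codes_b))
    return 100 * matches / len(codes_a)


def disagreements(codes_a, codes_b, item_ids):
    """Items on which the coders differ, to be resolved through discussion."""
    return [item for item, a, b in zip(item_ids, codes_a, codes_b) if a != b]


studies = [f"S{i:02d}" for i in range(1, 67)]  # 66 included studies
sample = draw_double_coding_sample(studies)

# Hypothetical research-paradigm codes from two coders for the sampled studies.
coder_1 = ["qualitative", "mixed", "quantitative", "qualitative",
           "mixed", "mixed", "qualitative", "quantitative"]
coder_2 = ["qualitative", "mixed", "mixed", "qualitative",
           "mixed", "mixed", "qualitative", "quantitative"]

print(f"Double-coded studies ({len(sample)}/{len(studies)}): {sample}")
print(f"Percent agreement: {percent_agreement(coder_1, coder_2):.1f}%")
print("To discuss:", disagreements(coder_1, coder_2, sample))
```

With these invented codes, the coders agree on 7 of 8 studies (87.5%), and the single disagreement is flagged for discussion. This mirrors the consensus-building approach described above.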

We used descriptive statistics to quantitatively analyze the codes and provide insights into the features of the studies relevant for answering the four RQs.
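Most of the descriptive statistics reported below are frequency counts (k) expressed as percentages of the 66 included studies. The Python sketch below reproduces two such calculations from counts reported in the Results: publication types, which are mutually exclusive, and research designs, which are not, because a single study may combine several designs. The function name `percentages` is illustrative, and the rounding precision is an assumption matched to the values reported in the text.

```python
N_STUDIES = 66

# Mutually exclusive categories: each study has exactly one publication type.
publication_types = {
    "journal article": 47,
    "research report": 13,
    "doctoral dissertation": 5,
    "book chapter": 1,
}

# Non-exclusive categories: a study may combine two or three designs,
# so these counts sum to more than N_STUDIES.
research_designs = {
    "descriptive": 51,
    "causal-comparative": 31,
    "exploratory": 23,
    "correlational": 16,
}


def percentages(counts, n=N_STUDIES, digits=1):
    """Express each count as a percentage of n, rounded to `digits` places."""
    return {label: round(100 * k / n, digits) for label, k in counts.items()}


# Sanity check: exclusive categories must account for every study.
assert sum(publication_types.values()) == N_STUDIES

print(percentages(publication_types))
print(percentages(research_designs, digits=0))
```

The first call yields 71.2%, 19.7%, 7.6%, and 1.5%, which sum to 100%. The second yields 77%, 47%, 35%, and 24%, which exceed 100% because many studies combined research designs.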

Results

RQ1

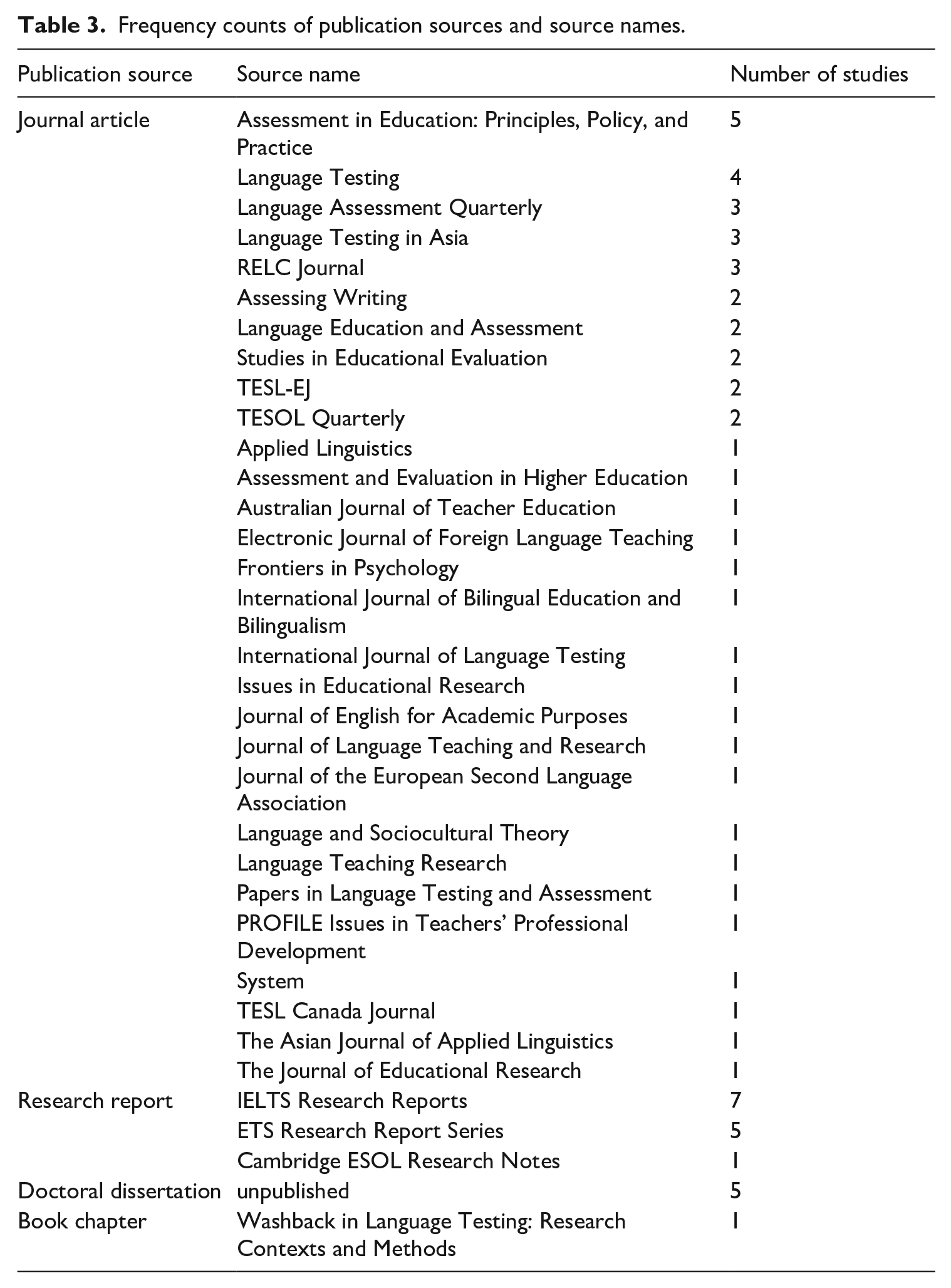

The first RQ investigated the volume of research on test preparation and the characteristics of primary studies. The 66 included studies comprised 47 journal articles published in 29 different journals (representing 71.2% of all the publications), 13 published research reports (19.7%), 5 unpublished doctoral dissertations (7.6%), and 1 book chapter (1.5%).

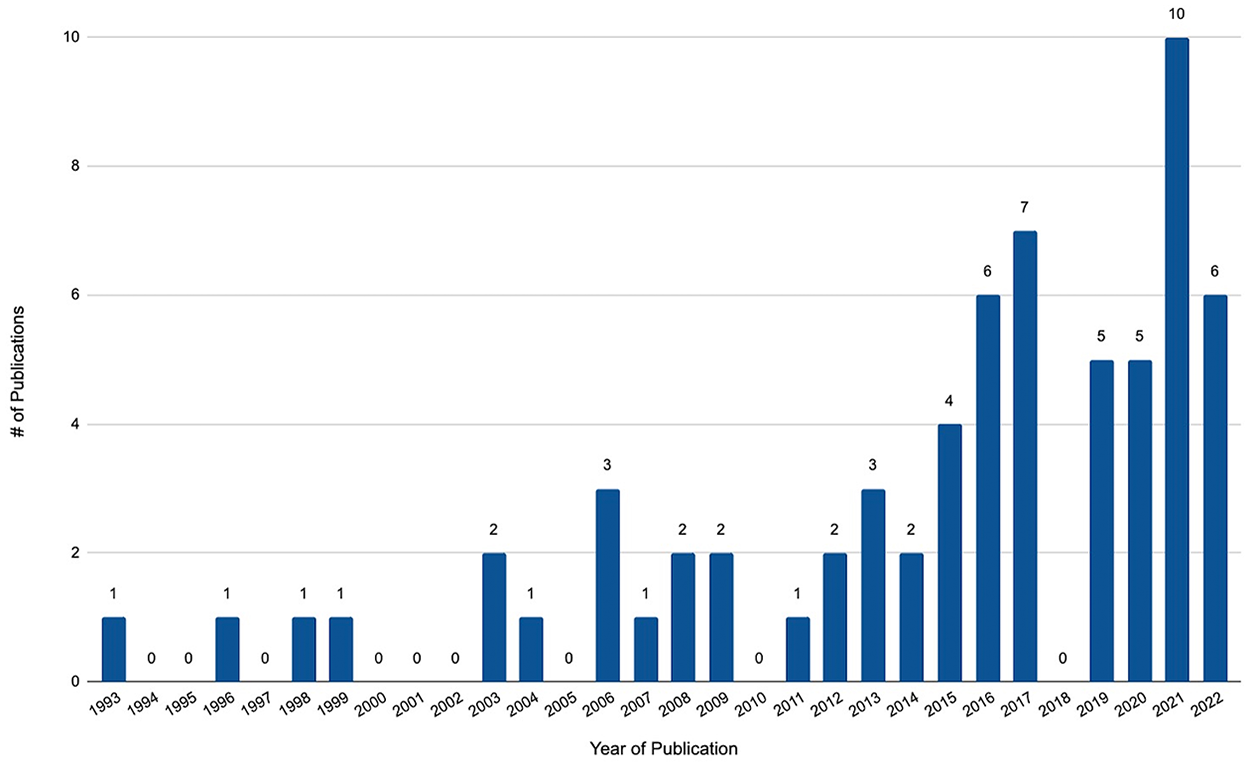

The most common publication venue was IELTS Research Reports (k = 7), followed by ETS Research Report Series (k = 5). Among journals, Assessment in Education: Principles, Policy, and Practice (k = 5) and Language Testing (k = 4) were the most common venues, followed by Language Assessment Quarterly, Language Testing in Asia, and RELC Journal (k = 3 each; see Table 3). The publications spanned a period of nearly 30 years, with the earliest study published by Wall and Alderson in 1993. As evident from Figure 2, the number of studies has increased steadily over the years, with the largest increase over the past decade, during which 73% of the reviewed studies were published.

Frequency counts of publication sources and source names.

Number of included studies by year of publication.

RQ2

To answer RQ2, we examined the main themes in the 66 included studies on test preparation. As explained in the Methodology section, our analysis of the themes was based on a three-level coding approach. Within the three overarching Level 1 themes (Perceptions, Processes, and Impacts), our analysis revealed 16 Level 2 themes (see the nested pie chart in Figure 3). Perceptions comprised Level 2 themes illustrating stakeholders’ perceptions of test preparation; Processes contained themes representing different characteristics, factors, and practices associated with test preparation; and Impacts consisted of themes indicating how test preparation affected, or was affected by, various variables, including perceptions and processes. Of the 16 Level 2 themes, 3 belonged to Perceptions, 6 to Processes, and 7 to Impacts. As illustrated in Figure 3, Impacts was the largest Level 1 theme, with 80 instances of corresponding Level 2 themes in the dataset (50 studies, or 76%), followed by Processes (60 instances of Level 2 themes in 39 studies, or 59%) and Perceptions (46 instances of Level 2 themes in 28 studies, or 42%). The most frequent Level 2 themes were “learner perceptions” (found in 30 studies, or 45%), “impact of test” (28 studies, or 42%), and “learner practices” (20 studies, or 30%).

Distribution of Level 1 and Level 2 themes in the included studies.

At a more granular level, each Level 2 theme comprised a refined list of individual Level 3 themes. In total, 66 individual Level 3 themes were identified in the included studies: 14 related to Perceptions, 18 to Processes, and 34 to Impacts. As shown in Appendix 2, the most common Level 3 themes were “learner perceptions of test preparation (courses, practices, strategies)” in Perceptions (15/66 included studies, or 23%), “learner test preparation practices” in Processes (13/66 studies, or 20%), and “impact of test preparation on test scores” and “impact of test on teaching” in Impacts (9/66 studies each, or 14%). The vast majority of studies examined multiple Level 3 themes; only 12/66 studies (18%) focused on a single theme.

RQ3

RQ3 inquired about the characteristics of the participant samples, the geographical locations of the studies, and the types of language tests used in primary research on test preparation. The reviewed studies included six types of research participants: learners (k = 58), teachers (k = 25), administrators (k = 3), alumni (k = 2), parents (k = 1), and employers (k = 1). Twenty of the 66 reviewed studies (30%) included two or three participant types, with learners and teachers being the most common combination (k = 17). The number of learner participants per study ranged from two in Minakova (2020) to 14,593 in Liu (2014) (Mdn = 89). The number of teacher participants ranged from one in Mickan and Motteram (2008) to 200 in Gebril and Eid (2017) (Mdn = 8). Across all 66 included studies, there were at least 34,840 learner participants, 498 teacher participants, 128 alumni, 100 employers, 28 administrators, and 6 parents.

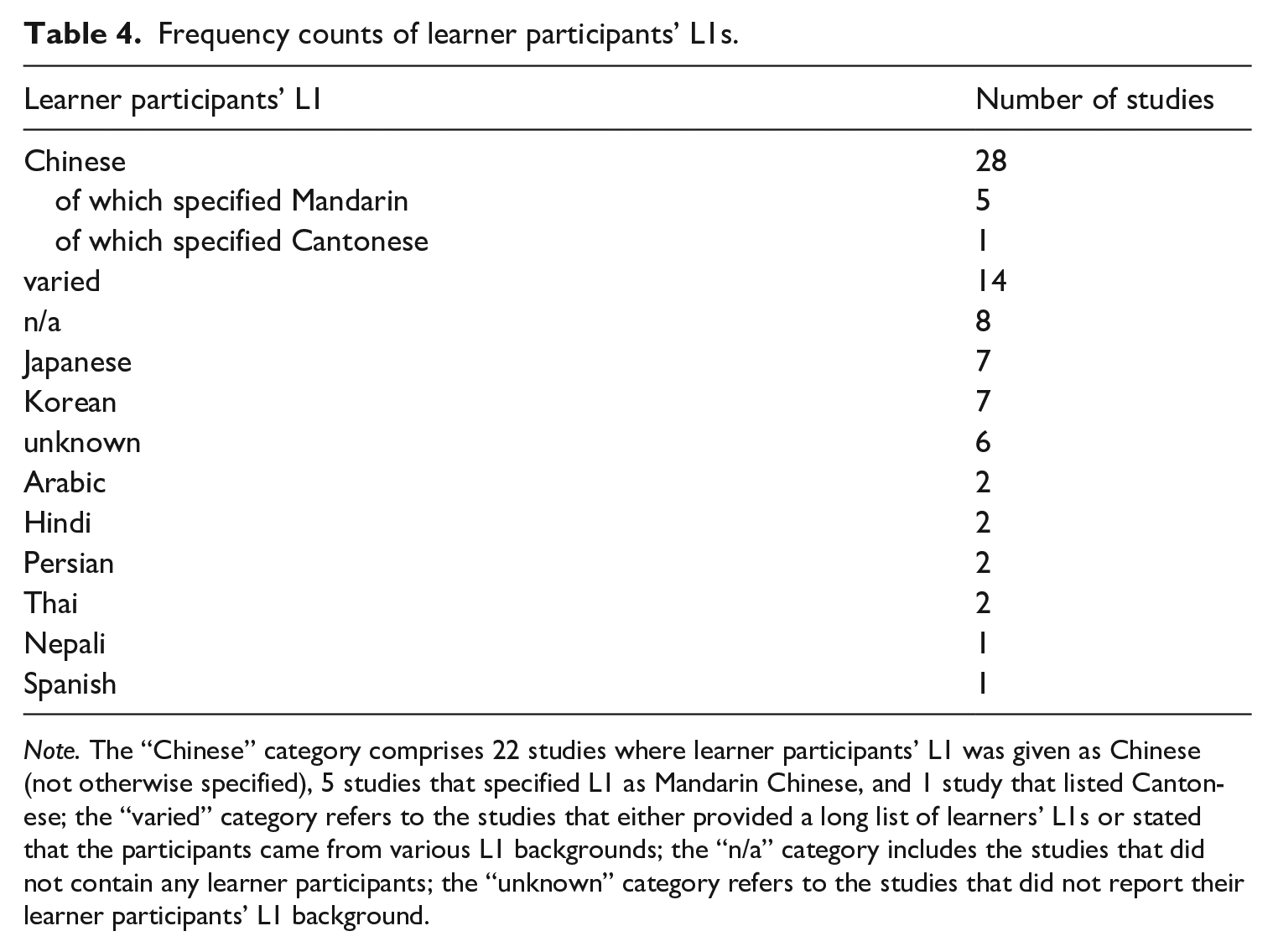

The reviewed studies included a wide range of learners’ first language (L1) backgrounds (see Table 4), with Chinese (k = 28), Korean (k = 7), and Japanese (k = 7) being the most common. Eighteen out of 66 studies had learner participants with mixed L1s, whereas in 31 studies, all learner participants shared the same L1. Notably, English was the target language in all 66 studies.

Frequency counts of learner participants’ L1s.

Note. The “Chinese” category comprises 22 studies where learner participants’ L1 was given as Chinese (not otherwise specified), 5 studies that specified L1 as Mandarin Chinese, and 1 study that listed Cantonese; the “varied” category refers to the studies that either provided a long list of learners’ L1s or stated that the participants came from various L1 backgrounds; the “n/a” category includes the studies that did not contain any learner participants; the “unknown” category refers to the studies that did not report their learner participants’ L1 background.

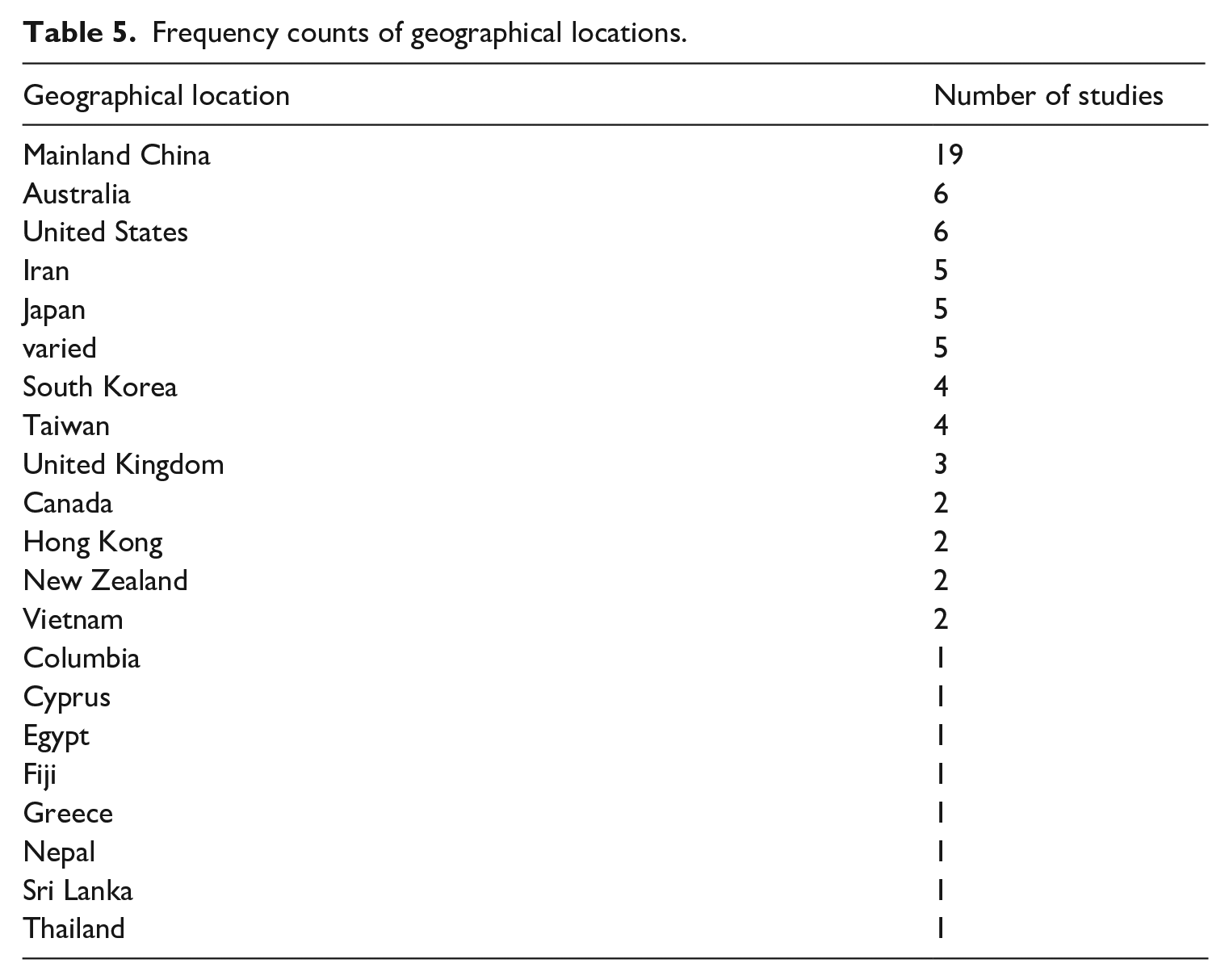

As shown in Table 5, the geographical locations of the included studies varied, with Mainland China (k = 19), Australia (k = 6), and the United States (k = 6) being the most common. Sixty studies were conducted in educational settings: university (k = 32), language school (k = 24), or high school (k = 9). Four of these studies collected data in two educational settings each (i.e., Barnes, 2016, 2017; J. Ma, 2017; Yu et al., 2017). The data for the remaining six studies were collected outside of educational settings (e.g., online or over the phone).

Frequency counts of geographical locations.

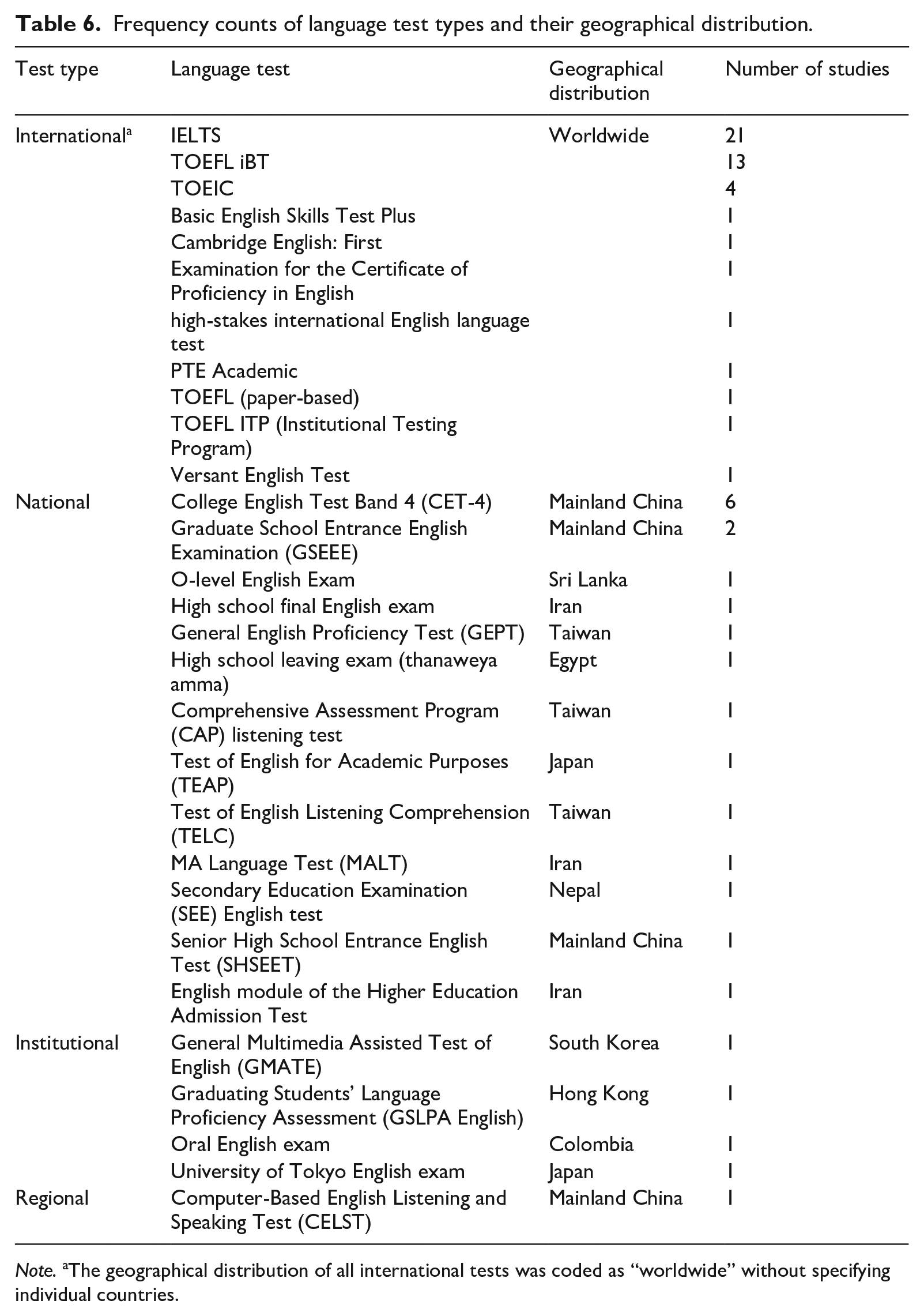

We categorized all language tests used in the included studies into four main types (see Table 6): international (k = 44), national (k = 20), institutional (k = 4), and regional tests (k = 1), with two studies examining two test types (i.e., J. Ma, 2017, and Stoneman, 2006). In terms of specific language tests, IELTS was the most commonly researched (k = 21), followed by TOEFL iBT (k = 13) and CET-4 (k = 6). Only four studies investigated more than one language test: Farnsworth (2013), J. Ma (2017), Saif et al. (2021), and Stoneman (2006). Table 6 also shows the geographical distribution of each test (i.e., countries where each test is administered), with the tests in the “international” category being coded as “worldwide” due to their presence in multiple countries.

Frequency counts of language test types and their geographical distribution.

Note. ᵃThe geographical distribution of all international tests was coded as “worldwide” without specifying individual countries.

RQ4

The last RQ explored the research paradigms, research designs, data collection methods, data collection instruments, and data analytic methods used in the 66 studies on test preparation. In this review, we used the term research paradigm to refer to the three main methodological paradigms (i.e., quantitative, qualitative, and mixed methods), which we then further broke down into research designs and data analytic methods, as explained below. Thirty-nine percent of the included studies (k = 26) employed a qualitative research paradigm, followed by 22 mixed-methods studies and 18 studies that used the quantitative research paradigm. The vast majority of mixed-methods studies (17/22) were published in the last decade, suggesting growing recognition and adoption of this paradigm in research on test preparation.

Next, we loosely followed Creswell and Creswell (2022) to classify all included studies across the three paradigms into four categories of research design defined as “types of inquiry within qualitative, quantitative, and mixed methods approaches that provide specific direction for procedures in a research study” (p. 13). The four categories of research design were descriptive (i.e., studies designed to describe some existing phenomena related to test preparation), exploratory (i.e., studies designed to explore new phenomena), causal-comparative (i.e., studies designed to “compare two or more groups in terms of a cause (or independent variable) that has already happened,” Creswell & Creswell, 2022, p. 13), and correlational (i.e., studies investigating correlations among variables). The most common research design was descriptive (found in 51/66 studies, or 77%), followed by causal-comparative (31/66, or 47%), exploratory (23/66, or 35%), and correlational designs (16/66, or 24%). Eighteen studies were based on one research design, whereas the remaining 48 studies deployed a combination of two or, in some cases, three types of research design.

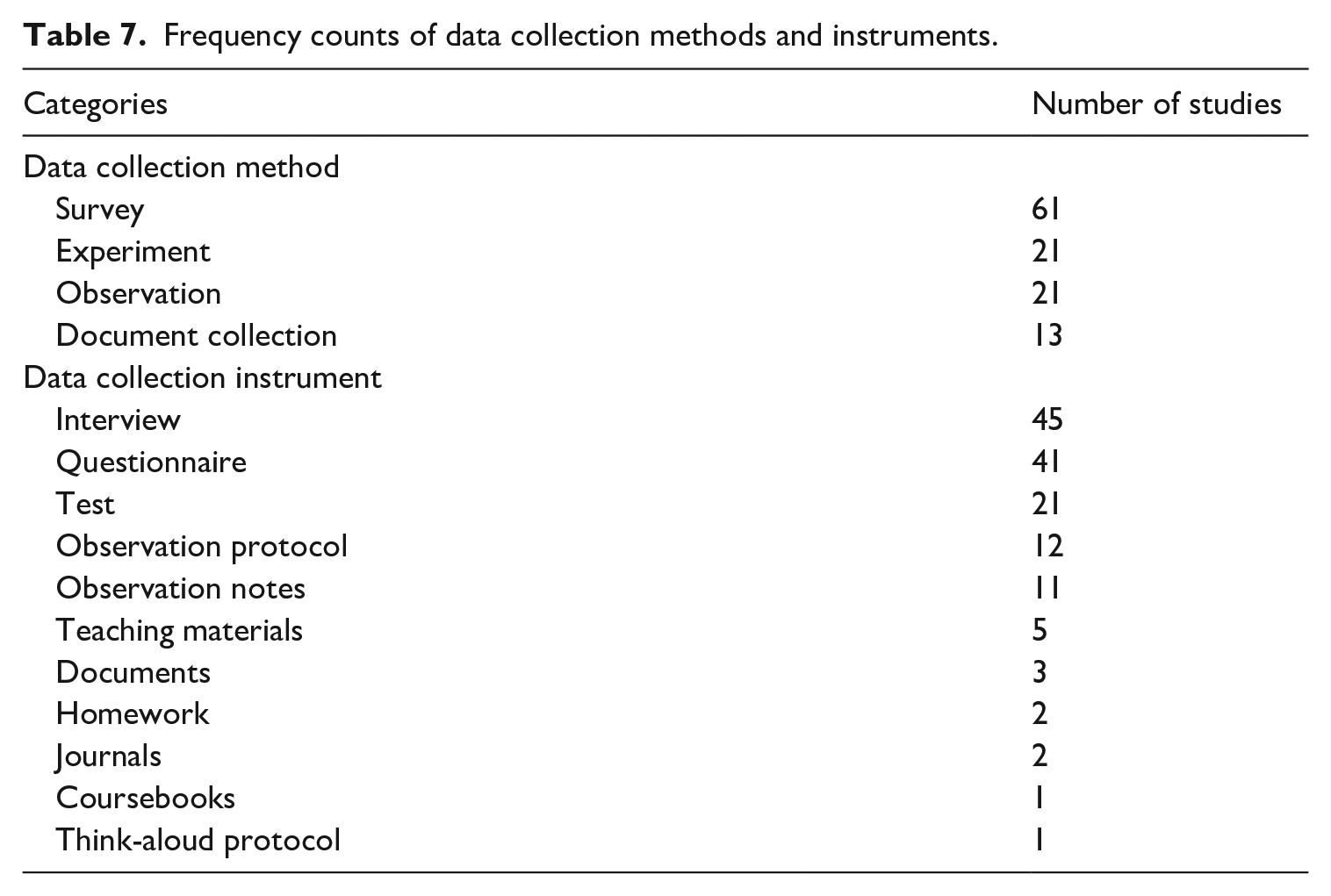

Table 7 shows that the most common data collection method was survey (k = 61), followed by experiment (including both experiments and quasi-experiments, k = 21), observation (k = 21), and document collection (k = 13). Twenty-six of the 66 studies (39%) used a single data collection method, namely, survey (22 studies), experiment (3 studies), or observation (1 study). The remaining 40 studies used a combination of two (k = 31), three (k = 8), or four (k = 2) data collection methods. Furthermore, researchers in the included studies used a variety of data collection instruments, with interviews (k = 45), questionnaires (k = 41), and tests (k = 21) being the most common.

Table 7. Frequency counts of data collection methods and instruments.

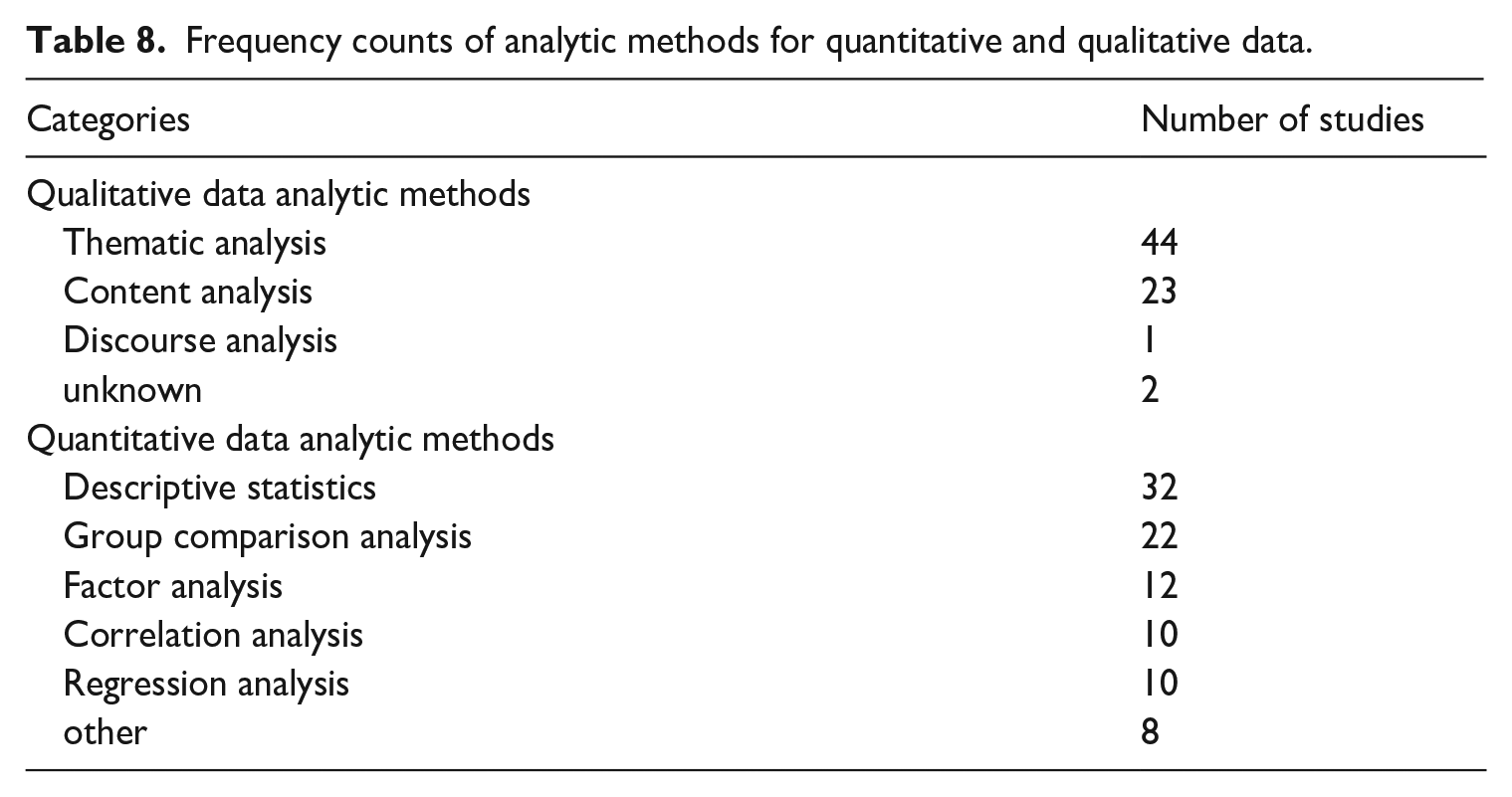

Among the qualitative data analytic methods (see Table 8), thematic analysis was the most common one (k = 44), followed by content analysis (k = 23) and discourse analysis (k = 1). Two studies that reported collecting and analyzing interview data as part of their mixed-methods research paradigms (i.e., Rao et al., 2003; Winke & Lim, 2017) did not specify the method used for qualitative data analysis and were thus coded as “unknown.”

Table 8. Frequency counts of analytic methods for quantitative and qualitative data.

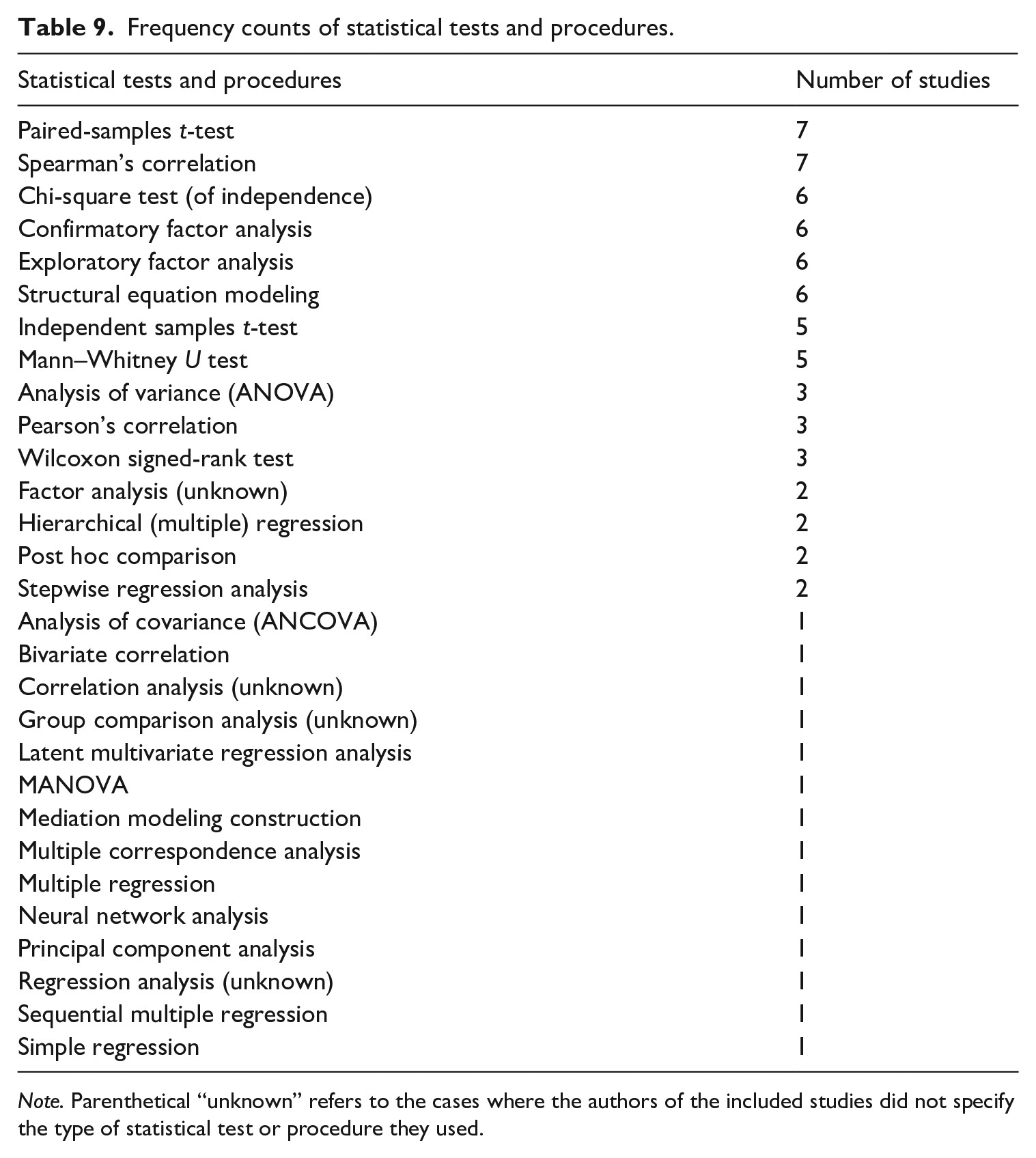

With regard to the quantitative data analytic methods, descriptive statistics were provided in almost half of the studies (k = 32), whereas group comparison analysis (which entailed testing for statistical significance) was used in one-third of the studies (k = 22). The remaining quantitative data analytic methods included factor analysis (k = 12), correlation analysis (k = 10), and regression analysis (k = 10), with 30 studies using a combination of methods. Within these broader categories of quantitative data analytic methods, there were multiple individual statistical tests and procedures (shown in Table 9), with the most common being paired-samples t-test and Spearman’s correlation (k = 7 each), followed by chi-square test, confirmatory factor analysis, exploratory factor analysis, and structural equation modeling (six studies each).
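To make the two most frequently reported procedures more concrete, the following Python sketch computes a paired-samples t statistic and Spearman's rank correlation using only the standard library. The pre- and post-preparation scores and hours of preparation are hypothetical values invented for illustration, not data from any included study, and the sketch omits p-values, which researchers would normally obtain from an established statistics package.

```python
import math
import statistics


def paired_t(before: list[float], after: list[float]) -> tuple[float, int]:
    """Paired-samples t statistic and degrees of freedom for (after - before)."""
    if len(before) != len(after) or len(before) < 2:
        raise ValueError("need two equal-length samples with n >= 2")
    diffs = [a - b for a, b in zip(after, before)]
    n = len(diffs)
    mean_d = statistics.mean(diffs)
    sd_d = statistics.stdev(diffs)  # sample SD with n - 1 denominator
    return mean_d / (sd_d / math.sqrt(n)), n - 1


def average_ranks(values: list[float]) -> list[float]:
    """Rank values 1..n, giving tied values the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # sorted positions i..j share ranks i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman_rho(x: list[float], y: list[float]) -> float:
    """Spearman's rho as the Pearson correlation of tie-averaged ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = statistics.mean(rx), statistics.mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    ssx = sum((a - mx) ** 2 for a in rx)
    ssy = sum((b - my) ** 2 for b in ry)
    return cov / math.sqrt(ssx * ssy)


# Hypothetical data: band scores before and after a preparation course,
# plus hours each learner reported spending on preparation.
pre = [5.0, 5.5, 6.0, 5.5, 6.5, 5.0]
post = [6.0, 6.0, 6.5, 6.5, 7.0, 5.0]
hours = [30, 15, 20, 40, 10, 5]

t, df = paired_t(pre, post)
gains = [b - a for a, b in zip(pre, post)]
print(f"paired t({df}) = {t:.2f}")
print(f"Spearman rho (hours vs. gain) = {spearman_rho(hours, gains):.2f}")
```

Spearman's correlation is computed here on ranks rather than raw scores, which is one reason it is often preferred for small samples or ordinal measures such as band scores.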

Table 9. Frequency counts of statistical tests and procedures.

Note. Parenthetical “unknown” refers to the cases where the authors of the included studies did not specify the type of statistical test or procedure they used.

Discussion

The current study aimed to investigate methodological practices in research on test preparation. Specifically, we examined 66 studies to identify the number and types of publication venues, the main themes explored, methodological and participant characteristics, as well as the types of research approaches, research designs, data collection methods and instruments, and methods of data analysis. In this section, we discuss our results in relation to each RQ. In so doing, we summarize the main trends and patterns in our findings, offer an interpretation, and highlight issues related to the methodological aspects of the included studies that require further consideration. Taken together, these studies reflect practices in language test preparation research over the past three decades.

Amount of research and publication venues

The findings for RQ1 suggest that the number of studies investigating test preparation has been growing in recent years, which may reflect the increasing importance and scholarly maturity of this research area. One potential reason for this growth is the significant influx of international students into some predominantly anglophone countries over the past two decades: The total number of internationally mobile students worldwide rose from 2.1 million in 2000 to 6.4 million in 2021 (UNESCO Institute for Statistics [UIS], 2024). This growth highlights the need for a deeper understanding of effective preparation practices tailored to different groups of L2 learners and to the various high-stakes language proficiency tests used worldwide. It also underscores the importance of adapting L2 instructional approaches to ensure that this expanding population of learners attains the language proficiency necessary for academic success.

Most of the studies in our dataset (k = 47) were published in journals. Although key journals in the field, such as Language Testing and Assessment in Education: Principles, Policy & Practice, contain the largest numbers of studies among journals, the research report series published by IELTS (k = 7) and ETS (k = 5) contain the largest numbers of studies of any single publication venue. This relatively large number of research reports suggests that test preparation holds a prominent place in the research agendas of major test development companies, which are willing to fund studies in this area.

The diverse range of publication venues offers some indication that research on test preparation is of growing interest not only to applied linguists and language educators but also to scholars in adjacent fields such as psychology and cognitive science. Nevertheless, the majority of source journals remain in the domains of language teaching/assessment, general education, and applied linguistics. This paucity of publications in adjacent fields suggests that, for research on test preparation to have a meaningful impact on, for instance, language assessment policy for immigration or business, more interdisciplinary collaboration is needed between scholars in applied linguistics and other relevant areas such as political science. Such collaborations would ideally result in more cross-disciplinary dissemination, expanding the reach of the test preparation literature across various publication venues.

Main themes

In response to RQ2, this scoping review uncovered a wide range of individual themes in the included studies. Interestingly, 60% of all themes (i.e., 40 out of 66) were explored in single studies. This pattern indicates that most themes related to test preparation have been studied only once, which points to a need for further, more in-depth investigation of these themes. In addition, more than half of individual themes (i.e., 34 out of 66 themes) were found to belong to the Impacts category and were investigated in 74% of the included studies (i.e., 49 out of 66 studies). This finding suggests that researchers working in this area tend to prioritize impact-related themes, such as the impact of test on teaching (e.g., Read & Hayes, 2003; Teng & Fu, 2019), the impact of test preparation on test scores (e.g., Winke & Lim, 2017; Xie, 2013), and the impact of test on learning (e.g., H. Ma & Chong, 2022; Wall & Horák, 2006). It should be noted that impact was found to be a complex bi-directional phenomenon, with some studies investigating the impact of different aspects of test preparation on test scores (i.e., impact of test preparation; see, for instance, Farnsworth, 2013; Hu & Trenkic, 2021; Knoch et al., 2020) and other studies examining the impact of a specific test on test preparation processes and practices (i.e., impact on test preparation; for example, Barnes, 2016; Stoneman, 2006; Yang, 2020).

Although studies exploring themes in the other two categories were less common, these categories were still well represented, with test preparation practices examined in 38 studies (58%) and stakeholders’ perceptions being the focus of 29 studies (44%). Together with the fact that the vast majority of the included studies (i.e., 54 out of 66 studies, or 82%) investigated multiple themes, this moderately balanced distribution across the overarching categories suggests that researchers tend to study test preparation from different angles.

Study and participant characteristics

Despite the relevance of language test preparation to various stakeholder groups, the findings for RQ3 revealed that, with the exception of six studies (i.e., Dawadi, 2020; J. Ma, 2017; Pan & In’nami, 2017; Saif et al., 2021; Sayyadi & Rezvani, 2021; Wall & Horák, 2006), the existing body of research focuses overwhelmingly on teachers and learners as participants. Other important groups of stakeholders in language testing (e.g., test administrators, immigration officials, and policymakers; see the list in Rea-Dickins, 1997), who use language test scores to make decisions with high stakes for L2 learners, such as decisions related to university admission, immigration, and employment, have yet to be examined empirically. The inclusion of other participant types in studies on test preparation appears particularly pertinent in light of Taylor’s (2013) call for further research investigating the development of language assessment literacy (LAL) across various stakeholder groups. Building on the dimensions that Taylor proposed, Kremmel and Harding’s (2020) Language Assessment Literacy Survey contains multiple items related to test preparation and washback in general (e.g., Items 19, 23–25). This suggests that including more diverse participant (i.e., stakeholder) types in test preparation research has strong potential to inform practices in the development of LAL, particularly in its language pedagogy component.

Our results for RQ3 also point toward a sampling bias in the test preparation literature, a phenomenon not uncommon in applied linguistics and adjacent disciplines. Universities and language schools were the most common educational settings in the reviewed studies, a finding consistent with Andringa and Godfroid’s (2020) synthesis of meta-analyses in applied linguistics. Specifically, Andringa and Godfroid note that it is unclear what types of learners are included in samples drawn from “language institutes” and that comparatively little is known about the language learning that takes place there. In the current synthesis, the reviewed studies covered multiple types of language schools, such as Japanese “juku” cram schools (Allen, 2016b) and Korean “hagwons,” which are private cram schools (Kim, 2021). Additionally, English was the only target language observed in the literature on test preparation, a finding that is less surprising given the overwhelming prevalence of studies investigating preparation for international language tests such as IELTS and TOEFL iBT. Therefore, it may not be appropriate to generalize findings from educated participants (likely of higher socio-economic status) who study English for high-stakes international assessments to other contexts. To obtain more generalizable results in this line of research, we concur with Andringa and Godfroid’s (2020) recommendation to diversify participant samples and expand research contexts beyond academia.

Patterns in research methodologies

The last RQ revealed some intriguing methodological patterns in the literature on test preparation, with approximately three-fourths of the studies using a descriptive research design and employing qualitative data analytic methods. Only a handful of quantitative or mixed-methods studies in our dataset used more advanced statistical tests and procedures such as factor analysis (k = 12) and structural equation modeling (k = 6), with most studies relying on descriptive statistics (k = 32). This finding echoes Xie’s (2015) concern about a dearth of quantitative research on test preparation that employs “sophisticated” (p. 58) quantitative methodology.

In light of the large body of descriptive studies, it was hardly a surprise that survey was deployed as the data collection method in all but five studies, with interviews and questionnaires being the most common data collection instruments (k = 45 and k = 41, respectively). While survey research can provide useful insights into different aspects of test preparation and has been extensively adopted in language assessment and the broader field of applied linguistics, a number of concerns have been raised in recent years regarding the validity of this method and associated data collection instruments. Such concerns include the impact of the interviewer (e.g., power dynamics) and the interactional context on the interviewee’s responses (Talmy, 2010), the issues related to ill-constructed questionnaires that do not adequately measure the construct under consideration (Dörnyei & Dewaele, 2023), as well as over-reliance on convenience sampling that limits the generalizability of the findings (Wagner, 2015). These limitations highlight the fact that survey research alone cannot elucidate the full spectrum of variables and the complexity of their interaction, suggesting the importance of procuring additional empirical evidence through well-designed experiments that use, for example, the completely randomized design or the factorial design.
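As a rough illustration of the two experimental designs mentioned above, the Python sketch below randomly assigns hypothetical participants either to one of three conditions (a completely randomized design) or to the four cells of a 2 × 2 factorial design that crosses two factors. All condition names, factor levels, and participant IDs are invented for illustration; an actual study would also need to plan sample size, control of confounds, and the analysis.

```python
import itertools
import random


def completely_randomized(participants, conditions, seed=None):
    """Randomly assign participants to conditions with near-equal group sizes."""
    rng = random.Random(seed)
    shuffled = list(participants)
    rng.shuffle(shuffled)
    groups = {c: [] for c in conditions}
    # Deal the shuffled participants round-robin so sizes differ by at most one.
    for i, p in enumerate(shuffled):
        groups[conditions[i % len(conditions)]].append(p)
    return groups


def factorial(participants, factors, seed=None):
    """Randomly assign participants to every combination (cell) of factor levels."""
    cells = list(itertools.product(*factors.values()))
    return completely_randomized(participants, cells, seed)


# Hypothetical participants, conditions, and factors.
ids = [f"P{n:02d}" for n in range(1, 25)]
crd = completely_randomized(
    ids, ["test-prep course", "general course", "self-study"], seed=1
)
fact = factorial(
    ids, {"instruction": ["strategy", "none"], "practice_tests": ["yes", "no"]}, seed=1
)

for condition, members in crd.items():
    print(condition, len(members))
for cell, members in fact.items():
    print(cell, len(members))
```

Dealing shuffled participants round-robin keeps group sizes balanced: With 24 participants, each of the three conditions receives eight participants and each factorial cell receives six.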

Lastly, the findings for RQ4 also demonstrated that qualitative studies in our dataset relied primarily on thematic analysis. As a widely used approach to identifying, analyzing, and reporting themes in data, thematic analysis has been embraced by many researchers because of its flexibility and ease of use (Nowell et al., 2017). However, one of the main disadvantages of this method is that its flexibility may lead to a lack of consistency and coherence in theme development (Holloway & Todres, 2003, as cited in Nowell et al., 2017). Indeed, in analyzing the research methodologies underlying the included studies, we observed that some of them did not report their thematic analysis procedure in enough detail for the analysis to be judged trustworthy and methodologically rigorous. This issue highlights the importance of transparency in qualitative research (cf. Chong & Plonsky, 2021) and points to the need to ensure the methodological rigor of future qualitative studies on test preparation.

Conclusion

The overarching goal of this scoping review was to provide a synthesis of research on test preparation in both second and foreign language contexts. Specifically, we aimed to explore the key characteristics of publications on test preparation, the main themes, the study and participant characteristics, as well as the essential aspects of research methodologies underlying the included studies. Out of 66 studies that we identified using a set of inclusion and exclusion criteria, the most common publication type was journal articles published in various L2 testing and learning journals, followed by research reports published by IELTS and ETS. The earliest publication on test preparation appeared in 1993, with the last decade witnessing a substantial increase in the number of published studies on this topic. Using a three-level coding scheme to examine the themes in each study, we identified 66 individual Level 3 themes within 16 Level 2 themes that were grouped into 3 main Level 1 themes (i.e., Impacts, Practices, and Perceptions). The largest number of studies fell into the Impacts theme, followed by the Practices and Perceptions themes. Almost one-half of the included studies belonged to “learner perceptions” (Level 2 theme), with other common Level 2 themes being “impact of test” and “learner practices.”

In terms of participant characteristics, 88% of all participants were learners, with their number in individual studies ranging from two to 14,593. Furthermore, Chinese was the most common L1 among learners and Mainland China was the most common geographical location for data collection, with most studies conducted at universities (48%) or language schools (36%). English was the target language in all 66 studies, and about two-thirds of the studies examined international English language proficiency tests, such as IELTS and TOEFL iBT, while almost one-third investigated national tests, such as the CET-4 in Mainland China.

Finally, our scoping review revealed that studies conducted within the qualitative research paradigm were the most prevalent (40%), followed by mixed-methods (33%) and quantitative studies (27%). Surveys were used in all but five studies, with interviews and questionnaires reported as data collection instruments in 68% and 62% of the studies, respectively. Thematic analysis was the most common qualitative data analytic method (67% of the studies), while descriptive statistics were reported in almost half of the included studies and group comparison analysis in one-third of them. Our review also revealed a multitude of statistical tests and procedures, ranging from fairly basic (e.g., paired-samples t-test) to more advanced (e.g., structural equation modeling).

Implications

Our examination of the individual themes across the 66 included studies revealed that test preparation is a multifaceted phenomenon: Most themes (40 out of 66) have each been examined in only a single study. The broad range of individual themes shows that researchers have embarked on disparate explorations and approached the issue from different angles, which carries implications for scholars delving further into this topic. When interpreting findings in the existing literature, scholars should be cautious about making generalizations, since most themes have not been explored in multiple studies. Consequently, there is a pressing need for research that revisits these individual themes to build a more informed understanding of the complex phenomenon of test preparation. Such replication research is essential for strengthening the validity and generalizability of existing findings (Porte & McManus, 2019), for instance, on the link between self-access test preparation activities and test performance in instructional or assessment contexts different from that of the original study (Knoch et al., 2020).

While research on test preparation lacks thematic homogeneity, there has been a noteworthy increase in research on this topic, especially over the last 10 years (with research published since 2013 accounting for 73% of the reviewed studies). This surge underscores the growing importance of test preparation in the field of language assessment, carrying implications for test developers. One such implication is that test preparation practices should be taken into consideration in test validation research that examines the impact of preparation on the assessment of language proficiency. Examining test preparation practices as part of validation research should extend to more local tests, such as institutional and regional language tests that, unlike well-established international language tests, are less likely to be the subject of multiple robust validation studies.

The exclusive focus on English tests in the current literature reflects the dominant role that English continues to play in the global language assessment industry (see, for instance, Isbell & Kremmel, 2020). This has implications for ESL and EFL teachers: Preparation for high-stakes English language tests has been and will most likely remain an integral part of language learning, especially in countries with exam-centric educational systems and institutionalized test-preparation markets, such as China, Japan, and South Korea (Ross, 2008). Therefore, ESL and EFL teachers working with L2 learners striving to prepare for specific English proficiency exams should seek a healthy balance between engaging learners in effective test-preparation practices and helping them improve their general English language proficiency.

Limitations

One limitation of this study pertains to our conceptualization of test preparation. As previously mentioned, a broad range of terms denote topics that are directly or closely associated with test preparation, such as coaching (e.g., Hu & Trenkic, 2021), teaching to the test (e.g., Menken, 2006), and test-wiseness (test-deviousness) or test-taking strategy training (e.g., Yu & Green, 2021). This lack of a standardized conceptualization of test preparation posed challenges in the search for studies relevant to our synthesis. To mitigate this challenge, we developed a comprehensive search strategy that encompassed an extensive range and various combinations of search terms (e.g., washback, backwash, test preparation, and coaching). Nonetheless, the variability in conceptualization may have prevented us from detecting all pertinent studies.

Another potential limitation concerns our approach to data coding. When coding the data to identify the main themes explored in the literature on test preparation (RQ2), we encountered differences in the nomenclature used by the authors of the included studies. As a result, we had to decide whether to code the data for a particular theme based on the authors’ original terminology (which at times was misleading and did not accurately reflect the phenomena under investigation) or to rely on our own interpretation of the theme when assigning codes. To minimize potential bias in our coding and analysis and to ensure high inter-coder reliability, all four authors of this scoping review independently coded the data for themes and then discussed the codes to reconcile any discrepancies and create a final list of themes. A similar issue arose when coding the data for RQ4: The authors of the included studies used different terms to refer to their research designs, data collection instruments, and methods of data analysis, which we had to reconcile. For instance, while a number of studies that reported using written “surveys” with Likert-scale items referred to them as data collection instruments, we coded these as “questionnaires” following Brown (2001) and Wagner (2015), reserving the term “survey” for the data collection method.

Finally, we did not investigate the methodological quality of the included publications in our scoping review. According to Peters et al. (2020), a formal appraisal of the methodological quality of the research studies included in a scoping review is generally not required. However, such an appraisal would have strengthened the quality of this synthesis by more systematically evaluating individual studies. It could have also provided a snapshot of the methodological strengths and shortcomings of the included studies as an indicator of the state of reporting on language test preparation. Adopting appropriate reporting guidelines to formally evaluate methodological quality would be warranted in any further use of systematic methods to investigate this topic (see, for instance, Isaacs & Chalmers, 2023).

Directions for future research

While this scoping review provided a comprehensive characterization of the extent and nature of research on test preparation and identified a variety of themes related to test preparation perceptions, practices, and impacts, our synthesis did not examine the specific knowledge that was gained about these themes in the included studies. Consequently, future research is needed to review the findings of the existing studies on test preparation in order to advance our understanding of how test preparation is perceived by the key stakeholders, what test preparation practices are commonly used, and what factors contribute to the effectiveness of test preparation.

Our scoping review has also revealed a need for experimental studies that use more advanced statistical tests and procedures to investigate test preparation practices and their impact on L2 learners’ test performance. By employing well-designed experiments and harnessing the power of analytic techniques that align with the RQs, future studies will be able to explore complex relationships among different variables related to test preparation, provide deeper insights into the data, uncover hidden patterns, and enhance the validity of the research findings and their interpretation.

Further research is also warranted to address the gaps that we identified in the literature on test preparation. For instance, more empirical studies should explore a broader range of tests than those examined in the included studies, with attention to their geographical distribution. While the majority of studies in this scoping review (approximately 67%) focus on high-stakes international tests, such as IELTS (k = 21) and TOEFL iBT (k = 13), it is essential to investigate other tests, such as national and regional tests, given that some of them also have a substantial number of test-takers. For example, the CET-4, a national English proficiency exam in Mainland China administered to undergraduate students (with the exception of English majors), warrants more thorough investigation. The CET-4 was taken by 13 million students in 2006 (Zheng & Cheng, 2008), compared with a record 3.5 million test-takers reported by IELTS in 2018 (IELTS, 2019). This example highlights the importance of gathering additional empirical evidence on a wider range of tests to gain a deeper understanding of test preparation in L2 contexts. Moreover, additional empirical research on proficiency tests of languages other than English is imperative: English was the sole target language across all 66 studies in this scoping review. Researchers across various areas of applied linguistics, such as Gillespie (2020) in computer-assisted language learning and Dalman and Plonsky (2022) in L2 listening strategy instruction, have increasingly emphasized the urgent need for research examining a more extensive array of languages, including less commonly taught languages.

Finally, future research should investigate the influence of the COVID-19 pandemic and online language proficiency tests on test preparation practices, perceptions, and impacts. The landscape of post-secondary admissions and language proficiency testing has undoubtedly been altered since the onset of the pandemic (Ockey, 2021), with the closing of testing centers in 2020 necessitating the swift development and uptake of digital, at-home language tests such as the Duolingo English Test (DET; Isbell & Kremmel, 2020). Although the literature on relatively novel online proficiency tests such as the DET is still rather scarce, the importance of test preparation practices and their relevance to the interpretation of scores from such tests has already been recognized by some researchers (e.g., Isaacs et al., 2023). Given the dearth of investigations into the online language test preparation industry, scholars are left to speculate about the potential washback of assumed test preparation practices on score validity (e.g., Wagner, 2020). Therefore, there is a clear need for future research to examine the perceptions, practices, and impacts of test preparation for new digital at-home language tests compared with more established language proficiency tests administered at testing centers.

Supplemental Material

sj-xlsx-1-ltj-10.1177_02655322241249754 – Supplemental material for A scoping review of research on second language test preparation

Supplemental material, sj-xlsx-1-ltj-10.1177_02655322241249754 for A scoping review of research on second language test preparation by Shanshan He, Anne-Marie Sénécal, Laura Stansfield and Ruslan Suvorov in Language Testing

Footnotes

Appendix

Frequency counts of the three levels of codes representing the Themes variable.

| Level 1 | Level 2 | Level 3 | No. of studies |
|---|---|---|---|
| Perceptions | Admin perceptions | Admin perceptions of test prep | 1 |
| | Learner perceptions | Learner perceptions of test prep (courses, practices, and strategies) | 15 |
| | | Learner perceptions of test | 9 |
| | | Learner perceptions of teaching method in test prep course | 1 |
| | | Learner perceptions of technologies for testing | 1 |
| | | Learner perceptions of test impact on learning | 1 |
| | | Learner perceptions of test prep outcomes | 1 |
| | | Learner perceptions of questioning skills in test prep course | 1 |
| | | Learner perceptions of usefulness of feedback for test prep | 1 |
| | Teacher perceptions | Teacher perceptions of test prep (courses, practices, and strategies) | 9 |
| | | Teacher perceptions of test | 3 |
| | | Teacher perceptions of questioning skills in test prep course | 1 |
| | | Teacher perceptions of test impact on language proficiency | 1 |
| | | Teacher perceptions of usefulness of feedback for test prep | 1 |
| Practices | Learner practices | Learner test prep practices | 13 |
| | | Learner test prep strategies | 4 |
| | | Learner test-taking strategies | 2 |
| | | Changes in learner test prep practices over time | 1 |
| | Teacher practices | Teacher test prep practices | 9 |
| | Parental practices | Parental involvement | 1 |
| | Characteristics | Characteristics of test prep courses | 8 |
| | | Comparison of test prep and non-test prep courses | 6 |
| | | Characteristics of test prep course materials | 1 |
| | | Characteristics of test prep resources | 1 |
| | | Characteristics of participants in test prep courses | 1 |
| | Factors | Factors affecting learner test prep (practices, strategies) | 5 |
| | | Factors affecting teacher practices in test prep courses | 2 |
| | | Factors affecting learner self-efficacy | 1 |
| | Practice-based relationships | Relationship between test prep practices and test | 2 |
| | | Relationship between test prep and self-efficacy | 1 |
| | | Relationship between test prep practices and stakeholder perceptions | 1 |
| | | Relationship between test-taking strategies and test anxiety | 1 |
| Impacts | Impact of perceptions | Impact of learner perceptions of test on test prep practices | 4 |
| | | Impact of learner perceptions of test on test scores | 1 |
| | | Impact of teacher perceptions on test prep courses | 1 |
| | Impact of practices | Impact of strategy use on test scores | 3 |
| | | Impact of Dynamic Assessment on language development and test prep | 1 |
| | | Impact of strategy instruction on strategy use | 1 |
| | | Impact of strategy instruction on test scores | 1 |
| | | Impact of teaching method on test scores | 1 |
| | Impact of individual characteristics | Impact of learner characteristics (motivation) on test prep practices | 3 |
| | | Impact of teacher characteristics on test prep practices | 1 |
| | Impact of test | Impact of test on teaching | 9 |
| | | Impact of test on learning | 7 |
| | | Impact of test on teaching methodology | 3 |
| | | Impact of test on test prep strategies | 3 |
| | | Impact of test on teaching content | 2 |
| | | Impact of test on test prep practices | 2 |
| | | Impact of test on employability | 1 |
| | | Impact of test on test-taking strategies | 1 |
| | Impact of test prep courses | Impact of test prep courses on test scores | 8 |
| | | Impact of test prep courses on language proficiency | 4 |
| | | Impact of non-test prep courses on test scores | 1 |
| | | Impact of test prep course materials on teaching | 1 |
| | | Impact of test prep courses on learner motivation | 1 |
| | | Impact of test prep courses on learner practices | 1 |
| | | Impact of test prep courses on learner perceptions of learning | 1 |
| | Impact of test preparation | Impact of test prep on test scores | 9 |
| | | Impact of test prep on language proficiency | 2 |
| | | Impact of test prep on test anxiety | 1 |
| | | Impact of test prep on learning | 1 |
| | | Impact of test prep on teaching | 1 |
| | | Impact of test prep on test-taking strategies | 1 |
| | Impact-based relationships | Relationship between test scores and academic outcomes | 1 |
| | | Relationship between test scores and number of test-taking attempts | 1 |
| | | Comparison of test scores from test prep course participants and non-test prep course participants | 1 |

Acknowledgements

The authors would like to thank Dr. Talia Isaacs and the three anonymous reviewers for their insightful comments and constructive feedback that have been instrumental in shaping and refining this manuscript.

Author Contribution

Declaration of conflicting interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Ruslan Suvorov currently serves as Associate Editor of Language Testing. He was blinded to the manuscript in the ScholarOne online submission platform and Dr. Talia Isaacs managed all stages of its processing as handling editor. The remaining co-authors declared no potential conflicts of interest with respect to the research and authorship of this study.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

References
