Abstract

Background:

The Trail Making Test (TMT) is a widely used neuropsychological test. The structure of the paper-and-pencil TMT lends itself well to adaptation as a mobile app. The current study aimed to develop and validate an Android-based tablet version of the TMT.

Methods:

The application (TMT App) was developed using an Android-based platform. Healthy and depressed individuals (n = 133) were assessed on both the TMT versions (paper-based version and app-based version) in a random cross-over design. The device’s usability was ascertained using the system usability scale (SUS) in a subset of individuals (n = 65).

Results:

There was a significant positive correlation between the individual processing times for the paper-based TMT-A and the app-based TMT-A in both healthy and depression groups [r(63) = 0.55, p < .001; and r(66) = 0.77, p < .001, respectively]. The individual processing times of the paper-based TMT-B and the app-based TMT-B also showed a significant positive correlation in both healthy control and depression groups [r(63) = 0.67, p < .001; and r(66) = 0.89, p < .001, respectively]. There was a positive correlation of age with TMT-A and TMT-B for either version. Both groups had similar positive responses to the usability of the TMT App.

Conclusion:

The preliminary validation results for the TMT App suggest that it is significantly correlated with existing paper-and-pencil methods, and that it is user friendly.

Key Messages:

The digital TMT version (TMT App) is a valid and reliable tool for measuring cognitive performance. TMT App is significantly correlated with existing paper-and-pencil methods. The processing time for test completion of both TMT-A and TMT-B in depressed individuals is longer as compared to healthy individuals using TMT App, similar to the paper-based version.

Cognitive tests are used to evaluate an individual’s cognitive functioning, including but not limited to auditory, visual, perceptual, motor, and episodic memory. These were primarily developed as manually administered paper-and-pencil/pen-based tests. While they are beneficial in conjunction with other mental or physical examinations and brain imaging to determine cognitive impairments, they are susceptible to variability due to test administration or procedures. Digital or computerized tests can address this aspect of variability and maintain consistency and standardization in procedure across participants. 1 Digitalized tests can also capture more high-fidelity data to increase performance measurement accuracy and facilitate further in-depth analysis. Researchers have found computer-based testing methods more sensitive to subtle awareness deficits than paper-based alternatives. 2 There has been a recent rise in digitalized applications for evaluating cognitive functioning utilizing mobile/tablet platforms or computerized versions. Using inter- and intra-person consistency, the utility of such digital tests has been compared against conventional manually administered methods. 3 Better cognitive evaluation strategies can benefit clinicians and patients, providing an opportunity for a minimal sample size to capture subtle deficits in randomized controlled trials (RCTs). 4

The Trail Making Test (TMT) is a famous and frequently used neuropsychological test because of its simplicity, good sensitivity, and ease of administration. 5 It was created initially to assess general intelligence as a part of the Army Individual Test Battery; it was subsequently modified and included in the Halstead–Reitan Neuropsychological Battery.6,7 It is frequently used as a diagnostic instrument to detect cognitive deficits in stroke, traumatic brain injuries, and dementia.8,9

The existing version of the TMT is manually administered and consists of only one form. 10 The test structure is highly apt for being adapted to a mobile app by using a finger or stylus on a touch screen, replacing pen and paper in the conventional version. Like the trail made with a pen on paper, a line could be drawn on the screen following the participant’s touch. Instead of the examiner calculating the test’s rules, the app could detect it automatically. Such digitalization can generate unlimited TMT screen arrangements using various algorithmic approaches.

Several digital variants of the TMT have been reported in the literature. The versions have been developed on computers,1,11-13 digital pens, 14 fMRI machines,15,16 or mobile/tablet platforms based on iOS,17-19 online/Chrome, 20 or Android software.3,19,21 The computerized versions of TMT (eTrails, iTMT, C-TMT, etc.) have reported higher reliability than Reitan’s TMT.1,12 The digital versions are considered better because they involve fewer administrative errors and capture more high-fidelity data, thereby increasing the measurement accuracy. Some of these are incorporated as a part of a cognitive assessment battery. Only a few are mobile/tablet-based versions, and none are available in the public domain, as the paper-and-pencil version is. The current study aimed to develop and validate a tablet-based version of TMT on the Android platform.

Methods

Participants

Individuals attending psychiatry outpatient services at a tertiary care hospital in North India and hospital staff were included in this cross-sectional study, which used convenience sampling and a randomized cross-over design and was conducted from October 2021 to February 2023. Individuals aged between 18 and 55 years with the ability to read the English language and provide informed consent were included, while those with a history of any significant head trauma, chronic neurological or other physical illness, and substance abuse (except nicotine and caffeine) were excluded from the study. Individuals fulfilling the criteria of depressive disorder as per the Diagnostic and Statistical Manual-5 (DSM-5) were included in the depression group (individuals with depression). Healthy control (HC) participants were chosen from family members (nonbiological) or friends of patients attending the psychiatry outpatient services and hospital staff. The study was approved by the Institute Ethical Committee before the study was conducted (IEC-663/03.09.2021 dated 06-09-2021).

Instruments

A semi-structured interview sheet: It consisted of a socio-demographic profile and clinical details. The socio-demographic profile contained information regarding age, gender, marital status, education, contact details, residential status, etc. The clinical details included the age of illness onset, total duration of illness, family history of psychiatric illness, and medications received (with dosage).

Montgomery–Åsberg Depression Rating Scale (MADRS): This clinician-rated scale for assessing depression severity consists of 10 items; each item is rated on a scale of 0–6, resulting in a maximum total score of 60 points, with higher scores indicative of more significant depressive symptomatology. 22 The scoring instructions indicate that a total score ranging from 0 to 6 indicates that the patient is in the normal range (no depression), a score ranging from 7 to 19 indicates “mild depression,” a score ranging from 20 to 34 indicates “moderate depression,” and a score of 35 and greater indicates “severe depression.” A total score of 60 indicates “very severe depression.” While the Hamilton Depression Rating Scale (HAMD) includes items that address somatic symptoms, the MADRS focuses on the psychological symptoms of depression (e.g., sadness, tension, and pessimistic thoughts). 23

Trail Making Test (TMT): It has been associated with measures of speed, visuospatial skills, general fluid cognitive abilities, cognitive flexibility, set-switching, motor skills, and dexterity. 24 It consists of two parts. Part A requires an individual to draw lines sequentially connecting 25 encircled numbers distributed on a sheet of paper as quickly as possible without lifting the pen. Task requirements are similar for part B, where the individual must alternate between numbers and letters (e.g., 1, A, 2, B, 3, C, … 13). While part A principally estimates visuo-perceptual abilities, part B mainly reflects working memory and secondarily task-switching ability. When the performance on part A is subtracted from that on part B, visuo-perceptual and working memory demands are minimized, providing a better indicator of executive functioning. 25 The total time taken to complete each part provides the test score. If the participant cannot complete the test within 300 seconds, the score is 301 seconds. In the paper-based test version, the participant uses a pen/pencil to connect the circles containing the numbers or letters on white paper. The examiner/researcher scores the test manually, using the overall time taken and the number of errors made by the participant. The Android-based TMT App was developed on a mobile/tablet using a similar display and usability format.
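The scoring rules described above can be illustrated with a minimal Python sketch. It is not the TMT App's code; the function names are hypothetical, and the assumption that part B also has 25 targets (1 to 13 alternating with A to L) is inferred from the example sequence ending at 13.

```python
from string import ascii_uppercase
from typing import List, Optional

TIME_LIMIT_S = 300    # cut-off stated in the text
CAPPED_SCORE_S = 301  # score assigned when the cut-off is exceeded


def part_a_sequence(n_targets: int = 25) -> List[str]:
    """Targets for part A: the numbers 1..n_targets in ascending order."""
    return [str(i) for i in range(1, n_targets + 1)]


def part_b_sequence(n_targets: int = 25) -> List[str]:
    """Targets for part B: numbers and letters alternating (1, A, 2, B, ...).

    Assumption: 25 targets, so the sequence ends at 13.
    """
    sequence = []
    for i in range(n_targets):
        if i % 2 == 0:
            sequence.append(str(i // 2 + 1))          # 1, 2, 3, ...
        else:
            sequence.append(ascii_uppercase[i // 2])  # A, B, C, ...
    return sequence


def tmt_score(completion_time_s: Optional[float]) -> float:
    """Score for one part in seconds; None means the part was not completed."""
    if completion_time_s is None or completion_time_s > TIME_LIMIT_S:
        return CAPPED_SCORE_S
    return completion_time_s


def b_minus_a(score_a: float, score_b: float) -> float:
    """Difference score (part B minus part A) used as an executive-function index."""
    return score_b - score_a
```

For instance, `part_b_sequence()` begins with `1, A, 2, B` and ends with `12, L, 13`, matching the alternation described for part B.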

System Usability Scale (SUS): It is a quick and reliable tool designed for measuring the usability of any system. It is a 10-item questionnaire assessing participant responses on a Likert scale, from strongly agree to strongly disagree. It appraises various products and services, including software like websites or applications and hardware like mobile devices. 26
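The study does not spell out how the SUS total was computed. The sketch below shows the conventional SUS scoring, under the assumption that responses are coded 1 (strongly disagree) to 5 (strongly agree): odd-numbered (positively worded) items contribute the response minus 1, even-numbered (negatively worded) items contribute 5 minus the response, and the sum is multiplied by 2.5 to give a 0 to 100 score. The function name is hypothetical.

```python
from typing import Sequence


def sus_score(responses: Sequence[int]) -> float:
    """Conventional SUS total (0-100) from ten responses coded 1..5."""
    if len(responses) != 10:
        raise ValueError("SUS requires exactly 10 item responses")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("each response must be an integer from 1 to 5")

    total = 0
    for item_number, response in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += response - 1   # positively worded items (Q1, Q3, ...)
        else:
            total += 5 - response   # negatively worded items (Q2, Q4, ...)
    return total * 2.5
```

A respondent who answers 3 to every item scores 50, while the most favorable pattern (5 on odd items, 1 on even items) scores 100.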

Study Procedure

Phase I—Development of TMT App

The app development phase commenced with a technology review of the available multi-platform frameworks. The application was then developed for the Android platform with the open-source software Android Studio, which is the official integrated development environment (IDE) for Google’s Android operating system, built on JetBrains’ IntelliJ IDEA software and designed specifically for Android development (

Instead of designing a new cognitive test, the TMT App was created to look like the original test to determine initial convergent validity with the paper-based version. The capacitive touch screen displayed numbers and letters for TMT parts A and B in the format of the original paper-based version. Each number or letter was mapped to individualized sensor points. The initial screen asks the user to enter basic information about themselves (including name or any identification number, age, gender, and phone number), which is recorded in a folder in the download section of the tablet. After pressing the “Enter” button, the user moves to the next screen, where they are asked to take TMT part A or B. The individual screens for both parts first present the instructions for the test and offer the option of a practice session before the test session. In either session, the user starts at the lowest number, “1,” and then, without lifting the finger, glides over the screen to the next number/letter according to the logic of part A or B. A line is displayed following the finger as it slides over the screen from one number/letter to another. The displayed line remains connected if the user joins two consecutive correct numbers/letters. Otherwise, the line sticks to the user’s fingertip, indicating that the number/letter reached was incorrect, so that the user may try another number/letter. Once all the numbers/letters are connected, the user may lift the finger to touch the “Done” button on the screen, after which the user is taken back to the previous screen for further testing or for viewing results. The result screen displays the user details (name, age, gender) and the test scores as the total time taken to complete TMT A and TMT B.
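The connection logic described above can be sketched as a small state machine. This Python sketch is only an illustration and not the app's Android source: the class names, the circular hit area, the 40-pixel radius, and the error counter are all hypothetical assumptions.

```python
import math
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class Target:
    label: str
    x: float
    y: float
    radius: float = 40.0  # hypothetical hit radius in pixels


@dataclass
class TrailSession:
    targets: List[Target]  # listed in the order they must be connected
    next_index: int = 0    # position of the next expected target
    errors: int = 0        # hypothetical counter; the app reports time only
    locked_segments: List[Tuple[str, str]] = field(default_factory=list)
    _last_hit: Optional[str] = None

    def _target_at(self, x: float, y: float) -> Optional[int]:
        """Index of the target under the finger, or None if over empty space."""
        for index, target in enumerate(self.targets):
            if math.hypot(x - target.x, y - target.y) <= target.radius:
                return index
        return None

    def on_touch(self, x: float, y: float) -> str:
        """Process one touch sample; returns 'correct', 'incorrect', or 'none'."""
        index = self._target_at(x, y)
        if index is None:
            self._last_hit = None
            return "none"
        label = self.targets[index].label
        if label == self._last_hit:
            return "none"  # finger is still inside the same circle
        self._last_hit = label
        if index == self.next_index:
            if index > 0:
                # The line between the previous and current target stays fixed.
                self.locked_segments.append((self.targets[index - 1].label, label))
            self.next_index += 1
            return "correct"
        if index < self.next_index:
            return "none"  # passing back over an already connected target
        self.errors += 1   # wrong target: the line keeps following the finger
        return "incorrect"

    @property
    def is_complete(self) -> bool:
        return self.next_index == len(self.targets)
```

In a real app, `on_touch` would be driven by the platform's touch-move events, and a timer started on the first correct hit would supply the processing time.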

Phase II—Validation of TMT App

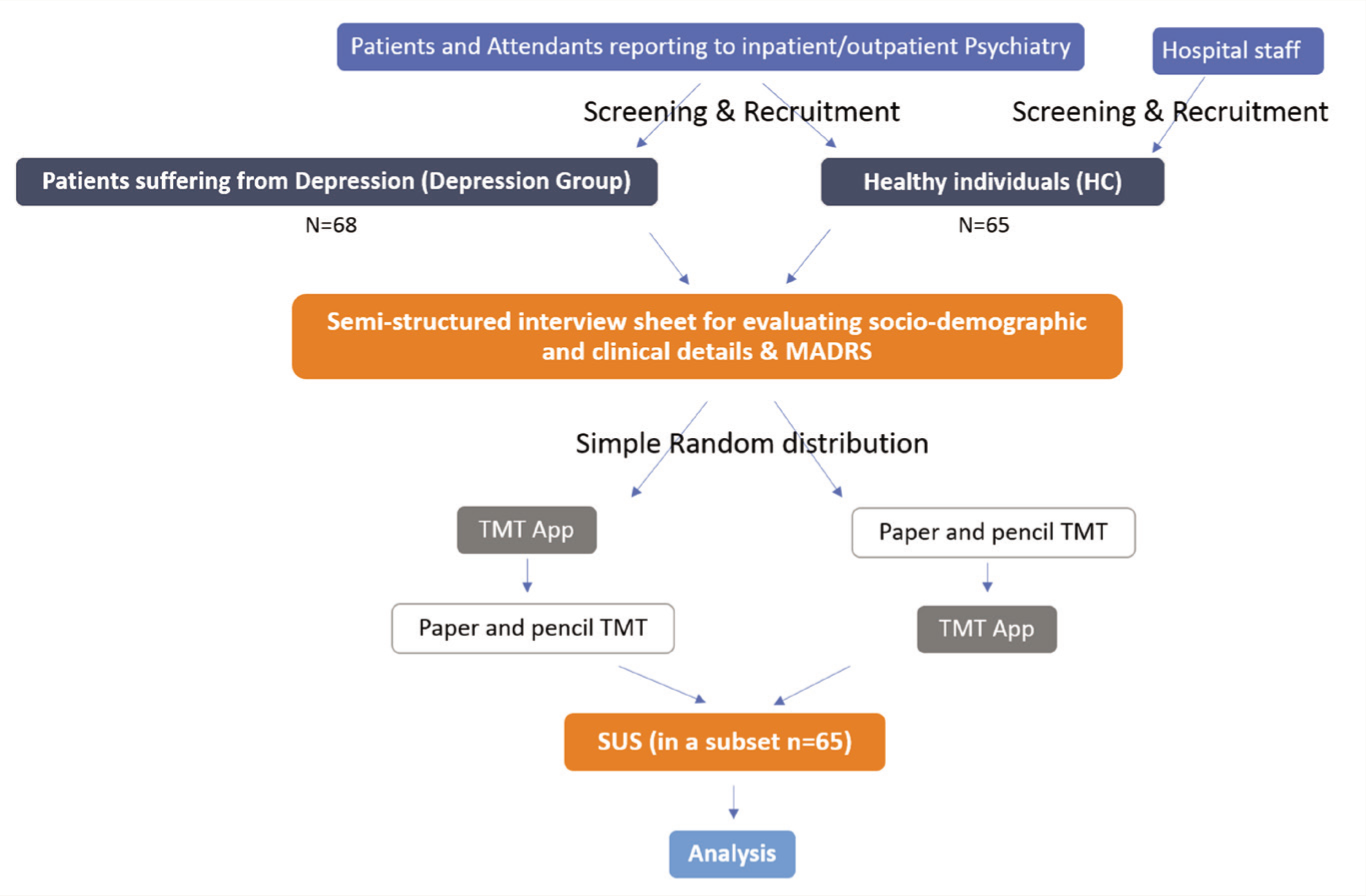

The investigator screened the participants for psychiatric co-morbidity and physical, neurological, and substance use co-morbidity by history and clinical examination. MADRS was applied for depression group participants only. All participants (n = 133) were assessed on both the TMT versions (paper-based version and app-based version) in a random (using a web-based random number generator) cross-over design, wherein about half of the participants received the paper-based version initially followed by the app-based version later with an inter-test interval of about 30 min. In contrast, the other half of the sample population received an opposite order of test administration (Figure 1). This was done to avoid the learning effect of the test. The device’s usability was ascertained using SUS in a subset of individuals (n = 65).

Figure 1. Study Flow.
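The random allocation of test order can be illustrated as follows. The study used a web-based random number generator; this sketch substitutes Python's standard `random` module, and the function name, order labels, and seed are hypothetical. Simple (unrestricted) randomization is assumed, which yields roughly, but not exactly, equal halves.

```python
import random
from typing import Dict, Hashable, Iterable, Optional, Tuple

# The two possible administration orders in the cross-over design.
ORDERS: Tuple[Tuple[str, str], ...] = (("paper", "app"), ("app", "paper"))


def assign_orders(
    participant_ids: Iterable[Hashable], seed: Optional[int] = None
) -> Dict[Hashable, Tuple[str, str]]:
    """Randomly assign each participant one of the two test orders."""
    rng = random.Random(seed)  # a fixed seed makes the allocation reproducible
    return {pid: rng.choice(ORDERS) for pid in participant_ids}
```

Calling `assign_orders(range(133), seed=7)` returns one order per participant; block randomization would be an alternative if exactly balanced arms were required.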

Analysis

Data analysis was performed using Statistical Product and Service Solutions v. 29.0 (SPSS Inc., USA). A value of p < .05 was considered statistically significant. Data was presented as mean ± standard deviation for quantitative variables and frequencies (percentage) for categorical variables. Data was found to be normally distributed using the Shapiro–Wilk test. Therefore, parametric tests were carried out for within-group and between-group analysis depending on the variables of concern. The Pearson product-moment correlation was used to assess the correlations. The effect strength was measured according to the Cohen conventions: >0.2 as a small effect, >0.5 as a medium effect, and >0.8 as a large effect. 27
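As an illustration of the correlation analysis, the sketch below computes a Pearson product-moment correlation with its degrees of freedom (n − 2, which gives the r(63) and r(66) notation for groups of 65 and 68) and labels the effect using the thresholds stated above. The analysis itself was run in SPSS; the function names and the "negligible" label for values at or below 0.2 are assumptions made for this example.

```python
import math
from typing import Sequence, Tuple


def pearson_r(x: Sequence[float], y: Sequence[float]) -> Tuple[float, int]:
    """Return (r, degrees of freedom) for paired observations x and y."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("x and y must have the same length of at least 3")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    s_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    s_xx = sum((a - mean_x) ** 2 for a in x)
    s_yy = sum((b - mean_y) ** 2 for b in y)
    if s_xx == 0 or s_yy == 0:
        raise ValueError("correlation is undefined for constant data")
    return s_xy / math.sqrt(s_xx * s_yy), n - 2


def effect_label(r: float) -> str:
    """Label the effect strength using the thresholds stated in the text."""
    magnitude = abs(r)
    if magnitude > 0.8:
        return "large"
    if magnitude > 0.5:
        return "medium"
    if magnitude > 0.2:
        return "small"
    return "negligible"  # assumed label; the text does not name this range
```

Under these thresholds, the reported correlations of 0.55, 0.67, and 0.77 would be labelled medium and 0.89 large.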

Results

The study included 65 (48.9%) healthy controls and 68 (51.1%) individuals with depression. The groups were similar for mean age distribution (35.49 ± 10.75 and 34.35 ± 10.67 years, respectively, t = 0.61, p = .54) and gender distribution, with more male than female participants (male:female = 41:24 and 40:28, respectively, χ2 = 0.25, p = .72).

The first use of app-based or paper-based versions was equally distributed in the overall sample (52.6% and 47.4%, respectively; p = .61). However, there was more first use of the app-based version in HC and paper-based version in the depression group (paper-based:app-based = 24:41 in HC and 39:29 in the depression group, χ2 = 5.56, p = .02).

Research question 1: Was the processing time of the paper-based and app-based versions of TMT different?

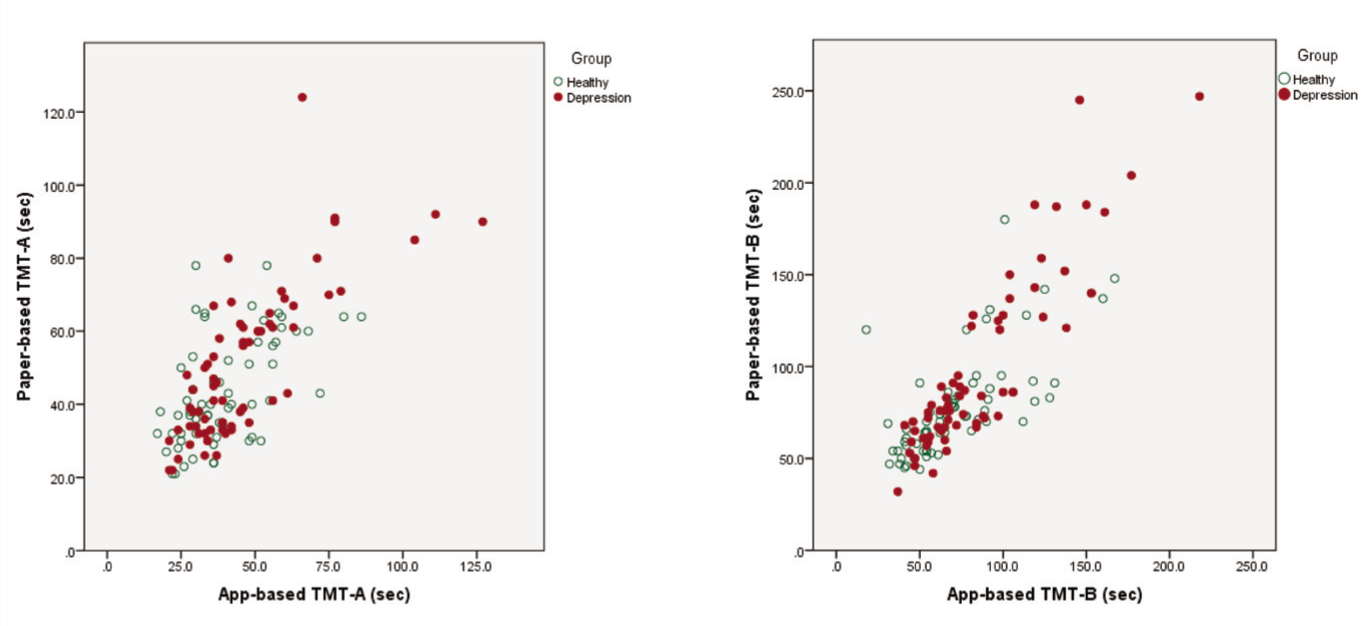

The construct validity of the Android app-based TMT was measured by correlating the times the same participant took to complete the app-based and the paper-based versions (Figure 2). The individual processing times of the paper-based TMT-A and the app-based TMT-A showed a significant positive correlation in both HC and depression groups [r(63) = 0.55, p < .001; and r(66) = 0.77, p < .001, respectively]. There was also a significant positive correlation between the individual processing times for the paper-based TMT-B and the app-based TMT-B in both HC and depression groups [r(63) = 0.67, p < .001; and r(66) = 0.89, p < .001, respectively]. A longer time to completion of the paper-based TMT-A or TMT-B was associated with longer digital execution, with a medium to large effect size.

Figure 2. Correlations of Processing Times of Paper-based and App-based Versions of the TMT-A and TMT-B in Both Healthy and Depression Groups.

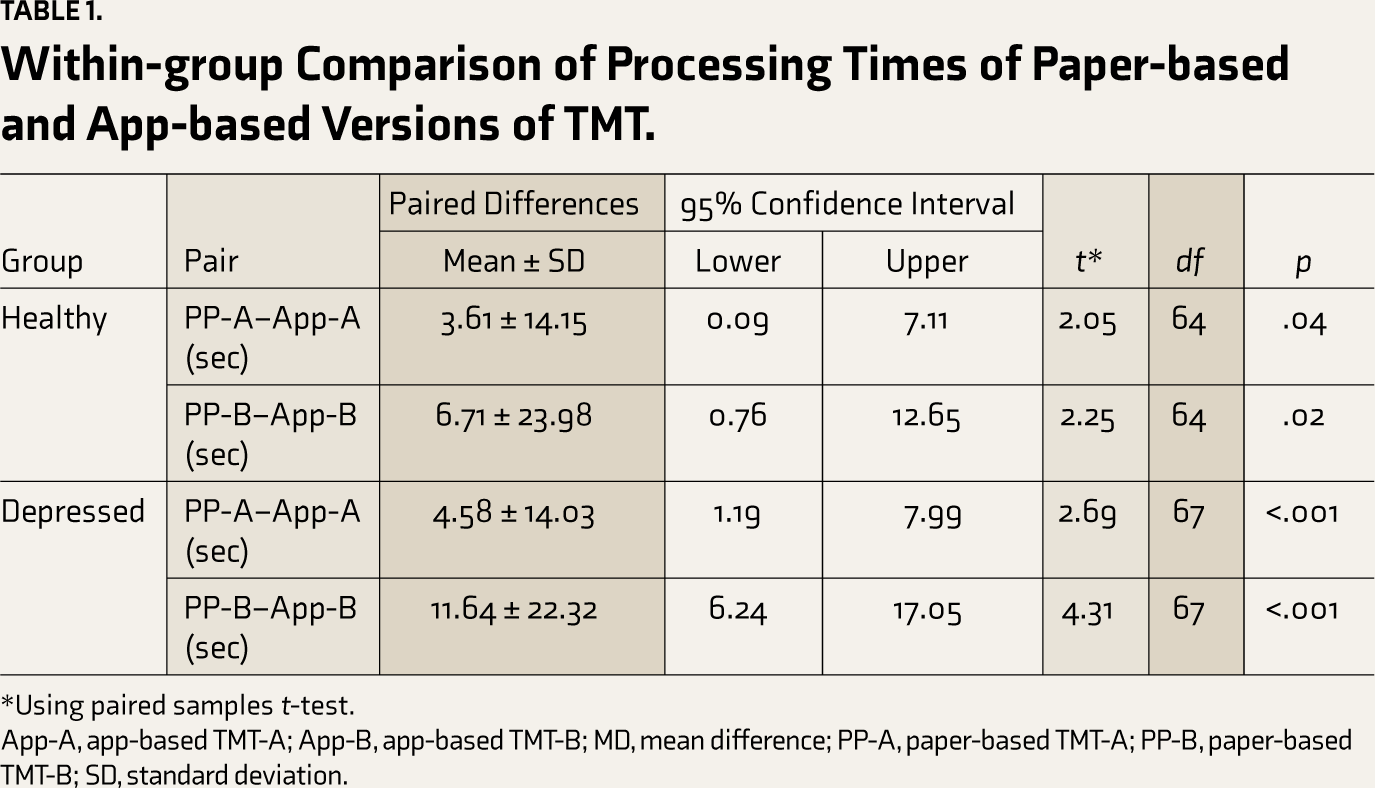

For both TMT-A and TMT-B, the participants in both groups needed significantly more processing time for the paper-based version than for the app-based version (Table 1).

Table 1. Within-group Comparison of Processing Times of Paper-based and App-based Versions of TMT.

*Using paired samples t-test.

App-A, app-based TMT-A; App-B, app-based TMT-B; MD, mean difference; PP-A, paper-based TMT-A; PP-B, paper-based TMT-B; SD, standard deviation.

Research question 2: Was age or gender an influence on processing time in paper-based or app-based versions of TMT?

There was a positive correlation of age with TMT-A and TMT-B scores for the paper-based version in HC (r = 0.31, p = .01 and r = 0.25, p = .04) as well as the depression group (r = 0.51, p < .001 and r = 0.45, p < .001). Similarly, there was a positive correlation of age with TMT-A and TMT-B scores for the app-based version in HC (r = 0.37, p = .002 and r = 0.37, p = .002) as well as the depression group (r = 0.41, p = .001 and r = 0.53, p < .001). All correlations of the different versions can be classified as having small to medium effect sizes. Gender did not influence the processing times in TMT-A or TMT-B in either the paper-based or the app-based version.

Research question 3: How was the usability of the app-based version? Was there a connection between an individual evaluation of the app-based version and the processing time of the TMT versions?

The SUS was applied in a subset of individuals in HC [n = 28 (43.1%)] and depression group [n = 37 (56.9%)]. As in the overall group, the subset showed no intergroup differences in gender and age distribution. The first use of the paper-based version was more frequent than that of the app-based version in the overall subset sample [49 (75.4%) and 16 (24.6%), respectively, p ≤ .001]. However, the distribution of first use was comparable in HC and depression groups (ratio of the first use of paper-based version:first use of app-based version was 18:10 in HC vs 31:6 in the depression group, χ2 = 3.26, p = .08). There was also a significant positive correlation between the individual processing times for the app-based and paper-based TMT-A, and for the app-based and paper-based TMT-B, in both HC and depression groups.

The total SUS scores did not correlate significantly with the app-based TMT-A or TMT-B processing times in either group. However, the total SUS scores correlated negatively with the mean difference (paper vs. app) in processing times for TMT-A (r = −0.49, p < .01) but not for TMT-B (r = 0.07, p = .69) in the HC group. No significant correlation was observed for the total SUS scores with the mean difference (paper vs. app) in processing times for TMT-A or TMT-B (r = 0.14, p = .39 and r = −0.23, p = .17) in the depression group.

Research question 4: Do healthy individuals differ from individuals suffering from depression for processing times of the app-based and paper-based versions of TMT?

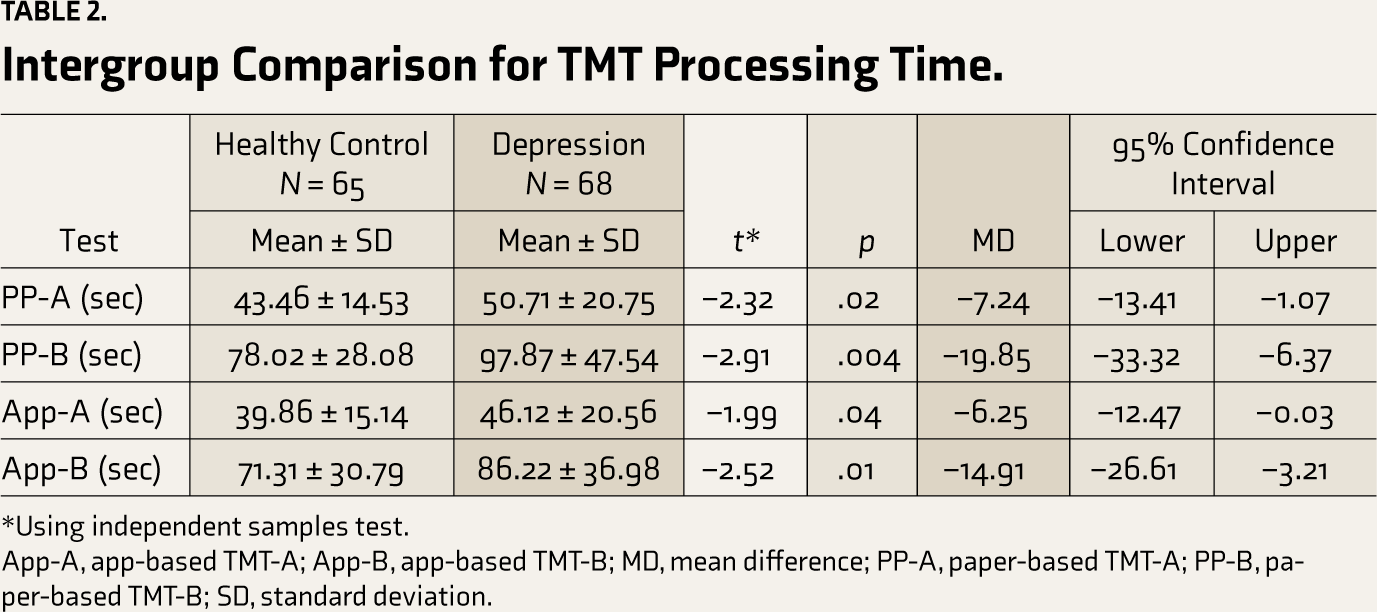

In the overall sample, the duration of test completion was significantly longer in the depression group than in the HC group for both paper-based and app-based TMT-A and TMT-B (Table 2). In the SUS subset, the processing time for the test was also significantly longer in the depression group than in the HC group for both paper-based and app-based TMT-A and TMT-B.

Table 2. Intergroup Comparison for TMT Processing Time.

*Using independent samples t-test.

App-A, app-based TMT-A; App-B, app-based TMT-B; MD, mean difference; PP-A, paper-based TMT-A; PP-B, paper-based TMT-B; SD, standard deviation.

Within-group analysis found significantly less processing time with app-based TMT-A and TMT-B than with paper-based TMT-A and TMT-B, respectively (Table 1). The differences were more strongly significant in the depression group (p < .001) than in the HC group (p < .05), which could reflect the difference between the groups in which TMT version was used first.

Research question 5: Does the severity of depression affect the processing times of the app-based and paper-based versions of TMT? Is TMT-A or TMT-B a good predictor of discriminating healthy individuals from depressed individuals?

In the depression group, the mean score of MADRS was 23.97 ± 9.79, with most individuals having moderate depression. None of the paper-based or app-based versions of TMT-A and TMT-B processing times were correlated significantly to MADRS scores.

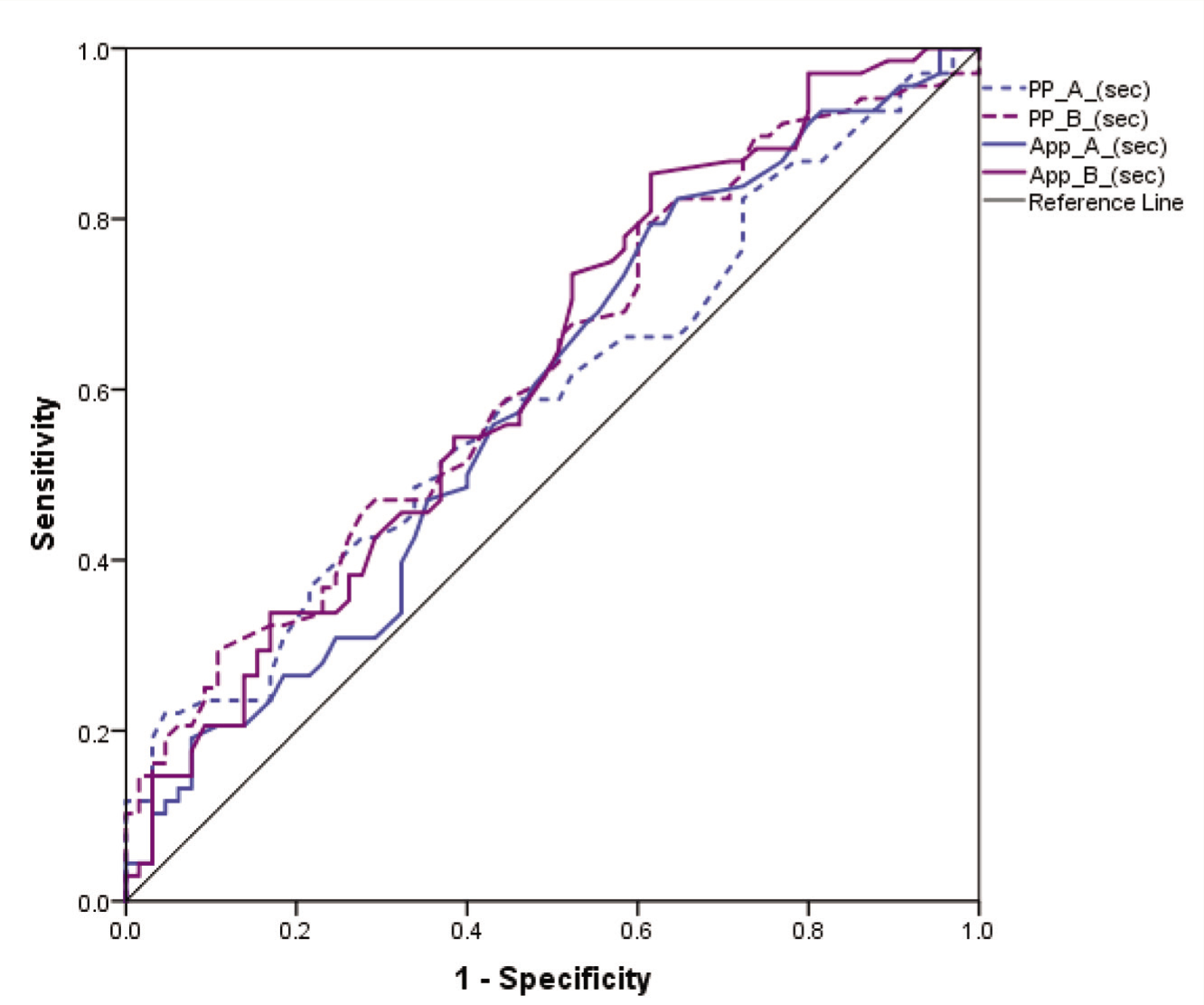

The area under the curve (AUC) values were similar but small for both the paper-based and app-based versions for processing times of TMT-A (59.1% and 59.0%) as well as TMT-B (62.3% and 62.4%) in discriminating healthy individuals from depressed individuals (Figure 3).

App-A, app-based TMT-A; App-B, app-based TMT-B; MD, mean difference; PP-A, paper-based TMT-A; PP-B, paper-based TMT-B.
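To make the AUC values concrete, the sketch below computes the area under the ROC curve through its rank-based (Mann–Whitney) formulation: the probability that a randomly chosen individual from the depression group has a longer processing time than a randomly chosen healthy individual, with ties counted as one half. The study's ROC analysis was run in SPSS; the function name here is hypothetical, and the direction (longer time indicates depression) is an assumption consistent with the group differences reported above.

```python
from typing import Sequence


def auc_from_times(
    depression_times: Sequence[float], healthy_times: Sequence[float]
) -> float:
    """AUC for discriminating groups when longer times indicate depression."""
    if not depression_times or not healthy_times:
        raise ValueError("both groups need at least one observation")
    wins = 0.0
    for d in depression_times:
        for h in healthy_times:
            if d > h:
                wins += 1.0   # depressed participant was slower
            elif d == h:
                wins += 0.5   # ties count as half
    return wins / (len(depression_times) * len(healthy_times))
```

An AUC of 0.5 corresponds to chance-level discrimination and 1.0 to perfect separation, so values near 0.59 to 0.62 indicate only modest discrimination.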

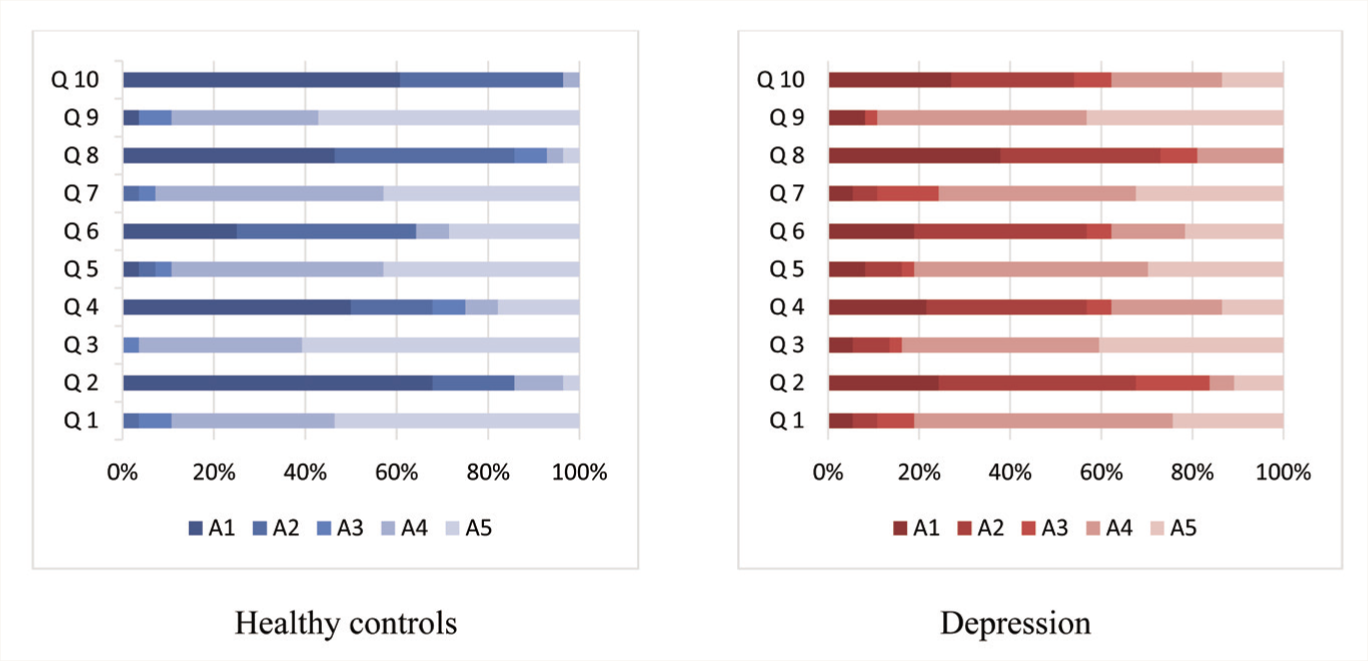

Research question 6: Do healthy individuals differ from individuals suffering from depression in evaluating the app-based version’s usability?

Both groups had similar positive responses to the usability of the app-based version (Figure 4). The mean SUS score was above the average benchmark of 68 in the healthy group and approximately at that benchmark in the depression group, and it was significantly higher in the healthy group than in the depression group [80.01 ± 11.51 (Grade B) and 67.97 ± 14.98 (Grade D), respectively; p = .001, t = 3.52, MD = 12.02, 95% CI = 5.21–18.83]. Thus, it was a good grade in healthy individuals and an okay/fair grade in the depression group.

(Q1: I think that I would like to use this system frequently, Q2: I found the system unnecessarily complex, Q3: I thought the system was easy to use, Q4: I think that I would need the support of a technical person to be able to use this system, Q5: I found the various functions in this system were well integrated, Q6: I thought there was too much inconsistency in this system, Q7: I would imagine that most people would learn to use this system very quickly, Q8: I found the system very cumbersome to use, Q9: I felt very confident using the system, Q10: I needed to learn a lot of things before I could get going with this system. A1: strongly disagree, A2: rather disagree, A3: partly agree, A4: rather agree, A5: strongly agree.)

Discussion

In the current study, the app-based TMT showed construct validity against the paper-based TMT version, as evidenced by the significant positive correlations between the individual processing times of the two versions. All correlations of the versions had medium to large effects in both HC and depression groups. Since the test versions differed only in the input format (paper versus tablet) while having equivalent content and constant external factors, it can be assumed that the correlation was grounded in the congruent cognitive requisites of the test versions. Previous researchers have also attributed the correlations to constructs that are recorded concurrently.20,28 Further evidence comes from the similar within-group correlation findings in HC and depression groups for the test, suggesting minimal to no involvement of external factors.

We found higher correlations in TMT-B than in TMT-A, similar to other researchers.20,28 It could be attributed to the varying cognitive demands posed by the two parts. While TMT-A primarily requires processing speed, TMT-B also demands the more complex functions of inhibition and visual working memory. 25 In addition, TMT-B was performed by all participants always after TMT-A. Therefore, the similarity of the method and the resulting learning effect may have reduced the cognitive demands when performing TMT-B.

It was also observed that for both TMT-A and TMT-B, participants in both groups needed less time for test completion with the app-based version than with the original paper-based version. While one would expect the learning effect to reduce the processing time for the second use of the TMT, the duration was shorter in the app-based TMT irrespective of whether it was used first or second. Considering that both groups had similar positive responses to the usability of the app-based version, the relative user-friendliness of the app-based version could have resulted in a lower processing time than the paper-based version. Since the app-based version was more often used first in the HC group and second in the depression group, the reduction in processing time could have been more pronounced in the depression group than in the HC group. In contrast, Latendorf and colleagues found longer processing times with the digitalized version of TMT than with the paper-based version and suggested that this was mediated through dual-task reasoning, with the cognitive demand posed by the test itself being intensified by the amplified cognitive load on the information processing system. 20

The total SUS scores correlated negatively with the mean difference (paper vs. app) in processing times for TMT-A but not for TMT-B in the HC group, while there was no such correlation in the depression group. This suggests that the easier the participants found the system to use, the smaller the difference between versions in TMT-A processing times, in healthy individuals but not in individuals suffering from depression. One explanation could be that the inter-individual differences between the app-based and paper-based versions’ processing times were smaller for TMT-A than for TMT-B owing to the lower cognitive load. However, the time required for processing may not be a reliable indicator of the difficulty of use. Latendorf and colleagues found that the differences in the processing time for TMT-B increased with the ease of use of the system as perceived by the participants. While they suggested that this may be because TMT-B had a far higher range than TMT-A, the authors also acknowledged that the processing time may not be a reliable indicator of user feasibility. 20

There have been few studies reporting Android application-based TMT.3,19,21 While the other two previous Android versions had used a capacitive touch screen like the current app, the Android-based mobile TMT app by Cassidy and colleagues required a stylus pen for performing the test, limiting its usability to tablets that support stylus use. 19 The current app-based TMT version can be operated with a finger, increasing its feasibility for different tablet versions. Like the current study, Cassidy and colleagues found a shorter response time with mobile TMT than paper TMT for both A and B. 19 However, they found significant differences only for TMT-B, unlike the current study wherein we found significant differences for both A and B. They had conducted the validation study in individuals not assessed for depression or any mental health ailment, which could have impacted their findings. We found significant differences for both healthy and depressed individuals. Dahmen and colleagues used a machine learning approach to determine the accuracy of the app-based version. 21 Although the researchers differentiated healthy individuals from those suffering from neurological disorders, the AUC values reported were similarly small, as found in the current study for discriminating healthy from depressed individuals. The small AUC found in the current study could be because most of the individuals in the current study had moderate depression. The UX-TMT app by Kokubo and colleagues used only 10 numbers for TMT-A and only 5 numbers and 5 Japanese characters for TMT-B, with only the touching of buttons rather than any visible trail appearing on the screen. 3 Similar to our findings of moderate correlation scores in healthy individuals, they reported a moderate correlation of UX-TMT with the TMT-B score in the Montreal Cognitive Assessment (MoCA) Japanese version.

The single-center design and small sample size limit the study’s generalizability. Testing the TMT App in major neurocognitive disorders is required to strengthen the findings further. While the current study has recruited more than double the number of healthy participants compared to previous app-based studies, further validation on a larger sample population is now required to corroborate these initial findings and generate norms for the general population. Further advancement in the current app-based version can be made using other variables like the number of errors made, detailed timing information, or pauses and lifts. Previous studies have used some of these variables and suggested that these digital performance features substantiate the findings. Another utility of the digital version is the ability to use multiple screens to reduce practice effects in longitudinal studies. However, the generated instances should be assessed for their reliability and validity as a cognitive assessment instrument. The TMT App will be in the public domain, so researchers can use the test freely worldwide (

Conclusion

The Android app-based TMT version (TMT App) was developed in the current study, and the preliminary validation results suggest that the tablet-based version can produce reliable cognitive assessments and correlate significantly with existing conventional paper-and-pencil methods. The app has been designed with the prospect of further building upon the existing functionality in future versions. The processing time for test completion of both TMT-A and TMT-B in depressed individuals was longer as compared to healthy individuals. This observation was present in paper-based and app-based TMT versions.

Footnotes

Acknowledgements

The authors thank Ms. Neha Behal for helping in subject recruitment.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Declaration Regarding the Use of Generative AI

None used.

Ethical Approval

The study was approved by the Institute Ethics Committee, All India Institute of Medical Sciences, New Delhi vide IEC-663/03.09.2021 dated 06-09-2021.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Informed Consent

Written informed consent was obtained from the study participants.