Abstract

Background:

Successful identification of emotional expression in patients is of considerable importance in the diagnosis of diseases and in developing rapport between physicians and patients. Despite the importance of such skills, this aspect remains grossly overlooked in conventional medical training in India. This study aims to explore the extent to which medical students can identify emotions by observing photographs of male and female subjects displaying different facial expressions.

Methods:

A total of 106 medical students aged 18–25, without any diagnosed mental illnesses, were shown images of the six universal facial expressions (anger, sadness, fear, happiness, disgust, and surprise) at 100% intensity with an exposure time of 2 seconds for each image. The participants marked their responses after each image was shown. Collected data were analyzed using Statistical Package for the Social Sciences.

Results:

Participants could identify 76.54% of the emotions on average, with higher accuracy for positive emotions (95.6% for happiness) and lower for negative emotions (46% for fear). There were no significant variations in identification with respect to sex of the observers. However, it was seen that participants could identify emotions better from male faces than those from female faces, a finding that was statistically significant. Negative emotions were identified more accurately from male faces, while positive emotions were identified better from female ones.

Conclusions:

Male participants identified emotions better from male faces, while females identified positive emotions better from female faces and negative ones from male faces.

Misidentification of emotions, especially negative emotions, from static facial expressions was common in medical students of both sexes, which is an aspect that needs to be addressed during the training of medical students in India.

Key Messages:

The identification and correct interpretation of emotional facial expressions (EFEs) play an important role in fruitful social interactions, as they help observers to formulate and regulate their own behavior in response to the emotions expressed by others (the expressers) 3 and thus help build rapport between people. For example, correctly identifying the emotion of anger may lead to a psychological as well as physiological state of “fight or flight” in the observer. Therefore, the lack of this ability can prove quite debilitating to the social and emotional health of a person and can frequently lead to interpersonal conflicts. This is evidenced by the widely studied association of the inability to identify and express emotions with psychological and physical abuse. 4 People suffering from chronic illnesses have also been shown to have difficulties in displaying EFEs. 5

Being an evolutionary remnant of non-verbal communication, the ability of humans to identify and interpret EFEs shows surprising consistency across geographical and ethnic boundaries, albeit with slight cultural modifications.6,7 Studies have provided evidence to establish the “universality” of a cluster of such facial expressions, namely anger, sadness, fear, surprise, happiness, and disgust. It has also been shown that this process of EFE recognition is highly optimized in humans, as the identification of certain facial expressions can occur even when the said expression is presented outside of the conscious awareness of a person. 8 It must also be noted, however, that even with the inter-cultural consistency, the accuracy of identification of emotions varies between individuals based on the content of the emotions expressed, their intensity, as well as the characteristics of the observer.9,10 Research on this particular subject has also provided evidence regarding the sharp decrease in accuracy among observers when they were shown a large number of EFEs in a particular experimental setting. 11

Creating rapport with patients and caregivers is of utmost importance to doctors. They should be adept at identifying as well as interpreting the EFEs of their patients and carers to the best extent possible. This is important as it can help to establish a relationship of trust between them and the people they are treating, which has been shown to lead to better clinical outcomes. 12 Physicians are also expected to act as leaders while at the same time being considerate and closely engaged with their patients to motivate them for treatment. 13 Recognizing and responding to the non-verbal signals of patients and their caregivers can also help the doctor understand the deviations from the normal facial expression spectrum that are present in their patients, especially those who suffer from chronic ailments or physical or emotional pain. This not only helps in the management of the psychological symptoms of the disease but also generates a positive response from the patients and the caregivers, who are likely to be in distress too. 14 There is increasing evidence that doctors’ awareness of and ability to respond to emotions in themselves and other people influence their ability to deliver safe and compassionate health care, a particularly pertinent issue in the current health care climate. 15

While all doctors are expected to be leaders and good communicators, this aspect of training is grossly overlooked in the medical curriculum, especially in the Indian context. This study will help to address this gap by trying to determine how medical students fare in the identification and interpretation of human EFE in an idealized setting, with static images showing the six universal facial expressions at 100% intensity, and, thus, generate data on this particular subject.

Objectives

The current study was conducted with the following objectives:

To find the proportion of students who can correctly identify the six universal EFEs from static pictures.

To compare the differences in the identification rates of emotions based on the sex of the observers and expressers.

Research Hypothesis

A large proportion of medical students are unable to identify and interpret one or more universal facial expressions correctly and there are significant differences between males and females in their ability to identify and interpret universal facial expressions.

Materials and Methods

The study was an analytical cross-sectional one conducted in a tertiary care health center cum teaching institute of eastern India. It was conducted over a period of four months from June to September 2018. Relevant ethical permissions were obtained from the Institutional Ethics Committee.

Study Population

Medical students aged 18–24 years, hailing from different parts of India and varied backgrounds, and pursuing Bachelor of Medicine, Bachelor of Surgery (MBBS) in a tertiary care teaching institute of eastern India volunteered as participants. Written consent was obtained. The volunteers were screened for any history of neuropsychiatric or developmental disorders using their medical examination records from the time of admission to the medical course, and for any current psychiatric illnesses using the self-administered Primary Care Evaluation of Mental Disorders Patient Health Questionnaire (PRIME-MD PHQ). 16 They were also screened for any recent history of head trauma and for diagnosis of any neurological anomalies or diseases that would grossly affect their cognitive or behavioral functions. Of the 113 volunteers, seven (four males and three females) were excluded to avoid selection bias, as they had attended lectures or seminars in the past year that discussed facial expressions and their role in non-verbal communication. The final number of participants was therefore 106, with 53 males and 53 females. An attending physician verified that none of them were under the influence of any psychotropic substance at the time of the experiment.

Study Tools

To measure the ability of the participants to recognize EFEs, four image sets were used from the Karolinska Directed Emotional Faces (KDEF),17 a directory of 4,900 images of human facial expressions. Each set contained seven frontal-view images of a single person, one for each of the expressions anger, disgust, fear, happiness, sadness, surprise, and neutral, at 100% intensity. Two sets showed the emotions of females (KDEF nos 01, 02) and two showed the emotions of males (KDEF nos 35, 14). In addition to these four sets, four extra images were selected from the KDEF for demonstration purposes: two were of a male expressor (KDEF no 09) showing happiness and neutral expressions and two were of a female expressor (KDEF no 07) showing anger and neutral expressions, all at 100% intensity. Of the 32 total images, 24 were selected (the remaining eight being the four demonstration images and the four neutral images) and shuffled to form a set of randomly arranged images. The participants were shown this image set and marked the emotion they thought each picture showed on the answer sheet provided to them, which offered six options for each image (anger, disgust, sadness, surprise, happiness, and fear).
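The composition of the 24-image test set can be sketched in a few lines of Python. This is only an illustration of the design described above: the tuple layout stands in for the real KDEF file names, which are not given here, and the seeded shuffle is an assumption (the text does not say how the random order was produced or whether every participant saw the same order).

```python
import random

# Emotion labels and KDEF identity numbers are taken from the text above.
# The (sex, identity, emotion) tuples are a hypothetical stand-in for
# the actual KDEF image files.
EMOTIONS = ["anger", "disgust", "fear", "happiness", "sadness", "surprise"]
EXPRESSORS = {"female": ["01", "02"], "male": ["35", "14"]}


def build_test_set(seed=None):
    """Return the 24 emotional test images in a random order."""
    images = [
        (sex, identity, emotion)
        for sex, identities in EXPRESSORS.items()
        for identity in identities
        for emotion in EMOTIONS
    ]
    # Seeding is an assumption, added only so the order can be reproduced.
    rng = random.Random(seed)
    rng.shuffle(images)
    return images


test_set = build_test_set(seed=0)
print(len(test_set))  # 4 expressors x 6 emotions
```

Neutral and demonstration images are left out on purpose, since they were not part of the scored set.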

The answers were marked as right or wrong, with each correct answer scored as 1 and each incorrect response as 0. The wrong responses were further classified according to the chosen response and documented. Each participant's score was therefore the number of faces he or she identified correctly.
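The scoring rule and the classification of wrong answers can be illustrated with a short Python sketch. The function name `score_responses` and the example responses are hypothetical and are not study data; the study itself compiled scores in a spreadsheet.

```python
from collections import Counter


def score_responses(shown, responses):
    """Score one participant: 1 per correct answer, 0 otherwise.

    shown     -- emotions actually depicted, in presentation order
    responses -- emotions the participant marked, in the same order
    Returns (total_score, confusions), where confusions counts each
    (depicted, chosen) pair among the wrong answers.
    """
    if len(shown) != len(responses):
        raise ValueError("one response is required per image")
    total = 0
    confusions = Counter()
    for depicted, chosen in zip(shown, responses):
        if chosen == depicted:
            total += 1
        else:
            confusions[(depicted, chosen)] += 1
    return total, confusions


# Made-up responses for illustration only.
shown = ["anger", "fear", "happiness", "sadness"]
responses = ["disgust", "surprise", "happiness", "sadness"]
total, confusions = score_responses(shown, responses)
print(total, dict(confusions))
```

Keeping the (depicted, chosen) pairs, rather than only the total, is what allows misidentification patterns such as anger marked as disgust to be examined later.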

Study Technique

Before the start of the experiment, the procedure was clearly explained to the participants. It was ensured that the environment in which the experiment was conducted was isolated from potentially distracting stimuli (e.g., loud noises and bright lights). Two demonstration images were used to explain and demonstrate the whole experiment to the participants through a 2-second image exposure, 18 followed by response marking. The remaining four images of neutral faces of the four expressors were also shown to the participants to avoid any confusion arising from unfamiliarity with their faces.

Each participant was shown the aforementioned set of randomly arranged images on a computer screen with 1208 × 768 resolution and a 60 Hz refresh rate. Each image was presented to the participant for two seconds, after which it was replaced by a blank screen. The participants identified the facial expression shown in the on-screen image and then marked the identified emotion in the list of emotions on the answer sheet provided to them. Care was taken to ensure that the participant looked at the photograph for the full two seconds and marked the answer only after the image had been replaced with the blank slide. After a response was marked for a particular image, the next image was shown, and the process was repeated for the full set. Participants could not mark more than one response per image, and no image was shown a second time. The collected responses were compiled, compared, and analyzed.

Data Analysis

Participants were shown 24 images, each image corresponding to an EFE. After their responses were collected, each participant was scored out of 24, with each correctly identified emotion assigned a score of 1, and each incorrectly identified emotion assigned a score of 0. The data thus collected by the researchers were compiled using MS Excel (Microsoft Inc) and analyzed using the principles of descriptive and analytical statistics using the Statistical Package for the Social Sciences (Version 20, IBM Corp). Statistical significance was considered at P values <0.05.

Results

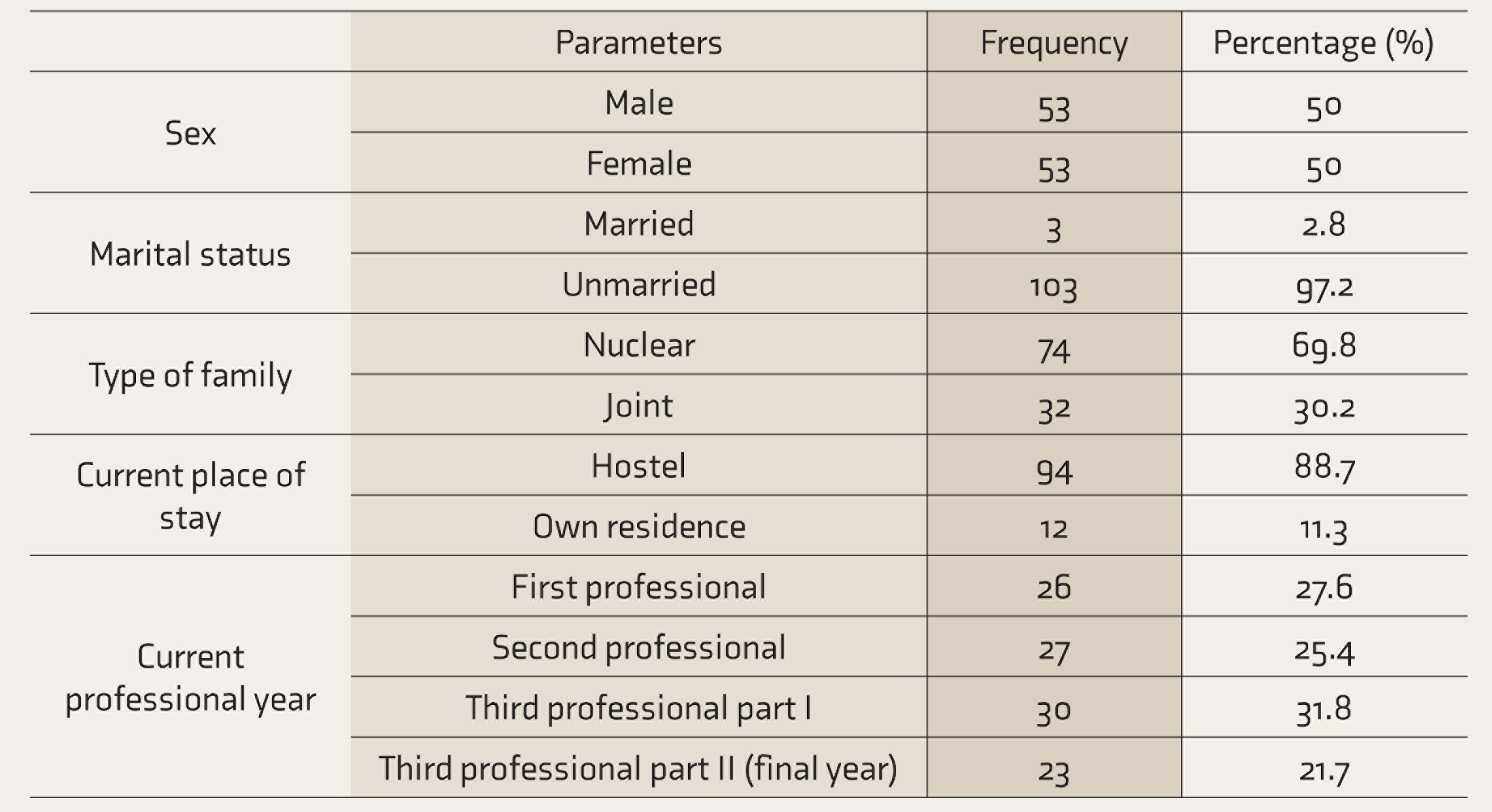

Sociodemographic Characteristics of the Participants (n = 106)

Of a total of 113 volunteers, seven were excluded as they had attended lectures or seminars discussing facial expressions and their role in non-verbal communication in the past year.

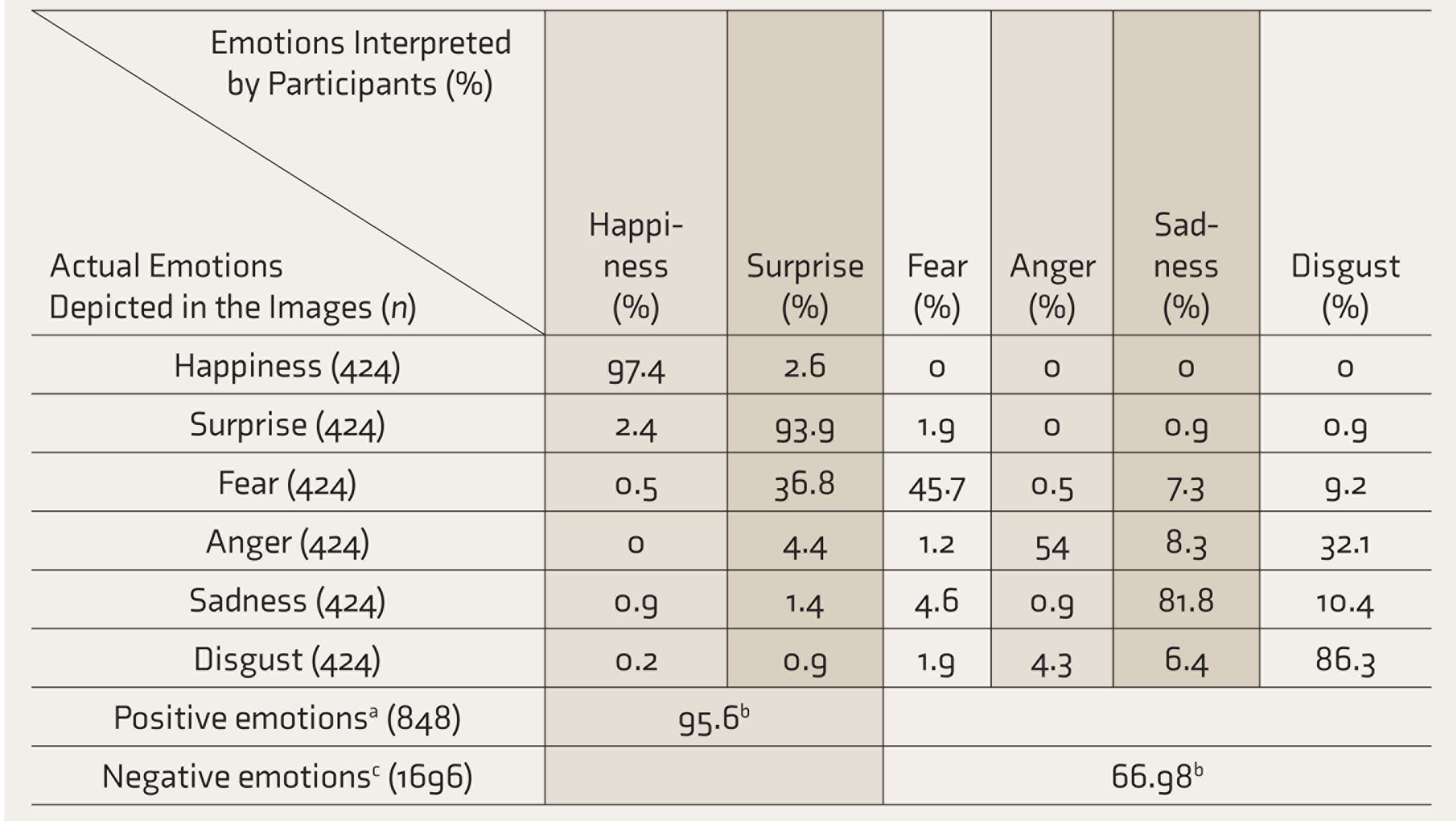

The Proportion of Interpreted EFEs by Study Population (n = 424)

Each of the 106 participants identified four sets of EFEs, each set containing one image for each of the six EFEs. Therefore, each participant marked four images of each EFE (24 images in total), leading to a total of 424 depicted images for each of the EFEs. (a) Happiness and surprise. (b) Correctly identified proportions of emotions. (c) Anger, fear, sadness, and disgust. EFEs: emotional facial expressions.

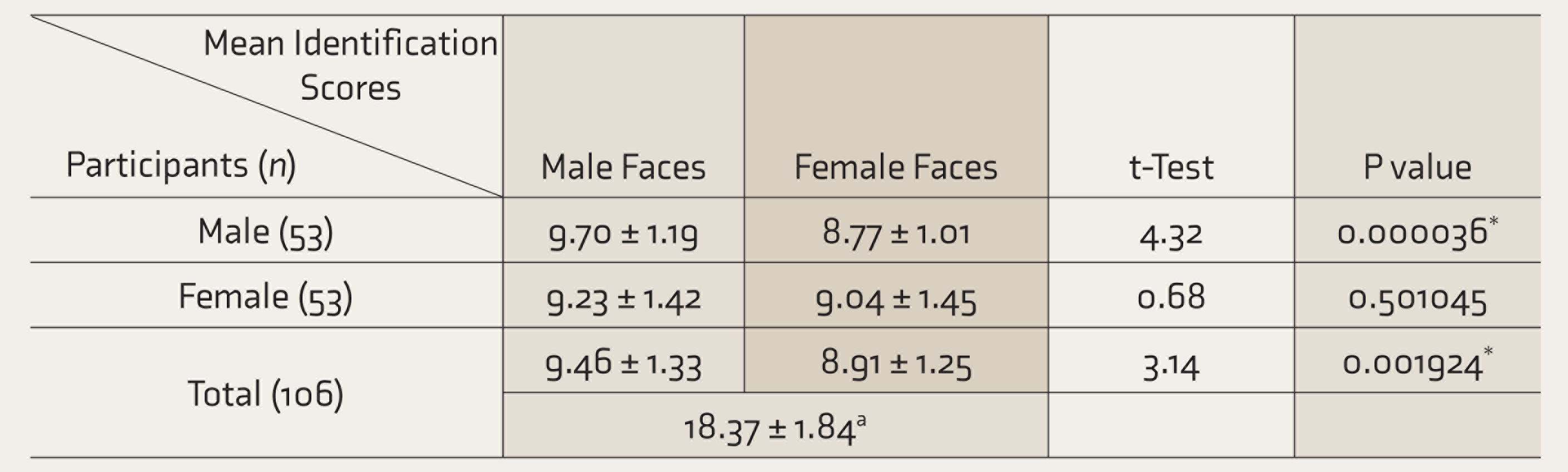

Sex-Based Variations in Mean Scores While Identifying EFEs from Images of Male/Female Faces (Total Score = 12)

Each participant identified four sets (two male faces and two female faces) of six EFEs each. Therefore, each participant marked (and was scored on) 12 EFEs expressed by male faces and 12 EFEs expressed by female faces (24 images in total). (a) Score out of 24 total EFEs marked by each participant. *Statistically significant. EFEs: emotional facial expressions.

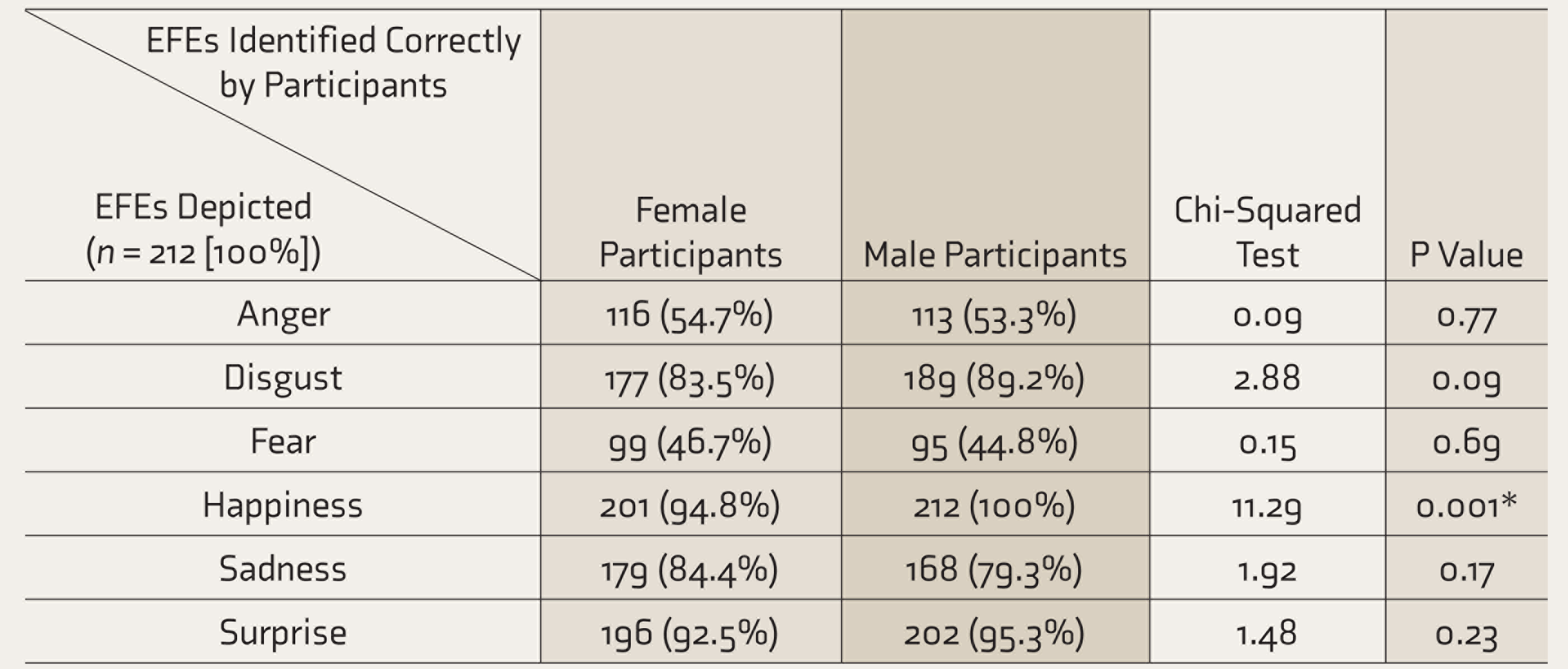

Proportions of Emotional Facial Expressions (EFEs) Identified Correctly by Study Participants (n = 212)

Each of the 106 participants identified four sets of EFEs (each containing six EFEs). Each participant marked four images of each EFE (24 images in total). Therefore, the 53 female participants marked a total of 212 images for each EFE, as did the 53 male participants. *Statistically significant.

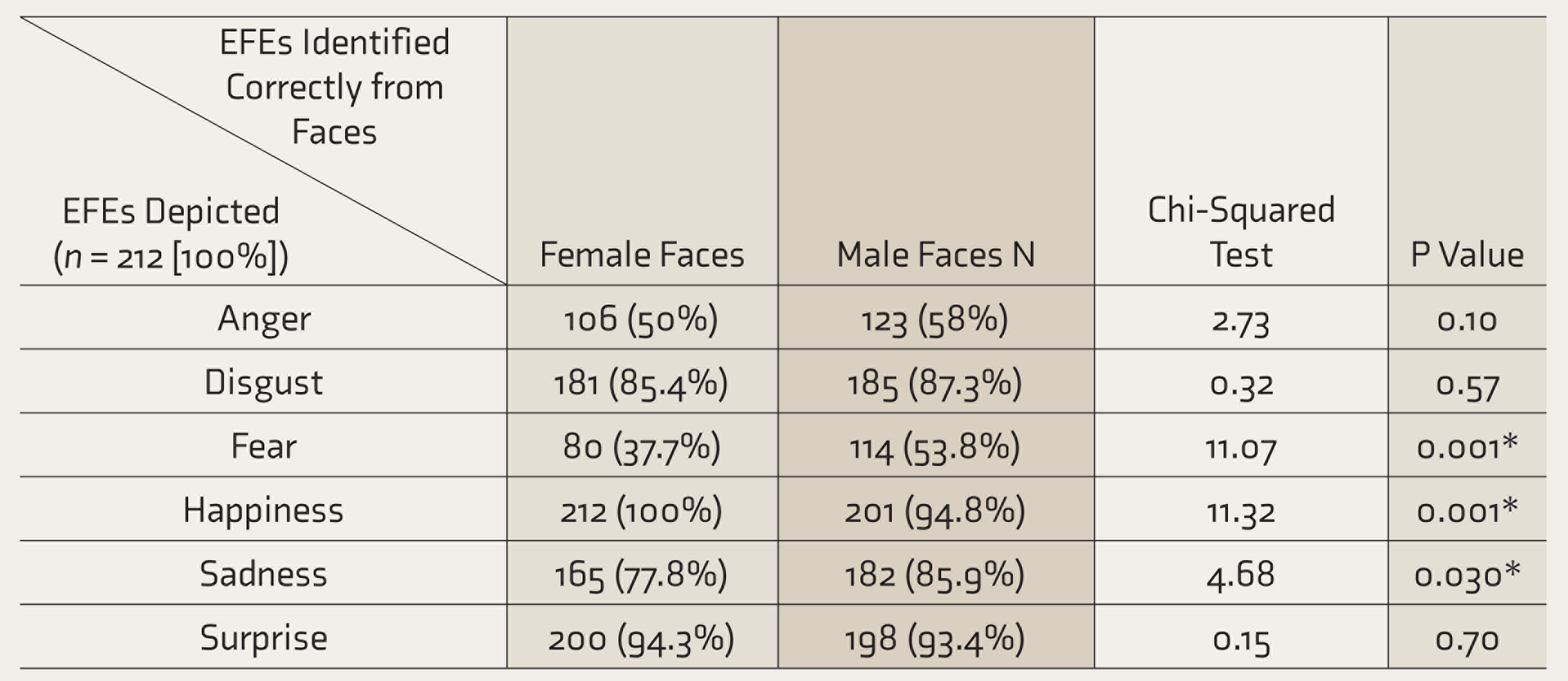

Differences in the Identification of EFEs from Male/Female Faces (n = 212)

Each participant identified four sets (two male faces and two female faces) of six EFEs each. Therefore, a total of 106 study participants marked 212 images of EFEs expressed by male faces and 212 images of EFEs expressed by female faces. *Statistically significant. EFEs: emotional facial expressions.

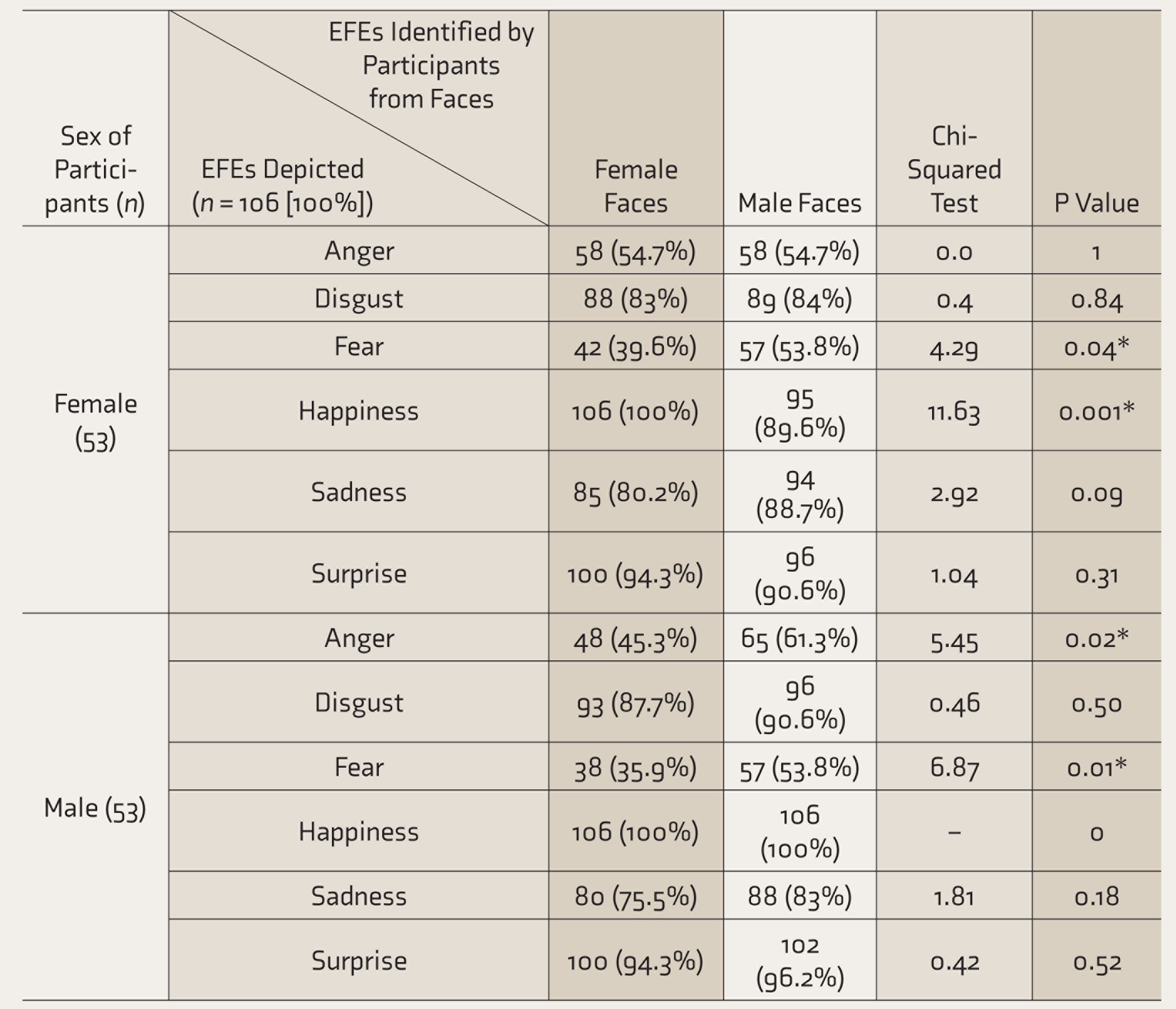

Proportions of EFEs Identified from Female and Male Faces by Participants (n = 106)

Each participant identified four sets (two male faces and two female faces) of six EFEs each. Therefore, for each EFE, the 53 female participants identified a total of 106 images from female faces and a total of 106 images from male faces, as did the 53 male participants. *Statistically significant. EFEs: emotional facial expressions.
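The sample sizes quoted in the table captions (424, 212, 212, and 106) all follow from the same design and are counts of images per EFE, not counts of participants. The short check below reproduces that arithmetic; the variable names are illustrative only.

```python
PARTICIPANTS = 106      # all observers
PER_SEX = 53            # 53 male and 53 female observers
FACES_PER_SEX = 2       # two male and two female expressors
FACES = 2 * FACES_PER_SEX

# Number of images marked per EFE in each breakdown.
denominators = {
    "all observers, all faces": PARTICIPANTS * FACES,
    "one observer sex, all faces": PER_SEX * FACES,
    "all observers, one face sex": PARTICIPANTS * FACES_PER_SEX,
    "one observer sex, one face sex": PER_SEX * FACES_PER_SEX,
}

for label, n in denominators.items():
    print(f"{label}: n = {n}")
```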

Further analysis of sex variations during identification of EFEs showed that the female participants identified negative emotions such as fear (53.8%, P = 0.04) and sadness (88.7%, P = 0.09) better from male faces than from faces of their own sex (Table 6), although the difference reached statistical significance only for fear. On the other hand, they identified positive emotions better from female faces than from male ones. Interestingly, male participants also scored better when identifying emotions from male faces (9.70 ± 0.327) than from female ones (8.77 ± 0.280) (Table 3). This was most pronounced for negative emotions such as anger (male face = 61.3%; female face = 45.3%; P = 0.02) and fear (male face = 53.8%; female face = 35.9%; P = 0.01), and less so for sadness and disgust.
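As a worked illustration of how such a comparison of two proportions can be tested, the sketch below applies a Pearson chi-square test to a 2 × 2 table. Several things here are assumptions rather than the study's method: the text names only the software used, not the specific test; the counts (65 and 48 of 106) are reconstructed by rounding the reported percentages for anger among male participants; and the test treats all responses as independent even though each participant rated both male and female faces.

```python
import math


def chi_square_2x2(correct_a, total_a, correct_b, total_b):
    """Pearson chi-square test (1 df, no continuity correction).

    Compares the proportion correct in group A with that in group B.
    Returns (chi2, p_value).
    """
    wrong_a = total_a - correct_a
    wrong_b = total_b - correct_b
    n = total_a + total_b
    col_correct = correct_a + correct_b
    col_wrong = wrong_a + wrong_b
    if 0 in (total_a, total_b, col_correct, col_wrong):
        raise ValueError("every row and column total must be non-zero")
    numerator = n * (correct_a * wrong_b - wrong_a * correct_b) ** 2
    chi2 = numerator / (total_a * total_b * col_correct * col_wrong)
    # For 1 degree of freedom, the upper-tail probability of a
    # chi-square variable x equals erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p_value


# Counts reconstructed from 61.3% and 45.3% of 106 images (assumption).
male_face_correct = round(0.613 * 106)
female_face_correct = round(0.453 * 106)
chi2, p = chi_square_2x2(male_face_correct, 106, female_face_correct, 106)
print(f"chi2 = {chi2:.2f}, p = {p:.3f}")
```

Under these assumptions the p-value comes out at roughly 0.02. A test that accounts for the paired design, such as McNemar's test on per-participant data, would be a more defensible choice where the raw responses are available.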

Discussion

In line with the “happy face advantage” hypothesis, 19 the emotion the study participants most accurately identified was happiness (97.4%), followed by surprise (93.9%). These findings are consistent with the theory proposed by Smith and Schyns which states that since these “positive” emotions are associated with “catastrophic” transformations of the mouth region (the mouth opens, revealing the teeth, which are otherwise not visible in the neutral face), they can be identified with more accuracy than other expressions. 20 The highest accuracy for the identification of happiness, which was represented by a smiling face, can also be explained from an evolutionary perspective. In primates, clenched bared teeth displays (thought to have evolved into smiles in humans) and open mouth displays (laughter) have been seen to represent appeasement and playfulness, respectively. Both functions play an important role in the de-escalation of conflicts, an aspect that is preserved in the social functions of human smiles and laughter. 21 Because the correct identification and interpretation of these emotions reduce conflicts as well as improve survival in populations, expressions of positive emotions like happiness might have evolved in humans to be more highly recognizable across sexes.

Participants were the least accurate in identifying negative emotions such as fear and anger: only 45.8% and 54% of these emotions, respectively, were identified correctly. These emotions do not appear to have social connotations; their primary purpose seems to be responding to emotional experience. 22 Although disgust is also a negative emotion, the comparatively higher accuracy in its identification might be due to the more pronounced features associated with it (wrinkled nose, lowered eyebrows, squinting eyes, and a gaping mouth). 23

The higher identification accuracy noticed in the case of sadness can be explained by the fact that we as humans are hardwired to identify and recognize emotions associated with crying. It has been well documented that the cry of an infant represents an honest need for attention.24,25 Based on this theory, female participants were expected to score better than males in identifying sadness, which was borne out by the current study (female participants correctly identified 84.4% of the images depicting sadness, whereas male participants correctly identified 79.3%).

Of the misidentified emotions, anger and fear were most commonly misidentified as disgust and surprise, respectively, but the reverse was not observed. The shared visual cues between these emotions (expressions of anger and disgust share a brow-lowering component, while surprise and fear share simultaneously raised eyebrows, flared nostrils, and an open mouth) 26 could have led to their misidentification. Other, more intense visual cues probably prevented similar mistakes when emotions like disgust or surprise were shown. The greater confusion between fear and surprise (which share several characteristic visual cues) than between anger and disgust also favors this assumption.

In line with prior research, 27 our findings reiterate the lack of differences between the overall accuracy of the male and female participants in the identification of EFEs, except for a slight advantage noticed for male participants in identifying happiness.

Another important finding of the study was the significant difference in accuracy observed between male and female faces. Emotions such as disgust, fear, and sadness were identified better in male faces than in female ones by both male and female participants (with statistically significant differences for fear and sadness), whereas happiness and surprise were generally identified better in female faces (although male participants identified surprise marginally better from male faces than from female ones). These observations reinforce findings recorded in existing research.10,28 They might have evolutionary roots, as males have historically been more physically involved in disputes and conflicts than their female counterparts. As such, humans might have evolved to identify negative emotions more readily from the male members of the species. Females, being primarily in caregiver positions, might have evolved to have more easily identifiable positive EFEs. However, the extent to which these evolutionary links exist needs further research.

In clinical practice, where the expression of negative emotions by patients and their caregivers is expected to be more frequent than the expression of positive emotions, the inability of future physicians to correctly identify negative EFEs is an interesting finding of this study that needs to be replicated by further research. The medical curriculum, especially in India, does not fully address the training of communication skills in medical students. This study brings forth the troubling possibility that future doctors might have difficulty in picking up the subtle non-verbal cues that are so important in the doctor–patient relationship and in communication with family members. It suggests the need to address this aspect of training by introducing suitable changes in the existing medical curriculum.

Limitations

The major limitation is that this study was done among the medical students of a single tertiary medical center of India, and the sample is therefore not representative of all medical students. Another important limitation is that the KDEF, from which the images used in this study were obtained, was not specifically validated for the Indian population. Also, the current study was performed using static images at 100% intensity of EFEs. These represent an ideal situation, and in real-world scenarios, most emotions are expressed at lower than 100% intensity along with other body language cues.

Future Directions

Further research into the topic needs to be done, using more dynamic scenarios as well as using combinations of EFEs and other verbal and non-verbal cues to emulate real-world situations and generate more data on this topic. Also, the evolution of EFEs themselves and their social functions need to be explored in further studies.

Conclusion

Indian medical students could identify positive EFEs much better than negative ones. For most expressions, the accuracy of identifications showed significant variations with respect to the sex of the expressors, but not to the sex of the observer.

Footnotes

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.