Abstract

There has been a growing interest among management scholars in conducting research on grand challenges. Despite recognizing that studying such highly complex and uncertain phenomena likely requires more unconventional approaches, there has been very little methodological guidance provided to interested scholars. Drawing upon our own grand challenge projects undertaken over the past decade, we put forward a methodological approach we term ‘abductive experimentation’. Such an approach is an action-oriented process of inquiry that cycles between generating ‘doubt’ and generating ‘belief’. More specifically, abductive experimentation iterates between induction, abduction, and deduction to both generate and reconcile ‘surprising’ findings and causal mechanisms. While we submit abductive experimentation as a methodological approach particularly well suited to the study of grand challenges, we believe that the process depicted also provides a general roadmap for scholars seeking to dismantle the artificial dualism between theory and practice.

Introduction

Over the past decade, there have been heightened calls for management and organizational scholars to increase their research focus on ‘grand challenges’ (George, Howard-Grenville, Joshi, & Tihanyi, 2016). Such challenges can be described as ‘highly significant yet potentially solvable problems such as urban poverty, insect-borne diseases and global hunger’ (Eisenhardt, Graebner, & Sonenshein, 2016, p. 1113). It is argued that grand challenges provide a unique opportunity for building new theory through participatory problem solving (Buckley, Doh, & Benischke, 2017), and for dismantling the socially constructed and artificial divide that has developed between ‘theory’ and ‘practice’ within the field of management (Banks et al., 2016; Ployhart & Bartunek, 2019).

However, it has also been noted that grand challenges present unique characteristics in that they are highly complex, wrapped in uncertainty, and have intertwined technical and social elements that make identifying solutions extremely difficult (Ferraro, Etzion, & Gehman, 2015). As a result, management scholars seeking to study grand challenges have been warned they are likely to require ‘less conventional approaches’ (Colquitt & George, 2011) and more ‘methodological diversity’ (Aguinis, Ramani, & Villamor, 2019) in the design and execution of research projects involving such phenomena. Unfortunately, there has been very little methodological guidance on what sorts of ‘unconventional’ approaches may be best suited to the study of grand challenges.

In an attempt to address this insufficiency, we put forward a methodological approach that we have termed ‘abductive experimentation’. Abductive experimentation is an action-oriented process of inquiry undertaken in partnership between academics and practitioners collectively seeking to address grand challenges. Rooted within the philosophical principles of pragmatism (Dewey, 1938; Peirce, 1958), abductive experimentation iterates between induction, abduction and deduction in a cyclical process of generating ‘doubt’ and generating ‘belief’ (Martela, 2015). While the methodological process involves a host of qualitative and quantitative data collection activities used in mixed method fashion (Morgan, 2008), field experimentation serves as its primary methodological pillar given its noted ability as a tool for disentangling complex societal problems (Crane, Henriques, & Husted, 2018; Podsakoff & Podsakoff, 2019). However, the use of field experiments in the abductive process we propose is much more generative than confirmatory – its purpose is to concurrently evaluate initial ‘hunches’ and generate additional ‘hunches’ for subsequent exploration (Ansell & Bartenberger, 2016; Sætre & Van de Ven, 2021). Using examples from our own research projects over the past decade on the study of poverty alleviation, we seek to illustrate how abductive experimentation can serve to satisfy the ethical exigency many academics feel to be more directly involved in solving social problems while at the same time meeting institutional demands for publishing their research within top-tier academic journals.

There are undoubtedly multiple approaches that can be undertaken in the study of grand challenges, and we offer abductive experimentation as simply one potential roadmap. Abductive experimentation is likely best suited to scholars seeking to take a more active than passive role in addressing grand challenges, for scholars seeking to create knowledge in the pursuit of desired ends (Greene, 2007; Morgan, 2007). A willingness to engage in experimentation (both field and laboratory) is also important, as the increasing use of experiments on the part of practitioner organizations seeking to address grand challenges (Eden, 2017; Shadish & Cook, 2009) provides a tremendous point of overlap for research collaboration. And finally, abductive experimentation is likely most appealing to scholars who are ‘philosophically’ comfortable with pragmatism and the use of mixed methods not simply to ‘corroborate’ or ‘confirm’ empirical results (Johnson, Onwuegbuzie, & Turner, 2007; Niglas, 2004), but rather to accommodate the path dependence of new knowledge creation whereby developing currently ‘unknown’ solutions to complex grand challenges requires incrementally unearthing unforeseen knowledge at each stage of the inquiry process (Ferraro et al., 2015).

Philosophical Foundations

The methodological approach we outline here for studying grand challenges is rooted within a philosophy of pragmatism (Dewey, 1920; Johnson & Onwuegbuzie, 2004). Pragmatism can be defined as ‘a philosophy of evolutionary learning [emphasizing] the ability of both individuals and communities to improve their knowledge and problem-solving capacity over time through continuous inquiry’ (Ansell, 2011, p. 5). In contrast to philosophies of realism and idealism, and their associated positivist and constructivist paradigms (Morgan, 2014; Teddlie & Tashakkori, 2009), pragmatism represents an alternative form of inquiry that is considered ‘aparadigmatic’ in its orientation (Biesta, 2010).

While there remains some debate among scholars regarding the specific set of principles underlying pragmatism, ‘their commonalities are significant and valuable for the study of organization and organizing’ (Simpson & den Hond, 2022, p. 129). A pragmatist approach to research is widely considered to be a process of inquiry that starts with practical experience, progresses to doubts, and finds provisional closure in the social construction of new beliefs (Lorino, 2018). It is also an approach that is focused on providing workable solutions to important problems rather than the construction of infallible knowledge (Greene & Hall, 2010). However, a pragmatist approach to inquiry is not simply a case of asking ‘what works’, but rather a cyclical process of questioning beliefs about a problem to choose actions, and subsequently reflecting on those actions to again question beliefs (Morgan, 2014). Additionally, pragmatism recognizes that such choices of ‘actions’ and ‘questioning’ are path dependent in that it is only through the completion of one stage of inquiry that the specific choices for the next stage are illuminated (Denzin, 2012).

Pragmatism has seen a resurgence within academe in conjunction with a heightened enthusiasm for mixed method approaches to research design (Ivankova, Creswell, & Stick, 2006). However, what most differentiates pragmatism as a unique form of inquiry is the prominent role of abduction within the research process (Martela, 2015). Abduction is a stage within the theorizing process that involves ‘converting observations into theories and then assessing those theories through action’ (Morgan, 2007, p. 71). Unencumbered by an ontological orientation that demands either moving from the general to the particular (deduction) or from the particular to the general (induction), abduction allows for greater creativity and imagination in the generation of ‘hunches’ from a body of incomplete knowledge (Alvesson & Sköldberg, 2009; Ansell & Bartenberger, 2014; Mantere, 2008). As Peirce (1931, cited in Locke, Golden-Biddle, & Feldman, 2008, p. 907) describes, ‘deduction proves that something must be; induction shows that something actually is operative; abduction merely suggests that something may be’ (emphasis in original). Lorino (2018) uses the example of a group sitting down for a picnic in a forest to illustrate the linkages between induction, deduction, and abduction: One of the participants shows a large flat stone and says: ‘That is a good table!’ This verbal mediation plays an abductive role: it formulates a hypothesis (we could use this stone as a table). Everyone in the party knows that, if the stone is going to be the table of the picnic, their bottles must stand stable on it (deduction). Then some of them start putting glasses and bottles on the stone to check its planeness (induction).

In contrast to a non-pragmatist approach, which typically perceives induction and deduction as independent steps in the dyadic process of theory building and theory testing respectively, pragmatism views abduction, deduction and induction as intertwined parts of a triadic process whereby: (1) abduction involves the forming of a ‘hunch’ or explanatory hypothesis to make sense of puzzling facts; (2) deduction involves the logical translation of a hypothesis into a testable proposition; and (3) induction involves the active collection of new data to test an existing hypothesis and further problematize cause-and-effect relationships (Sætre & Van de Ven, 2021; Tavory & Timmermans, 2014). As such, pragmatists generally view abduction as ‘the only logical operation which introduces any new idea’ (Peirce, 1903, p. 216). In other words, empirical material must contain surprise, doubt and anomalies (Alvesson & Kärreman, 2007; Locke et al., 2008) as the critical ingredient for abductive approaches. Because of this, abduction often specifies the consideration of data ‘in tandem with various theories [so as] not to retrofit data to theory, but to explore which theory would best explain what we found’ (Smets, Jarzabkowski, Burke, & Spee, 2015, p. 939). However, theories are seen by pragmatists as simply another tool that provides insight into previously observed patterns or general rules that may be helpful in the cyclical process of generating beliefs and doubts to a specific situation or context (Lorino, 2018).

We contend that research involving grand challenges is particularly well aligned with an abductive process of inquiry for several reasons. Topics that fall under the purview of ‘grand challenges’ include poverty alleviation, climate change and disease prevention (George et al., 2016). As a result of grand challenges being highly complex, wrapped in uncertainty, and having intertwined technical and social elements that make identifying solutions extremely difficult (Eisenhardt et al., 2016; Ferraro et al., 2015), ‘the fundamental principles underlying a grand challenge are the pursuit of bold ideas and the adoption of less conventional approaches to tackling large, unresolved problems’ (Colquitt & George, 2011, p. 432). Given that the ‘solutions’ or ‘ideas’ needed to solve grand challenges remain largely unknown at the present time, abduction offers an imaginative and iterative approach to inquiry whereby promising paths can become clearer through each incremental action and reflection on beliefs (Morgan, 2014).

A number of recent studies on grand challenges have been motivated by a specific practical problem rather than a purported gap within existing theory (George et al., 2016). Additionally, recent studies have demonstrated that there are many different quantitative, qualitative and mixed method approaches that can be employed in the study of grand challenges including surveys (Olsen, Sofka, & Grimpe, 2016), archival data (Zhao & Wry, 2016), mathematical modelling (Vakili & McGahan, 2016), interviews (Williams & Shepherd, 2016) and case studies (Mair, Wolf, & Seelos, 2016). However, we believe that management scholars who feel compelled to take more direct action to address grand challenges would benefit from further methodological guidance on more ‘unconventional’ approaches (Colquitt & George, 2011) – particularly more experimental approaches rooted within abduction. The lack of prior attention to experimentation for studying grand challenges is somewhat surprising given that it is an increasingly common approach to ‘pilot testing’ of potential solutions by practitioner organizations (Ferraro et al., 2015), and that such organizations are increasingly interested in undertaking such experimentation in direct collaboration with academics (Banks et al., 2016).

Methodological Foundations

The process of inquiry we outline for undertaking grand challenge research is grounded not only within the philosophy of pragmatism, but also within existing literature on mixed methods and experimental design. We briefly review each in turn as a means of constructing the scaffolding for building the overall process of abductive experimentation.

Mixed methods

There are a number of different definitions of mixed methods research (Johnson et al., 2007). For the purpose of our article, we adopt Creswell and Plano Clark’s (2011, p. 271) definition as a methodological approach that ‘focuses on collecting, analyzing, and mixing both quantitative and qualitative data in a single study’. Thus, as compared to multi-method research, which involves the use of either two or more quantitative methods, or two or more qualitative methods within a single study, or full-cycle research, which involves the use of multiple methods within a programme of research (Chatman & Flynn, 2005), mixed method research entails at least one quantitative and one qualitative method within a single study (Aguinis et al., 2019).

According to an article published in Organizational Research Methods by Gibson (2017), there are five traditional ways in which mixed methods have been historically used by organizational scholars: (1) a qualitative analysis supplemented by a quantitative analysis to ‘strengthen confidence’; (2) a quantitative analysis supplemented by qualitative analysis to provide a ‘fuller explanation’; (3) a quantitative social network analysis followed by qualitative interviews with individual ties; (4) a content analysis (of qualitative text) conducted in parallel with a quantitative analysis to model relationships; and (5) a case study that simultaneously employs both quantitative and qualitative analyses.

Organizational scholars have previously recognized that the study of complex phenomena is well suited to the richness afforded by mixed method design (Glynn, Barr, & Dacin, 2000). Researchers have also specifically begun to call for an increased use of mixed method approaches to the study of complex grand challenges (Aguinis et al., 2019). However, the process of abductive experimentation differs from prior mixed methods literature in several key respects. Rather than treating qualitative methods as distinctly ‘building theory’ and quantitative methods as solely ‘testing theory’, the collection of quantitative and qualitative data is combined into a single inductive stage. The purpose of that inductive stage is to collectively ‘test’ the deductive propositions that emerged through the abductive theory building process. Furthermore, as opposed to using mixed methods to essentially ‘corroborate’ or ‘triangulate’ findings, the act of ‘mixing’ within the process of abductive experimentation is much more capacious in its quest for additional ‘surprises’ and ‘doubts’ with which to generate additional ‘hunches’ that alter the current knowledge base. Overall, existing literature on mixed methods has adopted much of the language of pragmatism, but has failed to significantly integrate abduction into the overall theorizing process (Tavory & Timmermans, 2014).

Experiments

Shadish, Cook and Campbell (2002, p. 12) define an experiment as ‘a study in which an intervention is deliberately introduced to observe its effects’. Experiments have been experiencing somewhat of a resurgence over the past two decades (Shadish & Cook, 2009). Such rejuvenation is largely a result of a refocusing within many disciplines on ‘causality’, and the explicit testing of the ‘mechanisms’ linking specific variables of interest (Eden, 2017). However, management scholars have been slower to adopt experimentation as a methodology than scholars in other disciplines such as medicine and education (Aguinis & Edwards, 2014; Podsakoff & Podsakoff, 2019).

There are a number of ‘traditional’ types of experiment identified within the literature (Cook & Campbell, 1979) including (1) laboratory experiments, (2) field experiments, (3) quasi-experiments and (4) natural experiments. Laboratory experiments are generally seen as the gold standard for testing internal validity given their random assignment of subjects to conditions and high degree of researcher control over the study environment (Shadish et al., 2002). However, laboratory experiments are often criticized by organizational scholars for a lack of external validity or ‘generalizability’ as a standalone method (Highhouse, 2009). Field experiments are a type of experiment that also uses random assignment but involve ‘a manipulation relevant to working adult participants engaging in genuine tasks’ (King, Hebl, Botsford Morgan, & Ahmad, 2013). As a result, they are thought to provide higher levels of external validity as compared to laboratory experiments, but there can be a trade-off in terms of degree of ‘noise’ from the social environment (Hauser, Linos, & Rogers, 2017). Quasi-experiments are very similar to field experiments except that study participants are not randomly assigned to the designed interventions (Grant & Wall, 2009), and natural experiments are similar to quasi-experiments except that the interventions occur organically as opposed to being a result of an intentional act on the part of the researcher (Heath & Luff, 2017).
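The distinctions drawn above can be summarized as a simple decision rule. The following Python sketch is illustrative only: the `StudyDesign` fields and the `classify_experiment` function are hypothetical names introduced here, and the rule assumes that the four types differ only on random assignment, on whether the researcher deliberately introduces the intervention, and on setting. Real designs are often less clear-cut.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StudyDesign:
    """Hypothetical description of a study; field names are assumptions."""
    random_assignment: bool      # are participants randomly assigned to conditions?
    researcher_introduced: bool  # does the researcher deliberately introduce the intervention?
    field_setting: bool          # does the study take place outside the laboratory?


def classify_experiment(design: StudyDesign) -> str:
    """Map a design onto the four 'traditional' types described in the text."""
    if design.random_assignment and design.researcher_introduced:
        return "field experiment" if design.field_setting else "laboratory experiment"
    if not design.random_assignment and design.researcher_introduced:
        return "quasi-experiment"
    if not design.random_assignment and not design.researcher_introduced:
        return "natural experiment"
    # Random assignment without a researcher-introduced intervention is not
    # covered by the typology summarized above.
    return "unclassified"


# Usage: a randomized intervention run with a partner organization in the field,
# and an organically occurring change observed without random assignment.
print(classify_experiment(StudyDesign(True, True, True)))
print(classify_experiment(StudyDesign(False, False, True)))
```

The rule is deliberately coarse; it is meant to make the contrasts between the types explicit rather than to adjudicate borderline cases.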

Scholars have previously noted that experiments, much like mixed methods, are particularly well suited to the study of complex phenomena, as they allow the researcher to isolate the effect of a specific input on a specific outcome (Podsakoff & Podsakoff, 2019). Field experiments, in particular, are thought to be a good fit with the heightened enthusiasm among management scholars in ‘phenomenon-driven’ or ‘problem-driven’ research such as grand challenges given their balance between internal and external validity (Pfeffer & Sutton, 2006; Van de Ven, 2007). At the same time, practitioner organizations are increasingly engaging in experimentation with respect to the design and small-scale testing of possible interventions to grand challenges (Crane et al., 2018; Ferraro et al., 2015). However, many smaller and medium-sized organizations lack employees with the methodological training to explicitly hypothesize, measure and interpret causal outcomes (Eden, 2017). Thus, field experiments (as a methodology) provide a fortuitous point of intersection for dismantling artificial dualisms between ‘theory’ and ‘practice’ (Giluk & Rynes-Weller, 2012; Hauser et al., 2017; Rynes & Bartunek, 2017). Unfortunately, many academics have thus far abstained from using field experiments in their studies due to a lack of guidance on how to incorporate them within the broader set of methodological tools (Borsboom, Kievit, Cervone, & Hood, 2009; Grant & Wall, 2009; Highhouse, 2009).

Several scholars have argued that there would be great benefit to organizational scholarship that combines ‘experiments’ with ‘mixed methods’ (Hauser et al., 2017; Podsakoff & Podsakoff, 2019). Proponents of such an approach, from a non-pragmatist perspective, have suggested using interviews to develop instruments, identify measures, or better understand context before undertaking a particular experiment (Sandelowski, 1996). Alternative combinations have also been suggested such as conducting interviews either during or after participants are exposed to an intervention (Lipsey & Cordray, 2000), or nesting an experiment within a larger case study (Grant & Wall, 2009).

Given the breadth of the different types of experiment (e.g. laboratory, field), and range of qualitative methods (e.g. case study, ethnography), there are undoubtedly numerous ways of combining these two approaches depending on the nature of the specific research question or phenomenon being investigated. The mixed method process of abductive experimentation we outline uses field experimentation as the primary pillar, but in a much more exploratory rather than traditional confirmatory fashion (Cook & Shadish, 1994). Thus, as opposed to the traditional distinctions between different types of experiment based on degrees of ‘validity’, the process of abductive experimentation draws upon prior work by Ansell and Bartenberger (2016) to reconceptualize experimentation as potentially ‘generative’ rather than simply ‘controlled’, and for different types of experiment to be used at different stages of the research process.

The purpose of our article is not to provide a detailed guide on how to conduct experiments – a number of instructional articles have already been written on this topic (e.g. King et al., 2013; Podsakoff & Podsakoff, 2019; Shadish et al., 2002). Neither is the purpose to provide a detailed guide on how to conduct mixed methods research – again, a number of existing articles and books successfully cover this topic (e.g. Creswell & Plano Clark, 2011; Teddlie & Tashakkori, 2009). Rather we put forward abductive experimentation as a novel way of combining the philosophy of pragmatism with literature on experiments and mixed methods to study grand challenges. Thus, our focus is at the processual level, and the outlining of specific activities and considerations at multiple stages of the research process, rather than detailing the nuances of conducting experiments or mixed method research more generally.

Abductive Experimentation

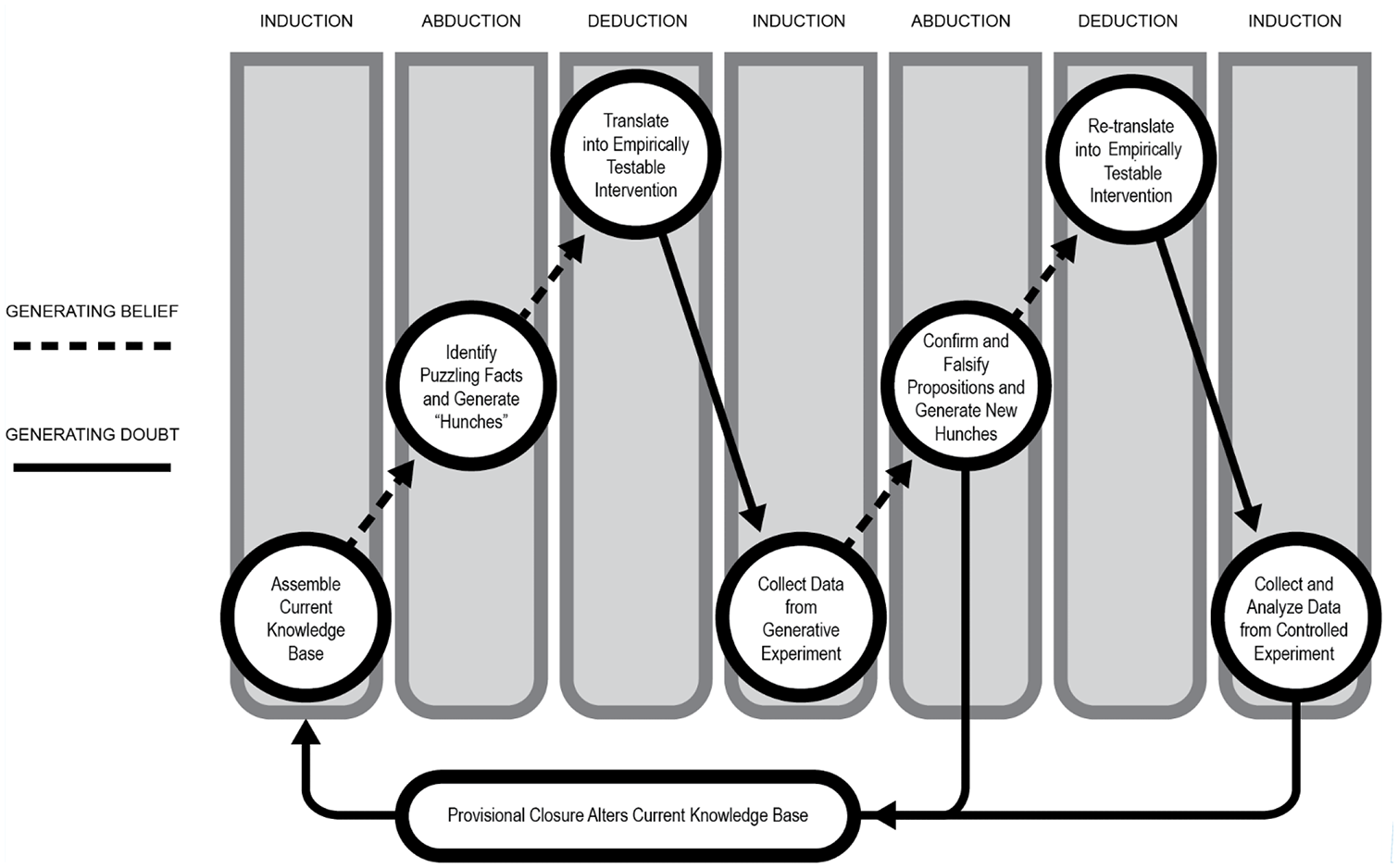

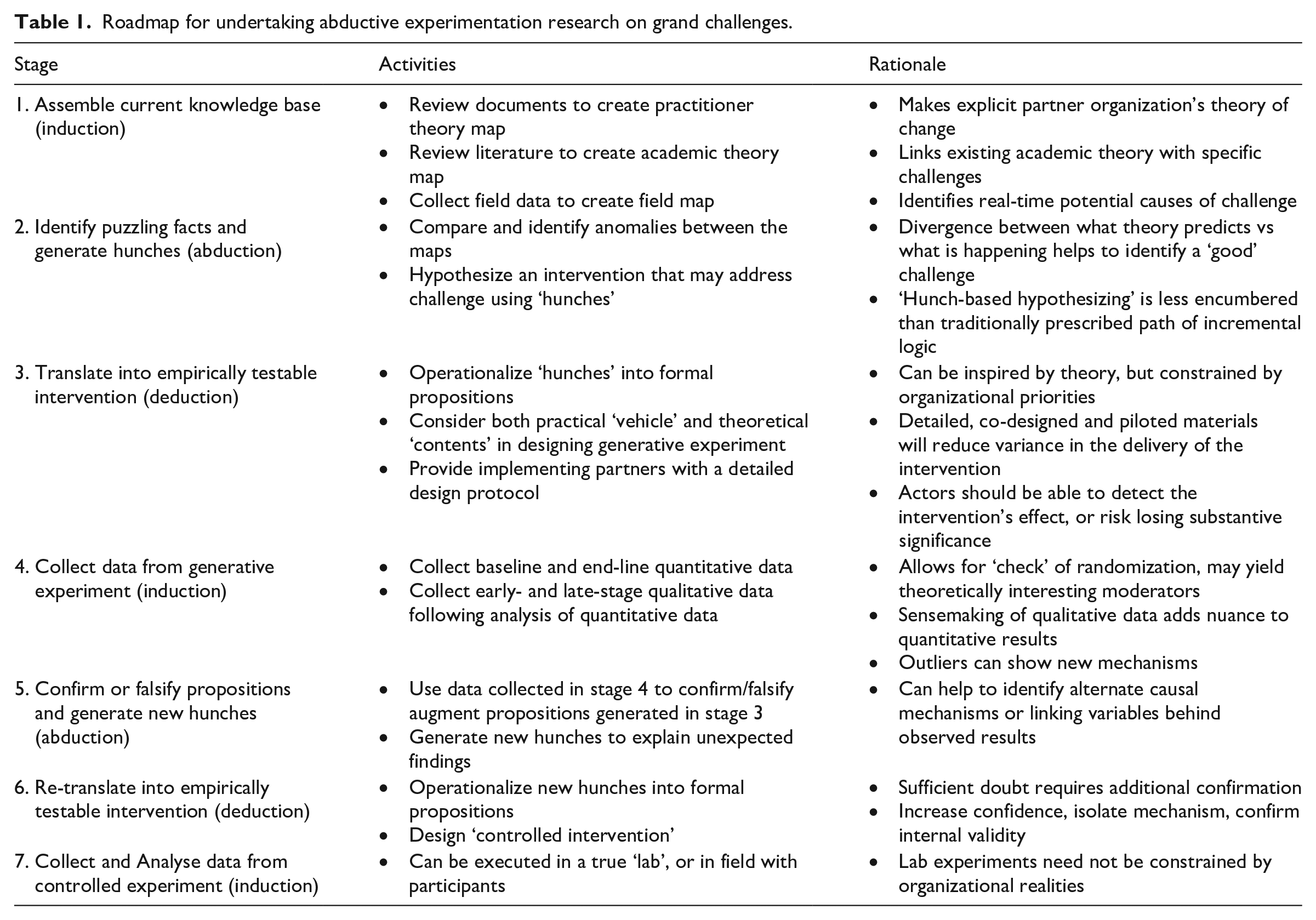

The following section presents an overall processual roadmap of abductive experimentation as a method of inquiry for researching grand challenges (see Figure 1), as well as a detailed description of each stage within the process (see Table 1). To help illustrate each of these stages, we use examples of grand challenge projects we have undertaken in the past decade using abductive experimentation – some as running examples continuing across several stages of the research process, and others as more ancillary examples at a single stage. Our own experiences have largely involved research in partnership with organizations using market-based approaches to alleviate global poverty. While we draw predominantly from our own focus on poverty alleviation, we would contend that the roadmap is also appropriate for other types of grand challenge research.

Figure 1. The abductive experimentation model of inquiry.

Table 1. Roadmap for undertaking abductive experimentation research on grand challenges.

Before delving into the process of abductive experimentation, it is important to discuss setting the boundaries of subsequent inquiry. Decisions must be made regarding the specific set of collaborators that will participate within the study – what Dewey (1916) termed the ‘community of inquiry’. In keeping with traditions of other early pragmatists (i.e. Addams, Mead and Follett), this process should involve seeking out ‘practitioner’ organizations as research partners who possess their own set of unique skills, knowledge and preferences (Simpson & den Hond, 2022). It is important to seek out practitioner organizations that present an opportunity for active collaboration for identifying and testing potential solutions to current challenges rather than an arm’s-length engagement whereby the perceived role of the academic team is to provide third-party validation of existing activities.

Once one or more viable research partnerships have been identified, discussions must occur surrounding specific challenges or problems that could be the focus of subsequent inquiry. Within our own research, we typically initiate conversations with potential partners by asking ‘What are the problems or challenges that are keeping you awake at night?’ The purpose of using this colloquial phrasing is to solicit the most pressing and current practical problems faced by their organizations. It is important that the practitioner partner sees the potential collaboration as an opportunity to work with an academic team on current issues, rather than simply serving as a case study for something that happened in the past (Tavory & Timmermans, 2014). It is also in keeping with a pragmatic approach to inquiry that recognizes that the meaning of a particular problem needs to be understood within its social context (Morgan, 2014).

Because experimentation forms the pillar of the abductive process we put forward, it is important that the scope of a particular challenge or problem that is discussed allows for sufficient statistical power to evaluate the efficacy of potential interventions (Cashen & Geiger, 2004). While of course the precise calculation of sufficient ‘size’ is subject to a number of factors, a minimum sample of 60 ‘subjects’ (30 per cell) is used as a rule of thumb (Cohen, 1988). While this is a minimum, we do not often work with samples that are significantly larger than this, either. The reason for this is an ethical one: research-based field experiments involving grand challenges are often piloting a new and as-yet untested intervention – requiring that we take particular care in assembling a sample that represents the minimum size necessary for statistical analysis. At the conclusion of initial discussions among the research team, it is hoped that there has been some degree of consensus surrounding the top two or three challenges that the group is interested in pursuing further. The challenge then becomes how to narrow even further to a single challenge as part of the process of problematization.
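To make the 30-per-cell rule of thumb more concrete, the following Python sketch approximates the statistical power of a two-cell comparison of means. It is a rough illustration rather than the calculation used in our projects: the function name `approx_power` is hypothetical, the effect sizes of 0.2, 0.5 and 0.8 are Cohen's conventional small, medium and large benchmarks rather than values taken from any study described here, and the normal approximation to the t-test is slightly optimistic at small sample sizes.

```python
from math import sqrt
from statistics import NormalDist


def approx_power(effect_size_d: float, n_per_cell: int, alpha: float = 0.05) -> float:
    """Approximate power of a two-sided test comparing two equally sized cells.

    effect_size_d is the standardized mean difference (Cohen's d).
    Uses a normal approximation, so results are indicative only.
    """
    z = NormalDist()  # standard normal distribution
    z_crit = z.inv_cdf(1 - alpha / 2)
    noncentrality = effect_size_d * sqrt(n_per_cell / 2)
    # Probability of rejecting the null hypothesis in either tail.
    return z.cdf(noncentrality - z_crit) + z.cdf(-noncentrality - z_crit)


# Assumed benchmark effect sizes, evaluated at 30 subjects per cell.
for d in (0.2, 0.5, 0.8):
    print(f"d = {d}: approximate power = {approx_power(d, 30):.2f}")
```

Under these assumptions, 30 subjects per cell yields high power only when the intervention effect is large, which is one reason the precise calculation of sufficient sample size depends on the factors noted above.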

Stage 1: Assemble current knowledge base (induction)

The abductive experimentation research process begins with an inductive phase of collecting information on each of the shortlisted challenges. In essence, the collection of such information serves to ‘test’ the extent to which one or more hypothesized challenges are in fact significant challenges worthy of further study (Sætre & Van de Ven, 2021). To do so requires the compilation of three different ‘maps’: a ‘practitioner theory map’, an ‘academic theory map’ and a ‘field map’.

It is important to note that in the process of abductive experimentation we put forward, the notion of both academic and practitioner ‘theory’ plays a significant role in capturing pre-existing ways of thinking or ‘historical habits’ (Simpson & den Hond, 2022). Academic theories (e.g. institutional theory) represent a rich archive of thoughts and beliefs surrounding a particular outcome of interest (Whetten, 1989). While often viewed as ‘general rules’, academic theories also provide a mediating role that serves to help organize the contextual experiences of other researchers into a common language (Weick, 1995), and can thus serve as a source of important information for understanding a diverse set of phenomena (Van de Ven, 1989). At the same time, practitioner theories – particularly within the context of addressing grand challenges (e.g. theory of change) – provide rich representations of historical beliefs on the part of an individual or organization. While not necessarily sharing a common vernacular, we argue that both types of theory are incredibly important in drawing conclusions surrounding what could be considered ‘unusual’ to a community of inquiry.

Practitioner organizations involved in grand challenge activities often have an explicit ‘theory of change’ (Van der Byl, Slawinski, & Hahn, 2020). Such theories outline a series of causal linkages between the ‘inputs’ on the part of the organization and the subsequent ‘outputs’, ‘outcomes’ and ‘impacts’ they anticipated when they originally designed each intervention. In addition to collecting data on such practitioner theories, we strongly suggest the research team reviews other early-stage project-related archival documents to augment the explicit theory of change. Such additional data can serve to add greater depth to the pre-existing assumptions of each practitioner theory with respect to the anticipated root causes surrounding the specific challenge addressed, anticipated mechanisms of change, and anticipated situational or contextual factors (Turner, Cardinal, & Burton, 2017). The end result should be a detailed ‘practitioner theory map’ for each of the shortlisted problems.

Correspondingly, members of the research team should seek to align salient aspects of each of the shortlist of challenges to higher-level constructs within academic theories. This process will serve to link existing academic theory (which can be stated in more abstract terms) with the specific challenges (Shapiro, Kirkman, & Courtney, 2007). As a primary example, one of our research projects involved working with an organization seeking to provide rural Guatemalans with access to subsidized socially valuable products (e.g. eyeglasses, solar lamps, water purifiers), but was struggling to achieve the desired impact. One of the primary causes of this appeared to be that the local agents they were using to distribute the products lacked the motivation to sell and care for the products they received on consignment. Such issues are related to constructs such as ‘opportunism’ and ‘motivation loss’ within agency theory that have been shown to occur when ownership and control are separated. In another example, a non-profit organization we worked with in Sri Lanka presented a challenge in which rural communities would initially commit human resources to construct a new school, but then unexpectedly fail to deliver on such commitments, thereby jeopardizing the ultimate completion of the project. This specific challenge is reflective of the construct of ‘hold-up’ within transaction cost economics at an abstract level.

Some scholars may question whether academic theory should be engaged with at all at this early stage of the inquiry process. To this more provocative question we suggest that the answer is ‘yes’, as theory can provide inspiration for what Alvesson and Kärreman (2007) have referred to as problematization:

to problematize means to challenge the value of a theory and to explore its weaknesses and problems in relation to the phenomena it is supposed to explicate . . . theory development is stimulated and facilitated through the selective interest of what does not work in an existing theory. (Alvesson & Kärreman, 2007, pp. 1266–1267)

Put simply, without theory, the researcher does not have anything against which to evaluate, or ‘break down’ (Alvesson & Kärreman, 2007), the puzzles, doubts or surprises that she encounters. Furthermore, as Ferraro et al. (2015, p. 369) state, ‘the problem-solving perspective of pragmatist philosophy starts from the idea that specific problems challenge existing knowledge and therefore provide critical learning opportunities’.

Once we have identified the academic theories that correspond to these key constructs, we turn to recent conceptual review articles on the associated literatures in academic journals such as Academy of Management Annals and Journal of Management, or recent empirical articles that employ meta-analyses (Hedges & Olkin, 2014) to provide an overview of academic thinking surrounding the relationship between constructs of interest. While it may seem somewhat rudimentary or superficial to look to ‘summary articles’ to obtain an understanding of existing academic knowledge and beliefs, this is actually very purposefully done. Such an approach serves to at least partially inhibit academic members of the research team from reaching for their favourite ‘theoretical hammer’ – from ‘blocking the path of inquiry’ – as they seek to meaningfully solve the challenge at hand (McGahan, 2007). Similar to the ‘practitioner theory map’, the task is then to construct an ‘academic theory map’ for each of the shortlisted practical problems. And again, similar to the practitioner map, each academic map should outline all known antecedents, mediators, moderators and dependent variables associated with the selected challenge. Taken together, these two maps seek to capture the historical knowledge base for the research team.
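One way to keep the practitioner and academic maps comparable is to record them in a common structure. The following Python sketch is a hypothetical illustration, not a template we prescribe: the `TheoryMap` class and its field names are assumptions introduced here, and the example entries are a simplified reading of the agency theory constructs mentioned in the Guatemalan project above.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TheoryMap:
    """Hypothetical record of one theory map for one shortlisted challenge."""
    challenge: str
    source: str  # e.g. "academic" or "practitioner"
    theory: str
    antecedents: List[str] = field(default_factory=list)
    mediators: List[str] = field(default_factory=list)
    moderators: List[str] = field(default_factory=list)
    dependent_variables: List[str] = field(default_factory=list)

    def unmapped_categories(self) -> List[str]:
        """Return the construct categories that have not yet been filled in."""
        categories = {
            "antecedents": self.antecedents,
            "mediators": self.mediators,
            "moderators": self.moderators,
            "dependent_variables": self.dependent_variables,
        }
        return [name for name, items in categories.items() if not items]


# Illustrative, partially completed academic map (entries are a simplification).
agency_map = TheoryMap(
    challenge="Local agents lack motivation to sell and care for consigned products",
    source="academic",
    theory="agency theory",
    antecedents=["separation of ownership and control"],
    dependent_variables=["opportunism", "motivation loss"],
)

# Lists the categories still to be populated from review articles and meta-analyses.
print(agency_map.unmapped_categories())
```

Recording both maps in the same form makes it easier to see which antecedents, mediators or moderators appear in one map but not the other, which can itself be a source of ‘surprise’.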

The final step in this stage of the abductive experimentation process is to actively engage in a field data collection exercise to create a current ‘field map’. Thus, as compared to the prior two maps, the ‘field map’ relies on real-time primary data that identifies the present-day salient triggers, antecedents or barriers that appear to be causing the shortlisted set of challenges. This field map should be constructed by interviewing a diverse set of stakeholders (employees, competitors, local government representatives, etc.), observing organizational practices and interactions, and collecting any recent project evaluation reports (Van de Ven, 2007).

In addition to acquiring information on the factors that may be causing the set of challenges, it is helpful to purposefully seek out anecdotal stories in which a particular challenge was somehow subdued or overcome (Miles & Huberman, 1994). This can be particularly helpful in pointing to new theories not typically associated with the problems, as well as new ideas for subsequent formal interventions. For instance, in the Sri Lankan project described previously where community members were failing to live up to their commitment to assist with school construction, we were originally viewing the problem solely through an economic lens – as a ‘hold-up problem’ whereby all contractual mechanisms prescribed by current theory had been ineffectual in that particular context. However, in the process of interviewing members of the field staff responsible for ensuring community commitments were kept, we were told a story about how a cell-phone picture of a nearby community’s more advanced construction progress had triggered immediate action from the community. This not only led us to subsequently explore an alternative theoretical lens (social interdependence theory), but ultimately led to our intervention comparing cooperative versus competitive approaches (as alternative interventions) for motivating groups in resource-scarce environments.

Stage 2: Identify puzzling facts and generate hunches (abduction)

Upon completing the mapping activities, the abductive process of identifying ‘anomalies’ between the three maps begins – particularly points of contrast between the current ‘field map’ and the practitioner/academic ‘theory maps’. Alvesson and Sköldberg (2009, p. 5) describe this as ‘eating into the empirical matter with the help of theoretical pre-conceptions’. The process of seeking out misalignment between the maps is akin to trying to solve a puzzle (Kovacs & Spens, 2005) or playing the role of a detective (Czarniawska, 1999). In other words, it is a tangled process of trying to piece together clues to identify which problem appears most ‘unusual’, and thus represents a significant opportunity for altering the current knowledge base. In keeping with the logic of pragmatism, we are not at this stage seeking to identify the more nuanced aspects of how the current causes of a particular problem may or may not match perfectly with pre-existing expectations (Farjoun, Ansell, & Boin, 2015), but rather to look for more significant ‘surprises’ (Peirce, 1903) or ‘doubts’ (Locke et al., 2008) that jump out.

In our experience, we have found the more significant surprises often come from seeing contrasts between the field map and the academic theory map, as academic theory maps are typically much more densely populated from prior studies involving hundreds of organizations as compared to the practice theory map that was constructed from the single organization partner. Again building upon our own experience, we have found that such ‘surprises’ typically fall into one of three categories: (1) existing theory does offer prescriptions for resolving the problem, but such prescriptions have not been explored within a specific context, and there are specific features of the context which suggest that prescriptions may diverge from existing causal relationships (most common); (2) existing theory (or theories) offer competing prescriptions for how best to solve a particular problem (somewhat common); or (3) existing theory does not provide any prescriptions for solving the problem (extremely rare). In terms of the most common types of misalignment between the practice theory map and the field map, these tend to fall into one of two categories: (1) the previously identified set of inputs and activities did not have the intended effect on the previously identified set of outcomes (most common); or (2) the previously identified inputs created unforeseen outputs or outcomes of either a positive or negative nature (somewhat common).

To illustrate this process of identifying misalignment between pre-existing expectations and the current state of affairs, we return to the example of our research project in Guatemala. Data collected from the field map suggested that not all of the socially valuable products were considered equal, and that it would be financially possible to have agents assume physical and psychological ownership over one of the products rather than continue to receive it on consignment. However, the academic literature on agency theory and identity theory offered competing prescriptions of a negative and a positive effect on overall performance respectively. Another example involved a recently completed research project in rural Ghana. The problem faced by the practitioner organization was that newly established producer cooperatives were experiencing extremely high levels of conflict as an unwanted and unanticipated outcome of their organizing activities. After documenting the information obtained from our initial round of data collection, we had noted in our field map that ‘authority’ appeared to be one of the primary triggers of conflict. However, comparing this relationship with that in our academic theory map showed a stark contrast on cooperative governance which had largely assumed ‘equality’ as a guiding principle, and thus failed to explain or predict how authority structures may influence cooperative functioning.

These examples illustrate the importance of mobilizing empirical ‘material’ as a ‘critical dialogue partner’ (Alvesson & Kärreman, 2007) – a metaphor which we agree works well in the attempt to understand novel phenomena that do not easily ‘fit’ with existing theoretical prescriptions. This lack of fit ‘between one’s encounter with a tradition and the schema-guided expectations by which one organizes experience’ (Agar, 1986, p. 21) is what we look for as we are examining our grand challenge phenomenon through various practical and academic theoretical lenses. This is arguably among the most challenging steps in the process, and as others have remarked, this approach does rely to some extent on ‘serendipity [and] the art of being curious at the opportune but unexpected moment’ (Merton & Barber, 2004, p. 210).

This process of contrasting ‘maps’ for each of the shortlisted challenges should ultimately lead to the choice of a single challenge. We recommend that the criteria applied in this selection process not be limited to identifying the challenge that represents the most ‘doubt’ or ‘puzzle’, but also weigh the potential for impact beyond the single organizational partner. In other words, the more prevalent the problem across multiple organizations focused on a grand challenge, the greater the potential contribution to practice and theory (Howard-Grenville et al., 2019).

Once a single challenge has been selected as the focus of inquiry going forward, the abductive process turns to hypothesizing one or more interventions that could address the unanticipated challenge. However, the process of hypothesizing using abduction tends to be much more creative than what non-pragmatist scholars would consider to be formal hypothesis development (Alexander, 1993). This is because hypothesizing using abduction is focused on generating ‘hunches’ by piecing together clues obtained during the process of collecting initial field data (Weick, 2005). In simple terms, constructing hypotheses using abduction involves a ‘what if’ approach to brainstorming potential solutions rather than using linear step-by-step logic development. While such brainstorming remains at least partially rooted within current knowledge, the process – which Weick (1989) refers to as ‘disciplined imagination’ – is less encumbered than the traditionally prescribed path of incremental logic.

Returning to the example of the sales agents within Guatemala, after reviewing the field data and competing prescriptions of agency theory and identity theory, the team began to brainstorm a potential ‘moderator’ that may serve to help reconcile this disparity. It was noted by one member of the team that there were two types of product sold by the agents – those that were related to vision (e.g. eye drops, glasses) and those that were not (e.g. solar lamps, water purifiers). We began to wonder whether or not the predictions of identity theory may hold for the similar products, but those of agency theory for the dissimilar products. Another example of such ‘disciplined imagination’ occurred in a research project we undertook within Ethiopia to address problems with an existing entrepreneurship training programme. The organizational partner delivering the original programme had complained that, despite its best efforts, entrepreneurs who had participated in their training programme continued to ‘copycat’ other businesses from their local community rather than come up with new business ideas. Through the process of overlaying our ‘maps’, we realized that their existing training was based almost exclusively on principles of opportunity discovery (Shane, 2000). However, recent debate within the entrepreneurship literature had highlighted another approach towards entrepreneurial action labelled ‘opportunity creation’ (Alvarez & Barney, 2007). But existing theory offered little prescriptive guidance as to whether an ‘opportunity discovery’ or ‘opportunity creation’ approach may lead to varying levels of novelty in ideation. In once again comparing our maps, we began to hypothesize contextual reasons why one may be more successful than the other.

When employing an abductive approach to hypothesizing, it is common to experience what some have called a ‘eureka moment’ when constructing potential hypotheses (Locke et al., 2008). From our own experience, we would agree that it is often through the tedious task of sifting between seemingly insignificant ‘clues’ that plausible alternatives emerge in a fairly impromptu fashion. However, we should also caution that it is common for feelings of ‘conceptual delight’ to quickly fade when practical realities are considered. The primary reason for this relates to ethics – the possibility that an intervention could result in unacceptable levels of risk to the organizational partner or its stakeholders (Ansell & Bartenberger, 2014). In such instances, it is imperative for the researcher to discard such ‘hunches’ as viable options for moving forward. A secondary practical impediment to what initially may seem like a good conceptual idea is the issue of effect size. This is not the same analytical concern as that associated with statistical power – the ability to detect an effect (Cohen, 1988) – but rather whether or not the intervention being considered will sufficiently ‘move the needle’. In other words, does the intervention have a sufficient level of practical significance to meaningfully address the problem at hand regardless of the potential for statistical significance?

Stage 3: Translate into empirically testable intervention (deduction)

Pragmatists often have an innate desire to test the efficacy of their hypotheses in real-world settings (Ferraro et al., 2015; Martela, 2015). However, an important part of this stage of inquiry is that initial ‘hunches’ must be logically operationalized as actual propositions. Such operationalization involves deductively specifying a cause-and-effect relationship between two or more variables that concretely capture this broad conjecture. Drawing again on Lorino’s (2018) example of a group sitting down for a picnic in the forest, simply hypothesizing that an observed flat stone might serve as a good table is insufficient – it is necessary to design one or more empirical tests of this ‘hunch’ by operationalizing one or more factors that would support this hypothesis (e.g. a bottle will stand stable on the rock). It is important to note that while non-abductive experiments would typically include a single intervention compared to a control group, the abductive experimentation process would typically involve comparing the efficacy of one intervention as compared to one or more other interventions to provide more ‘exploratory’ than ‘confirmatory’ power in its design.

For example, we undertook a second research project within Ghana looking to solve the problem of heightened conflict among members of newly formed cooperatives. Drawing upon our ‘maps’, we had formulated a hypothesis that the way in which the goals of the cooperative were framed might help reduce this conflict. This resulted in the direct comparison of a ‘promotion’ vs. ‘prevention’ framing in the design of the field experiment rather than a single treatment versus a control group. As another example, in the research project in Ethiopia we described earlier regarding the problem of entrepreneurs ‘copycatting’, our initial ‘hunch’ was that the existing training programme (based on opportunity discovery thinking) was anchoring the process of business ideation in market signals which were highly homogeneous, and as a result, if we could anchor the process within opportunity creation thinking, which is anchored in a more heterogeneous set of resources, the challenge would be partially addressed. However, this involved an extensive process of thinking through the detailed design of the intervention materials such that they accurately captured the implicit ends-based ‘opportunity discovery’ and means-based ‘opportunity creation’ principles, as well as the process for accurately capturing changes in ‘copycatting’. It also meant building formal logical arguments as to why a training based on one set of principles would be expected to reduce ‘copycatting’ as compared to the other, and what alternative factors may need to be considered in order to effectively test this argument.

Once the logic of the hypothesis has been deductively translated into propositions, the detailed design of the intervention begins (Ansell & Bartenberger, 2016). Within our work, the design of the field experiment is codified within a formal protocol document. This protocol should be co-created and approved by all members of the research team, and constitutes a comprehensive representation of the detailed process that will be used to test the propositions – to the point of containing a word-by-word script of the intervention activities if possible. Not only will this serve to explicate the logic and principles behind the activities to be undertaken but will also work to ensure all members of the research team are ‘on the same page’, so to speak. We have also found that providing ample opportunity for all members of the research team to comment on, pre-pilot and make modifications to materials involved in the intervention is critical. These materials may take the form of posters, charts, manuals, training or workshop curricula, diagrams, photos, sound or video recordings and props. The intervention may also consist of a range of these different forms, as we have found that building redundancy into the materials, in order to consistently reinforce the intervention throughout its duration, is critical, particularly in ‘noisy’ field environments where such grand challenge research typically takes place.

The intervention, of course, is part of the larger process of experimentation. As mentioned earlier, the process of abductive experimentation we put forward for addressing grand challenges is methodologically anchored in field experiments, as they provide an amicable balance between internal and external validity when attempting to determine causal relationships for highly complex phenomena (Aguinis & Edwards, 2014). While there are a multitude of other approaches available to researchers when considering how to formally test such propositions, we assert that it is highly constructive if such testing in the context of grand challenges is done by way of ‘active and deliberate interventions that are controlled’ (Brown, 2012, p. 295).

While we discussed much of the information on experimental design previously, a couple of points of emphasis are warranted. First, the field experiment employed at this stage of the process of inquiry is highly generative rather than affirmative (Ansell & Bartenberger, 2016). Thus, its purpose is as much to create additional doubt as it is to confirm prior beliefs. However, we do seek out randomization to the greatest extent possible, as a means of minimizing concerns about omitted variable bias (Eden, 2017). This is especially important within grand challenge research as the study participants may be concurrently subject to other unknown interventions from other organizations that may significantly alter the outcomes of interest.

Second, from a measurement standpoint, it is also highly desirable to design the field experiment such that mediation or ‘mechanism’ can be tested (Van de Ven & Huber, 1990). While tests of mediation within social settings are frequently partial rather than complete (Maxwell, Cole, & Mitchell, 2011), the ability to empirically isolate the mechanism linking the intervention(s) to the outcomes of interest helps contribute a great deal to disentangling the myriad factors at play within complex grand challenge phenomena. Additionally, mediators represent ‘disaggregated dependent variables’ (Ray, Barney, & Muhanna, 2004) which are even more closely tied to the interventions, and thus useful in understanding their specific impact on behaviour or psychological states. As an example, we undertook a research project in Tanzania that, much like the project described previously in Ethiopia, was trying to refine an existing entrepreneurship training to encourage greater experimentation and differentiation in participants’ subsequent business endeavours. We designed a new training module based on Dweck’s (2015) concept of ‘growth mindset’ that had been explored primarily in the field of education. As a means of explicitly testing ‘why’ the new module might lead to changes in entrepreneurial behaviour, we constructed a measure of ‘entrepreneurial self-efficacy’ as a mediating variable.
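To make the logic of testing a mechanism more concrete, the following minimal Python sketch decomposes a total effect into an indirect path (through a single mediator) and a direct path, using the familiar product-of-coefficients approach. It is offered as an illustration under stated assumptions rather than as a description of the analysis used in any of the projects above: the function names and all data values are hypothetical, there is one binary treatment and one mediator, and no statistical inference is performed (a real analysis would typically add covariates and, for example, bootstrapped confidence intervals for the indirect effect).

```python
from statistics import fmean


def slope(x, y):
    """OLS slope of y regressed on a single predictor x (with intercept)."""
    mx, my = fmean(x), fmean(y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    return sxy / sxx


def residuals(x, y):
    """Residuals of y after removing its linear dependence on x."""
    mx, my = fmean(x), fmean(y)
    b = slope(x, y)
    return [yi - (my + b * (xi - mx)) for xi, yi in zip(x, y)]


def mediation_paths(treatment, mediator, outcome):
    """Return the a, b, indirect (a*b), direct (c') and total (c) effects."""
    a = slope(treatment, mediator)        # treatment -> mediator
    total = slope(treatment, outcome)     # treatment -> outcome (total effect)
    # b: mediator -> outcome holding treatment constant (partialling out treatment)
    b = slope(residuals(treatment, mediator), residuals(treatment, outcome))
    # c': treatment -> outcome holding the mediator constant
    direct = slope(residuals(mediator, treatment), residuals(mediator, outcome))
    return {"a": a, "b": b, "indirect": a * b, "direct": direct, "total": total}


# Hypothetical data: 0 = existing training, 1 = new training module.
treatment = [0, 0, 0, 0, 1, 1, 1, 1]
mediator = [2, 3, 3, 4, 4, 5, 5, 6]   # e.g. a self-efficacy score
outcome = [1, 2, 3, 3, 4, 4, 6, 6]    # e.g. a behavioural outcome score

paths = mediation_paths(treatment, mediator, outcome)
for name, value in paths.items():
    print(f"{name}: {value:.2f}")
```

In this decomposition the total effect equals the direct effect plus the indirect effect. A direct effect that remains clearly non-zero alongside a non-zero indirect effect corresponds to the ‘partial’ rather than ‘complete’ mediation discussed above.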

There are certainly instances in which the assembled knowledge base that forms the foundation of the intervention(s) is insufficiently developed to clearly identify mechanisms a priori. In such instances, the most pertinent mediating variable(s) can be identified subsequently through qualitative data collection, and potentially validated through controlled experimentation, which we will discuss in turn.

Stage 4: Collect data from generative experiment (induction)

Stage 4 is again an inductive stage in the research process whereby new data is collected in order to explicitly test the translated propositions and further problematize cause-and-effect relationships (Sætre & Van de Ven, 2021; Tavory & Timmermans, 2014). As stated previously, we find a mixed methods design – collecting both quantitative and qualitative data at this stage – to be compatible with our process for two primary reasons. First, the study of complex phenomena such as grand challenges is well suited to the rigour and richness afforded by collecting multiple types of data (Aguinis et al., 2019; Glynn et al., 2000). Second, it is compatible with pragmatism’s view of quantitative and qualitative methodologies as tools of inquiry, to be employed in the service of gathering insight into the practical matter, rather than its ontological alignment (Guba & Lincoln, 2005; Morgan, 2007; Shah & Corley, 2006). Deviating from common associations of induction with qualitative methodologies, pragmatism suggests that induction can occur through both qualitative and quantitative forms of data.

While it is well beyond the scope of this article to provide detailed prescriptions for both quantitative and qualitative data collection, there are several important components we wish to highlight when using this methodology as part of a larger process of inquiry for grand challenges. While there are many different ways to structure the points of quantitative data collection within field experiments (Kirk, 2012), we have found that collecting baseline data (where feasible) is particularly important when engaging in grand challenge research. First, collecting dependent variable or ‘outcome’ measures just prior to the launch of the experiment allows for a subsequent evaluation of randomization (Bruhn & McKenzie, 2009). Practical constraints often result in smaller sample sizes than desired, and ensuring there are no statistically significant differences between the intervention groups provides increased confidence in ruling out alternative plausible hypotheses. Second, collecting baseline measures allows for the evaluation of the change resulting from the intervention within participants randomly assigned to different conditions as well as between conditions (Shadish et al., 2002). This is especially important when outcome measures may be cyclical in nature. For example, within our Guatemala project, the sales of different products within different regions of the country fluctuated at different points in the year. Collecting baseline sales data prior to the commencement of the field experiment allowed us to directly compare ‘within-agent change’ from comparable periods from the prior year.
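The two uses of baseline data described above can be sketched in a few lines of Python. The sketch is illustrative only and rests on assumptions of our own: the function names and sales figures are hypothetical, there are only two conditions, and the balance check reports a Welch t statistic without a p-value. It shows (1) a check that baseline outcomes are similar across conditions, and (2) a comparison of within-participant change between conditions.

```python
from math import sqrt
from statistics import fmean, variance


def welch_t(group_a, group_b):
    """Welch's t statistic for a difference in means (unequal variances)."""
    se = sqrt(variance(group_a) / len(group_a) + variance(group_b) / len(group_b))
    return (fmean(group_a) - fmean(group_b)) / se


def mean_change(baseline, endline):
    """Average within-participant change (endline minus baseline)."""
    return fmean(e - b for b, e in zip(baseline, endline))


# Hypothetical sales per agent for the same period in consecutive years.
baseline_a, endline_a = [10, 12, 11, 13], [14, 15, 15, 18]   # condition A
baseline_b, endline_b = [11, 12, 10, 13], [12, 13, 10, 15]   # condition B

# (1) Randomization check: a t statistic near zero suggests balanced groups.
balance_t = welch_t(baseline_a, baseline_b)

# (2) Within-participant change per condition, and the difference between them.
change_a = mean_change(baseline_a, endline_a)
change_b = mean_change(baseline_b, endline_b)
difference_in_change = change_a - change_b

print(balance_t, change_a, change_b, difference_in_change)
```

Comparing change scores rather than raw endline values is one simple way to account for cyclical outcomes, since each participant is compared against their own comparable prior period.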

Collecting baseline quantitative data also allows for the collection of a host of potentially relevant moderating variables or ‘contextual factors’ (Busse, Kach, & Wagner, 2017) within grand challenge research. While such factors may not always appear to be conceptually ‘interesting’ from an academic theory perspective, they provide a meaningful and important level of detailed information for understanding how to potentially replicate and scale the grand challenge intervention more broadly. For example, while subsequent statistical analyses may reveal that males reacted differently to a particular intervention than females – and this finding may not be overly interesting from an academic theory perspective – such information is crucial for customizing future efforts in addressing the grand challenge problem.

In addition to the collection of quantitative data we have described above, the methodological approach we put forward also advocates for the collection of qualitative data during this inductive phase. A pragmatist approach to inquiry recognizes that ‘means and ends are not always clearly determined prior to action’ (Ferraro et al., 2015). With respect to abductive experimentation, this means engaging in qualitative data collection with the specific intention of building upon the results from the field experiment through a sensemaking process rather than simply seeking to ‘confirm’ or ‘corroborate’ them. As Levy Paluck (2010) has argued, particularly in the context of grand challenges, merging quantitative field experiments and qualitative field interviews can be a particularly powerful mixed methods combination. Such methodological blending – with interviews occurring after the field experiment – can be critical in the process of discovering and building new knowledge (Van Maanen, Sørensen, & Mitchell, 2007; Weick, 2007).

The collection of subsequent qualitative data can also be very useful to illustrate specific examples of the empirically supported linkages (Creswell, Plano Clark, Gutmann, & Hanson, 2003), and to provide insight into the experiences of ‘outliers’. While such individuals may be in the minority as compared to the empirically derived ‘t-test of means’, a pragmatist process of inquiry purposefully seeks out unusual responses to interventions as an additional source of information to support or challenge prior understanding. Additionally, we purposefully select interviewees that are at least plus or minus one standard deviation from the mean within our field experiment results. Such participants are more likely to be able to meaningfully articulate their responses (and reasons for their responses) to the intervention they received. Finally, we seek to interview at least 15 to 20 participants per condition, and to ensure they represent the overall diversity (i.e. age, gender, geographic location) of participants within the field experiment.
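The selection rule described above (interviewing participants at least one standard deviation above or below the mean) can be expressed as a short Python sketch. The function name, participant identifiers and outcome values are hypothetical, and the sketch assumes that a single numeric outcome is used for selection; in practice the rule would presumably be applied within each experimental condition and combined with the diversity criteria noted above.

```python
from statistics import fmean, stdev


def select_interviewees(outcomes, threshold_sd=1.0):
    """Return ids of participants at least threshold_sd SDs from the mean."""
    values = list(outcomes.values())
    mean, sd = fmean(values), stdev(values)
    return sorted(
        pid for pid, value in outcomes.items()
        if abs(value - mean) >= threshold_sd * sd
    )


# Hypothetical outcome scores for the participants in one condition.
condition_outcomes = {"p1": 2, "p2": 5, "p3": 5, "p4": 5, "p5": 8}

candidates = select_interviewees(condition_outcomes)
print(candidates)
```

With these illustrative values, only the two participants furthest from the mean ("p1" and "p5") meet the threshold and would be shortlisted for interview.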

We have also found it extremely valuable to collect both early-stage and late-stage qualitative responses to interventions (Keppel, 1991). Doing so provides important insight into the trajectory and undulations of the participants’ response to the grand challenge intervention. In this way, collecting stories from field experiment participants at different points in time can augment the ‘snapshots’ provided by quantitative data by showing the lived experiences of participants. Returning again to the research project involving the social enterprise within Guatemala, the specific intervention that was designed involved having all agents purchase the gotas or eyedrops in cash up-front while continuing to receive all other products on consignment. While the intervention was ultimately successful in mitigating the organization’s problem in the long term, the salespeople reacted negatively in the short term before they became accustomed to the new model. Having the ability to anticipate (and potentially mitigate) such short-term negative reactions is important for scaling such efforts further.

Stage 5: Confirm or falsify propositions and generate new hunches (abduction)

Armed with new data, we are now able to begin the process of using the data collected in stage 4 to confirm or falsify the propositions that we deductively generated in stage 3. It is also during this additional abductive phase in the process that we seek to generate new hypotheses or ‘hunches’ to explain unexpected findings from the field experiment. Returning to Lorino’s (2018) example – if we found that the bottle would not stand stable on the rock, or stood stable only under certain conditions, what hunches might we have as to why that may have been the case? Indeed, from our own experience, it has been common to find some unexpected ‘surprises’ in our empirical results. Such ‘surprises’ typically come in one of three forms: (1) a non-significant finding; (2) a significant finding but in the opposite direction than predicted; and/or (3) unanticipated moderating or ‘contextual’ factors. As an example, one of our biggest surprises occurred in the Sri Lanka school construction project described earlier. Triangulating our three ‘maps’ had indicated that the level of familiarity between communities building their respective schools may affect the extent to which competition or cooperation between such communities was more effective for increasing motivation. While the statistical results of our field experiment did indeed confirm that familiarity was important, it was in the inverse direction from what we had guessed would happen. The findings from our qualitative data provided important clues as to why this may have been the case – in other words, it started the process of again generating new ‘hunches’ and beliefs.

Even when the statistical results from the field experiment confirm the set of propositions, there may be a significant opportunity for generating new beliefs. As mentioned previously, there are often multiple mechanisms linking variables of interest within grand challenge research rather than a single reason ‘why’. Even in cases where mediation is both measured and statistically supported within a field experiment, the support is likely to be for partial rather than complete mediation (Kenny, 2008). Therefore, conducting follow-up interviews with participants within the field experiment can help to identify other reasons at least partially responsible for the linkages between the interventions and associated outcome variables. For instance, in the project described earlier that we undertook in Ghana comparing flat versus hierarchical formal structures for reducing conflict within cooperatives, we had hypothesized a significant moderating role of informal structures. Our follow-up interviews with respondents revealed that informal structures affected the main interventions not only for the reason we had hypothesized but also for another potentially important reason that we had failed to consider.

At the same time, conducting follow-up interviews can sometimes suggest alternative mechanisms behind the observed statistical results which directly challenge rather than augment the field experiment findings. For example, within the Guatemala project using rural sales agents, our interviews revealed that in addition to the identity-based mechanisms we had proposed as the link between increased product ownership and effort, there were potentially ‘logistical’ reasons for the effect we had observed for related products – that participants may have engaged in more cross-selling for financial reasons rather than identity reasons. In such instances, it may be necessary to proceed with further – more controlled – experimentation to garner more confidence in the set of beliefs.

Stage 6: Re-translate into empirically testable intervention (deduction)

In accordance with the philosophy of pragmatism, there is never a permanent resolution – with no need for further revisions – to questions that occur in a reality that is socially constructed and therefore continually evolving (Morgan, 2014). Despite this, a pragmatist perspective does involve ‘provisional forms of closure to steer through actual debates and solve actual problems’ (Prasad, 2021, p. 5). Therefore, the abductive experimentation process we put forward ends not when all doubt has been extinguished, but when ‘provisional closure’ has been realized. The majority of grand challenge research projects we have undertaken using an abductive experimentation approach have in fact reached provisional closure at the end of stage 5. We have generally found that sufficient information has been obtained by that time to provide meaningful insight to our project partners, as well as findings of sufficient rigour and substance for crafting an academic manuscript suitable for peer-based review. However, there are times when there is still sufficient doubt that it may be necessary to undertake a second experimental study to provide additional confidence (Falk & Heckman, 2009). In such cases – much in the same way we described in stage 3 and will therefore not repeat – it becomes necessary to return to the deductive process of translating the new hunches generated in stage 5 to empirically testable propositions.

At this stage however, we have found that there is generally enough information to shift the experimental approach from ‘generative experimentation’ to ‘controlled experimentation’ (Ansell & Bartenberger, 2016). More specifically, a laboratory experiment can be a useful tool for providing greater internal validity regarding one or more causal relationships brought to light during the course of the research project, without needing to undertake an additional field study (Colquitt, 2008). This may involve simply removing the ‘noise’ that may have been present within the field experiment in order to isolate and ‘re-test’ an unexpected finding. However, executing a laboratory experiment can be particularly helpful in further isolating mechanisms uncovered in the qualitative research that were not tested in the field experiment.

As described in the Guatemalan example above, the combined quantitative and qualitative data collected from the field study suggested that significant ‘doubt’ remained as to whether or not the mechanism we had proposed was indeed responsible for the outcomes we had observed. We therefore designed a second experiment involving the field study participants whereby we created a laboratory environment within Guatemala. The laboratory study was designed to explore (1) whether our proposed intervention indeed triggered a change in identity within the agents, and (2) whether or not the new identity was responsible for changes and differences in their subsequent behavior. This involved designing a whole new intervention that used a different set of products but adhered to the same constructs and principles as the prior field experiment.

Another example of the need to continue the abductive experimentation process involved a large United States social enterprise that, despite its new efforts to promote its poverty alleviation mission internally, continued to experience extremely low levels of prosocial motivation. We subsequently designed and field tested a new set of intervention videos that differed in terms of both their level of beneficiary salience and direction of social comparison using a 2 x 2 design, with compassion and envy acting as mediators of higher or lower levels of resulting prosocial motivation. The field experiment produced inconsistent statistical results, and the qualitative interviews suggested that contextual factors had an unanticipated ‘restriction in range’ effect. As a result, we proceeded to deductively design an online laboratory experiment to test essentially the same set of propositions, but with a different population and governance structure to explore the boundary conditions of our beliefs.

Stage 7: Collect and analyse data from controlled experiment (induction)

As compared to the generative type of field experimentation undertaken in stage 4 of the process, the laboratory experiments are conducted in a much more controlled fashion (Ansell & Bartenberger, 2016). Also, in contrast to the inductive data collection that occurred in stage 4, we have historically collected only quantitative data after conducting the laboratory experiment, as its primary goal at this stage in the process is to confirm or deny a formal proposition rather than to generate additional doubt. Because these two inductive stages are otherwise similar, we will not go into additional detail on data collection.

Needless to say, undertaking an additional laboratory experiment can be fairly costly in terms of both time and money (Collins, Dziak, & Li, 2009). For example, conducting the second experiment within Guatemala required multiple members of the research team to undertake a two-week research trip around the country, incurring a fairly sizable financial expense as a result. However, one must remember that the types of grand challenge being researched have important implications for society – and that insufficiently substantiated conclusions can be harmful to making progress (George et al., 2016). There are also the ethical implications of allowing a newly designed intervention to scale up and expand without sufficient information to ascertain its efficacy (Ansell & Bartenberger, 2014). Thus, we would argue that the design and implementation of a second experiment, if warranted, is worth the extra investment.

Once provisional closure has been achieved – either at stage 5 or stage 7 – it is imperative for members of the research team to ensure the new knowledge that has been created is integrated into the pre-existing knowledge base. In academic terms, this often means constructing a manuscript for publication within an academic journal, presenting the findings at an academic conference, etc. However, as a means of further deconstructing the artificial divide between ‘academe’ and ‘practice’, we strongly suggest writing up the findings of the study using non-academic vernacular, and directly sharing the findings with other grand challenge organizations facing similar challenges. Such an integrated approach to altering the current knowledge base will help to improve both the practice theory map and academic theory map for other research teams seeking to use abductive experimentation in future projects.

Discussion

The topic of ‘grand challenges’ has served as a rallying cry among management scholars seeking to directly contribute knowledge to some of the world’s most pressing social and economic issues (Banks et al., 2016; George et al., 2016). While a number of methodologies can be used by interested scholars to research the topics of poverty alleviation or climate change, we have outlined a process of inquiry that we feel matches well with the unique characteristics of grand challenges (Eisenhardt et al., 2016; Ferraro et al., 2015), as well as the ethical and ‘consequentialist’ exigencies of the pragmatist philosophy. Indeed, according to a pragmatist perspective, the merit of any line of inquiry is evaluated first and foremost with regard to its social and pragmatic value, and the process we lay out is very much defined by its pursuit of desired ends rather than the abstract pursuit of knowledge (Morgan, 2007). Thus, it serves as a meaningful and consequential foundation for seeking solutions to grand challenges. The seemingly intractable nature of grand challenges also demands a more imaginative and unencumbered abductive process of inquiry, a process where hypotheses are expected to be bold and exploratory rather than incremental and logically infallible, and where theories and methods are viewed as part of a common toolkit for the purpose of solving real-world problems rather than being partitioned into ontological or dogmatic schools of thought. We hope that the ‘non-traditional’ process of inquiry we have laid out helps to at least partially fill the gap in prescriptive guidance for scholars interested in pursuing grand challenge research (Aguinis et al., 2019).

While the process of abductive experimentation we submit is very much aligned with prior work on abduction and pragmatism more generally, there are several ‘nuances’ we wish to highlight as a means of contributing to this rich tradition. First, by framing our proposed methodology in the context of addressing grand challenges, we have taken the perspective that unique insights from individual projects can be meaningfully aggregated among a larger set of partners. Some pragmatists may argue that such goals of aggregation are akin to a pursuit of ‘grand truth’ which is not in strict adherence to the traditional ‘fallibilist view of knowing’ (Simpson & den Hond, 2022). However, we would suggest that sharing new practices or ‘warranted assertions’ among multiple actors pursuing the same problem is simply a function of expanding the ‘community of inquiry’ (Dewey, 1916).

In much the same vein, it may seem somewhat unusual for a methodology rooted in pragmatism to purposefully remove ‘context’ through the potential inclusion of laboratory experiments within the process of inquiry. From a pragmatist perspective, all knowledge is considered both socially constructed and socially situated (Lorino, 2018; Tavory & Timmermans, 2014). While we certainly agree that it is futile to try and completely ‘decontextualize’ knowledge, we do see value in isolating cause-and-effect relationships as part of bringing provisional closure to an otherwise infinite and ongoing query. Weick used the notion of ‘small wins’ as a way of deconstructing significant social problems into smaller manageable pieces. Small wins are defined as ‘concrete, complete, implemented outcomes of moderate importance’ (Weick, 1984, p. 43), and thus represent a fairly high degree of certainty surrounding the relationship between sub-factors that affect a larger challenge to society. We believe the focused empirical examination on a small sub-set of factors provided through laboratory experimentation helps to achieve this degree of ‘concreteness’, and ultimately is more likely to lead to the increased sharing of such knowledge among the diverse set of members attempting to tackle a common grand challenge.

Something that is fairly unique to our abductive process of inquiry is the use of three formally constructed ‘maps’. While there are many different ways of playing the role of detective searching for clues as part of the process of abduction (Czarniawska, 1999), much of the prior literature lacks specific guidance on the process of generating ‘surprises’ – instead relying on ‘serendipity’ as a guiding principle (Merton & Barber, 2004). While we in no way wish to repress the creative process of abduction, we have found that the codification of both historical and present-day cause-and-effect depictions, and the act of overlaying such maps to help identify points of contrast, can be a helpful tool. In other words, we believe that this process of creating and comparing maps does not artificially bound avenues of inquiry, but rather is highly useful in ‘jumpstarting’ what can be an otherwise difficult and overwhelming process.

While the abductive experimentation process we propose is intended primarily for scholars studying grand challenges, we believe that such a process provides methodological insights for scholars more broadly attempting to undertake research that bridges the perceived gap between theory and practice (Doh, 2015; Polzer, Gulati, Khurana, & Tushman, 2009; Van de Ven, 2007). While the philosophy of pragmatism would contend that such dualisms are both unnatural and unproductive (Dewey, 1920; Simpson & den Hond, 2022), it is also true that many early-career professors are currently evaluated primarily on their ability to publish within academic journals rather than their ability to improve society (Prasad, 2021). Furthermore, publishing in top-tier academic journals typically demands both a significant contribution to academic theory and an incredibly high level of rigour in terms of data collection and analysis (Ployhart & Bartunek, 2019). The unfortunate result has been an increased perception of ‘practitioners’ as outsiders in the research process who might detract from the exclusive focus on academic theory, rather than seeing them as equal partners in the process of inquiry who bring their own unique knowledge set (Glynn et al., 2000; Hambrick, 2007; Miller, Greenwood, & Prakash, 2009). The abductive process we put forward attempts to provide a roadmap to eliminate the unnecessary dichotomy that has developed between ‘rigour’ and ‘relevance’, and to naturalize ‘the practitioner’s own discourse and an awareness of the inclusion of intended beneficiaries of interventions in culturally respectful ways’ (Aguinis et al., 2019).

In terms of rigour, we put forward a clearly structured research process that leverages qualitative data to both inform and interpret quantitative testing using experimentation – what many scholars consider to be the ‘gold standard’ of empirical design (Aguinis & Edwards, 2014). However, the iterative multi-stage nature of the abductive experimentation process allows for adherence to rigour without sacrificing the creative process of novel theorizing that is so important to making significant and rapid progress in addressing grand challenges. Through the constant interplay between data and concepts (Mantere, 2008; Van Maanen et al., 2007), imagination and creativity are brought to bear on the subject of doubt in order to make an ‘imaginative conceptual leap’, and a tentative hypothesis is formed, tested and potentially reformed. As top-tier academic journals increasingly push for rigorous empirics that also deliver consequential theoretical contributions, the use of abduction – grounded in part within existing academic theory – can be very helpful in generating ‘creative leaps’ that may ultimately constitute a significant contribution to such theory.

In terms of relevance, the process of abductive experimentation offers a methodological opportunity to directly and meaningfully work with those outside of academe, without sacrificing the ability to publish, jeopardizing tenure and promotions, or risking professional ostracism. Clearly this is not a demand-side problem: the vision statement of the Academy of Management reads: ‘We inspire and enable a better world through our scholarship and teaching about management and organizations.’ However, without a methodologically appropriate pathway, the supply of such work is lacking, as many scholars conclude that their passion for working on such ‘practically-driven’ challenges must be kept separate from their research destined for top-tier journals. It may be tempting for practice-driven scholars who do attempt to bridge this divide to view academic theory as a sort of ‘necessary evil’; in other words, to see academic theory as simply a legitimation tool for the purposes of publication that offers little use for solving problems in reality. From our own experience using abductive experimentation, we have found the opposite to be true. When gathering information about practical problems, academic theory often serves as an important tool for organizing such information into conceptually distinct buckets. While theory may not immediately provide a nuanced prescription for a particular practical issue, we have found it to be essential for determining what questions to ask as we move through the research process.