Abstract

Quantification of biological effects (cancer, other diseases, and cell damage) associated with exposure to ionising radiation has been a major issue for the International Commission on Radiological Protection (ICRP) since its foundation in 1928. While there is a wealth of information on the effects on human health for whole-body doses above approximately 100 mGy, the effects associated with doses below 100 mGy are still being investigated and debated intensively. The current radiological protection approach, proposed by ICRP for workers and the public, is largely based on risks obtained from high-dose and high-dose-rate studies, such as the Japanese Life Span Study on atomic bomb survivors. The risk coefficients obtained from these studies can be reduced by the dose and dose-rate effectiveness factor (DDREF) to account for the assumed lower effectiveness of low-dose and low-dose-rate exposures. The 2007 ICRP Recommendations continue to propose a value of 2 for DDREF, while other international organisations suggest either application of different values or abandonment of the factor. This paper summarises the current status of discussions, and highlights issues that are relevant to reassessing the magnitude and application of DDREF.

1. INTRODUCTION

1.1. Setting the scene

The International Commission on Radiological Protection (ICRP) is the leading organisation for developing recommendations on the protection of workers, the public, and the environment against exposures to ionising radiation. The radiological protection framework recommended by ICRP is based on more than a century of research on the biological effects of ionising radiation, the scientific results of which are reviewed regularly by major international organisations, such as the United Nations Scientific Committee on the Effects of Atomic Radiation (UNSCEAR).

Radiobiological studies at the molecular and cellular levels provide insights into the damage mechanisms that come into operation when cells or organisms are exposed to ionising radiation. Such studies have well-defined conditions and can investigate the influence of numerous parameters (e.g. dose, dose rate, degree of fractionation, radiation quality, environment, cell type and line, position in the cell cycle, and repair capacity) on the biological outcome. Indicators of cellular damage studied include DNA damage [e.g. through detection of phosphorylated histone H2AX (γH2AX) foci], induction of chromosome aberrations, gene alterations, and cell survival, and may also include non-targeted effects such as genomic instability, bystander effects, and adaptive response. Experimental studies on animals are another source of information. Animal studies enable direct investigation of biological effects, again taking into account various parameters such as dose, dose rate, radiation quality, and species (such as mice, rats, and dogs, and sometimes models incorporating human cell types, such as humanised mice). The detrimental outcomes considered may include general effects such as life shortening, but also more specific outcomes such as cancer incidence or mortality, which are already close to those relevant for humans after exposure to ionising radiation. Finally, epidemiological studies on human individuals exposed to ionising radiation provide an important source of information for radiological protection. For obvious reasons, these epidemiological studies do not have the advantageous features of the controlled experimental conditions found in studies on molecules, cells, or animals. Instead, epidemiological studies must deal with exposure situations as they occurred in the past [e.g. in epidemiological studies on the atomic bomb (A-bomb) survivors, nuclear workers, uranium miners, the Techa River population, Chernobyl clean-up workers, and medical cohorts], or as defined for other reasons (e.g. in studies on computed tomography exposures of patients, where the exposure scenario is controlled but defined by the need to obtain the best image with the lowest dose).

Among the parameters included in the current ICRP system of radiological protection, the dose and dose-rate effectiveness factor (DDREF) plays an important role. This is because the risks of solid cancer and leukaemia incidence or mortality obtained from the Life Span Study (LSS) on the A-bomb survivors serve as a major input for ICRP in defining dose limits, dose constraints, and reference levels for protection of workers and the public in planned, accidental, and existing exposure situations. However, the LSS only provides statistically significant results on radiation-induced solid cancers and leukaemia above whole-body doses of approximately 100 mGy, from exposures that occurred within a short time (seconds to minutes) and therefore involved high dose rates. It was thus considered that some adjustments to the derived LSS risk coefficients had to be made in order to make them applicable to the radiological protection setting, where lower doses and dose rates are typical.

1.2. History

The requirement of a dose and dose-rate adjustment was noted in the first report of UNSCEAR, published in 1958, where it was acknowledged that ‘effects of low radiation levels must be extrapolated from experience with high doses and dose rates’, and that ‘among other physical factors, distribution in time governs the effects of ionising radiation’ (UNSCEAR, 1958). For many years, however, data from the A-bomb survivors did not show sufficient statistical significance to quantify radiation-induced risk for cancer and leukaemia induction and mortality based on human data. It was only in 1977 that UNSCEAR first proposed a ‘reduction factor’ to compare effects from acute exposures to low-linear energy transfer (LET) radiation with those from fractionated or protracted exposures. The proposed range (2–20 for this factor) was deduced from animal experiments (UNSCEAR, 1977). Again based on animal data, the US National Council on Radiation Protection and Measurements (NCRP, 1980) coined the term ‘dose-rate effectiveness factor’ (DREF) and proposed values between 2 and 10. Finally, in its 1990 Recommendations, ICRP (1991) introduced DDREF and suggested a value of 2 for absorbed doses below 200 mGy, and for higher doses if the dose rate is less than 6 mGy h−1 averaged over a few hours.

For many years, the proposal of a DDREF value of 2 was generally accepted, and it was re-affirmed in 2007 when ICRP emphasised, in its latest recommendations, that this value ‘should be retained for radiological protection purposes’ (ICRP, 2007). However, around that time (in 2006), the Committee on the Biological Effects of Ionizing Radiation (BEIR VII) of the US National Academy of Sciences (NAS) came to a somewhat lower point estimate of 1.5 based on a combination of information from animal and human data (NAS, 2006). UNSCEAR (2006) suggested that DDREF should not be used at all, and a linear-quadratic (LQ) dose–response relationship should be relied upon instead to analyse the data from the A-bomb survivors. Since then, the trend has continued towards lower proposed values of DDREF. For example, the World Health Organization (WHO, 2013) used a value of 1 in its recent report on the health effects after the Fukushima accident, and the German Commission on Radiological Protection (SSK, 2014) stated recently that they no longer consider ‘justifications of the use of the DDREF in radiological protection as being sufficient’. A more detailed review on the historical development of DDREF is given in Rühm et al. (2015).

1.3. Methodological considerations

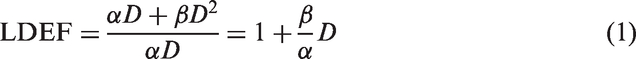

Although early radiation damage at the DNA level, such as the induction of DNA double-strand breaks (DSBs) visualised by γH2AX foci, often shows a linear dependence on dose after acute exposure to low-LET radiation, more complex damage such as chromosome aberrations usually shows an LQ dose–response relationship. The LQ model includes a term ‘linear in dose’ with a proportional constant

When a certain dose of low-LET ionising radiation is delivered in a number of fractions rather than acutely within one short fraction, the exposed cells might be able to repair the damage if there is sufficient time between two consecutive fractions. The dose–response of the second fraction may start in a similar way as that of the first fraction (i.e. with the assumed linear slope in the LQ dose model). In the limit of chronic exposure (which can be considered as an infinite number of short fractions with no breaks in between), the resulting dose–response curve is linear with a slope corresponding to the

Based on these and other considerations, ICRP had proposed to combine LDEF and DREF to one DDREF (see above). It is noted, however, that there are indications that the
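To illustrate the LQ considerations in this section, the following minimal sketch evaluates an LQ dose–response and the ratio between the full LQ response and its linear term at a given acute dose, which is one common way of expressing a low-dose effectiveness factor (LDEF). The function names and the coefficients `alpha` and `beta` are hypothetical and chosen purely for illustration; they are not estimates from this paper.

```python
def lq_response(dose_gy, alpha, beta):
    """Excess effect under a linear-quadratic model: alpha*D + beta*D**2."""
    return alpha * dose_gy + beta * dose_gy ** 2


def ldef(dose_gy, alpha, beta):
    """Ratio of the LQ response to its linear term at an acute dose.

    Algebraically this equals 1 + (beta / alpha) * D.
    """
    if dose_gy <= 0 or alpha <= 0:
        raise ValueError("dose and alpha must be positive")
    return lq_response(dose_gy, alpha, beta) / (alpha * dose_gy)


# Hypothetical coefficients (per Gy and per Gy^2), for illustration only.
ALPHA = 0.5
BETA = 0.25

for dose in (0.1, 0.5, 1.0, 2.0):
    print(f"D = {dose:.1f} Gy: LQ response = {lq_response(dose, ALPHA, BETA):.3f}, "
          f"LDEF = {ldef(dose, ALPHA, BETA):.2f}")
```

Under these assumptions, the factor depends on the dose at which it is evaluated and on the curvature ratio beta/alpha, and it approaches 1 as the dose approaches zero.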

2. MOLECULAR AND CELLULAR BIOLOGICAL CONSIDERATIONS

Among radiogenic diseases, cancers and hereditary effects are currently considered to be of most importance, and are included in the current ICRP approach to calculation of low-dose radiation detriment. However, in the future, this approach may have to extend to other conditions, such as circulatory diseases, if the risk at low dose becomes well established. Current evidence (e.g. UNSCEAR, 2010, 2012) places greatest emphasis on gene mutations and chromosomal aberrations arising from DNA damage as the main mechanism by which radiation exposure contributes to increase the incidence of cancers and hereditary effects. One prediction, which follows from the proposition that gene and chromosomal mutations are the main contributor to radiation carcinogenesis, is that DNA repair genes (and, particularly in the case of ionising radiation, DSB repair genes) will modify radiation cancer risk. There is evidence that genes such as

The information relevant for risk estimation at doses less than those where direct human evidence is available comes from studies on induction and repair of DSBs, gene mutations, chromosomal aberrations, and thresholds for cell-cycle checkpoint activation and apoptosis. The magnitude of DDREF values derived from chromosome aberration studies is not large, generally indicating values of approximately 4. There are sound data indicating that DNA damage responses and mutational processes operate at low doses (down to 20 mGy) and dose rates (down to 1 mGy day−1, equivalent to approximately 0.04 mGy h−1) as they do at higher doses and dose rates. There are, however, pieces of evidence that may indicate that responses over a wide range of dose are non-linear. For example, some studies have been interpreted to suggest that the formation of protein foci around DSBs may be supralinear at low doses (e.g. Beels et al., 2009, 2010; Neumaier et al., 2012). Furthermore, several studies have indicated that DSB repair as monitored by foci of chromatin proteins is slower or incomplete following low-dose exposures (e.g. Rothkamm and Löbrich, 2003; Grudzenski et al., 2010; Ojima et al., 2011). Some cell-cycle checkpoints have relatively high thresholds for activation. The G2/M checkpoint, for example, is not activated at doses below 200 mGy (low-LET exposure), and is estimated to require the presence of 10–20 DSBs for activation (Löbrich and Jeggo, 2007). At the molecular level, there has been much interest in patterns of gene expression at high and low doses and dose rates, and their similarity or difference. While there can be differences in gene expression following exposure at high and low doses and dose rates (e.g. Ghandhi et al., 2015), some genes respond over all doses and dose rates, notably p53-responsive genes (Manning et al., 2013, 2014; Ghandhi et al., 2015). 
It is therefore important to develop an understanding of how changes in gene expression relate to disease, especially as modifications are usually assessed within hours or perhaps a few days following exposure. While these studies indicate that the magnitude of any DDREF value is endpoint-dependent, and developing a value for use in general radiological protection is problematic and highly dependent on judgements on the processes critical for the development of cancer and mutations following radiation exposure, one may conclude that cellular data tend to support the application of a DDREF to estimate risk at low doses.

One critical point is that much time elapses between the induction of gene mutations/chromosomal mutations, alteration of gene expression, etc. and the clinical presentation of cancer. Many processes are likely to affect and modulate the development of disease following the early induction of mutations or other cellular/molecular alterations. Rarely is it possible to link early post-irradiation events to disease, although this may be possible in some animal models (e.g. Verbiest et al., 2015).

Considering the cellular and molecular data relevant to low-dose and low-dose-rate (LDLDR) risk extrapolation, it is concluded that key challenges remain to identify the biological mechanisms that lead to disease following radiation exposure, to understand their dose and dose-rate responsiveness, and to identify the processes that may modulate the rate and frequency of progression to clinically manifest disease. All of these factors will be relevant to evaluation of DDREF from a mechanistic perspective.

3. EVIDENCE FROM ANIMAL STUDIES

When NAS (2006) prepared their BEIR VII report, their analyses of animal data relied largely on the dataset produced at the Oak Ridge National Laboratory, TN, USA. However, that type of analysis can now be based on much larger datasets because databases for irradiated animal data (not used previously by the BEIR VII Committee) have been set up recently in the USA (Wang et al., 2010; Haley et al., 2011) and Europe (Gerber et al., 1996; Tapio et al., 2008; Birschwilks et al., 2011).

For example, a recent analysis included data from 28,289 mice in 91 treatment groups from 16 studies (Haley et al., 2015). Inclusion criteria were: external radiation exposures to low-LET radiation (either x rays or γ rays), with a range of dose rates from 0.001 Gy min−1 (and 16.6 mGy fraction−1) to 4 Gy min−1 (total dose of 1 Gy fraction−1); in all cases, the total dose was no more than 1.5 Gy, and there were at least three distinct treatment groups per stratum. It should be noted that while these total dose and dose-rate limits fit the BEIR VII conditions, other agencies have their own rules for DDREF application [for instance, UNSCEAR (2012) decided that DDREF was to be entertained only for total doses below 0.1 Gy and dose rates below 6 mGy h−1]. In all cases, digitised data on treatment and life span were confirmed by crosschecking with primary literature. In performing this analysis, it appeared that: (a) protracted exposures induce less risk for life shortening than acute exposures and to a larger extent than the value of 1.5 estimated by BEIR VII for DDREF would suggest; and (b) the LQ dose–response model that BEIR VII used did not fit the observed data. Instead, both acute and protracted exposures appeared to have approximately linear dose–responses at total doses between 0 and 1.5 Gy, albeit with different slopes [see Haley et al. (2015) for more details].
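The dose rates in this paragraph are quoted in different units (Gy min−1 for the animal data, mGy h−1 for the UNSCEAR criterion). The short sketch below converts the former to the latter so that they can be compared directly. The function and constant names are illustrative only.

```python
MGY_PER_GY = 1000.0
MINUTES_PER_HOUR = 60.0


def gy_per_min_to_mgy_per_hour(rate_gy_per_min):
    """Convert a dose rate from Gy per minute to mGy per hour."""
    return rate_gy_per_min * MGY_PER_GY * MINUTES_PER_HOUR


# Dose-rate range of the animal data and the UNSCEAR criterion quoted in the text.
LOWEST_RATE_GY_PER_MIN = 0.001
HIGHEST_RATE_GY_PER_MIN = 4.0
UNSCEAR_LIMIT_MGY_PER_HOUR = 6.0

for rate in (LOWEST_RATE_GY_PER_MIN, HIGHEST_RATE_GY_PER_MIN):
    converted = gy_per_min_to_mgy_per_hour(rate)
    relation = "above" if converted > UNSCEAR_LIMIT_MGY_PER_HOUR else "at or below"
    print(f"{rate} Gy/min = {converted:.0f} mGy/h ({relation} 6 mGy/h)")
```

On this conversion, 0.001 Gy min−1 corresponds to 60 mGy h−1, so both ends of the quoted dose-rate range lie above the 6 mGy h−1 criterion.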

Next, the animal dataset was altered to include some additional datasets with exposures as high as 4 Gy, matching the highest doses considered in some analyses of the LSS cohort data (Ozasa et al., 2012). Furthermore, for this work, only those datasets that included both acute and protracted radiation exposures, or protracted exposures at different dose rates, were selected. Thus, this second analysis included 11,528 mice in 115 treatment groups from eight studies. Using this dataset and a linear model that closely mirrors that used to estimate risk from the A-bomb survivor data, it transpired that: (a) protracted exposures induced approximately two-fold less risk of life shortening than acute exposures (specifically, DREF was estimated to be 2.1 with a 95% confidence interval from 1.7 to 2.7); (b) no evidence was found that DREF limited to a smaller total dose range (e.g. 0–3 Gy) would be significantly different (exclusion of animals or treatment groups that showed signs of tissue effects did not lead to significantly different outcomes of this analysis); and (c) life shortening associated with both acute and protracted exposures shows a linear dose–response relationship, but the slopes of these curves differ. Nevertheless, it is important to emphasise that although these linear models describe the data better than the LQ model, they are merely a convenient approximation.
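When both acute and protracted exposures follow linear dose–responses with different slopes, a DREF can be read as the ratio of the acute slope to the protracted slope. The sketch below shows this with a least-squares fit through the origin. The data points are synthetic, constructed to be exactly linear and to give a ratio of 2.1 for illustration; they are not taken from Haley et al. (2015), and the simple fit is an assumption, not the model used in that analysis.

```python
def slope_through_origin(doses, effects):
    """Least-squares slope of effect versus dose for a line through the origin."""
    sum_xy = sum(d * e for d, e in zip(doses, effects))
    sum_xx = sum(d * d for d in doses)
    return sum_xy / sum_xx


# Synthetic, exactly linear data (dose in Gy, effect in arbitrary units).
doses_gy = [0.5, 1.0, 2.0, 4.0]
acute_effect = [21.0, 42.0, 84.0, 168.0]      # slope 42 per Gy
protracted_effect = [10.0, 20.0, 40.0, 80.0]  # slope 20 per Gy

acute_slope = slope_through_origin(doses_gy, acute_effect)
protracted_slope = slope_through_origin(doses_gy, protracted_effect)
dref = acute_slope / protracted_slope

print(f"acute slope = {acute_slope:.1f}, protracted slope = {protracted_slope:.1f}")
print(f"DREF (ratio of slopes) = {dref:.2f}")
```

A real analysis would also need to propagate the uncertainty of both slopes into a confidence interval for the ratio.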

Together, these results demonstrate the need for a systematic analysis of as many animal datasets as possible, with varying dose ranges and dose-rate ranges, and for various sets of outcomes. Animals from different species should also be investigated in such analyses. The described results also demonstrate that the huge set of animal data that is now available will be a valuable source of information for the current re-evaluations of DDREF to be applied on human data from acute exposures, such as those from the A-bomb survivors.

4. EVIDENCE FROM EPIDEMIOLOGICAL STUDIES

Epidemiological studies of cancer risk after LDLDR radiation exposures complement the animal studies that compare effects of radiation exposures at high and low dose rates. Apart from studies on the A-bomb survivors of Hiroshima and Nagasaki, Japan, which represent high-dose-rate exposures (Ozasa et al., 2012; Hsu et al., 2014) (see also Section 4.3), cohorts with low-dose-rate exposure scenarios, typified by nuclear workers exposed chronically to radiation, have been studied intensively in the past. For example, a study was performed to combine data on nuclear workers from 15 countries worldwide (Cardis et al., 2007), while an updated follow-up of nuclear workers from three countries (USA, UK, and France) was published recently (Richardson et al., 2015; Hamra et al., 2016). Additionally, the workers exposed at Mayak Production Association (PA), the first Russian nuclear enterprise, and the population of the Techa River region, which includes individuals exposed to radiation due to radioactive releases from Mayak PA into the river, are also an important source of information on the influence of radiation dose and dose rate on health effects. Both of these cohorts have a number of key strengths such as: large size; long follow-up periods; individual estimates of doses from external and internal exposure; heterogeneity by sex, age, and ethnicity; and known vital status and causes of death. Moreover, for the majority of Mayak PA workers (approximately 95%), complete information on incidence and mortality, initial health status, and non-radiation factors is available. 
Results of epidemiological studies of these two cohorts performed over recent years provide strong evidence for increased risk of solid cancers (Schonfeld et al., 2013; Sokolnikov et al., 2015), leukaemia, and non-cancer effects associated with both external and internal radiation exposures over prolonged periods delivered at low dose rates.

4.1. Major cohorts for epidemiological LDLDR studies

Recently, a number of LDLDR epidemiological studies have provided risk estimates that can potentially be used to estimate DREF by comparing the quantitative risk estimates from LDLDR studies with matched LSS estimates of risk. Based on compilations from the literature, DREF is being evaluated from available LDLDR data for total solid cancer mortality, and for some major cancer subtypes such as breast, lung, colon, stomach, and liver. More recently, it was decided that, although the present review focusses on solid cancers, leukaemia will be included in the list of cancers to be studied in a later phase of the project.

As whole-body irradiation can potentially affect all organs, an analysis of total solid cancers (hereafter just ‘solid cancers’) provides an integrated estimate of radiation risk. As the total number of solid cancers will be much larger than that for any individual tumour site, it affords a risk assessment with greater statistical power and precision than assessments for individual organs.

For each type of cancer, a systematic literature search in PubMed was performed in August 2015, and supplemental reference ascertainment methods were also used to find primary epidemiological studies with dose–response associations covering the period January 1980 – June 2015. The new joint analysis of US, French and UK nuclear workers is also being included in the analysis (Richardson et al., 2015). Search results were limited to cohort or nested case–control studies on cancer risks associated with ionising radiation in environmental, occupational, or emergency situations. The final selection of studies also filtered out overlapping data in individual and pooled studies as far as possible, and used the most recent data available for each study. This comprehensive search for studies that had dose–response analyses of solid cancers (or of all cancers except leukaemia) was conducted to reduce/eliminate study selection bias in the risk comparison. Ecological studies (e.g. Tondel et al., 2011) or reports of only (or mostly) childhood cancers (e.g. Kendall et al., 2013; Mathews et al., 2013) were not included. For solid cancer mortality, 20 independent LDLDR studies with dose–response-based risk estimates have been identified to date, which represent approximately 960,000 individuals and over 17 million person-years of follow-up, a collective dose of 36,000 person-Sv, and 33,000 solid cancer deaths. All except four studies had mean doses below 50 mSv, and most were worker studies, other than two studies based on environmental exposures (Yangjiang, China and Techa River; Tao et al., 2012; Schonfeld et al., 2013). 
Exposures were to low-LET radiation, except for four studies which had both external γ exposures and significant high-LET internal exposures [Mayak and Rocky Flats plutonium workers (Cardis et al., 1995; Sokolnikov et al., 2015), and Port Hope and German uranium-processing workers (Zablotska et al., 2013; Kreuzer et al., 2015)], requiring the authors to statistically factor out the internal exposure contributions to risk. Miner studies were not considered due to the dominance of exposure to radon progeny and paucity of dose–response data for external exposure.
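The aggregate figures quoted above for the 20 solid cancer mortality studies can be cross-checked with simple arithmetic. The sketch below derives per-person averages from them. Because the source figures are rounded ("approximately", "over"), the derived values are only indicative, and the variable names are illustrative.

```python
# Aggregate figures as quoted in the text (rounded in the source).
individuals = 960_000
person_years = 17_000_000
collective_dose_person_sv = 36_000
solid_cancer_deaths = 33_000

mean_dose_msv = collective_dose_person_sv / individuals * 1000.0
mean_follow_up_years = person_years / individuals
death_fraction_percent = solid_cancer_deaths / individuals * 100.0

print(f"mean dose per person: about {mean_dose_msv:.1f} mSv")
print(f"mean follow-up per person: about {mean_follow_up_years:.1f} years")
print(f"solid cancer deaths: about {death_fraction_percent:.1f}% of individuals")
```

The implied average dose of roughly 37.5 mSv per person is in line with the statement that all except four studies had mean doses below 50 mSv.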

Of the 20 dose–response-based LDLDR studies, 11 had at least 250 solid cancer deaths. Nine of these 11 studies had positive risk coefficients, although only four were statistically significant in the positive direction; this is not surprising as individual LDLDR studies typically have low statistical power. It is noted that most of the studies assumed a linear dose–response relationship when deducing risk coefficients. Meta-analyses of the risk coefficients from the available studies are underway, comparing them with the A-bomb LSS risk coefficients for subsets of LSS individuals with comparable composition by sex, age at exposure, and age at observation. The overall results for mortality studies will be reported, augmented with sensitivity and methodological analyses, and analyses that include incidence data.
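Pooling risk coefficients from several low-powered studies is commonly done by weighting each estimate by the inverse of its variance. The sketch below shows a generic fixed-effect version of that approach with a Wald-type 95% confidence interval. It is an assumed, textbook method, not necessarily the one used in the meta-analyses described here, and the study estimates are hypothetical.

```python
import math


def pool_fixed_effect(estimates, std_errors):
    """Inverse-variance weighted mean and its standard error."""
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, estimates)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    return pooled, pooled_se


def wald_interval(estimate, std_error, z=1.96):
    """Wald-type confidence interval: estimate +/- z * standard error."""
    return estimate - z * std_error, estimate + z * std_error


# Hypothetical ERR per Gy estimates and standard errors for four studies.
err_per_gy = [0.9, 0.2, 0.6, -0.1]
std_errors = [0.6, 0.4, 0.5, 0.7]

pooled, pooled_se = pool_fixed_effect(err_per_gy, std_errors)
lower, upper = wald_interval(pooled, pooled_se)
print(f"pooled ERR per Gy = {pooled:.2f} (95% CI {lower:.2f} to {upper:.2f})")
```

The pooled standard error is smaller than that of any single study, which is why a combined analysis can detect an effect that individual studies cannot.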

Although an analysis of total solid cancer risk after LDLDR exposures provides a broad assessment of DREF, it may represent heterogeneous DREFs for various tumour sites. Radiation effects for tumour sites may differ because of biological diversity in genetic pathways, epigenetic influences, and tissue and metabolic co-factors. Various environmental or lifestyle risk factors may modify radiation risk for certain cancer types but not for others: for example, smoking effects may modify the radiogenic risk of lung cancer, and reproductive factors may modify the radiogenic risk of breast cancer. The impact of modifying factors may be dependent on dose. To get an overview of variations in low dose risk, LDLDR studies providing estimates for breast, lung, colon, stomach, and liver cancers were reviewed. Meta-analyses will be conducted for these sites to estimate DREFs, and examine the degree of variation in DREF among tumour sites. LDLDR epidemiological studies have various limitations as they are all observational and not experimental. For individual LDLDR studies, uncertainties and, possibly, bias may be contributed by factors such as dose uncertainties; incomplete cancer ascertainment; variations in health surveillance; and lack of information on lifestyle habits, occupational or socio-economic status, and potential disease risk factors. The meta-analysis estimate of DREF from LDLDR studies is not very precise. It nevertheless provides the most direct evidence regarding DREF for human radiation exposure, which is important because DREF represents an average for the human population that is highly heterogeneous with respect to innate susceptibility and exposure co-factors. Experimental studies typically do not mimic that heterogeneity. Ultimately, judgements regarding DREF will need to integrate information about associated biological mechanisms, experimental studies of dose and dose-rate factors in controlled animal experiments, and epidemiological data.

4.2. Methodology for meta-analyses to deduce DREF values by comparing cancer risks associated with fractionated and acute doses of ionising radiation

The purpose of the meta-analyses described here is to directly compare cancer risks associated with ionising radiation from two different non-medical radiation exposure modalities. The methodology used follows that described in Jacob et al. (2009). The exposures considered are either low or moderate cumulative doses (mostly below 100 mGy mean cumulative organ dose) delivered at low dose rates, or doses covering a wider range (under 4 Gy organ dose) delivered acutely. Whereas cancer risks associated with low or moderate doses from the fractionated exposure mode are the most relevant to modern radiological protection, there are well-established radiation-associated cancer risks for the acute exposure mode from the LSS on the cohort of the Hiroshima and Nagasaki A-bomb survivors. In order to quantify the overall differences in all solid cancer and site-specific cancer risks (lung, breast, stomach, liver, and colon) from these two exposure modalities, a meta-analysis has been initiated for each type of cancer, with cancer mortality and cancer incidence considered in separate meta-analyses.

For each of the studies in the final selection (Section 4.1), a set of information related to the radiation risks was extracted, i.e. type of dose reported (e.g. colon dose, skin dose, etc.), type of risk measure reported [usually the excess relative risk (ERR) per unit dose, or some measure that could be converted to ERR], proportion of males, length of follow-up, age at first exposure, and age at the end of follow-up. With this information, it was possible to compute ‘matching’ cancer risks in subcohorts of the A-bomb survivors with corresponding distributions of sex, age at exposure, grouping of cancer types, and length of follow-up.
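One way to express the comparison between an LDLDR study and its matched LSS subcohort is the ratio of the two ERR per unit dose estimates, whose reciprocal acts as a DREF-type factor. The sketch below computes that ratio with an approximate standard error from first-order error propagation (the delta method), assuming the two estimates are independent. The input values, the function name, and the use of the delta method are assumptions for illustration and are not taken from the studies discussed here.

```python
import math


def risk_ratio(err_ldldr, se_ldldr, err_lss, se_lss):
    """Ratio of LDLDR to matched LSS ERR per unit dose, with delta-method SE.

    Assumes independent estimates and a matched LSS estimate well away from zero.
    """
    if err_ldldr == 0 or err_lss == 0:
        raise ValueError("both ERR estimates must be non-zero")
    ratio = err_ldldr / err_lss
    relative_variance = (se_ldldr / err_ldldr) ** 2 + (se_lss / err_lss) ** 2
    return ratio, abs(ratio) * math.sqrt(relative_variance)


# Hypothetical ERR per Gy values: one LDLDR study and its matched LSS subcohort.
ratio, ratio_se = risk_ratio(err_ldldr=0.3, se_ldldr=0.15, err_lss=0.6, se_lss=0.1)

print(f"risk ratio (LDLDR / LSS) = {ratio:.2f} (SE about {ratio_se:.2f})")
print(f"implied DREF-type factor = {1.0 / ratio:.2f}")
```

A ratio below 1 would indicate a lower risk per unit dose for the low-dose-rate exposure than for the acute exposure, while a ratio compatible with 1 would indicate no detectable dose-rate effect.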

The ratio

The study is ongoing, and the final results obtained on total solid cancers and site-specific tumours will be published soon. A similar study is also currently underway for leukaemia.

4.3. Analysis of dose–response relationship among the A-bomb survivors to deduce LDEF values

The dose–response for most cancer sites in the LSS cohort is well described by a linear dose–response (Little and Muirhead, 1996, 1998; UNSCEAR, 2006; Preston et al., 2007; Ozasa et al., 2012). In the LSS, the major exceptional sites in this respect are leukaemia and non-melanoma skin cancer (Little and Charles, 1997; Ron et al., 1998; Preston et al., 2007). When all solid cancers are analysed together, there is no evidence of significant departure from a linear dose–response in the latest LSS cancer incidence data, although there are suggestions of modest upward curvature in the latest LSS mortality data (Preston et al., 2007; Ozasa et al., 2012). The evidence for breast cancer, where there is reasonable power to study the risks at low doses, suggests that the data are most consistent with linearity (Preston et al., 2002).

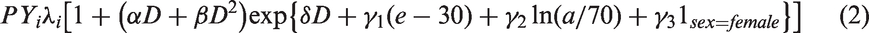

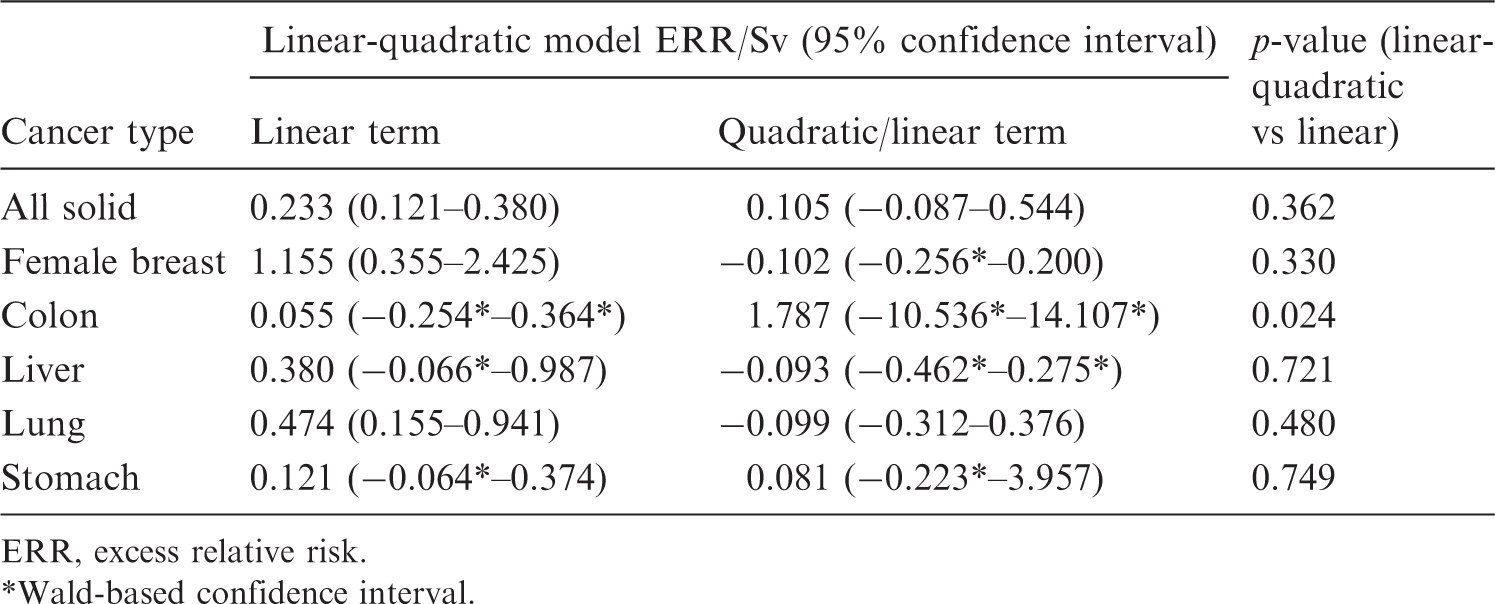

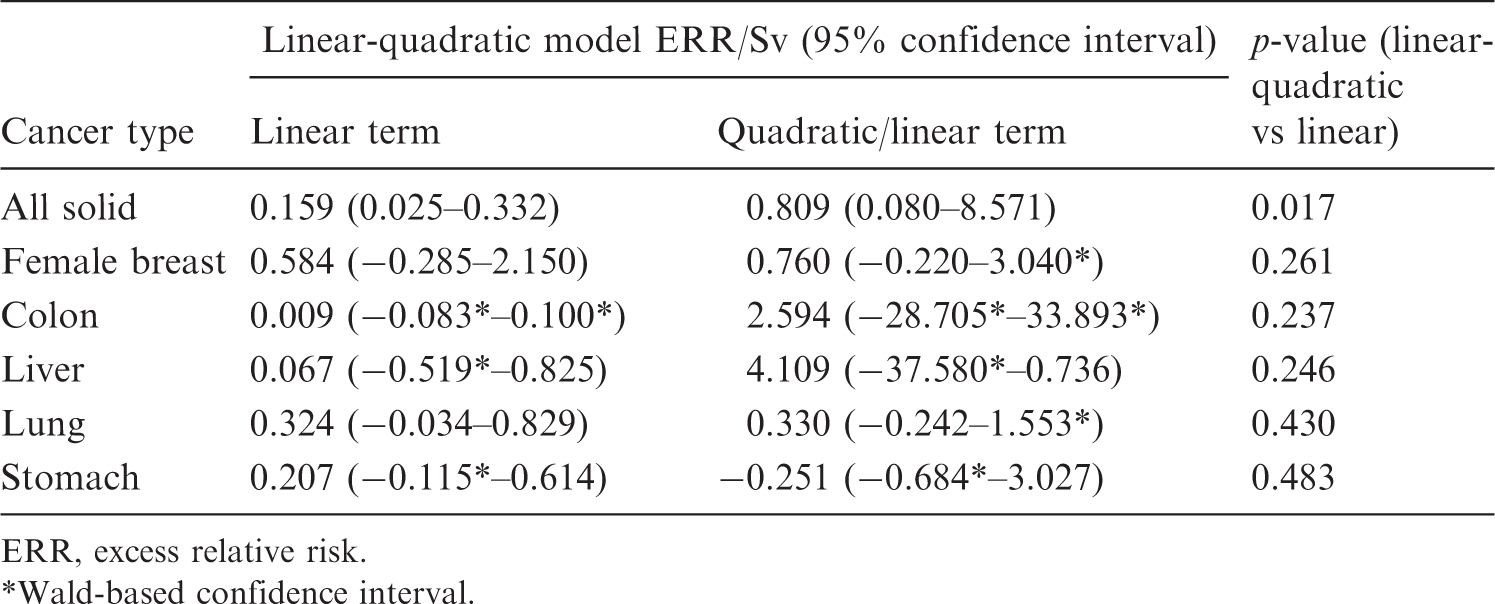

Preliminary analysis of the latest version of the solid cancer mortality dataset for the LSS cohort has been conducted (Ozasa et al., 2012). The organ dose used for all solid cancers and for the remainder category (all solid cancers excluding breast, colon, lung, and stomach) was that to the colon, and the appropriate organ dose was used otherwise. In all cases, a relative biological effectiveness of 10 was assumed for neutrons, as used by Ozasa et al. (2012). All ‘nominal’ organ doses were calculated using the latest dosimetry system, the so-called ‘DS02 dosimetry’ (Young and Kerr, 2005). Individual data were not available, so all analyses used the publicly available stratified data. The stratification employed is very similar to that used by Ozasa et al. (2012), and is defined by time since exposure, age at exposure, attained age, city, sex, ground distance category, and (measurement-error adjusted) dose. Poisson disease models were used. The models that are used in this paper are functions of the mean organ dose,

Fit of linear-quadratic model to Japanese Life Span Study solid cancer mortality data of Ozasa et al. (2012), full dose range.

ERR, excess relative risk. Wald-based confidence interval.

Fit of linear-quadratic model to Japanese Life Span Study solid cancer mortality data of Ozasa et al. (2012), respective organ dose range < 2 Sv.

ERR, excess relative risk. Wald-based confidence interval.
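The kind of model fit referred to in this section can be sketched as a Poisson model on stratified data in which the expected number of deaths in a stratum is the baseline expectation multiplied by 1 + ERR, with ERR taking an LQ form in dose. The example below is a deliberately simplified illustration: it uses a single known baseline rate, four hypothetical strata, and a crude grid search, none of which come from the analysis described in this paper.

```python
import math


def expected_deaths(person_years, baseline_rate, dose_sv, alpha, beta):
    """Expected deaths with an LQ excess relative risk: ERR = alpha*D + beta*D**2."""
    err = alpha * dose_sv + beta * dose_sv ** 2
    return person_years * baseline_rate * (1.0 + err)


def poisson_log_likelihood(observed, expected):
    """Sum of Poisson log-probabilities of the observed counts."""
    return sum(o * math.log(m) - m - math.lgamma(o + 1)
               for o, m in zip(observed, expected))


# Hypothetical strata: (person-years, mean organ dose in Sv, observed deaths).
STRATA = [(100_000, 0.0, 500), (50_000, 0.5, 310), (20_000, 1.0, 150), (5_000, 2.0, 55)]
BASELINE_RATE = 0.005  # assumed known baseline deaths per person-year


def log_likelihood(alpha, beta):
    """Log-likelihood of the hypothetical strata for given LQ coefficients."""
    expected = [expected_deaths(py, BASELINE_RATE, d, alpha, beta) for py, d, _ in STRATA]
    observed = [o for _, _, o in STRATA]
    return poisson_log_likelihood(observed, expected)


# Crude grid search over non-negative coefficients in steps of 0.01.
grid = [i / 100 for i in range(101)]
best_alpha, best_beta = max(((a, b) for a in grid for b in grid),
                            key=lambda ab: log_likelihood(*ab))
print(f"grid maximum at alpha = {best_alpha:.2f} per Sv, beta = {best_beta:.2f} per Sv^2")
```

In practice the baseline would be modelled across the strata rather than fixed, and the coefficients and their confidence intervals would be obtained by a proper maximum-likelihood routine.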

5. OUTLOOK AND CONCLUSIONS

Since the early times of radiological protection, the problem of how to extrapolate from high doses and dose rates, where sound data on the health and biological effects of ionising radiation exist, down to low doses and dose rates which are relevant in radiological protection of workers and the public, has been a controversial issue. The current situation, where a number of international bodies such as ICRP, UNSCEAR, NAS, WHO, and SSK have come to somewhat different conclusions with regard to the numerical value of DDREF and its application, highlights the need to revisit the issue.

Deeper understanding of the radiobiological mechanisms of radiation action has revealed a number of processes with non-linear dose–response curves at low doses, as well as bystander effects, where cells are affected although not hit by any ionising particle; these findings complicate the situation. Currently, it is difficult to judge how these processes contribute to carcinogenesis in humans, which takes place at a much higher level of organisation than that of cells and tissues, involves many unknown parameters, and continues for many years or even decades after the initial radiation exposure.

In the past, experimental data on animal models from available databases served as a major input in deriving numerical values for DDREF. Through the current availability of newer and larger databases and tissue banks, more data are now available on historical animal experiments than in the past. Task Group 91 recommends that this new infrastructure should be used, with particular emphasis on investigating low-dose and low-dose-rate effects in animals. Similar evaluation of data available for different animal species (mice, rats, dogs, etc.) might allow for the investigation of interspecies variability in low-dose and low-dose-rate effects, thus helping answer questions related to the extrapolation of results from animal models to humans.

The continuous follow-up of human cohorts exposed to ionising radiation allows for continuous improvement in deduction of risk coefficients for the process of radiation-induced human carcinogenesis, the outcome most closely related to those endpoints relevant for radiological protection of humans. Task Group 91 plans to support a meta-analysis of the most recent results of radioepidemiological cohorts, comparing those exposed to high dose rates (e.g. A-bomb survivors) and low dose rates (e.g. nuclear workers, medically exposed cohorts, Techa River population, Chernobyl clean-up workers, and populations living in high background radiation areas) of ionising radiation.

Some of the available results on radiation-induced biological effects and carcinogenesis in animals may suggest that dose effects and dose-rate effects should be treated separately, meaning that values for LDEF and DREF should be deduced independently before any decision on a combined factor is made. This may imply that, although ICRP does not recommend the application of a DDREF on leukaemia data in its latest recommendations (ICRP, 2007), the proposed new analysis should also be performed for leukaemia even if the corresponding shape of the dose–response curve follows an LQ behaviour. This has already been initiated by Task Group 91, as well as an evaluation of the radiobiological evidence for treating dose and dose-rate effects separately.

Finally, it is noted that while this paper focusses on DDREF, the overall radiological protection concept recommended by ICRP includes a number of further issues that may need to be revisited; for example, calculation of detriment as proposed by ICRP in its latest recommendations (ICRP, 2007) includes numerical approaches to quantify the transfer of risk across populations, quality of life, years of life lost, etc. Additionally, the numerical values recommended (e.g. radiation and tissue weighting factors), and whether or not one should include detriment from radiation-induced non-cancer diseases, need to be addressed. Therefore, these issues should also be revisited in parallel to the current re-analysis of DDREF.