Abstract

Many scientists are making the case that humanity is living in a new geological epoch, the Anthropocene, but there is no agreement yet as to when this epoch began. The start might be defined by a historical event, such as the beginning of the fossil-fueled Industrial Revolution or the first nuclear explosion in 1945. Standard stratigraphic practice, however, requires a more significant, globally widespread, and abrupt signature, and the fallout from nuclear weapons testing appears most suitable. The appearance of plutonium 239 (used in post-1945 above-ground nuclear weapons tests) makes a good marker: This isotope is rare in nature but a significant component of fallout. It has other features to recommend it as a stable marker in layers of sedimentary rock and soil, including a long half-life, low solubility, and high particle reactivity. It may be used in conjunction with other radioactive isotopes, such as americium 241 and carbon 14, to categorize distinct fallout signatures in sediments and ice caps. On a global scale, the first appearance of plutonium 239 in sedimentary sequences corresponds to the early 1950s. While plutonium is easily detectable over the entire Earth using modern measurement techniques, a site to define the Anthropocene (known as a “golden spike”) would ideally be located between 30 and 60 degrees north of the equator, where fallout is maximal, within undisturbed marine or lake environments.

Seventy years ago—at 5:30 a.m. on July 16, 1945—the world’s first nuclear device exploded at the Trinity Test Site in what was then the Alamogordo Bombing and Gunnery Range in New Mexico. After an intense flash of light and heat, and a roaring shock wave that took 40 seconds to reach the closest observers, a fireball rose into the sky, forming a mushroom cloud 7.5 miles high. J. Robert Oppenheimer later wrote that he and other Manhattan Project scientists who had gathered to watch the test “knew the world would not be the same.” The “nuclear age” had begun (Ackland and McGuire, 1986; Eby et al., 2010; Groves, 1962).

Arguably, Trinity was also the beginning of something even bigger: a new geological epoch (Zalasiewicz et al., 2015). Human activities have had such a great impact upon the Earth that many researchers suggest we are no longer living in the Holocene Epoch (a term describing the most recent slice of geological time that literally means “entirely new”), but instead within a brand-new time unit: the Anthropocene, from the Greek words for “human” and “new.”

Since 2009, a small group of us, composed of geoscientists and other experts from across the globe, have assembled to develop a proposal for this new terminology and to make recommendations to the official body—known as the International Commission on Stratigraphy—that determines geological time units. To accomplish this, our panel, the Anthropocene Working Group, has not only been examining the evidence for the Anthropocene’s existence but attempting to determine the duration of this potential new unit (Zalasiewicz et al., 2012). The group will also make recommendations about where the Anthropocene, if it does exist, fits into the hierarchy of geological time: period, epoch, or age (perhaps even within the Holocene Epoch).

Many scientists agree that the Earth has left the Holocene behind and is now in the Anthropocene, but there is less agreement about when the Anthropocene began. Some researchers make good arguments for dating the beginning of this new epoch to the advent of agriculture, or to the increase in fossil fuel consumption that ushered in the Industrial Revolution, or some other major shift that left its mark on the geological record. One recent paper argues for either 1610 (when atmospheric carbon dioxide levels dipped after the arrival of Europeans brought death to about 50 million native people in the Americas) or 1964 (based on peak carbon 14 fallout signatures) as potential kickoff dates (Lewis and Maslin, 2015). But if geoscientists want to establish a starting point for the Anthropocene, Trinity and the nuclear bombings and tests that followed it from 1945 to the early 1960s created an extremely distinctive radiogenic signature—a unique pattern of radioactive isotopes captured in the layers of the planet’s marine and lake sediments, rock, and glacial ice that can serve as a clear, easily detected bookmark for the start of a new chapter in our planet’s history.

Does it really matter what epoch we are living in? It’s obviously important to geologists and other Earth scientists, who use the geological timescale to measure, describe, and compare events and changes that happened in our planet’s past. For many people outside these fields, though, the potential designation of a new epoch has political overtones. As an editorial in a leading scientific journal observed a few years ago, the Anthropocene “reflects a grim reality on the ground, and it provides a powerful framework for considering global change and how to manage it” (Nature, 2011).

Although the Anthropocene has, in the public sphere, become closely associated with climate change and particularly the burning of fossil fuels, it is much bigger than that. We and other scientists who are considering whether a new epoch has begun—and if so, how best to mark its onset—are examining a host of environmental changes wrought by humans, from the domestication of plants and animals to the nuclear arms race. Public discussion of these changes can only lead to a growing awareness that humans have left an enormous footprint on the Earth—and not just a carbon one—and may help increase public understanding of how a warming climate relates to other momentous global changes.

Origins of the Anthropocene

In the geological timescale used by Earth scientists, the Holocene Epoch began about 11,700 years ago, after the planet’s last glacial phase came to an end. When the Anthropocene concept (Crutzen, 2002; Crutzen and Stoermer, 2000) was initially proposed, the Industrial Revolution was suggested as its starting point. The reasoning was that industrialization’s accelerated population expansion, technological changes, and economic growth caused increased urbanization, mineral exploitation, and crop cultivation; these factors in turn elevated atmospheric carbon dioxide and methane concentrations enormously (Waters et al., 2014; Williams et al., 2011).

Alternatively, the proponents of an “early Anthropocene” or “Palaeoanthropocene” interval that preceded the Industrial Revolution (Foley et al., 2013) emphasize that this interval had a diffuse beginning, with signatures associated with the onset of deforestation, agriculture, and animal domestication; some scientists propose that these changes broadly coincide with the beginning of the existing Holocene Epoch (Smith and Zeder, 2013).

But there is growing evidence for another, later starting point for the Anthropocene: the range of globally extensive and abrupt signatures during the mid-20th century (Waters et al., 2014) that coincide with the “Great Acceleration” of population growth, economic development, industrialization, mineral and hydrocarbon exploitation, the manufacturing of novel materials such as plastics, the emergence of megacities, and increased species extinctions and invasions (Steffen et al., 2007, 2015). Some researchers even suggest that the onset of the Anthropocene is marked by a “diachronous” boundary in sediments—one in which a boundary between human-modified and “natural” ground can be found that is of different ages at different locations—and thus is not a geological time unit (Edgeworth et al., 2015).

The standard accepted practice for defining geological time units during the current eon (which began about 541 million years ago) is to identify a single reference point (or “golden spike”), at a specific location, that marks the lower boundary of a succession of rock layers as the beginning of the time unit. This internationally agreed-upon physical reference point is representative of the sum of environmental changes that justify recognition of the time unit—the appearance or extinction of a fossil species, say, or a geochemical signature left by a massive volcanic eruption (Smith, 2014). For example, the boundary between the Cretaceous and Paleogene Periods has as its golden spike the base of an iridium-enriched layer of rock in El Kef, Tunisia—a marker for the debris spewed into the atmosphere when a huge meteorite struck the Earth and for the mass extinctions of dinosaurs and other creatures that followed that event.

The mid-20th century saw substantial changes to living things and their ecological relations—also known as biotic changes (Barnosky, 2014)—but those changes have not yet been well enough documented from the stratigraphic perspective to be the primary marker for the Anthropocene. The 1945 detonation of the Trinity device would make a well-defined, historically important reference point, but a single detonation lacks a clear signature in the global geological record, even though nuclear testing converted sand into a glass-like substance known in the United States as “trinitite” (Eby et al., 2010) and in Kazakhstan as “kharitonchik.” This may well be considered a durable signature in the stratigraphic record, but one that is very localized in extent.

In contrast, the fallout from the numerous thermonuclear weapons tests that began in 1952 deposited large amounts of radionuclides in the environment and left a well-defined radiogenic signature. The stratigraphic level marking the onset of that fallout would provide a more effective global signal for the beginning of the Anthropocene than the Trinity Test would. The difference between the two suggested levels, 1945 and 1952, is just seven years, and represents only fine-tuning of a generally mid-20th-century boundary; ultimately, the choice between them will depend on analysis and debate of the whole ensemble of stratigraphic evidence currently being assembled.

Sources of human-made radiation

Admittedly, the fallout from nuclear testing is not the only source of radiation that would show up in the geological record. Levels of naturally radioactive substances in the environment have increased worldwide, due to the mining of ore and the burning of coal and other fossil fuels, beginning during the Industrial Revolution and then rapidly increasing after 1945. Such increases, however, do not provide a clear marker because the radioactive substances are not uniquely anthropogenic in origin and may be locally abundant for other, natural reasons.

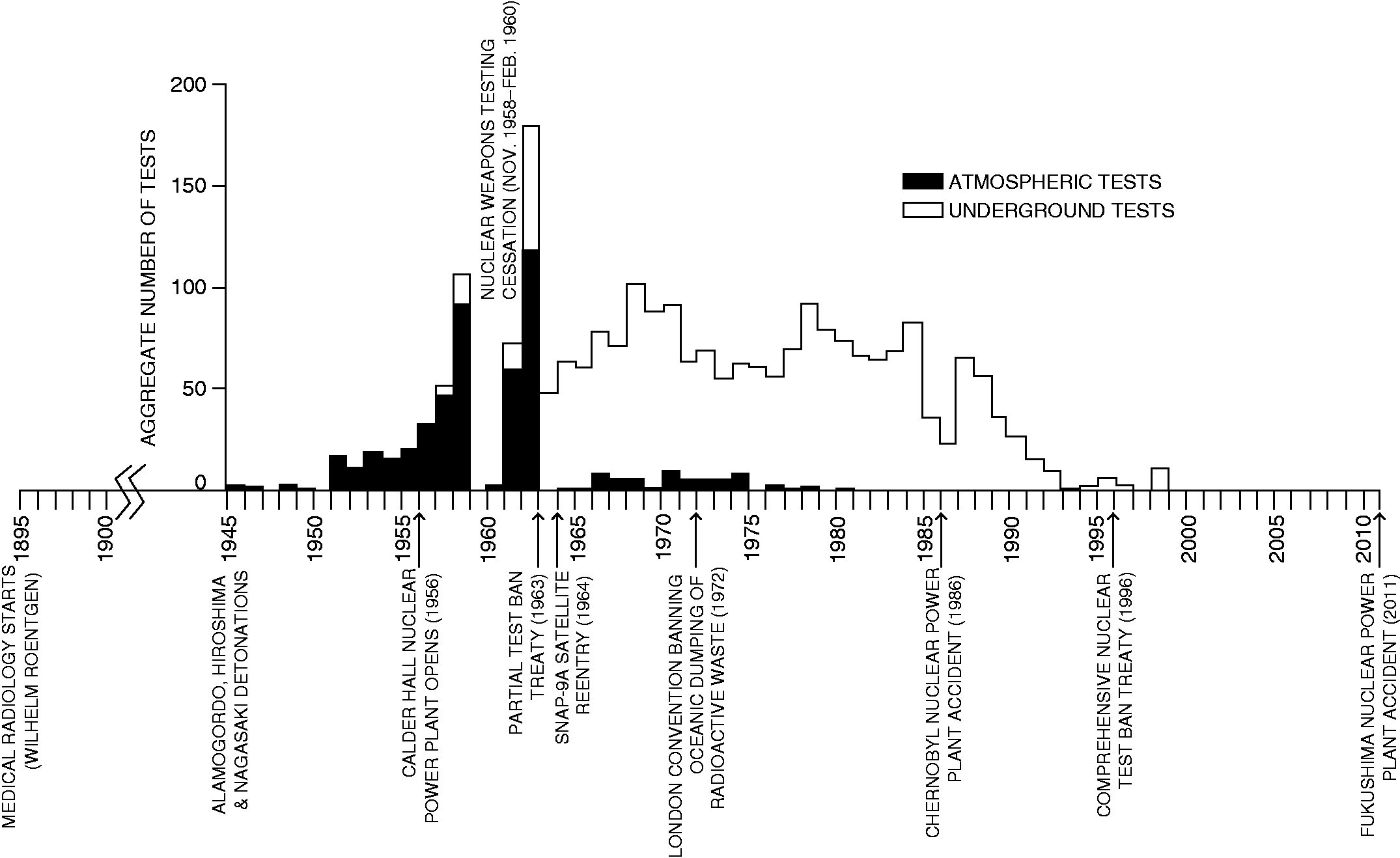

In addition to these sources, the medical use of radiation represents the earliest anthropogenic source of radiation exposure (see Figure 1). Diagnostic medical examinations currently contribute the largest dosage after natural exposure (UNSCEAR, 2000) but they target individuals, not the environment, so their impact in the geologic record would be small. Medical waste incinerators and uncontrolled disposal of equipment can produce local contamination, but they are not useful as widespread stratigraphic signatures.

Figure 1. Timeline of anthropogenic radiogenic signatures: Frequency of atmospheric and underground nuclear weapons testing.

Besides these sources, there are the contributions of nuclear power. The first commercial nuclear power plant, at Calder Hall in northern England, opened in 1956. Nuclear power grew rapidly from 1970 to 1985, but the growth stopped after the 1986 Chernobyl accident, which spread fallout across the northern hemisphere. The 2011 Fukushima disaster produced similar hemispheric fallout, though with less discharge. Radioactive releases from reprocessing plants, which recover uranium and plutonium from spent fuels, are typically greater than those from nuclear power plants (Jeandel et al., 1981). Such controlled releases, mainly uranium series isotopes from sites such as the Sellafield (United Kingdom) and La Hague (France) reprocessing plants, peaked in the mid-1970s (Aarkrog, 2003). Radioactive waste dumping caused localized contamination mainly in the Northeast Atlantic until 1982 and the Kara Sea near Novaya Zemlya and the Sea of Japan until 1993, despite the London Convention of 1972 banning this practice (Livingston and Povinec, 2000). The accidental discharges at Chernobyl and Fukushima and controlled releases at Sellafield from 1952 to the mid-1980s contributed only small amounts (UNSCEAR, 2000).

The disintegration of the navigational satellite SNAP-9A in 1964 off Mozambique produced significant additional atmospheric input of plutonium 238 (shown in Figure 1) and provided important dating information in the southern hemisphere (for example, see Hancock et al., 2014; Koide et al., 1979). But the future usefulness of plutonium 238 as a signature will be limited by its half-life of 88 years.

All told, such discharges compose only a small, regional component of the total anthropogenic radionuclide budget, so they are poor candidates for defining the beginning of the Anthropocene. In contrast, atmospheric nuclear weapons testing, or wartime usage in the case of Hiroshima and Nagasaki, resulted in regional to global distribution of fallout. Most anthropogenic radionuclides in the environment today, locked in soils and sediments, originated from atmospheric testing that took place over a 35-year period from 1945 to 1980. This fallout started abruptly and shows distinct, globally recognizable phases, such as a rapid decline after the Partial Nuclear Test Ban Treaty of 1963. (Underground tests, on the other hand, had much lower yields and releases to the atmosphere.) The fallout signature is locally augmented by accidental discharges from power stations, reprocessing plants, and satellite burn-up on atmospheric reentry.

The signature of nuclear weapons testing

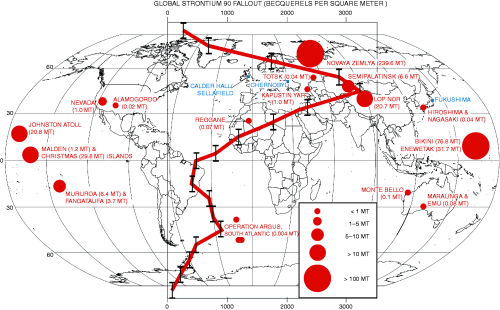

The case for using the fallout from nuclear weapons testing as a marker for the onset of the Anthropocene is strong. There were 2,053 nuclear weapons tests from 1945 to 1998 (Figure 1), mainly in central Asia, the Pacific Ocean, and the western United States (see Figure 2); 543 were atmospheric tests. Test frequency peaked in 1951–1958 and especially 1961–1962, interrupted by a moratorium (UNSCEAR, 2000). Underground tests occurred at the rate of 50 or more per year from 1962 to 1990. From 1945 to 1951, the tests involved fission (“atomic”) weapons producing fallout along test site latitudes in the lowest layer of the atmosphere (Aarkrog, 2003). Larger fusion (“thermonuclear” or “hydrogen”) weapons tests, starting in 1952, produced higher-altitude fallout dispersed over the entire Earth surface, with a marked peak in fallout yields in 1961–1962. Radionuclide fallout rapidly declined during the late 1960s, when tests were mainly underground, and effectively ceased in 1980.

Figure 2. Distribution and total fission and fusion yields, in megatons, of atmospheric nuclear weapons tests (red); and location of significant nuclear accidents/discharges (blue); with superimposed latitudinal variation of global strontium 90 fallout, in becquerels per square meter. Note that two sites, Novaya Zemlya in Russia and the French Pacific atolls, contributed significantly to the total fallout.

The geographical distribution of radionuclides associated with fallout has been measured for the more common components, such as strontium 90 (Figure 2) and cesium 137; comparable measurements for plutonium 239 and 240 are not available. Strontium 90 fallout is concentrated in the mid-latitudes (30–60 degrees) of each hemisphere (Figure 2), and is smallest at the poles and equator (Aarkrog, 2003; Livingston and Povinec, 2000). Approximately 76 percent of all radionuclide fallout was in the northern hemisphere, where most testing occurred (Livingston and Povinec, 2000).

The best radioactive markers for the Anthropocene

To define the Anthropocene boundary, radioactive isotopes should ideally be absent or rare in nature; have long half-lives that provide a long-lasting signature; have low solubilities and high reactivities so that they are less mobile and form a fixed marker in geological deposits; and be present in sufficient quantity to be easily detectable. The short half-lives of cesium 137, strontium 90, and tritium (a short-lived radioactive isotope of hydrogen associated with fusion bombs) limit their potential to serve as geologically “permanent” markers. Americium 241 has a deeper-water distribution than plutonium 239 and 240, being more readily transported to the bottom of deep oceans on sinking organic particles (Cochran et al., 1987; Lee et al., 2005), and so may be a more suitable signature in the comparatively undisturbed deep-water environments. However, the lower abundance of americium 241 and its 432-year half-life would make it useful for only one or two millennia. Radiocarbon (carbon 14) shows a “bomb peak” at 1963–1964 in most carbon reservoirs, including peat deposits, soil, wood, and coral (Hua et al., 2013; Reimer et al., 2004), and this has been proposed by Lewis and Maslin (2015) as a potential marker for the base of the Anthropocene. This spike will be detectable for about 50,000 years into the future, so it represents a long-lasting and important signature on land. However, the high solubility and low reactivity of carbon 14 in marine sediments (Jeandel et al., 1981; Livingston and Povinec, 2000) limit its suitability as a marker in the world’s oceans. Lewis and Maslin (2015) also depart from normal stratigraphic practice in placing their suggested beginning Anthropocene level at the peak of the signature, rather than at its onset.

In contrast, the use of plutonium as a marker offers several advantages. Plutonium isotopes have low solubility and high particle reactivity, rapidly associating with clay or organic particles. The long half-life of plutonium 239 (24,110 years) makes it the most persistent artificial radionuclide, detectable by modern mass spectrometric techniques for about 100,000 years (Hancock et al., 2014). Plutonium 240, also a product of nuclear weapons testing, is less abundant and has a shorter half-life (6,563 years), and hence will decay below easily detectable levels sooner. Few direct plutonium fallout measurements were made during the 1950s and 1960s, but the historical pattern of plutonium isotope distribution is believed to be similar to that of the widely studied isotope cesium 137, especially after 1960 (Hancock et al., 2014).
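As a rough illustration of why half-life matters for a long-lived marker, the fraction of an isotope remaining after a time t follows the standard decay law, 0.5 raised to the power (t / half-life). The sketch below applies that law to the half-lives quoted in this article. The function name and the time horizons are assumptions chosen for illustration; they do not come from the cited studies, and the sketch says nothing about detection limits.

```python
# Half-lives in years, as quoted in this article.
HALF_LIVES_YEARS = {
    "plutonium 239": 24_110,
    "plutonium 240": 6_563,
    "americium 241": 432,
    "plutonium 238": 88,
}


def fraction_remaining(half_life_years: float, elapsed_years: float) -> float:
    """Return the fraction of the original atoms left after elapsed_years.

    Uses the standard exponential decay law N/N0 = 0.5 ** (t / half-life).
    """
    return 0.5 ** (elapsed_years / half_life_years)


# Time horizons chosen purely for illustration (an assumption, not from the source).
HORIZONS_YEARS = (1_000, 10_000, 100_000)

for isotope, half_life in HALF_LIVES_YEARS.items():
    remaining = ", ".join(
        f"{years:>7,} yr: {fraction_remaining(half_life, years):.2e}"
        for years in HORIZONS_YEARS
    )
    print(f"{isotope:<14} {remaining}")
```

Under this simple law, between 5 and 6 percent of the original plutonium 239 is still present after 100,000 years, whereas plutonium 238 has fallen to a vanishingly small fraction after 10,000 years. Whether a given fraction is actually measurable depends on the size of the initial deposit and on instrument sensitivity, neither of which is modeled here.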

What’s more, plutonium in the air is now dominated by atmospheric discharge from nuclear power plants and the re-suspension of plutonium-bearing soil particles, whereas during 1945–1960 the major source of plutonium was nuclear weapons testing. Since 1960, plutonium concentrations in the atmosphere have been decreasing almost exponentially following international test-ban treaties (Choppin and Morgenstern, 2001).

Another benefit to using plutonium is that this element mostly binds with decayed plant material and iron oxides on the surface of soil particles (Chawla et al., 2010), thus locking it in place; plants mobilize only a little plutonium through uptake by roots. But a drawback may be that plutonium can migrate in peat profiles and can probably move downward in organic-rich soils and sediments (Quinto et al., 2013), adding anthropogenic plutonium to layers that were deposited before nuclear weapons testing. This may limit the application of plutonium as an Anthropocene signature in acidic, organic-rich environments.

Within the oceans, plutonium sticks to the surface of suspended matter that falls through the water column, and consequently its distribution in the ocean is affected by currents and by movement of sediment (Zheng and Yamada, 2006). Plutonium in particular accumulates in coastal sediments, especially in low-oxygen, organic-rich environments (Livingston and Povinec, 2000) where few bottom-dwelling animals can survive, and so their movements do not disrupt the radioactive sediment layers. Plutonium is taken up by organic material in shallow sunlit levels of the sea and then released back into solution when reaching a depth of several kilometers (Livingston and Povinec, 2000). Coral skeletons thus become archives of plutonium contamination history, with plutonium concentrations in their growth bands reflecting the plutonium levels in the oceans (Lindahl et al., 2012).

Most “golden spikes” lie within marine sedimentary successions because they tend to be more continuous than terrestrial strata and contain traces of plant and animal life that can be easily matched with sediments at other sites. These criteria hold true for the potential use of a radiogenic signature. However, dynamic transport of radionuclides in the water column—through erosion, suspension, and re-sedimentation and via the biological food chain (Livingston and Povinec, 2000)—can modify the radiogenic signature to the point where it no longer represents a time series of discrete fallout events that can be precisely correlated from one location to the next.

Another consideration is the delay between detonation and eventual fallout, with radioactive debris residence times in the troposphere of about 70 days for small-yield detonations (Norris and Arkin, 1998) and 15 to 18 months in the stratosphere for large thermonuclear tests (Zander and Araskog, 1973). Such delays explain why radionuclides such as cesium 137 reach peak abundance several years after the maximum introduction of fallout into the atmosphere. This delay is exacerbated in ocean environments as fallout is transferred through the water column to bottom sediment. From 1973 to 1997, the maximum plutonium signature in the Northwest Pacific descended from 500 meters to 800 meters below the ocean’s surface through gradual settling of the early-1960s fallout peak at an average rate of 12.5 meters per year (Livingston et al., 2001), and in the mid-latitudes more than 70 percent of plutonium 239 and 240 still remains in the water column (Lee et al., 2005). This suggests at least decadal residence times in oceanic waters, and a resultant smearing of potential plutonium signatures in marine sediments, such that annual resolution of sediment layers in the oceans is unlikely.
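The average settling rate quoted above follows from simple arithmetic on the depth and date figures. A minimal sketch, in which the function name is a hypothetical helper introduced only for this illustration:

```python
def average_settling_rate(
    depth_start_m: float,
    depth_end_m: float,
    year_start: int,
    year_end: int,
) -> float:
    """Average descent rate, in meters per year, between two observations."""
    return (depth_end_m - depth_start_m) / (year_end - year_start)


# Figures quoted in the text for the Northwest Pacific plutonium maximum.
rate = average_settling_rate(500, 800, 1973, 1997)
print(f"{rate:.1f} meters per year")
```

This is only an average over the 24-year interval; it does not imply that the signature descends at a constant rate.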

There are alternative environments in which a “golden spike” section could be located: for example, undisturbed lake deposits where fallout material has accumulated, or where sediment accumulation is too rapid for the layering to be disrupted by burrowing animals (Hancock et al., 2014). Ice is another option, and there is some precedent for it: the base of the Holocene Epoch is defined in a Greenland ice core (Walker et al., 2009), and this type of deposit might also be used to define the base of the Anthropocene. Such cores can provide annual records through layer counting, tied to known Saharan dust events, volcanic eruptions, and the 1963 tritium horizon, when abundances of this radionuclide peaked. Plutonium appears to be immobile in ice (Gabrieli et al., 2011; Koide et al., 1979), and high-resolution records of plutonium fallout have been measured in polar ice cores (Koide et al., 1977, 1979). With greater fallout of radionuclides in the mid-latitudes, alpine glaciers may be more suitable. Ice cores from Swiss and Italian alpine glaciers display the earliest rise of plutonium 239 fallout from 1954 to 1955, with subsequent peaks in 1958 and 1963 and a sharp decrease following the Partial Nuclear Test Ban Treaty in 1963 (Gabrieli et al., 2011). The world’s ice caps, however, are undergoing increased wastage through global warming, and so their potential to provide a long-term record may be limited.

A time of global changes

If the fallout from nuclear weapons is to mark the beginning of the Anthropocene Epoch, the choice of level matters. The 1945 Alamogordo nuclear weapons test marks the start of the nuclear age but lacks a clear radiogenic signature in the global geological record. By comparison, the most pronounced rise in plutonium dispersal commences in 1952 and can provide a practical radiogenic signature for the beginning of the Anthropocene.

Although the Anthropocene may be a time of global warming, climate change itself is a poor geological indicator for a new epoch, at least when viewed over a recent timescale of decades. There is a significant time lag between the recent striking increase in atmospheric carbon dioxide levels and significant climate and sea level changes, with the latter effects not yet clearly expressed in geological deposits.

The advent of the nuclear age in itself does not merit the identification of a new geological epoch. The signature of weapons testing coincides with a range of human-driven changes that have produced stratigraphic signals that indicate a dramatic shift in the Earth system around the mid-20th century, which in total may be considered the distinctive feature of the Anthropocene. The fact that the plutonium 239 signature is coincident with other changes makes it a useful tool for defining the Anthropocene’s base.

A summary of the evidence and recommendations for defining an Anthropocene Epoch will be presented at the next International Geological Congress in 2016. The Anthropocene Working Group is still collecting evidence; nuclear sciences are likely to be critical to the definition of the Anthropocene, and contributions to this discussion would be welcomed.

Acknowledgements

The authors thank Irka Hajdas for her comments on radiocarbon signatures.

Funding

Colin Waters publishes with the permission of the Executive Director, British Geological Survey (BGS), Natural Environment Research Council, funded with the support of the BGS’s Engineering Geology science program. This research received no other specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Author Biography

Ten of this article’s 11 authors are members of the 38-person