Abstract

This study focuses on an important yet often neglected topic in public personnel competency studies: competencies required for digital government. It addresses the question: Which competencies do civil servants need for data-driven decision-making (DDDM) in local governments? Empirical data are obtained through a combination of 12 expert interviews and 22 Behavioral Event Interviews. Our analysis shows that DDDM as observed in this study is a hybrid process that contains elements of both “traditional” and “data-driven” decision-making. We identified eight competencies that are required in this process: data literacy, critical thinking, teamwork, domain expertise, data analytical skills, engaging stakeholders, innovativeness, and political astuteness. These competencies are also hybrid: a combination of more “traditional” (e.g., political astuteness) and more “innovative” (e.g., data literacy) competencies. We conclude that local governments need to invest resources in developing or selecting these competencies among their employees, to exploit the possibilities data offers in a responsible way.

Keywords

Introduction

The importance of civil servants’ competencies—broadly defined as work-related knowledge, skills, traits, and motives—to cope with the challenges of the 21st century is increasingly emphasized in the public Human Resource Management (HRM) literature (Bonder et al., 2011; Getha-Taylor et al., 2016; Kruyen & Van Genugten, 2020). These studies focus, for example, on collaborative competencies, typical for the zeitgeist of New Public Governance (NPG; Getha-Taylor, 2008, 2018). However, the development of digitalization and datafication of society and governmental organizations and the competencies this requires of civil servants are underexposed (Kruyen & Van Genugten, 2020).

The attention to civil servants’ competencies dates to the 1950s (Hood & Lodge, 2004), and gained momentum around the turn of the century. Since then, Competency-Based Management (CBM) has been increasingly embraced as a new approach to managing almost all key human resource processes (Bonder et al., 2011; Hondeghem & Vandermeulen, 2000; Kruyen & Van Genugten, 2020). Indeed, studies have focused on training and developing competencies (e.g., Naquin & Holton, 2003; Sims et al., 1989; Van Buuren & Edelenbos, 2013), on recruitment and selection procedures based on competency profiles (Farnham & Stevens, 2000; Sundell, 2014), but also on complete job analysis processes based on competency profiles that inform strategic management (Bonder et al., 2011; Hondeghem & Vandermeulen, 2000; Pickett, 1998). All these studies show that civil servants are usually recruited and trained based on general, universal or core (clusters of) competencies required for all public but also private employees. However, civil servants need specific, context-dependent competencies related to their job (Bonder et al., 2011; Getha-Taylor et al., 2016; Kruyen & Van Genugten, 2020). Within this CBM and competency profiles literature, digital or data competencies are barely mentioned, and civil servants themselves also seem unaware of the importance of digital competencies (Kruyen & Van Genugten, 2020). The lack of attention to digital or data competencies is rather surprising since over the past decades digital technologies have come to play a major role in the public sector (Lips, 2020; West, 2005).

Following the private sector, governments have started to use increasing amounts of data sources to support decision-making (Choi et al., 2018). This data-driven decision-making (DDDM) is decision-making “based on the analysis of data rather than purely on intuition” (Provost & Fawcett, 2013, p. 53). It represents a shift in governmental decision-making that strongly favors a way of working in which data facility and analysis are the most significant elements. Organizational theorists have argued that advanced technological systems almost always come with high hopes for their potential to change organizations for the better (Contractor & Seibold, 1993; DeSanctis & Poole, 1994). It is therefore not surprising that DDDM developers, users, and scholars also hypothesize different, faster, more supported, more precise and cheaper decisions than “traditional” decisions based solely on experience and intuition (Berner et al., 2014; McAfee & Brynjolfsson, 2017; OECD, 2017; Van der Voort et al., 2019).

Decades of extant research in organization theory demonstrate that technological advancements restructure and reshape work practices and show the importance of the role of human agency in this process (Barley, 1986; Boudreau & Robey, 2005; Contractor & Seibold, 1993; DeSanctis & Poole, 1994; Pentland & Feldman, 2007). The introduction of new technologies in organizations thus presumably alters the set of required competencies somehow (Orlikowski, 2000). Various studies highlight the importance of developing the “right” competencies in governments to exploit the new technology of DDDM (e.g., Brown et al., 2011; Desouza & Jacob, 2017; Kim et al., 2014; Malomo & Sena, 2017). However, none of them explain what the “right” competencies entail. They do not define them more precisely than for example “skills that allow for the analysis and modeling of complex phenomena” (Malomo & Sena, 2017, p. 17). This highlights the need to develop a thorough academic understanding of the role of competencies in public DDDM.

This study fills the gap both in the literature on CBM and in the literature on public DDDM by addressing the following research questions:

To answer these questions, we conducted 12 expert interviews, 22 Behavioral Event Interviews (BEIs) with municipal civil servants, and a focus group, following the competency study method of Spencer and Spencer (1993). This method is used increasingly in public management research and is considered the most scientifically rigorous approach to identifying competencies (Getha-Taylor, 2008).

With this article, we extend the competency profiles of civil servants by introducing a specific (cluster of) competencies that are required in DDDM. As a result, we inform the public HRM literature and more precisely the CBM literature by introducing insights from digital government literature. Moreover, this study can stimulate practitioners to make better-informed decisions regarding the competency-based profiles of civil servants. These can inform the recruitment and selection process and the training and development process, so public organizations can better cope with the challenges of digital government (Kruyen & Van Genugten, 2020).

Competencies for Responsible DDDM

Data-Driven Decision-Making

The rapid usage and expansion of the boundaries of data collection and analysis require that civil servants take different steps in decision-making processes. Before we explain what DDDM means for the competencies of civil servants, we specify what DDDM entails and how it differs from “traditional” decision-making.

First, in DDDM, the frequency at which information is obtained increases. Data usage in “traditional” decision-making relies on specific periods of data collection, such as census data (Höchtl et al., 2016). These data mainly allow the analysis of what happened in the past. In DDDM, on the other hand, vast amounts of different, real-time data sources can be linked (van der Voort & Crompvoets, 2016). This allows not only for the analysis of what happened in the past but also for real-time updates or even predictive models. Second, how information is derived from data expands. Datasets have always been analyzed with the aim of verifying specific pre-set hypotheses or answering specific questions (queries). DDDM expands this practice as it can take the data as a starting point from which ideas or questions can be formed (Vetzo et al., 2018). To do so, large numbers of connections are tested to distill what the data itself “says” (Janssen & Van den Hoven, 2015, p. 363). Third, the importance of data in decision-making expands. In DDDM, data analysis is the primary source of decision-making. Civil servants’ experience, intuition, and expertise play a much smaller role than in traditional decision-making (Provost & Fawcett, 2013).

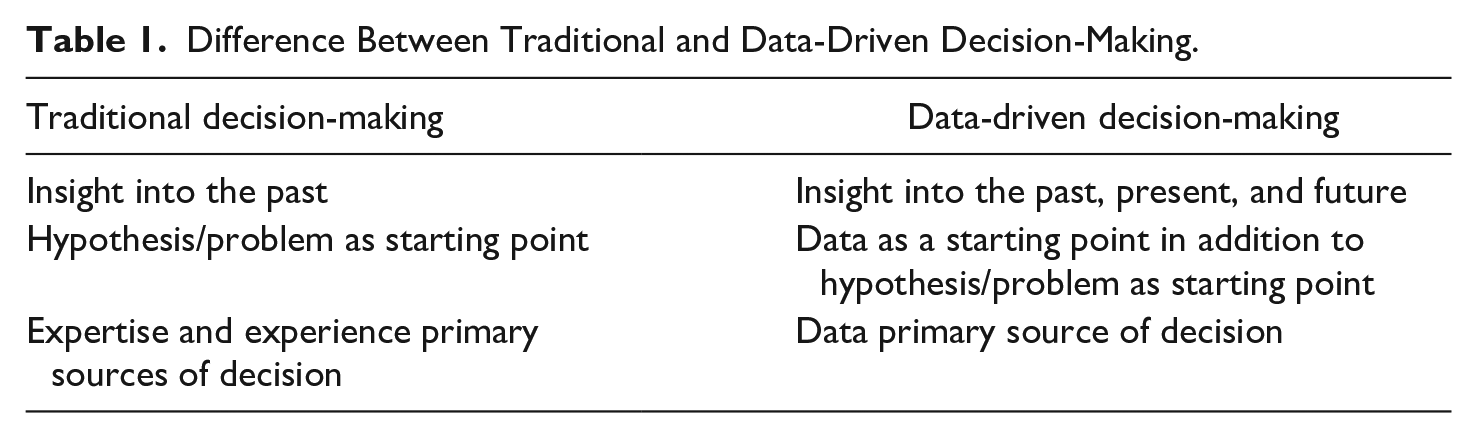

Table 1 provides an overview of the three main differences between traditional decision-making and DDDM. As the table shows, DDDM is a practice that represents the rapid expansion of the boundaries of data collection and analysis that have always been included in the forms of routinized, rational decision-making found in public organizations. DDDM, therefore, builds on traditional decision-making, but it uses data much more frequently and in additional ways. This development has been accelerated in the last 20 years by the emergence of a series of new technologies such as real-time data collection and predictive modeling based on big data (Mayer-Schönberger & Cukier, 2013).

Table 1. Difference Between Traditional and Data-Driven Decision-Making.

Competencies in the Public Sector

Competencies in HRM have come to be known as a generic umbrella term including several characteristics civil servants have or need to do their job. Kruyen and Van Genugten (2020) define competencies broadly as work-related skills, abilities, and attitudes that civil servants need to perform their job effectively. Getha-Taylor (2008) defines competencies somewhat more broadly by adding the deeper level of traits of civil servants. Moreover, she also takes a less humble approach toward competencies by framing them as traits associated with excellence. According to Getha-Taylor (2008, p. 105), the empirical study of competencies originated with McClelland’s work (1973), which suggested that competencies are a means to predict success in the workplace. Building on the work of McClelland, Boyatzis (1982, p. 21) first defined the term competency as “an underlying characteristic of an individual which is causally related to effective or superior performance in a job.” However, in line with, for example, Kruyen and Van Genugten (2020), we follow the approach that looks for the minimum abilities required to tackle specified tasks (Hood & Lodge, 2004). In effect, we combine the definitions of Getha-Taylor (2008) and Kruyen and Van Genugten (2020) by defining competencies as the knowledge, skills, traits, and motive characteristics that employees minimally require to tackle specified tasks.

This definition is also in line with competency profiles that public organizations use to inform recruitment and selection, and training and development programs (Bonder et al., 2011). According to Bonder et al. (2011, p. 2), the structure of a competency profile in public organizations is “. . . designed to reflect ‘core competencies’ required of all employees (often personal traits), ‘group competencies’ required for certain job roles (primarily abilities and skills), and ‘task competencies’ related to specific jobs (often particular domain).” This attention to competency profiles grew in the public sector because it puts the employee instead of the job at the center, which was necessary to break through the bureaucratic culture where employees were seen as just a cog in the machine bureaucracy (Hondeghem & Vandermeulen, 2000). This employee-centered focus fits with the trend in HRM that, since the 1980s and 1990s, organizations have realized that employees are one of the most critical stakeholders to reach high organizational performance (Bonder et al., 2011; Guest, 2017).

However, focusing on the employee instead of the job does not mean that competency profiles can be analyzed separately from the work environment (Boyatzis, 1982). Indeed, in line with Bonder et al.’s (2011) idea of task competencies necessary for particular jobs and Boyatzis’s (1982) idea that competencies should fit the needs of the job demands in the work environment, the much-applied job demands and resources (JD-R) model in the public HRM literature shows that all working environments consist of certain job demands and certain job resources. These develop in interaction with personal resources (i.e., competencies) (Schaufeli & Taris, 2014). In general, job demands are the job tasks of employees that cost energy to deal with and lead to lower performance, whereas job resources give energy and lead to higher performance. Personal resources are subdivided into trait personal resources, which are stable psychological employee characteristics, and state personal resources, which are somewhat more malleable psychological characteristics (cf. the definition of competencies) (Schaufeli, 2017). According to JD-R scholars, these personal resources help civil servants to cope with job demands (e.g., Borst et al., 2019). For example, a civil servant who needs to develop an anti-fraud algorithm might use their computational skills and fraud knowledge to deal with this demand. Consequently, personal resources (i.e., competencies) should fit with the job demands at hand to achieve performance outcomes, which depend on the particular job and sectoral context (Borst, 2018; Borst et al., 2019; Boyatzis, 1982; Kruyen & Van Genugten, 2020).

In line with the assumptions from the JD-R model, recent studies show that competency profiles indeed need to fit the (changing) work environment of civil servants. Kruyen and Van Genugten (2020) showed that civil servants consider 248 different competencies important to perform their job effectively. Interestingly, of these competencies, many were general (clusters of) core competencies instead of context/sector-specific competencies, such as integrity, creativity, getting things done, communication and persuasion, leadership, and self-development competencies. However, the authors argue that some of these competencies are specifically related to the philosophy of traditional public administration (PA), and/or New Public Management (NPM) and/or NPG. Moreover, Getha-Taylor et al. (2016) showed that a specific cluster of competencies associated with NPG (i.e., collaborative competencies) is situational and differs between levels of the public sector. Therefore, they argue that “competency models must keep pace with environmental shifts and work-related changes” (Getha-Taylor et al., 2016, p. 308). As digitalization and datafication are such substantive environmental developments that translate to various big work-related changes such as DDDM, it is very important to gain insight into the specific cluster of competencies that civil servants need to deal with their digital job demands and to effectively fulfill their job (Kruyen & Van Genugten, 2020).

Competencies for DDDM

Decades of extant research in organization theory demonstrate that technological advancements restructure and reshape work practices (Barley, 1986; Boudreau & Robey, 2005; Contractor & Seibold, 1993; DeSanctis & Poole, 1994; Orlikowski, 2000; Pentland & Feldman, 2007), presumably altering the set of required competencies in some way. Since DDDM differs from traditional decision-making in the central role that data analysis itself and its contemporary advancements play in decision-making, it can be presumed that it changes the required set of competencies.

Several authors focusing on DDDM in private sector companies have already proposed new competency frameworks for companies that increasingly use data in their decision-making processes (Debortoli et al., 2014; Gudanowska, 2017; Hecklau et al., 2016; Prifti et al., 2017). Most of these frameworks stress the importance of the “right” combination of technical competencies such as coding and process understanding, personal competencies such as flexibility and creativity, and social competencies such as teamwork and communication. Identifying technical, personal, and social skills is essential and highlights that more than just technical training is needed. At the same time, these studies do not account for the requirements of the public sector context, such as political dynamics, the need for democratic accountability, and the central role of the rule of law (Boyne, 2002). Civil servants need an understanding of and sensitivity to this environment and the skill to maneuver in it; in other words, they need political sensitivity or astuteness (Edelman, 1988; Tiernan, 2007).

Another strand of literature focuses on 21st-century skills. These are the skills that students at all education levels need to develop to prepare for their work in the datafying society (Voogt & Pareja Roblin, 2010). Based on an extensive literature review, Voogt and Pareja Roblin (2012) conclude that all 21st-century skills frameworks ultimately seem to converge on a common set of skills: digital/data literacy, creativity, problem-solving, collaborating, communication, citizenship, critical thinking, and productivity. Again, although they touch upon important new competencies, 21st-century skills studies focus specifically on students and their preparation for the job market, both in the public and private sectors. Therefore, they are not tailored to civil servants and the specific context of public decision-making.

A final relevant strand of literature is research on responsible DDDM. Using data in decision-making does not come without risks. Data itself can be good or bad, messy, incomplete, inconsistent, and insufficient (Kitchin, 2014). That is because technical systems are the solidification of thousands of design decisions (Van den Hoven et al., 2014; Vetzo et al., 2018). If outcomes of data analyses are used negligently or uncritically, this can lead to “unethical” errors in public decision-making. To address this issue, Van den Hoven (2013, p. 105) advocates “responsible innovation” that contributes to better solutions for societal challenges. This is done by actively mapping ethical and societal aspects and incorporating them into the design process of innovation. Furthermore, it involves engaging stakeholders in the development process by regularly asking them for feedback and adjusting innovations based on this. In line with Van den Hoven (2013), we argue that if DDDM is to live up to its expectations of improved decision-making, this should also include responsibility.

These three strands of literature form important inputs for our research but are treated with some caution. Apart from the lack of focus on public sector decision-making, the competency frameworks are derived only from theoretical and conceptual reasoning, and almost none of them have been empirically validated (e.g., Debortoli et al., 2014; Hecklau et al., 2016; Prifti et al., 2017). Therefore, we take these frameworks into account but take a bottom-up approach in identifying required competencies in DDDM in local governments. We explain this methodology in the next section.

Method

Research Design

The exploration of the literature on competencies for DDDM highlights a lack of understanding of which competencies are required in public DDDM. We formulated two research questions to address this gap and guide the empirical research:

These questions were answered using a research design that consisted of expert interviews, BEIs, and a focus group in a case study of a local government. This two-step data collection process allowed us to first get a “helicopter” picture of required competencies in DDDM as depicted by a diverse group of experts. We then tested this picture in practice in a case study, where civil servants explained their daily work with DDDM and which competencies they used in this process.

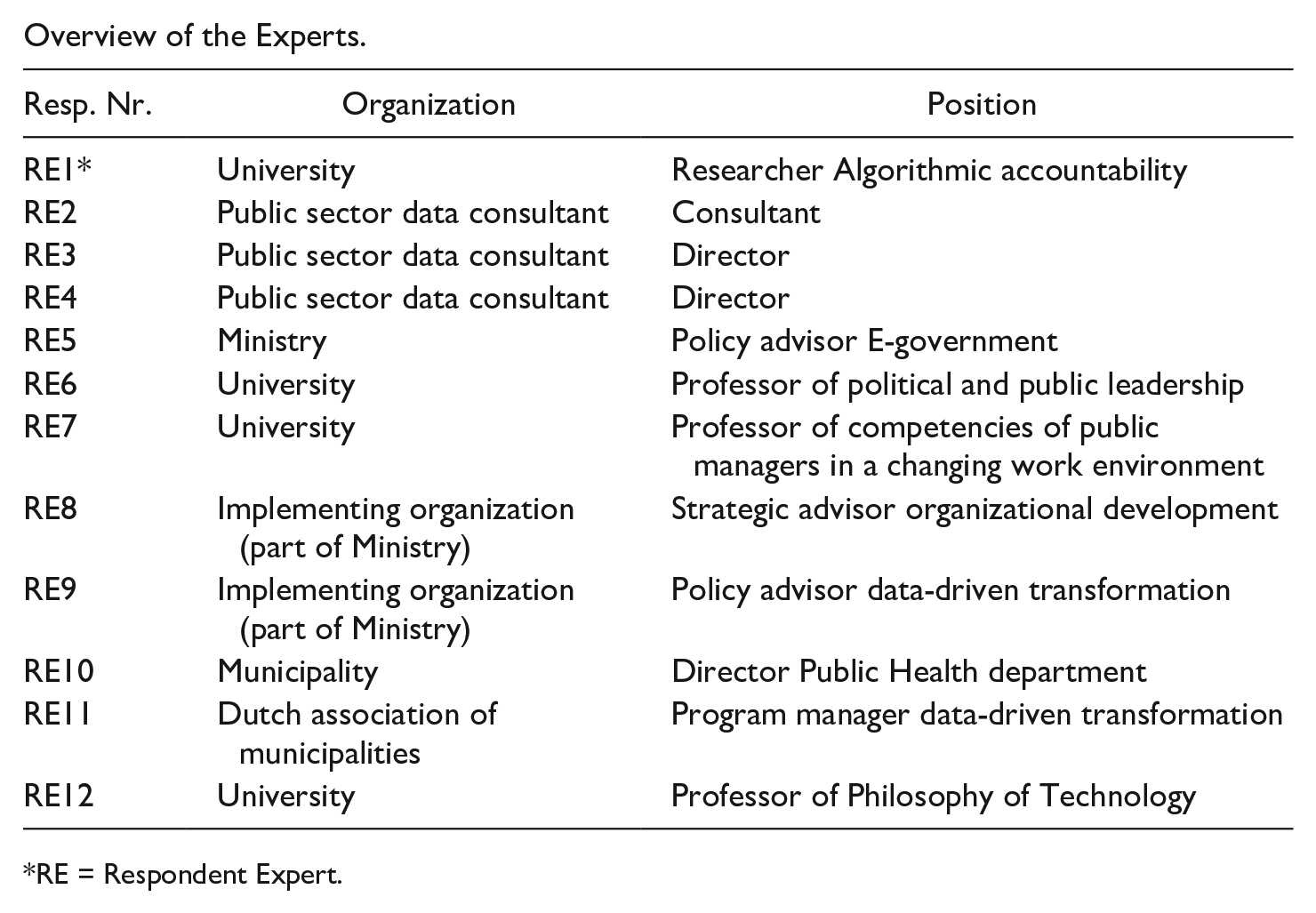

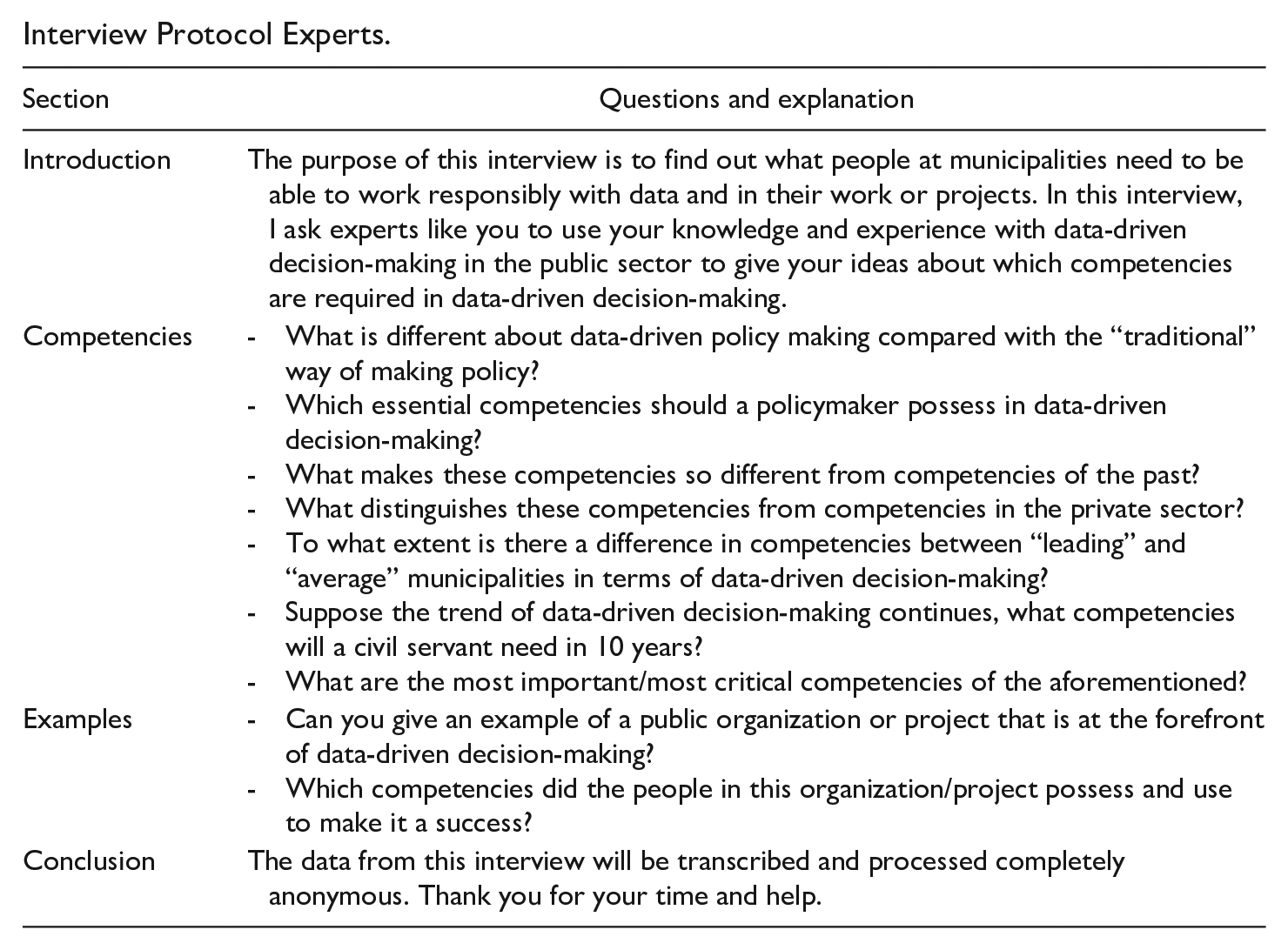

Expert Interviews

The first round of data collection consisted of 12 expert interviews. A mix was sought of experts from different organizations (universities, ministries, local governments, consultancy firms in the public sector) and experts with different expertise, such as knowledge about DDDM and/or knowledge of competencies of civil servants (for an overview see Appendix A). In line with the recommendations of Spencer and Spencer (1993), these expert interviews were semi-structured (see the topic list in Appendix B). The experts were asked to identify competencies they considered necessary in DDDM in local governments. They did so by describing these competencies in general terms or linking them to specific DDDM processes they had experienced themselves. The interviews lasted between 40 and 80 min and were recorded and transcribed.

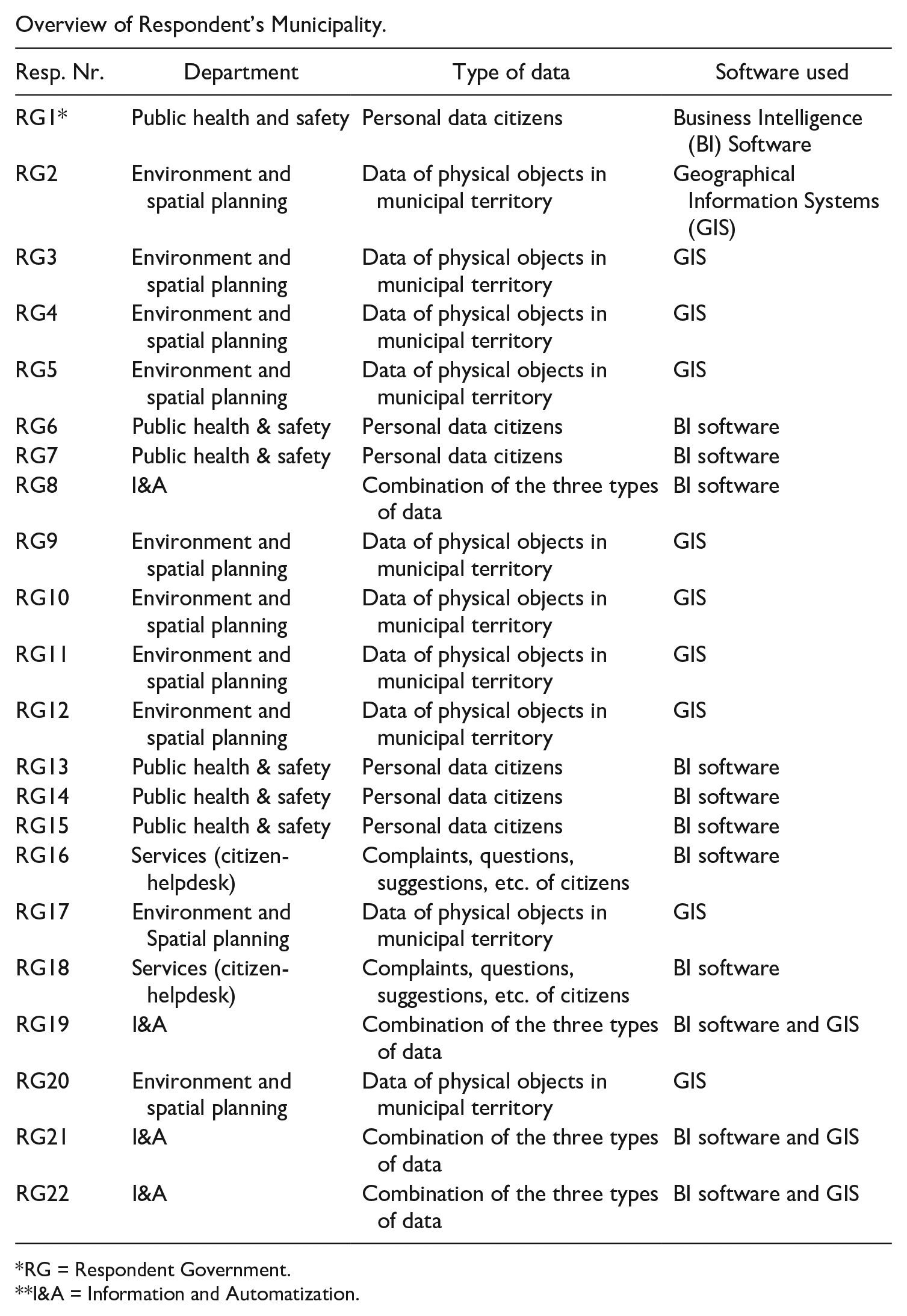

Case Study and BEIs

The second round of data collection consisted of an in-depth case study of a medium-sized, urban local government (municipality) in The Netherlands, with approximately 500 employees. The organization had recently started to experiment with DDDM. Most municipalities are still in the early stages of adoption of DDDM (Desouza & Jacob, 2017; Malomo & Sena, 2017; Poel et al., 2018). Therefore, the municipality can be considered to be in a “normal” or “average” position when it comes to DDDM in municipalities and can thus be qualified as a “typical case” (Ritchie & Lewis, 2003).

Twenty-two civil servants of the municipality were purposively sampled based on the criterion that they had finished at least one complete DDDM process (see Appendix C). An example of a project involving several respondents is one that translated cultural heritage data from archived books into Geographical Information System (GIS) multi-layered maps. Another project used citizen data to develop a predictive model of potential debts among groups of citizens. A mix was sought between different domains of expertise (environmental planning, public health, and safety) and different roles within the organization (policymakers, data analysts, project managers, and policy executors), to cover the full spectrum of civil servants in a municipality. We did not include public managers, such as directors or high-ranking officials (Noordegraaf, 2000), because they direct the organization in which the DDDM takes place and do not execute it. Also, public management scholars argue that managers need different competencies than their employees (Bartelings et al., 2017; Boyatzis, 1982).

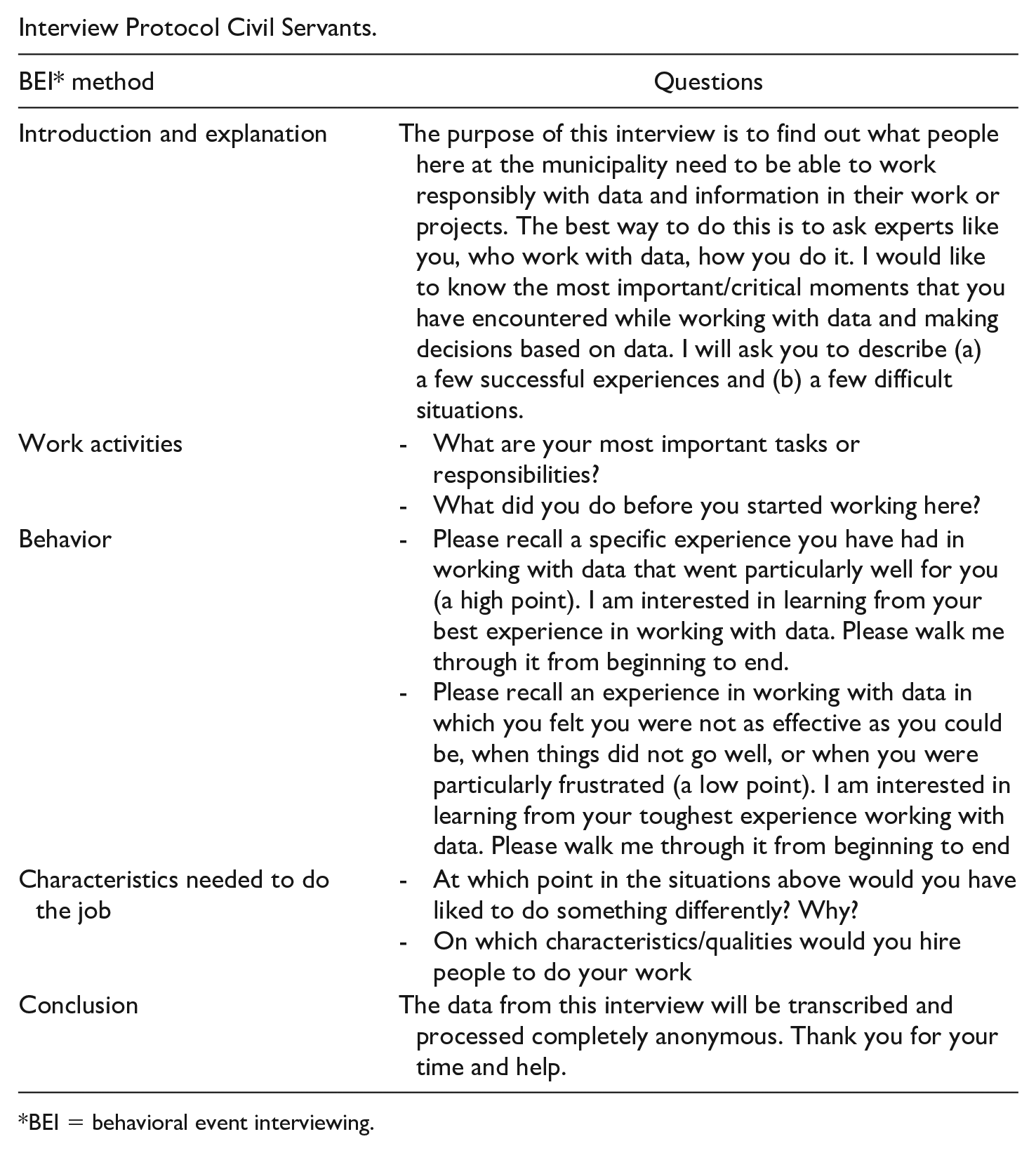

The civil servants were interviewed using a specific interviewing technique called Behavioral Event Interviewing (BEI). This method lies at the heart of Spencer and Spencer’s (1993) competency method and is currently considered a standard procedure for all competency studies (Getha-Taylor, 2008, pp. 105, 108). The reason for choosing BEI over traditional interview methods is that people often do not know what their competencies, strengths, weaknesses, or even their job likes and dislikes are. That is why the purpose of the BEI method is to get behind what people say they do, to find out what they actually do (Spencer & Spencer, 1993, p. 115). As a result, in BEIs, researchers explicitly ask respondents to describe specific work situations to find out what respondents did in these situations and deduce competencies from these descriptions, instead of directly asking which competencies they think they need and use. We followed the interview protocol of Getha-Taylor (2008) (see Appendix D). The 22 interviews lasted between 45 and 90 min and were recorded and transcribed.

Analysis

The transcripts of the 34 interviews (12 expert interviews and 22 BEIs) formed the empirical data of this study. The data were analyzed following the process of reliability coding of open-ended interview data as described by Hruschka et al. (2004). The analysis consisted of six steps.

First, each of the “helicopter view” expert interview transcripts was divided into segments that referred to a single competency (e.g., individual words, sentences, paragraphs, or responses to individual questions). Segments mainly consisted of answers to the question of which competencies the experts considered required in DDDM in governmental organizations (e.g., “teamwork” or “data literacy”), but also of the answers that experts provided to the question to describe a successful DDDM process they experienced.

Second, these segments were inductively open-coded in NVivo 12 (Boeije, 2014) to create an initial codebook consisting of all competencies mentioned by the experts. Two authors coded separately: one author coded all segments, and another coded all segments of three interviews (25% of the data). The authors then met to compare the proposed set of competencies and agree on an initial codebook. We followed Hruschka et al. (2004, p. 3122) by agreeing on (a) how relevant a competency is specifically to the process of DDDM and (b) whether the code emerges in the data when staying as close as possible to the data. For each code, the team derived a set of rules by which the coders decided whether a specific unit of text was or was not an instance of that code. In this process, for example, synonyms were merged (e.g., “data knowledge” and “data literacy” were agreed to be coded as the latter). Following Hruschka et al. (2004), we relied on Cohen’s kappa for intercoder reliability testing. This test prevents the inflation of reliability scores by correcting for chance agreement. Kappa scores below 0.40 indicate poor agreement, scores of 0.40–0.75 fair to good agreement, and scores over 0.75 excellent agreement. We calculated the score in NVivo 12 using the segments and corresponding codes that were coded by both authors and arrived at a kappa score of 0.76, which means excellent agreement.
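To illustrate how the chance correction in Cohen’s kappa works, the following minimal sketch computes kappa = (p_o − p_e) / (1 − p_e), where p_o is the observed share of segments on which two coders agree and p_e is the agreement expected by chance from each coder’s code frequencies. It is not the NVivo procedure used in this study; the function names and the example codes are hypothetical, and the sketch assumes that each coder assigns exactly one code to each jointly coded segment.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who each assigned one code per segment."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must code the same non-empty list of segments.")
    n = len(coder_a)
    # Observed agreement: share of segments that received the same code twice.
    p_observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: for each code, multiply the two coders' marginal
    # proportions, then sum over all codes.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    p_expected = sum(freq_a[code] * freq_b[code] for code in freq_a) / (n * n)
    if p_expected == 1:
        return 1.0  # both coders used one identical code for every segment
    return (p_observed - p_expected) / (1 - p_expected)


def interpret_kappa(kappa):
    """Map a kappa score onto the thresholds reported in the text."""
    if kappa < 0.40:
        return "poor"
    if kappa <= 0.75:
        return "fair to good"
    return "excellent"


# Hypothetical codes for six jointly coded segments (illustration only).
author_1 = ["data literacy", "teamwork", "teamwork",
            "critical thinking", "data literacy", "teamwork"]
author_2 = ["data literacy", "teamwork", "critical thinking",
            "critical thinking", "data literacy", "teamwork"]
score = cohens_kappa(author_1, author_2)
print(round(score, 2), interpret_kappa(score))
```

Because p_e grows when both coders rely heavily on the same few codes, kappa is lower than the raw percentage of agreement, which is why it guards against inflated reliability scores.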

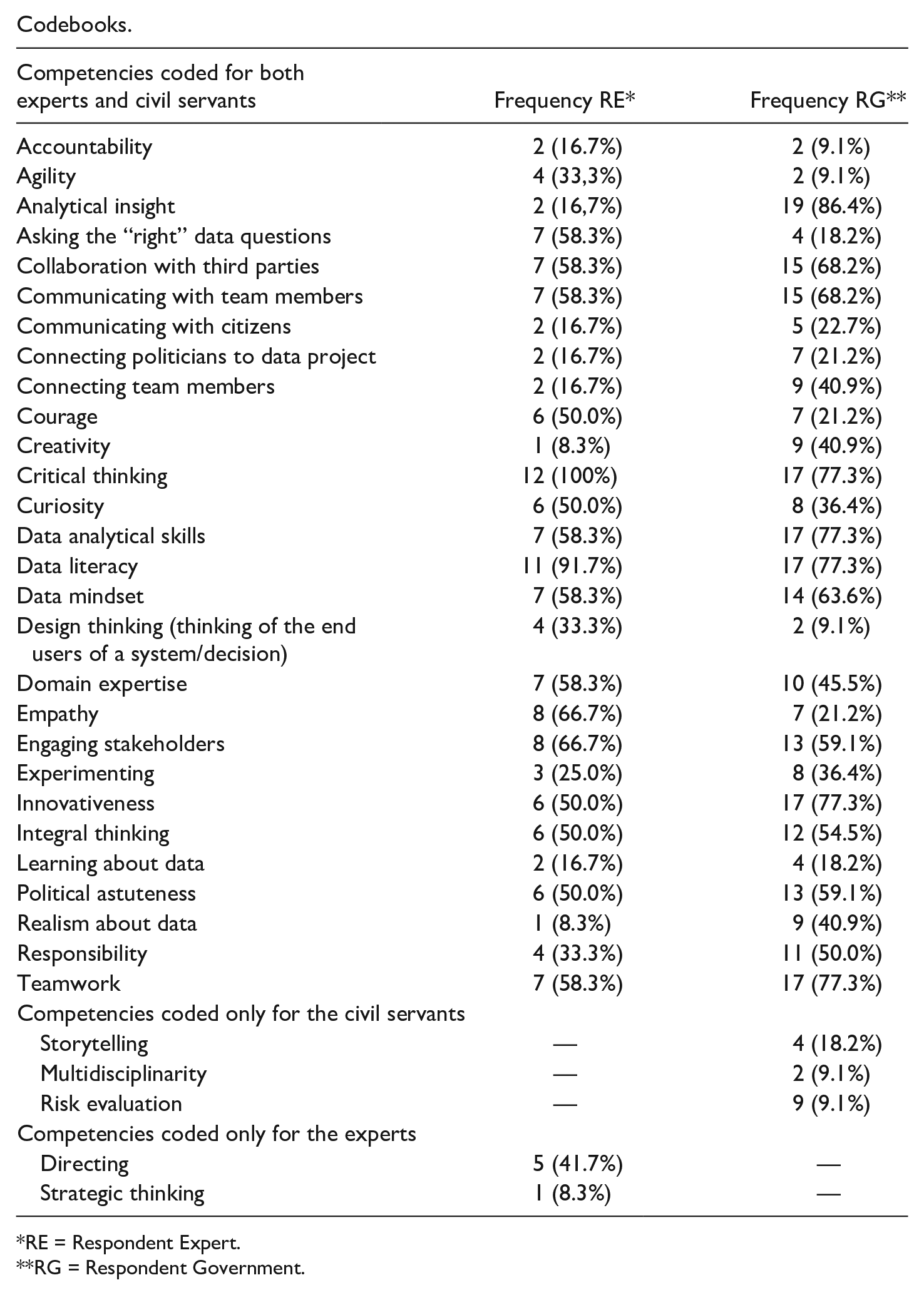

Third, we used an iterative process to test this initial codebook for the municipality’s case, using the BEIs with the civil servants. We started the analysis of the BEIs by summarizing the main contextual characteristics of the civil servants: organizational context (in which department the civil servant worked), which software the civil servants used in their projects, and what kind of data they worked with (see Appendix C). We then divided the transcripts into segments. In line with Spencer and Spencer (1993), these were mainly parts of the text consisting of implicitly discussed competencies. These were detailed descriptions by the civil servants of their behavior in specific DDDM processes they experienced at work. However, although not asked to do so by the researchers, some civil servants also explicitly mentioned some competencies, such as “My team collaborated very well on the data project,” which were also included as separate segments. We analyzed these segments using a process of deductive and inductive coding: The initial codebook developed in the previous step was the starting point, but the option to include competencies that inductively came up in the BEIs remained. Again, one author coded all segments, and another author coded all segments of five BEIs (23% of the data). The authors met frequently to compare the coding, in the same way as in the previous step. Again, synonyms were merged through frequent dialogues among the authors to arrive at shared understandings. The authors came to joint decisions in all cases of discrepancies by staying as close as possible to the data. For example, one civil servant said: “I always try to find out which new possibilities there are in DDDM and experiment with them at home.” One author coded this as “pioneering” and the other as “experimenting,” but they jointly decided on the second code, which was mutually understood to be closer to the original phrasing. We arrived at a kappa score of 0.70, which means good agreement. Appendix E shows the result of this process in a codebook that describes competencies that were coded for both the expert interviews and the BEIs (26 in total), for the expert interviews only (two in total), and for the BEIs only (three in total). We decided to exclude the two competencies coded only for the expert interviews because they can be considered managerial competencies. As explained above, this study does not focus on the competencies of managers of organizations executing DDDM but of employees executing DDDM themselves. The three competencies coded only for the BEIs were added to the final codebook.

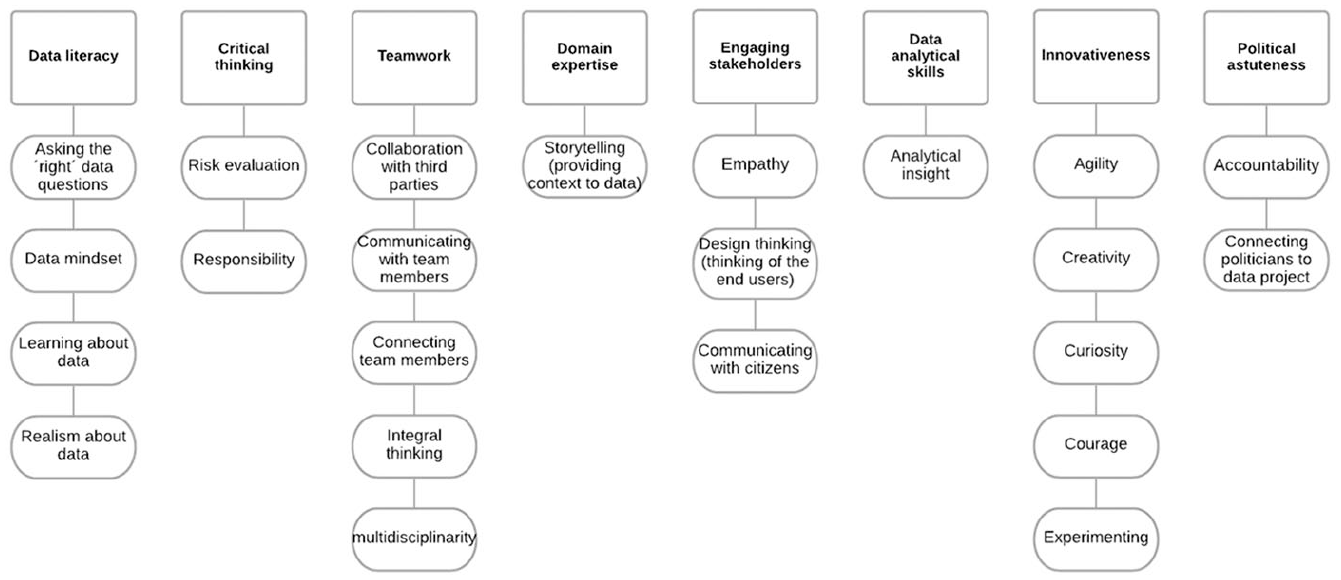

Fourth, following Kruyen and Van Genugten (2020, p. 123), we grouped these competencies inductively in several meaningful clusters. The decision to make clusters out of all identified competencies is based on competency literature that stresses that a model of fewer and more detailed competencies is preferred over a model with a large number of competencies that only have brief descriptors (Ruggerberg et al., 2011, p. 247). We used the final codebook consisting of the 26 competencies coded for both the expert interviews and the BEIs plus the three competencies coded only for the BEIs. We clustered them by staying close to the competencies’ meaning and grouping them together into clusters of subcompetencies that logically fit together. This resulted in eight clusters of meta-competencies and 23 subcompetencies (see Figure 1 below for an overview).

Figure 1. Overview of clustering competencies.

Fifth, we verified the cluster of eight meta-competencies and subcompetencies in a focus group session at the municipality. The focus group consisted of five civil servants from the sample of 22 BEIs. Also present were one civil servant responsible for implementing DDDM in the organization, the HRM director of the organization, and the general director of the organization. In the session, the civil servants discussed whether the identified competencies corresponded with their experiences. The consensus was that the competency set matched their experience well. Also, the HRM manager and the general director considered the model valuable in the organization’s HRM practices.

Sixth, we inductively coded for underlying patterns in relationships between competencies and civil servant characteristics. We did this to follow both organizational science literature (e.g., Barley, 1986; Contractor & Seibold, 1993; DeSanctis & Poole, 1994; Orlikowski, 2000) and competency literature (e.g., Borst, 2018; Borst et al., 2019; Schaufeli & Taris, 2014), which suggest that the context in which employees work influences how they execute their work and which competencies are required to do so.

Findings: DDDM as a Hybrid Process

How Civil Servants Behave in DDDM-Processes

In the case study, the 22 respondents discussed 81 different DDDM processes. We explained above that, in theory, in DDDM (a) data are used to provide insight into the past, present, and future, (b) data are the starting point of a decision-making process in addition to a hypothesis/problem, and (c) data are the primary source of information for decision-making. The DDDM processes as observed in the municipality only partially met this theoretical definition.

First, the data were often not the primary source for decision-making. Civil servants used other sources and combined these with the data analyses before deciding. Examples of other quantitative sources were research reports of (national) research institutes such as the Central Bureau of Statistics (CBS) (RG13, 14). Examples of “softer” information were case studies, expert opinions, and requests made by politicians (RG7, 9, 10, 14, 15). RG19 explains, “If we were going to work one hundred percent data-driven, the data would determine the things we do [but that is currently not the case].” As a result, the DDDM process as it took place in the municipality was not fully data-driven in the sense of its theoretical definition.

Second, most DDDM processes started from a specific question or problem and not from the data. As RG15 explains, “[First] there is a question from our management or the aldermen. Then you formulate your research question as concrete as possible . . . [after determining] the assignment, you dive into the data.”

One aspect of DDDM observed in the municipality met the “pure” theoretical definition: the usage of data. Data are not only used to provide insight into the past but also the present and future. Predictive analyses were mainly used for maintenance projects concerning all kinds of objects in public space (such as the medieval culverts). In public health or safety issues, using predictive analyses was a lot more complicated: “We can link data [to make predictions] because there are systems to do so. But what will you do if you have that information? . . . [This concerns actual people and problems in their lives], what is your role as a municipality in this, how far do you go, and how much capacity will you put on it?” (RG13)

This statement shows that when it comes to social issues concerning people’s lives, it is not easy to use predictive analyses in decision-making without a pre-formulated question or goal because that leads to a lot of unanswered questions about what to do with the obtained insights.

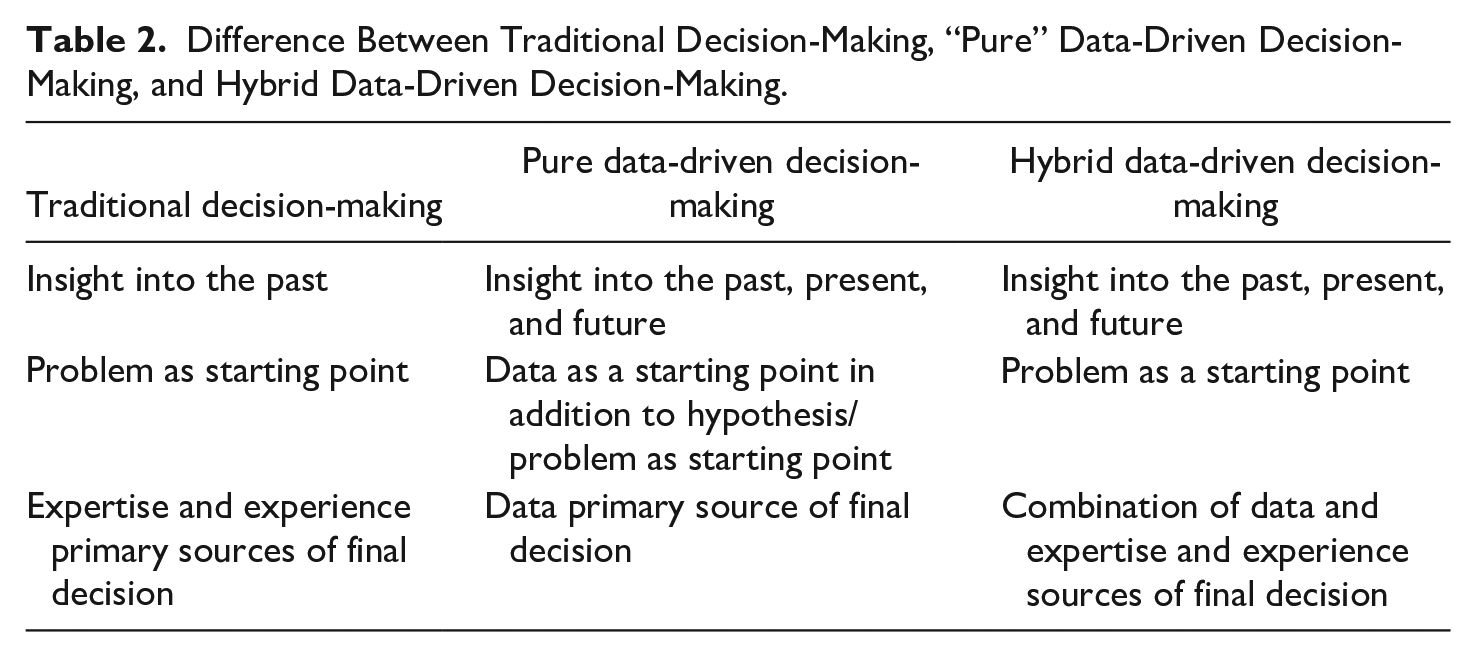

From this, we concluded that in practice, a hybrid form of decision-making was observed in the municipality. The term “hybrid” is derived from academic literature on hybrid public organizations (Noordegraaf, 2007; Brandsen & Karré, 2011; Van der Wal & Van Hout, 2009). According to Van der Wal and Van Hout (2009), hybrid organizations are characterized by a mix of pure but often contradictory behavioral rationalities, for example, “older” and “newer.” Similarly, the DDDM process combines traditional and DDDM elements (Table 2). Data are used in decision-making in ways beyond “traditional” data analysis: they provide insight into the past, the present, and the future. However, the decision-making process is still very much structured in the “traditional” way: it starts from a specific goal or question, and final decisions are based on multiple sources, not primarily on the data. RG19 summarizes this: “I think we are moving towards an intermediate form of data-driven working. We have much more data at our disposal, but the structure of the [organization and the decision-making process] remains kind of the same.”

Table 2. Difference Between Traditional Decision-Making, “Pure” Data-Driven Decision-Making, and Hybrid Data-Driven Decision-Making.

This empirical analysis showed what civil servants do in practice when using data in decision-making processes, and thereby answered research question 1. The following section will investigate which competencies are needed in these hybrid DDDM processes, which will answer research question 2.

Competencies Required in DDDM

Data literacy

All respondents discussed some form of data literacy explicitly or implicitly. Data literacy contains the subcompetencies of asking the “right” questions, having a data mindset, learning about data, realism about data and transparency about data usage.

First, realism about data means being able to “read” and understand what data are and what they can and cannot explain about reality (RE1). The importance of this is acknowledged by two civil servants of the municipality (RG4, 12), who stated they had some trouble in their data-driven projects because they did not fully understand how the data were used: “I was in charge of a [data-driven] project that I didn’t fully understand. I found that very difficult. I understood the content, but I didn’t understand the technical side, which is just as important . . . it would have been useful if I knew how [the data analysis] worked. Then I could have assessed [if requests I made to the data scientists] were complicated or impossible or easy. That would have been an advantage.” (RG12)

The statement shows that this civil servant would have liked to understand the “technical side” of the project. Several respondents stress that to understand this, one must learn and understand the so-called “data pipeline” (RG2, 3, 5 to 10, 12, 14, 16, 18, 19). According to the respondents, this means data are a continuing “mill,” starting from registration by administrative employees (in, for example, the citizen-helpdesk), all the way to the analysis of patterns in citizens’ questions and complaints by data scientists (RG2, 5, 19). Understanding this “data pipeline” allows one to understand that data are not neutral. Because one understands that a person (e.g., a civil servant or citizen) actively decides to collect data (e.g., write down a complaint in certain words), it is clear that data are not neutral representations of reality. We come back to this point when describing the competency of critical thinking.

Second, data literacy entails knowing how to use data to inform practice. This enables one to have a data mindset throughout the whole project and to ask the “right” questions. RE3 explains this: “It is important that [civil servants] learn to recognize when data are relevant for what they are doing . . . that when they get an issue to solve, they think: hey, I could also solve that with data.” This is confirmed by many respondents of the municipality (RG2, 3, 5, 6, 7, 9, 10, 12, 13, 15, 17, 20, 21, 22). In all the DDDM processes they discussed, it was essential that they understood to what extent data could help them address their question or problem, and to what extent it could not.

Finally, several experts (RE2, 5, 6, 8, 12) state that data literacy includes understanding the broader implications of the datafication of society. According to RE2 and RE5, this means that just as civil servants are well informed about societal trends such as individualization and globalization, they should be informed about datafication. They should, for example, know how platforms such as Uber and Airbnb work, but also technologies such as AI and blockchain, and what this means for their own policy- and decision-making.

Critical thinking

A competency that is strongly related to data literacy is critical thinking, which was discussed by all the experts and 19 of the respondents of the municipality (RG1 to 15, 17, 18, 20 to 22). First, critical thinking entails being able to check how data is used in the analysis and being able to estimate and evaluate the risks of this. Second, it includes being able to tackle ethical dilemmas in a responsible way, by using contextual knowledge and judgment.

First, several respondents (RG4, 8, 10, 11, 18, 20) indicated that before they start data collection, they consider the risks this poses for the data subjects (usually citizens). They do this not only because it is legally required but also because they are convinced themselves that it is very important to think about the effect of their analysis on actual people. After making the decision to collect and use data, many respondents mentioned they actively checked the data, its registration, and the analysis throughout the whole DDDM process (RG1 to 4, 7, 9, 10, 11, 15, 17, 20, 21, 22). They evaluate the following questions: Where does this data come from? How was it registered, and why? What are the definitions used? To what extent do the data represent reality? What are potential biases? If any of this is unclear, several respondents contacted the people that executed the data registration to ask for clarification (RG1, 4, 7, 9, 10, 11, 15). In the environmental and spatial planning domain, some respondents (RG9, 10, 17) physically go outside to check whether the data represent reality, especially when they suspect something is wrong. RG10 explains this for a maintenance project involving medieval culverts. According to the model of an engineering company contracted for the project, the culverts should have collapsed already and if not, were about to collapse at any moment. However, when the civil servant themselves checked the culverts on-site, there seemed to be nothing wrong. The model of the engineering company was based on assumptions of modern-day construction techniques, but the culverts were constructed using medieval techniques. RG10 consulted another engineering company that specialized in these medieval techniques. This company concluded that the culverts only needed some reinforcement but other than that were in good shape. 
RG10 reports having learned an important lesson from this experience: “You cannot trust the data blindly because the calculations can completely [destroy reality] . . . one side of the project is the scientific approach, but the other is the actual practice.” Critical thinking thus requires careful risk estimation before starting a DDDM process and careful checking throughout the process.

However, some respondents also discussed situations that cannot be captured in these two dimensions of critical thinking. They were confronted with specific ethical dilemmas that required contextual knowledge and judgment to solve (RG1 to 5, 7, 20, 21, 22). One such illustrative example occurred in a sewage maintenance project (RG2 to 5, 20). A previous maintenance project of the municipality had caused damage to the foundations of houses due to unforeseen subsidence. To prevent this from happening again, a team of civil servants came up with the idea to provide citizens with information about the state of the foundation of their houses. The civil servants purchased satellite information from a private company, which measured the heights of roofs of houses over time. These data were transformed into color-coding of the houses in green (no subsiding), orange (slight subsiding), or red (severe subsiding). At first, the team wanted to make these maps publicly available. The underlying idea was that citizens could use the information about the state of their own houses and those of their neighbors to execute maintenance work together. However, several civil servants considered this unethical as it could create market disruptions. After a lot of deliberation, the decision was made not to make the information publicly available but to inform people only about the color of their own house. Whether this is the “right” thing to do, of course, is subject to debate, but the point is that the civil servants involved carefully considered the effects of their data-driven project. RG20 summarizes this: “We struggled with what [information] to give and not give, so we had to take a critical look at it and think about it. [We didn’t] do that on [our] own, we did it all together.” This statement shows that critical thinking mainly requires contextual knowledge and careful group deliberation to arrive at a decision.

Teamwork

The third identified competency is teamwork, mentioned by seven experts (RE2, 3, 4, 6, 7, 9, 10) and 17 respondents of the municipality (RG1 to 7, 9 to 12, 15, 17, 19 to 22). Teamwork involves being able to work in multidisciplinary teams that integrally work together, which is done by communicating and connecting team members. Also, it includes collaboration with “third” (private) parties.

When it comes to multidisciplinary teams, several respondents state that in DDDM projects, data experts need to work closely together with domain experts and connect well with them (RE2, 3, 4, 6, 7, 9, 10; RG1, 3, 7, 9, 12, 15, 17, 19 to 22). Both are highly specialized disciplines, which means you cannot simply turn a data scientist into a domain specialist or vice versa, thereby making collaboration essential (RE2, 3, 4, 5, 7; RG7, 12). RE1 explains this as follows: “I think there will be a lot of collaboration in multidisciplinary teams in which the technical part will be done by a data scientist together with [the domain specialist], but that does require that those two parties are able to communicate with each other, so there has to be some kind of common ground that they can talk about with each other.”

A point that further stresses this is that two respondents (RG6, 21) specifically indicated that because they did not have a multidisciplinary project team to execute their DDDM project, the project was not as successful as they had hoped.

It is important to note that multidisciplinary teams often do not consist only of people from the municipality itself but also of people from private companies that join the teams on temporary contracts. That is because data collection and analysis are often outsourced to private companies. According to several respondents, working with third parties requires some carefulness on the side of the civil servants (RE3 to 7, 9, 10; RG6, 10, 11, 12, 14, 17). For example, the previous example of the culverts showed the importance of double-checking the work executed by these third parties, as this might not be correct. Furthermore, several respondents discussed the importance of making sure that the data, analysis, or final product delivered by a third party fits within the municipal organization and its systems in use so it can also be used in the future (RE2 to 5; RG3, 4, 12, 17). RG15, for example, explains that it is very problematic when a third party delivers an expensive data analysis but does not sufficiently explain to the civil servants how they can replicate the analysis themselves for future projects: “Commercial parties who can [perform certain analyses] are very expensive. And then they can do it [perform an analysis], but [when they leave] we still can’t do it.”

Domain expertise

The fourth identified competency is domain expertise, discussed by seven experts (RE1 to 4, 6, 7, 11) and 13 respondents of the municipality (RG4, 6, 7, 9 to 14, 17, 19 to 22). Expertise in the domain usually entails both specialist knowledge of the content of the issues at stake and expertise in the local municipal context. It also includes storytelling, which means providing explanatory context to the data. RE7 explains the latter: “You need someone who understands a lot of the content . . . [and] can say ‘column F contains all those values, but that is impossible.’ A data analyst sees coherence between variable A and B, while a domain expert says, ‘yes, those are actually two things that naturally go together; nothing is surprising about that.’”

Engaging stakeholders

The fifth identified competency is engaging stakeholders, discussed by eight experts (RE1 to 4, 7 to 10) and 14 respondents of the municipality (RG1 to 4, 6, 7, 10 to 13, 15, 16, 18, 20). This includes empathy, design thinking (reasoning from the perspective of the end users of a DDDM decision), and communicating with citizens. A stakeholder can be defined as any group or individual who can affect or is affected by the achievement of the organization’s objective (Freeman, 1984; Scholl, 2005). Individuals and groups of stakeholders that are affected by data-driven decisions of municipalities are usually citizens or companies. Engaging these stakeholders serves two purposes: (a) finding out what the impact of the decision will be for the citizens and organizations subject to it, and (b) obtaining the required data when the municipality does not possess it itself. When it comes to (a), RE4 explains why it is important to communicate well with citizens and empathize with their stories: “This farmer said ‘how do you get this data [of my CO2 emission]? [The stable you’re referring to] has not been in use for six years, and you count it in your emissions [calculation]!’ You will only find out about those kinds of things if you go and actually talk to these people.”

When it comes to (b), stakeholders are usually organizations that can provide data the government itself has no access to (RG1, 7, 10, 13, 14, 15). Examples of these types of organizations are health care organizations, housing corporations, and citizen groups. It is important to note that engaging stakeholders is different from teamwork with third parties that take over or help with part of the DDDM process, as described in the teamwork competency above. Engaging stakeholders is about involving stakeholders in a participative way in a DDDM process, not about paying a third party to execute part of the DDDM process.

Data analytical skills

The sixth identified competency is data analytical skills, discussed by eight experts (RE1, 3 to 9) and 17 respondents of the municipality (RG1 to 5, 7 to 17, 20, 21). The role data played differed per DDDM process. Roughly two groups of data usage can be distinguished in this study: projects that used data about physical objects in the municipal territory (e.g., lampposts, trash bins, playground equipment), which were processed using GIS systems, and projects that used personal data of citizens (gender, age, postal code), which were processed using Business Intelligence (BI) systems. However, all the projects came down to the same steps in using data: (a) transforming a policy question into a data question, (b) analyzing or modeling the data to obtain patterns and relationships, (c) interpreting those patterns and relationships, and (d) making visualizations that provide insight into them. RE6 explains: “it’s about analyzing, connecting, visualizing because data in itself is, of course, nothing.”

Innovativeness

The seventh identified competency is innovativeness. Again, this is an umbrella term for several subcompetencies that are closely related. These are agility, creativity, courage, curiosity, and experimenting. These were discussed by eight experts (RE1 to 7, 10) and 17 respondents of the municipality (RG3 to 6, 9 to 13, 15 to 22).

RG17 sums up these subcompetencies in the following statement: “The lesson I have learned is go where you want to go. However, that is not a fixed target, it moves, and you must be able to move along and incorporate that flexibility into the process. Not being rigid like ‘we had agreed on this, so we will do this,’ no, you will gradually get new insights along the way. You have to allow yourself to be guided by those insights.”

This statement shows that courage and agility are needed to start analyzing data with a degree of uncertainty about what the analysis will show. Experimenting and creativity are needed to gain new insights along the way and to act upon these new insights. Several respondents (RE1 to 5; RG2, 3, 4, 6, 11, 12, 15, 21, 22) described that curiosity is also an essential part of innovativeness: “Curiosity is the most important competence that a civil servant should have . . . because the way you can solve a problem is changing at lightning speed, so you have to be very curious about these new ways.” (RE2)

Political astuteness

The eighth identified competency is political astuteness, discussed by eight experts (RE2 to 6, 9, 10, 11) and 13 respondents of the municipality (RG1, 4, 6 to 10, 13, 14, 15, 17, 21, 22). As explained in the theoretical section, “traditional” political astuteness entails understanding and being sensitive to the public sector context, such as political dynamics and the need for democratic accountability. According to many respondents, the nature of DDDM changes political astuteness in two ways. First—as explained in the previous discussion of the competency “innovativeness”—DDDM is a process of working toward a moving target. This requires a different type of political astuteness, as explained by RE2: “The competency would be that you dare to accept that you yourself do not know what a result of [a DDDM project] is going to be. And you should also have the ability to tell politicians that you do not know something yet, but you will find the tools to do experiments to understand it better than you do now.”

This statement shows the importance of connecting politicians to DDDM projects. When civil servants want to start a DDDM process and need political support, they need to prepare politicians for the inherent uncertainty of a DDDM project. This means civil servants should explain to politicians that there are uncertainties and risks involved in DDDM, but also substantial benefits that make it worth it. They should make this connection from the very start of a project (RE11). RG13 specifically explains this for a decision that was made in the social domain: “Eventually [DDDM] comes down to political decision-making. I did the data analysis as a foundation for that decision-making. And it helped, it made a difference, because otherwise [without the data-analysis], I think we would have had a lot of trouble pushing for it [such a politically sensitive decision that needed to be well informed].”

Contextual Differences Depending on Civil Servants’ Departments

As explained in the “Method” section, we analyzed the competencies for relationships with the context of civil servants, using three contextual characteristics (see Appendix C): department of the civil servant, type of data used, and type of system used. As explained in the “Data analytical skills” section, the latter two characteristics can roughly be divided into two groups: civil servants working with data about physical objects in the territory of the municipality using GIS systems and civil servants working with personal data using BI systems. In this study, we did not find differences between these two groups regarding which competencies they used. We think this is because the data analyses themselves were very similar and relatively simple. We will return to this point in the discussion.

However, we did find a pattern when analyzing the different departments: the policymaking departments (Environmental and Spatial Planning and Public Health and Safety), the Information and Automatization (I&A) department, and the Services department. These departments have different job demands: the policymaking departments’ main job is to draft policies, whereas the Services department executes these policies by delivering services directly to citizens (handing out subsidies, permits, official documents, etc.). The I&A department is a facilitating department for the whole organization, enabling the other departments to execute their work by developing IT systems and keeping them up and running. Two competencies seem to relate to this work context: domain expertise and political astuteness. All civil servants of the policy departments used both competencies in DDDM; only two civil servants of the I&A department did so, and none of the Services department. As explained above, domain expertise and political astuteness involve a solid knowledge of the policy content and the political context in which it is drafted. It thus seems logical that these competencies are mainly used by the policy departments. However, according to some respondents, they should be just as important to civil servants who develop the supporting IT systems. RE1 explains that when doing so, these civil servants are also confronted with political values: “Now systems are often used for a lifetime. For example, a certain algorithm might be implemented under a right-wing government with corresponding values . . . but if no one thinks about some kind of political sustainability of [the systems] then at the moment they are implemented, they are true, but . . . when the [political] coalition changes and the values are different [and they don’t change the algorithm accordingly], then you get a kind of value lock in your system.”

The group for which these competencies seem less relevant consists of the civil servants working in Services. This can be explained by the fact that these civil servants’ task is not to create a new policy or a support system in a certain political context but to execute existing rules and regulations. These findings correspond with HRM scholars stating that personal resources (including competencies) should fit the particular job demands at hand, which depend on the particular job context (Borst, 2018; Kruyen & Van Genugten, 2020).

Discussion and Conclusion

Based on the widely confirmed assumption that under the influence of digitalization and datafication the use of technologies by civil servants has become standard practice (Meijer et al., 2018), we fill an important research gap by explicitly addressing civil servants’ required competencies in digital government (Kruyen & Van Genugten, 2020). More specifically, we examined how DDDM takes place in government organizations and which competencies it requires of civil servants. Through a systematic two-step qualitative explorative study, we found eight core competencies and 23 subcompetencies required for conducting DDDM. Consequently, we contribute to the generic public HRM literature and CBM literature by extending the competency profiles of civil servants. More concretely, we describe below what these contributions are by connecting the empirical findings to CBM literature. Using the theoretical insights to interpret the empirical findings, we derive new leads for research, leading to propositions that build a fruitful basis for future endeavors in this scholarly domain. After discussing these lessons and research propositions, we discuss lessons for public (HRM) managers, followed by the limitations of this study.

Lessons for Research Resulting in Propositions

First, we state that DDDM, as observed in the municipality, is a hybrid process that requires hybrid competencies. The DDDM we found is a mixture of “traditional” and “newer” decision-making. According to Kruyen and Van Genugten (2020, p. 19), civil servants need a mixture of competencies from different philosophies. This is known as the layering perspective, in which civil servants need competencies of traditional PA, NPM, and NPG but also new competencies of digital government (Kruyen & Van Genugten, 2020; Van der Steen et al., 2018). This study shows that for DDDM, indeed, such a layering of competencies is important. We identified eight required competencies with corresponding subcompetencies, of which teamwork (NPG), engaging stakeholders (NPG), domain expertise (PA), and political astuteness (PA) are more traditional public decision-making competencies and data literacy, critical thinking, data analytical skills, and innovativeness are newer competencies.

Although these empirical findings resonate with the idea of layering perspectives, the results are less explicit about the necessity and/or sufficiency of these competencies. An ongoing discussion in the HRM literature is whether all competencies (also called abilities) are equally necessary or sufficient to perform (Hauff et al., 2021). According to the sufficiency assumption, single competencies are sufficient but not necessary to increase an outcome; if one competency is not in place, the performance may be reduced, but other competencies can still be effective and may compensate for the missing competency. In contrast, the necessity assumption implies that performance can only be achieved if a specific competency is in place. According to this logic, a particular competency is critical for reaching performance. Indeed, if this necessary competency is not in place, the outcome cannot be achieved, and deploying other competencies is pointless. Since this article set out to analyze the minimally required competencies for DDDM, we would expect these eight competencies to be necessary rather than merely sufficient for performance. We, therefore, pose the following proposition, which can be studied in future research to corroborate our exploratory findings:

However, based on our empirical findings and competency literature, we do not claim that every civil servant should possess every one of these competencies. In stating this we follow Bekkers (2018, p. 44), who shows that governments often demand that their civil servants possess a great number of often conflicting or contradictory competencies. In practice, this is an unrealistic expectation. The solution to this seems not to expect a single person to possess a great variety of competencies but to expect competencies to be present on a team level. Several other competency scholars support this reasoning. They state that on a team level, the lack of competency of individuals can be compensated by calling upon those of others (Kauffeld, 2006, p. 3). The empirics underscore this point as well. Several respondents (RG3, 6, 12, 17, 19; RE5 to 10) stress the need to create teams consisting of civil servants with complementary competencies. Three respondents of the municipality (RG9, 12, 17) refer to this as “making sure the right people are at the right place at the right time.” Based on the competency literature and the empirics, we thus conclude that, by default, it should be sufficient if a competency is present on the level of a DDDM process or, in other words, possessed by at least one team member involved. This is also in line with the situational approach, which argues that competency models need to be context-specific and capture the distinctiveness of organizations and sectors (Getha-Taylor et al., 2016).

Despite this nuance, the empirics also show that there are still three more general core competencies that should be possessed by everyone involved in a DDDM process. These are data literacy, critical thinking, and teamwork. Regarding data literacy, many respondents specifically mentioned that just as civil servants know about societal developments such as individualization and globalization, they should know about the datafication and digitalization of society. Furthermore, when civil servants are involved in a DDDM project, they need to have a rudimentary level of understanding of what data is, how it is used in their project, and the implications of the (use of) data. This makes data literacy a competency that every civil servant in a DDDM project should possess. Regarding critical thinking, working with data in the public sector requires careful deliberation about solving ethical dilemmas. This is not something one person can do alone (as was shown in the “Results” section), which stresses that all civil servants involved in a project should be able to reflect upon data usage critically. Finally, working with data requires the competency of teamwork of all civil servants. Needless to say, working in a team is a joint effort. However—as explained above—in DDDM it is especially important for everybody to be able to work together because it requires a combination of more traditional and newer competencies that need to complement each other. In other words, in line with Getha-Taylor et al. (2016) and Kruyen and Van Genugten (2020), in every job, employees need specific competencies and more general core competencies. This is no different when focusing on a specific cluster of digital or data competencies. Consequently, the following propositions can be posed, which can be studied in future research to corroborate our exploratory findings:

This study thus contributes to the layering perspective (Kruyen & Van Genugten, 2020; Van der Steen et al., 2018) by not only showing that PA, NPM, and NPG-related competencies are necessary in the process of DDDM but also that newer digital and innovation-related competencies are required (Proposition 1a). Also, we have shown nuances in the necessity and sufficiency of these layered competencies deriving from the teamwork nature of DDDM (Propositions 1b and 1c). Yet we have not extensively tested these propositions in this study, as it was explorative. Therefore, future studies should test and verify these propositions in other municipal and public organizational contexts.

Furthermore, building on Proposition 1b, we found that although it is sufficient for individual civil servants to possess only some of the remaining five competencies, which team members possess which of these competencies might be less open-ended than Proposition 1b suggests. As the empirics show, domain expertise and political astuteness in particular are necessary competencies for a policymaker within DDDM, while they are less necessary for a civil servant from the I&A department who develops IT systems and even less necessary for a policy executor from the Services department. An important explanation for these empirics seems to be given by the CBM literature, which shows that civil servants work in different domains and are confronted with different job demands that they must cope with through task-specific competencies (Bonder et al., 2011; Boyatzis, 1982). Indeed, the policy content and the political context must be understood in order to draft policies. Moreover, policymakers are the closest to politically elected top executives and are often forced to develop ambiguous and conflicting policies (Borst et al., 2019). Therefore, political astuteness might be a logical way to cope with these demands during DDDM. This line of reasoning about coping with demands through specific competencies also fits with the assumptions of the JD-R model that particular personal resources (i.e., competencies) might be helpful to cope with particular contextual demands (Borst, 2018). In general, we might therefore propose that future research studies the following proposition to corroborate our exploratory findings:

More specifically, we propose,

Finally, building further on the JD-R model, which states that particular competencies might be helpful to cope with particular demands, it is interesting to note that the empirics suggest that public contextual demands might also hinder the usage of competencies by civil servants. Some respondents mention, for example, the General Data Protection Regulation, but also the importance of safeguarding public values in DDDM and the presence of red tape. As the literature shows, demands such as red tape might hinder competencies such as the innovativeness and creativity of civil servants (Houtgraaf et al., 2021). Consequently, in line with the JD-R model, public sector demands including public values and red tape might hinder the usage of competencies in DDDM. Future research could thus study the following proposition:

This study contributes to the literature that contextualizes the JD-R model to specific public contexts (Borst, 2018; Borst et al., 2019) by showing that the competencies required in DDDM might differ for public employees depending on their (public sector) job context and job demands (Proposition 2a). We specified this for the job context of policymakers (Proposition 2b). We also added that the usage of newer competencies in particular might be hindered by characteristics of policymaking contexts (Proposition 2c). However, again, we did not test these propositions in this study. Future studies should test and verify these propositions as well.

In sum, we conclude that DDDM in governments requires eight core competencies, including newer competencies such as data analytical skills and innovativeness and more traditional competencies such as domain expertise and political astuteness. Moreover, it is not necessary that every civil servant possesses all eight competencies, as long as all competencies are present at the level of the team applying DDDM. At the same time, three competencies (data literacy, critical thinking, and teamwork) need to be possessed by every single civil servant. Who possesses one or more of the remaining five depends on the department (e.g., policy, execution, technical support) civil servants work in and the inherent contextual demands they have to deal with.

Lessons for Public (HRM) Managers

Besides these theoretical contributions, our study also has two important implications for practitioners, including public (HRM) managers. First, we show that competency profiles still need to include “traditional” public decision-making competencies (such as engaging stakeholders, political astuteness, and teamwork) for civil servants to perform effectively in the world of digital government and DDDM. However, we also show that public (HRM) managers need to extend these profiles by including “newer” competencies such as data literacy and data analytical skills. In line with Competency-Based Management, these competency profiles need to be adapted so that the recruitment and selection process and the training and development process can be aligned with the changes in the working environment. This is necessary for public organizations to better cope with the challenges of digital government in the future (Kruyen & Van Genugten, 2020).

Second, we show that competency profiles need to be aligned with the team level. Following Kauffeld (2006), we state that, except for three competencies that, according to the respondents, should be possessed by everyone involved (critical thinking, data literacy, and teamwork), it is sufficient if the eight competencies are present within the team executing the DDDM process. These findings highlight that DDDM is a responsibility shared by several civil servants in a team. As a result, not all civil servants need to have the same competencies. Therefore, public (HRM) managers should invest in connecting civil servants who possess newer competencies such as innovativeness and data analytical skills with civil servants who possess more traditional competencies such as domain expertise and political astuteness. This requires identifying which civil servants in their organization possess which competencies and bringing them together in the process of DDDM.
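For practitioners who keep competency profiles in a structured form, the team-level rule described above can be expressed as a simple check. The following is only an illustrative sketch and not part of the study's method: the function name `check_team`, the data layout (a mapping from a team member to a set of competency labels), and the example team are assumptions introduced here.

```python
from typing import Dict, Set

# The eight competencies identified in this study.
ALL_COMPETENCIES = frozenset({
    "data literacy", "critical thinking", "teamwork", "domain expertise",
    "data analytical skills", "engaging stakeholders", "innovativeness",
    "political astuteness",
})

# The three competencies that every team member should possess.
CORE = frozenset({"data literacy", "critical thinking", "teamwork"})


def check_team(team: Dict[str, Set[str]]) -> dict:
    """Hypothetical helper: check a team against the team-level rule.

    `team` maps a member label to the set of competencies that member has.
    """
    # Core competencies missing per individual member.
    missing_core = {
        name: sorted(CORE - skills)
        for name, skills in team.items()
        if not CORE <= skills
    }
    # Competencies that nobody in the team possesses.
    covered = set().union(*team.values())
    missing_team = sorted(ALL_COMPETENCIES - covered)
    return {
        "missing_core_by_member": missing_core,
        "missing_at_team_level": missing_team,
        "meets_rule": not missing_core and not missing_team,
    }


# Illustrative (invented) team: a policymaker and a data analyst.
example_team = {
    "policymaker": CORE | {"domain expertise", "political astuteness",
                           "engaging stakeholders"},
    "data analyst": CORE | {"data analytical skills", "innovativeness"},
}
result = check_team(example_team)
```

In this invented example, both members hold the three core competencies and the team as a whole covers all eight, so the rule is met even though neither member possesses every competency.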

Limitations

Despite these contributions, this study has some limitations. As explained before, the data analyses included in the case study were relatively similar and simple. We did not include more complex technologies that will most likely become more important in decision-making in the future, such as Artificial Intelligence (AI) and blockchain (Van den Hoven et al., 2017; Vetzo et al., 2018). The simple reason for this is that we studied a typical case in which civil servants did not use any of these technologies yet. Future studies could test the eight competencies in governmental cases that are more ahead in their technology development. Potentially more complex technologies could require some different competencies or different sub-competencies. Furthermore, this study used a combination of expert interviews and interviews and a focus group with civil servants of one municipality. Although the selected municipality can be considered a typical case and thus representative of many other Dutch municipalities, single case studies are limited in terms of external validity (Blatter & Haverland, 2012; Yin, 2013). As a result, the identified competency framework should be tested in other cases, such as the aforementioned governments that are further ahead in using more complex technology in DDDM, governments at different administrative levels (provincial, national) and (local) governments in other countries. We have formulated six propositions that can be used as starting points for these future studies.

To sum up, this study’s main conclusion is that DDDM in local governments is a hybrid process that requires hybrid competencies: a combination of newer and more traditional competencies. Local governments need to invest resources in developing or selecting these competencies among their employees. This will help them better exploit the possibilities data offers in a responsible way, which is important in today’s and tomorrow’s society, in which the influence of new technologies will only increase.


Appendix A

Overview of the Experts.

| Resp. Nr. | Organization | Position |

|---|---|---|

| RE1* | University | Researcher Algorithmic accountability |

| RE2 | Public sector data consultant | Consultant |

| RE3 | Public sector data consultant | Director |

| RE4 | Public sector data consultant | Director |

| RE5 | Ministry | Policy advisor E-government |

| RE6 | University | Professor of political and public leadership |

| RE7 | University | Professor of competencies of public managers in a changing work environment |

| RE8 | Implementing organization (part of Ministry) | Strategic advisor organizational development |

| RE9 | Implementing organization (part of Ministry) | Policy advisor data-driven transformation |

| RE10 | Municipality | Director Public Health department |

| RE11 | Dutch association of municipalities | Program manager data-driven transformation |

| RE12 | University | Professor of Philosophy of Technology |

*RE = Respondent Expert.

Appendix B

Interview Protocol Experts.

| Section | Questions and explanation |

|---|---|

| Introduction | The purpose of this interview is to find out what people at municipalities need to be able to work responsibly with data and information in their work or projects. In this interview, I ask experts like you to use your knowledge and experience with data-driven decision-making in the public sector to give your ideas about which competencies are required in data-driven decision-making. |

| Competencies | - What is different about data-driven policy making compared with the “traditional” way of making policy? - Which essential competencies should a policymaker possess in data-driven decision-making? - What makes these competencies so different from competencies of the past? - What distinguishes these competencies from competencies in the private sector? - To what extent is there a difference in competencies between “leading” and “average” municipalities in terms of data-driven decision-making? - Suppose the trend of data-driven decision-making continues, what competencies will a civil servant need in 10 years? - What are the most important/most critical competencies of the aforementioned? |

| Examples | - Can you give an example of a public organization or project that is at the forefront of data-driven decision-making? - Which competencies did the people in this organization/project possess and use to make it a success? |

| Conclusion | The data from this interview will be transcribed and processed completely anonymously. Thank you for your time and help. |

Appendix C

Overview of Respondent’s Municipality.

| Resp. Nr. | Department | Type of data | Software used |

|---|---|---|---|

| RG1* | Public health and safety | Personal data citizens | Business Intelligence (BI) Software |

| RG2 | Environment and spatial planning | Data of physical objects in municipal territory | Geographical Information Systems (GIS) |

| RG3 | Environment and spatial planning | Data of physical objects in municipal territory | GIS |

| RG4 | Environment and spatial planning | Data of physical objects in municipal territory | GIS |

| RG5 | Environment and spatial planning | Data of physical objects in municipal territory | GIS |

| RG6 | Public health & safety | Personal data citizens | BI software |

| RG7 | Public health & safety | Personal data citizens | BI software |

| RG8 | I&A | Combination of the three types of data | BI software |

| RG9 | Environment and spatial planning | Data of physical objects in municipal territory | GIS |

| RG10 | Environment and spatial planning | Data of physical objects in municipal territory | GIS |

| RG11 | Environment and spatial planning | Data of physical objects in municipal territory | GIS |

| RG12 | Environment and spatial planning | Data of physical objects in municipal territory | GIS |

| RG13 | Public health & safety | Personal data citizens | BI software |

| RG14 | Public health & safety | Personal data citizens | BI software |

| RG15 | Public health & safety | Personal data citizens | BI software |

| RG16 | Services (citizen-helpdesk) | Complaints, questions, suggestions, etc. of citizens | BI software |

| RG17 | Environment and spatial planning | Data of physical objects in municipal territory | GIS |

| RG18 | Services (citizen-helpdesk) | Complaints, questions, suggestions, etc. of citizens | BI software |

| RG19 | I&A | Combination of the three types of data | BI software and GIS |

| RG20 | Environment and spatial planning | Data of physical objects in municipal territory | GIS |

| RG21 | I&A | Combination of the three types of data | BI software and GIS |

| RG22 | I&A | Combination of the three types of data | BI software and GIS |

*RG = Respondent Government.

I&A = Information and Automatization.

Appendix D

Interview Protocol Civil Servants.

| BEI* method | Questions |

|---|---|

| Introduction and explanation | The purpose of this interview is to find out what people here at the municipality need to be able to work responsibly with data and information in their work or projects. The best way to do this is to ask experts like you, who work with data, how you do it. I would like to know the most important/critical moments that you have encountered while working with data and making decisions based on data. I will ask you to describe (a) a few successful experiences and (b) a few difficult situations. |

| Work activities | - What are your most important tasks or responsibilities? - What did you do before you started working here? |

| Behavior | - Please recall a specific experience you have had in working with data that went particularly well for you (a high point). I am interested in learning from your best experience in working with data. Please walk me through it from beginning to end. - Please recall an experience in working with data in which you felt you were not as effective as you could be, when things did not go well, or when you were particularly frustrated (a low point). I am interested in learning from your toughest experience working with data. Please walk me through it from beginning to end. |

| Characteristics needed to do the job | - At which point in the situations above would you have liked to do something differently? Why? - On which characteristics/qualities would you hire people to do your work? |

| Conclusion | The data from this interview will be transcribed and processed completely anonymously. Thank you for your time and help. |

*BEI = behavioral event interviewing.

Appendix E

Codebooks.

| Competencies coded for both experts and civil servants | Frequency RE* | Frequency RG** |

|---|---|---|

| Accountability | 2 (16.7%) | 2 (9.1%) |

| Agility | 4 (33.3%) | 2 (9.1%) |

| Analytical insight | 2 (16.7%) | 19 (86.4%) |

| Asking the “right” data questions | 7 (58.3%) | 4 (18.2%) |

| Collaboration with third parties | 7 (58.3%) | 15 (68.2%) |

| Communicating with team members | 7 (58.3%) | 15 (68.2%) |

| Communicating with citizens | 2 (16.7%) | 5 (22.7%) |

| Connecting politicians to data project | 2 (16.7%) | 7 (31.8%) |

| Connecting team members | 2 (16.7%) | 9 (40.9%) |

| Courage | 6 (50.0%) | 7 (31.8%) |

| Creativity | 1 (8.3%) | 9 (40.9%) |

| Critical thinking | 12 (100%) | 17 (77.3%) |

| Curiosity | 6 (50.0%) | 8 (36.4%) |

| Data analytical skills | 7 (58.3%) | 17 (77.3%) |

| Data literacy | 11 (91.7%) | 17 (77.3%) |

| Data mindset | 7 (58.3%) | 14 (63.6%) |

| Design thinking (thinking of the end users of a system/decision) | 4 (33.3%) | 2 (9.1%) |

| Domain expertise | 7 (58.3%) | 10 (45.5%) |

| Empathy | 8 (66.7%) | 7 (31.8%) |

| Engaging stakeholders | 8 (66.7%) | 13 (59.1%) |

| Experimenting | 3 (25.0%) | 8 (36.4%) |

| Innovativeness | 6 (50.0%) | 17 (77.3%) |

| Integral thinking | 6 (50.0%) | 12 (54.5%) |

| Learning about data | 2 (16.7%) | 4 (18.2%) |

| Political astuteness | 6 (50.0%) | 13 (59.1%) |

| Realism about data | 1 (8.3%) | 9 (40.9%) |

| Responsibility | 4 (33.3%) | 11 (50.0%) |

| Teamwork | 7 (58.3%) | 17 (77.3%) |

| Competencies coded only for the civil servants | | |

| Storytelling | — | 4 (18.2%) |