Abstract

Researchers attempting to survey refugees over time face methodological issues because of the transient nature of the target population. In this article, we examine whether applying smartphone technology could alleviate these issues. We interviewed 529 refugees and afterward invited them to four follow-up mobile web surveys and to install a research app for passive mobile data collection. Our main findings are as follows: First, participation in mobile web surveys declines rapidly and is rather selective with significant coverage and nonresponse biases. Second, we do not find any factor predicting types of smartphone ownership, and only low reading proficiency is significantly correlated with app nonparticipation. However, obtaining sufficiently large samples is challenging—only 5 percent of the eligible refugees installed our app. Third, offering a 30 Euro incentive leads to a statistically insignificant increase in participation in passive mobile data collection.

In 2015 and 2016, an estimated 1.2 million refugees¹ arrived in Germany, increasing the total German population by about 1.5 percent and the non-German citizen population by almost 16 percent (calculations using 2014 numbers; see Bundesamt für Migration und Flüchtlinge [BAMF] 2016, 2017). This large influx of refugees to Germany and other European countries raised many questions concerning the demographic and cultural future of European societies. Policy makers require empirical knowledge of refugee needs, aspirations, and life circumstances to address these questions and craft credible and sustainable integration policies. Unfortunately, the data on which such policies could be based are often lacking.

While several initiatives are currently underway in the German and European research communities to address the demand for empirical data on refugees (Britzke and Schupp 2017; Kroh et al. 2018), it has become clear that interviewing refugees poses several challenges for the standard repertoire of survey research methodology. Important issues arise not only from sampling (Bloch 2007; Jacobsen and Landau 2003; Singh and Clark 2013; Vigneswaran and Quirk 2012) but also from recruiting and interviewing respondents as well as tracking individuals for longitudinal study designs (Morville et al. 2015; Tingvold et al. 2015). Refugee populations, even those who have reached destination countries such as Germany, are highly mobile as they may be resettled outside of the initial interview area based on the outcome of their asylum applications or due to governmental or agency policies. Furthermore, once refugees have the right to freely choose where to live, they tend to move repeatedly between accommodations depending on space, familial needs, and other factors. Such movement creates challenges for researchers seeking to recontact respondents for follow-up or longitudinal study purposes (Ward and Henderson 2003). Tracking increases time and monetary demands on projects and the likelihood of nonresponse in any subsequent waves due to failure to locate respondents (Morville et al. 2015; Tingvold et al. 2015).

At the same time, the rise of smartphone penetration has introduced new opportunities for collecting data (Couper, Antoun, and Mavletova 2017; Sugie 2018). Widespread use of smartphones allows not only for data collection through mobile web surveys independent of time and location of the respondent but also for passive mobile data collection through apps installed on smartphones. Research apps on smartphones collect behavioral data from users in greater detail and richness of information than can be collected from survey questions, including geolocation, physical movements, online behavior and browser history, app usage, and call and text message logs (Harari et al. 2016; Link et al. 2014). These data can enable researchers to infer users’ mobility patterns, physical activity and health, consumer behavior, and social interactions.

Studies have shown that migrants use smartphones and apps in their effort to integrate into the host society, including by learning the language of the host country (Bradley, Lindström, and Hashemi 2017; Kukulska-Hulme et al. 2015), maintaining and building social networks (Alam and Imran 2015; Gillespie et al. 2016; Ram 2015; St. George 2017), and accessing occupational and employment opportunities (Alam and Imran 2015; St. George 2017). Even if respondents move, smartphones allow researchers to maintain contact with respondents over time. Thus, using smartphone technology for data collection among refugees seems a natural fit. However, refugee populations may still be considered “hard-to-survey” due to the political sensitivity of their situation and their high levels of suspicion and distrust due to experiences of conflict and displacement (Firchow and Mac Ginty 2017). Thus, whether refugees are willing to participate in data collection using their smartphones has remained unanswered so far.

In this article, we explore the conditions and feasibility of data collection through smartphones as a mode for collecting information about refugees in Germany and for tracking their attitudes and behaviors in a longitudinal design. The main research question can be broken down into several subquestions: Does bias arise in data collection using smartphone technology due to differences in smartphone ownership patterns across refugee groups? Are refugees willing to respond to mobile web surveys and participate in passive mobile data collection? Is there differential nonresponse and nonparticipation error due to differences in response or participation patterns among specific refugee groups? Does a monetary incentive increase participation in passive mobile data collection among refugees?

In the next section of this article, we review the relevant literature on the use of mobile phones, particularly smartphones, for conducting mobile web surveys and passive mobile data collection, as well as possible sources of error that stem from differential smartphone ownership and study participation. We then discuss the methods used to administer the Life and Integration in Germany (LIG) study, a feasibility study on smartphone data collection among a nonprobability sample of refugees in Southwestern Germany. We present the results of our study, discuss the implications of the findings, and consider next steps.

Literature

A growing body of literature assesses the influence of participating in web surveys with smartphones on data quality (see Couper et al. [2017] for a review). While researchers now employ mobile web surveys in many fields to collect self-reports via smartphones from diverse populations, we are not aware of any published study that has used mobile web surveys in the context of refugee research.

Likewise, passive mobile data collection is still a relatively unexplored scientific method. Automatically collecting data from smartphones allows researchers to record extensive information with minimal effort by the respondent; these data are observational in nature rather than self-reported, and they are generated within real-world contexts rather than laboratory ones (Raento, Oulasvirta, and Eagle 2009; Revilla, Ochoa, and Loewe 2017; Sugie 2018). Previous studies have found that data passively collected via smartphones are as accurate as or more accurate than self-reports, meaning that this new approach can be used to enhance and even correct traditional survey data (Boase and Ling 2013; Scherpenzeel 2017). Combining self-reports through mobile web surveys with passive mobile data collection is also increasingly used to measure network data, collect real-time emotional well-being measures, and gather sensitive information such as mental health and sexual behavior (Eagle, Pentland, and Lazer 2009; Gaggioli et al. 2013; Goldberg et al. 2014; Raento et al. 2009; Sugie 2018). While using smartphones for data collection promises great improvement for researchers, there are still several possible methodological challenges that may prevent the use of smartphones in large-scale data collection efforts. In the following sections, we describe potential problems of coverage and nonparticipation when using smartphones for data collection, and we elaborate how our study contributes to the literature in this field.

Coverage Error When Using Smartphones for Data Collection

Since smartphones are a relatively new technology, penetration is far from universal. Users might differ from nonusers in basic sociodemographic characteristics such as age, gender, and education. If there are systematic differences between smartphone users and nonusers in the variable of interest, coverage bias would arise in studies that use smartphone technology for data collection (Groves et al. 2009).
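The size of such a bias follows from a standard identity: the covered-population mean deviates from the full-population mean by the uncovered share times the difference between covered and uncovered means. The following minimal Python sketch illustrates this identity; the function name and all numbers are hypothetical and are not taken from the study.

```python
def coverage_bias(n_covered, n_uncovered, mean_covered, mean_uncovered):
    """Bias of the covered-population mean relative to the full-population mean."""
    share_uncovered = n_uncovered / (n_covered + n_uncovered)
    return share_uncovered * (mean_covered - mean_uncovered)


# Hypothetical numbers, chosen only for illustration (not from the study):
# 900 smartphone owners with a mean of 0.60 on some outcome,
# 100 non-owners with a mean of 0.40 on the same outcome.
bias = coverage_bias(900, 100, 0.60, 0.40)

# Cross-check against the direct definition: covered mean minus full mean.
full_mean = (900 * 0.60 + 100 * 0.40) / 1000
print(round(bias, 3), round(0.60 - full_mean, 3))
```

The identity makes explicit that coverage bias vanishes either when nearly everyone is covered or when owners and non-owners do not differ on the variable of interest.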

While more than three of four adults in many Western countries such as Germany (Bundesverband Informationswirtschaft, Telekommunikation und neue Medien 2017) and the United States (Pew Research Center 2017) own a smartphone, smartphone penetration in other parts of the world is much lower. Pew Research Center found that 57 percent of the adult population in the Middle Eastern/North African (MENA) region, and only 19 and 14 percent of the adult population in sub-Saharan Africa and South Asia, respectively, owned a smartphone (Poushter 2016). The two countries of origin with the highest numbers of recent refugees to Germany, Syria and Iraq, were not included in the Pew Research study.²

On a subgroup level, smartphone ownership patterns follow the same trends in the MENA, sub-Saharan African, and South Asian regions as elsewhere in the world. Millennials (aged 18–34), those with more education, and those with higher income are more likely to own a smartphone. In six of the sub-Saharan African countries surveyed by Pew Research Center, as well as Pakistan and India, smartphone penetration is significantly higher among men (Poushter 2016).

Passive data collection may further exacerbate the potential for coverage error. While mobile web surveys can be completed on virtually any device with an Internet browser, regardless of model or operating system (OS), the specific OS determines what data can be collected passively (Harari et al. 2016). Thus, the particular type of smartphone owned may add another source of coverage error that has not yet been considered, and no reliable data on systematic differences between users of different OS exist so far.

One goal of this study is to assess the potential for biases that arise due to differential smartphone ownership among refugees. Our expectations are that we will find similar types of bias due to smartphone ownership in the refugee population compared to the general population: Refugees who are younger and better educated are more likely to own smartphones. In the context of refugee studies, it is especially interesting whether bias extends to country of origin and asylum status. Given the limited previous research, we do not have clear expectations about differences between owners of smartphones with different types of OS.

Nonresponse and Nonparticipation Error When Using Smartphones for Data Collection

Even if all members of a target population own a smartphone and could participate in data collection, nonparticipation error can arise if participants and nonparticipants in a study systematically differ in the variable of interest (Groves et al. 2009).³ Several studies found that differential nonresponse exists for mobile web surveys, with smartphone respondents usually being younger, more likely to be female, from higher income groups, heavier mobile Web users, and more reliant on smartphones to access the Internet than respondents who use desktop or laptop computers to complete web surveys (see Keusch and Yan [2017] and Couper et al. [2017] for summaries on the existing literature on nonresponse in mobile web surveys). The literature also indicates that possessing a mobile phone or smartphone does not mean that individuals are willing to participate in the mobile version of a survey (De Bruijne and Wijnant 2013; Mavletova 2013).

The picture is similar when we look at passive mobile data collection using smartphone apps. Here again, the few studies so far seem to be biased toward individuals who are more likely to use the Internet (i.e., younger, more computer savvy, etc.) and thus report more Internet usage than might be found in the general population. This may be due in part to the reliance of this research on samples from online panels (Pinter 2015; Revilla et al. 2017; Sonck and Fernee 2013). Recent research also suggests the existence of biases and low participation rates among those who consent to enhanced data collection such as through record linkage or downloaded apps (e.g., Pinter 2015; Revilla et al. 2017). In one of the few studies using smartphones to monitor disadvantaged and hard-to-reach groups (specifically, parolees recently released from prison), Sugie (2018) had an initial consent rate of 93 percent. However, this consent rate is likely due to the high effort in the recruitment process, as well as the fact that respondents were provided a smartphone plus data plan as part of the project. The author noted that most groups with sensitive or potentially negative interactions with government agencies are more likely to be concerned with issues of privacy, monitoring, and surveillance.

In addition to factors correlating with the willingness to participate in either mobile web surveys or passive mobile data collection, there are several aspects common to both types of data collection. For example, Couper et al. (2008, 2010) found substantial evidence that perceptions of risk and harm, saliency of privacy, and especially topic sensitivity decrease respondents’ willingness to participate in surveys. Willingness to participate in passive mobile data collection using smartphone apps is also influenced by general attitudes toward privacy, surveys, trust, and altruism (Keusch et al. forthcoming).

Refugees have cause to be both wary of data collection due to their experiences of conflict and displacement and sensitive to their presentation to government agencies due to their outstanding applications for asylum. In this study, we examine in more detail whether this skepticism translates to low response rates to mobile web surveys and low participation rates in passive mobile data collection. Furthermore, we evaluate whether these phenomena in turn lead to systematic biases in some of the variables of interest.

In summary, although smartphone penetration might be high among refugees coming to Europe, researchers have so far made very little effort to leverage this technology. Our key contribution in this article is therefore a first assessment of the feasibility of using smartphone technology to collect data from refugees via mobile web surveys and passive mobile data collection using apps.

The LIG Study

The LIG study collected data from refugees living in residences for temporary accommodation (so-called “Unterkünfte zur vorläufigen Unterbringung”⁴) in three districts of the state of Baden-Württemberg, Germany, over a period of six months in 2017. The data cover information on the refugees’ life situation, political attitudes, integration experiences, and efforts and level of success in entering the labor market. We obtained permission to conduct our project from the district administrations which provided us with a list of all residences in their jurisdictions. We then contacted the residences, explained our study to their directors, and asked for permission to conduct interviews with the refugees living there.⁵ In total, we collected data from refugees at 41 residential locations. Our target group was adult refugees aged 18 years and above. Due to resource constraints, we were only able to interview refugees who spoke either Arabic or English.

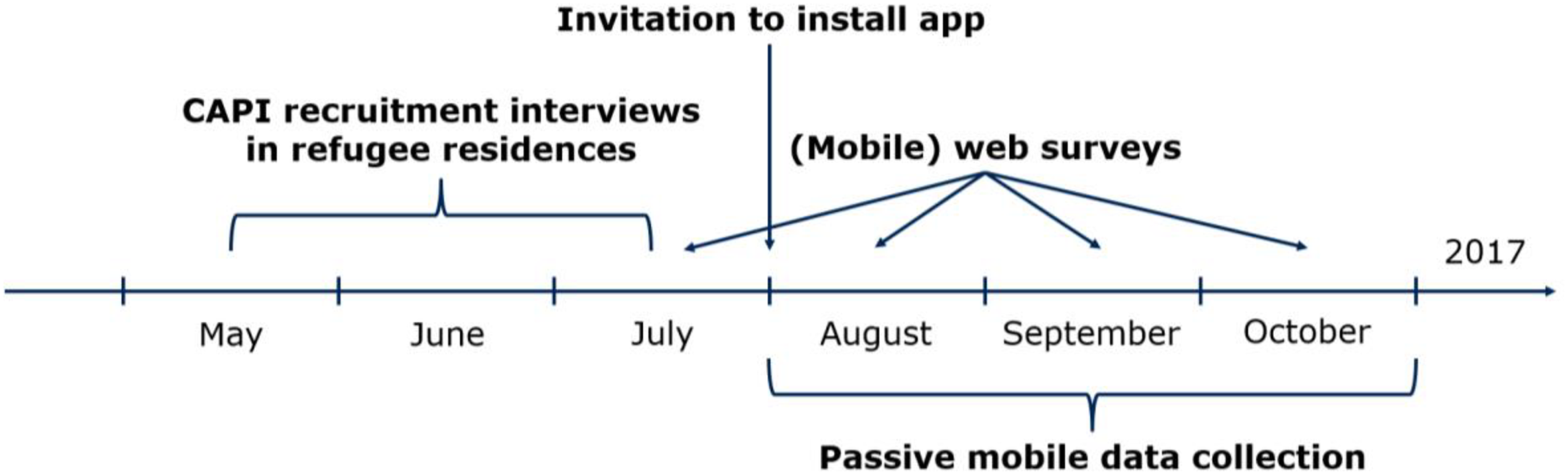

As the time line in Figure 1 illustrates, we collected data in three stages between May and October 2017. First, we visited the residences between May and July to recruit participants for our study and conduct short computer-assisted personal interviews (CAPI). Second, we invited the respondents to four online surveys between July and October. Third, we invited the same respondents at the beginning of August to install our research app on their smartphone, which would passively collect data over the course of three months. Participating in the web surveys did not require downloading the research app. In the following section, we describe the three stages in detail.

Figure 1. Time line of the Life and Integration in Germany (LIG) study.

Recruitment and CAPI Survey

The initial part of data collection in the LIG study included personal interviews in refugee residences. Based on the recommendations in the refugee research literature (Harrell-Bond and Voutira 2007; Ward and Henderson 2003), we closely collaborated with the directors of the residences and local social workers throughout the process of scheduling our visits to the residences and conducting the interviews there. Social workers usually announced our visit by posting an information flyer to message boards in the public area of the residences (see Online Supplemental Material [which can be found at http://smr.sagepub.com/supplemental/]). Some social workers explicitly encouraged refugees to participate in our study before the visit. Personal interviews were conducted in a public area at the residences, and we approached all adult refugees who were present and spoke either Arabic or English.

A total of 529 refugees provided their consent (see Online Supplemental Material [which can be found at http://smr.sagepub.com/supplemental/]) and participated in a short CAPI survey. The questionnaire was administered by a team of interviewers that consisted of refugees (from Iraq and Syria) and students (from Germany, Botswana, Egypt, Ethiopia, and Lebanon). The interviews collected information about demographics, personality traits, asylum status, smartphone ownership, and Internet usage. The median time to complete an interview was 10 min. At the end of the interview, the participants were asked for their consent to be recontacted through WhatsApp or e-mail for the later stages of the data collection. Of the 529 participants in the initial interview, 468 owned a smartphone and provided information for further contacts as part of the study.

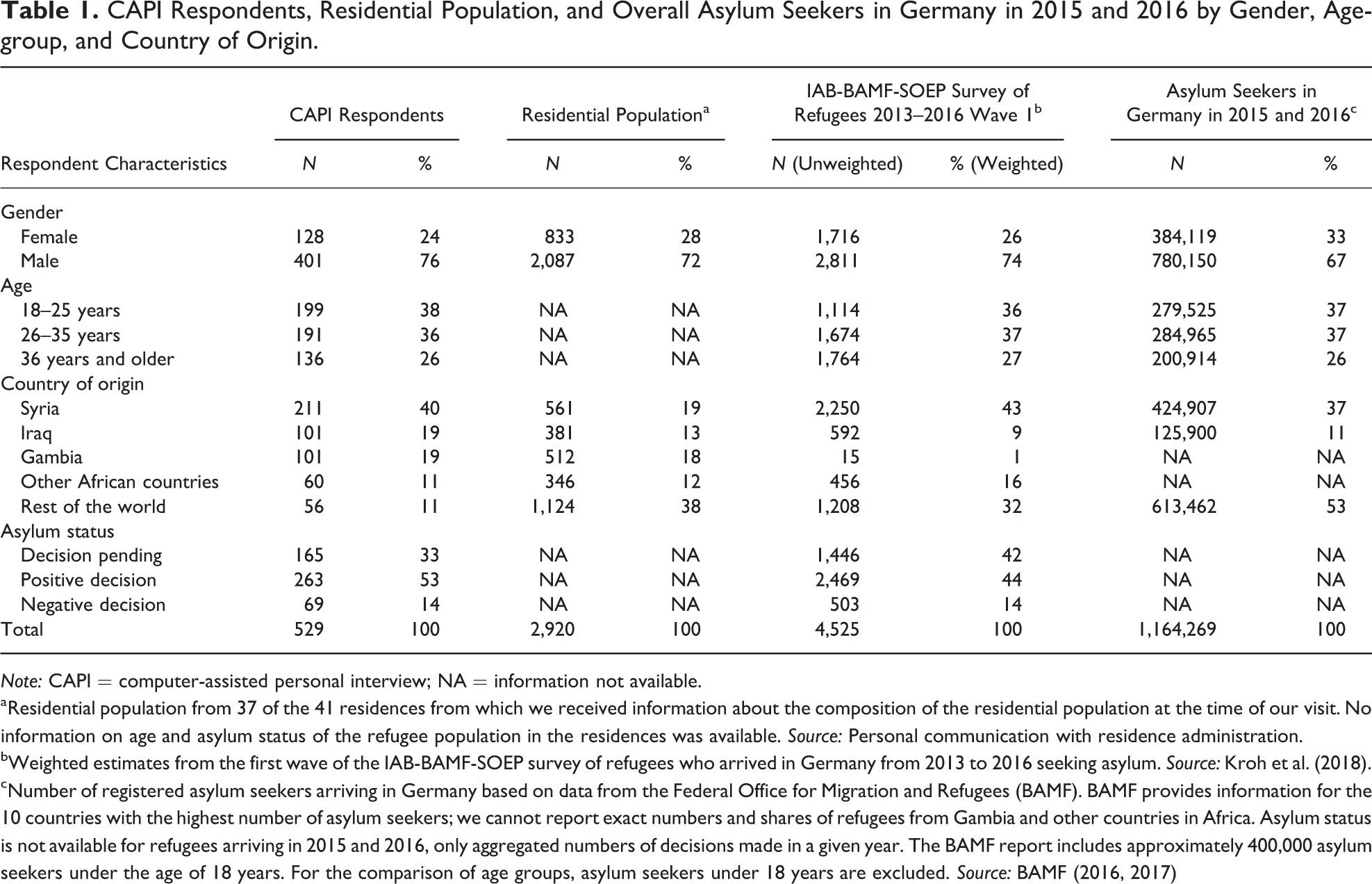

In order to check our sample against the population of refugees in Germany, we compare it to three other sources (Table 1): aggregated background information about the composition of the resident population at the time of our visit, the IAB-BAMF-SOEP (Institut für Arbeitsmarkt- und Berufsforschung, Bundesamt für Migration und Flüchtlinge, Sozio-oekonomisches Panel) Survey of Refugees in Germany (Kroh et al. 2018), and administrative records reported by the German Federal Office for Migration and Refugees (BAMF 2016, 2017) for the overall cohort of asylum seekers entering Germany in 2015 and 2016. We can see that there is a close similarity between these benchmarks and our sample with respect to age and gender. In addition, our sample slightly overrepresents refugees with a positive asylum decision and underrepresents refugees with an asylum decision still pending. The only large, but expected, mismatch can be observed in terms of country of origin. Because of the language restrictions, our sample features more nationals from Arabic-speaking countries and major African sources and heavily underrepresents individuals from the rest of the world. Overall, the comparison shows that our sample, although nonprobabilistic in nature, shares many features with the general population of refugees in Germany.

Table 1. CAPI Respondents, Residential Population, and Overall Asylum Seekers in Germany in 2015 and 2016 by Gender, Age-group, and Country of Origin.

Note: CAPI = computer-assisted personal interview; NA = information not available.

ᵃ Residential population from 37 of the 41 residences from which we received information about the composition of the residential population at the time of our visit. No information on age and asylum status of the refugee population in the residences was available. Source: Personal communication with residence administration.

ᵇ Weighted estimates from the first wave of the IAB-BAMF-SOEP survey of refugees who arrived in Germany from 2013 to 2016 seeking asylum. Source: Kroh et al. (2018).

ᶜ Number of registered asylum seekers arriving in Germany based on data from the Federal Office for Migration and Refugees (BAMF). Because BAMF provides information only for the 10 countries with the highest number of asylum seekers, we cannot report exact numbers and shares of refugees from Gambia and other countries in Africa. Asylum status is not available for refugees arriving in 2015 and 2016, only aggregated numbers of decisions made in a given year. The BAMF report includes approximately 400,000 asylum seekers under the age of 18 years. For the comparison of age groups, asylum seekers under 18 years are excluded. Source: BAMF (2016, 2017).

Mobile Web Surveys

After we completed recruitment, we contacted all 468 participants with smartphones who gave us their contact information through WhatsApp or e-mail and invited them to participate in four web surveys over the course of three months. For each of the surveys, we sent the participants an individualized link to a website at which they could respond to the survey questions (see Online Supplemental Material [which can be found at http://smr.sagepub.com/supplemental/]). The questionnaires were programmed in Questback EFS in a design optimized for smartphones. While the online surveys could also be completed on laptop or desktop computers, 100 percent of respondents used a mobile device.

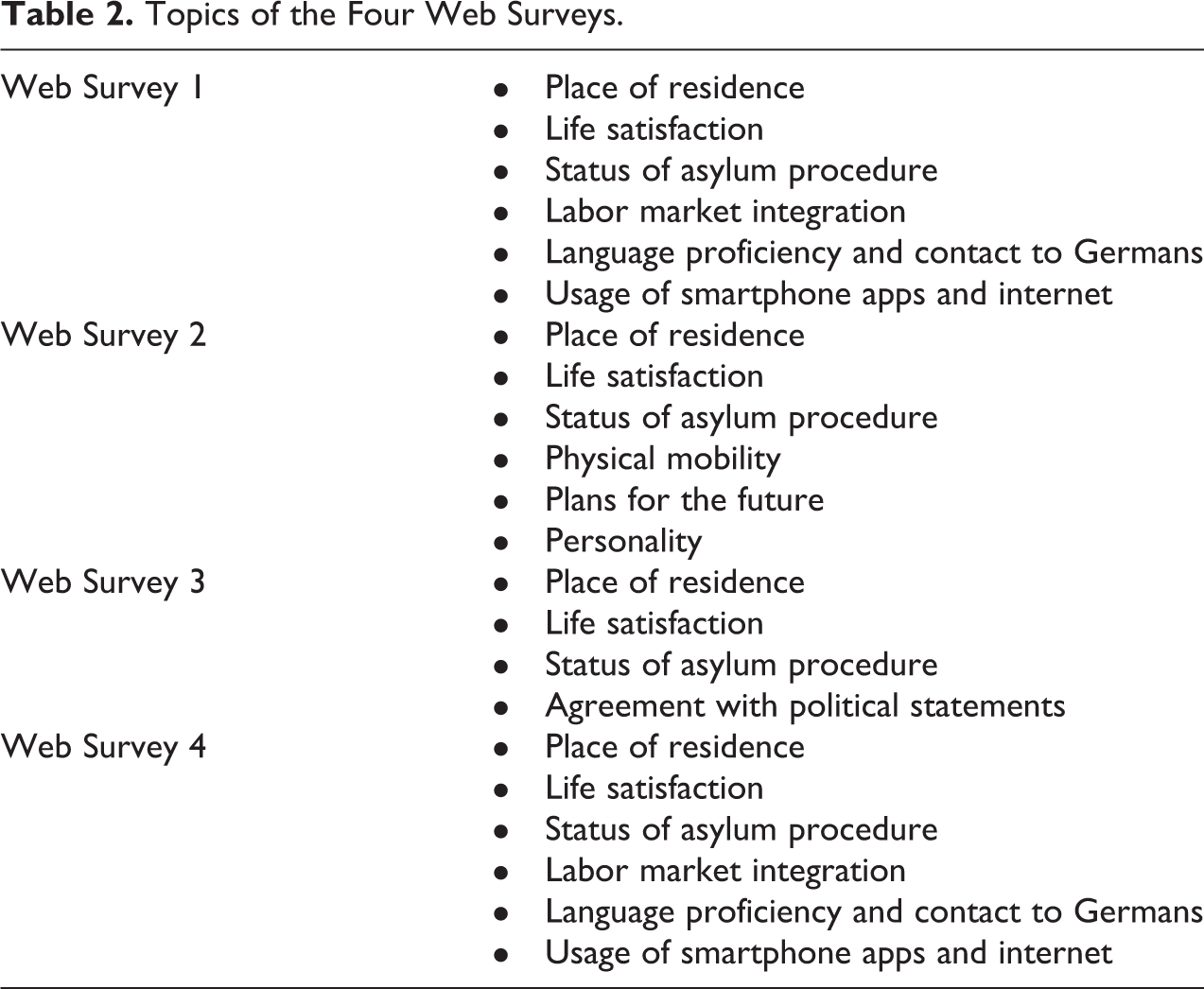

All surveys included core questions on place of residence, life satisfaction, and asylum status, while other topics appeared once or twice (questions from the first survey were repeated in the fourth survey; see Table 2 for details). For all four surveys, we sent reminder messages after three to seven days to participants who had not responded following the initial invitation. Completing the four surveys took a median time of 11, 10, 13, and 8 min, respectively.

Table 2. Topics of the Four Web Surveys.

Passive Mobile Data Collection

Shortly after the invitation to the first web survey, we invited the 417 participants who reported owning an Android⁶ smartphone and provided contact information to download the LIG app for passive mobile data collection during the next three months. The LIG app was developed by the company P3 insight and was available in English and Arabic. Once installed, the app automatically collected data on (1) the approximate location of the smartphone and (2) Internet activity and app usage on the smartphone. To collect information on the approximate location of the smartphone, a simple Internet connection test was performed every 15 min that also recorded the last known cell tower location of the smartphone. We restricted recording of most Internet activity to time stamps and server names of URLs visited on the native browser of the smartphone (e.g., if a user visited a subpage of the website of the German Bundesregierung, such as https://www.bundesregierung.de/Webs/Breg/DE/Themen/Fluechtlings-Asylpolitik/_node.html, only www.bundesregierung.de was recorded). For search engine websites, we recorded the entire URL to collect information on search terms used by the refugees. We recorded time stamps of app usage when a specific app was running in the foreground on the smartphone, but no information on the activity within the app was collected (e.g., our research app collected how often and for how long the Facebook app was used but not what a user posted, watched, tagged, or liked on Facebook). These data are intended to help us answer substantive research questions on refugee mobility, job search and labor market activities, language learning, and social integration.
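The URL recording rule described above can be illustrated with a minimal Python sketch. It is not the LIG app's implementation, which is not documented here; the function name `reduce_url` and the set of search engine hosts are assumptions made only for illustration.

```python
from urllib.parse import urlsplit

# Hypothetical list of search engine hosts; the source does not state
# which hosts the app treated as search engines.
SEARCH_HOSTS = {"www.google.com", "www.google.de", "www.bing.com"}


def reduce_url(url):
    """Return what would be stored for a visited URL under the stated rule."""
    host = urlsplit(url).hostname or ""
    if host in SEARCH_HOSTS:
        return url   # search engines: keep the full URL so search terms remain
    return host      # all other sites: keep only the server name


example = ("https://www.bundesregierung.de/Webs/Breg/DE/Themen/"
           "Fluechtlings-Asylpolitik/_node.html")
print(reduce_url(example))
print(reduce_url("https://www.google.de/search?q=deutschkurs"))
```

Truncating to the server name before storage, rather than filtering afterward, means that page-level browsing detail never enters the data set for non-search sites.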

We used a multistage information and consent procedure to ensure that participants understood what type of data the app collected. We first sent a short description of the app’s functionality followed by a second message with a link to the full terms and conditions on the project website to all invited participants via WhatsApp and e-mail. Three days later, we sent a third message with an invitation to download the app via a personalized link to the Google Play Store. Upon downloading the app from the Google Play Store, participants had to first agree to the terms and conditions of the app in general and were then additionally asked to opt in for both the recording of the approximate location and the collection of Internet and app data. Participants could withdraw the right to collect either of the two types of data in the settings of the app at any point during the three months of passive mobile data collection. Participants with Android smartphones who had not downloaded the app after 5 and 12 days following the initial invitation were reminded to do so via WhatsApp and e-mail (see Online Supplemental Material [which can be found at http://smr.sagepub.com/supplemental/], for invitation messages, written information, and app screenshots). After three months, the app automatically stopped collecting data, and a message was sent through the app to all participants that the passive mobile data collection had ended.

To test the influence of monetary incentives on participation in the app part of the study, we assigned the Android smartphone users to one of two groups. Approximately half of the invitees were informed in the initial invitation message and all subsequent reminders that they would receive a 30 Euro⁷ incentive (participants could choose from a money transfer, a Google Play Store voucher, or an Amazon voucher) for installing the app on their smartphone and allowing data collection for the full three months of data collection (treatment group). The other half did not receive this information (control group).⁸ At the end of the passive data collection phase, we administered the incentive to all participants who had installed the app and allowed passive data collection on their smartphone, regardless of assignment to the treatment or control group.
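A two-group assignment of this kind can be sketched as follows. The text does not describe the actual assignment mechanism, so the simple shuffle-and-split below, the seed, and the function name `assign_groups` are assumptions for illustration only.

```python
import random


def assign_groups(participant_ids, seed=2017):
    """Shuffle the IDs and split them into two near-equal groups."""
    rng = random.Random(seed)       # fixed seed makes the assignment reproducible
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"treatment": shuffled[:half], "control": shuffled[half:]}


# 417 invited Android owners, represented here by placeholder IDs 1..417.
groups = assign_groups(range(1, 418))
print(len(groups["treatment"]), len(groups["control"]))
```

With an odd number of invitees the two groups differ in size by one, which is consistent with "approximately half" in each condition.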

Ethics and Data Protection

Given their current circumstances and past experiences, refugees may feel that they have a limited ability to exercise self-determination, have unrealistic expectations of the benefits of the research, or have experienced severe trauma that may undermine their competency as respondents (Harrell-Bond and Voutira 2007; Mackenzie, McDowell, and Pittway 2007). Against this background, we took the utmost care to ensure that refugees’ safety, well-being, and the protection of their data were our highest priority throughout the entire study. Before launching data collection, the study procedures were reviewed and approved by the ethics commission of the University of Freiburg. When training the interviewers for the CAPI survey, we put a strong focus on how to minimize emotional stress that both refugees and interviewers might experience. The questions asked in the CAPI and the web surveys struck a balance between collecting important information on refugees and their life situation and avoiding overly sensitive topics, such as experiences with violence and war.

During our visits in the residences, we made clear that this was part of a scientific study, that we did not work for the German government, and that refugees’ answers would not affect their asylum application in any way. We also could not and did not give advice or assistance on questions relating to the asylum application. The study only collected data from participants with their informed and explicit consent. As part of the CAPI survey, we explained the procedures of the study verbally to all participants and handed them an information sheet that explained subsequent parts of the study in plain English and Arabic.

For the web surveys and the app study, only participants who consented to further contacts were approached. Participants had the unilateral right to end their participation at any time during any stage of the study and with immediate effect. The research app itself only collected information on a level of granularity that allowed us to observe individual behavior necessary to answer substantive research questions but without exposing individuals.

After the last mobile web survey, we deleted all participants’ contact information, thus anonymizing the data set. At the end of the field period of the passive mobile data collection, we linked data from the different sources using pseudonyms (i.e., randomly generated ID numbers). The full data set is exclusively saved on protected servers in Germany where all legally required and recommended data protection policies are constantly examined and data security measures updated. All data transmissions were conducted using advanced encryption standards.
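Linkage via randomly generated ID numbers can be sketched in a few lines of Python. The record layout, field names, and ID range below are hypothetical; the sketch only shows the general idea of replacing a contact identifier with a random pseudonym in every source and then discarding the lookup table.

```python
import secrets


def build_pseudonym_map(contact_ids):
    """Assign each contact identifier a distinct random ID number."""
    mapping, used = {}, set()
    for contact in contact_ids:
        pid = secrets.randbelow(10**8)
        while pid in used:          # redraw in the unlikely case of a collision
            pid = secrets.randbelow(10**8)
        used.add(pid)
        mapping[contact] = pid
    return mapping


def pseudonymize(records, mapping):
    """Replace the contact field with the pseudonym and drop the contact itself."""
    result = []
    for record in records:
        cleaned = {k: v for k, v in record.items() if k != "contact"}
        cleaned["pid"] = mapping[record["contact"]]
        result.append(cleaned)
    return result


# Hypothetical records from two sources, keyed by a made-up contact handle.
survey = [{"contact": "user-a", "life_satisfaction": 7}]
app = [{"contact": "user-a", "app_minutes": 42}]

mapping = build_pseudonym_map({"user-a"})
linked_survey = pseudonymize(survey, mapping)
linked_app = pseudonymize(app, mapping)
del mapping  # once linkage is done, the lookup table is discarded
```

Because the IDs are drawn at random rather than derived from the contact information, they cannot be reversed to a phone number or e-mail address once the lookup table is gone.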

Results

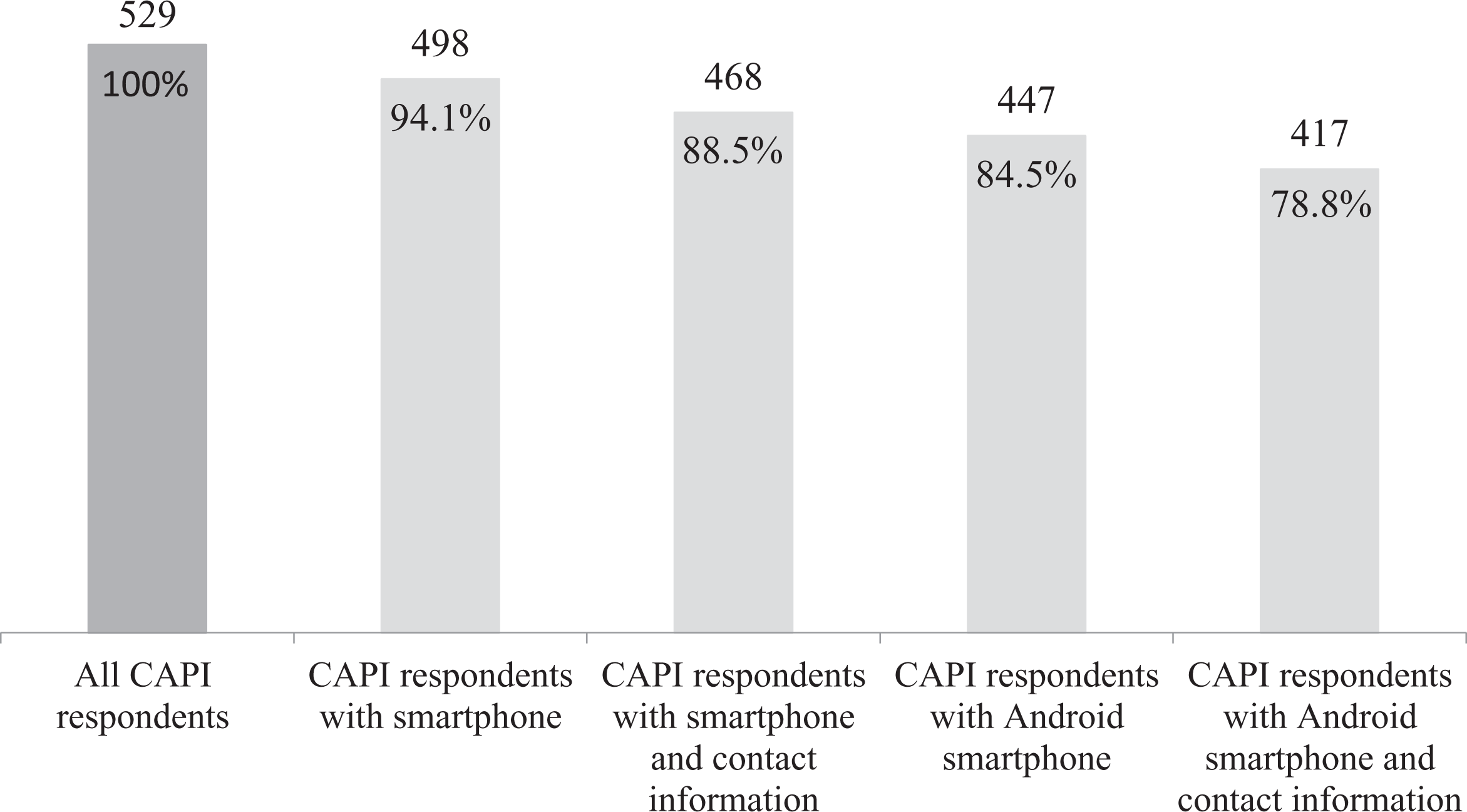

Figure 2 presents the number of participants who reported owning a smartphone during the CAPI interview. Of the 529 refugees we interviewed in person, about 94 percent reported owning a smartphone, most of them running on the Android OS (85 percent). We observed relatively high willingness to share contact information and be contacted for further research; about 89 percent of CAPI respondents had a smartphone and provided contact information, and 79 percent both had an Android smartphone and provided contact information.

Figure 2. Number of computer-assisted personal interview (CAPI) respondents with smartphones.

We assessed bias in mobile web surveys due to smartphone ownership by first regressing smartphone ownership among all 529 CAPI respondents on sociodemographic characteristics (age, gender, relationship status, and country of origin⁹). Next, we focused on more substantive measures of our study. We specified individual models predicting smartphone ownership as a function of educational measures (years of formal education, reading proficiency as assessed by the interviewers¹⁰) and current asylum status (decision pending vs. positive decision vs. negative decision), as reported in the CAPI survey, controlling for sociodemographics. Descriptive statistics for all variables used in the analysis are in Table A1 in the Online Appendix (which can be found at http://smr.sagepub.com/supplemental/). We can only assess coverage error based on participation in the CAPI survey since no other auxiliary information on individual refugees is available.

We then assessed nonresponse error in mobile web surveys among the 468 respondents who reported owning a smartphone and provided contact information. For these respondents, we modeled the likelihood of participating in at least one of the four mobile web surveys as a function of the previously named variables plus an indicator of whether the refugee had reported using the Internet on his or her smartphone.

Next, we examined the same possible biases for the passive mobile data collection among refugees. In this case, we assessed the potential bias by regressing owning an Android smartphone on the independent variables described above among the 529 CAPI respondents. Finally, for nonparticipation error in passive mobile data collection, we restricted the analysis to the 417 Android smartphone owners who provided contact information. For this group, we specified a model predicting installation of the app on a refugee’s smartphone using the same set of independent variables plus an indicator for whether the refugee had reported using apps on his or her smartphone.

All analyses were conducted using R version 3.5.1 (R Core Team 2018) by specifying probit models using the “glm” function. We specified four sets of models, one set each with smartphone ownership, participation in at least one of the four mobile web surveys, Android smartphone ownership, and app installation as the dependent variable.

Together with the independent variables described above, we included dummy variables for the three districts where interviews were conducted to control for heterogeneity across districts. In addition, we clustered the standard errors at the refugee residence level in our models using the “summ” function from the “jtools” package (Long 2018). To account for time differences between the initial CAPI survey and the invitation to participate in the first mobile web survey (depending on the residence, the difference was between 13 and 65 days) and the invitation to download the research app, we specified models predicting participation including a time lag variable. Since the time lag variable was not significant (p > .05) in either of the models, we report findings of the parsimonious models here (models including time lag available upon request). We used the “probitmfx” function in the “mfx” package developed by Fernihough (2015) to estimate the average marginal effects (AMEs) for predictors in our models.
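To make the AME concept concrete, the following minimal sketch computes average marginal effects from a fitted probit model by hand. It is written in Python rather than the R functions used in the study, and the data rows, coefficient values, and function names are hypothetical illustrations, not the estimates reported here. For a continuous predictor, the AME is the mean over observations of the derivative of the predicted probability; for a dummy variable, it is taken here as the mean change in predicted probability when the dummy switches from 0 to 1.

```python
import math


def normal_pdf(z):
    """Standard normal density."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def normal_cdf(z):
    """Standard normal distribution function (the probit link's inverse)."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def linear_index(row, beta):
    """Linear predictor x'b for one observation."""
    return sum(x * b for x, b in zip(row, beta))


def ame_continuous(rows, beta, k):
    """Mean over observations of phi(x'b) * b_k."""
    return sum(normal_pdf(linear_index(r, beta)) * beta[k] for r in rows) / len(rows)


def ame_dummy(rows, beta, k):
    """Mean over observations of Phi(x'b | x_k = 1) - Phi(x'b | x_k = 0)."""
    total = 0.0
    for r in rows:
        on = list(r)
        on[k] = 1.0
        off = list(r)
        off[k] = 0.0
        total += normal_cdf(linear_index(on, beta)) - normal_cdf(linear_index(off, beta))
    return total / len(rows)


# Hypothetical data: intercept, age in decades, low-reading-proficiency dummy.
rows = [[1.0, 2.0, 0.0], [1.0, 3.5, 1.0], [1.0, 4.0, 0.0], [1.0, 2.5, 1.0]]
beta = [1.8, -0.15, -0.4]  # made-up coefficients, not the paper's estimates

print(round(ame_continuous(rows, beta, 1), 4))  # AME of age (per decade)
print(round(ame_dummy(rows, beta, 2), 4))       # AME of the reading dummy
```

Multiplying either result by 100 gives the change in predicted probability in percentage points, which is the scale used in the figures.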

Error Due to Differential Smartphone Ownership in Mobile Web Surveys among Refugees

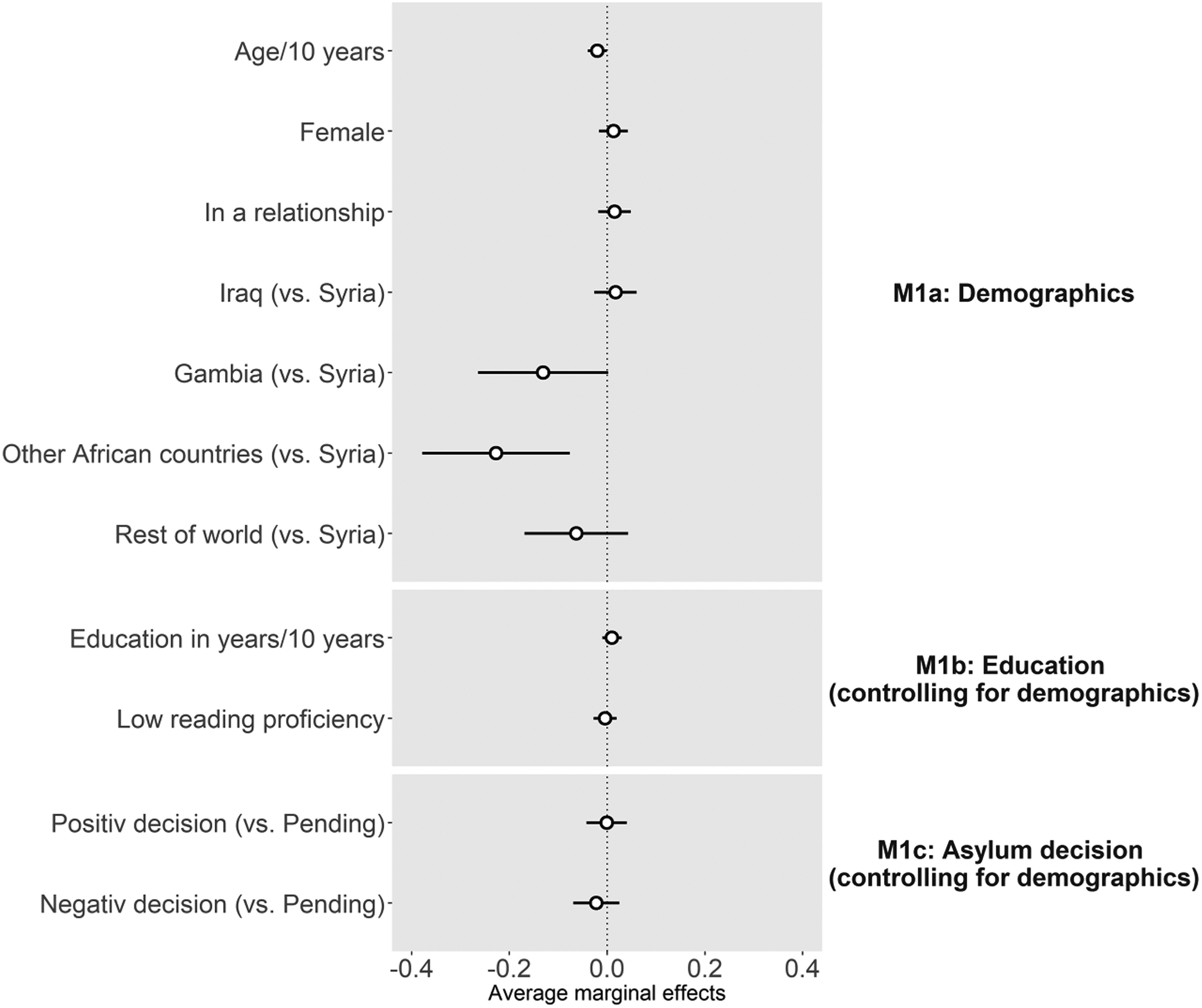

Of the 529 CAPI respondents, 498, or 94.1 percent, reported owning a smartphone. Figure 3 presents the AMEs from three probit models predicting smartphone ownership (see Table A2 in the Online Appendix for the exact numbers). Model M1a predicts smartphone ownership based on CAPI respondents’ age, gender, relationship status, and country of origin. Smartphone ownership is significantly associated with the age of respondents; with every 10 years of age, the average predicted probabilities of owning a smartphone decrease by two percentage points. In addition, the average predicted probabilities of owning a smartphone are almost 23 percentage points lower for refugees from African countries other than Gambia compared to refugees from Syria, while the predicted probabilities of smartphone ownership do not differ for refugees from Iraq, Gambia, and the rest of the world. Gender, education, and relationship status are not significantly correlated with smartphone ownership. Model M1b specifies the association between educational measures and smartphone ownership controlling for sociodemographics, and M1c specifies the association between asylum status and smartphone ownership controlling for sociodemographics. Neither of these measures has a significant association with smartphone ownership controlling for sociodemographics.

Figure 3. Average marginal effects (points) and 95 percent confidence intervals (lines) from probit models predicting smartphone ownership. Estimates for district dummies are not shown. Standard errors are clustered at the residence level.

To account for the unbalanced data set (less than 6 percent of refugees reported not owning a smartphone), we used an oversampling approach to test the robustness of our results. We used bootstrapping and k-nearest neighbor to synthetically create additional observations of the rare event (i.e., not owning a smartphone), applying the SMOTE (Synthetic Minority Oversampling Technique) function in the “DMwR” package in R (Torgo 2010). After oversampling, we reran our models and obtained very similar results, confirming the findings of our initial models (detailed results available upon request). The only notable change is that the negative effects of country of origin for refugees from Gambia and from the rest of the world become significant in Model M1a.
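The core idea of SMOTE can be sketched in a few lines: each synthetic case is placed at a random point on the line segment between an observed minority case and one of its k nearest minority neighbors. The sketch below is a simplified Python illustration of that interpolation step only. It is not the DMwR function used in the study, it handles numeric predictors only, it leaves the majority class untouched, and the example data are hypothetical.

```python
import random


def smote(minority, n_synthetic, k=5, seed=0):
    """Create n_synthetic points by interpolating between minority cases
    and randomly chosen members of their k nearest minority neighbors."""
    if len(minority) < 2:
        raise ValueError("need at least two minority cases to interpolate")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)
    synthetic = []
    for _ in range(n_synthetic):
        base = rng.choice(minority)
        # Rank the other minority cases by squared Euclidean distance to base.
        others = [p for p in minority if p is not base]
        others.sort(key=lambda p: sum((a - b) ** 2 for a, b in zip(p, base)))
        neighbor = rng.choice(others[:k])
        gap = rng.random()  # position along the segment base -> neighbor
        synthetic.append([a + gap * (b - a) for a, b in zip(base, neighbor)])
    return synthetic


# Hypothetical minority cases (non-owners): age in decades, years of education.
minority = [[2.1, 9.0], [3.4, 6.0], [4.0, 8.0], [5.2, 4.0]]
synthetic = smote(minority, n_synthetic=6, k=2, seed=1)
for point in synthetic:
    print([round(v, 2) for v in point])
```

Because every synthetic case is a convex combination of two observed cases, oversampling of this kind adds no new information about the rare group; it only rebalances the outcome before the models are rerun.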

Nonresponse Error in Mobile Web Surveys among Refugees

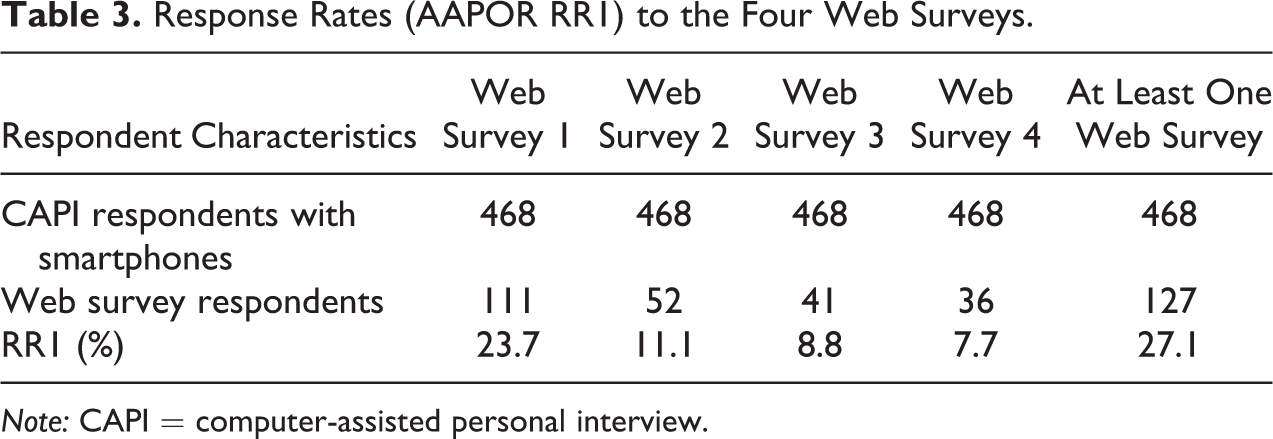

We sent invitation messages to participate in four mobile web surveys to the 468 CAPI respondents who reported owning a smartphone and provided contact information in the recruitment interview. Table 3 shows that the response rates (RR1; American Association for Public Opinion Research [AAPOR] 2016) to the mobile web surveys declined from 23.7 percent in the first survey to 7.7 percent in the fourth survey. Across all four surveys, 27.1 percent of respondents participated in at least one of the four surveys.

Table 3. Response Rates (AAPOR RR1) to the Four Web Surveys.

Note: CAPI = computer-assisted personal interview.

Figure 4 shows the AMEs from four probit models predicting participation in at least one of the four mobile web surveys (see also Table A3 in the Online Appendix). Model M2a shows that out of the sociodemographic variables, only country of origin is significantly associated with web survey participation. The average predicted probabilities of participating in at least one survey for refugees from Gambia are 16 percentage points lower than those for refugees from Syria. Predicted probabilities for mobile web survey participation do not differ between Syrians and refugees from other countries. Model M2b further shows that education is significantly correlated with participation in the web surveys controlling for sociodemographics. With each additional year of education, the average predicted probabilities increase by two percentage points. Low reading proficiency reduces the average predicted probabilities by 13 percentage points. Model M2c shows that a positive asylum decision increases the predicted probabilities of mobile web survey participation by 14 percentage points over no decision, controlling for sociodemographics. At the same time, the negative effect for refugees from Gambia is no longer significant in this model, suggesting that country of origin is a proxy for systematic differences in asylum decisions. In model M2d, we assess the influence of using the Internet on a smartphone as reported by the refugee in the CAPI survey on mobile web survey participation controlling for sociodemographics. CAPI respondents who had reported using the Internet on their smartphone have average predicted probabilities that are 23 percentage points higher than those who did not report using the Internet on their smartphone.

Figure 4. Average marginal effects (points) and 95 percent confidence intervals (lines) from probit models predicting participation in at least one of the four mobile web surveys. Estimates for district dummies are not shown. Standard errors are clustered at the residence level.

Error Due to Differential Android Smartphone Ownership in Passive Mobile Data Collection among Refugees

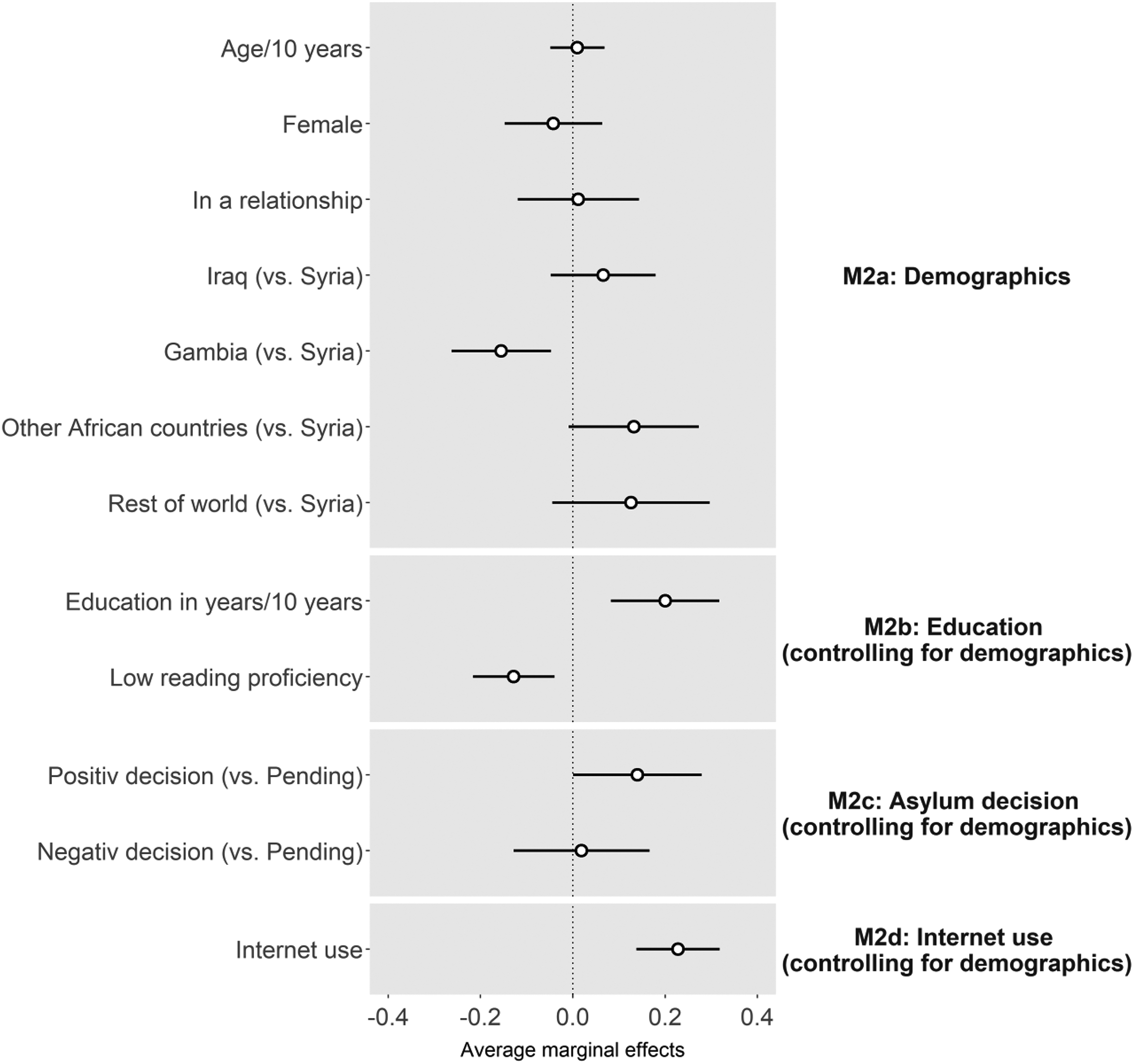

Of the 529 CAPI respondents, 447 reported owning an Android smartphone. Figure 5 presents the AMEs from three probit models predicting Android smartphone ownership using the same analytical strategy as for the models predicting smartphone ownership (see also Table A4 in the Online Appendix). Model M3a shows that refugees from African countries other than Gambia have, on average, a 15 percentage point lower probability of owning an Android smartphone than refugees from Syria; Android smartphone ownership among refugees from other countries does not differ significantly from that among refugees from Syria. Models M3b and M3c show that neither of the substantive variables has a significant association with owning an Android smartphone, controlling for sociodemographics.

Figure 5. Average marginal effects (points) and 95 percent confidence intervals (lines) from probit models predicting Android smartphone ownership. Estimates for district dummies are not shown. Standard errors are clustered at the residence level.

Nonparticipation Error in Passive Mobile Data Collection among Refugees

Of the 417 CAPI respondents who reported owning an Android smartphone and gave us their contact information, 27 installed the LIG app on their smartphone. However, data were passively collected through the app for only 21 of them, yielding a participation rate of 5.0 percent. For the remaining six, either no data were ever collected (indicating that the person downloaded the app but never consented to data collection in the app) or data were collected only very briefly (i.e., only two or three data points were recorded after installation, indicating that the app was uninstalled immediately). Installing the app is strongly correlated with participating in the web surveys; 20 of the 21 participants also completed at least one survey (95.2 percent), and 5 responded to all four mobile web surveys (23.8 percent).

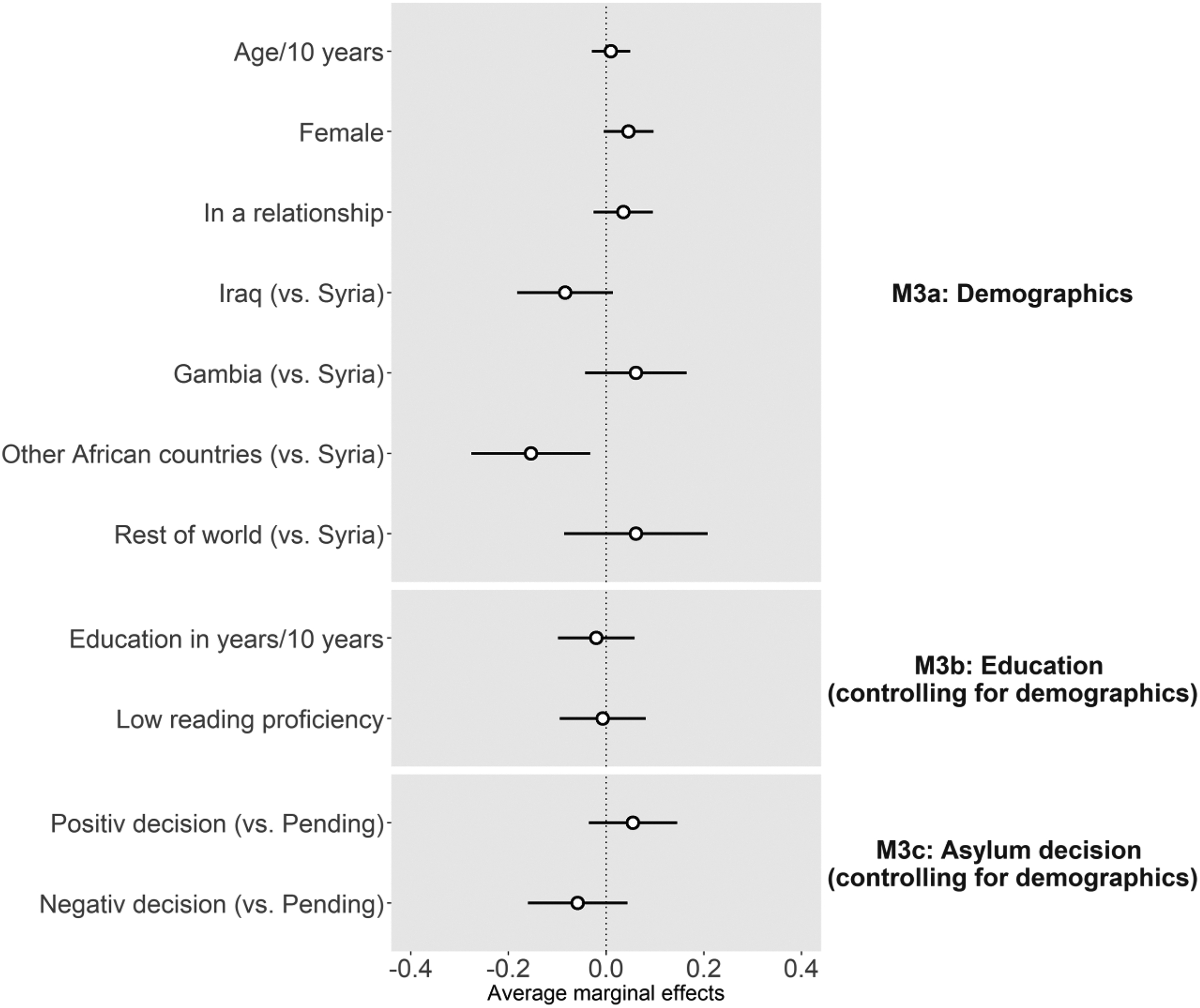

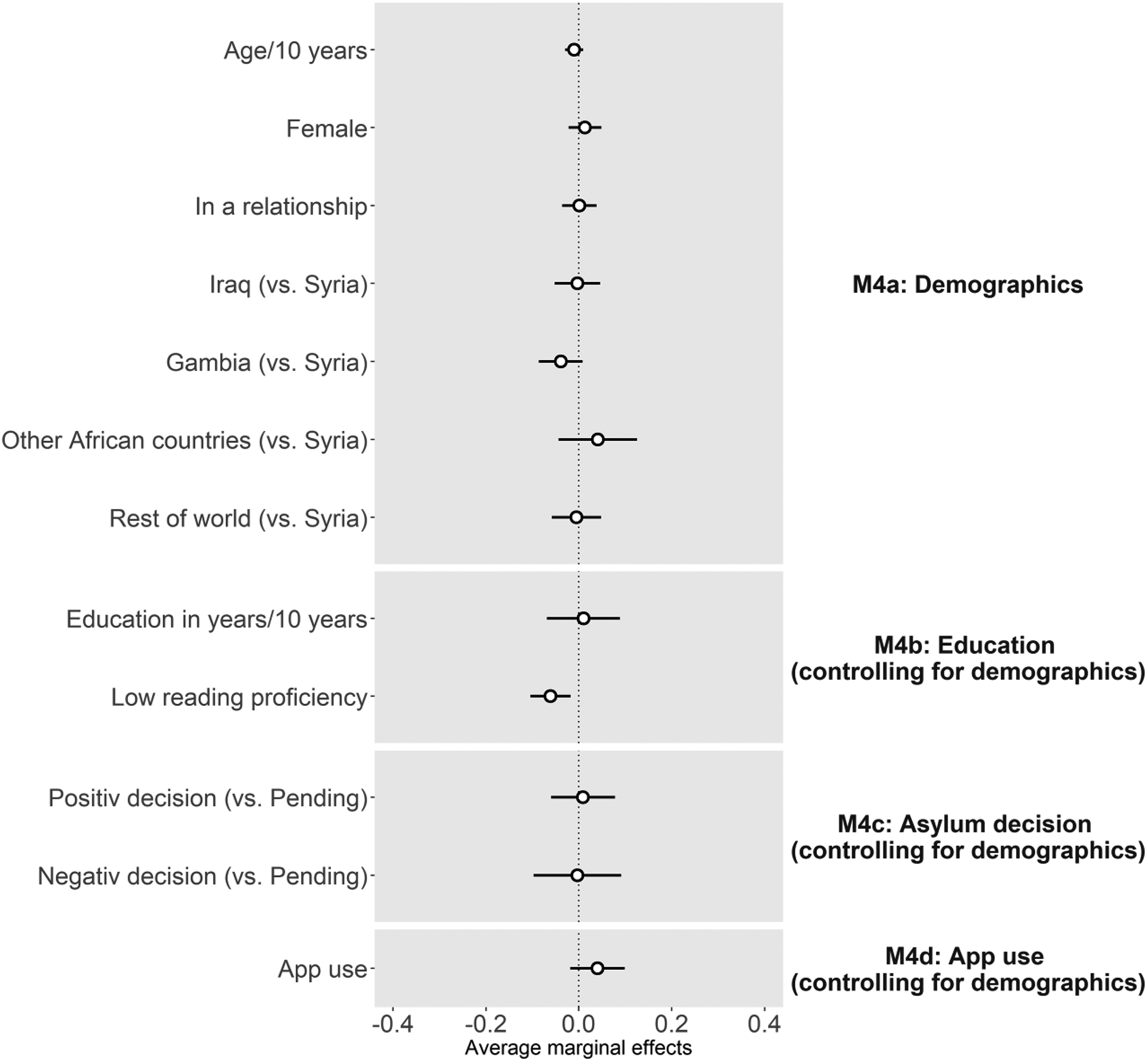

Figure 6 shows the AMEs from the four probit models predicting participation in the passive mobile data collection (see also Table A5 in the Online Appendix). In models M4a through M4c, only reading proficiency is significantly associated with participation in the passive mobile data collection controlling for sociodemographics. If an interviewer reported after the recruitment interview that a CAPI respondent had low reading proficiency, the average predicted probabilities of participation in the passive mobile data collection fall by six percentage points. Model M4d includes a dummy variable for using apps on a smartphone as reported by the refugee in the CAPI survey. The results show that using apps is not significantly correlated with participation in the passive mobile data collection controlling for sociodemographics.

Figure 6. Average marginal effects (points) and 95 percent confidence intervals (lines) from probit models predicting participation in the passive mobile data collection. Estimates for district dummies are not shown. Standard errors are clustered at the residence level.

To account for the unbalanced data set (only 5 percent of invitees downloaded the app to their smartphone), we again used the SMOTE oversampling procedure to test the robustness of our results. The results of the models after oversampling confirm the findings of our original analysis. The only exceptions are that the positive coefficient for refugees from other African countries in Model M4a and the positive effect of app use in Model M4d become significant (results available upon request).

Monetary Incentive

We assessed the effect of monetary incentives on the willingness to install the research app by including a dummy variable for the incentive experiment in model M4e (see Table A5 in the Online Appendix). Although the coefficient of the incentive dummy is not statistically significant (p > .1), it is noteworthy that the treatment group, which was informed about the 30 Euro incentive, had a participation rate of 7.0 percent, while only 3.2 percent of the control group participated. These numbers imply a treatment effect of 3.8 percentage points, or an increase of 119 percent in participation.
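The two effect sizes quoted above follow directly from the group participation rates. As a quick check, the short Python sketch below recomputes them; the function name is ours, and only the two reported rates are used as inputs.

```python
def incentive_effect(treatment_rate, control_rate):
    """Return the absolute difference (percentage points) and the
    relative increase (percent) of the treatment over the control rate."""
    absolute = treatment_rate - control_rate
    relative = absolute / control_rate * 100
    return absolute, relative


abs_pp, rel_pct = incentive_effect(7.0, 3.2)  # reported rates, in percent
print(f"{abs_pp:.1f} percentage points, {rel_pct:.0f} percent relative increase")
# -> 3.8 percentage points, 119 percent relative increase
```

The large relative increase reflects the very low baseline: with a control rate of 3.2 percent, even a gain of fewer than four percentage points more than doubles participation.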

Discussion

The recent influx of more than one million refugees to Europe, and Germany in particular, and the resulting need for data about these newcomers to facilitate the integration process have led researchers to search for new and improved ways of collecting data. As part of the LIG study, we conducted a feasibility study among refugees in Germany to assess the potential for using smartphone technology for collecting survey data and passive mobile data in immigrant research. We find that 94 percent of the refugees we interviewed reported owning a smartphone, a mobile device penetration rate that is higher than in the general population in Germany. This finding both underscores previous empirical evidence that smartphones play a crucial role for refugees and gives hope to researchers for the use of smartphones to access and, in particular, keep in touch with refugees for longitudinal data collection. New technical developments such as research apps, which allow in situ collection of self-reports together with passive mobile data collection of behavioral information on a smartphone (e.g., location and movements, app use, and Internet use), offer additional opportunities to researchers to collect data that are richer and more detailed than what can be collected by asking survey questions.

Our assessment of error due to smartphone ownership is based on responses to a CAPI survey from refugees living in residences in three districts in Southwestern Germany. Although we cannot rule out that smartphone ownership is correlated with the propensity to participate in the CAPI survey, we could replicate findings from earlier research on smartphone coverage. In particular, smartphone ownership correlates strongly with age, such that younger refugees are more likely to own a smartphone. In addition, we find that refugees from Syria, one of the two countries with the highest inflow of recent refugees to Germany, are more likely to own a smartphone than refugees from African countries. Smartphone ownership in our study, however, is correlated neither with other sociodemographic characteristics such as gender and relationship status nor with measures of education and asylum status when controlling for sociodemographics. For these more substantive measures of refugee research, no bias exists when employing smartphones for mobile web surveys. Income and wealth (at origin), two constructs we did not measure in our CAPI survey, might explain differences in smartphone ownership by country of origin.

Interestingly, further limiting the target audience to refugees who own an Android smartphone seems to reduce error. The difference in bias might arise from the exclusion of iPhones and Windows Phones that are more expensive than most Android phones and might therefore be owned by a very particular subgroup of refugees only.

When it comes to nonresponse in mobile web surveys among refugees, we find that initially about one quarter of the refugees who provided contact information in our personal interviews participated in the first web survey via smartphone one month later. However, in subsequent waves of the mobile web survey, we see substantial attrition; in wave 4, only one in 14 refugees from our original sample participated. We can only speculate about the reasons that might have contributed to this drop in response rates over time. Since we sent invitations to the mobile web surveys via e-mail and WhatsApp, changes in phone numbers are less likely to have caused an increase in noncontacts. One explanation is that the lack of an incentive for completing the mobile web surveys, in combination with a questionnaire that was relatively long for smartphones, led to reduced motivation to participate over time. Modularizing the mobile web surveys into smaller chunks could help to make the task of completing one questionnaire at a time less burdensome and lead to higher response overall (Toepoel and Lugtig 2018). Whether refugees would perceive an increase in messages from a researcher positively or negatively needs to be empirically tested.

Nevertheless, we find, comparable to other populations, that the likelihood of responding is correlated with education and Internet use; refugees with more years of formal education and those who regularly use the Internet on their smartphone are more likely to participate in mobile web surveys. Compared to the German population, refugees might have a higher illiteracy rate, and we found that being flagged by an interviewer as having reading problems in our CAPI survey was a strong predictor of nonresponse in the mobile web surveys. Whether these findings hold for other nonrefugee populations with similarly high illiteracy rates needs to be studied further. Although we find some significant differences in participation in the mobile web surveys by country of origin, we want to be cautious not to overinterpret these results because of the relatively low sample sizes in some of the subgroups.

While passive mobile data collection seems to be a promising technology for collecting detailed behavioral data via smartphones, the main issue is low participation. Approximately 5 percent of the refugees who were eligible to download the study app did so and participated in the passive mobile data collection, even after receiving several reminders. In practical terms, this means that if one wants to passively collect mobile data from 500 refugees in a study, almost 10,000 refugees with Android smartphones would need to be invited. Given the extremely low participation rate, the use of passive mobile data collection might be limited in the study of refugees, at least under comparable conditions to our study. In large-scale refugee surveys, the technology could be used to study behaviors of subpopulations based on volunteer samples.
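The required number of invitations follows from dividing the target sample size by the observed participation rate. The Python sketch below reproduces that back-of-the-envelope calculation from the counts reported in this study (21 participants among 417 invited Android owners). It assumes that the participation rate would stay the same in a larger study, which is an assumption made for illustration rather than a finding.

```python
import math


def invitations_needed(target_participants, participants, invited):
    """Invitations required to reach the target, assuming the observed
    participation rate (participants / invited) carries over unchanged."""
    return math.ceil(target_participants * invited / participants)


rate = 21 / 417
print(f"{rate:.1%}")                      # -> 5.0%
print(invitations_needed(500, 21, 417))   # -> 9929
```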

The participation rate in our study is lower than that of earlier studies collecting similar types of information but from different populations (e.g., Scherpenzeel 2017; Sugie 2018). Although we limited the amount of data and the granularity of information that can technically be collected via passive measurement on smartphones, this type of data collection might be perceived as too invasive for refugees.

We also found that offering a 30 Euro incentive to participate increased the download rate by almost four percentage points, a statistically insignificant effect on the propensity to participate. Further increasing the monetary incentive for participation in passive mobile data collection might be worthwhile, as the costs for incentives are most likely small compared to the overall costs of such a study and the benefits of a larger sample size. However, refugees are an extremely vulnerable population, and incentives must not be so high as to become coercive. In general, we need to learn more about what keeps refugees from participating in a study like ours.

Whether other types of incentives would be more successful in increasing the participation goes beyond the scope of this article. We thus encourage researchers to test the effect of nonmonetary incentives such as data plans or incentives that are provided as part of the research app. To make a research app more attractive to participants, other features such as access to a language learning program, relevant local information, or feedback on smartphone use could be added. Whether adding such features leads to reactivity, such as participants changing behavior that is measured through the app, and would thus bias the results of such a study needs to be investigated.

Similar to what we found for nonresponse to the mobile web surveys, we saw that literacy problems are strongly correlated with nonparticipation in the passive mobile data collection. This is not surprising, given that we mainly provided refugees with written information encouraging them to download the app and explaining what type of data would be collected. Future studies should assess whether making the app installation process part of a personal interview where the interviewer can assist the refugees in overcoming any potential technical difficulties and answer questions about data protection and privacy in detail increases the participation rate. Implementing such a design will of course increase study costs.

Despite the many new insights for research on refugees using smartphone technology that our study provides, there are some limitations that reduce the generalizability of our findings. We acknowledge that we did not draw a probability sample that would allow us to make statistical inference to a larger population of refugees in Germany. Due to budgetary limitations, we were only able to interview refugees who were fluent in Arabic or English in three districts in Southwestern Germany. In addition, to keep the recruitment interview short, we only asked a relatively small number of questions in the CAPI survey. Thus, the set of variables that allows us to estimate coverage and nonresponse/nonparticipation bias on measures of interest to researchers conducting studies on refugees is limited. Furthermore, we invited only those refugees to the four mobile web surveys and the app who participated in our CAPI survey in the first place; that is, we approached only people who already had provided some information to us, which might have increased the willingness to participate. Due to a lack of auxiliary data, for example, we cannot assess whether the propensity to participate in the CAPI survey is correlated with ownership of a smartphone. Overall, our study collected data on a rather small sample (n = 529), thus limiting the power of our analysis and preventing us from analyzing the differential effect of smartphones across a wider range of countries or including interaction terms into our analytical models. We encourage further research on a larger scale that would allow researchers to test the moderating effects of some of our measures.

Supplemental Material

Appendix - Using Smartphone Technology for Research on Refugees: Evidence from Germany

Appendix for Using Smartphone Technology for Research on Refugees: Evidence from Germany by Florian Keusch, Mariel M. Leonard, Christoph Sajons and Susan Steiner in Sociological Methods & Research

Footnotes

Authors’ Note

The study procedures were reviewed and approved by the ethics commission of the University of Freiburg (application number 13/17).

Acknowledgments

The authors are thankful for feedback and comments from seminar participants at the Walter Eucken Institute, the Center for European Economic Research, the Institute for the Study of Labor, the University of Mannheim, the University of Heidelberg, and the University of Hannover and are very grateful to Omar Flayyih for excellent research assistance and Ralf Philipp for help with the IAB-BAMF-SOEP refugee study data. They thank the district administrations and the directors and staff at the refugee residences for their support of their study, as well as their interviewers for their dedicated work. Most importantly, we appreciate the time and effort the participants of this study provided.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the German Research Foundation (DFG) under research grant SA2845/1-1, the Mannheim Centre for European Social Research (MZES), the Walter Eucken Institute, and Leibniz University Hannover.

Supplemental Material

Supplemental material for this article is available online.
