Abstract

This study proposes a perceptually guided workflow for transforming digital images into knit-compatible outputs constrained by predefined yarn palettes. The workflow incorporates multiple error diffusion-based color quantization methods and evaluates each result using seven perceptual and statistical quality metrics (Color Difference formula 2000, Structural Similarity Index Measure, Mean Squared Error, Peak Signal-to-Noise Ratio, Visual Information Fidelity, Feature Similarity Index Measure, Gradient Magnitude Similarity Deviation). These metrics are normalized, perceptually weighted, and aggregated into a composite score to support method comparison. Designers can predefine target yarn colors and compare alternative reductions both visually and quantitatively. The selected image is automatically converted into a bitmap format, with each color linked to predefined knitting codes. Experimental results show that the workflow preserves visual fidelity while respecting material constraints such as yarn availability and machine resolution. By combining color reduction, quality assessment, and code generation in a unified process, the system enables efficient and reproducible translation of digital visuals into textile-ready formats.

Keywords

Knitting has developed as a craft based on handwork throughout history 1 and has become one of the indispensable elements of the modern fashion industry today. 2 In its early days, knitting was limited to hand-made products, but today it has expanded into a wide range of applications such as clothing, accessories, and decorative textiles. 3 With the impact of digitalization, knitting design has gained a new dimension by combining traditional techniques with modern technologies, making the design processes more innovative, flexible, and efficient. Computer-aided design (CAD) software and advanced knitting machines have automated the design process, increasing production speed and allowing for the more precise creation of complex patterns. 4 These technological developments have enabled the production of fully personalized and unique knitted products in the fashion, advertising, and entertainment sectors, leading to a rapid increase in demand for this field. 2

However, despite all the advantages offered by digital knitting design, the process faces various constraints in terms of technical challenges and production suitability. Knitting machines play an important role in the production of customized garments; however, programming these machines is quite complex and often requires expertise. 5 Designers have to use low-level programming languages when designing knitting patterns and transferring them to knitting machines, and since they do not fully master the operating principles of the machines, they encounter various difficulties in this process. 6 This situation necessitates the simultaneous use of advanced CAD software knowledge and knitting techniques in the knitting design process.7,8

The number of yarns that a knitting machine can use in pattern production is limited by its number of yarn carriers, which restricts the designer’s use of color during pattern creation. As a result, converting a digital image into a knitting pattern becomes a multistep technical process: the colors must be adapted to the limited number of yarn carriers on the machine, and the result must be transferred seamlessly to production. Various methods address this process. Techniques developed specifically for Jacquard knitting machines make visuals suitable for knitting by reducing their colors to a limited number through methods such as posterization. For example, in their patent titled ‘Method for manufacturing a knitted fabric reproducing an adversarial image’ (WO2022168014A1), Conti and Didero 9 state that the images should first be resized, then their colors posterized, and finally assigned to yarn carriers determined through knitting design software.

Moreover, although the methods described in the literature interpret each pixel directly as a knit stitch, the user cannot predetermine the reduced colors, which makes color accuracy difficult to ensure and leads to loss of detail. In these methods, the colors must be matched with the closest tones in the yarn catalog, and when a one-to-one match is not possible, a melange effect is created by combining multiple yarns. Designers are also not offered the flexibility to choose between different color reduction algorithms or to examine design accuracy using automatic quality assessment metrics such as the color difference formula 2000 (CIEDE2000) or the Structural Similarity Index (SSIM). As a result, the conversion of digital images into knitting is restricted both creatively and in terms of production, and the seamless transfer to production becomes more difficult.

This study proposes a system composed of integrated algorithms to improve the image-to-knit process. It allows designers to select yarn colors, choose among different color quantization methods such as error diffusion, and evaluate the visual output using standardized quality metrics like CIEDE2000, SSIM, and mean squared error (MSE). The system aims to balance visual accuracy, designer control, and production constraints through a user-friendly workflow.

Introduction

Problem definition

The process of converting digital images into machine-ready knitting outputs is not only algorithmically complex but also fragmented across incompatible tools and design environments. Designers are required to define yarn-constrained color palettes, apply appropriate quantization methods, and ensure that the resulting visuals remain compatible with both the aesthetic intent and the physical limitations of knitting machinery – such as a limited number of yarn carriers.

General-purpose image editing software can perform basic operations such as color reduction or posterization, but these tools are not designed to account for textile-specific limitations. They typically lack support for yarn-based palette restrictions, offer no mechanism for comparing quantization algorithms, and provide no objective feedback on the perceptual quality of the output. Similarly, textile CAD systems – although powerful for stitch-level design, simulation, and machine code generation – are not equipped to automatically process full-color images under yarn carrier constraints or to evaluate the fidelity of the resulting color-mapped visuals.

This lack of integration forces designers to manually bridge creative and technical domains using disjointed tools and intuition-based decisions. To address these shortcomings, this study proposes a unified system that combines yarn-constrained color quantization, algorithm selection, and perceptual quality evaluation into a single workflow. This system enables designers to explore multiple quantization methods, assess the resulting images using standardized visual metrics, and export machine-compatible outputs with greater confidence and efficiency.

Motivation

Digital tools like CAD software and automated knitting machines have expanded the creative possibilities in textile design, making it easier to produce complex and customized knitted patterns. As designers increasingly use digital images in their workflows, the gap between visual intent and machine constraints – such as limited yarn colors and carrier capacities – has become more apparent. This growing disconnect highlights the need for approaches that can better align digital design practices with the realities of textile manufacturing.

Main contributions

The key contributions of this study to digital textile and knitting design are as follows:

A modular system is proposed for transforming digital images into knitting-ready outputs, enabling designers to reduce visual content into limited yarn palettes defined by thread availability or design constraints.

The system integrates five color quantization methods and evaluates their outputs using seven perceptually motivated quality metrics (CIEDE2000, SSIM, MSE, Peak Signal-to-Noise Ratio (PSNR), Visual Information Fidelity (VIF), Feature Similarity Index (FSIM), gradient magnitude similarity deviation (GMSD)). Each metric is normalized and weighted to generate a composite score, allowing for structured and interpretable method selection.

The workflow supports preproduction evaluation and automates the conversion of selected visuals into bitmap (BMP) files mapped to knitting machine code. This ensures CAD compatibility and provides a bridge between digital image processing and physical textile manufacturing.

Paper organization

This article is organized into five main sections to provide a clear and logical progression from problem context to implementation and evaluation. The first section introduces the background, significance, and objectives of the study. The second section reviews the current methods and challenges in digital knitting design and production. The third section presents the proposed method in detail, including flat knitting machine constraints, visual preprocessing, color palette definition, color reduction algorithms, CAD integration, and automatic quality assessment metrics. The fourth section reports experimental results along with their interpretation and practical implications. Finally, the fifth section concludes the paper by summarizing key findings and outlining directions for future research.

Related work

The transformation of digital visuals – composed of millions of distinct RGB values – into compatible knitting codes for flat knitting machines with a limited number of yarn carriers represents a complex, multidisciplinary computational challenge. This process intersects multiple domains, including image processing, computational design, error propagation, and machine automation. At its core lies a fundamental contradiction: the rich and continuous color spectrum of digital images versus the discrete and hardware-limited nature of knitting technology. Since each yarn carrier can handle only a single color, it is not feasible to directly translate high-resolution, multicolored images into knitting patterns. Therefore, an intermediate step is required: one that reduces the number of colors while preserving the essential details and visual integrity of the image. Research into advanced image processing techniques, dithering algorithms, CAD tools, and computational knitting methods plays a critical role in enabling this transformation.

Digital knitting design and production have made significant progress in recent years, being supported by innovative computational methods. In this field, various methods stand out, such as converting three-dimensional (3D) models into machine-compatible knitting instructions, developing interactive visual programming interfaces, using advanced simulation techniques, and even integrating handcraft-based pixelation approaches.10,7,11,12 However, there are still various difficulties in the use of these innovative methods, such as machine limitations, the need for expertise, and integration issues.

Studies focusing on the conversion of 3D designs into knitting instructions aim to directly transfer the digital design process to machine production. The method developed by Narayanan et al. 10 automatically converts 3D surfaces into instructions that can be run on industrial knitting machines, thereby enabling the production of complex 3D surfaces. Similarly, Narayanan et al. 7 focused on integrating 3D designs with knit stitch network data using visual programming interfaces. This approach makes the design process more intuitive and interactive while taking machine constraints into account.

Another method called ‘knit sketching’ was introduced by Kaspar et al. 11 and has made it possible to design garment shapes through sketch-based interaction. In this way, it aims to reduce errors that may occur during knitting and optimize the overall process. On the other hand, the illusion knitting techniques defined by Zhu et al. 12 allow for the creation of patterns that change depending on the viewing angle. However, such multilayered and optical effect-based approaches require additional algorithms and hardware optimizations due to the need for high-precision management of machine constraints and color transitions. 12

Another direction of innovation in digital knitting lies in the development of advanced 3D simulation and visualization tools tailored to warp-knitted structures. Wu et al. 13 demonstrated that garments with stable geometries can be reliably produced through automated industrial knitting pipelines. Dong et al. 14 introduced a simulation framework for warp-knitted jacquard boas, featuring double-needle bed structures, pocket-like cavities, and functional zone designs. Their system offers real-time visualization based on detailed stitch mesh modeling and pattern-driven displacement. Liu et al. 15 addressed the simulation of fully formed warp-knitted garments using a geometry-driven mesh approach, employing coordinate transformation and tubular yarn modeling to represent stitch deformation with high fidelity. These contributions significantly enhance the accuracy and realism of structural visualization in digital garment prototyping. However, they focus primarily on the simulation phase of pre-established patterns or design templates. By contrast, the present study addresses a different and earlier stage in the pipeline – transforming full-color digital images into machine-compatible knit instructions. Rather than simulating fabric structure, our system supports designers in preparing visual inputs through algorithmic color reduction, perceptual evaluation, and bitmap generation under yarn and hardware constraints. This offers an alternative path toward integrating digital imagery into the knitting process and contributes a capability to the digital knitting ecosystem.

The use of CAD software in knitwear design also greatly enhances efficiency, design diversity, and production speed.16,5 CAD-based approaches shorten the process of creating patterns and allow for the virtual testing of high-resolution prototypes. 17 However, the full integration of these systems with industrial machines can be limited due to factors such as user expertise and software complexity.10,18 Especially for individuals with low user experience, a comprehensive training process is required to efficiently use CAD systems on industrial knitting machines that require detailed pattern- and machine-specific parameter adjustments.7,19

On the other hand, yarn carrier technologies also directly affect the efficiency and production capacity of flat knitting machines. Thanks to new generation control methods and optimization techniques, the aim is to minimize the movement of yarn carriers, increase production speed, and support complex knits. 20 However, how innovative yarn carrier designs, especially in complex structures such as double-layered or triple-layered knitted fabrics, can be applied and standardized is still a subject of research.21,22

In the field of digital knitting, color management and image processing methods hold significant importance. In the study presented by Igarashi and Igarashi, 23 an automatic adaptation algorithm is proposed to minimize the visual impact of errors occurring in handcraft production processes – particularly in designs that can be represented as pixel art, such as knitting, embroidery, and beadwork. This method allows for real-time updates of the design to compensate for errors that occur during production (e.g. misplacement, duplication, or omission of pixels), thus providing the user with a range of adaptation options without the need to completely dismantle the existing work to correct the faulty parts.

All these methods and innovations indicate a more flexible, user-friendly, and highly accurate design-production cycle in the future of digital knitting. However, the current literature indicates that there is still a need for progress in areas such as software complexity, simulation accuracy, machine constraints, color reduction techniques, and integration.24,7,15 Especially, the lack of training and inadequate team communication can hinder the widespread adoption of these technologies in the industry. 19 Future studies are expected to make CAD and simulation tools more user-friendly, automate complex patterns, and integrate color management with machine-level constraints. Thus, digital knitting design and production will be applicable on a broader industrial scale; it will become possible to produce more innovative and customizable textile products.

A recent patent by Conti and Didero 9 proposes a method for manufacturing knitted fabrics that reproduce digital images by reducing color complexity through posterization, resizing the image, and mapping the resulting colors to yarn carriers in a fixed sequence. Although this approach is notable for integrating image preparation with the constraints of Jacquard knitting, it provides limited control over the quantization process. The color reduction method is fixed, and users are not offered alternative quantization algorithms or mechanisms to assess the perceptual fidelity of the output. In contrast, our system introduces a modular workflow in which designers can choose among five different error diffusion-based quantization algorithms – including adaptive and Laplacian-based variants – and compare the results using seven perceptual quality metrics such as CIEDE2000, SSIM, and FSIM. These metrics are aggregated into a weighted composite score that supports structured, data-driven decision making. Moreover, the final design is automatically converted into a machine-compatible bitmap format (BMP) aligned with yarn carrier limits, enabling seamless integration into knitting production. This level of algorithmic flexibility, quantitative evaluation, and automatic code generation differentiates our system from existing solutions and contributes a novel framework to the image-to-knit domain.

Materials and methods

In this study, the main objective is to convert high-resolution digital visuals into knitted fabric by reducing them to a color palette determined by the designer, using flat knitting machines with a limited number of yarn carriers. Digital visuals, which contain thousands of different colors, must be adapted to the limited yarn-carrying capacity of knitting machines. Five error diffusion-based color reduction algorithms were used for this purpose: Floyd–Steinberg dithering, block-based error diffusion, adaptive error diffusion, brightness-preserving error diffusion, and Laplacian-based adaptive error diffusion.

Initially, researchers developed error diffusion algorithms for color reduction processes on screens with a limited color palette. However, with modern screens supporting a wide color gamut, the use of these algorithms has diminished. 25 In this study, a transformation of previously screen-oriented algorithms into a system tailored for textile design is achieved by incorporating textile-specific quality metrics. By integrating evaluation criteria relevant to knitting, such as visual continuity and yarn carrier limitations, the algorithms are adapted to guide color selection at the pixel level, enabling effective visual representation using fewer colors and yarn carriers.

The reduced visuals generated in this study were exported in .bmp format and integrated into CAD software compatible with knitting design, such as Raynen Design Software, in preparation for machine knitting. For sample production, a V-bed flat knitting machine manufactured by BEWORTH was utilized. The knitting process employed 150 denier polyester yarn and was carried out on an 18-gauge machine. The resulting knitted samples measured 90 cm in width and 75 cm in height.

To ensure optimal mapping between digital resolution and machine hardware, all input visuals used in this study were standardized at 900 × 900 pixels. This resolution was chosen to match the 900-needle layout of the knitting machine, resulting in a one-to-one mapping between pixels and needles along the width.

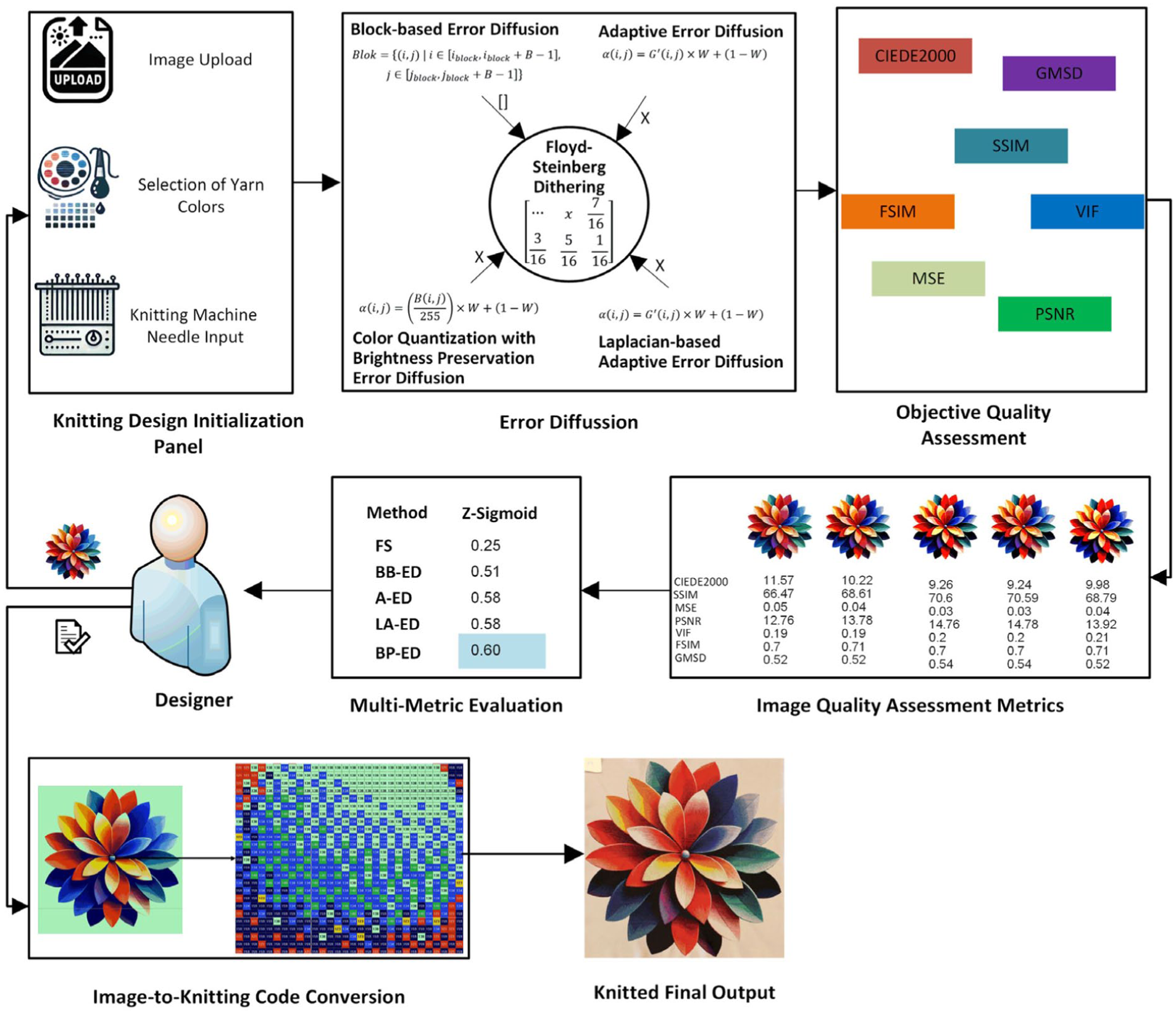

The core steps of the proposed image-to-knit design workflow are summarized in Figure 1.

Figure 1. Pipeline of the proposed system.

The designer uploads a digital image to the application interface.

The designer selects a custom yarn palette by specifying the desired RGB values, which reflect the available yarn colors to be used in production.

The application inputs the technical specifications of the knitting machine, including the needle count capacity. The image is automatically resized to match the maximum pixel resolution allowed by the machine, ensuring that each pixel corresponds to a needle where applicable.

The designer applies one or more of the following color quantization algorithms, each based on error diffusion techniques: Floyd–Steinberg dithering; block-based error diffusion; adaptive error diffusion; brightness-preserving error diffusion; and Laplacian-based adaptive error diffusion.

The application generates preview outputs for each method, allowing the designer to visually assess the impact of different algorithms on color transitions and structural detail.

Each reduced image is automatically evaluated using seven perceptually motivated image quality metrics: CIEDE2000 (Color Difference formula 2000); SSIM (Structural Similarity Index); MSE (Mean Squared Error); PSNR (Peak Signal-to-Noise Ratio); VIF (Visual Information Fidelity); FSIM (Feature Similarity Index); and GMSD (Gradient Magnitude Similarity Deviation).

Based on a composite score calculated from these metrics, the system identifies the top-performing visual outcome. This result is presented alongside other algorithm outputs for the designer’s review.

The designer selects the final visual, which is automatically converted into a BMP file. Each RGB color is mapped to a predefined knitting code compatible with the knitting machine’s coding system.

The BMP file is exported and uploaded into the designated knitting software.

The corresponding yarns are loaded into the appropriate yarn carriers of the knitting machine. Upon execution, the machine processes the file and transforms the selected image into a knitted product, such as a garment or fabric sample.
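The composite scoring step in the workflow above can be illustrated with a minimal Python sketch. The source does not specify the normalization or the weights, so min-max normalization across methods, the `composite_scores` function, and all numeric values below are assumptions made for illustration. The only domain fact used is metric polarity: lower values are better for CIEDE2000, MSE, and GMSD, while higher values are better for SSIM, PSNR, VIF, and FSIM.

```python
# Metrics where a lower raw value means better quality; all others are higher-is-better.
LOWER_IS_BETTER = {"CIEDE2000", "MSE", "GMSD"}


def composite_scores(results, weights):
    """Min-max normalize each metric across methods, then take a weighted mean.

    results: {method_name: {metric_name: raw_value}}
    weights: {metric_name: non-negative weight} (hypothetical values)
    Returns {method_name: score in [0, 1]}, where higher is better.
    """
    methods = list(results)
    total_weight = sum(weights.values())
    scores = {m: 0.0 for m in methods}
    for metric, weight in weights.items():
        values = [results[m][metric] for m in methods]
        lo, hi = min(values), max(values)
        for m in methods:
            if hi == lo:
                norm = 1.0  # every method ties on this metric
            else:
                norm = (results[m][metric] - lo) / (hi - lo)
                if metric in LOWER_IS_BETTER:
                    norm = 1.0 - norm  # flip so that 1.0 is always "best"
            scores[m] += weight * norm / total_weight
    return scores


# Made-up metric values for two methods, for illustration only.
example = {
    "floyd_steinberg": {"CIEDE2000": 6.0, "SSIM": 0.70, "MSE": 400.0},
    "block_based": {"CIEDE2000": 8.0, "SSIM": 0.60, "MSE": 500.0},
}
example_weights = {"CIEDE2000": 0.5, "SSIM": 0.3, "MSE": 0.2}
ranking = composite_scores(example, example_weights)
best_method = max(ranking, key=ranking.get)
```

Because the normalization is relative to the methods being compared, a score from this sketch only ranks candidates within one image and palette; it is not an absolute quality value.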

Flat knitting machines and yarn carriers

Flat knitting machines offer versatile usage opportunities in the textile industry, providing a wide range of applications in fabric production. These machines are defined by their working principles, which are based on the formation of loops on a horizontal plate. A system carries out the knitting process, creating stitches by moving needles back and forth. These machines, which are generally preferred in applications where the fabric width is adjustable, have the capacity to produce flat-surfaced fabrics. The working principles are based on the transmission of the yarn to the needle hooks, the formation of stitches, and the downward movement of the fabric through the machine. Flat knitting machines are commonly used, especially in the production of sweaters, scarves, and similar flat textile surfaces. On the other hand, V-bed flat knitting machines (Figure 2) – a specific type of flat knitting machine where the needle beds are arranged in a ‘V’ shape and typically have a double bed design – enable the production of more complex and flexible knitting patterns, including double-sided fabrics. In these machines, the needles operate along angled beds, allowing for greater movement flexibility. This design makes V-bed machines particularly well suited to producing elastic and reversible structures, such as ribs and interlocks. The enhanced mechanical versatility of V-bed machines allows for quicker and more efficient knitting of diverse patterns compared to traditional single-bed configurations.

Figure 2. V-bed flat knitting machine.

One of the fundamental components of both flat and V-bed knitting machines is the yarn carrier, which guides the yarn to the needles (Figure 3). The number and arrangement of yarn carriers in the machine directly influence its ability to produce complex patterns and to work with a variety of yarn types. However, the limited number of yarn carriers, especially in standard flat knitting machine setups, can pose challenges when creating intricate designs, thereby constraining both the diversity of possible outputs and overall production efficiency. The current literature includes various studies on the effects of the limited number of yarn carriers and on possible solutions. For example, Huh and Kim 20 emphasized that intarsia single-bed flat knitting machines, which are generally preferred for sweater production, operate with a limited number of yarn carriers, which makes it difficult to use multiple yarns and to produce complex patterns efficiently. Similarly, Holderied et al. 21 showed that the current yarn carrier designs used in standard flat knitting machines are inadequate for modern textile production. They emphasized the need to modify these machines with new yarn feeding systems and explained that such changes are important for obtaining new structures in knitting processes. Both studies highlight the critical role of yarn carriers in determining a machine’s functional capacity and production range.

Figure 3. Yarn carriers.

In order to address these limitations, researchers continue to develop innovative approaches and technological solutions. The studies show that flat knitting technologies are continuously evolving to address the challenges posed by the limitations in the number of yarn carriers.

Image preprocessing and color palette definition

The visuals used in this study consist of personal photographs, portraits, and artistic/conceptual images generated with the support of text-to-image methods. Since the images were synthetically generated and used solely for color quantization analysis, facial features have been masked in accordance with ethical publication standards. This diversity enables a comprehensive evaluation of the method’s performance across various color scales and textural characteristics. Prior to being uploaded to the application, the images were preprocessed through steps such as resizing and converted into formats suitable for the requirements of each algorithm.

During scaling, the correspondence of each pixel to a stitch in the knitting phase has been taken into account. The width and height of the visual are adjusted proportionally based on the number of needles in the machine, which keeps the pixel dimensions of the visual in balance with the actual stitch capacity of the machine. This ensures that the knitted product has the intended size and the correct number and distribution of stitches.
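The proportional scaling described here can be sketched as follows. This is a minimal illustration, not the study's implementation: the function name `fit_to_needles` is hypothetical, the width is assumed to be scaled to exactly the needle count, and stitches are treated as square, so any wale-to-course aspect correction is ignored.

```python
def fit_to_needles(width_px, height_px, needle_count):
    """Return (width, height) in stitches for an image scaled to the needle count.

    The width is set to needle_count (one pixel per needle) and the height is
    scaled by the same factor so that the aspect ratio is preserved.
    """
    if width_px <= 0 or height_px <= 0 or needle_count <= 0:
        raise ValueError("dimensions and needle count must be positive")
    scale = needle_count / width_px
    new_height = max(1, round(height_px * scale))
    return needle_count, new_height


# A 1800 x 1200 image on a 900-needle machine becomes 900 x 600 stitches.
target_size = fit_to_needles(1800, 1200, 900)
```

The returned size would then be passed to whatever image library performs the actual resampling.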

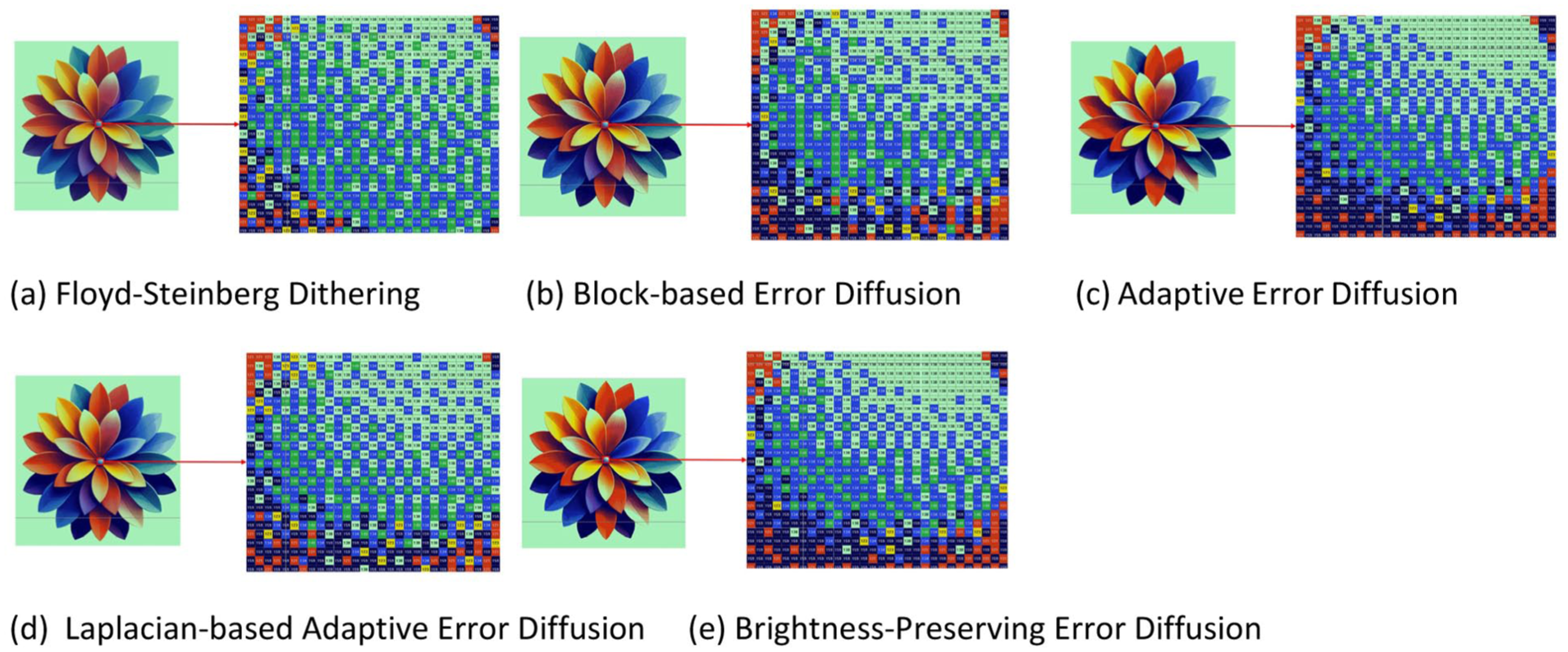

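The designer creates a color palette suitable for the yarn stock on hand or the design concept when determining the yarn colors to be used in the knitting process. This color palette is expressed as follows:

$$P = \{p_1, p_2, \ldots, p_K\}, \qquad p_k = (R_k, G_k, B_k)$$

where $K$ is the number of yarn colors selected by the designer and each $p_k$ is the RGB value of one yarn color.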

Error diffusion algorithms

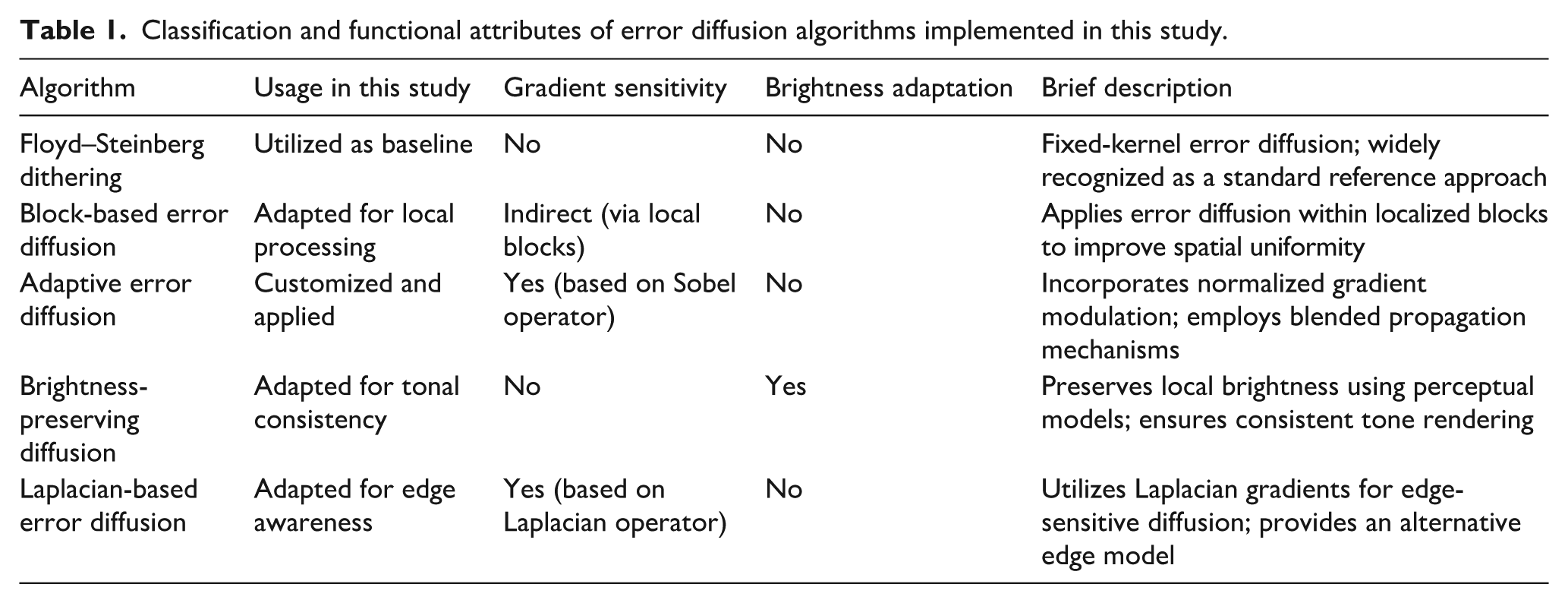

One of the primary objectives of this study is to produce a knitted image that visually approximates the original while adhering to a limited set of thread colors defined by the designer. To achieve this, five error diffusion algorithms were selected and adapted for use in this study, drawing from established approaches in the literature where applicable. These algorithms operate by mapping each pixel’s original color to the nearest available color in the predefined yarn palette and distributing the resulting quantization error to neighboring pixels using algorithm-specific diffusion coefficients.26,27 Whereas Floyd–Steinberg dithering was implemented in its standard form, the remaining four methods were adapted or modified to suit the structural needs of textile image processing. A comparative overview of these algorithms and their core functional properties is provided in Table 1.

Table 1. Classification and functional attributes of error diffusion algorithms implemented in this study.

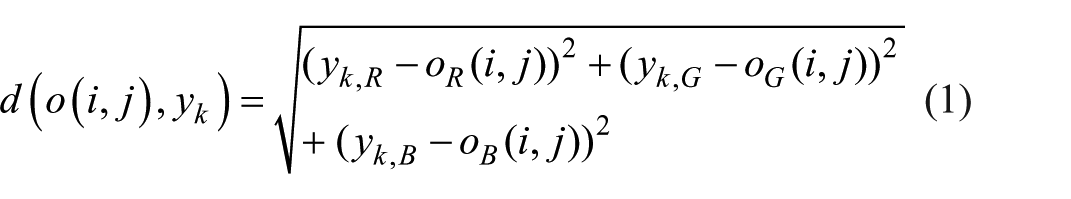

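In measuring color similarity, the Euclidean distance commonly used in RGB space has been preferred. This choice was made considering not only the advantages offered by the classical structure of error diffusion algorithms but also practical benefits such as speed and ease of implementation. 28

As shown in the following equation, the color distance between the original pixel color $C(x, y)$ and each palette color $p_k$ is evaluated, and the palette color with the smallest distance is selected:

$$C_q(x, y) = \arg\min_{p_k \in P} \lVert C(x, y) - p_k \rVert \quad (2)$$

The color $C_q(x, y)$ is the yarn color assigned to the pixel at position $(x, y)$. Here, $P$ is the designer-defined palette and $\lVert C - p_k \rVert = \sqrt{(R - R_k)^2 + (G - G_k)^2 + (B - B_k)^2}$ is the Euclidean distance between the RGB components of the two colors.

Quantization error expresses the difference between the original color values and the selected palette color:

$$E(x, y) = C(x, y) - C_q(x, y) \quad (3)$$

To maintain visual quality, this difference is distributed over the neighboring pixels using specific coefficients.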
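The nearest-color selection and the quantization error described above can be illustrated with a short Python sketch. The function names and the three-color palette are hypothetical; the sketch compares squared distances, which selects the same color as the Euclidean distance because the square root is monotonic.

```python
def nearest_palette_color(pixel, palette):
    """Return the palette color with the smallest Euclidean RGB distance to pixel."""
    return min(palette, key=lambda c: sum((p - q) ** 2 for p, q in zip(pixel, c)))


def quantization_error(pixel, chosen):
    """Per-channel difference between the original color and the chosen yarn color."""
    return tuple(p - q for p, q in zip(pixel, chosen))


# Hypothetical yarn palette: black, white, and a red.
palette = [(0, 0, 0), (255, 255, 255), (200, 30, 30)]
pixel = (180, 60, 50)
chosen = nearest_palette_color(pixel, palette)  # (200, 30, 30)
error = quantization_error(pixel, chosen)       # (-20, 30, 20)
```

The signed per-channel error is what the diffusion step later adds, in weighted form, to pixels that have not yet been processed.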

Floyd–Steinberg dithering

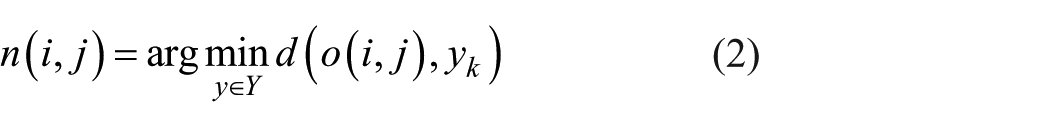

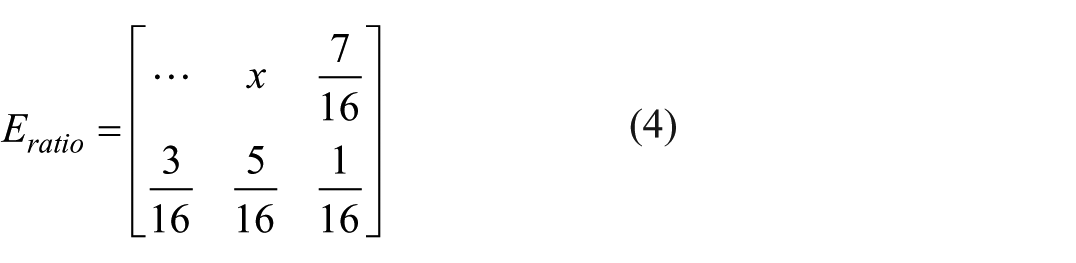

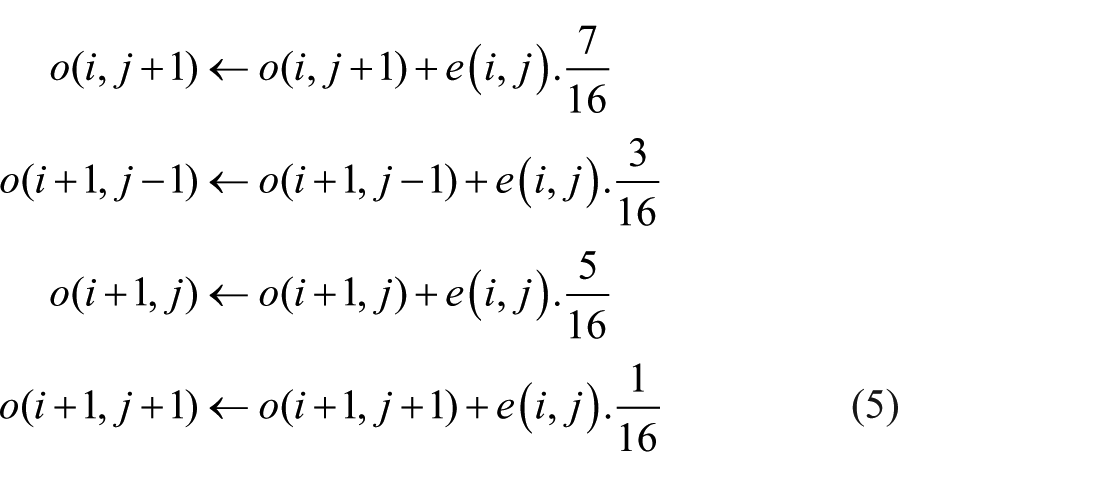

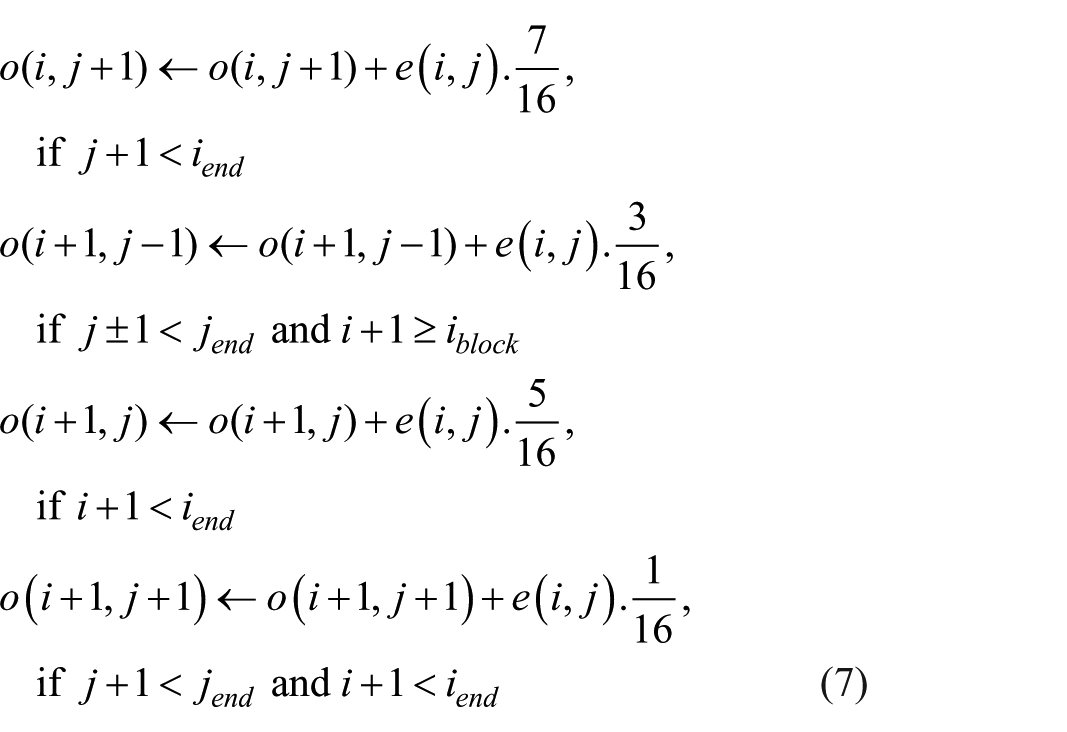

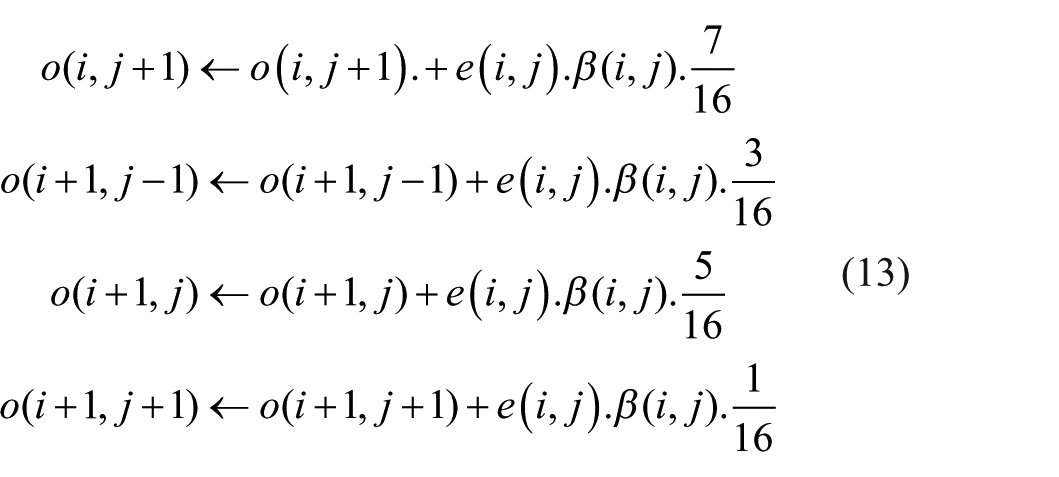

Originally introduced by Floyd and Steinberg in 1976, 29 the Floyd–Steinberg algorithm remains one of the most widely used error diffusion methods. 27 After calculating the nearest color in the palette (2), the resulting error (3) is spread to neighboring pixels in specific ratios (4) and (5).

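The Floyd–Steinberg error diffusion matrix is defined as:

$$\frac{1}{16}\begin{bmatrix} 0 & * & 7 \\ 3 & 5 & 1 \end{bmatrix} \quad (4)$$

The error distribution is applied to nearby pixels as follows:

$$\begin{aligned} C(x+1, y) &\leftarrow C(x+1, y) + \tfrac{7}{16}\,E(x, y) \\ C(x-1, y+1) &\leftarrow C(x-1, y+1) + \tfrac{3}{16}\,E(x, y) \\ C(x, y+1) &\leftarrow C(x, y+1) + \tfrac{5}{16}\,E(x, y) \\ C(x+1, y+1) &\leftarrow C(x+1, y+1) + \tfrac{1}{16}\,E(x, y) \end{aligned} \quad (5)$$

In Equation (4), the symbol $*$ represents the pixel being processed at position $(x, y)$. In Equation (5), $C$ denotes the image being processed and $E(x, y)$ is the quantization error of that pixel, as defined in Equation (3).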
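As an illustration, standard Floyd–Steinberg dithering against an arbitrary palette can be sketched in plain Python. The image representation (a list of rows of RGB tuples), the function name, and the choice not to clamp accumulated values are assumptions of this sketch, not details taken from the study.

```python
def floyd_steinberg(image, palette):
    """Dither image (list of rows of (R, G, B) tuples) to the given palette.

    Pixels are visited left to right, top to bottom. Each pixel is replaced by
    its nearest palette color and the error is pushed to unvisited neighbors
    with the classic 7/16, 3/16, 5/16, 1/16 weights.
    """
    height, width = len(image), len(image[0])
    work = [[[float(v) for v in px] for px in row] for row in image]
    out = [[None] * width for _ in range(height)]
    # (dx, dy, weight): right, lower-left, lower, lower-right.
    taps = ((1, 0, 7 / 16), (-1, 1, 3 / 16), (0, 1, 5 / 16), (1, 1, 1 / 16))
    for y in range(height):
        for x in range(width):
            old = work[y][x]
            new = min(palette, key=lambda c: sum((o - q) ** 2 for o, q in zip(old, c)))
            out[y][x] = tuple(new)
            for dx, dy, w in taps:
                nx, ny = x + dx, y + dy
                if 0 <= nx < width and ny < height:
                    for ch in range(3):
                        work[ny][nx][ch] += (old[ch] - new[ch]) * w
    return out


# A flat mid-gray patch dithered to black and white yarns.
gray_patch = [[(128, 128, 128)] * 4 for _ in range(4)]
two_yarns = [(0, 0, 0), (255, 255, 255)]
dithered = floyd_steinberg(gray_patch, two_yarns)
```

Error that would fall outside the image is simply dropped at the borders, which is a common simplification.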

Block-based error diffusion

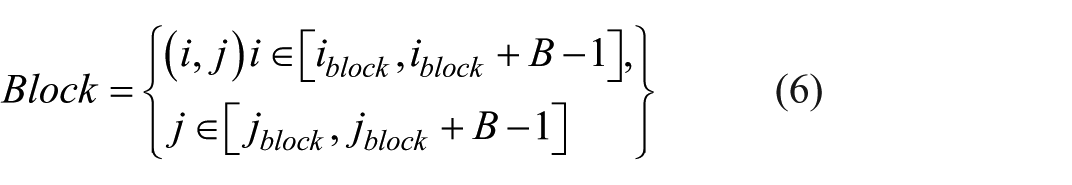

This method divides the image into blocks of specific sizes (e.g. 5 × 5) and applies error diffusion independently within each block. This localized processing prevents error propagation across block boundaries, maintaining better control over diffusion behavior. Whereas Zhou et al. 30 proposed a block-based threshold modulation error diffusion algorithm that modulates thresholds dynamically and introduces a novel scan path to support parallel halftoning, the fixed diffusion coefficients of the Floyd–Steinberg algorithm are preserved within each block in the proposed method. By constraining error diffusion to localized regions and maintaining consistent coefficients, the method achieves a more balanced visual outcome and improved structural coherence. In this method, Equation (2) is used for color selection, and Equation (3) is used for error calculation.

The following equation divides the image into blocks of varying sizes:

Here, $B$ denotes the block size, a single parameter applied to the whole image, which defines the width and height (in pixels) of each square region over which local computations are performed. Each block has dimensions $B \times B$.

The error is distributed to the neighboring pixels within the block:
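A minimal Python sketch of block-confined diffusion is given below for illustration. It assumes that blocks are aligned to the image origin, that error which would cross a block boundary is discarded, and that the Floyd–Steinberg weights are used unchanged inside each block; the function name and image representation are hypothetical.

```python
def block_error_diffusion(image, palette, block_size=5):
    """Floyd-Steinberg-style diffusion that never crosses block boundaries.

    image is a list of rows of (R, G, B) tuples. A neighbor receives error only
    if it lies in the same block_size x block_size block as the source pixel.
    """
    height, width = len(image), len(image[0])
    work = [[[float(v) for v in px] for px in row] for row in image]
    out = [[None] * width for _ in range(height)]
    taps = ((1, 0, 7 / 16), (-1, 1, 3 / 16), (0, 1, 5 / 16), (1, 1, 1 / 16))
    for y in range(height):
        for x in range(width):
            old = work[y][x]
            new = min(palette, key=lambda c: sum((o - q) ** 2 for o, q in zip(old, c)))
            out[y][x] = tuple(new)
            for dx, dy, w in taps:
                nx, ny = x + dx, y + dy
                inside_image = 0 <= nx < width and ny < height
                same_block = (nx // block_size == x // block_size
                              and ny // block_size == y // block_size)
                if inside_image and same_block:
                    for ch in range(3):
                        work[ny][nx][ch] += (old[ch] - new[ch]) * w
    return out


flat_gray = [[(128, 128, 128)] * 4 for _ in range(4)]
black_white = [(0, 0, 0), (255, 255, 255)]
one_block = block_error_diffusion(flat_gray, black_white, block_size=4)
no_diffusion = block_error_diffusion(flat_gray, black_white, block_size=1)
```

With `block_size=1` every pixel is its own block, so the method degenerates to plain nearest-color mapping; larger blocks progressively recover ordinary error diffusion.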

Adaptive error diffusion

Error diffusion is a widely used halftoning technique that converts continuous-tone images into binary representations by distributing quantization errors to neighboring pixels. However, conventional error diffusion methods with fixed diffusion kernels (such as the Floyd–Steinberg algorithm) often lead to visual artifacts, particularly in textured or edge-dense regions. To address this, adaptive error diffusion approaches have been proposed, where the diffusion process dynamically responds to local image characteristics. Wong 31 introduced an adaptive framework that optimizes the diffusion kernel in real time using a local frequency-weighted error criterion and the least mean squares (LMS) algorithm, an iterative optimization method commonly used in adaptive signal processing, achieving superior halftone quality. Similarly, Chung et al. 32 employed gradient magnitude and orientation to adaptively modulate thresholds and filter weights, enhancing edge preservation in halftone images. Building upon these foundations, this study implements a gradient-based modulation scheme that adjusts diffusion strength based on local edge intensity, not to suppress propagation near edges, but to ensure sufficient diffusion in flat regions where tonal smoothness is visually critical in textile outputs.

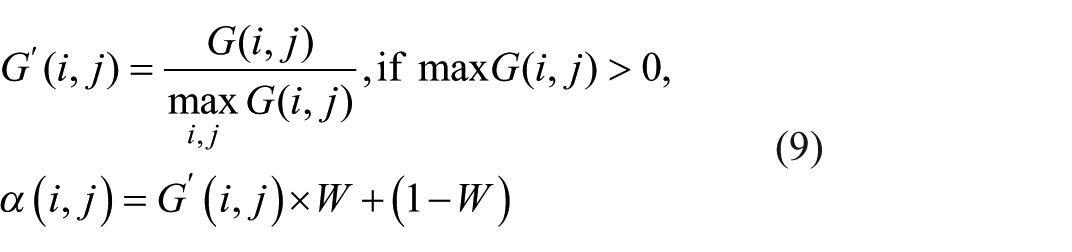

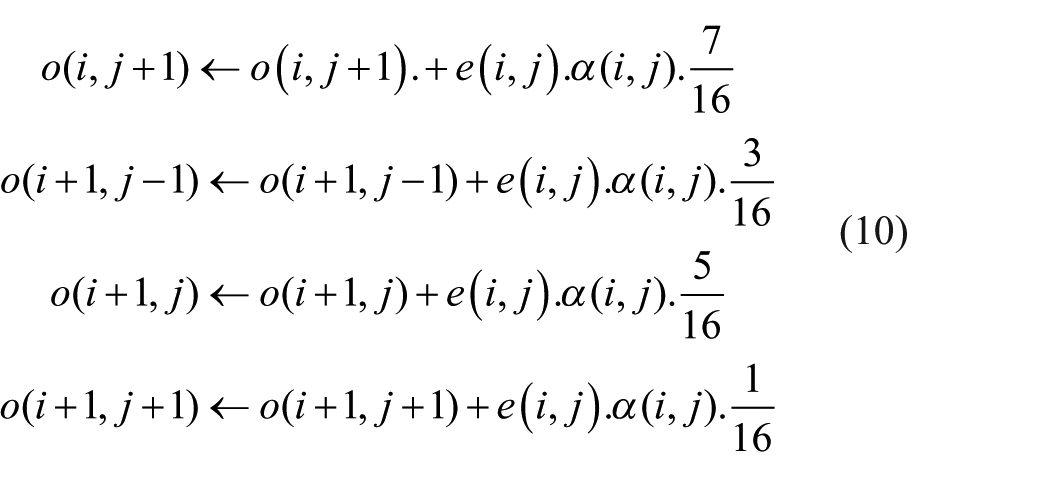

Although this method is categorized as adaptive due to its use of a spatially varying coefficient

Gradient calculation: Horizontal and vertical gradient components

Normalization of the gradient: We have

where

Error propagation: A Floyd–Steinberg-like error diffusion is performed, in which the fixed coefficients given in Equation (5) are multiplied by the spatially adaptive factor

Brightness-preserving error diffusion

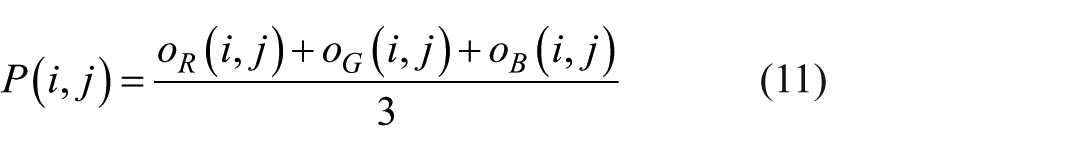

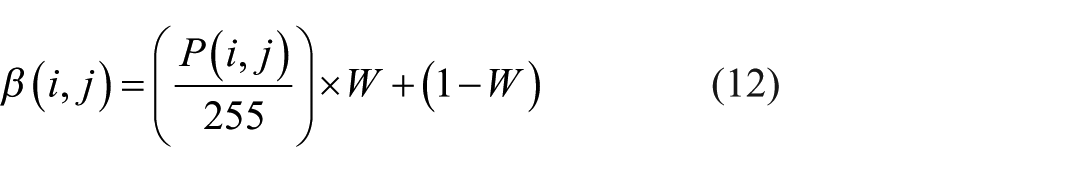

This method is a brightness-preserving error diffusion approach designed to maintain local brightness consistency while minimizing visual artifacts. Drawing on perceptual models of the human visual system (HVS), such as just-noticeable difference (JND) and Weber’s law, Yu et al. 33 proposed a complex enhancement framework that adjusts image contrast and color based on perceptual sensitivity maps. Their method involves histogram reconstruction, bilateral filtering, and perceptual mapping to enhance visual quality; this study instead offers a computationally efficient alternative for error diffusion tasks. By modulating error propagation using a simple pixel-wise brightness estimate, the diffusion strength is dynamically adapted to preserve perceptual luminance and suppress artifacts, especially in dark or saturated regions. The pixel-wise brightness estimate is defined in Equation (11) and serves as the basis for the adaptive weighting factor introduced in Equation (12).

A tuning factor

In Equation (12),

Laplacian-based adaptive error diffusion

This method is similar to adaptive error diffusion, but it estimates local edge information with the Laplacian filter, a second-derivative operator, instead of the Sobel filter. It is particularly advantageous for sharp edges or high-frequency areas. The Laplacian response is normalized, and the error is again distributed over a similar matrix using Equation (3).
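To make the difference between the two edge measures concrete, the sketch below computes a normalized Sobel gradient magnitude and a normalized absolute Laplacian response for a grayscale image. The 3 × 3 kernels are the standard ones; scaling by the maximum value is an assumption, since the paper's own normalization equation is not reproduced in this section, and the helper names are hypothetical.

```python
import numpy as np

SOBEL_X = np.array([[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]], dtype=np.float64)
SOBEL_Y = SOBEL_X.T
LAPLACIAN = np.array([[0, 1, 0], [1, -4, 1], [0, 1, 0]], dtype=np.float64)


def filter3x3(gray, kernel):
    """Correlate a 2-D array with a 3x3 kernel using edge-replicated padding."""
    height, width = gray.shape
    padded = np.pad(gray.astype(np.float64), 1, mode="edge")
    out = np.zeros((height, width), dtype=np.float64)
    for i in range(3):
        for j in range(3):
            out += kernel[i, j] * padded[i:i + height, j:j + width]
    return out


def normalize01(values):
    """Scale non-negative values to [0, 1] by the maximum (an assumption)."""
    peak = values.max()
    return values / peak if peak > 0 else np.zeros_like(values)


def sobel_edge_map(gray):
    """First-derivative edge strength: normalized gradient magnitude."""
    gx = filter3x3(gray, SOBEL_X)
    gy = filter3x3(gray, SOBEL_Y)
    return normalize01(np.hypot(gx, gy))


def laplacian_edge_map(gray):
    """Second-derivative edge strength: normalized absolute Laplacian."""
    return normalize01(np.abs(filter3x3(gray, LAPLACIAN)))
```

Either map can then drive a spatially varying diffusion factor; how that factor is derived from the map is left to the paper's equations.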

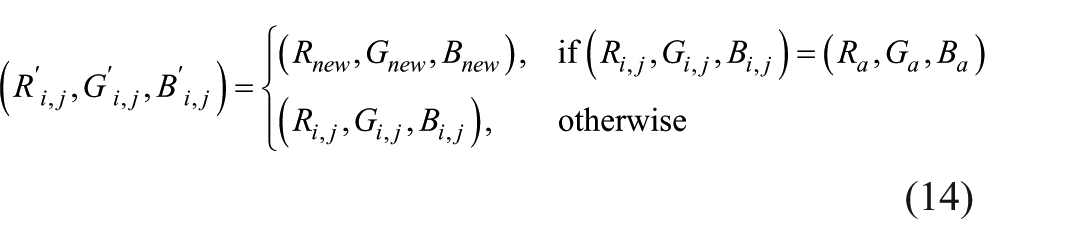

Integration with CAD software and conversion to knitting code

Images reduced with error diffusion algorithms are converted into a format suitable for the CAD software used with flat knitting machines. In this study, the .bmp file format was chosen as the basic output format, taking the Raynen design software as an example. The image is imported into the software, and its color codes are matched directly with the color tables of the knitting design software.

where:

The processed image is interpreted in such a way that each pixel corresponds to a stitch or pattern mark in the knitting code. Here, the color codes are ensured to match correctly in the machine commands, taking into account the thread palette predetermined by the designer. Thus, the number of threads to be used in the machine and the pixel colors in the design are harmonized using Equation (14). Outputs in .kni format, etc., are produced through CAD software and transferred to the machine.
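The pixel-to-stitch mapping can be illustrated with a short sketch that replaces every palette color in a reduced image with its knitting code. The code values in the usage example are placeholders chosen for illustration, not actual Raynen codes, and `map_to_knit_codes` is a hypothetical helper.

```python
import numpy as np


def map_to_knit_codes(image, palette, codes):
    """Replace each palette color in `image` (H x W x 3) with its knitting code.

    `codes[i]` is the code assigned to `palette[i]`. Returns an H x W array
    in which each entry corresponds to one stitch.
    """
    image = np.asarray(image)
    palette = np.asarray(palette)
    codes = np.asarray(codes)
    if len(palette) != len(codes):
        raise ValueError("each palette color needs exactly one code")

    # Compare every pixel with every palette color: result is H x W x K.
    matches = np.all(image[:, :, None, :] == palette[None, None, :, :], axis=-1)
    if not matches.any(axis=-1).all():
        raise ValueError("image contains a color outside the yarn palette")
    return codes[np.argmax(matches, axis=-1)]


# Usage with placeholder codes (hypothetical values).
palette = [(0, 0, 0), (255, 255, 255), (255, 0, 0)]
codes = [11, 12, 13]
reduced = np.array([[[0, 0, 0], [255, 0, 0]],
                    [[255, 255, 255], [0, 0, 0]]], dtype=np.uint8)
stitch_codes = map_to_knit_codes(reduced, palette, codes)
```

Rejecting out-of-palette pixels early is a deliberate choice in this sketch: a color with no yarn assigned would otherwise surface only at the machine.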

Analysis and evaluation methods

To evaluate the effectiveness of the proposed system, a multimetric quality assessment approach was employed, focusing on both perceptual fidelity and computational efficiency. A set of seven widely accepted image quality metrics was used, each capturing different visual or mathematical dimensions of the input images, which are relevant for ensuring high-fidelity knit pattern generation.
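Of the seven metrics, MSE and PSNR have simple closed forms and can be sketched directly. The example assumes 8-bit images, so the peak value is 255; the function names are illustrative.

```python
import numpy as np


def mse(reference, test):
    """Mean squared error between two images of identical shape."""
    diff = reference.astype(np.float64) - test.astype(np.float64)
    return float(np.mean(diff ** 2))


def psnr(reference, test, peak=255.0):
    """Peak signal-to-noise ratio in dB; infinite for identical images."""
    error = mse(reference, test)
    if error == 0:
        return float("inf")
    return float(10.0 * np.log10(peak ** 2 / error))
```

Lower MSE and higher PSNR both indicate closer agreement with the original, which matters later when metrics with opposite directions are combined into one score.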

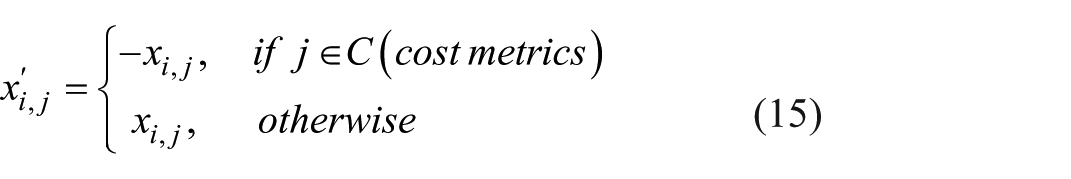

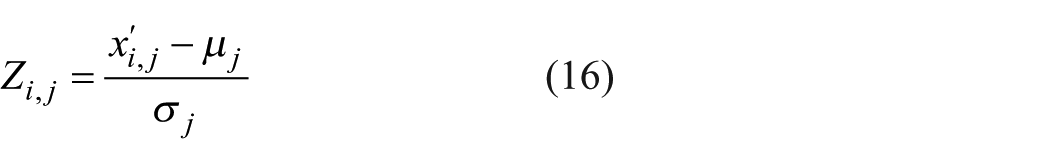

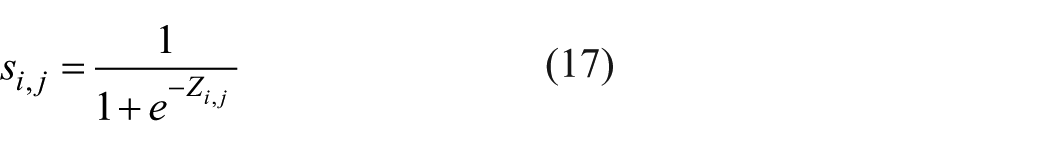

Metric normalization and weighting

In multimetric performance evaluation systems, individual metrics often operate on different numerical scales and have varying statistical distributions. To ensure fair and interpretable aggregation of such metrics, a robust normalization and weighting strategy is essential.

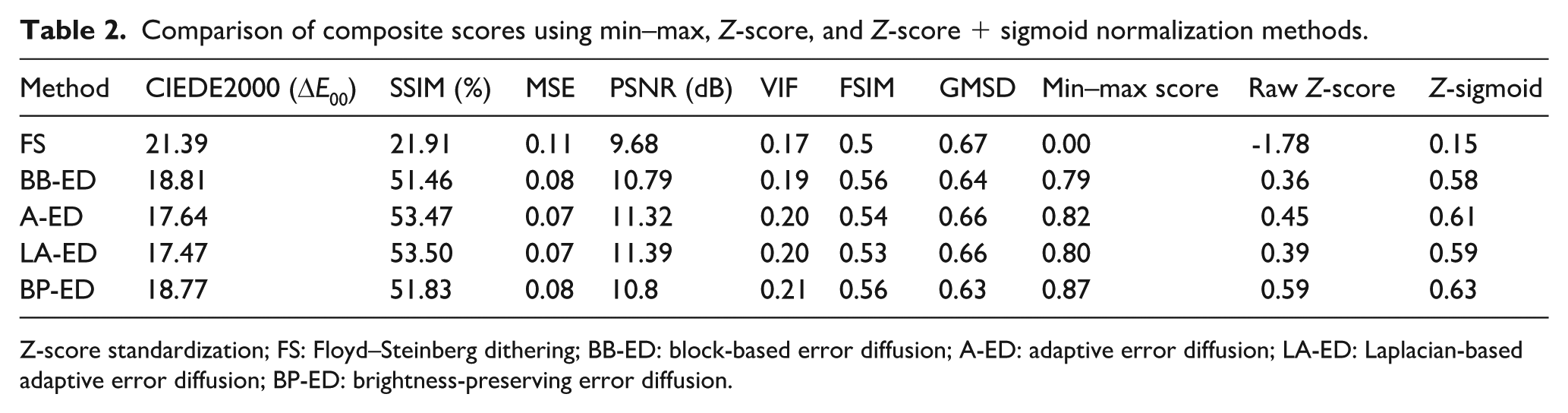

Conventional normalization methods such as min–max normalization or standalone Z-score standardization exhibit notable limitations in this context. Min–max normalization assigns a score of zero to any method that scores the worst on every metric, even when the differences between metric values are marginal. Similarly, Z-score normalization alone can produce misleading negative scores for methods that are only slightly below average on every metric. This behavior disproportionately penalizes methods such as Floyd–Steinberg dithering, which received the lowest raw metric values overall in our experiments: its composite score was 0.00 under min–max normalization and negative under Z-score aggregation alone.

In response to these issues, we developed a two-stage normalization approach that combines Z-score standardization with a subsequent sigmoid transformation. This method was designed to preserve subtle variations in performance and present designers with a more perceptually meaningful and stable composite score, especially when metric values are close in magnitude.

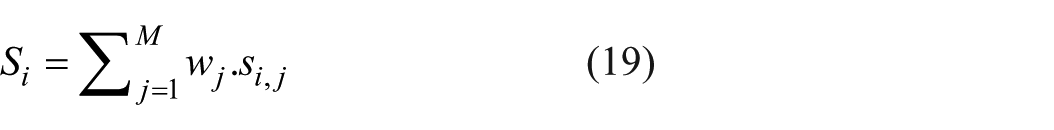

To illustrate the comparative impact of normalization strategies, Table 2 presents the composite scores obtained via min–max normalization, raw Z-score aggregation, and the proposed Z-score + sigmoid approach. The Floyd–Steinberg dithering method, despite having metric values that are numerically close to other methods, was assigned a score of 0.00 under min–max normalization and a highly negative value of −1.78 using raw Z-score aggregation. In contrast, the Z-score + sigmoid method yielded a perceptually more balanced score of 0.15 for Floyd–Steinberg dithering, avoiding excessive penalization. Overall, the proposed approach demonstrated smoother differentiation among methods with subtle metric differences, leading to more stable and interpretable rankings.

Table 2. Comparison of composite scores using min–max, Z-score, and Z-score + sigmoid normalization methods.

Z-score standardization; FS: Floyd–Steinberg dithering; BB-ED: block-based error diffusion; A-ED: adaptive error diffusion; LA-ED: Laplacian-based adaptive error diffusion; BP-ED: brightness-preserving error diffusion.

Let

Here,

To normalize across metrics with different scales and distributions, we first apply Z-score standardization:

where

Sigmoid transformation

To map standardized scores into the interval [0,1] and limit the impact of outliers, we apply a sigmoid function:

This transformation emphasizes moderate differences near the mean while dampening extreme values, allowing for perceptual subtleties to influence the final score without being overwhelmed by large deviations.
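A minimal sketch of the two-stage normalization for a single metric is given below. It assumes that the mean and standard deviation are taken across the compared methods, that the population standard deviation is used, and that lower-is-better metrics (CIEDE2000, MSE, GMSD) are sign-flipped before the sigmoid; the last point in particular is an assumption, since the handling of metric direction is not stated in this section.

```python
import numpy as np


def zscore_sigmoid(values, higher_is_better=True):
    """Normalize one metric across methods: Z-score, then logistic sigmoid.

    `values` holds the raw metric value of each method. Returns scores in
    (0, 1), where larger always means better.
    """
    values = np.asarray(values, dtype=np.float64)
    std = values.std()  # population standard deviation (an assumption)
    if std == 0:
        # All methods tie on this metric: give each the neutral score.
        return np.full(values.shape, 0.5)
    z = (values - values.mean()) / std
    if not higher_is_better:
        z = -z  # assumed direction handling for error-type metrics
    return 1.0 / (1.0 + np.exp(-z))
```

Unlike min–max scaling, the sigmoid never maps the weakest method to exactly zero, so a method that trails by a small margin keeps a score that reflects that margin.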

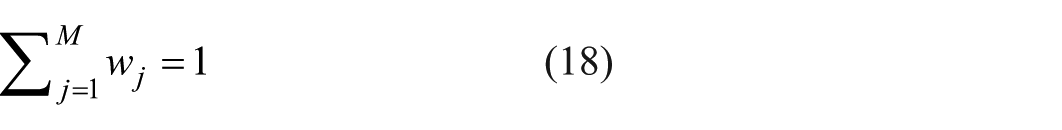

Weighting scheme

A weighted aggregation of normalized metric scores was performed to compute a composite image quality index. The weighting scheme was constructed in two stages.

First, the perceptual characteristics of each metric were examined based on prior studies, 34–38 which informed the qualitative role of features such as structural fidelity, edge sensitivity, and color difference in human visual perception. Although these studies do not prescribe explicit weights, they provide theoretical grounding for the selection of relevant metrics.

Second, a parameter sweep was conducted using a set of representative knitted image samples. Multiple weight configurations were evaluated by comparing composite scores with observed visual outcomes in terms of structural continuity, tonal consistency, and perceptual detail. The final default weights were selected to yield visually stable and balanced results across a range of test cases. This default configuration is included in the system but can be modified by the designer as needed.

Final composite score computation

Each metric

Let

This scalar score provides an interpretable and perceptually grounded measure of overall image quality.

The specific weights

SSIM (0.25) – strong structural similarity aligned with local luminance and contrast perception. 35

FSIM (0.20) – incorporates phase congruency and gradient information to capture perceptually salient features. 34

CIEDE2000 (0.20) – perceptually uniform color difference model grounded in CIELAB space. 36

VIF (0.10) – evaluates perceptual information fidelity via natural scene statistics in a wavelet domain. 37

GMSD (0.10) – sensitive to local gradient magnitude variations, modeling edge awareness of the human visual system. 37

PSNR (0.10) – widely used fidelity baseline, albeit weakly aligned with perceptual quality.

MSE (0.05) – retained for historical completeness and to capture absolute signal fidelity in a statistical sense.

These weights reflect both empirical tuning and perceptual relevance as established through literature and visual benchmarking.
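With the default weights listed above, the composite score reduces to a weighted sum of the normalized metric scores. The sketch below assumes each input score is already in [0, 1] with larger meaning better; dividing by the total weight is an added safeguard (an assumption) so that designer-modified weights still yield a score in [0, 1].

```python
# Default weights as listed in the text; they sum to 1.0.
DEFAULT_WEIGHTS = {
    "SSIM": 0.25,
    "FSIM": 0.20,
    "CIEDE2000": 0.20,
    "VIF": 0.10,
    "GMSD": 0.10,
    "PSNR": 0.10,
    "MSE": 0.05,
}


def composite_score(normalized, weights=DEFAULT_WEIGHTS):
    """Weighted aggregate of normalized metric scores for one method.

    `normalized` maps metric name to a score in [0, 1] (larger is better).
    """
    missing = set(weights) - set(normalized)
    if missing:
        raise KeyError(f"missing normalized scores for: {sorted(missing)}")
    total = sum(weights.values())
    return sum(weights[name] * normalized[name] for name in weights) / total
```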

Processing time evaluation

In addition to visual quality, processing time was measured to evaluate practical applicability in real-time and batch production workflows. Each color reduction algorithm was run three times on a workstation equipped with an Intel Core i7-12700H processor and 16 GB RAM. The visuals were standardized at a resolution of 900 × 900 pixels, with color palettes set to 6, 8, and 12 colors.

Average execution times were recorded to compare performance under identical input settings. Time efficiency is particularly important in industrial settings where rapid textile throughput is critical and lightweight algorithms such as Floyd–Steinberg are attractive.
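The timing protocol (three runs per algorithm, averaged) can be sketched with the standard library. `average_runtime` is a hypothetical helper, and the callable passed to it stands for any of the color reduction algorithms.

```python
import time
from statistics import mean


def average_runtime(func, *args, runs=3, **kwargs):
    """Run `func` `runs` times and return the mean wall-clock time in seconds."""
    durations = []
    for _ in range(runs):
        start = time.perf_counter()
        func(*args, **kwargs)
        durations.append(time.perf_counter() - start)
    return mean(durations)
```

`time.perf_counter` is used because it is a monotonic, high-resolution clock intended for measuring short durations.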

System adaptability

The system’s performance was also examined under varying yarn palette constraints, simulating hardware or stock limitations. As the number of available colors decreased, perceptual quality scores – especially SSIM, FSIM, and GMSD – declined across all methods. However, algorithms such as brightness-preserving error diffusion maintained relatively higher fidelity, demonstrating the system’s adaptability to constrained design scenarios. This suggests the system is well suited not only to optimal conditions but also to resource-limited production environments.

Results and discussion

Performance analysis: color accuracy and structural fidelity

The evaluation was conducted on three different images, each with unique color and structural characteristics – namely a flower photograph, a bird image, and a human portrait. Each image was reduced to three distinct color palettes containing 6, 8, and 12 colors. These palettes were constructed to simulate thread colors in textile design and included perceptually diverse hues. The 6-color palette consisted of primary tones such as black (0, 0, 0), white (255, 255, 255), yellow (255, 255, 0), red (255, 0, 0), blue (0, 0, 255), and green (0, 255, 0). The 8-color palette introduced dark brown (120, 85, 55) and pastel green (180, 210, 190), while the 12-color palette further expanded to include medium blue (90, 140, 200), light olive green (140, 180, 120), bluish gray (100, 120, 160), and pale beige (220, 220, 180).
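The three palettes are nested, which the following sketch makes explicit using the RGB values given above. The `nearest_color` helper, based on squared Euclidean distance in RGB, is an illustrative assumption and not necessarily the selection rule used in the study.

```python
PALETTE_6 = [
    (0, 0, 0),        # black
    (255, 255, 255),  # white
    (255, 255, 0),    # yellow
    (255, 0, 0),      # red
    (0, 0, 255),      # blue
    (0, 255, 0),      # green
]
PALETTE_8 = PALETTE_6 + [
    (120, 85, 55),    # dark brown
    (180, 210, 190),  # pastel green
]
PALETTE_12 = PALETTE_8 + [
    (90, 140, 200),   # medium blue
    (140, 180, 120),  # light olive green
    (100, 120, 160),  # bluish gray
    (220, 220, 180),  # pale beige
]


def nearest_color(rgb, palette):
    """Palette entry with the smallest squared RGB distance to `rgb` (an assumption)."""
    return min(palette, key=lambda c: sum((a - b) ** 2 for a, b in zip(rgb, c)))
```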

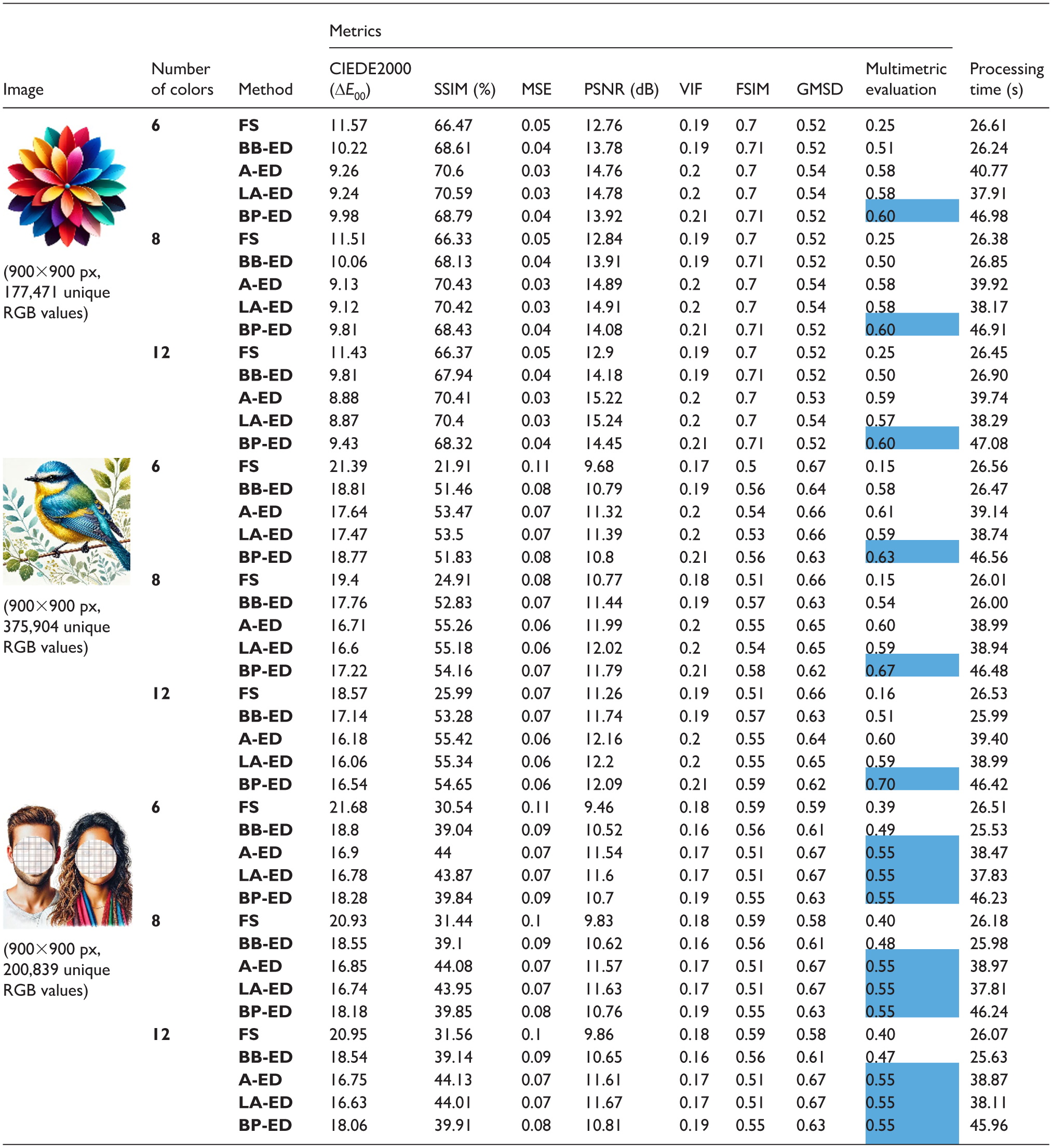

Each combination of image and color palette was processed using five different error diffusion methods: Floyd–Steinberg dithering, block-based error diffusion, adaptive error diffusion, Laplacian-based adaptive error diffusion, and brightness-preserving error diffusion. The quality of the reduced images was evaluated using seven complementary metrics – CIEDE2000, SSIM, MSE, PSNR, VIF, FSIM, and GMSD – which were normalized and combined into a single multimetric evaluation score via perceptual weighting.

Table 3 summarizes the results. In general, the brightness-preserving error diffusion method achieved the highest composite scores across most palette sizes and images. Its performance was particularly strong in the 6-color and 12-color scenarios, indicating its robustness in both limited and extended color contexts. For instance, in the 12-color flower image, it reached the highest score of 0.60, outperforming even perceptually optimized variants. Adaptive error diffusion and Laplacian-based adaptive error diffusion also performed competitively in several scenarios, especially in mid-range palettes (e.g. 8-color combinations), often achieving second-best scores.

Table 3. Weighted multimetric evaluation of color reduction algorithms for knitting applications.

FS: Floyd–Steinberg dithering; BB-ED: block-based error diffusion; A-ED: adaptive error diffusion; LA-ED: Laplacian-based adaptive error diffusion; BP-ED: brightness-preserving error diffusion.

The classical Floyd–Steinberg dithering algorithm consistently scored the lowest across all test cases, primarily due to its high color error (CIEDE2000) and weak SSIM scores. Although it remains efficient, it lacks mechanisms for structural or perceptual optimization.

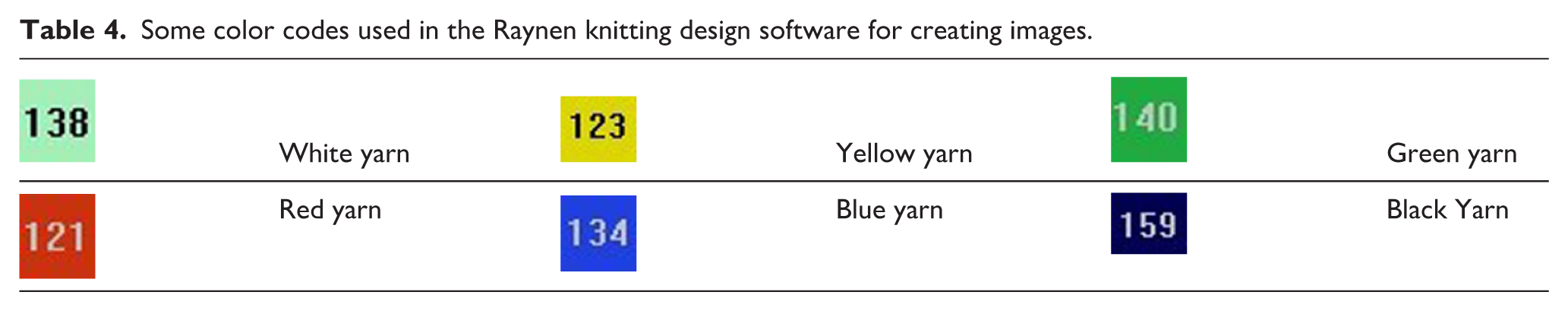

Among the five error diffusion algorithms evaluated (Figure 4), the brightness-preserving method yielded the most consistent perceptual quality across diverse test cases. This can be attributed to its dynamic modulation of error propagation based on local luminance characteristics. By adaptively scaling the diffusion strength to maintain brightness consistency – particularly in dark or saturated regions – the method effectively suppresses common artifacts such as banding or unnatural contrast shifts. This property is especially beneficial for knitted outputs, where tonal transitions are often constrained by the discrete nature of yarn colors and carrier resolution. Furthermore, the perceptual alignment of this method with human sensitivity to luminance variation likely contributed to its favorable performance in metrics such as SSIM, FSIM, and CIEDE2000.

Figure 4. Outcomes of the implementation of error diffusion algorithms.

Importantly, this study does not propose a one-size-fits-all solution. Instead, it provides a perceptually grounded evaluation framework that enables designers to identify which method yields the most visually faithful outcome for a given image and palette. The flexibility of this framework allows its integration into textile workflows where palette limitations and aesthetic fidelity must be balanced. Representative visual outputs of the evaluated methods, reduced to different color palette sizes, are presented in Table 3 to illustrate the perceptual differences across algorithms.

Conversion to a knitting code

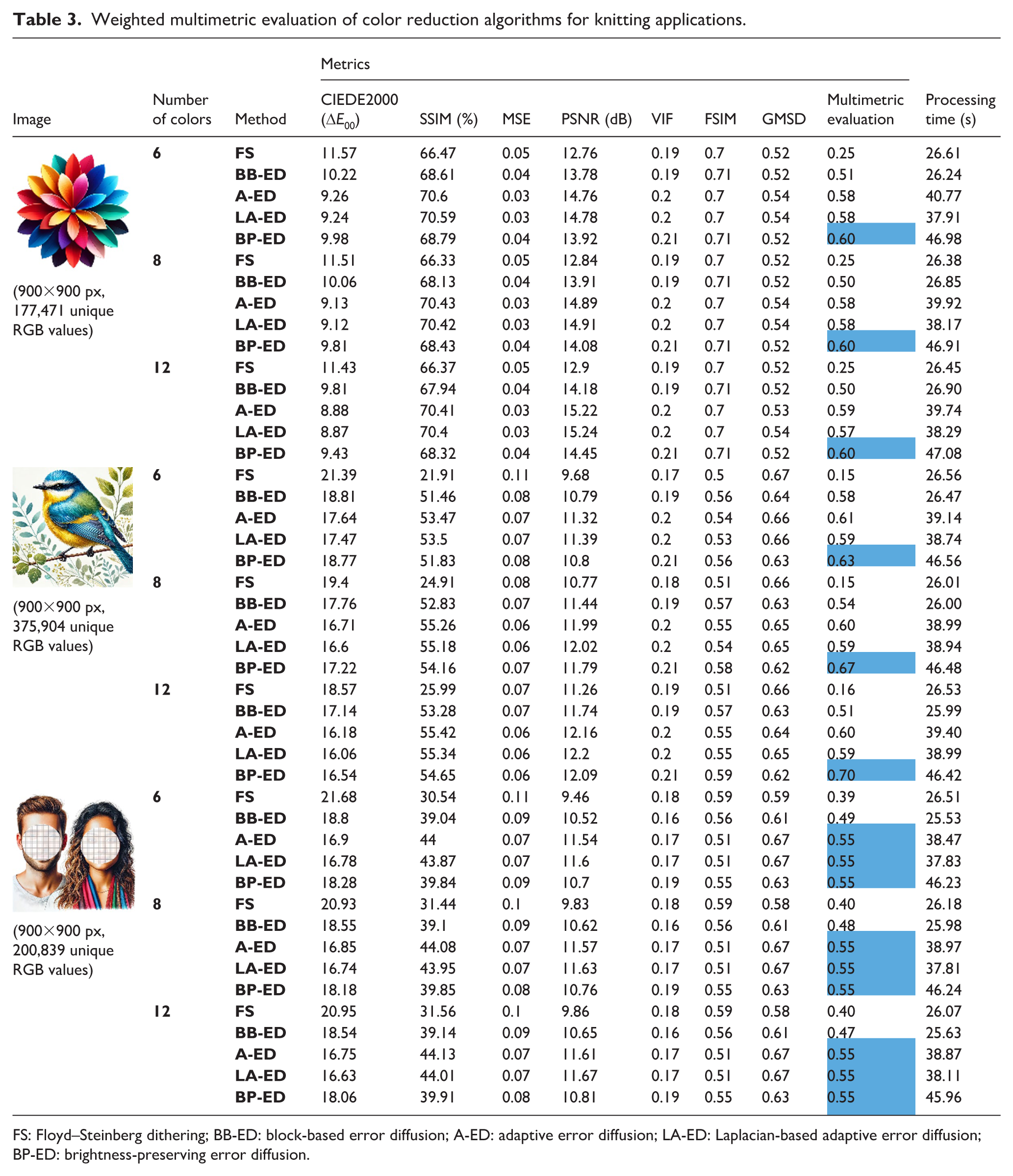

The proposed system consists of a multistage pipeline: the designer selects a target color palette, various error diffusion methods reduce the color space, a set of perceptual quality metrics evaluates the results, and finally, the selected output is converted into a knitting-compatible format. This final stage ensures that the designer’s chosen visual – based on either the system’s recommendation or personal preference – is seamlessly transferred into the production environment.

The system automatically maps the colors in the selected image to predefined knitting codes, as listed in Table 4. These codes vary depending on the number of colors in the palette and serve as identifiers for thread-color associations in the knitting machine. The code-mapped output is exported in BMP format and can be directly uploaded into standard knitting design software. An example of the converted image is shown in Figure 5.

Table 4. Some color codes used in the Raynen knitting design software for creating images.

Figure 5. The resulting images of the digital visual converted into knitting codes. (a) Floyd–Steinberg dithering. (b) Block-based error diffusion. (c) Adaptive error diffusion. (d) Laplacian-based adaptive error diffusion. (e) Brightness-preserving error diffusion.

In the knitting process, each color corresponds to a physical thread loaded into a specific yarn carrier. Once the code-integrated BMP is loaded into the machine, the visual is transformed into a tangible textile product (Figure 6). This stage finalizes the system’s workflow by connecting digital image processing with physical fabric production. Figures 6 and 7 display the knitted outputs of five error diffusion algorithms applied to two different images – a flower and a bird motif – under varying production settings. Figure 6 was produced at 900 × 900 resolution using 18-gauge, whereas Figure 7 reflects a 700 × 700 resolution with 14-gauge, resulting in comparatively lower visual sharpness and tonal continuity. These figures highlight how resolution and machine gauge influence the perceptual outcome of different quantization strategies.

Figure 6. Knitted version of the image (900 × 900 px) using 18 gauge.

Figure 7. Knitted version of the image (700 × 700 px) using 14 gauge.

To support detailed visual analysis of how each algorithm manifests in the physical knitted outputs, Figure 6 presents both the full knitted results generated by the five error diffusion methods and enlarged views cropped from selected high-detail regions of each sample. These enlarged segments highlight stitch-level color distribution and local tonal transitions, enabling a more nuanced comparison. For example, Floyd–Steinberg error diffusion output exhibits noticeable color dispersion beyond the intended motif boundaries due to its aggressive, unconstrained propagation. In contrast, the block-based error diffusion result demonstrates more spatial control and reduced color bleed, although some loss of tonal richness and visual smoothness is apparent. Outputs from adaptive and Laplacian-based adaptive error diffusion preserve clearer gradients and exhibit sharper edge transitions, offering perceptually closer approximations to the original image. Finally, brightness-preserving error diffusion maintains vivid coloration while producing smoother and more homogeneous tonal blending across adjacent regions, making it particularly effective in low-contrast areas.

Comparison with existing approaches

A recent patent by Conti and Didero (WO2022168014A1) proposes a method for adapting adversarial images to Jacquard weaving machines via dimensionality reduction and fixed posterization. Although general-purpose tools like Adobe Photoshop allow for basic color reduction and posterization, these methods are manual and not tailored for yarn-limited textile workflows. Moreover, they lack mechanisms to ensure direct correspondence between digital colors and physical yarn inventories, often requiring advanced user intervention and visual approximation.

In contrast, yarn palette definition is incorporated as a native component of the workflow in the proposed system. This ensures that all reduced colors correspond exactly to available yarns, eliminating the need for post-hoc color matching and enabling a more predictable and production-ready design process.

The system also integrates five error diffusion algorithms, each yielding distinct results in terms of edge clarity and tonal transitions. Designers can quantitatively evaluate these alternatives using seven perceptual quality metrics (CIEDE2000, SSIM, MSE, PSNR, VIF, FSIM, and GMSD), allowing informed decisions during early design stages.

Previous research has primarily focused on geometric or structural aspects of knit design – such as automatic mapping of 3D meshes to stitch patterns, 10 sketch-driven garment layout generation, 11 or warp-knit simulations for form optimization. 14 Interactive CAD and visual programming tools aim to simplify pattern specification, 7,15 but often assume manual resolution of color conflicts.

Other approaches address perceptual or fabrication-related goals. Computational illusion knitting 12 embeds dual-view images via stitch microgeometry, while pixel art adaptation for handicraft fabrication 23 adjusts designs in real time to correct user errors during manual fabrication. Although effective for visual impact or error tolerance, these methods do not explicitly address the constraint of yarn-accurate color mapping.

By focusing on material-aligned color fidelity, the proposed method complements existing systems and fills a critical gap between digital color design and its physical realization in flat-bed knitting environments.

Workflow integration and user accessibility

Traditional workflows for converting digital images into knitting-compatible outputs often rely on multiple software tools. Designers typically use image editors like Photoshop for posterization, followed by manual or third-party tools to generate knitting code. These disjointed steps require significant technical expertise, manual adjustments, and switching between software environments – factors that hinder efficiency and accessibility, particularly for novice users.

The proposed system consolidates the entire process into a single, user-friendly platform. Designers can compare algorithmic results within the interface, evaluate them based on quantifiable metrics, and export the selected output directly as a BMP file compatible with commercial knitting machines. This automatic conversion step eliminates the need for intermediate tools and reduces the cognitive and technical load on the user.

The workflow further maps each color in the selected image to predefined knitting codes (as shown in Table 4), ensuring compatibility with the thread carriers of the knitting machine. Once the BMP file is uploaded, the machine performs the knitting process according to the color mapping. Figure 5 illustrates the code-converted image, and Figures 6 and 7 show the final textile products. Figure 8 presents the visual results of error diffusion algorithms according to 6-, 8-, and 12-color palettes, highlighting the impact of palette size on the final knitted appearance. Figure 9 presents sequential snapshots of the knitting machine in operation, illustrating different stages of producing the image-based pattern.

Figure 8. Visual results of error diffusion algorithms according to 6, 8, and 12 color palettes.

Figure 9. The knitting processes of reduced digital images.

By unifying color reduction, quality evaluation, and code generation, the system improves design throughput and opens up knitting design to a broader range of users. This integration not only increases creative flexibility but also supports sustainable production by reducing the need for material rework and excess yarn stock.

Integration into automated workflows and production scalability

The architecture of the proposed system has been intentionally designed to support both individual designer use and potential integration into industrial-scale workflows. Each core stage of the pipeline – including image input, yarn-constrained color quantization, algorithmic evaluation based on visual quality metrics, and output generation – operates as a modular, file-driven process. These stages can be executed manually through the user interface or automated via batch processing and scripting, enabling scalable deployment across large datasets and production environments.

After quantization and evaluation, the system generates BMP image files where each pixel corresponds to a reduced color mapped from the selected yarn palette. Although these BMP files are not machine instructions themselves, they are structured to be fully compatible with commercial flat-knitting CAD software. Within such platforms, the BMP visuals serve as the foundation for building knit-ready pattern instructions, using existing CAD workflows. This design ensures that designers can work within a familiar environment while benefiting from preprocessed, palette-constrained inputs aligned with machine limitations – such as yarn carrier capacity and resolution constraints.

The separation between algorithmic preprocessing and CAD-based instruction generation makes the system particularly suitable for automation. For instance, given a repository of digital images and predefined palette templates, the system can automatically select quantization methods, compute perceptual scores, and export BMP files for CAD import – all without manual intervention. This positions the workflow as a practical intermediary layer between high-level design intent and technical production configuration.
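The batch scenario described above might be organized along the following lines. This is a sketch under stated assumptions: `methods` maps a method name to a callable that reduces an image to a palette, and `score_methods` is a hypothetical callable that receives the original image together with all reduced outputs and returns one composite score per method, so that normalization across methods can happen inside it. File input and BMP export are omitted.

```python
def select_best_outputs(images, palettes, methods, score_methods):
    """Pick the highest-scoring method for every image and palette combination.

    images:        dict of image name -> image data
    palettes:      dict of palette name -> palette
    methods:       dict of method name -> callable(image, palette) -> reduced image
    score_methods: callable(image, outputs) -> dict of method name -> score
    Returns a dict keyed by (image name, palette name) with
    (winning method, its score, its reduced image) as values.
    """
    best = {}
    for image_name, image in images.items():
        for palette_name, palette in palettes.items():
            # Run every quantization method on the same input.
            outputs = {name: method(image, palette) for name, method in methods.items()}
            # Score all outputs together so cross-method normalization is possible.
            scores = score_methods(image, outputs)
            winner = max(scores, key=scores.get)
            best[(image_name, palette_name)] = (winner, scores[winner], outputs[winner])
    return best
```

Keeping the selection step separate from export mirrors the modular, file-driven structure of the pipeline: the designer can still override the automatic choice before any BMP file is written.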

Nonetheless, tighter integration with production control systems – such as knitting software application programming interfaces (APIs), yarn inventory databases, or scheduling platforms – remains a direction for future work. The current file-based structure and parameterized design provide a solid foundation for developing such extensions, paving the way for fully automated, closed-loop design-to-production pipelines.

Conclusion

This study proposed an integrated image-to-knit system that combines perceptually motivated color quantization, multimetric evaluation, and automatic knitting code generation. By incorporating seven visual quality metrics into a normalized and weighted composite score, the system assists designers in selecting thread-constrained palettes and objectively identifying the most visually faithful representation among five error diffusion algorithms. Among these, brightness-preserving error diffusion showed comparatively strong performance in preserving tonal consistency and perceptual quality, particularly under reduced color palettes. However, context-specific advantages were observed for other methods – such as adaptive and Laplacian-based diffusion – emphasizing the value of maintaining algorithmic flexibility based on design intent.

Beyond evaluation, the system enables seamless transition from visual design to textile fabrication. By mapping reduced colors to yarn carriers and exporting standardized BMP files compatible with knitting CAD tools, the framework bridges the gap between image-based creativity and machine-ready production. Its modular structure supports batch processing, parameter customization, and designer feedback – making it suitable not only for experimental design, but also for integration into scalable production workflows.

Despite these contributions, certain limitations remain. The output quality is inherently bounded by the number of yarn carriers available on the target knitting machine, which may reduce fidelity when processing images with high color complexity. Although the system can handle large and gradient-rich visuals, such cases may require longer processing time and can still lead to visible quantization artifacts under strict palette constraints. Furthermore, the current implementation does not simulate yarn texture, post-knit deformation, or perceptual changes in physical lighting conditions.

Future research will focus on expanding input support to include dynamic yarn availability, enhancing real-time feedback during fabrication, and integrating subjective evaluation by designers and end-users. In addition, the integration of this workflow with 3D knit simulation and yarn texture modeling tools will be explored to strengthen the visual and structural realism of the design-to-production pipeline.

Footnotes

Acknowledgements

This paper is derived from the PhD thesis titled “Deep learning based creation and visualization of knitting code of a flat knitting machine”, prepared under the academic supervision of Professor Erhan Akin at the Computer Engineering Department of the Institute of Science, Fırat University.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.

Data availability statement

This study involves the development and implementation of a software/system application. All relevant data, including system outputs, screenshots, and descriptive content, are provided within the article. Nevertheless, the data supporting the findings of this research are available from the corresponding author upon reasonable request.