Abstract

In this paper, a novel modified optimization algorithm is presented, which combines Nelder-Mead (NM) method with a gradient-based approach. The well-known Nelder Mead optimization technique is widely used but it suffers from convergence issues in higher dimensional complex problems. Unlike the NM, in this proposed technique we have focused on two issues of the NM approach, one is shape of the simplex which is reshaped at each iteration according to the objective function, so we used a fixed shape of the simplex and we regenerate the simplex at each iteration and the second issue is related to reflection and expansion steps of the NM technique in each iteration, NM used fixed value of

Introduction

The Nelder-Mead (NM) is one of the best direct search based optimization technique for unconstrained multivariable complex problems. 1 This strategy was proposed by Nelder and Mead. 2 Due to its derivative free nature and ease of implementation it finds its applications in diverse disciplines, for example, engineering, computer science, chemistry, biosciences, etc.3–12

NM is a very popular heuristic-based optimization strategy, however, a very small number of papers have addressed its convergence. In this regard, a detailed study was carried out in Torczon. 13 An analytical expression for the convergence of pattern search methods was formulated. However, the NM algorithm was not considered in that study because of the varying structure of the simplex in each iteration which directly depends on the cost function. The convergence of NM strategy was further investigated by Lagarias et al., 14 where one and two dimensional strictly convex functions were studied. It was claimed that the algorithm converges for a convex function of one variable, whereas, for the two-variable functions the convergence can also be ensured. McKinnon et al. 15 also highlighted the convergence issues of NM. It was concluded that if the objective function considered in the work of Lagarias et al. 14 is thrice continuously differentiable, then the minimum point can be achieved, otherwise, the algorithm may converge to a non-stationary point. In Lagarias et al. 16 a variant of the NM technique was proposed for the twice continuously differentiable cost function.

Besides the convergence issues, this optimization technique also suffers from dimensionality problems. The convergence performance is proportional to the dimension of the optimization problem. The optimization problem with lower dimension will converge faster as compared to higher dimension problem (see for instance Torczon, 13 Lagarias et al., 16 and Han and Neumann 17 ). This phenomenon is called the curse of dimensionality. 18 Price et al. 19 proposed a variant of NM which shows good results for higher dimensions with better convergence rate. Moreover, in Gao and Han 20 proposed a modified approach in which expansion, contraction, and shrinking become the function of the dimension of an optimization problem.

Many other modifications are also proposed in the literature. In Mehta and Dasgupta 21 a modified NM algorithm was proposed for constrained optimization problems. Online implementation of NM method was proposed for computing systems and chemical processes in Poojary and colleagues22–24 and Xiong and Jutan 25 respectively. In Marsililibelli and Castelli 26 an algorithm was proposed in which the parameters of simplex search are made adaptive according to the shape of the objective function. It also proposed a random search procedure for the initialization of simplex that assures the convergence of global minimum. In Musafer and Mahmood, 27 a free selective simplex for the downhill Nelder Mead simplex algorithm is proposed rather than the determinant simplex that forces its elements to perform a single operation,such as reflection.

Basically NM is a local search technique, however, some hybrid global optimization techniques were developed in Yıldız and colleagues,28–39 which incorporate NM algorithm. The aim of hybridization is to accelerate global convergence.

Although many modifications are proposed in NM that address the issues of convergence and curse of dimensionality, but still there is scope to investigate new modifications that consider simplex structure like Spendley et al. 40 and optimal selection of parameters for NM operations that ensure convergence of the algorithm and give better performance for higher dimensions.

In this paper, a novel modified optimization algorithm is presented, which combines Nelder-Mead (NM) method with a gradient-based approach. In this technique, the simplex is initialized as a non-degenerated structure and its shape does not change with iterations. Therefore, it ensures the convergence of the algorithm even for higher dimensions. Moreover, due to the inculcation of the gradient based-approach, the modified NM algorithm solves the optimization problem in fewer iterations as compared to the NM. The Online implementation of this modified technique is also presented. Finally, this method is applied to a system identification problem where parameters are estimated in mean square error sense. In this case optimization problem is convex with a global minimum. This is a classical optimization problem in adaptive filter theory. 41

The rest of the article is arranged as follows. The NM method is explained in Section 2, whereas, Section 3 presents the proposed algorithm. The online implementation of MNM is given in Section 4, the result discussion is presented in Section 5 and the article is concluded in Section 6.

Nelder-Mead

Nelder-Mead is a well known heuristic optimization method based on simplex structure. A simplex is hyper tetrahedron in n-dimensional space consisting of

Where

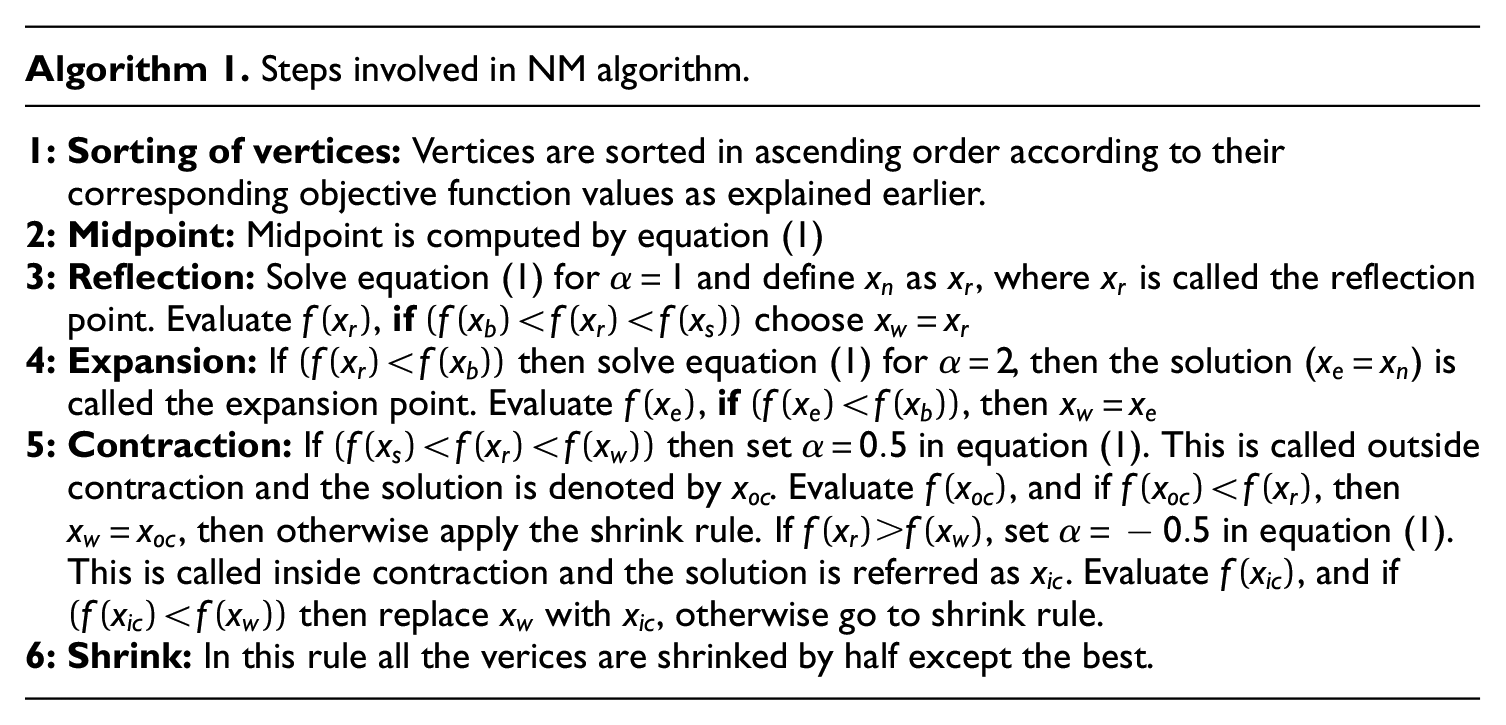

All the rules of NM algorithm are presented in the Figure 1.

Operations performed on the simplex in Nelder-Mead’s algorithm for n = 2.

Modified Nelder Mead

The NM algorithm explained in the previous section requires direction of propagation of the simplex structure and the value of

A modified NM (MNM) algorithm is proposed in this section, which differs from the conventional NM method in two aspects: structure of the simplex and the selection of

Consider a generalized unconstrained optimization problem, given by equation (2)

where

In MNM method the simplex is a hyper tetrahedron structure in

where

The MNM method starts by initializing

where

The

The solution of the above optimization problem is

The Algorithm 2 presents all the steps of MNM.

Parameter estimation using MNM: An online implementation

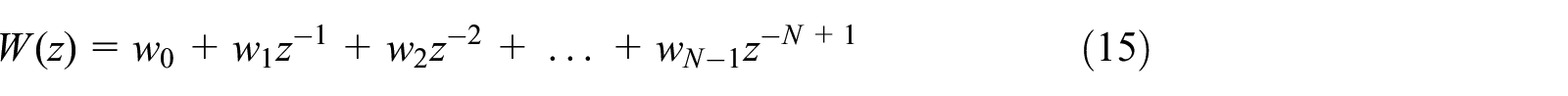

In this section online implementation of the MNM algorithm for parameter identification is discussed.

Problem statement

The algorithm is used to estimate the parameters of an FIR system. The adaptive filter shown in Figure 2 estimates the parameters of the system in such a way that error

where

N-taps transversal adaptive filter. 41

The error

where

Equation (9) is the standard representation of digital filters in the literature. It describes the scalar product of two vectors

Problem formulation

The MNM is implemented for estimating parameters

Equation (10) highlights a very important property of the MNM algorithm. Now the optimization problem of

Now the centroid for the new simplex is determined by equation (12)

where

Results and discussion

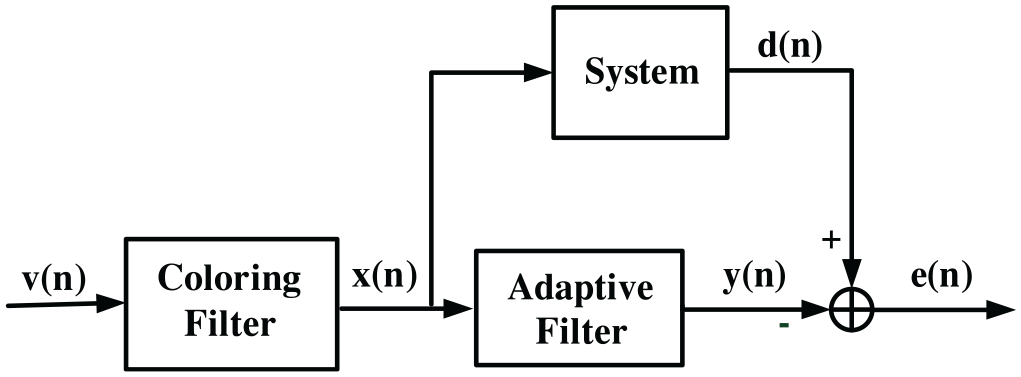

The performance of the proposed algorithm is evaluated by using an example of system identification problem given in Farhang-Boroujeny,

41

which is described by block diagram in Figure 3, where

where

System identification problem.

The aforementioned system identification problem is solved by using MNM, NM, LMS, and NLMS algorithms. The LMS and NLMS are used because they are widely used for such problems, moreover, NLMS is a bench mark for such applications. All the methods are online except NM, which is an offline technique requiring complete statistics of input for the system identification problem. The simulation results show comparison of above mentioned algorithms for identification of 2, 5, 10, and 25 taps FIR systems. The adptive filter taps are randomly initialized for all the techniques. The implementation of NM and MNM is presented in Algorithms 1 and 2 respectively, whereas, the pseudo-code for LMS and NLMS is given in Appendix. For simulations the simplex size for both MNM and NM algorithms is taken as

where

where,

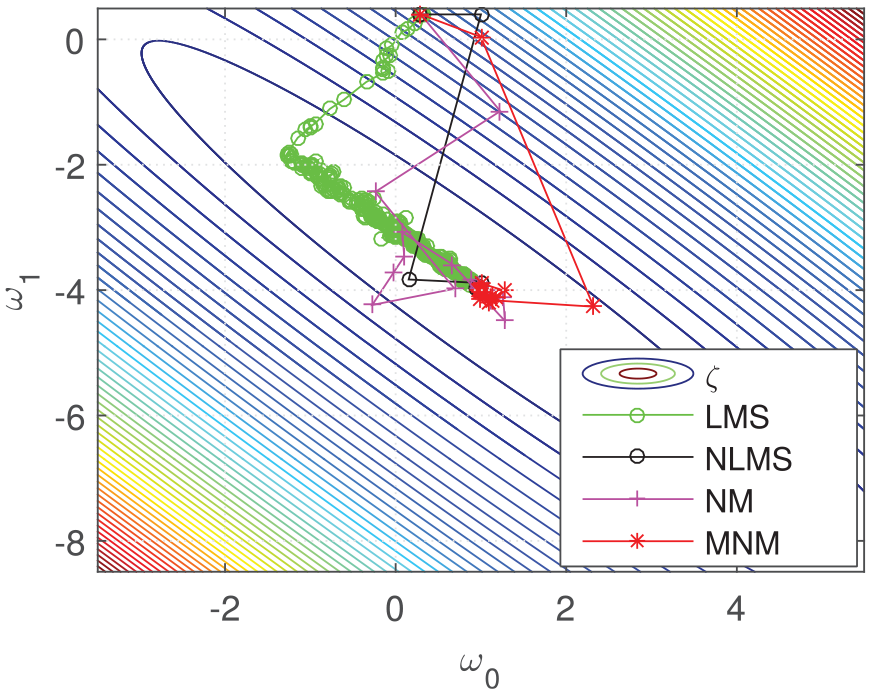

Effect of coloring on the trajectories of filter taps for different algorithms for

Effect of coloring on the trajectories of filter taps for different algorithms for

Effect of coloring on the trajectories of filter taps for different algorithms for

Effect of coloring on the trajectories of filter taps for different algorithms for

The results in Figures 4 to 7 show the convergence of the algorithms in the presence of coloring effect in the input of the system and the adaptive filter. The coloring depends upon the value of

where

The quantitative analysis of the robustness of the algorithms is presented in Table 1 by computing standard deviation (std) of the number of iterations for all the techniques.

Effect of coloring on the number of iterations for all the algorithms.

The results in Figures 8 and 9 show that the mean square error (MSE) for the algorithms decreases with the number of iterations. The error is computed by ensemble average of the sequence given by equation (8) over 100 simulation trials. It can be seen from Figures 8 and 9 that the performance of MNM is better than LMS and even comparable with NLMS. However, the NM algorithm shows poor performance and converges to a relatively higher value of MSE as compared to its counterparts. The robustness of MNM and NLMS due to the coloring effect can also be observed from the results.

Learning curve plot of the algorithms for 2-taps system for

Learning curve plot of the algorithms for 2-taps system for

Figures 10 to 13 show similar results for

Learning curve plot of the algorithms for 5-taps system for

Learning curve plot of the algorithms for 5-taps system for

Learning curve plot of the algorithms for 10-taps system for

Learning curve plot of the algorithms for 10-taps system for

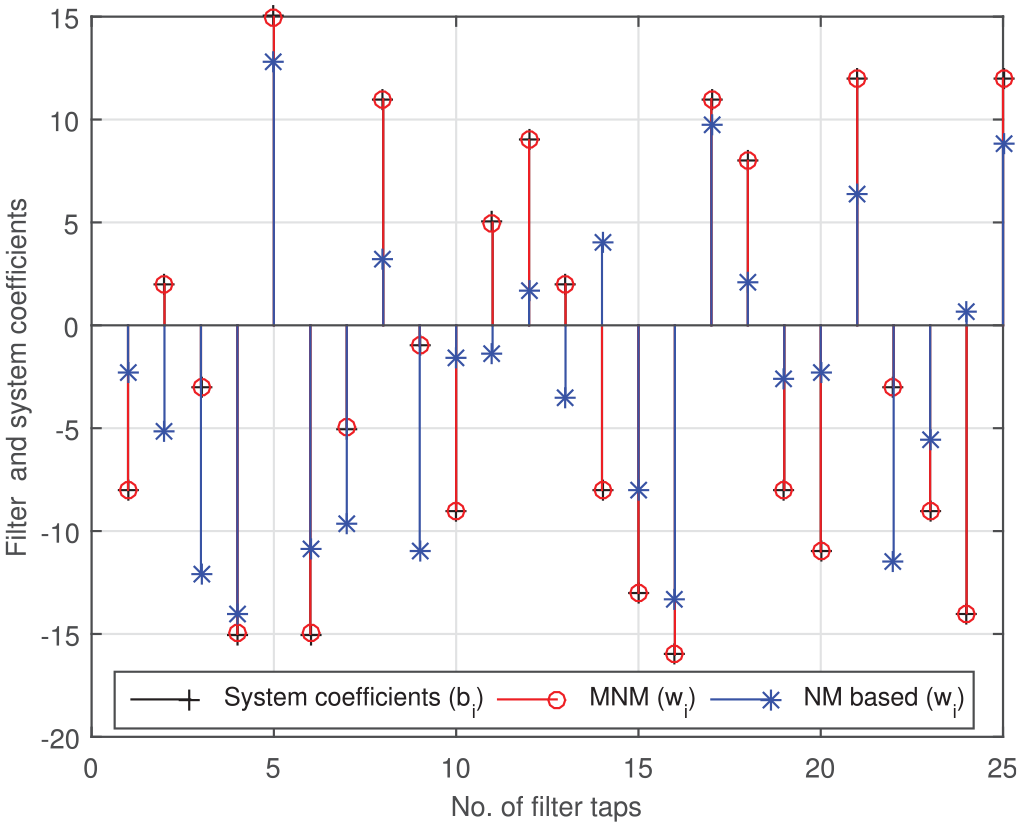

Estimation of 25 taps LP FIR filter.

Conclusion

A novel modified optimization technique based on NM method has been proposed that transforms

The implementation of MNM on nonlinear optimization problems will further validate its effectiveness.

Footnotes

Appendix

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.