Abstract

Accountability measures have quickly entered formal teacher-preparation programs. In response, we introduce structural equation modeling via path analysis to secondary mathematics teacher preparation. We use it as a methodology for testing models of the strength of relationships among program components, with respect to the recommendations of prominent professional organizations and the standards for entering the teaching profession. This 6-year longitudinal study of five cohorts examines how program design sequencing and core components (internal measures) relate to an externally scored, high-stakes teacher licensure portfolio examination intended to measure pedagogical content knowledge and first-year teacher readiness. The model of internal measures and program sequencing explains 49.2% of the variance in the standardized teaching portfolio outcome, with high statistical power and medium to large effect sizes. We discuss implications for teacher preparation with respect to the recommendations of professional organizations, governments, and accreditation standards. The results should stimulate discussion and fuel future research.

Keywords

Introduction

Over the past three decades, extensive educational reform in the United States has led policymakers and leaders to introduce accountability measures that hold teacher education responsible for preparing effective future teachers. Reformers continue to transform teacher education, attending to teacher preparation and practice at local jurisdictional (state or institutional) and national levels (Cochran-Smith et al., 2013; Darling-Hammond, 2017; Placier et al., 2016). These reform efforts include increasing clinical field hours for teacher candidates (TCs), administering performance-based assessments and standardized tests, creating local and national standards, and forming national and international professional organizations. The research-driven efforts of professional organizations have set the foundation for conceptualizing teacher education standards. Meanwhile, jurisdictions within the United States have introduced both macro- and micro-level accountability measures for teacher education programs to measure the extent to which reform efforts meet desired outcomes. At the macro level, most jurisdictions have introduced high-stakes external accountability measures such as standardized examinations (e.g., Praxis II, which assesses content knowledge) and teacher exit/licensure portfolios that evaluate TCs’ readiness to enter the teaching profession (e.g., edTPA [formerly referred to as the educative Teacher Performance Assessment], which assesses teaching ability and subject-area pedagogical knowledge). Examples of micro-level, “within-program” accountability measures include raising minimum grade point averages, instituting required curricula, and administering key assessments to meet local and/or national standards. Teacher preparation programs (TPPs) receive many messages about what they should do, but, as is typical in educational settings, the evidence supporting those mandates and declarations is rarely clear.
Because of this, the field of teacher education has lacked a culture of robust research that accompanies program experimentation and evaluation.

In response to these accountability pressures in the United States, a national collaborative effort, the Mathematics Teacher Education Partnership (MTEP), has focused on transforming secondary mathematics teacher preparation. Beginning in 2011–2012, over 40 secondary mathematics TPPs in the United States worked to create a framework with three goals: (a) establish a national research and development agenda, (b) produce well-prepared first-year mathematics teachers, and (c) align program transformations with multiple professional organization recommendations and standards. While large-scale reform efforts such as the work of the MTEP aim to evaluate TPPs to improve mathematics teacher preparation, such efforts can be critiqued as costly, laborious, and lacking sufficient modeling with respect to measurement (Tatto, 2018).

This has led our research team to adopt a seldom-used quantitative methodology (i.e., structural equation modeling [SEM] via path analysis) to gather evidence regarding accountability and professional recommendations for TPPs. Path analysis allows researchers to estimate both direct and indirect effects along a specified longitudinal pathway, rather than only the correlational relationships of predictors to outcome variable(s) that multiple regression estimates. Our aims are to advance the field methodologically, open dialog for future research, and consider recommendations to strengthen TPP designs and outcomes, both in mathematics teacher education and in teacher education generally, in light of nearly 20 years of calls for greater TPP coherence and deeper study of TPP models (Cochran-Smith, 2004; Dai et al., 2007; Darling-Hammond, 2006; Henry et al., 2012; Tatto et al., 2016). The field has moved slowly on program design research and effective, high-quality evaluation (Tatto, 2018), and we recognize that external critiques and examinations will continue to influence teacher education. TCs, like other preprofessionals, ought to be well prepared, with a strong sense of self-efficacy, ability, and knowledge evidenced by internal program measures (e.g., validated instruments, course grades, major/key assignment rubrics), within a curriculum that renders professional examinations (e.g., Praxis II, edTPA) a formality rather than a roadblock or gatekeeper (De Voto et al., 2021; Moldavan, 2018; Ratner & Kolman, 2016; Swars Auslander et al., 2019; Zelkowski & Campbell, 2020, 2022; Zelkowski et al., 2018; Zelkowski & Gleason, 2018). Because mathematics courses in K-12 and higher education are often gatekeepers for students, our work focuses on designing high-quality TPP models that respond to professional recommendations and ensure TCs are well-prepared first-year teachers who can improve students’ mathematical success.
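To make the contrast with regression concrete, the sketch below applies the path-tracing idea for a recursive (acyclic) model with standardized coefficients: the effect carried by a route is the product of its path coefficients, the direct effect is the single-arrow route, and the indirect effect is the sum over all longer routes. The variable names (`sem1`, `sem2`, `sem3`, `edtpa`) and the coefficients are hypothetical placeholders, not estimates from this study, and the sketch deliberately ignores correlations among exogenous covariates.

```python
from typing import Dict, List, Tuple

Edge = Tuple[str, str]

# Hypothetical standardized path coefficients (illustrative only, NOT this
# study's estimates). Keys are (cause, effect) pairs in an acyclic model.
PATHS: Dict[Edge, float] = {
    ("sem1", "sem2"): 0.40,
    ("sem2", "sem3"): 0.50,
    ("sem1", "edtpa"): 0.10,
    ("sem2", "edtpa"): 0.20,
    ("sem3", "edtpa"): 0.30,
}


def all_routes(paths: Dict[Edge, float], source: str, target: str) -> List[List[Edge]]:
    """Enumerate every directed route from source to target (model must be acyclic)."""
    routes: List[List[Edge]] = []

    def walk(node: str, route: List[Edge]) -> None:
        if node == target:
            routes.append(route)
            return
        for cause, effect in paths:
            if cause == node:
                walk(effect, route + [(cause, effect)])

    walk(source, [])
    return routes


def decompose_effects(paths: Dict[Edge, float], source: str, target: str) -> Tuple[float, float, float]:
    """Return (direct, indirect, total) effects of source on target."""
    direct = paths.get((source, target), 0.0)
    indirect = 0.0
    for route in all_routes(paths, source, target):
        if len(route) == 1:
            continue  # the single-arrow route is the direct effect
        product = 1.0
        for edge in route:
            product *= paths[edge]  # effect along a route = product of its coefficients
        indirect += product
    return direct, indirect, direct + indirect


direct, indirect, total = decompose_effects(PATHS, "sem1", "edtpa")
print(f"direct={direct:.2f} indirect={indirect:.2f} total={total:.2f}")
# direct=0.10 indirect=0.14 total=0.24
```

Here a regression of `edtpa` on `sem1` alone would report a single coefficient, whereas the path model separates the 0.10 that flows directly from the 0.14 that flows through later semesters.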

Study Purpose

The purpose of this study was to examine how a TPP¹ design, in alignment with several professional organizations’ recommendations and standards publications,² influences TCs’ knowledge, skills, and teaching ability readiness as measured by internal measures over sequenced methods courses and field experiences with respect to the external high-stakes, standardized edTPA, particularly the secondary mathematics edTPA (Stanford Center for Assessment, Learning and Equity [SCALE], 2020). For context, the structural design of the portfolio incorporates pedagogical constructs (i.e., integrating concepts of planning, instruction, and assessment) and subject-specific pedagogy that aligns with content and pedagogical standards from professional organizations, such as the National Council of Teachers of Mathematics (NCTM; Moldavan, 2018; Pecheone et al., 2016; Tanguay, 2018). TCs must compile artifacts (e.g., lesson plans, student work samples, video segments) that reveal their abilities to plan, instruct, and assess 3–5 consecutive days of mathematics instruction (SCALE, 2020). In addition, TCs answer commentary prompts that encourage them to justify their instructional and assessment decisions, analyze their teaching effectiveness with reference to evidence, reflect on their use of academic language, and analyze assessment data to demonstrate student learning and guide future instruction.

While edTPA represents one way to measure teaching ability and readiness in response to accountability (American Association of Colleges of Teacher Education, 2020), professional organizations have made other recommendations to address reform initiatives. The Conference Board of the Mathematical Sciences’ Mathematics Education of Teachers II (CBMS, 2012; MET II) states in part, “Prospective middle grades teachers should take two methods courses that address the teaching and learning of mathematics in grades 5–8” (p. 48). Furthermore, the Association of Mathematics Teacher Educators (AMTE, 2017) states in its Standards for Preparing Teachers of Mathematics (SPTM), “Effective programs preparing teachers of mathematics at the high school level provide candidates multiple opportunities to learn to teach mathematics effectively through the equivalent of three mathematics-specific [teaching] methods courses” (p. 141). Both professional organizations acknowledge that most TPPs do not offer the coursework and experiences needed to meet these recommendations. This points to the need for comprehensive reviews of program design efforts relative to the targeted goals for preparing mathematics teachers.

We developed a framework for leveraging structural equation models, via path analyses, to examine the relationships between sequenced mathematics teaching methods courses for TCs and various internal measures used as part of the program’s voluntary participation in the Council for the Accreditation of Educator Preparation’s (CAEP) Specialized Professional Association (SPA) of the NCTM review. Tatto (2018) explains the need for such a framework:

“Recent reviews of [mathematics] teacher education reveal the need for more systematic exploration of programs and their intended outcomes, and for rigorous research directed at producing system-level evidence of program effects. Indeed, national-, state-, or even program-level evaluations of teacher education program [design] effects are rare. When they have been undertaken, evaluations have not shed much light on the acquisition of knowledge [and ability] needed for teaching because they have not measured future teachers’ knowledge [and ability] and have, for the most part, relied on responses to satisfaction surveys.” (p. 410)

While Tatto’s chapter used the international comparison data from the Teacher Education and Development Study in Mathematics, she suggested that strategic program alignment with accreditation demands is more likely to generate graduates who are highly knowledgeable and well-prepared beginning mathematics teachers. Our work directly addresses Tatto’s (2018) call, as we aimed to provide empirical evidence through a new application of a known analysis methodology that examines the sequential relationships between internal program measures and high-stakes performance assessments. Although we recognize several limitations within this study, we underscore the importance of our findings so other researchers can build upon our work through evaluation research and applied empirical methods to move TPPs toward program transformations (MTEP, 2014) with improved TC outcomes and readiness for accountability purposes.

Other scholars have pushed for more rigor and quality in the evaluation of TPPs (Cochran-Smith, 2004; Dai et al., 2007; Henry et al., 2012; Hiebert & Morris, 2012; Tatto et al., 2016; Zeichner, 2012). Knight et al. (2012) wrote about the complexities of assessment and accountability in teacher education. More recently, Laughter et al. (2022) pushed for consideration of the development of the whole TC. We recognize that a single-program analysis may not significantly influence national, state, or local policies. Our work provides empirical evidence through a methodology rarely used in TPP research, with the aim of starting discussions and advancing TPP accountability models. We agree, as many bodies of literature note, that secondary teachers’ mathematical content knowledge preparation is critical. However, this study and its applied methodology focus on the pedagogical content knowledge preparation of TCs. The following research questions are examined in this study:

What are the relationships between mathematics TCs’ performances in program courses measured by key assessments and their performances on a professional licensure examination designed to be an indicator of pedagogical content knowledge?

How do these findings support or further question the professional recommendations in the preparation of secondary mathematics teachers?

A High-Stakes Standardized Measure in Secondary Mathematics Teacher Preparation

Professional licensure portfolios (e.g., edTPA³) have become more common as a quality-control measure for assessing TPP outcomes and readiness for entering the teaching profession. Some evidence suggests that proficiency on these assessments positively correlates with well-prepared beginning teachers (Clotfelter et al., 2007; Darling-Hammond, 2010a, 2010b; Darling-Hammond & Hyler, 2013; Gitomer, 2007; Goldhaber et al., 2017; Shuls, 2017; Wineburg, 2006), while other evidence raises concern about whether standardized assessments measure what they propose to measure or whether they predict early-career teaching effectiveness (D’Agostino & Powers, 2009; Dover & Schultz, 2016; Gitomer et al., 2011, 2021). De Voto et al. (2021) found edTPA to be a strong tool for leveraging program inquiry to better prepare TCs; however, they also found that other TPPs could be simultaneously compliant with and resistant to it. Arguably, licensure portfolios (e.g., edTPA) seem to have more validity for predicting beginning teacher effectiveness than content-only examinations (e.g., Praxis II). However, such assessments only partly succeed in professionalizing teaching, through accountability, in the public’s perception.

Under increased external accountability, many TPPs have struggled to recruit and retain enough mathematics TCs to meet supply demands, especially when not all high-school graduates are well prepared mathematically (Dickey, 2016; United States Department of Education, 2016; Zelkowski, 2011). Moreover, many institutions cannot retain and credential some TCs because those TCs cannot pass professional examinations (Darling-Hammond et al., 1999; National Council on Teacher Quality, 2017, 2018). We argue that well-aligned coursework, internal measures, and coherent program designs prepare TCs to demonstrate their readiness by excelling on licensure examinations rather than struggling with them. These program characteristics may reduce attrition in TPPs and increase the number of well-prepared and credentialed first-year teachers while simultaneously producing accountability evidence regarding program effectiveness.

Context and Conceptual Framework

Constructing a Conceptual Framework for Program Design and Study

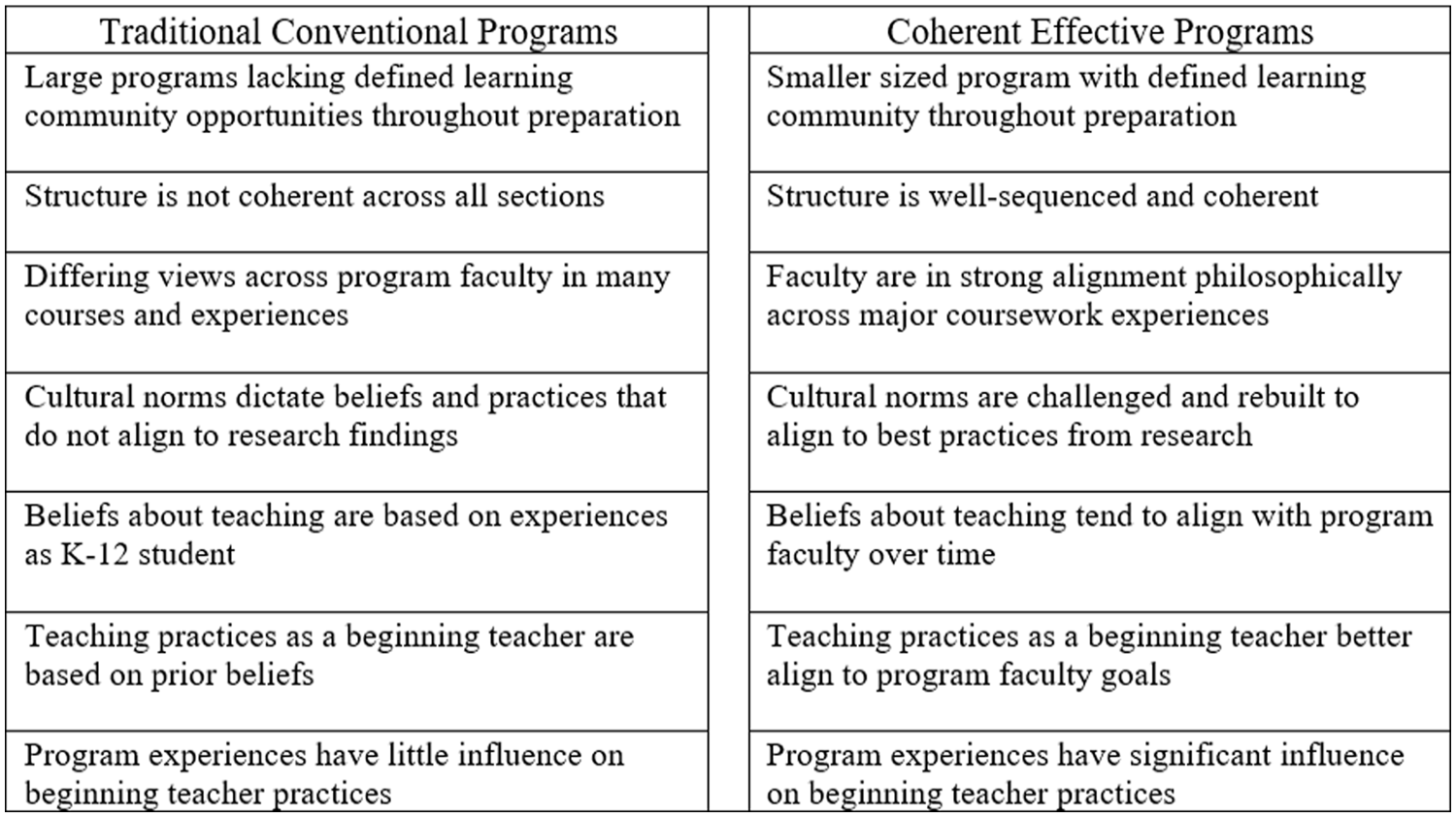

Relying mainly on the work of Tatto (1998) and Darling-Hammond (2006) as the primary drivers, we redesigned a TPP a decade ago, before most current professional recommendation documents existed. Tatto found that TPPs lacked professional norms (e.g., consensus in academic standards, clinical requirements, specific coursework) across institutional programs and suggested that developing common standards and frameworks for assessing TCs could improve TPP outcomes. Tatto further indicated that TPPs are more likely to change TCs’ beliefs about practice when programs have extensively designed assessments, clinical experiences, highly focused coursework, and program faculty with shared visions of the desired outcomes (see Figure 1). Also central to our program design was Darling-Hammond’s (2006) call to replace traditional preparation program models consisting of many unrelated courses. According to Darling-Hammond, TPPs meeting 21st-century needs should exhibit (a) aligned coherence and integration among courses; (b) strong connections between coursework and clinical fieldwork in schools, with extensive and intensely supervised clinical teaching that integrates coursework pedagogy linking theory to practice; and (c) proactive relationships with schools.

Figure 1. Characteristics of Teacher Preparation Programs Utilized for This Study.

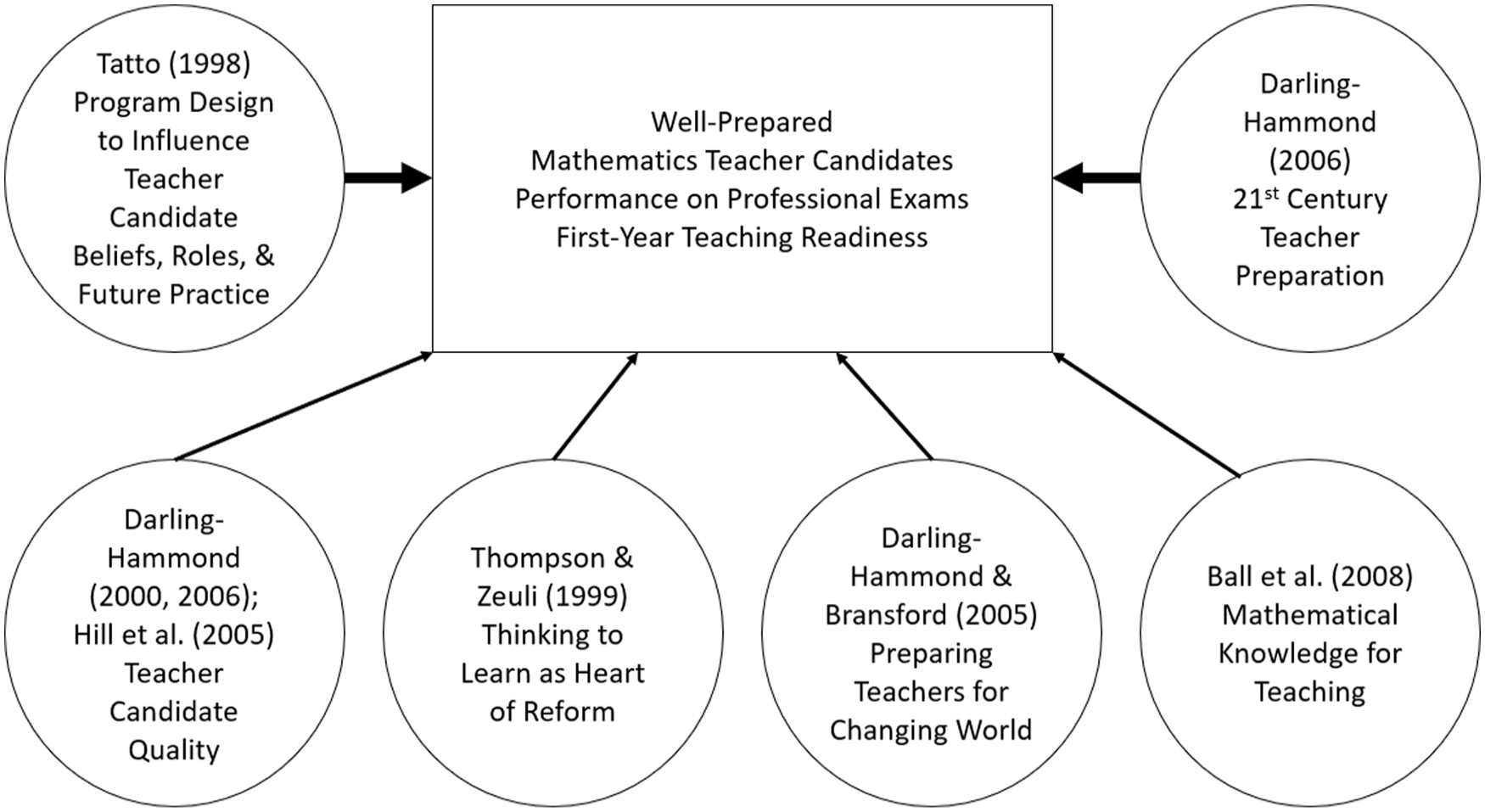

In addition to grounding our program design in literature recommending longitudinal designs with rigorous and differentiated assessments, we drew on contributing literature that provides the secondary drivers for our program design, as depicted in Figure 2. By examining four secondary drivers to narrowly frame program components (e.g., courses, sequence, assignments, assessments, measures), we recognize that TCs require extensive experiences with knowledge of subject (Ball et al., 2008), knowledge of teaching (Darling-Hammond, 2000, 2006; Hill et al., 2005), and knowledge of learners (Thompson & Zeuli, 1999) to become early career professionals who understand teaching and learning as foundational to being a well-prepared teacher (Darling-Hammond & Bransford, 2005).

Figure 2. Conceptual Driver Diagram With Primary Drivers (Darling-Hammond, 2006; Tatto, 1998) and Contributing Secondary Drivers.

We framed our desired outcomes to guide our research and launched data collection in Fall 2012, prompted by the onset of the MTEP (discussed next). Acknowledging the growing literature on professional recommendations published since this study began, we elaborate on how these guiding documents work together to form a growing consensus. We then share the specifics of our program design and analyses in the “Methods” section.

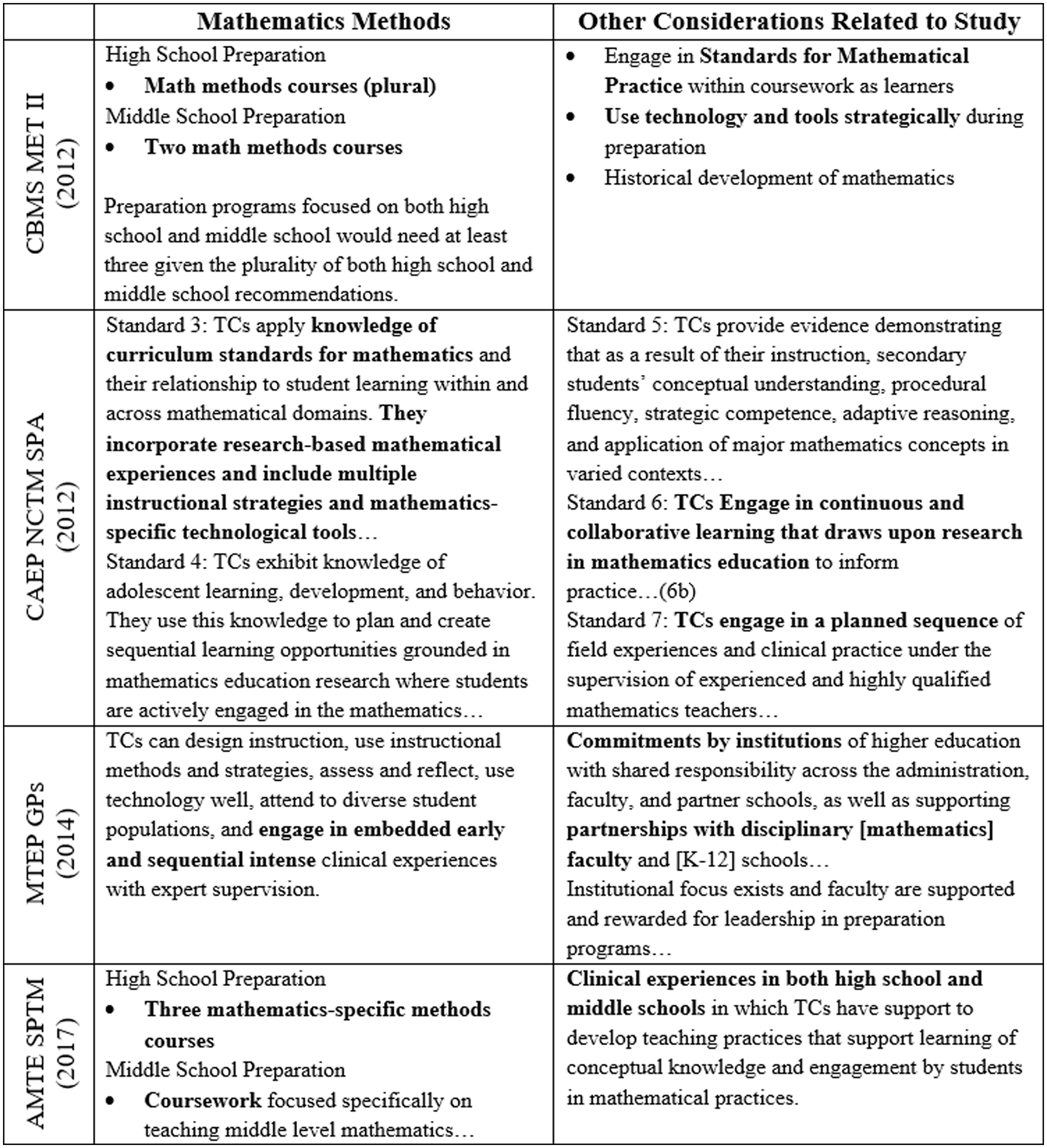

Advancing National Consensus for Mathematics Teacher Preparation

All economically developed countries, whether their teacher preparation is centralized or decentralized, have recommendations for TPPs (Loughran & Hamilton, 2016). Historically in the United States, the CBMS (2001, 2012) MET I and MET II documents have set the mathematics content coursework recommendations for TPPs. Before 2012, only the CAEP SPA NCTM had published standards informing pedagogical and clinical experiences, and these did not address coursework. In 2011–2012,⁴ the MTEP became the largest collaborative movement for secondary mathematics TPPs, leveraging networked improvement communities (Bryk et al., 2010; Martin & Gobstein, 2015) in Research Action Clusters to move programs toward shared, collaboratively developed Guiding Principles (MTEP, 2014). The MTEP Guiding Principles set high demands that differentiate a well-prepared beginning mathematics teacher from one who is barely qualified. More recently, the AMTE (2017) SPTM identified standards for well-prepared beginning mathematics teachers; however, no U.S. TPPs, state departments of education, or accreditation bodies currently require the CBMS MET I or II recommendations, the AMTE SPTM, and/or the MTEP Guiding Principles. Some states require the CAEP SPA NCTM standards, while others do not. Collectively, a cross-cutting analysis of these four documents suggests several qualities of TPPs that produce graduates who are pedagogically well prepared to enter the teaching profession (see Figure 3). By studying seven exemplary TPPs, Darling-Hammond (2012) found several factors correlated with highly prepared teachers entering the profession. The main factors include (a) a vision of high-quality teaching embedded within all coursework and clinical experiences, (b) at least 30 weeks of clinical experiences and student-teaching opportunities that support ideas from coursework, and (c) strategies embedded in coursework for confronting students’ initial beliefs about teaching practice or the profession. We recognize that many scholars beyond those our work relies upon here have worked in the realm of TPPs, and that such scholarship has guided and framed the CBMS MET, MTEP, CAEP SPA NCTM, and AMTE SPTM for TPPs.

Figure 3. Cross-Sectional Analysis of Professional Organization Recommendation and Standards Documents.

Methods

Description of Programmatic Setup

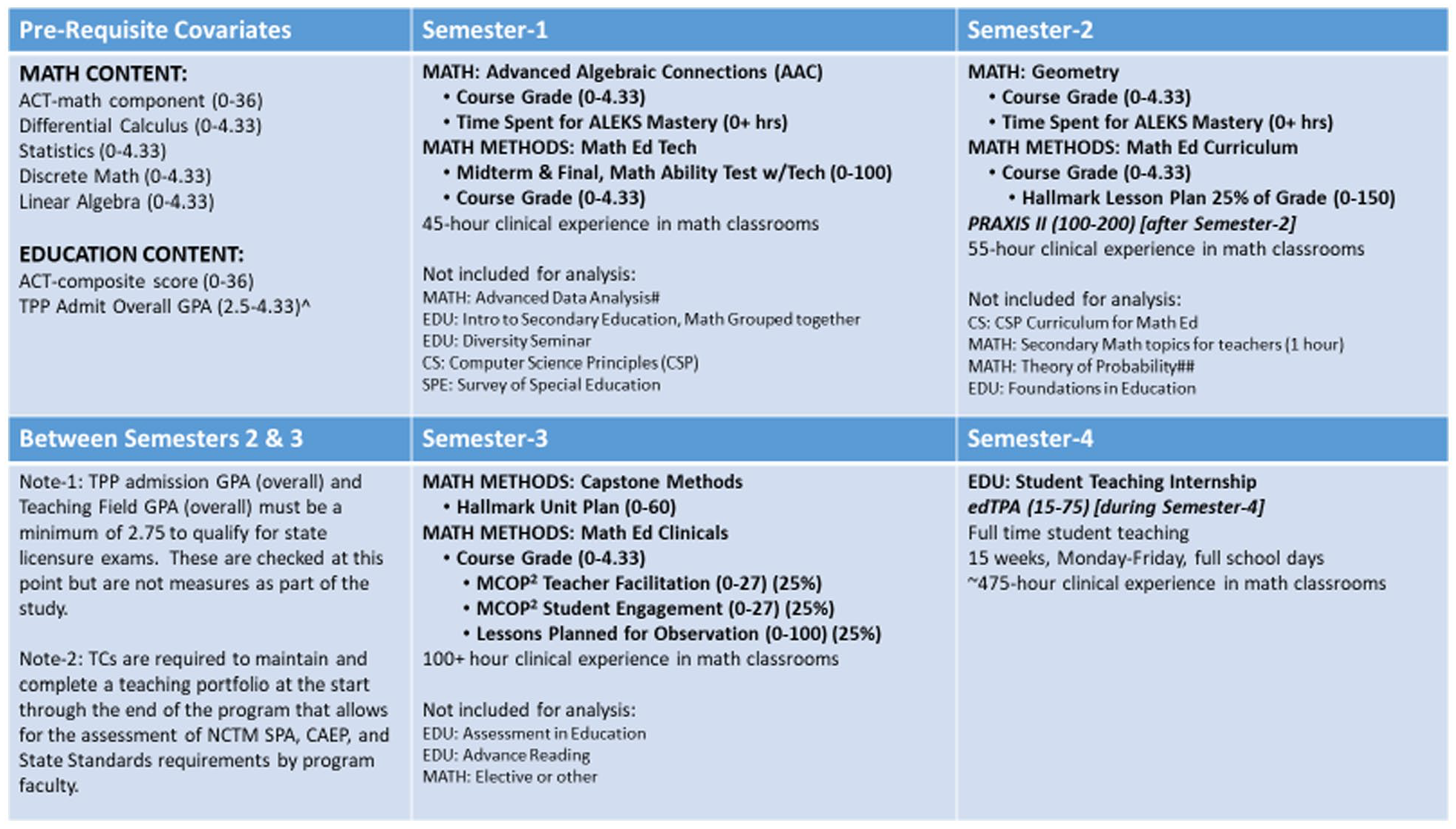

Our initial program review at The University of Alabama (a large research institution whose surrounding area, within a 15-mile radius, spans urban, suburban, and rural settings) took place in 2008–2009, based on the CBMS (2001) MET I. Planning and revisions continued through 2012 to align with the CAEP SPA NCTM 2012 standards and the CBMS (2012) MET II. In 2012, we launched this longitudinal study’s initial data collection with the first TC cohort, planning to have the program voluntarily evaluated by the CAEP SPA NCTM 2 years later. As the study progressed, the program faculty reaffirmed the program’s fit with the more recent recommendations of the MTEP (2014) Guiding Principles and the AMTE (2017) SPTM. The program consists of three sequential, strategically designed semesters, each pairing a mathematics methods course with a clinical field experience, followed by a fourth-semester, full-time student-teaching internship (i.e., 15 weeks, 5 full school days per week).⁵

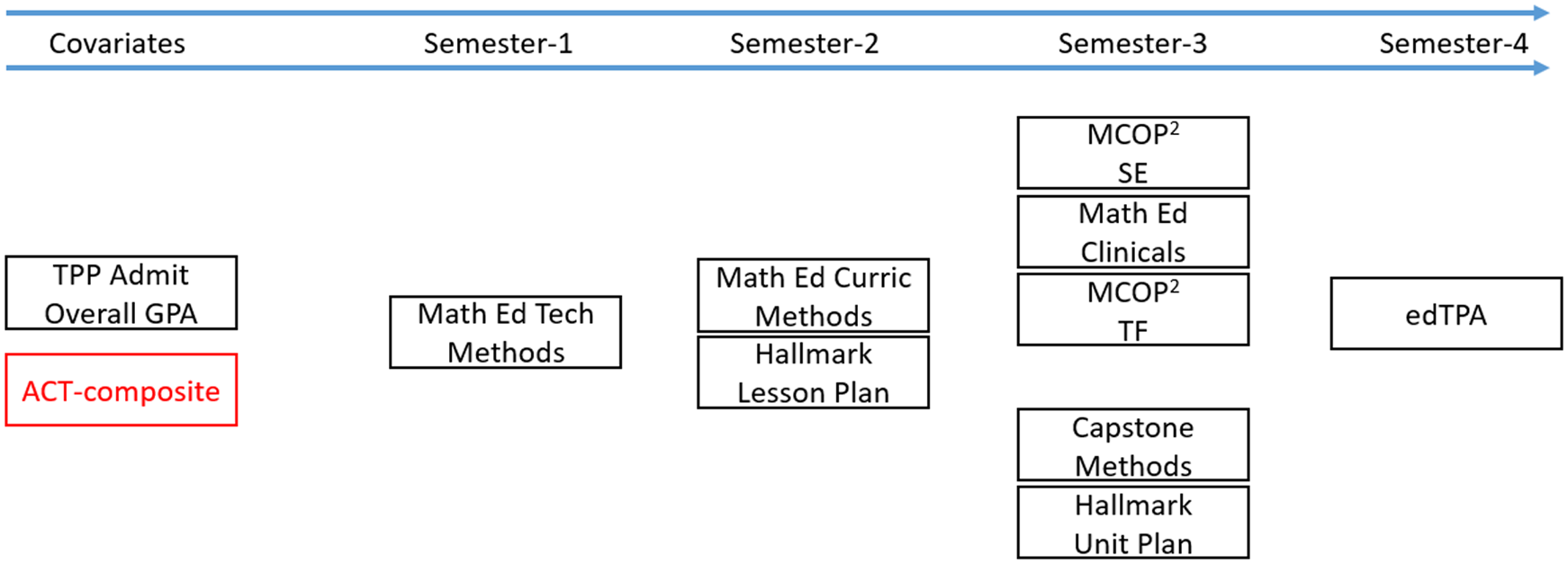

The cohort model for pedagogical preparation lasts 2 years (four semesters), during which TCs enroll in mathematics teaching methods courses and clinical field experiences, along with other coursework required for licensure (e.g., education foundations, mathematics content, special education). TCs must maintain minimum grades (i.e., grade point average; see Figure 4). TCs who hold a bachelor’s degree and/or a major in mathematics and seek initial certification at the master’s degree level enter the same 2-year cohort sequence of teaching methods courses and paired clinical field experiences. Figure 4 depicts the 2-year program design, including the key coursework and assessments.

Figure 4. Four-Semester Curriculum Pathway and Requisite Pedagogical Assessments.

In Semester 1 of the TPP, TCs complete a mathematics methods course (Math Ed Tech). The methods course focuses on technologies for teaching mathematics utilizing dynamic tools (e.g., GeoGebra, spreadsheets, TI-Nspire) and emphasizes the domains of knowledge of content and curriculum and technology content knowledge (Ball et al., 2008; Niess et al., 2009). TCs complete 45 clinical field hours observing a mathematics teacher (middle grades or high school) and working with students in small groups.

In Semester 2, TCs complete a mathematics methods course (Math Ed Curriculum) covering curriculum, lesson planning, and mathematical task development. The methods course focuses on planning for and enacting NCTM’s (2014) eight effective mathematics teaching practices (MTPs). Using a key assessment rubric, this course extensively assesses TCs’ lesson planning and task-selection abilities as a prerequisite for Semester 3. The course primarily targets the knowledge domains of content and teaching and of content and curriculum, with knowledge of content and students as a secondary focus to prepare TCs for Semester-3 field experiences. TCs complete 55 clinical hours working with a cooperating mentor mathematics teacher (in a different grade band, middle grades or high school, from that of Semester 1) in planning and nonevaluative classroom teaching.

In Semester 3, TCs complete the third mathematics methods course (Capstone Methods), which focuses on student learning, task implementation, and sequenced lesson planning (i.e., unit planning), targeting knowledge of content and teaching, knowledge of content and curriculum, and knowledge of content and students. TCs complete 120 clinical hours (Math Ed Clinicals) in middle grades or high school. Program faculty formally evaluate TCs’ lesson plans and their implementation in the field using the Mathematics Classroom Observation Protocol for Practices (MCOP²), which measures student engagement in mathematical practices and teacher facilitation of the MTPs (Gleason et al., 2015, 2017; NCTM, 2014; National Governors Association Center for Best Practices & Council of Chief State School Officers, 2010; Zelkowski & Campbell, 2020; Zelkowski et al., 2020, 2021). In total, TCs complete at least 200 clinical/practicum-based hours in Semesters 1–3 before the Semester-4 teaching internship (15 weeks, 5 days/week, full school days, ≈475 hours) in a middle grades or high-school mathematics classroom. During the internship, TCs submit their edTPA portfolios before the 10th week for external standardized scoring. Overall, the TPP requires many experiences, courses, measures, grades, and artifacts that develop TCs into well-prepared beginning mathematics teachers. A brief overview of each course and of the assessment measures used in the analysis is provided in the appendix.
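The clinical hours described above can be tallied directly. The per-semester figures come from the text; the per-day rate is only what the stated approximation (≈475 hours over 15 weeks of 5 school days) implies, not a reported schedule.

```python
# Clinical hours reported for the pre-internship semesters (Semesters 1-3).
FIELD_HOURS = {"Semester 1": 45, "Semester 2": 55, "Semester 3": 120}

# Internship figures from the text: 15 weeks x 5 full school days, about 475 hours.
INTERNSHIP_DAYS = 15 * 5
INTERNSHIP_HOURS_APPROX = 475

pre_internship_hours = sum(FIELD_HOURS.values())
implied_hours_per_day = INTERNSHIP_HOURS_APPROX / INTERNSHIP_DAYS

print(pre_internship_hours)                            # 220, consistent with "at least 200"
print(round(implied_hours_per_day, 1))                 # 6.3 (implied, approximate)
print(pre_internship_hours + INTERNSHIP_HOURS_APPROX)  # 695 total clinical hours (approximate)
```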

Data Sources

Deidentified data were compiled for all program-completing TCs (N = 59) across five cohorts (size range 7–16) over 6 years. The sample does not include TCs who never finished the program (i.e., missing the outcome measure). This work was part of a continuous improvement model that included eight CAEP SPA NCTM assessments: (a) Praxis examination; (b) mathematics course grades; (c) MCOP²; (d) clinical experience portfolio; (e) edTPA; (f) math skills assessment; (g) Hallmark Unit Plan; and (h) Hallmark Lesson Plan.

Each TC completed the same sequence of coursework and study measures. We held study measures and the program model constant for all five cohorts (see Figures 4 and 5). We considered two general performance covariates as proxies for overall prior achievement: overall grade point average (a TPP admission requirement) at the start of Semester 1 and the standardized ACT composite (formerly called American College Testing) score from high school.

Figure 5. Sequential Program Design, Covariate, Measures, and Internal Measures.

Analysis Methods

Our study was designed to understand the quantified, sequential relationships between program components, key assessments, course performance, and the externally assessed secondary mathematics edTPA. We acknowledge the rigorous validation process of the edTPA⁶ as a measure of applicable pedagogical content knowledge and teaching readiness.

We used path analysis, a special case of SEM, as an inferential tool: input variables enter the model in their time-ordered sequence, and fitting the empirically observed data to a theoretical path model lets researchers evaluate the assumptions built into that model and draw logical inferences about the inputs. While the models themselves do not prove causality, such analyses provide evidence for or against the plausibility of those assumptions (Olobatuyi, 2006; Sarwono, 2017). Three conditions are necessary when using path analysis to examine the structural relationships that researchers assume among variables: (a) an association must exist between the variables of analysis; (b) a time order must exist, in that the cause must precede the outcome measure in time; and (c) the association between cause and effect variables must be nonspurious, meaning that no variable external to the study should be able to explain the effect measure alone (Babbie, 2004; Heise, 1969, 1975; Mill, 1973). Condition (c) is critical to consider, as some variables outside the model cannot be controlled. We used maximum likelihood estimation, the preferred estimator for the SEM models, after checking our data for normality. Each variable was checked across seven categories: Q-Q plots, extreme outliers in boxplots, skewness and kurtosis, the z-scores of skewness and kurtosis (each statistic divided by its standard error), and the Shapiro-Wilk statistic.
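The skewness, kurtosis, and boxplot checks can be sketched in standard-library Python. This sketch assumes the common bias-adjusted sample skewness and excess kurtosis with their usual standard errors (the formulas used by packages such as SPSS), and it treats "extreme" boxplot outliers as points more than 3 interquartile ranges beyond the quartiles; both are assumptions about the exact conventions used in the study. A |z| above roughly 1.96 is often read as a departure from normality at α = .05. The Shapiro-Wilk test is omitted here; in SciPy it is available as `scipy.stats.shapiro`.

```python
import math
import statistics
from typing import Dict, List


def skew_kurtosis_z(x: List[float]) -> Dict[str, float]:
    """Bias-adjusted skewness (G1) and excess kurtosis (G2) with z-scores (stat / SE)."""
    n = len(x)
    if n < 4:
        raise ValueError("need at least 4 observations")
    mean = statistics.fmean(x)
    m2 = sum((v - mean) ** 2 for v in x) / n  # central moments
    m3 = sum((v - mean) ** 3 for v in x) / n
    m4 = sum((v - mean) ** 4 for v in x) / n
    g1 = m3 / m2 ** 1.5
    g2 = m4 / m2 ** 2 - 3.0
    skew = g1 * math.sqrt(n * (n - 1)) / (n - 2)
    kurt = ((n + 1) * g2 + 6) * (n - 1) / ((n - 2) * (n - 3))
    se_skew = math.sqrt(6 * n * (n - 1) / ((n - 2) * (n + 1) * (n + 3)))
    se_kurt = 2 * se_skew * math.sqrt((n * n - 1) / ((n - 3) * (n + 5)))
    return {"skew": skew, "z_skew": skew / se_skew,
            "kurt": kurt, "z_kurt": kurt / se_kurt}


def extreme_outliers(x: List[float], k: float = 3.0) -> List[float]:
    """Values more than k interquartile ranges beyond the quartiles."""
    q1, _, q3 = statistics.quantiles(x, n=4)  # default 'exclusive' quartile method
    iqr = q3 - q1
    return [v for v in x if v < q1 - k * iqr or v > q3 + k * iqr]


scores = [float(v) for v in range(1, 11)] + [100.0]  # hypothetical data
print(extreme_outliers(scores))                   # [100.0]
print(skew_kurtosis_z(scores)["z_skew"] > 1.96)   # True
```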

Our decision to use path analysis is predicated on explicit theories that describe teacher preparation as a series of longitudinal events producing knowledge, skills, and abilities that are assessed internally in programs and externally on the professional licensure examination. In early analyses, we isolated rubric-level measures and some grades and related them to the outcomes via regression and various analyses of variance (Zelkowski et al., 2018; Zelkowski & Gleason, 2018). Discussions and feedback from the field on these early conference papers ultimately led us to path analysis. Path analysis provides an advanced method for examining variables and testing assumptions about the program structure across multiple points in time (semesters) in relation to the outcome measure of interest. The methodology is particularly suitable for our study because it examines a sequence of experiences and internal measures over time leading to the high-stakes accountability outcome (i.e., edTPA). In addition, path analysis estimates the magnitude and significance of each path and provides information for examining the assumptions within the models.

In our study, we examine only the relationships that exist within the models. In a full SEM design, one would need a control-group model and a treatment-group model to understand the total direct and indirect effects of the treatment(s). We recognize the challenge of using both models within a single program, especially because enrolling some students, but not their cohort peers, in alternative pedagogy courses would be impractical and unethical. Thus, we present the data without a direct comparison (control) to understand the relationships between the paths and variables with respect to our research questions.

Path Model Considerations—Determining Variable Inclusion/Exclusion

Our sample included 59 TCs who completed the program in five different cohorts over 6 years. We faced two critical challenges before constructing our path models for analyses: (a) overall course grades versus using key CAEP SPA NCTM assessments within a course and (b) the maximum total paths of a model based on our total variables of consideration in relation to our sample size (see Kline, 2015; Wolf et al., 2013).
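The tension in part (b) comes from counting degrees of freedom. For a path model with only observed variables, a standard count is df = p(p + 1)/2 − q, where p is the number of observed variables and q is the number of freely estimated parameters (path coefficients, exogenous variances and covariances, and one error variance per endogenous variable). The sketch below assumes that parameterization; the 7-variable, 12-path model is hypothetical and is not this study's model. Under this count, a baseline with two correlated covariates predicting one outcome has df = 0 (it is just-identified, so it reproduces the observed covariances exactly), and each deleted path returns one degree of freedom.

```python
def path_model_df(n_observed: int, n_paths: int, n_exogenous: int,
                  n_exogenous_covariances: int) -> int:
    """Degrees of freedom for an observed-variable (recursive) path model.

    df = p(p + 1)/2 - q, where q counts path coefficients, exogenous
    variances, exogenous covariances, and one error variance per
    endogenous variable.
    """
    moments = n_observed * (n_observed + 1) // 2  # unique variances + covariances
    n_endogenous = n_observed - n_exogenous
    free_parameters = (n_paths + n_exogenous + n_exogenous_covariances
                       + n_endogenous)
    return moments - free_parameters


# Baseline: 2 correlated covariates -> 1 outcome (3 observed variables).
print(path_model_df(3, n_paths=2, n_exogenous=2, n_exogenous_covariances=1))   # 0
# Hypothetical 7-variable model with 12 directed paths ...
print(path_model_df(7, n_paths=12, n_exogenous=2, n_exogenous_covariances=1))  # 8
# ... after deleting 3 paths: each deletion frees one degree of freedom.
print(path_model_df(7, n_paths=9, n_exogenous=2, n_exogenous_covariances=1))   # 11
```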

A challenge with part (a) was determining whether to use a complete course grade as the performance indicator or a key CAEP SPA NCTM assessment within a course. First, we considered Semester 2 in the path model. The Hallmark Lesson Plan was removed from consideration because the Math Ed Curriculum methods course grade captures this key assessment (i.e., 30% of the course grade) and other major aspects of planning for teaching (e.g., task development, teacher questioning, mathematical flexibility, problem-solving choices, professional reflections). Because the edTPA has many components and requires far more than a single lesson plan, the course grade represented the better choice. Second, we considered the Semester-3 course grade for the Math Ed Clinical Course versus the MCOP² observation score of teaching. The course grade comprised (a) the three scored lesson plans (25%); (b) the evaluated observations of the implemented lesson plans (50%; MCOP²); and (c) the TC’s mentor classroom teacher evaluation (10%), with the remaining 15% coming from participatory activities. The clinical course grade captures many components of edTPA and program expectations rather than the MCOP² observation protocol scores alone. Third, we considered the Hallmark Unit Plan rubric total and the overall Capstone Methods course grade. This was the easiest of the challenges informing the edTPA model decisions. The Hallmark Unit Plan key assessment is the most closely aligned with edTPA expectations and was the only assignment held constant in the Capstone Methods course for all cohorts throughout the study. The other methods course grade components varied from semester to semester depending on the professor of record.
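The composition of the Semester-3 clinical course grade shows why it was preferred over the MCOP² score alone: the observations contribute only half of the grade. The weights below come from the text; the component scores and the 0-100 scale are hypothetical assumptions for illustration.

```python
import math

# Weights of the Semester-3 clinical course grade as described in the text.
CLINICAL_WEIGHTS = {
    "lesson_plans": 0.25,        # three scored lesson plans
    "mcop2_observations": 0.50,  # evaluated observations of implemented lessons (MCOP²)
    "mentor_evaluation": 0.10,   # mentor classroom teacher evaluation
    "participation": 0.15,       # participatory activities
}


def clinical_course_grade(scores: dict) -> float:
    """Weighted course grade from component scores on an assumed 0-100 scale."""
    total_weight = sum(CLINICAL_WEIGHTS.values())
    if not math.isclose(total_weight, 1.0):
        raise ValueError(f"weights sum to {total_weight}, not 1")
    return sum(w * scores[name] for name, w in CLINICAL_WEIGHTS.items())


# Hypothetical candidate: strong planning and participation, weaker observations.
example = {"lesson_plans": 90, "mcop2_observations": 80,
           "mentor_evaluation": 100, "participation": 100}
print(clinical_course_grade(example))  # 87.5
```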

With N = 59 TCs, we first addressed part (b) by determining an acceptable number of model variables and paths. This required sound rationales for selecting or eliminating each variable, favoring measures least prone to measurement error (i.e., the most reliable measures). Using the desired effect sizes (medium to large; 0.3–0.5) and WebPower (Statistical Power Analysis Online; http://psychstat.org/semchisq), which builds on Satorra and Saris (1985), we initially set statistical power at 0.80 and alpha at 0.05 to determine the range of degrees of freedom to consider for a model. This method produced a range of 12.12–45.31 degrees of freedom. Raising the power to 0.90 yielded a range of 5.979–26.52 degrees of freedom. Given these results, we aimed for a path model with at least six but at most 20 degrees of freedom. Ultimately, we let the multiple fit indices produced after analyzing the path model (e.g., comparative fit index, Tucker–Lewis Index, Goodness of Fit Index, Root Mean Square Error of Approximation) determine the quality of the model fit (Xia & Yang, 2019).
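Several of the fit indices named above can be computed from the model and baseline (independence-model) chi-square statistics. The sketch uses the common textbook formulas for RMSEA (with N − 1 in the denominator; some software uses N), CFI, and TLI; GFI is omitted because it requires the full sample and model-implied covariance matrices. All chi-square values in the usage example are hypothetical, not this study's results.

```python
import math


def rmsea(chi2: float, df: int, n: int) -> float:
    """Root mean square error of approximation (N - 1 convention)."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


def cfi(chi2_model: float, df_model: int, chi2_base: float, df_base: int) -> float:
    """Comparative fit index relative to the independence (baseline) model."""
    d_model = max(chi2_model - df_model, 0.0)
    d_base = max(chi2_base - df_base, d_model)
    return 1.0 if d_base == 0 else 1.0 - d_model / d_base


def tli(chi2_model: float, df_model: int, chi2_base: float, df_base: int) -> float:
    """Tucker-Lewis index (non-normed fit index)."""
    ratio_base = chi2_base / df_base
    return (ratio_base - chi2_model / df_model) / (ratio_base - 1.0)


# Hypothetical values for a model with N = 59 and df = 8.
N = 59
print(round(rmsea(12.0, 8, N), 3))        # 0.093
print(round(cfi(12.0, 8, 100.0, 10), 3))  # 0.956
print(round(tli(12.0, 8, 100.0, 10), 3))  # 0.944
```

With only 59 cases, RMSEA in particular is known to be unstable, which is one reason to weigh several indices together rather than any single cutoff.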

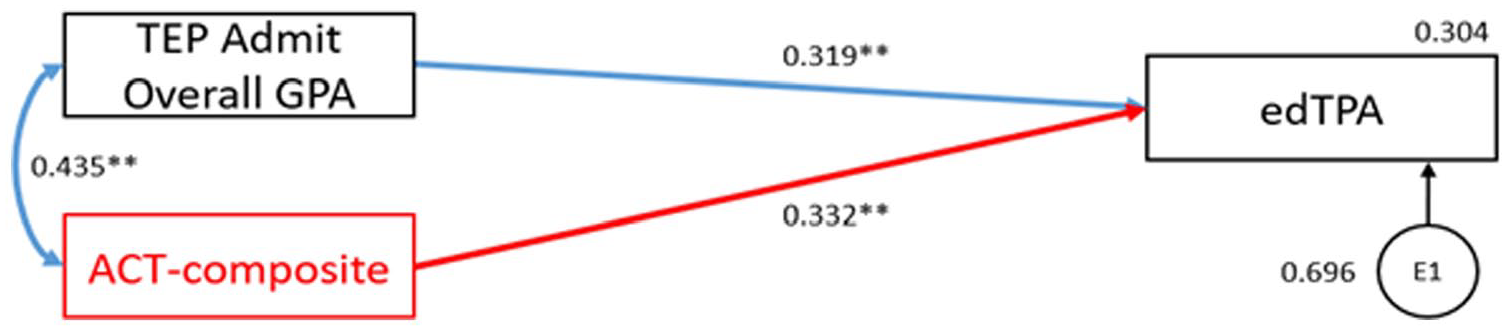

Figure 6 presents the covariates’ relationships to the outcome measure and their direct coefficients on the licensure exam before the program variables are added. It serves as a baseline for understanding the effects of the program variables and course sequence beyond the relationship of the covariates to the outcome measure. The covariates’ relationship to each other is denoted with a double-headed path. The combined effect (R²) of all exogenous (independent) variables on each endogenous (dependent) variable is labeled adjacent to that variable’s rectangle, and the unexplained variance is labeled adjacent to the error term. Figure 6 depicts a baseline model with two moderately correlated covariates (0.435) and two significant standardized coefficients (0.319; 0.332) that together explain 30.4% of the variance in the edTPA standardized pedagogical outcome variable. The baseline model leaves 69.6% of the variance unexplained by the covariates and attributable to other variables.

Covariate Model (Prior Achievement) Related to edTPA Scores With all Values in the Path Model as Standardized Beta Regression Weight Coefficients (Direct Paths).
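The 30.4% baseline can be recovered from the numbers reported for Figure 6. For standardized variables, the variance explained by two correlated predictors equals the sum of the squared path coefficients plus twice their product times the predictors' correlation, R² = b₁² + b₂² + 2·b₁·b₂·r₁₂. The sketch below plugs in the reported coefficients (0.319, 0.332) and covariate correlation (0.435); which coefficient belongs to which covariate does not matter here because the formula is symmetric.

```python
def r_squared_two_predictors(b1: float, b2: float, r12: float) -> float:
    """R² of an outcome regressed on two correlated predictors, from standardized
    path coefficients b1, b2 and the predictors' correlation r12."""
    return b1 ** 2 + b2 ** 2 + 2 * b1 * b2 * r12


if __name__ == "__main__":
    r2 = r_squared_two_predictors(b1=0.319, b2=0.332, r12=0.435)
    print(f"explained: {r2:.3f}, unexplained: {1 - r2:.3f}")  # explained: 0.304, unexplained: 0.696
```

The cross-product term (about 0.092 here) is the share of explained variance that the two covariates hold in common, which is why the coefficients cannot simply be squared and summed.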

Building the edTPA Model—Rationale and Decision-Making

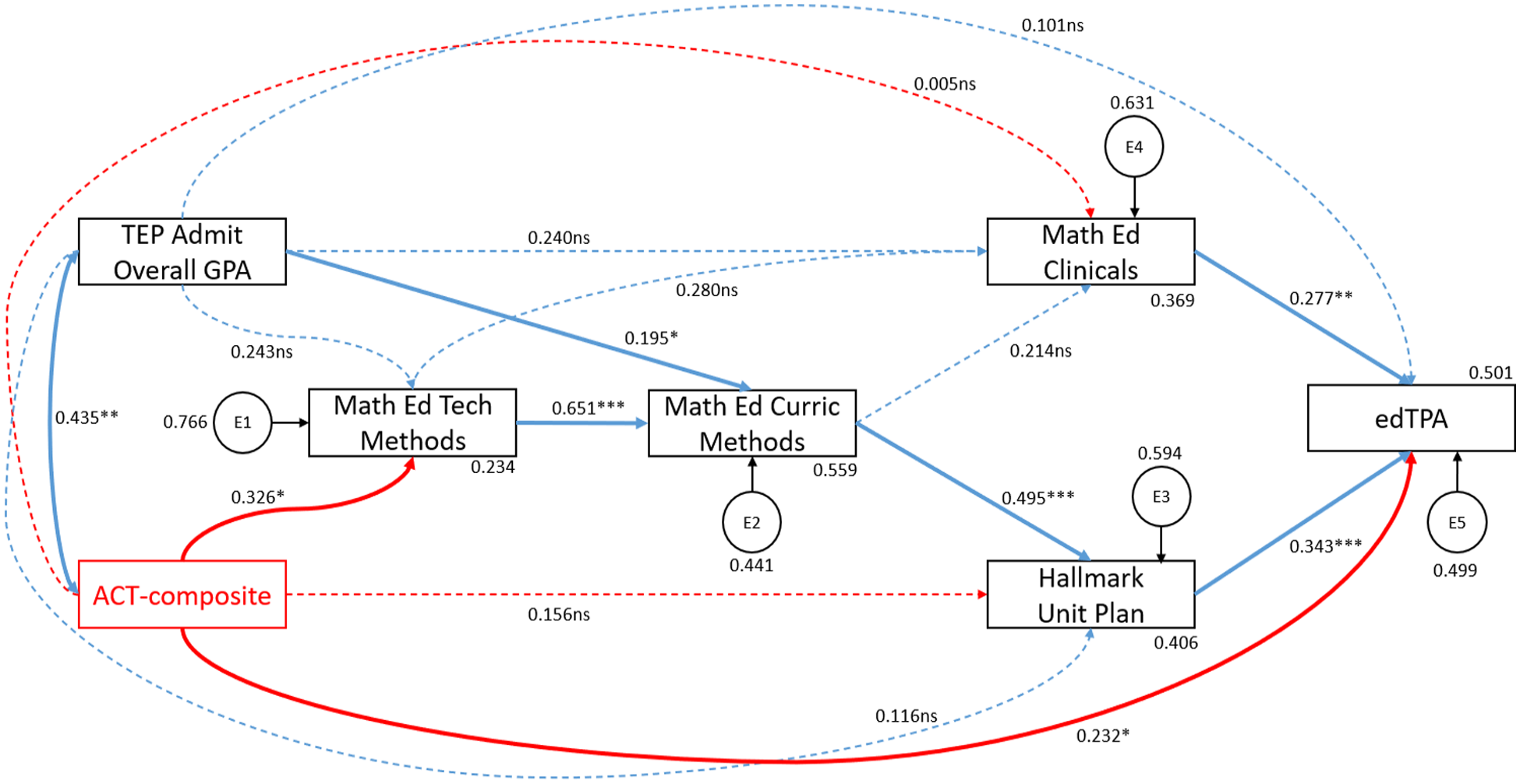

Figure 7 presents our initial edTPA pedagogical content knowledge path model, for which we describe our process and decisions regarding the included paths. We identified three very small coefficients (nonsignificant paths) in Figure 7 and refined the edTPA model by deleting these three paths, which reduced the total variance explained only slightly, from 50.1% to 49.2% (see Figure 8). In light of the earlier discussion of variable inclusion and exclusion, we placed direct paths from our two covariates to the variables of Semesters 1, 2, and 3.

Initial Path Model With Standardized Parameter Coefficients and Direct Effects Related to edTPA Scores With all Values in Path Model as Standardized Beta Regression Weight Coefficients (Direct Paths).

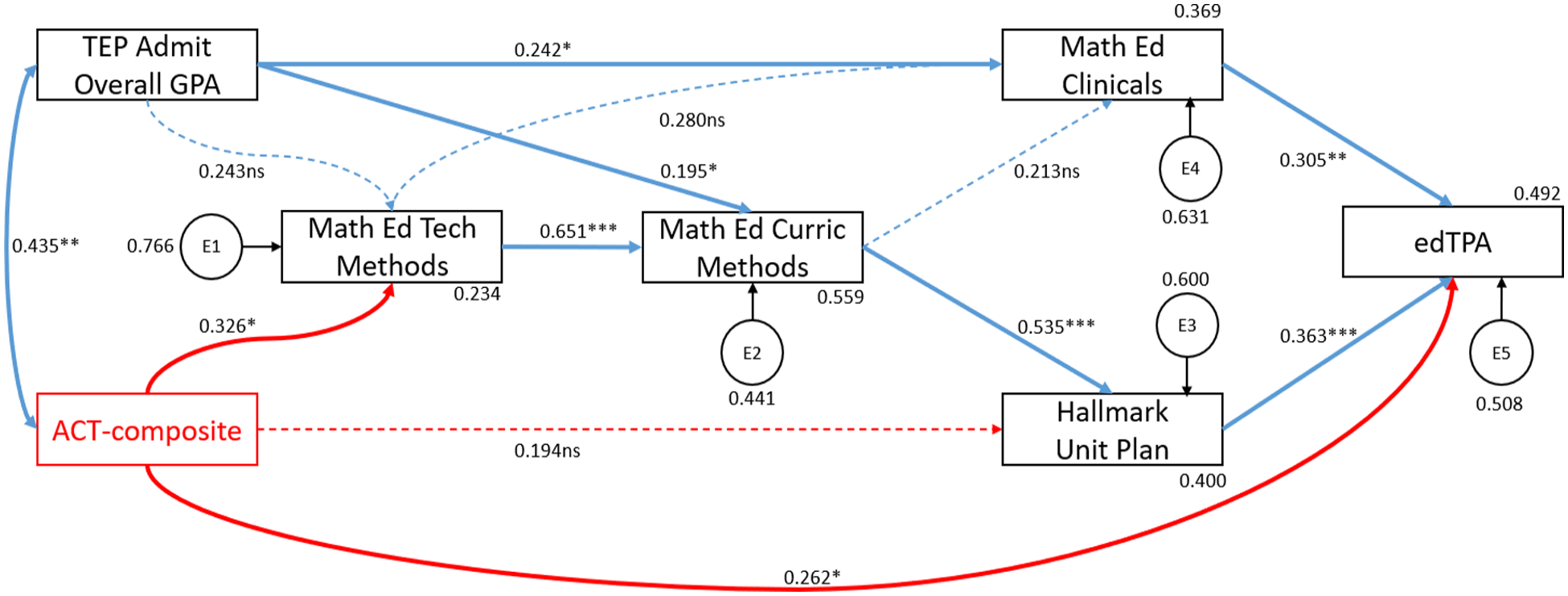

Refined Path Model With Standardized Parameter Coefficients and Direct Effects Related to edTPA Scores With all Values in Path Model as Standardized Beta Regression Weight Coefficients (Direct Paths).
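The path-deletion step can be illustrated with a small sketch. In a recursive path model on standardized variables, each endogenous variable's path coefficients solve the normal equations R_pp·β = r_py built from the correlation matrix, and its R² is β·r_py. Dropping a path removes a predictor from that system, so R² can only stay the same or fall, as in the 50.1% to 49.2% change above. The correlation matrix, variable names, and helper functions are hypothetical; this is an ordinary least-squares illustration, not the estimator or data behind Figures 7 and 8.

```python
def solve(a: list[list[float]], b: list[float]) -> list[float]:
    """Solve a small linear system by Gaussian elimination with partial pivoting."""
    n = len(a)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def standardized_paths(corr, names, outcome, parents):
    """Regress one endogenous variable on its parents using a correlation matrix.

    Returns (standardized path coefficients by parent name, R²).
    """
    idx = {name: i for i, name in enumerate(names)}
    p = [idx[name] for name in parents]
    r_pp = [[corr[i][j] for j in p] for i in p]
    r_py = [corr[i][idx[outcome]] for i in p]
    betas = solve(r_pp, r_py)
    r2 = sum(b * r for b, r in zip(betas, r_py))
    return dict(zip(parents, betas)), r2


# HYPOTHETICAL correlation matrix (not from Table 1): three predictors and an outcome.
NAMES = ["x1", "x2", "x3", "y"]
CORR = [
    [1.00, 0.30, 0.20, 0.40],
    [0.30, 1.00, 0.25, 0.35],
    [0.20, 0.25, 1.00, 0.10],
    [0.40, 0.35, 0.10, 1.00],
]

if __name__ == "__main__":
    full_betas, full_r2 = standardized_paths(CORR, NAMES, "y", ["x1", "x2", "x3"])
    reduced_betas, reduced_r2 = standardized_paths(CORR, NAMES, "y", ["x1", "x2"])
    print({k: round(v, 3) for k, v in full_betas.items()}, round(full_r2, 3))
    print({k: round(v, 3) for k, v in reduced_betas.items()}, round(reduced_r2, 3))
```

Full SEM software typically estimates all equations simultaneously by maximum likelihood, but for a recursive model the standardized path coefficients match those obtained equation by equation.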

Results

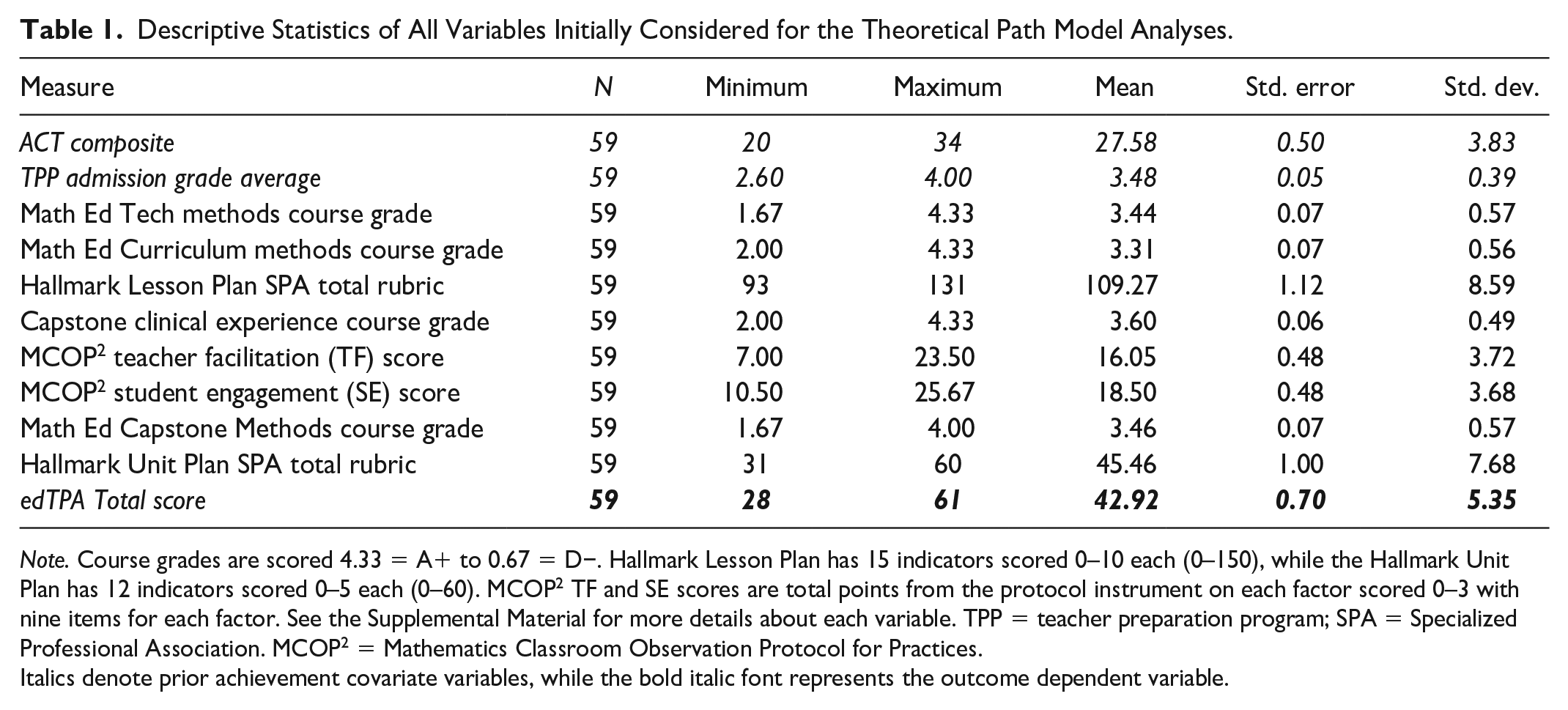

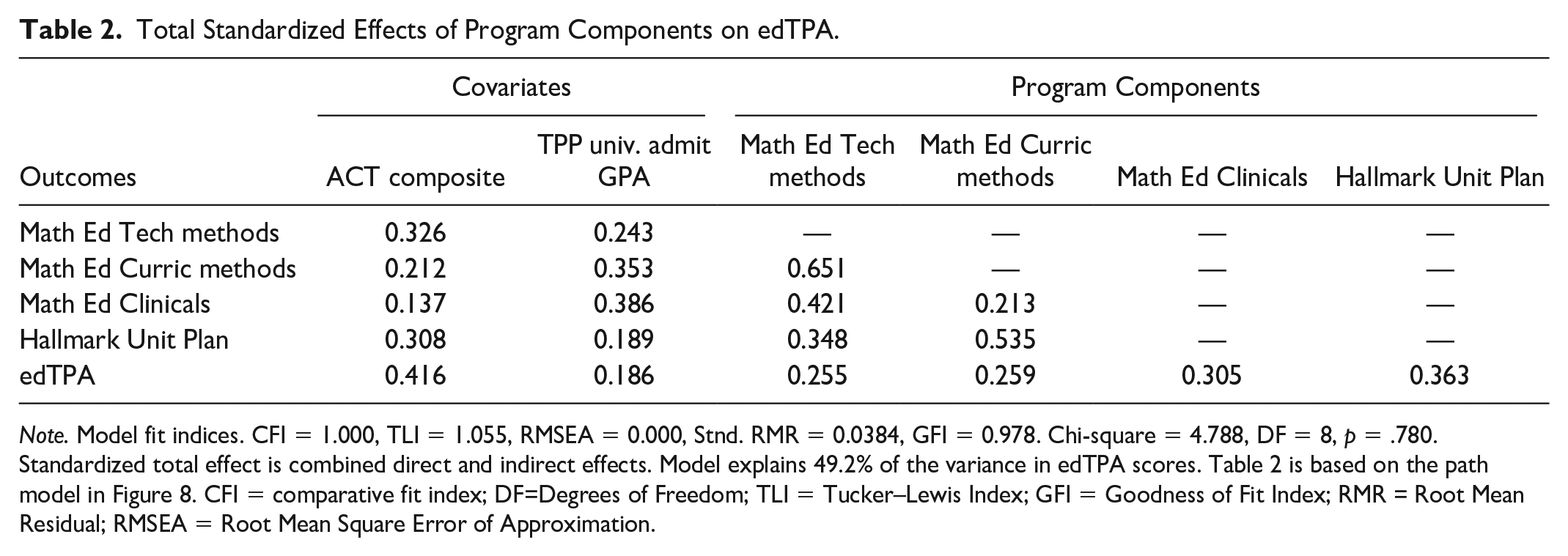

To support our conclusions, we present Table 1 (descriptive statistics) and Table 2 (total standardized SEM model statistics), which show the program components and their relationships to the professional licensure examination. We also reference Figures 6, 7, and 8, which present the path model results, with statistically significant paths shown as bold solid lines and nonsignificant paths as dashed lines. The model fit indices (see Hooper et al., 2008; Kenny & McCoach, 2003; Kline, 2015) and the standardized total effects indicate a strong model. The edTPA model fit indices reported in the note to Table 2 indicate an excellent fit, and the model explains 49.2% of the variance in edTPA scores. Our interpretation of Table 2 considers the total effects (total = direct + indirect) of program components on the edTPA overall score.
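A minimal sketch of how a standardized total effect such as those in Table 2 is assembled: in a recursive path model, the total effect of one variable on another is the sum, over every directed path between them, of the product of the path coefficients along that path. The direct effect is the single-edge path; everything else is indirect. The variable names below echo the program components, but the coefficients are invented for illustration and are not the values in Figure 8 or Table 2.

```python
# HYPOTHETICAL recursive path model; coefficients are invented for illustration.
EDGES = {
    ("gpa", "tech_grade"): 0.30,
    ("tech_grade", "curric_grade"): 0.40,
    ("tech_grade", "edtpa"): 0.15,
    ("curric_grade", "edtpa"): 0.25,
    ("gpa", "edtpa"): 0.20,
}


def total_effect(cause: str, effect: str, edges: dict = EDGES) -> float:
    """Total effect of `cause` on `effect` in a recursive (acyclic) path model:
    the sum, over every directed path, of the product of its coefficients."""
    children: dict[str, list[tuple[str, float]]] = {}
    for (src, dst), coef in edges.items():
        children.setdefault(src, []).append((dst, coef))

    def walk(node: str) -> float:
        if node == effect:
            return 1.0
        return sum(coef * walk(dst) for dst, coef in children.get(node, []))

    return walk(cause)


def direct_effect(cause: str, effect: str, edges: dict = EDGES) -> float:
    return edges.get((cause, effect), 0.0)


if __name__ == "__main__":
    total = total_effect("gpa", "edtpa")
    direct = direct_effect("gpa", "edtpa")
    print(f"total={total:.3f} direct={direct:.3f} indirect={total - direct:.3f}")
    # total=0.275 direct=0.200 indirect=0.075
```

Enumerating paths is fine for a model of this size; for larger models the same totals can be obtained with the matrix expression (I − B)⁻¹ − I on the coefficient matrix B.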

Descriptive Statistics of All Variables Initially Considered for the Theoretical Path Model Analyses.

Note. Course grades are scored from 4.33 = A+ to 0.67 = D−. The Hallmark Lesson Plan has 15 indicators scored 0–10 each (0–150), while the Hallmark Unit Plan has 12 indicators scored 0–5 each (0–60). MCOP² TF and SE scores are total points from the protocol instrument on each factor, which has nine items scored 0–3 (0–27). See the Supplemental Material for more details about each variable. TPP = teacher preparation program; SPA = Specialized Professional Association; MCOP² = Mathematics Classroom Observation Protocol for Practices; TF = teacher facilitation; SE = student engagement.

Italics denote prior achievement covariate variables, while the bold italic font represents the outcome dependent variable.

Total Standardized Effects of Program Components on edTPA.

Note. Model fit indices: CFI = 1.000, TLI = 1.055, RMSEA = 0.000, SRMR = 0.0384, GFI = 0.978; χ² = 4.788, df = 8, p = .780. The standardized total effect combines direct and indirect effects. The model explains 49.2% of the variance in edTPA scores. Table 2 is based on the path model in Figure 8. CFI = comparative fit index; df = degrees of freedom; TLI = Tucker–Lewis Index; GFI = Goodness of Fit Index; SRMR = standardized root mean square residual; RMSEA = root mean square error of approximation.
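Two of the reported fit statistics can be checked directly from χ² = 4.788, df = 8, and N = 59. The sketch below computes the chi-square p value with the closed form that holds for even degrees of freedom, and the RMSEA point estimate. Dividing by N − 1 in RMSEA is one common convention (some programs use N); here the choice does not matter because χ² is below df, so RMSEA is zero either way.

```python
import math


def chi2_sf_even_df(x: float, df: int) -> float:
    """Upper-tail p value of a central chi-square with EVEN df (closed form):
    P(X > x) = exp(-x/2) * sum_{i < df/2} (x/2)^i / i!."""
    if df <= 0 or df % 2:
        raise ValueError("closed form requires a positive even df")
    half = x / 2
    term, total = 1.0, 1.0
    for i in range(1, df // 2):
        term *= half / i
        total += term
    return math.exp(-half) * total


def rmsea(chi2: float, df: int, n: int) -> float:
    """RMSEA point estimate; some programs divide by N instead of N - 1."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


if __name__ == "__main__":
    chi2, df, n = 4.788, 8, 59
    print(f"p = {chi2_sf_even_df(chi2, df):.3f}")  # p = 0.780
    print(f"RMSEA = {rmsea(chi2, df, n):.3f}")    # RMSEA = 0.000
```

The same feature, χ²/df = 4.788/8 ≈ 0.60 < 1, explains why TLI exceeds 1: TLI = (χ²_b/df_b − χ²/df)/(χ²_b/df_b − 1), and a χ²/df below 1 pushes the numerator past the denominator. The baseline-model χ²_b is not reported, so TLI and CFI cannot be recomputed here.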

Clarifications Related to Analyses for Answering Research Questions

We recognize that this study is limited to one TPP at one institution, examining how a program design relates to a professional licensure examination in light of recommendations for multiple sequential methods courses with paired field experiences, which respond to reform initiatives for TPP effectiveness, evaluation, and accountability (e.g., Henry et al., 2012; Knight et al., 2012; Tatto et al., 2016). Therefore, generalizations beyond our study and comparative analyses of other TPPs are not possible. Instead, our analyses and the discussion that follows rest on the assumptions built into our model-building process, and they test whether those assumptions are plausible as informative designs for researching TPPs. As previously mentioned, the path model does not prove causality; it shows the degree of relationship among variables, paths (structure), and outcome measures. Such analyses either support or call into question the plausibility of our assumptions, and we interpret them to answer our research questions. Thus, when we refer to direct or indirect effects, we mean the relationships found in our model, acknowledging that our study lacks a control comparison. We also reiterate that high school ACT scores should not be part of TPP entrance standards. Rather, the ACT is a useful measure of TCs’ knowledge and skills for designing programs that prepare TCs to succeed on high-stakes examinations and to be well-prepared first-year teachers.

Research Question 1

In this section, we refer readers to Table 1, Table 2, and Figure 8 for the program’s relationships to pedagogical content knowledge (edTPA). We address the following research question: What are the relationships between mathematics TCs’ performances in program courses measured by key assessments and their performances on a professional licensure examination designed to be an indicator of pedagogical content knowledge?

Pedagogical Content Knowledge, edTPA

The TPP admission grade point average and the ACT composite score together explain 30.4% of the variance in edTPA scores. With the program design variables and paths discussed above added, the pedagogical content knowledge model (Figure 8) explains 49.2% of the variance in edTPA scores, an increase of 18.8 percentage points, or a relative improvement of nearly two-thirds (about 62%) in variance explained. Based on the fit indices (Table 2) and the total variance explained (Figure 8), we classify the model as excellent.
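The comparison between the covariate-only baseline and the full model is simple arithmetic on the two reported R² values, sketched below. The percentage-point and relative gains restate the text; Cohen's f² for the increment, (R²_full − R²_reduced)/(1 − R²_full), is included only as one conventional way to express the gain and is not a statistic the study reports.

```python
def r2_increment(r2_reduced: float, r2_full: float) -> dict[str, float]:
    """Compare the variance explained by a reduced model and a fuller model."""
    gain = r2_full - r2_reduced
    return {
        "percentage_points": 100 * gain,
        "relative_gain": gain / r2_reduced,
        "cohens_f2": gain / (1 - r2_full),  # Cohen's f² for the R² increment
    }


if __name__ == "__main__":
    for key, value in r2_increment(0.304, 0.492).items():
        print(f"{key}: {value:.3f}")
    # percentage_points: 18.800
    # relative_gain: 0.618
    # cohens_f2: 0.370
```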

However, factors outside our path model play a large role in edTPA performance. For example, variation in TCs’ performance in the Math Ed Curriculum methods course could partly reflect the course being taught by two different professors in different years, and variation in the Math Ed Clinicals course could partly reflect how two different faculty members observed and assessed TCs, although grades and rubrics were similar across faculty from year to year. Furthermore, our model does not account for the pedagogical content knowledge TCs gain from their mentor/cooperating teachers across three to four different field classrooms over more than 650 hours. It is well documented that TCs’ pedagogical practices depend heavily on the field experience mentorship of classroom teachers (Cochran-Smith & Zeichner, 2005; Zeichner, 2010). Ultimately, TCs combine the learning experiences provided by their TPP professors in methods courses with those provided by their field experience mentor/cooperating teachers (Feiman-Nemser, 2001; Hiebert & Morris, 2009), most of whom have a strong relationship with methods faculty from long-term professional development work. The clinical experiences of the student-teaching internship, which we did not measure directly, likely have a moderate to strong relationship with edTPA scores.

Using and Interpreting the edTPA Path Models

Examining our model (Figure 8), we first consider the effects on edTPA of the program components analyzed. To express these effects in total edTPA points, we multiply each standardized total effect by the edTPA standard deviation (5.35 points). For the Hallmark Unit Plan (SD = 7.68), about three-quarters of a letter grade on the assignment corresponds to an effect of 0.363 × 5.35 = 1.94 points; for the Math Ed Clinicals course grade (SD = 0.49), about half a letter grade corresponds to 0.305 × 5.35 = 1.63 points. Scaled to one full letter grade on each measure (1.94/0.75 + 1.63/0.50 ≈ 2.59 + 3.26), these two measures together correspond to an effect of nearly 6 points (5.85) on edTPA, slightly more than 1 SD. Using Tables 1 and 2, the Math Ed Tech methods (SD = 0.57) and Curriculum methods (SD = 0.56) course grades have a combined total effect on edTPA of (0.255 + 0.259) × 5.35 = 2.75 points for about one-half letter grade of performance in each course.
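The conversions above follow one pattern: a standardized total effect times the outcome's standard deviation gives the edTPA points associated with a 1-SD difference in the predictor, and dividing by the letter-grade size of that SD rescales it to a full letter grade. The sketch reproduces the reported figures, reading 5.35 as the edTPA SD (consistent with 5.85 being described as slightly more than 1 SD); the dictionary layout and function names are illustrative only.

```python
EDTPA_SD = 5.35  # multiplier used in the text, read here as the edTPA SD

# (standardized total effect, letter grades spanned by 1 SD of the predictor), from the text
PREDICTORS = {
    "hallmark_unit_plan": (0.363, 0.75),
    "math_ed_clinicals": (0.305, 0.50),
}


def points_per_sd(beta: float, sd_outcome: float = EDTPA_SD) -> float:
    """edTPA points associated with a 1-SD difference in the predictor."""
    return beta * sd_outcome


def points_per_letter_grade(beta: float, sd_in_letter_grades: float) -> float:
    """Rescale the per-SD effect to one full letter grade."""
    return points_per_sd(beta) / sd_in_letter_grades


if __name__ == "__main__":
    for name, (beta, sd_lg) in PREDICTORS.items():
        print(f"{name}: {points_per_sd(beta):.2f} per SD, "
              f"{points_per_letter_grade(beta, sd_lg):.2f} per letter grade")
    combined = sum(points_per_letter_grade(b, s) for b, s in PREDICTORS.values())
    print(f"combined per letter grade: {combined:.2f}")  # combined per letter grade: 5.85
    print(f"tech + curriculum per SD: {points_per_sd(0.255 + 0.259):.2f}")  # tech + curriculum per SD: 2.75
```

As elsewhere in the article, these are associations in the path model, not causal point gains.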

Research Question 2

Figure 3 highlighted the recommendations of the CBMS MET II and AMTE SPTM. The CAEP SPA NCTM 2012 standards place heavy emphasis on the knowledge, skills, and abilities TCs need to become what the MTEP Guiding Principles would deem a well-prepared beginning teacher. Our research sought to understand program design, requisite sequential program experiences, and key assessments in relation to professional licensure examinations as a proxy for pedagogical content knowledge. We address the following research question: How do these findings support or further question the professional recommendations in the preparation of secondary mathematics teachers?

Support for Professional Recommendations from Our Study

We inferred from the CBMS MET II that multiple methods courses are recommended for TPPs. However, near the end of our data collection, the newly released AMTE SPTM explicitly called for TCs preparing to teach high school to take three methods courses, although not necessarily in sequence. From the beginning of our study, we were positioned to examine the relationship between sequential methods courses and the edTPA. Initially, the edTPA was not consequential for certification in the state’s jurisdiction, although it did count toward TCs’ internship letter grade. Moreover, two TCs scored well below (28) the consequential jurisdictional cut score (37) and two scored just one point below it (36), whereas the other 55 TCs posted an edTPA M = 43.7, a full 1.25 SD above the jurisdictional passing mark. Thus, our findings support the recommendations of the AMTE SPTM and CBMS MET II, particularly for less academically prepared TCs with respect to pedagogical content knowledge, if one treats entry grade point average and ACT composite scores as proxies for academic preparation.
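The "1.25 SD above the passing mark" figure can be checked with the 5.35-point multiplier used when interpreting the path models, which we read as the edTPA SD. The sketch expresses a score's distance from the cut score (37) in SD units; the constant and function names are illustrative.

```python
EDTPA_SD = 5.35     # read as the edTPA SD (assumption; see the path-model interpretation)
CUT_SCORE = 37      # consequential jurisdictional cut score
MEAN_OTHER_55 = 43.7  # mean edTPA score of the other 55 TCs


def sd_units_from_cut(score: float, cut: float = CUT_SCORE, sd: float = EDTPA_SD) -> float:
    """Signed distance of a score from the cut score, in SD units."""
    return (score - cut) / sd


if __name__ == "__main__":
    print(f"{sd_units_from_cut(MEAN_OTHER_55):.2f} SD above the cut")  # 1.25 SD above the cut
    print(f"{sd_units_from_cut(28):.2f} SD for the lowest scorers")    # -1.68 SD for the lowest scorers
```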

Regarding pedagogical recommendations, the CBMS MET II leaves that decision to the standards of the professional teaching and learning organizations that make up the CBMS (i.e., NCTM, AMTE). Because our program design followed those recommendations, we do not dispute them, particularly for TPPs certifying mathematics TCs for middle grades and high school. Our findings support the recommended three methods courses (AMTE, 2017) alongside well-designed clinical experiences that connect coursework to practice in the field (i.e., link theory to practice). If we count the TPP’s Math Ed Clinicals as a course, then the TCs complete four courses, one of which is a field-based practicum. TPPs certifying both middle- and high-school mathematics teachers could likely benefit from three or more methods courses, depending on the structure of the TPP.

Questions About National Recommendations

The AMTE SPTM lacks a direct focus on the developmental and pedagogical trajectory of TCs. That is, sequencing multiple methods courses likely has a greater impact than offering multiple methods courses in a single semester before student teaching; at least, our study’s findings suggest greater direct and indirect effects in relation to higher edTPA performance. The AMTE SPTM does not clearly address the sequencing of methods courses, nor can a sequence be inferred from it. The MTEP (2014) Guiding Principles likewise lack any specificity regarding the sequence of pedagogical development and do not note the importance of multiple semesters or multiple methods courses. MTEP has since updated its Guiding Principles, adding some clarity by linking to the AMTE SPTM in the revision related to field experiences, but not with respect to sequenced methods courses (MTEP, 2020). Although assessments of mathematical knowledge for teaching and pedagogical content knowledge suitable for use in TPPs were lacking before edTPA, the AMTE SPTM and MTEP Guiding Principles point to the need for such assessments, supported by multiple sources of validity evidence, to provide indicators of TCs’ quality before high-stakes licensure examinations.

Discussion

Darling-Hammond (2006) suggested that “teacher education as an enterprise has probably launched more new weak programs that underprepare teachers, especially for urban schools, than it has further developed the stronger models that demonstrate what intense preparation can accomplish” (p. 302), likely a result of competing fast-track or short-term preparation models that often lack licensure requirements or follow a different set of them. Our TPP design resisted fast-track models, and we consistently examined internal and external measures for continuous improvement. The internal measures, external measures, and sequenced path models allowed us to examine relationships within the TPP in depth to inform improvements and modifications.

Based on our framework’s primary drivers (Darling-Hammond, 2006; Tatto, 1998) and four secondary contributing drivers, our program design was grounded in several assumptions (e.g., TPP coherence and sequenced courses in a learning community of TCs, connections between coursework and clinical fieldwork, proactive relationships with mentor teachers, and coursework that privileges knowledge of teaching, learners, and mathematics; see Figures 1 & 2). Our findings both confirm the conceptual grounding and add nuance to these frameworks. First, our findings confirm that a three-semester sequence of methods courses and paired field experiences with mentor teachers who have strong relationships with program faculty ultimately produced a community of TCs who outperformed national averages on edTPA as well-prepared first-year 21st-century teaching professionals.

Second, considering the work of Tatto (1998, 2018), our findings confirm and contribute new information about program design. Namely, our findings suggest that if one semester of methods coursework were removed from the model, some TCs would be labeled just-barely prepared first-year teachers, using edTPA as a proxy. Furthermore, some TCs would likely have failed to meet the edTPA passing benchmark, suggesting that edTPA would act as a gatekeeper (Ratner & Kolman, 2016). Consistent with the CBMS and AMTE recommendations, our findings support the contention that multiple sequenced semesters of methods courses with paired field experiences are justified. We also acknowledge the need to continually assess TCs’ internship and career readiness in relation to internal program measures (Tatto, 1998). A qualitative examination of TCs’ Hallmark Lesson Plans, prompted by their edTPA Rubric 2 and Rubric 3 scores, revealed that TCs lacked sufficient focus on important aspects of equitable teaching practices in their planning (NCTM, 2014), a pattern those rubric scores also reflected. We implemented two modules in the first two methods courses and then added a third module to the clinical methods course that deepens the mentor teachers’ engagement with TCs and program faculty. For details on these three modules, see Zelkowski and Campbell (2022) and Zelkowski et al. (2020, 2022, in press).

We also found that two additional edTPA rubrics consistently had fewer scores of 3 and 4 than desired. The third module focuses on feedback related to mathematical goals to improve students’ learning, topics assessed by edTPA Rubrics 12 and 13. Since we implemented the third module, fewer than 1 in 15 TCs has scored below 3 on these rubrics. Thus, our findings support Darling-Hammond’s (2006) contention that TPPs should serve TCs with well-developed program models that integrate field experiences, program faculty, and mentor teachers in partner schools. TPPs should resist pressure to water down their programs, which ultimately undermines public perception of teacher preparation. Finally, we recognize the need to collect additional data on the engagement and influence of mentor teachers during clinical experiences. Each of the three new modules includes opportunities to collect informal information from mentor teachers through an activity on which TCs collaborate in clinical settings. We also aim to improve the quality and quantity of measures that detect the influence of clinically based field experiences on edTPA. We expect this work, part of a current research project, to take 5–8 years.

To guide future work, the MTEP Research Action Clusters provide coordinated research, development, refinement, and implementation efforts to unify and transform mathematics TPPs with their collaboratively developed Guiding Principles (Association of Public and Land-grant Universities, 2012; Martin & Gobstein, 2015; MTEP, 2014, 2020). This movement for TPPs to use a networked improvement community model (Bryk et al., 2010) has started to build larger-scale empirical evidence to drive TPP transformations and consistency benchmarks.

With reference to Tatto’s (1998) targeted characteristics for successful TCs and strong TPPs, along with Darling-Hammond’s (2006) three-pronged approach to rectifying criticisms of teacher education and producing 21st-century high-quality teachers, we encourage researchers to fill gaps in the literature through advanced design studies (see Tatto, 2018). Here we demonstrate the use of path analysis to examine the relationships among internal program measures with validity evidence, as well as their relationships to a standardized portfolio of teaching practice. This methodology allows programs to understand the impact or influence of their program model. That is, by accounting for prior metrics (e.g., ACT, grade point average), we can understand the sequential development and relationship of the program model to edTPA beyond TCs’ prior academic ability. As previously discussed, several criticisms of teacher preparation exist (e.g., fragmentation, lack of coherence, low standards). This longitudinal study serves as one example of adopted program revisions and a self-study responding to the MTEP movement to transform TPPs toward strong consistency in norms and practices. We strategically sequenced methods courses and positively impacted TCs’ performance on edTPA. We recognize that not all TPPs can initiate similar modifications without first having empirical evidence. Therefore, we encourage researchers to look for opportunities in our “glass half-full” study and challenge the field to conduct rigorous future studies that leverage edTPA as a useful tool of inquiry, so that TCs see the portfolio as a time to shine rather than as a roadblock (De Voto et al., 2021). Finally, while this study focused on the pedagogical content knowledge domain of TCs, further analyses are needed of the development of mathematical content knowledge within teacher preparation, applying the same methodology to the design of programs’ mathematical content preparation. We leave this work to future analyses.

Conclusion

The MTEP Guiding Principles set high demands that differentiate a well-prepared beginning mathematics teacher from one who is just barely qualified. Similarly, the AMTE SPTM provides strong recommendations for developing well-prepared mathematics teachers, and our findings allow us to endorse its recommendation of three methods courses. We acknowledge that no accreditation body requires the application of research methodologies such as path analysis to demonstrate programmatic relationships to readiness benchmarks.

The mathematics teacher educator professional community at the secondary level is not only facing challenging teacher shortages but also responding to increased accountability. While we do not advocate removing quality-control standardized measures, our study aimed to provide longitudinal, empirical evidence of the effects of three sequenced mathematics methods courses and paired clinical field experiences, as measured by edTPA. Tatto (2018) articulates two important final points to consider given our findings related to pedagogical knowledge development:

“One of the most important factors correlated with high levels of knowledge is previous attainment in mathematics. This finding has important policy implications. In countries where there is a great supply of knowledgeable candidates, it is possible to be highly selective, yet in other countries, where the teaching profession is not as highly regarded and the supply of high-quality candidates is limited, selectivity may not be possible. In situations such as this, teacher education opportunities to learn become more important, as these institutions and programs may be the only venue that can provide mathematics courses.” (p. 443)

“Understanding that teacher education quality is highly dependent on program’s selectivity provides information for future policy. An important question for teacher educators, however, is whether their programs contribute knowledge, skills, and dispositions beyond those that individuals bring with them when they enter a program.” (p. 444)

Zeichner (2006) offered a 30-year perspective on the future of university teacher education. He suggested that redefining traditional certification programs beyond the goal of raising achievement test scores in primary and secondary schools was critical, and that TPPs must either take teacher education more seriously as a responsibility or abandon their programs, which would leave fewer grounds for criticism and increase the percentage of higher-quality programs. We believe De Voto et al. (2021) clarify how edTPA can be a useful tool of inquiry for improving teacher education while warning about pitfalls related to cosmetic compliance and active resistance that harm the teaching profession. We hope our findings fuel future research and help scholars leverage transformational change by applying rigorous program evaluation and accountability through a robust interrogation of their TPPs.

Supplemental Material

Supplemental material, sj-pdf-1-jte-10.1177_00224871231180214 for The Relationships Between Internal Program Measures and a High-Stakes Teacher Licensing Measure in Mathematics Teacher Preparation: Program Design Considerations by Jeremy Zelkowski, Tye Campbell and Alesia Moldavan in Journal of Teacher Education

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported in part by the National Science Foundation Grants #1340069, 1849948, 1726998, 1726392, 1726853. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the National Science Foundation.

Supplemental Material

Supplemental material for this article is available online.

Notes

Author Biographies

References
