Abstract

This systematic review investigates the effect of teacher professional development (TPD) on adolescent students’ reading achievement in middle and high school. A systematic search of TPD and student reading achievement studies (1975–2020) identified 15 medium-quality articles meeting this study’s inclusion criteria. A meta-analysis of 14 of these studies, with effect sizes corrected to Hedges’ g, showed that TPD was associated with a small overall effect on student reading outcomes, g = 0.062, p < .05. However, the effect size was moderated by delivery of the TPD, TPD hours, student population, and assessment. None of the 14 studies reported TPD theory-driven quality indicators for TPD delivery (e.g., school support, use of technology, promotion of self-reflection, or reported measures of teacher change). We conclude that research on literacy TPD needs more theory-driven studies.

Teachers’ knowledge and practices influence students’ learning and academic performance (Avalos, 2011; Darling-Hammond, 1998, 2000; Spear-Swerling, 2009). Spear-Swerling (2009) argues that increasing teachers’ reading knowledge better prepares them to assess students’ current reading levels, differentiate instruction, and provide appropriate feedback. For these reasons, policymakers and educators worldwide invest significant resources to help teachers improve their pedagogy-relevant reading knowledge and practice and thereby improve students’ academic results (Education Endowment Foundation, 2019; Garet et al., 2001; Murakami et al., 2016; Rose, 2005). One vehicle for this desired professional change is teachers’ postcertification continuing professional development (Hattie, 2009, 2015; Slavin, 2008). Teacher professional development (TPD) is often the primary vehicle for policy-driven educational change. TPD is a formal learning process that focuses on developing and improving teachers’ teaching and learning through workshops, online specialist support, or in-class coaching.

There exists a body of research aimed at improving teacher quality (Cirino et al., 2007; Hattie, 2009, 2015; Slavin, 2008) and evaluating TPD programs (Hattie, 2009, 2015; Joshi et al., 2009; Slavin, 2008). As Kennedy (2016) elucidates, the “conventional” model of TPD implicitly assumes the existence of at least three stepped processes: (a) TPD alters teachers’ knowledge and understanding of students; (b) this change in teachers’ knowledge and understanding alters teachers’ practice; and (c) this change in practice alters student learning. Kennedy’s description of these three assumptions is a presumed causal chain wherein (a) → (b) → (c) (see also Borko, 2004; Darling-Hammond et al., 2009; Desimone, 2009; Van Veen et al., 2012). Van den Bergh et al. (2014) reported that TPD positively enhanced teachers’ practices in the classroom. Trust et al. (2016) presented evidence that teachers’ change in knowledge post-TPD changed teaching practice and student academic outcomes. Parsons et al. (2016) maintain that significant change happens to teacher learning and knowledge if effective TPD occurs. Effective PD needs to be (a) sustained, (b) linked to students’ learning goals, (c) based on best practices, (d) delivered by coaches/experts, (e) collaborative among participating teachers, (f) based on student needs, (g) implemented with school support including solid leadership, and (h) reflective of teachers’ practice (see also Blank & De las Anas, 2009; Sinclair et al., 2018; Wexler, 2021).

This article explores the impact of TPD on middle and high school students’ academic achievement in reading.

What Works in TPD: Possible Factors

While the conventional model of teacher change described above is transparent, it is likely deceptively simple. Teacher change is a complex phenomenon, and thus, most models of teacher change are multicomponential (Clarke & Hollingsworth, 2002; Kennedy, 2016). The complete empirical underpinning of TPD remains to be established (Penuel et al., 2007; Wayne et al., 2008). To this end, below, we consider the possible factors that were empirically explored in the cited research, not a complete list of theorized factors that might affect TPD.

It is also quite possible that qualitative aspects of TPD are crucial to understanding teacher change and change in student attainment (Villegas-Reimers, 2003). Teachers’ beliefs about learning and teaching are one of the main catalysts for teacher change and successful TPD (De Vries et al., 2014). Research suggests a strong correlation between teachers’ beliefs and change in classroom practice following TPD (Ahmad, 2022; Rietdijk et al., 2018). Desimone (2009) emphasizes that quality PD focuses on increasing teacher knowledge that positively changes teachers’ belief systems. Essential to quality PD is the “coherence” between teachers’ belief systems and the content of the PD provided. Similarly, Guskey (2002) argues that teacher participation in TPD reflects belief systems that TPD supports effective teaching and practice. Nevertheless, TPD must align with teachers’ classroom pedagogy and needs.

Amendum and Fitzgerald (2013) reported student academic improvement following high-quality TPD. Student phonics growth was highly correlated with the type of TPD support teachers received around scaffolding strategies to teach reading. Coaching and expert training on teaching (Russo, 2004) seem to affect teacher practice and student achievement. In a 4-year longitudinal study, Biancarosa et al. (2010) showed that one-on-one coaching with a teacher improved student literacy. Kraft et al. (2018) conducted a meta-analysis on the effect of coaching on teacher instruction and found a significant effect size (ES) of 0.49. Online discussions, video recordings, and other technical methods are alternatives that may aid teachers in effecting positive change. When teachers are videotaped and subsequently review the video, they can reflect on their teaching strengths and identify areas requiring improvement (Borko et al., 2008; Kucan et al., 2009; Prestridge, 2010).

Context is likely important too. Melville and Wallace (2007) argue that a positive school culture facilitates teacher learning, practice, autonomy, and leadership in the classroom and increases teachers’ knowledge of the subject. Others emphasize that if TPD activities are to be adopted by teachers, they must be relevant to current classroom practices (Clarke & Hollingsworth, 2002; Putman et al., 2009) and valued by teachers who are also motivated to supplant existing methods (Kennedy, 2016). Buczynski and Hansen (2010) argue that effective TPD relies on a partnership with universities reflecting a direct contribution of researchers to teacher practice. Partnership allows researchers to explain their research to teachers and allows teachers to provide feedback on the study, including its relevance to their practice. Standardized testing might also alter the effect of TPD on student performance. Herman and Golan (1990) argue that teachers focus on teaching students the learning objectives reflected in standardized or high-stakes testing content and not necessarily those reflected in TPD.

Some researchers believe time spent on TPD activities impacts student attainment (Guskey & Yoon, 2009). Garet et al. (2001) claim that an average of 30 TPD hours is required to produce a measurable change in teacher practice. However, empirical evidence for this specific claim is not strong. A meta-analysis by the Author (2018) showed that TPD lasting less than 30 hours positively impacted student reading outcomes, while TPD lasting more than 30 hours did not. Hunzicker (2011) emphasizes a multifactorial model wherein a combined impact of the number of hours of TPD, teacher motivation to change, and the extent to which the TPD relates to teachers’ existing practices in the classroom together produces teacher change.

While professional change in teachers is complex, the empirical question of whether TPD measurably improves student attainment remains essential for educators and policymakers to answer. Prior studies (Author, 2018; Guskey, 2002; Guskey & Yoon, 2009; Hattie, 2009; Timperley et al., 2007) have either focused on attainment generally rather than reading specifically, which as we describe below may be problematic, or have focused on reading in the elementary school phase only. This review extends prior work by disaggregating reading outcomes from broader attainment measures and focusing on middle and high school students.

Professional Development in Literacy and Content Area in Middle and High School

Reading in middle and high school is arguably more complex than in elementary school. In middle and high school, reading comprehension is essential for students to support learning content in diverse areas such as math, biology, and history. Reading fluently and making connections between texts are critical skills required in middle and high school to ensure students’ success (Heller & Greenleaf, 2007), along with sufficient vocabulary for comprehension (Draper et al., 2005). Teachers may need TPD here (Shanahan & Shanahan, 2008). Draper (2008) suggests collaboration between middle and high school content-area and literacy teachers to improve students’ academic performance. However, secondary teachers struggle to teach students how to deploy the reading strategies necessary to read in specific content area subjects (Hall, 2005; Wilson et al., 2009). Teachers find TPD irrelevant if their students have learning disabilities (LD) or specific LD in reading (SLD), as they do not have the tools and resources necessary to work with them (Gillespie-Rouse & Kiuhara, 2017).

Bryant et al. (2001) argue that several factors contribute to successfully implementing literacy in content areas following TPD. First and foremost, PD researchers need to inquire about teachers’ knowledge of their students and design collaborative PD with relevant strategies that are suited to the needs of their students. Teachers need substantial TPD time to learn, prepare, and implement PD content in their content area classrooms. Cantrell et al. (2008) reported that middle and high school teachers are willing to alter their beliefs about literacy instruction in their content area classes if they are supported by professional development focused on coaching, peer collaboration, and team planning. In contrast, Conley (2008) and Wilson et al. (2009) show that change in teacher practice in secondary schools depends on training teachers with metacognitive strategies that center on students’ awareness of concepts and techniques to adjust their thinking process.

Given this reasoned reflection on the gaps in knowledge on TPD effectiveness, it is essential to ascertain that the content and approaches of TPD relevant to improving reading do indeed improve reading. The research question for this systematic review is thus: “Does teacher professional development measurably improve student reading achievement in middle and high school, and what are the factors that moderate the outcome?”

To further explore the theoretical perspectives on TPD described above and their possible impact on student academic achievement in reading following TPD, we investigated the following candidate factors: school support, relevance to teacher practice, technology, teacher reflection, teacher beliefs about teaching and learning, partnership with universities, teacher change, delivery of the PD (researcher delivered; coach delivered), student population (students with SLD in reading), student assessment (standardized testing), PD hours (above or below 30 hours), collaboration between content areas and literacy researchers, teachers’ knowledge of students, team planning, and use of meta-cognitive strategies.

Method

Search for Previous Systematic Reviews in TPD

Following the Cochrane Database for Systematic Reviews protocol, we located recent systematic reviews or meta-analyses focused on TPD and student reading in middle/high school. The initial search was conducted through “The Campbell Collaboration Library,” “The What Works Clearing House,” “The EPPI Centre,” “PsycINFO,” and “ERIC.” Keywords used were “systematic reviews,” “meta-analysis,” “teacher,” “professional development,” “training,” “students,” “high school,” and “middle school.” The inclusion/exclusion criteria were designed based on protocols from the EPPI Center, a research base for systematic reviews.

Included Studies

Reviews on TPD.

Reviews on TPD and student reading, including studies that reported no significant results or had a small ES.

Reviews that used randomized control trials (RCTs) designs or quasi-experimental designs (QEDs).

Reviews that focused solely on in-service teachers.

Reviews that focused on TPD in any type of reading instruction (phonemic awareness, phonics, word reading, fluency, vocabulary, and reading comprehension).

Reviews that included students in grades 5–12 (ages 10–18 years).

Reviews that included language instruction in English.

Reviews that were peer-reviewed.

Excluded Studies

Studies that involved preservice teachers or hired interventionists.

Studies of a qualitative nature.

Studies that focused on other academic subjects such as math and science.

Studies that did not have a control group.

Single participant studies, other matched studies.

Studies that focused on TPD in narrative and writing.

Initial Results

This search identified one systematic review from the EPPI Center: Cordingley et al. (2007), evaluating studies from 1997 to 2001. One systematic review was retrieved from The What Works Clearing House: Yoon et al. (2008), considering studies between 1986 and 2003. A third systematic review was located from an online source: Reed (2009), which included studies between 2001 and 2007. One book was found: Hattie (2009), covering the period 1975 to 2007, with more than 800 meta-analyses on educational variables affecting student achievement. The systematic reviews focused on TPD and its effect on student achievement, but not explicitly on reading. The next steps involved an inspection of the papers in the reviews above and the location of individual studies that focused on TPD and student reading outcomes.

No meta-analyses or systematic reviews on TPD and student achievement in reading in middle and high schools were found. We excluded all 26 located individual studies focused on TPD and reading as they did not fit the inclusion criteria. Thus, we could not identify any relevant articles in existing well-executed searches up to 2008. We recorded the publication years covered by each review to establish which years had already been searched and which had not, so that the uncovered years could be considered in our own search.

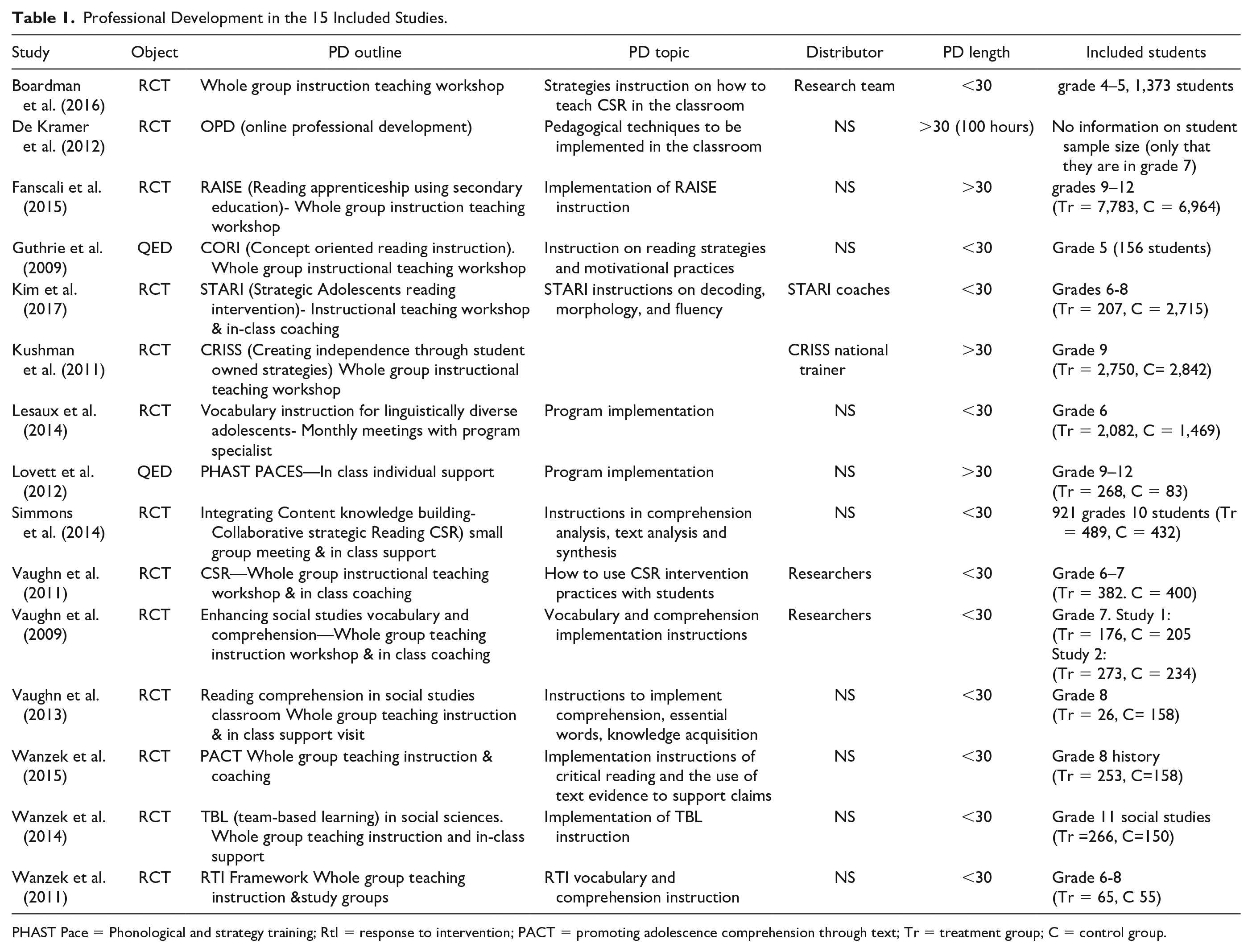

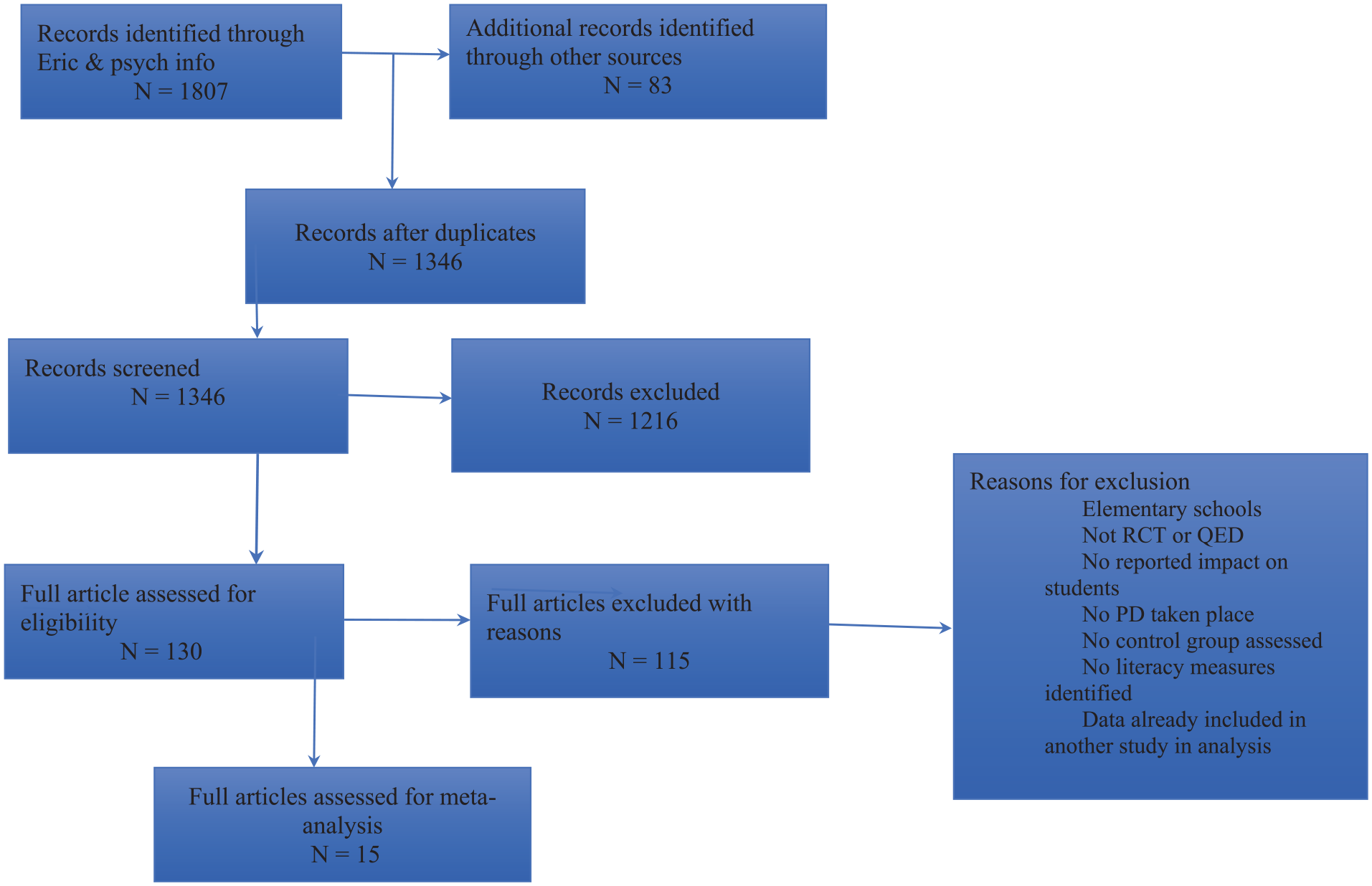

Individual Studies Search for Systematic Reviews

We then identified well-executed individual studies relevant to this study’s research question published after 2008. We used the same search terms and databases as in the search for existing systematic reviews and meta-analyses, excluding the keywords “systematic reviews” and “meta-analysis.” We also used a snowballing technique and searched the gray literature for reports, government reports, or doctoral theses using the same search terms. We also located individual studies based on Torgerson’s (2003, 2006) recommendation of RCTs and QEDs as the most reliable approaches to assessing the effectiveness of an intervention. This comprehensive search and selection exercise identified 15 studies that fit the inclusion criteria (see Table 1 for summary details; these studies are marked by an asterisk in the reference list). Figure 1 is a PRISMA flow diagram depicting all studies identified through the search and inspected, included, or excluded in the review. Note that Boardman et al. (2016) included grade 4 and 5 students. We used only the reported grade 5 results, as grade 5 is middle school. The gray literature search yielded two research reports (Fancsali et al., 2015; Kushman et al., 2011) that fit our inclusion criteria.

Table 1. Professional Development in the 15 Included Studies.

Note. PHAST Pace = Phonological and strategy training; RtI = response to intervention; PACT = promoting adolescence comprehension through text; Tr = treatment group; C = control group.

Figure 1. PRISMA Chart.

Methodological Quality of Included Studies in the Systematic Review

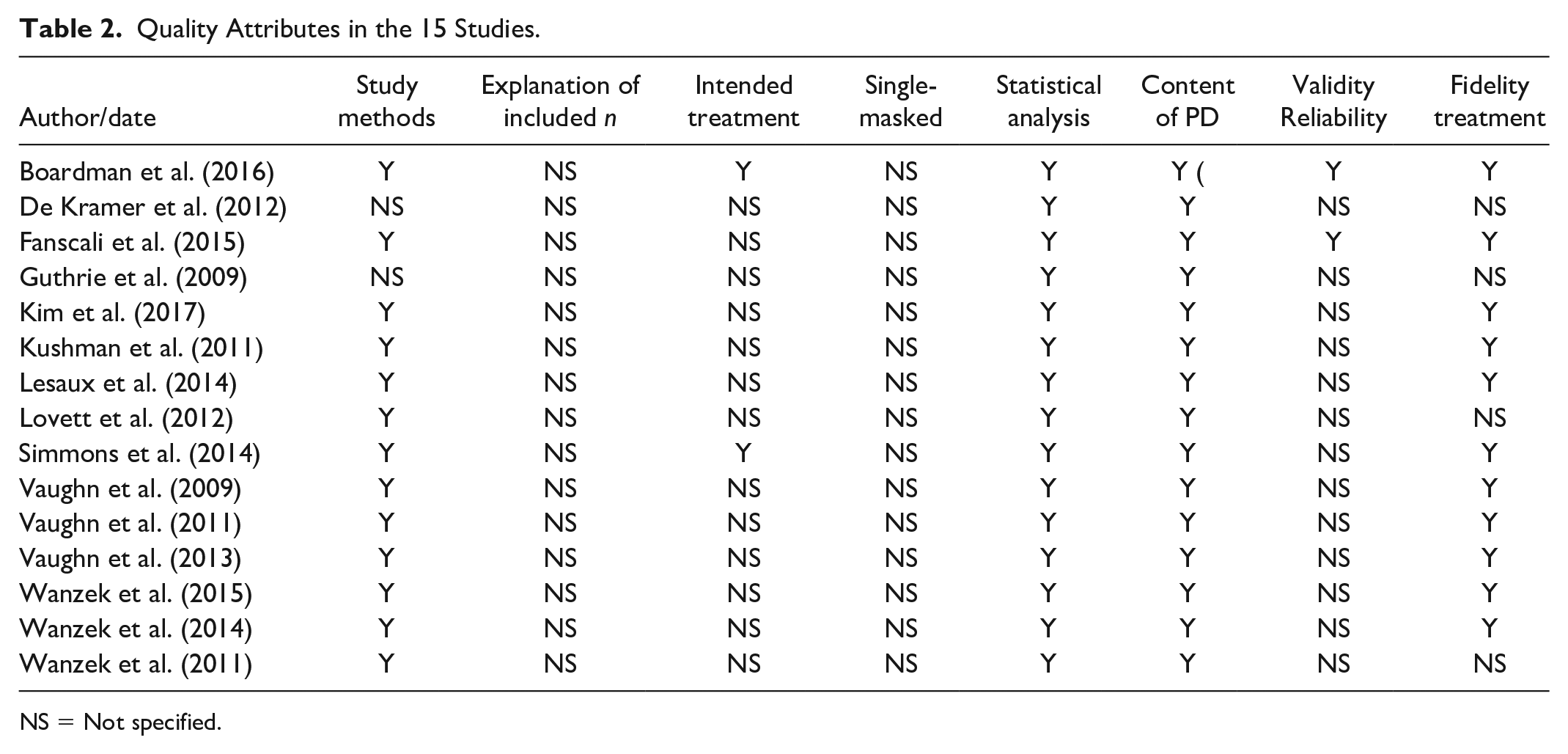

Two quality-coding systems were adopted to assess the methodological quality of the execution and reporting of the studies included in the systematic review, beyond the essential selection criteria above. The first was a modified coding system from CONSORT (Consolidated Standards for Reporting Trials) (Table 2). The second was Weight of Evidence (WOE), recommended by the EPPI Center guidelines (https://eppi.ioe.ac.uk/cms/Default.aspx?tabid=67) to assess the quality of included studies (Table 3). The CONSORT and EPPI guidelines were used to code studies to provide a quantitative measure of the rigor of study design. The criteria coded were whether the study reported (a) study methods (i.e., whether randomization is reported and how randomization took place), (b) explanation of included sample size (i.e., whether the study provided justification of sample size n and reported a power estimate), (c) intention-to-treat (ITT) analysis (i.e., whether the authors statistically analyzed groups based on the original n, ignoring any subsequent attrition), and (d) masked assessment of outcome (i.e., whether the participants in the study were unaware of the intervention taking place; Turner et al., 2012). In addition to these guidelines, another set of guidelines from the EPPI Center was adopted: (e) whether the study provided a statistical measure of the impact of TPD and intervention on students, (f) whether the content description of the professional development was provided, (g) whether evidence of reliability and validity was demonstrated, and (h) whether authors provided proof of treatment fidelity.

Table 2. Quality Attributes in the 15 Studies.

Note. NS = Not specified.

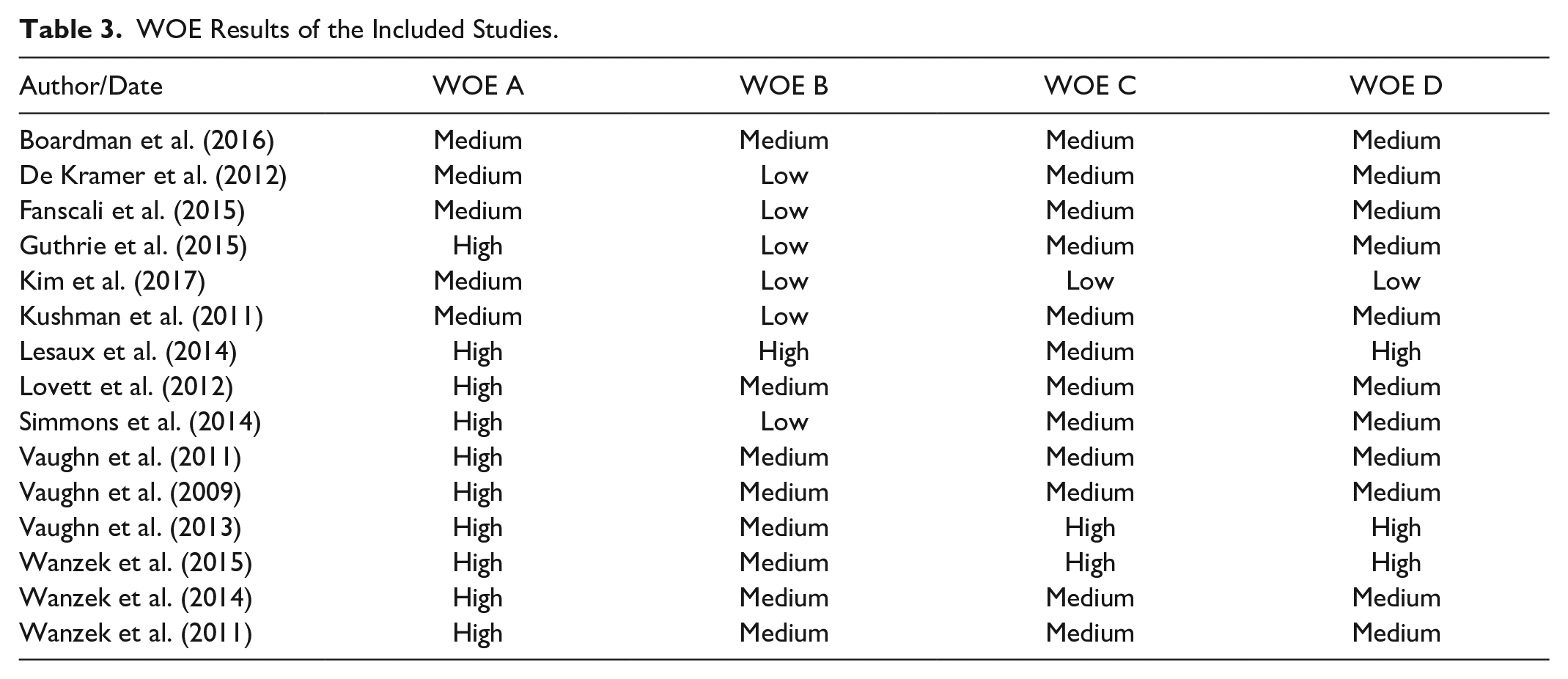

Table 3. WOE Results of the Included Studies.

The authors independently coded all 15 articles for quality to establish reliability. Coding showed a reliability kappa of .79. The few disagreements centered mainly on whether the studies described how they applied ITT and blinding procedures. Disagreement on study specifics between the two reviewers was less than 3% and was resolved through discussion: the reviewers referred to data and decision codes in the original paper, re-analyzed the data, and adjusted their initial positions to reach consensus.
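As an illustration of how an agreement statistic of this kind can be computed, the following is a minimal sketch of unweighted Cohen's kappa for two coders. The text reports only "kappa", so the unweighted two-rater form is an assumption, and the codes below are invented for demonstration rather than taken from the reviewed studies.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters who coded the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must code the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items given the same code by both raters.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal proportions, summed over codes.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    p_chance = sum(counts_a[code] * counts_b[code] for code in counts_a) / (n * n)
    if p_chance == 1.0:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_observed - p_chance) / (1 - p_chance)


# Hypothetical codes (1 = feature reported, 0 = not reported) for ten items.
coder_1 = [1, 1, 0, 0, 1, 0, 1, 1, 0, 0]
coder_2 = [1, 1, 0, 0, 1, 0, 1, 0, 0, 0]
kappa = cohens_kappa(coder_1, coder_2)
print(f"kappa = {kappa:.2f}")
```

In this invented example the coders agree on nine of ten items, while chance agreement from the marginals is .50, so kappa is lower than the raw agreement rate.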

Table 2 provides the results of the agreed final analysis of all 15 articles. Inspection reveals that none of the 15 studies reported sample size justification or blinded outcome assessment. Two studies reported ITT. Two studies reported evidence on the reliability and validity of outcome measures. Thirteen studies reported treatment integrity. Sanetti et al. (2021) conceptualize four dimensions of treatment fidelity (adherence, dosage, exposure, and quality). None of the studies reported any of these four criteria. Thus, treatment fidelity is interpreted with caution. On the other hand, all studies reported appropriate statistical analysis on student reading with pretest and posttest measures and provided information on TPD, with 12 studies also providing a concise summary of the TPD.

A further evaluation of the overall quality of the studies included was undertaken in an analysis of the WOE using EPPI Center standard practices. WOE is a global quality assessment measure, including each study’s internal validity and reliability. WOE establishes whether studies fit the inclusion criteria and answer the research question posed by the systematic review (Gough, 2007). This method of quality assessment has also been adopted in systematic reviews of educational intervention studies (Author, 2018; Cordingley et al., 2007; Davies et al., 2013; Sebba et al., 2008). The rating is “High,” “Medium,” or “Low.” A Low on WOE A automatically leads to a “Low” coding on all other criteria, and the study is immediately excluded from further analysis, as recommended by the WOE quality assessment. Studies rated High or Medium on WOE A are further analyzed on WOE B and C, and then an overall code D is assigned. For example, if a study reported one High, one Medium, and one Low, the overall D would be “Medium.” If the study reported two High and one Medium, the overall D would be High (Table 3). Interrater reliability analysis on WOE ratings undertaken by the two authors revealed a kappa of .83, suggesting good agreement on overall study quality between the two raters. The same formal procedure undertaken for the data extraction above was followed in resolving disagreements to obtain finalized WOE ratings.
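The combination rule can be sketched in code. The text gives only the automatic Low for a Low on WOE A and two worked examples, so the sketch below assumes that the overall D is the middle (median) of the three ratings, which reproduces both examples. That median rule and the function name `overall_woe` are illustrative assumptions, not the EPPI Center's stated procedure.

```python
# Ordinal scale for the three WOE ratings.
LEVELS = {"Low": 0, "Medium": 1, "High": 2}


def overall_woe(a, b, c):
    """Combine WOE A, B, and C into an overall D (assumed median rule)."""
    for rating in (a, b, c):
        if rating not in LEVELS:
            raise ValueError(f"unknown rating: {rating!r}")
    # Stated in the text: a Low on WOE A forces an overall Low.
    if a == "Low":
        return "Low"
    # ASSUMPTION: otherwise take the middle of the three ordered ratings.
    return sorted((a, b, c), key=LEVELS.get)[1]


# The two worked examples from the text.
example_mixed = overall_woe("High", "Medium", "Low")
example_mostly_high = overall_woe("High", "High", "Medium")
print(example_mixed, example_mostly_high)
```

Other combination rules (for example, letting the lowest rating dominate) would give different results for mixed profiles, so the rule actually applied should be confirmed against the EPPI Center guidance.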

Finalized WOE quality analysis ratings showed that 11 studies were coded Medium, three were coded High, and one was coded Low quality. This latter study was included in the narrative and qualitative reviews but was excluded from the statistical meta-analysis. All studies reported appropriate statistical analyses of results to assess the impact of TPD on student outcomes using the appropriate nested analysis, provided TPD descriptions, and measured treatment fidelity. However, the studies lacked at least one prominent design feature required to execute rigorous research. Consequently, the conservative position of rating the overall quality of the included studies “Medium” using the formal study quality-coding framework was adopted.

Results

Candidate Moderators

Moderators reflecting practices in included studies were identified. These were TPD type, program, hours, student population, content focus, use of standardized testing, and use of meta-cognitive strategies. To analyze these moderators in the meta-analysis, we coded the moderators into clustered groups and ran analyses for each. Lovett et al. (2012) provided 70 hours of TPD to teachers. Fancsali et al. (2015) provided 10 days of TPD. Kushman et al. (2011) delivered TPD across 2 years but did not specify total TPD hours. De Kramer et al. (2012) provided 100 hours of online TPD. The remaining studies employed either 3 consecutive days of TPD or a 1-day (6-hour) model of TPD. Follow-up sessions accompanied both models of TPD during the intervention in the classroom. Samples varied: while eight studies focused TPD on neurotypical students, two studies (Boardman et al., 2016; Wanzek et al., 2011) focused on students with learning disabilities. Three studies (Guthrie et al., 2009; Kim et al., 2017; Lovett et al., 2012) focused on readers with a specific learning disability (SLD) in reading. Two studies (Lesaux et al., 2014; Vaughn et al., 2009) focused on English language learners. Four studies (Vaughn et al., 2009, 2013; Wanzek et al., 2014, 2015) implemented their reading TPD intervention in social studies classrooms. The remaining studies were conducted in regular language arts classrooms. The De Kramer et al. (2012) study is the only one that included an assessment of teacher knowledge of reading comprehension and vocabulary pre- and post-TPD. The students included in all studies were between grades 5 and 12. Teachers had between 1 and 30 years of experience, with a mean of 9 years.

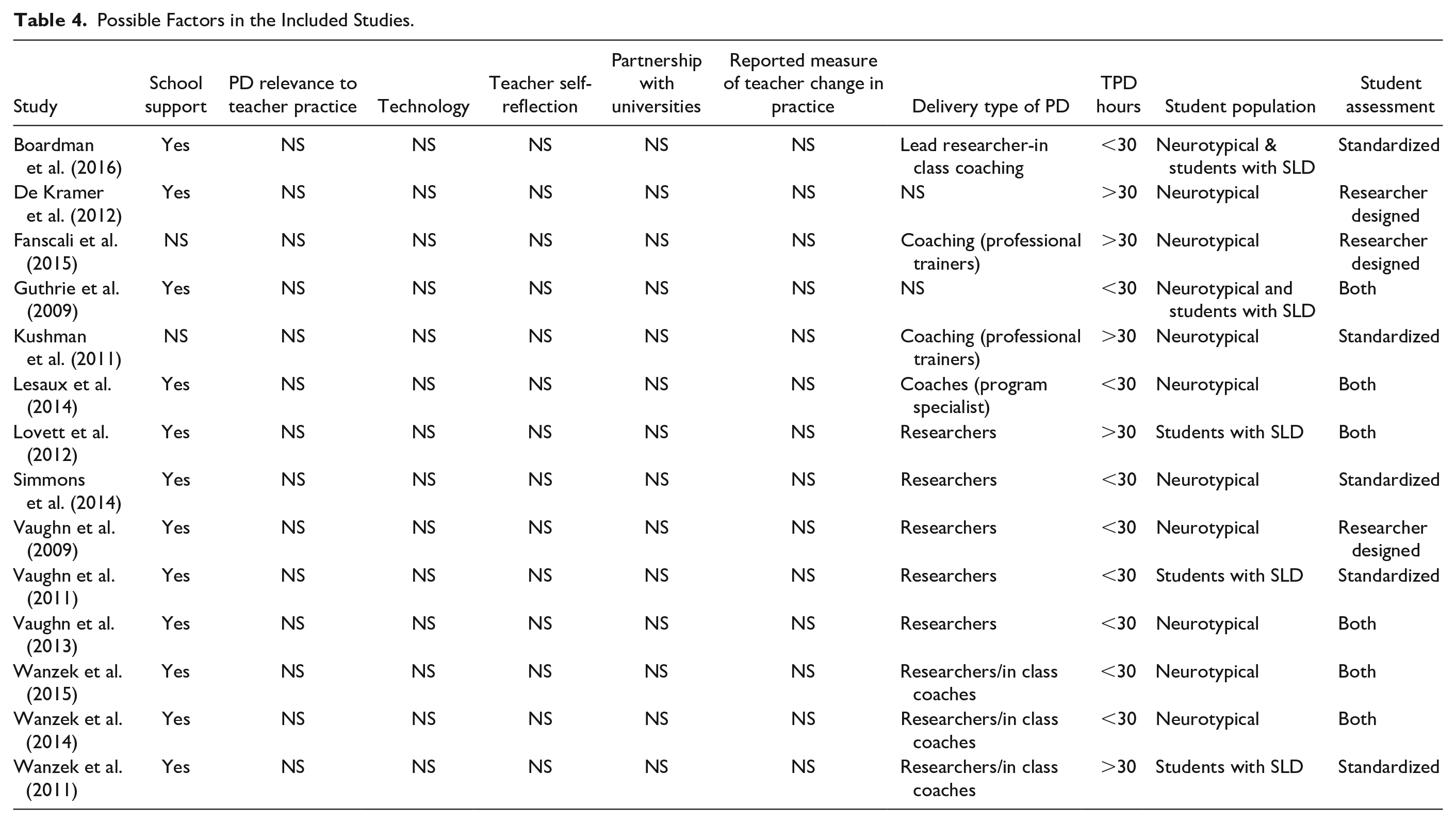

Table 4 documents candidate moderating factors. While De Kramer et al. (2012) measured change in teacher knowledge of the teaching subject, teacher change in practice was not measured. Only one study (Vaughn et al., 2009) used video recording; no other technologies were reported. None of the included studies reported consideration of TPD relevance to teacher practice, teacher self-reflection, explicit details of a sustained partnership with universities, or reported teacher change in practice, collaboration between content areas and literacy researchers, teachers’ knowledge of students, or team planning. All studies included some form of metacognitive strategies (explicit teaching). Hence, no investigation of possible moderating effects of these variables was possible.

Table 4. Possible Factors in the Included Studies.

We explored the demographic characteristics of included students as a potential moderator. One study reported the mean age of the participants (Vaughn et al., 2013). One study reported including students from low socioeconomic status contexts (Kim et al., 2017). Four studies reported students on free or reduced lunch (Simmons et al., 2014; Vaughn et al., 2011, 2013; Wanzek et al., 2015). Four studies reported that students attended special education classes (Boardman et al., 2016; Guthrie et al., 2009; Kim et al., 2017; Simmons et al., 2014). One study reported including students with low proficiency in English (Wanzek et al., 2015). Hence, the information provided was too scarce to analyze demographics as a moderator.

Some study features could be operationalized as moderators: delivery of TPD (coaching vs. other modes of delivery), TPD hours (more or fewer than 30 hours), student population (neurotypical students vs. students with SLD in reading), school support (yes vs. no), and student assessment (standardized vs. researcher-designed evaluations). The first and second authors coded the moderators separately. Interrater reliability was 0.72, considered “substantial” (McHugh, 2012). The only disagreement concerned the coding of the student population. This disagreement was fully resolved after discussing definitions of these samples and led to 100% subsequent agreement.

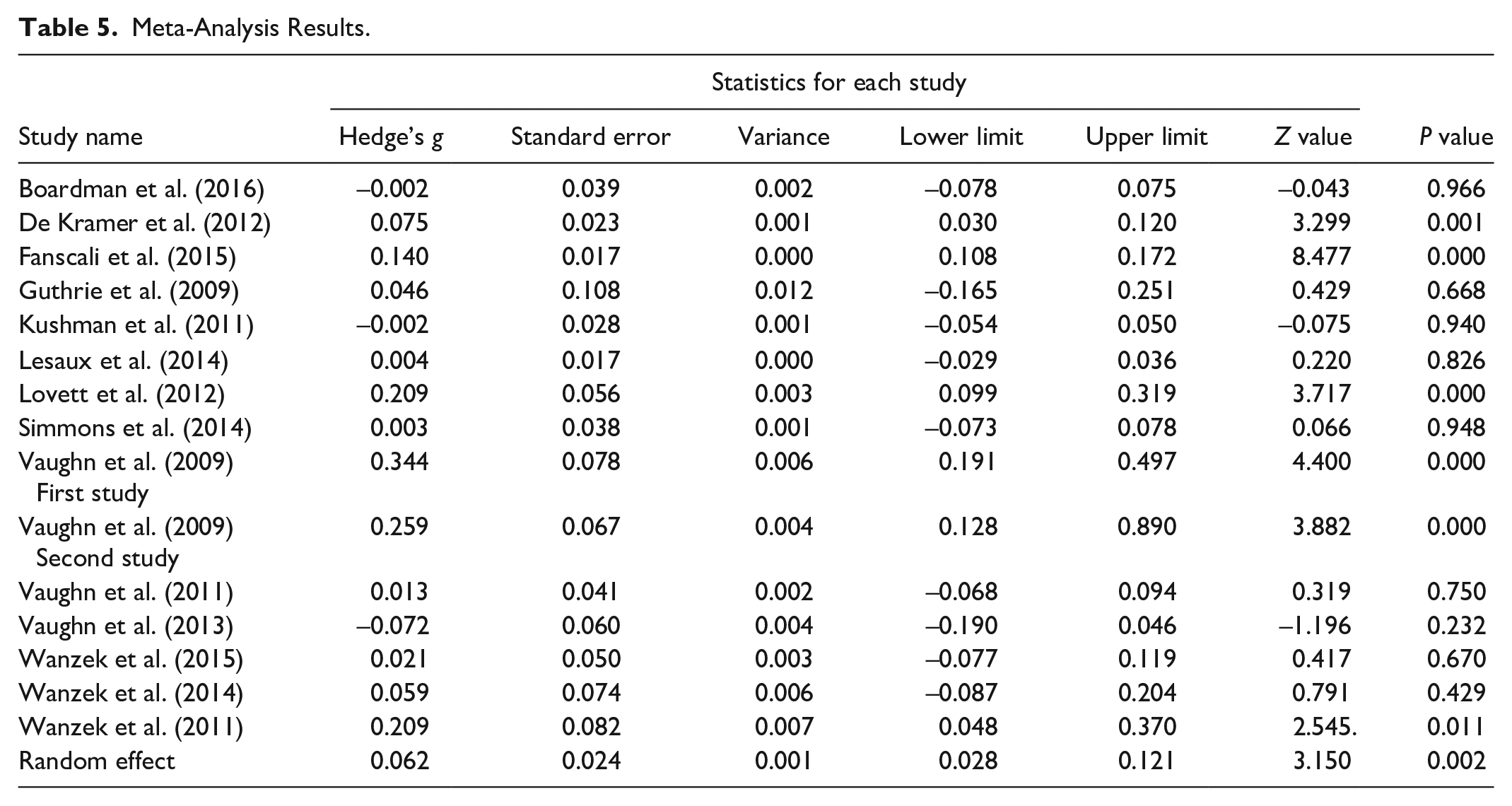

Meta-Analysis Results

The ES of all outcome measures in the included studies was calculated based on the Cohen’s d equation: (mean of Group 1 - mean of Group 2) / pooled standard deviation (SD). We entered the mean, SD, and n for each study into the ES calculator in Comprehensive Meta-Analysis (www.meta-analysis.com). The studies did not have equal sample sizes. Thus, all measurements were converted to Hedges’ g, which takes sample size into account in calculating the final ES (Borenstein et al., 2022). To control for the dependency of the ES, we randomly selected one reading outcome for each study with multiple outcomes. This approach is very common in meta-analyses to overcome dependency (see Ahn et al., 2012; Scammacca et al., 2014). The results showed that the studies were heterogeneous (Q = 84.581, df = 14, p < .001). Results also showed that four of the 13 studies had a negative ES. The smallest positive ES was 0.003, and the largest positive ES was 0.344 (Table 5).
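The conversion from group summary statistics to Cohen's d and then to Hedges' g can be sketched as follows. The small-sample correction factor J = 1 - 3 / (4df - 1) is the commonly used approximation. Whether the software applies exactly this form is an assumption, and the input values are hypothetical rather than drawn from the included studies.

```python
import math


def cohens_d(mean_1, sd_1, n_1, mean_2, sd_2, n_2):
    """Standardized mean difference using the pooled standard deviation."""
    pooled_var = ((n_1 - 1) * sd_1**2 + (n_2 - 1) * sd_2**2) / (n_1 + n_2 - 2)
    return (mean_1 - mean_2) / math.sqrt(pooled_var)


def correction_factor(n_1, n_2):
    """Approximate small-sample correction J, with df = n_1 + n_2 - 2."""
    return 1 - 3 / (4 * (n_1 + n_2 - 2) - 1)


def hedges_g(d, n_1, n_2):
    """Hedges' g: Cohen's d shrunk by the correction factor J."""
    return correction_factor(n_1, n_2) * d


def variance_of_g(d, n_1, n_2):
    """Approximate sampling variance of g, used later for weighting studies."""
    var_d = (n_1 + n_2) / (n_1 * n_2) + d**2 / (2 * (n_1 + n_2))
    return correction_factor(n_1, n_2) ** 2 * var_d


# Hypothetical treatment and control summaries (not from any included study).
d = cohens_d(mean_1=52.0, sd_1=10.0, n_1=30, mean_2=50.0, sd_2=10.0, n_2=30)
g = hedges_g(d, n_1=30, n_2=30)
print(f"d = {d:.3f}, g = {g:.3f}, var(g) = {variance_of_g(d, 30, 30):.4f}")
```

Because J is always below 1, g is slightly smaller in magnitude than d, and the difference shrinks as the groups get larger.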

Table 5. Meta-Analysis Results.

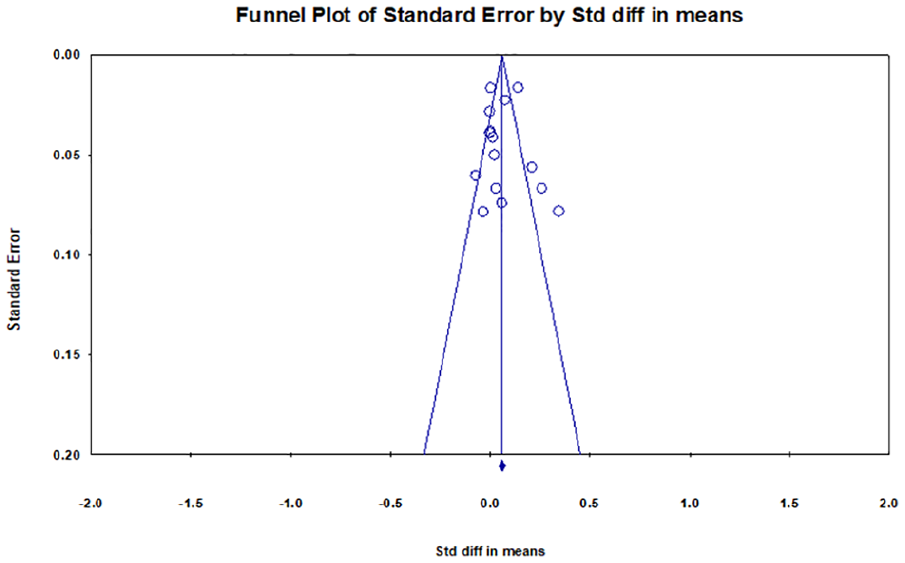

Reporting Bias

A funnel plot of the ES distribution of the included studies was inspected for reporting bias. Smaller studies tend to have larger standard errors, and thus the results of smaller studies will be spread more widely around the average estimate compared to larger studies. With no publication bias, a plot of study size or precision against the estimated ES from primary studies should yield a funnel-shaped distribution. Figure 2 shows no clear evidence of publication bias in this meta-analysis. The final ES in the results was g = 0.062, p < .05, 95% CI [0.028, 0.121].

Figure 2. Funnel Plot.

For the moderator analysis, we reran the meta-analysis after adding a “moderator” column, grouped the studies by moderator, beginning with type of delivery, and compared the effects across groups. Results showed that the overall effect of TPD on student reading was moderated by type of delivery, with a significant effect, g = 0.068, p < .05, 95% CI [0.024, 0.134]. Coaching was not significant, g = 0.036, p = .269, whereas researcher-delivered TPD was significant, g = 0.080, p < .05. TPD effectiveness was also moderated by TPD hours, g = 0.062, p < .05, 95% CI [0.017, 0.108]. Further analysis showed that both groups had significant results, p < .05. There was a slight increase in the ES with more than 30 hours of TPD, g = 0.082, while less than 30 hours of TPD yielded g = 0.070. We grouped studies into “students with SLD in reading” and “neurotypical students.” Results showed that TPD effectiveness was indeed moderated by student population, g = 0.060, p < .001, 95% CI [0.017, 0.108]. Further analysis showed that “students with SLD” was not significant, g = 0.044, p = .279, whereas “neurotypical” was, g = 0.071, p < .05. For the final moderator, we grouped studies into “standardized assessment” and “researcher-designed assessment.” Results showed an overall significance, g = 0.060, p < .001, 95% CI [0.017, 0.108]. Further analysis showed that standardized assessment was not significant, g = 0.012, p = .456, but researcher-designed assessment was significant, g = 0.129, p < .001.
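A subgroup moderator analysis of this kind can be sketched as pooling effect sizes within each moderator group and then testing whether the group estimates differ. The sketch below uses inverse-variance fixed-effect pooling and a Q-between statistic. The text does not state which pooling model the software used, so the fixed-effect choice is an assumption, and the effect sizes, variances, and group labels are invented for illustration.

```python
import math


def pool(effects, variances):
    """Inverse-variance pooled estimate, its standard error, and Cochran's Q."""
    weights = [1 / v for v in variances]
    total_weight = sum(weights)
    pooled = sum(w * g for w, g in zip(weights, effects)) / total_weight
    q = sum(w * (g - pooled) ** 2 for w, g in zip(weights, effects))
    return pooled, math.sqrt(1 / total_weight), q


def subgroup_analysis(studies):
    """studies: list of (group_label, g, variance) tuples."""
    groups = {}
    for label, g, v in studies:
        groups.setdefault(label, []).append((g, v))
    results, q_within = {}, 0.0
    for label, rows in groups.items():
        pooled, se, q = pool([g for g, _ in rows], [v for _, v in rows])
        results[label] = {
            "k": len(rows),
            "g": pooled,
            "ci95": (pooled - 1.96 * se, pooled + 1.96 * se),
        }
        q_within += q
    _, _, q_total = pool([g for _, g, _ in studies], [v for _, _, v in studies])
    # Q_between = Q_total - Q_within, with df = number of groups - 1.
    return results, q_total - q_within


# Hypothetical studies: (delivery type, Hedges' g, variance of g).
studies = [
    ("coaching", 0.0, 0.01),
    ("coaching", 0.1, 0.01),
    ("researcher", 0.2, 0.01),
    ("researcher", 0.3, 0.01),
]
results, q_between = subgroup_analysis(studies)
for label, summary in results.items():
    print(label, round(summary["g"], 3), [round(x, 3) for x in summary["ci95"]])
print("Q_between =", round(q_between, 3))
```

Q-between would then be compared with a chi-square distribution on (number of groups - 1) degrees of freedom. A random-effects or mixed-effects model would add a between-study variance term to each weight.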

Discussion

This systematic review and meta-analysis aimed to inform educational practitioners and policymakers about the role of TPD in affecting teacher change and student academic achievement. The overall ES was g = 0.062 at p < .05, which is modest in Cohen’s (1988) terms. It is not advisable to interpret the results of all interventions solely in terms of values of Cohen’s d at .2 (small), .5 (medium), and .8 (large) in social science and humanities, as research varies in critical contextual factors such as the setting, framework, outcomes, nature, and purpose of the study (Henson & Roberts, 2006). Hattie’s (2009) meta-analysis of TPD on attainment in schools produced an ES = .62 and was ranked 19th in his rank order of meta-analyses. The ES = .062 reported here would rank 125th in Hattie’s table of meta-analyses.

We sought to synthesize concepts from the theory of effective TPD as candidate moderators of TPD on reading outcomes. This study did not uncover any well-executed studies on university partnerships, relevance to teacher practice, teacher self-reflection, collaborations between the content area and literacy teachers, ongoing PD, teachers’ knowledge of students, peer collaboration between participating teachers, or reported measures of teacher change in practice. Only two studies reported teacher self-report, insufficient to consider it a moderator. Our data showed an apparent disconnect between what theory of effective TPD expounds and the content and approaches of well-designed empirical studies of TPD.

Four candidate moderators were analyzed. Students with SLD in reading, coaching, and standardized assessment did not significantly affect the overall ES. The overall effect was moderated by TPD hours, researcher-designed assessment, researcher-delivered PD, and the neurotypical student population. Yoon et al. (2008) and Guskey and Yoon (2009) argue that an average of 49 hours of PD improves student achievement. PD of fewer than 30 hours was associated with larger effects on attainment in Authors (2018). Authors (2018) found that studies of elementary school readers with higher-rated methodological quality yielded better student performance. Here, the included articles are of medium quality overall.

Coaching did not produce significant results here. These results are consistent with Desimone and Pak’s (2017) view that coaching does not improve teacher practice or student achievement. The results also show that students with SLD did not benefit from the TPD. This may suggest that for such students to improve, they need other TPD that focuses on their specific needs (Lovett et al., 2012). All studies that included students with SLD in this meta-analysis used standardized assessments. It may be harder to show effects for this population on such tests that tap their areas of core difficulty (Dutro & Selland, 2012). Researcher-designed assessments may more comprehensively address the needs of students and allow them to show content knowledge (Xu et al., 2019).

In addition, we investigated whether TPD improves teacher knowledge. Only one study addressed this issue. Some consensus exists around the characteristics of effective PD (Darling-Hammond et al., 2009; Desimone, 2009; Parsons et al., 2016). Yet, these characteristics are absent in TPD descriptions in the included studies. While all included studies described the PD programs, the descriptions overlooked how the PD elements were connected to PD program goals. None of the included studies reflected on teachers’ attitudes, and only one measured the improvement of teachers’ knowledge. Hence, future PD studies might usefully focus on teachers’ attitudes and knowledge. As Van Veen et al. (2012) argue, PD programs should offer an explicit theory of the PD program, the PD philosophy, and detailed information on implementation and how it improves student and teacher learning. As TPD is time-consuming for teachers, it may be effective to offer online PD (De Kramer et al., 2012). As Wanzek et al. (2015) and Vaughn et al. (2011) note, PD may be more effective if it is consistent with mandated curricula in the school jurisdiction.

Limitations

One limitation concerns the completeness of data in studies of TPD, given the stepped nature of the conventional model of TPD outlined by Kennedy (2016). Methodologically, the three-step model suggests that impacts at each step should be explored. Techniques such as mediated and moderated path analyses might be successfully employed within the frame of well-designed RCTs to explore these stepwise processes and inform more targeted conceptual and empirical work on TPD. Among the 14 studies included in this statistical meta-analysis, only one (De Kramer et al., 2012) reported measures of teacher knowledge before and after TPD, and none explored teachers’ practices pre- and post-TPD. The next generation of TPD studies might thus usefully address the complex task of evaluating the stepwise impact of TPD more fully, in more complete, high-quality designs. None of the studies reported any correlation between teacher belief systems and TPD. Given how influential teacher beliefs are in any TPD (Desimone, 2009), future studies could productively explore correlations between teacher belief systems, change in practice, and change in student achievement.

Lovett et al. (2012) included a delayed posttest to see whether any change in attainment was evident and whether gains at the immediate posttest were sustained. The results suggested that high school teachers and students might need more time to adjust to the change in practice adopted after effective TPD. “Tailored” teacher interventions might be more effective. Adolescents with SLD in reading who present a range of reading problems may need different forms of evidence-based TPD to show improvements.

Despite the overall “medium” quality study rating, it is important to credit these researchers’ efforts here. All papers were RCTs. Twelve of the fourteen included studies reported methods of allocation. All studies described the process of TPD to some degree. Most studies reported fidelity of treatment. Most of these studies presented sophisticated results analyses at the appropriate “level,” accounting for nestedness in data at the classroom and school levels. All studies drew attention to some form of a meta-cognitive strategy in their TPD to help teachers guide students to develop knowledge and complete tasks. Most interventions used training materials and content considered “evidence-based.” The studies often used interventions that targeted collaborative peer-assisted work with support for motivation, student self-regulation, and strategy-based intervention to facilitate working with content-area knowledge. TPD in these studies appeared to emphasize teachers’ understanding of their critical role in adolescent reading development and improvement. Considering all 14 studies were ultimately included in the statistical meta-analysis, we do not attribute the relatively modest findings to basic study design or implementation shortcomings.

Another possibility suggested by these modest findings is that it is simply hard to “move” reading ability, particularly where improvements in text comprehension are targeted in older readers. A meta-analysis of word reading accuracy and fluency interventions revealed that it might be harder to influence reading levels beyond the early elementary years (Suggate, 2010). Against this, other meta-analytic reviews of the broader intervention literature report an ES = .2 for growth in comprehension ability, even in relatively brief interventions (Rogde et al., 2019). Larger effects may be evident in more sustained intervention programs.

More generally, research on reading in middle and high schools may be arduous where essential reading is not a primary focus in the curriculum and where students move between many classes. One issue that bears close consideration is the possibility that standardized measures of reading comprehension used in many analyses may be insensitive to the effects of intervention. Five studies consistently report the results of targeted PD on content-area knowledge (e.g., history, social studies) without corresponding improvements on standardized reading comprehension tests. Further analysis revealed that overall, the ES of PD on content-area knowledge was g = .145. While larger than the overall reported effect of g = 0.062, it was not significantly different from zero in the modest number of studies focused on this topic. This pattern may reflect the failure of students to generalize appropriately. It may also be essential to establish the content validity of psychometric measures of reading comprehension used with TPD in high schools.
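The observation that a pooled effect can exceed the overall estimate yet remain statistically indistinguishable from zero follows from the width of its confidence interval when few studies contribute. The sketch below illustrates this with a DerSimonian-Laird random-effects model. The five effect sizes and variances are hypothetical, the function name is our own, and the choice of estimator is an assumption rather than a report of the analysis conducted here.

```python
import math

def random_effects(effects, variances):
    """DerSimonian-Laird random-effects pooled estimate with a z-test against zero."""
    w = [1.0 / v for v in variances]
    sw = sum(w)
    fixed = sum(wi * g for wi, g in zip(w, effects)) / sw
    # Cochran's Q measures dispersion of study effects around the fixed-effect mean.
    q = sum(wi * (g - fixed) ** 2 for wi, g in zip(w, effects))
    df = len(effects) - 1
    c = sw - sum(wi ** 2 for wi in w) / sw
    # Between-study variance, truncated at zero.
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0
    w_star = [1.0 / (v + tau2) for v in variances]
    mean = sum(wi * g for wi, g in zip(w_star, effects)) / sum(w_star)
    se = math.sqrt(1.0 / sum(w_star))
    z = mean / se
    p = math.erfc(abs(z) / math.sqrt(2.0))  # two-sided p-value from the normal distribution
    ci = (mean - 1.96 * se, mean + 1.96 * se)
    return {"g": mean, "se": se, "ci": ci, "z": z, "p": p, "tau2": tau2}

# Hypothetical Hedges' g values and variances for five studies (NOT data from this review).
effects = [0.45, -0.10, 0.30, 0.02, 0.08]
variances = [0.020, 0.015, 0.030, 0.018, 0.025]
result = random_effects(effects, variances)
print({k: (round(v, 3) if isinstance(v, float) else tuple(round(x, 3) for x in v))
       for k, v in result.items()})
```

With these made-up inputs the 95% confidence interval spans zero even though the point estimate is positive, which mirrors the pattern described above: heterogeneous effects from a handful of studies yield an imprecise pooled estimate.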

Another issue, as Kosanovich et al. (2010) elucidate, is that students in content area classrooms must have advanced skills to read with proficiency and derive meaning from complicated texts. TPD must thus challenge the “inoculation fallacy” (the misconception that reading instruction stops at grade 3; Snow & Moje, 2010), because a skillful reader must be able to navigate content-area text effortlessly and with understanding. Ongoing support may be necessary across content areas with different structures and language conventions (Moje, 2010).

A fourth complexity concerns the needs of readers with SLD. Wexler (2021) argues for the need for literacy-focused lessons in content area classes. Content area teachers’ perspectives of their limited role in literacy in middle and high school (Snow & Moje, 2010) sometimes preclude more effective practices. Teaching literacy in middle and high school may require reconceptions of (and by) teachers and changes in practice (Moje, 2008). As Wexler (2021) has proposed, co-teaching could be a good starting point for reform.

All included studies took place in the United States. Consequently, patterns in other countries, cultures, and orthographies remain unknown. It may also be perilous to generalize from a complex “outlier orthography” such as English (Share, 2008) and the current US educational system. Evidence from and reviews of high-quality studies of TPD from other communities and language systems are urgently needed to advance this scientific field. In addition, the included studies reported insufficient student demographic information. With such details, we could perhaps have explored the effects of demographic characteristics in TPD.

Conclusions and Subsequent Directions

This article explored whether TPD measurably improves middle and high school student reading achievement. A meta-analysis of 14 studies meeting rigorous inclusion criteria revealed an overall ES of .062, significant at p < .05. Potentially important directions for future intervention might use approaches derived from theories of effective TPD, such as a sustained partnership with universities, the perceived relevance of TPD by teachers to their practice, analysis of teacher self-reflection in or after TPD, or reported measures of teacher change. The theory also suggests room for greater use of educational technology in conjunction with such work in TPD. In addition, the quality criteria listed in Table 3 and the assessment and modeling of teacher and student change over time, both before and after the intervention, are critical features of the best work going forward. There should be careful consideration of standardized assessment, specifically if readers with SLD are involved. Such work could usefully be undertaken in conjunction with embedded qualitative analyses.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.