Abstract

Defaults are pervasive in consumer choice. The authors combine eye-tracking laboratory experiments with cognitive modeling to pinpoint where defaults exert their influence in the decision process, and they conduct naturalistic experiments with large preregistered samples to test the limits of defaults on consumer choices. Contrary to previous assumptions, in simple binary choices, default options did not potentiate rapid heuristic-based decisions but instead altered processes of attention and valuation. Model comparison indicated that defaults received a positive boost in value (a “golden halo”) that was large enough to increase hedonic choices when the default was hedonic, but that had limited effects when defaults were utilitarian or incongruent with background goals. The findings illustrate and quantify the mechanisms through which default options shape subsequent decisions in simple choices. Further, the authors establish boundary conditions for when defaults can and cannot be used to nudge consumer choice.

Consumer preference is widely regarded as relatively elastic (Simon and Spiller 2016) and therefore susceptible to bias from the way a choice is presented (Johnson et al. 2012; Mertens et al. 2022). One popular nudge is to preselect one option for the consumer, an option often referred to as the default. Defaults are the most consistent and powerful nudge (Mertens et al. 2022) and the only intervention whose effect survives correction for publication bias (Szaszi et al. 2022). An improved understanding of when and how defaults shape choices could help identify new approaches for changing choice architecture.

Despite substantial previous work on defaults and their prevalence in the field, we have only limited evidence for precisely

This article addresses several open questions and contradictions. We show that mere assignment of default status without the default’s typical contextual advantages has the power to bias choice in simple binary decisions. We extend previous work by addressing specifically

Theoretical Foundations

Preference for and Attention to Default Options

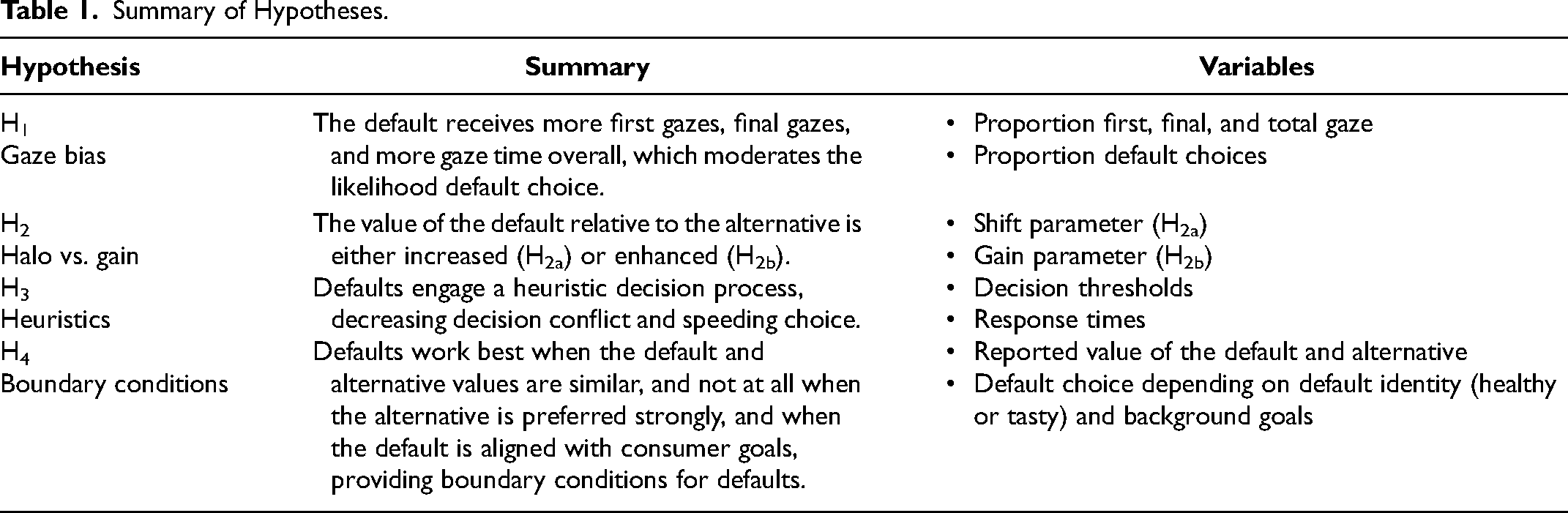

In domains as diverse as investing, organ donation, and insurance, consumers are biased toward selecting any option designated as the default (Johnson and Goldstein 2003; Madrian and Shea 2001; Samuelson and Zeckhauser 1988; Thaler and Benartzi 2004). Defaults may also receive more attention in retail settings where alternatives are not prominently featured. However, it is unknown whether any attentional advantage can be attributed to default status itself once prominence is controlled for. This is important to assess because options receiving proportionally more eye gaze have a significant choice advantage (Krajbich, Armel, and Rangel 2010; Martinovici, Pieters, and Erdem 2023). For example, gaze is more likely to be drawn toward a preferred brand, a bias that increases as the consumer approaches a decision (Martinovici, Pieters, and Erdem 2023). We hypothesize that the default will be attended to first, last, and for longer than the alternative, and that this attentional advantage explains both choices within a participant and individual differences in default bias across participants (H1). In Study 1a, we further contrast default status with visual salience by highlighting one option without any language regarding defaults, which allows us to separate the effects of visual prominence from default effects.

Isolating Default Effects

Defaults can influence choices through at least four distinct pathways that are unrelated to their status as the preselected option. First, moving away from the default often requires physical actions or mental processing (Dinner et al. 2011; Smith, Goldstein, and Johnson 2013). Second, alternatives are not usually featured prominently, and thus can be easily overlooked in complex environments (e.g., retail stores). Third, consumers who wish to switch away from a frequently chosen default may face a set of relatively unfamiliar alternatives. Fourth, decisions with defaults often have temporally distant outcomes (e.g., investments, retirement plans, organ donation) and may therefore be more susceptible to biases (Malkoc, Zauberman, and Ulu 2005). In Studies 1 and 2, we remove the aforementioned contextual advantages to isolate and investigate the role of the default status itself.

Overlapping brain activations to winning money and to keeping a default suggest that the latter is rewarding (Yu et al. 2010). Therefore, we expect to replicate default effects even after eliminating these advantages (Table 1, H1). Further, this result indicates that the default may be perceived as more rewarding than its alternative. This is supported by Brown and Krishna (2004, p. 529), who propose that consumers “treat default designations as though they contain relevant information about product value” and infer choice-relevant information from context (Prelec, Wernerfelt, and Zettelmeyer 1997; Wernerfelt 1995). Indeed, making an item the focus of comparison, as may happen with defaults, enhances its attractiveness or perceived value (Dhar and Simonson 1992). If so, the default will behave in the decision process as if it were more highly valued (that is, a positive constant is added to its value) than if it had been the alternative (H2a). Alternatively, previous work suggests that the default's value may instead be magnified (H2b). That is, its value, good or bad, is multiplied by a constant, making good options better and bad options worse. This latter possibility would be consistent with an attentional mechanism for defaults, as the value of options receiving more eye gaze is amplified during choice (Martinovici, Pieters, and Erdem 2023; Smith and Krajbich 2018). By comparing attentional and value-based mechanisms, we test whether default bias can be attributed to attentional effects alone or instead also involves an alteration in value.

Influence of Default Options on the Decision Process

Defaults are often discussed in the context of heuristics, endowment effects, and loss aversion, such that the default provides a reference point against which alternatives are judged (Dinner et al. 2011; Kahneman, Knetsch, and Thaler 1991). Some have argued that defaults could encourage a more passive process, operating through “automatic and nonevaluative mechanisms” (Zhao et al. 2020, p. 6), that reduces the amount of evidence required to make a choice and thereby speeds choice (H3). However, others argue that defaults could induce a “skeptical and alert” cognitive state (Brown and Krishna 2004), reflecting increased decision conflict and resulting in more cautious, slower choices. Yet a systematic investigation into the influence of defaults on the decision process has been lacking. Here, we test these alternative accounts of how defaults influence the decision process.

Boundary Conditions for Default Effects

Not all defaults will necessarily work equally well (Jachimowicz et al. 2019). Indeed, some studies find no main effect of defaults at all (e.g., Donkers et al. 2020) or find that they backfire, yet the causes of and boundaries for differences in the effectiveness of defaults are not well understood. In H4, we propose that defaults work best when they are of equal value to the alternative and lose effectiveness as their value decreases relative to it. We also propose a second boundary condition: defaults are most effective when aligned with, and least effective when incongruent with, a consumer's goals (Study 2). Study 3 assesses the influence of hedonic and utilitarian defaults when consumers exhibit an overwhelming baseline preference for one over the other.

Summary of Hypotheses.

Study 1: Influence of Defaults on the Decision Process

In this study, participants made incentive-compatible choices about common snack foods, and we varied whether there was a tastier but less healthful default (indulgent default), a more healthful but less tasty default (disciplined default), or no default (baseline). We measured attention via eye tracking and characterized the decision process with a series of cognitive models.

Parameterizing Default in the Decision Process

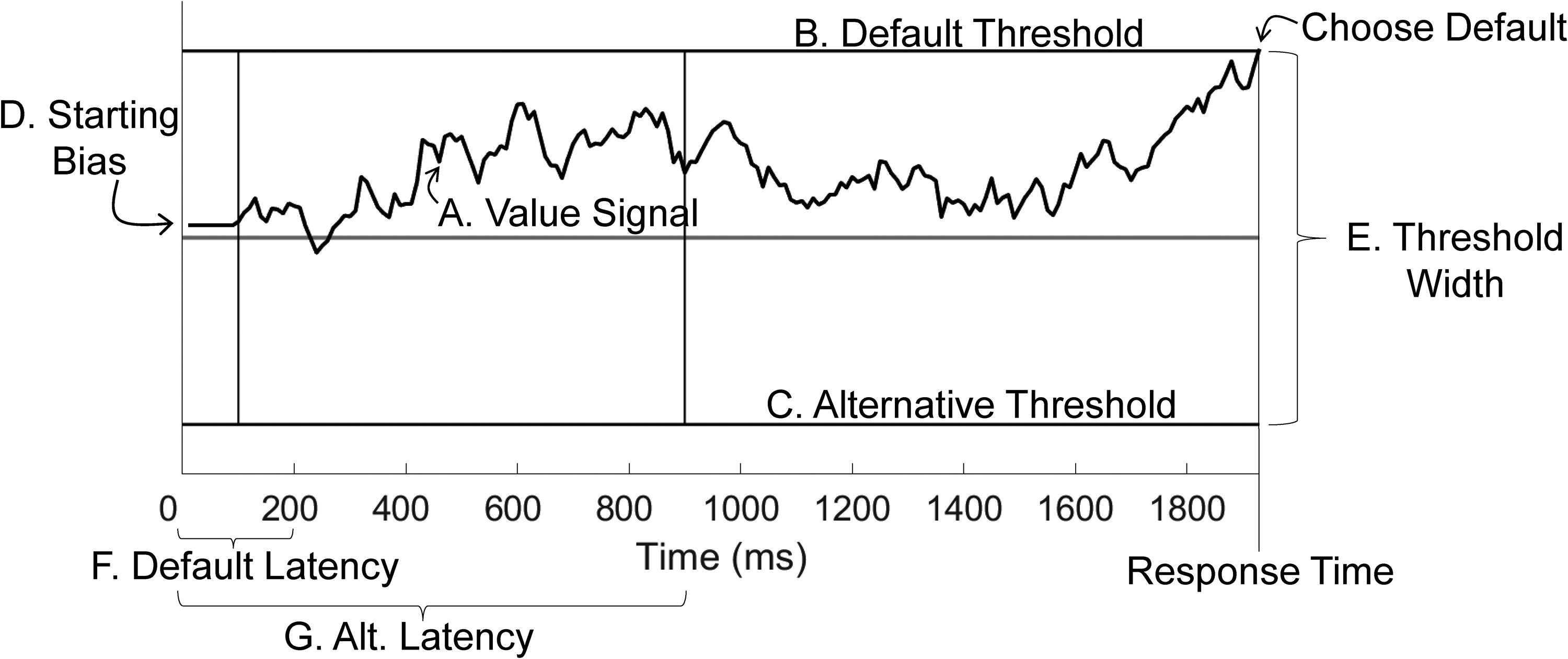

Choices rely on the predecisional integration of information about options, and inferences favoring one option over another build coherence toward a single choice over the course of deliberation (Simon et al. 2001; Simon and Spiller 2016). Accordingly, the decision process has been characterized using computational approaches such as the drift diffusion model (DDM; for a review, see Ratcliff et al. [2016]), in which the state of the decision process is represented by a value signal that evolves over time (Figure 1, Label A) until it reaches a predesignated threshold for one option (Figure 1, Labels B and C). The DDM can dissociate multiple distinct cognitive processes (e.g., Cavanagh et al. 2014; Krajbich, Armel, and Rangel 2010). It therefore provides important tools for understanding default choices, because its decision parameters account not only for choices (cf. a logistic regression) but also for response times, which may differ between default and no-default choices. The DDM has also been found to provide more accurate out-of-sample predictions than logit models (Clithero 2018). In this section, we identify distinct pathways through which the default could alter the decision process and explain how specific DDM parameters can help test our hypotheses. See Web Appendix A, “Modeling,” for more details on the DDM.
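As a minimal sketch of the process described above, the following Python function simulates a single trial of a two-boundary DDM. It assumes symmetric thresholds at ±`threshold`, unit-scale Gaussian noise, a simple Euler discretization, and a fixed non-decision time `ndt`; the function name and all parameter values are illustrative assumptions, not the authors' implementation or estimates.

```python
import math
import random
from typing import Optional, Tuple


def simulate_ddm(
    drift: float,
    threshold: float,
    start: float = 0.0,
    noise: float = 1.0,
    dt: float = 0.001,
    ndt: float = 0.3,
    max_t: float = 10.0,
    rng: Optional[random.Random] = None,
) -> Tuple[int, float]:
    """Simulate one trial of a two-boundary drift diffusion process.

    The evidence signal x starts at `start` and evolves as
        x += drift * dt + noise * sqrt(dt) * N(0, 1)
    until it reaches +threshold (upper option) or -threshold (lower option).
    Returns (choice, rt): choice is 1 for the upper bound and 0 for the lower
    bound; rt is decision time plus a fixed non-decision time `ndt` (seconds).
    If neither bound is reached by `max_t`, the sign of x decides the choice.
    """
    rng = rng or random.Random()
    x, t = start, 0.0
    step_sd = noise * math.sqrt(dt)
    while abs(x) < threshold and t < max_t:
        x += drift * dt + rng.gauss(0.0, step_sd)
        t += dt
    return (1 if x > 0 else 0), t + ndt


if __name__ == "__main__":
    rng = random.Random(1)
    trials = [simulate_ddm(drift=1.0, threshold=1.0, rng=rng) for _ in range(1000)]
    p_upper = sum(c for c, _ in trials) / len(trials)
    mean_rt = sum(rt for _, rt in trials) / len(trials)
    print(f"P(upper) = {p_upper:.2f}, mean RT = {mean_rt:.2f} s")
```

With `drift=1.0`, `threshold=1.0`, and unit noise, the share of upper-bound choices should be close to the analytic value 1/(1 + e^−2) ≈ .88, up to simulation and discretization error. A positive drift favors the upper option and shortens RTs; raising `threshold` slows choices but makes them more consistent with the sign of the drift.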

Decision Model for the Influence of Defaults on Choice.

We tested several distinct ways in which the default could gain an advantage in choice. First, the default's value could be distorted. For example, if the default is perceived as an endowment or endorsement, its value could appear better than it otherwise would (H2a). We test this by allowing the default to receive an additional positive shift in value during option comparison in the DDM. Alternatively, its value could be amplified, meaning that its value (good or bad) could contribute more to option comparison than the alternative's (H2b), as has been observed when increased attention is paid to one option (Martinovici, Pieters, and Erdem 2023; Smith and Krajbich 2018). We test this by allowing the default's value to be multiplied during option comparison, making disliked options appear more disliked and liked options appear better. We also fit the attentional drift diffusion model (aDDM; Krajbich, Armel, and Rangel 2010) to jointly estimate the influence of changes to attention (H1) and to valuation (H2).

Defaults could also ease decisions by engaging a faster heuristic-style process (H3). We can test this in several ways. In the DDM, a

If the default is attended to first (H1), its value may be estimated first as well. Therefore, we test whether the default has a relatively shorter

Method

Participants

Forty-one young adults (22 female, 11 male, 1 nonbinary, 7 prefer not to answer; Mage = 24.7 years, SD = 7.8 years) screened for dietary restrictions participated in this 60-minute incentive-compatible dietary choice study. Participants had fasted for four hours, and 78% reported being hungry. Participants did not differ in overall likelihood of prior consumption for the foods randomly selected as defaults versus alternatives (Bayes factor M = .06). One participant was excluded because they did not use tastiness, healthfulness, or wanting to guide their choices in the baseline task, suggesting inattention, and one participant did not have sufficient eye-tracking data. These exclusions resulted in a final sample of 38 participants (the a priori target sample size was 40). Six participants were excluded from the demographic summary because their questionnaire data were not collected. Compensation was $12 and a snack. All participants gave informed consent under a protocol approved by the university.

Design

Participants completed four tasks in a fixed order: taste and health ratings, baseline choices, default choices, and wanting ratings. To avoid contamination effects from the default manipulation, we placed the baseline task before the default task. Stimuli were presented using the Psychophysics Toolbox for MATLAB (Brainard 1997). Afterward, participants were served the food they chose in one randomly selected trial; all participants consumed the food immediately. See Web Appendix A for task instructions.

Food rating task: tastiness and healthfulness

Participants rated 30 snack foods on two five-point scales: tastiness and healthfulness (Figure W1a). Scale type, food presentation order, and scale left-to-right direction were randomized.

Baseline task

Participants made 100 self-paced choices between an indulgent and a disciplined food (Figure W1b). Due to a coding error, five participants had no such trials (indulgent vs. disciplined foods), and they are excluded from the baseline healthy choice comparisons. Pairs were not repeated between the baseline and default tasks, and their order was randomized. Between trials, a centered fixation cross was displayed for 200 to 500 ms.

Default task

Participants made 200 self-paced, randomized binary choices between foods (Figure W1c) and were told, without deception, that on each trial a computer algorithm would preselect a food for them. The algorithm preselected the disciplined food on one-third of the trials and the indulgent food on another third; on the final third, the two foods were matched on both taste and healthfulness. Participants were asked to decide whether to keep the preselected food or switch to the other food (pressing a button to register either choice).

Choice labels “KEEP” and “SWITCH” appeared in white text on the left and right of the screen (sides randomized across participants), surrounded by a green and a white box, respectively. The default was randomly presented at the top or bottom of the screen. Participants used the 1 and 0 keys to select the left or right label. After a “keep” choice, the green boxes remained around the preselected food and the “KEEP” label for 200 ms. If the “switch” option was selected, the alternative's box and the “SWITCH” label instead turned green for 200 ms, after a 50 ms delay. The labels allowed us to test whether a starting bias best explained default bias: because the “KEEP” label was always in the same location for each participant and used the same response key, participants could be biased toward that label without having even seen, identified, or assessed the attributes of the options on screen.

Food rating task: wanting

Participants rated all the experimental foods on a five-point scale asking “How much do you want to eat this food after the experiment?” This was performed after the default task so that participants would not think the preselection algorithm used these “wanting” ratings to designate the default.

Eye gaze metrics

Gaze position was collected during all tasks with a Tobii T60 remote eye-tracking system at a temporal resolution of 60 Hz (±.002 Hz). Areas of interest (AOIs) were drawn around each food's box with a 25-pixel buffer to account for small inaccuracies in calibration. We calculated total gaze time to each AOI for each trial and participant. When using gaze data to predict subsequent choices, we decided a priori to omit the final AOI gaze, which often co-occurs with choice execution (Krajbich, Armel, and Rangel 2010); this conservative approach allowed us to draw stronger claims about the attentional antecedents of decisions (we also report analyses including final gaze). We decided a priori to exclude trials where the eye tracker could not detect gaze location for over 50% of samples (<5% of trials removed, on average).
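The gaze metrics above can be sketched as follows. This is a hypothetical reconstruction, not the authors' pipeline: it assumes each trial arrives as a list of 60 Hz samples, each an (x, y) pixel coordinate or `None` when gaze was not detected, and it assumes that a "gaze" is a run of consecutive samples on the same AOI, with off-AOI and missing samples ignored when forming runs. The names `AOI`, `label_samples`, and `gaze_time_by_aoi` are illustrative.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Sequence, Tuple

SAMPLE_MS = 1000.0 / 60.0  # duration of one sample at 60 Hz

Sample = Optional[Tuple[float, float]]  # (x, y) in pixels; None = gaze not detected


@dataclass
class AOI:
    """Rectangular area of interest around a food's box (pixel coordinates)."""
    left: float
    top: float
    right: float
    bottom: float

    def contains(self, x: float, y: float, buffer: float = 25.0) -> bool:
        # The buffer enlarges the box on every side to absorb calibration error.
        return (self.left - buffer <= x <= self.right + buffer
                and self.top - buffer <= y <= self.bottom + buffer)


def label_samples(samples: Sequence[Sample], aois: Dict[str, AOI],
                  buffer: float = 25.0) -> List[Optional[str]]:
    """Map each sample to the name of the AOI it falls in (or None)."""
    labels: List[Optional[str]] = []
    for s in samples:
        if s is None:
            labels.append(None)
            continue
        labels.append(next((name for name, box in aois.items()
                            if box.contains(s[0], s[1], buffer)), None))
    return labels


def gaze_time_by_aoi(samples: Sequence[Sample], aois: Dict[str, AOI],
                     omit_final: bool = True, max_missing: float = 0.5,
                     buffer: float = 25.0) -> Optional[Dict[str, float]]:
    """Total gaze time (ms) per AOI for one trial, or None if the trial is excluded.

    Trials with more than `max_missing` undetected samples are excluded. Off-AOI
    and missing samples do not break a gaze; the final gaze is dropped when
    `omit_final` is True.
    """
    if not samples or sum(s is None for s in samples) / len(samples) > max_missing:
        return None
    gazes: List[List] = []  # each entry: [aoi_name, n_samples]
    for name in label_samples(samples, aois, buffer):
        if name is None:
            continue
        if gazes and gazes[-1][0] == name:
            gazes[-1][1] += 1
        else:
            gazes.append([name, 1])
    if omit_final and gazes:
        gazes.pop()
    totals = {name: 0.0 for name in aois}
    for name, n in gazes:
        totals[name] += n * SAMPLE_MS
    return totals
```

Calling `gaze_time_by_aoi(samples, aois, omit_final=False)` gives the variant that retains the final gaze, which the text also reports.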

Analyses and Modeling

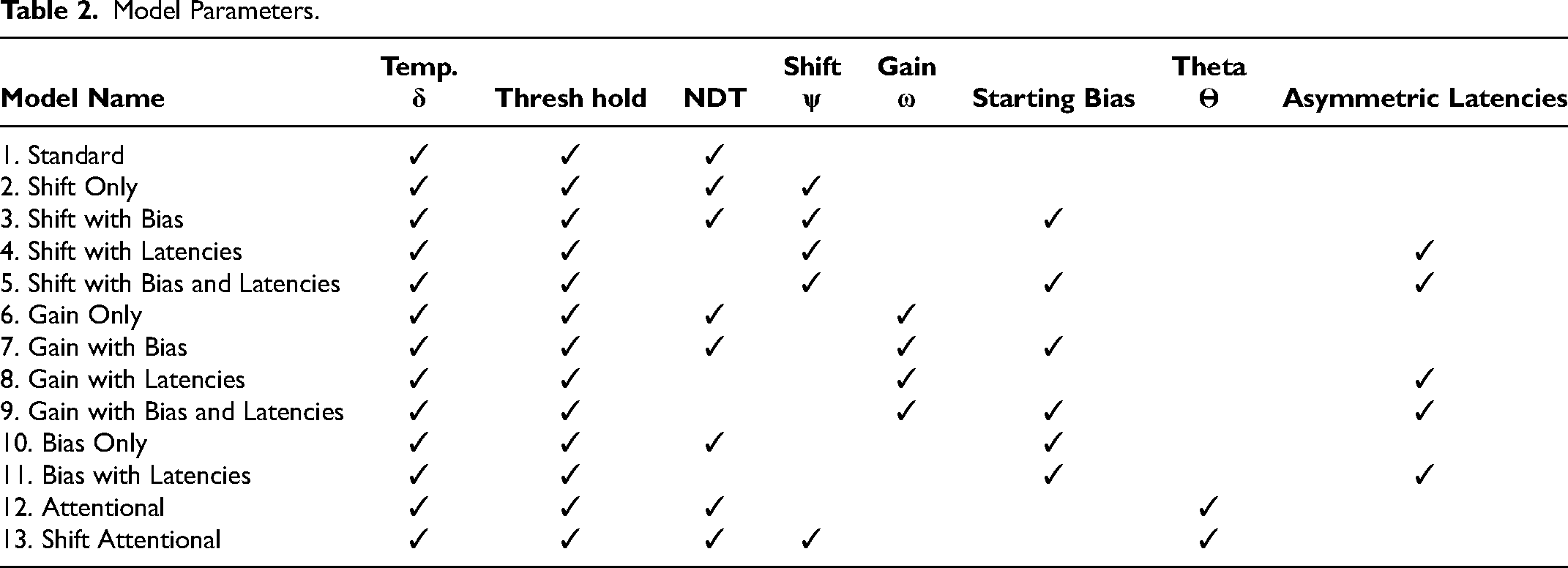

DDM estimation

Thirteen DDMs were estimated separately for each participant and task (Table 2, Web Appendix A). We again estimated parameters of the best-fitting model using half of a participant's trials (noted as “cross-validations”), and we compared these with the participant’s behavior on the withheld trials. Value was integrated at a rate determined by the temperature parameter δ. The threshold size set the amount of evidence required to make a choice. In “Gain” models, the default's wanting value was amplified by ω, which would intensify the value of the default, making a disliked option more disliked and a liked option more liked. In “Shift” models, the default's value received a positive addition ψ. This shift was unrelated to the underlying value of the food and therefore would increase the likelihood of selecting the default even if the default were disliked. We also varied the time at which option value began accumulating. A starting bias began option comparison closer to one option's threshold. We tested the individual and joint effects of these parameters. We also tested the aDDM, which applies a penalty to the unattended option, as well as an aDDM that incorporates the best-fitting default bias parameter. See Web Appendix A for a parameter recovery and simulation exercise illustrating how each parameter is expected to influence choices and response times (RTs).
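The following sketch shows one way the Gain (ω), Shift (ψ), and starting-bias parameters could enter a DDM. It assumes symmetric bounds at ±`threshold`, a drift equal to δ times the difference between the transformed default value and the alternative's value, and wanting values centered so that negative values mean disliked. These functional forms and the function names are assumptions for illustration; Table 2 and Web Appendix A define the actual 13 models, and the accumulation-timing variant is not shown. Choice probabilities use the standard first-passage probability for Brownian motion with drift between two absorbing bounds, so no simulation is needed.

```python
import math


def default_drift(v_default: float, v_alt: float, delta: float,
                  psi: float = 0.0, omega: float = 1.0) -> float:
    """Drift toward the default bound.

    Assumed form: delta * ((omega * v_default + psi) - v_alt).
    Shift Only: omega = 1, psi free.  Gain Only: psi = 0, omega free.
    """
    return delta * ((omega * v_default + psi) - v_alt)


def p_choose_default(drift: float, threshold: float, bias: float = 0.0,
                     noise: float = 1.0) -> float:
    """Probability of reaching the default (upper) bound at +threshold before
    the alternative bound at -threshold.

    `bias` in (-1, 1) places the start point at bias * threshold, so a positive
    starting bias begins closer to the default's threshold.
    """
    distance = (bias + 1.0) * threshold  # start point measured from the lower bound
    width = 2.0 * threshold              # distance between the two bounds
    if abs(drift) < 1e-12:
        return distance / width          # driftless limit: linear in start point
    k = 2.0 * drift / noise ** 2
    return (1.0 - math.exp(-k * distance)) / (1.0 - math.exp(-k * width))


if __name__ == "__main__":
    # A mildly disliked default (centered wanting -0.5) vs. a neutral alternative.
    v_d, v_a, delta, a = -0.5, 0.0, 1.0, 1.0
    for label, drift in [
        ("no default effect", default_drift(v_d, v_a, delta)),
        ("shift psi=0.5", default_drift(v_d, v_a, delta, psi=0.5)),
        ("gain omega=2", default_drift(v_d, v_a, delta, omega=2.0)),
    ]:
        print(f"{label:18s} P(default) = {p_choose_default(drift, a):.3f}")
```

In this example, the shift raises a disliked default's choice probability (from about .27 to .50), whereas the gain lowers it further (to about .12). This divergence for disliked defaults is what allows choice and RT data to discriminate between H2a and H2b.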

Model Parameters.

Results and Discussion

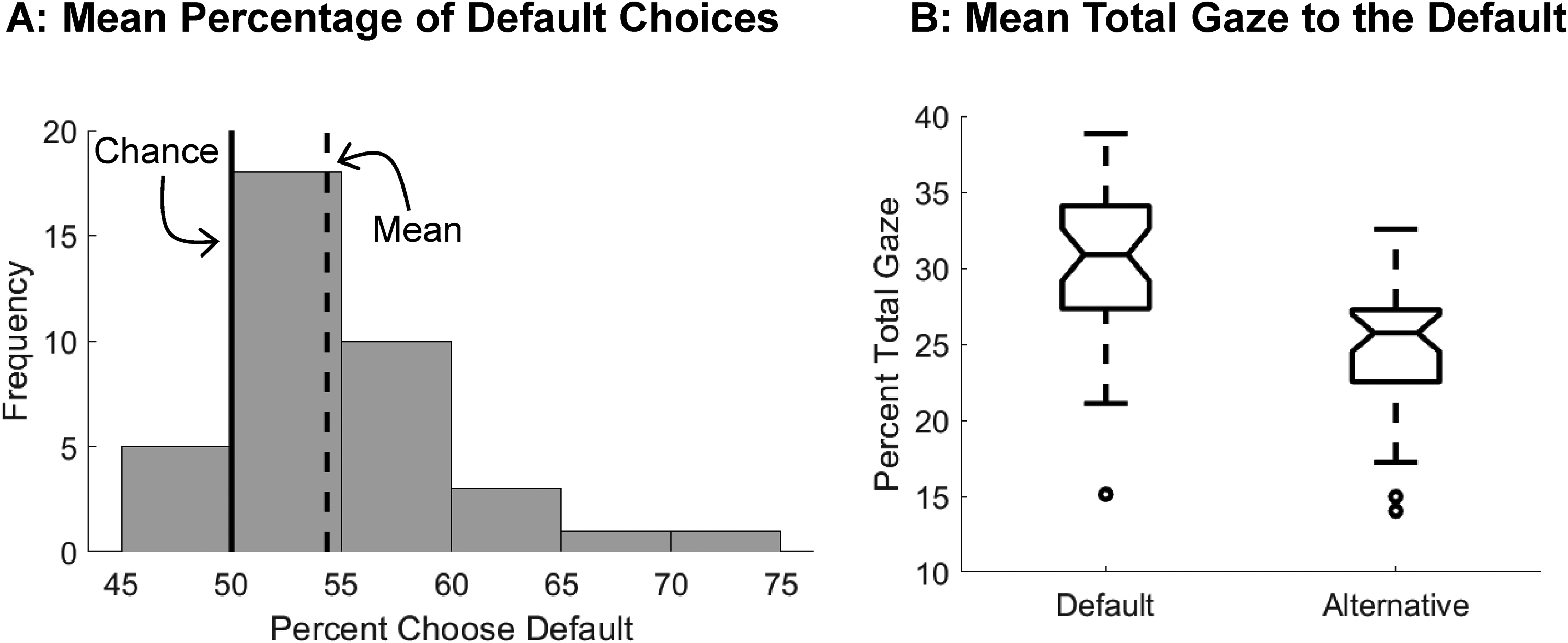

Choices were biased in favor of the default option

First, we replicated past default bias findings that participants selected the default significantly above chance (Figure 2, Panel A; M = 54%; d = .87, t(37) = 5.39,

Default Choice and Gaze Bias.

Eye gaze is biased toward the default

We hypothesized (H1) that the eye would be drawn to the default first, last, and for longest. The default received more gaze time on average (H1; Figure 2, Panel B; Mdefault = 30%, Malternative = 25%; d = 1.13, t(37) = 9.99,

Model selection

To assess which decision process features contribute to choice, we used pairwise comparisons of each DDM's Bayesian information criterion (BIC) values, which evaluate overall model fit while penalizing additional parameters. The Shift Only model (median BIC = 988) fit choices and RTs better than the Gain Only model (median BIC = 1018), supporting H2a over H2b and suggesting that the default acts through an endowment or endorsement that increases its value (d = −.14, W = 191, z = −2.60,
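A minimal sketch of this model comparison: BIC is computed per participant from each model's maximized log-likelihood, and the per-participant BIC differences are summarized with Wilcoxon signed-rank sums. It assumes the log-likelihoods are already available from fitting; it does not compute p-values or z statistics, and the convention behind the reported W may differ from the W+ and W− sums returned here. The function names are illustrative.

```python
import math
from statistics import median
from typing import Dict, Sequence, Tuple


def bic(log_likelihood: float, n_params: int, n_obs: int) -> float:
    """Bayesian information criterion: k * ln(n) - 2 * ln(L). Lower is better."""
    return n_params * math.log(n_obs) - 2.0 * log_likelihood


def signed_rank_sums(diffs: Sequence[float]) -> Tuple[float, float]:
    """Wilcoxon signed-rank sums (W+, W-) for paired differences.

    Zero differences are dropped; tied absolute differences get average ranks.
    """
    nonzero = [d for d in diffs if d != 0]
    order = sorted(range(len(nonzero)), key=lambda i: abs(nonzero[i]))
    ranks = [0.0] * len(nonzero)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and abs(nonzero[order[j + 1]]) == abs(nonzero[order[i]]):
            j += 1
        avg_rank = (i + j) / 2.0 + 1.0  # ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, nonzero) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, nonzero) if d < 0)
    return w_plus, w_minus


def compare_models(bic_a: Sequence[float], bic_b: Sequence[float]) -> Dict[str, float]:
    """Paired comparison of per-participant BICs for model A vs. model B."""
    diffs = [a - b for a, b in zip(bic_a, bic_b)]  # negative values favor model A
    w_plus, w_minus = signed_rank_sums(diffs)
    return {
        "median_bic_a": median(bic_a),
        "median_bic_b": median(bic_b),
        "share_favoring_a": sum(d < 0 for d in diffs) / len(diffs),
        "w_plus": w_plus,
        "w_minus": w_minus,
    }
```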

Modeling results also indicate that a heuristic-based starting point bias alone is not the best explanation for choice and RT data (H3). Previous work used a starting bias to parameterize default effects (Zhao, Coady, and Bhatia 2022) but did not allow for all of the alternative process explanations for this effect that we test. We replicated their results in that the starting bias parameter is significantly greater than zero when included (

Influence of the golden halo on choice

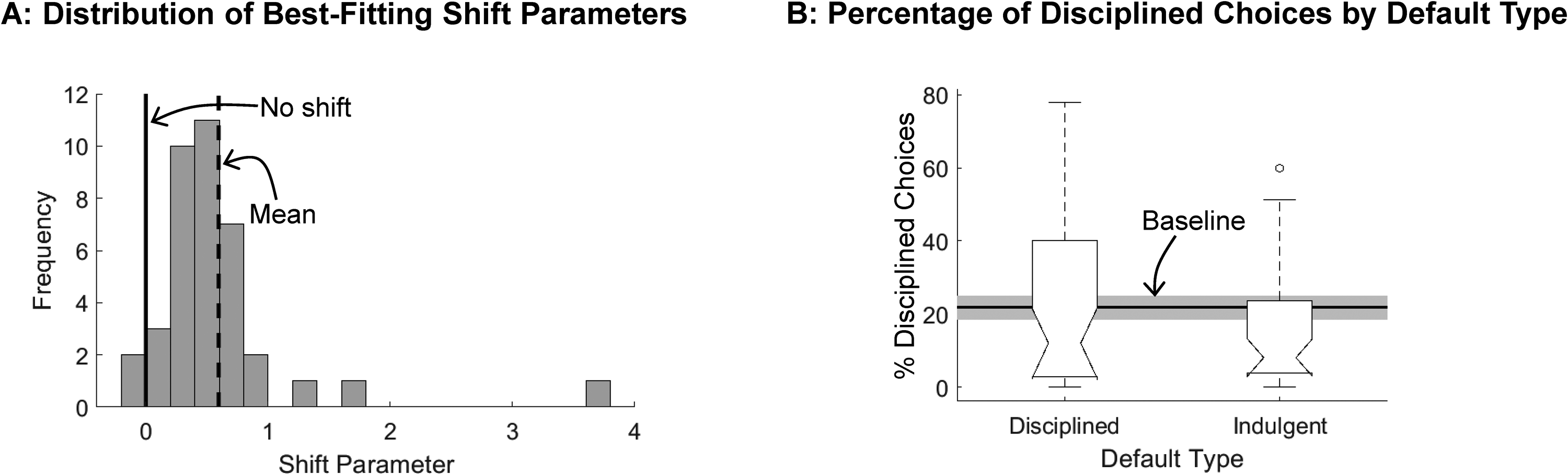

The default's value shift was larger than zero on average (Figure 3, Panel A; Min = −.04, Max = 3.80, M = .60; d = .94, t(37) = 5.76,

The Default Creates a Shift in the Decision Process.

Influence of attention bias on choice

The attentional drift diffusion model (aDDM) proposes that the value of an unattended item is penalized by θ in option comparison (Krajbich, Armel, and Rangel 2010). Because the default receives both more gaze and a positive shift in value, we combined these into one DDM, the Shift Attentional Drift Diffusion Model (saDDM), which simultaneously estimates attentional bias and the default's shift in value. This tests whether one or both parameters are needed to explain default effects. We compared this model against the aDDM without a shift parameter. See Web Appendix A for estimation methods. Replicating previous work, there was a significant discount on the nongazed item in both models and tasks (θs for aDDM: baseline: median = .56; d = −1.26, z = −5.37,
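To illustrate the attentional mechanism, this sketch computes the moment-to-moment drift under the aDDM, in which the unattended item's value is discounted by θ (Krajbich, Armel, and Rangel 2010), and under a hypothetical saDDM variant in which the shift ψ is added to the default's value before the discount. Where ψ enters the saDDM is our assumption, and noise is omitted so that the expected evidence for a given fixation sequence can be inspected directly. The function names are illustrative.

```python
from typing import Iterable, Tuple


def saddm_drift(v_default: float, v_alt: float, looking_at_default: bool,
                d: float, theta: float, psi: float = 0.0) -> float:
    """Drift toward the default bound at one moment of a trial.

    aDDM: the unattended item's value is multiplied by theta (0 <= theta <= 1).
    Assumption: the saDDM shift psi is added to the default's value before the
    attentional discount. With psi = 0 this reduces to the plain aDDM.
    """
    vd = v_default + psi
    if looking_at_default:
        return d * (vd - theta * v_alt)
    return d * (theta * vd - v_alt)


def expected_evidence(fixations: Iterable[Tuple[bool, float]], v_default: float,
                      v_alt: float, d: float, theta: float, psi: float = 0.0) -> float:
    """Noise-free evidence accumulated over a fixation sequence.

    `fixations` holds (looking_at_default, duration_ms) pairs, and `d` is the
    drift scale per millisecond. Positive totals favor the default.
    """
    return sum(saddm_drift(v_default, v_alt, on_default, d, theta, psi) * dur
               for on_default, dur in fixations)
```

For example, with equal values (1 and 1), θ = .5, and a drift scale of .001 per ms, fixating the default for 600 ms and the alternative for 400 ms yields expected evidence of .1 in the default's favor even with ψ = 0, which is how more gaze alone can bias choice; a positive ψ adds to this advantage.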

The main test of our saDDM, however, is whether the default shift ψ is still greater than zero on average even when attentional bias is allowed. This is the case for the default task (median = .22; d = .31, W = 515, z = 2.10,

Default decision process: fast, heuristic-based?

We hypothesized that defaults may reduce choice effort by engaging a fast heuristic (H3). In the “Model Selection” section, a starting bias alone was not the best explanation for choices with defaults, the first evidence against a heuristic-based process. Further, mean RTs were 257 ms

Compared with the baseline task, in the default task, decision thresholds were larger (Mbaseline = 1.43, Mdefault = 1.56; d = −.55, W = 136, z = −3.40,

Limits to the default effect

Lastly, we investigated the conditions under which the default effect is eliminated. We have shown that defaults shaped choices while controlling for the reported wanting of each item. Although default location had a significant positive influence on choice, there was an interaction between wanting value and default status (interaction B = .50, t(7,596) = 9.83,

Finally, we note that attention can be involuntarily drawn toward visually salient features like the default's green box (Anderson 2013; Awh, Belopolsky, and Theeuwes 2012) to bias choices (Blom et al. 2021; Milosavljevic et al. 2012). To rule out this explanation for our default bias, in Study 1a (Web Appendix B) we highlighted one option in a vivid color but used no preselection language, and we found that neither choice nor attention to the highlighted option was greater, indicating that visual salience is not the driving factor underlying the default bias observed here. However, we note that real-world choices may involve conditions with multiple alternatives, distraction, greater engagement or incentives, and/or possible risks—and in such conditions, default options could have different effects.

Most consumers are primarily driven by taste goals (Kourouniotis et al. 2016), so it is unclear whether healthy defaults can improve choice since they are incongruent with consumers’ goals. If not, defaults would present a more limited opportunity to alter choice in retail environments than previously thought. Here, we varied whether the default was disciplined (healthy, less tasty) or indulgent (tasty, less healthy) to test whether default bias was reduced for disciplined defaults. First, we note that participants made more healthy choices when the default was disciplined, relative to when it was indulgent (Figure 3, Panel B; Mdisciplined = 22%, Mindulgent = 16%; d = .28, t(37) = 2.88,

Study 2: Testing Dietary Defaults in the Presence of Background Goals

Next, we test the limits of default effects in the presence of conflicting background goals. The current environment induces short-term hedonic goals, making long-term goals, such as the healthfulness of foods, less accessible (Simmons, Martin, and Barsalou 2005). Here, we induced goals of eating either what tastes good (“taste goal”) or what is healthier (“health goal”). We hypothesize that when taste goals are induced, disciplined default bias will be reduced, but not eliminated, relative to a health goal or no goal at all. This allows us to understand the constraints defaults may face in the presence of strong consumer preferences or goals. This matters because, where default effects are eliminated, other nudges should be considered instead.

Method

Participants

Recruitment and screening were identical to Study 1 (see “Study 2 Replication” in Web Appendix C for details). Fifty-five young adults (35 female, 19 male, 1 prefer not to answer; Mage = 24.1 years, SD = 8 years) completed this experiment. Four additional participants were recruited, consented to the study, and were paid, but no data was collected for them due to equipment failure.

Background goals

Participants were randomly assigned to a condition. After the ratings task, participants read one of two short scripts embedded in the task instructions (Web Appendix B), describing the importance of eating either healthier (“health goal”; N = 24) or tastier (“taste goal”; N = 24) foods. To minimize concerns about differential understanding of the two goals, the scripts were approximately matched on sentence structure, word count, and reading level.

Results and Discussion

Study 2 replicated key findings from Study 1 (see Web Appendix C). This included the DDM comparisons performed for Study 1, which again indicated that the Shift-Only model best fit the data. Next, we report how goals interacted with default identity to bias choice.

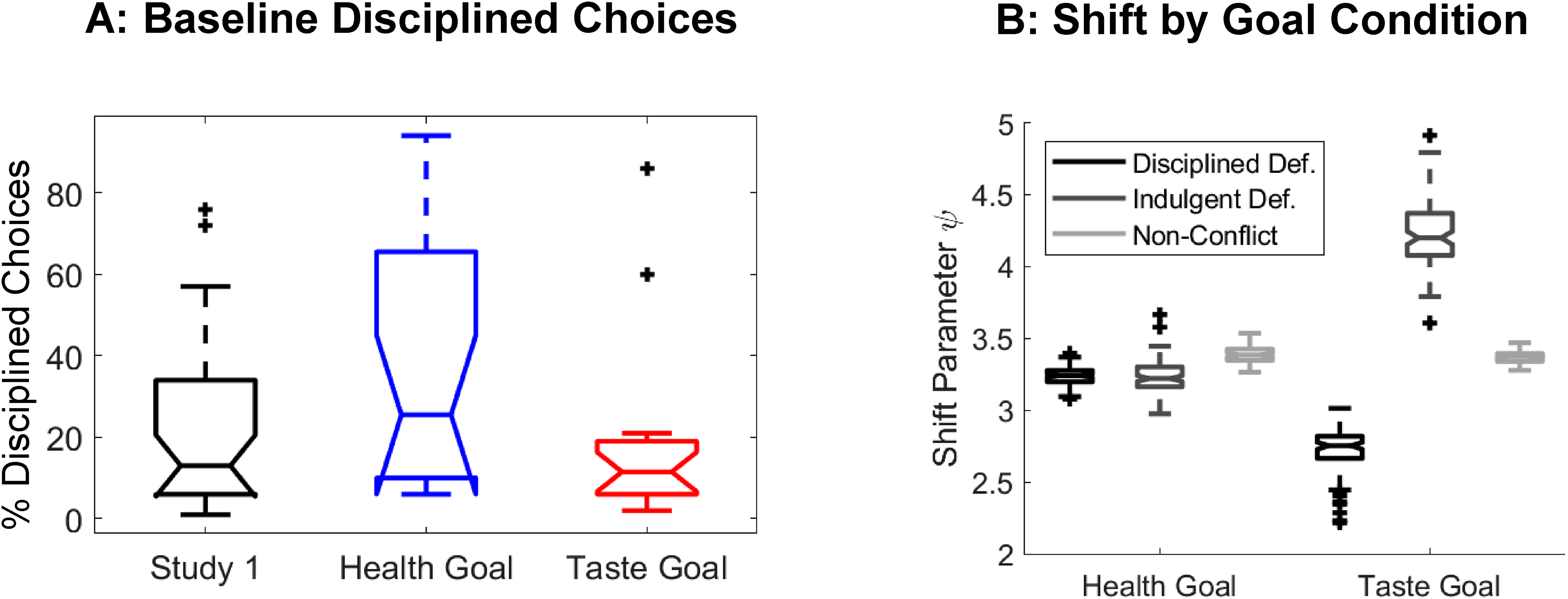

Validating goal manipulation

First, we validated that the manipulation induced a goal of focusing on either the taste or health of foods by confirming that health goal participants made more disciplined choices than taste goal participants in the baseline task (Figure 4, Panel A; Mhealth = 36.25, Mtaste = 17.67; d = .72, t(36) = 2.19,

Study 2: Disciplined Choices, Shift, Differed by Goal.

Defaults were more powerful in those with utilitarian goals

As in Study 1, we found that defaults are most powerful when options are equally wanted, with decreasing power as the default becomes less preferred (see Web Appendix C). However, the statistically significant interaction between wanting and default indicator only holds in the taste goal condition (health: interaction B = .03, t(4,796) = .49,

Default and goal type congruence

We predicted that incongruent defaults—those that conflict with the background goal—would be less influential. For example, we predicted that disciplined defaults would be less effective than indulgent defaults for participants given taste background goals. We test this in several ways. First, there was no difference in the

In Study 1, indulgent defaults led to more indulgent choices compared with the baseline task. Echoing our results—that defaults are more powerful in the health goal condition—we find that this holds in the health goal, but not the taste goal, condition (Figure W10; health goal: Mbaseline = 36%, Mdefault = 29%; d = −.25, t(19) = −2.91,

The default's golden halo differs by congruence with goals

Next, we investigate whether the default's golden halo, estimated by the DDM's shift parameter, behaves differently depending on whether it is congruent with a participant's background goal. For example, a disciplined default may receive a smaller shift when participants are given a goal that conflicts with its disciplined identity (i.e., a taste goal). To assess this while retaining sufficient estimation power, we pooled participants and estimated DDM parameters for each goal group and default type separately. To obtain a sense of parameter variance, we estimated 100 iterations of each model. We found that the mean shift was positive for all default types and goal conditions (Figure 4, Panel B;

Study 3: Effects of Default Options in Single Consumer Choices

Studies 1 and 2 illustrate the default's decision process advantages by combining lab experiments, process tracing, and cognitive modeling. Although this approach provides insight into underlying mechanisms, the tasks used differ from typical consumer choices. For one, the repeated-trials nature of the tasks, which is necessary to achieve sufficient power for eye-tracking and modeling results, limits their verisimilitude (Li et al. 2022). Here, to increase generalizability, we assess default effects in a large-sample online panel study in which each participant makes a single incentive-compatible choice. We further extend our findings to a new domain: hedonic and utilitarian gift certificates. Across many choice contexts, consumers choose between hedonic and utilitarian options, for example, whether to spend money on something indulgent (e.g., an expensive coffee, as represented by our Starbucks gift card) or on something more practical that benefits the consumer in the longer run (e.g., a home improvement store purchase). Study 3 therefore moves outside the lab while still allowing us to assess how the default's influence on the decision process biases choice in hedonic–utilitarian trade-offs. Further, gift cards allow us to run a large-sample online study while maintaining some incentive compatibility, because gift cards are delivered to participants. Study 3 also increases generalizability by increasing the sample size (N = 1,710).

Method

Procedure: pretest

To select brands widely perceived to be either hedonic or utilitarian, we asked 121 CloudResearch (Amazon Mechanical Turk) workers to evaluate 41 U.S. nationwide brands. We excluded 16 participants for failing attention check questions, providing identical responses for each company, or reporting that they had never heard of Amazon. Participants rated brands on a hedonic–utilitarian scale and reported both their willingness to pay (WTP) for and their happiness at being gifted a $25 gift certificate. Happiness was rated on a five-point scale and was designed to substitute for the five-point reported food wanting measure in the same analyses as in Studies 1 and 2; thus, we refer to it as an option's “wanting value.” These answers were used to create three brand pairs for the choice task. The selected brands were highly familiar on average, and each brand pair was designed to maximize the difference between its two brands' hedonic–utilitarian ratings while matching them as closely as possible on happiness ratings. The exact brand pairs used were Home Depot versus Netflix, Walgreens versus Cold Stone Creamery, and Bed, Bath & Beyond versus Starbucks. Detailed methods and a full report on the companies tested, including ratings distributions, can be found in Web Appendix D.
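The text does not specify how pairs were chosen beyond the two criteria above, so the following is a hypothetical sketch of one way to operationalize them: each candidate pair is scored by its hedonic–utilitarian gap minus a weighted happiness mismatch (the weight `lam` is an arbitrary assumption), and disjoint pairs are picked greedily. The function names and the rating keys `hu` and `happy` are illustrative.

```python
from itertools import combinations
from typing import Dict, List, Tuple

Ratings = Dict[str, float]  # {"hu": mean hedonic-utilitarian rating, "happy": mean happiness}


def pair_score(a: Ratings, b: Ratings, lam: float = 2.0) -> float:
    """Reward a large hedonic-utilitarian gap; penalize a happiness mismatch."""
    return abs(a["hu"] - b["hu"]) - lam * abs(a["happy"] - b["happy"])


def select_pairs(brands: Dict[str, Ratings], n_pairs: int = 3,
                 lam: float = 2.0) -> List[Tuple[str, str]]:
    """Greedily pick the highest-scoring pairs, using each brand at most once."""
    ranked = sorted(combinations(sorted(brands), 2),
                    key=lambda p: pair_score(brands[p[0]], brands[p[1]], lam),
                    reverse=True)  # stable: ties keep alphabetical order
    used, chosen = set(), []
    for a, b in ranked:
        if a in used or b in used:
            continue
        chosen.append((a, b))
        used.update((a, b))
        if len(chosen) == n_pairs:
            break
    return chosen
```

With four placeholder brands that differ on only one dimension at a time, the greedy pass pairs brands that differ in hedonic–utilitarian rating but share a happiness rating. A greedy pass is not guaranteed to find the globally best set of pairs; an exhaustive search over disjoint pairings would be feasible for 41 brands only with further pruning.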

Procedure: choice task

Using the same recruitment tool, we recruited 1,710 participants who provided informed consent before receiving instructions (858 female, 821 male, 13 nonbinary, 1 agender, 17 prefer not to say; Mage = 42 years, SD = 13). Participants were informed, without deception, that some of them would be randomly chosen to be emailed the gift certificate they chose, in a randomly determined amount up to $100 (see Web Appendix D for instructions). Then, participants saw an example choice screen using two brands that were not used in the task and scored in the middle of both the hedonic–utilitarian and happiness scales (McDonalds and Taco Bell). Lastly, they made one choice between two gift certificates. Brand logos were displayed one above the other (Figure W13). Participants were randomly assigned to one of three conditions paralleling the lab studies: (1) baseline (no default; N = 515), in which they indicated “Top” or “Bottom” for the gift certificate they wanted; (2) default hedonic (N = 509); and (3) default utilitarian (N = 504). In the default conditions, participants responded “Keep” to keep the option surrounded by the blue box or “Switch” to select the other item. The company shown on top and the locations of the response options were randomly determined for each participant, with roughly equal cell sizes across groups. Then, participants answered a series of questions about both companies and some demographic questions. Participants were excluded for failing attention check questions, having an RT more than two standard deviations below or above the mean RT for their condition, or indicating that they had never heard of either company (11%; final N = 1,528). All analyses were preregistered (AsPredicted.com #84950) unless otherwise indicated.
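The exclusion rules can be sketched as a filter over participant records. The record fields and function names are hypothetical, and two details are our assumptions: the RT window (condition mean ± 2 SD) is computed over all responses in a condition before the other exclusions are applied, and "never heard of either company" is represented by a single boolean flag. Each condition needs at least two responses for `statistics.stdev`.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean, stdev
from typing import Dict, List, Sequence, Tuple


@dataclass
class Response:
    pid: str
    condition: str          # e.g., "baseline", "default_hedonic", "default_utilitarian"
    rt_ms: float
    passed_attention: bool
    unfamiliar: bool        # hypothetical flag: reported never having heard of the company


def rt_bounds(responses: Sequence[Response],
              sd_cutoff: float = 2.0) -> Dict[str, Tuple[float, float]]:
    """Per-condition RT window: (mean - k * SD, mean + k * SD)."""
    by_cond: Dict[str, List[float]] = defaultdict(list)
    for r in responses:
        by_cond[r.condition].append(r.rt_ms)
    bounds = {}
    for cond, rts in by_cond.items():
        m, s = mean(rts), stdev(rts)
        bounds[cond] = (m - sd_cutoff * s, m + sd_cutoff * s)
    return bounds


def apply_exclusions(responses: Sequence[Response],
                     sd_cutoff: float = 2.0) -> List[Response]:
    """Keep responses that pass attention checks, know the brands, and fall in the RT window."""
    bounds = rt_bounds(responses, sd_cutoff)
    kept = []
    for r in responses:
        lo, hi = bounds[r.condition]
        if r.passed_attention and not r.unfamiliar and lo <= r.rt_ms <= hi:
            kept.append(r)
    return kept
```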

Results and Discussion

Validation

Participants chose according to their preferences; top-option choice related to three preference indicators (shopping frequency B = 1.06,

Default chosen more often than alternative

A logistic regression relating top choice to default location (1 top, 0 bottom), which option was on top (1 utilitarian, 2 hedonic), and their interaction indicated a significant effect of default (B = 2.86,
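The regression described here can be sketched without an iterative solver. With the stated coding (default location 1 = top, 0 = bottom; top option 1 = utilitarian, 2 = hedonic) plus their interaction, four coefficients describe four cells, so the model is saturated and its maximum-likelihood estimates reproduce each cell's observed log-odds exactly. The sketch returns point estimates only (no standard errors or p-values), and the `(default_top, top_type, chose_top)` row format and function names are assumptions.

```python
import math
from collections import defaultdict
from typing import Dict, Iterable, Tuple

CELLS = [(0, 1), (0, 2), (1, 1), (1, 2)]  # (default_top, top_type)


def logit(p: float) -> float:
    return math.log(p / (1.0 - p))


def fit_saturated_logit(rows: Iterable[Tuple[int, int, int]]) -> Dict[str, float]:
    """Maximum-likelihood coefficients for
        logit P(top chosen) = b0 + b1*default_top + b2*top_type + b3*default_top*top_type
    with default_top in {0, 1} and top_type in {1, 2}.
    """
    counts = defaultdict(lambda: [0, 0])  # cell -> [top choices, total choices]
    for d, t, y in rows:
        counts[(d, t)][0] += y
        counts[(d, t)][1] += 1
    cell_logit = {}
    for cell in CELLS:
        k, n = counts[cell]
        if n == 0 or k in (0, n):
            raise ValueError(f"cell {cell} is empty or separated; the MLE is undefined")
        cell_logit[cell] = logit(k / n)
    # Solve the four cell equations for the four coefficients.
    b2 = cell_logit[(0, 2)] - cell_logit[(0, 1)]
    b0 = cell_logit[(0, 1)] - b2
    b3 = cell_logit[(1, 2)] - cell_logit[(1, 1)] - b2
    b1 = cell_logit[(1, 1)] - b0 - b2 - b3
    return {"intercept": b0, "default_top": b1, "top_type": b2, "interaction": b3}


def predict_logit(coef: Dict[str, float], default_top: int, top_type: int) -> float:
    return (coef["intercept"] + coef["default_top"] * default_top
            + coef["top_type"] * top_type
            + coef["interaction"] * default_top * top_type)
```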

Hedonic and utilitarian defaults

Because Studies 1 and 2 found that default bias was larger for indulgent defaults and absent for disciplined defaults, we preregistered the prediction that defaults would have a weaker effect in the utilitarian than in the hedonic default condition when compared with a condition without defaults. This prediction is confirmed by the significant interaction between the default type and location variables in the regression reported previously. Further, participants made more hedonic choices in the hedonic default condition than in the baseline condition (baseline = 33%, hedonic default = 39%, z = −2.22,

Default effects as a function of preference

In Studies 1 and 2, default effects varied depending on the default's value. To assess this in Study 3, we used a logistic regression to relate top choice to the default's location, its value, and an interaction between the two. We found that wanting value fully mediated the default's influence on choice (default B = −.821,

Default's golden halo

Next, we estimated the Shift Only DDM, parameterizing the default's “golden halo” for hedonic and utilitarian gift certificates in a single-choice task. The model used participants’ rating of how happy they would be to receive a gift certificate (a five-point scale) as an estimate of the option’s wanting value. As we observe only one data point per participant, we utilized a fixed-effects estimation for each condition and repeated the estimation 100 times to obtain a measure of parameter variance. As expected, the shift parameter was not different from zero for the baseline condition, but was positive for both default conditions (Figure W14; not preregistered; Mbaseline = −.0024; d = −.001, t(99) = −.01,
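To make the Shift Only account concrete, here is a minimal Python sketch of a drift diffusion process in which default status adds a constant to the default's value before the value difference drives evidence accumulation. The drift form d × ((V_default + shift) − V_alt), the symmetric boundaries at ±1, the unit noise, the Euler step, and the 0.3 s nondecision time are all illustrative assumptions; this is not the authors' estimation code, and it does not reproduce the fixed-effects fitting or the 100 repeated estimations described above.

```python
import math
import random
from typing import Optional, Tuple


def shift_only_drift(v_default: float, v_alt: float, d: float, shift: float) -> float:
    """Hypothetical Shift Only drift: the default's value gets an additive bonus."""
    return d * ((v_default + shift) - v_alt)


def simulate_trial(drift: float, threshold: float = 1.0, noise_sd: float = 1.0,
                   dt: float = 0.002, ndt: float = 0.3, max_time: float = 10.0,
                   rng: Optional[random.Random] = None) -> Tuple[bool, float]:
    """Euler simulation of one unbiased DDM trial (evidence starts at 0).

    The upper boundary (+threshold) means "chose default"; the lower boundary
    means "chose alternative". Returns (chose_default, RT in seconds).
    """
    rng = rng or random.Random()
    evidence, t = 0.0, 0.0
    step_sd = noise_sd * math.sqrt(dt)
    while abs(evidence) < threshold and t < max_time:
        evidence += drift * dt + rng.gauss(0.0, step_sd)
        t += dt
    # If max_time is reached without a boundary hit, take the side the evidence favors.
    return evidence > 0, t + ndt


def p_choose_default(v_default: float, v_alt: float, d: float, shift: float,
                     n_trials: int = 1000, seed: int = 0) -> float:
    """Proportion of simulated trials on which the default is chosen."""
    rng = random.Random(seed)
    drift = shift_only_drift(v_default, v_alt, d, shift)
    wins = sum(simulate_trial(drift, rng=rng)[0] for _ in range(n_trials))
    return wins / n_trials


if __name__ == "__main__":
    # Two equally wanted options (e.g., both rated 3 on a five-point scale).
    print(p_choose_default(3, 3, d=0.5, shift=0.0))  # near 0.5: no halo
    print(p_choose_default(3, 3, d=0.5, shift=1.0))  # above 0.5: the halo tips the balance
```

For an unbiased diffusion with boundaries at ±a, drift v, and noise σ, the probability of reaching the upper boundary is 1/(1 + exp(−2va/σ²)), so with these settings the second call should land near 0.73 even though the two options are equally valued.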

Default choice speed

Lastly, we assessed whether one-off default choices were slower. We found that participants took 340 ms longer on average in the default condition (Mbaseline = 6,356 ms, Mdefault = 6,697 ms; d = −.10, t-test of log-transformed RTs: t(1,526) = −1.78,
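The reported t(1,526) equals the final N of 1,528 minus 2, which is what a pooled-variance (Student's) two-sample t-test yields. The sketch below assumes that test and applies it to log-transformed RTs; the function name and the example RTs are hypothetical, not study data.

```python
import math
from statistics import mean, variance
from typing import Sequence, Tuple


def pooled_t_log_rt(rts_a: Sequence[float], rts_b: Sequence[float]) -> Tuple[float, int]:
    """Student's two-sample t-test (equal variances assumed) on log RTs.

    Returns (t, df) with df = n_a + n_b - 2. A negative t means group a has
    the shorter RTs on the log scale.
    """
    la = [math.log(rt) for rt in rts_a]  # log transform reduces the right skew of RTs
    lb = [math.log(rt) for rt in rts_b]
    na, nb = len(la), len(lb)
    pooled_var = ((na - 1) * variance(la) + (nb - 1) * variance(lb)) / (na + nb - 2)
    se = math.sqrt(pooled_var * (1 / na + 1 / nb))
    return (mean(la) - mean(lb)) / se, na + nb - 2


if __name__ == "__main__":
    baseline_ms = [5200, 6100, 7400, 5900, 6600]  # hypothetical RTs
    default_ms = [5600, 6900, 7100, 6300, 7800]   # hypothetical RTs
    t, df = pooled_t_log_rt(baseline_ms, default_ms)
    print(f"t({df}) = {t:.2f}")
```

Passing the baseline group first gives a negative t when default choices are slower, which matches the sign convention in the text.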

Study 4: Default Biases in Multi-Alternative Single Choices

In Study 4 we test several critical elements of default choices that could not be addressed in Studies 1–3. First, our prior studies presented only two alternatives to participants. While such choices are common in consumer settings (e.g., fries as a default that can be swapped for salad), it is also common to have more than two options, only one of which is the default. Second, Studies 1–3 required participants to select an option regardless of whether they were keeping the default or switching to the alternative. We did this to control for the effort advantage that the default often has. However, in the field, the default is frequently preselected, and no action is needed to keep it. Requiring an active response for both options did not allow us to test for the possibility of faster default choices when one option is preselected, which could lend support to the heuristic explanation for default choices. Lastly, Studies 1 and 2 were lab-based studies with relatively small samples. Study 4, like Study 3, increases generalizability by using a larger sample and a more representative participant pool.

To address these points, in Study 4 we first tested a default in a choice among five options instead of only two, allowing us to test defaults outside two-alternative forced-choice (2AFC) tasks. Further, one of the five options was preselected for participants. This allowed us to test defaults, and in particular our finding that defaults are less heuristic-based than previously assumed, in a common field situation in which the physical effort of selecting the default is lower than that of switching to the alternative. In addition, the choice of a color did not affect participants’ rewards, only what they saw on the screen. This extended our findings from consequential, incentive-compatible designs to a context in which defaults are not consequential for reward outcomes (although some may argue that seeing your chosen color on the screen is a reward in itself).

Studies 1–3 used keep/switch choice labels. Although such language is used in the field (e.g., “switch to a salad” when fries are the default), this is not always the case. In both the color and food choice tasks in Study 4, participants made choices with a default but without labels. Instead, they selected the radio button for their chosen option, which allowed us to confirm our findings without the possible biasing effect of labels. We used the repeated-trials food choice task to estimate the DDM at the individual level without labels. As in Study 3, we did not tell participants that the default had been preselected for them by an algorithm, allowing us to test whether such language had induced an endorsement that enhanced the default's value.

Method

Procedure

Prolific (www.prolific.co) participants (N = 247; 120 female, 121 male, 6 nonbinary; Mage = 38 years, SD = 13) completed three tasks: a color choice task, a food choice task, and a food ratings task. In the color choice task, they made a single choice among five hues of blue (Figure W17a). They were told that, in the next task, the default would be highlighted in the color they chose. Participants were randomly assigned, in equal numbers, to which color was preselected, and the radio button for that option was already selected. The instructions did not mention that one option would be preselected.

Next, in the food choice task, participants made 100 choices between two foods (Figure W17b-c). Participants were told that one food was sometimes preselected for them, and that it would be indicated by being inside a box in the same blue hue they selected in the color choice task. Condition was randomly determined per trial, with fewer than five trials of the same condition in a row. Default location (left or right) was randomized. Then, participants rated each food on how much they would want to eat it. Lastly, they filled out demographic questions.
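The per-trial randomization described above (condition assigned so that fewer than five trials of the same condition occur in a row, with the default's side randomized) can be implemented with simple rejection sampling. The sketch assumes, hypothetically, an even 50/50 split between default and no-default trials and an independent left/right draw for each default trial; the text does not state the split, and the function names are illustrative.

```python
import random
from itertools import groupby
from typing import List, Optional, Sequence, Tuple


def longest_run(seq: Sequence[str]) -> int:
    """Length of the longest run of identical consecutive items."""
    return max((len(list(g)) for _, g in groupby(seq)), default=0)


def make_trial_order(n_trials: int = 100, max_run: int = 4,
                     seed: Optional[int] = None) -> List[Tuple[str, Optional[str]]]:
    """Return (condition, default_side) per trial; side is None on baseline trials."""
    rng = random.Random(seed)
    # Assumption: half default trials, half baseline (no-default) trials.
    conditions = ["default"] * (n_trials // 2) + ["baseline"] * (n_trials - n_trials // 2)
    while True:
        rng.shuffle(conditions)
        if longest_run(conditions) <= max_run:  # i.e., fewer than five in a row
            break
    # The default's location is drawn independently on each default trial.
    return [(c, rng.choice(["left", "right"]) if c == "default" else None)
            for c in conditions]


if __name__ == "__main__":
    order = make_trial_order(seed=1)
    print(order[:6])
```

Rejection sampling keeps the condition counts exactly balanced while enforcing the run constraint; for 100 trials, a valid shuffle is typically found within a few dozen attempts.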

In the food choice task, food pairs were fixed across participants and designed so that the average participant would like both options similarly well. This was accomplished using the food ratings from our prior studies (193 unique foods across 538 participants). Pairs were selected based on the highest average and lowest standard deviation of reported wanting values, and both foods in each pair were rated as either healthy or unhealthy, as determined by mean health ratings. This resulted in 21 common foods, such as almonds, chips, apples, bananas, cookies, and grapes.

Sample size was determined in the following manner. Power analysis in Study 3's hedonic condition indicated that 212 participants were needed to detect an effect at

Results and Discussion

Defaults bias, but do not hasten, choice

In the color choice task, participants chose the color in which the default would be highlighted in the following food choice task. There were five options, each a different shade of blue, and the default option was randomized (Figure W17a). Participants expressed clear color preferences, with bright and dark blue being the favorites (selected colors: aqua = 10%, bright = 27%, turquoise = 14%, indigo = 22%, dark = 27%). Despite these preferences, participants kept the preselected color more often than the chance rate of 20% (27%; z = 2.59,
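With five colors and a randomly assigned preselection, keeping the preselected color by chance has probability 1/5. A one-sample proportion z-test against that rate is sketched below. It assumes the normal approximation with the standard error computed under the null, which may differ from the exact test the authors ran, and the counts in the example are hypothetical.

```python
import math


def proportion_z(successes: int, n: int, p0: float) -> float:
    """One-sample z statistic for an observed proportion versus a null rate p0."""
    p_hat = successes / n
    se_null = math.sqrt(p0 * (1 - p0) / n)  # standard error under H0
    return (p_hat - p0) / se_null


if __name__ == "__main__":
    chance = 1 / 5  # five options, one preselected at random
    print(round(proportion_z(30, 100, chance), 2))  # 30% kept vs. 20% chance
```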

Next, in the food choice task, participants made 100 binary food choices in which the default was highlighted by a blue box, and participants clicked the radio button beneath their choice (Figure W17b-c). No “keep” or “switch” labels were used, unlike in Studies 1–3. The default was chosen more often than chance (56% of the time; d = .39, t(237) = 6.06,
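Testing whether the default was chosen more often than 50% can be framed as a one-sample t-test on each participant's proportion of default choices. In the sketch below, Cohen's d is the mean difference from 0.5 divided by the standard deviation, so t = d·√n. Under that definition, the reported d = .39 with 238 participants (df = 237) implies t ≈ .39 × √238 ≈ 6.0, close to the reported 6.06 given the rounding of d. The per-participant proportions in the example are hypothetical.

```python
import math
from statistics import mean, stdev
from typing import Sequence, Tuple


def one_sample_t(values: Sequence[float], mu0: float) -> Tuple[float, int, float]:
    """Return (t, df, Cohen's d) for testing mean(values) against mu0."""
    n = len(values)
    diff = mean(values) - mu0
    sd = stdev(values)  # sample SD (n - 1 denominator)
    d = diff / sd
    t = diff / (sd / math.sqrt(n))  # equivalently d * sqrt(n)
    return t, n - 1, d


if __name__ == "__main__":
    # Hypothetical per-participant proportions of default choices in default trials.
    props = [0.56, 0.62, 0.48, 0.59, 0.53, 0.61]
    t, df, d = one_sample_t(props, 0.5)
    print(f"t({df}) = {t:.2f}, d = {d:.2f}")
```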

Model selection

Lastly, we performed an exploratory (not preregistered) DDM comparison. Although the task instructions did note that one option was “preselected for you,” we made three important departures from Studies 1 and 2 to remove features that could have given the Shift Only model an unfair advantage. First, participants in Studies 1 and 2 were told that an “algorithm” selected the default; we did not use this language here. Second, in Studies 1 and 2 participants rated foods on taste and health beforehand. Here, they did not, so participants had no reason to believe that we used their preferences to construct choices. Therefore, an endorsement-based explanation for the Shift Only model's advantage is less likely. Third, the words “keep” and “switch” were not used, decreasing the possibility that we were unnaturally inducing an endowment with “keep” language. Despite these changes, the Shift Only model remained the best-fitting value function (vs. the Gain Only model; BIC medians = 215, 230; d = −.13, W = 5727, z = −7.28,
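Model selection here rests on BIC, computed per participant for each model and then compared across participants with a paired signed-rank test. The sketch below shows both pieces under stated assumptions: the log-likelihoods and parameter counts fed in are hypothetical, and the signed-rank statistic follows one common convention (W is the smaller of the positive- and negative-rank sums, normal approximation without tie correction), which may not match the software that produced the reported W and z.

```python
import math
from statistics import median
from typing import List, Sequence, Tuple


def bic(log_likelihood: float, n_params: int, n_obs: int) -> float:
    """Bayesian information criterion: k*ln(n) - 2*lnL (lower is better)."""
    return n_params * math.log(n_obs) - 2.0 * log_likelihood


def signed_rank(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Wilcoxon signed-rank test for paired samples (normal approximation).

    Returns (W, z), where W is the smaller of the positive- and negative-rank
    sums. Zero differences are dropped, tied |differences| get average ranks,
    and no tie correction is applied to the variance.
    """
    diffs = [a - b for a, b in zip(x, y) if a != b]
    n = len(diffs)
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks: List[float] = [0.0] * n
    i = 0
    while i < n:
        j = i
        # Extend j over a block of tied absolute differences.
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1  # mean of 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    w_pos = sum(r for r, dv in zip(ranks, diffs) if dv > 0)
    w_neg = sum(r for r, dv in zip(ranks, diffs) if dv < 0)
    w = min(w_pos, w_neg)
    mu = n * (n + 1) / 4
    sigma = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    return w, (w - mu) / sigma


if __name__ == "__main__":
    # Hypothetical per-participant BICs (lower = better) for two models.
    bic_shift = [bic(ll, 4, 100) for ll in (-95.0, -101.2, -88.7, -110.4, -99.9)]
    bic_gain = [bic(ll, 4, 100) for ll in (-99.5, -104.0, -90.1, -118.3, -101.0)]
    print("median BIC:", median(bic_shift), median(bic_gain))
    print("W, z =", signed_rank(bic_shift, bic_gain))
```

A lower median BIC, together with a signed-rank test showing that per-participant differences consistently favor one model, is the pattern the text reports for Shift Only (median 215) over Gain Only (median 230).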

Default's golden halo

In default trials, the DDM shift parameter was greater than zero (M = 2.57; d = 1.42, t(226) = 21.46,

Default choices: fast or slow?

Results of our previous studies and the color choice task continued to reject H3, that defaults would engage a heuristic that reduced decision time. In the food choice task, we also assessed whether default trials sped or slowed choice. We found that there was no difference in mean RTs between conditions (Mbaseline = 2.257 s, Mdefault = 2.261 s; t-test of log-transformed RTs, d = .01, t(225) = −.82,

Study 4 extends our account of the process underlying default bias beyond 2AFC repeated-trial measures to a new choice context of aesthetic preferences, in which one option had already been ticked, and to a larger, more generalizable sample. We also demonstrate that the golden halo conferred on the default holds when the default is indicated simply by highlighting its box in a distinct color, rather than by (perhaps heavy-handed) “keep” and “switch” labels. Finally, we confirm that defaults do not seem to engage a heuristic that speeds up choice and reduces the evidence required to decide.

Discussion

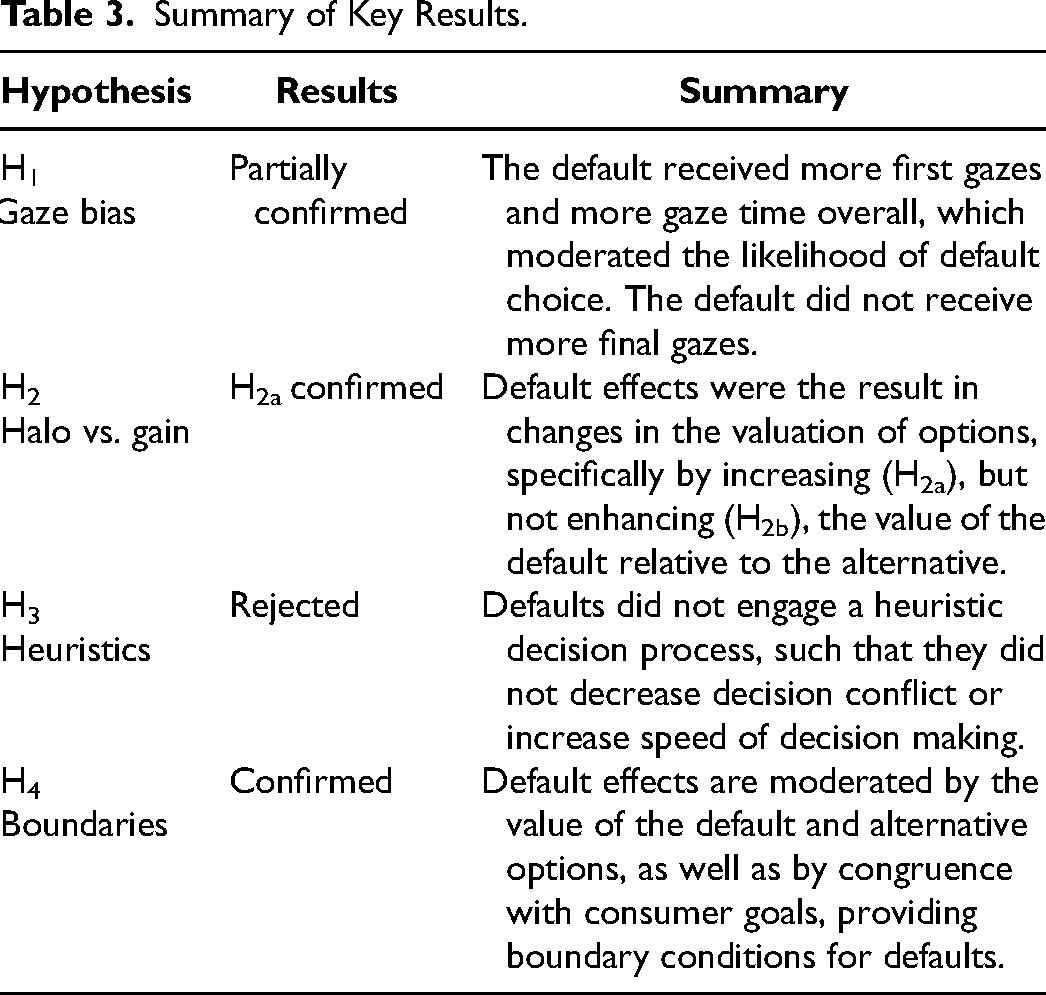

Across four studies, we found that designating one option as a default in simple binary choices lent it a “golden halo”: it shifted the default's value positively relative to the alternative, leading to preference reversals in choice. Table 3 summarizes our key findings. In Studies 1 and 2, we hypothesized and found that defaults received a greater proportional share of attention, lending them an additional advantage (H1). Study 1a, in which one option was highlighted but language about preselection was omitted, did not show the same attentional or choice bias. This supports the conclusion that the attentional effect found in Studies 1 and 2 is due not to the visual salience of the default but to the status conferred on it. Across Studies 1–4, we find that this status lends the default an additional amount of positive value, rather than an amplification of its value as would be expected from an attentional effect alone (H2a). In Studies 1 and 2, this was confirmed by comparing a series of 13 alternative explanations for default effects in the decision process and by testing against a common model of attention's influence on choice, which has been shown across a range of studies to predict choice better than a model without attention (Krajbich, Armel, and Rangel 2010; Krajbich et al. 2012; Smith and Krajbich 2018; Tavares, Perona, and Rangel 2017). Indeed, this model and our other analyses indicated that gaze bias was a significant driver of choice. Yet the default's golden halo remained. This indicates that although the default's ability to attract a larger share of visual gaze in binary choices is a main driver of default bias, its perceived enhanced value is a driver as well. The simple value increase in the Shift Only model explains choices and response times better than a predecisional starting bias, whether alone or combined with the positive shift, and better than the attention-based Gain models. This finding is consistent across studies, even when the language stating that an algorithm selected the default, and the grounds for suspecting that participants’ preferences were used to construct choices, were removed, indicating its robustness.
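The contrast between an additive boost (Shift) and an amplification of value (Gain) can be seen directly in how each changes the drift toward the default. In the hypothetical forms below, the Shift bonus is the same whatever the option values, whereas a multiplicative gain on the default's value helps highly valued defaults more than weakly valued ones. These forms are simplifications for illustration; they are not necessarily the exact Shift Only and attention-based Gain specifications the authors fit.

```python
from typing import Callable

DriftFn = Callable[[float, float, float, float], float]


def shift_drift(v_default: float, v_alt: float, d: float, shift: float) -> float:
    """Additive halo: a constant bonus on the default's value."""
    return d * ((v_default + shift) - v_alt)


def gain_drift(v_default: float, v_alt: float, d: float, gain: float) -> float:
    """Multiplicative amplification of the default's value (gain = 1 means no effect)."""
    return d * (gain * v_default - v_alt)


def default_boost(drift_fn: DriftFn, v: float, d: float, param: float, neutral: float) -> float:
    """Extra drift toward the default, for two equally valued options, relative to no effect."""
    return drift_fn(v, v, d, param) - drift_fn(v, v, d, neutral)


if __name__ == "__main__":
    for v in (1.0, 3.0, 5.0):  # e.g., ratings on a five-point wanting scale
        s = default_boost(shift_drift, v, d=1.0, param=0.5, neutral=0.0)
        g = default_boost(gain_drift, v, d=1.0, param=1.2, neutral=1.0)
        print(f"value={v}: shift boost={s:.2f}, gain boost={g:.2f}")
```

With these illustrative settings, the shift boost stays at 0.50 at every value level, while the gain boost grows with value (0.20, 0.60, 1.00); it is this difference in how the boost scales with value that lets model comparison separate the two accounts.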

Summary of Key Results.

Defaults are often considered in the realm of heuristics, but our results contradict this assumption for simple choices. We tested a range of ways in which defaults could operate according to heuristic principles and found no evidence for this hypothesis (H3). In fact, what evidence we do find indicates that defaults are more likely to induce a slower, more cautious, and more deliberative decision process, more in line with that proposed by Brown and Krishna (2004). We hypothesized that defaults would behave in ways that indicate their heuristic nature. First, we expected a significant bias toward selecting the default at the outset of choice, before options are identified or considered. Notably, this “starting bias” was the only explanation considered in a recent investigation of default effects and other nudges (Zhao, Coady, and Bhatia 2022). Across multiple studies, some with large, generalizable samples, we find no evidence that this is the case. When a starting bias is estimated in addition to the golden halo, the starting bias drops to zero. This suggests that the golden halo absorbs all of the variance in default bias, leaving nothing for the starting bias to explain. Further converging evidence against a default heuristic comes from the absence of the changes such a heuristic would produce in RTs, in decision thresholds (the amount of evidence required to make a choice), in the rate at which information accumulates, and in the time required to estimate option values and indicate a response.

In Studies 1 and 2, we first isolated the influence of default status from several other unrelated (but often co-occurring) advantages to understand the influence of default status itself on the decision process. First, we eliminated the effort cost of switching to the alternative that is common in the field. Second, consumers may be unaware that they can swap the default for an alternative; we eliminated this by presenting both options simultaneously. Third, the default and alternative did not differ in familiarity, which eliminated the advantage that people are often more familiar with defaults. Fourth, in many examinations of default bias, the consequences of keeping the default are not felt immediately, or at all, because these studies often use hypothetical scenarios or choices whose consequences will not be realized for months or years (e.g., organ donation, retirement portfolio selection). This temporal distance could reduce the care with which people treat these choices and therefore make them more susceptible to biases like the default effect. Here, participants were expected to consume one food randomly selected from their choices immediately after the tasks, or could be emailed a gift certificate within a few days; thus, the consequences of choosing a less-preferred option simply because it had been preselected would be felt imminently. Despite removing these advantages, we find that defaults are selected more often than their alternatives.

In Studies 1 and 2, these effects increased the likelihood that participants made indulgent dietary choices, consistent with behavior observed in natural settings (e.g., restaurants) where defaults are typically tastier and less healthy than their alternatives. This was particularly true for participants induced with a background health goal: their choices shifted more when indulgent defaults were presented, compared with when no default was present. Study 3 extended this finding to a new choice domain, gift cards, in which participants chose between hedonic options like ice cream and more practical ones like home improvement stores. Our results indicate that replacing hedonic defaults with ones better for the consumer in the long run could be a powerful choice architecture intervention, one that improves consumer choice even in individuals with hedonic, taste-driven goals. Interventions that introduce healthier defaults, or that eliminate indulgent defaults altogether, may therefore have a large influence on choice. Indeed, previous research suggests that a default healthful menu increased the likelihood of a healthy choice by 48%, even though an indulgent menu was available as an alternative (Downs, Loewenstein, and Wisdom 2009), and that healthy defaults increased the number of healthy children's menu choices at Disney World (Peters et al. 2016). Although those studies confounded the default effect with effort effects (i.e., it was more work to switch to the alternative menu), our results demonstrate that defaults can influence choice even without that advantage. For example, a restaurant menu highlighting a healthier entrée or side dish preselected by the chef could increase the number of healthy choices made by customers. Similarly, diversified retirement packages could be highlighted as the default preselected option without requiring any additional effort or obscuring less beneficial options.

Additional research could shed light on how defaults influence choice in the field. One key limitation of our work is that we tested fairly simple choices, usually between just two items that were both presented prominently, whereas defaults can be employed in the field in much more complex contexts. For example, a consumer choosing among insurance or retirement plans may face a large number of alternatives, one of which is featured more prominently. Although we used incentive-compatible experiments, defaults could have different effects in real-world settings with greater incentives, higher involvement, competing demands on attention that could introduce distraction, and the presence of risk. Future work could test whether and when a heuristic is instead deployed in such contexts. Further, in our studies the chosen option was the only item participants received, whereas dietary defaults in restaurants are more likely to be accompaniments, such as fries with an entrée that can be replaced by a salad. Further research could investigate whether defaults in dietary choice are more or less powerful for such side items.

Participants may have believed that the default was chosen for them in some meaningful way or was a recommendation. Although the preferred option was not more likely to be the default option, participants in Studies 1 and 2 could have suspected that defaults were designated using the taste and health food ratings collected beforehand. This can be seen as a limitation of the study design. However, we note that this default-as-recommendation suspicion is likely to exist for many defaults in consumer settings, and its effects could contribute both to the positive shift in value we observe in this study and to the more general effects of defaults on consumer choice. To reduce this suspicion, in Studies 3 and 4 we collected preference ratings only after the choice tasks and provided no information about how or why the default was preselected.

In Study 2, we induced background goals to test whether defaults that conflict with consumer goals can influence choice. We did this through task descriptions that highlighted the benefits of eating what is either healthier or tastier, with the intention of making those benefits more accessible. This manipulation alters both the processes and outcomes of choice, such as by changing the amount of taste information that is considered during option comparison (e.g., Sullivan and Huettel 2021). However, we emphasize that inducing goals in this way is susceptible to demand effects (e.g., Khademi et al. 2021; Sturm and Antonakis 2015; Zizzo 2010), which could limit its real-world relevance.

Study 1, Study 2, and the second portion of Study 4 all use a repeated-trials design to assess the process underlying defaults. There has been concern regarding the validity of repeated-trials tasks (Li et al. 2022), specifically that behavior changes over the course of the task. To address this concern, we ensured that both behavioral and gaze results are robust across trials (e.g., Figure W2), which Li et al. (2022) note is a key step for establishing the validity of a repeated-trials task. The behavioral and modeling results of Studies 3 and 4 confirm our findings in one-off choices, using nationally representative large samples. Yet we cannot rule out the possibility that external validity is reduced in the repeated-trials tasks.

With the exception of one question in Study 4, all of our choice tasks were binary (2AFC). Although 2AFC tasks have high internal (Barakchian, Beharelle, and Hare 2021) and external (Kang, Lindell, and Prater 2007; Linley and Hughes 2013; Natter and Feurstein 2002; Quaife et al. 2018) validity, they differ from many real-world choices, so our results could have reduced external validity. To address this, we confirmed our results in a five-option choice in Study 4, which indicates that our findings are not exclusive to 2AFC lab studies or to repeated-trials studies and could generalize to a wider class of decisions in the field. However, our results may not extend beyond simple choices like the ones we test here; for example, we cannot claim that they extend to complex, high-involvement, or high-risk choices.

In this article, we claim that the default receives a positive bonus in value, but our model cannot mathematically differentiate between this and a discount for the alternative. Neuroimaging evidence provides some indication that a positive default shift is more likely; keeping defaults activates regions consistently shown to be activated by various types of rewards (Yu et al. 2010). However, our data can only indicate that the default has a
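The identifiability point can be written out. Using illustrative notation (not the authors'), let V_D and V_A be the default's and the alternative's values, d a drift scaling, and s the shift. Under a Shift Only form of the drift, a bonus s on the default and a discount s on the alternative yield the same drift rate:

```latex
v \;=\; d\,\bigl[(V_D + s) - V_A\bigr] \;=\; d\,\bigl[V_D - (V_A - s)\bigr]
```

Because, in this form, choices and response times depend on the option values only through this difference, the data cannot distinguish a default bonus from an alternative discount.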

The DDM is a popular class of decision models in cognitive psychology, but its use in marketing has been limited. This work demonstrates the DDM's ability to differentiate among competing theories for the process underlying a consumer choice nudge. Our work also highlights the importance of testing multiple alternative models. For example, recent work indicated that a starting bias exists in default choices (Zhao, Coady, and Bhatia 2022), but our work indicates that this is only the case when a shift is not

Looking beyond our behavioral results, our eye-tracking and modeling results have implications outside the context of food, gift card, and aesthetic choices. While default bias is a well-established behavioral phenomenon, there has been little theoretical consensus as to its mechanisms. Defaults are commonly thought to engage a simple choice bias or heuristic: designating an option as the default would act as a signal for the right choice and make decisions faster and easier (Kahneman, Knetsch, and Thaler 1991). Our data indicate that defaults in simple choices may instead increase the evidence required to make a choice and alter how different features of the decision shape the choice process. Specifically, defaults conferred additional positive value, in some cases reversing the preference between the default and the alternative. This suggests that the influence of nonbinding defaults could extend well beyond the domains tested here. For example, introducing a default option in financial investing may not only influence choice (Basu and Drew 2010; Choi et al. 2004; Cronqvist and Thaler 2004) but also alter how consumers weigh potential returns. In health care, a doctor indicating a default may differentially influence patients’ perceptions of a drug's efficacy. Although many studies on defaults in clinical settings focus on physician choice, that is, choices made by an expert on behalf of a patient (e.g., Ansher et al. 2014; Hart and Halpern 2014; Patel et al. 2014), our research indicates that defaults could offer benefits for patients themselves as well. For example, consumer defaults could make difficult choices seem more palatable, in a manner similar to another “golden halo”: the placebo response in pain treatment (Humphrey 2002; Miller and Rosenstein 2006).

Altogether, across four studies we find that participants exhibit a significant default bias, even when the contextual advantages that typically co-occur with defaults are removed. Using a combination of cognitive modeling and process tracing, we present evidence that this bias is primarily driven by the “golden halo,” an increase in the perceived value of the default when participants compare their options, combined with increased attention to the default. Further, we delineate the boundaries of default effects: when and how defaults can best be used to bias choice, and when they may be an ineffective intervention. This set of studies not only suggests a mechanism by which defaults gain an advantage during the decision process but also demonstrates the power of this intervention for improving choice.

Supplemental Material


Supplemental material, sj-pdf-1-mrj-10.1177_00222437241303738 for The Golden Halo of Defaults in Simple Choices by Nicolette J. Sullivan, Alexander Breslav, Samyukta S. Doré, Matthew D. Bachman and Scott A. Huettel in Journal of Marketing Research

Footnotes

Acknowledgments

The authors thank the UCLA Anderson School of Management Marketing Seminar group, University of Toronto Marketing Seminar group, Amitav Chakravarti, and Heather Barry Kappes for their feedback on this work.

Coeditor

Rebecca Hamilton

Associate Editor

Eric Johnson

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Duke University Institutional Funds.


References
