Abstract

Data reporting in the Australian education system produces deficit-based comparisons of academic achievement between Indigenous and non-Indigenous students. Since 2009, data from the National Assessment Program – Literacy and Numeracy (NAPLAN) have been used by the Australian government to allow it to report on ‘Closing the Gap’ policy. These data are used to justify government-funded initiatives designed to improve educational outcomes for Indigenous students. While the logic of this approach has political cachet, the ‘gaps’ have persisted. Instead, we propose using ‘within-cohort, peer matching’ quantitative methods to achieve such aspirational goals. Using publicly available NAPLAN data, we examined patterns of relative performance within the cohort of Indigenous students. We assessed trends in Indigenous students’ performance in NAPLAN relative to their Indigenous peers across grades (3, 5, 7, and 9), states and territories, remoteness categories, and calendar years from 2010 to 2019 inclusive. Key insights available through this approach include detailed patterns of higher and lower relative performance for Indigenous students within matched remoteness categories, across states and territories, and over time, laying the groundwork for further analyses of the local, systemic, and school-level factors associated with these differing outcomes. Our methods provide novel findings that justify changing the deficit assumption, supporting the use of within-cohort peer matching instead.

1 Introduction

Data-led pedagogical decision-making is a growing area of interest within education research and practice that aims to develop insights from quantitative education data to support evidence-based best practice (Schifter et al., 2014; Mandinach et al., 2015; Schildkamp, 2019). There are significant opportunities for a data-led approach to build greater understanding of patterns of educational performance among Indigenous students within a national education system and through comparative methods internationally. A data-led approach can be insightful in its own right, and guide more effective investigation of the systemic and school-level factors that facilitate support and improved outcomes for Indigenous learners. Historically in Australia, comparative statistical methods have been used to report ‘gaps’ in achievement between Indigenous and non-Indigenous cohorts across government services, including education. Such statistical comparisons underpin numerous Indigenous education policy debates – for example, an assumed notion of ‘Closing the Gap’ has provided an appealing narrative that acts as a symbolic gesture towards directing assistance to the Indigenous population of Australia (Department of the Prime Minister and Cabinet, 2018).

The ‘Closing the Gap’ approach for education relies on comparing test scores achieved by Indigenous and non-Indigenous children in the National Assessment Program – Literacy and Numeracy (NAPLAN), the annual national assessment for all students in Years 3, 5, 7, and 9. This is the only nationwide assessment that all Australian children undertake (https://www.nap.edu.au/). This paper diverges from the typical statistical reporting undertaken by the Australian federal, state, and territory governments by employing a within-cohort peer matching quantitative approach. We do this in order to examine data-driven patterns of educational achievement among Indigenous students as a defined cohort. By comparing Indigenous students’ test scores to the performance of their age-matched Indigenous peers around the country, we depart from a presumptive, deficit-oriented approach that focuses on the ‘gap’ between the performance of Indigenous and non-Indigenous students. We show that within-cohort peer matching can deliver an evidence base to guide an improved understanding of what is and is not working to enable educational success for Indigenous learners. Our study focuses on identifying disparities within the Indigenous student population, stratified by variables such as year, grade, and matched remoteness categories. This nuanced approach allows us to move beyond generalisations and offers a more targeted understanding of educational outcomes within various subgroups of Indigenous students. The findings do not describe one ‘gap’ between two cohorts, but a spectrum of relative performance across these variables.

As Anderson et al. (2020, p. xxix) observed, originally the concept of ‘deficit’ was a financial term. The meaning of this word is ‘the amount by which a sum of money, or the like, falls short of what is due or required’. The word has been borrowed into the field of Education Studies to describe education theories and practices that approach the learner as ‘falling short, having a deficiency’ rather than considering that it is the education system that might be failing the learner, and that the existing methods used to develop evidence-based policy are flawed at the design phase.

1.1 Rights-based perspectives in Indigenous education research

A rights-based perspective in Indigenous education research and policymaking foregrounds the rights of Indigenous Peoples in considering how data are collected, analysed, and utilised in research work and in the development and operationalisation of government policies. In 2009, Australia endorsed the United Nations Declaration on the Rights of Indigenous Peoples (UNDRIP). Professor James Anaya, the United Nations Special Rapporteur on the Rights of Indigenous Peoples, noted that despite some recent advances, Australia’s legal and policy landscape must be reformed. He recommended: The Commonwealth and state governments should review all legislation, policies, and programs that affect Aboriginal and Torres Strait Islanders, in light of the Declaration (Anaya, 2010).

In the spirit of his advice, we began collecting data that would enable us to provide a longitudinal analysis that would inform the review of the policies and programs used to govern the provision of education services to Indigenous Australians. The research design for this work is predicated on the view that data-driven research has historically not been mobilised with the rights of Indigenous Peoples in mind. The educative history of Indigenous Australia is one of trauma and violence with ‘native’ institutions established specifically to prepare Indigenous people for a lifetime of servitude through the teaching of rudimentary numeracy and literacy skills (Anderson et al., 2022). Therefore, it is through a rights-based approach that foregrounds and privileges an Indigenous standpoint, that we may begin to deconstruct a system designed to exclude and oppress by uncovering the underlying contextual factors that impact Indigenous students’ rights to education. The rights-based approach, together with a raft of Australia’s policy commitments to social and economic justice for all Australians, has created a very positive environment in which to consider alternative approaches to the use of large publicly available datasets such as NAPLAN.

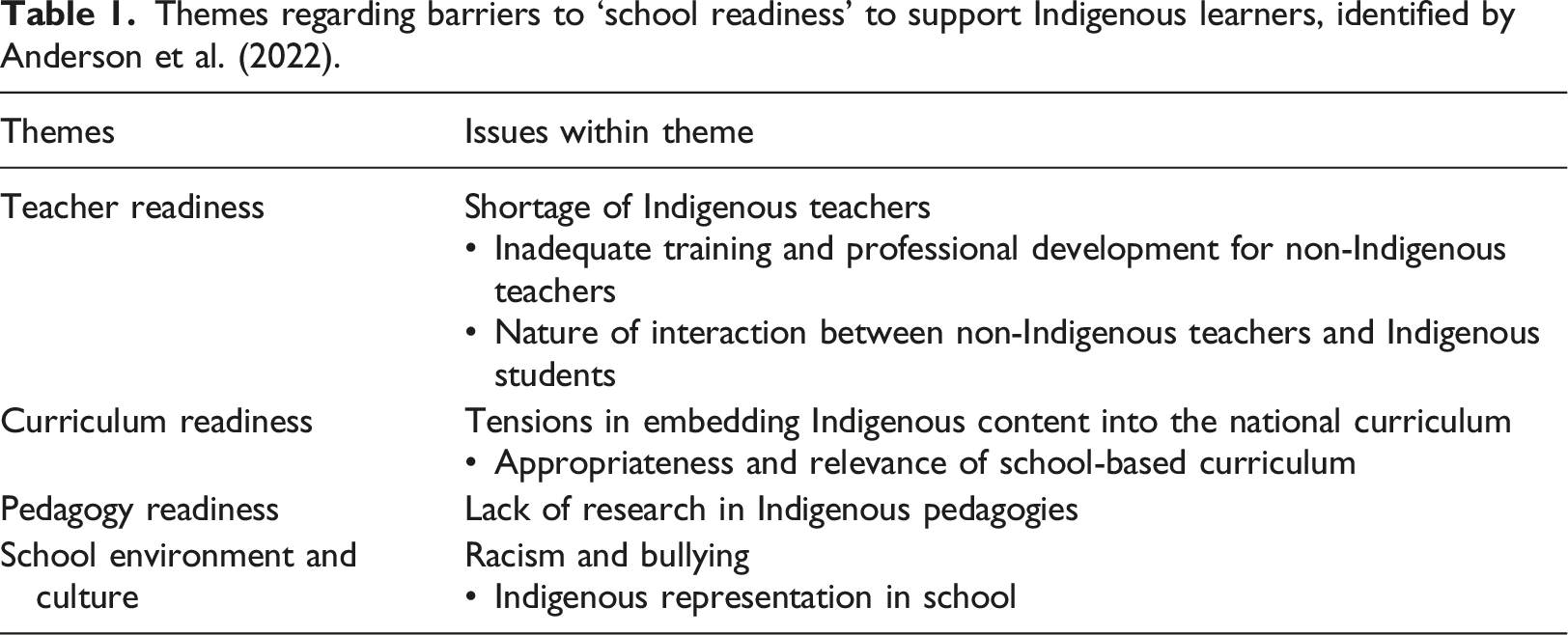

Indigenous education initiatives in Australia tend to have been framed primarily within one of the following two theoretical perspectives: social justice and self-determination. These frameworks rightly advocate for the inclusion of Indigenous peoples and voices in education initiatives that concern them. However, Anderson et al. (2022) found the Australian education system frequently and unjustly attributes the underperformance of Indigenous students to Indigenous culture and peoples themselves, yet schools remain systematically unprepared for fostering Indigenous academic achievement, due to a number of factors including issues around effective data collection and reporting. Given these considerations, it is both timely and essential to adopt a rights-based approach, compelling educational systems to properly implement social justice and self-determination initiatives, in order to sustain successful outcomes for Indigenous Australian students.

1.2 National testing outcomes over 10 years

Prior to 2008, each state and territory undertook its own student performance assessments, and the results were not comparable across jurisdictions. Before this time, no reliable dataset on Indigenous educational performance was collected nationally. Despite the criticisms of NAPLAN, the public availability of such a dataset is specific to this historical moment. Therefore, the 10-year dataset (2010–2019) assembled for this work on Indigenous students’ performance in NAPLAN presents a unique opportunity for statistical analyses to provide peer matched, data-driven insights into how Indigenous students’ educational performance varies according to different spatial and demographic factors over time in Australia.

Themes regarding barriers to ‘school readiness’ to support Indigenous learners, identified by Anderson et al. (2022).

As a result of their study, Anderson et al. (2022) concluded that many Australian schools are not ready to educate Indigenous children and young adults, and that the insistence on comparing NAPLAN test scores between Indigenous and non-Indigenous children fails to account for the evident ‘gap’ in preparedness by the institutions that are testing these students.

It is acknowledged that NAPLAN is a flawed measure of educational attainment and performance for Indigenous students, being linguistically and culturally unsuitable, particularly in remote communities (Wigglesworth et al., 2011). Quantitative metrics of education outcomes tend to be shaped by the context of structural racism in policy goals, and it is important to consider quantitative success metrics critically in light of this context (Street et al., 2022). However, notwithstanding these limitations, NAPLAN data are the best available data source with temporal and spatial coverage to investigate nation-wide patterns of educational performance among Indigenous students. We also acknowledge that the NAPLAN framework is inherently city-centric and Eurocentric, falling short of equitable and culturally appropriate assessment of educational performance across diverse Indigenous communities. We aim to account for these limitations in our analyses and planned future research, keeping in mind that NAPLAN scores cannot fully capture the complexities affecting educational outcomes in remote Indigenous communities.

We designed the present research study to produce data-driven insights into high and low performance within the cohort of Indigenous students that might guide subsequent comparative analyses, using qualitative and mixed methods to investigate the school-level and systemic factors that are associated with these differences in performance. We argue that these quantitative insights offer a more accurate basis than the conventional approach for improving evidence-based policymaking and resource allocation for Indigenous education. Our data-driven analyses form a foundational evidence base to support hypothesis generation and priority setting for further research to guide improved understanding and action in this space. Throughout the paper, we reflect on how this peer matching approach to data analysis offers more useful and detailed insights than a traditional deficit-oriented perspective, highlighting opportunities for future research and policy development based on patterns of relative performance among Indigenous students as a cohort in their own right. We recognise that the population of Indigenous students encompasses a diverse array of cultural and linguistic backgrounds, and this study aims to be sensitive to this diversity while focussing on general data-driven trends and patterns between different remoteness categories, grades, and over time.

Section 2 outlines the methodology for this study including research approach and design (2.1), data collection and processing (2.2), and statistical methods including exploratory data analysis and principal analyses (2.3). Section 3 presents results of exploratory analyses (3.1), and principal analyses including more detailed findings by remoteness areas and grades (3.2). Section 4 discusses key findings, linking to broader literature and implications of peer matching analysis, limitations, and future directions.

2 Methods

2.1 Research approach and methodological design

2.1.1 Research approach

Partnership with Aboriginal and Torres Strait Islander peoples: Our commitment

This research acknowledges that we live and work on the countries of Indigenous Australian traditional owners. With respect to our work in partnership with Aboriginal and Torres Strait Islander peoples, we recognise the data sovereignty rights of Indigenous Peoples and affirm our commitment to a partnership approach in this research. This approach works within the National Health and Medical Research Council (NHMRC) and Australian Institute of Aboriginal and Torres Strait Islander Studies (AIATSIS) guidelines on the protocols for the conduct of research and evaluation with Aboriginal people as key stakeholders. We follow these guidelines to ensure that our conduct is at all times culturally sensitive and guided by Aboriginal people, that there are accessible consultation opportunities, and that Aboriginal standpoints on all aspects of this work are represented accurately in our reporting.

Key research question

In this article, which analyses peer matched educational performance of Indigenous students in Australia, the key question guiding the research is: What emergent trends are revealed using a ‘within-cohort’ peer matching approach for statistical modelling of relative achievement of Aboriginal and Torres Strait Islander students in the 10-year dataset of NAPLAN test results?

2.1.2 Methodological design

Using a critical interpretative and empirical approach, the methodological design of the within-cohort peer matching analyses had two key objectives. The first was to assess the trends in the NAPLAN performance of Indigenous students across remoteness categories, by grade, and over time. The second objective was to examine the corresponding levels of consequence associated with the number of students affected by the expected relative performance level in a given area.

2.2 Data collection and processing

Prior to 2009, the practice of reporting on Indigenous-specific education data in Australia had been inconsistent, with different reporting parameters adopted by individual states and territories, together with individual state and territory-based examination of literacy and numeracy achievement. These data appeared with differing formats and reporting standards over time and across different sources. From 2009 to 2019, we collected the NAPLAN Indigenous-specific datasets that were reported annually in the Report on Government Services (Australian Productivity Commission, 2021).

In 2020, we began the process of collating the annual datasets into a unified dataset. Despite the data being publicly available annually, there is no central repository that collates NAPLAN data over multiple years into a coherent standardised dataset. Members of our research team collated and harmonised a 10-year dataset on NAPLAN scores according to different NAPLAN domains (Reading, Writing, and Numeracy), Indigenous status, grade, state or territory, remoteness category, and calendar year from 2010 to 2019 inclusive. Data on population estimates for numbers of Aboriginal or Torres Strait Islander students at the primary or secondary level in each remoteness area were also included in our dataset, based on 2016 Census data (Australian Curriculum Assessment and Reporting Authority, 2019). This dataset has been assembled from multiple sources including Reports on Government Services (RoGS) documents (Australian Productivity Commission, 2021), NAPLAN reporting (Australian Curriculum Assessment and Reporting Authority, 2019), and the Australian Bureau of Statistics (Australian Bureau of Statistics [ABS], 2016). Data cleaning and pre-processing were conducted using the tidyverse package version 1.3.0 (Wickham et al., 2019), within the R statistical programming language version 4.0.4 (R Core Team, 2013) to collate these multi-source data into a common format. Key explanatory variables of interest in these data included grade, state/territory, remoteness category, and calendar year. Response variables were peer matched relative performance scores, described in Section 2.3.1. These variables were chosen to address key objectives for investigating trends in Indigenous students’ NAPLAN performance across remoteness categories, by grade, and over time.

2.2.1 Remoteness categories: Dealing with changes in classification

Two different remoteness classifications were used in RoGS documents for reporting NAPLAN scores according to remoteness between 2009 and 2019. Until 2016, remoteness categories were defined in RoGS reports using the Standing Council on School Education and Early Childhood (SCSEEC) remoteness indicator, which includes the categories ‘Very Remote’, ‘Remote’, ‘Provincial’, and ‘Metropolitan’ (Standing Council on School Education & Early Childhood, 2012). From 2017 onwards, remoteness categories in RoGS were defined according to the Australian Statistical Geography Standard (ASGS) Remoteness Structure (Australian Bureau of Statistics, 2011), which includes the categories ‘Very Remote’, ‘Remote’, ‘Outer Regional’, ‘Inner Regional’, and ‘Major Cities’.

Due to the lack of a standardised document guiding the correspondence between these two remoteness standards, we made decisions about their concordance on the basis of direct consultation with the ABS Geography team. The categories ‘Provincial’ (SCSEEC), ‘Inner Regional’, and ‘Outer Regional’ (ASGS) were re-labelled as one combined category which we termed ‘Regional’. The category ‘Metropolitan’ (SCSEEC) was re-labelled to align with ‘Major Cities’ (ASGS). The labels ‘Remote’ and ‘Very Remote’ were preserved from both standards. This concordance approach was reasonable given that the spatial boundaries of remoteness areas are constantly evolving, and we were interested in assessing the high-level trends associated with these four remoteness categories that demonstrate stable and substantial differences over time.
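The concordance described above can be sketched as a simple lookup. This is an illustrative Python sketch only (the study itself used R); the dictionary layout and the function names `standard_for_year` and `harmonise` are hypothetical and are not taken from the authors' code.

```python
# Concordance between the two remoteness standards, as described in the text.
# Names and structure are illustrative assumptions, not the authors' code.
CONCORDANCE = {
    ("SCSEEC", "Metropolitan"): "Major Cities",
    ("SCSEEC", "Provincial"): "Regional",
    ("SCSEEC", "Remote"): "Remote",
    ("SCSEEC", "Very Remote"): "Very Remote",
    ("ASGS", "Major Cities"): "Major Cities",
    ("ASGS", "Inner Regional"): "Regional",
    ("ASGS", "Outer Regional"): "Regional",
    ("ASGS", "Remote"): "Remote",
    ("ASGS", "Very Remote"): "Very Remote",
}


def standard_for_year(year):
    """RoGS used the SCSEEC indicator until 2016 and the ASGS from 2017 onwards."""
    return "SCSEEC" if year <= 2016 else "ASGS"


def harmonise(year, label):
    """Map a year-specific remoteness label to one of the four unified categories."""
    return CONCORDANCE[(standard_for_year(year), label)]
```

A lookup keyed on both the standard and the label keeps the two classification systems explicit, so an unexpected label for a given year raises an error rather than being silently recoded.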

In order to assess the effect of merging two ASGS categories into the ‘Regional’ category between 2017 and 2019 we conducted a sensitivity analysis, for which the details are reported in Section 3 of the Supplemental Materials. On the basis of this sensitivity analysis, we merged ‘Inner Regional’ and ‘Outer Regional’ into one new category labelled ‘Regional’ for our main analyses, in order to implement a consistent set of remoteness category labels across the full 10 years of the dataset.

It is important to note that this study employs ABS remoteness categories based on road distances from populated localities to various types of service centres. These categories are designed to offer a standardised, nationally consistent measure of geographic remoteness, but they do not account for cultural and linguistic diversity, or other demographic factors.

2.2.2 Population estimates: Addressing a lack of detailed estimates of student populations disaggregated by explanatory variables

In addition to modelling the relative performance of Indigenous students in different remoteness categories across states/territories, we also designed the analysis to investigate the corresponding ‘consequence’ level in terms of how many students were affected by the relative performance level in each area. Unfortunately, data were not publicly available from the ABS or other government sources to provide student population estimates according to all of our explanatory variables including grade, Indigenous status, state/territory, remoteness category, and calendar year. From ABS Census data, the best available student population estimates were presented according to all of these variables except for grade, and instead were reported according to the number of students enrolled in primary or secondary school. To assess the relative size of the population of Indigenous students corresponding to expected relative performance for a given area, we used these primary/secondary student population estimates as the best available proxy for the corresponding number of students affected. These population estimates were used in generating Figures 2 and 7 in Section 3.2 of the Results, and Supplemental Figures S3, S4, and S9–S11.

2.3 Statistical analyses

In order to assess how Indigenous students’ NAPLAN performance varied relative to their peers across the national cohort, we rescaled scores for each area to be represented as peer matched relative performance scores. In the initial exploratory analysis phase, we obtained univariate and multivariate statistical summaries of the data (mean, median, standard deviation, and pairwise correlations) and inspected the empirical distributions (histograms, boxplots, and barplots) of each variable. We also applied nonparametric regression trees to evaluate the relationships between the explanatory variables and the responses, and to identify variables with substantial explanatory power in this dataset (Breiman et al., 1984). The regression tree approach is described in Section 2.3.2. In the subsequent primary analysis phase, we implemented multiple linear regression models to investigate differences in expected performance according to the spatial, temporal, and sociodemographic factors described above. These models are detailed in Section 2.3.3.

2.3.1 Peer matched relative performance scores

NAPLAN scores in each domain were rescaled so that in each geographical area, the relative performance score represented the difference in performance for students in that area compared to the national average for all Indigenous students in the same grade in that calendar year. Relative performance scores range between −281.5 and 61.3, with a score of 0 indicating no difference from the national average for that grade and calendar year. This range is negatively skewed because Remote and Very Remote areas tended to have both poorer relative performance and smaller populations.

In addition to enabling comparison of Indigenous students to their peers in the same grade, reporting the outcome data in this way also enabled clearer interpretation of variation in performance across different remoteness areas, and ensured that relative performance was comparable on a similar scale across grades (as raw NAPLAN scores increase from grade 3 to grade 9).
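The rescaling described in this section can be illustrated with a minimal Python sketch (the study itself used R). The function name, the key layout `(state, remoteness, grade, year)`, and all numeric values below are hypothetical and chosen only to show the arithmetic. The sketch assumes the national Indigenous mean for each grade and calendar year is supplied as an input.

```python
def relative_scores(area_means, national_means):
    """Subtract the national Indigenous mean for the same grade and year.

    area_means:     {(state, remoteness, grade, year): mean NAPLAN score}
    national_means: {(grade, year): national mean for Indigenous students}
    """
    return {
        key: round(score - national_means[(key[2], key[3])], 1)
        for key, score in area_means.items()
    }


# Hypothetical values for one domain, one grade, and one calendar year.
area_means = {
    ("NSW", "Major Cities", 3, 2015): 380.0,
    ("NT", "Very Remote", 3, 2015): 210.5,
}
national_means = {(3, 2015): 340.0}

for key, value in relative_scores(area_means, national_means).items():
    print(key, value)
```

A score of 0 corresponds to an area performing exactly at the national Indigenous average for that grade and year; negative values indicate performance below that average.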

2.3.2 Regression trees

Regression tree models were fitted for each NAPLAN domain to identify how Indigenous students’ relative performance scores were divided according to the available explanatory variables. Regression tree analysis is a nonparametric decision tree method that segments data into subgroups with similar responses, based on a set of splits of the explanatory variables (Breiman et al., 1984). For these models, the response variable was peer matched relative performance scores in the corresponding NAPLAN domain (see Section 2.3.1), and the candidate explanatory variables were state/territory, remoteness area, grade, and calendar year. This method also identifies the relative importance of each of the explanatory variables in segmenting the responses.

We applied regression trees using the R package ‘rpart’, version 4.1–15 (Therneau & Atkinson, 2019), with the ANOVA option (as appropriate for continuous data), a minimum of 10 observations required in each node in order for a split to be attempted, and a complexity parameter of 0.01 indicating that a new split must improve the model fit by a factor of 0.01 in order to be included in the final model results. Stability of regression tree models was assessed using bootstrapping stability analysis with the R package ‘stablelearner’, version 0.1–4 (Philipp et al., 2016).
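To make the roles of the minimum node size and the complexity parameter concrete, the following Python sketch shows how a single ANOVA-style split on one categorical variable could be evaluated. It is a simplified illustration and not a re-implementation of ‘rpart’: it considers one variable at one node, ignores other ‘rpart’ controls such as the minimum terminal node size, and uses hypothetical function names and row keys.

```python
from statistics import mean


def sse(values):
    """Sum of squared deviations from the mean (the ANOVA impurity)."""
    m = mean(values)
    return sum((v - m) ** 2 for v in values)


def best_categorical_split(rows, var, response, root_sse, minsplit=10, cp=0.01):
    """Return (left_levels, improvement) for the best binary split, or None.

    improvement is the reduction in SSE expressed as a fraction of root_sse;
    splits that improve the fit by less than cp are rejected.
    """
    if len(rows) < minsplit or root_sse == 0:
        return None
    # Group responses by level, then order levels by their mean response.
    groups = {}
    for row in rows:
        groups.setdefault(row[var], []).append(row[response])
    levels = sorted(groups, key=lambda lv: mean(groups[lv]))
    parent = sse([row[response] for row in rows])
    best = None
    # For a continuous response, scanning cut points along this ordering
    # is sufficient to find the best binary partition of the levels.
    for cut in range(1, len(levels)):
        left = [y for lv in levels[:cut] for y in groups[lv]]
        right = [y for lv in levels[cut:] for y in groups[lv]]
        improvement = (parent - sse(left) - sse(right)) / root_sse
        if improvement >= cp and (best is None or improvement > best[1]):
            best = (set(levels[:cut]), improvement)
    return best
```

Applied recursively across all candidate variables, a procedure of this kind produces the terminal nodes (clusters) of a regression tree.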

2.3.3 Multiple linear regression models

We implemented a multiple linear regression model for data in each NAPLAN domain (Writing, Reading, and Numeracy), using the base package stats in R (R Core Team, 2013). The response variable for each model was peer matched relative performance scores in the corresponding NAPLAN domain (see Section 2.3.1). Each model included direct effects and two-way interactions for the following explanatory variables: calendar year, state/territory, remoteness category, and grade. Calendar year was treated as a continuous variable, to investigate the presence of any trends over time. State/territory, remoteness category, and grade were all input as categorical variables.

In linear regression, each categorical variable requires a reference (baseline) category. The following reference categories were selected in our regression models. For state/territory, New South Wales (NSW) was chosen as the reference category as it has the largest population of Indigenous primary and secondary school students. For grade, ‘Grade 3’ was chosen as the reference category as it has the largest number of Indigenous students enrolled relative to other grades in which NAPLAN testing is conducted. For remoteness areas, ‘Regional’ was chosen as the reference category as it was the category represented across the largest number of states/territories, being present in every state/territory except ACT.
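One way to write down the model structure described above is given below. This is a sketch under stated assumptions rather than the authors' published specification: the symbols are introduced here for illustration, independent normal errors are the standard assumption for ordinary least squares, and whether calendar year was centred is not stated in the text.

```latex
% y_{srgt}: relative performance score for state/territory s, remoteness r,
%           grade g, and calendar year t (symbols are illustrative)
\begin{aligned}
y_{srgt} ={}& \mu + \alpha_s + \rho_r + \gamma_g + \beta\, t \\
 &+ (\alpha\rho)_{sr} + (\alpha\gamma)_{sg} + (\rho\gamma)_{rg} \\
 &+ (\alpha\beta)_s\, t + (\rho\beta)_r\, t + (\gamma\beta)_g\, t
  + \varepsilon_{srgt},
\qquad \varepsilon_{srgt} \sim \mathcal{N}(0, \sigma^2)
\end{aligned}
% Reference-level constraints: every effect involving NSW, Regional,
% or Grade 3 is set to zero, e.g.
% \alpha_{\mathrm{NSW}} = \rho_{\mathrm{Regional}} = \gamma_{3} = 0.
```

Under this coding, the intercept and the year slope describe Grade 3 students in Regional NSW, and every other coefficient is a deviation from that baseline.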

We assessed statistical assumptions for multiple linear regression using a variety of model diagnostics with the performance package in R, version 0.8.0 (Lüdecke et al., 2021). Goodness of fit for regression models was assessed using R² values, indicating the proportion of variation in the response variable that is explained by the explanatory variables in the model.
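For readers less familiar with this goodness-of-fit measure, the following Python sketch shows the standard definition as one minus the ratio of residual to total sum of squares. The function name is illustrative, and the sketch assumes the observed values are not all identical.

```python
def r_squared(observed, fitted):
    """Proportion of variation in `observed` explained by `fitted`."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - f) ** 2 for o, f in zip(observed, fitted))  # residual SS
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)           # total SS
    return 1 - ss_res / ss_tot
```

A value of 1 indicates that the fitted values reproduce the observations exactly, while a value of 0 indicates that the model does no better than predicting the overall mean.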

Results of the multiple linear regression models were presented and interpreted in terms of expected marginal means, which are the estimated average value of the response variable for a chosen explanatory variable (or set of variables), holding other variables constant at their average value. Expected marginal means are arguably more interpretable than regression parameter estimates, since the magnitude and direction of the latter are dependent on direct and interaction terms across multiple categorical explanatory variables. Expected marginal means were calculated using the effects package, version 4.2–0 (Fox & Weisberg, 2018a; 2018b), and the ggeffects package, version 1.0–2 in R (Lüdecke, 2018). Plots were generated using the package ggplot2, version 3.3.5 (Wickham, 2016). Throughout this paper, we use the term ‘expected relative performance’ to refer to the expected marginal mean for relative performance scores from the model.

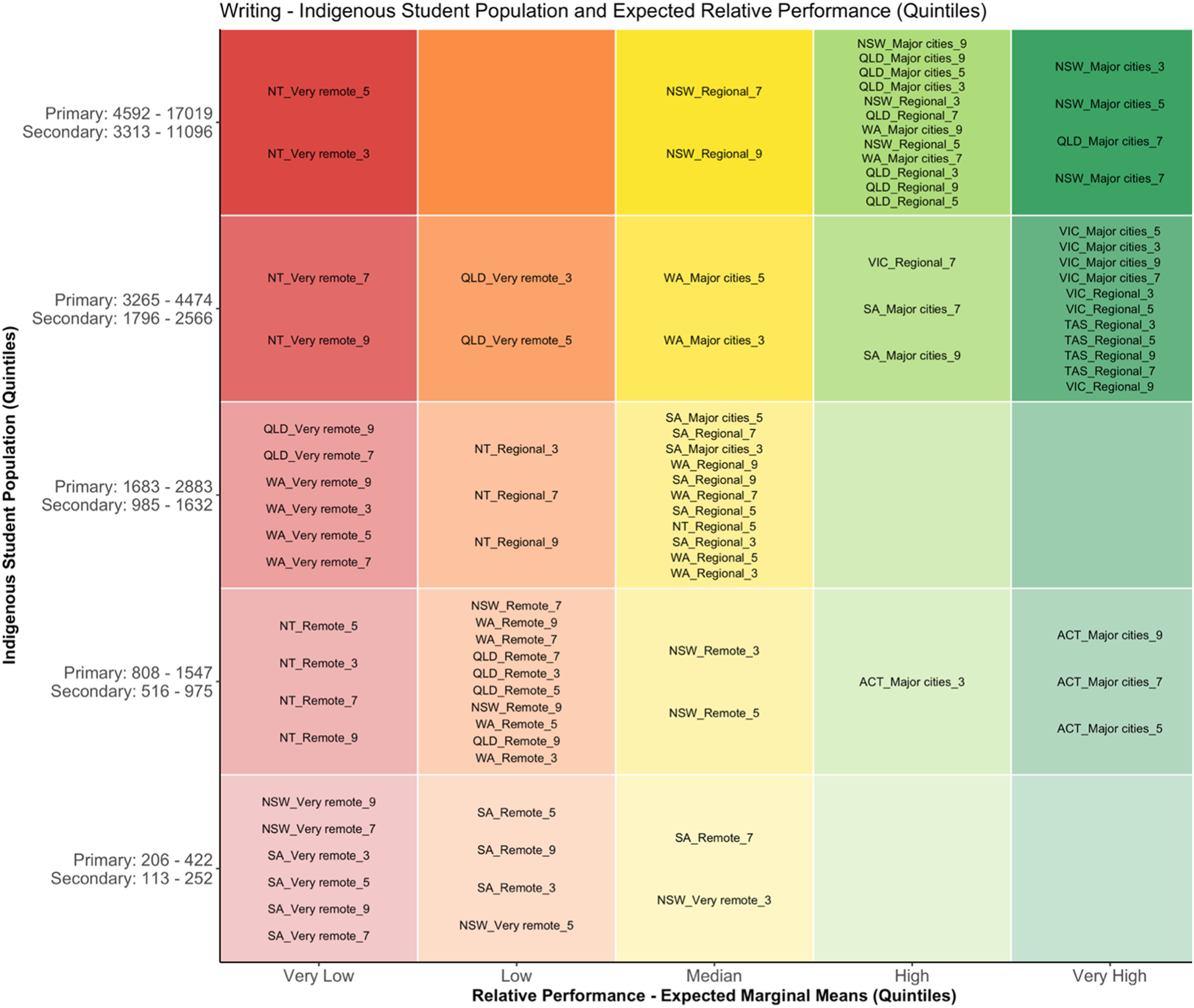

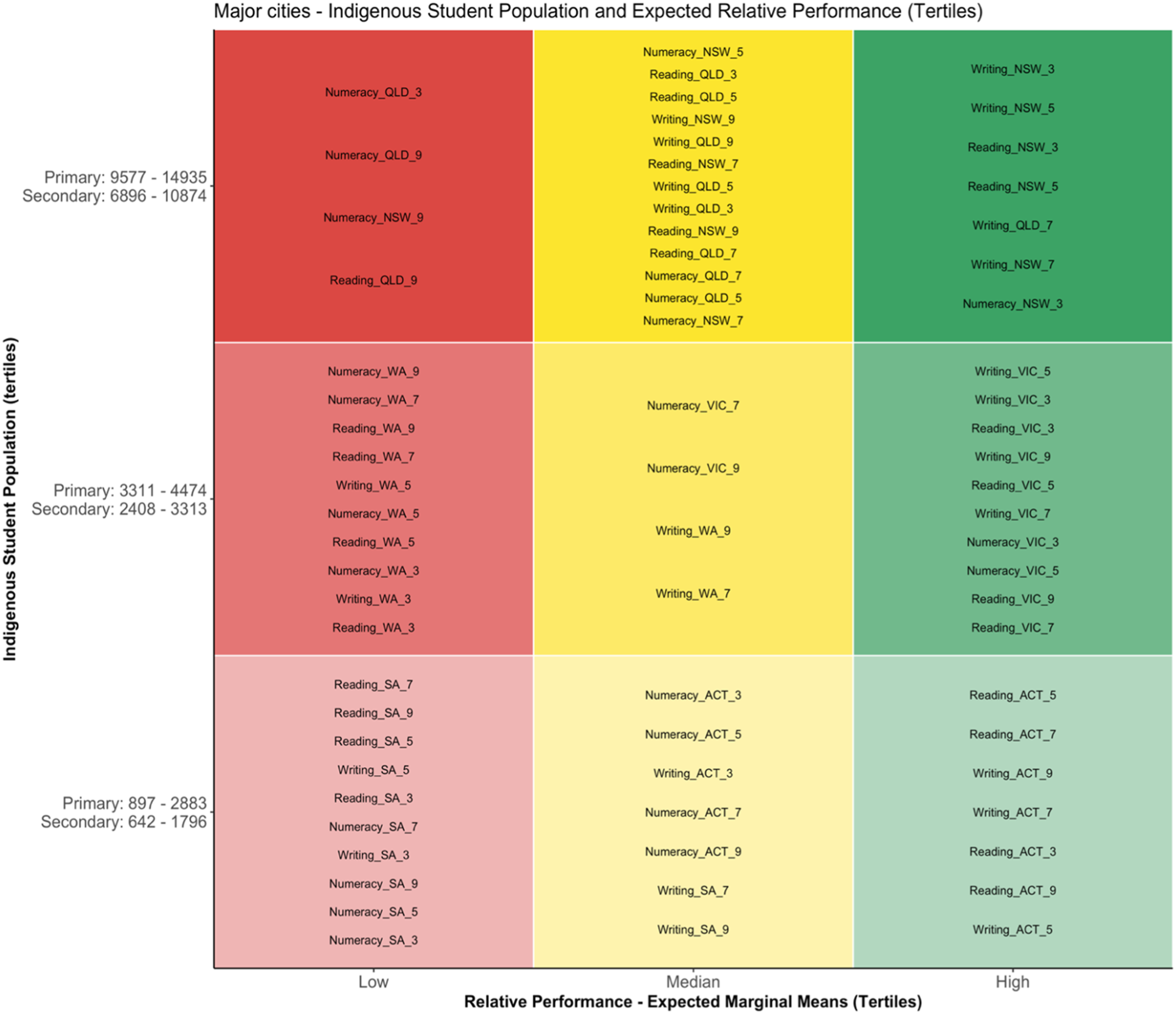

2.3.4 Visualising peer matched performance: Matrix plots

Matrix plots were chosen as a way of summarising and visualising the primary findings of this paper, based on the regression analyses (Figure 2 and Supplemental Figures S3 and S4). As a guide to interpreting these plots, areas appearing in the upper left region represent low expected performance scores affecting a large population of Indigenous students, whereas areas appearing in the upper right region represent high expected performance scores affecting a large population of Indigenous students, and so on. Population quintiles were calculated separately for primary and secondary student populations and indicate how many Indigenous students were enrolled in that state/territory and remoteness area, relative to all other remoteness areas across the country according to 2016 Census data. We used a similar approach to generate Figure 7 and Supplemental Figures S9–S11, displaying expected performance and population within remoteness categories, but these figures use tertile bands (0%–33%, 33%–66%, and 66%–100%) instead of quintiles given the smaller number of datapoints in each of these subsets.
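The placement of each area in a matrix plot can be illustrated with a short Python sketch. It assigns rank-based bands, which is one of several ways to compute quantiles and may differ from the method the authors used in R. The function names and the dictionary keys `label`, `expected`, and `population` are hypothetical. Ties are broken by input order.

```python
def quantile_band(values, n_bands=5):
    """Assign each value a band from 1 (lowest) to n_bands (highest) by rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    bands = [0] * len(values)
    for rank, i in enumerate(order):
        bands[i] = rank * n_bands // len(values) + 1
    return bands


def matrix_cells(areas, n_bands=5):
    """Return {label: (performance_band, population_band)} for each area."""
    perf = quantile_band([a["expected"] for a in areas], n_bands)
    pop = quantile_band([a["population"] for a in areas], n_bands)
    return {a["label"]: (p, q) for a, p, q in zip(areas, perf, pop)}
```

Calling `matrix_cells` with `n_bands=3` gives the tertile version used within remoteness categories. To mirror the separate treatment of primary and secondary populations, the function would be applied to each school level in turn.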

3 Results

Given the large body of outputs generated from our analyses, the results presented in this section alternate between NAPLAN Writing, Reading, and Numeracy domains. Full results for all three NAPLAN domains are included in the Supplemental Materials.

3.1 Exploratory data analysis

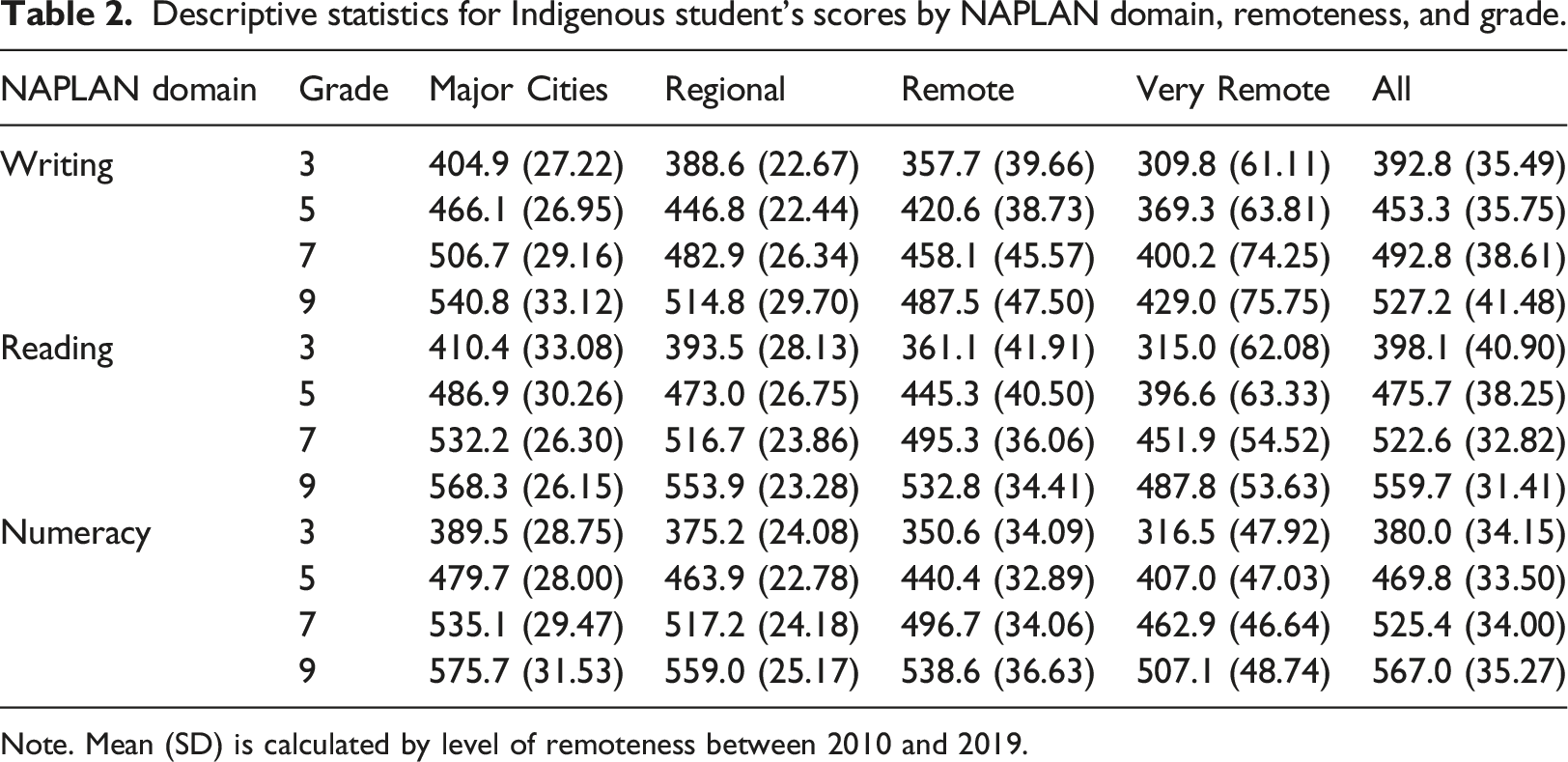

Descriptive statistics for Indigenous students’ scores by NAPLAN domain, remoteness, and grade.

Note. Mean (SD) is calculated by level of remoteness between 2010 and 2019.

Second, with respect to remoteness areas, the overall trend is that performance decreases with increasing remoteness. However, variability in NAPLAN performance is also higher in more remote areas. In particular, the standard deviation for NAPLAN scores (calculated across remoteness areas nationally) shows larger variability in performance in Remote and Very Remote areas. This indicates that there are comparatively highly successful schools in Remote and Very Remote areas across all grades.

Third, in addition to the wide variation in numbers of Indigenous children across remoteness areas, there is also very wide variation across states and territories, with a substantial majority of Indigenous students residing in NSW (55,608) and QLD (48,675), moderate populations in WA (18,033), NT (12,493), and VIC (11,736), and smaller populations in SA (8,553), TAS (6,178), and ACT (1,539) according to 2016 Census estimates. Supplemental Table S1 provides the estimated number of Indigenous students in each remoteness category by state/territory from 2016 Census data.

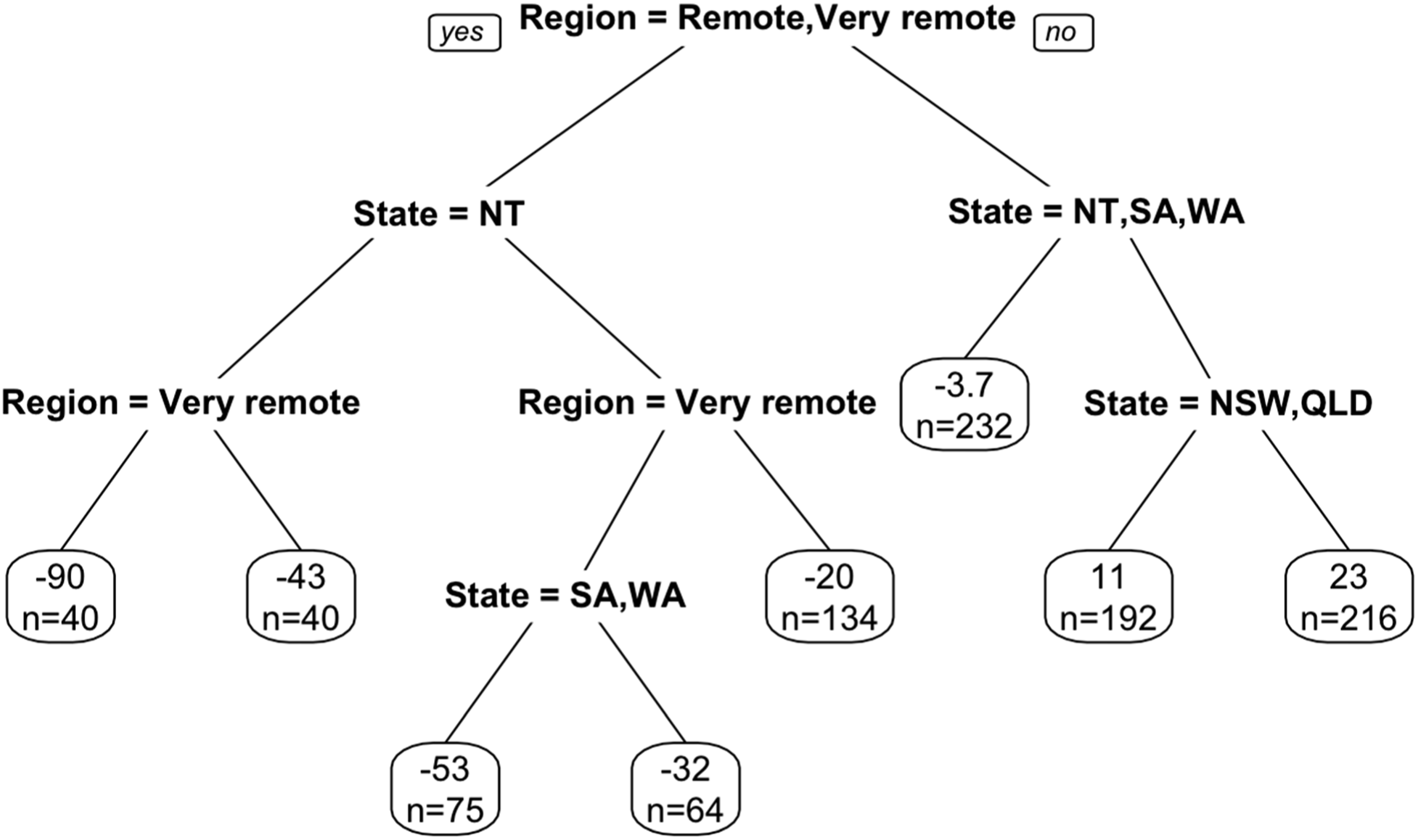

Figure 1 and Supplemental Figures S1–S2 display the results from the regression tree models for NAPLAN Numeracy, Writing, and Reading, respectively. Among the four candidate explanatory variables (state/territory, remoteness area, grade, and calendar year), remoteness area and state/territory were identified as the most important in segmenting the NAPLAN relative performance scores into homogeneous clusters (Figure 1). Eight such clusters were found for Numeracy (represented by the final nodes in Figure 1), with 7 and 8 clusters found for Writing and Reading, respectively (Supplemental Figures S1–S2). For all three NAPLAN domains, remoteness was the first, and hence most influential, variable that divided the relative performance scores. The second most influential variable was state/territory, with Northern Territory, South Australia, and Western Australia performing poorly compared to other states and territories across all remoteness categories. For Reading and Numeracy, a further split within Regional areas and Major Cities indicated that New South Wales and Queensland had lower relative performance in these remoteness areas compared to Victoria, Tasmania, and Australian Capital Territory. After re-scaling scores by peer matched performance within grades, no substantive differentiation in performance was detected across grades relative to the more dominant influences of remoteness area and state/territory. Similarly, differences over time by calendar year were not associated with substantial differentiation relative to the influence of state/territory and remoteness area.

NAPLAN Numeracy − Regression Tree. Left branches = “yes” to variable levels identified at junction; Right branches = “no” to variable levels identified at junction (all other categories for that variable). In each box, n indicates the number of corresponding areas (state/territory, remoteness and grade) in calendar years.

Bootstrapping stability analyses indicated high stability in performance for all three regression tree models. Using 100 bootstrap repetitions holding out 25% of the data, the median concordance correlation coefficients assessed using ‘stablelearner’ were 0.990 for Writing, 0.998 for Reading, and 0.996 for Numeracy.
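For reference, the concordance correlation coefficient between two sets of predictions can be computed as in the Python sketch below, which follows the standard definition due to Lin. Whether ‘stablelearner’ uses exactly this estimator internally is an assumption here, and the function name is illustrative.

```python
def concordance_correlation(x, y):
    """Lin's concordance correlation coefficient between two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    var_x = sum((a - mean_x) ** 2 for a in x) / n
    var_y = sum((b - mean_y) ** 2 for b in y) / n
    cov_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / n
    # Penalises both weak correlation and systematic shifts between x and y.
    return 2 * cov_xy / (var_x + var_y + (mean_x - mean_y) ** 2)
```

Unlike the Pearson correlation, this coefficient equals 1 only when the two sets of predictions agree exactly, so values close to 1 indicate that trees fitted to different resamples give nearly identical predictions.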

3.2 Regression analyses

Multiple linear regression models demonstrated substantial patterns of differing performance in all three NAPLAN domains across states, grades, and remoteness areas between 2010 and 2019. All models had excellent goodness of fit, with R² values of 0.9578 (Writing), 0.9563 (Reading), and 0.9336 (Numeracy). Diagnostic metrics supporting the validity of the statistical assumptions are presented in Section 4 of the Supplemental Materials, Supplemental Figures S12–S14.
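As a reminder of what the goodness-of-fit values express, the sketch below computes the ordinary (unadjusted) coefficient of determination from observed and fitted values. The function name is hypothetical, and it is an assumption that the reported values are unadjusted, since this is not stated here.

```python
def r_squared(observed, fitted):
    """Coefficient of determination: 1 - residual SS / total SS."""
    if not observed or len(observed) != len(fitted):
        raise ValueError("observed and fitted must be non-empty and of equal length")
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((y - f) ** 2 for y, f in zip(observed, fitted))
    ss_tot = sum((y - mean_obs) ** 2 for y in observed)
    return 1.0 - ss_res / ss_tot
```

Under this definition, a value of 0.9578 means that the fitted model accounts for roughly 96% of the variation in the relative performance scores for that domain.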

Figure 2 and Supplemental Figures S3–S4 present at-a-glance summaries of the results from multiple linear regression models for each NAPLAN domain. These matrix plots indicate expected patterns of relative performance for Indigenous student cohorts by NAPLAN domain, grade and remoteness area between 2010 and 2019, alongside associated population size estimates. Expected marginal means for relative performance scores in each NAPLAN domain by state/territory, grade and remoteness are presented in Supplemental Tables S2–S4.

Figure 2. NAPLAN Writing matrix plot.

Figure 2 is a matrix plot that shows the comparative performance of Indigenous students in the NAPLAN Writing assessment with associated population estimates, revealing patterns of similarity and difference across peer groups with shared characteristics. The horizontal axis indicates quintiles of expected marginal means for relative performance, with red indicating lower relative performance and green indicating higher relative performance. The vertical axis indicates quintiles of Indigenous Student Population, with higher colour saturation indicating larger populations. Empty boxes indicate that no area was represented in that combination of expected performance quintile and population quintile. Point labels follow the format ‘State_Remoteness_Grade’ – for example, ‘NSW_Major Cities_3’ represents outcomes for grade 3 in Major Cities of NSW.
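The grid described above can be sketched as follows: each area is assigned a performance band and a population band, and labels are grouped by the resulting cell. This is a minimal illustration that assumes simple rank-based bands; the quantile method actually used for the figures is not stated here, and the function names and example values are hypothetical.

```python
def quantile_bands(values, n_bands=5):
    """Assign each value a band from 1 (lowest) to n_bands (highest) by rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    bands = [0] * len(values)
    for rank, idx in enumerate(order):
        bands[idx] = rank * n_bands // len(values) + 1
    return bands

def matrix_cells(areas, n_bands=5):
    """Group area labels by (performance band, population band).

    `areas` is a list of (label, expected_performance, population) tuples.
    Cells with no areas are simply absent from the returned dict.
    """
    perf = quantile_bands([a[1] for a in areas], n_bands)
    pop = quantile_bands([a[2] for a in areas], n_bands)
    cells = {}
    for (label, _, _), p, q in zip(areas, perf, pop):
        cells.setdefault((p, q), []).append(label)
    return cells

# Hypothetical areas and values, not results from the study.
toy_areas = [
    ("X_Very Remote_9", -1.0, 100),
    ("X_Remote_7", -0.5, 500),
    ("Y_Regional_5", 0.0, 300),
    ("Y_Major Cities_3", 0.5, 900),
    ("Z_Major Cities_5", 1.0, 700),
]

print(matrix_cells(toy_areas))
```

Calling the same functions with `n_bands=3` gives tertile bands rather than quintiles.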

Figure 2 reveals that, for instance, schools in Major Cities in NSW (grades 3–7) and QLD (grade 7) share the common characteristics of having large numbers of Indigenous students, and recording very high Writing scores relative to their peers in the same grades across Australia (top right box). Similarly, schools in Very Remote areas of NSW (grades 7–9) and SA (grades 3–9) share the common characteristics of having small numbers of Indigenous students, and having very low Writing scores relative to their peers (bottom left box). As expected, the general pattern of improved performance with decreasing remoteness is apparent, with Very Remote areas appearing on the left side of the plot with low relative performance, and Major Cities appearing on the right side of the plot with high relative performance. Detailed patterns of expected performance among peer groups with shared characteristics are shown in these matrix plots for national outcomes (Figure 2, Supplemental Figures S3–S4) and matrix plots comparing outcomes within remoteness categories (Figure 7, Supplemental Figures S9–S11).

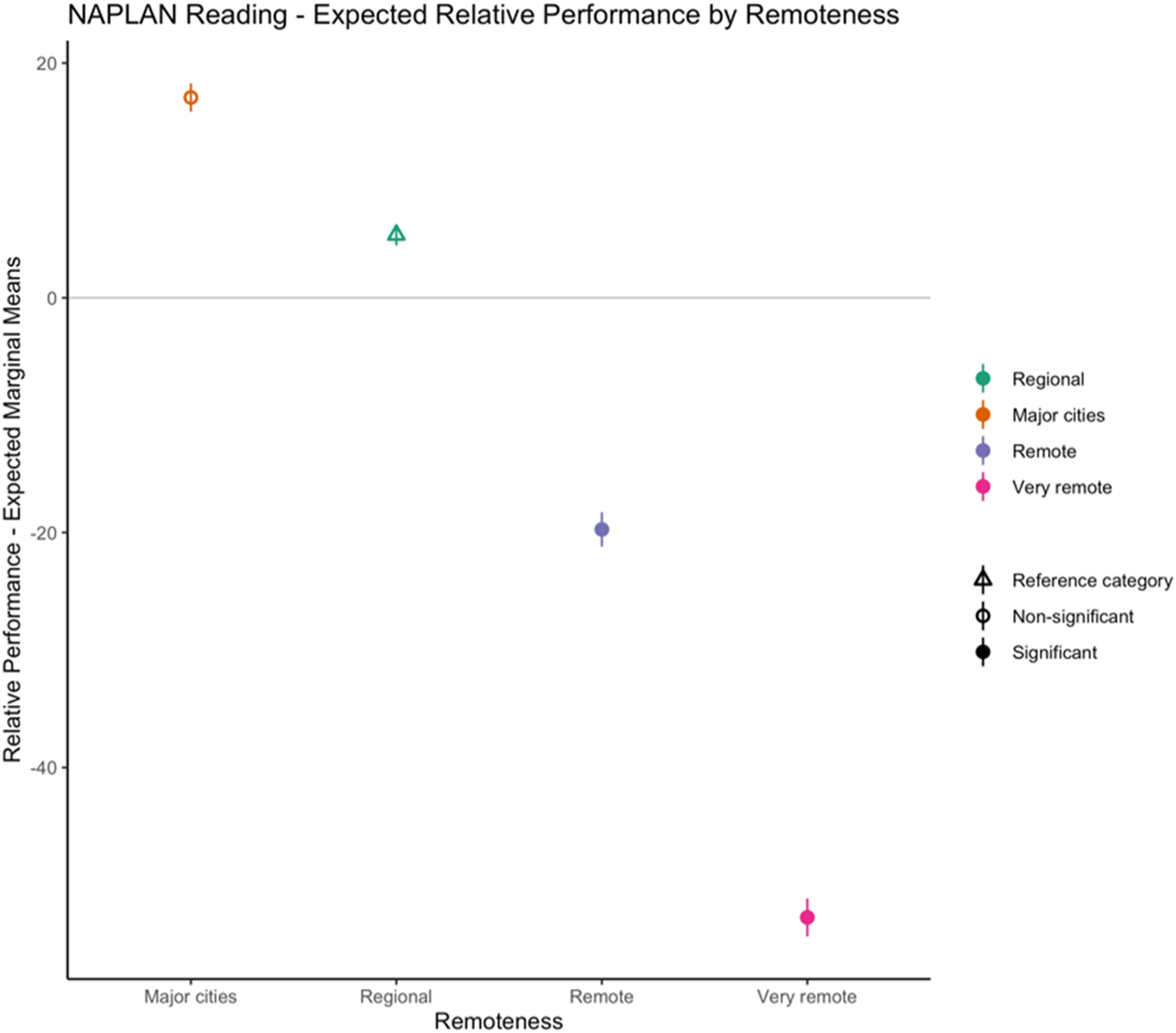

Figure 3 and Supplemental Figure S5 show that for all three NAPLAN domains, expected performance was highest in Major Cities, followed by Regional, Remote, and Very Remote areas. For Reading (Figure 3), there were statistically significant direct effects for Remote and Very Remote areas having lower expected performance (compared to Regional as the reference category). For Writing and Numeracy (Supplemental Figure S5), the expected values were also substantially different between remoteness categories, but the interaction effects between remoteness area and other explanatory variables were revealed as more influential for these two domains.

Figure 3. Expected relative performance by remoteness for NAPLAN Reading. Triangles indicate reference categories. Solid circles indicate statistically significant corresponding effects in regression model (p < 0.05). Vertical lines depict 95% confidence intervals around expected marginal means. Horizontal line at 0: within each grade, a relative performance score of 0 indicates no difference from the national average in a given calendar year.

The findings above indicate that while there are broad trends in relative performance between remoteness categories for Indigenous students in each NAPLAN domain, there is also significant variability in performance within each remoteness category. Extending the methodological principle of peer matching, here we look more closely at the findings for comparison of peer cohorts matched within remoteness categories.
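To make the idea of a peer matched relative score concrete, the sketch below centres each area's mean score on a national Indigenous mean for the same grade and calendar year, so that 0 corresponds to the national average. This is an illustration under stated assumptions rather than the study's method: it assumes a simple difference from a student-weighted national mean, whereas the actual rescaling is defined in the Methods and may also standardise by spread. The function name, record fields and example values are hypothetical.

```python
from collections import defaultdict

def relative_scores(records):
    """Centre each area's mean score on the national mean for its grade and year.

    `records` is a list of dicts with keys: area, grade, year, mean_score,
    n_students. The national mean is the student-weighted mean over all
    records sharing the same (grade, year).
    """
    totals = defaultdict(lambda: [0.0, 0])  # (grade, year) -> [score sum, students]
    for r in records:
        key = (r["grade"], r["year"])
        totals[key][0] += r["mean_score"] * r["n_students"]
        totals[key][1] += r["n_students"]
    result = {}
    for r in records:
        weighted_sum, n = totals[(r["grade"], r["year"])]
        result[(r["area"], r["grade"], r["year"])] = r["mean_score"] - weighted_sum / n
    return result

# Hypothetical values, not NAPLAN results.
toy_records = [
    {"area": "Area A", "grade": 3, "year": 2016, "mean_score": 400.0, "n_students": 100},
    {"area": "Area B", "grade": 3, "year": 2016, "mean_score": 300.0, "n_students": 300},
]

print(relative_scores(toy_records))
```

Because every comparison is made within a grade and year, areas are only ever measured against Indigenous peers sitting the same assessment.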

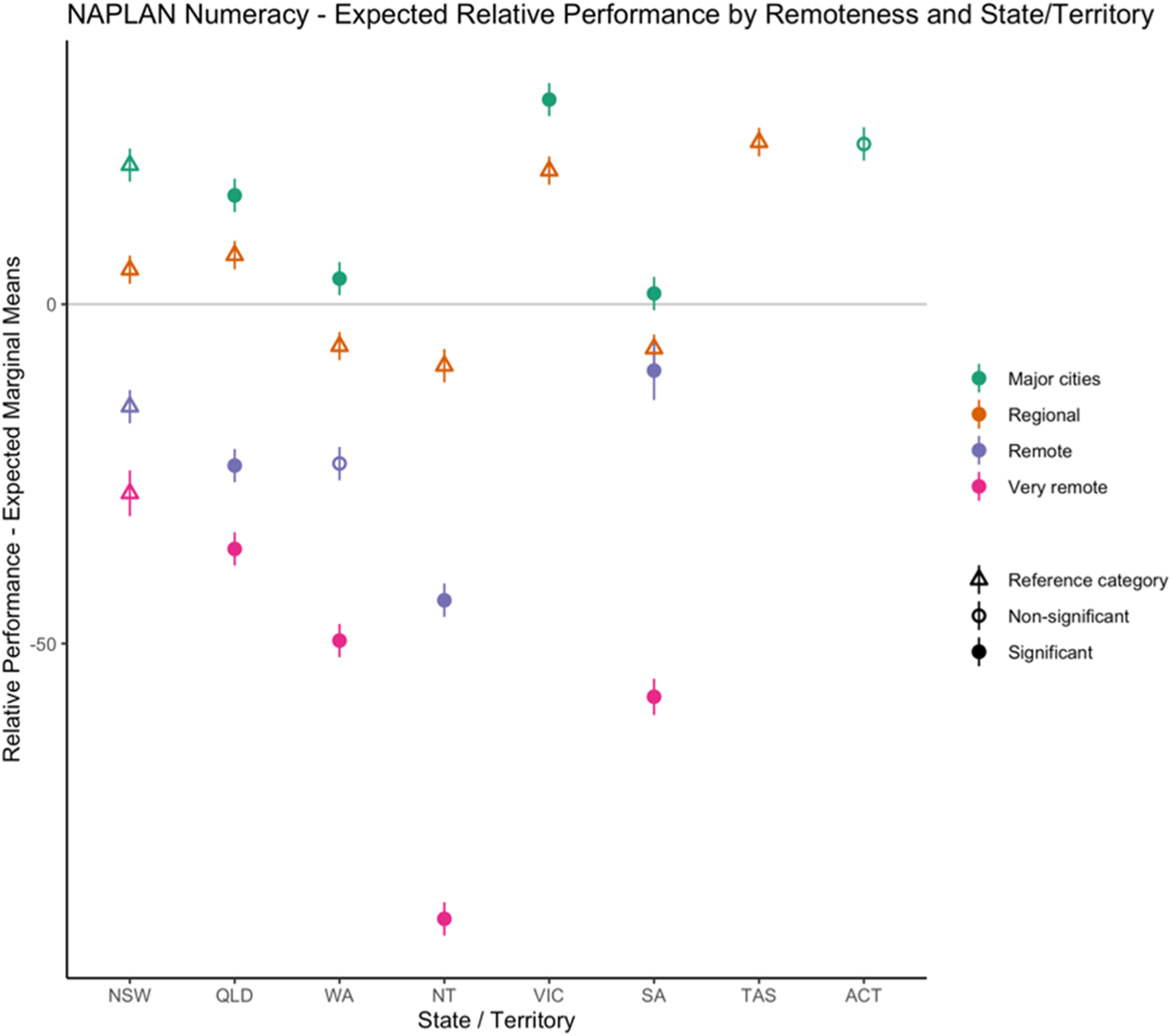

Figure 4 and Supplemental Figure S6 show that the effect of remoteness on expected performance differs substantially across states and territories. Of the three NAPLAN domains, Writing has the largest trend of poorer performance associated with increasing remoteness, followed by Reading (Supplemental Figure S6). Compared to Writing, Numeracy (Figure 4) has a substantially smaller spread in expected performance between more and less remote areas.

Figure 4. Expected relative performance by remoteness and state/territory for NAPLAN Numeracy. Triangles indicate reference categories. Solid circles indicate statistically significant corresponding effects in regression model (p < 0.05). Vertical lines depict 95% confidence intervals around expected marginal means. Horizontal line at 0: within each grade, a relative performance score of 0 indicates no difference from the national average in a given calendar year.

Across all NAPLAN domains, both QLD and NT have significantly and substantially poorer expected relative performance in Remote and Very Remote areas compared to Regional areas. In WA and SA, Very Remote areas were also found to be significantly underperforming relative to Regional areas. Interestingly in SA, Remote areas performed significantly better than in other states/territories, with SA having the highest expected relative performance across Remote areas nationally for all NAPLAN domains – almost matching expected performance in Regional areas of SA.

Compared to other states, Major Cities outperformed Regional areas by a large margin in NSW. All states other than NSW had significantly smaller differences in performance between Regional areas and Major Cities (where both remoteness categories are present). This indicates that in NSW, there was a higher than average trend of under-performance in Regional areas compared to Major Cities.

While our models did not statistically compare performance between Regional areas across states/territories (as Regional was used as the reference category), Figure 4 and Supplemental Figure S6 show that on average across NAPLAN domains the highest performing states/territories in Regional areas were VIC and TAS, while the lowest performing states/territories were WA, NT, and SA. In NT, expected performance in Regional areas was comparable to SA and WA, while expected performance in Remote and Very Remote areas was much poorer for NT.

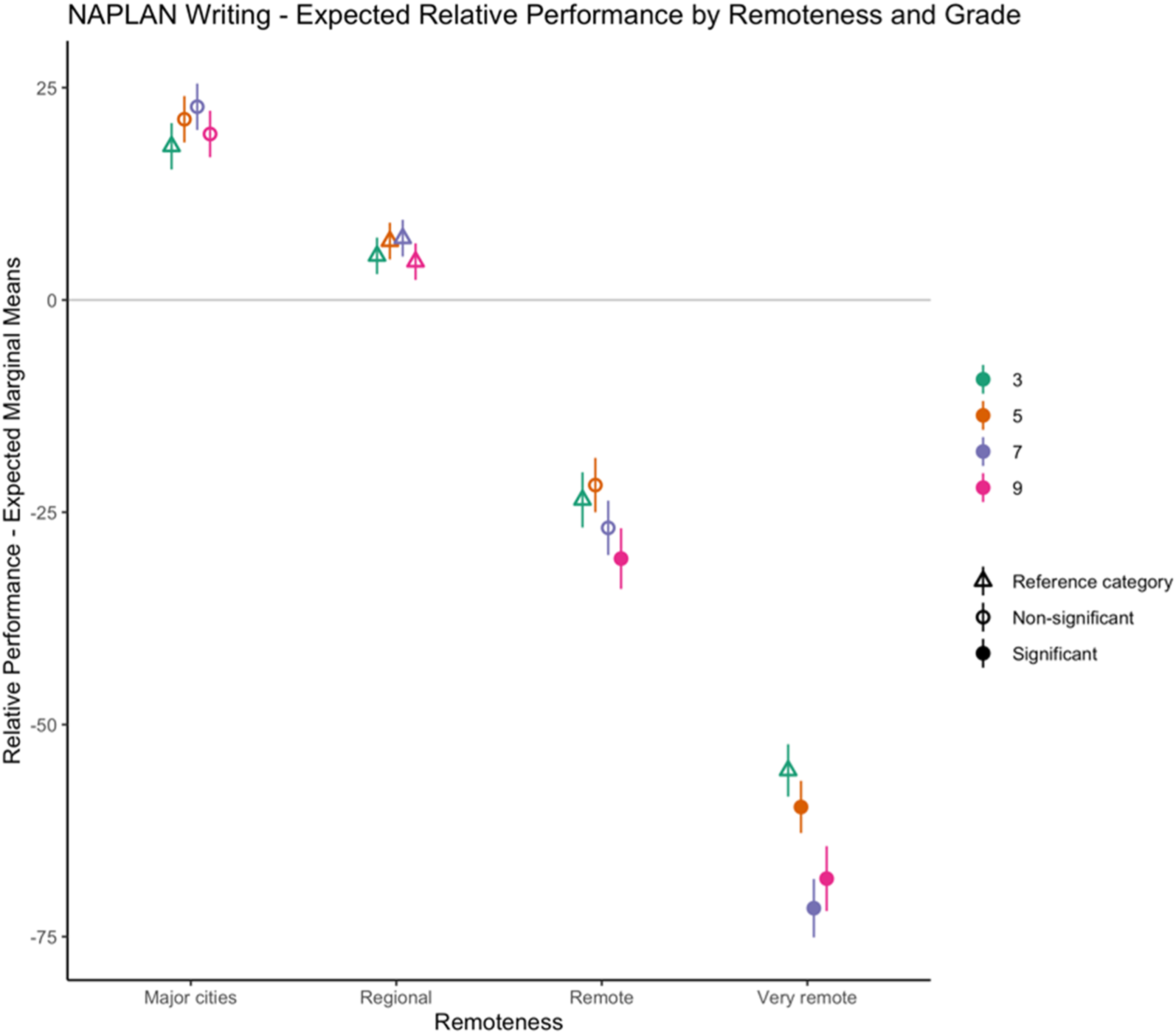

Expected performance by grade varied substantially across remoteness areas, shown in Figure 5 and Supplemental Figure S7. Here the relevant reference categories are Regional and grade 3. For Writing, relative performance in secondary students was worse in more remote areas (Figure 5). In contrast, the opposite pattern was observed for Reading and Numeracy where the relative performance of secondary students in Very Remote areas was significantly better than grade 3 students (Supplemental Figure S7).

Figure 5. Expected relative performance by remoteness and grade for NAPLAN Writing. Triangles indicate reference categories. Solid circles indicate statistically significant corresponding effects in regression model (p < 0.05). Vertical lines depict 95% confidence intervals around expected marginal means. Horizontal line at 0: within each grade, a relative performance score of 0 indicates no difference from the national average in a given calendar year.

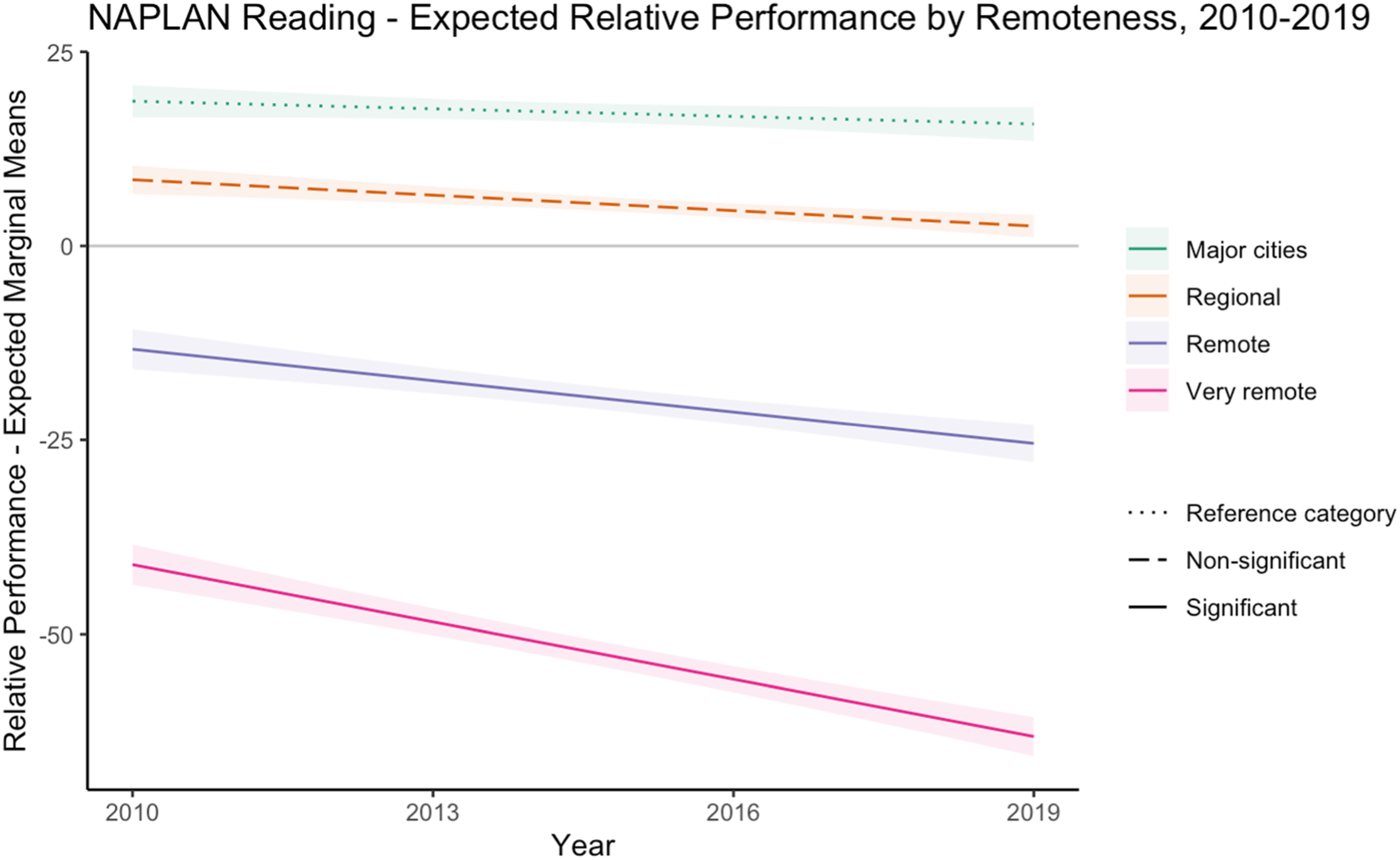

Figure 6 and Supplemental Figure S8 show the trend in expected relative performance over time for the period 2010–2019 across remoteness categories, indicating a slight overall decline in performance across all groups. In addition, expected performance became significantly worse over time for Reading in Remote and Very Remote areas between 2010 and 2019 (Figure 6), compared to Regional areas which had relatively stable expected Reading performance over this period.

Figure 6. Expected relative performance by remoteness over time (2010–2019) for NAPLAN Reading. Dashed lines indicate reference categories; solid lines indicate statistically significant effects in regression model. Shaded ribbons depict 95% confidence intervals around expected marginal means. Horizontal line at 0: within each grade, a relative performance score of 0 indicates no difference from the national average in a given calendar year.

Applying an approach similar to that used for Figure 2, we compare performance within each remoteness category in Figure 7 (Major Cities) and Supplemental Figures S9–S11 (Regional, Remote, and Very Remote, respectively). These matrix plots present expected relative performance and Indigenous student population split by tertile bands (0%–33%, 33%–66%, and 66%–100%) within each remoteness category of Major Cities, Regional, Remote, and Very Remote.

In Major Cities in 2016, 62,006 Indigenous students were enrolled in primary and secondary schools across Australia, representing 38.1% of the national Indigenous cohort. Comparing within the remoteness category of Major Cities (Figure 7), notable examples of poor expected performance in Major Cities with large populations included: grade 9 for Reading and Numeracy in QLD; grade 9 for Numeracy in NSW; and grade 3 for Numeracy in QLD. Notable examples of strong expected performance in Major Cities with large populations included: grade 3 in NSW for all NAPLAN domains; grade 5 in NSW for Writing and Reading; grade 7 in NSW for Writing; and grade 7 in QLD for Writing.

Figure 7. Matrix plot for expected performance in Major Cities, all NAPLAN domains. The horizontal axis indicates tertiles of expected relative performance calculated across Major Cities, and the vertical axis indicates tertiles of Indigenous Student Population calculated within this remoteness category. Point labels follow the format “Domain_State_Grade”; for example, “Writing_NSW_3” represents NAPLAN Writing in NSW for grade 3 students.

In Regional areas (combined ‘Inner Regional’ and ‘Outer Regional’ from ASGS) in 2016, 74,898 Indigenous students were enrolled in primary and secondary schools across Australia, representing 46.0% of the total Indigenous cohort. Comparing within the remoteness category of Regional areas (Supplemental Figure S9), there is an apparent trend that areas with smaller populations (WA, NT, and SA) performed poorly compared to areas with larger populations (NSW, QLD, VIC, and TAS). Notable examples of poor expected performance within Regional areas included: primary schools in WA for all NAPLAN domains; primary schools in SA for all NAPLAN domains; primary and secondary schools in NT for all NAPLAN domains; grade 7 in WA for Reading and Numeracy; grade 9 in WA for Reading; and grade 9 in SA for Numeracy. Notable examples of strong expected performance in Regional areas with large populations included: grade 3 in NSW for Writing and Reading; grade 5 in NSW for Writing; and grade 7 in QLD for Writing.

In Remote areas in 2016, 8,987 Indigenous students were enrolled in primary and secondary schools across Australia, representing 5.5% of the total Indigenous cohort. Notable examples of poor expected performance in Remote areas with large populations (Supplemental Figure S10) included: grade 3 in QLD for Writing; grade 5 in QLD for Writing; and grade 9 in QLD for Writing. Notable examples of strong expected performance in Remote areas included: grade 9 in WA for Numeracy; primary schools in NSW for all NAPLAN domains; grade 9 in NSW for Reading; and primary and secondary schools in SA for all NAPLAN domains. As noted above, SA had particularly strong relative performance in Remote areas compared to other states and territories, and particularly weak performance in Very Remote areas.

In Very Remote areas in 2016, 16,924 Indigenous students were enrolled in primary and secondary schools across Australia, representing 10.4% of the total cohort. Comparing within the remoteness category of Very Remote areas (Supplemental Figure S11), notable examples of poor performance in Very Remote areas with large populations included: primary and secondary schools in NT for all NAPLAN domains. Notable examples of strong expected performance included: primary schools in QLD for all NAPLAN domains; and secondary schools in QLD for Reading and Numeracy.

These findings indicate that when comparing Indigenous students across Very Remote areas, QLD and NSW had higher expected NAPLAN performance relative to other states and territories. However, it is important to keep in mind that when considering all Indigenous students nationally (Figure 2 and Supplemental Figures S3–S4), Very Remote areas in QLD were in the lowest quintile for expected relative performance in every NAPLAN domain, and this affects a large population of Indigenous students.
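The 2016 enrolment figures quoted above for the four remoteness categories can be checked against the stated percentages with a short calculation. The sketch assumes that the national Indigenous cohort is the sum of the four categories, which is consistent with the percentages reported but is not stated explicitly; the variable names are illustrative.

```python
# Enrolment counts for 2016 as quoted in the text above.
enrolments_2016 = {
    "Major Cities": 62006,
    "Regional": 74898,
    "Remote": 8987,
    "Very Remote": 16924,
}

# Assumption: the national cohort is the sum of the four remoteness categories.
total = sum(enrolments_2016.values())

shares = {
    category: round(100 * count / total, 1)
    for category, count in enrolments_2016.items()
}

print(total)
print(shares)
```

Under that assumption the total is 162,815 students, and the computed shares reproduce the reported 38.1%, 46.0%, 5.5% and 10.4%.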

4 Discussion

Since 1788, with the colonisation of Terra Australis by the English, the idea of comparison between Indigenous and immigrant populations has been foundational to the colonial project and has been employed effectively to mobilise government monies. We argue here that the centrepiece of the Australian governments’ ‘Closing the Gap’ framework, established in 2008 to address systemic Indigenous disadvantage, has had limited success because it uses the wrong statistical methods for comparison (see, e.g., Australian Productivity Commission, 2021). This limited success has persisted despite policy rhetoric and ministerial endorsement at federal, state, and territory levels, and is evident in the government, independent, and Catholic education systems, across regions and school levels, and by gender.

Peer matching offers an approach to educational data analysis that foregrounds the rights of Indigenous peoples, providing more nuanced understanding and opportunities for action when Indigenous students are compared to their Indigenous peers. A deficit-oriented comparative analysis is inherently limited to identifying patterns of variation in the gaps between Indigenous and non-Indigenous outcomes. This deficit approach carries the assumption that the appropriate benchmark to assess Indigenous students’ performance is comparison to their non-Indigenous peers, and that variability in the gap between these cohorts is of more importance than variability of performance within the Indigenous cohort. In contrast, our peer matching design offers unique opportunities to understand evidence-based patterns of performance among Indigenous students as a cohort in their own right. This allows for more detailed and actionable statistical insights on varying outcomes between peer matched groups of Indigenous students, complementing investigation of the systemic and institutional factors that contribute to a school being ready to support Indigenous learners (Anderson et al., 2022). Our findings support the utility of analysing these data using the peer matching approach. This approach enables the identification of exemplar higher-performing schools within a comparable cohort (for example, by remoteness category), and case study methodologies (see, e.g., Hancock et al., 2021; Hillage et al., 1998) can then support detailed analysis of the school-level factors associated with differing educational outcomes for Indigenous students.

The results reported in this article highlight substantial patterns of variation in NAPLAN performance within the cohort of Indigenous students nationally between 2010 and 2019. While the broad pattern of poorer performance with greater remoteness is apparent, and often used by government policymakers, politicians, and the media to justify the ‘Closing the Gap’ deficit narrative and create a sense of urgency to ‘do something’, our analyses provide a detailed understanding of relative performance by grade, within remoteness areas, and according to the size of the corresponding population of Indigenous students. These peer matched patterns of variability offer insights that would be obscured when focussing solely on the gaps in performance between Indigenous and non-Indigenous students. For instance, while the overall influence of remoteness on NAPLAN performance is well-recognised, our study offers additional layers of nuance. We have examined how performance varies according to grade, by NAPLAN domain, and over time, providing a multi-faceted view that enhances the understanding of this relationship. Moreover, our analyses enable insights into relative performance within remoteness categories. In particular, our data reveal that although overall scores are generally lower in more remote areas, there are notable differences in outcomes when comparing states and territories within the same remoteness categories.

In summary, our analysis is able to show that:

1. The expected relative performance for Numeracy was less adversely affected by increasing remoteness. While NAPLAN domains were each modelled separately in this work, this trend is apparent when comparing within remoteness categories.

2. Indigenous students have high expected performance across multiple NAPLAN domains (relative to their age-matched peers in similar remoteness areas) in the following areas:
   • Major Cities: primary schools in NSW; primary and secondary schools in Victoria.
   • Regional areas: grade 3 in NSW; primary and secondary schools in VIC; primary and secondary schools in TAS.
   • Remote areas: primary schools in NSW; primary and secondary schools in SA.
   • Very Remote areas: primary and secondary schools in NSW; primary and secondary schools in QLD.

3. Areas where Indigenous students have low expected relative performance across multiple NAPLAN domains (relative to their age-matched peers in similar remoteness areas) include:
   • Major Cities: grade 9 in QLD; primary and secondary schools in WA; and primary and secondary schools in SA.
   • Regional areas: grade 7 in WA; primary and secondary schools in NT; and primary schools in SA.
   • Remote areas: primary schools in WA; primary and secondary schools in NT.
   • Very Remote areas: primary and secondary schools in NT; primary schools in SA.

These data-driven patterns provide a useful foundation for hypothesis generation and priority setting to guide further comparative analyses using qualitative and mixed methods. For instance, future research could examine what systemic and school-level factors drive the strong performance of Indigenous students in Remote areas in SA, relative to other states and territories. By investigating exemplar schools identified in high and low performing areas, within strata defined by remoteness categories, subsequent research can investigate the local systemic and school-level factors that are supporting Indigenous learners to succeed. It may be useful for policymaking and resourcing applications to interpret these findings according to the size of the population of Indigenous students represented in each area.

Limitations

This work had several limitations. We recognise that NAPLAN serves as a blunt instrument for educational assessment, lacking the granularity that would be preferable for a more nuanced analysis. Specifically, NAPLAN data is not sufficiently disaggregated to account for critical variables that can significantly impact performance, such as English as an Additional Language or Dialect (EAL/D) status and socioeconomic background. This omission limits the scope of our findings and their interpretative power. In addition, considering the study through a rights-based lens amplifies another significant limitation: the lack of consultative processes with Aboriginal communities in NAPLAN’s development. These shortcomings further undermine the suitability of NAPLAN for measuring educational outcomes in Indigenous communities. We wish to emphasise that while NAPLAN data offers some valuable insights, it is by no means a comprehensive measure of educational performance, and published datasets do not capture important factors known to be associated with educational outcomes, such as Standard Australian English language ability and socioeconomic status, among other demographic, systemic, and contextual variables. In our statement that there are ‘comparatively highly successful schools’ in Remote and Very Remote areas, we are referring only to the patterns of relative NAPLAN performance within these specific remoteness categories, acknowledging that this is but one narrow lens through which to evaluate educational success.

NAPLAN has an additional domain, Language Conventions (spelling, grammar, and punctuation), which is not included in this study. We sought publicly available NAPLAN results as published in the annual Report on Government Services, and as a result, we included the three domains which were consistently available in these publications (Writing, Reading, and Numeracy).

Another limitation regarding remoteness and associated demographic variation is in the use of the ARIA+ remoteness index, which aims to enable as fair a comparison as possible between different categories of remoteness across states, for instance, through comparing Remote areas across states and territories. However, we acknowledge that within these geographic divisions, there may be significant variations in cultural and linguistic diversity which are not explicitly captured in this data.

Future research and concluding comments

In recognition of the multi-dimensional challenges impacting educational outcomes in Indigenous communities, our future research aims to investigate the effects of other systemic issues. These contextual and school-level factors could include, but are not limited to, lack of access to essential health services, inadequate housing facilities, scarcity of educational resources, and high teacher turnover rates. Our objective is to provide a more holistic understanding that takes into account these significant socioeconomic and systemic variables, offering a richer, more nuanced understanding of educational disparities. As another direction for future research, we propose interweaving publicly available data from sources like the Census to enrich the assessment criteria. This could include factors like EAL/D proportions and various socioeconomic indicators, aiming for a more comprehensive and contextualised understanding of educational performance. In our future work, we also aim to extend this quantitative analysis with deeper qualitative and mixed-methods research to elucidate the complex interplay of local demographic, systemic, and school-level factors contributing to educational outcomes. We also advocate for future educational data collection to be more inclusive of these critical variables.

Within the context of Australian policy for the provision of education services to Indigenous students, discussions always seem to revolve around preparing Indigenous children to ‘fit in’ or assimilate into the education system. Much of this approach is fuelled by comparative data that exposes ‘the gap’. This approach is fundamentally flawed, as it fails to question the readiness of the education system to educate Indigenous children (Carbine et al., 2008; Krakouer, 2016; McTurk et al., 2008), regarding Indigenous students and their cultures as being in deficit to the more advanced or developed culture around them, needing education to improve their chances of success in the contemporary world. This deficit perspective is still prevalent within many of Australia’s Indigenous education policies, yielding limited success (Dockett et al., 2010).

The policy aspirations for education in Australia are articulated in the Mparntwe Alice Springs Education Declaration (Department of Education Skills & Employment, 2019), promoting excellence and equity and enabling successful learning opportunities for all students. Australian policymakers regard formal education as a social leveller to close the gap in Indigenous disadvantage. The intractable concern with this approach is that it fixates on a deficit framing to compare Indigenous and non-Indigenous outcomes, and pathologises Indigenous culture as the ‘problem’ that causes the disadvantage. The ahistorical and ethnocentric nature of such assumptions has faced sustained critique by Aboriginal and Torres Strait Islander peoples (Anderson & Ma Rhea, 2018), non-Indigenous education experts (Ma Rhea, 2015), and by policy analysts (Markham & Biddle, 2017).

Ma Rhea and Anderson (2011) have argued that standards-based education in Australia has established benchmark expectations for academic achievement nationally, allowing for the measurement of the efficiency and effectiveness of education services provision to Aboriginal and Torres Strait Islander children. Accountability frameworks such as the annual reports produced by the Productivity Commission have exposed statistical differences in academic achievement between Aboriginal and Torres Strait Islander and non-Indigenous students in Australian schools (Australian Productivity Commission, 2019). High quality Aboriginal and Torres Strait Islander education is recognised as a key determinant in improving the quality of life for Indigenous Australians. If Aboriginal and Torres Strait Islander students are not achieving the expected standard, then there are serious consequences for them in achieving their rights, and social and economic justice, for themselves and their families. However, what seem to be lacking are real, tangible outcomes in terms of educational and economic improvements for Aboriginal and Torres Strait Islander people.

Countering this deficit-oriented perspective, the present study forms a data-driven evidence base to support further analysis of the school-level and systemic factors associated with peer matched patterns of difference in NAPLAN scores within the cohort of Indigenous students.

Using a peer matching approach to undertake statistical modelling of test scores within the strata of remoteness areas, grades and NAPLAN domains, we have generated detailed insights regarding patterns of low and high relative performance for Indigenous students around Australia for over a decade, 2010–2019. These results create a foundation for further comparative analyses to understand systemic and institutional factors associated with these differing patterns of performance, which will in turn support improved data-driven priority setting and decision-making in an Indigenous education policy context.

Supplemental Material

Supplemental Material for Patterns of educational performance among Indigenous students in Australia, 2010–2019: Within-cohort, peer matching analysis for data-led decision-making by Peter J. Anderson, Owen Forbes, Kerrie Mengersen, and Zane M. Diamond in Australian Journal of Education

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Data Availability Statement

All data used in this research are publicly available from the Reports on Government Services and the NAPLAN website.

Supplemental Material

Supplemental material for this article is available online