Abstract

Objective:

Early-intervention units have proliferated over the last decade, justified in terms of cost as well as treatment effect. Strong claims for extension of these programmes on economic grounds motivate a systematic review of economic evaluations of early-intervention programmes.

Method:

Searches were undertaken in the Cochrane Central Register of Controlled Trials, PubMed, EMBASE, and PsycINFO with keywords including ‘early intervention’, ‘ultra-high risk’, ‘prodrome’, ‘cost-effectiveness’, ‘psychosis’, ‘economic’, and ‘at-risk mental state’. Relevant journals, editorials, and the references of retrieved articles were hand-searched for appropriate research.

Results:

Eleven articles were included in the review. The more rigorous research (two randomized controlled trials and two quasi-experimental studies) suggested no difference in resource utilization or costs between early-intervention and treatment-as-usual groups. One small case-control study with evidence of significant bias concluded that annual early-intervention costs were one-third of treatment-as-usual costs. Modelling studies projected reduced costs of early intervention but were based on assumptions that have since been definitively revised. Cost-effectiveness analyses did not strongly support the cost-effectiveness of early intervention. No studies appropriately valued outpatient costs or addressed the feasibility of converting reduced hospitalization into reduced costs.

Conclusions:

The published literature does not support the contention that early intervention for psychosis reduces costs or achieves cost-effectiveness. Past failed attempts to reduce health costs by reducing hospitalization, together with increased outpatient costs in early-intervention programmes, suggest such programmes may increase costs. Future economic evaluation of early-intervention programmes would need to correctly value outpatient costs and accommodate uncertainty regarding reduced hospitalization costs, perhaps by sensitivity analysis. The current research hints that cost differences may be greater early in treatment and in patients with more severe illness.

Introduction

In the absence of compelling evidence for a sustained clinical or functional response to early intervention in psychosis (EI; Marshall and Rathbone, 2011), whether by increasing clinical resources such as clinician time and education (Bertelsen et al., 2008; Gafoor et al., 2010), reduction of the duration of untreated psychosis (DUP; Larsen et al., 2011), or treatment of those at risk of psychotic illness (Olsen and Rosenbaum, 2006), recent debate has examined the relative costs of specialized and general services. Faced with ongoing scepticism about clinical outcomes, proponents have argued that EI programmes should be implemented to save mental health resources to be used in other areas (McGorry, 2011). The cited research shows that EI utilizes more outpatient and fewer inpatient resources, and argues that overall costs may be reduced (McCrone et al., 2010).

Critics counter that EI techniques are similarly effective in general psychiatric populations and that arbitrary specialization unfairly distributes scarce resources and interferes with continuity of care (Pelosi and Birchwood, 2003; Pelosi, 2010). Recent events in Australia demonstrate the competition for resources within mental health. Despite resistance from the medical profession, funding of GP-managed psychological interventions has been significantly reduced (AAP, 2011) at the same time as large financial resources have been steered towards EI (Roxon et al., 2011, p.6). In this competitive environment economic evaluation might be particularly persuasive in convincing decision-makers to fund certain programmes. Economic evaluation of healthcare often focuses on cost-effectiveness, but it is rarely acknowledged that cost-effectiveness does not mean reduced costs but value for outlay. Implementation of a cost-effective treatment is perfectly consistent with increased total costs (Fenwick and Byford, 2005). For example, if a treatment is so expensive that it is not used, a cheaper but less effective alternative might greatly increase total costs simply because many people use it.

EI programmes utilize variations of assertive community treatment (ACT), which specifies low patient/clinician ratios with frequent clinical contact by specifically trained multidisciplinary teams. Generally one team member performs the role of key worker for each patient, developing a treatment plan including actions in crisis, and transitions between inpatient and outpatient care. EI usually involves other components, such as psychoeducation of families and social skills training with patients (Bertelsen et al., 2008). Under this model, the cost to the mental health service of outpatient contact does not change according to the number of contacts, but by the ratio of clinicians to patients. An outpatient service that manages 100 patients with a case load of 1:10 will require 10 clinicians; a case load of 1:25 will require four clinicians. Therefore ACT costs a significant multiple of usual treatment regardless of number of contacts.
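
The staffing arithmetic above can be sketched as follows. This is a minimal illustration that assumes whole clinicians and a uniform case load; the function name `clinicians_required` is hypothetical and is not taken from any of the cited costing methods.

```python
import math

def clinicians_required(patients: int, caseload: int) -> int:
    """Clinicians needed when each clinician carries at most `caseload` patients."""
    if patients < 0 or caseload <= 0:
        raise ValueError("patients must be >= 0 and caseload must be > 0")
    return math.ceil(patients / caseload)

# The example from the text: 100 patients under two case loads.
act_staff = clinicians_required(100, 10)    # 10 clinicians at 1:10
usual_staff = clinicians_required(100, 25)  # 4 clinicians at 1:25

# Staffing (and hence salary) multiple, independent of the number of contacts.
staffing_multiple = act_staff / usual_staff  # 2.5
```

On these figures the low case load service needs 2.5 times the clinicians of the higher case load service for the same 100 patients, however many contacts each patient receives.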

Perhaps more significantly, past efforts to reduce healthcare costs by reducing hospitalization rates have increased total costs (Reinhardt, 1996; Mooney and Scotton, 1998). This arises because hospitalization costs include 80% or more fixed costs that continue whether or not hospital beds are used (Roberts et al., 1999). Reducing hospitalization rates for individuals by increasing outpatient services increases costs in the community without reducing hospital costs. To realize theoretical cost reductions by reducing hospitalization rates requires closure of hospital beds, transfer of inpatient clinician time to outpatient care, and the extraction of the sunk capital of a general purpose mental health ward (Rauh et al., 2010).
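
The effect of fixed costs on realizable savings can be illustrated with a short sketch. The 80% fixed-cost share is the figure quoted above; the monetary amounts and the function names are hypothetical and chosen only to show the direction of the effect when no beds are closed.

```python
def realizable_hospital_saving(nominal_saving: float, fixed_cost_share: float) -> float:
    """Saving actually released when bed-days fall but no beds are closed.

    Only the variable share of hospital costs is avoided; fixed costs continue.
    """
    if not 0.0 <= fixed_cost_share <= 1.0:
        raise ValueError("fixed_cost_share must be between 0 and 1")
    return nominal_saving * (1.0 - fixed_cost_share)

def net_cost_change(extra_outpatient_cost: float,
                    nominal_hospital_saving: float,
                    fixed_cost_share: float) -> float:
    """Positive result: total costs rise; negative result: total costs fall."""
    saving = realizable_hospital_saving(nominal_hospital_saving, fixed_cost_share)
    return extra_outpatient_cost - saving

# Hypothetical per-patient figures: 600 extra outpatient cost,
# 1000 nominal hospital saving, 80% of hospital costs fixed.
change = net_cost_change(600.0, 1000.0, 0.8)   # roughly +400: total costs rise
naive = net_cost_change(600.0, 1000.0, 0.0)    # -400 if savings were fully realizable
```

The same nominal reduction in hospitalization therefore appears as a saving or as a cost increase depending on how much of the hospital cost can actually be withdrawn.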

The author has identified elsewhere a tendency in the EI literature towards selective bias in the presentation of results (Amos, 2012b). A clear example can be seen in the recent debate regarding the merits of EI in the pages of this journal (Castle, 2012; Yung, 2012). Close examination suggests that the research cited by Yung (2012), claiming superior outcomes from EI programmes, relies in part upon non-peer-reviewed evidence with internal inconsistencies and reports only favourable evidence from relevant research (Amos, 2012a). The same tendency in economic evaluations might manifest in a failure to consider benchmark studies; to test multiple outcome variables and report only significant results; and to analyse costs and cost-effectiveness in a way that favours EI.

Assessing economic evaluation in healthcare

The importance of economic evaluation in healthcare planning has long been recognized (Gold et al., 1996). The Panel on Cost-Effectiveness in Health and Medicine established a useful starting point for analysing the adequacy of cost-effectiveness research (Weinstein et al., 1996). Drummond et al. (2005) provide a recent elaboration of the Panel’s recommendations for critical assessment of economic evaluations of healthcare programmes. They identify key elements of economic evaluation and discuss characteristics of well-executed studies, summarized below.

Was a well-defined question posed in answerable form?

The research question should identify the alternatives compared and the viewpoint of the particular stakeholder making the comparison, such as a healthcare provider, an institution, or a patient or group of patients. The adequacy of a study cannot be assessed without a clear and explicit identification of the intended audience. An evaluation performed for an administrator within a public mental health service facing budget cuts might limit its scope to direct costs to the mental health service and direct measurement of clinical outcomes. An evaluation performed for a Minister of Health might usefully include socially important variables including employment outcomes, accommodation, patient access to care, and impact on family members. Thus the cost to patients and their family members of attending outpatient appointments might be excluded from an analysis intended for the administrator, but included as a socially important outcome for audiences with a broader societal viewpoint.

Was a comprehensive description of the competing alternatives given?

Adequate descriptions of the primary objectives of competing alternatives are critical in selecting amongst cost-effectiveness analysis (CEA), cost–utility analysis (CUA), and cost–benefit analysis (CBA), defined below. These descriptions allow readers to judge the applicability of the research to their own circumstances, to assess whether costs or consequences have been omitted, and to replicate the programme.

Was the effectiveness of the programmes or services established?

Not all information can be taken from randomized controlled trials (RCTs), but the strongest evidence available should be used. Where evidence of clinical effectiveness is taken from the same study as costs, it is important to establish that it is representative of the general body of evidence about the particular treatment.

Were all the important and relevant costs and consequences for each alternative identified?

Although it is unlikely all costs and consequences can be measured, there should be a concerted effort to identify all important and relevant ones.

Were costs and consequences measured accurately in appropriate physical units?

Where outcomes have been clearly identified, choice of measures is usually straightforward. The time frame should be long enough to capture major outcomes such as treatment effect and adverse reactions.

Were costs and consequences valued credibly?

The consequences being measured determine what type of economic evaluation is most appropriate. There are four main types. CEA examines incremental costs of achieving a single health outcome between treatments where each treatment may be more or less effective and more or less costly than the others. Costs are measured in monetary terms and effects in terms of single health measures, such as changes in psychotic symptom scales. Cost-minimization analysis (CMA) is thought to be appropriate when there are no differences in clinical effect. Rather than compare costs and effects, it simply reports the difference in cost between treatments.

CUA is similar to CEA, measuring costs in monetary equivalents, but employs measures of utility such as quality-adjusted life years (QALYs) to estimate the value of treatment effects rather than specific health outcomes. A QALY weights time spent in a health state by the quality of life in that state relative to perfect health, where perfect health is 1.0 and death is 0.0. This is used as a proxy for utility, an abstract concept associated with the preferences of an individual or group. The higher the preference of an individual or group for a set of conditions, the higher the utility of that set of conditions, relative to other possible sets.
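
As a minimal sketch of the QALY calculation, time in each health state can be multiplied by a quality weight between 0.0 (death) and 1.0 (perfect health) and summed. The function name and the weights in the example are hypothetical.

```python
def qalys(periods):
    """Sum of years lived weighted by quality of life.

    `periods` is an iterable of (years, weight) pairs, where weight is
    1.0 for perfect health and 0.0 for death.
    """
    total = 0.0
    for years, weight in periods:
        if years < 0 or not 0.0 <= weight <= 1.0:
            raise ValueError("years must be >= 0 and weight within [0, 1]")
        total += years * weight
    return total

# Hypothetical course: 2 years at weight 0.5, then 3 years at weight 0.8.
example = qalys([(2, 0.5), (3, 0.8)])  # about 3.4 QALYs over 5 calendar years
```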

CBA attempts to evaluate the net benefit of a treatment where costs and consequences are translated into monetary equivalents, and the current monetary value of the treatment calculated. The amount a patient or other stakeholder is willing to pay is often used in this type of analysis.

Costs should be valued in local currency, discounted to a base year, based on prevailing market prices.

Were costs and consequences adjusted for differential timing?

In general, a cost that occurs in 1 year’s time is preferable to a cost today, while a benefit in 1 year’s time is less preferred than one today. Discounting is the process that allows costs and consequences to be valued at a single point in time. Weinstein et al. (1996) recommended a flat 3% discount rate when considering healthcare evaluations.
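
Discounting at a flat annual rate can be sketched as below. The 3% default follows the recommendation cited above; the function names and the amounts are illustrative assumptions.

```python
def present_value(amount: float, years_ahead: int, rate: float = 0.03) -> float:
    """Value today of a cost or benefit occurring `years_ahead` years from now."""
    if years_ahead < 0 or rate <= -1.0:
        raise ValueError("years_ahead must be >= 0 and rate must be > -1")
    return amount / (1.0 + rate) ** years_ahead

def discounted_total(stream, rate: float = 0.03) -> float:
    """Present value of a yearly stream; stream[0] falls in the base year."""
    return sum(present_value(a, t, rate) for t, a in enumerate(stream))

# A cost of 1000 incurred in 1 year's time is worth about 970.87 today at 3%.
pv_next_year = present_value(1000.0, 1)
```

The same function applies to costs and to monetized benefits, which is what allows both to be compared at a single point in time.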

Was an incremental analysis of costs and consequences of alternatives performed?

Evaluation of health treatments should compare the difference in costs between programmes with the difference in outcomes between programmes. CUA and CEA traditionally report an incremental cost-effectiveness ratio (ICER), which is the difference in cost divided by the difference in effect or utility. CBA is expressed as a net present value, which incorporates monetary equivalents of costs and consequences.
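
The ICER described above reduces to a single division, sketched here. The figures in the example are hypothetical, and raising an error when the effects are equal is a design choice made for this illustration.

```python
def icer(cost_new: float, cost_old: float,
         effect_new: float, effect_old: float) -> float:
    """Incremental cost-effectiveness ratio: extra cost per extra unit of effect."""
    d_cost = cost_new - cost_old
    d_effect = effect_new - effect_old
    if d_effect == 0:
        # With no difference in effect the ratio is undefined; only the
        # cost difference can be reported.
        raise ValueError("ICER undefined when effects are equal")
    return d_cost / d_effect

# Hypothetical: the new programme costs 12000 vs 10000 and improves a
# symptom scale by 8 points vs 4 points.
ratio = icer(12000, 10000, 8, 4)  # 500.0 per additional point gained
```

Whether 500 per point represents value for outlay depends on what the decision-maker is willing to pay for one point, which the ratio itself does not state.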

Because ICERs do not deal well with uncertainty, alternative presentations of cost-effectiveness have been developed. Bootstrapping generates empirical estimates of confidence intervals around ICERs. Cost-effectiveness acceptability curves (CEACs) are graphical representations of the probability that a treatment is more cost-effective than others (O’Brien and Briggs, 2002). They can be interpreted as a Bayesian method that shows the probability that one treatment is the most cost-effective of the alternatives given the observed data. They are a compact means of accommodating uncertainty, as they chart the change in probability of cost-effectiveness relative to a change in willingness to pay for a particular outcome (such as the gain of 1 QALY, or 1 unit on a negative symptom scale).
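
A bootstrapped CEAC can be sketched as follows. This illustration is not the method of any reviewed study: it assumes patient-level (cost, effect) pairs for each arm, resamples patients with replacement, and counts the proportion of replicates in which the net monetary benefit (willingness to pay multiplied by the difference in effect, minus the difference in cost) is positive. The net-benefit formulation, the function name, and the data are assumptions.

```python
import random
from statistics import mean

def ceac(ei, tau, wtp_values, n_boot=1000, seed=0):
    """Probability that EI is cost-effective at each willingness-to-pay value.

    `ei` and `tau` are lists of (cost, effect) pairs, one pair per patient.
    """
    rng = random.Random(seed)
    replicates = []
    for _ in range(n_boot):
        # Resample patients with replacement within each arm.
        ei_s = rng.choices(ei, k=len(ei))
        tau_s = rng.choices(tau, k=len(tau))
        d_cost = mean(c for c, _ in ei_s) - mean(c for c, _ in tau_s)
        d_effect = mean(e for _, e in ei_s) - mean(e for _, e in tau_s)
        replicates.append((d_cost, d_effect))
    # Share of replicates with positive net monetary benefit at each threshold.
    return {
        wtp: sum(wtp * de - dc > 0 for dc, de in replicates) / n_boot
        for wtp in wtp_values
    }

# Hypothetical patient-level data: EI costs more and is more effective.
ei_arm = [(1200, 5), (1300, 6), (1250, 7)]
tau_arm = [(1000, 2), (1100, 3), (1050, 4)]
curve = ceac(ei_arm, tau_arm, wtp_values=[0, 50, 100, 1000])
```

Plotting the returned probabilities against willingness to pay gives the acceptability curve; the same replicates also yield an empirical interval for the cost and effect differences.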

Was allowance made for uncertainty in the estimates of costs and consequences?

Sensitivity analysis and modelling are commonly used to manage uncertainty. Sensitivity analysis examines how statistical results change when assumptions and parameters are varied, holding other parameters constant.
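
A one-way sensitivity analysis can be sketched as a loop that varies a single parameter while the others stay at their base values. The toy cost model, its parameter names, and all figures below are hypothetical.

```python
def one_way_sensitivity(model, base_params, name, values):
    """Evaluate `model` while varying one parameter and holding the rest constant."""
    results = []
    for value in values:
        params = dict(base_params)  # copy so the base case is never modified
        params[name] = value
        results.append((value, model(**params)))
    return results

def cost_difference(extra_outpatient, bed_days_avoided, cost_per_bed_day):
    """Toy model: EI cost minus TAU cost per patient (positive means EI costs more)."""
    return extra_outpatient - bed_days_avoided * cost_per_bed_day

base = {"extra_outpatient": 3000, "bed_days_avoided": 5, "cost_per_bed_day": 500}
table = one_way_sensitivity(cost_difference, base, "bed_days_avoided", [0, 5, 10])
# [(0, 3000), (5, 500), (10, -2000)]: the conclusion reverses between 5 and 10 days
```

Reporting the value at which the sign changes tells readers which revisions to the assumptions would reverse the conclusion.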

Did the presentation and discussion of study results include all issues of concern to users?

Whether study results and discussion are useful depends upon the viewpoint of the research, the viewpoint of the users, and the presentation of the information.

Aim of the study

The literature reporting economic evaluations of EI programmes was critically evaluated with reference to the recommended qualities outlined above. Research should: use designs that minimize bias and error, or measure it where it cannot be controlled; identify a consistent framework of value which incorporates all relevant costs and outcomes; accurately and reliably measure health effects; and accurately measure costs to all parties. It was hypothesized that the relative cost-effectiveness of EI programmes would be associated with decreased hospitalization costs and increased outpatient costs.

Methods

Search strategy

Searches in the Cochrane Central Register of Controlled Trials, PubMed, EMBASE, and PsycINFO between 1990 and October 2011 were performed. Keywords included ‘early intervention’, ‘ultra-high risk’, ‘prodrome’, ‘cost-effectiveness’, ‘psychosis’, ‘economic’, and ‘at-risk mental state’. Further papers were identified by hand-searching the major papers and references published by the main research groups associated with EI research (identified in the recent Cochrane review by Marshall and Rathbone, 2011).

Study selection

Papers were selected for inclusion if they reported original economic evaluations of EI for recent-onset psychosis or at-risk mental states (ARMS) using current EI paradigms (e.g. Bertelsen et al., 2008; Francey et al., 2010; Larsen et al., 2011). Where research groups had reported on the same sample at different time points (e.g. Mihalopoulos et al., 1999, 2009), the later paper was preferred, as initial gains tend not to be retained over time (Bertelsen et al., 2008; Larsen et al., 2011). The limitations of the literature prevented application of exclusion criteria such as age, affective psychosis, and non-RCTs. Studies were excluded if the comparison did not focus on patients with recent onset of psychosis (e.g. samples including recent-onset and more chronic patients), or if the comparison involved only a subset of the EI paradigm (such as CBT for psychosis).

Reports from the quasi-experimental trials by the OPUS and TIPS research groups were included to benchmark resource utilization and clinical outcomes.

Analysis

The collected research was critically evaluated for design and implementation features likely to introduce bias. Meta-analyses were not attempted as the methods, populations, statistical analyses, exchange rates, and underlying healthcare systems did not permit sensible conclusions to be drawn.

Results

Description of studies

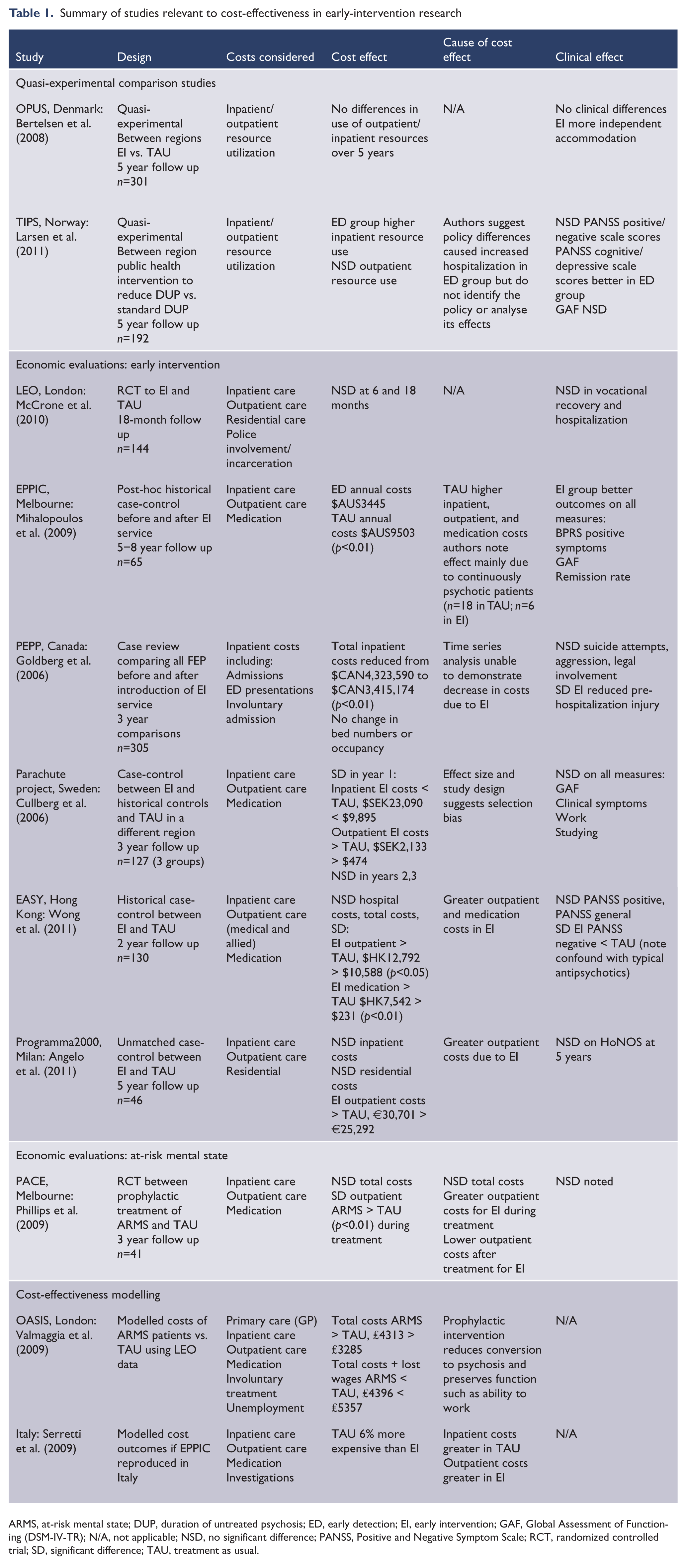

Search of electronic databases and hand-search identified 87 articles. Of these, 39 were selected on the basis of their title and abstract. Examination of these articles identified 11 papers appropriate for inclusion in the study after eliminating papers reporting the same research at different times, editorials, and review papers (selected papers listed in Table 1).

Table 1. Summary of studies relevant to cost-effectiveness in early-intervention research

ARMS, at-risk mental state; DUP, duration of untreated psychosis; ED, early detection; EI, early intervention; GAF, Global Assessment of Functioning (DSM-IV-TR); N/A, not applicable; NSD, no significant difference; PANSS, Positive and Negative Symptom Scale; RCT, randomized controlled trial; SD, significant difference; TAU, treatment as usual.

Six papers compared EI and treatment as usual (TAU). One was an RCT assigning patients with first- or second-episode psychosis to EI or TAU (McCrone et al., 2010). Four were case-control studies comparing EI to historical control groups (Cullberg et al., 2006; Goldberg et al., 2006; Mihalopoulos et al., 2009; Wong et al., 2011), one of which also looked at a contemporary control group from another region (Cullberg et al., 2006); and one was an unmatched control study of selected patients in a single region (Angelo et al., 2011).

One paper estimated costs of an RCT in ARMS patients assigned to TAU or EI offering CBT and low-dose risperidone (Phillips et al., 2009).

There were two modelling papers, one of which modelled potential cost savings applying Early Psychosis Prevention and Intervention Centre (EPPIC) results to an Italian context (Serretti et al., 2009), and another which modelled cost savings applying EI to an ARMS cohort (Valmaggia et al., 2009).

The studies had small samples [n between 17 and 144; Goldberg et al. (2006) had n=305, but only included inpatient costs] and did not report power analyses. Benchmark power analyses suggest these studies would have been underpowered with their smaller sample sizes. The studies did not compare their results with methodologically more rigorous studies to detect bias.

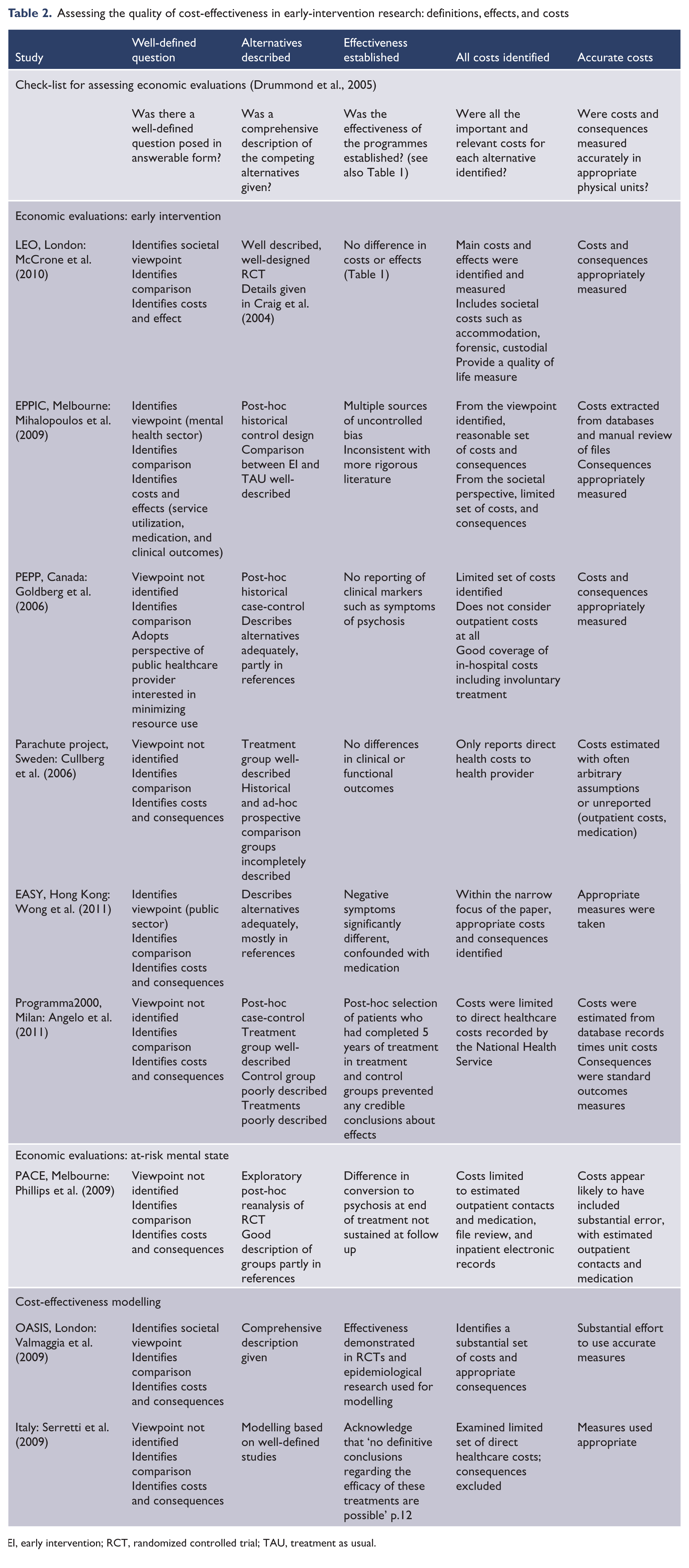

Approach to the research question

Four of nine studies identified their viewpoint, comparisons, and costs and effects (Table 2). These studies had well-described treatment alternatives and well-designed comparisons. The other studies tended to be less well structured, preventing relevant conclusions. For example, Goldberg et al. (2006) implicitly adopted the approach of a public healthcare provider, but only addressed inpatient costs. As a result, their research was unable to comment upon the net effect of EI.

Table 2. Assessing the quality of cost-effectiveness in early-intervention research: definitions, effects, and costs

EI, early intervention; RCT, randomized controlled trial; TAU, treatment as usual.

Was effectiveness established?

There was evidence of significant bias across the studies and most studies failed to find significant clinical differences (Table 1). Consistent with the comparison studies (Bertelsen et al., 2008; Larsen et al., 2011), effectiveness was not established by these studies.

The RCTs did most to control for confounding factors. McCrone et al. (2010) described an adequate randomization procedure, but did not analyse probable confounding factors such as differences in gender, ethnicity, episode of psychosis (first or later), and patterns of attrition. Phillips et al. (2009) estimated the costs associated with a previously completed RCT. They analysed possible confounding factors and patterns of attrition and found no evidence of bias.

Risk of bias in the case-control studies was high, as they opportunistically selected historical or regional control groups with known and likely confounds. Goldberg et al. (2006) had perhaps the cleanest comparison, with historical controls in the same service before and after introduction of an early psychosis service separated by 2 years.

Mihalopoulos et al. (2009) obtained samples by tracking down patients between 5 and 8 years after treatment, with large attrition differences between control and treatment groups, which they did not directly test. The suspicion of bias is supported by the large differences between their results and those of the OPUS study (Bertelsen et al., 2008). Mihalopoulos et al. (2009) also acknowledged that most of the effect between EI and TAU is due to patients with continuous psychosis in the control group.

The other case-control studies suffered from significant bias. Of the EI group studied by Wong et al. (2011), 60% were on atypical antipsychotics, whereas 91% of the TAU group were on typical antipsychotics (Chen, 1999). The difference was not acknowledged and was confounded with the only significant difference (negative symptoms).

Angelo et al. (2011) selected their sample from patients in treatment and control conditions of pre-existing studies that had already completed 5 years, ensuring 100% follow up. Their approach made it impossible to generalize their results to any population wider than the group of people included in the study.

Modelling studies

Valmaggia et al. (2009) developed a comprehensive model but more recent research has invalidated key assumptions. Larsen et al. (2011) demonstrated that reducing DUP does not improve long-term outcome and may increase use of resources including hospitalization; while Yung et al. (2008) revised the rate of transition to psychosis from 40% to 16%. The sensitivity analysis by Valmaggia et al. (2009) showed that these changes reverse the outcome from reduced to increased costs in the EI group.

The model of Serretti et al. (2009) was based upon a small historical control study, while the OPUS study had a quasi-experimental design with large sample size and adequate power (Bertelsen et al., 2008). The latter demonstrated that EI does not change hospitalization use or outpatient contacts over 5 years. These more robust assumptions invalidate the model of Serretti et al. (2009).

Analysis of costs and values

Studies that specified a societal viewpoint measured the broadest set of variables (e.g. McCrone et al., 2010). Where a viewpoint was not specified, or was limited to the public sector or mental health sector, only a small set of direct healthcare costs was measured. The most restricted was Goldberg et al. (2006) with only inpatient costs, although they considered inpatient costs such as seclusion and involuntary treatment. None of the studies analysed indirect costs such as consumption of carer time, or negative effects of treatment such as side effects of medication. One interesting possibility not assessed is that the effort to reduce hospitalization of people with severe mental illness might lead to reduced access to physical health care.

Costs and consequences were generally measured accurately in appropriate physical units, with several exceptions. No study reported QALYs, even where the adoption of a societal viewpoint would have suggested this. McCrone et al. (2010) reported a measure of quality of life as an outcome effect. Unfortunately they relied upon patient report of contacts over a 6-month period, reducing the accuracy of their cost estimates. Their analysis did not account for the use of an extended hours service available on weekends and public holidays, which would inflate EI outpatient costs.

Phillips et al. (2009) relied upon patient report of resource utilization, and converted qualitative descriptions of service use such as ‘sporadic attendance’ to quantitative estimates of actual contacts, such as 17 appointments in 6 months. Although they described the assumptions used in estimating unit costs, they did not provide details sufficient to assess their assumptions.

Mihalopoulos et al. (2009) made significant efforts to accurately estimate costs, but did not provide enough information to assess the accuracy of their estimates. Outpatient measures relied on ad-hoc file requests, the accuracy of which is impossible to judge. They excluded secondary and tertiary consultations, non-client centred contacts, and brief phone contacts. Angelo et al. (2011) relied upon even more tenuous data extraction from health databases for number of contacts. It is unclear why they used EPPIC data rather than the more sound OPUS results available at the time.

Several studies described their estimation procedures but did not provide the relevant assumptions. Mihalopoulos et al. (2009) identified how they found their utilization data, but not what the data were, simply reporting calculated results. Cullberg et al. (2006) mentioned how they calculated outpatient costs without details. They specified assumptions about inpatient/residential care but were unable to measure differences across their sites so assumed them to be the same. Wong et al. (2011) referred to costing formulas but did not describe or provide them. They also included hospitalization as both cost and consequence and did not acknowledge the confound.

Goldberg et al. (2006) measured the costs of inpatient treatment accurately and were the only group to mention hospital bed numbers and occupancy. They did not provide figures, but noted that neither bed numbers nor occupancy changed with the introduction of EI.

If the effects of EI include intensive initial treatment leading to less intensive treatment later in the course, few of the studies considered long-enough periods to test clinical or cost outcomes. Mihalopoulos et al. (2009; 8 years) and Angelo et al. (2011; 5 years) were most adequate. Only three of the non-modelling studies used discounting.

Modelling studies

Valmaggia et al. (2009) and Serretti et al. (2009) relied upon multiple sources of information to construct their models. In addition to superseded assumptions, Valmaggia et al. (2009) included lost earnings due to the differential rate of employment of patients with long and short DUP. However, costs are only relevant in comparisons between treatments if the treatment changes outcome. The TIPS study suggested that changing DUP does not affect vocational outcome over 5 years (Larsen et al., 2011).

Outpatient costs estimated at individual level

None of the reviewed literature accurately assessed outpatient costs. All the studies used the number and type of contacts per patient per time period multiplied by unit cost. This approach distorts the cost of outpatient care to the considerable advantage of EI services with low case loads. Because all of the studies, with the exception of Mihalopoulos et al. (2009), were consistent with the expected pattern of higher outpatient costs in EI and higher inpatient costs with TAU, with total costs approximately equal, the systematic underestimation of EI outpatient costs suggests that none of these comparisons can be relied upon.

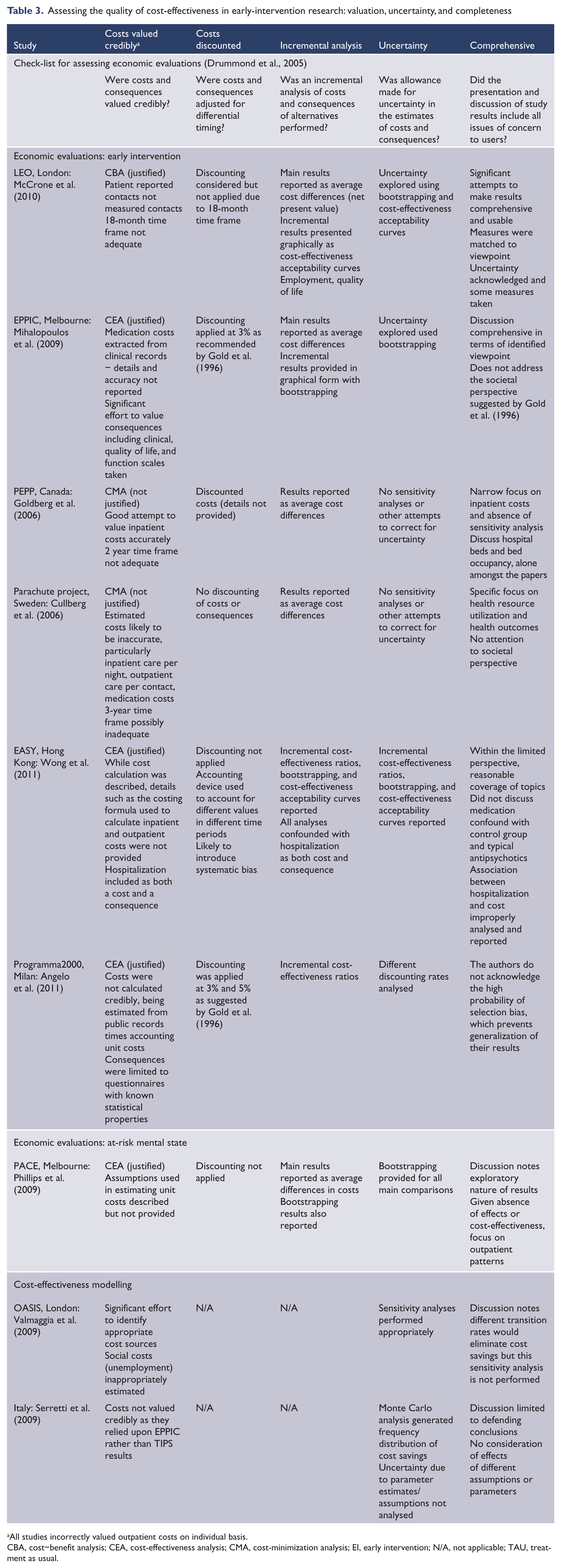

Analysis of results including incremental analysis and uncertainty

There was limited use of bootstrapping, CEACs, and sensitivity analyses to analyse uncertainty (Table 3). None of the papers identified circumstances that would reverse their results. Valmaggia et al. (2009) was the only group to apply sensitivity analysis to a thorough set of parameters and assumptions. They reported that EI was more expensive if the rate of transition to psychosis of TAU was <0.27, and less expensive if this rate was >0.27. Based on earlier research, they had assumed a rate of transition of 0.35. Subsequently Yung et al. (2008) revised the rate of transition to 0.16 and noted that EI may delay rather than prevent transition.
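
The reversal implied by the revised transition rate can be made explicit with a short sketch using the figures quoted above. The function name is hypothetical, and treating a rate exactly equal to the threshold as break-even is an assumption, since the source reports only the two inequalities.

```python
BREAK_EVEN_RATE = 0.27  # threshold reported by Valmaggia et al. (2009)

def ei_cost_direction(transition_rate: float,
                      threshold: float = BREAK_EVEN_RATE) -> str:
    """Direction of the modelled cost difference for a given TAU transition rate."""
    if transition_rate > threshold:
        return "EI less expensive"
    if transition_rate < threshold:
        return "EI more expensive"
    return "break-even"  # assumption: equality is not described in the source

assumed = ei_cost_direction(0.35)  # rate assumed in the model
revised = ei_cost_direction(0.16)  # rate after revision by Yung et al. (2008)
```

The assumed rate lies above the threshold and the revised rate below it, so the revision moves the model from projecting lower costs for EI to projecting higher costs.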

Table 3. Assessing the quality of cost-effectiveness in early-intervention research: valuation, uncertainty, and completeness

All studies incorrectly valued outpatient costs on an individual basis.

CBA, cost–benefit analysis; CEA, cost-effectiveness analysis; CMA, cost-minimization analysis; EI, early intervention; N/A, not applicable; TAU, treatment as usual.

Serretti et al. (2009) did not provide sensitivity analyses, so their model could not accommodate parameter revisions. However, if the assumptions from the more robust OPUS study are substituted for those of EPPIC, the model of Serretti et al. (2009) is unlikely to return a significant difference in total costs.

Of the RCT and case-control studies, McCrone et al. (2010) provided the best discussion of results and analysis of uncertainty. In particular, this group acknowledged a limited time frame, absence of QALYs, use of hospitalization as an input cost and an outcome, and possible sources of bias including systematically missing data. Strangely, they acknowledged a regionally similar research group’s results that suggested no effect of EI on hospitalization rate over 5 years (Gafoor et al., 2010), but did not question the implications for their study. Their CEACs suggested that EI is cost-effective for improving vocational outcome and quality of life at 18 months. However, these curves relied upon accurate estimates of outpatient costs in the same way as CBA. In addition, Bertelsen et al. (2008) suggested that vocational outcomes are almost identical in EI and TAU groups at 2 and 5 years, highlighting the inadequacy of an 18-month trial when costs and effects are expected to continue for decades.

Mihalopoulos et al. (2009) showed bootstrapping graphs indicating that EI was more effective and less expensive (called dominance in CEA) than TAU in reducing the positive symptom subscale of the Brief Psychiatric Rating Scale, consistent with their anomalous results. Wong et al. (2011) showed both bootstrapping and a CEAC comparing costs with reduced hospitalization, which suggested cost-effectiveness of EI for reducing number of admissions, but did not acknowledge the confound of having hospitalization as both a cost and an outcome. Angelo et al. (2011) provided an ICER suggesting cost-effectiveness of EI in the reduction of Health of the Nation Outcome Scale scores, which given the clear selection bias in their design, was difficult to interpret.

It should be clear that each set of authors selected a positive cost-effectiveness measure from among multiple possible comparisons and that none of the authors’ results supported any of the others. As McCrone et al. (2010) acknowledged, and the other authors did not, none corrected for multiple comparisons, which greatly increases the possibility of false positives.

Goldberg et al. (2006) and Cullberg et al. (2006) were the only papers that did not analyse some level of uncertainty, although Angelo et al. (2011) only considered different discounting rates, and most of the other papers were limited to a small set of cost variables.

Uncertainty analysis, the cost of outpatient services and hospitalization

All papers assumed that the cost of outpatient contacts is equivalent for EI and TAU, despite the fact that differential case loads with an ACT model make EI outpatient treatment more than twice as expensive as TAU, regardless of the number of contacts. None of the papers examined the assumption that theoretical cost reductions associated with reduced hospitalization are perfectly realizable, despite considerable evidence that such reductions are extremely difficult to achieve. Either assumption alone seemed able to reverse the conclusions of most of the papers (Mihalopoulos et al., 2009 perhaps excepted); both together might suggest significantly higher costs for EI over TAU.

Findings

Considered as a whole, the studies did not show that EI reduces costs (Table 1), despite Mihalopoulos et al. (2009), which concluded that EI is one-third the cost of TAU. The modelling by Valmaggia et al. (2009) concluded that if lost wages are excluded, healthcare costs are higher in EI; if included, societal costs are higher in TAU. Serretti et al. (2009) concluded that TAU costs are 6% higher than EI, but the revision of major assumptions as well as the absence of sensitivity analyses limited the force of their conclusions. Goldberg et al. (2006) reported a significant reduction in inpatient costs but could not comment on total or societal costs as they did not consider costs incurred outside the hospital setting.

Although Mihalopoulos et al. (2009) did not test subgroups, they noted most of the effect was caused by patients with continuous psychosis. These patients cost $AUS105,473 in TAU versus $25,720 in EI. In patients with an episodic course, the costs were $38,756 in TAU and $24,110 in EI; and in those not actively psychotic throughout the study $17,653 in TAU and $19,904 in EI. Thus the cost advantage of EI over TAU increased with the severity of pathology. Comparison with the more rigorous OPUS study (Bertelsen et al., 2008) suggests that these outcomes may have more to do with selection bias than treatment effects.
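The subgroup pattern can be made explicit by expressing EI cost as a fraction of TAU cost. The short sketch below uses only the figures quoted above; the subgroup labels and variable names are illustrative choices, not terms from the original study.

```python
# Costs in $AUS as quoted above from Mihalopoulos et al. (2009): (TAU, EI).
subgroup_costs = {
    "continuous psychosis": (105_473, 25_720),
    "episodic course": (38_756, 24_110),
    "not actively psychotic": (17_653, 19_904),
}


def ei_to_tau_ratio(tau_cost: float, ei_cost: float) -> float:
    """EI cost as a fraction of TAU cost; values below 1 favour EI."""
    return ei_cost / tau_cost


ratios = {
    group: ei_to_tau_ratio(tau, ei)
    for group, (tau, ei) in subgroup_costs.items()
}

if __name__ == "__main__":
    for group, ratio in ratios.items():
        print(f"{group}: EI/TAU cost ratio = {ratio:.2f}")
```

The ratios are roughly 0.24, 0.62, and 1.13 respectively, so EI appeared cheaper than TAU only in the two more severely affected groups.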

Cullberg et al. (2006) reported large differences in the first year favouring EI overall and for inpatient costs, and favouring TAU for outpatient costs; in the second and third years there were no differences in inpatient or outpatient costs. Angelo et al. (2011) reported higher costs for the treatment group in the first year that reversed over 5 years, although the total cost of both conditions was not significantly different.

Discussion

Nine economic evaluations of EI were systematically reviewed. One study, with evidence of bias and results substantially different from larger, more methodologically rigorous research, found evidence that EI is both clinically superior to TAU and cheaper on both inpatient and outpatient costs (Mihalopoulos et al., 2009). The rest of the literature suggested no clinical difference between EI and TAU, with EI incurring lower inpatient costs but higher outpatient costs, as expected. There was some evidence that EI might be cost-effective for particular outcomes including vocational outcome and quality of life. However, methodological concerns suggested that these claims were preliminary.

None of the papers considered that the actual cost of outpatient care is determined by case load. This systematically distorted both cost and cost-effectiveness analyses, underestimating one of the two main costs in EI significantly enough to invalidate their conclusions.

There was also no analysis of the difficulty of translating reduced rates of hospitalization into reduced costs of hospitalization. Goldberg et al. (2006) noted that reduced hospitalization rates did not change bed numbers or occupancy, suggesting EI does not reduce hospital costs even where individual hospitalization is reduced. Therefore, both sides of the main comparison of this literature are likely to distort analysis if extrapolated to the systemic level.

Clinical effects

Consistent with more methodologically rigorous studies (Bertelsen et al., 2008; Larsen et al., 2011), this literature found little evidence of clinical superiority in EI (Table 1). Exceptions seemed more indicative of bias than clinical effect (e.g. Wong et al., 2011). Although Mihalopoulos et al. (2009) showed impressive results at 8 years, the post-hoc nature of their analysis, the evidence of bias in selection and attrition, and the substantial difference between their results and those of similar studies and the more rigorous OPUS study suggest that their results were due more to bias than to treatment.

Difference in costs and cost-effectiveness

Ignoring the systematic distortion, the research suggested no difference in total costs between EI and TAU (Table 1). Mihalopoulos et al. (2009) produced results so different from the other studies and the quasi-experimental comparison study (Bertelsen et al., 2008) that they must be viewed as an outlier. There was evidence of decreased inpatient costs and increased outpatient costs in EI. In addition, there was a suggestion that costs might be particularly elevated in the most severely ill patients and in the early years of treatment.

Although there was evidence of cost-effectiveness of EI in the literature, it was sporadic and no clear pattern emerged. The universal absence of correction for multiple comparisons, and other more specific methodological limitations, suggested that these results may be false positives, leaving aside the question of distorted outpatient costs. To reduce the impression of opportunistic selection of results, studies should state a rationale for why particular measures have been analysed with bootstrapping, CEACs, or similar techniques, rather than reporting only positive results or those consistent with the authors’ position.

Methodological concerns

Tables 1–3 demonstrate that, while there were papers with reasonable methodology (particularly Valmaggia et al., 2009; McCrone et al., 2010), there were also systematic deficiencies in the literature. In particular, small sample sizes in the absence of power analyses limited the ability of studies to identify real effects. There was generally an absence of comparison to larger, more rigorous studies for benchmarking. Even McCrone et al. (2010) noted that a major facet of their study (vocational outcomes) was in conflict with a larger, longer study (Gafoor et al., 2010), but did not consider the implications.

Where papers identified their viewpoint and type of economic evaluation, they were also usually more comprehensive and complete in identifying appropriate costs and consequences to measure. Nevertheless, the literature did not stray far beyond direct healthcare costs, with McCrone et al. (2010), from a societal perspective, the most ambitious. Drummond et al. (2005) noted that the most useful analyses for decision makers are those that incorporate the most information about broader societal concerns in the most concise way (see Carter et al., 2008 for an extension of this approach that considers information necessary for priority setting). Following Gold et al. (1996), Drummond et al. (2005) recommended the use of CUA and the QALY, or CBA. None of the reviewed research approached this level of analysis.

Reliance upon opportunistic, post-hoc historical and regional case-control designs was another failing of the literature. Paired with the small sample sizes and absence of power analyses, and noting the lack of effect in large well-designed quasi-experimental studies, the force of these less powerful designs was limited. This was exacerbated by desultory attempts to measure and account for possible bias and other sources of error. Only one author even noted the possibility of correcting for multiple comparisons. This was particularly apparent in the cost-effectiveness analyses such as bootstrapping and CEACs, which reported different variables in each study, with no rationale suggested by past research or theory.

Given the controversial nature of the area, and the equivocal nature of the results, it is surprising that more use was not made of sensitivity analysis. Where it was used, it was on a small subset of cost-related parameters. Valmaggia et al. (2009) was the sole exception. Because they had included sensitivity analysis, their study survived a subsequent revision of assumptions in the literature, albeit with its conclusion reversed. The modelling assumptions by Serretti et al. (2009) have similarly been superseded, but in the absence of sensitivity analyses, their model is simply demonstrably incorrect.

Recommendations

Economic evaluations of EI should use a strong methodology such as those suggested by Drummond et al. (2005) or Carter et al. (2008). Post-hoc historical and regional case-control designs appear to be inadequate given the small effect sizes and the multiple sources of bias. Bias should be measured and analysed, including selection bias and attrition bias. Correction should be made for multiple comparisons. Conscious definition of the viewpoint of the study, and of the type of analysis to perform, appears likely to produce more coherent and complete studies. Power analyses are necessary, and follow-up should be at least 5 years.

Economic evaluation of EI, particularly modelling studies, should identify and incorporate the strongest available evidence (e.g. Bertelsen et al., 2008; Larsen et al., 2011). Where a small, low-powered, biased study conflicts with these, the difference should be identified, analysed, and explained, not discarded, as McCrone et al. (2010) did.

Drummond et al. (2005) recommended the accurate measurement of as many relevant costs and consequences as possible. McCrone et al. (2010) were possibly the role models in this literature and acknowledged the need for future research to include CUA using QALYs.

Sensitivity analyses should be used in all economic evaluations of EI. This might be an elegant way to deal with the problems associated with calculating outpatient costs, and the impact of reduced hospitalization. It should be possible to analyse a range of values for outpatient costs incorporating the inflated cost of outpatient contacts due to reduced case loads, increased non-clinical work, and factors such as extended hours services. Similarly, the impact of reduced hospitalization rates could be examined across a range of realized cost reductions. Given previous research suggesting less than 20% might be realized (Roberts et al., 1999), 0–40% might be an appropriate range.
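A two-way sensitivity analysis of this kind might be sketched as follows. The cost model is deliberately simplistic and every figure in it is hypothetical: `TAU_OUTPATIENT` and `NOMINAL_INPATIENT_SAVING` are placeholder per-patient values chosen for illustration, not estimates from the reviewed literature, and the function names are likewise invented for this example. The outpatient multiplier range reflects the observation that reduced case loads may make EI outpatient treatment more than twice as expensive as TAU, and the realized-saving range follows the 0–40% suggested above.

```python
from itertools import product


def net_cost_difference(tau_outpatient: float,
                        nominal_inpatient_saving: float,
                        outpatient_multiplier: float,
                        realized_fraction: float) -> float:
    """Per-patient cost of EI minus TAU under a simplified model.

    Positive values mean EI costs more than TAU.
    """
    extra_outpatient = tau_outpatient * (outpatient_multiplier - 1.0)
    realized_saving = nominal_inpatient_saving * realized_fraction
    return extra_outpatient - realized_saving


def sensitivity_grid(tau_outpatient, nominal_inpatient_saving,
                     multipliers, realized_fractions):
    """Evaluate the model over every combination of the two uncertain parameters."""
    return {
        (m, f): net_cost_difference(tau_outpatient, nominal_inpatient_saving, m, f)
        for m, f in product(multipliers, realized_fractions)
    }


# Hypothetical per-patient annual figures, for illustration only.
TAU_OUTPATIENT = 5_000.0
NOMINAL_INPATIENT_SAVING = 10_000.0

multipliers = [1.0, 1.5, 2.0, 2.5]          # EI outpatient cost relative to TAU
realized_fractions = [0.0, 0.1, 0.2, 0.3, 0.4]  # share of hospital saving realized

grid = sensitivity_grid(TAU_OUTPATIENT, NOMINAL_INPATIENT_SAVING,
                        multipliers, realized_fractions)

if __name__ == "__main__":
    for (m, f), diff in sorted(grid.items()):
        print(f"multiplier {m:.1f}, realized {f:.0%}: EI - TAU = {diff:+,.0f}")
```

Reporting the whole grid, rather than a single point estimate, would let a reader see at which combinations of outpatient cost and realized hospital saving the conclusion changes sign.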

Future research

There is some evidence that the economic differences between EI and TAU are greatest in the first year of treatment (Cullberg et al., 2006; Angelo et al., 2011). Others suggest that outpatient costs are greater in EI than TAU over the first year (Mihalopoulos et al., 1999), but less over later years (Mihalopoulos et al., 2009; Phillips et al., 2009). If there is less need for outpatient contact after 2 years, there would appear to be no need for reduced case loads. Thus it is unclear which facets of EI are required into the third and subsequent years. Mihalopoulos et al. (2009) also found that most of the difference in costs between EI and TAU was due to the most severely affected patients. Future research might usefully assess whether patterns of cost difference are due to particular subgroups, such as the most severely ill, or particular time frames, such as the first year of treatment.

Limitations of the study

No meta-analyses were attempted due to the heterogeneity of populations, methods, and statistics in the identified papers. The reported research was limited to two RCTs, case-control studies with clear significant bias, and modelling studies based on assumptions that have been superseded by more recent research. Despite the limited nature of the research, the analysis was undertaken because of the importance of the question in resource allocation in modern mental health systems and the strength of the rhetoric used to support extension of the EI paradigm despite the limited evidence.

Strengths of the study

The study attempted to identify all relevant research by searching standard databases, drawing on appropriate comparison studies with more rigorous methodology, and chasing references through research groups, review papers, and editorials. The paper attempted to extend analysis beyond the standard paradigm to suggest significant oversights in current economic evaluations of EI. It is hoped that this will stimulate research providing accurate information to healthcare administrators to guide allocation of resources.

Conclusions

Extant economic evaluations of EI are not adequately designed and powered to answer the questions they propose. Because of small sample sizes, reliance upon biased case-control designs, and inaccurate outpatient cost estimation, no published study reports the actual costs or the feasible reduction of costs from substituting outpatient for inpatient care. There would appear to be little value in future research which relies upon case-control designs. Strong evidence would require RCTs that acknowledge the extra costs of the small case load of ACT and that demonstrate reduction of hospital costs. Sensitivity analysis might be one approach to these problems. Further research could assess calls for extension of EI to 5 years by examining the pattern of resource utilization over time to determine whether small case loads are expected to reduce costs. Ambitious research could investigate whether there is a subset of psychotic patients, such as those continuously psychotic, which can be identified and targeted early in presentation.

Footnotes

Funding

This research received no specific grant from any funding agency in the public, commercial or not-for-profit sectors.

Declaration of interest

The authors report no conflicts of interest. The authors alone are responsible for the content and writing of the paper.